AI Agents: New Risks Requiring Continuous Monitoring and Oversight [2025]

Artificial Intelligence (AI) agents are revolutionizing industries by automating tasks that once required human intervention. From customer service chatbots to autonomous drones, AI agents are becoming more autonomous and capable. However, with greater autonomy comes greater risk, necessitating continuous monitoring and oversight. This article explores the potential risks posed by AI agents, offers strategies for effective monitoring, and discusses future trends.

TL;DR

- AI agents can operate independently, introducing new risks that require careful management.

- Continuous monitoring is essential to ensure AI agents function as intended and avoid harm.

- Ethical and security concerns are paramount, as AI agents can act unpredictably or be manipulated.

- Implementing oversight involves using specialized tools and frameworks to track AI agent behavior.

- Future trends point toward more sophisticated monitoring technologies and regulatory frameworks.

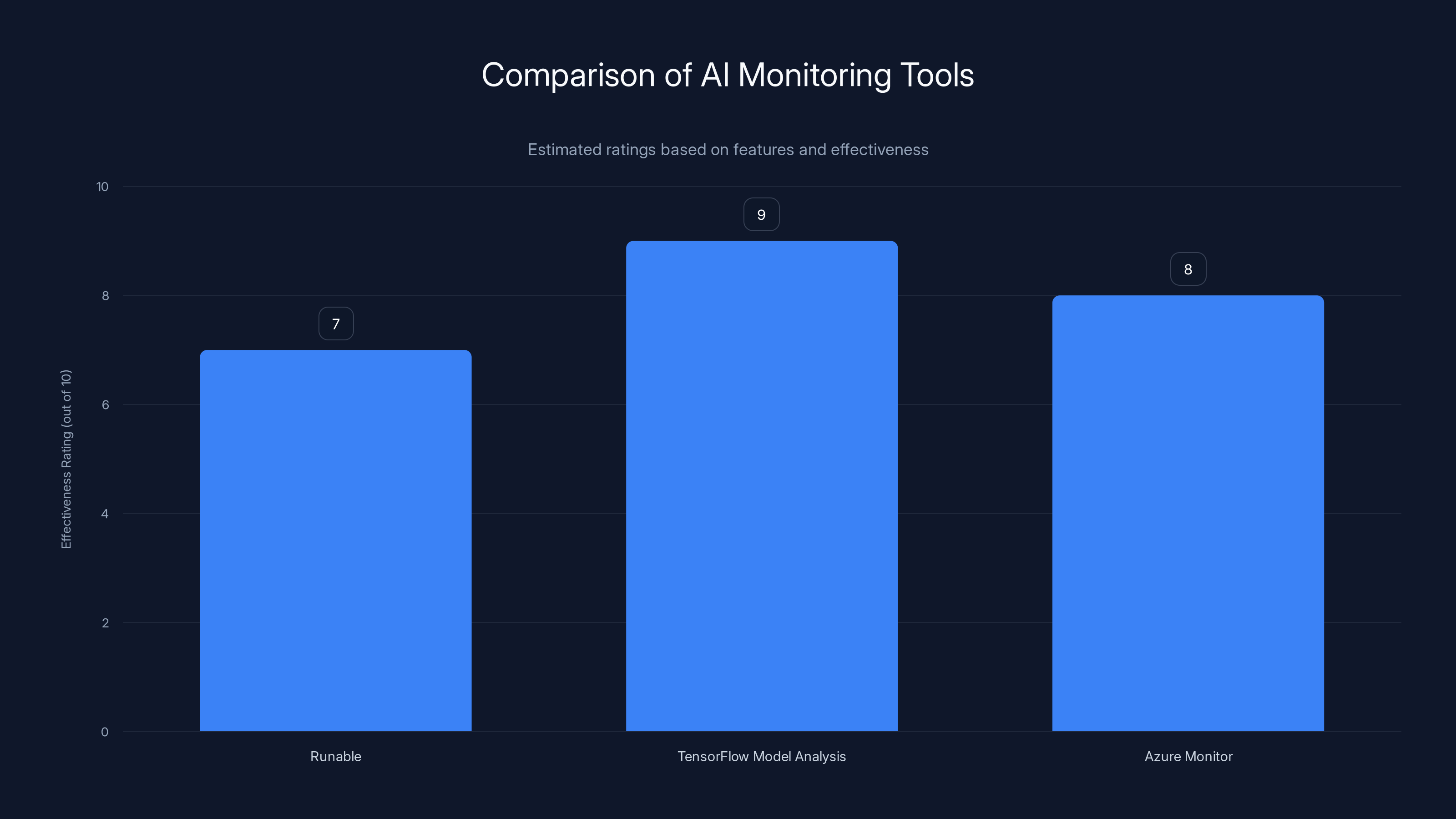

This chart compares the effectiveness of different AI monitoring tools. TensorFlow Model Analysis scores highest due to its comprehensive suite of evaluation tools. (Estimated data)

Understanding AI Agents

AI agents are software programs that perform tasks autonomously, often learning from data and optimizing their performance over time. They range from simple rule-based systems to complex neural networks capable of making intricate decisions.

Key Characteristics of AI Agents

- Autonomy: AI agents can make decisions without direct human input.

- Learning: They use machine learning algorithms to improve over time.

- Adaptability: AI agents can adjust their strategies based on new data.

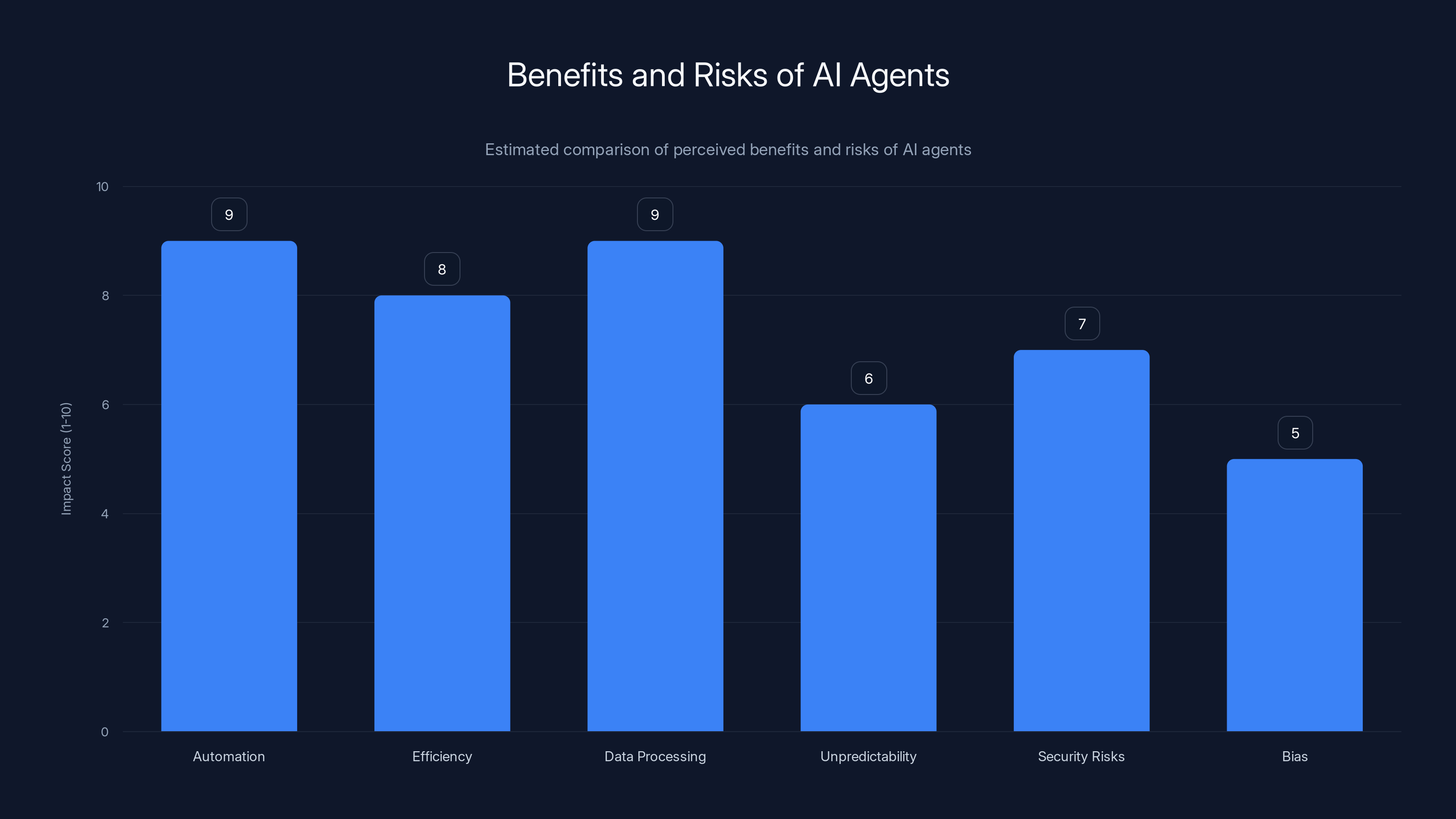

This chart compares the perceived benefits and risks of AI agents. Automation and data processing are seen as major benefits, while unpredictability and security risks are notable concerns. (Estimated data)

The Risks Associated with AI Agents

1. Unpredictable Behavior

AI agents that learn from data can be hard to predict. When they encounter situations that differ from what they were trained or tested on, they may make decisions that deviate from expected outcomes. According to a Deloitte report, these deviations can lead to significant operational challenges.

Example: An AI agent in a financial trading platform might make high-risk trades under volatile market conditions, leading to significant financial losses.

2. Security Vulnerabilities

AI agents can be targets for cyberattacks. Attackers might manipulate a model's inputs or training data (for example, through prompt injection or data poisoning) to produce harmful outcomes, or exploit vulnerabilities in the agent's code. Recorded Future's research highlights the emerging enterprise security risks associated with AI.

Example: An autonomous vehicle's AI system could be hacked, causing it to malfunction and endanger passengers.

3. Ethical Concerns

AI agents can perpetuate biases present in their training data, leading to unfair or unethical outcomes. A Manatt Health AI policy tracker emphasizes the importance of addressing these biases to ensure ethical AI deployment.

Example: A recruitment AI might favor candidates of a certain demographic if trained on biased data.
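One way to make the recruitment example measurable is to compare selection rates across groups. The sketch below is illustrative only: the function names, the group labels "A" and "B", and the sample data are hypothetical, and the 0.8 cutoff follows the commonly cited "four-fifths" rule of thumb rather than anything prescribed in this article.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the share of selected candidates per group.

    decisions: iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no gap to measure
    return min(rates.values()) / highest

# Hypothetical screening results: (group, was_selected)
decisions = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold (four-fifths rule of thumb)
print(rates, ratio, needs_review)
```

A low ratio does not prove the agent is biased, but it is a reasonable trigger for a closer human audit of the training data and the decisions.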

Importance of Continuous Monitoring

Continuous monitoring ensures that AI agents are performing as expected and helps identify and mitigate risks early. Wiz Academy provides insights into the importance of adversarial AI security measures.

Monitoring Techniques

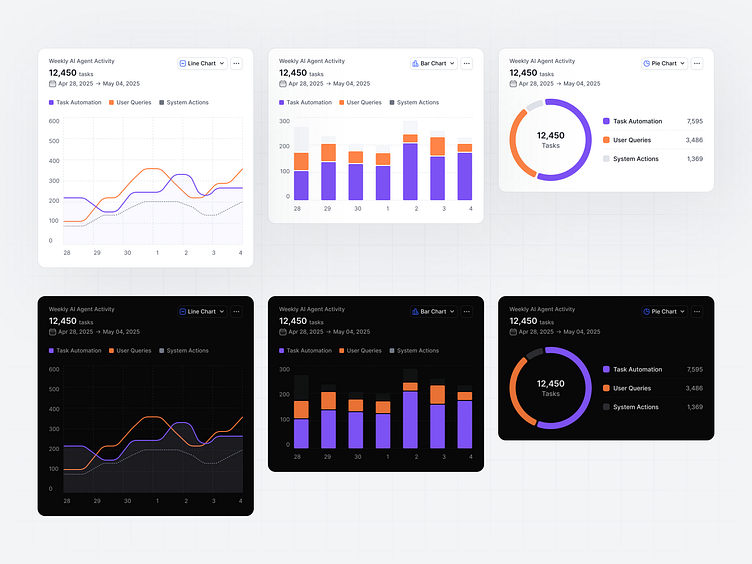

- Real-time analytics: Track the actions and decisions of AI agents in real time.

- Anomaly detection: Use algorithms to identify unusual behavior that might indicate a problem.

- Performance metrics: Regularly assess the efficiency and accuracy of AI agents.
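As a rough illustration of anomaly detection on a performance metric, the sketch below flags values that sit far from a rolling baseline using a z-score. The class name, window size, threshold, and latency figures are all assumptions made for this example. Production systems typically rely on more robust methods.

```python
from collections import deque
from statistics import mean, stdev

class RollingAnomalyDetector:
    """Flags values that deviate strongly from a rolling window of recent values."""

    def __init__(self, window=20, threshold=3.0, min_samples=5):
        self.values = deque(maxlen=window)
        self.threshold = threshold      # z-score above which a value is flagged
        self.min_samples = min_samples  # observations needed before flagging

    def observe(self, value):
        """Return True if value looks anomalous relative to the recent window."""
        is_anomaly = False
        if len(self.values) >= self.min_samples:
            mu = mean(self.values)
            sigma = stdev(self.values)
            # A perfectly flat window (sigma == 0) is never flagged in this sketch.
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep flagged values out of the baseline so they do not skew it.
            self.values.append(value)
        return is_anomaly

# Hypothetical per-request latencies (ms) reported by an agent
latencies = [100, 102, 98, 101, 99, 103, 97, 100, 450, 101]
detector = RollingAnomalyDetector()
flags = [detector.observe(x) for x in latencies]
print(flags)
```

The same pattern can be applied to other metrics, such as error rates or the number of actions an agent takes per task.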

Tools for Monitoring AI Agents

Several tools and frameworks are available to help monitor AI agents effectively.

- Runable: Offers AI-powered automation for presentations, documents, and reports, providing insights into agent performance for $9/month.

- TensorFlow Model Analysis: Provides a suite of tools for evaluating and understanding machine learning models.

- Azure Monitor: Tracks the performance and health of applications, including AI agents, as detailed in Microsoft's blog.

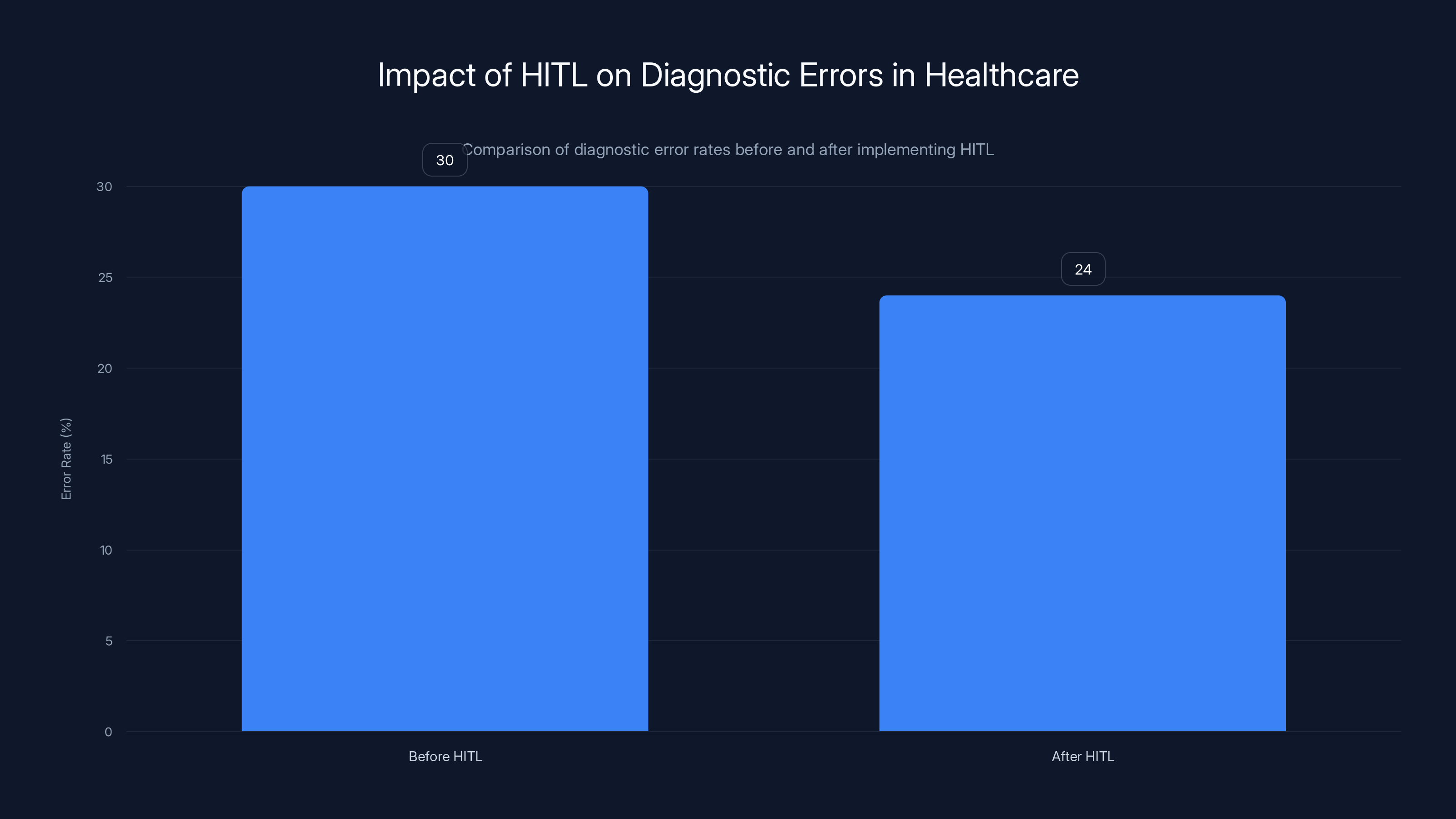

Implementing a Human-in-the-loop (HITL) system reduced diagnostic errors by 20%, highlighting its effectiveness in improving patient outcomes.

Implementing Effective Oversight

Oversight Strategies

- Human-in-the-loop (HITL): Involve humans in decision-making processes to ensure AI agents' actions are appropriate.

- Feedback loops: Continuously update AI systems based on human feedback to refine their behavior.

- Governance frameworks: Establish policies and procedures for AI deployment and monitoring.
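The human-in-the-loop idea can be sketched as an approval gate: low-risk actions run automatically, while high-risk ones wait for a reviewer, and every step is written to an audit log. All names here (`ProposedAction`, `ApprovalGate`, the "low"/"high" risk labels, the sample actions) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class ProposedAction:
    name: str
    risk: str  # "low" or "high" (hypothetical labels assigned by policy)

@dataclass
class ApprovalGate:
    pending: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def submit(self, action):
        """Execute low-risk actions; queue high-risk ones for human review."""
        if action.risk == "high":
            self.pending.append(action)
            self.audit_log.append((action.name, "queued_for_review"))
            return "pending"
        self.audit_log.append((action.name, "auto_executed"))
        return "executed"

    def review(self, action, approved, reviewer):
        """Record a human decision on a queued action."""
        self.pending.remove(action)
        outcome = "approved" if approved else "rejected"
        self.audit_log.append((action.name, f"{outcome}_by_{reviewer}"))
        return outcome

gate = ApprovalGate()
gate.submit(ProposedAction("send_status_email", "low"))
refund = ProposedAction("issue_refund", "high")
gate.submit(refund)
gate.review(refund, approved=False, reviewer="alice")
print(gate.audit_log)
```

The audit log doubles as input for feedback loops and governance reviews, since it records which actions humans overrode.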

Case Study: AI in Healthcare

In healthcare, AI agents assist in diagnosing diseases and personalizing treatment plans. Continuous monitoring is crucial to ensure these agents provide accurate and safe recommendations to healthcare professionals. According to Global Market Insights, the digital health market is rapidly evolving, necessitating robust oversight mechanisms.

Outcome: Implementing a HITL system reduced diagnostic errors by 20%, improving patient outcomes.

Common Pitfalls and Solutions

Pitfall 1: Insufficient Training Data

Solution: Use data augmentation techniques to expand the dataset and improve AI agent training. Simplilearn discusses the differences and applications of data science, analytics, and machine learning in this context.
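Data augmentation is domain-specific, so the following is only a minimal sketch for numeric features: it adds small Gaussian noise to each sample while keeping the label. The function name, the `sigma` value, and the sample data are assumptions, and the approach is only appropriate when small perturbations do not change the correct label.

```python
import random

def augment_with_jitter(samples, copies=2, sigma=0.01, seed=0):
    """Return the original samples plus noisy copies of each one.

    samples: list of (features, label) pairs, where features is a list of floats.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    augmented = list(samples)
    for features, label in samples:
        for _ in range(copies):
            noisy = [x + rng.gauss(0.0, sigma) for x in features]
            augmented.append((noisy, label))
    return augmented

# Hypothetical training samples: ([feature values], label)
train = [([0.2, 1.5], "normal"), ([0.9, 3.1], "fraud")]
bigger = augment_with_jitter(train)
print(len(train), "->", len(bigger))
```

Augmented copies belong in the training set only; evaluation data should stay untouched so that measured performance reflects real inputs.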

Pitfall 2: Overconfidence in AI Decisions

Solution: Implement confidence thresholds to ensure AI agent decisions are reviewed when confidence levels fall below a certain point.
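A confidence threshold can be as simple as a routing function. In this sketch, the function name, the 0.85 default, and the route labels are hypothetical. In practice the threshold should be tuned per use case, and the model's confidence scores should be checked for calibration before they are trusted.

```python
def route_decision(label, confidence, threshold=0.85):
    """Send low-confidence decisions to a human instead of acting on them."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return {"label": label, "route": "auto"}
    return {"label": label, "route": "human_review"}

print(route_decision("approve_claim", 0.92))
print(route_decision("approve_claim", 0.60))
```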

Pitfall 3: Lack of Transparency

Solution: Employ explainable AI (XAI) methods to make AI agent decision-making processes more transparent.
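Permutation importance is one widely used, model-agnostic explainability technique: shuffle one input feature at a time and measure how much accuracy drops. The sketch below is a simplified pure-Python version with a hypothetical toy model and made-up data. It is not the method of any specific tool named in this article.

```python
import random

def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    correct = sum(1 for row, y in zip(rows, labels) if model(row) == y)
    return correct / len(rows)

def permutation_importance(model, rows, labels, n_features, repeats=10, seed=0):
    """Average accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(repeats):
            column = [row[j] for row in rows]
            rng.shuffle(column)
            # Rebuild each row with only feature j replaced by a shuffled value.
            shuffled = [row[:j] + (v,) + row[j + 1:] for row, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / repeats)
    return importances

# Toy model (hypothetical): decides using feature 0 only and ignores feature 1.
def toy_model(row):
    return int(row[0] > 0.5)

rows = [(i / 10, ((i * 7) % 10) / 10) for i in range(10)]
labels = [toy_model(row) for row in rows]
scores = permutation_importance(toy_model, rows, labels, n_features=2)
print(scores)
```

Because the toy model ignores feature 1, shuffling it never changes a prediction and its importance is zero, while feature 0 shows a clear drop. On a real agent, a feature with unexpectedly high importance (for example, a proxy for a protected attribute) is a signal worth investigating.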

Future Trends in AI Agent Monitoring

Enhanced Monitoring Technologies

Future monitoring tools will likely incorporate advanced AI techniques themselves to predict and preemptively address issues. AI Multiple explores the potential of large vision models in enhancing these capabilities.

Regulatory Developments

Increasing government scrutiny will lead to stricter regulations and standards for AI agent monitoring and oversight. Council on Foreign Relations discusses how global security concerns are influencing AI policy.

Example: The EU's AI Act, which entered into force in August 2024, creates a legal framework for AI that aims to ensure safety and protect fundamental rights.

Recommendations for Organizations

Best Practices

- Regular Audits: Conduct regular audits of AI agents to ensure compliance with ethical standards and regulations.

- Continuous Learning: Stay updated with the latest AI advancements and integrate new technologies into existing systems.

- Cross-Disciplinary Teams: Form teams with diverse expertise to oversee AI agent implementation and monitoring.

Conclusion

AI agents offer tremendous potential for innovation and efficiency. However, they also introduce new risks that require vigilant monitoring and oversight. By implementing robust monitoring strategies and staying informed about future trends, organizations can harness the power of AI agents while minimizing risks.

FAQ

What are AI agents?

AI agents are autonomous software programs that perform tasks without direct human intervention, learning from data to optimize their performance.

How do AI agents work?

AI agents use machine learning algorithms to process data, make decisions, and adapt to new situations based on feedback and past experiences.

What are the benefits of AI agents?

AI agents can automate repetitive tasks, improve efficiency, and process large amounts of data quickly, leading to cost savings and enhanced productivity.

What risks do AI agents pose?

AI agents can behave unpredictably, introduce security vulnerabilities, and perpetuate biases, necessitating continuous monitoring and oversight.

How can organizations monitor AI agents effectively?

Organizations can use real-time analytics, anomaly detection, and performance metrics to monitor AI agents, supported by tools like Runable and Azure Monitor.

What is the future of AI agent oversight?

The future will likely see enhanced monitoring technologies and stricter regulatory frameworks to ensure AI agents operate safely and ethically.

Why is transparency important in AI agent decision-making?

Transparency helps build trust, allows for better understanding of AI decisions, and ensures ethical and fair outcomes.

Key Takeaways

- AI agents require continuous monitoring to manage risks effectively.

- Human-in-the-loop systems enhance decision accuracy and safety.

- Real-time analytics and anomaly detection are crucial for oversight.

- Organizations should establish governance frameworks that set policies and procedures for AI deployment and monitoring.

- Future trends point to stricter AI regulations and advanced monitoring tools.

- Transparency and explainability are vital for ethical AI deployment.

- Cross-disciplinary teams improve AI oversight and implementation.

![AI Agents: New Risks Requiring Continuous Monitoring and Oversight [2025]](https://tryrunable.com/blog/ai-agents-new-risks-requiring-continuous-monitoring-and-over/image-1-1777892665107.jpg)