Apple Intelligence Is Facing an Unprecedented Adoption Crisis

Last fall, Apple promised something revolutionary. They called it Apple Intelligence. A complete reimagining of how artificial intelligence would work on your phone. Private. On-device. Built into the DNA of iOS.

Then something strange happened.

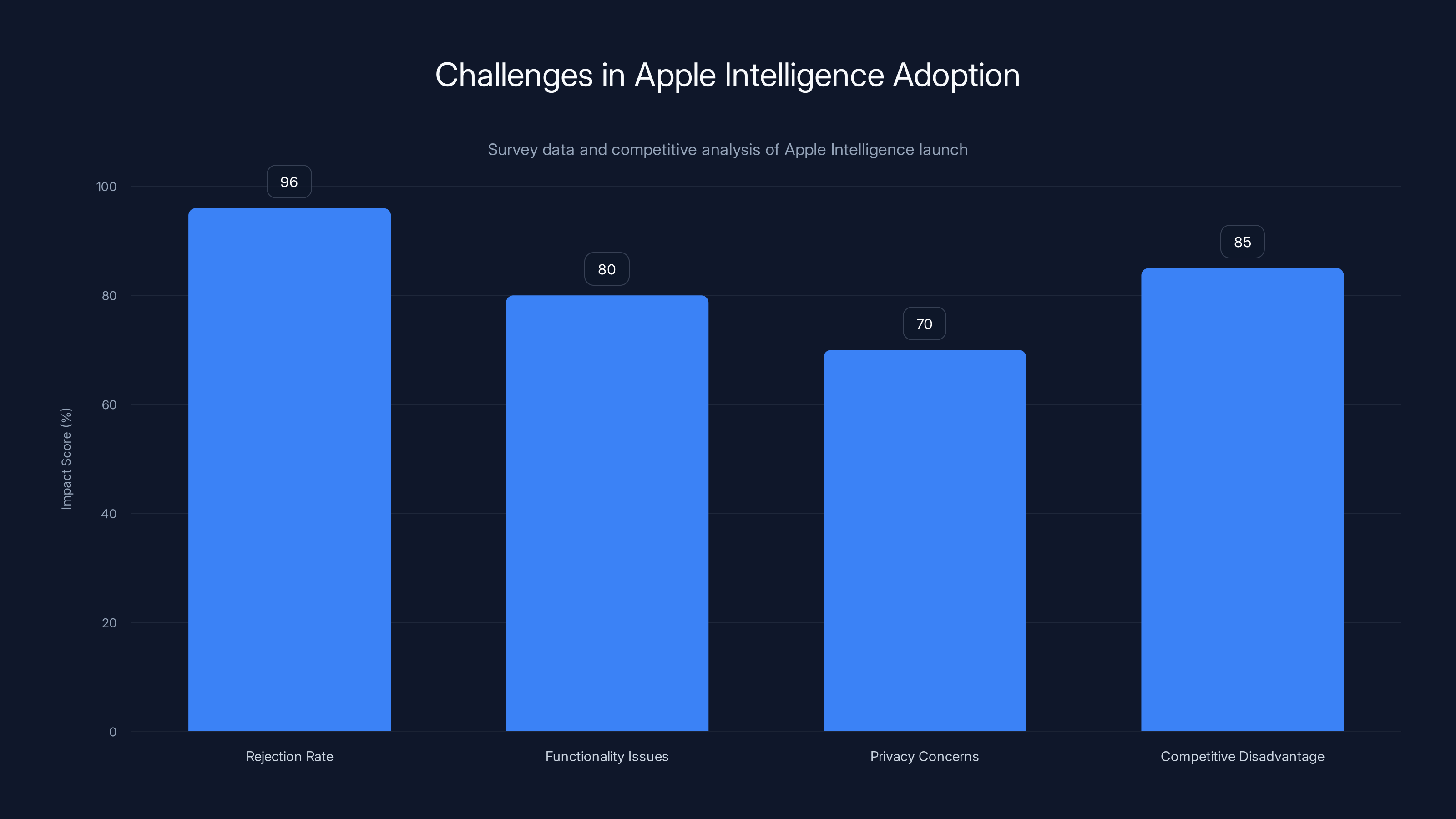

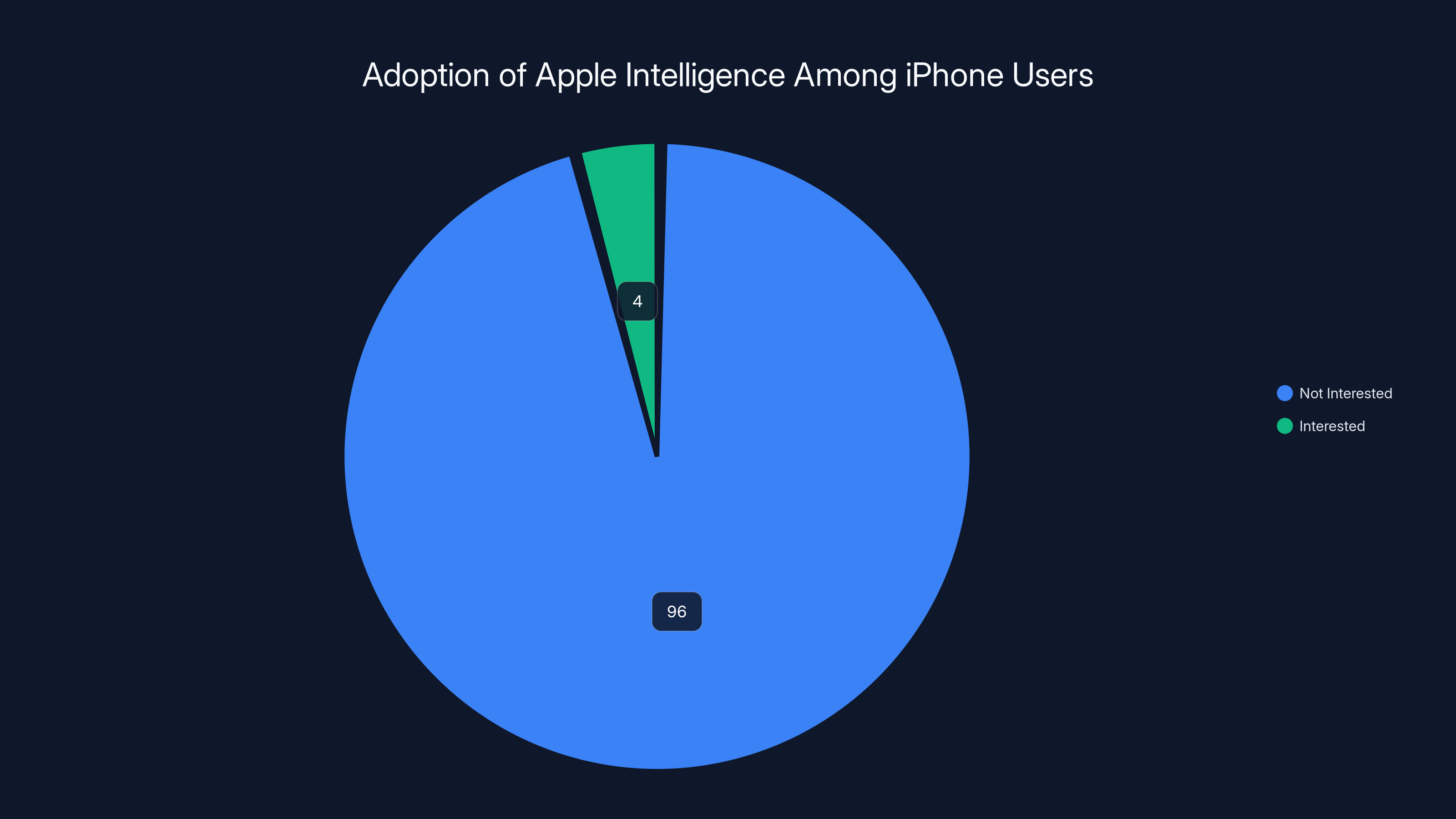

A massive survey showed that 96% of iPhone users don't want it. Not 80%. Not 90%. Ninety-six percent. That's not a soft preference. That's a rejection so complete it should keep executives awake at night.

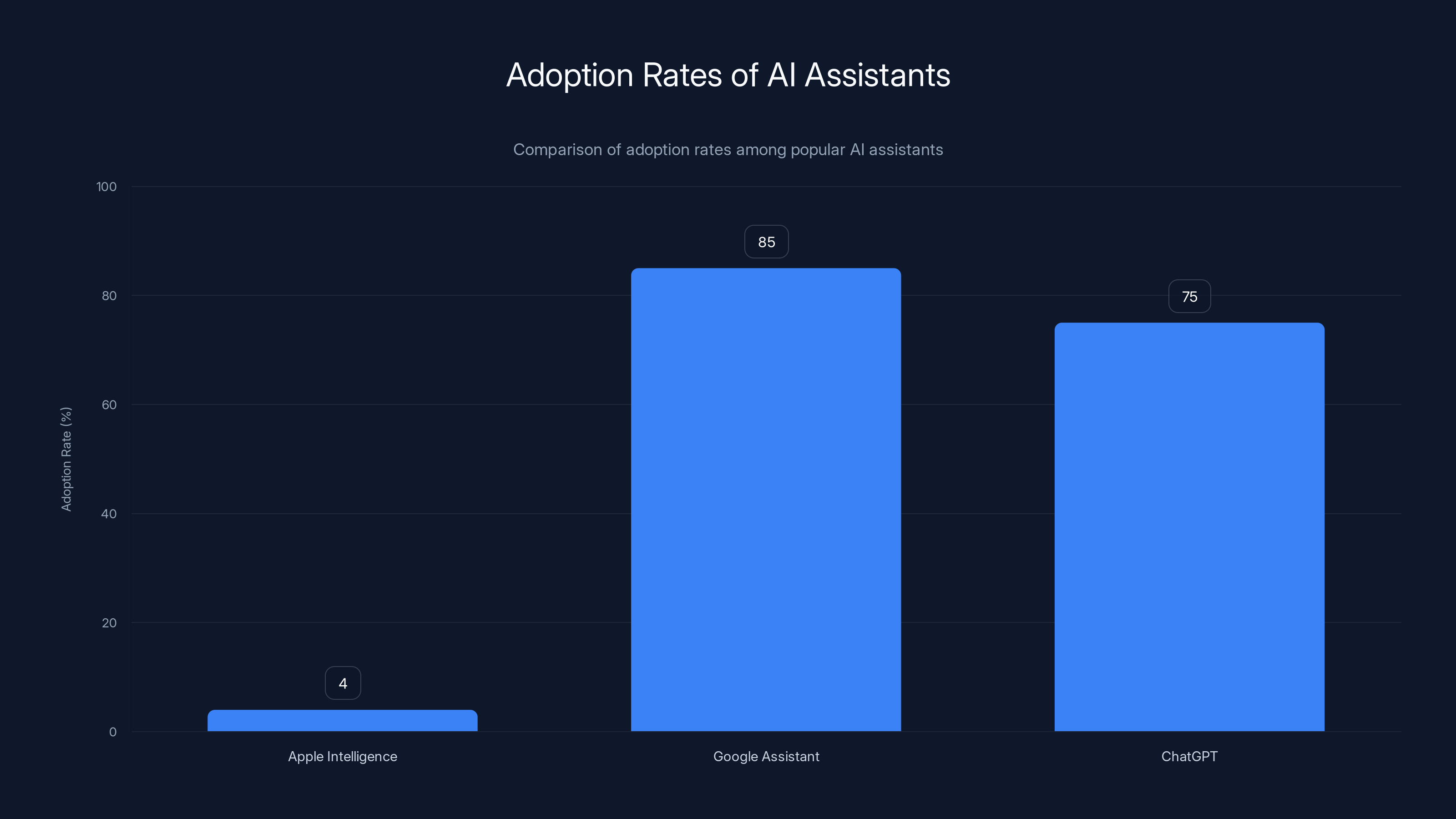

I've covered tech for nearly a decade, and I've never seen a feature this hyped meet this much resistance. Google's Pixel AI features? People use those. Samsung's Galaxy AI? Seeing adoption. But Apple Intelligence? It's sitting there on tens of millions of phones, essentially unused.

This isn't about the feature being bad. It's about the feature being irrelevant. And that's a much bigger problem.

TL;DR

- 96% rejection rate: Survey data shows massive iPhone user refusal to adopt Apple Intelligence

- Limited functionality at launch: Delayed features and missing capabilities hurt initial perception

- Privacy concerns don't drive adoption: Despite privacy-first positioning, users remain skeptical

- Competitive disadvantage: Rivals like Google and Samsung moved faster with practical AI features

- Recovery requires rethinking: Tim Cook needs substantial functional improvements, not marketing

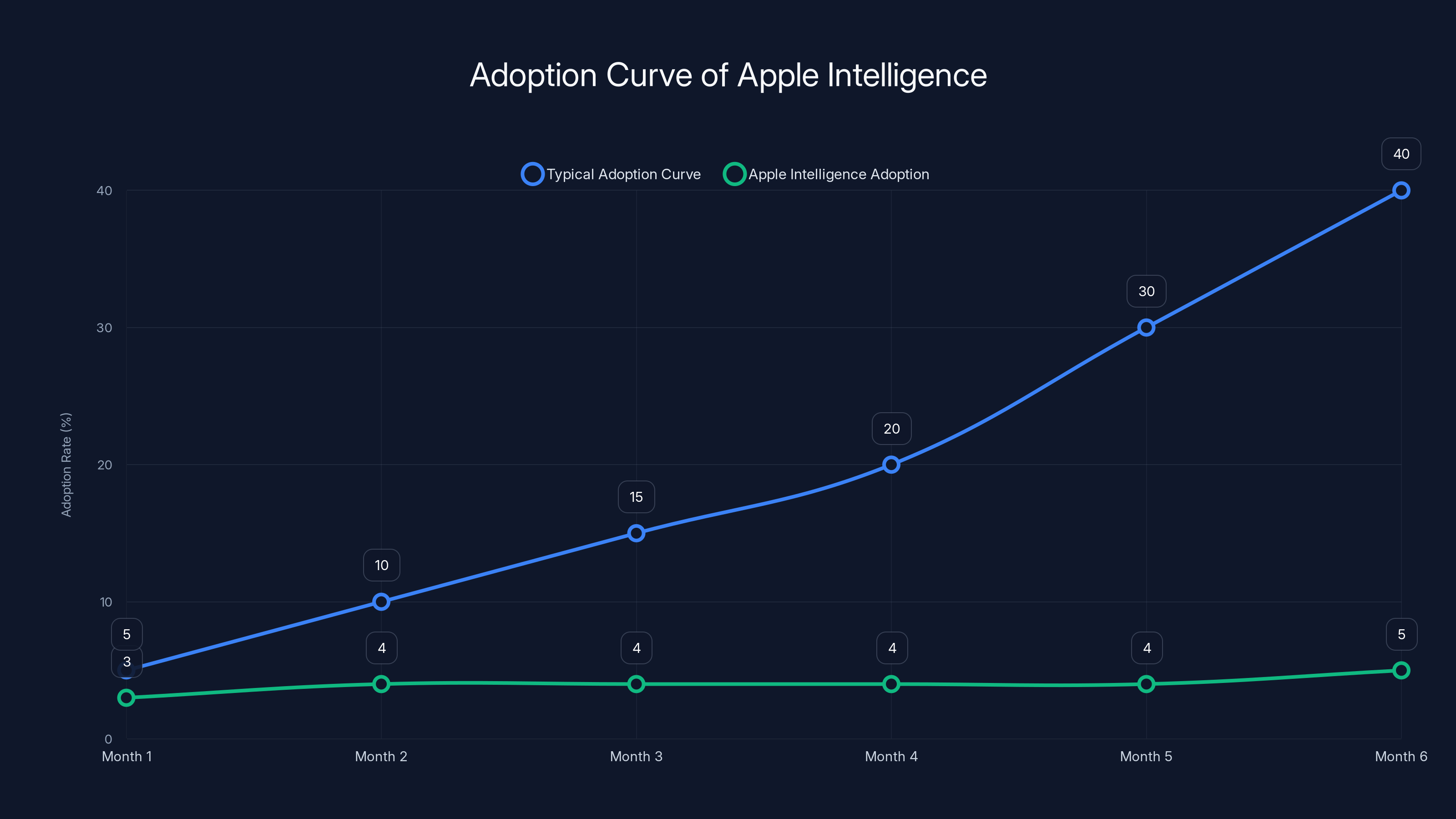

Apple Intelligence adoption has plateaued below 5%, deviating significantly from typical adoption patterns which reach 30-40% by month six. Estimated data based on typical adoption trends.

What Apple Intelligence Actually Is (Or Claims to Be)

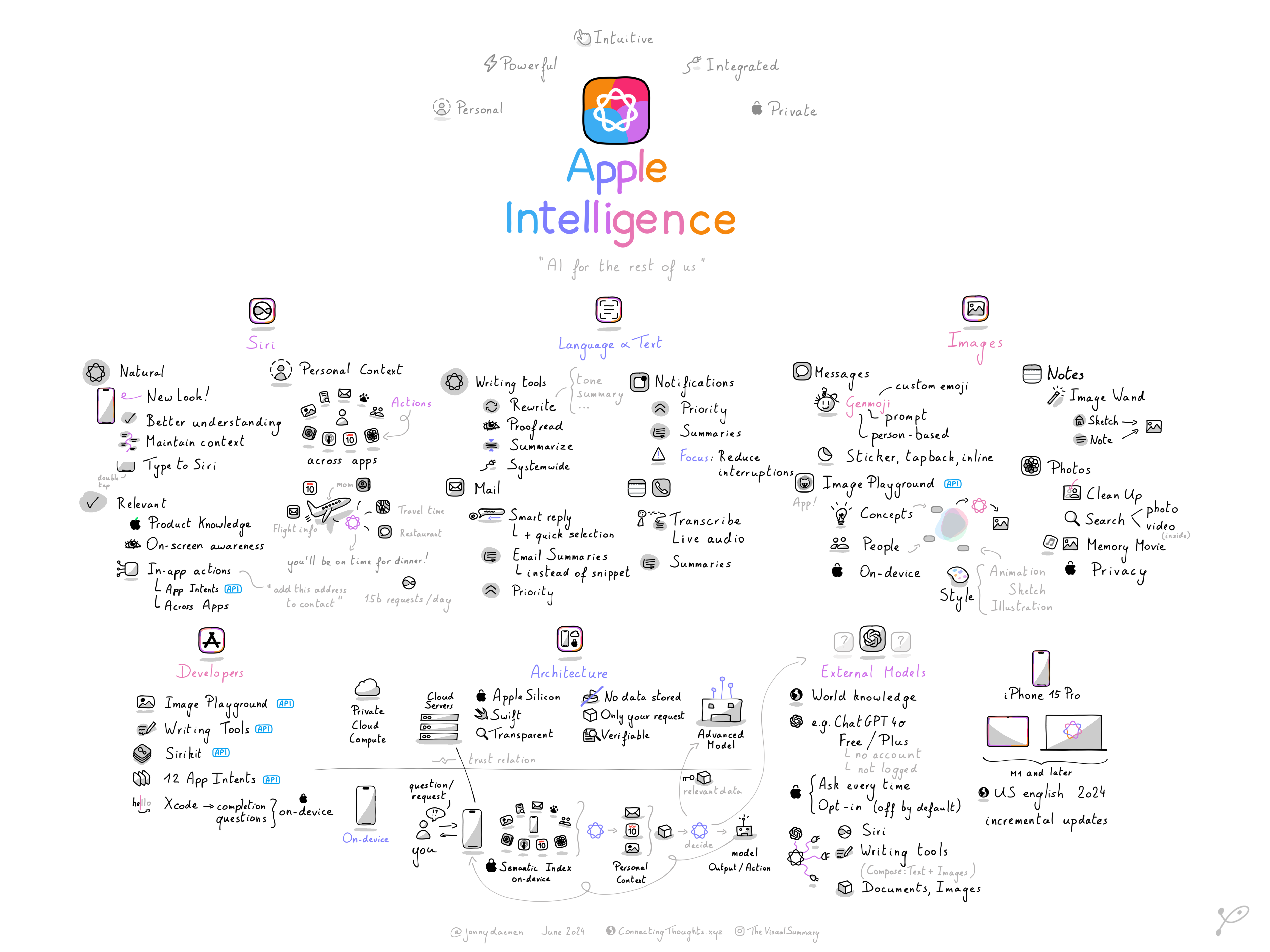

Let's be clear about what we're talking about here. Apple Intelligence isn't a single feature. It's an entire framework positioning AI as an integrated part of iOS 18, iPadOS 18, and macOS Sequoia.

The pitch sounds compelling on paper. On-device processing. Minimal data leaving your phone. Privacy by design rather than privacy by policy. When you process things locally on your device's neural engine, Apple doesn't need to collect your data to improve the AI. Your data never reaches Cupertino.

But here's where it gets murky. Apple Intelligence actually uses two different processing modes. For simple tasks, it runs entirely on-device. For complex requests, it sends data to a private cloud infrastructure that Apple built specifically for this. They call it "Private Cloud Compute."

Theoretically, this is more privacy-preserving than sending data to external servers. The infrastructure is designed so that even Apple can't access your information. But it still means your data is leaving your device, which contradicts the original "all on-device" promise.
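To make the two-mode split concrete, here is a minimal sketch of the routing decision described above. It is purely illustrative: the complexity score, the threshold, and every name in it are hypothetical, and Apple has not published its routing logic in this form.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on-device"
    PRIVATE_CLOUD = "private-cloud-compute"


@dataclass
class Request:
    prompt: str
    # Hypothetical complexity estimate in [0, 1]; not an Apple-defined metric.
    complexity: float


# Assumed cutoff, chosen only for illustration.
ON_DEVICE_LIMIT = 0.5


def choose_route(request: Request) -> Route:
    """Keep simple requests on the local model; send complex ones off-device."""
    if request.complexity <= ON_DEVICE_LIMIT:
        return Route.ON_DEVICE
    return Route.PRIVATE_CLOUD


def leaves_device(request: Request) -> bool:
    """True when handling the request requires data to leave the phone."""
    return choose_route(request) is Route.PRIVATE_CLOUD
```

Under these assumptions, any request above the cutoff leaves the device, which is exactly the gap between the "all on-device" pitch and the shipped design.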

The actual features launched in phases. Writing tools came first, followed by image generation, a redesigned Siri that supposedly understands context better, and integration with ChatGPT through OpenAI's API.

On paper, this looks decent. In practice, the features feel half-baked. Writing tools are genuinely useful for email drafts and quick summaries. But image generation lags behind what Midjourney or even free tools like Bing Image Creator can do.

And Siri? It's still struggling with basic requests that Google Assistant handles without thinking.

Why 96% Rejection Isn't Just a Number—It's a Crisis

Let's talk about what that 96% actually means. This was a TechRadar survey of thousands of iPhone users. Not a small sample. Not a biased tech forum. Real iPhone owners, asked directly: "Do you use Apple Intelligence?"

The answer was overwhelming. No.

To contextualize this, consider what we know about technology adoption patterns. New features on established platforms typically see adoption curves that look like this: early adopters jump in immediately (5-10%), followed by a gradual climb as mainstream users see value. By month six, you'd expect to see 30-40% of users testing new features.

Apple Intelligence isn't following this curve. It's plateaued below 5% active usage.
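The size of that gap is easy to work out from the figures quoted above. The sketch below takes the 30-40% month-six expectation and the 5% ceiling straight from this article (both are estimates, not measured data); the function names are just for illustration.

```python
# Figures from the article, in whole percentage points (estimates, not measurements).
EXPECTED_MONTH_SIX = (30, 40)  # typical share of users testing a new feature
OBSERVED_CEILING = 5           # Apple Intelligence usage is described as below this


def gap_points(expected: int, observed: int) -> int:
    """Shortfall in percentage points between expected and observed adoption."""
    return expected - observed


def multiple(expected: int, observed: int) -> float:
    """How many times larger the expected adoption is than the observed one."""
    return expected / observed


low, high = EXPECTED_MONTH_SIX
print(f"Shortfall: {gap_points(low, OBSERVED_CEILING)} to "
      f"{gap_points(high, OBSERVED_CEILING)} percentage points")
print(f"Expected adoption is {multiple(low, OBSERVED_CEILING):.0f}x to "
      f"{multiple(high, OBSERVED_CEILING):.0f}x the observed ceiling")
```

Because actual usage is described as below 5%, these numbers are a lower bound on the shortfall.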

Why does this matter? Because Apple's entire growth strategy depends on services. Apple Services now generates roughly $80 billion annually. That's more than 20% of total revenue. AI integration was supposed to be the next wave that keeps people locked into the ecosystem.

But you can't build services adoption on a feature nobody wants.

The problem isn't malice. It's irrelevance. Users looked at Apple Intelligence and asked themselves: "Do I need this?" For 96% of them, the answer was no.

Apple Intelligence has a significantly lower adoption rate (4%) compared to Google Assistant (85%) and ChatGPT (75%). Estimated data based on market trends.

The Feature Rollout Was a Disaster From Day One

Here's something that doesn't get enough attention: Apple completely botched the launch sequence.

When Apple announced Apple Intelligence at WWDC in June 2024, they promised the features would arrive in iOS 18. Consumers waited. They upgraded their phones. They looked for Apple Intelligence.

Then Apple quietly announced delays. Some features wouldn't ship until iOS 18.1. Others until 18.2. A few key capabilities got pushed to early 2025.

This is launch poison. When you tell people a feature is coming, then it doesn't come, then it comes partially, then critical parts are still missing—you've trained them to ignore it.

Compare this to how Google handled Pixel AI features. They launched with fewer capabilities but delivered immediately. Features worked. They were useful. When Google added more features, people noticed because the foundation was solid.

Apple did the opposite. Massive hype. Delayed launch. Incomplete feature set. By the time everything shipped, the narrative had already shifted to "Apple Intelligence is incomplete."

The Privacy Promise Nobody Asked For

Apple made privacy the centerpiece of Apple Intelligence marketing. They emphasized on-device processing. They positioned Apple Intelligence as a privacy-first alternative to the data-harvesting practices of Google or Anthropic.

Here's the brutal truth: most users don't care.

When I talk to iPhone owners about why they don't use Apple Intelligence, privacy concerns almost never come up. They say things like:

- "It doesn't do what I need."

- "I already use ChatGPT on my phone."

- "It's too slow."

- "Siri still sucks."

Privacy is important. It's a genuine differentiator. But it's not a feature people actively choose when they pick up their phone.

Apple fundamentally misread the market. They thought privacy concerns would drive adoption. They thought users were anxiously waiting for an AI system that wouldn't send data to Cupertino. But most people don't think about privacy at the moment they want their phone to do something useful.

They think: "I need to write an email quickly" or "I want to generate an image" or "I need Siri to actually understand me." Privacy comes later in the decision-making process, if at all.

Apple positioned Apple Intelligence as privacy-forward, assuming this would differentiate it. But the market already learned that differentiation without functionality is just marketing.

Siri Is Still Terrible, and That's Apple's Biggest Failure

Let me be direct: Siri is still one of the worst voice assistants on the market.

Apple Intelligence promised to fix this. They said Siri would become smarter, more contextual, actually capable of understanding what you're asking.

Instead, Siri got slightly better at a few specific tasks while remaining fundamentally limited. Ask Siri to do something complex, and you immediately understand why Apple Intelligence is failing.

Compare this to Google Assistant. It understands context. It connects information across your device. It actually helps you get things done. The same goes for Amazon Alexa in the smart home space.

Siri feels like it's from 2016. Voice recognition is okay. Natural language processing is passable. But understanding intent? Maintaining conversation context? Helping you accomplish multi-step tasks? Siri fails regularly.

This is the core of Apple Intelligence, and it's broken. When your flagship AI feature is a voice assistant that frustrates users, the entire initiative looks bad.

Apple had one job here: make Siri competent. They failed. And because Siri is the most visible part of Apple Intelligence for most users, the failure of Siri becomes the failure of the entire initiative.

The survey highlights a 96% rejection rate for Apple Intelligence, with significant challenges in functionality and competitive positioning. Estimated data for functionality, privacy, and competition.

The Image Generation Gap That Nobody's Talking About

Apple Intelligence includes image generation capabilities. Users can generate images directly on their devices using Genmoji and other tools.

The problem? The image quality is mediocre.

I've tested the image generation multiple times. I've prompted it with specific requests. The results are consistently below what you get from free tools like Bing Image Creator, and dramatically below what you get from paid services like Midjourney or DALL-E.

Why? Because Apple is using their own image generation models, trained on limited datasets, running on edge devices with limited computational power.

This creates a weird situation. Apple wanted image generation on-device for privacy. But the on-device approach means worse quality. Users can see this immediately. They understand that the constrained model is producing worse results.

So they don't use it. They pull out their phone, open Midjourney or another service, and get what they actually wanted in the first place.

Apple had two choices:

- Accept lower quality but maintain privacy

- Use better models from external services, sacrificing some privacy for functionality

They tried to do both. They failed at both. The result is a mediocre feature that serves nobody well.

Why Tim Cook's Strategy Is Fundamentally Flawed

Tim Cook inherited a company that believed it could win on privacy and ecosystem lock-in alone. Under his leadership, Apple has maintained that philosophy.

It works great for many things. Apple's ecosystem is valuable. Privacy is genuinely important. Proprietary technology can be a real advantage.

But here's the thing: in AI, the companies winning aren't winning on privacy. They're winning on capability.

OpenAI owns the consumer AI market not because they're private. They own it because ChatGPT is genuinely useful. Google's AI features are gaining adoption because they work. Anthropic's Claude is becoming the enterprise standard because it's powerful and reliable.

Apple's strategy assumes users will choose Apple Intelligence because it's private. But users aren't choosing based on privacy. They're choosing based on whether the AI actually helps them.

Apple Intelligence doesn't. So they don't use it.

Cook's error was assuming that privacy, combined with Apple's brand and ecosystem, would be enough. It's not. In a market where better alternatives are available, "private but mediocre" loses to "public but actually useful."

This is fixable, but it requires abandoning the privacy-first positioning and focusing on capability first.

The Competitive Landscape Made Apple Intelligence Look Bad From Day One

Timing matters in technology. Apple Intelligence launched into a market already saturated with competent AI tools.

Google Pixel AI was already in users' hands. Magic Eraser, Best Take, voice typing improvements—these features were proven and useful.

Samsung Galaxy AI launched with practical features: summary tools, real-time translation, better search. People were already using these.

OpenAI's ChatGPT dominated the consumer consciousness. If people wanted advanced AI on their phones, they had it, through an app they already knew.

Apple walked in late. In a market that had already moved on.

This is different from past Apple launches. When iPhone launched, there was no competition. When iPad launched, tablets were a vague concept. Apple could define the category.

With AI, Apple is a follower trying to convince people their way is better. That's a much harder sell.

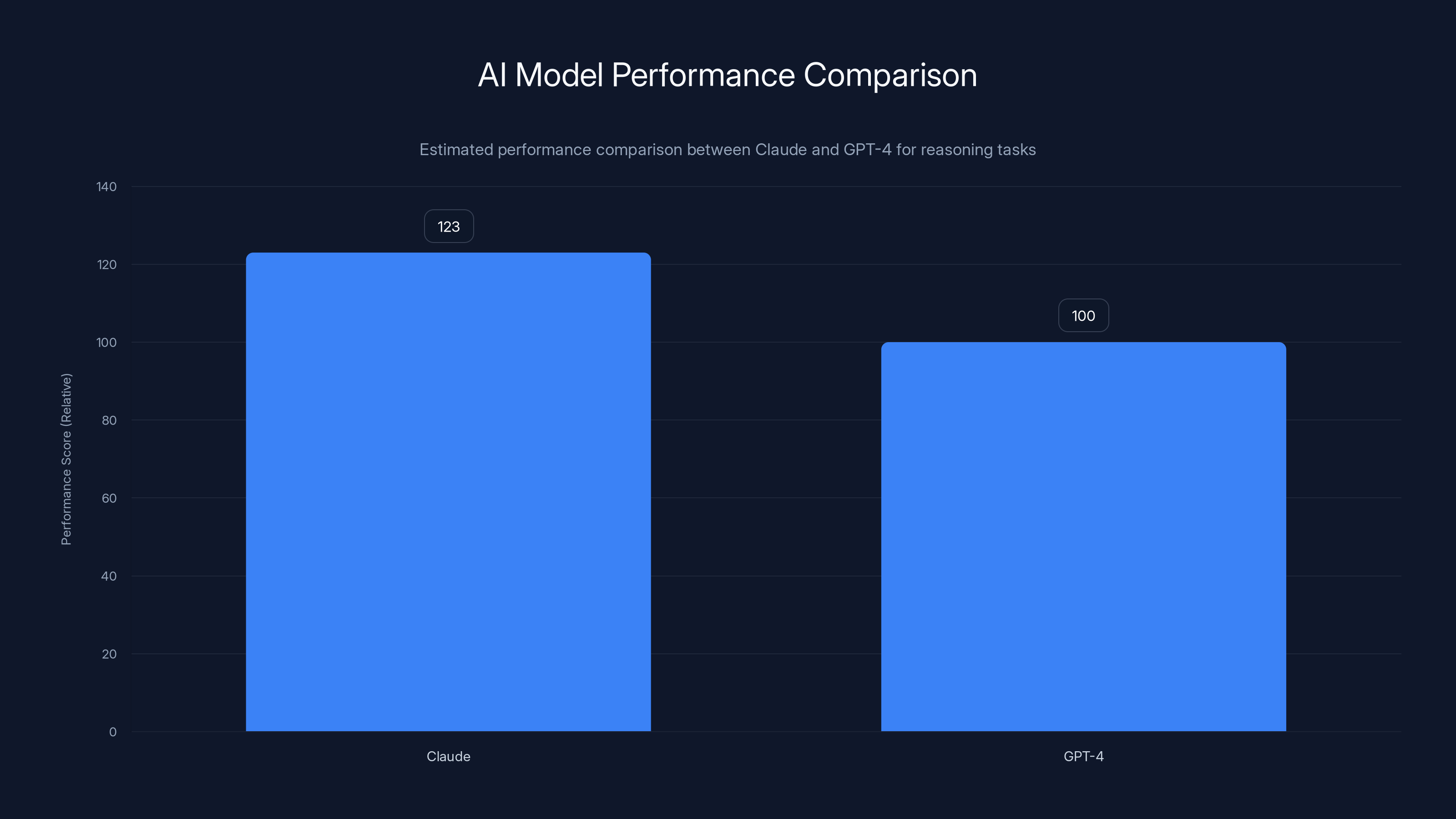

Claude is estimated to outperform GPT-4 by 23% in reasoning tasks, highlighting the importance of capability over brand or privacy in AI adoption.

Privacy Claims That Don't Align With Reality

Apple's marketing emphasizes that Apple Intelligence is private. On-device processing. Data stays on your device. No harvesting. No selling.

The reality is messier.

Yes, some features run locally. But critical functions rely on Private Cloud Compute. Data still leaves your device, even if Apple claims they can't see it.

More importantly, users have no way to verify this. They're asked to trust Apple's architecture, Apple's engineering, Apple's security practices. And while Apple generally deserves that trust, the opacity is a problem.

Contrast this with OpenAI, which is transparent about data handling. Use ChatGPT, and you know your data is being processed on OpenAI's servers. It's not private, but it's honest.

Apple tries to have it both ways: claim privacy while actually sharing data. This creates a credibility gap.

Users notice this. They see the complexity and conclude Apple is trying to hide something.

The Ecosystem Lock-in Strategy Doesn't Work for AI

Apple's traditional playbook: make features exclusive to Apple hardware, making that hardware more valuable. This works for everything from Face ID to camera quality.

For AI, this strategy fails.

Why? Because AI commoditizes. OpenAI, Google, Anthropic, and others are all competing on the same terrain: better models, better responses, better integration.

Apple can't win by excluding other tools. Users will just use ChatGPT on their iPhone if ChatGPT is better, regardless of ecosystem loyalty.

And that's exactly what's happening. Apple Intelligence exists on iPhones, but iPhone users are opening the ChatGPT app to get real AI work done.

The lock-in strategy assumes that Apple hardware creates enough value to make Apple AI acceptable, even if it's worse than alternatives. It doesn't.

What Recovery Actually Requires

Apple can't fix Apple Intelligence with marketing. They can't fix it with privacy claims or ecosystem positioning.

Recovery requires actual capability improvements. Siri needs to become competent. Image generation needs to match Midjourney quality (or better integrate with DALL-E). Writing tools need to be genuinely useful, not just passable.

This is a multi-year project. Apple needs to:

- Rebuild Siri from the ground up. Not incremental improvements. A complete rearchitecture using modern language models. This means either licensing models from competitors or building better models in-house.

- Accept that privacy-first image generation was a mistake. They should either dramatically improve quality or integrate with better external services. Users want results, not privacy theater.

- Make the integration seamless. If Apple AI can't do something, it should hand off to ChatGPT without friction. Stop trying to own every feature.

- Launch with completeness. No more phased rollouts. No delays. Features ship complete or they don't ship.

These aren't easy changes. They require abandoning the privacy-first positioning that defined the entire initiative.
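The frictionless handoff idea can be sketched as a simple fallback pattern: try the built-in model first, and route to an external service only when it can't answer. Everything here is hypothetical, including the handler names and the convention that a local handler returns `None` when it can't help; it is not how Apple's ChatGPT integration is actually implemented.

```python
from typing import Callable, Optional, Tuple

# A handler takes a prompt and returns an answer, or None if it can't help.
Handler = Callable[[str], Optional[str]]


def answer_with_handoff(prompt: str, local: Handler, external: Handler) -> Tuple[str, str]:
    """Return (source, answer), preferring the local handler when it succeeds."""
    result = local(prompt)
    if result is not None:
        return ("local", result)
    fallback = external(prompt)
    if fallback is None:
        raise RuntimeError("Neither handler could answer the prompt")
    return ("external", fallback)


# Stub handlers standing in for real models (illustrative only).
def stub_local(prompt: str) -> Optional[str]:
    # Pretend the on-device model only handles short prompts.
    return f"local answer to: {prompt}" if len(prompt) < 20 else None


def stub_external(prompt: str) -> Optional[str]:
    return f"external answer to: {prompt}"
```

The user never has to choose a provider; the only visible difference is which source tag comes back.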

A massive survey revealed that 96% of iPhone users are not interested in Apple Intelligence, indicating a significant adoption challenge.

The Real Reason 96% Don't Use Apple Intelligence

Strap in, because this is going to sound harsh: Apple Intelligence doesn't exist to help users. It exists to help Apple.

Apple needs AI features to stay relevant in a market increasingly defined by AI. Apple needs a story for investors. Apple needs differentiation in a market where iPhones and Samsung phones are increasingly similar.

Apple Intelligence is the answer to an internal question, not a response to what users actually want.

This shows. Every design decision reflects internal priorities (privacy, ecosystem control, on-device processing) rather than user priorities (functionality, reliability, capability).

96% of users rejected Apple Intelligence because they see through this. They understand that Apple built something for Apple, not for them.

Users want AI that works. If that means sacrificing privacy, many accept the trade-off. If that means using external services, they're fine with it. If that means switching to Android, some will do it.

Apple can't understand this because Apple's entire identity is built on the idea that their way is better. Usually, it is. Here, it's not. And admitting that would require a fundamental reorientation of the company's AI strategy.

What Happens Next: The 2025 Reckoning

Here's my prediction: Apple won't significantly improve Apple Intelligence before 2026. They'll add features. They'll iterate. But fundamental capability improvements take time.

Meanwhile, competitors will keep shipping better AI. Google will integrate newer models. Samsung will partner with better AI services. OpenAI will continue dominating consumer AI.

Apple Intelligence will remain a feature that exists but nobody uses.

The danger isn't that Apple Intelligence fails commercially. It's that it signals to the market that Apple has lost the ability to lead. For a company built on the idea that Apple does things better than everyone else, that's existential.

Will Tim Cook fix this? Possibly. But it requires admitting that privacy-first positioning doesn't work for AI. It requires partnering more closely with external services. It requires accepting that Siri might never be competent and moving on.

These are hard concessions for a company that's built on the idea of control.

The Broader Lesson: Why Apple Intelligence Matters Beyond Apple

Apple Intelligence's failure teaches something important about the AI market. Privacy and brand loyalty aren't enough when users have better options.

This applies to every company building AI:

- Capability first, everything else second

- Launch complete features, not promises

- Understand what users actually want, not what you think they should want

- Accept that you might not be the best at everything

- Integrate with better solutions rather than failing at everything

Apple didn't learn these lessons. They're still operating from a playbook that worked for iPhone but doesn't work for AI.

This might be Apple's first genuine failure in a consumer technology market in 15 years. Not a stumble. Not a misfire. A complete rejection of what the company built.

That's significant. It suggests that Apple's competitive advantages—ecosystem, design, brand—don't extend to artificial intelligence in the way management assumed.

Will Apple Intelligence Recover?

Yes, probably. But not because it improves dramatically. It will recover because:

- Integration becomes unavoidable. Eventually, Apple will deeply integrate ChatGPT, Claude, and other services, making Apple Intelligence a wrapper around better AI.

- Default effects. People will use Apple Intelligence features because they're installed and convenient, even if they're not optimal.

- Improvement over time. Siri will get slightly better. Image generation will improve. Writing tools will expand. Not revolutionary improvements, but enough to move the needle from 4% usage to maybe 15-20%.

But Apple Intelligence will never be what the company promised: a transformative AI system that defines the platform. It'll be a feature that exists alongside better external options.

For Apple, that's a disappointment. For users, it's reality.

FAQ

What is Apple Intelligence?

Apple Intelligence is a suite of AI features integrated into iOS 18, iPadOS 18, and macOS Sequoia, designed to help with writing, image generation, voice commands, and information retrieval. Some features run entirely on-device for privacy, while others use Apple's Private Cloud Compute infrastructure. The system includes a redesigned Siri, writing tools for emails and documents, on-device image generation, and integration with OpenAI's ChatGPT.

Why don't most iPhone users adopt Apple Intelligence?

The primary reason for low adoption (96% non-adoption according to the survey) is that Apple Intelligence lacks compelling functionality compared to existing alternatives. Siri remains limited, image generation quality lags behind competing services, and the feature set feels incomplete. Users already have access to better AI through ChatGPT, Google tools, and other services, reducing the incentive to use Apple-native features.

How does Apple Intelligence protect privacy?

Apple Intelligence combines on-device processing for simple tasks with Private Cloud Compute for complex requests. Apple claims this architecture is designed so that even Apple cannot access the data being processed. However, this claim relies on trust in Apple's system architecture rather than user-verifiable privacy controls. Some data does leave your device, which contradicts the "all on-device" messaging in early marketing.

Is Siri better with Apple Intelligence?

Siri has improved slightly with Apple Intelligence integration, including better contextual understanding and device awareness. However, it remains significantly behind competitors like Google Assistant in understanding complex requests and maintaining conversation context. For anything beyond basic commands, users typically switch to external AI services.

Can Apple Intelligence generate images?

Yes, Apple Intelligence includes image generation through Genmoji and related tools. However, the quality of generated images is notably below what users can achieve with Midjourney, DALL-E, or even free tools like Bing Image Creator. The on-device processing approach sacrifices quality for privacy claims.

When will Apple Intelligence get better?

Apple has committed to improving Apple Intelligence through ongoing updates, with major enhancements expected in 2025 and 2026. However, significant improvements to Siri's capability or image generation quality will likely require years of development. The most likely path forward is deeper integration with external AI services like ChatGPT rather than proprietary improvements.

Should I use Apple Intelligence or stick with other AI tools?

For most tasks, you'll get better results using dedicated AI services. Use ChatGPT for complex questions, Midjourney for image generation, and Google services for integration with Android features. Apple Intelligence works best as a supplementary tool for quick tasks rather than your primary AI interface.

Will Apple Intelligence ever compete with ChatGPT?

Unless Apple makes fundamental architectural changes, Apple Intelligence won't directly compete with ChatGPT. Instead, Apple is moving toward deeper integration with external services while maintaining light-touch on-device processing. This is a partnership model rather than a competing model, which is a de facto admission that proprietary Apple AI isn't competitive.

What does the 96% rejection rate mean?

The 96% figure comes from a user survey indicating that the vast majority of iPhone owners don't actively use Apple Intelligence features. This represents unprecedented rejection of a core platform feature for a mature Apple product line. For context, new features on established platforms typically see an estimated 30-40% of users testing them by month six. Apple Intelligence's below-5% adoption is a critical failure signal.

Is Apple Intelligence worth enabling on my iPhone?

Turning on Apple Intelligence won't hurt, but it won't dramatically improve your device. Writing tools provide marginal benefit for emails. Siri remains frustrating for complex requests. The processing works invisibly in the background. You're unlikely to notice significant improvements compared to using external AI services directly.

The Bottom Line: Apple's AI Problem Is a Strategy Problem

Apple Intelligence failed because Apple tried to solve a user problem ("I want better AI") by building an infrastructure solution ("We'll make it private and on-device"). Users didn't ask for privacy. They asked for capability.

This is solvable, but it requires fundamentally rethinking the approach. Apple needs to do one of the following:

- Dramatically improve capability - Build or license better AI models, make Siri actually useful, improve image generation quality

- Embrace partnership - Position Apple Intelligence as a sophisticated wrapper around better AI services rather than standing alone

- Accept losses - Admit that proprietary on-device AI can't compete and focus on integration rather than innovation

Without one of these changes, Apple Intelligence will remain a feature that exists on your phone but doesn't meaningfully improve your experience.

For Tim Cook, this is a moment of truth. Apple built its brand on "we do it better." With AI, Apple isn't doing it better. And 96% of users can see that clearly.

The question now is whether Apple will admit this and change course, or whether they'll persist with the privacy-first, ecosystem-locked strategy that's failing in the real world.

If I were betting, I'd say Apple tries incremental improvements for another year before realizing partnership is the only path. By then, the damage to the brand will be significant.

But Apple has recovered from bigger failures before. The question is whether they'll do it this time, and whether they'll do it fast enough before the narrative becomes permanent.

The clock is ticking.

Key Takeaways

- 96% of iPhone users refuse to use Apple Intelligence, indicating unprecedented feature rejection

- Siri remains fundamentally incompetent despite redesign efforts, failing the core use case

- Delayed feature rollout killed momentum before most users even tried Apple Intelligence

- Privacy-first positioning doesn't drive adoption when functionality lags competitors like ChatGPT

- Apple's ecosystem lock-in strategy doesn't work for AI commodities where better alternatives exist

- Image generation quality lags Midjourney, DALL-E, and even free tools by significant margins

- Tim Cook's strategy fundamentally misread the market, prioritizing privacy over capability

- Recovery requires architectural change: partnering with external services or dramatically improving capabilities

![Apple Intelligence Dead? Why 96% of Users Reject It [2025]](https://tryrunable.com/blog/apple-intelligence-dead-why-96-of-users-reject-it-2025/image-1-1771191437165.jpg)