Balancing AI Autonomy and Control: Anthropic's Claude Code Update [2025]

AI technology is advancing rapidly, and with it comes the challenge of balancing autonomy and control. Anthropic's latest update to Claude Code introduces innovative features that aim to address this challenge. In this article, we'll explore the details of this update, its implications for developers, and best practices for implementing AI in a controlled yet efficient manner.

TL; DR

- AI Autonomy: Anthropic's Claude Code update enhances AI autonomy while maintaining necessary safeguards.

- Auto Mode: New auto mode allows AI to decide safe actions independently, reducing the need for constant human oversight.

- Security Measures: Enhanced safeguards against risky behavior and prompt injection attacks.

- Industry Shift: Reflects a broader industry trend towards more autonomous AI tools.

- Future Implications: Sets the stage for future AI developments with a focus on safety and efficiency.

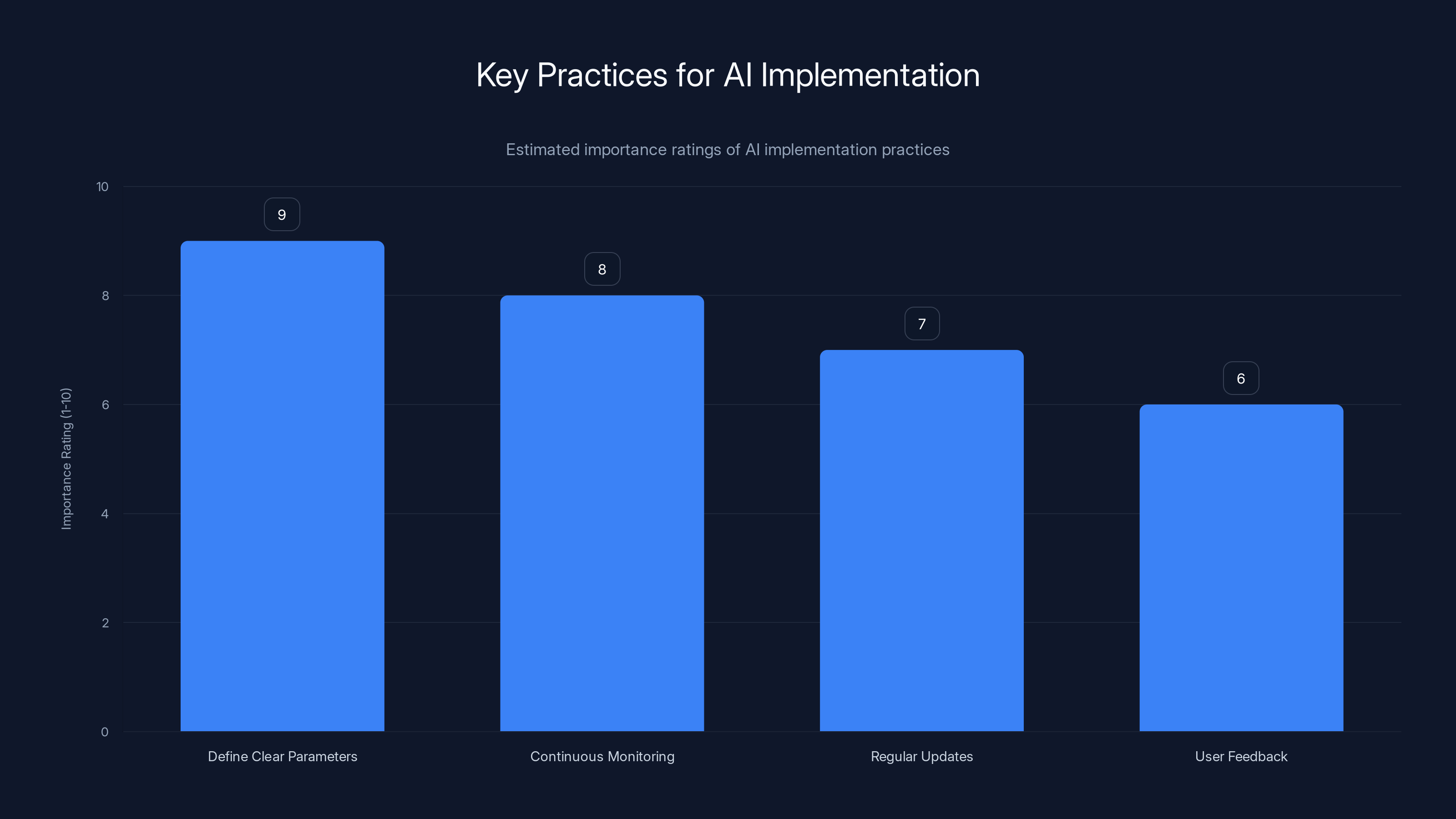

Defining clear parameters is rated as the most important practice for AI implementation, followed by continuous monitoring. (Estimated data)

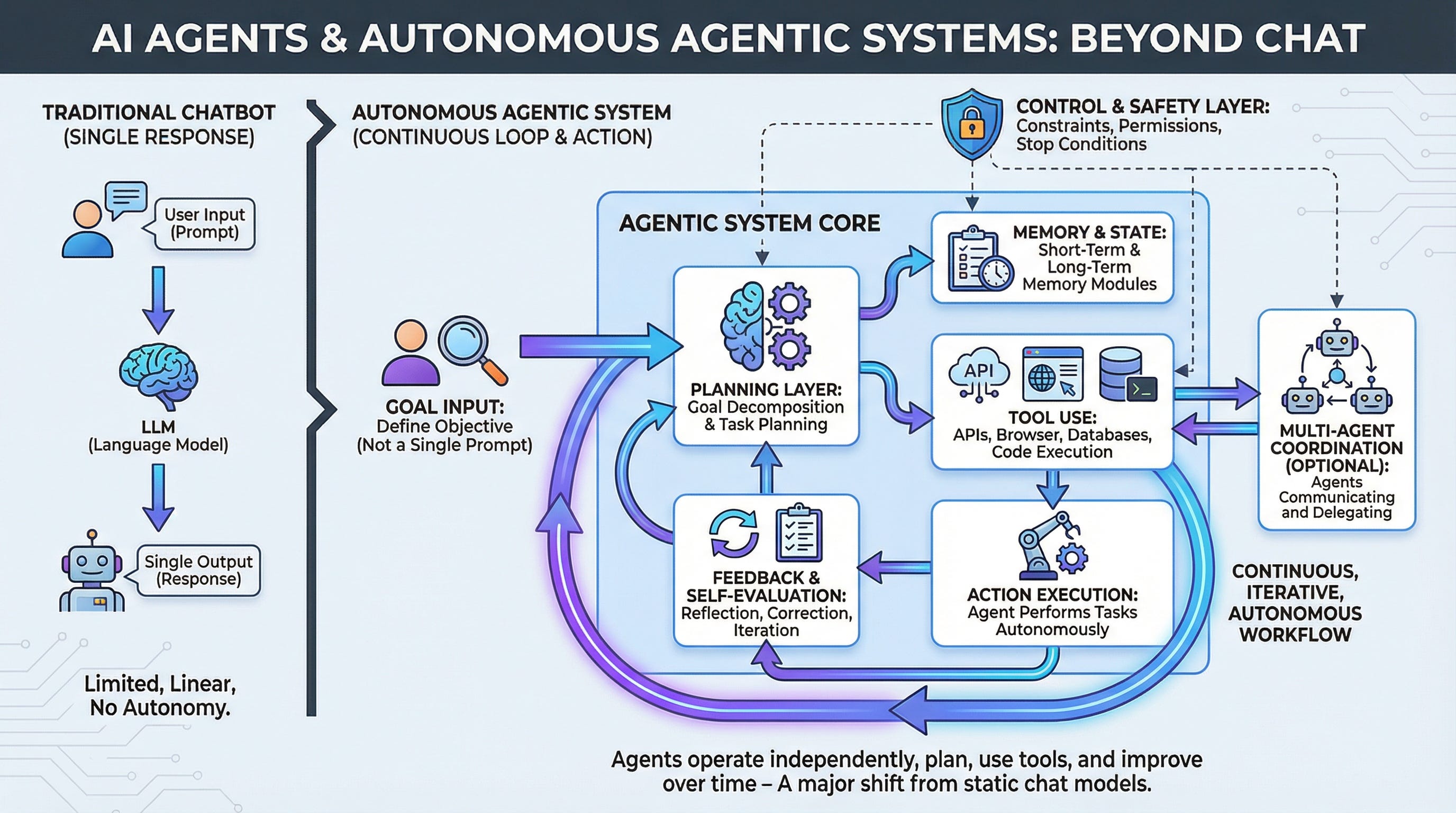

The Growing Need for AI Autonomy

As AI models become more sophisticated, the demand for autonomous decision-making capabilities grows. Developers and businesses seek AI tools that can operate independently without compromising safety or reliability.

The Challenge of Control

One of the main challenges in AI development is finding the right balance between autonomy and control. Too many guardrails can slow down AI processes, while too few can lead to unpredictable and potentially harmful behavior.

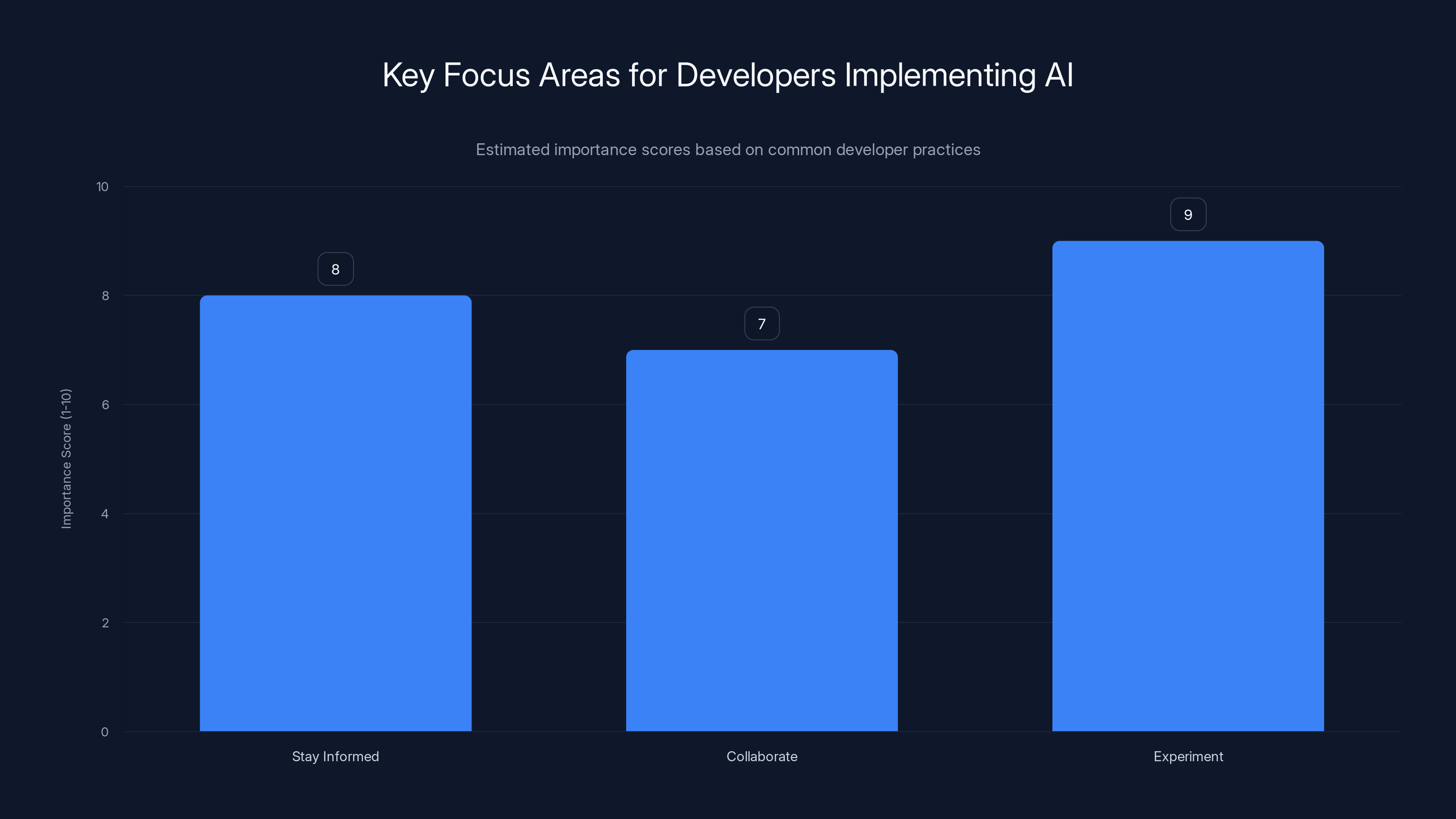

Experimenting with new features is rated highest in importance for developers implementing AI, followed by staying informed and collaboration. Estimated data.

Anthropic's Approach: Claude Code Update

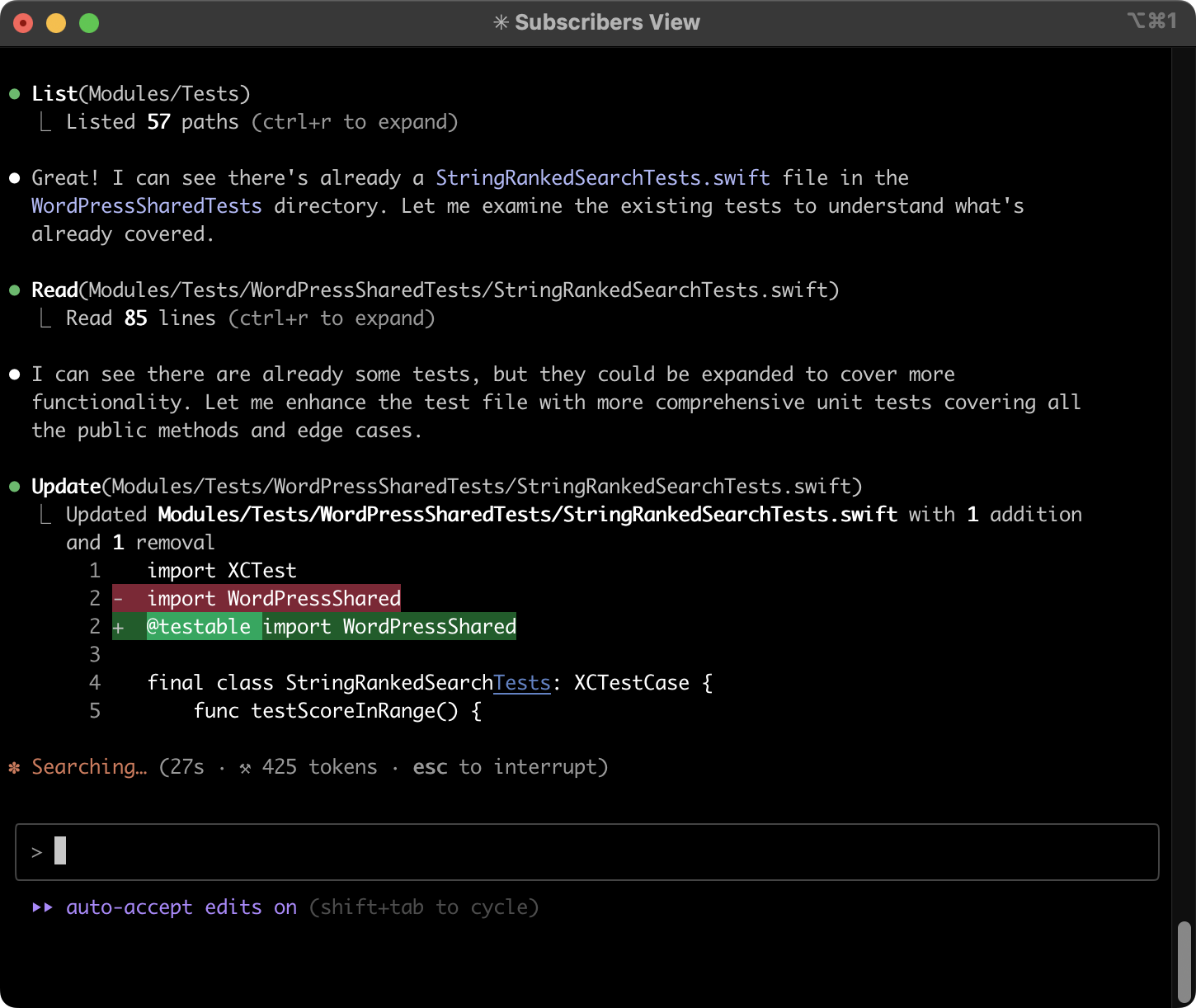

Anthropic's Claude Code update is a significant step towards achieving this balance. The update introduces a new auto mode, which allows AI to decide which actions are safe to take on its own, with some limits.

Auto Mode: A Closer Look

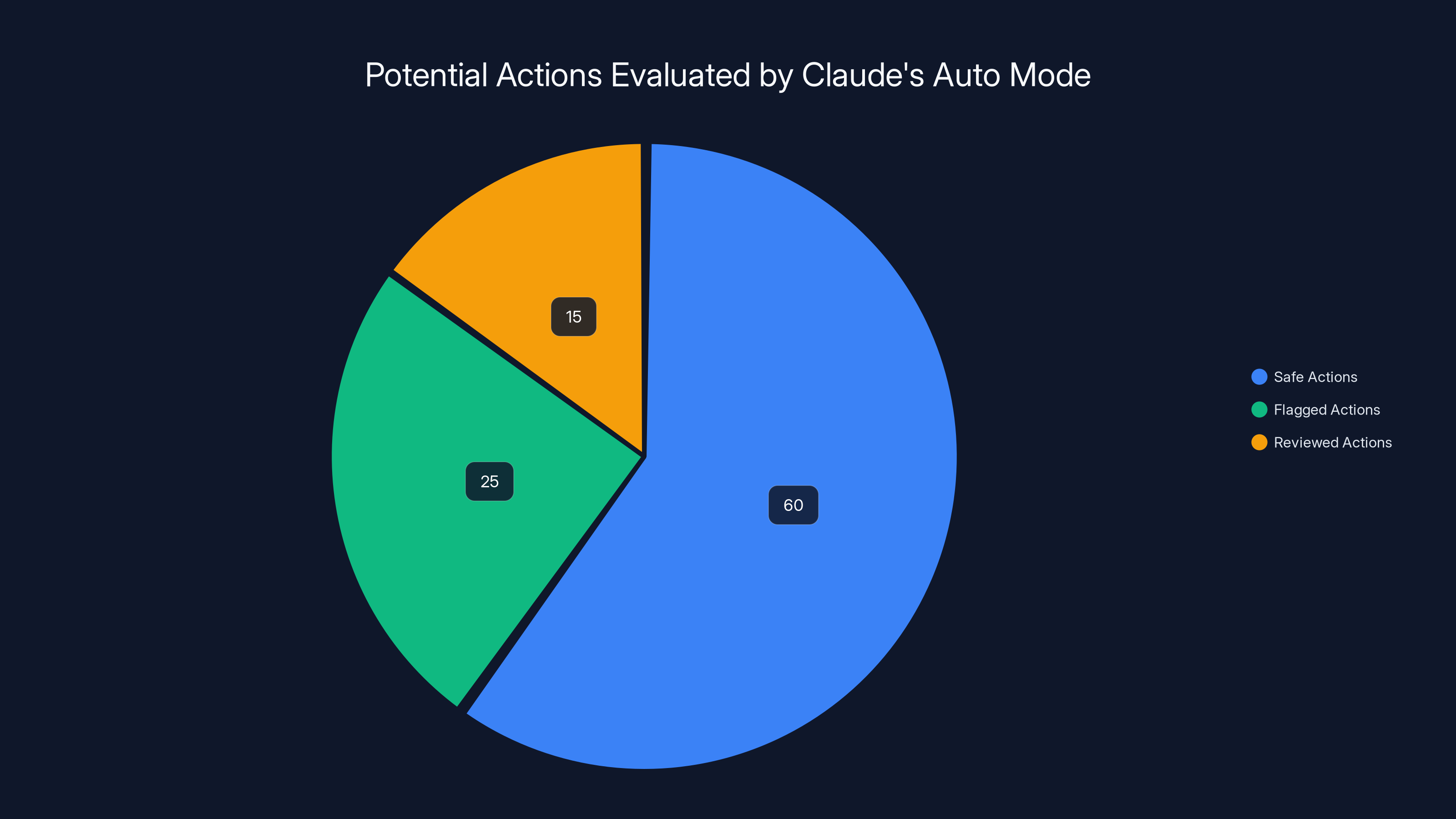

The new auto mode is designed to review each action before it runs, checking for risky behavior and prompt injection attacks. This approach aims to eliminate the need for constant human oversight while ensuring the AI operates safely.

How Auto Mode Works

Auto mode uses AI safeguards to analyze potential actions. It evaluates the context and intent of each action, comparing it against predefined safety parameters. If an action is deemed safe, it proceeds without further intervention.

Code Example of Auto Mode Implementation

python# Example of auto mode implementation in Claude Code

class Auto Mode AI:

def __init__(self, safeguards):

self.safeguards = safeguards

def evaluate_action(self, action):

return self.safeguards.is_safe(action)

def execute(self, action):

if self.evaluate_action(action):

# Execute the action

print("Action executed successfully.")

else:

print("Action flagged as risky.")

# Define safeguards

safeguards = Safeguard System()

# Initialize AI with auto mode

ai = Auto Mode AI(safeguards)

# Evaluate and execute an action

ai.execute("send_email")

Security Measures: Safeguarding AI Actions

Security is a critical aspect of AI development. Anthropic's update includes enhanced safeguards against risky behavior and prompt injection attacks.

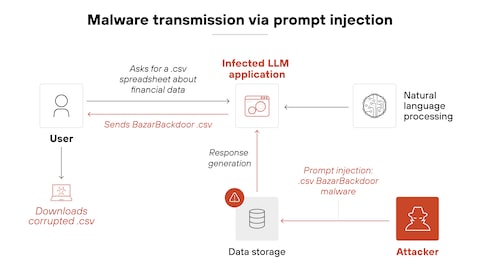

Understanding Prompt Injection Attacks

Prompt injection is a type of attack where malicious instructions are hidden in the content the AI is processing. These attacks can cause the AI to behave unpredictably or execute unintended actions.

Implementing Safeguards

Anthropic's safeguards include:

- Behavior Monitoring: Continuous analysis of AI actions for signs of risky behavior.

- Contextual Analysis: Evaluating the context of prompts to detect hidden malicious instructions.

- Real-Time Alerts: Notifying developers of potential security threats in real-time.

Estimated data shows that 60% of actions are deemed safe, 25% are flagged, and 15% require further review by Claude's Auto Mode.

Practical Implementation Guides

Implementing AI with the right balance of autonomy and control requires careful planning and execution.

Best Practices for AI Implementation

- Define Clear Parameters: Establish clear safety parameters for AI actions.

- Continuous Monitoring: Implement systems for continuous monitoring of AI behavior.

- Regular Updates: Keep AI models and security measures up to date.

- User Feedback: Incorporate user feedback to improve AI performance and safety.

Common Pitfalls and Solutions

Developers may encounter challenges when implementing AI with autonomy. Here are some common pitfalls and solutions:

Pitfall: Over-Restrictive Safeguards

Solution: Balance safeguards with operational flexibility to avoid stifling AI capabilities.

Pitfall: Insufficient Testing

Solution: Conduct extensive testing in diverse scenarios to ensure AI reliability.

Future Trends in AI Autonomy

The trend towards more autonomous AI tools is expected to continue, with a focus on enhancing safety and efficiency.

Predictions for AI Development

- Increased Autonomy: AI models will gain more decision-making capabilities without compromising safety.

- Advanced Safeguards: Development of more sophisticated safeguards to protect against evolving threats.

- Collaborative AI: Integration of AI tools that can collaborate with each other and with humans seamlessly.

Recommendations for Developers

Developers looking to implement AI with autonomy should:

- Stay Informed: Keep up with the latest developments in AI technology and security.

- Collaborate: Work with other developers and stakeholders to share insights and best practices.

- Experiment: Test new features and approaches to find the best solutions for specific use cases.

Conclusion

Anthropic's Claude Code update represents a significant advancement in AI autonomy. By balancing control and efficiency, it sets the stage for future developments in AI technology. As the industry continues to evolve, developers must remain vigilant and adaptive, ensuring that AI tools operate safely and effectively.

Key Takeaways

- Anthropic's Claude Code update enhances AI autonomy with new auto mode.

- Auto mode allows AI to make safe decisions independently, reducing need for human oversight.

- Enhanced safeguards protect against risky behavior and prompt injection attacks.

- Reflects industry trend towards more autonomous AI tools with safety measures.

- Future developments in AI will focus on increased autonomy and advanced safeguards.

Related Articles

- Claude Code and Cowork: Transforming AI Interaction with Your Computer [2025]

- Siri's Next Chapter: Apple's Standalone Revolution [2025]

- Mastering Anthropic's Claude Code and Cowork: Revolutionizing Human-Computer Interaction [2025]

- Building Your Own Robot Snowman: A Comprehensive Guide [2025]

- Navigating the Digital Landscape: EFF Leadership Change Amid AI and ICE Controversies [2025]

- How AI Will Revolutionize Data Readiness [2025]

FAQ

What is Balancing AI Autonomy and Control: Anthropic's Claude Code Update [2025]?

AI technology is advancing rapidly, and with it comes the challenge of balancing autonomy and control

What does tl; dr mean?

Anthropic's latest update to Claude Code introduces innovative features that aim to address this challenge

Why is Balancing AI Autonomy and Control: Anthropic's Claude Code Update [2025] important in 2025?

In this article, we'll explore the details of this update, its implications for developers, and best practices for implementing AI in a controlled yet efficient manner

How can I get started with Balancing AI Autonomy and Control: Anthropic's Claude Code Update [2025]?

- AI Autonomy: Anthropic's Claude Code update enhances AI autonomy while maintaining necessary safeguards

What are the key benefits of Balancing AI Autonomy and Control: Anthropic's Claude Code Update [2025]?

- Auto Mode: New auto mode allows AI to decide safe actions independently, reducing the need for constant human oversight

What challenges should I expect?

- Security Measures: Enhanced safeguards against risky behavior and prompt injection attacks

![Balancing AI Autonomy and Control: Anthropic's Claude Code Update [2025]](https://tryrunable.com/blog/balancing-ai-autonomy-and-control-anthropic-s-claude-code-up/image-1-1774388274666.jpg)