Introduction: When Networking Hardware Becomes an Edge Computing Platform

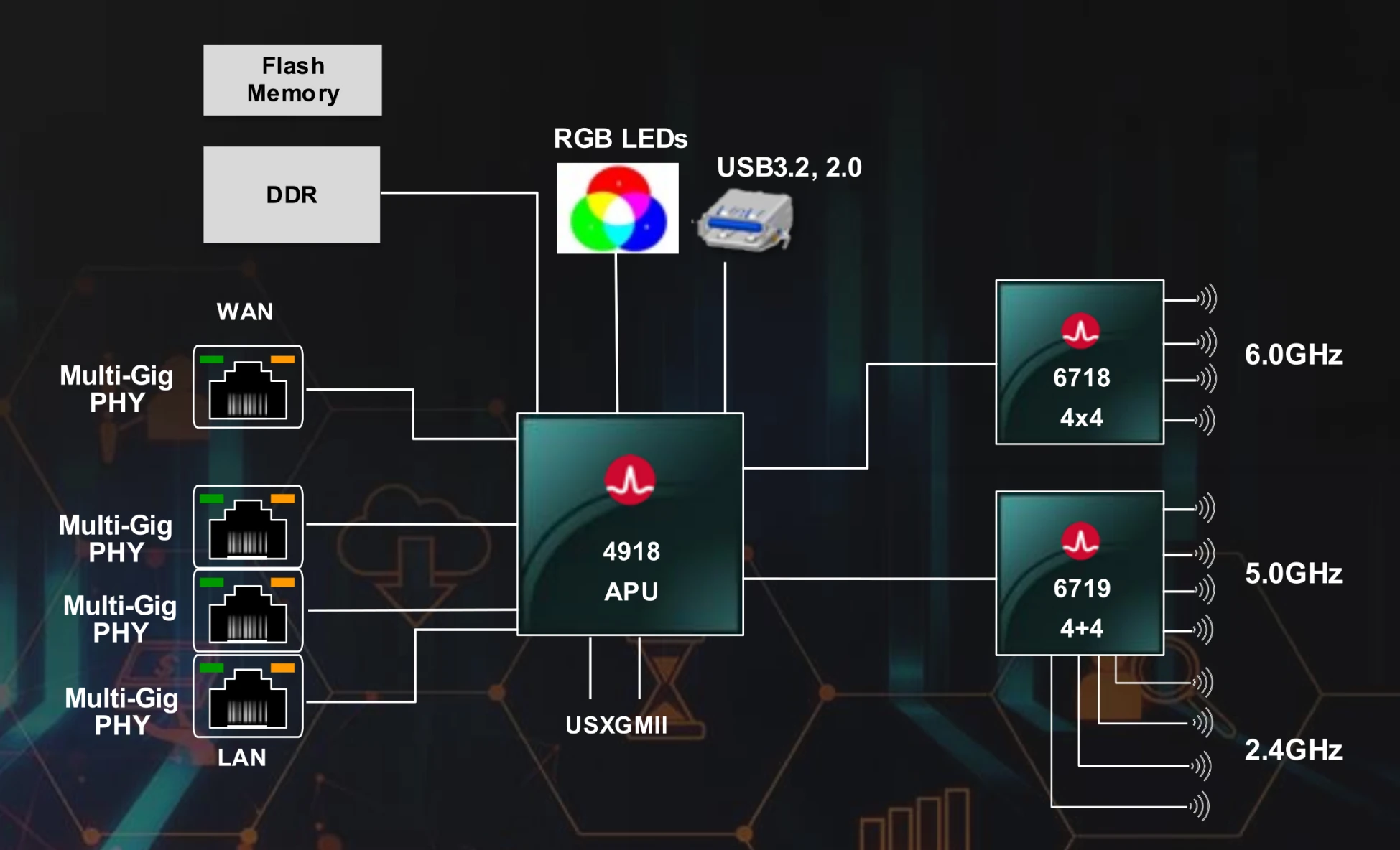

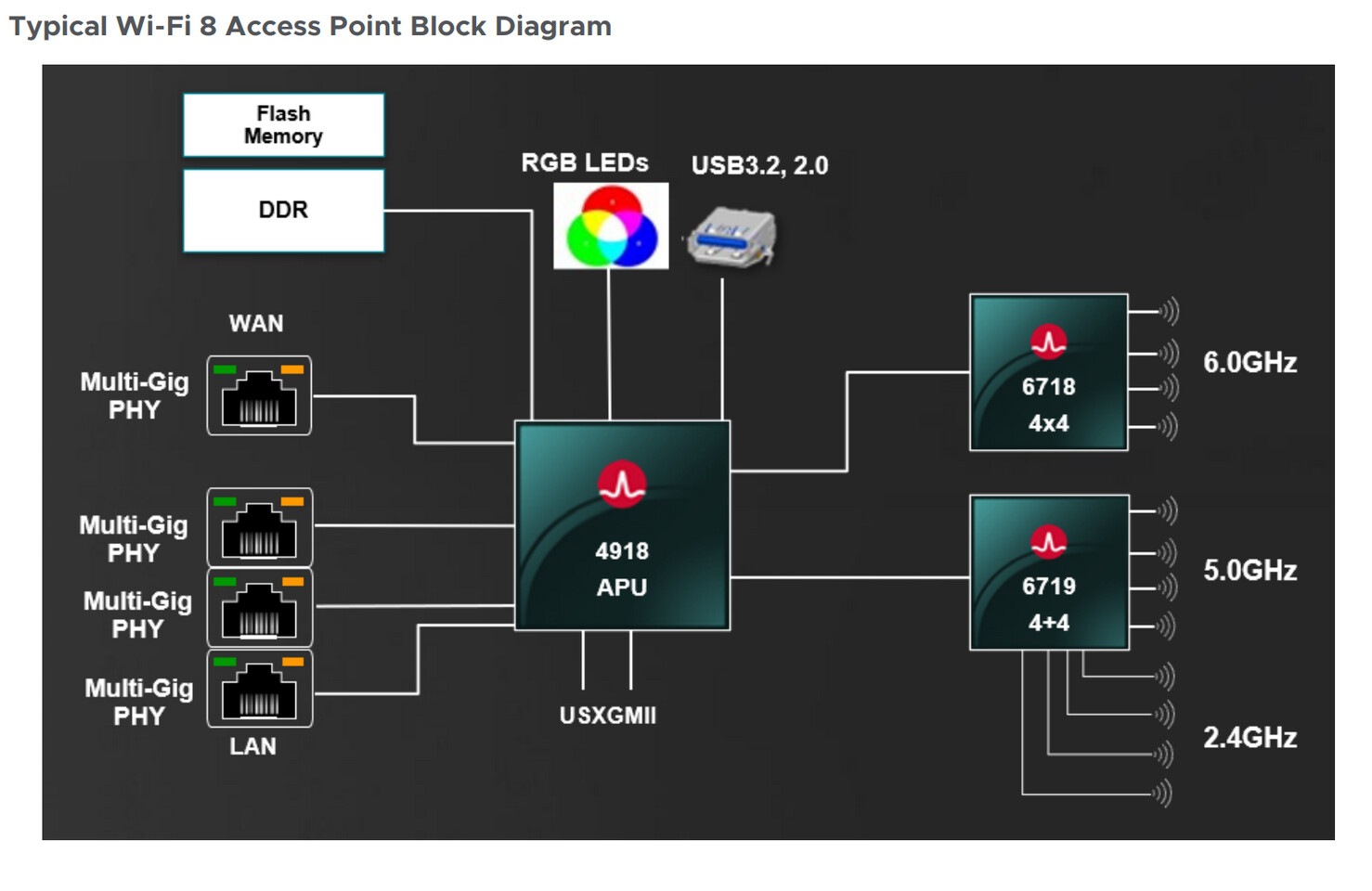

Broadcom just did something unusual. They took a term AMD popularized, flipped its meaning entirely, and launched it into the Wi-Fi 8 era. The BCM4918 is branded as an "Accelerated Processing Unit"—but here's the twist: it's got nothing to do with graphics, and everything to do with handling network traffic faster than a traditional CPU ever could.

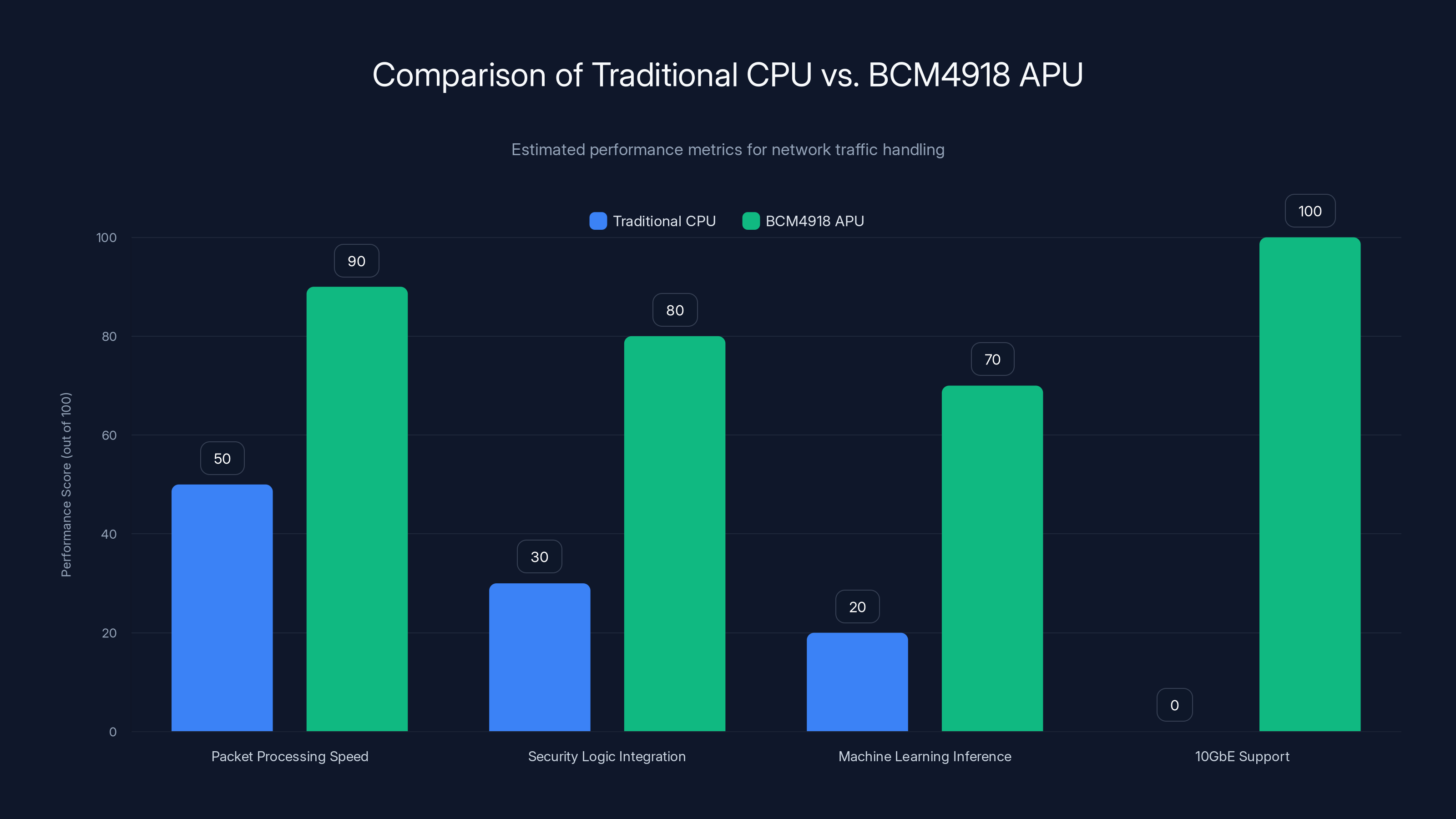

For decades, APU meant one thing: a processor with integrated graphics. AMD's Ryzen processors with Radeon cores? That's an APU. But Broadcom's interpretation is different. Their BCM4918 APU doesn't render pixels. Instead, it accelerates packet processing, integrates security logic, runs machine learning inference locally, and manages wired backhaul connections with native 10 GbE support. It's a repurposing of terminology that actually makes technical sense once you dig into what's inside.

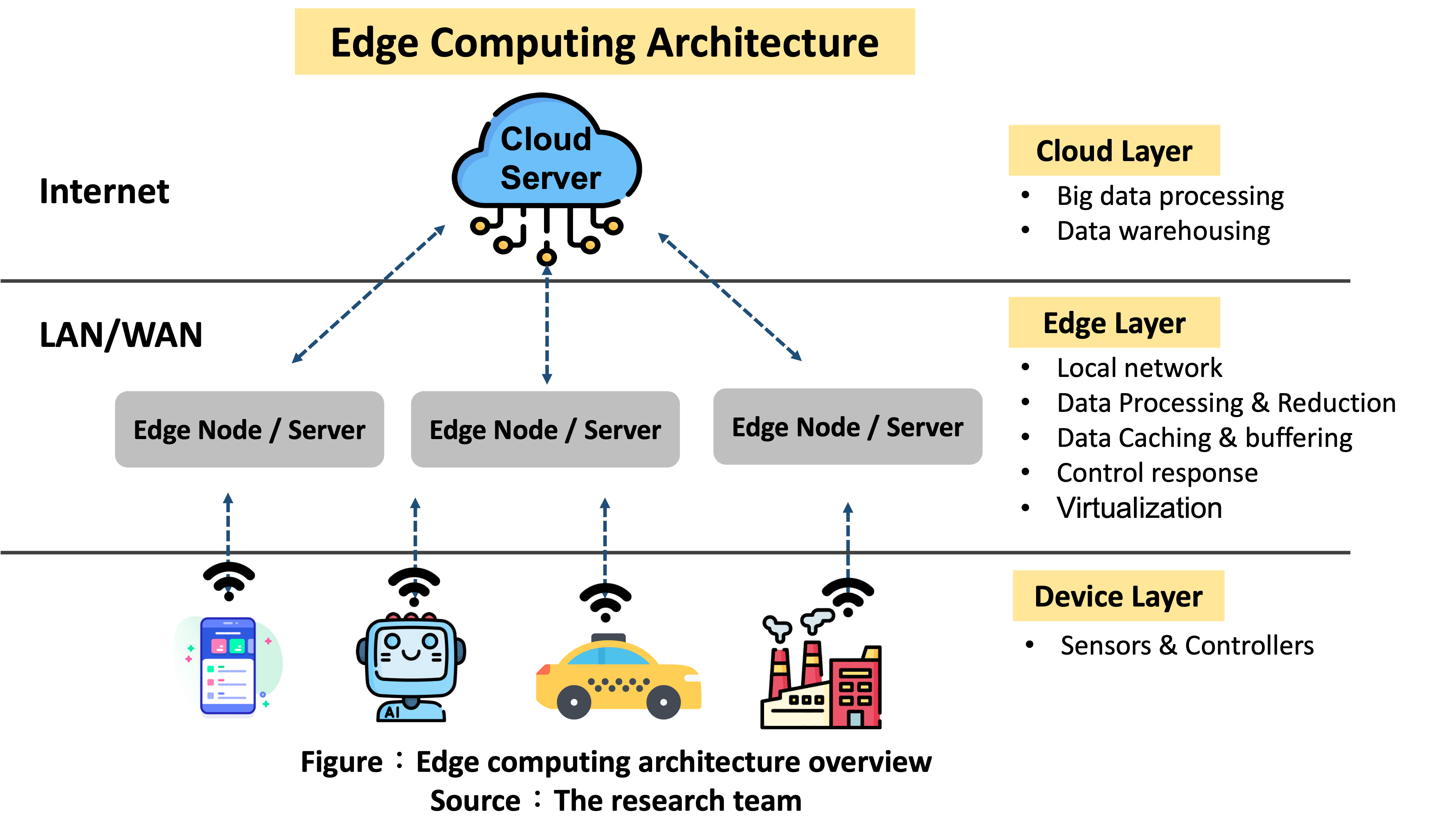

This matters more than it might seem on the surface. Consumer Wi-Fi routers are no longer simple wireless repeaters. They're becoming edge computing nodes—devices that sit at the boundary between your home network and the internet, capable of processing data locally, applying intelligent traffic management, and running AI-driven features without shipping everything to the cloud. The BCM4918 is Broadcom's answer to this evolution.

What's particularly interesting is the architectural shift it represents. For years, access point chips handled everything through a general-purpose CPU. All traffic flowed through the same processor. All decisions came from the same cores. Now, with the BCM4918, Broadcom is separating control-plane operations (like settings and firmware) from the actual data plane (the traffic flowing through your network). That separation changes everything about performance headroom and what vendors can do with software updates.

The adoption of 10 GbE wired connectivity isn't frivolous either. Mesh routers have matured. Multi-node systems are the norm in larger homes. The bottleneck shifted from wireless speed to the wired backhaul connecting nodes together. Ten gigabits per second sounds excessive for a home network, but when you're aggregating multiple wireless clients across multiple bands, that headroom becomes practical. You avoid artificial congestion before it starts.

This article breaks down exactly what the BCM4918 is, how it differs from previous generations, what the architecture actually does, and why the timing matters right now. If you're curious about where consumer networking hardware is headed, this is the story.

TL;DR

- BCM4918 is Broadcom's Wi-Fi 8 flagship processor that redefines APU as a networking chip, not a graphics processor

- Quad-core ARM CPU with dedicated packet processor separates control-plane and data-plane operations, reducing CPU bottlenecks

- Native 10 GbE support addresses rising demand for wired backhaul in mesh router systems

- Integrated Neural Engine enables local AI inference for edge processing without cloud dependency

- Consolidated architecture reduces PCB complexity and power consumption in access point designs

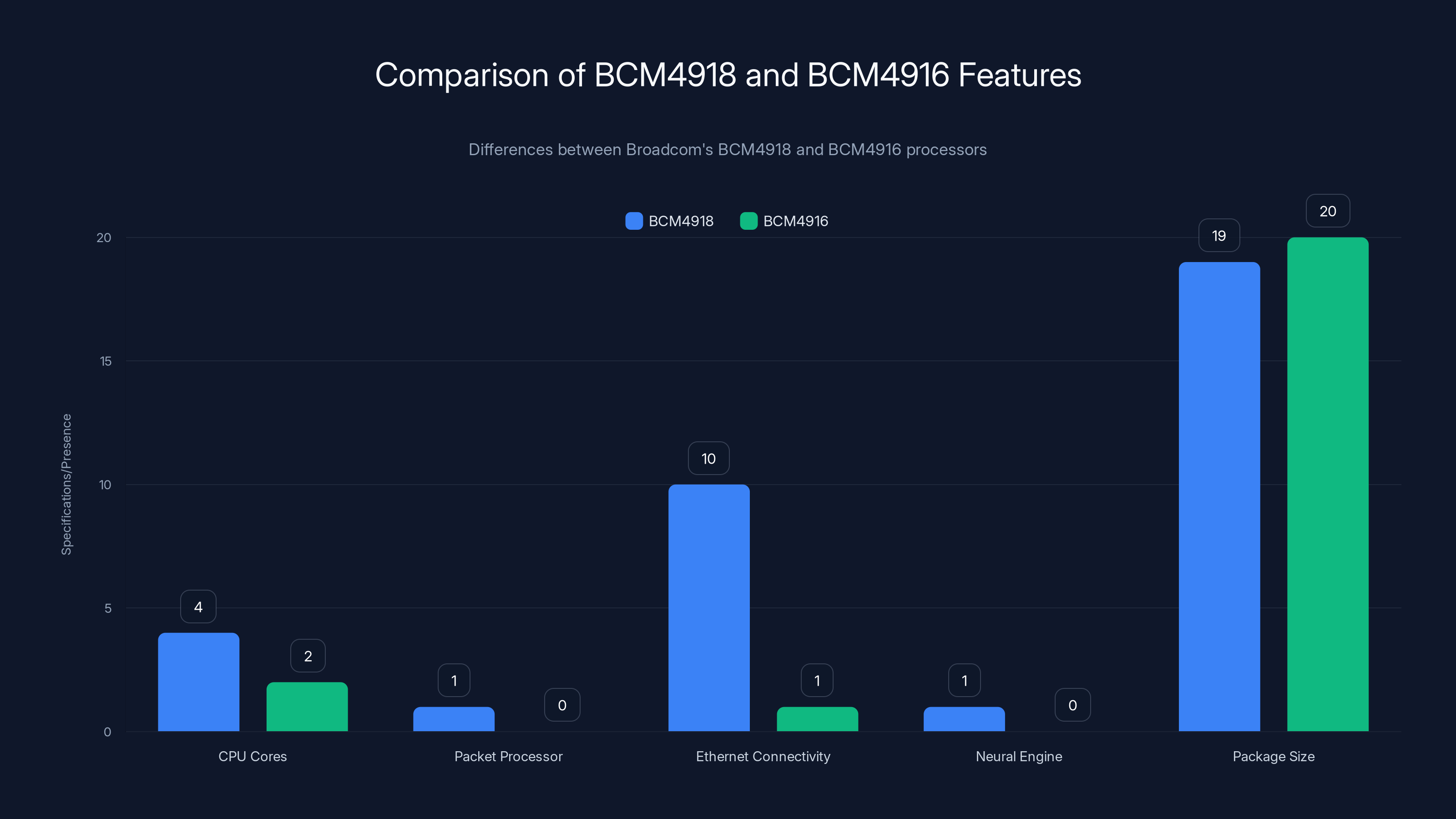

The BCM4918 significantly enhances performance with more CPU cores, a dedicated packet processor, and 10GbE support, while also integrating a Neural Engine for AI tasks. Estimated data used for package size comparison.

The APU Redefinition: From Graphics to Networking Acceleration

When most people hear "APU," they think AMD. The company spent years building this brand association: a CPU and GPU on the same die, sharing memory, reducing latency between compute and graphics. It worked. For integrated graphics in laptops and desktops, APU became the industry term.

Broadcom's move to resurrect this label for networking silicon is clever branding, but it's also technically justified. An accelerated processing unit, in the broadest sense, is a processor that accelerates specific workloads beyond what a general-purpose CPU can handle. GPU acceleration works for graphics. AI accelerators work for machine learning. Networking acceleration works for packet processing.

Historically, routers and access points used general-purpose CPUs (ARM processors, MIPS chips, sometimes even x86 variants) to handle everything. Every packet, every routing decision, every security check flowed through the same cores. This created a fundamental bottleneck. You could have a Wi-Fi 6E radio set advertised at 11 Gbps (combined across bands), but the CPU architecture might only sustain a few gigabits of actual throughput before contention became severe.

The BCM4918 addresses this by adding a dedicated packet processor: an engine built specifically for moving data from point A to point B rather than for running general-purpose software. It follows a pipeline: receive packet, match against rules, apply transformations, forward to destination. In the common case there is no operating-system overhead and no CPU involvement unless the packet requires something special.

This is control-plane/data-plane separation, a long-standing design principle in networking. The control plane is where policy decisions live: which traffic gets prioritized, which clients get blocked, which security rules apply. The data plane is the fast path: take this packet and move it. The BCM4918 dedicates a quad-core ARM processor to control-plane work and a dual-issue packet processor to data-plane work. They operate largely independently. When your router makes a configuration change, active traffic throughput shouldn't suffer. When your router forwards traffic, it doesn't starve firmware updates or background AI analysis of CPU time.
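The split between a fast path and a policy-driven slow path can be sketched in a few lines of Python. This is only an illustration of the general pattern, not Broadcom's implementation: the `Packet` fields, the `ForwardingTable` class, the interface names, and `example_policy` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    dst_port: int


class ForwardingTable:
    """Toy data plane: exact-match flow table with a slow-path fallback."""

    def __init__(self, policy: Callable[[Packet], Optional[str]]):
        self.policy = policy  # stands in for the control plane (hypothetical)
        self.flows: Dict[Tuple[str, str, int], Optional[str]] = {}
        self.slow_path_hits = 0

    def forward(self, pkt: Packet) -> Optional[str]:
        """Return the egress interface for pkt, or None if it is dropped."""
        key = (pkt.src, pkt.dst, pkt.dst_port)
        if key in self.flows:
            # Fast path: a single table lookup, no policy code runs.
            return self.flows[key]
        # Slow path: ask the control plane once, then cache its decision.
        self.slow_path_hits += 1
        decision = self.policy(pkt)
        self.flows[key] = decision
        return decision


def example_policy(pkt: Packet) -> Optional[str]:
    """Hypothetical policy: drop telnet, send LAN traffic to Wi-Fi, rest to WAN."""
    if pkt.dst_port == 23:
        return None
    return "wlan0" if pkt.dst.startswith("192.168.") else "eth0"
```

Only the first packet of each flow reaches `example_policy`; every later packet of that flow is resolved by the lookup alone, which is the property that keeps the general-purpose cores out of the forwarding loop.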

What's remarkable is how many access points haven't adopted this architecture. Many consumer routers still route everything through a single ARM core or dual-core system. They work fine for typical home use, but they can't scale. Add too many clients. Saturate the backhaul. Run demanding smart home protocols. Suddenly everything gets slow because the CPU is bottlenecked.

Broadcom isn't the only vendor moving this direction—high-end enterprise access points have used data-plane acceleration for years—but bringing it to residential Wi-Fi 8 platforms is significant. It means vendors can design routers that don't get bogged down as home networks get more complex.

The Quad-Core ARM Architecture: Control Plane Meets Data Plane

Inside the BCM4918, the CPU complex consists of four ARM cores running the ARMv8 instruction set. These aren't your phone's main processors; they're typically clocked lower and optimized for control-plane operations. Think of them as the "brain" of the access point, handling all the intelligent decisions.

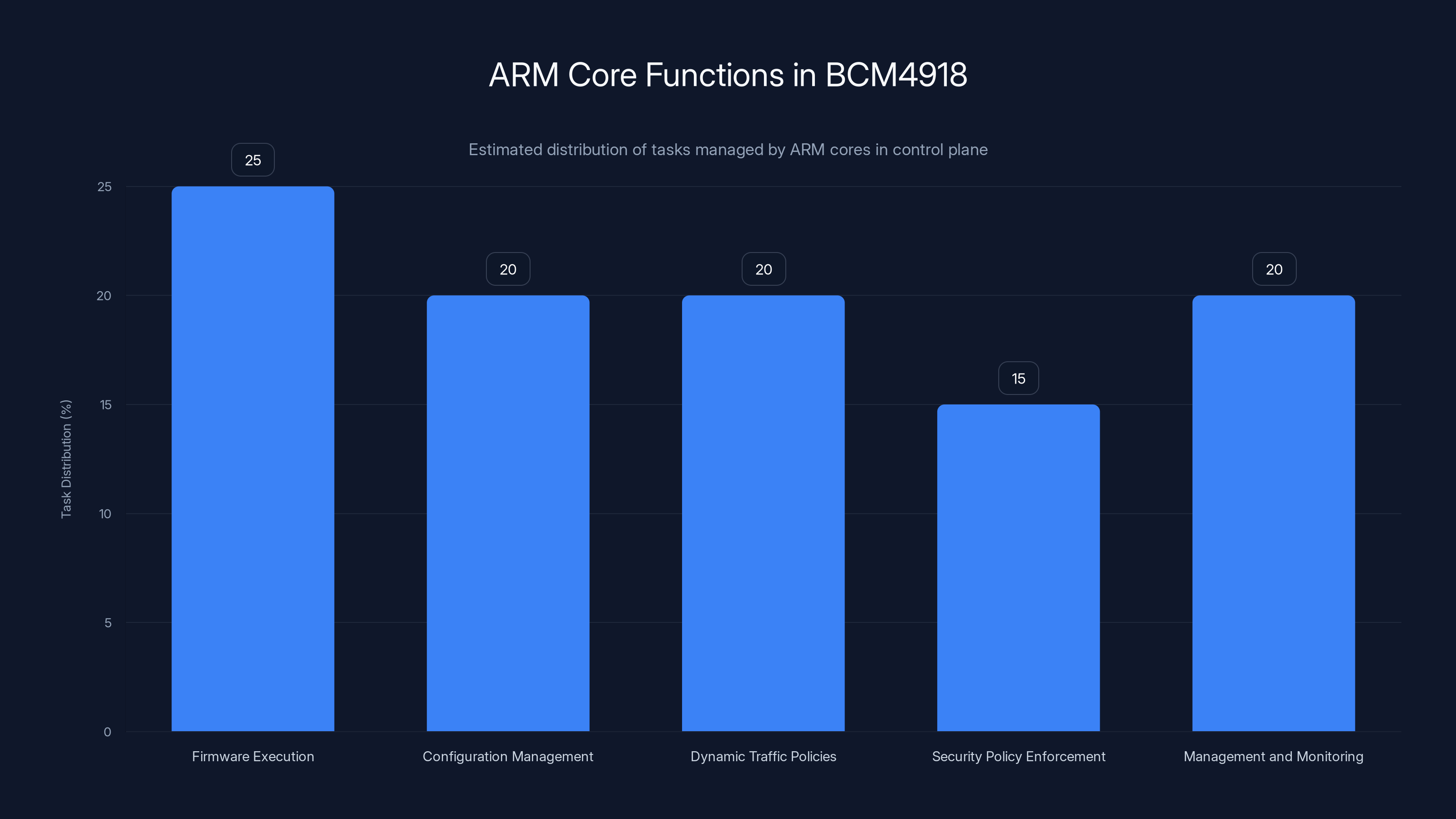

These cores manage:

- Firmware execution: The actual operating system and application code runs here

- Configuration management: When you change Wi-Fi settings through an app, this is where those commands execute

- Dynamic traffic policies: Algorithms that decide how to distribute bandwidth among clients

- Security policy enforcement: Rules checking, threat detection, firewall logic

- Management and monitoring: Collecting statistics, generating logs, responding to diagnostics

Critically, these cores run asynchronously from the data plane. While they're executing firmware or processing a configuration change, network traffic keeps flowing. The dedicated packet processor doesn't care what the CPU is doing.
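To make "algorithms that decide how to distribute bandwidth among clients" concrete, here is a minimal sketch of max-min fair allocation, one classic policy a control plane could compute and then hand to the data plane. Nothing in the source says BCM4918 firmware uses this algorithm; the function name and the client names in the usage note are illustrative assumptions.

```python
def max_min_fair(capacity, demands):
    """Split capacity among clients using max-min fairness.

    demands maps a client name to its requested rate (same unit as capacity).
    Clients asking for less than an equal share get what they asked for;
    the leftover is re-divided among the remaining clients.
    """
    allocation = {client: 0.0 for client in demands}
    remaining = dict(demands)
    left = float(capacity)
    while remaining and left > 1e-9:
        share = left / len(remaining)
        satisfied = {c: d for c, d in remaining.items() if d <= share}
        if not satisfied:
            # Everyone wants more than an equal share: split evenly and stop.
            for client in remaining:
                allocation[client] = share
            return allocation
        for client, demand in satisfied.items():
            allocation[client] = demand
            left -= demand
            del remaining[client]
    return allocation
```

For example, `max_min_fair(1000, {"tv": 100, "laptop": 400, "nas": 900})` gives the TV and laptop their full requests and leaves the remaining 500 for the NAS, so a single heavy client cannot starve the light ones.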

The dual-issue packet processor is the workhorse. "Dual-issue" means it can initiate two operations per clock cycle—this provides instruction-level parallelism for packet handling. It's optimized specifically for the repetitive work of packet forwarding: extract headers, check routing tables, apply QoS rules, forward to the next hop or wireless interface.

Wired and wireless data paths have independent processing pipelines. Packets coming from the wired backhaul (10 GbE) flow through one pipeline. Wireless packets flow through another. This prevents them from interfering with each other. If one path gets congested, the other continues smoothly. Combined with internal buffering and flow control logic, this design handles asymmetric traffic patterns gracefully.

The implications are subtle but important. In older routers, a sustained high-speed wired backhaul connection could interfere with Wi-Fi performance because both were competing for CPU cycles. In the BCM4918, wired and wireless packets can flow independently. The CPU isn't the limiting factor anymore.

Memory architecture matters too. The BCM4918 integrates on-die memory for packet buffers and lookup tables. This reduces latency compared to systems that rely on external DRAM. Packets don't have to travel far. Routing lookups happen locally. Cache coherency issues are minimized.

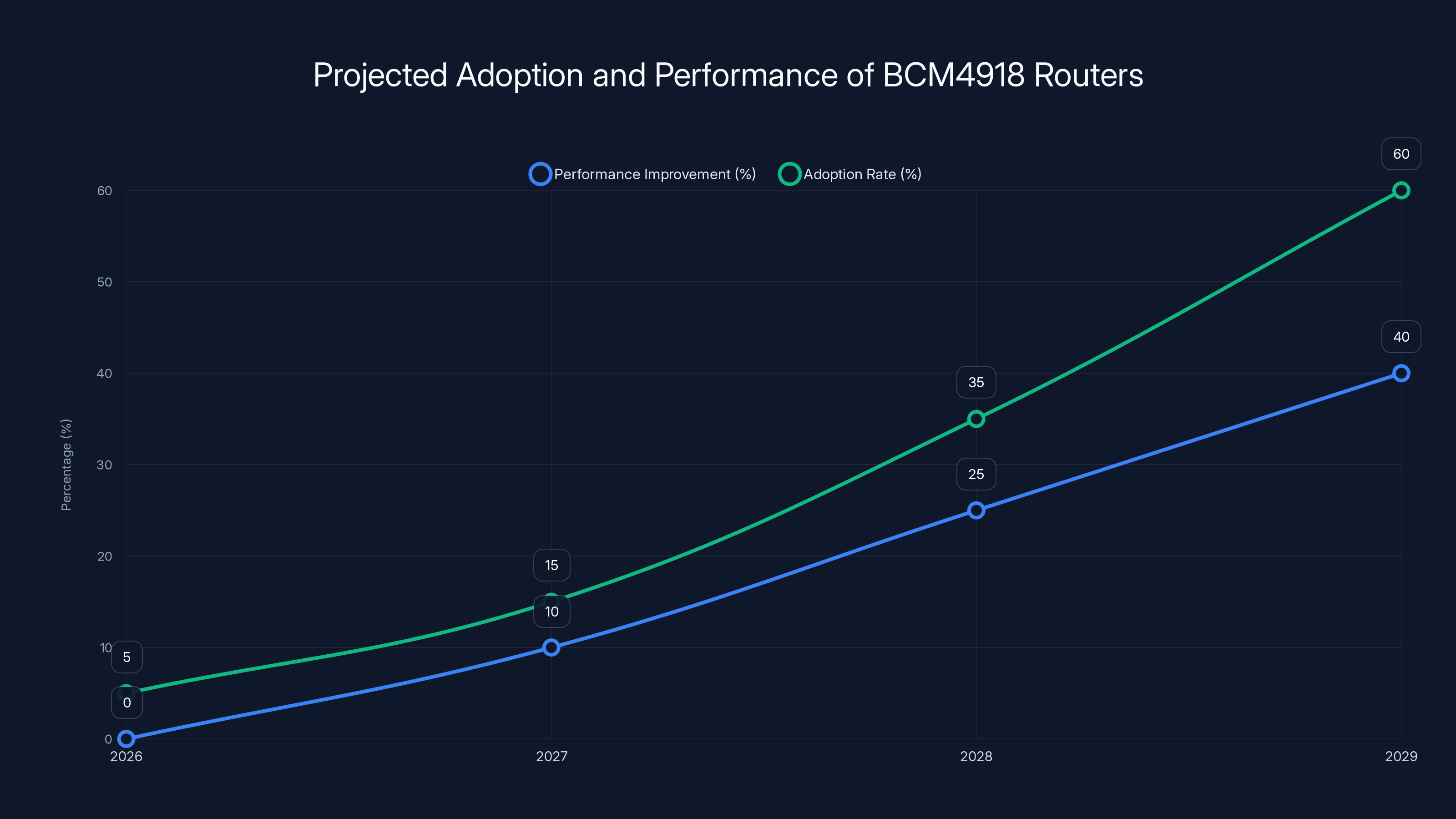

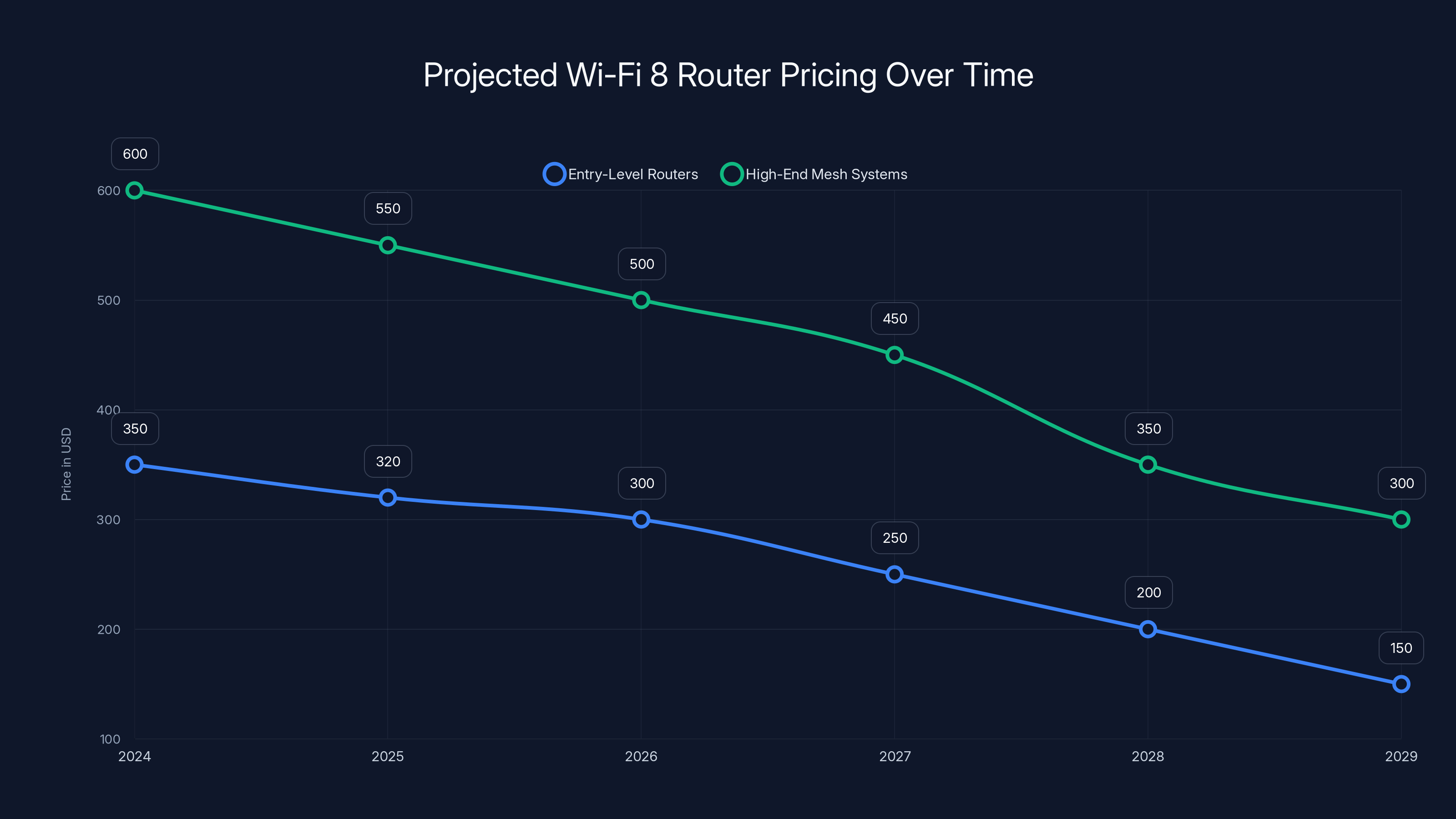

The BCM4918 routers are expected to see a gradual increase in performance and adoption, with significant improvements and mainstream adoption projected by 2029. Estimated data.

10 GbE Integration: Why Mesh Routers Need Wired Speed

Ten gigabits per second sounds excessive. Your home internet connection is probably 100 Mbps to 1 Gbps. Your Wi-Fi 6E achieves perhaps 2 Gbps in ideal conditions across all bands combined. Why would you need 10 times that capacity internally?

Because mesh networks have multiplied the problem. A modern three-node mesh system in a home might have:

- Node 1 (Gateway): Connected to internet via 1 Gbps WAN link, also serving 8 clients on 6 GHz and 5 GHz

- Node 2 (Backhaul): Serving 12 clients via Wi-Fi, backhauled to Node 1

- Node 3 (Backhaul): Serving 10 clients via Wi-Fi, backhauled through Node 1 or Node 2

Now add up the bandwidth. Those clients collectively want to move data. Some are downloading movies. Some are uploading to cloud storage. Some are playing online games. The wireless channel is shared (that's how Wi-Fi works), so if 12 clients on a single 5 GHz radio collectively want to push data, they're sharing that radio's capacity.

But here's where it gets interesting: the backhaul link doesn't serve one client. It serves all clients downstream of that node. If Node 2 has 12 clients and they're all moving data, the backhaul link needs to handle the aggregated throughput from all 12, not just one.

Gigabit Ethernet (1 Gbps) becomes a bottleneck. You get 1,000 Mbps of capacity to the wired backhaul. Divide that among 12 clients, and each gets roughly 83 Mbps. That sounds reasonable until you realize it's a shared capacity. If one client wants to upload a large file, it throttles everyone else.

With 10 GbE, you get 10 times that capacity. Headroom. Breathing room. Even with 30 clients on a single backhaul node, each can theoretically achieve multi-Gbps speeds if they're the only one using the link. Realistically, they won't be, but the point is clear: the backhaul isn't the constraint anymore.
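The arithmetic behind that comparison is easy to reproduce. The sketch below assumes an idealized even split of the raw link rate among active clients and ignores Ethernet and protocol overhead; the function name is just for illustration.

```python
def per_client_share_mbps(link_gbps, active_clients):
    """Even split of a backhaul link among simultaneously active clients."""
    if active_clients < 1:
        raise ValueError("need at least one active client")
    return link_gbps * 1000 / active_clients


# Compare 1 GbE and 10 GbE backhaul for the client counts discussed above.
for link_gbps in (1, 10):
    for clients in (12, 30):
        share = per_client_share_mbps(link_gbps, clients)
        print(f"{link_gbps:>2} GbE, {clients} clients: {share:.0f} Mbps each")
```

With 12 active clients the even share rises from about 83 Mbps on 1 GbE to about 833 Mbps on 10 GbE, and even 30 simultaneously active clients would still see roughly 333 Mbps each.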

Broadcom's integration of 10 GbE PHYs directly on the BCM4918 means the processor doesn't need external chips to handle 10 Gigabit Ethernet. That saves board space, reduces latency (fewer hops between processor and network interface), and simplifies the design. PCB designers can fit all the critical networking silicon on a smaller motherboard.

Challenges remain. Most home networking hardware still uses 1 GbE wired connections. Moving from 1 GbE to 10 GbE requires better cabling (Cat 6A or Cat 7), new network equipment, and vendor support. But the trend is clear. As wireless speeds increase, wired backhaul becomes the target for improvement.

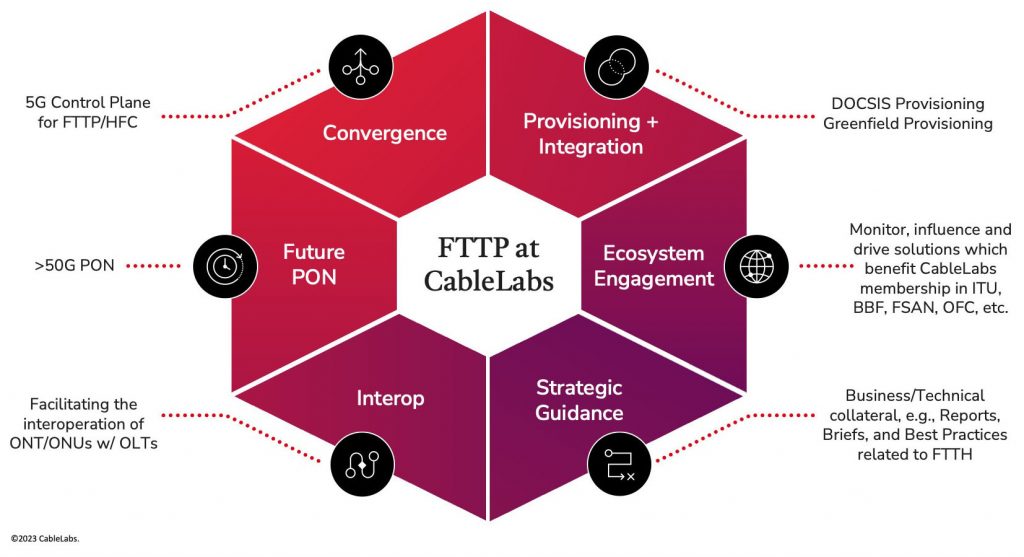

The Neural Engine: Local AI Processing Without the Cloud

Machine learning is everywhere in consumer tech now, but most of it happens in the cloud. Your phone recognizes faces locally, but more complex analysis ships to servers. Voice assistants transcribe audio locally but send transcriptions to cloud APIs for intent understanding. This pattern trades latency and privacy for access to massive computing resources.

Networking hardware represents an interesting opportunity. An access point sits at a fixed location with consistent power, typically stays on, and has direct access to all traffic flowing through the home network. If you want to run AI-driven network features locally, an access point is a logical place to do it.

Broadcom integrated a "Neural Engine" into the BCM4918. The term is intentionally vague—Broadcom hasn't published extensive specifications—but it indicates dedicated silicon for machine learning inference. Not training (that requires massive compute). Inference: taking a trained model and running new data through it to generate predictions.

Potential use cases include:

- Traffic classification: Identifying what each data flow is (video streaming, web browsing, gaming, security cameras) without inspecting encrypted payloads

- Threat detection: Analyzing packet patterns to identify DDoS attacks, port scans, or anomalous behavior before it impacts network performance

- Client profiling: Understanding device types and behavior patterns to apply intelligent QoS (Quality of Service) policies automatically

- Anomaly detection: Learning normal patterns and alerting when something unusual occurs

- Performance prediction: Estimating which clients need prioritization based on application type and historical patterns

The advantage is latency and privacy. Cloud-based inference means sending data off the device, waiting for results, and acting on them. That round trip typically takes tens to hundreds of milliseconds. Local inference skips the network entirely and can respond orders of magnitude faster. Plus, traffic data never leaves your home.
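The "learn normal patterns, flag the unusual" idea can be shown without any neural network at all. The sketch below is a plain statistical stand-in, an exponentially weighted moving average with a deviation threshold. It is not what the Neural Engine runs (Broadcom has not published that); the class name, parameters, and default values are assumptions chosen for illustration.

```python
import math


class EwmaAnomalyDetector:
    """Flag samples that deviate sharply from an exponentially weighted baseline."""

    def __init__(self, alpha=0.1, threshold=4.0, warmup=10):
        self.alpha = alpha          # how quickly the baseline adapts
        self.threshold = threshold  # allowed deviation, in standard deviations
        self.warmup = warmup        # samples to observe before flagging anything
        self.mean = 0.0
        self.var = 0.0
        self.count = 0

    def observe(self, value):
        """Return True if value looks anomalous relative to the learned baseline."""
        self.count += 1
        if self.count == 1:
            self.mean = float(value)
            return False
        deviation = value - self.mean
        std = math.sqrt(self.var)
        is_anomaly = (
            self.count > self.warmup
            and std > 0
            and abs(deviation) > self.threshold * std
        )
        if not is_anomaly:
            # Update the baseline only with normal samples so that a burst
            # does not immediately become the new "normal".
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return is_anomaly
```

Fed a per-device packet rate once per second, a detector like this stays quiet while the rate hovers around its usual level and fires when a device suddenly sends ten times its normal traffic. A trained model on dedicated hardware would replace the statistics, but the input and output have the same shape.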

But here's the catch: Broadcom's documentation is sparse on what the Neural Engine actually does. What models are supported? What inference throughput (operations per second) can it achieve? How much power does it consume? Without these details, it's hard to assess how practical this feature is.

The physics of machine learning on embedded hardware is harsh. Small models (millions of parameters) run fast but are less accurate. Large models (billions of parameters) are accurate but require significant compute. Consumer networking hardware has limited power and thermal budgets. The Neural Engine probably supports smaller, more specialized models: traffic classifiers trained on millions of flow samples, anomaly detectors, device-type identifiers. Not general-purpose AI.

This is where vendor software differentiation becomes critical. The BCM4918 provides the hardware foundation. What vendors do with it depends entirely on firmware. A vendor could ignore it entirely and get a perfectly functional access point. Or they could develop sophisticated ML-driven features that adapt to your home network automatically. Broadcom provides the tools; vendors decide how to use them.

Security Architecture: Cryptography and Secure Boot in Hardware

Network security increasingly happens at the hardware level. The BCM4918 integrates multiple security subsystems directly on the die, which matters more than it might initially seem.

First, secure boot. The processor contains cryptographic engines specifically for verifying that the firmware running on the device is legitimate. When the access point powers on, it checks that the operating system and drivers haven't been modified by an attacker. If verification fails, the device doesn't boot. This prevents compromised firmware from persisting across reboots.

Second, hardware-accelerated cryptography. Encryption and decryption are mathematically intensive. Running them in software on a general-purpose CPU is slower and, if the implementation isn't constant-time, can open timing side channels (attackers infer keys by measuring how long operations take). Dedicated cryptographic hardware reduces this risk and accelerates operations. WPA3 encryption for wireless traffic, TLS for secure connections, and secure key handling all benefit from hardware acceleration.

Third, secure key storage. The BCM4918 integrates a secure enclave or trusted platform module (TPM) equivalent. Private keys never exist in main memory. They're stored in a protected area that can only be accessed by authorized code. Even if an attacker compromises the firmware, they can't extract stored keys.

These features matter because access points sit between your devices and the internet. A compromised router can intercept traffic, inject malware, or harvest credentials. Vendors update firmware frequently to patch vulnerabilities. Hardware-based security means that even if the firmware has flaws, the core security mechanisms are much harder to bypass.
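The secure-boot check described above boils down to "measure the firmware, compare against something the attacker cannot change, refuse to boot on mismatch." The sketch below shows that flow under a loud simplification: real secure boot verifies a cryptographic signature using a public key anchored in hardware, while this example compares a SHA-256 digest against a trusted value. The function names and the sample firmware bytes are hypothetical.

```python
import hashlib
import hmac


def firmware_digest(image: bytes) -> bytes:
    """Measure a firmware image with SHA-256."""
    return hashlib.sha256(image).digest()


def verify_and_boot(image: bytes, trusted_digest: bytes) -> bool:
    """Return True (allow boot) only if the image matches the trusted digest.

    Simplification: trusted_digest stands in for a value held in
    tamper-resistant storage. Production secure boot checks a signature
    over the image instead of storing a digest per release.
    """
    actual = firmware_digest(image)
    # compare_digest runs in constant time, avoiding a timing side channel.
    return hmac.compare_digest(actual, trusted_digest)


# Usage: an unmodified image boots, a tampered one is rejected.
good_image = b"firmware v1.0"
trusted = firmware_digest(good_image)
print(verify_and_boot(good_image, trusted))            # allowed
print(verify_and_boot(good_image + b"\x00", trusted))  # refused
```

Even a single appended byte changes the digest, so the tampered image fails the comparison and the device would refuse to boot it.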

Broadcom emphasizes this is built for "standard residential temperatures," which is oddly specific. It implies the hardware is designed for consumer environments where you don't have active cooling. A smaller 19 x 19 mm package with integrated security, compute, and networking requires careful power distribution and thermal management. Broadcom claims this is solved through design, but we won't really know until routers ship and teardowns reveal the actual PCB layout.

Estimated data shows that firmware execution and management tasks occupy the largest share of ARM core activities in the BCM4918 architecture.

Board Complexity: Consolidation as Cost and Design Advantage

The BCM4918 is a System-on-Chip (SoC). Nearly everything you need for an access point is on one die. Compare that to historical designs where you'd need:

- A main CPU (separate chip)

- Networking Ethernet controllers (separate chip)

- Wi-Fi radio controllers (separate chips)

- Security co-processor (separate chip)

- Analog frontend for power delivery (multiple support chips)

Each additional chip requires:

- PCB real estate (more layers, larger board)

- Power distribution networks (voltage regulators, capacitors, traces)

- Thermal management (heatsinks, airflow considerations)

- Interconnect complexity (bus protocols, clock distribution)

- Supply chain management (more vendors, more lead times)

- Cost (component cost, assembly complexity, testing)

By consolidating all these functions onto one chip, the BCM4918 reduces board complexity measurably. A smaller motherboard means a more compact device. Lower power distribution overhead means higher efficiency. Fewer chips means fewer points of failure.

This has downstream effects. Smaller devices are easier to place in homes. Lower power consumption reduces operating costs and heat generation, extending the lifespan of the system. Simpler designs are easier to manufacture consistently, potentially leading to better quality and lower defect rates.

From a design perspective, the BCM4918 is a consolidation play. It trades flexibility (you can't easily upgrade or swap individual components) for efficiency and cost reduction. Vendors who use this chip benefit from a more capable platform without dramatically increasing the engineering complexity of bringing a router to market.

PCIe Gen 3 Expansion: Flexibility for Vendor Differentiation

The BCM4918 includes four PCIe Gen 3 interfaces. These allow vendors to attach additional components beyond what Broadcom built into the chip. Think of it as growth points for future capabilities.

Typical uses for PCIe expansion in access points:

- Additional Wi-Fi radios: Some vendors use Wi-Fi 6E but also want to add extra radios for specific purposes (dedicated backhaul radio, extended coverage, etc.)

- Storage controllers: If a vendor wants to offer local recording for security cameras or large-file caching

- Custom accelerators: Specialized silicon for specific vendor features (proprietary traffic shaping, unique security algorithms)

- Cellular modems: For 4G/5G backup connectivity in premium routers

- Sensor interfaces: For environmental monitoring (temperature, humidity, air quality)

Gen 3 PCIe provides decent bandwidth: about 985 MB/s per lane in each direction (8 GT/s with 128b/130b encoding). If each of the four interfaces is a single lane, that's close to 4 GB/s in aggregate, which is plenty for most peripherals. A modern Wi-Fi 6E radio needs roughly 400-600 MB/s during peak throughput. You could attach four radios and still have bandwidth available.
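The PCIe budget can be checked from first principles. PCIe Gen 3 signals at 8 GT/s per lane and uses 128b/130b line encoding; the sketch below derives usable bandwidth from those two figures and compares it with the 600 MB/s per-radio peak quoted above. It ignores packet-level protocol overhead, and the assumption of one lane per interface is mine, not a published BCM4918 detail.

```python
def pcie_gen3_bandwidth_gb_per_s(lanes):
    """Usable PCIe Gen 3 bandwidth per direction, in GB/s, before protocol overhead."""
    transfers_per_second = 8e9       # 8 GT/s per lane
    encoding_efficiency = 128 / 130  # 128b/130b line encoding
    bits_per_second = lanes * transfers_per_second * encoding_efficiency
    return bits_per_second / 8 / 1e9


def radios_supported(lanes, radio_peak_gb_per_s=0.6):
    """How many radios at the given peak rate fit in the lane budget."""
    return int(pcie_gen3_bandwidth_gb_per_s(lanes) // radio_peak_gb_per_s)


print(f"1 lane : {pcie_gen3_bandwidth_gb_per_s(1):.3f} GB/s")
print(f"4 lanes: {pcie_gen3_bandwidth_gb_per_s(4):.3f} GB/s")
print(f"radios at 600 MB/s across 4 lanes: {radios_supported(4)}")
```

One lane works out to just under 1 GB/s, so a single 600 MB/s radio fits on one lane with room to spare, and four lanes together cover four such radios at peak.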

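The dual USB controllers are for lower-bandwidth attachments. USB 3.0 signals at 5 Gbps, which works out to roughly 400 MB/s of real-world throughput after encoding and protocol overhead. USB 2.0 signals at 480 Mbps (60 MB/s raw, less in practice). These might connect: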

- USB storage devices: For local network backups or media storage

- USB cameras: For connected home security features

- Custom peripherals: Vendor-specific hardware

This flexibility is important for vendor differentiation. Broadcom provides the core platform. Vendors add their own twist through expansion modules. It's a strategy that has worked well in enterprise networking for years and is now moving to consumer devices.

The System-on-Chip Tradeoffs: Power and Thermals

Consolidating everything onto a single die creates tradeoffs. The BCM4918 is a 19 x 19 mm package. That's dense. Small size means high component density, which creates heat concentration. One chip dissipating power across multiple functions means thermal management matters.

Broadcom rates it for "standard residential temperatures," typically 0°C to 40°C ambient. Compare that to industrial networking equipment, which is often rated for -40°C to 85°C. The constraint is real. In warm climates, during summer, in a poorly ventilated cabinet, an access point might run hotter than intended.

Power consumption is another constraint. On a highly integrated chip, blocks that share a power domain can't be switched off independently, so an idle packet processor or Neural Engine may still draw some power while the CPU complex is awake. Modern SoCs use power gating and clock gating to minimize this, but some overhead usually remains.

Vendors will need to design for these constraints. Probably with larger heatspreaders than in previous routers. Maybe with active thermal management (fans, which add complexity). The engineering tradeoff is: better performance and simpler design versus slightly higher thermal and power challenges.

For most home environments, this probably isn't a practical constraint. A well-designed router using the BCM4918 will operate fine in typical home conditions. But in edge cases—hot climates, poorly ventilated spaces, continuous high-load scenarios—thermal management matters.

Wi-Fi 8 routers are expected to start at premium prices (

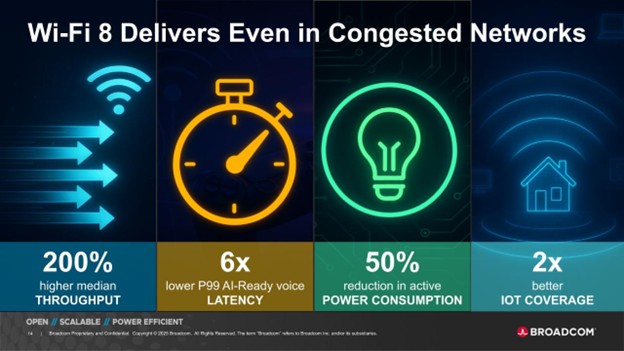

Wi-Fi 8 Standards Context: Why This Chip Exists Now

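Wi-Fi 8, formally IEEE 802.11bn, is the next wireless standard after Wi-Fi 7 (802.11be). Unlike earlier generations, its headline goal is not a higher peak rate. It builds on the 6 GHz spectrum, 320 MHz channels, and multi-link operation that Wi-Fi 6E and Wi-Fi 7 introduced, and targets reliability, lower worst-case latency, and better coordination between access points. That makes it more complex, not less.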

More complexity requires more processing power. Wider channel widths (up to 320 MHz), more spatial streams, improved modulation schemes, and additional signaling overhead—all of these demand more compute from the access point. The CPU that could handle Wi-Fi 6E might struggle with Wi-Fi 8 under high client density.

Enter the BCM4918. It's explicitly designed for Wi-Fi 8's computational requirements. The quad-core CPU handles the increased protocol complexity. The packet processor handles increased traffic throughput. The Neural Engine supports new adaptive algorithms.

What's interesting is timing. The 802.11bn amendment behind Wi-Fi 8 has not been ratified yet. Silicon is being built against the draft, as happened with earlier Wi-Fi generations, where products typically shipped well before the final standard was approved. Announcing the BCM4918 ahead of the 2026 router launches Broadcom is targeting positions it for the first Wi-Fi 8 wave.

This also reflects competitive dynamics. Qualcomm and MediaTek have Wi-Fi 8 solutions. Broadcom needed to stay competitive. The BCM4918 is their answer to the question: "How do we build access points that don't bottleneck when Wi-Fi 8 comes?"

Edge Computing Implications: Access Points as Computing Nodes

Broadcom's marketing emphasizes that the BCM4918 turns access points into "edge computing platforms." That's not empty hype. It reflects a genuine shift in what routers do.

For 20 years, an access point's job was: receive wireless traffic, forward it to the wired network (or vice versa). Simple. Straightforward. A dumb pipe.

Now, access points are becoming smart intermediaries. They can:

- Process and cache content: Store frequently accessed data locally, reducing WAN latency

- Apply AI-driven policies: Intelligently prioritize traffic based on application type and client needs

- Run local services: Host databases, perform analytics, run containerized workloads

- Interact with IoT ecosystems: Coordinate smart home devices, collect sensor data, trigger automation

- Provide security services: Firewall, threat detection, VPN endpoint, encrypted tunneling

The BCM4918 supports this through multiple mechanisms. The quad-core CPU can run Linux or RTOS. The packet processor handles high-speed data movement. The Neural Engine enables local AI. Expansion interfaces allow additional capabilities.

This is increasingly important as homes become more connected. A typical modern home might have 50+ connected devices (phones, tablets, computers, smart speakers, cameras, sensors, lights, thermostats, etc.). Coordinating all of these requires intelligence at the network edge.

Cloud-based management doesn't cut it. Latency kills responsiveness. Bandwidth costs money. Privacy becomes a concern when everything uploads to vendor servers. Local processing solves all three problems.

The BCM4918 is part of a broader industry trend toward edge computing. Every vendor is moving compute closer to the source of data. Broadcom's contribution is making this practical for residential access points.

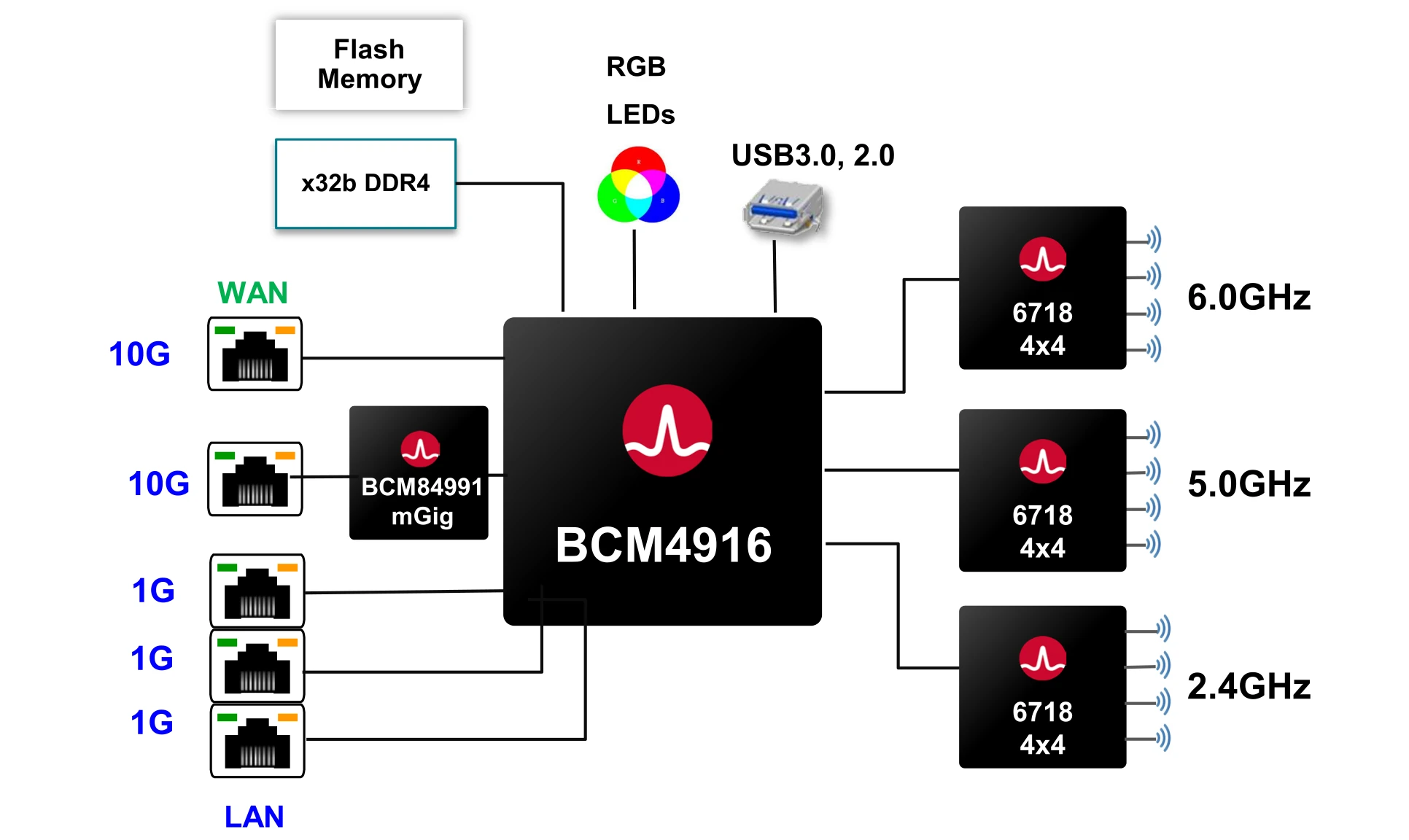

Comparison to Previous Generations: BCM4916, BCM4908, and Predecessors

Broadcom's previous Wi-Fi 6E access point processor was the BCM4916. Understanding how the BCM4918 improves on it provides context for why this chip is significant.

The BCM4916 featured:

- Dual-core ARM CPU

- Traditional packet forwarding (CPU-centric)

- Gigabit Ethernet (1 GbE)

- Standard security features

- No integrated AI acceleration

The BCM4918 adds:

- Four-core ARM CPU (2x the cores)

- Dedicated packet processor (data-plane separation)

- 10 GbE Ethernet (10x the bandwidth)

- Neural Engine (hardware ML acceleration)

- Improved security subsystems

In terms of raw performance, the BCM4918 could plausibly deliver 2-3x the throughput under sustained high-load conditions, though that is an estimate rather than a measured figure. The improvement would come from multiple sources: more CPU cores, dedicated packet processing, better memory architecture.

Previous generations relied more heavily on CPU throughput. If you maxed out the CPU, the router couldn't forward traffic efficiently. The BCM4918 sidesteps this by dedicating hardware to forwarding. The CPU becomes available for other tasks.

Historically, access point evolution followed Moore's Law: more transistors, higher clock speeds, more cores. The BCM4918 represents a shift: instead of just adding more cores, Broadcom added specialized hardware for specific tasks. This is what the semiconductor industry calls "heterogeneous computing"—different types of processors optimized for different workloads.

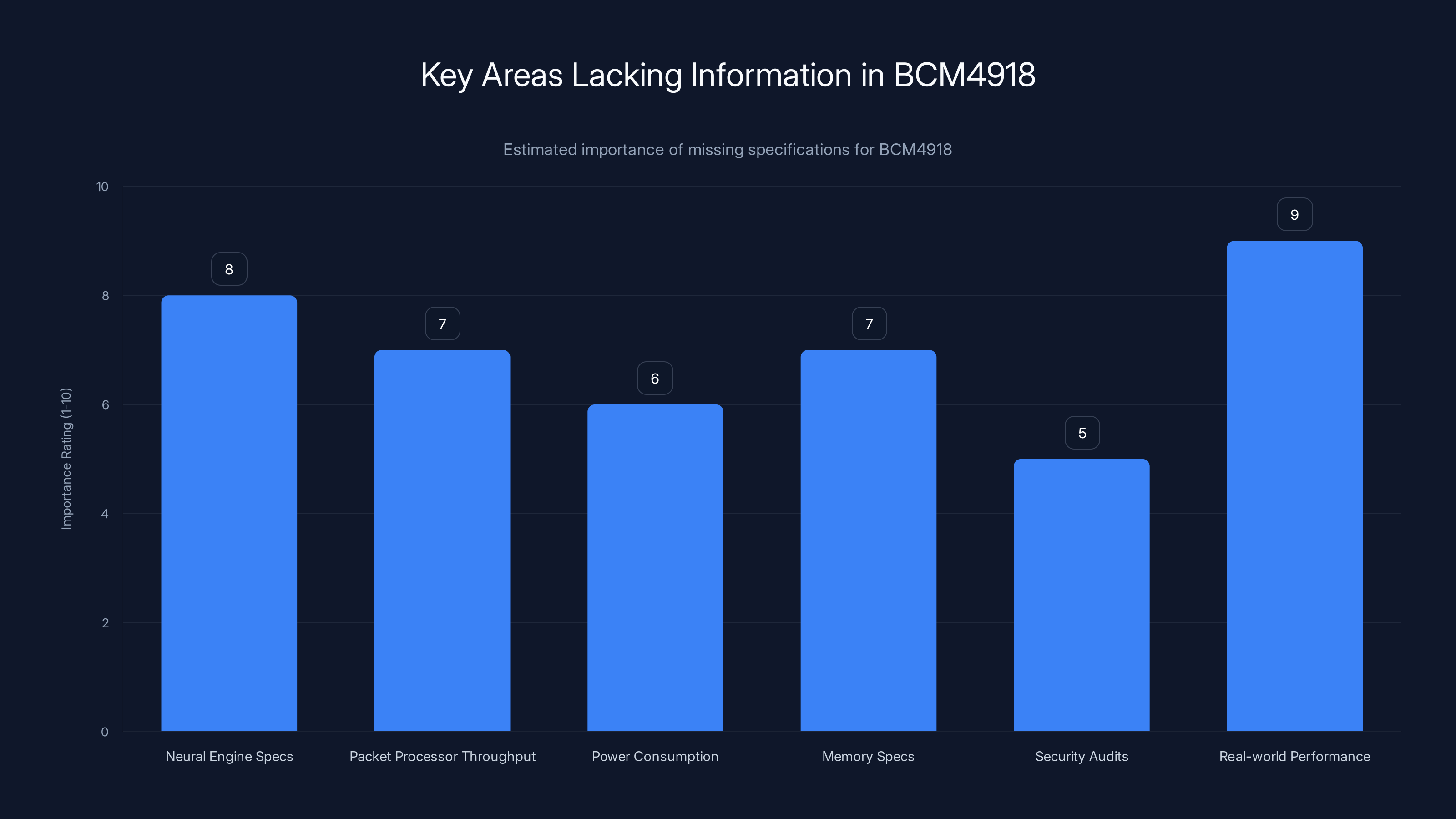

The chart estimates the importance of missing information areas for the BCM4918, highlighting real-world performance as the most critical aspect. Estimated data.

Future Implications: What Wi-Fi 8 Routers Will Actually Do

The BCM4918 is infrastructure for capabilities vendors haven't fully implemented yet. Broadcom provides the hardware. Vendors develop the software. The gap between potential and actual implementation is often significant.

Wi-Fi 8 routers using the BCM4918 should eventually support:

- Intelligent QoS: The CPU can profile traffic patterns and automatically adjust priorities without user configuration

- Predictive optimization: The Neural Engine learns your usage patterns and proactively optimizes network settings

- Local content delivery: A high-performance CPU and optional storage can cache popular content locally

- Advanced security: Real-time threat detection instead of reactive firewall rules

- Seamless roaming: With more processing power, handoffs between access points can be smoother

But here's the reality: most vendors don't have expertise in machine learning or advanced networking. They'll probably roll out incremental features: better traffic visualization, more granular QoS controls, improved stability. The truly innovative vendors (probably small startups or university labs) will experiment with more advanced features.

It typically takes 18-24 months for vendors to fully exploit a new hardware platform's capabilities. Early Wi-Fi 8 routers will feel only slightly better than Wi-Fi 6E. By 2027-2028, when second and third-generation products arrive, you'll see the real potential of chips like the BCM4918 unlocked.

Manufacturing and Integration: The 19 x 19 mm Package Challenge

The BCM4918 is manufactured on a modern process node (Broadcom hasn't announced specifics, but likely 28nm or smaller). The 19 x 19 mm package size is compact—about the size of a postage stamp.

Integrating CPU, networking, security, and AI acceleration into such a small package requires sophisticated design. Power delivery becomes critical: you need stable voltage across multiple functional blocks while managing heat dissipation. Clock distribution must be precise: synchronizing signals across all parts of the chip without excessive latency or skew.

Vendors using the BCM4918 need to design support circuitry: power management circuits, oscillators for clock generation, DDR memory interfaces, passive components for signal integrity. This isn't plug-and-play; it's serious hardware engineering.

Broadcom's design simplification (consolidating functions onto one chip) reduces this complexity somewhat compared to multi-chip designs. You need fewer power supplies, simpler clocking networks, simpler interconnects. But you still need expertise.

This probably means that only established vendors (Asus, Netgear, TP-Link, etc.) will use the BCM4918 for consumer routers. Smaller startups lack the hardware engineering resources. This consolidation effect benefits large manufacturers, potentially reducing competition in the router market.

The Market Timing: 2026 as the Inflection Point

Broadcom announced the BCM4918 for "2026 mesh router launches." That's intentionally vague—could mean January 2026 or December 2026. In reality, early Wi-Fi 8 routers using this chip will probably arrive in Q2-Q3 2026, with broader availability in late 2026 and 2027.

This timing aligns with several factors:

- Wi-Fi 8 standardization: The IEEE 802.11bn draft is stable enough for chip designs to be built against it, even though final ratification is still pending

- Market readiness: After 20+ years of Wi-Fi upgrades, consumers expect new speeds every few years

- Competitive pressure: Other chipmakers (Qualcomm, MediaTek, Marvell) have similar products in development

- Infrastructure maturity: 10 GbE equipment (switches, cabling) is becoming more affordable

But there's a challenge: consumer adoption lag. Most homes still use Wi-Fi 5 or Wi-Fi 6 routers. Wi-Fi 6E has been available since 2021 but hasn't achieved mainstream penetration. Wi-Fi 8 will likely follow a similar path: early adopters in 2026-2027, mainstream adoption by 2029-2030.

The BCM4918 is infrastructure for that future adoption wave. Broadcom is placing a bet that by 2027, enough homes will have needs (more devices, higher speeds, more data) that Wi-Fi 8 routers become necessary, not optional.

The BCM4918 APU significantly outperforms traditional CPUs in network-specific tasks, particularly in packet processing and 10GbE support. (Estimated data)

Cost and Pricing Dynamics: Will Wi-Fi 8 Be Affordable?

The BCM4918 likely costs Broadcom around

Early Wi-Fi 8 routers will be premium products:

This pricing arc is typical for networking hardware. Innovation starts expensive, then democratizes. The BCM4918 is part of that cycle. It's premium hardware today, mass-market hardware in 3-5 years.

For consumers, the implication is clear: if you buy a router in 2026, expect to pay a premium for Wi-Fi 8. If you can wait until 2027-2028, prices will drop and reliability will improve. Early adoption is for enthusiasts and businesses with real throughput needs.

Industry Implications: Consolidation and Specialization

The BCM4918 represents a broader trend: specialization. Broadcom is betting that vendors won't try to build custom networking processors. Instead, they'll buy proven silicon like the BCM4918 and focus on firmware, industrial design, and customer support.

This drives consolidation. Fewer vendors will have the expertise to compete in hardware. Those who do (Broadcom, Qualcomm, MediaTek) will capture more of the market. This can be bad for competition (fewer choices, potential for price collusion) or good (more standardized hardware, better interoperability).

It also means innovation moves to software and services. Vendors differentiate through firmware features, companion apps, cloud services, and customer support, not through unique silicon. This is already happening in smartphones (most phones use chips from a handful of suppliers such as Qualcomm, MediaTek, and Apple) and increasingly in routers.

For consumers, this is a mixed bag. You get better, more standardized hardware, but less diversity in approaches. Smaller vendors can't compete on raw capability; they must compete on niche features or support.

The Naming Convention: Why APU?

Broadcom's decision to call the BCM4918 an "APU" (Accelerated Processing Unit) is an interesting choice. Normally, marketing teams avoid ambiguous terminology. But Broadcom deliberately repurposed this term.

Possible reasons:

- Brand association: APU has positive connotations for consumers (more powerful, dedicated acceleration)

- Simplification: Marketing needs an umbrella term for "processor with specialized hardware." APU works.

- Precedent: AMD has not objected publicly, which lowers the risk of reusing the term

- Future flexibility: If Broadcom launches other APU-branded products, the term becomes a product family

From a technical standpoint, the naming is defensible. An APU, broadly defined, is a processor that accelerates specific workloads. Broadcom's networking acceleration is legitimate acceleration.

But it's also clever branding opportunism. By associating the BCM4918 with the established APU name, Broadcom borrows some of the marketing equity AMD built. Marketing is about perception, and perception is influenced by familiar terms.

This probably won't change consumer behavior—most people just care if their router works—but it does signal Broadcom's strategic intent: they're not just building networking chips. They're positioning themselves as an accelerator platform vendor, competing in the same conceptual space as Nvidia (GPUs), Intel (CPUs), and AMD (APUs).

The Architectural Shift: From Horizontal to Vertical Specialization

Historically, processor evolution was horizontal: faster CPUs for everything. The BCM4918 represents vertical specialization: different processors for different jobs.

This shift has been ongoing for a decade:

- Smartphones: Qualcomm SoCs include CPU, GPU, modem, accelerators, security cores

- Data centers: AWS Trainium for ML, AWS Graviton for general compute, custom silicon for networking

- Networking equipment: Purpose-built processors for specific functions (routing, switching, inspection)

The BCM4918 brings this approach to consumer networking. It's not just a faster ARM CPU. It's a heterogeneous system: CPU for control, specialized engines for data movement, AI accelerator for intelligence, security subsystem for protection.

This trend will continue. The next generation (BCM4920?) will probably add more specialization: dedicated video processing, additional AI cores, enhanced security features. The "system on a chip" is gradually turning into something closer to an "ecosystem on a chip."

For consumers, this is invisible. You don't think about which processor handles Wi-Fi encryption or which handles packet forwarding. But it affects product capability, performance, power efficiency, and cost. The BCM4918 is a milestone in this architectural evolution.

Practical Implications: What You Should Actually Care About

If you're a consumer wondering whether any of this matters: it does, but indirectly.

The BCM4918's benefits accrue over time:

- 2026-2027: Early Wi-Fi 8 routers are faster, more stable, support more clients simultaneously

- 2027-2028: Vendors release firmware updates unlocking AI-driven features, better security

- 2028-2029: Prices drop, reliability improves, mainstream adoption begins

If you buy a router using the BCM4918 in 2026, you're getting:

- Faster peak throughput (probably 30-40% faster than Wi-Fi 6E)

- Better performance under high client density

- More room for future feature expansion through firmware

- Support for 10 GbE backhaul (if you upgrade your wired network too)

The practical differences in daily life? Marginal. Faster downloads, smoother video calls, snappier responses. Noticeable but not transformative.

Where the BCM4918 might matter more is business deployments or complex home networks. A small office with 30+ connected devices, a smart building with hundreds of sensors, or a content creator with high-demand upload/download patterns—those scenarios stress-test networking hardware. The BCM4918's data-plane separation and increased throughput become valuable.

For a typical home with 10-20 devices and moderate usage patterns? Wi-Fi 6E is still plenty. You can safely skip Wi-Fi 8 for another 2-3 years.

What's Missing: Unanswered Questions About the BCM4918

Broadcom's technical documentation is vague on several important points:

- Neural Engine specifications: Throughput (TOPS), supported models, memory capacity

- Packet processor throughput: Actual forwarding performance under various packet sizes

- Power consumption: In different operational modes (idle, typical, peak)

- Memory specifications: Cache sizes, memory bandwidth, buffer capacities

- Security audits: Independent verification of security claims

- Real-world performance: How do actual routers perform versus theoretical limits?

These details matter. Without them, it's hard to assess whether the BCM4918 actually delivers on its promises or if it's marketing hype with marginal improvements. Broadcom's reticence is probably strategic: they're not ready to defend claims against skeptics, so they keep things vague.
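To see why forwarding performance "under various packet sizes" matters, here is a minimal sketch of the standard Ethernet line-rate calculation. It assumes the usual 20 bytes of per-frame wire overhead (preamble plus inter-frame gap) and says nothing about what the BCM4918 itself achieves; the function name is illustrative only.

```python
# Per-frame wire overhead on Ethernet: 8-byte preamble/SFD + 12-byte inter-frame gap.
ETHERNET_OVERHEAD_BYTES = 20


def line_rate_pps(link_bps, frame_bytes):
    """Packets per second needed to fill a link with frames of one size."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return link_bps / bits_on_wire


# Compare minimum-size, mid-size, and maximum standard frames on a 10 Gbps link.
for size in (64, 512, 1518):
    mpps = line_rate_pps(10e9, size) / 1e6
    print(f"{size:>5}-byte frames: {mpps:.2f} Mpps")
```

Filling a 10 GbE link with minimum-size 64-byte frames takes roughly 14.88 million packets per second, while full-size 1518-byte frames need under one million. A chip that hits 10 Gbps only with large frames is a very different product from one that sustains it with small ones, which is why a single headline throughput number is not enough.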

We'll learn more when routers ship and independent reviewers test them. Teardowns will reveal PCB design, component choices, and thermal management. Firmware analysis will uncover what AI features actually exist. Real-world throughput tests will show if the chip lives up to expectations.

Until then, the BCM4918 is a technical promise, not a proven product. It might be revolutionary or merely incremental. The actual implementation depends on vendors and firmware, not just the chip.

Looking Forward: The Trajectory of Consumer Networking Hardware

The BCM4918 is a waypoint in a longer trajectory. Understanding where this is headed helps put current products in context.

The trend is clear: networking hardware is becoming more capable, more intelligent, and more specialized. What started as a simple wireless repeater is becoming an edge computing platform, an AI-powered management system, and a network security appliance.

Future evolution will likely include:

- Better AI integration: Larger neural engines, more sophisticated models, edge training

- Enhanced security: Hardware-based threat detection, micro-segmentation, zero-trust architecture

- 5G/6G readiness: Integration with cellular networks, not just Wi-Fi

- Open standards: Move toward software-defined networking, allowing customization

- Energy efficiency: Lower power consumption as competition drives optimization

Broadcom's BCM4918 is positioned well for this future. The quad-core CPU, dedicated accelerators, and flexible expansion interfaces provide a foundation for vendors to build on.

But execution matters. A great chip in poorly designed routers with bad firmware doesn't help anyone. Broadcom's contribution is enabling capability. Whether vendors deliver on that capability is the real question.

FAQ

What is the BCM4918 and how is it different from previous Broadcom processors?

The BCM4918 is Broadcom's flagship networking processor for Wi-Fi 8 access points. It builds on previous generations (like the BCM4916) by pairing a quad-core ARM CPU with a dedicated packet processor for data-plane operations, supporting native 10 GbE connectivity, including a Neural Engine for local AI inference, and consolidating these functions into a single 19x19mm package. The key architectural difference is the separation of control-plane and data-plane processing, which prevents traffic throughput from being bottlenecked by CPU availability.

Why does the BCM4918 include 10 GbE if most homes have 1 GbE internet connections?

Home internet connections are typically 100 Mbps to 1 Gbps, but the 10 GbE support in the BCM4918 is for wired backhaul between mesh router nodes, not WAN connections. Modern mesh systems with multiple nodes can aggregate significant data traffic across all wireless clients. If a mesh node has 20 clients simultaneously moving data, the backhaul link must handle the combined throughput. 10 GbE provides headroom for high-speed local network transfers without creating bottlenecks within your home network, separate from your internet connection speed.
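The backhaul arithmetic can be sketched in a few lines. The per-client rates below are hypothetical values chosen for illustration, not measurements or Broadcom figures; the point is only that simultaneous client traffic adds up on the link between nodes.

```python
# Link capacities in Mbps.
GIGABIT_MBPS = 1_000
TEN_GIGABIT_MBPS = 10_000


def backhaul_demand_mbps(client_rates_mbps):
    """Sum the simultaneous throughput of every client on one mesh node."""
    return sum(client_rates_mbps)


def link_utilization(demand_mbps, link_mbps):
    """Fraction of the backhaul link consumed; above 1.0 means saturated."""
    return demand_mbps / link_mbps


# Hypothetical mix of 20 clients: 4 heavy (400 Mbps), 6 medium (100 Mbps),
# and 10 light (10 Mbps) users, all active at once.
clients = [400] * 4 + [100] * 6 + [10] * 10
demand = backhaul_demand_mbps(clients)

for name, capacity in (("1 GbE", GIGABIT_MBPS), ("10 GbE", TEN_GIGABIT_MBPS)):
    print(f"{name}: {link_utilization(demand, capacity):.0%} utilized")
```

With this assumed mix, the node needs 2,300 Mbps of backhaul: more than double what a Gigabit link can carry, but under a quarter of a 10 GbE link.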

What does the Neural Engine actually do, and will it make a practical difference in performance?

The Neural Engine is dedicated hardware for machine learning inference—running trained models on new data. Potential applications include traffic classification, threat detection, client profiling, and anomaly detection. However, Broadcom has not published detailed specifications about throughput, supported models, or power consumption. Practical impact depends entirely on how vendors implement these features in firmware. Generic "AI" claims without specifics usually indicate minimal real-world functionality. The Neural Engine's actual utility will become clear when Wi-Fi 8 routers arrive in 2026 and vendors reveal what they've implemented.

Will I need to upgrade my home network infrastructure to use Wi-Fi 8 routers with the BCM4918?

If you're upgrading purely for Wi-Fi 8 wireless performance, you don't need to change anything. Your existing Wi-Fi clients will work fine with new Wi-Fi 8 access points. However, if you want to take advantage of 10 GbE backhaul capabilities, you'll need to upgrade your wired networking equipment (switches, cabling, network adapters). This is optional and only beneficial for homes with extensive mesh deployments or very high local network throughput demands. For typical home networks running at Gigabit speeds, existing Cat 5e or Cat 6 cabling is sufficient; 10 GbE over copper (10GBASE-T) generally calls for Cat 6a, or Cat 6 on runs up to roughly 55 meters.

How does separating control-plane and data-plane operations improve network performance?

Traditionally, access points route all traffic through a single CPU. Configuration changes, software updates, and network monitoring operations compete with active data forwarding for CPU cycles. When the CPU is busy managing the device, throughput drops. The BCM4918 dedicates a quad-core CPU for control-plane tasks (firmware, settings, diagnostics) and a separate specialized packet processor for forwarding traffic. This means network throughput continues at full speed even when the device is busy with management tasks, significantly improving performance under high loads and complex configurations.
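A toy model makes the difference concrete. This is a deliberately simplified sketch: it assumes forwarding capacity on a shared CPU shrinks linearly with management load, and the 10 Gbps capacity figure is a placeholder rather than a published BCM4918 specification.

```python
def _check_load(management_load):
    """Validate that the load is a fraction of CPU time between 0.0 and 1.0."""
    if not 0.0 <= management_load <= 1.0:
        raise ValueError("management_load must be between 0.0 and 1.0")


def shared_cpu_throughput(capacity_gbps, management_load):
    """Single-CPU design: management work steals cycles from forwarding."""
    _check_load(management_load)
    return capacity_gbps * (1.0 - management_load)


def split_plane_throughput(capacity_gbps, management_load):
    """Split design: a separate packet processor forwards regardless of CPU load."""
    _check_load(management_load)
    return capacity_gbps


CAPACITY_GBPS = 10.0  # placeholder capacity, assumed for illustration

# Sweep the management load from idle to heavily busy.
for load in (0.0, 0.25, 0.5, 0.75):
    shared = shared_cpu_throughput(CAPACITY_GBPS, load)
    split = split_plane_throughput(CAPACITY_GBPS, load)
    print(f"management load {load:.0%}: shared {shared:.1f} Gbps, split {split:.1f} Gbps")
```

Real hardware is messier (the packet processor still needs the CPU to set up flows, and memory bandwidth is shared), but the model captures the core claim: in the split design, management activity no longer subtracts directly from forwarding throughput.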

When will Wi-Fi 8 routers using the BCM4918 actually be available for consumers?

Broadcom announced the BCM4918 for "2026 mesh router launches," but the term is intentionally vague. Early Wi-Fi 8 routers using this chip will likely arrive in Q2-Q3 2026, with broader availability in late 2026 and throughout 2027. Early products will be premium-priced ($300+), and prices will likely drop to more competitive levels by 2028-2029 as second-generation products arrive and volumes increase. If you're considering upgrading, waiting until 2027 gives firmware time to mature and prices time to drop.

Is the BCM4918 more power-efficient than previous-generation networking processors?

Broadcom hasn't published comprehensive power consumption specifications. Consolidating multiple functions onto one die typically reduces power overhead from interconnects and power delivery, potentially making the BCM4918 more efficient than multi-chip designs. However, the quad-core CPU and dedicated accelerators consume power regardless. The actual power efficiency will depend on implementation details (how well power gating works, clock gating optimization, voltage scaling) that vendors don't publicly disclose. Independent teardowns and testing will reveal the true power characteristics once products arrive.

What competitive advantages does renaming the processor as an "APU" provide to Broadcom?

The naming leverages brand recognition from AMD's APU products—which consumers associate with enhanced performance and dedicated acceleration. Calling the BCM4918 an "APU" is technically defensible (it does accelerate network processing) while benefiting from positive marketing associations. It also signals Broadcom's strategic positioning as an accelerator platform vendor competing alongside Nvidia (GPUs) and other specialized processor makers. From a practical standpoint, the name doesn't affect functionality, but marketing perception influences purchasing decisions.

What are the main limitations or tradeoffs of consolidating everything onto one chip?

System-on-Chip consolidation trades flexibility for efficiency. You can't easily upgrade or swap individual components as you could with multi-chip designs. Thermal management becomes critical due to power density—the 19x19mm package concentrates significant compute. The chip can't selectively disable all unused components, so there's baseline power consumption regardless of workload. Vendors have less flexibility in customizing each subsystem. If one part of the chip (like the memory interface) has a bug, you can't upgrade just that component—you're stuck with the entire chip's limitations. These tradeoffs are acceptable for most consumers but limit design flexibility for vendors.

How does the BCM4918 compare to competing Wi-Fi 8 processors from Qualcomm and MediaTek?

Broadcom, Qualcomm, and MediaTek are all developing Wi-Fi 8 access point processors. Specific comparisons are difficult because vendors don't publish detailed specifications. The BCM4918 appears to emphasize integrated 10 GbE (not all competitors offer this), dedicated packet processing, and local AI acceleration. Qualcomm and MediaTek likely have similar approaches with different emphasis areas. Real-world comparisons will only be possible when multiple Wi-Fi 8 routers arrive and independent reviewers test them side-by-side. Until then, it's impossible to declare a definitive winner—each chip likely excels in different scenarios.

Conclusion: A Milestone in Edge Computing at Home

The BCM4918 isn't revolutionary. It's evolutionary. Broadcom has done what good hardware companies do: taken proven concepts from enterprise networking (data-plane separation, specialized accelerators, edge processing) and adapted them for consumer access points.

The significance is architectural. By separating control and data planes, Broadcom unlocked throughput potential that was artificially constrained by CPU limitations. By integrating 10 GbE, they acknowledged that home networks are becoming more sophisticated. By adding a Neural Engine, they laid groundwork for AI-driven features that probably won't arrive until 2027 or later.

What's important to understand is the timeline. The BCM4918 isn't available today. Routers using it won't arrive until mid-2026. Firmware features won't mature until 2027-2028. Real-world benefits won't be obvious until then.

If you're shopping for a router today, Wi-Fi 6E is still the smart choice. It's proven, affordable, and more than sufficient for current home networking needs. If you're willing to wait and want the latest technology, Wi-Fi 8 routers in 2027 will offer measurable improvements: better performance under high client density, lower latency, potential for AI-driven optimization.

The BCM4918 represents Broadcom's vision for what edge computing looks like in the home: intelligent, local, capable of handling complexity without shipping every decision to the cloud. Whether vendors fulfill that vision with innovative firmware remains to be seen. The hardware provides the foundation. Software will determine the outcome.

For now, the BCM4918 is a technical achievement worth understanding—not because you need to care about it today, but because it signals where home networking is headed. Edge computing is moving from data centers into homes. Access points are becoming network intelligence hubs. The era of dumb networking hardware is ending.

Broadcom's APU is one marker on that journey.

Key Takeaways

- BCM4918 separates control-plane (CPU) and data-plane (packet processor) operations, eliminating CPU bottlenecks that plague older designs

- 10GbE integration addresses mesh router backhaul demands: wired connections between nodes now have 10x the capacity of traditional Gigabit Ethernet

- Neural Engine hardware enables local AI inference for traffic classification and threat detection without cloud dependency or latency penalty

- Consolidation to single 19x19mm chip reduces PCB complexity, power distribution overhead, and total component count versus multi-chip designs

- Wi-Fi 8 routers using BCM4918 will arrive in mid-2026, with early products premium-priced ($300+) and mainstream adoption expected by 2028-2029
