Building Safe AI for Teens: OpenAI's New Open Source Tools [2025]

Last month, OpenAI released a set of open-source tools designed to help developers make AI applications safer for teens. The tools consist mainly of prompts that help applications handle common online risks such as graphic violence, inappropriate content, and dangerous behaviors. This article explains what the tools are, how to implement them, and how they are raising the bar for AI safety.

TL;DR

- OpenAI's new prompts: Pre-made safety policies that give developers a starting point for protecting teen users.

- Key challenges addressed: Violence, inappropriate content, and harmful behaviors.

- Easy integration: Works with OpenAI's models and with models from other providers.

- Collaborative development: Created with input from AI safety experts.

- Future-proofing AI: Raises the bar for AI safety standards.

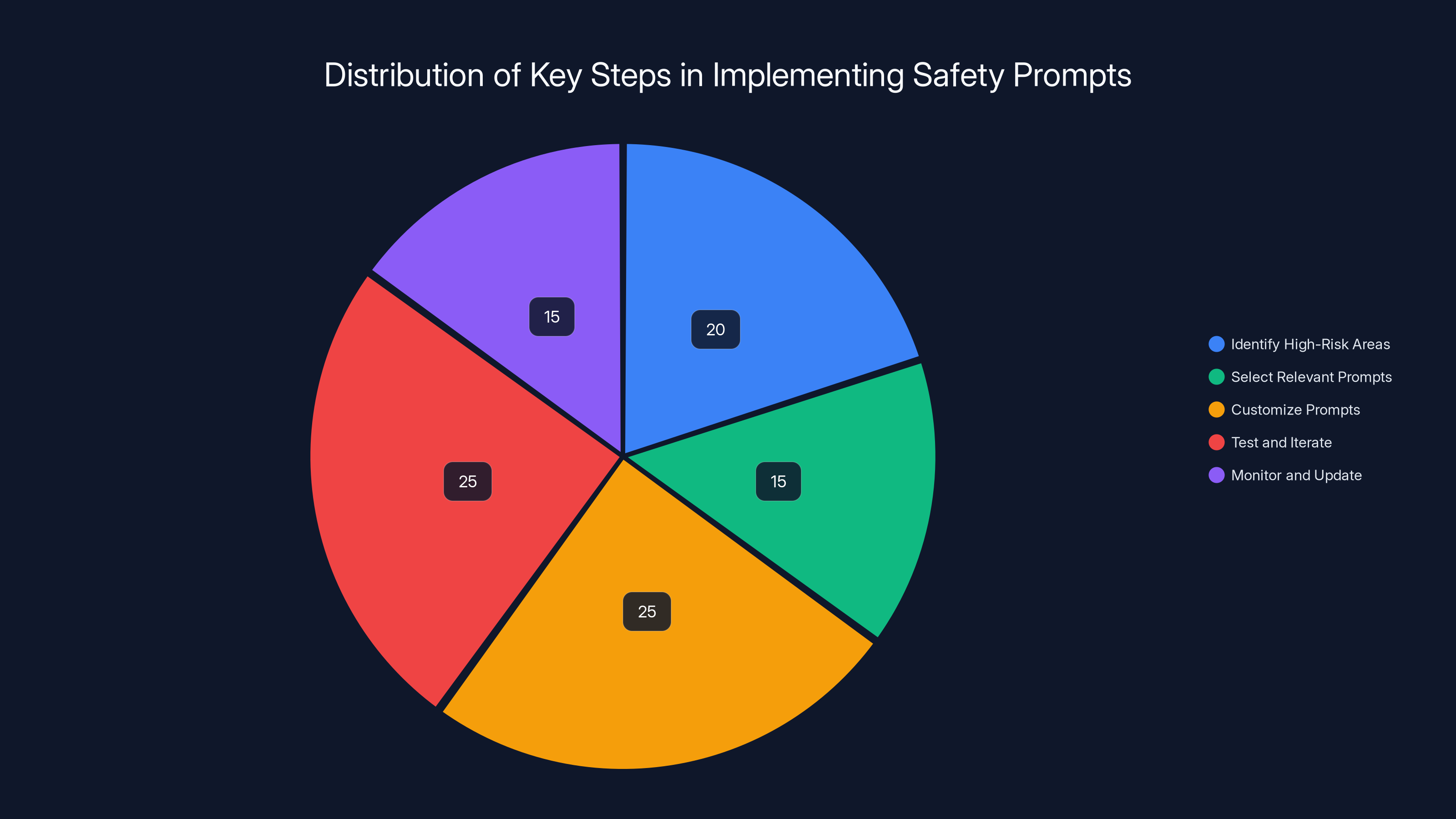

Estimated data showing the distribution of time spent on each step of implementing OpenAI's safety prompts. Customization and testing are the most time-intensive stages.

Why Teen Safety in AI Matters

Teens are among the most active user groups online, so keeping them safe is a priority. As AI spreads into everyday applications, these products need safety measures designed specifically for younger users. As the Cato Institute has highlighted, teens face a wide range of online dangers, from exposure to harmful content to participation in risky online challenges.

The Role of AI in Modern Applications

AI is increasingly embedded in social media, educational platforms, and health apps. This has brought real benefits, such as personalized learning and more engaging products. It also introduces risks, especially when AI systems inadvertently expose teens to inappropriate content or encourage harmful behaviors, as the BBC's analysis discusses.

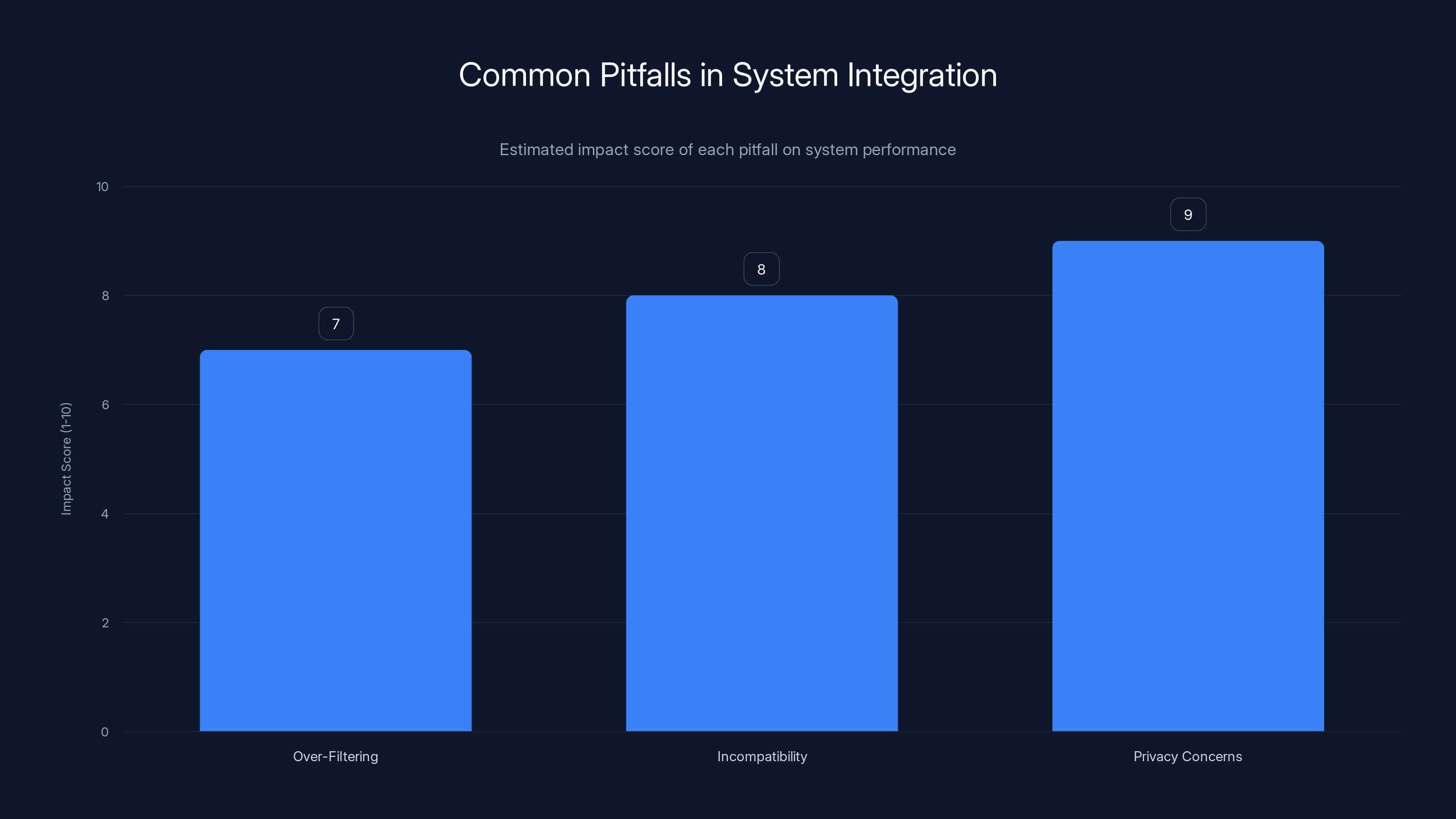

Privacy concerns have the highest impact score, indicating a critical area to address. Estimated data.

OpenAI's Open Source Safety Tools: An Overview

OpenAI's latest initiative gives developers a set of prompts designed to address safety concerns before harmful content reaches users. The prompts act as a safety net, helping AI applications maintain a baseline standard of safety for teen users.
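In practice, a prompt-based safety policy is usually supplied to a model as instructions alongside the content to be checked, and the application then reads back a verdict. The sketch below assumes a chat-style message format (a list of dictionaries with `role` and `content` keys, a convention shared by many chat model APIs) and a policy that asks the model to answer with a single label. The policy text, label names, and function names are hypothetical placeholders, not OpenAI's published prompts. The parser fails closed: any reply that is not a recognized label is treated as unsafe.

```python
# Hypothetical policy text; substitute the actual prompt you adopt.
TEEN_SAFETY_POLICY = (
    "You are a content classifier for an app used by teenagers. "
    "Label the user content as SAFE or UNSAFE. UNSAFE includes graphic "
    "violence, sexual content, and encouragement of dangerous challenges. "
    "Answer with exactly one word: SAFE or UNSAFE."
)

VALID_LABELS = {"SAFE", "UNSAFE"}


def build_messages(policy: str, content: str) -> list[dict[str, str]]:
    """Pair the policy (as instructions) with the content to classify."""
    return [
        {"role": "system", "content": policy},
        {"role": "user", "content": content},
    ]


def parse_verdict(raw_output: str) -> str:
    """Map the model's reply to a label, failing closed on anything unexpected."""
    label = raw_output.strip().upper().rstrip(".")
    return label if label in VALID_LABELS else "UNSAFE"


messages = build_messages(TEEN_SAFETY_POLICY, "How do I bake bread?")
print(messages[0]["role"], len(messages))  # system 2
print(parse_verdict(" safe. "))            # SAFE
print(parse_verdict("I'm not sure"))       # UNSAFE (fail closed)
```

Failing closed trades some over-filtering for safety when the model's output is malformed, which suits an audience of minors.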

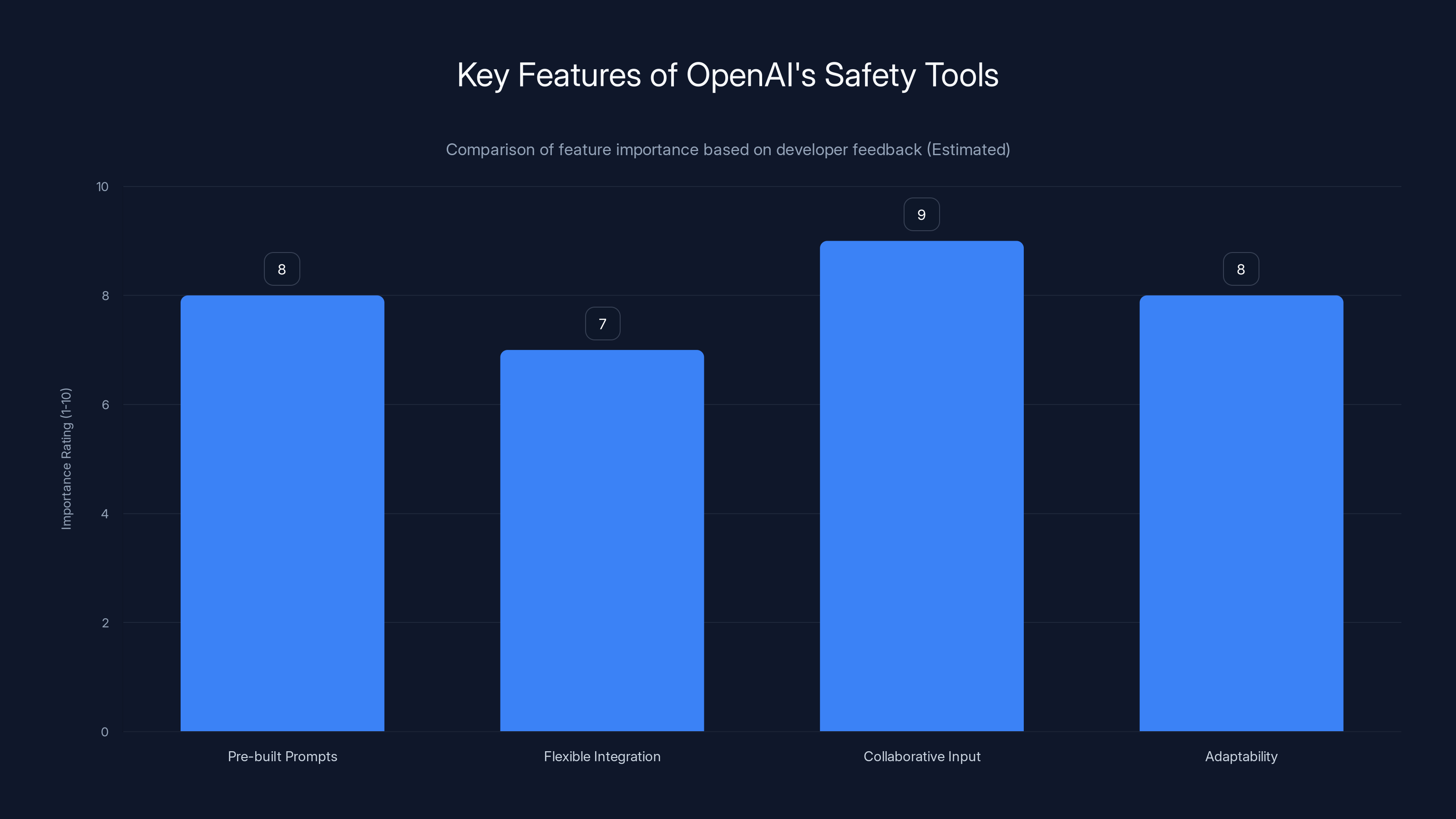

Key Features of OpenAI's Safety Tools

- Pre-built Prompts: Ready-to-use prompts that address a wide range of safety issues.

- Flexible Integration: Compatible with different AI models, not just those in OpenAI's ecosystem.

- Collaborative Input: Developed with insights from AI safety watchdogs and educational bodies.

- Adaptability: Prompts can be customized to fit specific application needs.
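Customization usually means keeping the shared policy text intact and filling in application-specific details. Here is a minimal sketch using Python's standard `string.Template`, assuming a hypothetical policy template with `$app_name`, `$min_age`, and `$extra_rules` placeholders. These names and the app name "StudyBuddy" are illustrative only, not part of OpenAI's prompts.

```python
from string import Template

# Hypothetical base policy with placeholders for app-specific details.
BASE_POLICY = Template(
    "You moderate content for $app_name, whose users are $min_age and older. "
    "Flag graphic violence, sexual content, and dangerous challenges.$extra_rules"
)


def customize_policy(app_name: str, min_age: int, extra_rules: list[str]) -> str:
    """Fill the template; substitute() raises KeyError if a placeholder is missing."""
    extra = "".join(f" Also flag: {rule}." for rule in extra_rules)
    return BASE_POLICY.substitute(
        app_name=app_name, min_age=min_age, extra_rules=extra
    )


policy = customize_policy("StudyBuddy", 13, ["sharing home addresses"])
print(policy)
```

Using `substitute()` rather than `safe_substitute()` means a forgotten placeholder raises an error instead of silently shipping an incomplete policy.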

Real-World Applications

Developers can integrate these prompts into chatbots, educational software, and social media platforms to keep content appropriate and safe for teen users. For instance, a social media app could use them to filter out graphic violence and inappropriate language in real time, as noted in upGrad's integration of OpenAI tools.
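A real-time filter typically sits between content submission and display. Each post is classified, and the result decides whether it is shown, held for review, or hidden. In the sketch below, `classify` is a hypothetical keyword-based stand-in for the model call that would apply the safety prompt. A real deployment would replace it, and the keyword lists exist only so the example runs.

```python
from dataclasses import dataclass

# Placeholder signals; a real system would call a model with the safety prompt.
BLOCK_TERMS = {"gore", "blackout challenge"}
REVIEW_TERMS = {"fight"}


@dataclass
class Decision:
    action: str  # "allow", "review", or "block"
    reason: str


def classify(text: str) -> Decision:
    """Hypothetical stand-in for a model-backed classifier."""
    lowered = text.lower()
    for term in BLOCK_TERMS:
        if term in lowered:
            return Decision("block", f"matched '{term}'")
    for term in REVIEW_TERMS:
        if term in lowered:
            return Decision("review", f"matched '{term}'")
    return Decision("allow", "no policy match")


def render_feed(posts: list[str]) -> list[str]:
    """Show allowed posts, hold borderline ones, and hide blocked ones."""
    shown = []
    for post in posts:
        decision = classify(post)
        if decision.action == "allow":
            shown.append(post)
        elif decision.action == "review":
            shown.append("[held for review]")
        # blocked posts are omitted entirely
    return shown


print(render_feed(["Great game last night!", "Video of the fight", "so much gore"]))
# ['Great game last night!', '[held for review]']
```

The middle "review" tier is one way to avoid forcing every borderline case into a hard allow-or-block decision.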

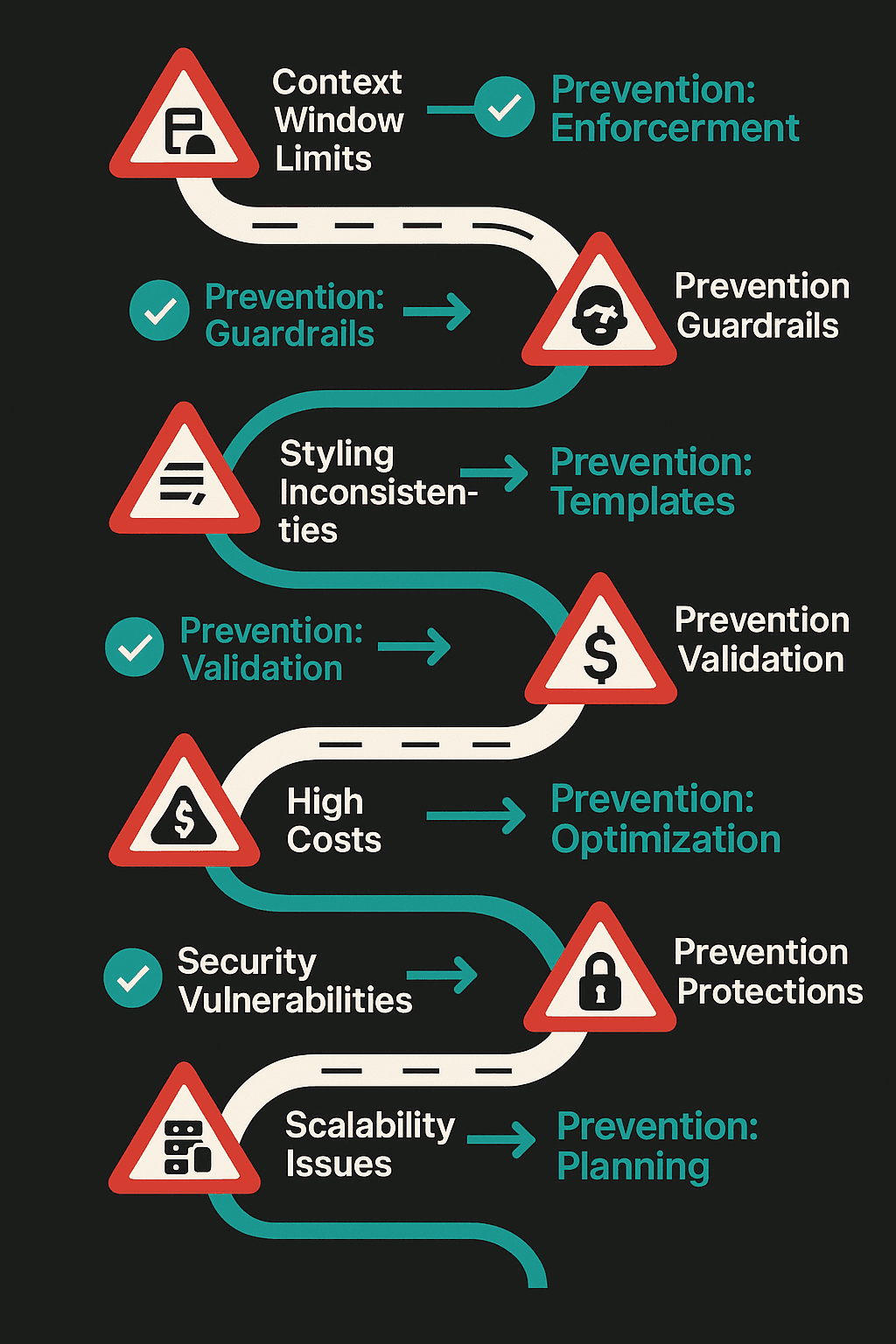

Implementing OpenAI's Safety Prompts: A Step-by-Step Guide

Adopting OpenAI's safety tools works best as a planned process rather than a one-off change. Here's how developers can integrate these prompts into their applications:

- Identify High-Risk Areas: Start by analyzing your application to identify features that may pose risks to teen users.

- Select Relevant Prompts: Choose prompts from OpenAI's library that align with the identified risks.

- Customize Prompts: Tailor the prompts to fit the specific context and needs of your application.

- Test and Iterate: Implement the prompts in a controlled environment, gather feedback, and refine as necessary.

- Monitor and Update: Continuously monitor application performance and update prompts to address new risks.
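The Test and Iterate step is easier to act on with numbers. One common approach, sketched here under the assumption that you have a small hand-labeled test set, is to compare the filter's decisions against the labels and track precision (how many blocked items were truly harmful) and recall (how many harmful items were caught). Low precision points to over-filtering; low recall points to gaps in coverage. The `keyword_filter` below is a hypothetical placeholder for whatever prompt-backed classifier you are testing.

```python
from typing import Callable


def evaluate(
    is_blocked: Callable[[str], bool], labeled: list[tuple[str, bool]]
) -> dict[str, float]:
    """Compare filter output with ground-truth labels (True = harmful)."""
    tp = fp = fn = 0
    for text, harmful in labeled:
        blocked = is_blocked(text)
        if blocked and harmful:
            tp += 1
        elif blocked and not harmful:
            fp += 1
        elif not blocked and harmful:
            fn += 1
    # Convention: report 1.0 when there is nothing to measure.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return {"precision": precision, "recall": recall}


def keyword_filter(text: str) -> bool:
    """Hypothetical placeholder for the classifier under test."""
    return "fight" in text.lower()


test_set = [
    ("Watch this street fight video", True),
    ("Pillow fight at the sleepover", False),
    ("Try the blackout challenge", True),
    ("Homework help for algebra", False),
]
print(evaluate(keyword_filter, test_set))
# {'precision': 0.5, 'recall': 0.5}
```

Rerunning the same labeled set after each prompt change shows whether a revision actually helped or just moved errors around.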

Collaborative input is rated as the most important feature, highlighting the value of diverse insights in developing safety tools. Estimated data based on typical feedback trends.

Common Pitfalls and Solutions

Pitfall 1: Over-Filtering of Content

Solution: Balance is key. Filtering harmful content is essential, but over-filtering blocks legitimate posts and frustrates users, which reduces engagement. Use machine learning algorithms to adjust sensitivity levels dynamically based on user feedback.
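Machine learning is one option. A simpler, rule-based version of the same feedback loop nudges the blocking threshold in small steps. The sketch assumes the classifier returns a risk score between 0 and 1, that upheld appeals signal over-filtering, and that reports of missed harm signal under-filtering. The threshold is clamped to fixed bounds so feedback can never switch the filter off. `ThresholdTuner`, the step size, and the bounds are hypothetical illustrations, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class ThresholdTuner:
    """Adjusts a block threshold on a 0-1 risk score from user feedback."""

    threshold: float = 0.5
    step: float = 0.02
    floor: float = 0.3    # strictest allowed setting (lowest threshold)
    ceiling: float = 0.7  # most lenient allowed setting; feedback cannot exceed it

    def should_block(self, risk_score: float) -> bool:
        return risk_score >= self.threshold

    def record_upheld_appeal(self) -> None:
        """A blocked item was judged safe on appeal: block slightly less."""
        self.threshold = min(self.ceiling, self.threshold + self.step)

    def record_missed_harm(self) -> None:
        """Harmful content got through: block slightly more."""
        self.threshold = max(self.floor, self.threshold - self.step)


tuner = ThresholdTuner()
print(tuner.should_block(0.51))  # True
tuner.record_upheld_appeal()
print(tuner.should_block(0.51))  # False (threshold is now about 0.52)
```

The ceiling matters most here: without it, a coordinated wave of appeals could gradually loosen the filter for everyone.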

Pitfall 2: Incompatibility with Existing Systems

Solution: OpenAI's prompts are designed to be flexible. Make sure your system's architecture supports modular integration, and consider using API gateways to manage communication between systems, as recommended by Microsoft's security blog.
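One way to keep the safety layer modular is to have application code depend on a small interface rather than on a specific model client, so the backend can change without touching callers. The sketch uses Python's `typing.Protocol`. `SafetyClassifier`, `KeywordBackend`, `FailClosedBackend`, and `moderate` are hypothetical names. The keyword backend stands in for an adapter that would call an OpenAI model, another provider's model, or a service behind an API gateway.

```python
from typing import Protocol


class SafetyClassifier(Protocol):
    """Anything that can label text against a safety policy."""

    def is_unsafe(self, text: str) -> bool: ...


class KeywordBackend:
    """Hypothetical local backend; a model-backed adapter would share the signature."""

    def __init__(self, terms: set[str]) -> None:
        self.terms = {t.lower() for t in terms}

    def is_unsafe(self, text: str) -> bool:
        lowered = text.lower()
        return any(term in lowered for term in self.terms)


class FailClosedBackend:
    """Used when the real backend is unreachable: treat everything as unsafe."""

    def is_unsafe(self, text: str) -> bool:
        return True


def moderate(text: str, classifier: SafetyClassifier) -> str:
    """Application code depends only on the Protocol, not on a vendor SDK."""
    return "[removed]" if classifier.is_unsafe(text) else text


print(moderate("see you at practice", KeywordBackend({"gore"})))  # see you at practice
print(moderate("see you at practice", FailClosedBackend()))       # [removed]
```

Because `Protocol` uses structural typing, backends do not need to inherit from `SafetyClassifier`; any class with a matching `is_unsafe` method fits.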

Pitfall 3: User Privacy Concerns

Solution: Implement transparent data-handling policies, and make sure users and their guardians understand how their data is used and protected. Compliance with regulations such as GDPR is non-negotiable.
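Transparent data handling is easier when the system stores as little as possible. Below is a minimal sketch of data minimization for moderation logs. It assumes you need an audit trail of decisions but not the content itself. The user ID is replaced with a keyed hash (HMAC-SHA256 from Python's standard library), and the message text is never written. The hard-coded key and the function names are hypothetical placeholders; a real key would come from a secrets store. Pseudonymized data still counts as personal data under GDPR, so this reduces exposure rather than removing obligations.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Placeholder key for illustration only; load a real key from a secrets store.
LOG_KEY = b"replace-with-secret-key"


def pseudonymize(user_id: str) -> str:
    """Keyed hash so raw user IDs never appear in the moderation log."""
    return hmac.new(LOG_KEY, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def moderation_log_entry(user_id: str, category: str, action: str) -> str:
    """Record the decision and policy category, but not the message text."""
    entry = {
        "user": pseudonymize(user_id),
        "category": category,
        "action": action,
        "at": datetime.now(timezone.utc).isoformat(timespec="seconds"),
    }
    return json.dumps(entry)


print(moderation_log_entry("user-1234", "graphic_violence", "block"))
```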

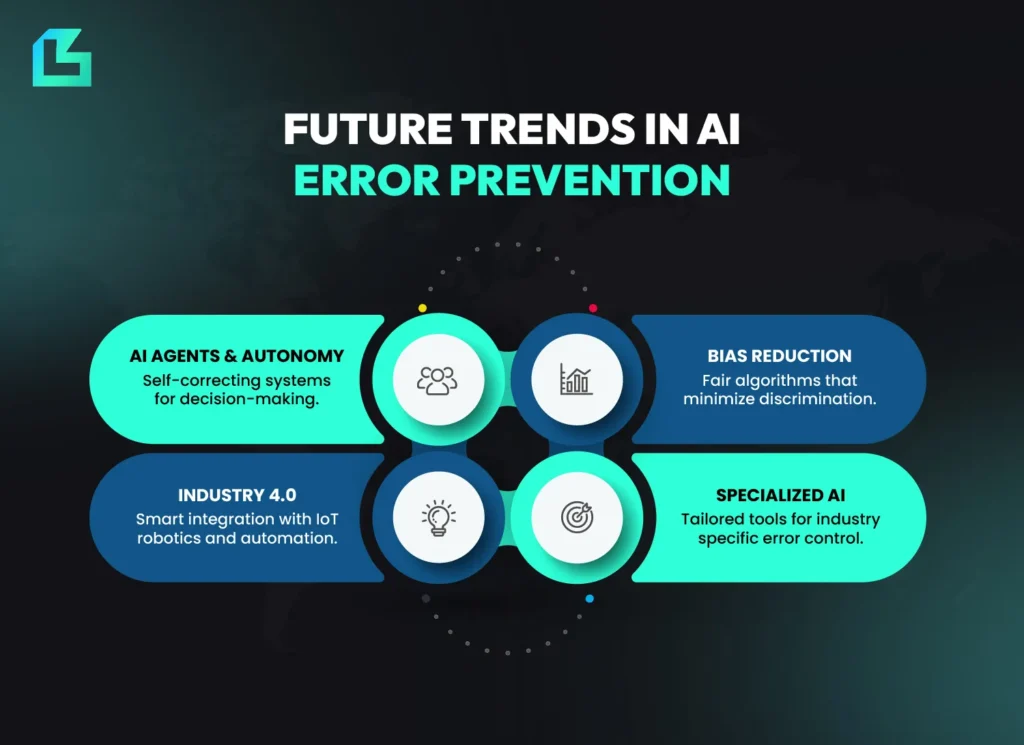

The Future of AI Safety

As AI continues to evolve, so must our approach to safety. OpenAI's tools are just the beginning. Future trends in AI safety will likely include:

- AI Audits: Regularly scheduled evaluations of AI systems to ensure compliance with safety standards.

- Adaptive Safety Protocols: AI that can autonomously adjust its safety measures based on contextual changes.

- Increased Collaboration: Ongoing partnerships between tech companies, educational institutions, and regulatory bodies to enhance safety, as discussed in the Urban Institute's report.

Recommendations for Developers

- Stay Informed: Keep up with the latest developments in AI safety to ensure your applications remain compliant and safe.

- User-Centric Design: Prioritize user safety and experience in your design process. Engage with teen users to gather insights and feedback.

- Community Engagement: Participate in forums and workshops focused on AI safety to share knowledge and learn from peers.

Conclusion

OpenAI's open-source tools are a significant step forward for the safety of teen users in AI applications. By giving developers the resources to address safety concerns proactively, OpenAI is helping set a new standard for AI safety. As the digital landscape evolves, these tools are likely to play an important role in protecting the next generation of digital natives.

Key Takeaways

- OpenAI's tools provide developers with ready-made solutions for enhancing AI safety for teens.

- The tools address critical safety concerns, including inappropriate content and harmful behaviors.

- Developers can easily integrate these prompts into their existing systems with minimal disruption.

- Continuous monitoring and updates are essential to maintain safety standards.

- The future of AI safety will involve adaptive protocols and increased collaboration.

FAQ

What are OpenAI's new safety tools?

OpenAI's new safety tools are a set of open-source prompts designed to help developers build safer AI applications for teens. The prompts address issues like graphic violence, inappropriate content, and harmful behaviors.

How do these safety prompts work?

The prompts are pre-built safety policies that developers integrate into their applications to filter and manage content, helping keep it appropriate and safe for teen users.

What are the benefits of using OpenAI's safety tools?

Benefits include improved user safety, support for meeting regulatory requirements, and the ability to implement safety measures quickly without building them from scratch.

Can these tools be used with non-OpenAI models?

Yes, the prompts are designed to be compatible with a variety of AI models, making them versatile for different applications.

What challenges do these tools address?

The tools address challenges such as exposure to graphic violence, inappropriate content, harmful body ideals, and dangerous online challenges.

How can developers ensure ongoing safety in AI applications?

Developers should implement continuous monitoring, regularly update safety protocols, and engage with user feedback to maintain and improve safety standards.
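Continuous monitoring can start with something as simple as tracking the share of blocked items over a rolling window and flagging sudden shifts. A shift may indicate a new harmful trend, a broken integration, or an over-eager prompt change. Here is a sketch using `collections.deque`. The class name, window size, baseline rate, and tolerance are hypothetical values you would derive from your own traffic.

```python
from collections import deque


class BlockRateMonitor:
    """Tracks the fraction of blocked items over the last `window` decisions."""

    def __init__(self, window: int, baseline: float, tolerance: float) -> None:
        self.recent: deque[bool] = deque(maxlen=window)
        self.baseline = baseline    # expected block rate, e.g. from past weeks
        self.tolerance = tolerance  # allowed absolute deviation before alerting

    def record(self, blocked: bool) -> None:
        self.recent.append(blocked)

    def block_rate(self) -> float:
        return sum(self.recent) / len(self.recent) if self.recent else 0.0

    def needs_attention(self) -> bool:
        """Alert only once the window is full, to avoid noisy early readings."""
        if len(self.recent) < self.recent.maxlen:
            return False
        return abs(self.block_rate() - self.baseline) > self.tolerance


monitor = BlockRateMonitor(window=100, baseline=0.02, tolerance=0.03)
for i in range(100):
    monitor.record(i % 10 == 0)  # simulated traffic: every 10th item blocked
print(monitor.block_rate(), monitor.needs_attention())  # 0.1 True
```

Alerting on deviation in either direction catches both a spike in blocks (possible over-filtering or a new threat) and a sudden drop (possibly a classifier that silently stopped working).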
