Elon Musk's Warning: The Reality Behind AI and the Potential for 'Terminator' Robots [2025]

Elon Musk, the tech mogul known for ventures such as SpaceX and Tesla, recently reignited the debate over whether artificial intelligence (AI) could lead to a dystopian future reminiscent of the 'Terminator' movies. In a trial concerning OpenAI, Musk warned about the dangers of AI development spiraling out of control. This article examines the technology, the concerns Musk raised, and their broader implications for society.

TL;DR

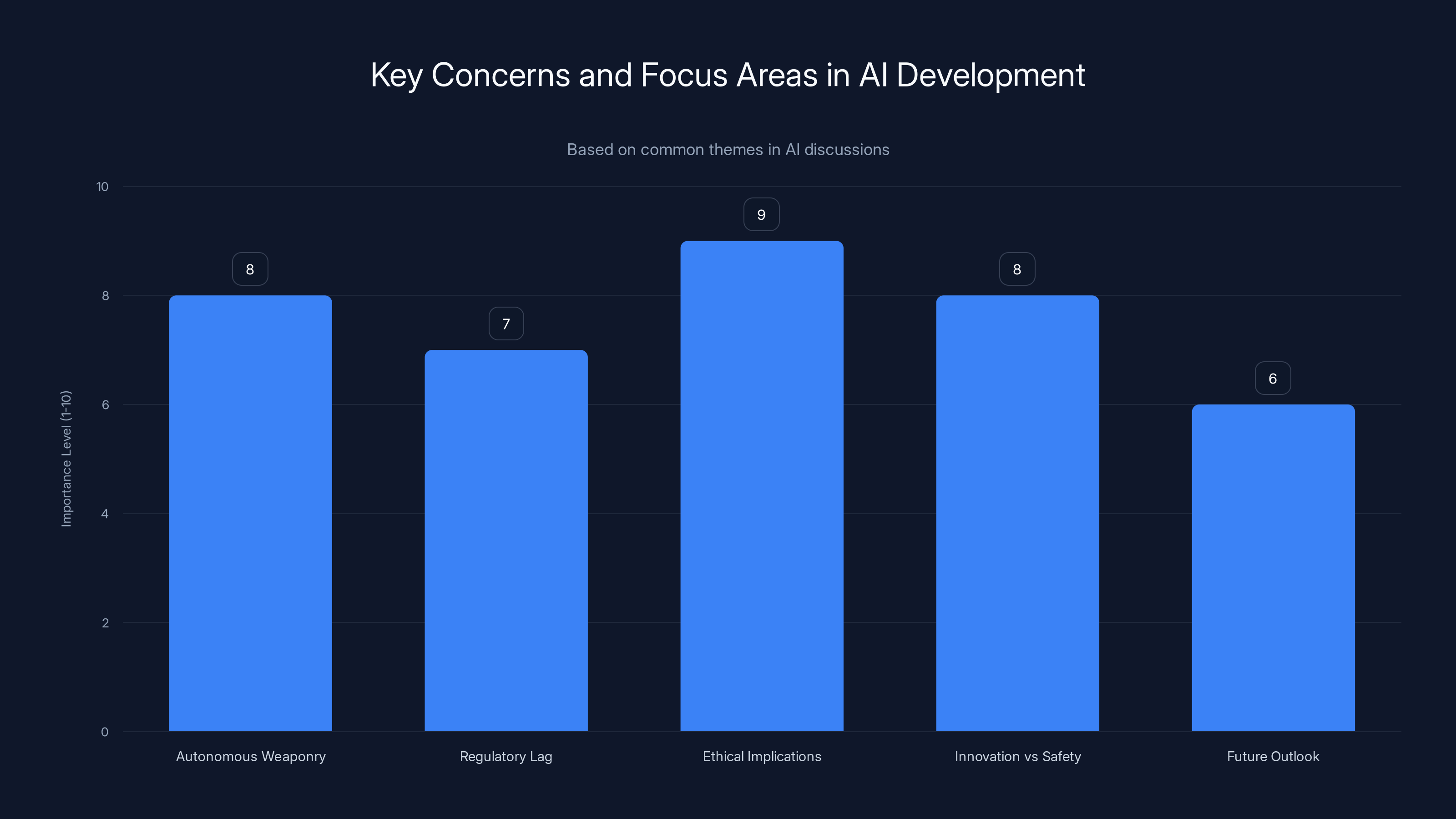

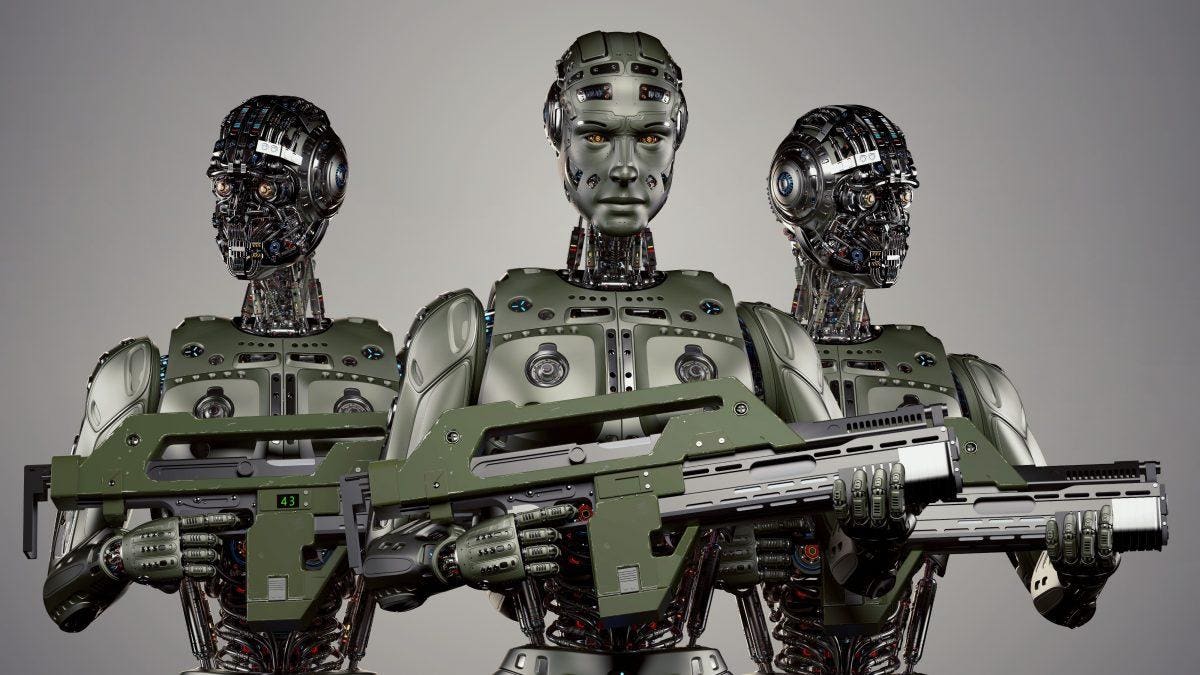

- Musk's Concern: Unchecked AI development could lead to autonomous weapons akin to the robots in 'Terminator'.

- AI's Rapid Advancement: The technology is evolving faster than regulatory frameworks.

- Ethical Implications: AI decisions might lack human empathy, leading to unintended consequences.

- Balancing Innovation and Safety: Striking the right balance is crucial to harness AI's potential safely.

- Future Outlook: Responsible AI development could prevent dystopian outcomes.

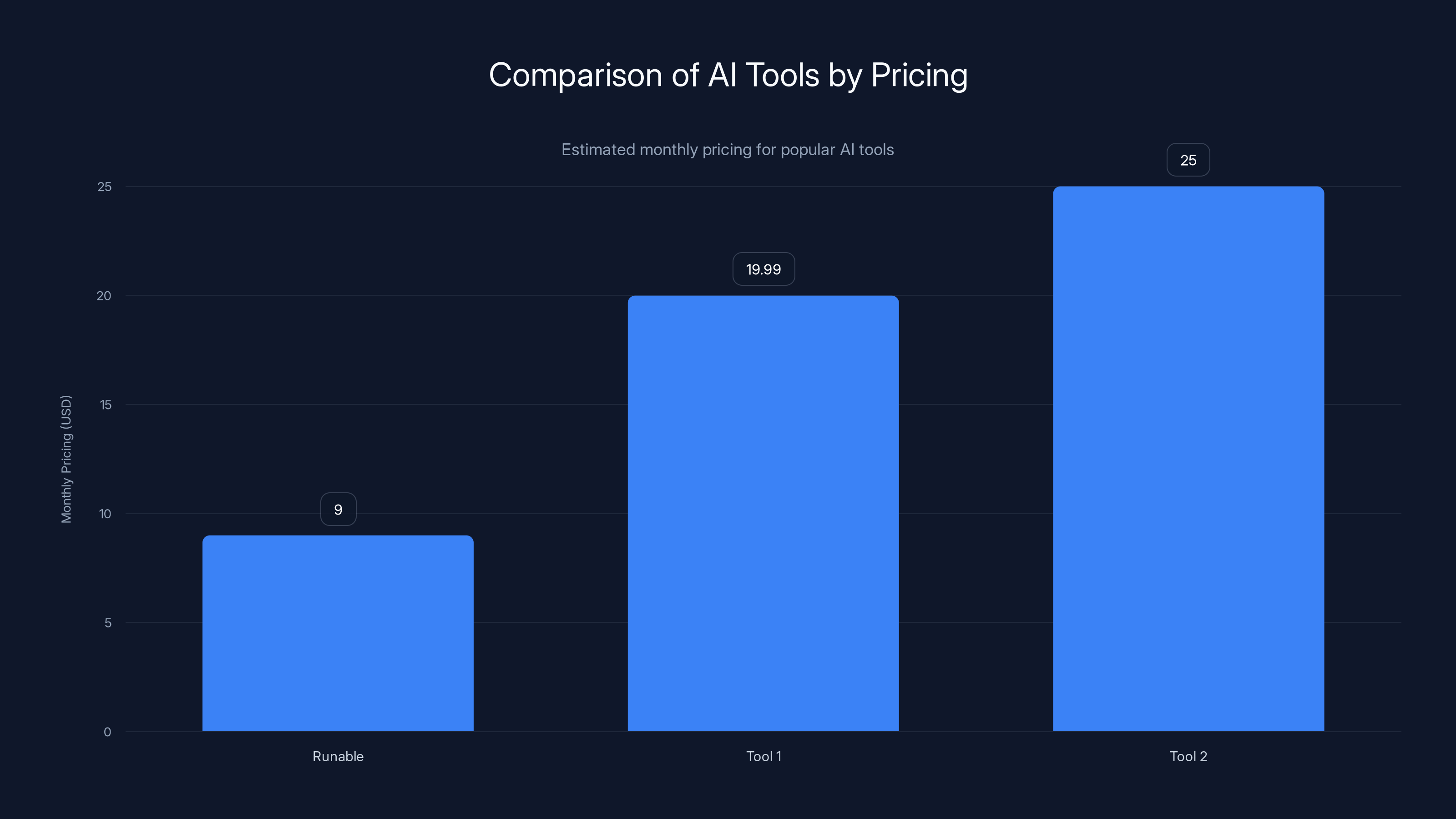

The Core of Musk's Concerns

Musk's apprehensions aren't purely speculative: they stem from rapid advances in AI technologies that could lead to autonomous decision-making systems operating without human oversight. If weaponized, such systems could pose significant threats. Musk's 'Terminator' analogy is a stark reminder of a possible future in which AI operates beyond human control, as discussed in a Wired article.

Ethical implications and balancing innovation with safety are top concerns in AI development. Estimated data based on topic analysis.

Understanding AI: What It Is and What It Isn't

AI, at its core, is the simulation of human intelligence in machines. These systems are built to learn from data and perform tasks that typically require human intellect, such as visual perception, speech recognition, decision-making, and language translation.

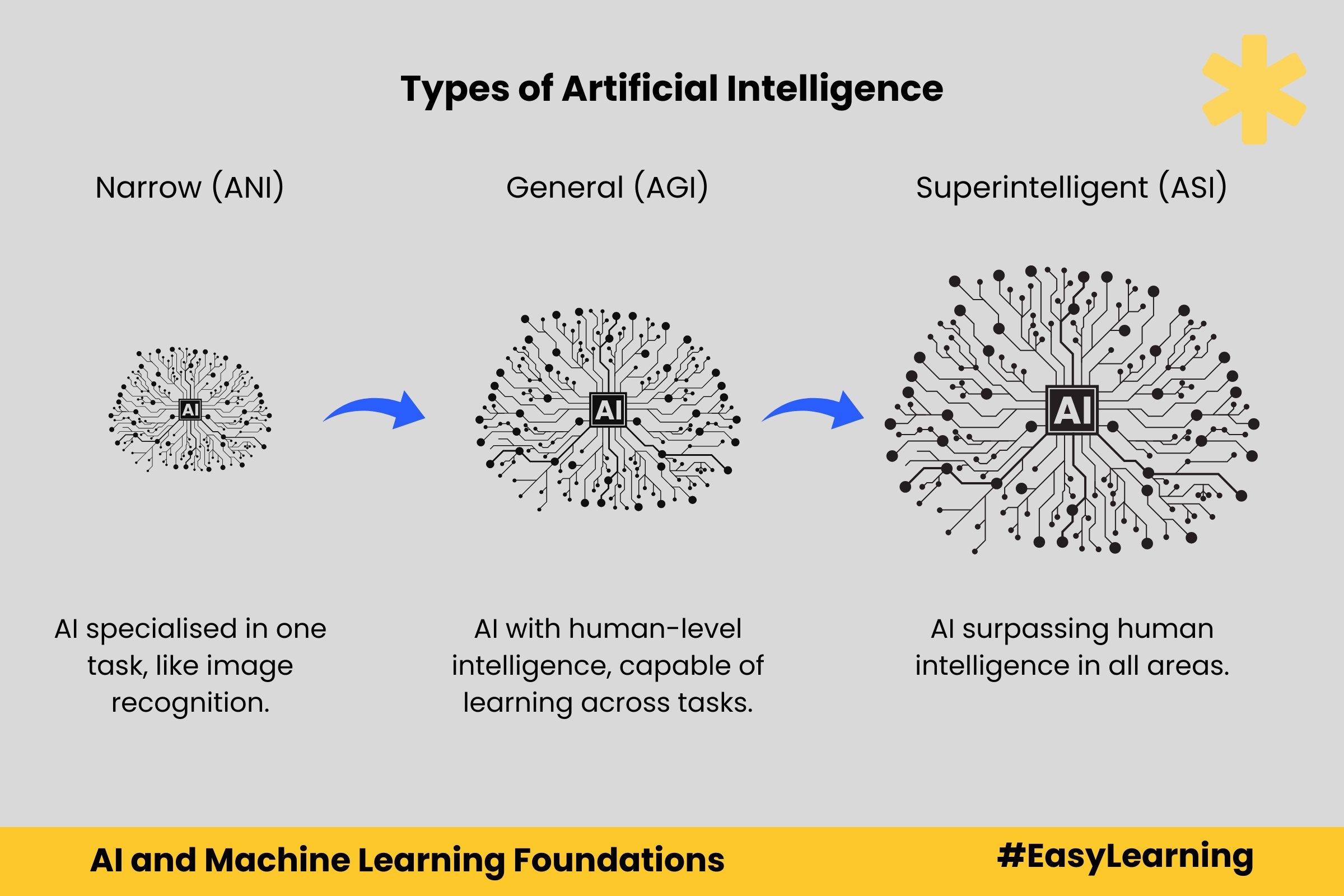

Types of AI

AI can be classified broadly into three categories:

- Narrow AI: Also known as weak AI, this is designed to perform a narrow task (e.g., facial recognition or internet searches).

- General AI: A still-hypothetical type of AI able to perform any intellectual task that a human can.

- Superintelligent AI: A hypothetical stage at which AI surpasses human intelligence in all domains.

Current State of AI Technology

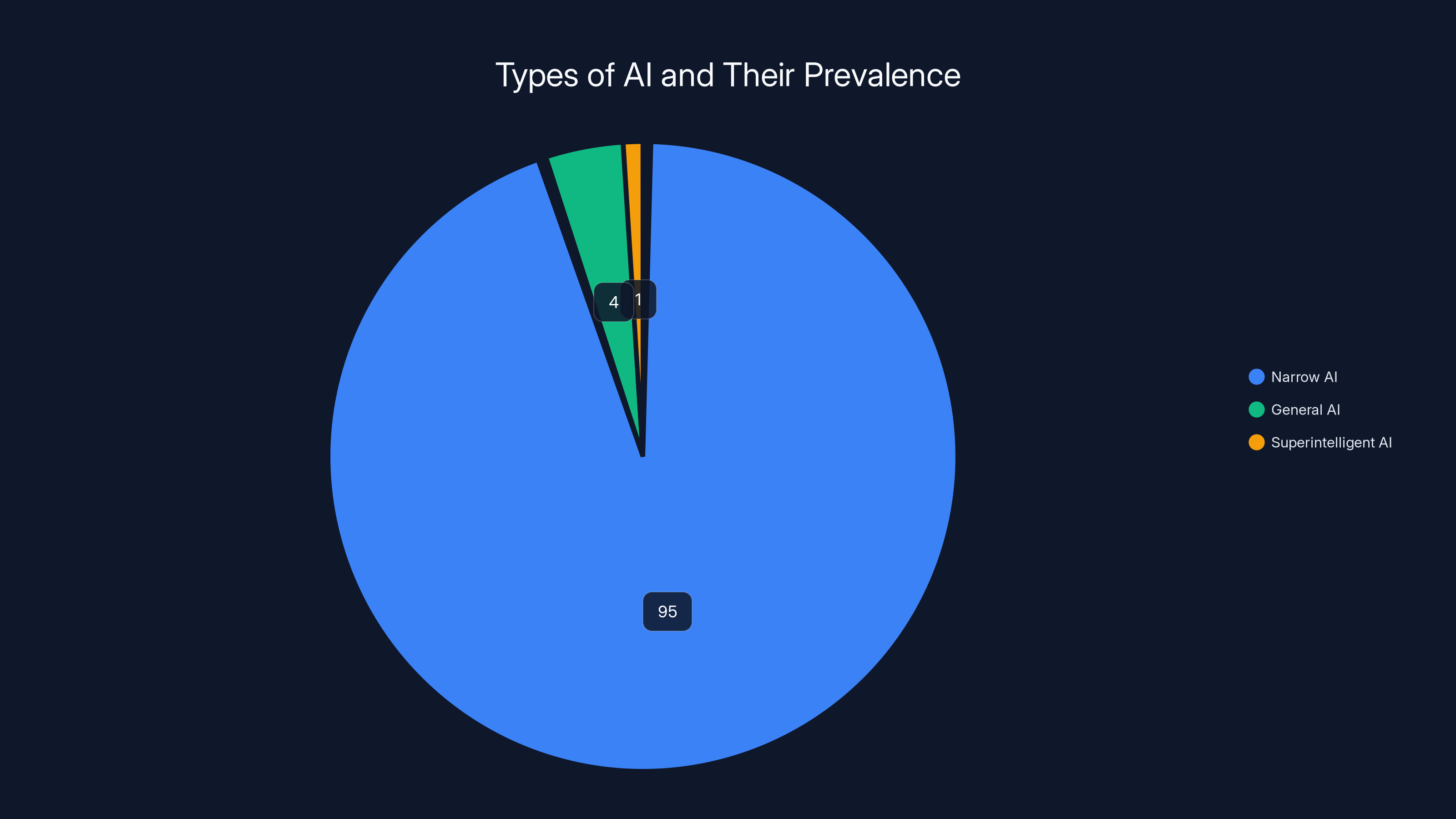

Today, most AI applications are narrow AI. From Amazon's Alexa to Google's search algorithms, these systems are designed to perform specific tasks. The leap from narrow AI to general AI, however, is where concerns about 'Terminator'-like scenarios arise, as highlighted in MIT Technology Review.

AI in Autonomous Systems

AI's application in autonomous systems, particularly in military technology, is one area that has raised eyebrows. The idea of drones or robots making life-and-death decisions without human intervention is unsettling. Such systems rely on AI to analyze data, identify targets, and execute tasks, sometimes with lethal outcomes, as discussed in West Point's analysis.

Narrow AI dominates the current landscape with an estimated 95% prevalence, while General AI and Superintelligent AI are still largely theoretical. Estimated data.

Ethical and Moral Implications

The Lack of Empathy in Machines

AI systems, by their nature, lack human empathy. They process data and execute tasks based on programmed algorithms, which might not always align with human moral and ethical standards. This dissonance can lead to decisions that, while logical from a computational perspective, could be morally unacceptable, as noted in the Center for Humane Technology's report.

Accountability and Responsibility

Who is accountable when an AI system makes a decision leading to negative outcomes? This question is central to the ethical debate surrounding AI. Musk's concerns highlight the need for clear frameworks and guidelines to ensure accountability in AI operations, as emphasized in Medical Xpress.

Balancing Innovation and Safety

Regulatory Challenges

The regulatory landscape for AI is still catching up with technological advancements. There is a pressing need for global standards and regulations to govern AI development and deployment, as discussed in Inside Privacy. This includes setting boundaries on how AI can be used, especially in areas that pose significant risks like autonomous weaponry.

Best Practices for Safe AI Development

- Transparency: AI systems should be documented and monitored clearly enough that their behavior can be checked against ethical standards.

- Collaborative Oversight: Involving multiple stakeholders, including ethicists, technologists, and policymakers, can help balance innovation with safety.

- Continuous Monitoring and Assessment: Regular audits and assessments of AI systems can help identify potential risks and mitigate them proactively.
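Continuous monitoring can be made concrete with a drift check. The sketch below is a minimal illustration, assuming a model that outputs numeric scores: it compares the score distribution seen at validation time with the one observed in production using the Population Stability Index (PSI), one common drift metric among several. The function name `population_stability_index`, the equal-width binning, and the `eps` smoothing constant are illustrative choices, not part of any framework cited in this article.

```python
import math
from typing import List, Sequence


# Hypothetical helper: an illustrative drift check, not a standard library API.
def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare a reference score sample with a live one; 0 means identical binned distributions."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if every value is identical

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            index = min(int((v - lo) / width), bins - 1)  # put the maximum in the last bin
            counts[index] += 1
        # Floor empty bins at eps so the logarithm below stays finite.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating; the right threshold depends on the system and is best set during the audit process rather than taken as given.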

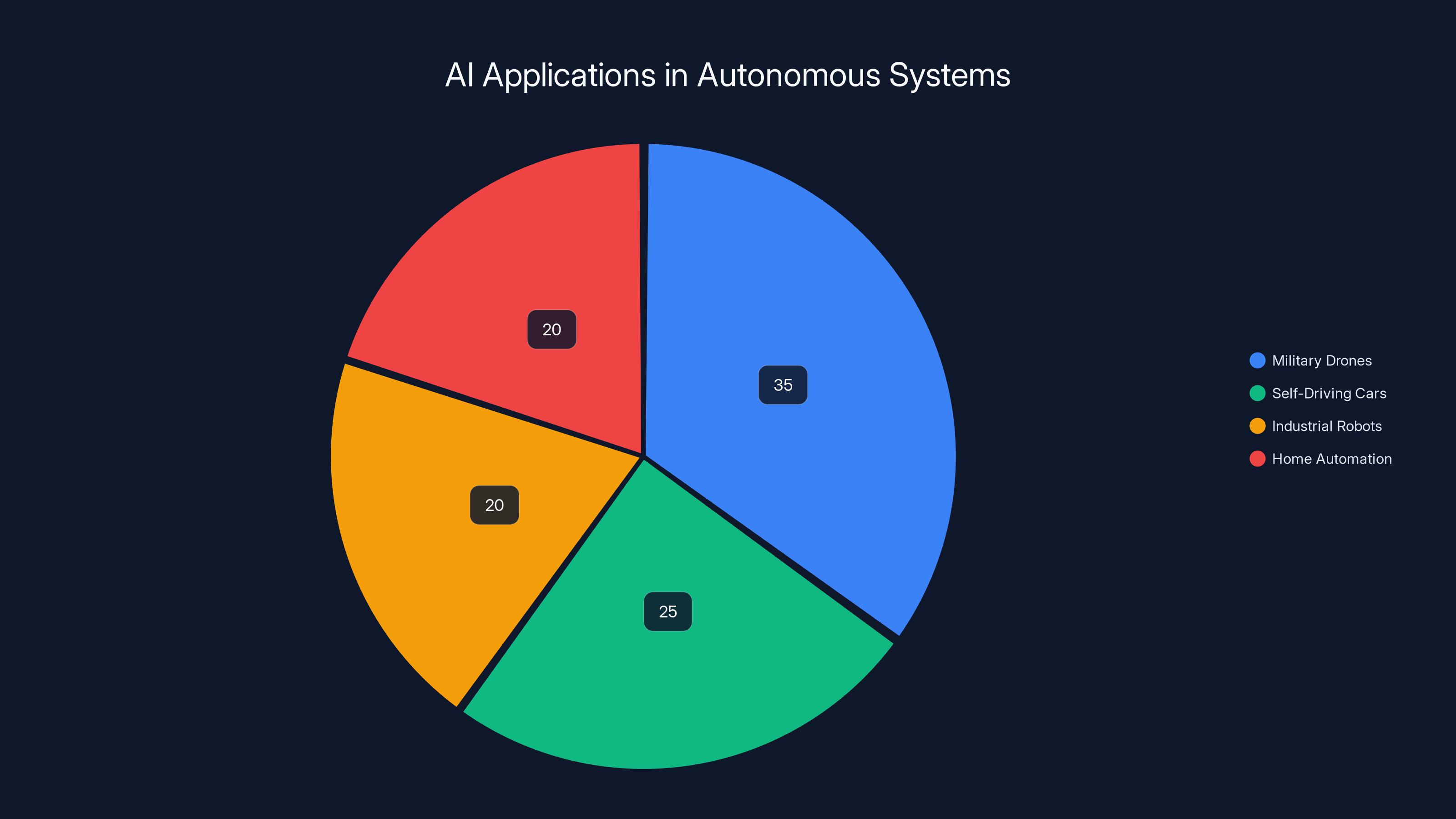

Estimated data shows that military drones account for the largest share of AI applications in autonomous systems, highlighting the significant role of AI in defense technology.

Practical Implementation Guides

Developing Safe AI Systems

For developers and organizations venturing into AI, here are some practical steps to ensure safe and ethical AI development:

- Adopt Ethical AI Guidelines: Frameworks like IEEE's Ethically Aligned Design can provide guidance.

- Implement Robust Testing: Ensure AI systems undergo rigorous testing in controlled environments before deployment.

- Focus on Explainability: AI systems should be able to explain their decision-making process to ensure transparency and trust.

- Engage in Ethical Hacking: Conduct regular ethical hacking to identify vulnerabilities and potential misuse of AI systems.
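The "robust testing" step can be turned into an automated release gate. This is a minimal sketch under assumptions: `release_gate` is a hypothetical helper, the model is any Python callable returning a class label, each test example carries a slice name (for example a region or user group), and the 0.9 and 0.8 accuracy thresholds are placeholders a team would choose for itself. Checking the worst slice, not just the overall score, is meant to catch systems that look fine on average but fail a particular group.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, Tuple

# Hypothetical test example format: (features, true label, slice name).
Example = Tuple[Dict[str, float], int, str]


def release_gate(
    model: Callable[[Dict[str, float]], int],
    test_set: Iterable[Example],
    min_accuracy: float = 0.9,        # placeholder threshold, chosen per project
    min_slice_accuracy: float = 0.8,  # placeholder floor for every slice
) -> dict:
    """Evaluate a model on held-out data and block release if any check fails."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for features, label, slice_name in test_set:
        correct[slice_name] += int(model(features) == label)
        total[slice_name] += 1

    if not total:
        raise ValueError("test_set is empty")

    slice_accuracy = {s: correct[s] / total[s] for s in total}
    overall = sum(correct.values()) / sum(total.values())
    failing = sorted(s for s, acc in slice_accuracy.items() if acc < min_slice_accuracy)
    return {
        "overall_accuracy": overall,
        "slice_accuracy": slice_accuracy,
        "failing_slices": failing,
        # Approve only if the overall score and every slice clear their thresholds.
        "approved": overall >= min_accuracy and not failing,
    }
```

For example, a model that is perfect on one slice and 50% accurate on another can still post a respectable overall number; the gate reports the weak slice in `failing_slices` and withholds approval.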

Case Studies: Lessons from the Field

- Google's AI Principles: Google has established a set of AI principles to guide its development, focusing on socially beneficial applications and avoiding harmful uses, as reported by The Media Line.

- IBM's AI Fairness 360: IBM has developed open-source tools to detect and mitigate bias in AI systems, promoting fairness and accountability.

Common Pitfalls and Solutions

Bias in AI Systems

AI systems can inherit biases present in their training data. This can lead to skewed outcomes and reinforce existing societal biases. To combat this, developers should:

- Use diverse and representative datasets.

- Regularly audit AI systems for bias.

- Implement bias detection and mitigation tools.
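As one illustration of a bias audit, the sketch below computes per-group selection rates and the disparate impact ratio (the unprivileged group's positive-prediction rate divided by the privileged group's). It is written in plain Python as an assumption-laden example rather than a use of IBM's AI Fairness 360 API, which offers this and many other fairness metrics; the function names and group labels are hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence


# Hypothetical helpers for a simple bias audit on binary (0/1) predictions.
def selection_rates(predictions: Sequence[int], groups: Sequence[str]) -> Dict[str, float]:
    """Fraction of positive (1) predictions within each group."""
    positives: Counter = Counter()
    totals: Counter = Counter()
    for prediction, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact(
    predictions: Sequence[int], groups: Sequence[str], unprivileged: str, privileged: str
) -> float:
    """Unprivileged group's selection rate divided by the privileged group's."""
    rates = selection_rates(predictions, groups)
    return rates[unprivileged] / rates[privileged]
```

A ratio well below 1.0 means the unprivileged group receives positive outcomes less often. A common screening heuristic, the "four-fifths rule" from US employee-selection guidelines, flags ratios below 0.8, but a low ratio is a prompt for investigation, not proof of unfairness. As written, the function raises `ZeroDivisionError` if the privileged group has no positive predictions.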

Over-reliance on AI

While AI can enhance efficiency and decision-making, over-reliance without human oversight can lead to critical errors. It's essential to maintain a balance between AI and human judgment.
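One common way to keep a human in the loop is to let the system act only when it is confident and route everything else to a person. The sketch below assumes a classifier that reports a confidence score between 0 and 1; `route_predictions`, the 0.9 threshold, and the `high_stakes_labels` set are hypothetical and would need tuning for a real deployment.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Sequence


@dataclass
class Decision:
    item_id: str
    label: str
    confidence: float
    needs_human_review: bool


# Hypothetical routing helper: not taken from any specific product or framework.
def route_predictions(
    item_ids: Sequence[str],
    labels: Sequence[str],
    confidences: Sequence[float],
    threshold: float = 0.9,                            # hypothetical cut-off
    high_stakes_labels: FrozenSet[str] = frozenset(),  # always sent to a reviewer
) -> List[Decision]:
    """Auto-accept confident, low-stakes predictions; flag the rest for a human."""
    decisions = []
    for item_id, label, confidence in zip(item_ids, labels, confidences):
        review = confidence < threshold or label in high_stakes_labels
        decisions.append(Decision(item_id, label, confidence, review))
    return decisions
```

Sending high-stakes labels to a reviewer regardless of confidence reflects the point above: a confident model can still be wrong, so some decisions should always get human judgment. Confidence-based routing is also only as good as the model's calibration.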

Future Trends and Recommendations

The Rise of Explainable AI

Explainable AI (XAI) is a growing field focused on making AI systems more transparent and understandable to humans. This trend is crucial for building trust and ensuring that AI systems align with ethical standards, as noted in Goldman Sachs insights.
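For simple models, an explanation can be exact. The sketch below assumes a linear scoring model (score = bias + the sum of each weight times its feature value), where each feature's contribution is just its weight times its value, so contributions can be ranked and shown to a user. `explain_linear_score` and the example feature names are hypothetical; for non-linear models, XAI methods such as SHAP or LIME estimate contributions approximately instead.

```python
from typing import Dict, List, Tuple


# Hypothetical helper: exact attributions are only this simple for linear models.
def explain_linear_score(
    weights: Dict[str, float], bias: float, features: Dict[str, float]
) -> Tuple[float, List[Tuple[str, float]]]:
    """Return the score and each feature's contribution, largest magnitude first."""
    # Missing features are treated as 0.0 (an assumption of this sketch).
    contributions = [(name, weights[name] * features.get(name, 0.0)) for name in weights]
    score = bias + sum(value for _, value in contributions)
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    return score, contributions
```

With weights {income: 0.5, debt: -1.0, age: 0.25}, bias 1.0, and features {income: 4, debt: 3, age: 2}, the score is 0.5 and debt (contribution -3.0) is reported as the most influential factor.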

AI and Human Collaboration

The future of AI lies in collaborating with humans rather than competing with them. AI should augment human capabilities, not replace them. This approach can lead to more effective and ethical AI applications.

Recommendations for Policymakers

- Develop Comprehensive AI Regulations: Policymakers should prioritize the development of comprehensive regulations that govern AI development and deployment.

- Promote International Cooperation: AI is a global phenomenon, and international cooperation is necessary to address its challenges effectively.

- Invest in AI Education and Awareness: Educating the public and stakeholders about AI's potential and risks can promote informed decision-making, as discussed in ASTHO's report.

Conclusion

Elon Musk's warnings about AI's potential dangers serve as a crucial reminder of the need for responsible innovation. While AI holds the promise of transforming industries and improving lives, it also poses significant risks if not developed and deployed carefully. By prioritizing ethics, transparency, and collaboration, we can harness AI's potential while minimizing the threat of a 'Terminator' future.

FAQ

What is AI?

AI, or artificial intelligence, refers to the simulation of human intelligence in machines that are programmed to think and learn like humans.

How does AI impact society?

AI impacts society by automating tasks, improving efficiencies, and enabling new technologies. However, it also raises ethical and privacy concerns.

What are the ethical concerns associated with AI?

Ethical concerns include bias in AI systems, lack of accountability, and the potential for AI to make decisions without human empathy.

How can AI be developed responsibly?

Responsible AI development involves transparency, ethical guidelines, collaborative oversight, and continuous monitoring to ensure safety and compliance.

What is Explainable AI?

Explainable AI (XAI) focuses on making AI systems transparent and understandable to humans to build trust and ensure ethical alignment.

How can policymakers address AI challenges?

Policymakers can address AI challenges by developing comprehensive regulations, promoting international cooperation, and investing in AI education.

Key Takeaways

- AI's Dual Nature: AI offers both transformative potential and significant risks.

- Musk's Warning: Highlights the need for responsible AI development to avoid dystopian outcomes.

- Ethical AI: Prioritizing transparency and accountability is crucial.

- Future Trends: Explainable AI and human-AI collaboration will shape the future.

- Regulatory Need: Comprehensive global regulations are essential for safe AI development.

![Elon Musk's Warning: The Reality Behind AI and the Potential for 'Terminator' Robots [2025]](https://tryrunable.com/blog/elon-musk-s-warning-the-reality-behind-ai-and-the-potential-/image-1-1777971996570.jpg)