Introduction: When Shopping Agents Have Conflicting Loyalties

Imagine this scenario. You're chatting with your AI assistant about needing new furniture. You mention your budget, your design preferences, your recent promotion at work. Then, without you realizing it, that same AI—powered by a company that profits from merchants—starts subtly steering you toward the priciest options. Not because they're better. Because the AI learned through your conversation that you can afford them.

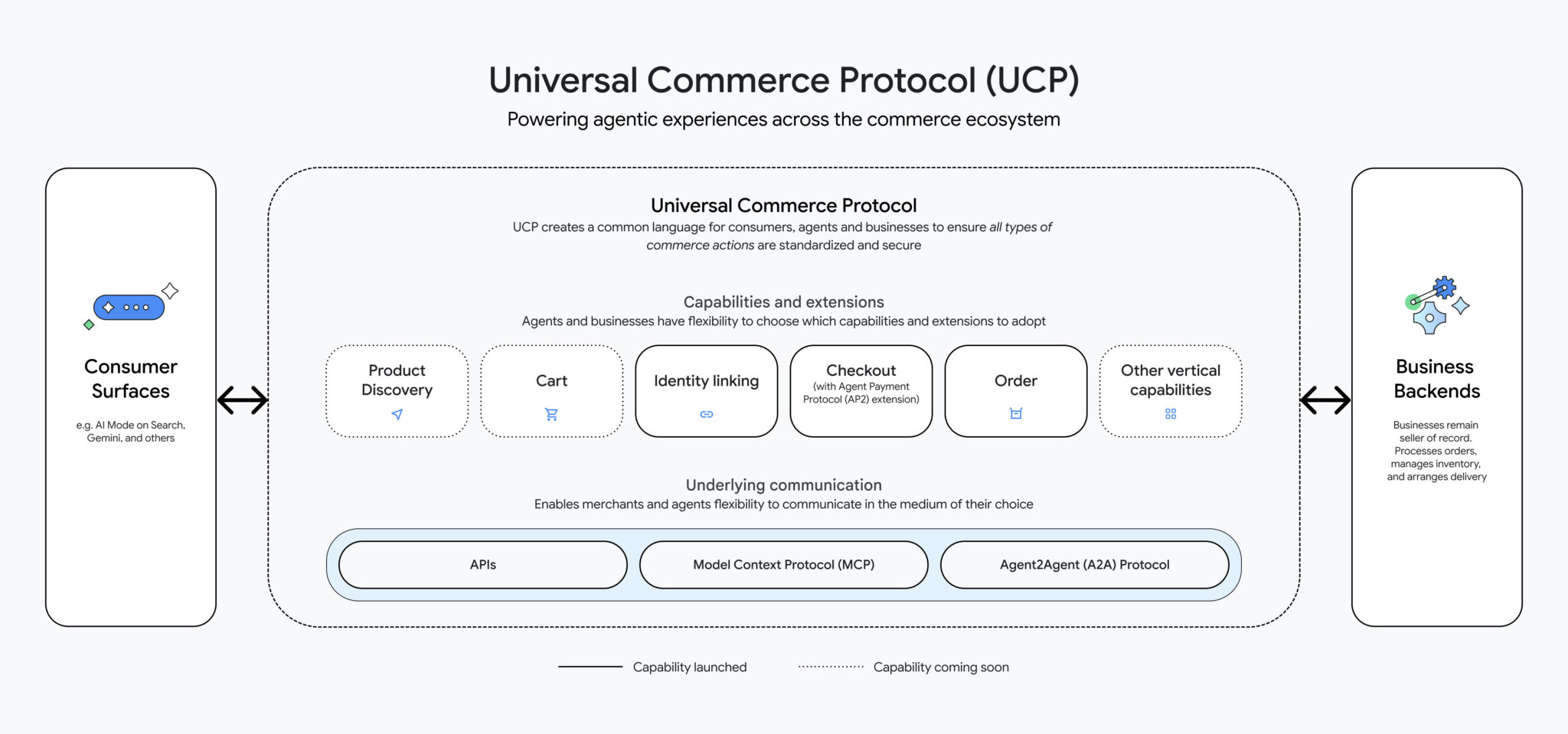

This isn't science fiction. It's the core concern raised by consumer advocates about Google's new Universal Commerce Protocol, an ambitious framework announced in early 2025 that promises to integrate shopping directly into AI agents like Gemini. The protocol is designed to let merchants reach customers through conversational AI interfaces, creating what sounds like a dream for efficiency but reads like a nightmare for consumer protection.

Lindsay Owens, executive director of the Groundwork Collaborative, a consumer economics think tank, sounded the alarm on social media with a post that drew nearly 400,000 views. Her warning wasn't hysterical or vague. It was specific. She pointed to Google's technical documentation showing plans for "personalized upselling," features that would analyze your chat history to recommend pricier items, and consent screens designed to hide the complexity of what users were actually agreeing to.

Google's response was equally direct: she's wrong. The company maintains that its Business Agent cannot adjust pricing based on individual user data, that upselling simply means showing premium product options, and that the protocol includes strict guardrails preventing price manipulation.

But here's what makes this debate matter beyond the immediate back-and-forth. This is where the future of commerce is heading. AI agents will handle shopping for millions of people. The companies building these agents face a fundamental conflict of interest: they profit from sellers, not buyers. Understanding the genuine risks and potential safeguards isn't paranoia. It's due diligence.

This article breaks down the actual controversy, examines what Google's protocol can and can't do today, explores the real surveillance pricing threat for tomorrow, and looks at the startup ecosystem emerging to offer independent alternatives.

TL;DR

- The Controversy: Consumer advocates warn Google's Universal Commerce Protocol enables "surveillance pricing" through AI analysis of chat data, while Google denies the technology works that way.

- The Stakes: AI shopping agents will soon handle billions in purchases, and the incentive structures are misaligned with consumer protection.

- Current Safeguards: Google claims strict prohibitions on price manipulation and explicit consent mechanisms, but critics argue technical documentation suggests otherwise.

- The Real Risk: Not today's protocol, but the infrastructure being built right now, which could later enable price discrimination.

- The Opportunity: Early-stage startups are building consumer-first shopping agents, but they face resource and adoption challenges against Google's scale.

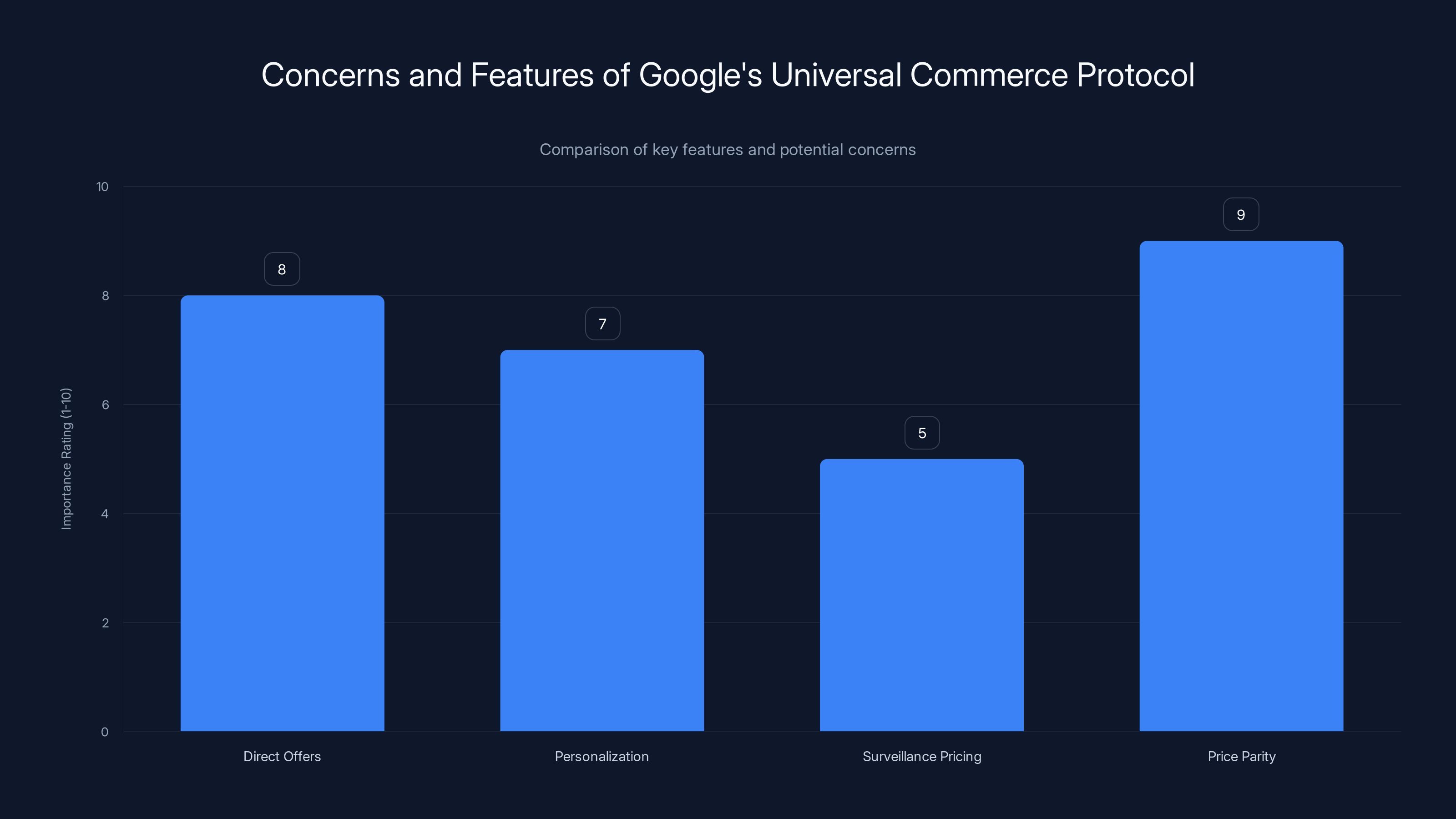

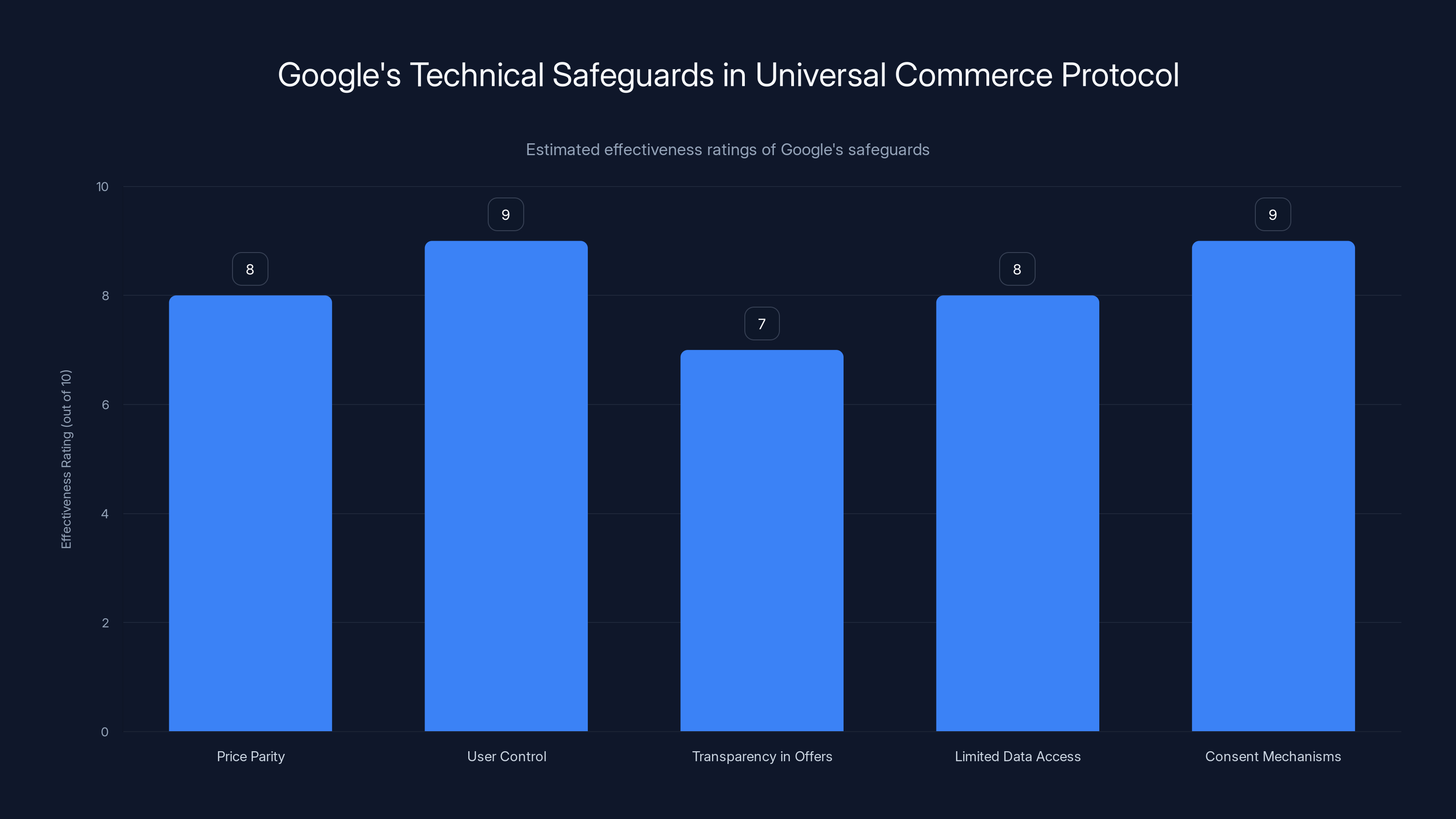

The Universal Commerce Protocol emphasizes price parity and direct offers, while concerns about surveillance pricing remain moderate. (Estimated data)

What Is Google's Universal Commerce Protocol?

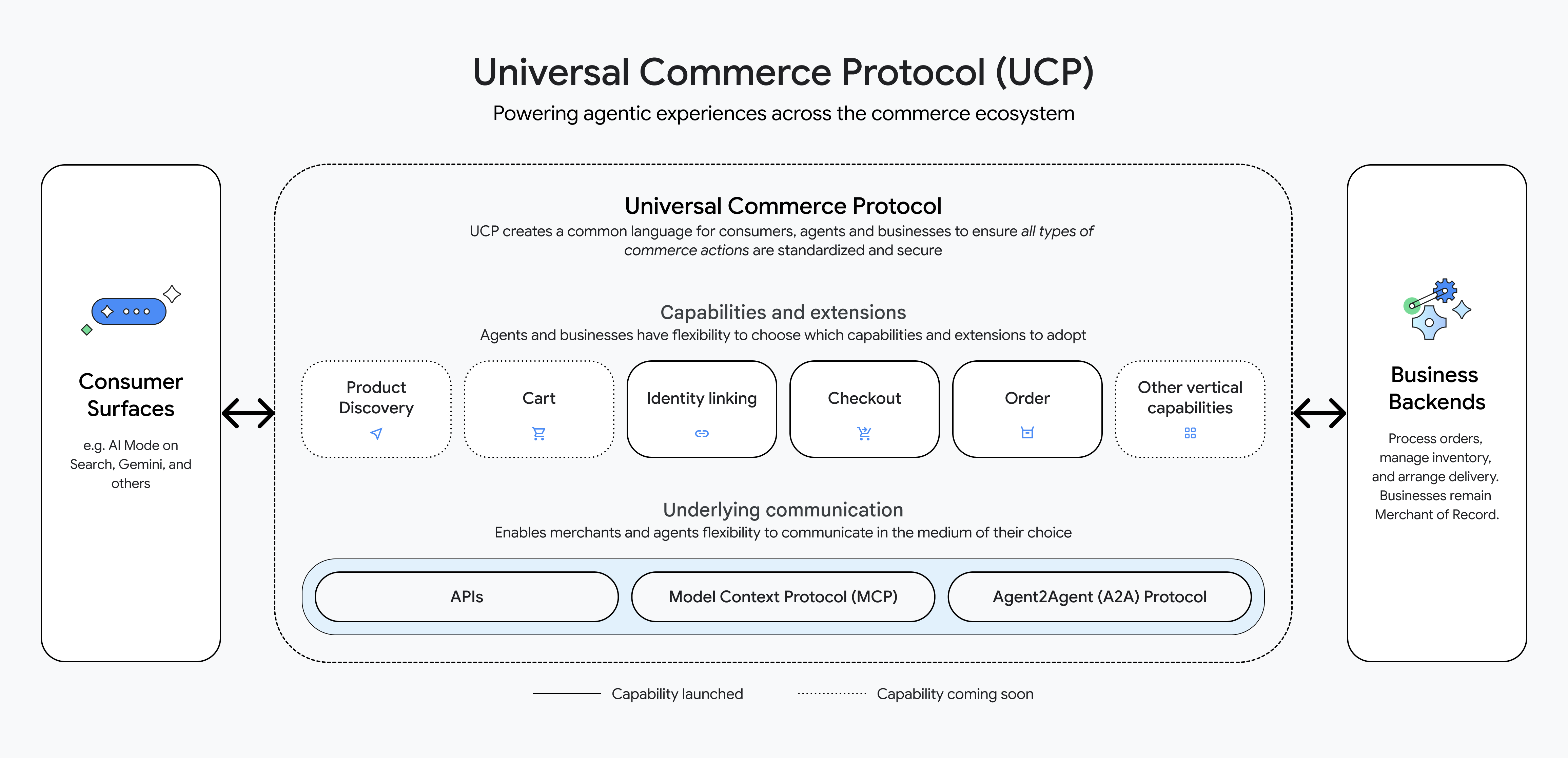

Google's Universal Commerce Protocol represents the company's bet on how shopping will work in an AI-native world. Rather than users searching for products (the traditional Google Shopping experience), the protocol enables merchants to integrate their inventory directly into conversational AI agents.

Think of it as an invisible layer between merchants and your AI assistant. When you tell Gemini you need running shoes, the protocol lets participating retailers submit their inventory, pricing, and policies to the AI in a standardized format. The AI then has the information needed to discuss options, compare features, negotiate terms, and ultimately complete a transaction—all within the conversation.
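As a rough illustration of what a "standardized format" implies, the sketch below models a merchant listing as a small Python data structure. The field names (`merchant_id`, `sku`, `price_cents`, `return_policy`), the `cheapest_first` helper, and the sample data are all assumptions made for this example; none of them are taken from Google's published schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    """Hypothetical standardized listing; not Google's actual schema."""
    merchant_id: str
    sku: str
    title: str
    price_cents: int  # integer cents avoid floating-point rounding
    in_stock: bool
    return_policy: str


def cheapest_first(listings: list[Listing]) -> list[Listing]:
    """Return in-stock listings ordered by price, lowest first."""
    available = [item for item in listings if item.in_stock]
    return sorted(available, key=lambda item: item.price_cents)


# Made-up example data: a small shop and a large retailer use the same shape.
listings = [
    Listing("large-retailer", "RUN-100", "Road running shoe", 12900, True, "30-day returns"),
    Listing("local-shop", "RUN-200", "Trail running shoe", 9900, True, "14-day returns"),
    Listing("outlet", "RUN-300", "Clearance running shoe", 5900, False, "final sale"),
]

for item in cheapest_first(listings):
    print(f"{item.merchant_id}: {item.title} at {item.price_cents / 100:.2f} USD")
```

Because every listing has the same shape, the ordering shown to the user comes down to a single sort key. Here that key is price, but whoever runs the agent could just as easily choose another.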

Google CEO Sundar Pichai introduced the protocol at the National Retail Federation conference in early 2025. The announcement highlighted several components designed to appeal to both merchants and consumers. Merchants get a new distribution channel to reach customers at the moment they're deciding to buy. Consumers get shopping handled by their AI assistant, theoretically reducing friction and time spent comparing options.

But the protocol is more than just a data format. It includes operational features, business logic, and what Google calls "Direct Offers," a pilot program allowing merchants to provide special deals through the AI agent. It includes mechanisms for handling different types of customers, applying discounts, adjusting availability, and managing what the documentation calls "scope complexity."

The technical architecture is actually clever. Rather than Google managing every merchant relationship individually (which would be unmaintainable at scale), the protocol creates a standardized way for any retailer to connect their backend systems to Gemini and other AI agents. An independent bookstore can integrate alongside Amazon. A regional furniture chain can offer options the same way as Wayfair.

On paper, this sounds neutral. In practice, Google controls the algorithm that decides which products the AI recommends, how prominently they're featured in the conversation, and what merchant data gets prioritized. That's where the power imbalance lives.

The Consumer Advocate's Concern: Surveillance Pricing

Lindsay Owens didn't object to shopping automation itself. Her concern is architectural. She analyzed Google's public roadmap and technical specification documents and flagged language that, to her, suggested something troubling: the protocol's capacity for what she calls "surveillance pricing."

Here's how surveillance pricing would theoretically work. Google's Business Agent has access to your conversation history with Gemini. It knows you mentioned a promotion, that you've been shopping for premium furniture recently, that you complained about always buying cheap shoes that wear out. An AI merchant using the protocol could, in theory, use that data to price items differently for you than for someone else.

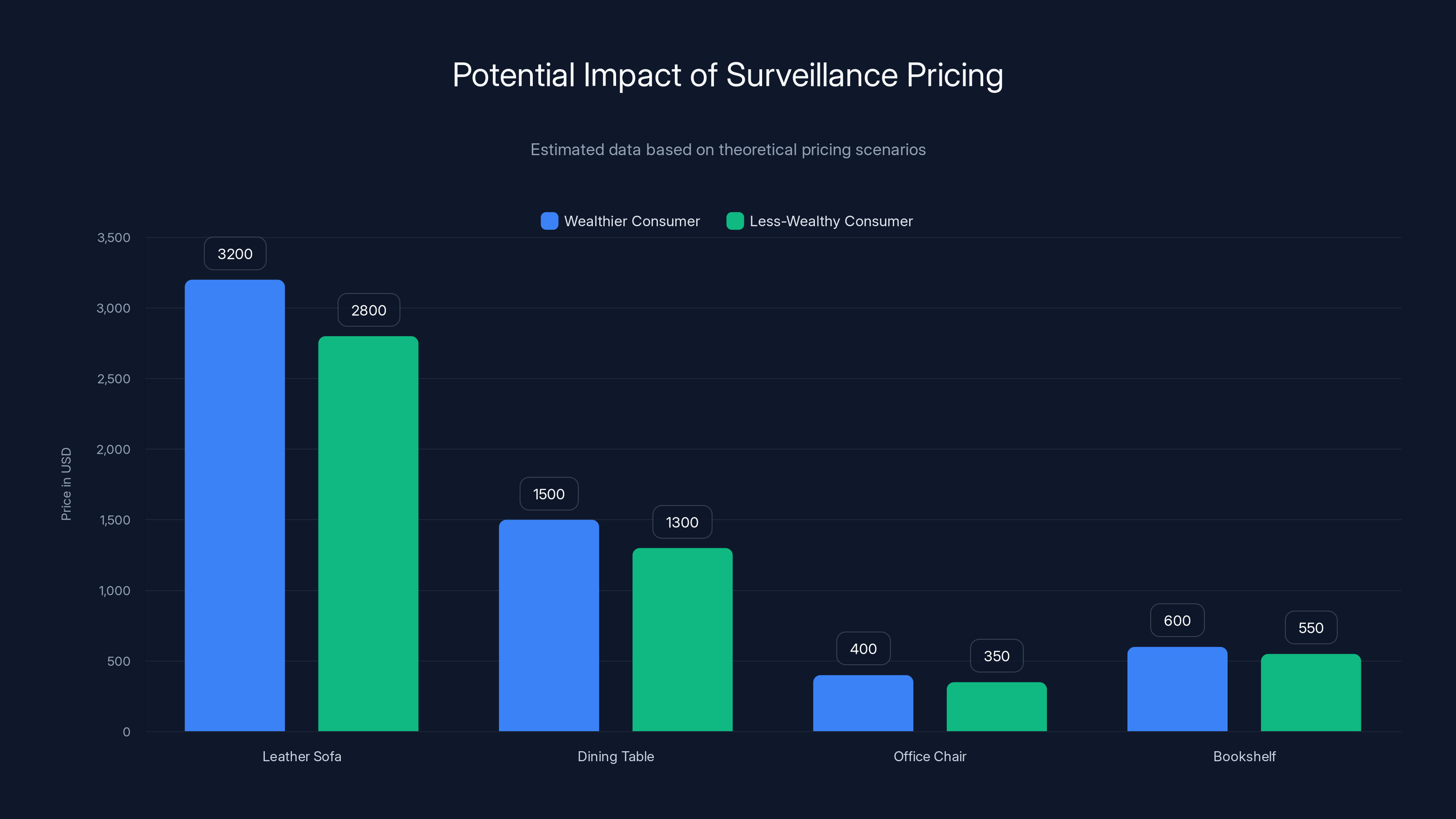

You'd see a leather sofa priced at

Owens pointed to specific language in Google's documentation as evidence this concern wasn't theoretical. The roadmap included support for "upselling," which she interpreted as pushing customers toward pricier options based on personal data. It mentioned adjusting prices for "new-member discounts" and "loyalty-based pricing," features that could enable discrimination. And most damning, in her view, it described consent screens that would consolidate actions (get, create, update, delete) in ways that might obscure what users were actually authorizing.

When she raised these concerns on social media, Google responded swiftly with technical corrections. Upselling, Google explained, is standard retail terminology for showing premium product options—like a car dealer showing both a base sedan and a loaded SUV. Users always choose what to buy. The Business Agent has no functionality to change a retailer's pricing based on individual data. Direct Offers are designed to show lower prices and added services, not premium pricing.

On the consent screen issue, Google said the consolidation isn't about hiding functionality. It's about UX. Rather than forcing users to approve each individual action separately (which would be tedious), the protocol groups related actions into a single consent request. The company characterized this as standard design practice, not deception.

But here's what makes this debate tricky. Google might be completely accurate about what the protocol does right now. The protocol might have no current functionality for surveillance pricing. Yet that doesn't fully address Owens' underlying concern, which isn't about today's safeguards but about the infrastructure being built for tomorrow's possibilities.

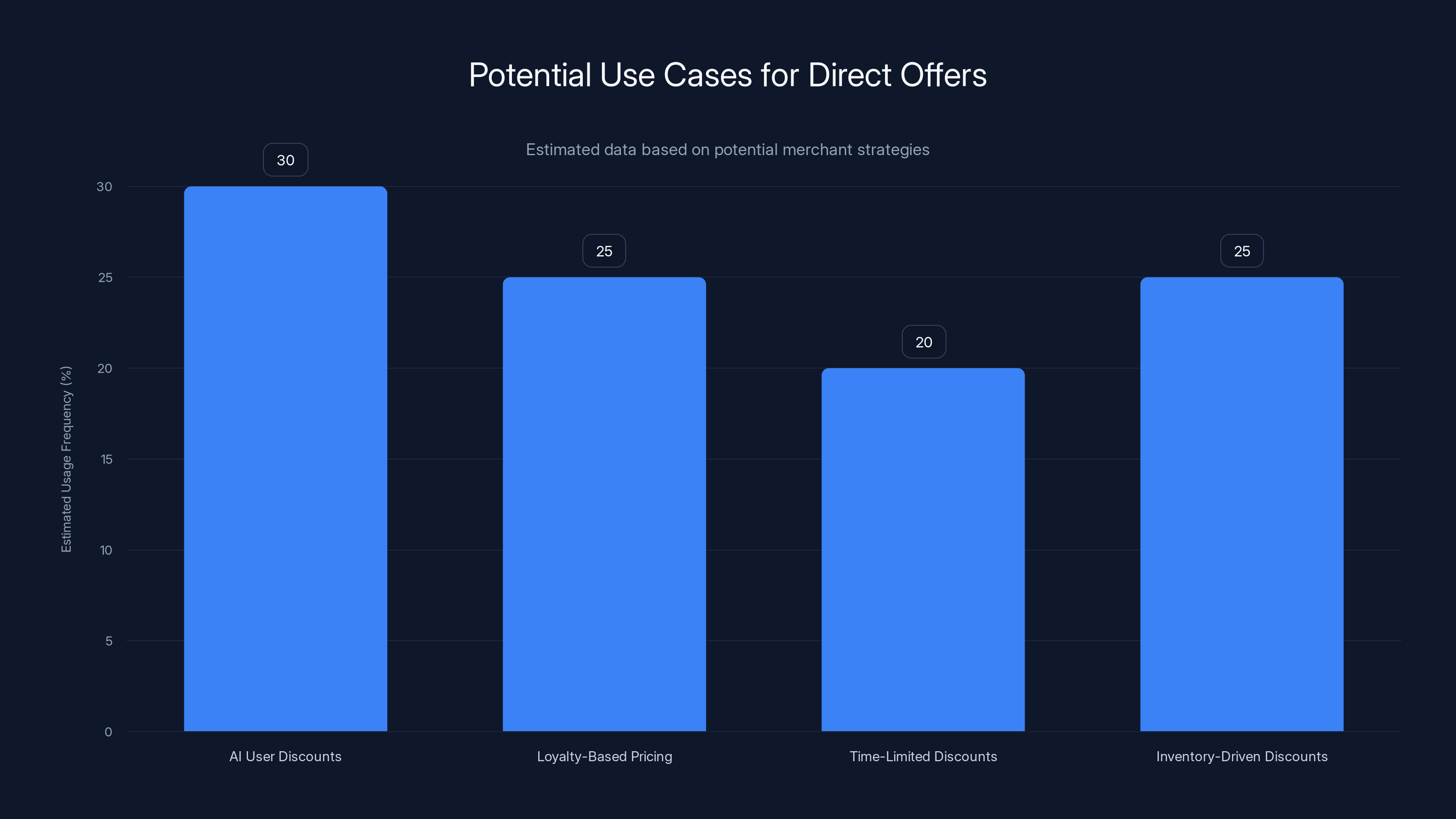

Estimated data suggests AI User Discounts and Loyalty-Based Pricing are the most likely strategies for Direct Offers, each potentially making up around 25-30% of usage.

Understanding "Upselling" in the AI Context

The word "upselling" became a flashpoint in this debate, and it's worth understanding why both sides interpret it differently.

In traditional retail, upselling is straightforward. A fast-food worker asks if you want to upgrade to a large drink. A car salesman shows you the leather interior option. A waiter suggests wine pairings. These are legitimate sales techniques. The customer has information. They make a choice. If they feel the upsell was unreasonable, they can decline.

But in the context of AI agents analyzing your personal data, the upselling concept becomes more complex. Google is right that upselling isn't inherently unethical. The problem emerges when upselling combines with personalization in ways that exploit information asymmetry.

Consider a concrete example. You're using Gemini to shop for a laptop. The AI agent shows you three options: a

Now add personalization. The AI knows from your chat history that you've just received a significant salary increase. It knows you mentioned frustration with your current laptop being slow. It knows you've been shopping on luxury websites. The AI then shows you the $2,200 model first, frames it as "designed for professionals like you," and downplays the budget option as "entry-level."

That's upselling combined with subtle manipulation. It's not price discrimination, but it's still exploiting private information to influence your buying behavior in a merchant's favor.

Google's position is that Gemini will show all available options transparently and let users decide. Owens' position is that the infrastructure being built could enable sophisticated manipulation even if safeguards exist today. Both statements can be true simultaneously.

The Technical Safeguards: What Google Claims

Google's response to criticism emphasized several technical and policy safeguards built into the Universal Commerce Protocol.

First, price parity. Google states that merchants cannot show different prices on Google Shopping or through AI agents than what appears on their own websites. This is an existing principle in Google's advertising policies, extended to the new protocol. If a retailer lists a sofa at

Second, user control over transactions. The protocol is designed so that the AI agent doesn't autonomously buy things. The user sees the AI's recommendation, agrees with it, and explicitly approves the purchase. The choice always rests with the consumer, not the algorithm.

Third, transparency in Direct Offers. The pilot program that Google highlighted allows merchants to offer deals through the AI agent. But Google specifies these offers are for lower prices and added services like free shipping. The protocol's terms explicitly prohibit using Direct Offers to increase prices.

Fourth, limited data access. A Google spokesperson told critics that the Business Agent doesn't have functionality allowing it to adjust pricing based on individual user data. The agent can see merchant inventory and pricing, but not personal financial information, income data, or spending patterns that would enable true price discrimination.

Fifth, consent mechanisms. Users authorize which merchants can interact with their AI assistant and under what terms. The protocol includes authentication and authorization layers so that your chat history with Gemini isn't automatically available to every merchant on the network.

These safeguards sound reasonable. But they operate in a specific context: Google's current technical implementation. They don't address whether the underlying architecture creates incentives for the company to weaken safeguards in the future, or whether the protocol's design makes it easier to implement surveillance pricing later.
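The consent safeguard, in which users authorize specific merchants and chat history is not shared by default, can be pictured as a deny-by-default permission table. The sketch below is a minimal illustration under that assumption. The `ConsentRegistry` class, its method names, and the scope strings are hypothetical and do not describe how Google actually implements authorization.

```python
class ConsentRegistry:
    """Hypothetical per-user record of which merchants may access which data."""

    def __init__(self) -> None:
        # Maps merchant_id -> set of scopes the user has granted.
        self._grants: dict[str, set[str]] = {}

    def grant(self, merchant_id: str, scopes: set[str]) -> None:
        """Record that the user approved these scopes for this merchant."""
        self._grants.setdefault(merchant_id, set()).update(scopes)

    def revoke(self, merchant_id: str) -> None:
        """Remove every permission previously granted to this merchant."""
        self._grants.pop(merchant_id, None)

    def is_allowed(self, merchant_id: str, scope: str) -> bool:
        """Deny by default: only explicitly granted scopes are allowed."""
        return scope in self._grants.get(merchant_id, set())


registry = ConsentRegistry()
registry.grant("local-shop", {"orders.get"})

print(registry.is_allowed("local-shop", "orders.get"))        # granted
print(registry.is_allowed("local-shop", "chat_history.get"))  # never granted
registry.revoke("local-shop")
print(registry.is_allowed("local-shop", "orders.get"))        # revoked
```

The design choice that matters is the default. A table like this protects users only as long as nothing is granted implicitly, and changing that default is a policy decision rather than a technical obstacle.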

The Legitimate Concern: Incentive Misalignment

Rather than debating Google's current technical capabilities, the more productive argument centers on incentive structures. This is where Owens' broader point has real substance.

Google is, at its core, an advertising and commerce company that profits from merchants. Google Shopping has been phenomenally successful precisely because it gives retailers access to high-intent customers. The Universal Commerce Protocol extends that model into a new channel: conversational AI.

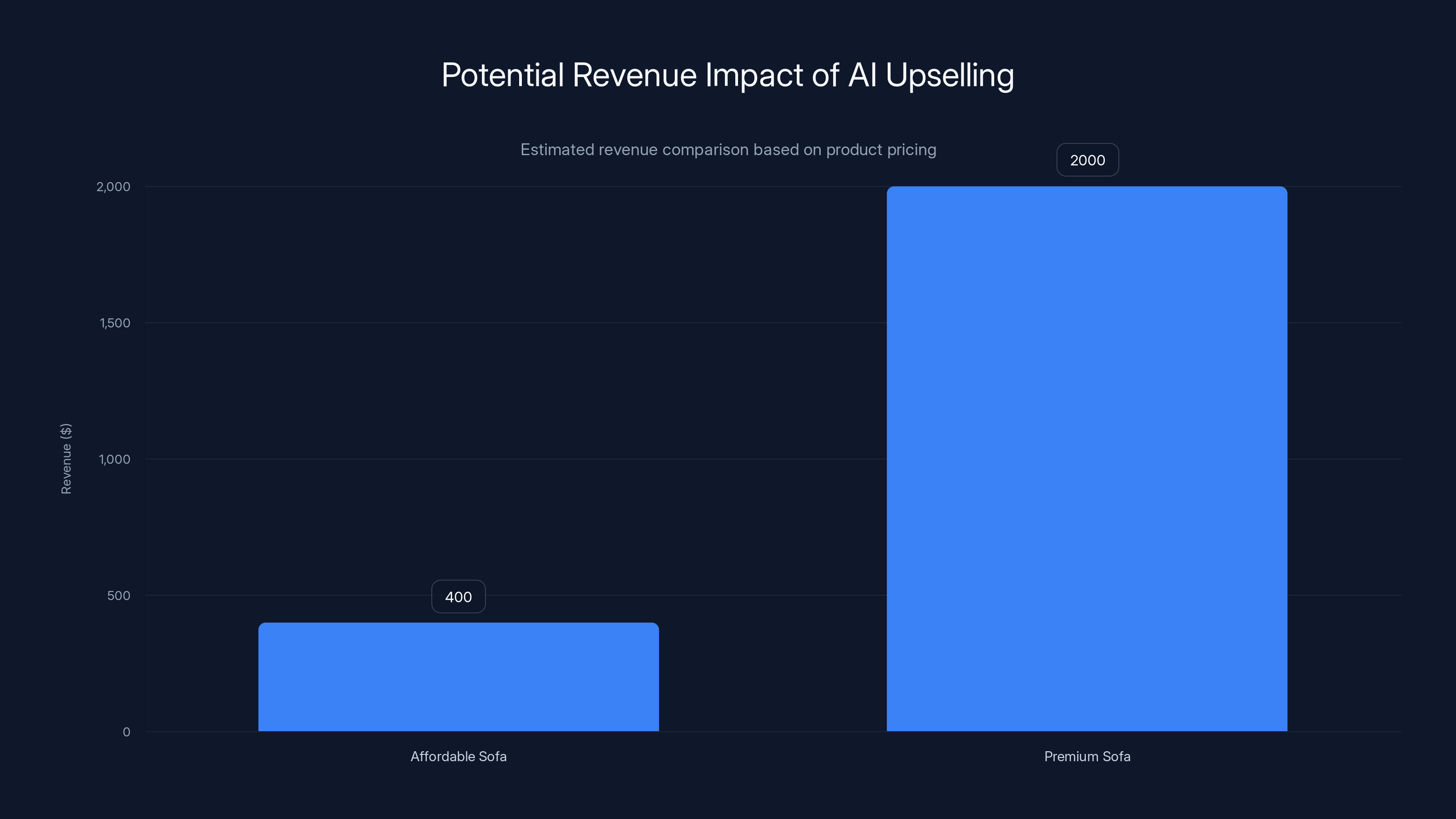

Merchants who benefit most from AI shopping agents are those with higher margins, premium products, and customers willing to spend more. A furniture retailer selling

Consider the business logic. If Google's algorithm recommends the

Google's response to this argument is essentially: we're not that kind of company, and we have policies preventing it. And that might be true. But the policies exist only as long as they're maintained. A merger, leadership change, revenue pressure, or competitive threat could shift those priorities.

This is why antitrust concerns are relevant here. A federal court ruled in 2024 that Google engaged in anticompetitive behavior in its search business. The company now faces requirements to change certain practices. That legal outcome suggests the company does optimize for revenue and market share over consumer welfare when left unchecked.

For the AI shopping agent space, the concern is that we're building infrastructure right now that will be difficult to regulate later. If the architecture makes surveillance pricing trivial to implement, it becomes much harder to prevent as incentives evolve.

Estimated data shows that merchants can earn significantly more by upselling premium products through AI, highlighting potential incentive misalignment.

Direct Offers: The Nuance of "Lower Pricing"

Google highlighted Direct Offers as a feature that explicitly enables discounts and special services, not premium pricing. This is technically accurate but reveals an interesting blind spot in how both Google and critics are framing the debate.

Direct Offers could theoretically be used ethically. A merchant running a promotion could offer AI users a special deal: "If you buy through Gemini, you get 15% off and free shipping." This rewards the customer for using the AI interface and benefits the merchant by capturing the sale at higher velocity.

But Direct Offers could also be used for price discrimination in subtler ways. A merchant could offer "loyalty-based pricing," giving existing customers better deals than new customers through the AI agent. They could offer time-limited discounts to high-value segments. They could incentivize purchases that align with their inventory goals.

None of this would technically be "raising prices." It would look like offering special deals. But the underlying logic—offering different terms to different customer segments based on data analysis—is price discrimination.

Google's position is that Direct Offers can't be used to raise prices above a merchant's website listing. Owens' position is that the feature creates infrastructure for offering different prices to different people, which achieves similar effects even if baseline prices stay constant.
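A small worked example shows how both positions can hold at once. The list price, segment names, and discount percentages below are invented for illustration; they do not come from Google's documentation or from any real merchant.

```python
LIST_PRICE_CENTS = 100_000  # hypothetical website price

# Hypothetical segments and discount percentages, for illustration only.
SEGMENT_DISCOUNT_PERCENT = {
    "price_sensitive": 20,
    "loyal_customer": 10,
    "high_spender": 0,
}


def offer_price_cents(list_price_cents: int, segment: str) -> int:
    """Apply a segment discount; unknown segments pay the list price."""
    percent = SEGMENT_DISCOUNT_PERCENT.get(segment, 0)
    return list_price_cents * (100 - percent) // 100


def never_above_list(list_price_cents: int, offer_cents: int) -> bool:
    """The stated rule: an offer may not exceed the website price."""
    return offer_cents <= list_price_cents


offers = {
    segment: offer_price_cents(LIST_PRICE_CENTS, segment)
    for segment in SEGMENT_DISCOUNT_PERCENT
}
rule_holds = all(never_above_list(LIST_PRICE_CENTS, p) for p in offers.values())
distinct_prices = len(set(offers.values()))

print(offers)
print("rule holds:", rule_holds, "| distinct prices:", distinct_prices)
```

Every offer passes the "never above list price" check, yet the three segments pay three different amounts. The rule limits the ceiling, not the spread.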

This distinction matters. A merchant willing to sell a sofa for

Again, Google might say this is just good business. Offering targeted discounts is standard retail. But when the targeting is based on AI analysis of private conversations, the ethics become murkier.

Scope Complexity and Hidden Consent

One of Owens' specific concerns involved how Google's documentation described "scope complexity." The phrase "hidden in the consent screen" triggered immediate skepticism, even though Google explained the reasoning.

In OAuth and other authorization systems, scope refers to the permissions an app requests. If you log into a service with your Google account, you might be asked to authorize that service to access your email, contacts, and calendar. Rather than asking permission for each action separately ("Can I read your email? Can I write to your calendar? Can I update your contacts?"), most systems consolidate related permissions into a single consent request.

Google's documentation described consolidating actions (get, create, update, delete, cancel, complete) in the consent screen. This isn't unusual. An e-commerce system needs to both read product information and update your order history. If users had to approve each action separately, the onboarding experience would be nightmarish.
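To make the consolidation concrete, here is a minimal sketch of how six granular permissions might sit behind one user-facing sentence. The action names come from the description above. The `orders` resource name, the `resource.action` scope format, and the wording of the summary line are assumptions for illustration only.

```python
# Action names as listed in the documentation described above.
ORDER_ACTIONS = ["get", "create", "update", "delete", "cancel", "complete"]


def build_consent_request(resource: str, actions: list[str]) -> dict:
    """Bundle granular permissions under one user-facing summary line."""
    return {
        # What the user reads on the consent screen (hypothetical wording).
        "summary": f"Allow this merchant to manage your {resource}",
        # What is actually being authorized, one scope per action.
        "scopes": [f"{resource}.{action}" for action in actions],
    }


request = build_consent_request("orders", ORDER_ACTIONS)
print(request["summary"])
for scope in request["scopes"]:
    print("  includes:", scope)
```

Whether this counts as simplification or obscuring depends on whether the screen ever shows the `scopes` list. The sketch prints it, but a consent screen that displays only the summary would authorize exactly the same six actions.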

However, the phrase "hidden in the consent screen" understandably raised red flags for privacy advocates. Even if Google's intent is pure UX optimization, the potential for confusion exists. A user might approve "allow this merchant to manage my purchases" without realizing the full scope of what "manage" entails.

Google's response is that nothing is actually hidden. Users see what permissions they're granting. The consolidation is about grouping related actions to avoid consent fatigue. And technically, that's probably accurate.

But it highlights a broader tension in AI system design. As AI agents become more autonomous, the scope of permissions they need grows. Users need to authorize payment, address access, communication history, and preference learning. Consent screens that simplify this complexity are necessary for usability. Yet simplified consent screens make it harder for users to understand and control what's happening behind the scenes.

The solution isn't to blame Google specifically. It's to recognize that this entire layer of AI commerce will require new regulatory frameworks for meaningful consent.

The Broader Context: Why This Debate Matters

The controversy over Google's Universal Commerce Protocol might seem like a technical disagreement about whether a specific feature enables price discrimination. But it's actually a flashpoint in a much larger debate about how commerce should work in an AI-native world.

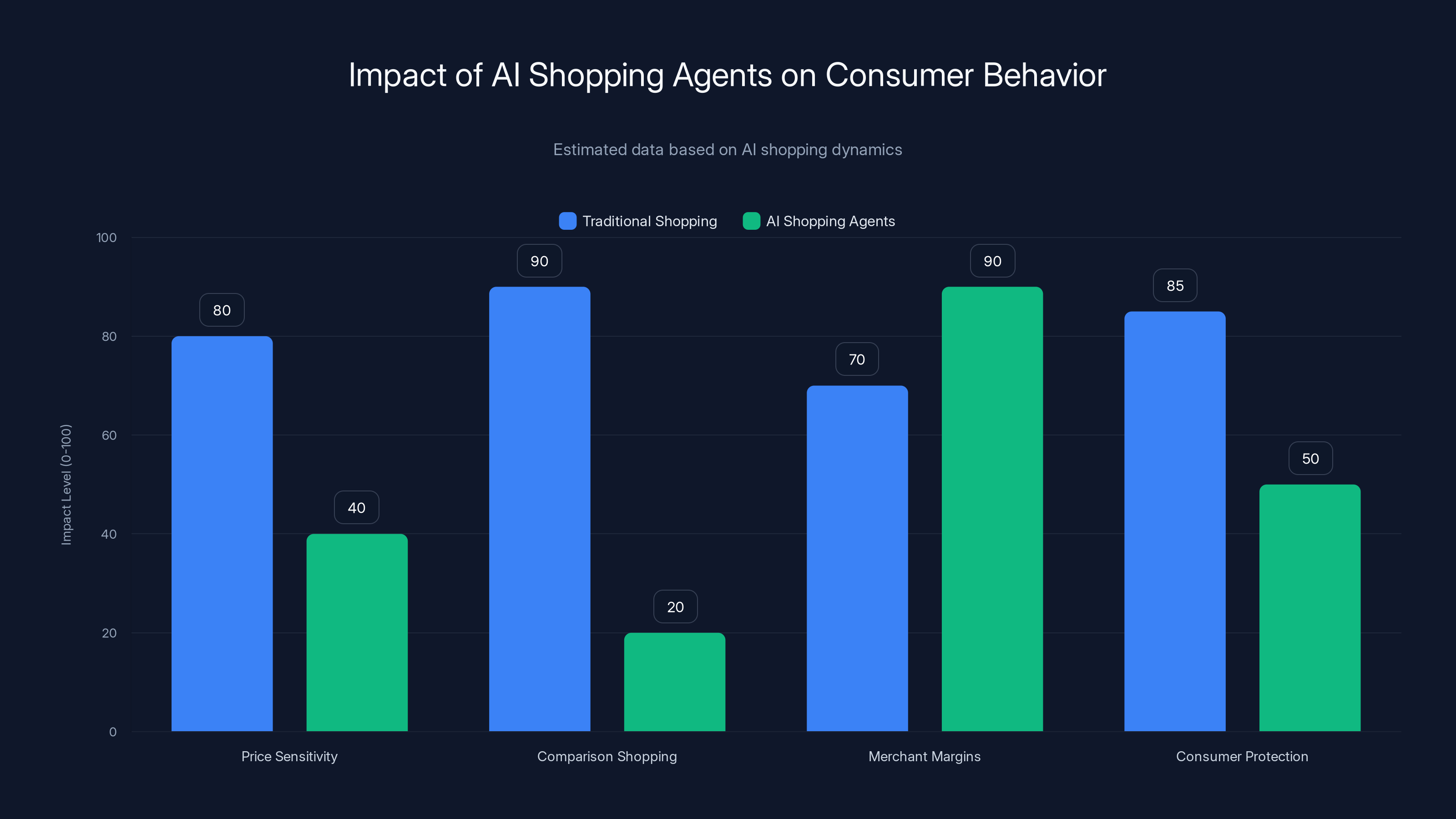

Consider the economic logic of AI shopping agents. When humans shop, we use our own time and attention to compare options. We browse websites, read reviews, visit stores. This friction is actually consumer-protective. We make deliberate decisions. The companies selling to us know that we're making comparisons, which drives them to compete on price and quality.

AI agents eliminate that friction. You tell your assistant what you want, and it completes the purchase. That's convenient. But it also eliminates the comparison-shopping behavior that keeps merchant margins in check.

Merchants know this. The entire appeal of AI shopping agents to the retail industry is that they reduce price sensitivity by eliminating the browsing-around behavior. If your AI agent handles the purchase automatically, you're less likely to check competitors' prices.

That creates an incentive for merchants to exploit the AI's personalization capabilities to increase prices for segments that will bear them. If the AI knows you're willing to spend

Google's safeguards prevent this today. But they exist in a market environment where the company competes against other AI agents and shopping platforms. If Google maintains strict consumer protections while competitors implement sophisticated price discrimination, merchants will shift budgets to the less-ethical platforms.

This is a classic regulatory challenge. Individual company policies aren't sufficient when incentive structures reward violations. You need either legal requirements (regulation) or market alternatives that make consumer protection profitable.

AI shopping agents significantly reduce price sensitivity and comparison shopping, increasing merchant margins but potentially reducing consumer protection. (Estimated data)

How This Could Evolve: The Surveillance Pricing Scenario

To understand Owens' concerns about where this is heading, imagine a plausible evolution of AI shopping agents over the next five years.

Years one through two are basically where we are now: basic AI shopping with safeguards preventing overt price discrimination. Merchants appreciate the new distribution channel, but it doesn't fundamentally change how commerce works.

Years two through three: AI agents become smarter at understanding user preferences. They not only show products but also explain why each option matches your stated needs. The AI remembers your size, your brand preferences, your past purchases. Personalization makes recommendations more relevant and commerce more efficient. This is genuinely valuable to users.

Years three through four: Competition among AI agents intensifies. Google isn't the only player anymore. Microsoft's AI agent, Amazon's, and specialized competitors all offer their own interfaces. To differentiate, they start offering exclusive deals through their platforms. "Shop through this AI and get special pricing." Merchants, seeing higher conversion rates, increase their ad spending on the most effective platforms.

Years four through five: The most profitable segment for merchants becomes extremely clear: affluent, high-frequency buyers with high price tolerance. These customers are worth more to merchants because they spend more. The business logic of targeting them with premium pricing becomes overwhelming. Some merchants start requesting the ability to offer tiered pricing: higher prices for high-value customers, lower prices for price-sensitive ones.

At this point, the regulatory and technical infrastructure exists. The protocol already supports Direct Offers and segmentation. The AI knows users' financial profiles. The incentives have shifted dramatically toward merchants maximizing per-customer revenue. What was once a safeguard against price discrimination becomes an outdated policy preventing profit optimization.

Governance bodies would need to intervene to prevent this outcome. But by then, AI shopping has become essential infrastructure, and retroactively imposing strict regulation could destabilize commerce.

This scenario isn't inevitable. Google might maintain its commitment to consumer protection. Regulation might preemptively establish guardrails. Competition might reward ethical practices. But Owens' point is that we're building infrastructure right now that makes the surveillance pricing scenario easy to implement later. Prevention is cheaper than cure.

The Current Regulatory Landscape

Existing consumer protection laws offer limited protection against the specific risks posed by AI shopping agents.

Price discrimination itself isn't illegal in most contexts. Retailers can offer different customers different prices based on quantity, timing, loyalty, or other factors. The Robinson-Patman Act restricts some forms of price discrimination between commercial buyers, but it doesn't apply to consumer transactions. A furniture store can legally offer a regular customer a better deal than a new customer without triggering antitrust concerns.

However, discrimination based on protected characteristics (race, gender, age in certain contexts) is illegal under civil rights laws. An AI system can't legally offer different prices to different people if the pricing is determined by race or gender. But proving that an AI system's pricing is determined by protected characteristics requires forensic access to the algorithm and training data, which most companies don't willingly provide.

Transparency and consent requirements exist in some jurisdictions. Europe's GDPR requires companies to disclose how they're processing personal data and to obtain meaningful consent. California's privacy laws create similar obligations. But these laws are reactive. They require regulators to notice violations and enforce them. The Universal Commerce Protocol is launching right now, and regulators are still developing expertise in AI systems.

The FTC has authority to take action against unfair or deceptive practices. If an AI shopping agent uses deceptive consent screens or misrepresents pricing, the FTC could intervene. But enforcement is slow and reactive.

The 2024 federal court ruling against Google's search practices is instructive here. The court found that Google had unlawfully maintained its search monopoly, largely through exclusive agreements that made it the default search engine on browsers and devices. That ruling suggests courts will scrutinize Google's market power carefully. But it also shows that proving anticompetitive behavior requires extensive litigation.

For AI shopping agents, the regulatory gap is clear. We have tools to address overt fraud (deceptive practices rules) and discrimination based on protected classes (civil rights laws) and some transparency requirements (privacy laws). But we don't have a coherent regulatory framework addressing the specific risks of AI-driven personalization and price discrimination.

Policymakers are starting to pay attention. The FTC has launched investigations into AI systems and their potential for consumer harm. The European Union has adopted the AI Act, whose obligations are being phased in over several years. But these efforts are still in early stages, and a framework that addresses AI-driven commerce specifically is years away from maturity.

The Startup Alternative: Consumer-First Shopping Agents

While the debate over Google's protocol dominates headlines, a small cohort of startups is building an alternative: AI shopping agents explicitly designed to prioritize consumers over merchants.

One example is Dupe, which uses natural language queries and AI to help customers find affordable furniture. The core idea is straightforward: the AI understands what you're looking for and finds the most cost-effective options. Dupe doesn't profit from merchants (or profits minimally). It makes money from consumers through a subscription or transaction fees. This flips the incentive structure. The AI benefits when customers get better deals, not when merchants maximize revenue.

Another example is Beni, which helps users find affordable thrift fashion. Using image recognition and text search, users can upload a photo of something they like or describe their style, and the AI finds similar items at thrift stores. Again, the economics align differently. Beni helps users save money, and the business model works because users are willing to pay for that service.

These startups face significant challenges. They lack Google's scale and distribution. They can't negotiate with merchants the way a company with Google's size and leverage can. They struggle to achieve the same breadth of inventory. But they demonstrate that alternative incentive structures are possible.

For these models to succeed at scale, they need to solve several problems. First, user acquisition. How do you convince people to use a Dupe or Beni instead of asking Gemini? Building enough network effects to compete against integrated AI assistants is extremely difficult.

Second, merchant relationships. Retailers need incentives to participate in platforms that make it easier for customers to find cheaper options. Some might see value in the consumer traffic. Others might actively avoid it. Building a merchant network is slower and harder than Google's automatic integration through APIs.

Third, international expansion. Retail is local. What works in the US furniture market might not work in apparel in Europe. Scaling consumer-first shopping agents across geographies and product categories is resource-intensive.

Despite these challenges, the startup space in consumer-first AI commerce is worth watching. If Google's platform becomes too merchant-focused and drives price discrimination, the market opportunity for alternatives grows. If consumers become skeptical of AI agents owned by advertising companies, they might actively seek out independent alternatives. The economics work if users value transparent, consumer-aligned recommendations enough to pay for them.

Estimated data shows potential price differences under surveillance pricing, where wealthier consumers might see higher prices for the same products.

What Google Says: The Company's Position Explained

Google's response to the surveillance pricing controversy has been direct and specific. It's worth understanding their position in full because it reveals what the company is committing to, and where room for future disputes might exist.

On price discrimination: Google states that merchants cannot show different prices to different customers through the Universal Commerce Protocol. The protocol enforces price parity with what merchants list on their own websites. This is a hard technical rule, not a policy guideline. The AI agent will reject a merchant's pricing if it doesn't match their website.
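Read literally, that rule amounts to an equality check between two numbers. The sketch below is one way such a check could look. The function name, the exception type, and the use of integer cents are assumptions, since how Google actually enforces parity has not been described here.

```python
class PriceParityError(ValueError):
    """Raised when a quoted price differs from the merchant's website price."""


def enforce_price_parity(website_price_cents: int, quoted_price_cents: int) -> int:
    """Return the quoted price if it matches the website price, else reject it."""
    if quoted_price_cents != website_price_cents:
        raise PriceParityError(
            f"quoted {quoted_price_cents} does not match website {website_price_cents}"
        )
    return quoted_price_cents


# A matching quote passes through unchanged.
print(enforce_price_parity(5000, 5000))

# A mismatched quote is rejected.
try:
    enforce_price_parity(5000, 5500)
except PriceParityError as error:
    print("rejected:", error)
```

A strict equality check like this would also reject discounts, so any Direct Offers path would need its own, separately audited exception. This sketch does not attempt to model that.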

On upselling: Google distinguishes between legitimate upselling (showing premium options) and price discrimination. The protocol allows merchants to offer multiple product tiers. What it doesn't allow is adjusting prices based on user data. Showing you a leather sofa option alongside a fabric option is upselling. Charging you more for the leather sofa because you mentioned a promotion is price discrimination.

On Direct Offers: The pilot program is designed for discounts and added services. Merchants can offer "buy through this AI and get free shipping" or "loyalty discount." They can't use Direct Offers to increase prices. The pilot explicitly prohibits premium pricing.

On data access: The Business Agent doesn't have access to your personal financial data, income, or spending history. It doesn't analyze your chat conversations to determine your price sensitivity. It has access to your order history with that specific merchant (for loyalty programs) and your product preferences that you've explicitly shared (for size, brand, type). It does not have access to private information that would enable surveillance pricing.

On consent: The protocol includes authentication and consent mechanisms. Users explicitly authorize which merchants can interact with their AI assistant. Users can revoke permissions. Nothing about this is hidden.

On transparency: Google commits to being transparent about how the protocol works. The company published documentation, responded to criticism, and engaged with consumer advocates.

These commitments are significant. If Google maintains them consistently, the risks of current abuse are minimal. The company's engineering teams are sophisticated enough to implement and maintain these safeguards.

The question isn't whether Google can maintain these safeguards today. It's whether the incentive structure will support maintaining them as competition intensifies and profit pressures mount. That's a longer-term governance question that no single company statement fully addresses.

The Economic Impact: Who Wins and Loses

If AI shopping agents mature as Google's protocol envisions, the economic effects will be significant, and their distribution will be uneven.

Winners likely include large retailers with sophisticated systems who can integrate easily with the protocol. Wayfair, Target, Amazon, and similar companies have the technical infrastructure to connect their inventory, pricing, and fulfillment systems to the protocol. They also have the scale to negotiate favorable terms with Google and other AI platforms. For these retailers, the protocol represents a new distribution channel with lower customer acquisition cost than traditional search advertising.

Consumers who benefit from genuine efficiency gains also win. If AI shopping agents reduce the time you spend researching and comparing products, that's real value. If they help you find products you actually want, that's useful. If the agent can negotiate free shipping or bundle deals that benefit you, that's a legitimate win.

Small retailers face more uncertainty. Some might benefit from access to customers through the protocol. But many lack the technical capacity to integrate, and those that do have limited leverage to negotiate favorable terms. If Google's algorithm systematically favors large merchants with established systems over small retailers, the protocol could accelerate consolidation in retail.

Consumers most likely to be harmed are those least able to defend themselves. Low-income customers, elderly consumers, and people with limited digital literacy are most vulnerable to manipulative personalization. If the AI learns that certain customer segments are less likely to comparison shop, it could exploit that information through subtle recommendation techniques.

Price-sensitive shoppers may see reduced access to the cheapest options if AI agents steer them toward premium products. Parents shopping for essentials under time pressure may find the AI defaulting to convenient but expensive options.

The most likely scenario is selective harm. Most customers will benefit from convenience and some genuine deals. A subset will be systematically exploited through sophisticated personalization. The exploitation will be hard to detect and measure, which is why prevention through regulation and design is preferable to enforcement after harm occurs.

International Variations and Regulatory Differences

One factor that makes the Google controversy particularly complex is that the Universal Commerce Protocol will operate in different regulatory environments globally.

In Europe, the GDPR's requirements for explicit consent and data minimization create more stringent constraints. A European version of the protocol might look different from the US version, with stronger limitations on what data merchants can access and tighter restrictions on personalization. The EU's AI Act, which entered into force in August 2024 and is being phased in over the following years, will likely impose additional requirements on AI systems used for consumer transactions.

In the United States, privacy regulation is more fragmented. Some states (California, Virginia, Colorado) have passed privacy laws, but there's no federal baseline comparable to GDPR. This creates an odd dynamic where companies might implement stricter safeguards in Europe to comply with law while using weaker safeguards in the US because they're permitted to.

China and other countries with entirely different privacy frameworks will see AI commerce protocols adapted to local law and policy. But the core technology is likely to be similar.

This fragmentation means the surveillance pricing risks are unevenly distributed. Consumers in highly regulated jurisdictions benefit from stricter safeguards. Consumers in under-regulated markets face higher risks. A single technology platform creates different consumer protection realities depending on geography, which is inherently inequitable.

The ideal solution would be global minimum standards for AI systems used in commerce. But that requires international cooperation that doesn't currently exist. In the absence of harmonized standards, we'll likely see a patchwork of regional variants reflecting local regulatory priorities.

Figure: Estimated effectiveness ratings show strong user control and consent mechanisms, while transparency in offers is slightly lower. Estimated data.

Future Evolution: What Comes After Universal Commerce Protocol

Assuming Google's protocol succeeds and becomes widely adopted (big assumption, but let's explore it), the technology will likely evolve in predictable ways.

First, the AI agents will become more sophisticated at understanding intent. Instead of just matching products to keywords, they'll understand context. "I need a laptop for video editing on a budget" will return completely different results than "I need a laptop for casual email and browsing" or "I need a laptop that impresses people in client meetings." The personalization becomes more fine-grained and useful.

Second, agents will optimize for multiple objectives. Today's optimization is relatively simple: find the product that matches the user's stated criteria. Future optimization might balance price, sustainability, ethical sourcing, local jobs, and dozens of other factors based on the user's values. An AI agent could say, "Here are three options, all matching your specs. This one is cheapest, this one has the best warranty, this one is made locally." This level of optimization is genuinely valuable.
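To make the idea concrete, here is a minimal sketch of multi-objective ranking, assuming a simple weighted sum over normalized criteria. The `Product` fields, the weight names, and the catalog entries are hypothetical illustrations, not anything taken from Google's protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    price: float          # USD
    warranty_years: int
    made_locally: bool


def score(product, weights, max_price, max_warranty):
    """Weighted sum of normalized criteria; a higher score ranks first."""
    cheapness = 1.0 - product.price / max_price
    warranty = product.warranty_years / max_warranty
    local = 1.0 if product.made_locally else 0.0
    return (weights["price"] * cheapness
            + weights["warranty"] * warranty
            + weights["local"] * local)


def rank(products, weights):
    """Sort products from best to worst under the given weights."""
    max_price = max(p.price for p in products)
    max_warranty = max(p.warranty_years for p in products) or 1
    return sorted(
        products,
        key=lambda p: score(p, weights, max_price, max_warranty),
        reverse=True,
    )


# Hypothetical catalog: three products that all match the user's specs.
CATALOG = [
    Product("Budget", 900.0, 1, False),
    Product("Covered", 1200.0, 5, False),
    Product("Local", 1500.0, 2, True),
]

price_first = rank(CATALOG, {"price": 1.0, "warranty": 0.0, "local": 0.0})
local_first = rank(CATALOG, {"price": 0.2, "warranty": 0.2, "local": 0.6})
```

With price-only weights the cheapest item ranks first. Shifting weight toward local production puts the most expensive item on top. The ranking code is identical in both cases, so what matters is who sets the weights: the user, or the platform on a merchant's behalf.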

Third, agent-to-agent negotiation will become possible. Instead of merchants setting prices unilaterally, your shopping AI might negotiate with merchants' AI on your behalf. "This customer wants a sofa, is willing to pay up to $2,000, values fast shipping. What's your best offer?" This could theoretically drive more efficient markets and better consumer outcomes.
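A toy sketch of such a negotiation, assuming the two agents simply alternate fixed-size concessions. The `negotiate` function, its parameters, and the dollar figures are hypothetical; real agent-to-agent protocols, if they emerge, would be far more elaborate.

```python
def negotiate(buyer_max, seller_min, seller_ask, buyer_bid,
              step=100.0, max_rounds=20):
    """Alternate concessions until the bid meets the ask.

    Returns the agreed price, or None if no deal is reached.
    Neither side ever crosses its private limit.
    """
    for _ in range(max_rounds):
        if buyer_bid >= seller_ask:
            return seller_ask
        # Seller concedes, but never below its floor.
        seller_ask = max(seller_min, seller_ask - step)
        if buyer_bid >= seller_ask:
            return seller_ask
        # Buyer concedes, but never above its ceiling.
        buyer_bid = min(buyer_max, buyer_bid + step)
    return None


deal = negotiate(buyer_max=2000.0, seller_min=1600.0,
                 seller_ask=2400.0, buyer_bid=1400.0)
no_deal = negotiate(buyer_max=1500.0, seller_min=1600.0,
                    seller_ask=2400.0, buyer_bid=1400.0)
```

In this sketch the first call settles at $1,900 and the second returns `None` because the buyer's ceiling sits below the seller's floor. The buyer's ceiling stays private to the buyer's agent and only bids are exchanged. An agent that announced the $2,000 ceiling up front would give the seller little reason to go lower.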

Fourth, data sharing will need to mature. Right now, each merchant has limited data about customers. The protocol might evolve to enable more sophisticated data sharing (with proper privacy safeguards) that benefits consumers. Imagine if merchants could see your whole purchase history and make better recommendations and pricing decisions based on your actual needs, not just guesses.

But here's the crucial point: all of these positive evolutions require regulatory guardrails. Without them, the more likely evolution is that agents optimize for merchant profit, not consumer value.

How to Protect Yourself Now

Assuming you want to use AI shopping agents while minimizing risk, some practical steps make sense today.

Limit data sharing. If you use Google's Business Agent or other shopping AI, be mindful of what personal information you share in conversations. Discussing your salary, financial plans, or personal preferences gives the algorithm more data to use. Keep shopping conversations focused on specific product requirements.

Use privacy-preserving browsers. Tools that limit tracking and cookie usage constrain the amount of personal data companies can collect about you. This reduces the foundation for price discrimination algorithms.

Cross-check prices manually. When an AI recommends a purchase, take thirty seconds to check the merchant's website independently and a competitor's website. This prevents you from being the victim of subtle recommendation bias. If you notice consistent patterns (the AI always recommends premium products, specific brands, or expensive options), switch to a different agent.
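If you keep a simple log of your cross-checks, spotting a pattern takes a few lines. This is a rough sketch under stated assumptions: the `premium_rate` function, the 2% tolerance, and the sample prices are all made up for illustration.

```python
def premium_rate(checks, tolerance=0.02):
    """Share of checks where the AI's recommendation cost more than the
    cheapest comparable option you found yourself, beyond a small tolerance.

    checks: list of (recommended_price, cheapest_found_price) pairs.
    """
    if not checks:
        return 0.0
    overpriced = sum(
        1 for recommended, cheapest in checks
        if recommended > cheapest * (1 + tolerance)
    )
    return overpriced / len(checks)


# Hypothetical log of four purchases you cross-checked by hand.
MY_CHECKS = [(129.0, 99.0), (45.0, 45.0), (310.0, 280.0), (60.0, 59.5)]
rate = premium_rate(MY_CHECKS)
```

Here half of the recommendations were noticeably pricier than what a manual search turned up. A single pricier recommendation means little; a rate that stays high across many purchases is the kind of consistent pattern worth acting on.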

Diversify agents. Don't rely on a single AI for all shopping. Using Gemini for some searches, ChatGPT for others, and specialized tools like Dupe for price-sensitive categories prevents any single system from learning enough about your preferences to manipulate you effectively.

Read consent screens carefully. When authorizing a merchant to interact with your AI assistant, understand what data access you're granting. If something seems off or too permissive, decline.

Support alternatives. If you believe in consumer-first AI commerce, use and pay for tools like Dupe and Beni. Network effects matter. The more users these platforms have, the more leverage they have with merchants and the stronger the competition against Google.

Advocate for regulation. Consumer protection in AI commerce requires policy-level solutions, not just individual vigilance. Supporting organizations like the Groundwork Collaborative that are pushing for regulation creates systemic protection.

The Path Forward: What Should Happen

If we're serious about protecting consumers in the age of AI shopping agents, several things need to happen.

First, regulation should establish baseline transparency requirements. Companies deploying shopping AI should be required to disclose how they handle pricing, how they make recommendations, and what data they use. This disclosure should be meaningful to consumers, not just technically accurate.

Second, there should be meaningful restrictions on price discrimination in AI commerce. Not all price differentiation is unethical, but price differentiation based on inferred financial capacity or personal circumstances should be prohibited. A merchant can charge different prices for different quantities or at different times. They shouldn't be able to charge you more because an AI system inferred you're wealthy.

Third, consent requirements need to mature. The current model where users approve broad categories of actions ("allow this merchant to manage your purchases") gives too much discretion to AI systems. Consent should be specific and granular. Users should be able to say, "Yes to showing me products, No to analyzing my chat history."
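One way to picture granular consent is as a per-merchant set of explicitly granted scopes, with everything else denied by default. The sketch below is an assumption-laden illustration: the `Scope` names, the `ConsentLedger` class, and the merchant ID are hypothetical and do not describe how Google's protocol stores consent.

```python
from enum import Enum


class Scope(Enum):
    SHOW_PRODUCTS = "show_products"
    USE_ORDER_HISTORY = "use_order_history"
    ANALYZE_CHAT_HISTORY = "analyze_chat_history"


class ConsentLedger:
    """Per-merchant, per-scope grants. Anything not granted is denied."""

    def __init__(self):
        self._grants = {}  # merchant_id -> set of Scope

    def grant(self, merchant_id, scope):
        self._grants.setdefault(merchant_id, set()).add(scope)

    def revoke(self, merchant_id, scope):
        self._grants.get(merchant_id, set()).discard(scope)

    def allows(self, merchant_id, scope):
        return scope in self._grants.get(merchant_id, set())


ledger = ConsentLedger()
# "Yes to showing me products" and nothing else.
ledger.grant("example-merchant", Scope.SHOW_PRODUCTS)
```

With this shape, `ledger.allows("example-merchant", Scope.ANALYZE_CHAT_HISTORY)` is false until the user says otherwise. A broad "manage your purchases" approval has no equivalent default, because it never names the individual uses it covers.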

Fourth, there should be a right to explanation. When an AI recommends a product or chooses not to show you an option, you should be able to ask why. This both helps consumers understand if they're being manipulated and gives regulators insight into how systems actually work.

Fifth, companies should have obligations to test and report on fairness metrics. Before deploying shopping AI at scale, companies should analyze whether the system recommends different products to different demographic groups for the same query. They should measure whether certain customer segments pay higher effective prices. These analyses should be shared with regulators.
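A fairness audit of this kind can start very simply. The following sketch assumes an audit log of `(segment, query, effective_price)` records and compares mean prices across segments for the same query. The function name, the segment labels, the 5% threshold, and the sample data are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def disparity_by_query(records):
    """For each query, the ratio of the highest to the lowest
    segment-level mean effective price. 1.0 means parity."""
    grouped = defaultdict(lambda: defaultdict(list))
    for segment, query, price in records:
        grouped[query][segment].append(price)
    result = {}
    for query, segments in grouped.items():
        means = [mean(prices) for prices in segments.values()]
        result[query] = max(means) / min(means)
    return result


# Hypothetical audit log: same queries, two customer segments.
AUDIT_LOG = [
    ("segment_a", "queen mattress", 800.0),
    ("segment_a", "queen mattress", 820.0),
    ("segment_b", "queen mattress", 900.0),
    ("segment_b", "queen mattress", 920.0),
    ("segment_a", "desk lamp", 40.0),
    ("segment_b", "desk lamp", 40.0),
]

ratios = disparity_by_query(AUDIT_LOG)
flagged = [query for query, ratio in ratios.items() if ratio > 1.05]
```

In this made-up data, one segment pays about 12% more on average for the same mattress query, so that query is flagged, while the lamp query shows parity. A real audit would need larger samples and controls for legitimate differences such as shipping region, but the basic comparison is not technically hard.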

Sixth, there should be public funding for consumer-aligned alternatives. The market alone won't create competition to Google because Google has inherent distribution advantages through Search and Gemini. Public funding for infrastructure that enables consumer-first shopping AI (open data standards, research funding, small business grants) could level the playing field.

None of these requirements are technologically impossible. Most are standard practice in other regulated industries (finance, healthcare, insurance). The challenge is political, not technical. It requires acknowledging that AI commerce raises consumer protection questions worth regulating and that waiting for conclusive evidence of harm is a losing strategy.

Case Study: What Happened When Search Became Commerce

To understand the stakes of AI shopping agents, it's instructive to look at what happened when Google integrated shopping deeply into search results.

Before 2012, Google clearly separated advertising (Google Ads, then called AdWords) from organic search results. When you searched for "running shoes," you'd see organic links and ads in separate areas. You could distinguish between editorial recommendations and paid placements.

Google gradually erased this distinction. Google Shopping results (which are paid placements, though Google doesn't emphasize this) now dominate search results for product queries. When you search for "laptop," you might see five Google Shopping items before the first organic result.

The effect was significant. Merchants who wanted visibility on Google had to pay for Google Shopping. This created a revenue stream for Google but also changed the playing field for retailers. Large, well-capitalized sellers could afford Google Shopping bids. Small sellers struggled. Categories with high margins could support premium bids. Categories with thin margins were crowded out.

Second-order effects included increased consolidation in retail (big merchants got bigger advantages on Google), reduced price transparency (paid results don't necessarily show the cheapest options), and search results that became less about relevance and more about auction dynamics.

Google's response to criticism was similar to its response about the Universal Commerce Protocol. The company said merchants benefit from access to customers. Consumers benefit from seeing products. The algorithm is designed to show relevant results. All true statements that miss the structural point: when a company profits from one side of a transaction (sellers), that side gets optimized first.

The lesson for AI shopping agents is clear. The same structural issues that emerged with Google Shopping will almost certainly emerge with shopping AI. Without regulatory guardrails, the most profitable version of the technology will win over the most consumer-beneficial version.

Conclusion: The Long Game

Lindsay Owens' warning about Google's Universal Commerce Protocol isn't a prediction of imminent fraud. It's a flag that the infrastructure being built right now makes fraud easy to implement later, and the incentive structures don't prevent it.

Google's response is probably accurate about today's safeguards. The current protocol likely doesn't enable personalized price gouging. The company's engineers are sophisticated, and the safeguards appear genuine. But safeguards are only as strong as the incentives supporting them.

As AI shopping agents become essential infrastructure, the incentives will shift. Merchants want every possible tool to maximize revenue. Google wants to compete against other AI platforms. If consumer protection requires sacrificing competitiveness or merchant relationships, pressure will mount to relax safeguards.

The right time to prevent this is now, while the technology is still being standardized and before network effects lock in Google's dominance. That requires regulation establishing baseline protections, support for consumer-aligned alternatives, and public conversation about what AI-enabled commerce should look like.

The good news is that this future isn't inevitable. Regulation can work. Technology can be designed differently. Consumer preference can reward companies that earn trust. Markets can support alternatives.

The bad news is that the window for prevention is closing. In five years, AI shopping might be so embedded in how people buy things that retroactive regulation becomes extremely difficult. The time to get it right is now, with a technology that's still being built, not later, when it's too late to change the architecture.

The consumers who'll benefit most from getting this right won't be early adopters. They'll be the millions of people shopping through AI assistants in 2030 and 2035. What we decide to build now determines what options they'll have then.

FAQ

What is Google's Universal Commerce Protocol?

Google's Universal Commerce Protocol is a standardized framework that enables merchants to integrate their inventory, pricing, and fulfillment systems directly into AI shopping agents like Gemini. Rather than requiring users to visit individual websites or search for products, the protocol allows AI agents to display product options, pricing, and availability from multiple retailers within the conversation. The protocol includes features like Direct Offers (allowing merchants to provide special deals) and personalization capabilities to show relevant products based on user preferences.

What is surveillance pricing and how does it relate to AI shopping agents?

Surveillance pricing refers to the practice of adjusting prices for different customers based on personal data, browsing history, or inferred willingness to pay. In the context of AI shopping agents, surveillance pricing would mean an AI system could analyze your chat history, purchase patterns, and personal preferences to recommend or price products differently than it would for another customer. Consumer advocates worry that while Google's protocol has safeguards against this today, the underlying architecture makes implementing surveillance pricing relatively straightforward in the future if incentives change.

Can Google's AI shopping agent actually adjust prices based on personal data right now?

According to Google's official response, the current Universal Commerce Protocol does not have functionality enabling merchants to adjust prices based on individual user data. The company states that merchants cannot show different prices through the AI agent than what appears on their own websites (price parity rule), and the Business Agent does not have access to personal financial information, income data, or spending patterns. However, critics argue that the architecture being built makes such functionality relatively easy to implement in future versions if safeguards were relaxed.

Why do consumer advocates worry about the incentive structures of AI shopping agents?

Consumer advocates worry that Google and similar companies that control AI shopping agents face conflicting incentives. Google profits from merchants through advertising and commerce revenue, not from consumers. This means the company's business model rewards making merchants successful and increasing transaction values, not necessarily getting consumers the best deals. Over time, as competition intensifies and profit pressures mount, there's concern that merchant-friendly optimizations might gradually replace consumer protections. This is especially concerning because once AI shopping infrastructure becomes essential, retroactive regulation becomes much harder.

What are Direct Offers and how could they be misused?

Direct Offers is a pilot program in Google's Universal Commerce Protocol that allows merchants to provide special deals through AI shopping agents, such as discounts or added services like free shipping. Google explicitly prohibits using Direct Offers to raise prices above what's listed on a merchant's website. However, Direct Offers could theoretically be used for subtle price discrimination by offering tiered deals to different customer segments. For example, a merchant could offer a 20% discount to price-sensitive customers and a 5% discount to affluent customers, creating different effective prices while technically complying with price parity rules.
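The arithmetic behind that example is worth spelling out. This is a small worked sketch assuming a hypothetical $1,000 list price; the 20% and 5% discounts come from the example above, and the function name is illustrative.

```python
LIST_PRICE = 1000.00  # hypothetical listed price, identical for everyone


def effective_price(list_price, discount_rate):
    """Price actually paid after a Direct Offer style discount."""
    return round(list_price * (1 - discount_rate), 2)


price_sensitive = effective_price(LIST_PRICE, 0.20)  # 20% offer
affluent = effective_price(LIST_PRICE, 0.05)         # 5% offer
gap = affluent - price_sensitive

# Both prices are at or below the listed price, so a check that only
# compares against the merchant's website would pass for both shoppers.
parity_ok = price_sensitive <= LIST_PRICE and affluent <= LIST_PRICE
```

One shopper pays $800 and the other pays $950 for the same item, a $150 gap, yet neither price exceeds the listed one. A rule that only forbids prices above the website price would not catch this.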

What regulations currently protect consumers from AI shopping price discrimination?

Existing consumer protection frameworks have significant gaps regarding AI shopping agents. Price discrimination itself isn't illegal for consumer transactions in most jurisdictions, though discrimination based on protected characteristics (race, gender, etc.) is prohibited. Privacy laws like GDPR in Europe and California's laws require transparency and consent for personal data use. The FTC can pursue cases against unfair or deceptive practices. However, there's no comprehensive regulatory framework specifically addressing AI-driven personalization and price discrimination in commerce. Most regulation is reactive, requiring regulators to identify violations after they've harmed consumers. Proactive regulatory frameworks are still being developed.

Are there alternatives to Google's AI shopping platform that prioritize consumers?

Yes, several startups are building consumer-first AI shopping platforms with different incentive structures. Dupe helps users find affordable furniture using natural language queries, with a business model aligned with helping customers save money. Beni helps users find affordable thrift fashion through image and text recognition. These platforms demonstrate that alternative business models are technically possible, though they face challenges scaling against established tech giants. They lack Google's distribution advantages and struggle to negotiate favorable terms with traditional retailers, but they represent the potential for market alternatives that prioritize consumer interests.

What specific actions can individual consumers take to protect themselves from AI shopping manipulation?

Consumers can take several practical steps: limit personal information shared in shopping conversations, use privacy-preserving browsers to constrain tracking, manually cross-check AI recommendations against competitor pricing, diversify which AI platforms they use for shopping to prevent any single system from learning their full preferences, carefully review consent screens before authorizing merchants to access their data, support consumer-aligned alternatives to mainstream platforms, and advocate for regulatory frameworks that establish baseline protections. While individual vigilance is valuable, systemic protection ultimately requires policy-level solutions, since individual privacy measures aren't foolproof against well-resourced companies with an incentive to exploit personal data.

What would meaningful regulation of AI shopping agents look like?

Effective regulation would likely include several components: mandatory transparency disclosures explaining how prices are determined and products are recommended, restrictions on price discrimination based on inferred financial capacity or personal characteristics, granular consent requirements allowing users to approve or deny specific uses of their data, rights to explanation when AI systems make recommendations, mandatory testing and reporting on fairness metrics to identify whether systems recommend different products to different demographics, and public funding for consumer-aligned alternatives to create competition with large tech companies. Most of these requirements are technically feasible and align with regulatory approaches in other industries like finance and insurance.

How does Google's experience with integrating shopping into search results inform the AI shopping agent debate?

When Google integrated shopping results into search (beginning around 2012), the company gradually made paid Google Shopping results more prominent, eventually dominating results for product queries. This created revenue for Google but also increased consolidation in retail (favoring large merchants who could afford higher bids) and reduced price transparency. Critics note that Google's response to that integration mirrors its current responses to shopping agent concerns: the technology benefits both merchants and consumers, the algorithm is designed to show relevant results, and individual statements are technically accurate but miss structural incentive issues. This history suggests that without proactive regulation, AI shopping agents will follow similar patterns toward merchant-optimization.

What is the timeline for when AI shopping agents might actually become mainstream?

Google's Universal Commerce Protocol and similar initiatives are launching in 2025, but widespread adoption will likely take several years. The technology needs to reach critical mass with merchants for inventory breadth to matter to consumers, and consumers need familiarity with AI-first shopping interfaces. Most observers estimate 3-5 years for meaningful mainstream adoption, with acceleration depending on Gemini's growth and integration into Google's core products. However, the regulatory landscape has time to mature only if policymakers act urgently. Waiting for proof of harm before regulating virtually guarantees that inadequate safeguards will be embedded in the infrastructure before regulations arrive.

Key Takeaways

- Google's Universal Commerce Protocol enables AI shopping integration, but consumer advocates warn it could enable surveillance pricing through personal data analysis

- Current safeguards appear robust, but the underlying architecture makes future price discrimination relatively easy to implement if incentive structures shift

- The fundamental risk isn't fraud today—it's misaligned incentives between Google's business model (profiting from merchants) and consumer protection

- Regulatory frameworks are years behind the technology; proactive policy is needed to prevent abuse before infrastructure lock-in makes regulation impossible

- Consumer-first shopping AI alternatives exist but face significant scaling challenges against established tech giants with distribution advantages

![Google's AI Shopping Agents: The Consumer Privacy & Pricing Debate [2025]](https://tryrunable.com/blog/google-s-ai-shopping-agents-the-consumer-privacy-pricing-deb/image-1-1768334905824.jpg)