A Shift in Google's Corporate Ethos

When Google first introduced its corporate motto, “Don’t Be Evil,” it was a guiding principle that set the company apart. Fast forward to 2025, and Google is making headlines with its expanded contract with the U.S. Department of Defense (DoD). This new agreement allows for the use of Google's AI platform, Gemini, for any lawful purpose. This pivot has sparked discussions about Google's ethical stance and its implications for the tech industry.

TL;DR

- Google's DoD Contract: Google expands its Pentagon partnership, allowing AI use for any lawful purpose, as reported by NBC News.

- Ethical Concerns: Critics question Google's alignment with its original motto, 'Don't Be Evil,' highlighted in The Washington Post.

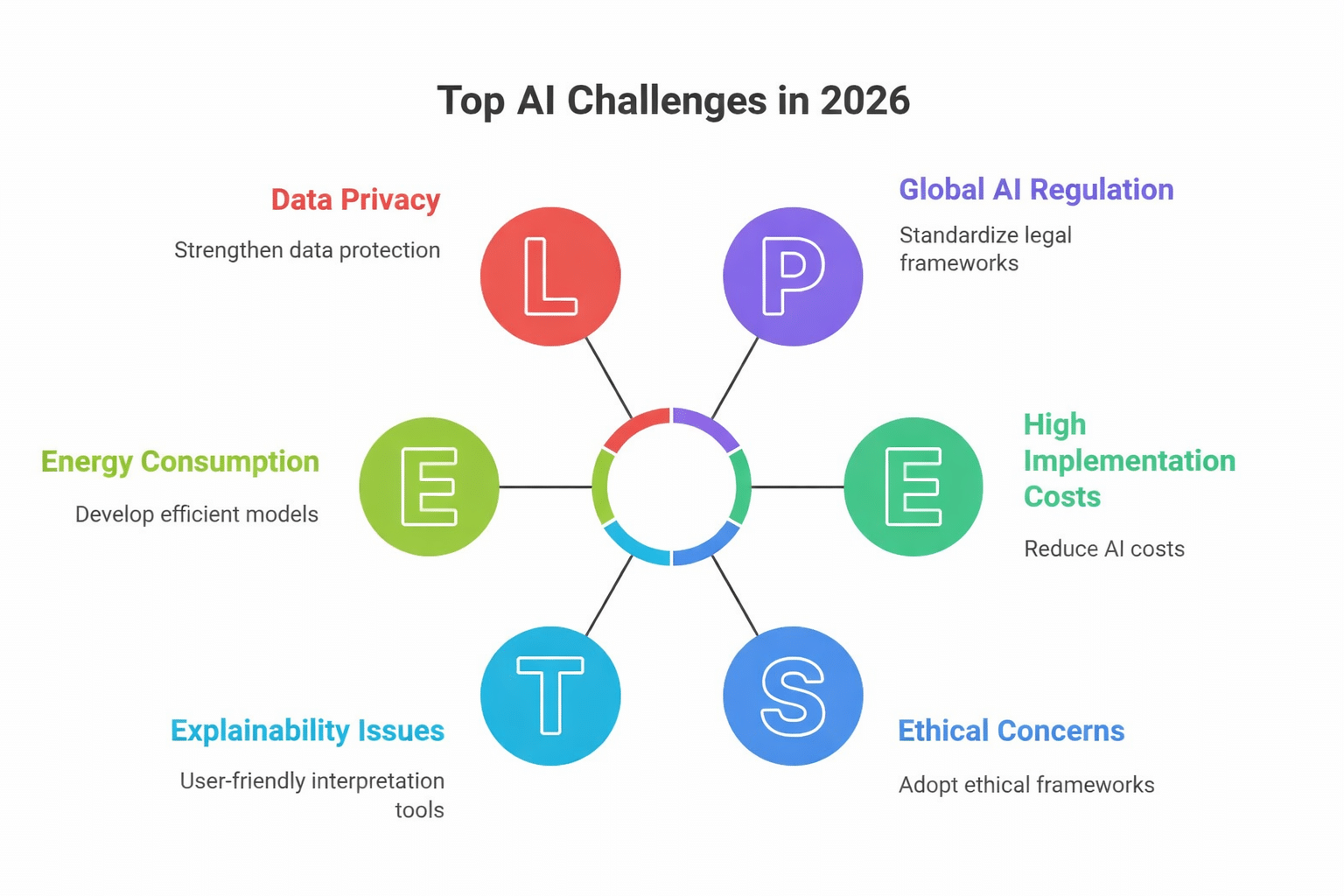

- AI in Defense: Gemini's capabilities could revolutionize military operations but raise ethical questions, as discussed in The Guardian.

- Corporate Responsibility: Balancing innovation with ethical considerations is critical for tech giants.

- Future Trends: The role of AI in defense is set to grow, requiring robust ethical frameworks, according to Forbes.

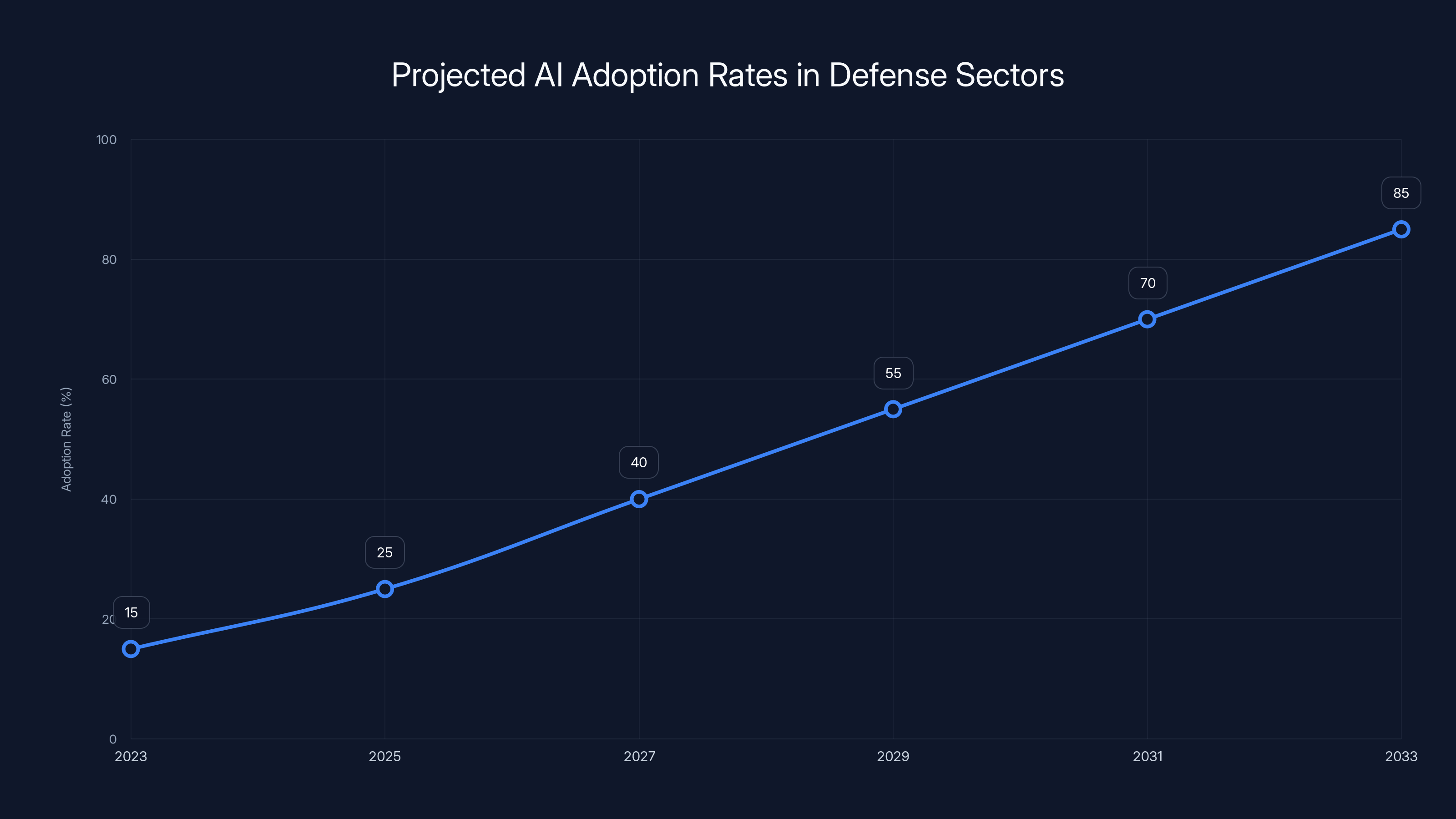

AI adoption in defense sectors is projected to grow from 15% in 2023 to 85% by 2033, driven by advancements in autonomous systems, cybersecurity, and decision support. Estimated data.
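
For a sense of scale, the two estimated endpoints in the caption above imply a steady yearly growth rate. The sketch below assumes simple compound growth between 2023 and 2033, which is a simplification, since only the two endpoint values are given. The function name `implied_annual_growth` is illustrative and not taken from any cited source.

```python
def implied_annual_growth(start_share: float, end_share: float, years: int) -> float:
    """Return the constant yearly growth rate that takes start_share to end_share.

    Assumption: growth compounds at the same rate every year.
    """
    if start_share <= 0 or end_share <= 0 or years <= 0:
        raise ValueError("shares and years must be positive")
    return (end_share / start_share) ** (1 / years) - 1


# Estimated endpoints from the caption: 15% in 2023, 85% by 2033.
rate = implied_annual_growth(0.15, 0.85, 2033 - 2023)
print(f"Implied compound growth: {rate:.1%} per year")
```

Under that assumption, adoption would need to grow by roughly 19% per year to match the estimate.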

Google’s Defense Ventures: A Historical Context

Google's foray into defense isn’t entirely new. The company has been involved in military projects before, most notably Project Maven, which used AI to analyze drone footage. The work drew employee protests, yet it marked Google's first steps toward military collaboration, as noted by SF Standard.

The New DoD Contract: What’s Inside?

The latest contract with the DoD allows Google's AI platform, Gemini, to be used for any lawful purpose. This broad language has raised eyebrows because it could cover a wide range of military applications, as detailed in The Information.

Key Aspects of the Contract:

- Broad Scope: Allows use of AI in logistics, data analysis, and potentially combat scenarios.

- Ethical Safeguards: Contract includes provisions for ethical oversight, but specifics are unclear.

- Innovation vs. Ethics: Balances cutting-edge technology with the need for ethical guidelines.

Ethical Implications: Revisiting 'Don't Be Evil'

The expansion into defense raises questions about Google's ethical commitments. Critics argue that engaging in military projects contradicts the company's original ethos, as discussed in Britannica.

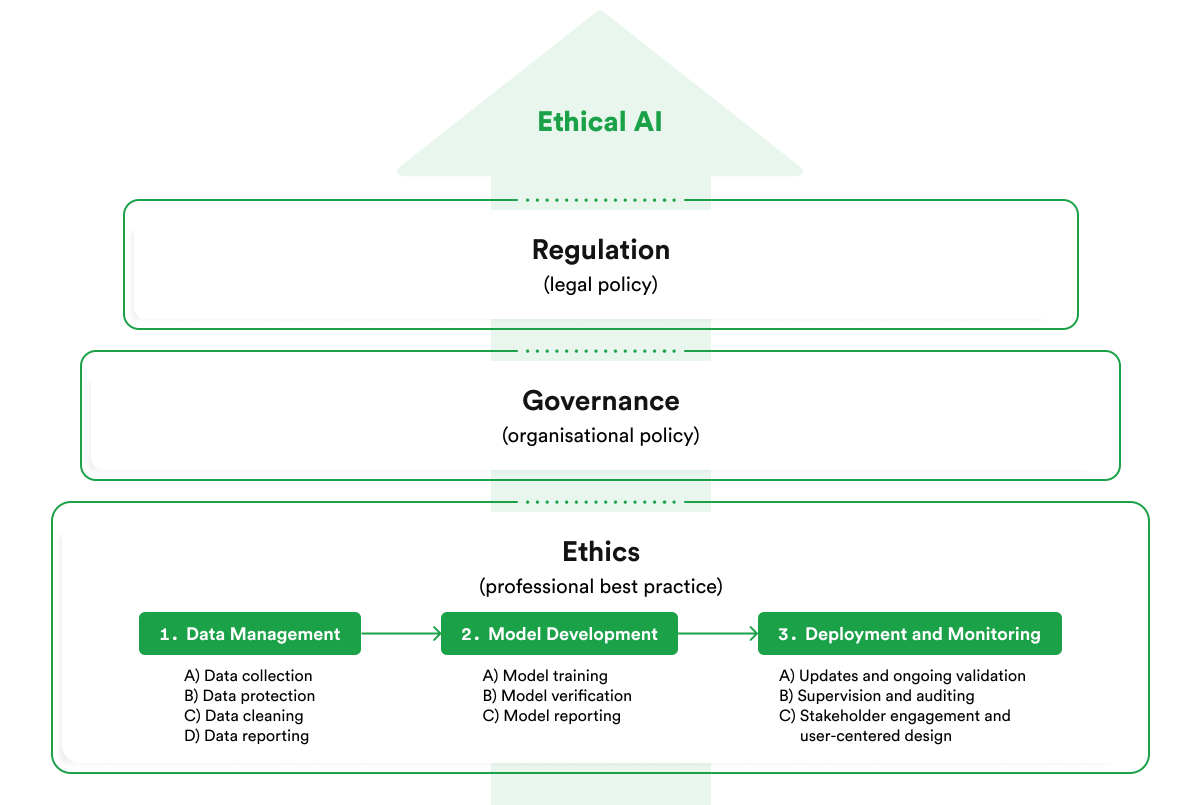

Ethical Frameworks in AI

Ethical AI involves ensuring that AI systems operate fairly and without bias. For Google, this means implementing robust ethical guidelines that prioritize human rights, as outlined in Wiz Academy.

Best Practices for Ethical AI:

- Transparency: Clear communication about AI's capabilities and limitations.

- Accountability: Establishing clear lines of responsibility for AI outcomes.

- Bias Mitigation: Implementing measures to reduce algorithmic bias.
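
Bias mitigation usually starts with measurement. As a minimal sketch, assuming a model that flags items and a group label attached to each item, the code below compares how often each group gets flagged. The function names, group labels, and sample data are all hypothetical, and this is one simple fairness check among many, not a description of how Google audits Gemini.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """Compute the share of flagged items per group.

    records: iterable of (group_label, was_flagged) pairs (hypothetical data).
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest per-group flag rates."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: group A flagged 1 of 4 times, group B 2 of 4 times.
sample = [
    ("A", True), ("A", False), ("A", False), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]
rates = positive_rate_by_group(sample)
gap = parity_gap(rates)
print(rates, gap)
```

A large gap does not prove unfair treatment on its own, but it is a signal that the system deserves a closer look.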

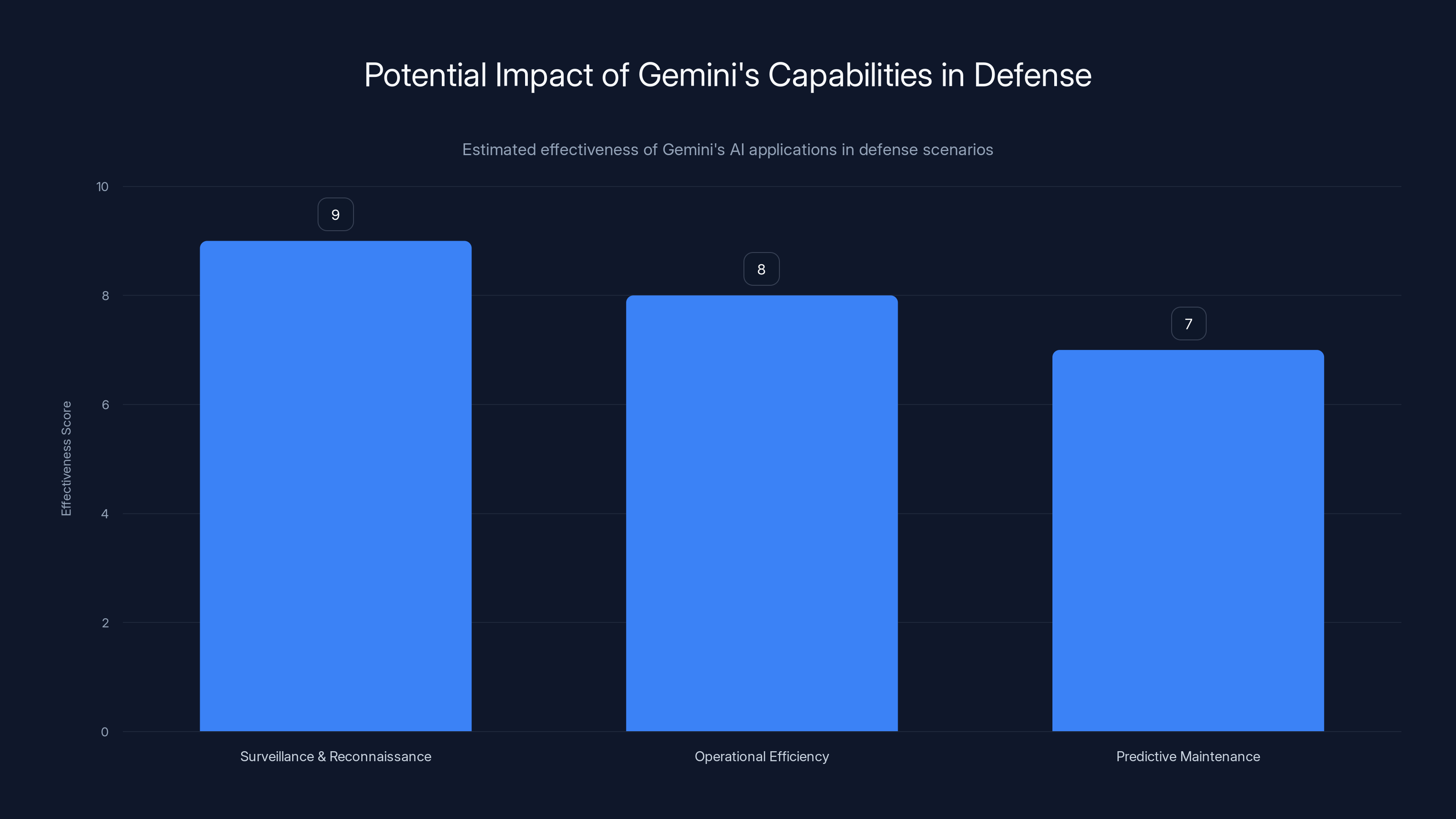

Gemini's AI shows high potential in enhancing surveillance, operational efficiency, and predictive maintenance in defense. (Estimated data)

Technical Capabilities of Gemini in Defense

Gemini is an advanced AI platform capable of processing large datasets and providing actionable insights. Its potential applications in defense are vast, as highlighted in Technology Magazine.

Use Cases for Gemini

- Surveillance and Reconnaissance: AI can analyze satellite imagery to identify threats.

- Operational Efficiency: Streamlining logistics and resource allocation.

- Predictive Maintenance: Identifying equipment failures before they occur.
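
To make the predictive maintenance idea concrete, here is a minimal sketch that flags sensor readings drifting far from a known-healthy baseline. It uses a plain z-score rule rather than anything Gemini-specific. The function name, the threshold, and the vibration values are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(readings, baseline_size=5, threshold=3.0):
    """Return indexes of readings that deviate strongly from the baseline.

    The first `baseline_size` readings are assumed to come from healthy
    equipment. Later readings are flagged when they sit more than
    `threshold` standard deviations from the baseline mean.
    """
    baseline = readings[:baseline_size]
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute z-scores")
    return [
        index
        for index, value in enumerate(readings[baseline_size:], start=baseline_size)
        if abs(value - mu) / sigma > threshold
    ]


# Hypothetical vibration readings: stable at first, then rising sharply.
vibration = [1.0, 1.1, 0.9, 1.0, 1.0, 1.05, 0.95, 1.9, 2.4]
flagged = flag_anomalies(vibration)
print(flagged)
```

Real systems typically combine many sensors and learned models, but the principle is the same: detect the drift before the failure.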

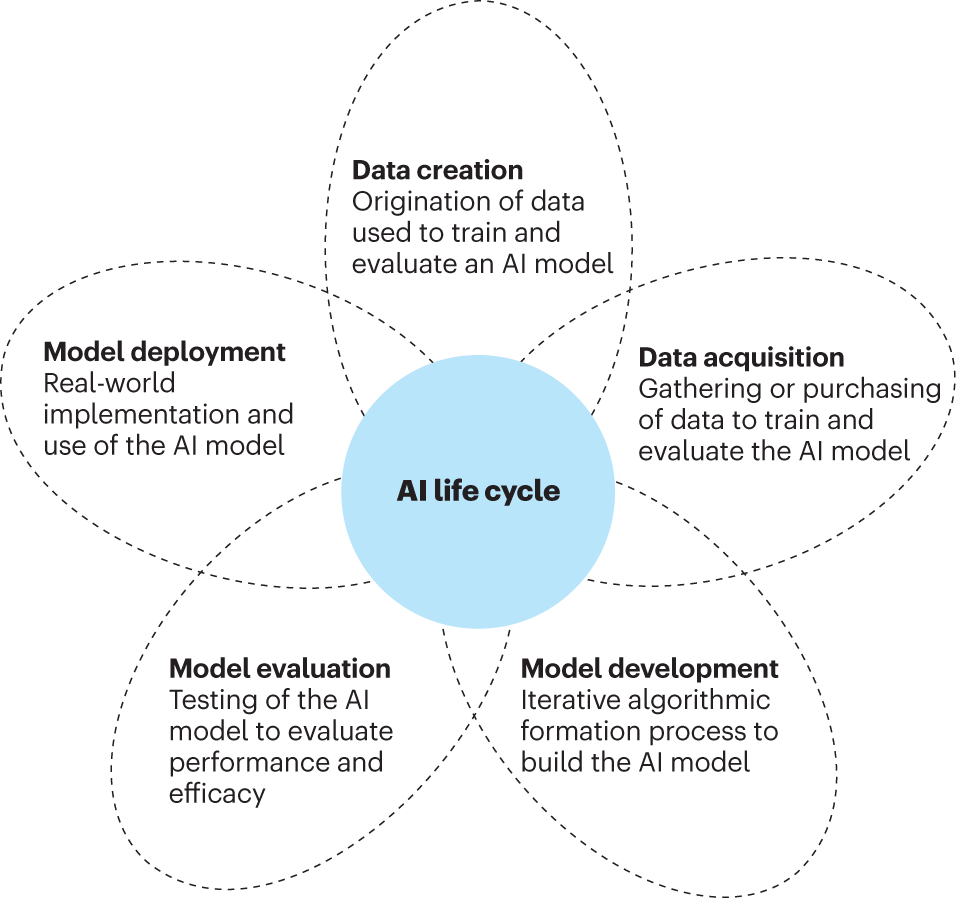

Practical Implementation of AI in Defense

Implementing AI in defense requires careful planning and execution. It involves integrating AI systems with existing infrastructure and ensuring compatibility, as discussed in Army.mil.

Steps to Implement AI

- Assessment: Evaluating current capabilities and identifying needs.

- Integration: Ensuring AI systems work seamlessly with existing technologies.

- Testing: Conducting rigorous tests to ensure reliability and safety.

- Training: Educating personnel on AI tools and their applications.

Common Pitfalls and Solutions

Integrating AI into defense isn't without challenges. Common issues include data security, algorithmic bias, and system reliability.

Solutions to Address Challenges

- Data Security: Implementing robust encryption and access controls.

- Bias Reduction: Regular audits and updates to AI systems.

- Reliability: Continuous testing and improvement of AI models.

Estimated data shows that integration and testing are the most time-consuming steps in AI implementation within defense systems, each requiring significant focus.

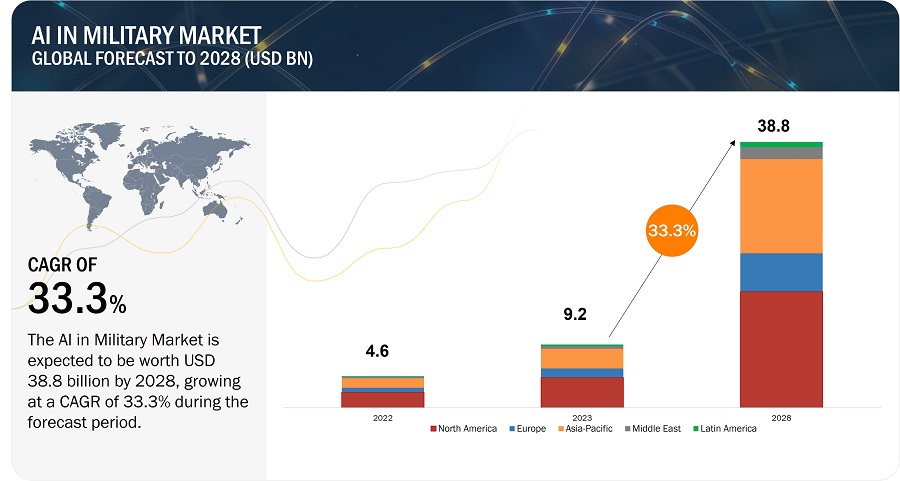

Future Trends in AI and Defense

The role of AI in defense is set to expand significantly. Future trends include increased automation, enhanced cybersecurity, and AI-driven decision-making, as projected by Forbes.

Predictions for the Future

- Autonomous Systems: AI-driven drones and robotics for surveillance and combat.

- Enhanced Cybersecurity: AI tools to detect and respond to cyber threats in real-time.

- Decision Support: AI providing real-time insights to inform strategic decisions.

Recommendations for Ethical AI Development

To ensure ethical AI development, tech companies must prioritize transparency, accountability, and continuous improvement, as emphasized by Britannica.

Key Recommendations

- Develop Ethical Guidelines: Establish clear ethical standards for AI development.

- Engage Stakeholders: Involve a diverse range of voices in AI development processes.

- Continuous Monitoring: Regularly assess AI systems to ensure they meet ethical standards.

Conclusion: Balancing Innovation and Ethics

Google's expanded contract with the DoD represents a significant step in the integration of AI in defense. While it offers opportunities for innovation, it also necessitates a careful examination of ethical considerations.

The path forward involves a delicate balance between leveraging AI's potential and upholding ethical standards. As AI continues to evolve, companies like Google must remain committed to ethical practices so that the technology benefits the people it affects.

FAQ

What is Google's new DoD contract about?

Google's new contract with the Department of Defense allows for the use of its AI platform, Gemini, for any lawful purpose, expanding its role in military operations, as reported by NBC News.

How does this contract align with Google's 'Don't Be Evil' motto?

Critics argue that the contract contradicts Google's original ethos, as it involves military applications that may not align with the principle of avoiding harm, as discussed in The Washington Post.

What are the potential applications of AI in defense?

AI can be used for surveillance, operational efficiency, predictive maintenance, cybersecurity, and strategic decision-making in defense, as highlighted in Technology Magazine.

What ethical concerns arise from using AI in military operations?

Concerns include potential bias in AI systems, data security, accountability for AI decisions, and the implications of autonomous weapons, as noted by Britannica.

How can Google ensure ethical AI development?

By implementing transparent practices, engaging diverse stakeholders, and continuously monitoring AI systems for compliance with ethical standards, as recommended by Wiz Academy.

What trends are expected in AI's role in defense?

Future trends include the use of autonomous systems, enhanced cybersecurity measures, and AI-driven decision support tools, as projected by Forbes.

Key Takeaways

- Google's expanded DoD contract highlights the growing role of AI in military operations, as reported by NBC News.

- Ethical considerations are paramount as AI technology advances in defense applications, as emphasized by Britannica.

- Transparency, accountability, and bias mitigation are crucial for ethical AI development, as outlined in Wiz Academy.

- Future trends indicate increased automation and AI-driven decision-making in defense, as projected by Forbes.

- Balancing innovation with ethical practices is critical for tech companies like Google, as discussed in The Guardian.

![Google's Pentagon Partnership: Navigating the Ethics and Implications of AI in Defense [2025]](https://tryrunable.com/blog/google-s-pentagon-partnership-navigating-the-ethics-and-impl/image-1-1777588472645.jpg)