How Anthropic Surpassed OpenAI in Revenue While Spending Less [2025]

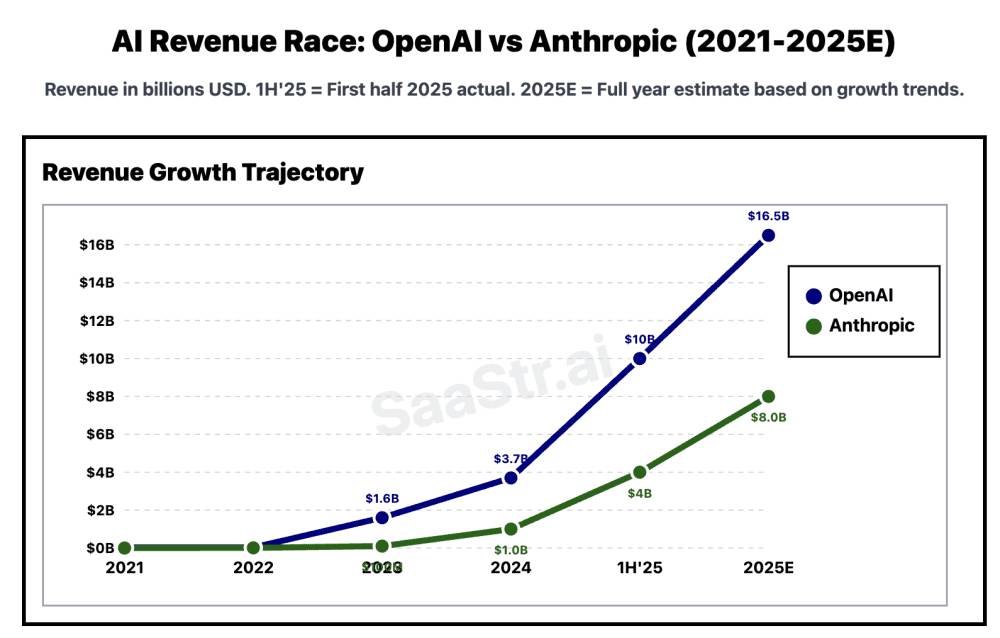

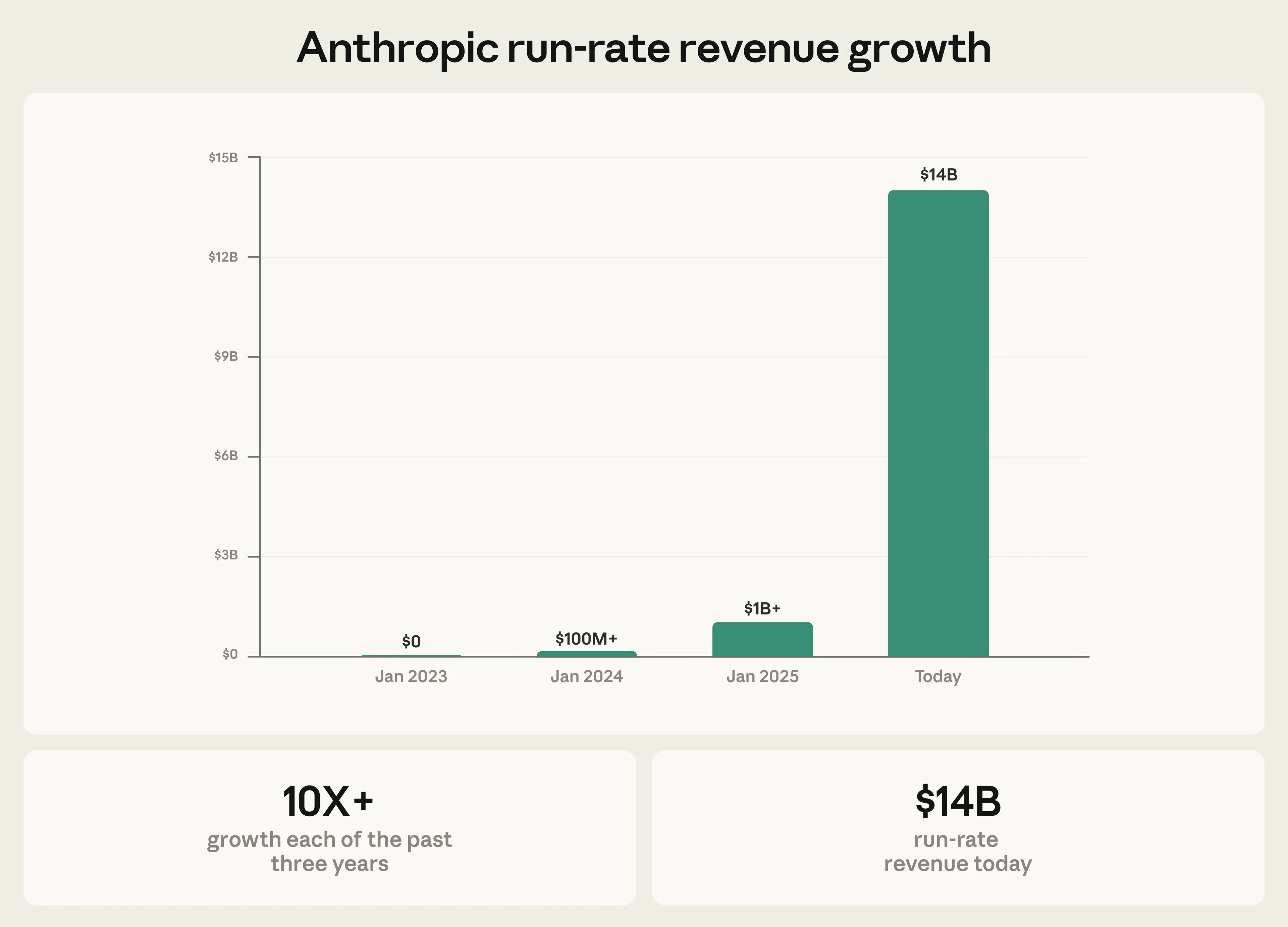

Anthropic and OpenAI are two of the most prominent companies in artificial intelligence. Anthropic recently reported surpassing OpenAI in revenue while spending a fraction as much on model training. The result has prompted discussion across the tech industry about efficiency, innovation, and strategic planning.

TL;DR

- Revenue Milestone: Anthropic surpassed OpenAI in revenue while spending 4x less on training.

- Strategic Efficiency: Focused on optimizing resources and enhancing model architecture.

- Innovative Approaches: Leveraged novel training methods to reduce costs.

- Sustainability: Balanced growth with environmental and financial sustainability.

- Future Outlook: Expected to continue setting industry standards for cost-effective AI development.

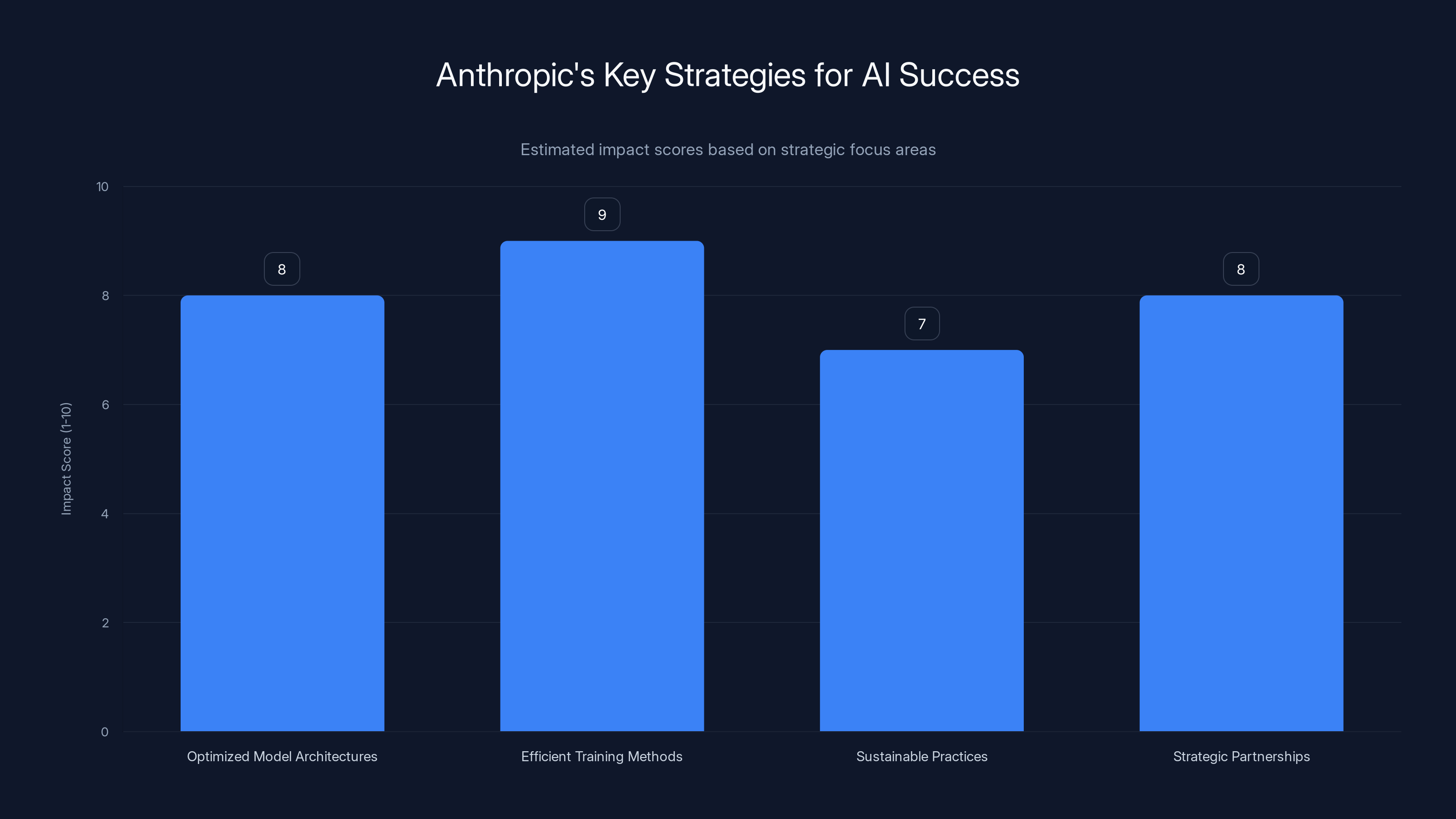

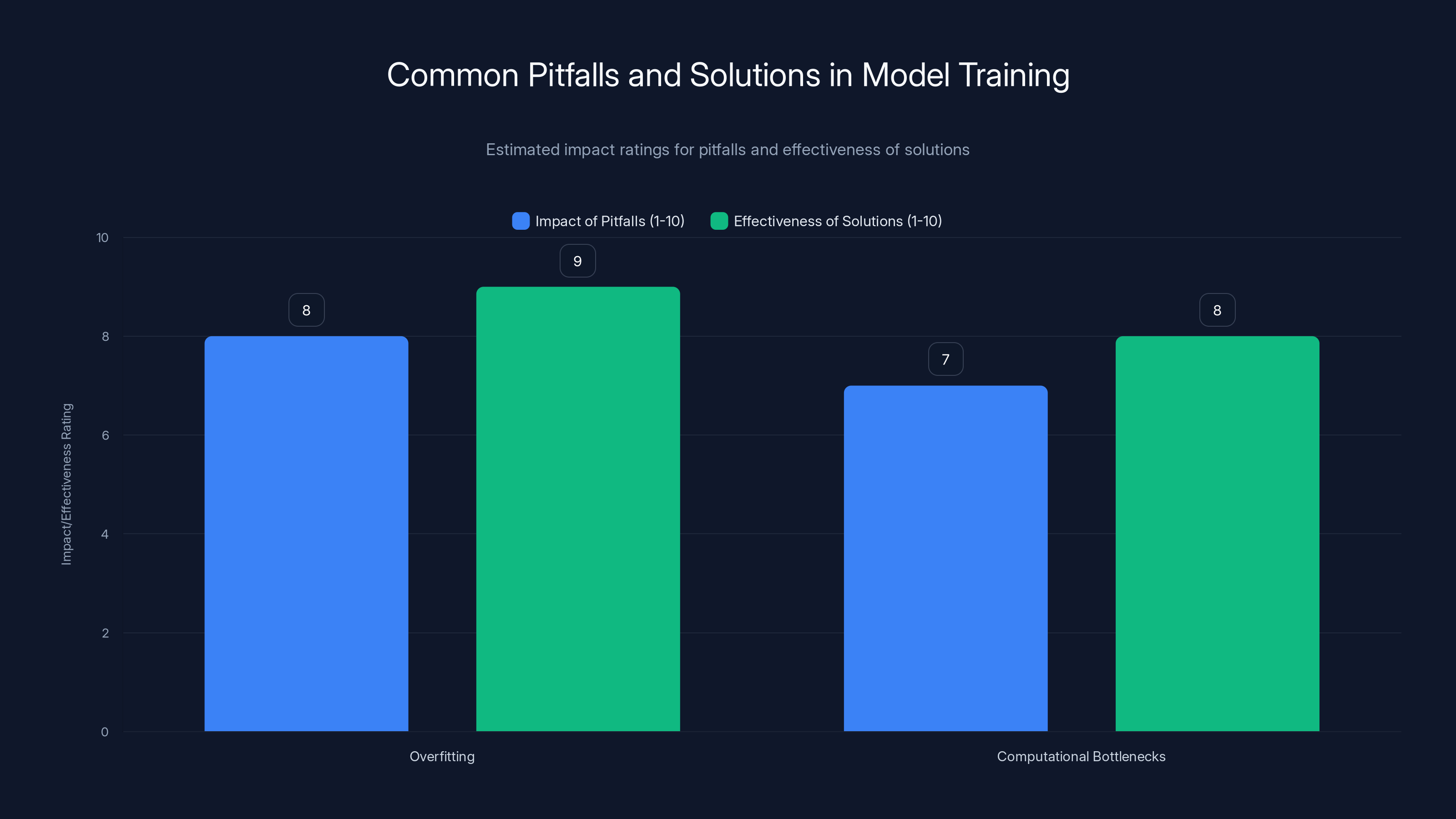

Anthropic's success is driven by efficient training methods and optimized model architectures, both scoring highly in impact. (Estimated data)

The Context Behind the Numbers

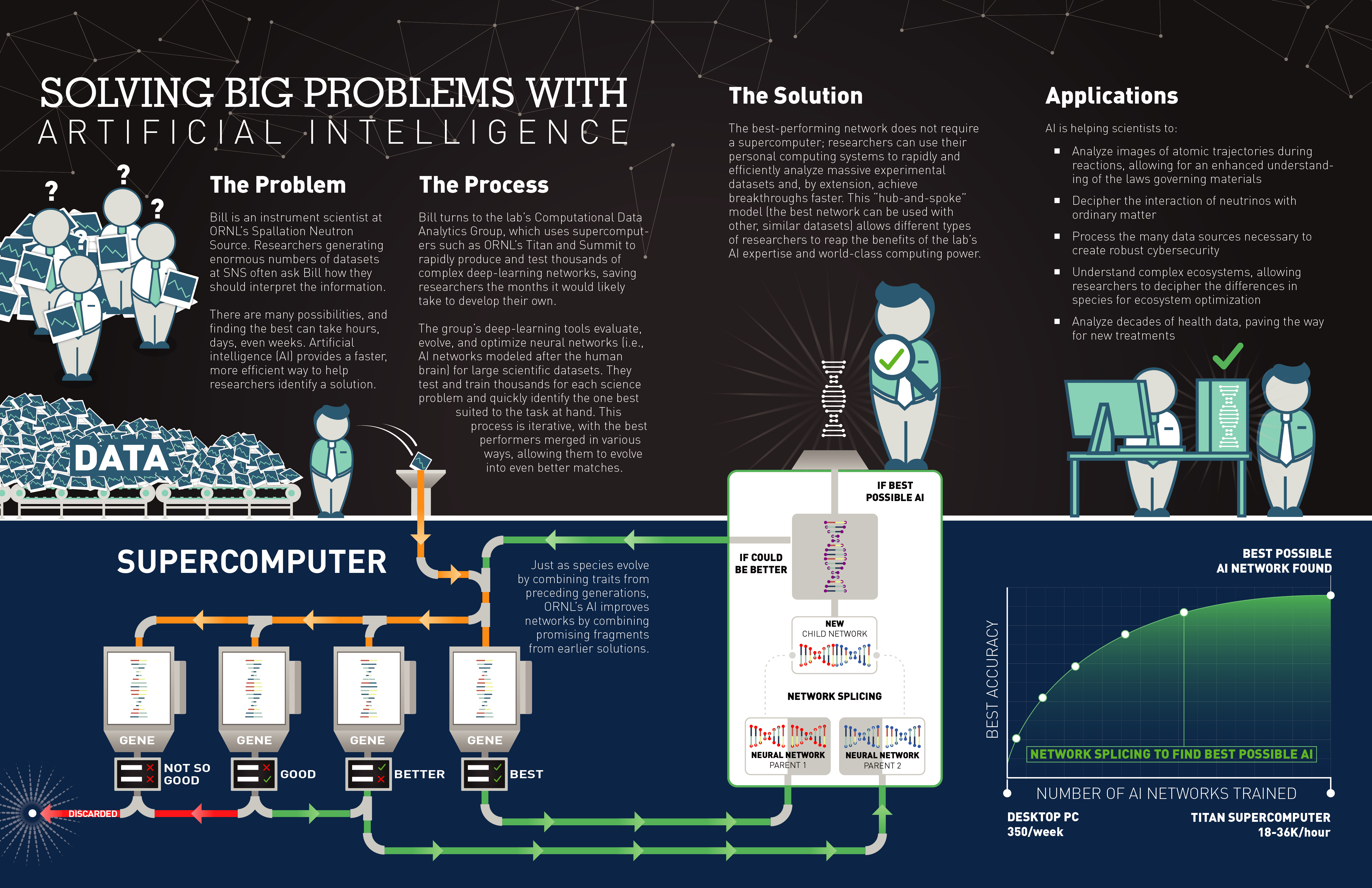

To understand how Anthropic achieved this, we must first delve into the context of their operations and strategies. Anthropic, founded by former OpenAI employees, aimed to create AI systems that are both powerful and safe. Their focus has been on developing methods that enable AI models to learn effectively without the need for excessive computational resources.

Key Strategies for Success

- Optimized Model Architectures: Anthropic has invested heavily in optimizing their model architectures. By doing so, they can achieve similar or superior performance with less computational power.

- Efficient Training Methods: Instead of traditional brute-force training, Anthropic employs innovative techniques such as curriculum learning, which allows models to learn from simple to complex tasks, reducing the need for extensive training data and resources.

- Sustainable Practices: Anthropic emphasizes sustainability, both environmentally and financially. They have developed methods to minimize energy consumption during model training, aligning with broader industry efforts to reduce carbon footprints.

- Strategic Partnerships: By forming strategic partnerships with leading tech firms, Anthropic has gained access to cutting-edge technology and expertise, further enhancing their capabilities.

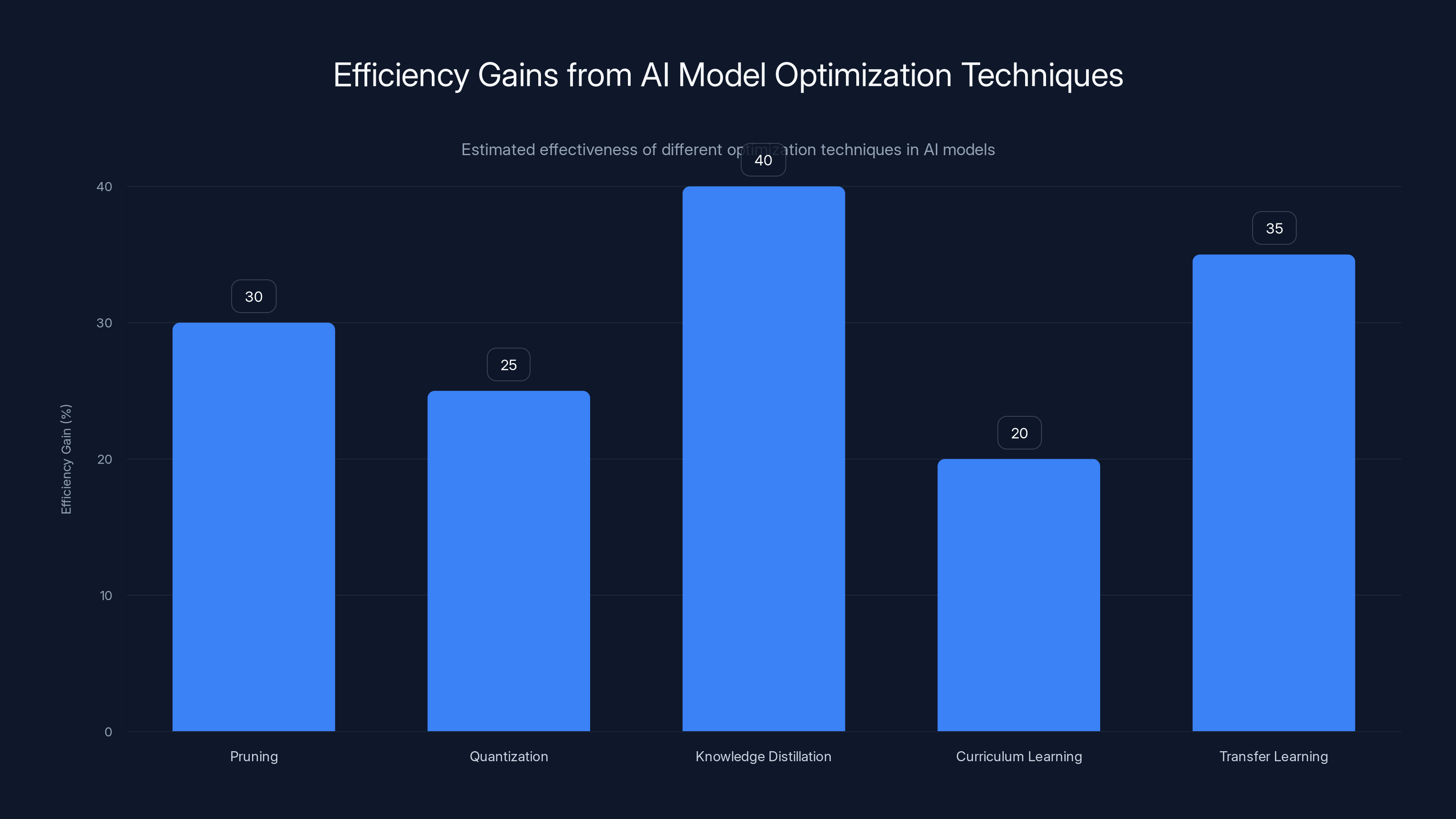

Estimated data shows Knowledge Distillation and Transfer Learning provide the highest efficiency gains in AI model optimization.

Practical Implementation Guides

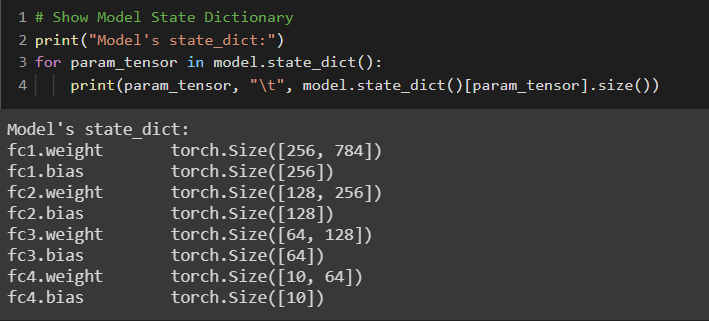

Optimizing Model Architectures

One of the primary ways to achieve efficiency in AI models is through architecture optimization. This involves:

- Pruning: Removing unnecessary neurons and connections in neural networks to reduce complexity without sacrificing performance.

- Quantization: Reducing the number of bits required to represent model parameters, which decreases memory usage and computation time.

- Knowledge Distillation: Training a smaller model (student) to mimic the predictions of a larger model (teacher), resulting in a compact and efficient model.
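Of the three techniques above, quantization is the easiest to illustrate without a framework. The sketch below shows symmetric 8-bit quantization in plain Python. It is a generic illustration, not Anthropic's method; the function names `quantize_int8` and `dequantize` and the sample weights are made up for this example.

```python
# Minimal sketch of symmetric 8-bit quantization (illustrative only).
# The weights below are made-up values, not taken from any real model.

def quantize_int8(weights):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.4, -1.0, 0.25, 0.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Rounding moves each weight by at most half a quantization step
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("codes:", codes)
print("max reconstruction error:", max_error)
```

Each weight is stored as a small integer plus one shared floating-point scale, which is where the memory saving comes from. Production libraries typically add per-channel scales and calibration, which this sketch omits.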

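```python
# Example of model pruning using PyTorch
import torch
import torch.nn as nn
import torch.nn.utils.prune as prune

# Small feed-forward network used only for illustration
model = nn.Sequential(
    nn.Linear(10, 20),
    nn.ReLU(),
    nn.Linear(20, 1)
)

# Zero out the 50% of first-layer weights with the smallest absolute value
prune.l1_unstructured(model[0], name='weight', amount=0.5)

# After pruning, the layer keeps 'weight_orig' as a parameter and 'weight_mask' as a buffer
print(list(model.named_parameters()))
```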
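Knowledge distillation, the third technique listed above, comes down to a loss that pulls the student's output distribution toward the teacher's. The sketch below uses one common formulation: a KL divergence between temperature-softened distributions, scaled by the squared temperature. It is written in plain Python for clarity. The logits are invented values and the helper names are assumptions for this example, not a description of any specific company's training setup.

```python
import math

# Minimal sketch of a distillation loss (illustrative only).

def softmax(logits, temperature=1.0):
    """Softmax over logits divided by a temperature (higher = softer)."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, times T squared."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(p_teacher, p_student) if p > 0)
    return kl * temperature ** 2


# Made-up logits for a three-class problem
teacher_logits = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]  # roughly agrees with the teacher
far_student = [0.5, 4.0, 1.0]    # prefers a different class

print("close student loss:", distillation_loss(close_student, teacher_logits))
print("far student loss:", distillation_loss(far_student, teacher_logits))
```

In practice this term is usually combined with the ordinary cross-entropy loss on the true labels, weighted by a tunable coefficient.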

Innovative Training Techniques

- Curriculum Learning: This approach structures the training process by starting with simpler tasks and gradually increasing complexity. It mimics the way humans learn and can lead to more efficient training.

- Transfer Learning: Leveraging pre-trained models on related tasks to reduce training time and resources for a new task.

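```python
# Example of transfer learning with a pre-trained model
from transformers import BertModel, BertTokenizer

# Load pre-trained weights instead of training from scratch
tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')
model = BertModel.from_pretrained('bert-base-uncased')

input_text = "Anthropic's innovations in AI"
input_ids = tokenizer.encode(input_text, return_tensors='pt')

# Forward pass; output.last_hidden_state holds per-token embeddings
# that a task-specific head can be trained on
output = model(input_ids)
```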
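Curriculum learning, described above, can be sketched as a data-ordering step that runs before training. The example below sorts samples by a difficulty score and yields progressively larger training pools, easiest first. The `curriculum_stages` helper and the word-count difficulty proxy are hypothetical choices for illustration; real curricula use task-specific difficulty measures.

```python
import math

# Minimal sketch of curriculum-style data ordering (illustrative only).

def curriculum_stages(samples, difficulty, num_stages=3):
    """Yield growing training pools, starting with the easiest samples."""
    ordered = sorted(samples, key=difficulty)
    for stage in range(1, num_stages + 1):
        # Each stage exposes a larger prefix of the easy-to-hard ordering
        cutoff = math.ceil(len(ordered) * stage / num_stages)
        yield ordered[:cutoff]


def word_count(sentence):
    """Hypothetical difficulty proxy: longer sentences count as harder."""
    return len(sentence.split())


sentences = [
    "Models learn.",
    "Large models learn slowly without good data.",
    "Small models learn fast.",
    "Curriculum learning orders examples from easy to hard during training.",
]

for stage, pool in enumerate(curriculum_stages(sentences, word_count, num_stages=2), start=1):
    # A real pipeline would run one or more training epochs on each pool
    print(f"stage {stage}: {len(pool)} samples")
```

Keeping earlier samples in later pools is one design choice among several; some curricula instead replace easy samples with harder ones as training progresses.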

Common Pitfalls and Solutions

Pitfalls

- Overfitting: Training models on limited datasets can lead to overfitting, where the model performs well on training data but poorly on unseen data.

- Computational Bottlenecks: Inefficient use of hardware resources can lead to delays and increased costs.

Solutions

- Regularization Techniques: Implement dropout and L2 regularization to prevent overfitting.

- Hardware Acceleration: Utilize GPUs and TPUs for faster computations and reduced training times.
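The two regularization techniques named above can be written out in a few lines. The sketch below computes an L2 penalty and applies inverted dropout in plain Python. The weights, the `data_loss` value, and the `weight_decay` setting are made-up numbers for illustration; frameworks such as PyTorch provide both techniques built in.

```python
import random

# Minimal sketch of L2 regularization and dropout (illustrative only).

def l2_penalty(weights, weight_decay):
    """Penalty added to the loss: weight_decay times the sum of squared weights."""
    return weight_decay * sum(w * w for w in weights)


def dropout(activations, drop_prob, rng):
    """Inverted dropout: zero each unit with probability drop_prob and
    rescale the survivors so the expected activation is unchanged."""
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0 for a in activations]


weights = [0.5, -2.0, 1.5]
data_loss = 0.30  # hypothetical loss on the training batch
total_loss = data_loss + l2_penalty(weights, weight_decay=0.01)
print("loss with L2 penalty:", total_loss)

rng = random.Random(0)  # fixed seed so the run is repeatable
print("after dropout:", dropout([1.0, 1.0, 1.0, 1.0], drop_prob=0.5, rng=rng))
```

Dropout is applied only during training; at evaluation time the activations pass through unchanged.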

Overfitting and computational bottlenecks are significant challenges, with solutions like regularization and hardware acceleration being highly effective. Estimated data.

Case Study: Anthropic's Revenue Growth

Anthropic's focus on efficient practices has allowed them to reinvest savings into research and expansion, contributing to their revenue growth. By maintaining a lean operational model, they have set a benchmark for other AI companies.

Future Trends and Recommendations

Trends

- Efficiency-Driven Development: As AI models become more complex, the focus will shift towards efficiency to manage costs and environmental impact.

- Collaborative AI Research: Companies will increasingly collaborate on AI research to share resources and expertise, driving innovation.

Recommendations

- Invest in R&D: Continuous investment in research and development is crucial for staying ahead in the competitive AI landscape.

- Adopt Sustainable Practices: Align AI development with sustainability goals to appeal to environmentally-conscious stakeholders.

Conclusion

Anthropic's rise in the AI industry demonstrates the power of strategic efficiency and innovation. By focusing on optimizing resources and developing sustainable practices, they have not only surpassed OpenAI in revenue but also set a new standard for AI development. As the industry continues to evolve, companies that prioritize efficiency and sustainability will likely lead the way.

FAQ

What is Anthropic?

Anthropic is an AI research company focused on developing safe and efficient AI systems. Founded by former OpenAI employees, they emphasize sustainable AI practices.

How did Anthropic surpass OpenAI in revenue?

Anthropic surpassed OpenAI in revenue by optimizing model architectures, employing efficient training methods, and focusing on sustainability, which reduced costs significantly.

What are the key strategies used by Anthropic?

Key strategies include optimizing model architectures, utilizing efficient training techniques like curriculum learning, and forming strategic partnerships with tech firms.

How does Anthropic ensure sustainability?

Anthropic ensures sustainability by minimizing energy consumption during model training and aligning their practices with environmental goals.

What are the benefits of curriculum learning?

Curriculum learning structures training from simple to complex tasks, improving learning efficiency and reducing computational resources needed.

What are some common pitfalls in AI model training?

Common pitfalls include overfitting and computational bottlenecks. Solutions involve regularization techniques and hardware acceleration.

How can companies adopt sustainable AI practices?

Companies can adopt sustainable AI practices by optimizing resource usage, investing in energy-efficient hardware, and aligning with environmental sustainability goals.

What is the future outlook for AI companies like Anthropic?

The future outlook involves efficiency-driven development, collaborative research, and a focus on sustainability to lead the AI industry.

Key Takeaways

- Anthropic surpassed OpenAI in revenue while spending 4x less on model training.

- Optimized model architectures and efficient training methods were key to Anthropic's success.

- Anthropic emphasizes sustainability in AI development, aligning with industry trends.

- Future AI trends include efficiency-driven development and collaborative research.

- Investing in R&D and adopting sustainable practices are crucial for AI companies.

