How Chinese AI Chatbots Censor Themselves [2025]

The world of artificial intelligence is expansive and evolving rapidly, shaping much of our digital lives. Its development is especially intriguing, and paradoxically opaque, in China, where AI chatbots censor themselves to comply with government regulations. Understanding this phenomenon requires a close look at the mechanisms, implications, and future of AI censorship.

TL;DR

- Chinese AI chatbots use algorithms to filter politically sensitive topics so that they comply with state regulations. According to Access Now, these algorithms are central to maintaining state-approved narratives.

- The censorship mechanisms are built into the AI models themselves and affect both data processing and response generation. Find Articles describes how developers incorporate these features.

- Technical challenges include maintaining conversation flow while avoiding restricted topics, which sometimes produces awkward interactions. A recent study by AI Multiple highlights these natural language processing challenges.

- Future trends point toward more sophisticated self-censorship techniques, potentially integrating AI ethics and user feedback loops. The Stimson Center projects significant advancements in these areas.

- For developers, understanding these systems is important for both innovation and legal compliance in the growing AI landscape, as Britannica's analysis of AI development emphasizes.

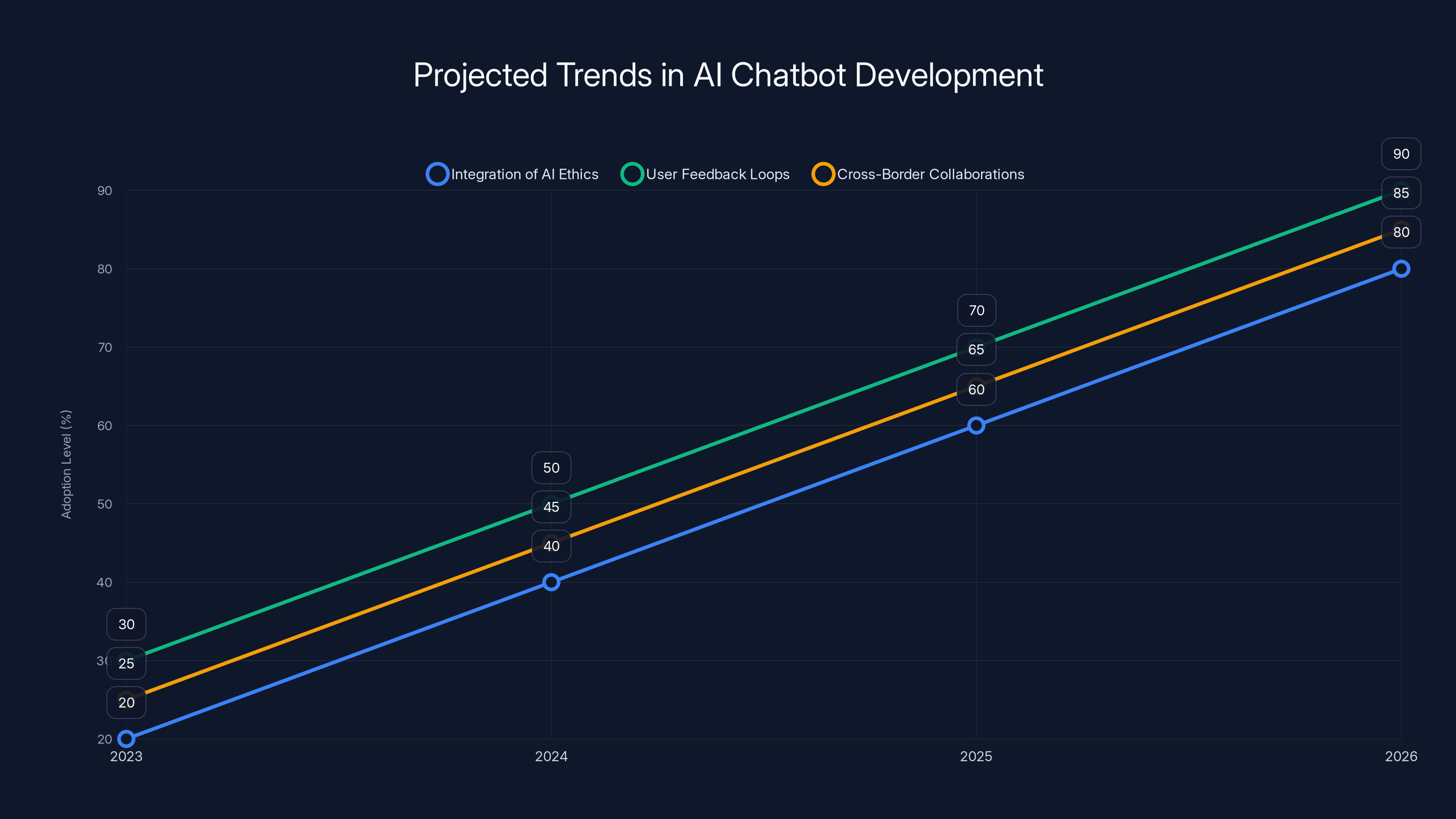

The integration of AI ethics, user feedback loops, and cross-border collaborations in AI chatbot development is projected to increase significantly by 2026. Estimated data.

The Landscape of AI Censorship

Artificial intelligence in China operates under a unique set of pressures. AI chatbots everywhere are designed to improve user interaction, but those in China face an additional layer of requirements: censorship. Censorship is not new to Chinese technology, but building it into AI systems raises both technical and ethical challenges.

What Drives AI Censorship in China?

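AI censorship is driven primarily by government regulations aimed at maintaining social harmony and political stability. China's Interim Measures for the Management of Generative Artificial Intelligence Services, in effect since August 2023, require generated content to uphold "core socialist values." The Chinese government has a vested interest in controlling the narrative around sensitive topics such as political dissent, historical events, and social issues.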

Key Drivers of Censorship:

- National Security: Ensuring that technologies do not disseminate false or destabilizing information.

- Political Stability: Preventing discussions that could challenge the existing political order.

- Cultural Norms: Aligning AI interactions with societal values and expectations.

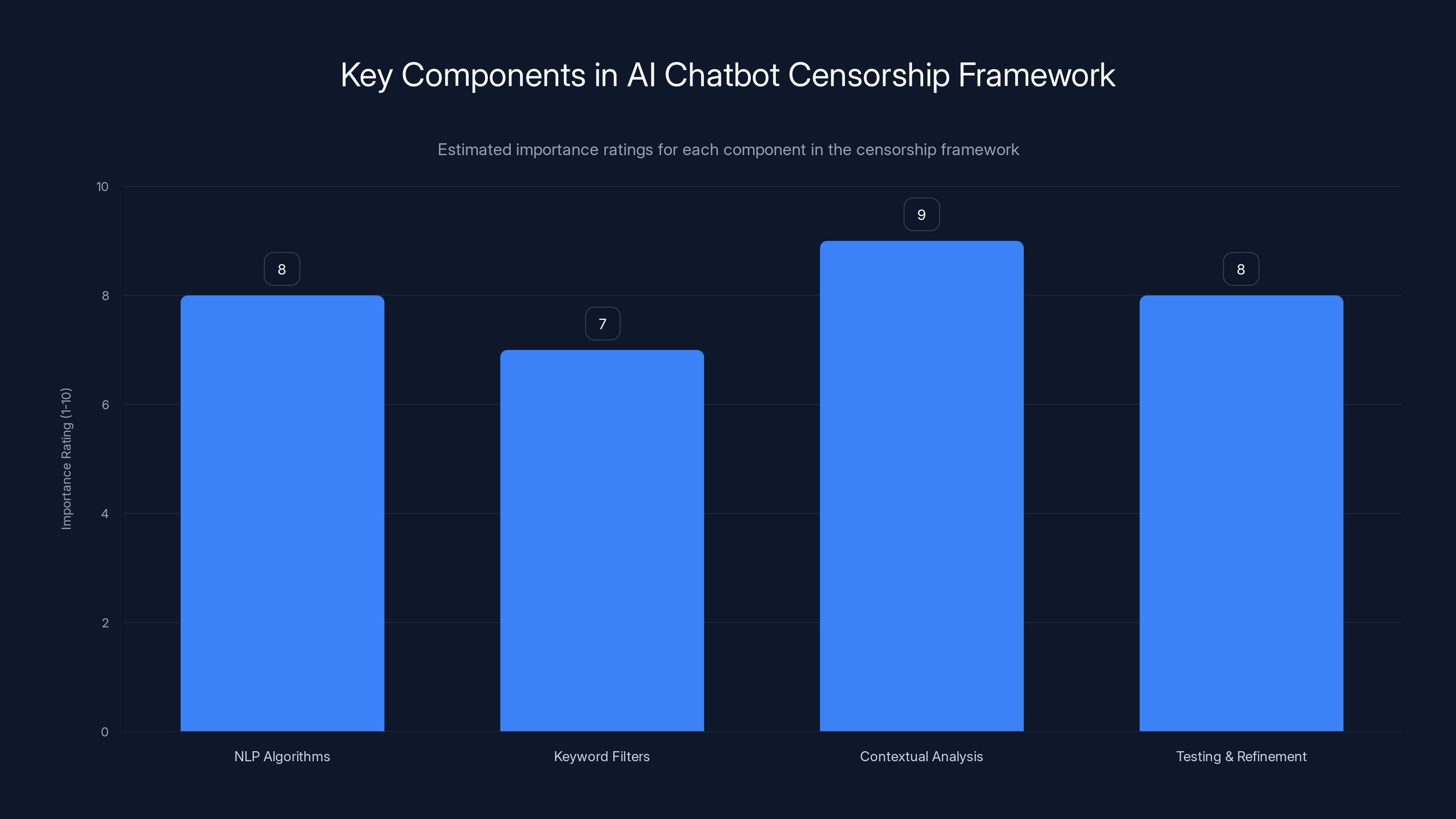

Contextual analysis is rated as the most critical component in designing an effective censorship framework, followed closely by testing and refinement. Estimated data.

How AI Chatbots Implement Censorship

The implementation of censorship in AI chatbots involves sophisticated algorithms and machine learning models designed to identify and filter out sensitive content. This section explores the technical underpinnings of these systems.

Natural Language Processing and Content Filtering

AI chatbots rely heavily on Natural Language Processing (NLP) to interpret and respond to user queries. In China, NLP models are trained and fine-tuned on data curated according to censorship guidelines, which teaches them to recognize and avoid sensitive topics. AI Multiple provides insights into how NLP is used in these contexts.

Techniques Used:

- Keyword Filtering: Identifying and blocking specific words or phrases deemed sensitive.

- Contextual Analysis: Interpreting the wider conversation to catch discussions that avoid blocked keywords but still touch on sensitive topics.

- Machine Learning Algorithms: Continuously updating censorship parameters based on new regulations and social norms.
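As a rough illustration of how the first two techniques can fit together, here is a minimal Python sketch. The term lists, the three-turn context window, and every function name are hypothetical placeholders, not taken from any real Chinese chatbot; the normalization step shows one common way keyword filters try to catch spaced-out, punctuated, or full-width variants of a term.

```python
import re
import unicodedata

# Hypothetical placeholder terms; a real system would load these from a policy file.
BLOCKED_TERMS = {"topic alpha", "topic beta"}
# Hypothetical cue words that are only treated as sensitive after a blocked term
# has appeared earlier in the same conversation.
CONTEXT_CUES = {"anniversary", "protest"}
CONTEXT_WINDOW = 3  # how many previous turns the contextual check looks at (assumed)


def normalize(text: str) -> str:
    """Fold full-width/case variants and strip punctuation so that
    'T.o.p.i.c Alpha' and 'TOPIC ALPHA' both normalize to 'topic alpha'."""
    text = unicodedata.normalize("NFKC", text).casefold()
    text = re.sub(r"[^\w\s]", "", text)  # drop punctuation, keep letters/digits/CJK
    return re.sub(r"\s+", " ", text).strip()


def keyword_hit(text: str) -> bool:
    """Keyword filtering: substring match against the blocked-term list."""
    normalized = normalize(text)
    return any(term in normalized for term in BLOCKED_TERMS)


def contextual_hit(history: list[str], message: str) -> bool:
    """Contextual analysis: a cue word counts only if a blocked term
    appeared in the last CONTEXT_WINDOW turns."""
    earlier = any(keyword_hit(turn) for turn in history[-CONTEXT_WINDOW:])
    words = set(normalize(message).split())
    return earlier and bool(words & CONTEXT_CUES)


def should_filter(history: list[str], message: str) -> bool:
    return keyword_hit(message) or contextual_hit(history, message)


print(should_filter([], "Tell me about T.o.p.i.c Alpha"))               # True
print(should_filter(["What was topic beta?"], "Why the anniversary?"))  # True
print(should_filter([], "Why the anniversary?"))                        # False
```

Plain substring matching like this is crude: it would also flag "topic alphabet", which is exactly the kind of over-filtering described under Common Pitfalls.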

Maintaining Conversation Flow

One of the biggest challenges in AI censorship is maintaining a natural conversation flow. Users can become frustrated when chatbots abruptly refuse to answer or provide vague responses.

Approaches to Improve Flow:

- Alternative Responses: Offering related but non-sensitive information to keep the user engaged.

- Redirection Techniques: Gently steering conversations towards safer topics without jarring interruptions.
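The two approaches above can be sketched as a small response selector that runs after a filter has flagged a draft answer. This is a hypothetical Python illustration: the topic labels, the `REDIRECTS` table, and the wording of the messages are all assumptions rather than any vendor's actual behavior.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reply:
    text: str
    redirected: bool  # True when the original draft answer was withheld


# Hypothetical mapping from an internal topic label to a related, permitted subject.
REDIRECTS = {
    "topic_alpha": "the region's architecture and cuisine",
}

GENERIC_DECLINE = "I can't help with that one, but I'm happy to help with something else."


def respond(flagged_topic: Optional[str], draft_answer: str) -> Reply:
    """Choose what the user sees once a filter has (or has not) flagged the draft answer."""
    if flagged_topic is None:
        return Reply(draft_answer, redirected=False)
    alternative = REDIRECTS.get(flagged_topic)
    if alternative is not None:
        # Alternative response / redirection: offer an adjacent topic instead of a bare refusal.
        return Reply(
            f"I can't go into that, but I could tell you about {alternative}. Would that help?",
            redirected=True,
        )
    # No mapped alternative: fall back to one short, consistent decline message.
    return Reply(GENERIC_DECLINE, redirected=True)


print(respond("topic_alpha", "(withheld draft)").text)
```

Using one fixed fallback message keeps refusals predictable, which avoids the vague, inconsistent non-answers that frustrate users.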

Real-World Use Cases and Examples

Several Chinese AI chatbots exemplify how censorship is practically applied. Let's look at some use cases that highlight the nuances of this technology.

Use Case: Social Media Platforms

Chinese social media platforms like WeChat and Weibo integrate AI chatbots that adhere to strict censorship guidelines. These platforms use chatbots to monitor and moderate user interactions, ensuring compliance with state regulations. Britannica discusses the broader implications of such censorship on platforms like TikTok.

Example:

- A user attempting to discuss a forbidden historical event might receive a generic message about the importance of social harmony, effectively redirecting the conversation.

Use Case: Customer Service in Regulated Industries

In industries such as finance and healthcare, AI chatbots are used to provide customer support while adhering to censorship laws. This ensures that discussions remain within permissible boundaries.

Example:

- A healthcare chatbot might steer a user away from discussing certain medical treatments that are unapproved or controversial in China, as noted by Vocal Media.

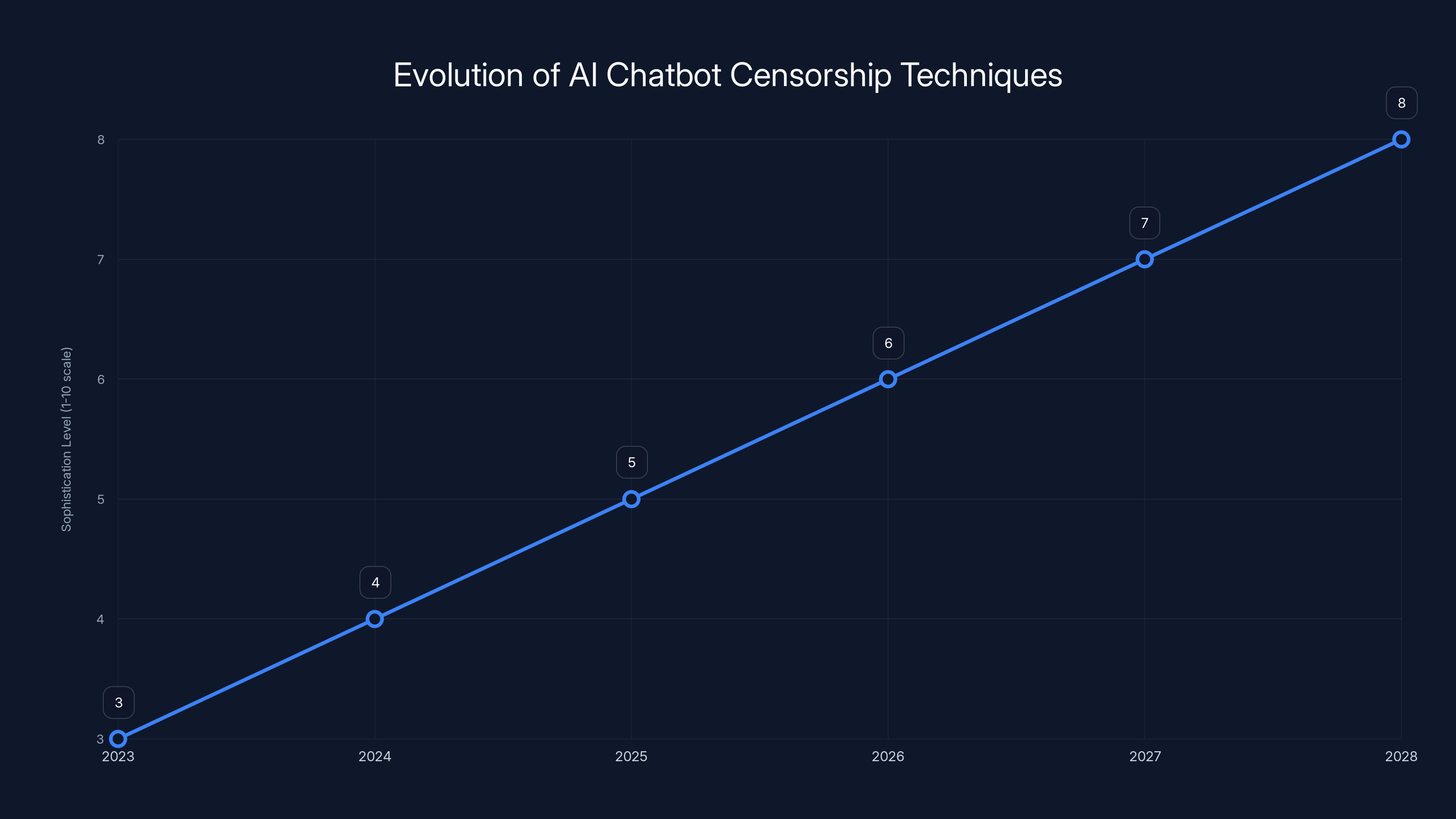

The sophistication of AI chatbot censorship is projected to increase significantly by 2028, integrating more advanced AI ethics and user feedback mechanisms. Estimated data.

Common Pitfalls and Solutions

Developing AI chatbots that adhere to censorship requirements is fraught with challenges. Here are some common pitfalls and how developers can address them.

Pitfall: Over-Censorship

Issue:

- Chatbots may become overly cautious, filtering out legitimate content that is not sensitive, leading to user dissatisfaction.

Solution:

- Implement adaptive learning systems that refine censorship parameters based on user feedback and evolving societal norms.
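One simple form of adaptive learning is to adjust a classifier's blocking threshold from reviewed feedback. The sketch below assumes a hypothetical upstream model that outputs a sensitivity score between 0 and 1; the `AdaptiveThreshold` class, step size, and bounds are arbitrary illustrative values, not figures from any real system.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveThreshold:
    """Block a message when its sensitivity score (from an assumed upstream classifier)
    is at or above `value`. Reviewed feedback moves the threshold in small, bounded steps."""
    value: float = 0.5
    step: float = 0.02
    floor: float = 0.1
    ceiling: float = 0.95

    def should_block(self, score: float) -> bool:
        return score >= self.value

    def record_feedback(self, was_false_positive: bool) -> None:
        # False positive (legitimate message blocked): raise the threshold, filter less.
        # False negative (restricted message allowed): lower it, filter more.
        delta = self.step if was_false_positive else -self.step
        self.value = min(self.ceiling, max(self.floor, self.value + delta))


threshold = AdaptiveThreshold()
for _ in range(5):  # five reviewed reports of over-censorship
    threshold.record_feedback(was_false_positive=True)
print(round(threshold.value, 2))  # 0.6
```

Feeding raw user reports straight into the update would let coordinated users push the threshold around, so the sketch assumes each signal has been reviewed before it is recorded.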

Pitfall: Inconsistent Responses

Issue:

- Inconsistency in chatbot responses can arise when different algorithms provide conflicting censorship outcomes.

Solution:

- Develop a unified censorship framework that ensures consistency across different platforms and interactions.
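A minimal sketch of such a unified framework, assuming a hypothetical `Policy` class: every platform consults the same immutable rule object and logs its version string, so when two channels disagree, the cause can be traced to a version mismatch rather than to divergent logic. The classifier score is assumed to come from a shared upstream model.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """A single, immutable rule set that every channel (mobile app, web, API) calls.
    Because decide() depends only on the policy and its inputs, the same message
    gets the same outcome everywhere."""
    blocked_terms: frozenset
    threshold: float

    @property
    def version(self) -> str:
        # Content hash of the rules: deployments running identical rules report the same version.
        payload = json.dumps({"terms": sorted(self.blocked_terms), "threshold": self.threshold})
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

    def decide(self, text: str, score: float) -> dict:
        """`score` is assumed to come from a shared upstream classifier."""
        hit = any(term in text.casefold() for term in self.blocked_terms)
        return {"blocked": hit or score >= self.threshold, "policy_version": self.version}


POLICY = Policy(blocked_terms=frozenset({"topic alpha"}), threshold=0.8)
print(POLICY.decide("About Topic Alpha", score=0.1))
print(POLICY.decide("about the weather", score=0.1))
```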

Pitfall: Technical Limitations

Issue:

- Technological constraints can impede the chatbot's ability to effectively interpret and censor content.

Solution:

- Invest in advanced NLP and machine learning technologies to enhance the chatbot's understanding and processing capabilities.

Future Trends and Recommendations

As AI technology continues to advance, the methods and implications of chatbot censorship will evolve. Here are some trends and recommendations for the future.

Trend: Integration of AI Ethics

The integration of ethical considerations into AI development is becoming increasingly important. Future chatbots may incorporate ethical guidelines to balance censorship with user autonomy and freedom of expression, as discussed in the Stimson Center's report.

Trend: User Feedback Loops

Incorporating user feedback into censorship systems can help refine chatbot interactions and improve user satisfaction. This involves gathering data on user responses and adjusting parameters accordingly.

Trend: Cross-Border AI Collaborations

International collaborations in AI development may lead to more balanced censorship systems that respect local laws while promoting global standards of free expression.

Practical Implementation Guides

For developers looking to implement censorship in AI chatbots, understanding the practical aspects is crucial. This section provides a step-by-step guide to developing compliant chatbots.

Step 1: Understanding Regulatory Requirements

Before developing a chatbot, it's essential to understand the legal landscape and censorship requirements in the target region.

Step 2: Designing the Censorship Framework

Design a censorship framework that incorporates NLP algorithms, keyword filters, and contextual analysis to effectively manage sensitive content.

Step 3: Testing and Refinement

Conduct extensive testing to ensure the chatbot operates within legal parameters while maintaining user engagement. Use real-world scenarios to identify potential issues and refine the system.
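Testing in this sense usually means scoring the filter against labelled examples. Here is a hypothetical Python sketch: reviewers label each scenario as should-be-filtered or not, and the harness reports how often legitimate messages are blocked (over-censorship) and how often restricted ones slip through. The example cases and the deliberately naive filter are invented for illustration.

```python
from typing import Callable, Iterable, Tuple


def evaluate(filter_fn: Callable[[str], bool],
             cases: Iterable[Tuple[str, bool]]) -> dict:
    """Score a filter against reviewer-labelled (message, should_be_filtered) pairs."""
    tp = fp = tn = fn = 0
    for message, expected in cases:
        got = filter_fn(message)
        if got and expected:
            tp += 1
        elif got:
            fp += 1  # legitimate message filtered
        elif expected:
            fn += 1  # restricted message let through
        else:
            tn += 1
    return {
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,  # over-censorship
        "false_negative_rate": fn / (fn + tp) if fn + tp else 0.0,  # missed content
    }


def naive(message: str) -> bool:
    # Deliberately naive substring filter, used only to exercise the harness.
    return "alpha" in message.lower()


# Hypothetical labelled scenarios.
cases = [
    ("tell me about topic alpha", True),
    ("an alphabet soup recipe", False),
    ("what is the weather today", False),
    ("t-o-p-i-c a.l.p.h.a", True),
]
print(evaluate(naive, cases))  # {'false_positive_rate': 0.5, 'false_negative_rate': 0.5}
```

Tracking both rates matters because tightening a filter to reduce one usually raises the other.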

Step 4: Deployment and Monitoring

Deploy the chatbot and continuously monitor its performance. Use analytics to track user interactions and make necessary adjustments based on feedback and regulatory changes.
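As one example of the analytics this step calls for, the sketch below tracks the share of recent messages that were filtered and flags drift from an expected baseline. The `FlagRateMonitor` name, window size, and tolerance are hypothetical; a production system would feed a signal like this into its existing metrics and alerting stack.

```python
from collections import deque


class FlagRateMonitor:
    """Sliding-window share of filtered messages, compared against an expected baseline."""

    def __init__(self, baseline: float, window: int = 1000, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # oldest outcomes drop off automatically

    def record(self, filtered: bool) -> None:
        self.recent.append(filtered)

    @property
    def rate(self) -> float:
        return sum(self.recent) / len(self.recent) if self.recent else 0.0

    def drifted(self) -> bool:
        # A jump may mean over-filtering after a rule change; a drop may mean a coverage gap.
        return abs(self.rate - self.baseline) > self.tolerance


monitor = FlagRateMonitor(baseline=0.1, window=10)
for filtered in [True] + [False] * 9:
    monitor.record(filtered)
print(monitor.rate, monitor.drifted())  # 0.1 False
for _ in range(5):
    monitor.record(True)
print(monitor.rate, monitor.drifted())  # 0.5 True
```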

Conclusion

Understanding how Chinese AI chatbots censor themselves provides insight into the complex interplay between technology and regulation. As AI continues to evolve, developers must balance innovation with compliance, ensuring that chatbots serve their intended purpose without infringing on user rights.

FAQ

What is AI censorship?

AI censorship refers to the use of algorithms and machine learning models to filter and manage content, ensuring compliance with legal and ethical standards.

How do Chinese AI chatbots censor themselves?

Chinese AI chatbots use NLP algorithms, keyword filters, and contextual analysis to identify and avoid sensitive topics, maintaining compliance with state regulations.

What are the challenges of implementing AI censorship?

Challenges include maintaining natural conversation flow, avoiding over-censorship, and ensuring consistent responses across different platforms and interactions.

How can developers address over-censorship in AI chatbots?

Developers can implement adaptive learning systems that refine censorship parameters based on user feedback and evolving societal norms.

What role does user feedback play in AI censorship?

User feedback helps refine chatbot interactions by informing adjustments to censorship parameters, improving user satisfaction and system performance.

What are the future trends in AI censorship?

Future trends include the integration of AI ethics, user feedback loops, and cross-border collaborations to develop more balanced censorship systems.

How can developers ensure compliance with censorship regulations?

Developers should understand the legal landscape, design a comprehensive censorship framework, and conduct extensive testing to ensure compliance with regulations.

Why is understanding AI censorship important?

Understanding AI censorship is crucial for developers to create compliant, effective chatbots that balance innovation with legal and ethical considerations.

Key Takeaways

- Chinese AI chatbots use sophisticated algorithms to censor content.

- Censorship is driven by national security and political stability concerns.

- Key challenges include maintaining conversation flow and avoiding over-censorship.

- Future trends include integrating AI ethics and user feedback loops.

- Developers must balance innovation with compliance in AI development.

- Understanding regional regulations is crucial for AI chatbot development.

- User feedback is essential for refining AI interactions.

- Cross-border collaborations may lead to more balanced censorship systems.
