How Roblox Uses AI to Censor Chats: A Deep Dive [2025]

Moderation is a persistent problem in online gaming. Roblox, a platform hosting millions of players worldwide, uses AI to address it, with a particular focus on chat censorship. This article explores AI-driven chat moderation in Roblox, examining the technology, implementation strategies, potential pitfalls, and future trends.

TL;DR

- AI Moderation: Roblox uses AI to automatically filter inappropriate content in chats, aiming to create a safer environment for users.

- Real-Time Processing: The AI processes chats in real-time, allowing for immediate detection and action on flagged content.

- Challenges: Balancing user privacy with effective moderation remains a significant challenge.

- Future Trends: Advancements in AI could lead to more nuanced understanding and contextual awareness in chat moderation.

- User Impact: While AI enhances safety, it also raises concerns about over-censorship and false positives.

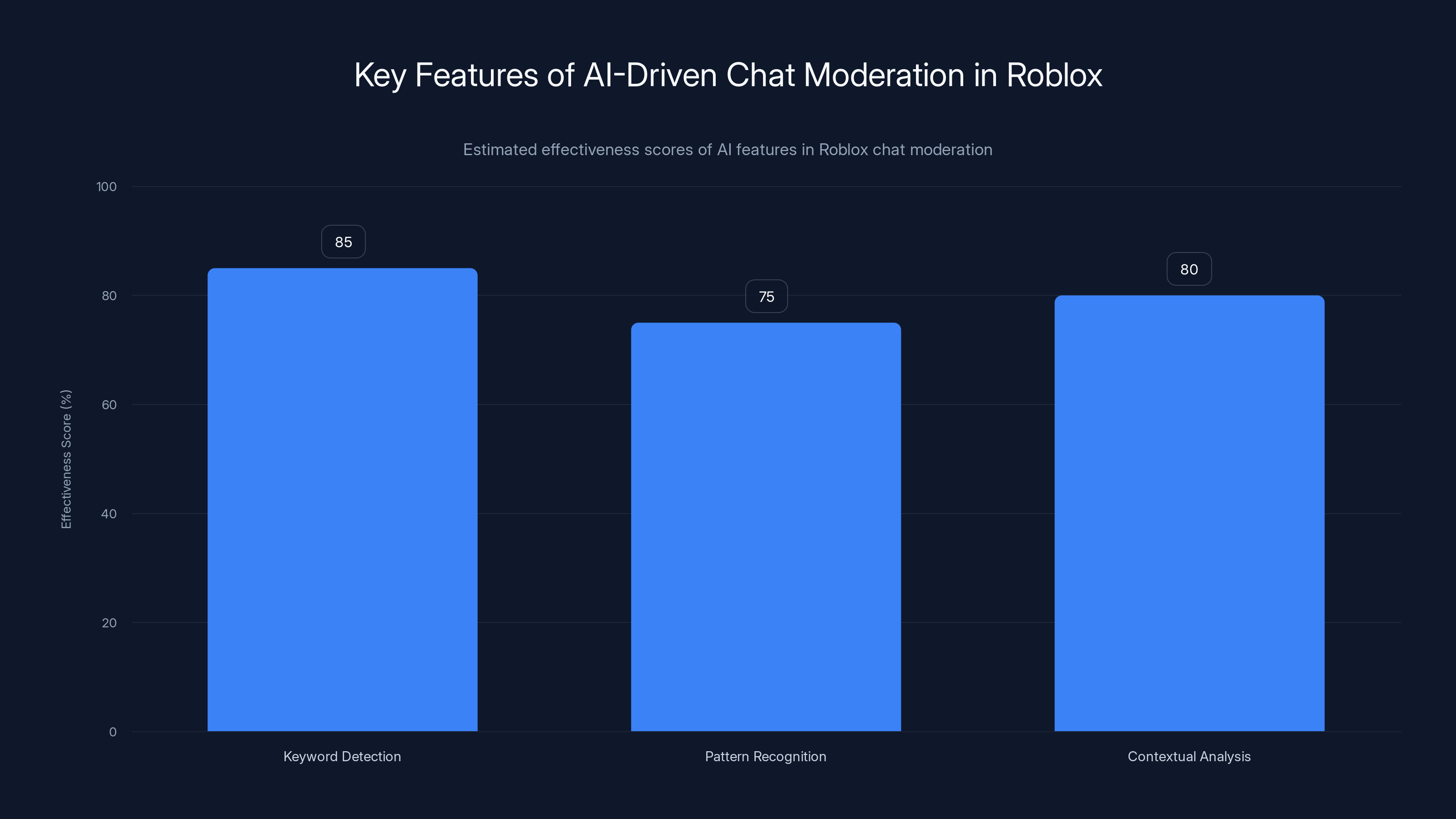

AI-driven chat moderation in Roblox effectively uses keyword detection, pattern recognition, and contextual analysis, with estimated effectiveness scores of 85%, 75%, and 80% respectively. Estimated data.

Understanding Chat Moderation in Roblox

Roblox hosts a vast array of games and activities, making it a dynamic community where players interact through chat. Maintaining a safe environment is a priority for the platform, particularly given its young user base. AI plays a pivotal role in this by moderating chat content to prevent harassment, bullying, and inappropriate language.

The Role of AI in Chat Moderation

AI's ability to process large volumes of data in real-time makes it an ideal candidate for chat moderation. In Roblox, AI systems scan messages for prohibited words and phrases, flagging them for further review or automatic removal. This helps maintain a platform that is welcoming and safe for all players.
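The split between flagging for review and automatic removal can be sketched as a simple threshold rule. This is a minimal illustration, not Roblox's actual logic: the `Action` names, the `choose_action` function, and both threshold values are assumptions made for the example.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"
    REMOVE = "remove"


# Hypothetical thresholds, chosen only for illustration.
REVIEW_THRESHOLD = 0.5
REMOVE_THRESHOLD = 0.9


def choose_action(risk_score: float) -> Action:
    """Map a model's risk score in [0, 1] to a moderation action."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= REMOVE_THRESHOLD:
        return Action.REMOVE   # high confidence: remove automatically
    if risk_score >= REVIEW_THRESHOLD:
        return Action.REVIEW   # uncertain: queue for further review
    return Action.ALLOW
```

Keeping a middle "review" band is one way to reserve automatic removal for the cases where the model is most confident.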

How AI Detects Inappropriate Content

Roblox employs machine learning algorithms trained on vast datasets of language patterns. These algorithms identify potentially harmful content by recognizing specific keywords, phrases, and context. The AI continuously learns and adapts, improving its accuracy over time.

Key Features of AI-Driven Moderation:

- Keyword Detection: Identifies and flags inappropriate language or sensitive topics.

- Pattern Recognition: Recognizes harmful patterns like repeated harassment or threats.

- Contextual Analysis: Attempts to understand the context of discussions to differentiate between benign and harmful content.
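The first two features above can be approximated without any machine learning, which helps show what each one is checking for. The sketch below is an assumption-laden toy: `BLOCKED_TERMS` is a placeholder list, and `normalize`, `keyword_hits`, and `is_repetitive` are hypothetical helper names, not part of any Roblox API.

```python
import re
from collections import Counter

# Placeholder terms for illustration; a real list would be far larger.
BLOCKED_TERMS = {"badword", "scamlink"}

# Undo a few common character substitutions used to dodge filters.
_SUBSTITUTIONS = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "@": "a", "$": "s"}
)


def normalize(text: str) -> str:
    """Lowercase the text and reverse simple character substitutions."""
    return text.lower().translate(_SUBSTITUTIONS)


def keyword_hits(message: str) -> set[str]:
    """Keyword detection: return blocked terms that appear as whole words."""
    words = set(re.findall(r"[a-z]+", normalize(message)))
    return words & BLOCKED_TERMS


def is_repetitive(history: list[str], limit: int = 3) -> bool:
    """Pattern recognition: True if any message repeats at least `limit` times."""
    counts = Counter(normalize(m) for m in history)
    return any(n >= limit for n in counts.values())
```

Whole-word matching avoids flagging innocent words that merely contain a blocked term, which is one common source of false positives.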

Real-Time Processing and Its Importance

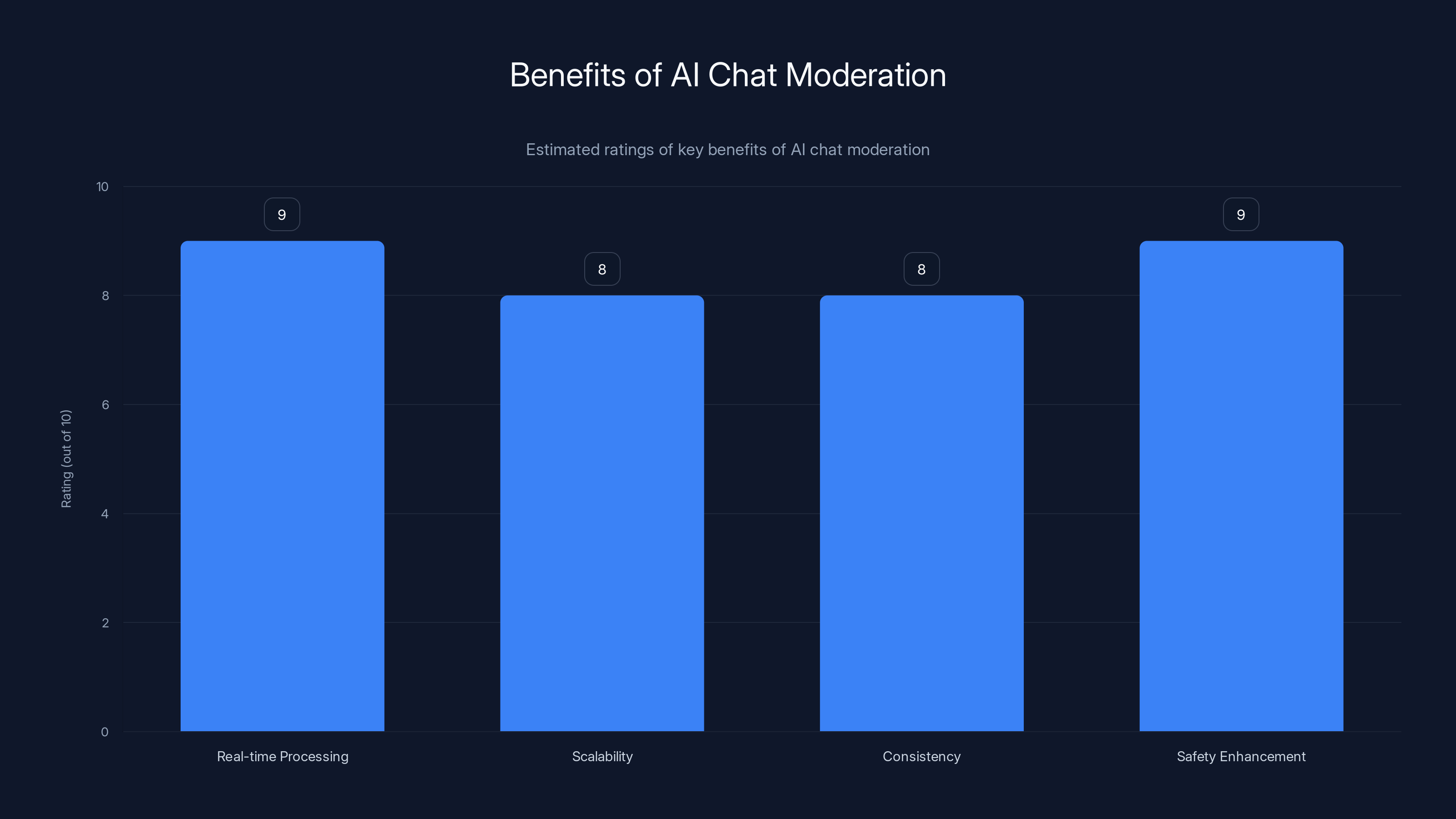

One of the standout features of AI in chat moderation is its ability to process information in real-time. This ensures that inappropriate content is addressed immediately, minimizing the potential harm to users. Real-time processing is crucial in fast-paced environments like Roblox, where conversations can influence gameplay and community dynamics.
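One way to think about real-time moderation is as a latency budget: the check must finish before the message is delivered, and the system needs a defined behavior when it does not. The following sketch assumes a stand-in `classify` coroutine and a hypothetical 100 ms budget; neither reflects how Roblox's pipeline is built.

```python
import asyncio


async def classify(message: str) -> bool:
    """Stand-in for a model call; returns True when the message is flagged."""
    await asyncio.sleep(0.01)  # simulate inference latency
    return "badword" in message.lower()


async def moderate_in_real_time(message: str, budget_s: float = 0.1) -> str:
    """Return 'deliver', 'block', or 'hold' within the latency budget."""
    try:
        flagged = await asyncio.wait_for(classify(message), timeout=budget_s)
    except asyncio.TimeoutError:
        # Fail closed: do not deliver text that could not be checked in time.
        return "hold"
    return "block" if flagged else "deliver"
```

Whether to fail closed (hold the message) or fail open (deliver it unchecked) on a timeout is a design choice; the sketch holds the message because the platform described here prioritizes safety.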

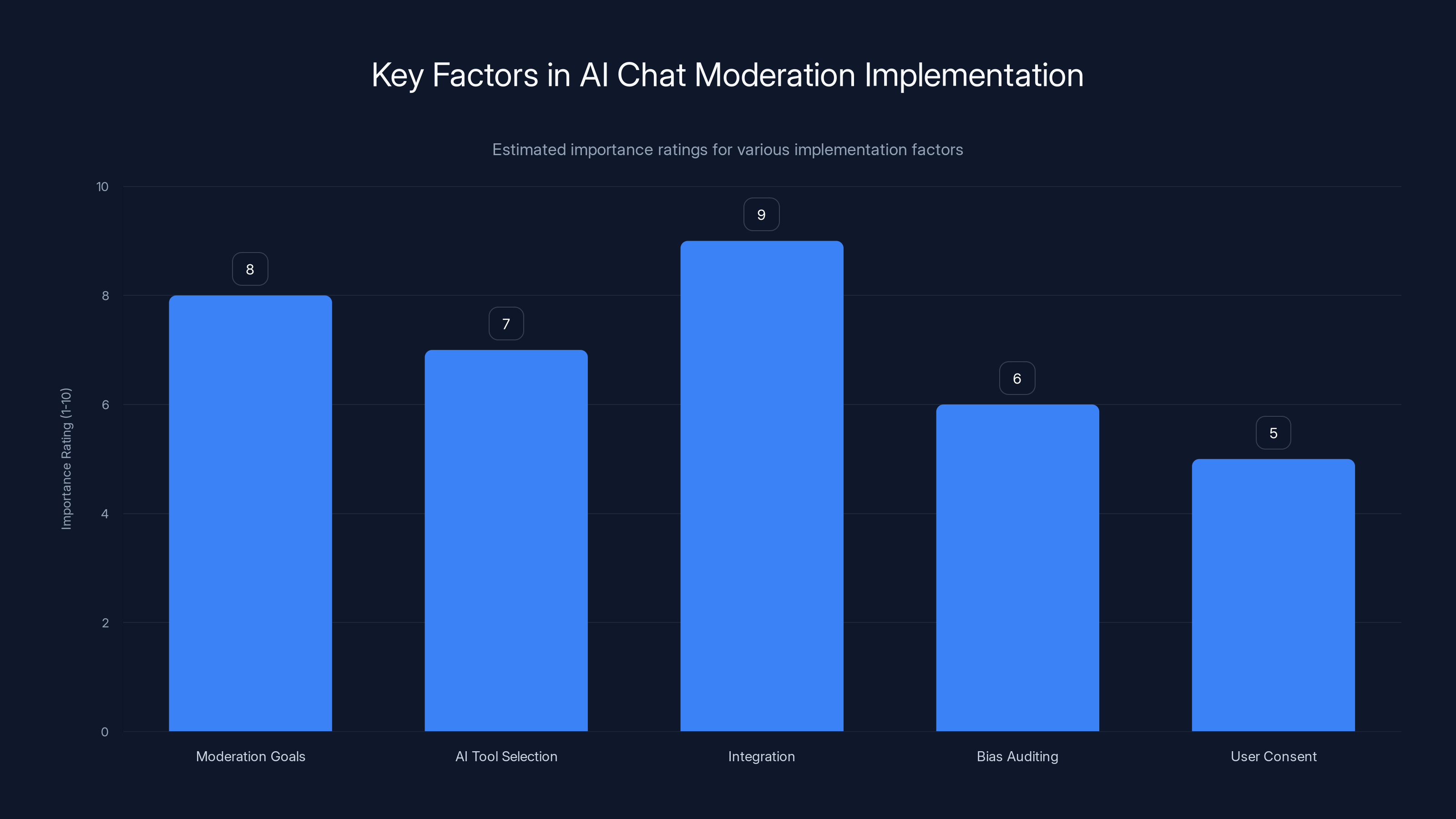

Integration with chat systems is rated highest in importance for AI chat moderation, followed by defining moderation goals. (Estimated data)

Common Pitfalls in AI Chat Moderation

Despite its advantages, AI-driven chat moderation is not without challenges. False positives, over-censorship, and privacy concerns are common issues that developers and users must navigate.

False Positives and Over-Censorship

AI systems can sometimes misinterpret benign content as harmful, leading to unnecessary censorship. This is particularly true in gaming environments where slang, abbreviations, and inside jokes are prevalent.

Solutions to Mitigate False Positives:

- Continuous Training: Regular updates and training of AI models to recognize new slang and cultural nuances.

- User Feedback: Implementing feedback loops where users can report incorrect censorship, helping the AI learn and improve.
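A feedback loop of this kind can start as simple bookkeeping: count how often each trigger is flagged and how often those flags are overturned. The sketch below is illustrative only; the `FeedbackLog` class, its method names, and the default cutoffs are assumptions, not a description of Roblox's tooling.

```python
from collections import defaultdict


class FeedbackLog:
    """Track flags and overturned flags, grouped by the term that triggered them."""

    def __init__(self) -> None:
        self.flags = defaultdict(int)       # times each term triggered a flag
        self.overturned = defaultdict(int)  # flags later reversed after a report

    def record_flag(self, term: str) -> None:
        self.flags[term] += 1

    def record_overturn(self, term: str) -> None:
        self.overturned[term] += 1

    def false_positive_rate(self, term: str) -> float:
        """Share of this term's flags that were overturned."""
        total = self.flags.get(term, 0)
        return self.overturned.get(term, 0) / total if total else 0.0

    def needs_retraining(self, min_flags: int = 5, max_rate: float = 0.3) -> list[str]:
        """Terms flagged often enough, and overturned often enough, to revisit."""
        return sorted(
            term
            for term, count in self.flags.items()
            if count >= min_flags and self.false_positive_rate(term) > max_rate
        )
```

Terms returned by `needs_retraining` are candidates for new training examples, such as gaming slang that the model keeps misreading.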

Privacy Concerns

Balancing effective moderation with user privacy is a delicate task. Users may be concerned about their conversations being constantly monitored by AI.

Addressing Privacy Concerns:

- Transparency: Clearly communicating how AI moderation works and what data is being collected.

- Data Minimization: Ensuring that only necessary data is processed and stored.
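Data minimization can be made concrete by deciding which fields a moderation log keeps. The sketch below assumes a hypothetical `ModerationRecord` that stores a keyed hash of the user ID and the outcome, but not the message text; the field names and the use of HMAC-SHA256 are illustrative choices, not a documented Roblox practice.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class ModerationRecord:
    user_ref: str  # keyed hash of the user ID, not the raw ID
    category: str  # e.g. "harassment"
    action: str    # e.g. "removed"


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a user ID with a keyed hash so logs cannot be trivially joined."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def build_record(user_id: str, message: str, category: str,
                 action: str, secret_key: bytes) -> ModerationRecord:
    """Build a log entry; the message text is deliberately not stored."""
    return ModerationRecord(pseudonymize(user_id, secret_key), category, action)
```

A keyed hash still lets repeat offenders be counted, while anyone without the key cannot map a log entry back to an account.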

Implementing AI Chat Moderation: A Practical Guide

For developers looking to implement AI chat moderation in their platforms, understanding the technical and ethical implications is key.

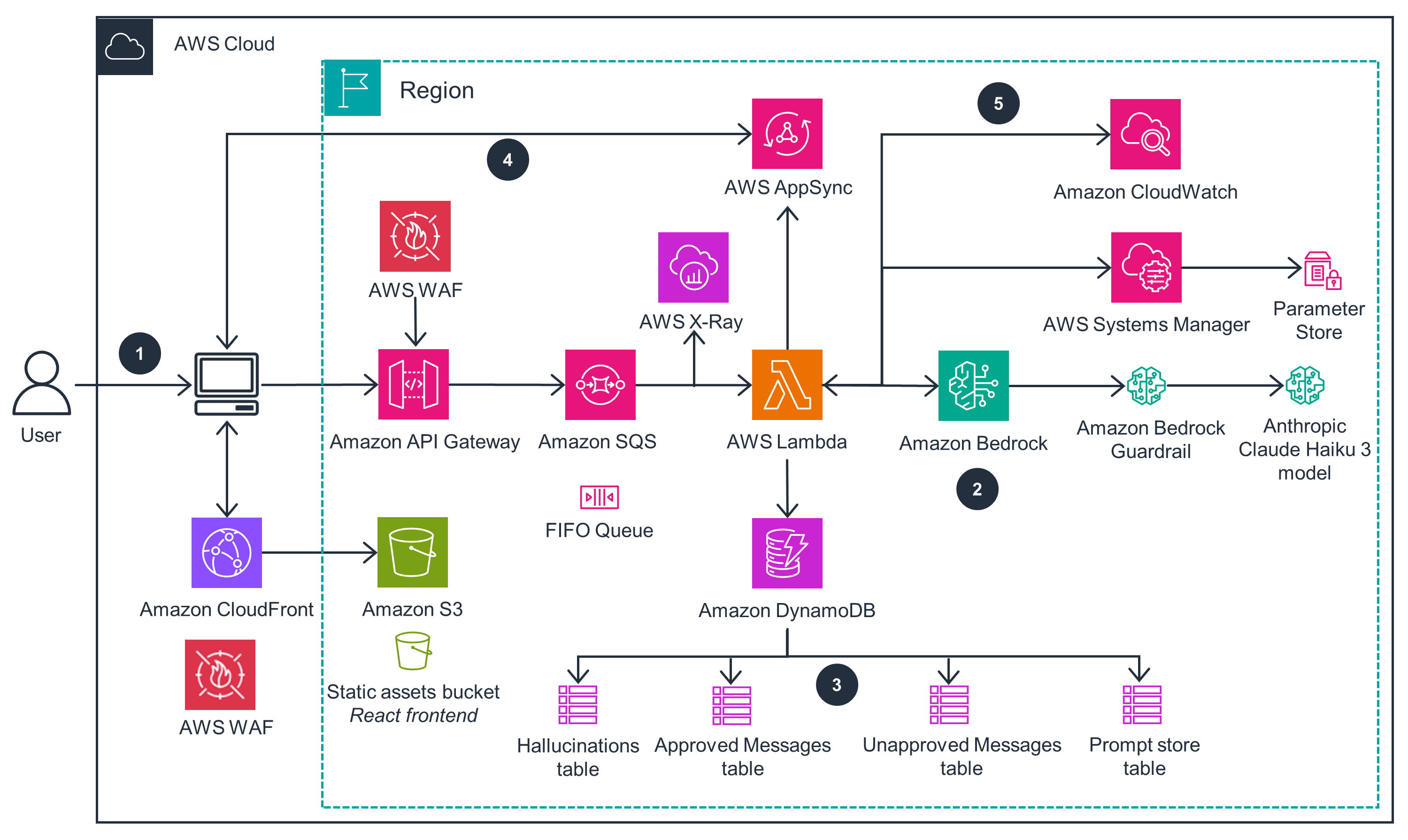

Technical Implementation Steps

- Define Moderation Goals: Clearly outline what types of content need to be moderated.

- Select Appropriate AI Tools: Choose AI models that align with your platform's needs. Consider pre-trained models for faster deployment.

- Integrate AI with Chat Systems: Ensure seamless integration with existing chat infrastructure for real-time processing.

```python
# Sample Python code to integrate an AI moderation model.
# `ai_moderation` and `ModerationModel` are illustrative placeholder names;
# substitute the model wrapper your platform actually uses.
from ai_moderation import ModerationModel

model = ModerationModel.load('pretrained-moderation-model')


def moderate_chat(message):
    """Classify one chat message and return a human-readable status."""
    result = model.predict(message)
    if result['flagged']:
        return 'Message flagged for review'
    return 'Message approved'


chat_message = "Hey, check out this cool hack!"
print(moderate_chat(chat_message))
```

Ethical Considerations

- Bias in AI Models: Regularly audit AI models to identify and correct biases.

- User Consent: Obtain user consent for data processing and provide options to opt-out where feasible.
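A bias audit can begin with a single comparison: how often benign messages are wrongly flagged for one group of users versus another. The sketch below assumes labeled samples of `(flagged, actually_harmful)` pairs and a hypothetical acceptable gap of 0.1; the function names and the threshold are illustrative.

```python
def false_positive_rate(samples: list[tuple[bool, bool]]) -> float:
    """samples holds (flagged, actually_harmful) pairs for one group."""
    benign_flags = [flagged for flagged, harmful in samples if not harmful]
    return sum(benign_flags) / len(benign_flags) if benign_flags else 0.0


def audit_groups(samples_by_group: dict[str, list[tuple[bool, bool]]],
                 max_gap: float = 0.1) -> tuple[dict[str, float], bool]:
    """Return per-group false-positive rates and whether the gap is too wide."""
    rates = {g: false_positive_rate(s) for g, s in samples_by_group.items()}
    gap = max(rates.values()) - min(rates.values()) if rates else 0.0
    return rates, gap > max_gap
```

A wide gap does not prove the model is biased, but it does show where a closer manual review of flagged messages is warranted.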

AI chat moderation is rated highly for real-time processing and safety enhancement, with similar ratings for scalability and consistency. (Estimated data)

Future Trends in AI Chat Moderation

As technology advances, the future of AI chat moderation looks promising, with several exciting developments on the horizon.

Enhanced Contextual Understanding

Future AI models are expected to have better contextual understanding, allowing them to distinguish between playful banter and genuine threats more effectively.
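One simple ingredient of contextual understanding is giving the model the recent conversation rather than a single message. The sketch below is an assumption made for illustration: the `ConversationWindow` class and its "speaker: text" input format are hypothetical and do not describe any specific model.

```python
from collections import deque


class ConversationWindow:
    """Keep the most recent messages so a classifier can see context."""

    def __init__(self, size: int = 3) -> None:
        # A bounded deque drops the oldest message automatically.
        self.messages = deque(maxlen=size)

    def add(self, speaker: str, text: str) -> None:
        self.messages.append((speaker, text))

    def as_model_input(self) -> str:
        """Join the window into one string, oldest message first."""
        return "\n".join(f"{speaker}: {text}" for speaker, text in self.messages)
```

With the preceding turns included, "I'm going to destroy you" after a message about a boss fight reads very differently from the same line sent without any game context.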

Integration with Multi-Modal Systems

Combining text analysis with voice and video could lead to comprehensive moderation systems that offer a holistic view of user interactions.

Personalization and User Profiling

AI could adapt moderation techniques based on user profiles, offering personalized experiences while maintaining safety protocols.

Conclusion

AI-driven chat moderation in Roblox represents a significant leap forward in maintaining a safe online environment. While challenges such as privacy concerns and false positives remain, continuous advancements in AI promise to address these issues. By understanding the technical and ethical implications, developers can implement effective moderation systems that balance safety and user freedom.

FAQ

What is AI chat moderation?

AI chat moderation refers to the use of artificial intelligence to monitor and manage user-generated chat content, ensuring it complies with community guidelines and is free from inappropriate or harmful language.

How does AI detect inappropriate content in chats?

AI systems use machine learning algorithms to identify harmful content by analyzing language patterns, keywords, and context. These systems are trained on large datasets to improve accuracy and adapt to new language trends.

What are the benefits of using AI for chat moderation?

AI offers real-time processing, scalability, and consistency in moderating large volumes of chat data. It helps create a safer online environment by quickly identifying and addressing inappropriate content.

How can false positives be reduced in AI chat moderation?

To reduce false positives, AI models should be regularly updated and trained with new language data. Implementing user feedback loops can also help AI systems learn from mistakes and improve over time.

What are the privacy concerns associated with AI chat moderation?

Privacy concerns arise from the constant monitoring of user conversations. Addressing these concerns involves transparent communication about data collection practices, obtaining user consent, and minimizing data storage.

How will AI chat moderation evolve in the future?

Future advancements in AI chat moderation may include improved contextual understanding, integration with multi-modal systems, and personalized moderation techniques based on user profiles.

Key Takeaways

- AI provides real-time chat censorship, enhancing user safety.

- Balancing privacy with moderation is a significant challenge.

- False positives in AI moderation can lead to over-censorship.

- Future AI models are expected to offer better contextual understanding.

- Developers must consider ethical implications of AI moderation.

- Roblox's large user base necessitates effective chat moderation.

- Personalization in AI moderation could enhance user experience.

- Continuous AI advancements promise to address current limitations.
