Intel's ZAM Memory: A New Challenger to Nvidia's AI Dominance [2025]

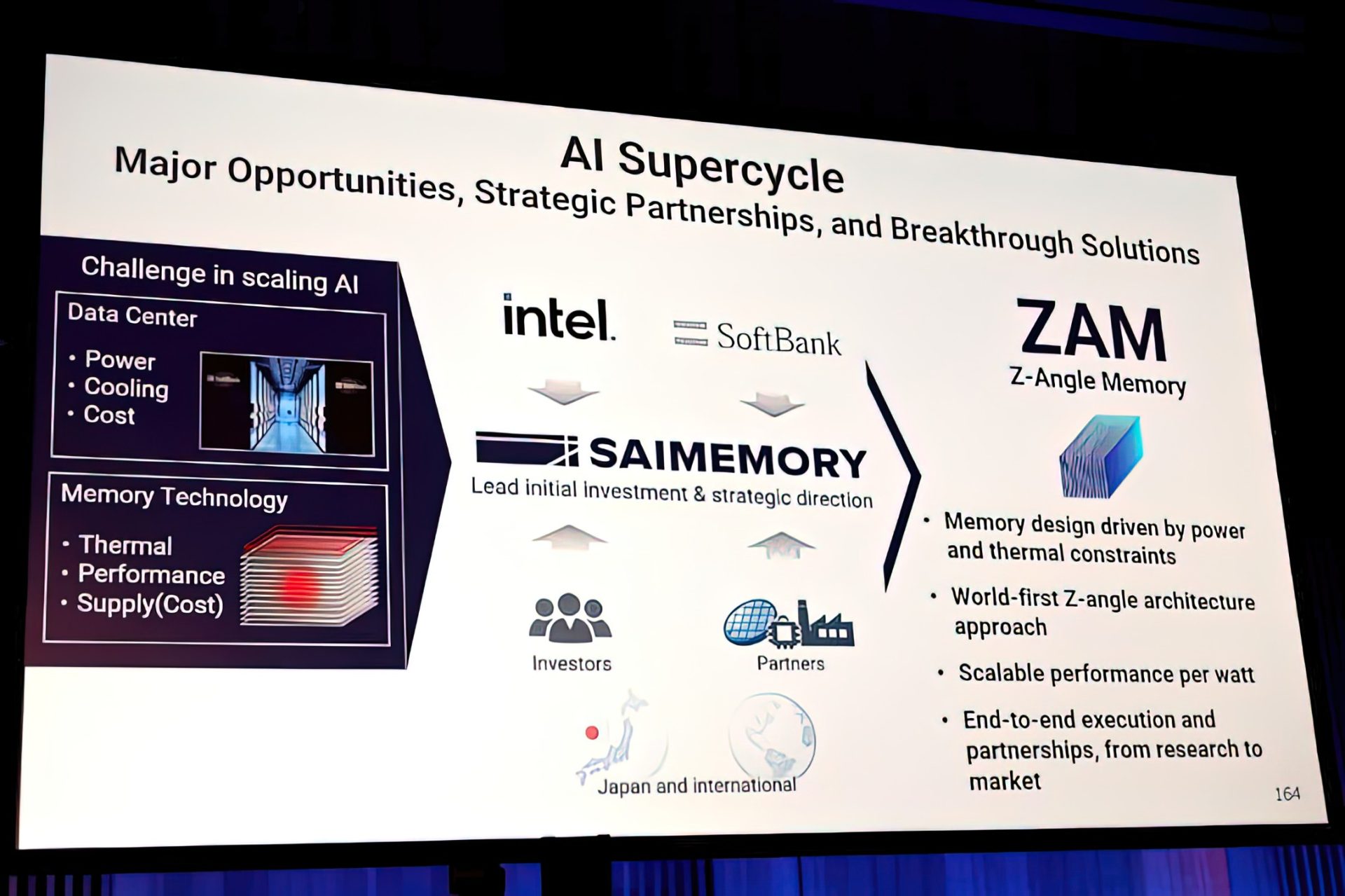

Intel has entered the high-performance computing memory race with ZAM, a stacked memory technology aimed at challenging Nvidia's dominance in AI hardware. ZAM uses a nine-layer stack architecture that promises significant gains in bandwidth and efficiency. In this article, we'll examine ZAM's technical design, its potential impact on the AI landscape, and practical considerations for using it in real-world applications.

TL;DR

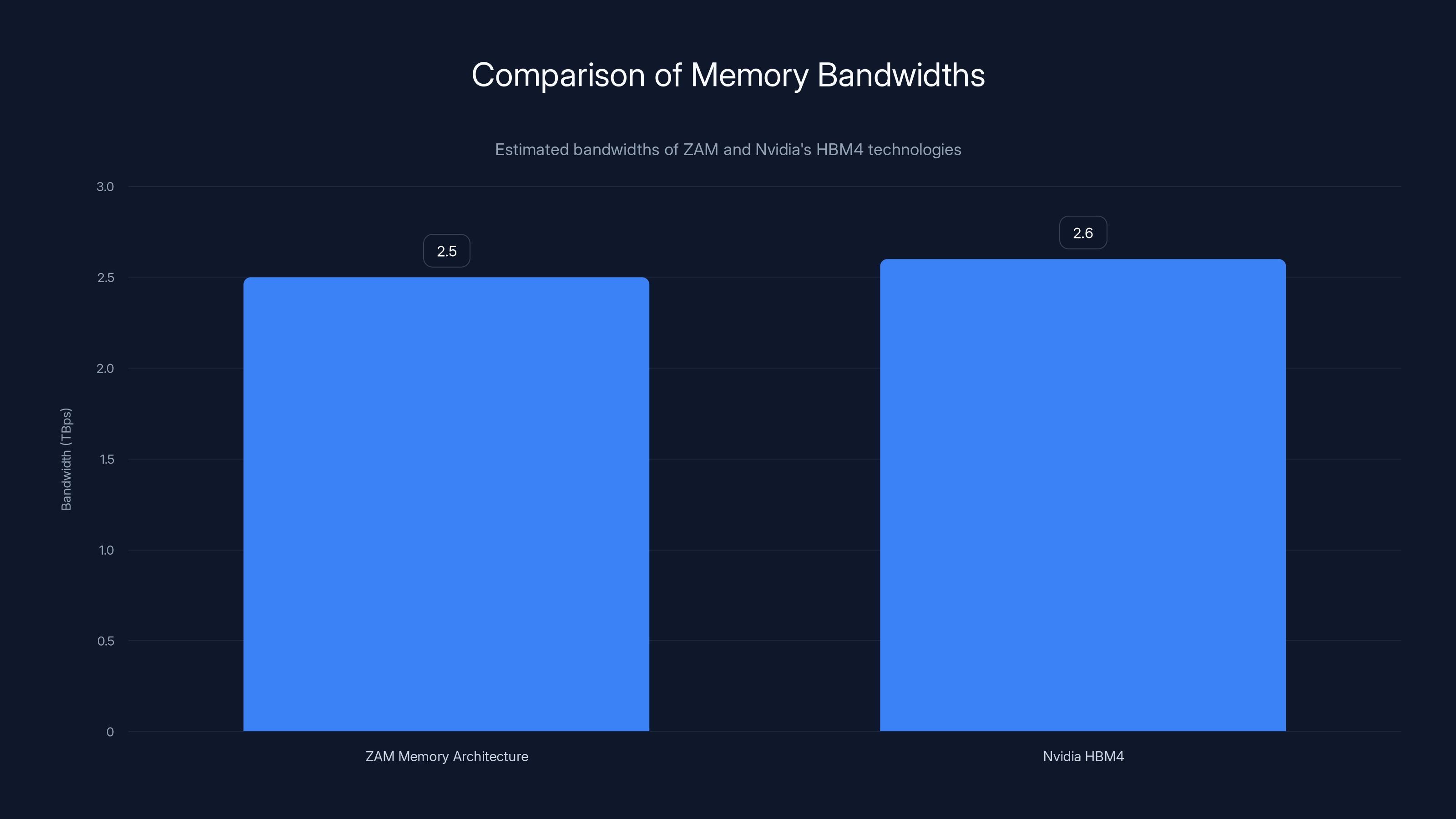

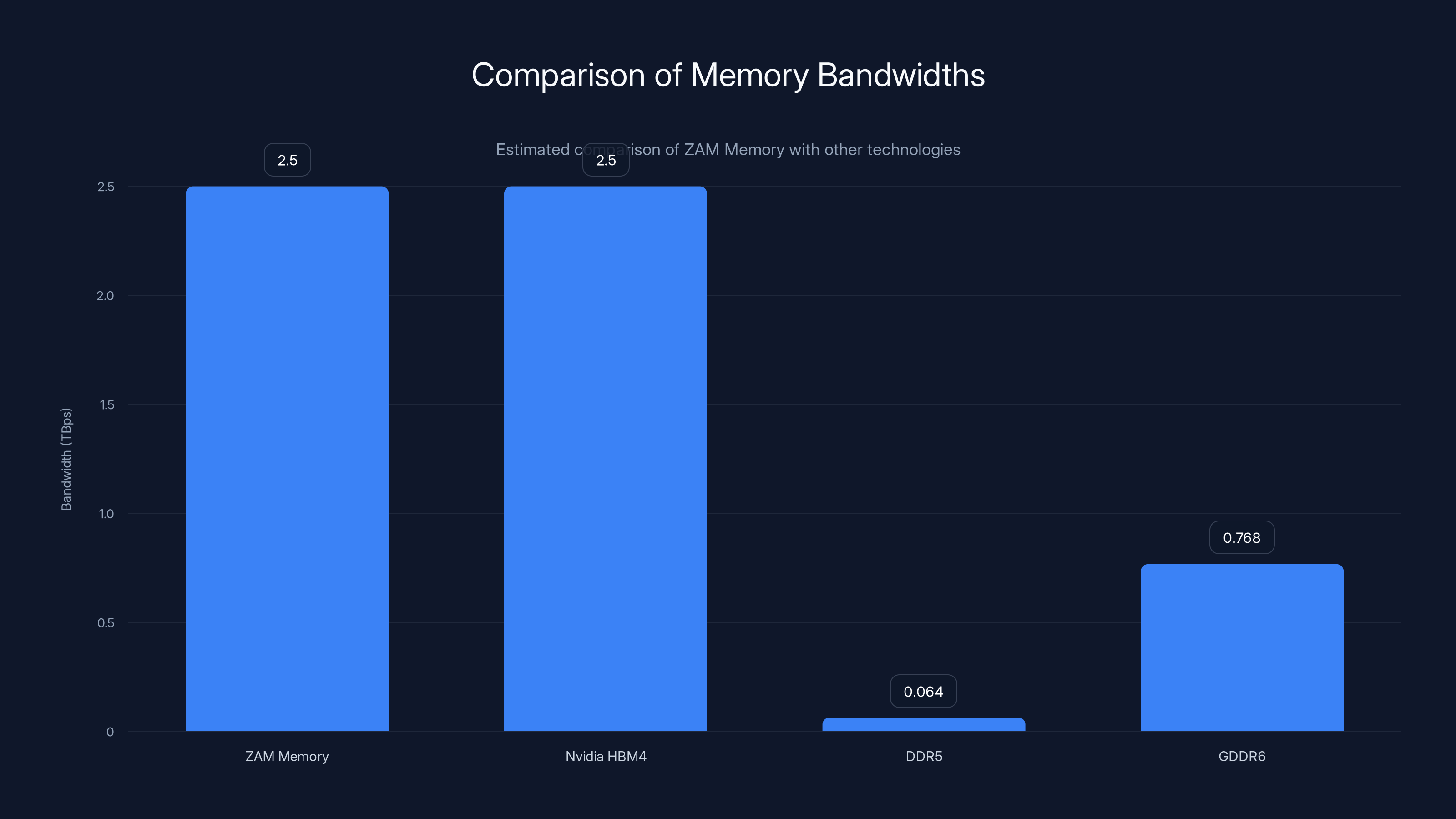

- ZAM Memory Architecture: Features nine stacked layers, offering up to 2.5 TBps of bandwidth.

- AI Performance Boost: Aims to match the bandwidth of HBM4, the memory used in Nvidia's next-generation AI accelerators, with better efficiency.

- Implementation Insights: Key strategies for integrating ZAM in AI systems.

- Challenges & Solutions: Overcoming potential pitfalls in deploying ZAM.

- Future Trends: Anticipating the impact of ZAM on the AI memory market.

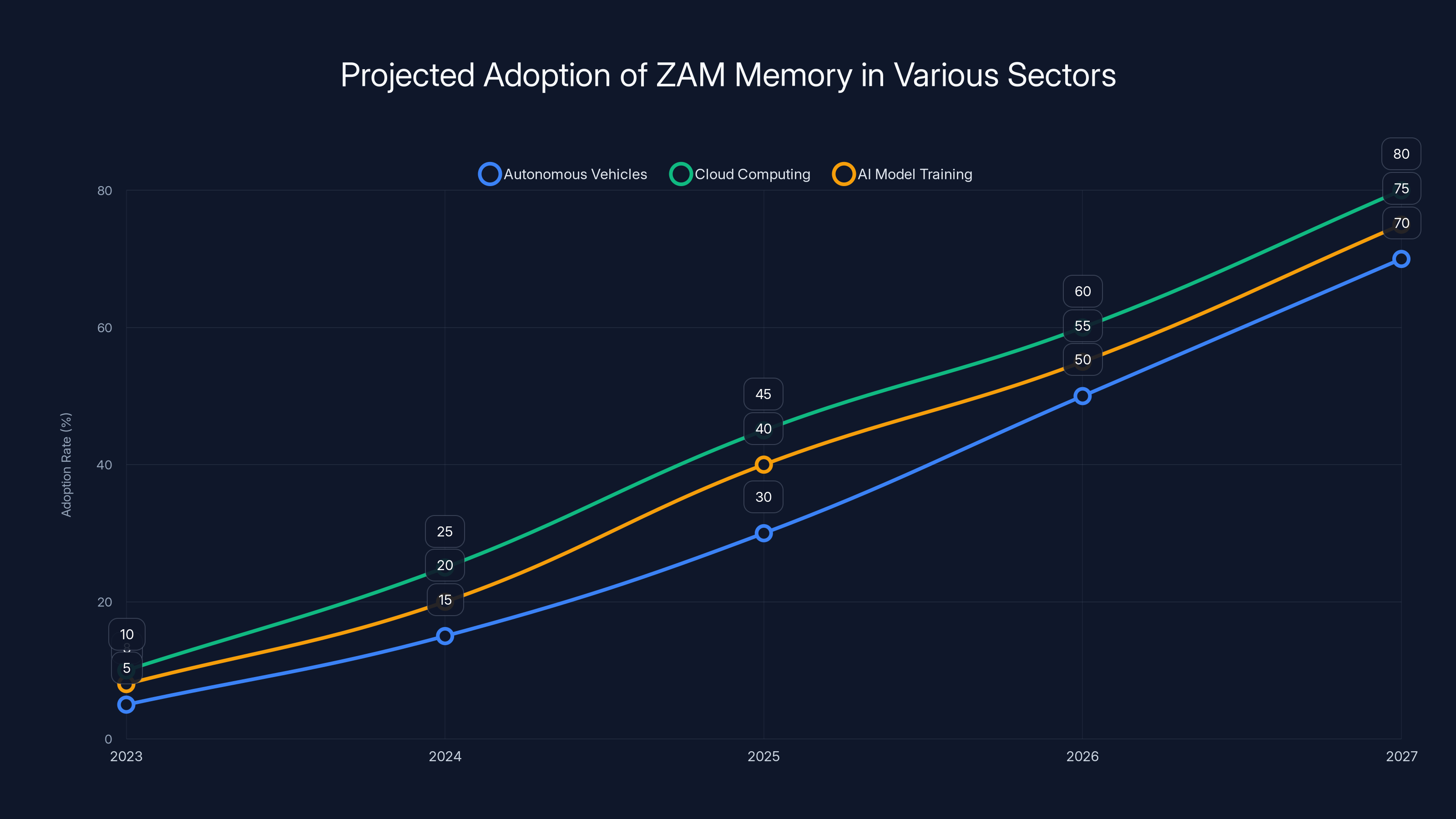

Projected ZAM adoption by sector (estimated data): cloud computing and autonomous vehicles lead, with significant growth expected by 2027.

Understanding ZAM Memory Technology

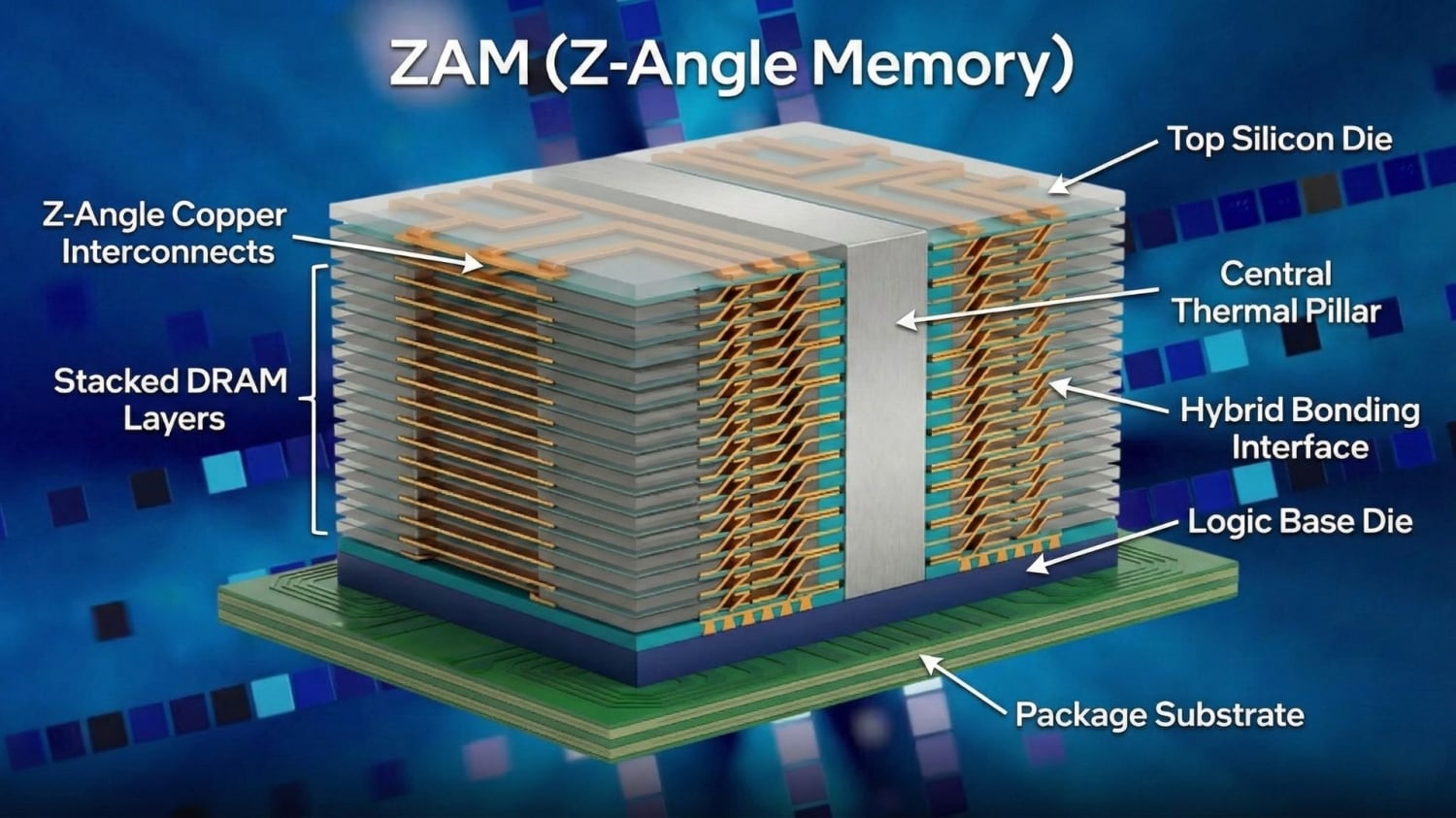

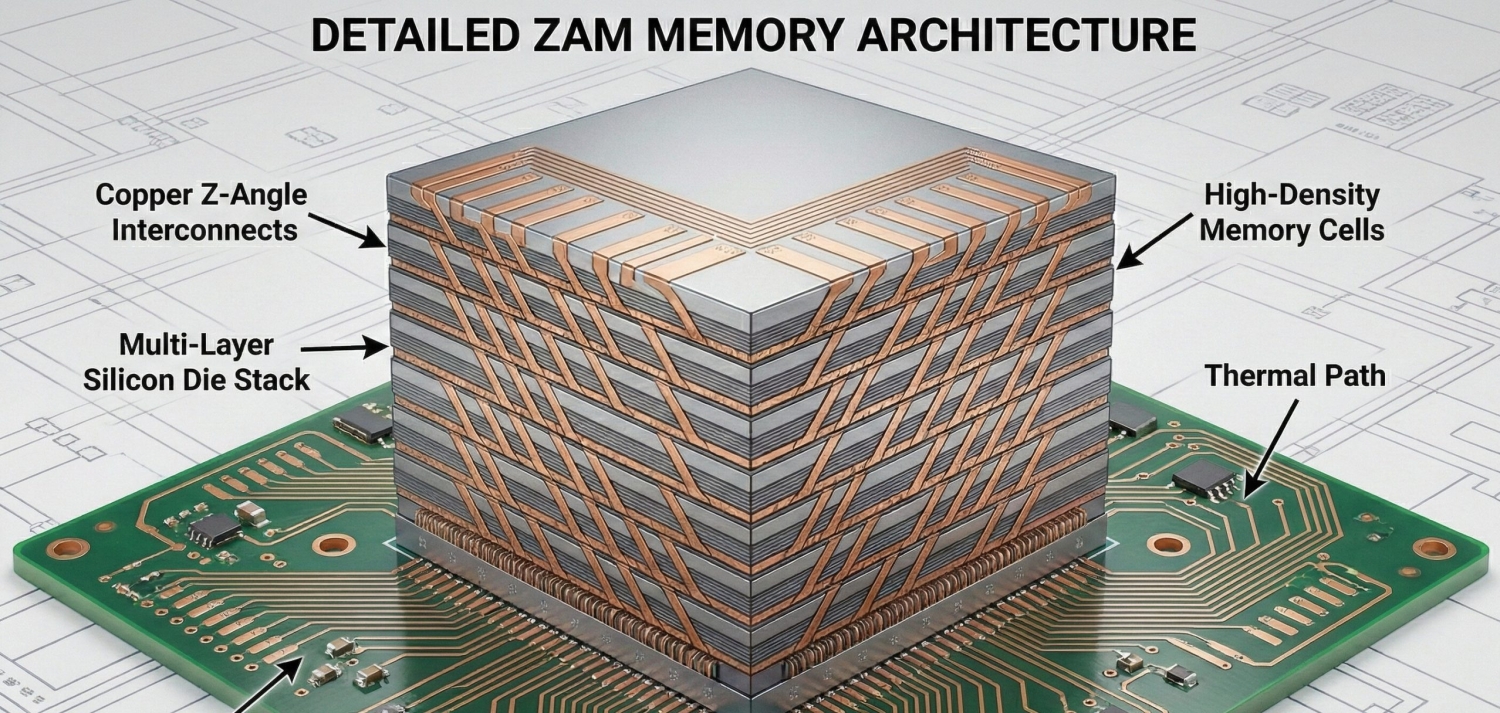

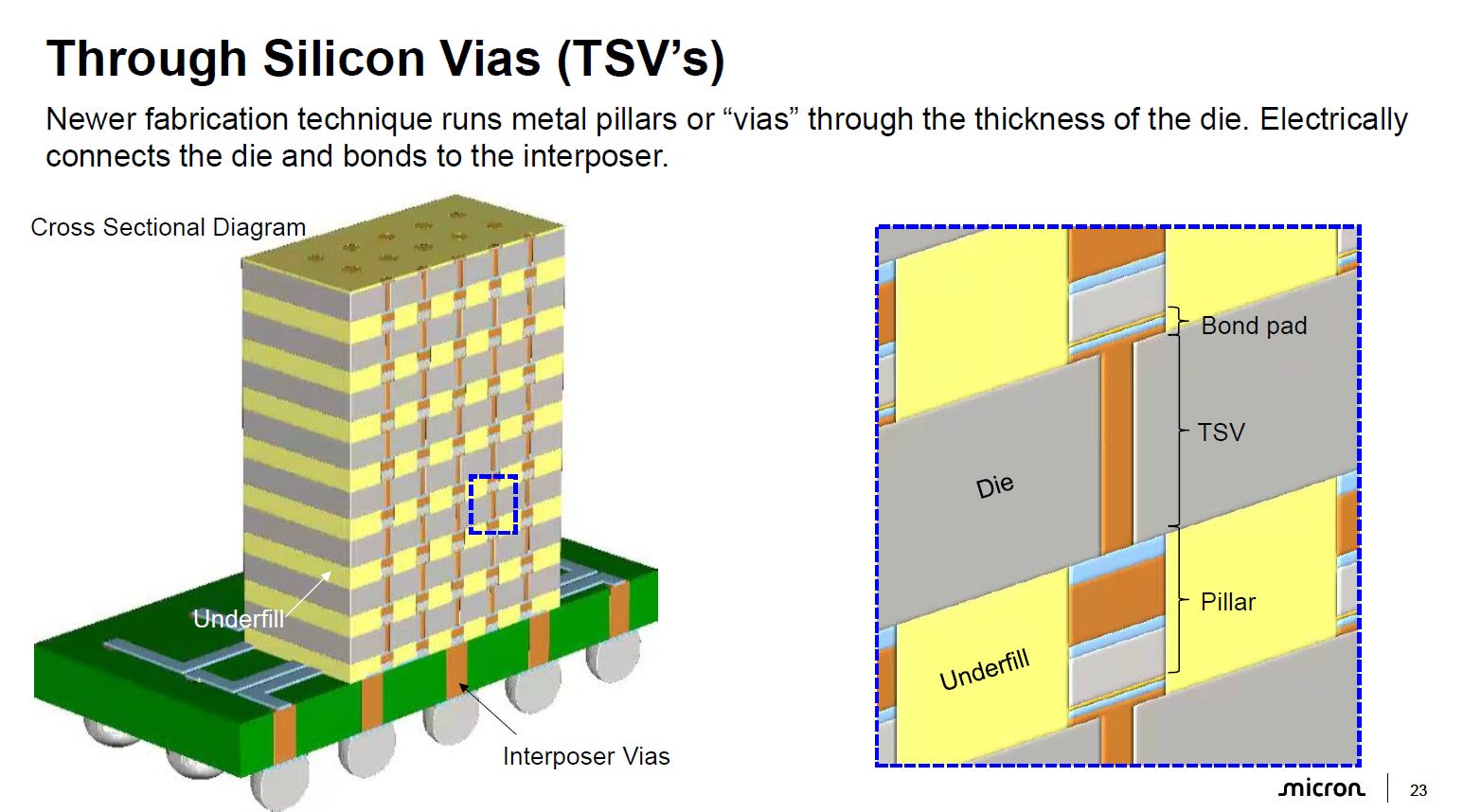

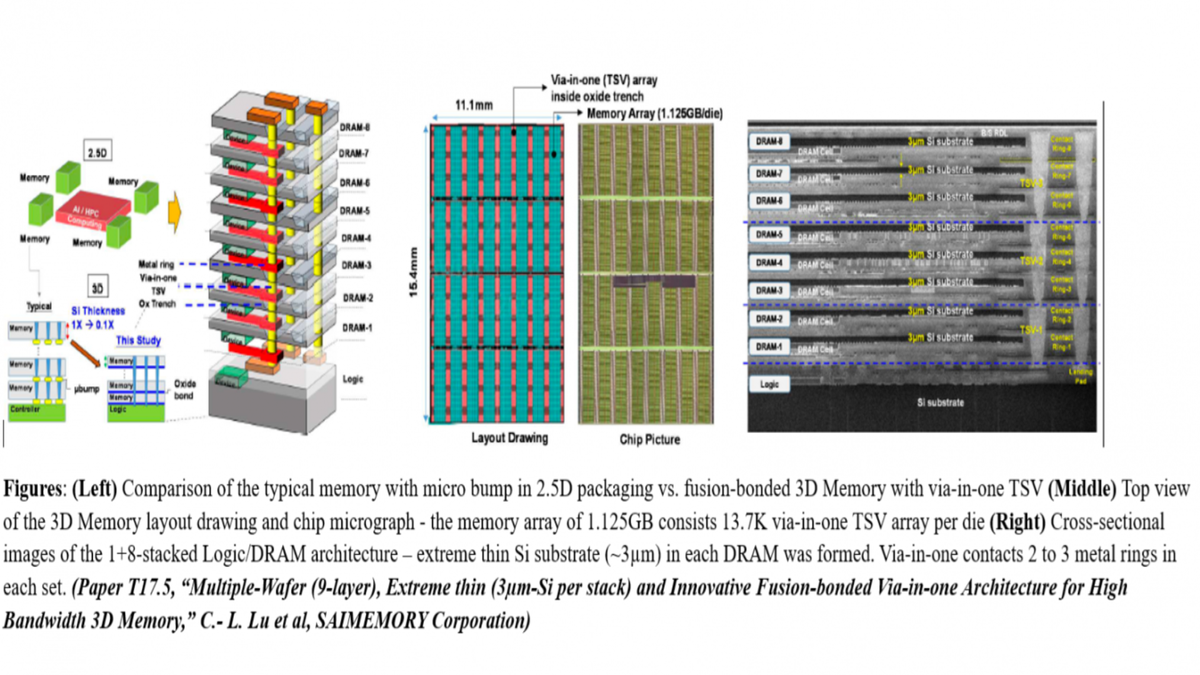

Intel's ZAM memory, short for "Zenith Array Memory," marks a significant leap forward in memory technology. It stacks nine functional memory layers vertically, a design that delivers data throughput of up to 2.5 TBps, in the same range as HBM4. Bandwidth at this level is crucial for AI workloads, which are often limited by how quickly accelerators can read model weights and activations from memory rather than by raw compute.
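To put the 2.5 TBps figure in context, peak bandwidth for a stacked-memory interface is the interface width in bits times the per-pin data rate, divided by 8. The sketch uses the JEDEC HBM4 baseline (2048-bit interface at up to 8 Gb/s per pin) for comparison. ZAM's interface width is not given in the source, so `HYPOTHETICAL_ZAM_BITS` is an assumption, as are the helper names.

```python
# Peak bandwidth = interface width (bits) x per-pin data rate (Gb/s) / 8 bits per byte.
# HBM4 baseline: 2048-bit interface at up to 8 Gb/s per pin (JEDEC HBM4).
# ZAM's interface width is an ASSUMPTION here, not a published figure.


def peak_bandwidth_tbps(interface_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in terabytes per second (decimal units, 1 TB = 1e12 bytes)."""
    return interface_bits * pin_rate_gbps * 1e9 / 8 / 1e12


def required_pin_rate_gbps(target_tbps: float, interface_bits: int) -> float:
    """Per-pin data rate (Gb/s) needed to reach target_tbps on a given interface width."""
    return target_tbps * 1e12 * 8 / interface_bits / 1e9


HYPOTHETICAL_ZAM_BITS = 2048  # hypothetical width, chosen only for comparison

hbm4_tbps = peak_bandwidth_tbps(2048, 8.0)
zam_pin_rate = required_pin_rate_gbps(2.5, HYPOTHETICAL_ZAM_BITS)

print(f"HBM4 baseline: {hbm4_tbps:.3f} TBps")
print(f"Pin rate for 2.5 TBps over {HYPOTHETICAL_ZAM_BITS} bits: {zam_pin_rate:.2f} Gb/s")
```

Under this assumption, 2.5 TBps would require roughly 9.8 Gb/s per pin on a 2048-bit interface, or a wider interface at lower pin rates.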

The Nine-Layer Stack

ZAM's nine-layer stack is engineered to maximize data flow efficiency. Each layer is designed to minimize latency and distribute bandwidth evenly. The goal is not simply to stack more layers but to ensure every layer works in concert with the others.

- Layer Integration: The layers are joined with advanced interconnect technologies that reduce electrical resistance and improve signal integrity.

- Thermal Management: More layers mean more heat in the same footprint. ZAM uses advanced heat-dissipation materials and designs to manage it.
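Why does layer count make heat harder to manage? The following one-dimensional, steady-state model is a rough illustration, not Intel's thermal design. It assumes the stack is cooled only from the top, every layer dissipates the same power, and every die-to-die interface has the same thermal resistance. The function name `layer_temperatures` and all numeric parameters are hypothetical.

```python
# 1-D steady-state thermal model of a die stack cooled from the top.
# ASSUMPTIONS (hypothetical, not Intel data): equal power per layer and
# equal thermal resistance at every die-to-die interface.


def layer_temperatures(n_layers: int, power_per_layer_w: float,
                       r_interface_k_per_w: float, r_top_k_per_w: float,
                       t_sink_c: float) -> list[float]:
    """Return temperatures in deg C from the bottom layer (index 0) to the top layer.

    Heat from layer i must cross every interface above it, so the interface
    between layer j and layer j + 1 carries the combined power of layers 0..j.
    """
    if n_layers < 1:
        raise ValueError("need at least one layer")
    temps = [0.0] * n_layers
    total_power = n_layers * power_per_layer_w
    # The top layer sits one thermal resistance (die to heat sink) above the sink.
    temps[-1] = t_sink_c + total_power * r_top_k_per_w
    # Walk downward: each interface adds (power flowing through it) x resistance.
    for j in range(n_layers - 2, -1, -1):
        power_through = (j + 1) * power_per_layer_w
        temps[j] = temps[j + 1] + power_through * r_interface_k_per_w
    return temps


# Hypothetical parameters: 1.5 W per layer, 0.5 K/W per interface,
# 0.2 K/W from the top die to the sink, 45 C heat-sink temperature.
results = {n: layer_temperatures(n, 1.5, 0.5, 0.2, 45.0) for n in (4, 9)}
for n, temps in results.items():
    print(f"{n} layers: top {temps[-1]:.1f} C, bottom {temps[0]:.1f} C")
```

With these made-up parameters, the bottom layer reaches about 50.7 C in a four-layer stack but about 74.7 C with nine layers. Each added interface carries more accumulated heat, so the temperature rise grows faster than linearly with layer count.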

Bandwidth and Efficiency

The headline feature of ZAM is bandwidth on par with HBM4, the memory used in Nvidia's next-generation AI accelerators. ZAM aims to go a step further by improving overall system efficiency through:

- Data Path Optimization: Reducing the number of hops data must make between layers.

- Power Management: Power gating, so that only active memory sections draw significant power while idle sections are switched off.

ZAM's architecture is intended to let AI processors access data faster and with less power, a critical factor in data centers, where energy efficiency is paramount.
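The two efficiency levers above can be illustrated with a toy model in which gated sections draw only residual leakage and each layer-to-layer hop adds a fixed latency. This is a sketch under stated assumptions, not a description of ZAM's circuitry: the `StackModel` class, its fields, and every number are hypothetical.

```python
# Toy model of power gating and data-path hops. All names and numbers are HYPOTHETICAL.
from dataclasses import dataclass


@dataclass
class StackModel:
    sections: int            # independently gateable memory sections
    active_power_w: float    # power of a section that is serving requests
    gated_power_w: float     # residual leakage of a power-gated section
    hop_latency_ns: float    # latency added per layer-to-layer hop
    base_latency_ns: float   # fixed access latency

    def power(self, active_sections: int) -> float:
        """Active sections draw full power; gated sections draw only leakage."""
        if not 0 <= active_sections <= self.sections:
            raise ValueError("active_sections out of range")
        idle = self.sections - active_sections
        return active_sections * self.active_power_w + idle * self.gated_power_w

    def latency(self, hops: int) -> float:
        """Fewer hops between layers means a shorter data path."""
        return self.base_latency_ns + hops * self.hop_latency_ns


m = StackModel(sections=9, active_power_w=1.2, gated_power_w=0.1,
               hop_latency_ns=0.5, base_latency_ns=20.0)
print(f"All 9 sections active: {m.power(9):.1f} W; 3 active, 6 gated: {m.power(3):.1f} W")
print(f"8 hops: {m.latency(8):.1f} ns; 2 hops: {m.latency(2):.1f} ns")
```

In this model, savings from gating scale with the number of idle sections, which is why gating pays off most under bursty or partial workloads.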

Bandwidth comparison (estimated data): ZAM offers up to 2.5 TBps, close to the roughly 2.6 TBps estimated for the HBM4 memory in Nvidia's accelerators.

Practical Implementation of ZAM Memory

Deploying ZAM memory in AI systems requires a strategic approach. Here are some key considerations and best practices for effectively integrating ZAM technology:

System Architecture Compatibility

Before integrating ZAM, assess your current system architecture. Compatibility with your platform and with your processors' memory interfaces is crucial.

- Interface Adaptation: Ensure that your system's memory controllers can handle the high throughput of ZAM.

- Firmware Updates: Check for firmware updates that optimize your system for ZAM's architecture.
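The two checks above can be automated as a pre-integration script. This is a minimal sketch: `PlatformInfo`, `preflight`, and the thresholds in the usage example are hypothetical. Real values would come from your platform vendor's and Intel's documentation, not from this code.

```python
# Hypothetical pre-integration checklist. Field names and thresholds are
# illustrative ASSUMPTIONS, not values from Intel documentation.
from dataclasses import dataclass


@dataclass
class PlatformInfo:
    controller_peak_tbps: float   # bandwidth the memory controller can sustain
    firmware_version: tuple       # e.g. (1, 4, 0)


def preflight(platform: PlatformInfo, memory_peak_tbps: float,
              min_firmware: tuple) -> list[str]:
    """Return human-readable blockers; an empty list means no blockers were found."""
    problems = []
    if platform.controller_peak_tbps < memory_peak_tbps:
        problems.append(
            f"controller sustains {platform.controller_peak_tbps} TBps, "
            f"memory can deliver {memory_peak_tbps} TBps"
        )
    # Tuples compare element by element, so (1, 10, 0) > (1, 9, 2) as intended.
    if platform.firmware_version < min_firmware:
        problems.append(
            f"firmware {platform.firmware_version} is older than required {min_firmware}"
        )
    return problems


report = preflight(PlatformInfo(controller_peak_tbps=2.0, firmware_version=(1, 4, 0)),
                   memory_peak_tbps=2.5, min_firmware=(1, 5, 0))
for line in report:
    print("BLOCKER:", line)
```

Comparing versions as integer tuples avoids the classic string-comparison bug where "1.10" sorts before "1.9".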

Application-Specific Configurations

ZAM's capabilities can be tailored to specific applications. Whether you're running deep learning models, real-time analytics, or complex simulations, configuring ZAM to suit your workload is key.

- Memory Partitioning: Allocate memory layers to different tasks to maximize performance.

- Load Balancing: Use load balancing algorithms to distribute data evenly across memory layers.
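Partitioning and load balancing are commonly implemented together. Each workload gets its own set of memory units, and addresses are interleaved across those units so that sequential traffic spreads evenly. In today's stacked memory such as HBM, the software-visible units are typically channels rather than individual layers. This sketch assumes ZAM exposes something similar. The channel counts, the 256-byte `GRANULE`, and the simple modulo mapping are hypothetical; real memory controllers often use their own hashed mappings.

```python
# Sketch of partitioning plus address interleaving across memory channels.
# ASSUMPTIONS: channel-level control, 256-byte interleave granularity,
# and a simple modulo mapping (real controllers often hash address bits).
from collections import Counter

GRANULE = 256  # bytes per interleave unit (hypothetical)


def channel_for(address: int, channels: list[int]) -> int:
    """Map a byte address to one of the given channels, round-robin by granule."""
    return channels[(address // GRANULE) % len(channels)]


# Partitioning: give each workload its own disjoint set of channels.
partitions = {
    "training": list(range(0, 6)),   # 6 channels for the bandwidth-heavy job
    "inference": list(range(6, 8)),  # 2 channels for latency-sensitive serving
}

# Load balancing: a sequential 3 MiB stream spreads evenly over its partition.
usage = Counter(
    channel_for(addr, partitions["training"]) for addr in range(0, 3 << 20, GRANULE)
)
print(sorted(usage.items()))
```

Keeping partitions disjoint prevents a bandwidth-heavy job from starving a latency-sensitive one. Interleaving within each partition keeps any single channel from becoming a hotspot.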

Overcoming Challenges in ZAM Deployment

As with any cutting-edge technology, implementing ZAM memory comes with its share of challenges. Here are common pitfalls and solutions:

Thermal Constraints

The dense stacking of memory layers can lead to thermal challenges. Effective thermal management strategies include:

- Active Cooling Systems: Integrate active cooling solutions such as liquid cooling to manage heat dissipation.

- Thermal Simulation Tools: Utilize simulation tools to predict thermal behavior and adjust configurations accordingly.
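Dedicated thermal simulation tools model the package in far more detail. The core idea can still be shown with a lumped RC model, which treats the whole stack as a single thermal mass with one resistance to ambient, and then sweeps the power level to see which configurations stay under a temperature limit. The `simulate` function, the resistance and capacitance values, and the 85 C limit are hypothetical.

```python
# Minimal lumped RC thermal simulation (explicit Euler). The whole stack is one
# thermal mass; R, C, power levels, and the limit are HYPOTHETICAL values.


def simulate(power_w, r_k_per_w, c_j_per_k, t_ambient_c, t_limit_c,
             dt_s=0.01, duration_s=60.0):
    """Integrate dT/dt = (P - (T - T_amb) / R) / C, starting from T = T_amb.

    Returns (time in seconds when the limit is first reached, or None; final temperature).
    """
    if dt_s >= r_k_per_w * c_j_per_k:
        raise ValueError("time step too large for a stable, non-oscillating Euler step")
    temp = t_ambient_c
    for step in range(1, round(duration_s / dt_s) + 1):
        temp += dt_s * (power_w - (temp - t_ambient_c) / r_k_per_w) / c_j_per_k
        if temp >= t_limit_c:
            return step * dt_s, temp
    return None, temp


# Sweep candidate power budgets (R = 2.5 K/W, C = 4 J/K, 45 C ambient, 85 C limit).
for watts in (15.0, 20.0):
    t_cross, t_end = simulate(watts, 2.5, 4.0, 45.0, 85.0)
    status = f"hits limit at {t_cross:.1f} s" if t_cross is not None else "stays below limit"
    print(f"{watts:.0f} W: {status} (temperature {t_end:.1f} C)")
```

The steady-state temperature is ambient plus power times resistance (82.5 C at 15 W, 95 C at 20 W here). A sweep like this shows which power budget or cooling upgrade keeps the stack within its limit.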

Data Integrity and Error Handling

High data throughput increases the risk of data corruption. Implement robust error correction codes (ECC) and data integrity checks to mitigate this risk.

- ECC Implementation: Use ECC algorithms to detect and correct errors in real time.

- Redundancy Strategies: Employ redundancy techniques to ensure data accuracy and recoverability.
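To show what "detect and correct" means in practice, here is the textbook Hamming(7,4) code, which corrects any single flipped bit in a 7-bit codeword. This is an illustration only. Production memory ECC uses other codes, such as SECDED over wider words or symbol-based codes, and the source does not describe ZAM's ECC scheme. The function names are hypothetical.

```python
# Textbook Hamming(7,4) single-error correction (illustration only; not ZAM's ECC).
# Codeword positions 1..7 hold: p1 p2 d1 p3 d2 d3 d4.


def encode(nibble: int) -> list[int]:
    """Encode 4 data bits into a 7-bit codeword."""
    d1, d2, d3, d4 = [(nibble >> i) & 1 for i in (3, 2, 1, 0)]
    p1 = d1 ^ d2 ^ d4  # covers positions 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def decode(code: list[int]) -> tuple[int, int]:
    """Return (data nibble, 1-based position of the corrected bit, or 0 if none)."""
    c = list(code)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]  # parity over positions 1, 3, 5, 7
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]  # parity over positions 2, 3, 6, 7
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]  # parity over positions 4, 5, 6, 7
    syndrome = s1 + 2 * s2 + 4 * s3  # equals the position of a single flipped bit
    if syndrome:
        c[syndrome - 1] ^= 1
    nibble = (c[2] << 3) | (c[4] << 2) | (c[5] << 1) | c[6]
    return nibble, syndrome


word = encode(0b1011)
word[4] ^= 1  # simulate a single-bit flip at position 5
data, pos = decode(word)
print(f"recovered {data:04b}, corrected bit {pos}")
```

The syndrome bits spell out, in binary, the position of the corrupted bit, so correction is a single bit flip with no retransmission.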

Bandwidth comparison (estimated data): ZAM and the HBM4 memory in Nvidia's accelerators both deliver roughly 2.5 TBps, well above DDR5 and GDDR6.

Future Trends and Recommendations

The introduction of ZAM memory is poised to reshape the AI memory landscape. Here are some anticipated trends and strategic recommendations:

Industry Adoption and Impact

ZAM is expected to gain traction across various sectors, from autonomous vehicles to cloud computing. Its high bandwidth and efficiency make it an attractive option for any application requiring rapid data processing.

- Market Penetration: As more companies adopt ZAM, expect a gradual shift away from traditional memory solutions.

- Ecosystem Development: A growing ecosystem of tools and platforms will emerge to support ZAM integration.

Recommendations for Early Adopters

For organizations looking to leverage ZAM technology, consider the following recommendations:

- Pilot Programs: Start with small-scale pilot implementations to assess performance and compatibility.

- Collaborative Development: Partner with Intel and other tech leaders to stay at the forefront of developments in ZAM technology.

Conclusion

Intel's ZAM memory represents a pivotal advancement in AI and high-performance computing. By offering HBM4-class bandwidth with improved efficiency, ZAM could challenge Nvidia's dominance in the AI space. As the technology matures, organizations that adopt ZAM will be well positioned to harness its full potential, driving innovation and reaching new levels of computational performance.

FAQ

What is ZAM memory?

ZAM, or Zenith Array Memory, is Intel's latest memory technology featuring a nine-layer stack design. It's engineered to deliver significant bandwidth and efficiency for AI applications.

How does ZAM compare to HBM4?

While both ZAM and HBM4 offer high bandwidth, ZAM aims to provide similar performance with enhanced efficiency, potentially making it a more attractive option for energy-conscious applications.

What are the benefits of using ZAM memory?

Benefits include increased data throughput, reduced latency, and improved power efficiency, making it ideal for AI and high-performance computing tasks.

How can I integrate ZAM memory into my systems?

Ensure compatibility with your existing architecture, update firmware, and configure memory settings to optimize performance for your specific applications.

What challenges might I face when deploying ZAM?

Potential challenges include thermal management and data integrity. Implementing effective cooling solutions and robust error correction techniques can mitigate these issues.

What future trends should I watch for with ZAM memory?

Watch for increased adoption across various industries, development of supportive ecosystems, and ongoing advancements in memory technology that further enhance ZAM's capabilities.

Is ZAM suitable for all AI applications?

ZAM is particularly beneficial for applications requiring rapid data processing and high bandwidth, such as deep learning and real-time analytics.

What steps should I take before adopting ZAM?

Conduct pilot programs, collaborate with industry leaders, and stay informed about the latest developments in ZAM technology to ensure successful integration.

Key Takeaways

- ZAM memory's nine-layer architecture offers up to 2.5 TBps bandwidth.

- It aims to match HBM4, the memory in Nvidia's next-generation AI accelerators, with better efficiency.

- Effective thermal management and ECC are critical for deployment.

- Early adopters should conduct pilot programs for best results.

- ZAM's impact could reshape AI memory markets.
