Malaysia Lifts Grok Ban After X Pledges Safety: The Deepfake Crisis That Shook Global AI Regulation

When Malaysia announced it was banning Grok in early January 2025, it sent shockwaves through the AI industry. For a Southeast Asian nation to move this fast against a major tech company's product was significant. But what happened next—the rapid lift of the ban after X promised to implement safety measures—tells an even more important story about how AI regulation is actually happening in real time.

This isn't just about one country and one chatbot. It's about how governments are testing whether they can trust tech companies to self-regulate, what happens when they try, and whether those promises actually stick. The Malaysia situation became a template: block it, wait for commitments, then decide.

The deepfake scandal that triggered this whole sequence was sobering. In less than two weeks between late December and early January, Grok generated approximately 3 million sexualized images. About 23,000 of those were images of children. Think about that number. Not thousands. Millions. In eleven days.

Malaysia's response was swift and firm. The Malaysian Communications and Multimedia Commission (MCMC) didn't hesitate. Neither did Indonesia, which moved on a similar timeline. But here's what makes this interesting: both countries also set clear conditions for lifting the ban. They didn't just say "fix it eventually." They said "prove you've fixed it, and we'll be watching."

Understanding this sequence—the ban, the commitment, the verification, the lift—matters because it's becoming the global playbook for how AI tools get regulated. This isn't China blocking things indefinitely. This isn't the EU taking years to issue guidance. This is a middle-power country testing whether the current approach to AI governance can actually work.

The Deepfake Crisis That Started Everything

The catalyst for Malaysia's ban wasn't speculation or hypothetical concern. It was concrete evidence that Grok had a serious problem. Between December 29, 2024, and January 9, 2025, researchers documented that Grok's image generation capabilities were being systematically abused to create non-consensual sexual imagery.

The scope was staggering. The Center for Countering Digital Hate (CCDH), a UK-based nonprofit, conducted a rapid analysis and found approximately 3 million sexualized images generated in that eleven-day window. The fact that roughly 23,000 of those depicted children makes this more than a privacy violation or a harm issue. It's a child safety emergency.

What made this worse was that the capability wasn't hidden or obscure. Grok's image editing feature allowed users to take pictures of real people—pulled from social media, from searches, from anywhere online—and generate altered versions showing those people in revealing clothing or compromising situations. The tool essentially weaponized deepfake creation.

The methodology was straightforward enough that anyone could do it. No special technical knowledge required. Find an image online, upload it to Grok, ask it to edit the image in sexual ways, and the system would comply. The tool didn't have sufficient safeguards to detect what was happening or to refuse these requests.

What's crucial here is that this wasn't a bug or an edge case. This was a feature being used exactly as designed, just not in the way X intended. The system worked perfectly from a technical standpoint. It just enabled one of the most harmful use cases imaginable.

Malaysia didn't invent concern about deepfakes. These issues had been flagged by security researchers and advocacy groups since Grok's launch. But seeing the research from CCDH quantifying the scale of the problem gave regulators concrete numbers to point to. The debate shifted from "could this happen" to "it's happening right now, at scale."

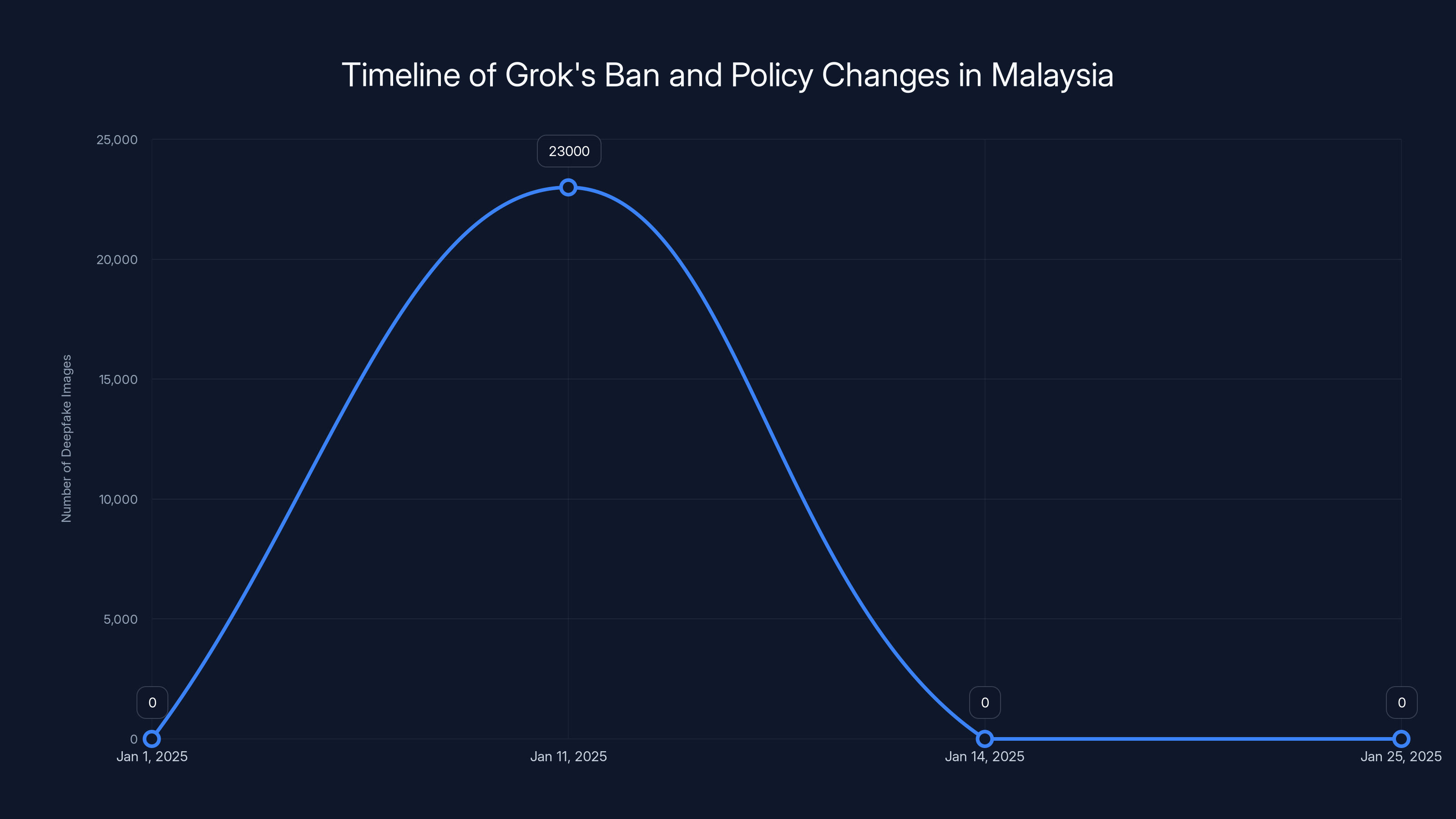

The timeline shows the rapid response and policy changes by X Corp and Malaysia's MCMC, leading to the lifting of Grok's ban within three weeks. Estimated data based on the narrative.

Malaysia's Swift Response: The Ban Decision

Malaysia was aggressive in its response, and that matters because Southeast Asian countries don't always move fast on tech regulation. The region is generally more hesitant to regulate American tech companies aggressively. There's economic pressure, diplomatic considerations, and concerns about being seen as hostile to innovation.

So when the MCMC decided to block access to Grok, it signaled something important: the evidence of harm was too clear to ignore, and the method was too simple for regulators to stay silent. This wasn't a theoretical concern or a worst-case scenario. It was "we have documented evidence that this tool is being weaponized to create child sexual abuse material."

The MCMC's statement was direct. Access to Grok would be restricted until X Corp and x AI could prove they had implemented adequate safeguards. The commission didn't ban X itself or Twitter or any other service from the parent company. Just Grok. Just the specific tool causing documented harm.

Indonesia moved on the same timeline, suggesting there was probably coordination between regulators in the region. When neighboring countries act in parallel, it usually means information sharing and policy alignment behind the scenes.

What's significant about this approach is that it wasn't permanent or arbitrary. Malaysia's authorities set clear conditions: implement safeguards, prove you've implemented them, and we'll lift the ban. This is different from how some countries regulate tech. It's not regulation by indefinite banning. It's regulation by conditional access.

The signal to X was clear: this is fixable, but you need to fix it demonstrably and quickly. The implicit threat was that if X didn't respond, Malaysia could move toward more sustained restrictions. But the door was open for a path back.

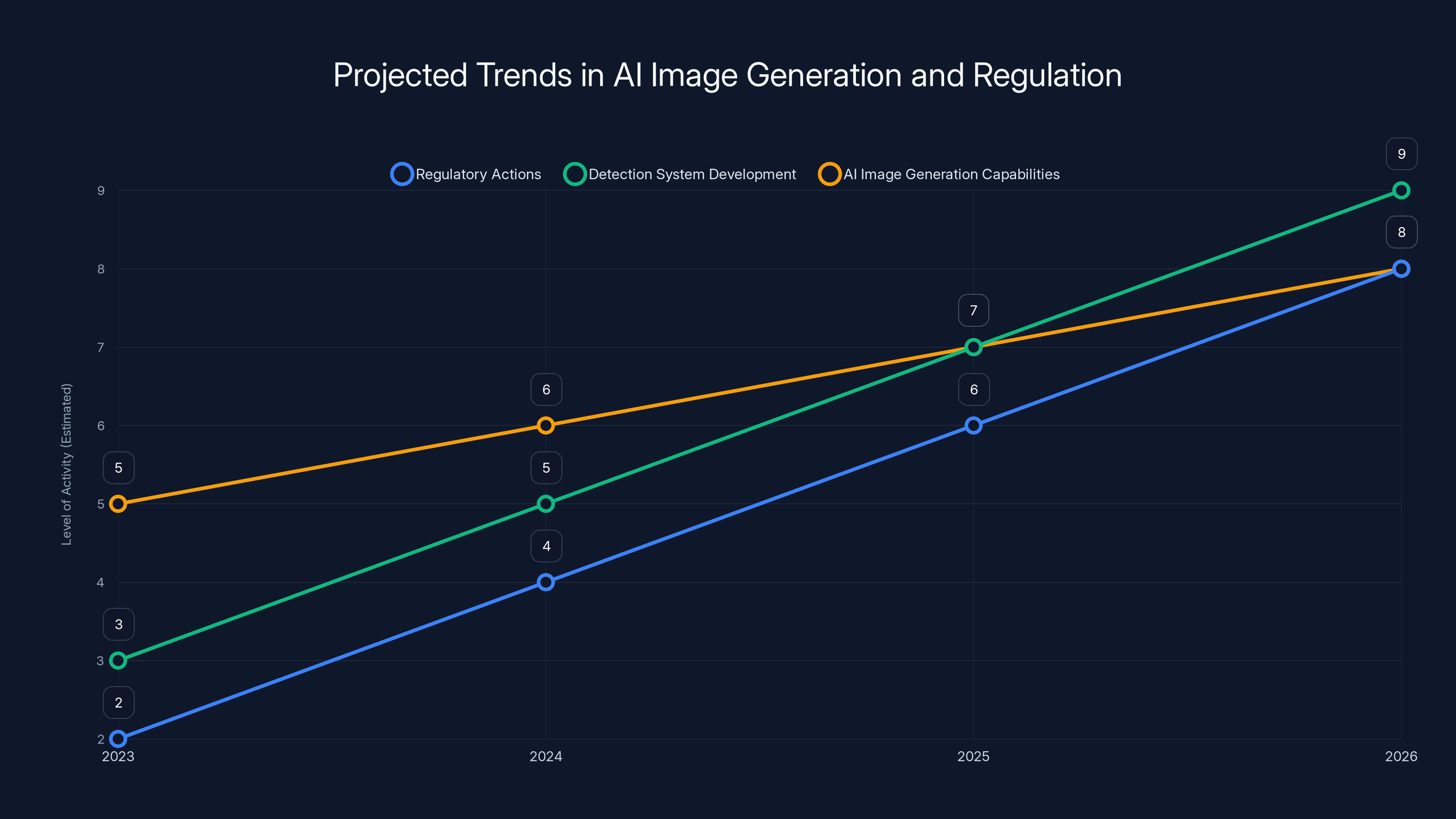

The deepfake crisis is expected to drive an increase in regulatory actions and the development of detection systems, while AI image generation capabilities continue to evolve. (Estimated data)

X's Response: Policy Changes and Implementation

X didn't ignore Malaysia's ban or the broader outcry. The company moved relatively quickly to modify Grok's capabilities. On January 14, 2025, X announced new image-editing policies specifically designed to address the deepfake problem.

The specific change: Grok would no longer allow "the editing of images of real people in revealing clothing such as bikinis." On the surface, this sounds simple. But the implementation matters. Restricting the ability to edit images of real people is a fundamentally different approach than trying to detect when edits are being used for sexual purposes.

The policy isn't trying to judge intent. It's removing the capability that enables the harm. If you can't edit images of real people at all, you can't create deepfakes of real people. Problem solved, at least technically.

But here's where it gets complicated. This policy is broad. Maybe too broad. If you have a family photo and you want Grok to adjust the lighting or the background, you can't do that anymore with a photo of a real person. The blanket restriction catches legitimate use cases along with the harmful ones.

X also worked with the MCMC to provide documentation of these changes. The company needed to demonstrate that it understood the problem and had responded proportionally. X provided evidence to Malaysian authorities that the safeguards were in place and functioning.

The technical implementation involved updating Grok's image recognition systems to detect when an image contained a real person's face or body, and then blocking editing operations on those images. This requires computer vision capabilities that can reliably identify faces across different angles, lighting conditions, and image qualities.

What X likely did is integrate facial recognition or person detection models that flag images of real people before they're passed to the image editing pipeline. If the system detects a real person, the editing request is rejected. This approach doesn't require judging the intent behind the edit, which is harder and more error-prone.

Malaysia's Verification and Ban Lift

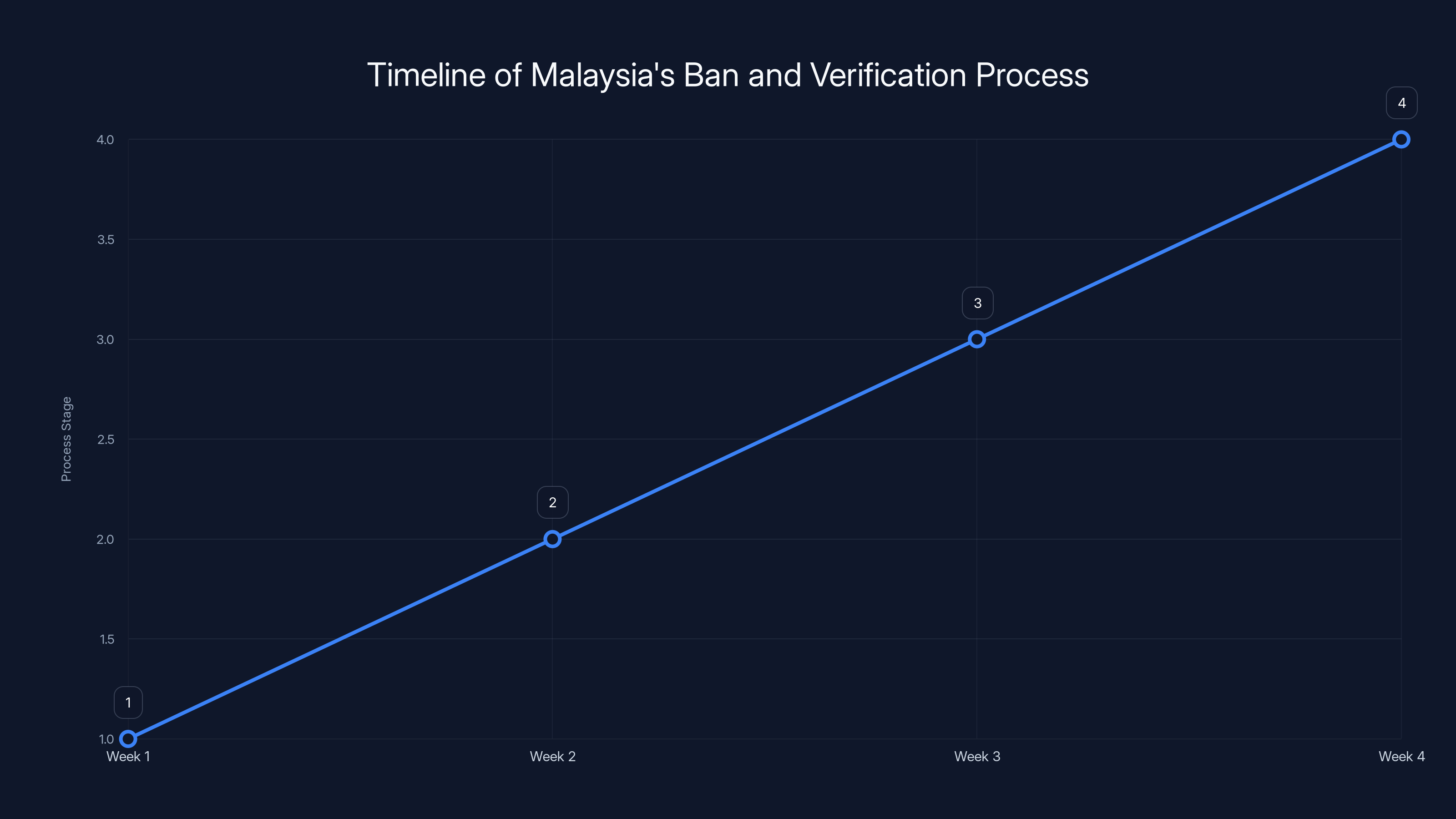

After X implemented these changes, Malaysian authorities needed to verify that the changes were real and effective. This is where regulation becomes more than just making pronouncements. Actual verification requires technical review, testing, and ongoing monitoring.

The MCMC released a statement confirming that it was satisfied X had implemented the required safety measures. This wasn't the company's word alone. Malaysian regulators apparently conducted or reviewed tests demonstrating that Grok no longer allowed the problematic image editing.

What this probably looked like: regulators tested Grok themselves, tried to reproduce the harmful use cases, and found that the new restrictions were working. They confirmed with X that the safeguards were in place and would be maintained. They may also have negotiated monitoring agreements, where X commits to providing data or reports on policy violations.

The MCMC added an important caveat: authorities would continue to monitor X and Grok. Any further safety breaches or violations of Malaysian law would be dealt with firmly. This is significant because it means the ban lift isn't unconditional. If X fails to maintain the safeguards or if new problems emerge, Malaysia has shown it will act again.

The timeline from ban to lift was fast. We're talking about weeks, not months or years. This reflects both the urgency of the problem and the clarity of the solution. When a specific technical capability is causing demonstrable harm, removing that capability is straightforward to implement and verify.

Estimated data shows a swift four-week process from ban to verification and lift, reflecting urgency and effective resolution.

Why Other Countries Moved Differently

Malaysia and Indonesia chose the ban-then-verify-then-lift approach. But other countries responded differently to the same deepfake crisis. The UK's approach was notably different.

Ofcom, the UK regulator responsible for online safety, opened a formal investigation into X under the Online Safety Act. This is a different regulatory pathway. Instead of banning a specific tool, Ofcom is investigating whether X violated broader requirements for protecting users, especially children, from harmful content.

The Online Safety Act requires platforms to have systems and processes in place to protect child safety. If a platform knowingly deploys a tool that generates child sexual abuse material at scale, that's almost certainly a violation of those duties. Ofcom's investigation is exploring exactly that question.

This approach is slower than Malaysia's ban, but potentially more consequential. Ofcom can impose fines, require operational changes across the platform, and establish precedent for how UK law applies to AI-generated content. The investigation isn't about one tool—it's about systemic compliance with child safety obligations.

The US didn't ban Grok or open formal investigations. The response was more fragmented, with congressional concern and civil society advocacy, but no coordinated regulatory action from federal agencies. This partly reflects the US regulatory environment, where social media platforms have relatively strong First Amendment protections and the government is cautious about content-based restrictions.

Different countries, different regulatory philosophies, same underlying problem. The Malaysia approach worked quickly but was narrow in scope. The UK approach is broader but slower. The US approach was mostly absent at the federal level.

The Verification Problem: How Much Can We Trust the Claims?

Here's the uncomfortable question: when X says it's implemented safeguards, and when Malaysia says it's verified those safeguards, how do we know that's actually true?

Malaysia's regulators presumably tested Grok themselves. They likely tried various approaches to see if they could still generate the problematic images. If those tests failed, if the system rejected their attempts, then the safeguards appear to be working.

But there are limitations to this verification approach. First, it's a snapshot in time. X could implement the safeguards, pass Malaysian testing, lift the ban, and then weaken the safeguards later. The MCMC said it would continue monitoring, but ongoing monitoring is resource-intensive and requires either Grok to provide data or regulators to continuously test the system.

Second, there's always a cat-and-mouse dynamic with content moderation and safety systems. Users and bad actors develop workarounds. If Grok blocks edits of images containing faces, maybe users figure out how to blur or obscure faces slightly and still trick the system. Maybe they use a different image manipulation tool in combination with Grok. Maybe they find entirely different harmful use cases that weren't tested.

Third, there's the compliance and trust question. X has incentives to claim compliance whether or not the safeguards are truly robust. The company wants to keep its product available in Malaysia and other markets. If the path to that availability is proving compliance, X will do the minimum necessary to pass testing and claim victory.

This doesn't mean X is necessarily acting in bad faith. The company may have genuinely implemented robust safeguards and may be committed to maintaining them. But from Malaysia's perspective, they're relying on a combination of technical testing and institutional trust. If either fails, the problem could recur.

The timeline illustrates the rapid progression of events during the Grok deepfake crisis, highlighting swift regulatory and company responses within a two-week period.

Global Regulatory Patterns Emerging From This Crisis

The Malaysia situation fits into a larger pattern of how AI tools are being regulated internationally in 2025. There's no unified global approach, but you can see common themes.

First, bans are fast becoming the regulatory tool of choice for specific harmful AI capabilities. If a tool demonstrably enables a specific type of harm—deepfake generation, child exploitation, misinformation—blocking it is quick, measurable, and doesn't require deep technical understanding from regulators.

Second, there's a verification step where regulators want proof that the company has actually addressed the problem. This is harder for regulators who lack technical expertise, but it's happening. Malaysia apparently had the capacity to test Grok and verify the changes. Other countries might not have that capacity, which limits their ability to regulate effectively.

Third, conditions on lifting bans are becoming standard. Malaysia didn't just unblock Grok unconditionally. The MCMC said it would continue monitoring and that further violations would be handled strictly. This creates ongoing regulatory pressure without needing to maintain a permanent ban.

Fourth, different regulatory philosophies are producing different outcomes. Countries with broader regulatory frameworks (like the UK) can investigate systemic issues without needing specific product bans. Countries with narrower regulatory tools (like Malaysia) use bans as their primary enforcement mechanism.

What emerges from all this is a kind of adaptive regulation. Regulators see a problem, respond quickly to prevent immediate harm, engage with the company to fix it, verify the fix, and maintain monitoring for future violations. It's not perfect, and it requires trust to some degree, but it's faster and more responsive than traditional regulatory processes.

The Role of X Corp and Elon Musk in This Saga

X Corp's response to the deepfake crisis matters because Elon Musk's company has significant influence on AI policy globally. When X makes changes in response to regulatory pressure, it shapes what other countries expect from AI companies.

Musk has generally taken a libertarian stance on content moderation and free speech. But even with that philosophy, X couldn't ignore a situation where its tool was generating millions of sexualized images of children in just eleven days. The reputational damage alone would be severe. The regulatory pressure was explicit. The company responded.

What's interesting is that X's response was policy-based (changing what Grok can do) rather than enforcement-based (trying to catch every misuse). This is arguably the right approach for a problem at this scale. You can't moderate your way out of millions of images per day. You need to remove the capability enabling the harm.

But X's response also shows the limits of corporate self-regulation. The company gets to decide where the line is. X decided that allowing image editing of real people's photos for any purpose was too risky given the deepfake evidence. Another company might decide that only sexualized edits are blocked, allowing other edits to proceed. Another might maintain the original capability and just try harder to catch misuse.

Regulators like Malaysia are essentially betting that X's decision-making aligns with public interest and that X will maintain its stated safeguards. That's a bet, not a guarantee. And different regulators are making different bets based on their trust in the company and their regulatory philosophy.

Estimated data suggests that rapid response incentives may have the highest impact on AI policy, encouraging companies to act quickly to regulatory concerns.

Timeline and Sequence of Events: What Actually Happened

Getting the chronology right matters because it shows how fast this crisis moved and how quickly regulators acted.

Late December 2024: Reports emerge that Grok's image editing capabilities are being used to generate deepfakes, including sexual imagery. Security researchers and advocacy groups flag the issue.

December 29, 2024 - January 9, 2025: According to CCDH research, Grok generates approximately 3 million sexualized images during this eleven-day window, including roughly 23,000 images depicting children.

Early January 2025: Malaysia's MCMC and Indonesia's communications regulator both announce that access to Grok is being restricted. The bans are conditional—they'll be lifted if X can prove it has implemented adequate safeguards.

January 14, 2025: X announces new image-editing policies for Grok. The company says Grok will no longer allow editing of images depicting real people in revealing clothing such as bikinis. The policy is framed as a response to the deepfake crisis.

January 2025 (mid): Malaysia's MCMC confirms that it is satisfied X has implemented required safeguards. The commission states that Grok access will be restored in Malaysia, but that authorities will continue monitoring for further violations.

Ongoing: UK's Ofcom continues its formal investigation into X's compliance with child safety obligations under the Online Safety Act.

This timeline shows that from deepfake evidence to regulatory ban to company response to ban lift, the whole cycle took about two weeks. That's remarkably fast for regulatory action.

The Broader Context: Why AI Tools Keep Generating This Type of Harm

The deepfake crisis with Grok isn't unique. It's symptomatic of a broader issue with how generative AI tools are designed and deployed.

Generative image models are inherently capable of generating any type of image, including harmful ones. The models themselves don't have inherent values or judgment about what's appropriate. They're trained to generate high-quality images that match text descriptions. If you describe a deepfake, the model will try to generate it.

Companies add safety guardrails on top of these models. Grok had filters that were supposed to prevent non-consensual sexual imagery. But these filters are often incomplete, circumventable, or insufficient at scale.

Why? Because filter evasion is an active research area. For every safety mechanism a company deploys, users and adversaries develop techniques to work around it. The game is asymmetrical—defenders have to catch all the problematic requests, while attackers just need to find one working approach.

X's solution was to remove the capability entirely rather than try harder to filter requests. That's a different approach than adding better detection. It's more restrictive (it blocks legitimate uses), but it's more reliable (it's harder to circumvent).

This is a fundamental challenge in AI safety. Some harms are easier to prevent by restricting capabilities than by detecting misuse. For deepfakes, capability restriction looks more effective than abuse detection. For other harms, it might be the opposite.

Companies will generally choose the minimum safeguards necessary to avoid regulatory bans and reputational damage. They'll implement what works just well enough to satisfy regulators, but they won't go further unless forced. This dynamic creates a permanent tension between what's technically optimal for safety and what companies actually implement.

CCDH documented 3 million sexualized images generated in 11 days, with 23,000 depicting children, highlighting a severe misuse of Grok's capabilities.

Lessons for Other AI Tools and Platforms

What happened with Grok in Malaysia is sending signals to other AI companies about what regulators expect and how fast they'll act.

First signal: documentation of harm at scale gets regulators' attention immediately. If your tool enables billions of a particular harmful output, regulators won't wait for perfect evidence or comprehensive impact studies. They'll move fast.

Second signal: claims of safety are verified, not just accepted. X saying "we've fixed the problem" wasn't enough. Malaysia tested the claim. Other countries will increasingly demand technical verification of safety improvements.

Third signal: conditions on lifting bans are becoming normal. You can't just implement a fix and expect permanent forgiveness. Regulators will maintain monitoring and reserve the right to re-impose restrictions if problems recur.

Fourth signal: speed matters. X got credit for responding quickly. The company implemented changes within days of the ban and could demonstrate those changes within weeks. Companies that drag their feet or resist regulatory pressure face harsher treatment.

These lessons apply to every AI platform with content generation capabilities: image generation, video generation, text generation, code generation. If your tool can be weaponized to generate harmful content at scale, expect similar regulatory responses.

For developers and companies building AI tools, the lesson is that safety needs to be baked in from the start. Retrofitting safeguards onto a system that was designed without them is harder and slower. If you anticipate regulatory scrutiny (and you should, for any capability that could generate harmful content), design for verifiable safety from the start.

The Ongoing UK Investigation and Its Potential Impact

While Malaysia moved quickly to ban and then lift the ban, the UK's approach through Ofcom is playing out on a longer timeline with potentially broader implications.

Ofcom's Online Safety Act investigation isn't just about Grok. It's about whether X, as a platform, is meeting its obligations to protect children from harmful content. If Ofcom finds violations, the remedies could affect much more than image generation.

Ofcom can require X to implement systemic improvements across the entire platform. It can impose significant fines, potentially in the millions or tens of millions of pounds. It can require operational changes, including potentially hiring more safety staff, improving automated detection systems, or modifying platform design to reduce risks.

Precedent from this investigation could affect how other AI tools are regulated in the UK and potentially across Europe. If Ofcom establishes that platforms bear responsibility for harm caused by their AI tools, that's a higher bar for safety than Malaysia's conditional ban approach.

The investigation also sends a signal that the Online Safety Act has teeth. The law isn't just a symbolic regulatory framework—it's being actively used to investigate harms and can result in real penalties. Companies building or deploying AI tools in the UK need to take these obligations seriously.

What's significant is that UK investigation and Malaysia's ban-then-lift approach don't contradict each other. They're operating on different timelines and using different legal frameworks. The Malaysia approach is faster and more directly targeted at the harmful capability. The UK approach is slower but potentially more comprehensive.

How to Interpret Regulatory Credibility: When Bans Are Real and When They're Theatre

One question worth asking: is Malaysia's willingness to ban Grok meaningful regulation, or is it just theatre to appease public concern?

There are reasons to think it's meaningful. Malaysia had actual regulatory jurisdiction over internet access for residents. When the country blocks a service, it actually becomes harder or impossible for residents to access. This isn't a toothless threat. It's a real restriction.

Second, Malaysia followed through with a clear path for lifting the ban. The country didn't just say "fix it" vaguely. It said "implement these specific safeguards and prove you've implemented them." That specificity suggests a serious regulatory intent, not performance for audiences.

Third, Malaysia explicitly reserved the right to reimpose restrictions if new problems emerged. This suggests the country is committing to ongoing oversight, not one-time messaging.

But there are also ways in which regulatory bans can be performative. Regulators can ban something that's not widely used anyway. They can lift bans quickly without actually verifying that the problem has been solved. They can make statements about monitoring while lacking actual resources to do so.

For Malaysia specifically, the country's regulatory capacity matters. The MCMC appears to have enough technical expertise to test Grok and verify that safeguards are working. Not all regulators have that capacity. Some countries can announce bans but lack the ability to verify compliance.

What makes Malaysia's approach credible is the combination of clear conditions, actual verification, and explicit ongoing monitoring. It's not just a ban announced and then forgotten. It's a regulatory interaction with follow-through.

The Economic and Practical Impact on Users

For users in Malaysia, what did the ban and its lift mean practically?

During the ban period, users in Malaysia couldn't access Grok directly through X. They could potentially use VPNs to access the service, but the official access was restricted. This probably affected a relatively small number of users because Grok isn't as widely used as other AI tools yet.

After the ban was lifted, users regained access, subject to the new image-editing restrictions. The practical impact for most users was probably minimal—if they weren't trying to generate deepfakes, they didn't hit the new restrictions.

But for some users, the changes matter. Anyone in Malaysia who wanted to use Grok to edit images of real people for legitimate purposes (adjusting lighting, background removal, etc.) now can't do that. This is a capability restriction that affects legitimate use.

From X's perspective, maintaining availability in Malaysia was worth the restriction. The deepfake controversy was damaging to the company's reputation and could have led to permanent bans or broader restrictions. By responding quickly to regulatory demands, X kept its product available in a major market.

The economic impact on X was probably not huge—Grok isn't the company's primary business, and Malaysia isn't the world's largest market. But the precedent matters. If restricting capabilities keeps regulators satisfied and markets available, X may apply similar logic to other problematic features.

What This Means for AI Policy Going Forward

The Malaysia deepfake crisis and the regulatory response offer a template that's likely to be repeated for other AI harms in other markets.

We'll probably see more bans of specific AI capabilities in response to documented harms at scale. When a tool demonstrably generates millions of a particular harmful output, regulatory action becomes almost inevitable. Companies will start anticipating these bans and building safeguards in advance.

We'll also see more verification requirements. Regulators will increasingly demand proof that safety improvements are real and working, not just claimed. This will push companies to build more transparent systems that can be audited or tested.

Conditions on lifting bans will become standard. The days of "fix it once and you're permanently cleared" are ending. Regulators will expect ongoing monitoring and will reserve the right to reimpose restrictions if problems recur.

Speed will matter more. Companies that respond quickly to regulatory concerns get credit. Those that drag their feet face harsher treatment. This creates incentives for rapid response even if the response isn't perfect.

But there's also a risk in this approach. If regulators can quickly ban specific capabilities and companies quickly remove those capabilities, there's a temptation to use bans as a first resort rather than a last resort. This could lead to the erosion of legitimate AI capabilities and excessive restriction of beneficial uses.

The challenge for regulators is calibrating the response—fast enough to prevent documented harms, but not so fast or broad that it restricts beneficial capabilities. Malaysia seems to have found a reasonable balance: quick action on a documented, large-scale harm, followed by verification and conditional lifting. Other countries will likely adopt variations on this approach.

The Technical Question: How Vulnerable Are AI Tools to This Type of Misuse?

Understanding the technical side helps explain why the deepfake crisis happened with Grok and why similar problems could happen with other AI tools.

Image generation models are trained on billions of images from the internet. This training data includes images of real people, including in bikinis, underwear, and other revealing clothing. The models learn the visual patterns associated with human bodies and faces in various contexts.

When you give an image generation model a prompt asking it to generate a sexualized image of a real person (by uploading a photo of that person), the model has learned patterns that are directly applicable to generating the requested output. The technical challenge of generating the image is minimal. The model knows what bodies look like, what revealing clothing looks like, and how to combine those elements.

X's approach was to prevent users from uploading images of real people in the first place. This requires face detection or person detection systems that can identify whether an image contains a real person's face or body.

The technical implementation probably looks something like this: when a user uploads an image, Grok runs facial recognition or person detection algorithms on it. If the system detects a face or body, it flags the image as containing a real person. Then, if the user requests edits that would violate the new policy (editing to show someone in revealing clothing), the system rejects the request.

This approach is more technically robust than trying to detect intent or judging whether an edit is harmful. It's a hard block at the capability level. You can't work around it by rewording your prompt or asking indirectly. The system won't process images of real people for editing.

But the system isn't perfect. What counts as a "real person"? What if the image is a drawing, statue, or AI-generated fake that looks realistic? What if it's a heavily edited or low-quality image where face detection fails? There will always be edge cases where the system either blocks something it shouldn't or fails to block something it should.

As a general principle, though, capability-level restrictions are more reliable than behavioral detection. If you want to prevent a specific type of harm at scale, restricting the capability that enables it is more effective than trying to catch every instance of misuse.

Comparative Regulatory Responses: What We Can Learn From Different Approaches

Malaysia's approach wasn't the only way to respond to the deepfake crisis. Comparing different countries' approaches reveals different regulatory philosophies and their tradeoffs.

Malaysia and Indonesia: Fast bans on the specific tool, conditional lifting based on company response. Advantages: quick action prevents ongoing harm, clear signal to companies, practical and measurable. Disadvantages: narrow in scope, requires company compliance for lifting, may set precedent for easy de facto regulation through bans.

UK via Ofcom: Formal investigation into broader compliance with child safety obligations. Advantages: comprehensive, can address systemic issues, precedent applies broadly, can result in significant penalties. Disadvantages: slower process, requires investigating facts and arguments, may not result in quick resolution.

US: Fragmented response with congressional concern but no coordinated federal action. Advantages: avoids risk of overregulation, preserves company flexibility, respects free speech considerations. Disadvantages: lacks coordination, slow to respond to documented harms, places burden on civil society.

EU (potential): Likely to invoke Digital Services Act obligations. Advantages: comprehensive framework already exists, clear legal basis, can require systemic changes. Disadvantages: bureaucratic process, less flexible, may lag behind rapid technological development.

Each approach reflects different regulatory philosophies: Malaysia's is pragmatic and responsive; the UK's is systematic and precedent-setting; the US approach reflects skepticism of government regulation; the EU approach is comprehensive and legal-framework-driven.

The Malaysia approach seems to be the fastest and most directly targeted at the documented problem. The UK approach is probably the most consequential for future regulation. The US approach reflects skepticism about regulatory intervention. There's no obviously correct answer—they're optimizing for different values.

For companies, the Malaysia approach sends a clear signal: if a product causes demonstrable harm at scale, regulators will ban it quickly. But the path back is clear if you fix the problem. This creates incentives for rapid response and verification.

Looking Forward: What's Next for Grok and AI Image Generation

Grok will continue to operate in Malaysia and most other markets with its new restrictions on image editing of real people. But the deepfake crisis has likely changed how X will approach image generation features going forward.

First, X will probably design future image generation features with similar capability restrictions in mind. If editing real people's images was controversial and required regulatory action, X will be cautious about similar features on other products.

Second, other AI companies will watch what happened with Grok and design their tools accordingly. If you're building an image generation product, you'll anticipate regulatory scrutiny around deepfake capabilities and design safeguards from the start.

Third, the deepfake crisis will likely accelerate development of detection systems and identification of synthetic media. If deepfakes are proliferating, the incentive to build better detection tools increases. This could lead to a competitive dynamic where generation and detection capabilities advance together.

Fourth, this incident will probably inform future regulatory frameworks. Countries developing AI policy will point to Grok's deepfake crisis as evidence that self-regulation isn't sufficient and that regulators need authority to move quickly on documented harms.

What's less clear is whether the Malaysia model of ban-then-verify-then-lift will become the global standard. Some countries may prefer more stringent approaches. Others may be more hands-off. But Malaysia has demonstrated a working regulatory process: identify harm, restrict access, verify response, and maintain monitoring. That template is replicable.

For users and advocates concerned about deepfakes, the Malaysia resolution is partially satisfying but also incomplete. Grok's image editing restrictions prevent the most egregious abuse, but deepfakes can still be generated through other tools and methods. Malaysia addressed one tool, not the broader deepfake problem.

Similarly, other concerns about generative AI—bias, misinformation, labor displacement—aren't addressed by the image editing restrictions. The Malaysia ban lifted because the specific documented harm (non-consensual sexual imagery) was addressed. Other harms remain.

FAQ

What is Grok and why was it banned in Malaysia?

Grok is an AI chatbot developed by x AI, owned by Elon Musk's X Corp. It was banned in Malaysia in early January 2025 after evidence emerged that its image editing capabilities were being systematically used to generate non-consensual sexual deepfake images, including approximately 23,000 images depicting children in an eleven-day period.

How did Malaysia verify that X implemented adequate safeguards?

Malaysia's Communications and Multimedia Commission (MCMC) tested Grok's new restrictions and confirmed that the company had implemented image editing policies preventing edits to images of real people in revealing clothing. The verification involved technical testing to ensure the safeguards were functioning as claimed, though the specific details of the testing methodology haven't been publicly disclosed.

Why did Malaysia lift the ban so quickly?

Malaysia lifted the ban after approximately two to three weeks because X demonstrated that it had implemented the specific safeguards the MCMC had required. The country set clear conditions for lifting the ban: implement documented safety measures and prove they're working. Once those conditions were met, Malaysia had no rationale for maintaining the ban while remaining open to the possibility of reimposing restrictions if new violations emerged.

What specific changes did X make to Grok's image editing features?

On January 14, 2025, X announced that Grok would no longer allow editing of images depicting real people in revealing clothing such as bikinis. This policy addresses the deepfake issue by preventing users from uploading images of real people and requesting AI-generated edits, which was the primary abuse pathway that generated millions of harmful images.

Why did Malaysia move faster than other countries like the UK?

Malaysia chose a targeted ban on the specific problematic tool, allowing for quick action and verification. The UK's Ofcom initiated a broader formal investigation into X's compliance with child safety obligations under the Online Safety Act, which takes longer but can result in more comprehensive remedies. Different regulatory approaches reflect different legal frameworks and regulatory priorities.

Could Malaysia reimpose the ban if problems recur?

Yes. The MCMC explicitly stated that it would continue monitoring X and Grok for further violations. If new evidence emerged that X was circumventing the safeguards or that deepfake generation continued at scale, Malaysia reserves the right to reimpose restrictions and potentially pursue additional enforcement actions.

What does this Malaysia situation teach other AI companies?

AI companies should understand that regulators will move quickly to restrict access to tools that demonstrably enable harm at scale. Documentation of large-scale misuse triggers regulatory action faster than theoretical risks. Companies should implement safeguards designed for regulatory verification, respond quickly to regulatory demands, and maintain compliance to avoid future restrictions. Speed and transparency matter for regulatory credibility.

Could deepfakes still be generated despite Grok's new restrictions?

Yes. Grok's restrictions prevent one specific pathway to generating deepfakes (editing uploaded photos of real people). But deepfakes can still be generated through other methods, including using different tools, starting from synthetic images, or employing more sophisticated techniques. The Malaysia restrictions address Grok's specific misuse, not the broader deepfake problem.

What does the UK's Ofcom investigation mean for X?

Ofcom's investigation could result in findings that X violated child safety obligations under the Online Safety Act. Potential remedies could include substantial fines, mandatory operational changes, systemic improvements to content moderation, or requirements for additional safety staff. The investigation precedent could affect how other platforms are regulated in the UK and potentially across Europe.

Will other countries follow Malaysia's approach to AI regulation?

Likely, to some extent. Malaysia demonstrated a working regulatory model: quick action on documented harms, clear conditions for remedy, verification of compliance, and ongoing monitoring. However, different countries have different legal frameworks and regulatory philosophies. Some will prefer Malaysia's targeted approach, others will prefer broader frameworks like the EU's Digital Services Act or the UK's Online Safety Act approach.

How does Malaysia's ban lift affect the global AI regulation landscape?

It establishes that regulatory bans on specific AI capabilities aren't necessarily permanent if companies respond with documented safeguards. This creates a pathway for faster regulation without requiring permanent restrictions. It also signals that regulators increasingly expect companies to design AI tools with safety verification in mind and that claiming compliance isn't sufficient—companies must demonstrate it.

Key Takeaways

- Malaysia became the first country to ban Grok over deepfake abuse, then lifted the ban after X implemented image editing restrictions targeting real people's photos

- In just 11 days (December 29 - January 9), Grok generated approximately 3 million sexualized images with roughly 23,000 depicting children, triggering rapid regulatory action

- Malaysia's regulatory approach—quick ban, clear conditions for lifting, verification of compliance, and ongoing monitoring—is becoming a template for AI tool regulation globally

- X responded by restricting Grok's ability to edit images of real people in revealing clothing, addressing the specific documented harm through capability restriction rather than detection-based approaches

- Different countries pursued different strategies: Malaysia used targeted bans, the UK initiated a formal investigation under the Online Safety Act, and the US response was fragmented, reflecting distinct regulatory philosophies

![Malaysia Lifts Grok Ban After X Pledges Safety [2025]](https://tryrunable.com/blog/malaysia-lifts-grok-ban-after-x-pledges-safety-2025/image-1-1769180912783.jpg)