Meta and YouTube's Legal Setback: What It Means for Tech and Content Creators [2025]

Meta and YouTube have suffered a significant legal defeat that could reshape digital technology and content creation. The ruling affects more than these two tech giants: it may also set a precedent for how online platforms operate in the future, as detailed in the NPR coverage of the trial verdict.

TL;DR

- Landmark Ruling: The court's decision against Meta and YouTube could dramatically alter online platform operations, as reported by The New York Times.

- Content Moderation Challenges: New legal obligations may increase scrutiny of how platforms manage content.

- Data Privacy Concerns: The ruling highlights ongoing privacy issues that tech companies must address.

- Impact on Creators: Content creators may face new guidelines and restrictions affecting monetization.

- Future Trends: Expect an increase in regulatory oversight on digital platforms.

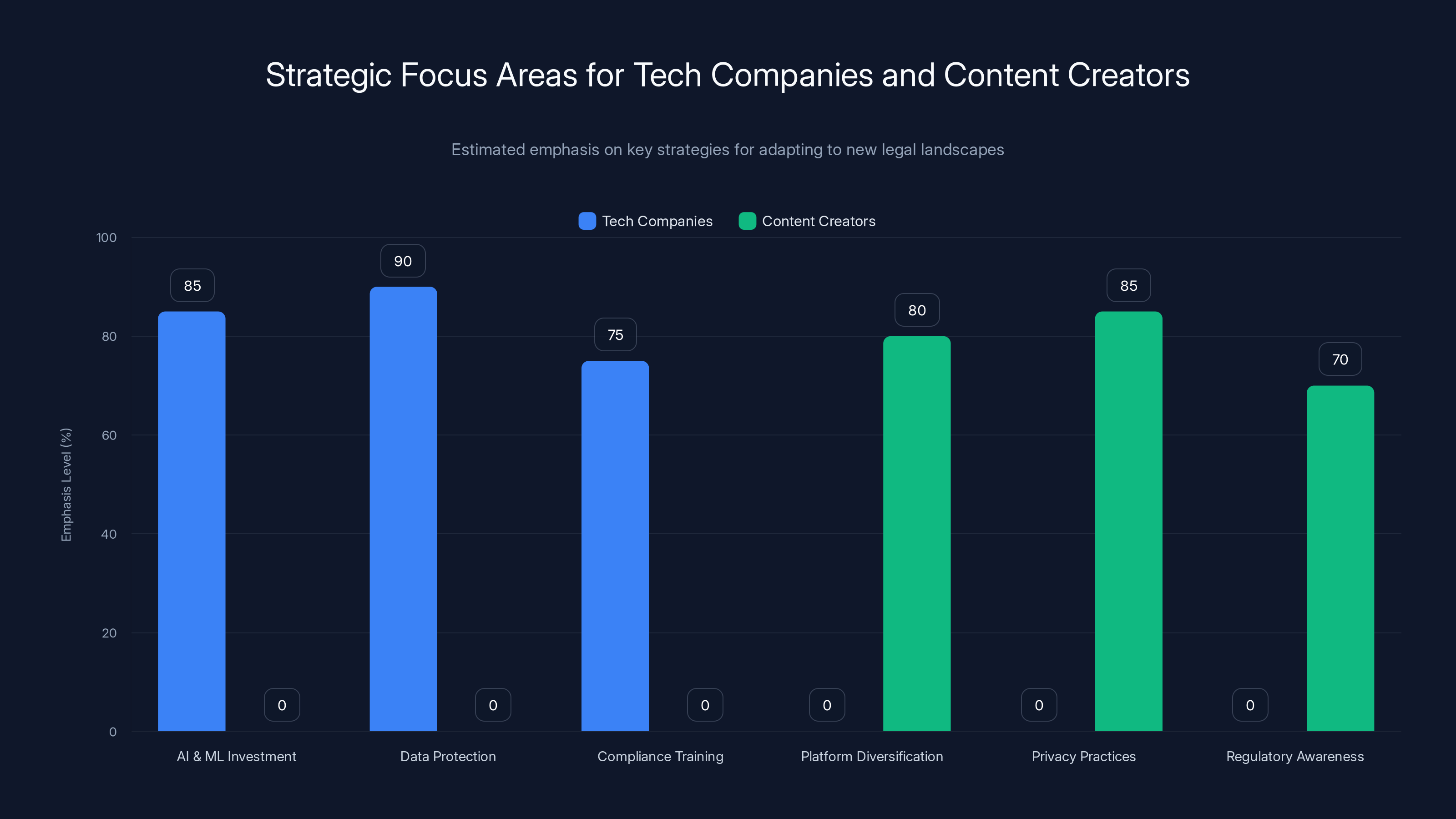

Tech companies focus heavily on AI, data protection, and compliance, while content creators prioritize platform diversification and privacy practices. (Estimated data)

The Court Case: A Brief Overview

The lawsuit against Meta (formerly Facebook) and YouTube revolved around allegations of inadequate content moderation and privacy violations. These companies were accused of failing to sufficiently protect user data and allowing harmful content to proliferate on their platforms, as noted in the Lawsuit Information Center.

Key Allegations

- Inadequate Content Moderation: Critics argued that both platforms failed to filter harmful content effectively, allowing misinformation and other damaging material to spread unchecked.

- Data Privacy Violations: Allegations included mishandling user data and insufficient transparency about how user information was collected and shared.

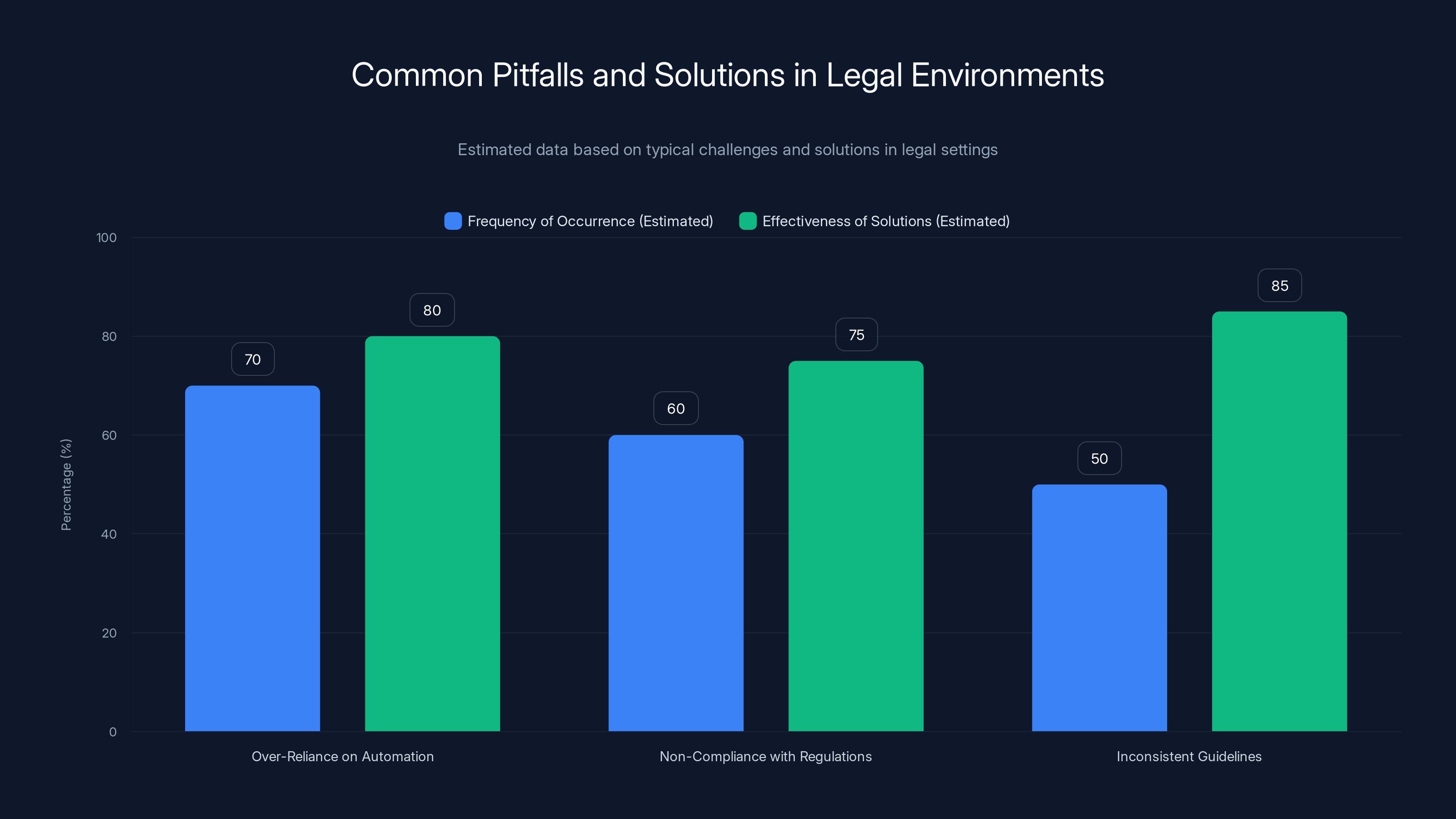

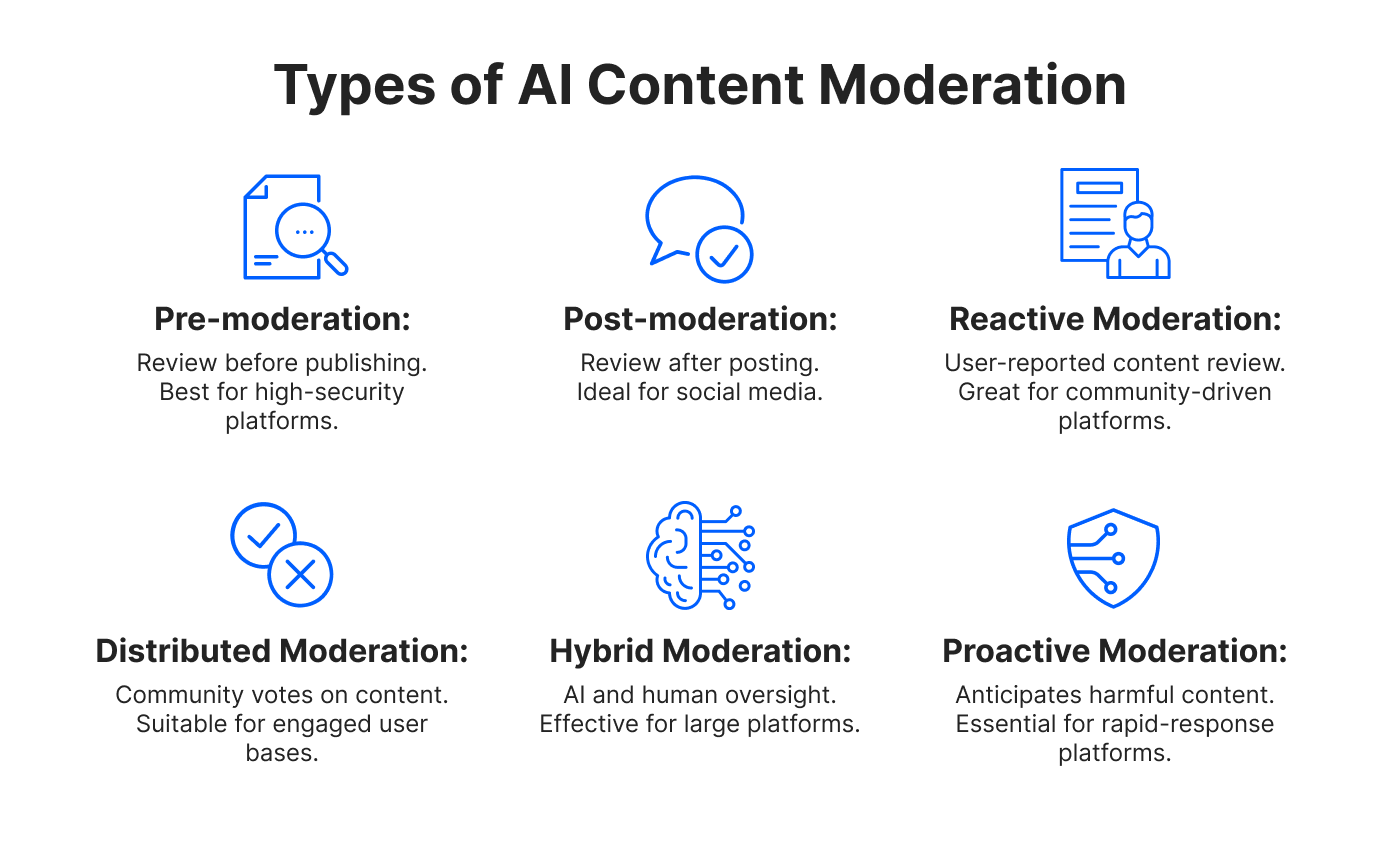

This bar chart highlights the estimated frequency of common pitfalls in legal environments and the effectiveness of proposed solutions. Balancing automation with human oversight is crucial for effective content moderation.

Legal Implications: A Precedent-Setting Moment

This court decision is a significant turning point, highlighting several critical legal implications for tech companies.

Strengthening Data Privacy Regulations

One of the ruling's main outcomes is the push for stronger data privacy regulations. Regulators may now feel emboldened to enforce stricter guidelines, ensuring that user data is handled more responsibly, as discussed in KFF's Medicaid Waiver Tracker.

Enhanced Content Moderation Requirements

The decision points toward stricter content moderation requirements. Platforms may need to implement more robust systems to filter and manage content, which could increase operational costs.

The Impact on Content Creators

Content creators are at the heart of this ecosystem, and the ruling has direct implications for their operations.

New Guidelines and Restrictions

Creators might face new guidelines dictating what content is permissible. This could affect monetization strategies and audience engagement, as more stringent rules might limit creative freedom, according to Facebook's announcement on rewarding original creators.

Monetization Challenges

With increased scrutiny, platforms may alter their monetization policies, affecting revenue streams for creators. Ad systems might become more selective, prioritizing content that aligns with new guidelines, as noted in All About Cookies.

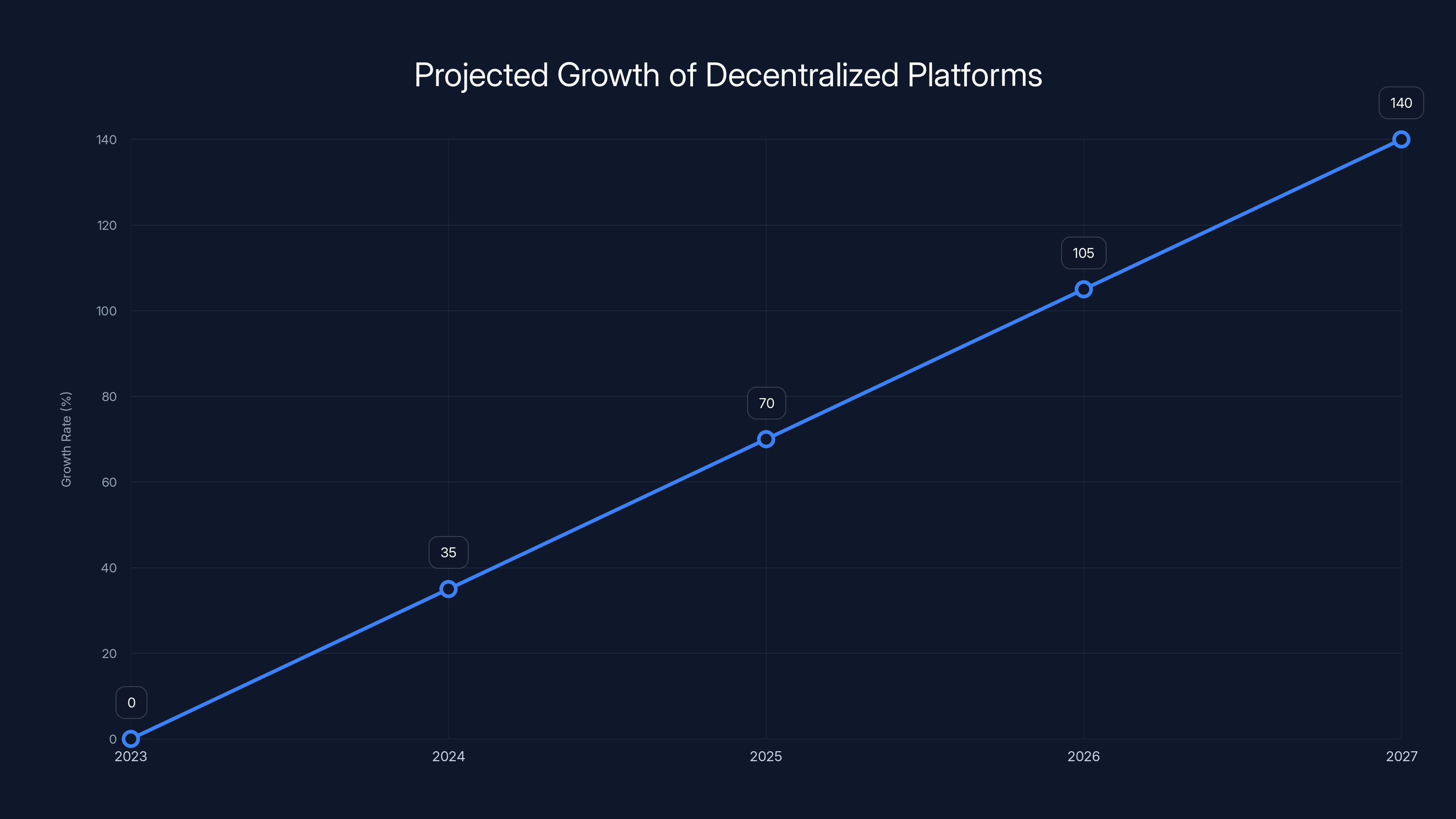

Decentralized platforms are projected to grow by 35% annually, driven by increased demand for privacy and user control. (Estimated data)

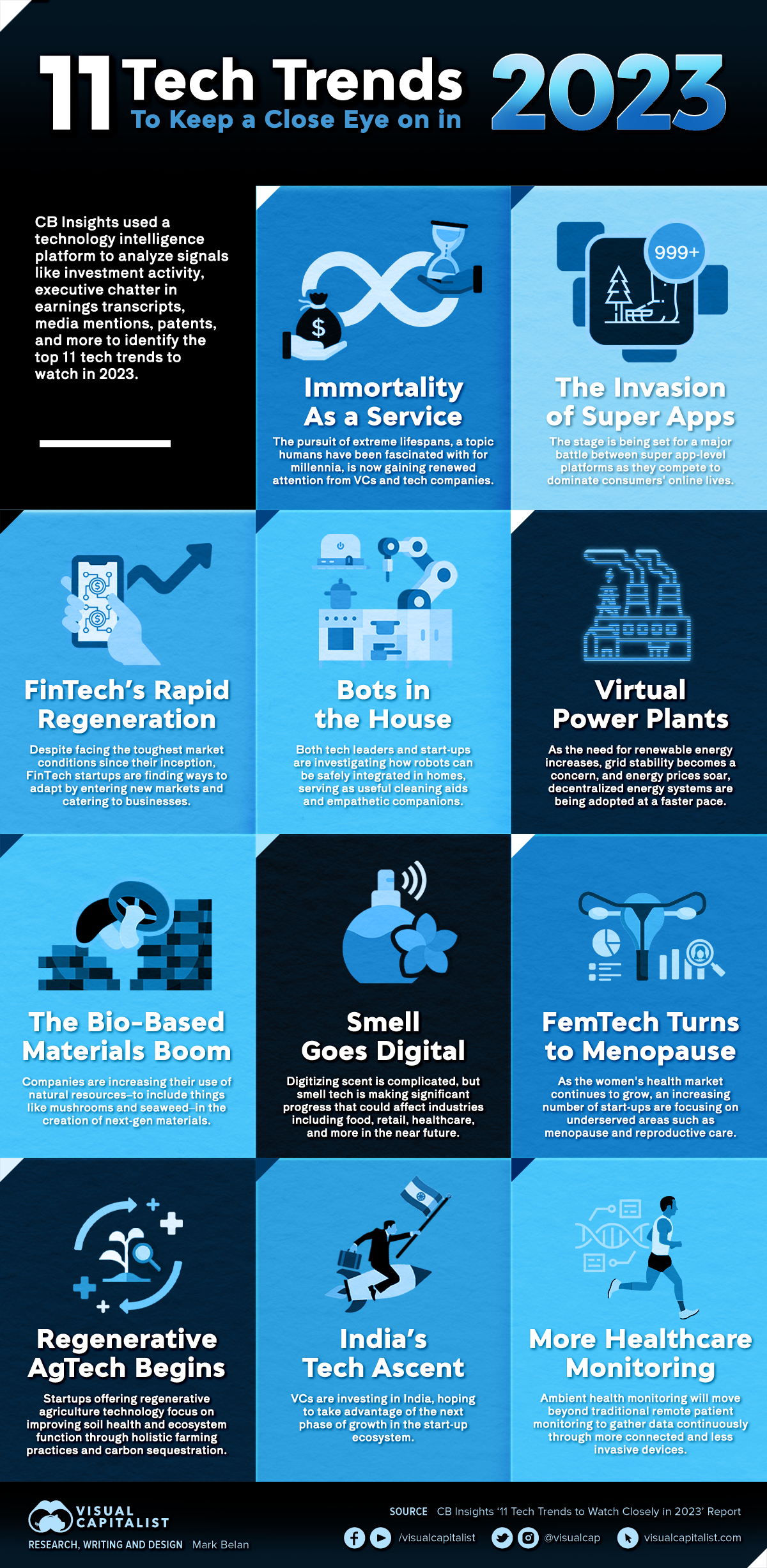

Future Trends: What Lies Ahead?

The ruling against Meta and YouTube is expected to accelerate several trends within the tech and digital content sectors.

Regulatory Oversight Intensification

Expect a surge in regulatory oversight as governments worldwide take cues from this case. Platforms may face more rigorous audits and compliance checks to ensure adherence to new standards, as highlighted by Regulatory Oversight.

The Rise of Decentralized Platforms

In response to increased regulation, there may be a shift towards decentralized platforms that offer greater user control and privacy. These platforms could gain popularity as they promise fewer restrictions and more transparency, as projected by Fortune Business Insights.

Practical Implementation Guides

For tech companies and content creators, adapting to this new legal landscape requires strategic adjustments.

For Tech Companies

- Invest in AI and Machine Learning: Enhance content moderation capabilities through AI-driven solutions.

- Strengthen Data Protection Protocols: Implement advanced encryption and data handling procedures to safeguard user information.

- Compliance Training: Regularly train staff on compliance with evolving regulations to avoid legal pitfalls.
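
As one illustration of the data handling point above, the sketch below replaces raw user identifiers with keyed tokens before an event reaches analytics storage. It is a minimal example under stated assumptions, not a description of how Meta, YouTube, or any specific platform handles data: the function names `pseudonymize` and `scrub_event`, the event fields, and the idea of dropping the `email` field are all hypothetical. It uses only Python's standard `hmac`, `hashlib`, and `secrets` modules.

```python
import hashlib
import hmac
import secrets


def pseudonymize(user_id: str, key: bytes) -> str:
    """Return a keyed SHA-256 token that stands in for a raw user ID."""
    digest = hmac.new(key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def scrub_event(event: dict, key: bytes) -> dict:
    """Copy an event, dropping direct identifiers and adding a pseudonymous token."""
    # Hypothetical field names: "user_id" and "email" are assumed identifiers.
    cleaned = {k: v for k, v in event.items() if k not in {"user_id", "email"}}
    cleaned["user_token"] = pseudonymize(event["user_id"], key)
    return cleaned


if __name__ == "__main__":
    # Assumption: a real deployment would load the key from a secrets manager
    # rather than generating a fresh one on each run.
    key = secrets.token_bytes(32)
    event = {"user_id": "u-1001", "email": "a@example.com", "action": "video_view"}
    print(scrub_event(event, key))
```

Because the token is keyed, the same user maps to the same token for a given key, so aggregate analysis still works, while anyone without the key cannot recompute the mapping from a known ID.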

For Content Creators

- Diversify Content Platforms: Reduce reliance on a single platform by expanding your presence across multiple channels.

- Adopt Privacy-Conscious Practices: Ensure that content and interactions respect user privacy preferences.

- Stay Informed: Keep abreast of regulatory changes to adapt content strategies accordingly.

Common Pitfalls and Solutions

Navigating this complex legal environment presents several challenges. Here are some common pitfalls and strategies to overcome them.

Pitfall: Over-Reliance on Automated Moderation

Solution: Balance automation with human oversight to ensure nuanced content decisions.
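
One common way to balance automation with human oversight is to act automatically only on high-confidence cases and queue uncertain ones for a reviewer. The sketch below shows that routing idea. The thresholds, the `Decision` type, and the `route` function are hypothetical, and the harm score is assumed to come from some classifier that outputs a value between 0 and 1; none of this reflects a particular platform's system.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned per policy and per model.
REMOVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.60


@dataclass
class Decision:
    item_id: str
    action: str  # "remove", "human_review", or "allow"
    score: float


def route(item_id: str, harm_score: float) -> Decision:
    """Map a classifier score in [0, 1] to an action, sending uncertain cases to people."""
    if not 0.0 <= harm_score <= 1.0:
        raise ValueError("harm_score must be between 0 and 1")
    if harm_score >= REMOVE_THRESHOLD:
        action = "remove"
    elif harm_score >= REVIEW_THRESHOLD:
        action = "human_review"
    else:
        action = "allow"
    return Decision(item_id, action, harm_score)


if __name__ == "__main__":
    for item_id, score in [("post-1", 0.98), ("post-2", 0.72), ("post-3", 0.10)]:
        print(route(item_id, score))
```

Widening the gap between the two thresholds sends more items to human reviewers, which trades higher review cost for fewer automated mistakes.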

Pitfall: Non-Compliance with Data Regulations

Solution: Regularly audit data handling practices and implement comprehensive compliance checks.
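
A recurring audit can start as a simple script that scans stored records for policy violations. The sketch below flags records kept longer than a retention limit or lacking recorded consent. The record fields (`id`, `collected_on`, `consent`), the 365-day limit, and the `audit_records` function are assumptions chosen for illustration; actual requirements depend on the regulations that apply.

```python
from datetime import date

MAX_RETENTION_DAYS = 365  # hypothetical policy limit


def audit_records(records: list[dict], today: date) -> list[str]:
    """Return a human-readable finding for each retention or consent problem."""
    findings = []
    for rec in records:
        age = (today - rec["collected_on"]).days
        if age > MAX_RETENTION_DAYS:
            findings.append(
                f"{rec['id']}: retained {age} days, limit is {MAX_RETENTION_DAYS}"
            )
        if not rec.get("consent"):
            findings.append(f"{rec['id']}: no recorded consent")
    return findings


if __name__ == "__main__":
    sample = [
        {"id": "r1", "collected_on": date(2023, 1, 1), "consent": True},
        {"id": "r2", "collected_on": date(2025, 5, 1), "consent": False},
        {"id": "r3", "collected_on": date(2025, 4, 1), "consent": True},
    ]
    for finding in audit_records(sample, today=date(2025, 6, 1)):
        print(finding)
```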

Pitfall: Inconsistent Content Guidelines

Solution: Establish clear, consistent guidelines and communicate them effectively to your audience.

Future Recommendations

To thrive in this evolving landscape, tech platforms and content creators should focus on several key areas.

Embrace Transparency

Transparency in operations and data handling builds trust with users and regulators alike. Platforms should clearly articulate their policies and practices.

Foster Community Engagement

Engaging with users to understand their concerns and preferences can guide policy adjustments that benefit both the platform and its users.

Innovate Responsibly

Innovation should align with ethical standards and societal values. Responsible innovation not only mitigates legal risks but also enhances brand reputation.

Conclusion

The legal setback for Meta and YouTube is a wake-up call for the tech industry. It underscores the need for stronger data privacy measures and more effective content moderation strategies. By adapting to these changes and embracing responsible innovation, tech companies and content creators can not only comply with new regulations but also enhance their credibility and user trust.

FAQ

What led to the court case against Meta and YouTube?

The lawsuit involved allegations of inadequate content moderation and privacy violations: both companies were accused of mishandling user data and failing to filter harmful content effectively.

How will this ruling affect content creators?

Content creators may face new guidelines and restrictions, affecting monetization strategies and creative freedom, as platforms implement more stringent content policies.

What are the potential benefits of the ruling?

The ruling could lead to stronger data privacy protections and more robust content moderation, ultimately enhancing user trust and platform credibility.

How can tech companies adapt to these changes?

Tech companies should invest in AI-driven moderation tools, strengthen data protection protocols, and provide compliance training to staff.

What future trends can we expect following this ruling?

Increased regulatory oversight and a potential shift towards decentralized platforms, which offer greater user control and privacy, are likely trends.

How can content creators ensure compliance with new guidelines?

Creators should diversify their content platforms, adopt privacy-conscious practices, and stay informed about regulatory changes to adapt their strategies accordingly.

What are the key challenges tech companies might face post-ruling?

Challenges include balancing automated moderation with human oversight, ensuring compliance with data regulations, and maintaining consistent content guidelines.

How important is transparency for platforms following this ruling?

Transparency is crucial for building trust with users and regulators. Platforms should clearly communicate their policies and practices to enhance credibility.

Key Takeaways

- The court's decision against Meta and YouTube could reshape digital platform operations with stronger privacy regulations and content moderation requirements.

- Content creators may face new guidelines affecting monetization and creative freedom.

- Expect increased regulatory oversight on digital platforms, potentially driving a shift towards decentralized alternatives.

- Tech companies should invest in AI-driven moderation tools and strengthen data protection protocols.

- Transparency and community engagement are crucial for building trust and credibility in this new landscape.

