Meta's $100 Billion AMD Chip Deal: Paving the Way for Personal Superintelligence [2025]

Last week, Meta announced plans to purchase up to $100 billion worth of AMD chips. The move is about more than hardware acquisition; it is a strategic step towards what Meta calls 'personal superintelligence.' But what does this mean for the tech landscape, and how will it shape the future of artificial intelligence?

TL;DR

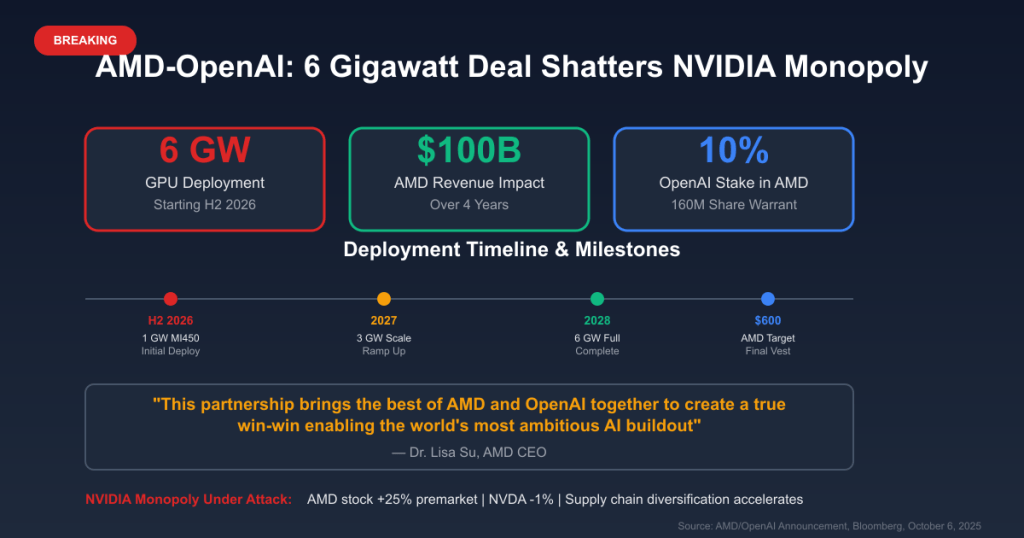

- Meta's Investment: Meta plans to buy up to $100 billion worth of AMD chips to power a large-scale data center buildout, as reported by TechCrunch.

- Strategic Partnership: The deal includes a performance-based warrant for up to 160 million AMD shares, according to Barron's.

- Technological Shift: Focuses on AMD’s MI540 GPUs and latest CPUs, enhancing AI inference capabilities, as detailed by The Wall Street Journal.

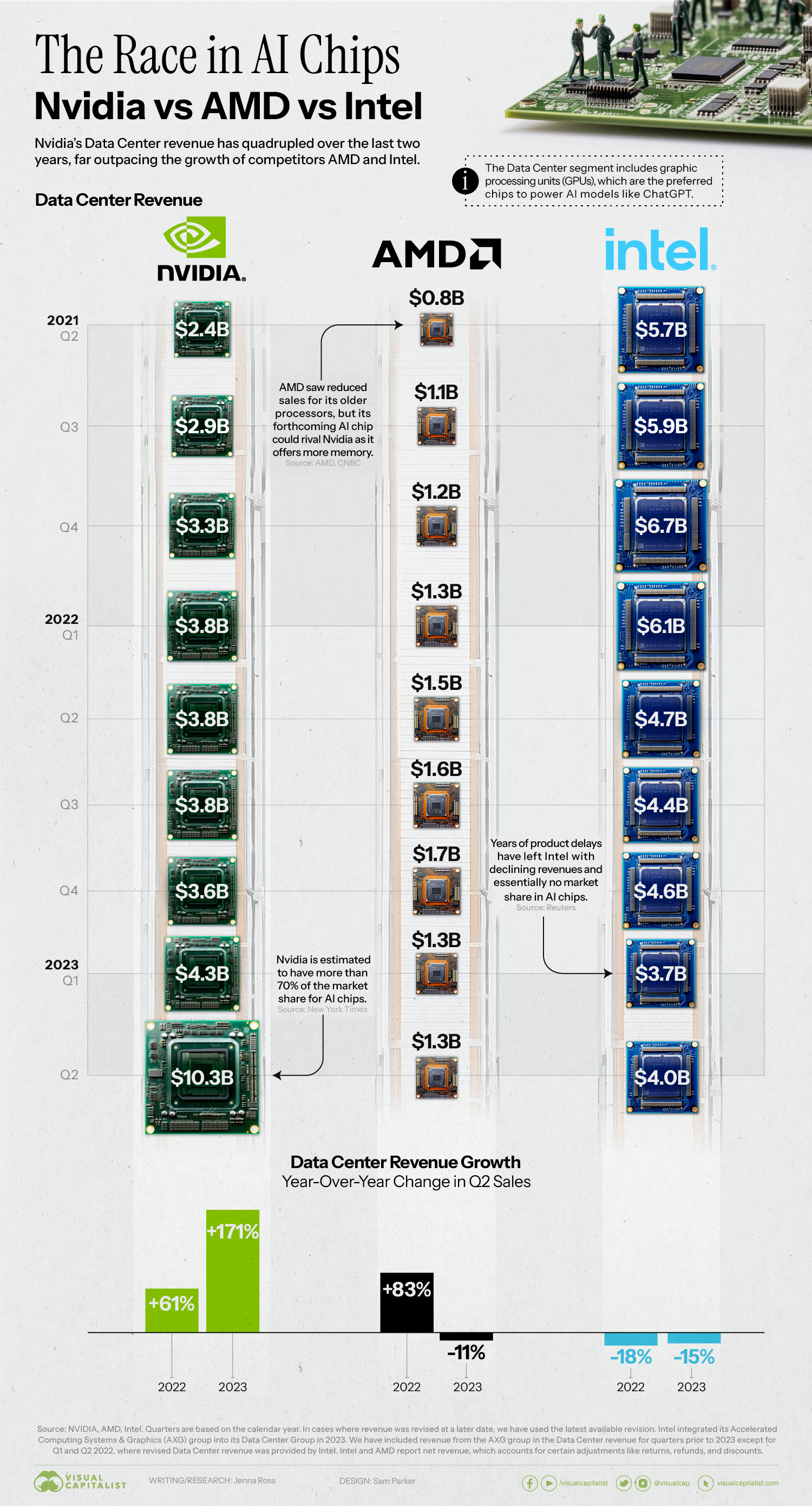

- Market Impact: Signals a shift away from Nvidia dominance in the AI chip market, as noted by TechBuzz.

- Future Vision: Aims to develop personal superintelligence, enhancing everyday tech interactions.

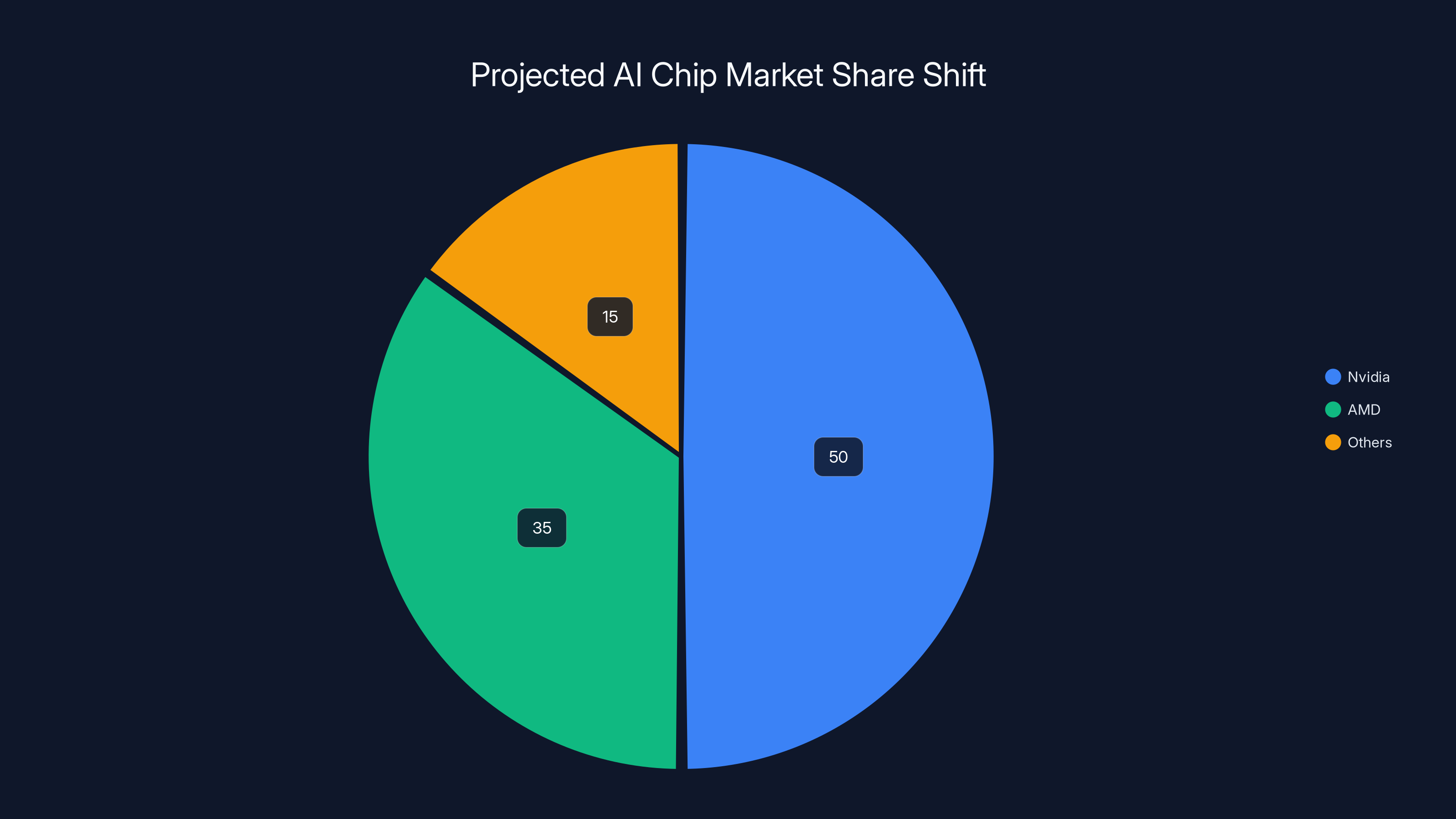

Meta's $100 billion investment in AMD could significantly increase AMD's market share in the AI chip sector, challenging Nvidia's dominance. (Estimated data)

The Significance of Meta's $100 Billion Deal

Meta's deal with AMD is monumental, not just in scale but in its potential to redefine AI infrastructure. The agreement is structured around performance milestones, indicating a long-term commitment to innovation and efficiency. This partnership is designed to leverage AMD's cutting-edge MI540 series GPUs and its latest CPUs, which are crucial for advancing AI inference tasks, as highlighted in The New York Times.

Why AMD?

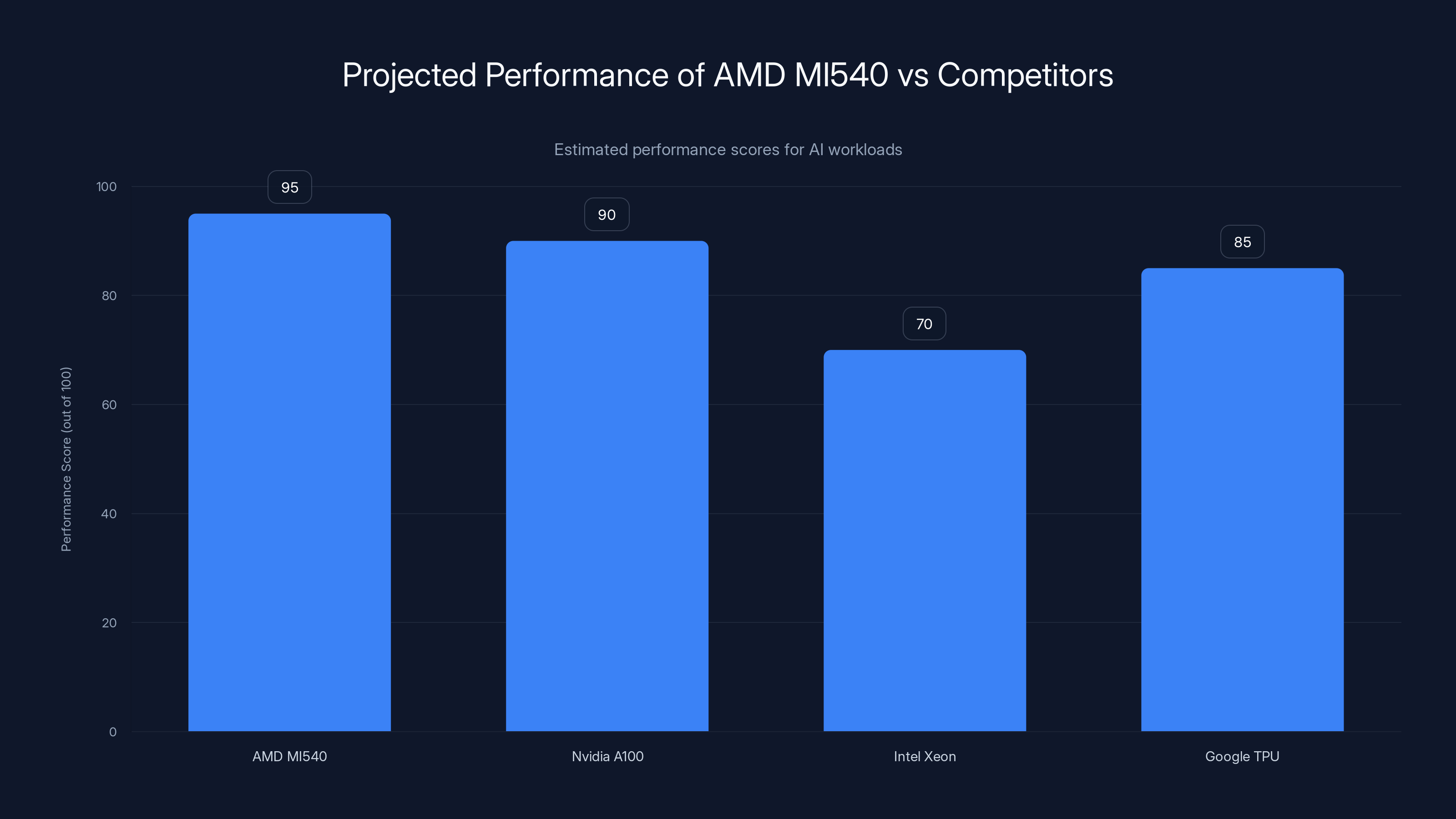

AMD's recent advancements in chip technology have positioned it as a formidable competitor in the AI space, traditionally dominated by Nvidia. The MI540 series GPUs are engineered to deliver exceptional performance, particularly in AI workloads, as described by PC Gamer. Unlike traditional CPUs, these GPUs are optimized for parallel processing, which is essential for handling the massive datasets AI applications require.

AMD's MI540 series GPUs are projected to outperform competitors in AI workloads, indicating a strong position in the AI infrastructure market. Estimated data.

AMD's Advantage in AI

While Nvidia has long been the leader in the GPU market, AMD offers several advantages that make it attractive for AI applications:

- Cost Efficiency: AMD chips often provide similar performance to Nvidia at a lower cost, which is crucial for scaling AI operations, as noted by Vocal Media.

- Open Ecosystem: AMD's commitment to open-source software and compatibility with various AI frameworks allows for greater flexibility, as explained in AMD's technical articles.

- Power Efficiency: AMD's architecture is designed to optimize power usage, a crucial factor in large-scale data centers, according to Deloitte's semiconductor industry outlook.

From CPU to GPU: Understanding the Transition

The shift from CPUs to GPUs for AI workloads is driven by the need for high-performance computing. CPUs have a relatively small number of cores optimized for sequential, general-purpose work, so they are a poor fit for the parallel processing AI models require. GPUs, with their thousands of simpler cores, can execute many operations simultaneously, making them well suited to AI inference, as discussed in Towards Data Science.
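To make the parallelism point concrete, here is a minimal sketch in Python. It assumes a tiny, made-up weight matrix and input vector, and the function names are purely illustrative. Each output element of a matrix-vector product is an independent dot product, so the work can be split into tasks that do not depend on each other. Python threads stand in for GPU cores here only to show the decomposition; they will not deliver GPU-like speedups.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, vec):
    """Dot product of one matrix row with the input vector."""
    return sum(r * v for r, v in zip(row, vec))


def matvec_sequential(matrix, vec):
    """CPU-style loop: compute one output element after another."""
    return [dot(row, vec) for row in matrix]


def matvec_parallel(matrix, vec, workers=4):
    """GPU-style decomposition: every output element is its own task."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with the rows.
        return list(pool.map(lambda row: dot(row, vec), matrix))


# Hypothetical layer weights and activations, chosen only for illustration.
weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
activations = [0.5, -1.0]

sequential_result = matvec_sequential(weights, activations)
parallel_result = matvec_parallel(weights, activations)
```

Both functions return the same values; the difference is that the second expresses the work as independent tasks, which is the property GPU hardware exploits at a much larger scale.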

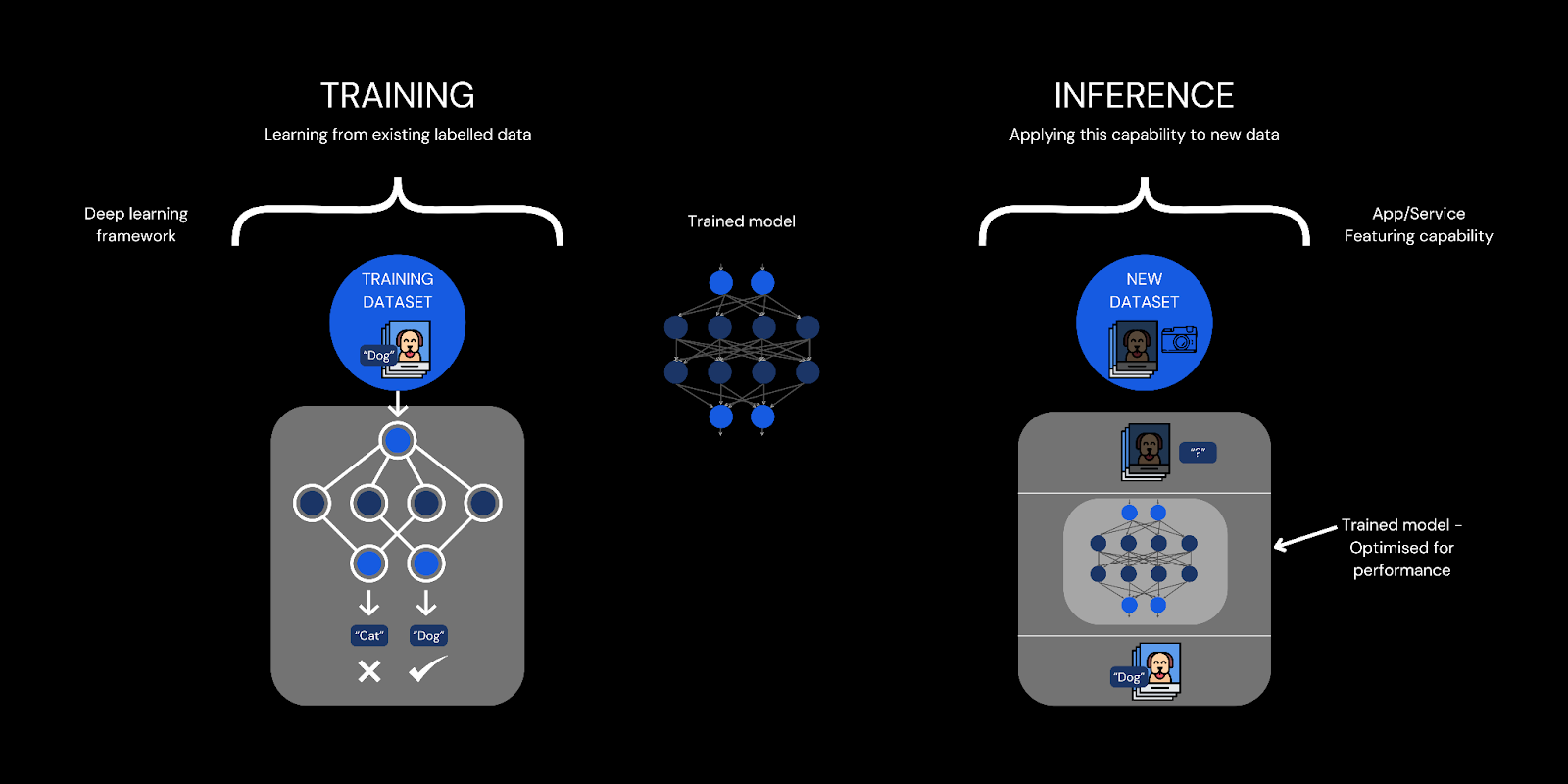

The Role of AI Inference

AI inference refers to the process of applying a trained model to new data to make predictions or decisions. This is where AMD’s new chips come into play. With enhanced performance and efficiency, these chips are positioned to make inference faster and cheaper to run at scale, as reported by FedScoop.

Practical Implementation of AI Inference

Implementing AI inference at scale involves several key steps:

- Model Training: Using datasets to train AI models, typically done on high-performance GPUs.

- Model Deployment: Integrating the trained model into applications or systems.

- Data Input: Feeding new data into the deployed model for analysis.

- Inference Execution: Running the model to generate predictions or decisions.
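The steps above can be sketched end to end in a few lines of Python. This is a toy illustration, not how Meta or AMD run inference: it assumes a one-feature logistic regression, an invented four-point dataset, and hypothetical helper names (`train`, `deploy`). A production system would use a framework and accelerator hardware instead.

```python
import math


def sigmoid(z):
    """Squash a real number into the (0, 1) range."""
    return 1.0 / (1.0 + math.exp(-z))


def train(samples, labels, lr=0.1, epochs=1000):
    """Model training: fit a one-feature logistic regression with SGD."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            err = sigmoid(w * x + b) - y  # gradient of the log loss w.r.t. z
            w -= lr * err * x
            b -= lr * err
    return {"w": w, "b": b}


def deploy(params):
    """Model deployment: freeze the parameters behind a callable."""
    w, b = params["w"], params["b"]

    def predict(x):
        return sigmoid(w * x + b)

    return predict


# Training on an invented dataset: small inputs are class 0, large are class 1.
model_params = train([0.0, 1.0, 2.0, 3.0], [0, 0, 1, 1])

# Deployment: the application only sees the predict function.
predict = deploy(model_params)

# Data input and inference execution on values the model has not seen.
new_inputs = [0.5, 2.5]
predictions = [predict(x) > 0.5 for x in new_inputs]
```

Note that training happens once, while `predict` is called for every new input. That asymmetry is why inference cost dominates at scale and why inference-oriented hardware matters.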

AMD's new chips are estimated to improve AI inference performance by up to 30%, particularly enhancing inference execution speed. Estimated data.

Meta's Vision: Personal Superintelligence

Meta’s pursuit of personal superintelligence aims to enhance everyday user interactions with AI. This vision extends beyond digital assistants to creating AI that deeply understands user preferences, needs, and contexts, as outlined by Nature.

Key Components of Personal Superintelligence

- Contextual Awareness: AI systems that understand user context, enabling more relevant interactions.

- Adaptive Learning: Continuous learning from user interactions to improve over time.

- Natural Interaction: Seamless communication with AI through voice, text, and gesture.

Challenges and Pitfalls

While the potential is vast, several challenges must be addressed:

- Data Privacy: Ensuring user data is protected as AI systems become more integrated into daily life.

- Bias and Fairness: Developing unbiased AI models that provide fair outcomes.

- Scalability: Managing the computational demands of widespread AI deployment.

Overcoming Pitfalls

To mitigate these challenges, companies need to adopt best practices such as:

- Robust Security Protocols: Implementing end-to-end encryption and user consent management.

- Bias Mitigation Strategies: Using diverse datasets and regular audits of AI models.

- Scalable Architectures: Leveraging cloud-based solutions to dynamically scale resources.
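As one example of what a regular model audit might check, the sketch below computes the rate of positive predictions per user group and the gap between the highest and lowest rates (a simple demographic parity check). The group names, the audit log, and the function names are all hypothetical; real audits typically combine several fairness metrics, and an acceptable gap depends on the application.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of positive predictions per group.

    records is an iterable of (group, predicted_positive) pairs.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, predicted_positive in records:
        totals[group] += 1
        positives[group] += int(predicted_positive)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Invented audit log: (group label, did the model predict the positive class?)
audit_log = [
    ("group_a", True), ("group_a", False), ("group_a", True), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = selection_rates(audit_log)
gap = parity_gap(audit_log)
```

A large gap does not prove a model is unfair on its own, but it flags where a closer review of the training data and features is warranted.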

Future Trends in AI and Computing

As AI continues to evolve, several trends are shaping the future:

- Edge Computing: Moving processing closer to data sources to reduce latency and enhance real-time capabilities.

- Quantum Computing: Exploring new computational paradigms to solve complex problems beyond current capabilities.

- AI Democratization: Making AI tools and resources accessible to a broader audience, fostering innovation and collaboration.

Conclusion: A New Era of AI

Meta's partnership with AMD is a bold move towards a future where AI plays a central role in personal and professional domains. By investing in cutting-edge technology, Meta is not only advancing its own capabilities but also setting a precedent for the industry. As we look to the future, the potential for personal superintelligence offers exciting possibilities, promising to enhance our interactions with technology in ways we are only beginning to imagine.

FAQ

What is personal superintelligence?

Personal superintelligence refers to AI systems that can understand and predict user needs, providing personalized and contextually relevant interactions. It aims to enhance user experiences by integrating AI deeply into daily life.

How does AMD's technology support AI development?

AMD's MI540 GPUs and latest CPUs are optimized for high-performance computing, making them ideal for AI workloads. They offer cost efficiency, power optimization, and compatibility with various AI frameworks, supporting scalable AI development.

What are the potential risks of widespread AI adoption?

Potential risks include data privacy concerns, algorithmic bias, and the challenge of ensuring fair and ethical AI outcomes. Addressing these requires robust security measures, diverse data, and regular audits.

How can companies mitigate AI-related challenges?

Companies can mitigate challenges by implementing strong security protocols, using diverse datasets to train models, conducting regular audits, and adopting scalable architectures to manage computational demands.

What future trends are influencing AI and computing?

Key trends include the rise of edge computing, advancements in quantum computing, and the democratization of AI tools, which are making AI more accessible and fostering innovation across industries.

Key Takeaways

- Meta plans to purchase up to $100 billion of AMD chips to support AI advancements.

- AMD's MI540 series GPUs and CPUs are pivotal for AI inference tasks.

- The partnership signals a shift away from Nvidia's dominance in AI chip technology.

- Meta's vision of personal superintelligence aims to enhance AI-user interactions.

- Key challenges include data privacy, bias, and scalability in AI deployment.

- Future trends in AI include edge computing and quantum computing advancements.

