Meta's Next-Gen AI Systems: Revolutionizing Content Enforcement [2025]

As the volume of digital content keeps growing, Meta is deploying advanced AI systems for content enforcement. The move aims to improve the detection and removal of harmful content while reducing the company's dependence on third-party vendors. This article looks at how these systems work, what they imply, and what the future may hold.

TL;DR

- Advanced AI Systems: Meta is deploying AI systems for better content enforcement.

- Reduced Third-Party Reliance: The company is cutting back on external vendors.

- Enhanced Detection: AI improves accuracy in identifying harmful content.

- Swift Response: Faster action against policy violations.

- Future Implications: Potential industry-wide shifts in content moderation.

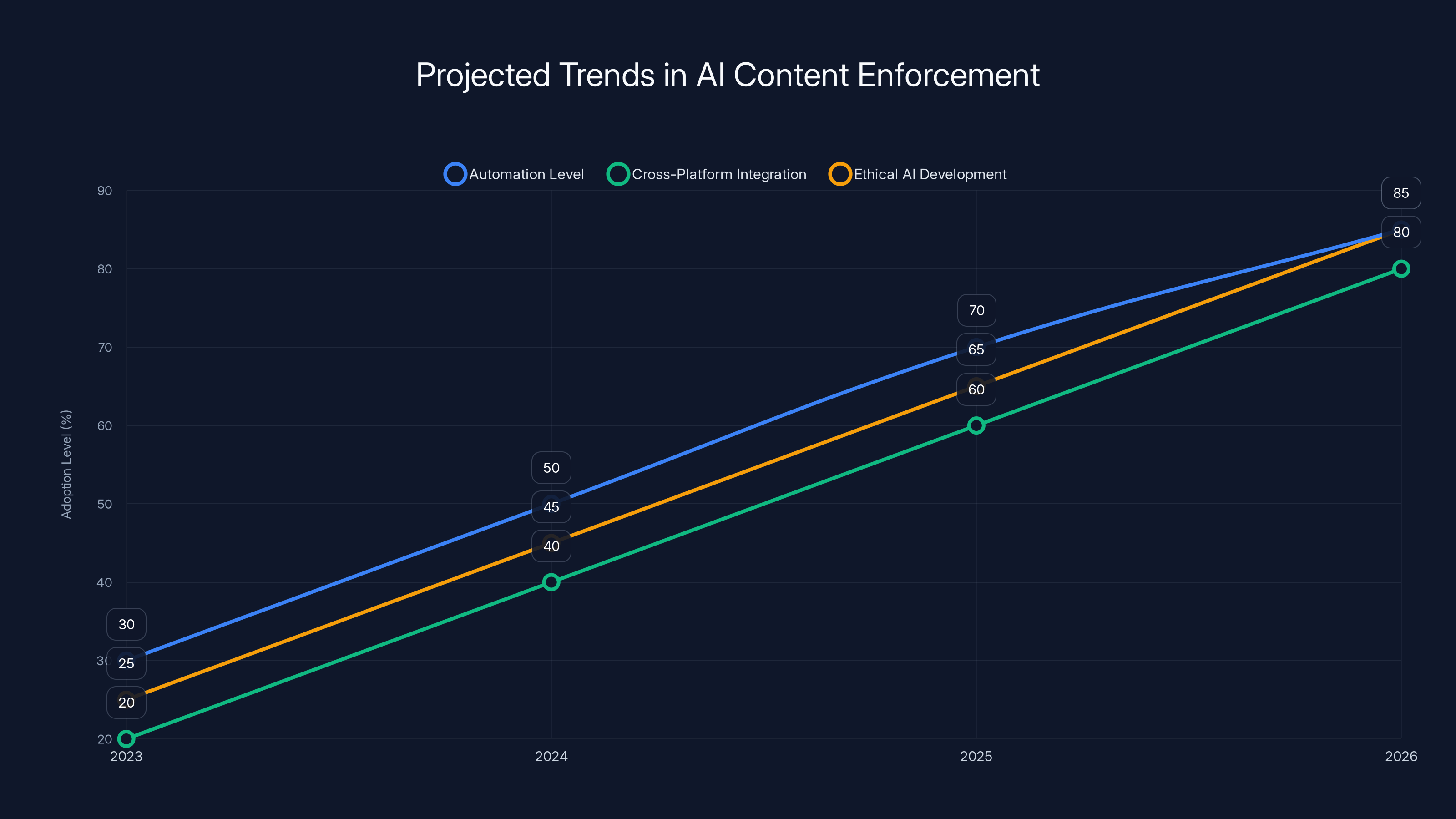

Projected trends indicate significant growth in automation, cross-platform integration, and ethical AI development by 2026. Estimated data based on industry trends.

Why AI in Content Enforcement?

Content enforcement is crucial for maintaining a safe online environment. Meta's decision to lean heavily on AI stems from the need to process vast amounts of data quickly and accurately. AI systems excel at repetitive, high-volume tasks such as scanning for graphic content or identifying scams, areas where adversarial actors frequently change their tactics.

The Challenges of Manual Content Review

Human moderators have traditionally been the backbone of content enforcement. However, as the volume of digital content expands, relying solely on human review becomes impractical. Moderators bear an emotional toll from repeated exposure to distressing content, and the amount of content they can review is small compared with what an automated system can process.

AI to the Rescue

Meta's AI systems are designed to outperform current methods by automating content reviews and adapting to changing tactics used by malicious actors. These systems use machine learning algorithms to continuously learn from new data, improving their ability to detect violations.

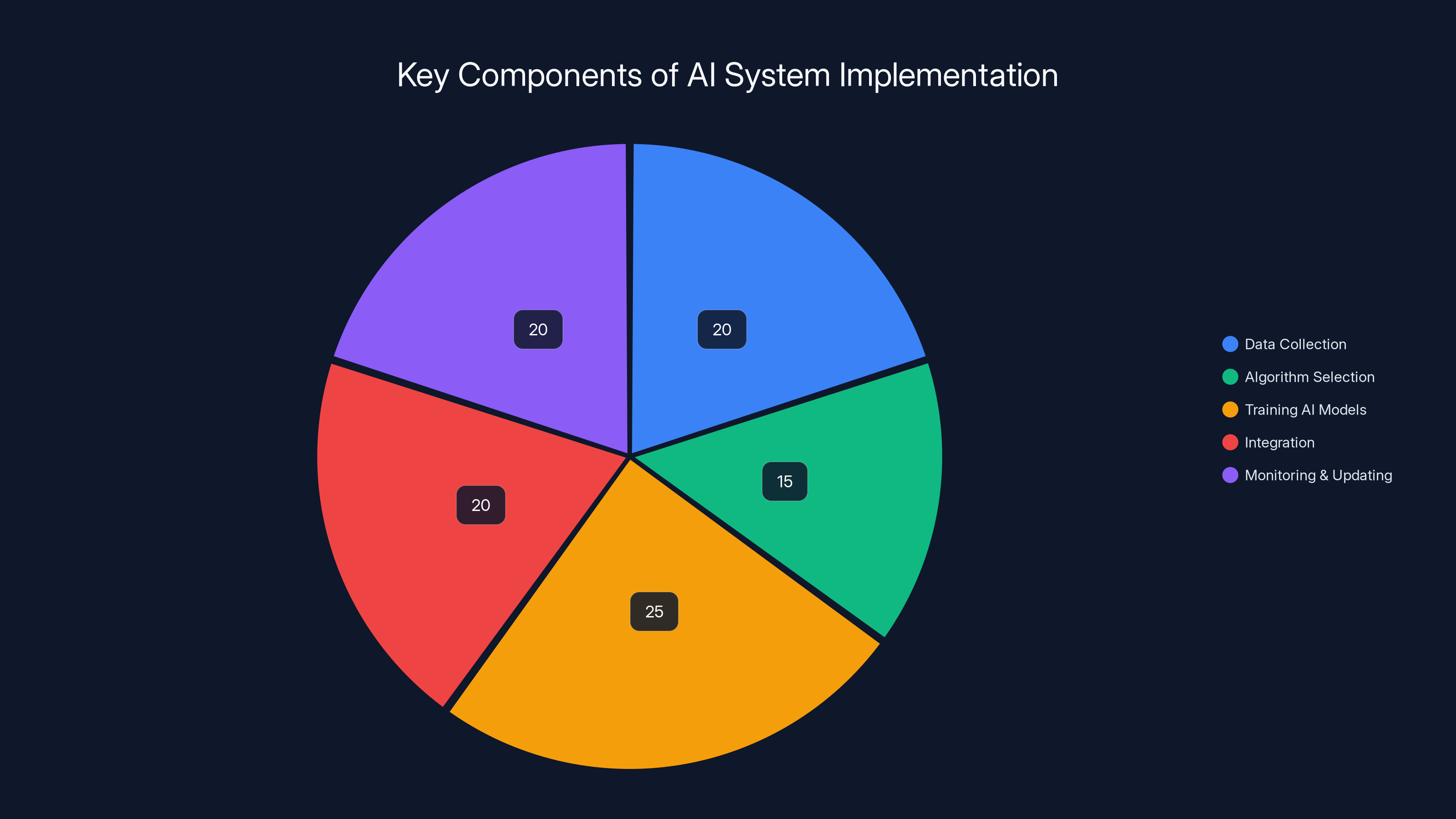

This pie chart illustrates the estimated distribution of focus areas when setting up AI systems for content enforcement. Training AI models and monitoring/updating are critical components, accounting for 25% and 20% of the focus, respectively. (Estimated data)

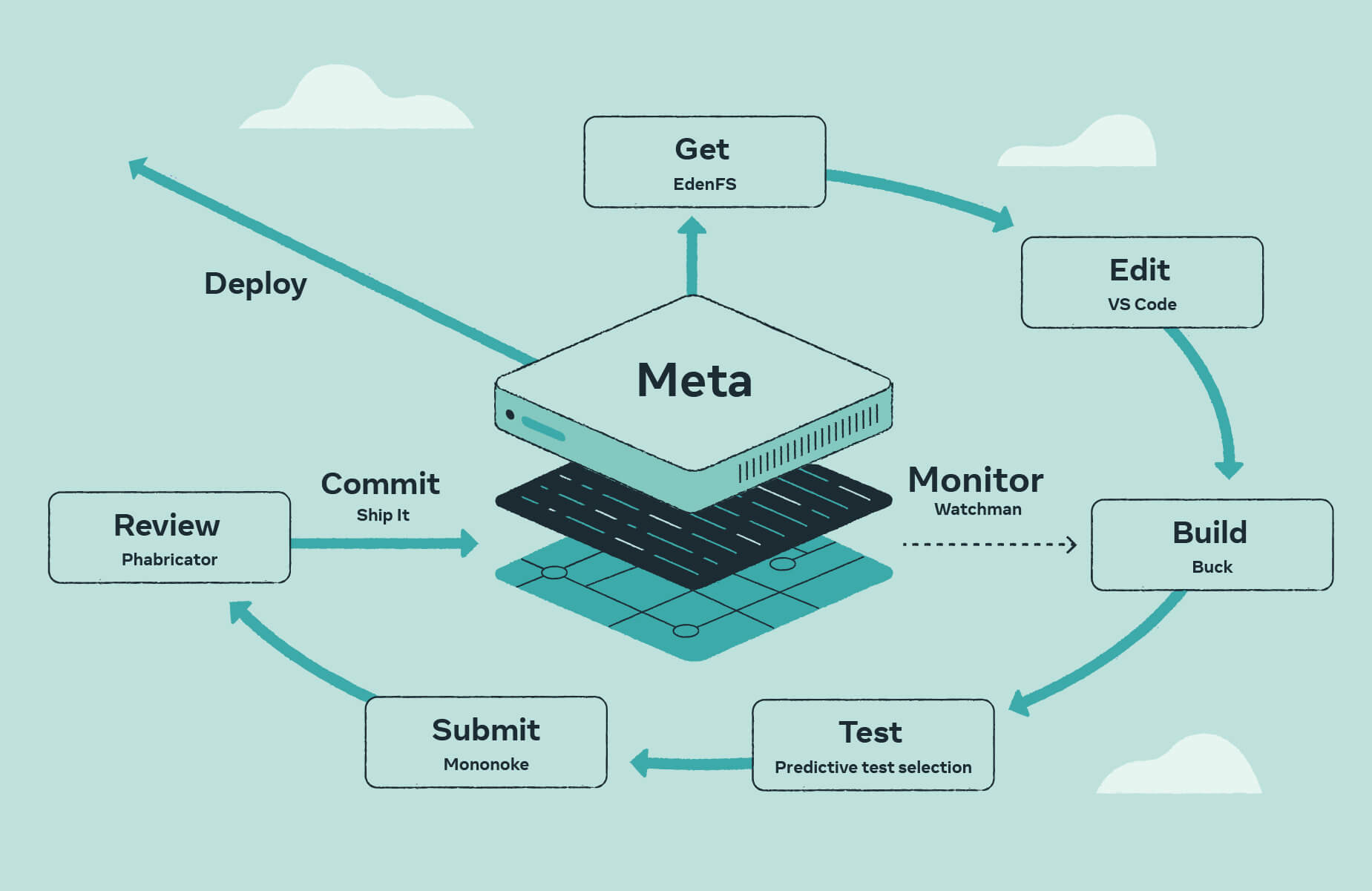

How Meta's AI Systems Work

Machine Learning and Natural Language Processing

Meta's AI systems leverage machine learning (ML) and natural language processing (NLP) to analyze text, images, and videos. These technologies enable the systems to recognize patterns and infer context, allowing them to distinguish between acceptable and harmful content.

Predictive Algorithms

Predictive algorithms are at the core of Meta's AI systems. They use historical data to anticipate potential violations so that action can be taken early. For instance, if content trends indicate a rise in fraudulent posts, the system can flag and assess similar content before it spreads widely.
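The idea of spotting a rise in fraudulent posts from historical data can be illustrated with a very small sketch. It assumes a hypothetical list of daily report counts for one category and flags a spike when the latest count exceeds the trailing average by a few standard deviations. The function name, the window size, and the multiplier `k` are illustrative assumptions, not details of Meta's systems.

```python
from statistics import mean, stdev

def is_spike(daily_counts, window=7, k=3.0):
    """Return True if the latest count is unusually high versus recent history.

    daily_counts: list of counts ordered oldest to newest (hypothetical data).
    window: number of prior days used as the baseline.
    k: how many standard deviations above the mean counts as a spike.
    """
    if len(daily_counts) < window + 1:
        return False  # not enough history to form a baseline
    history = daily_counts[-(window + 1):-1]  # the `window` days before the latest
    latest = daily_counts[-1]
    mu = mean(history)
    # Floor sigma at 1.0 so a perfectly flat history does not make
    # every small change look like a spike.
    sigma = max(stdev(history), 1.0)
    return latest > mu + k * sigma

# Hypothetical daily counts of reported scam posts.
fraud_reports = [12, 9, 11, 10, 13, 12, 11, 40]
if is_spike(fraud_reports):
    print("Spike detected: queue similar content for early assessment")
```

A production system would likely use richer signals than raw counts, but the same baseline-versus-latest comparison underlies many early-warning checks.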

Implementation Guide

Setting Up AI Systems for Content Enforcement

- Data Collection: Gather historical data on content types and previous violations.

- Algorithm Selection: Choose suitable ML and NLP algorithms based on content type and platform needs.

- Training AI Models: Use labeled datasets to train the AI systems, ensuring they learn to identify violations accurately.

- Integration: Seamlessly integrate AI systems into existing content management infrastructures.

- Monitoring and Updating: Continuously monitor AI performance and update models with new data.
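The data collection and training steps above can be sketched with a toy text classifier. This is a minimal example under stated assumptions: the labeled posts are invented, the labels "violation" and "ok" are placeholders, and the model is a plain Naive Bayes classifier with add-one smoothing written with the Python standard library. It is not the algorithm Meta uses, which the article does not specify.

```python
import math
from collections import Counter, defaultdict

def tokenize(text):
    # Lowercase and split on whitespace; real systems use richer tokenization.
    return text.lower().split()

def train(examples):
    """Train from a list of (text, label) pairs and return a model dict."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        label_counts[label] += 1
        for tok in tokenize(text):
            word_counts[label][tok] += 1
            vocab.add(tok)
    return {"labels": label_counts, "words": word_counts, "vocab": vocab}

def predict(model, text):
    """Return the label with the highest log-probability for the text."""
    total = sum(model["labels"].values())
    vocab_size = len(model["vocab"])
    best_label, best_score = None, -math.inf
    for label, n in model["labels"].items():
        score = math.log(n / total)  # log prior
        denom = sum(model["words"][label].values()) + vocab_size
        for tok in tokenize(text):
            # Add-one (Laplace) smoothing so unseen words do not zero the score.
            score += math.log((model["words"][label][tok] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical labeled history (step: data collection).
labeled = [
    ("win a free prize now", "violation"),
    ("claim your free money today", "violation"),
    ("send money to unlock your prize", "violation"),
    ("photos from our family trip", "ok"),
    ("meeting moved to friday afternoon", "ok"),
    ("happy birthday to my friend", "ok"),
]
model = train(labeled)  # step: training
print(predict(model, "free prize money"))
```

The monitoring and updating step would amount to calling `train` again as newly labeled examples arrive and checking that predictions on a held-out set do not degrade.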

Best Practices

- Regular Updates: Constantly update AI models with the latest data to improve accuracy.

- Human Oversight: Maintain a team of human moderators to handle complex cases AI might misinterpret.

- User Feedback: Implement feedback mechanisms to refine AI judgments.
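One common way to combine automation with human oversight is to route each item by the model's confidence. The sketch below assumes a hypothetical violation score between 0 and 1 and two illustrative thresholds; neither the thresholds nor the action names come from Meta.

```python
def route(score, remove_at=0.95, review_at=0.60):
    """Decide what to do with an item given a violation score in [0, 1].

    remove_at and review_at are illustrative thresholds that a team
    would tune against its own precision and workload targets.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= remove_at:
        return "auto_remove"    # high confidence: act automatically
    if score >= review_at:
        return "human_review"   # uncertain: send to a moderator
    return "allow"              # low score: leave the content up

# Hypothetical scores for three posts.
for post_id, score in [("a1", 0.99), ("b2", 0.72), ("c3", 0.10)]:
    print(post_id, route(score))
```

User feedback such as appeals can then be used to check whether the thresholds are set sensibly: a high share of overturned automatic removals suggests `remove_at` is too low.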

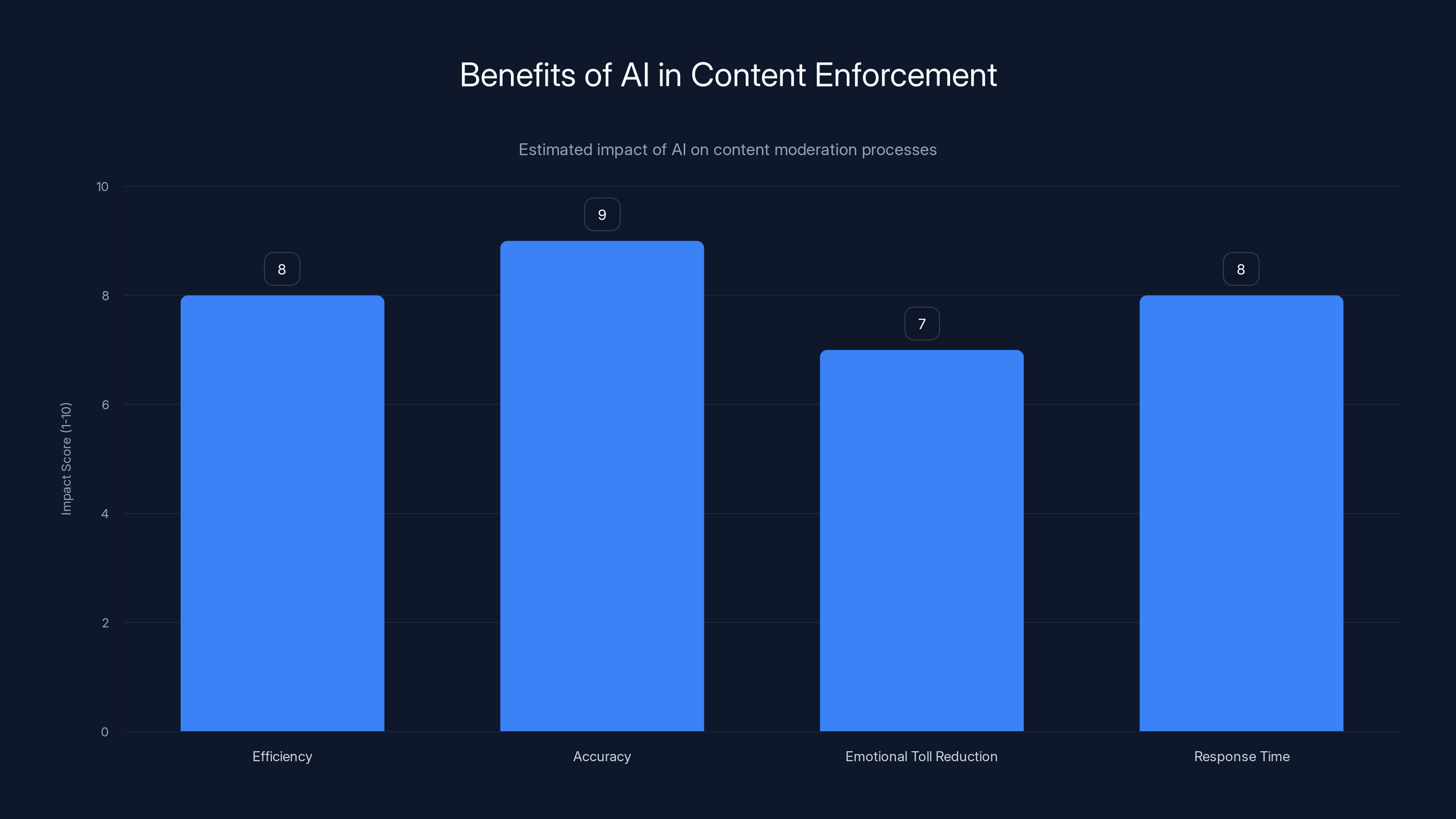

AI significantly improves content enforcement by enhancing efficiency, accuracy, and response times while reducing the emotional toll on human moderators. (Estimated data)

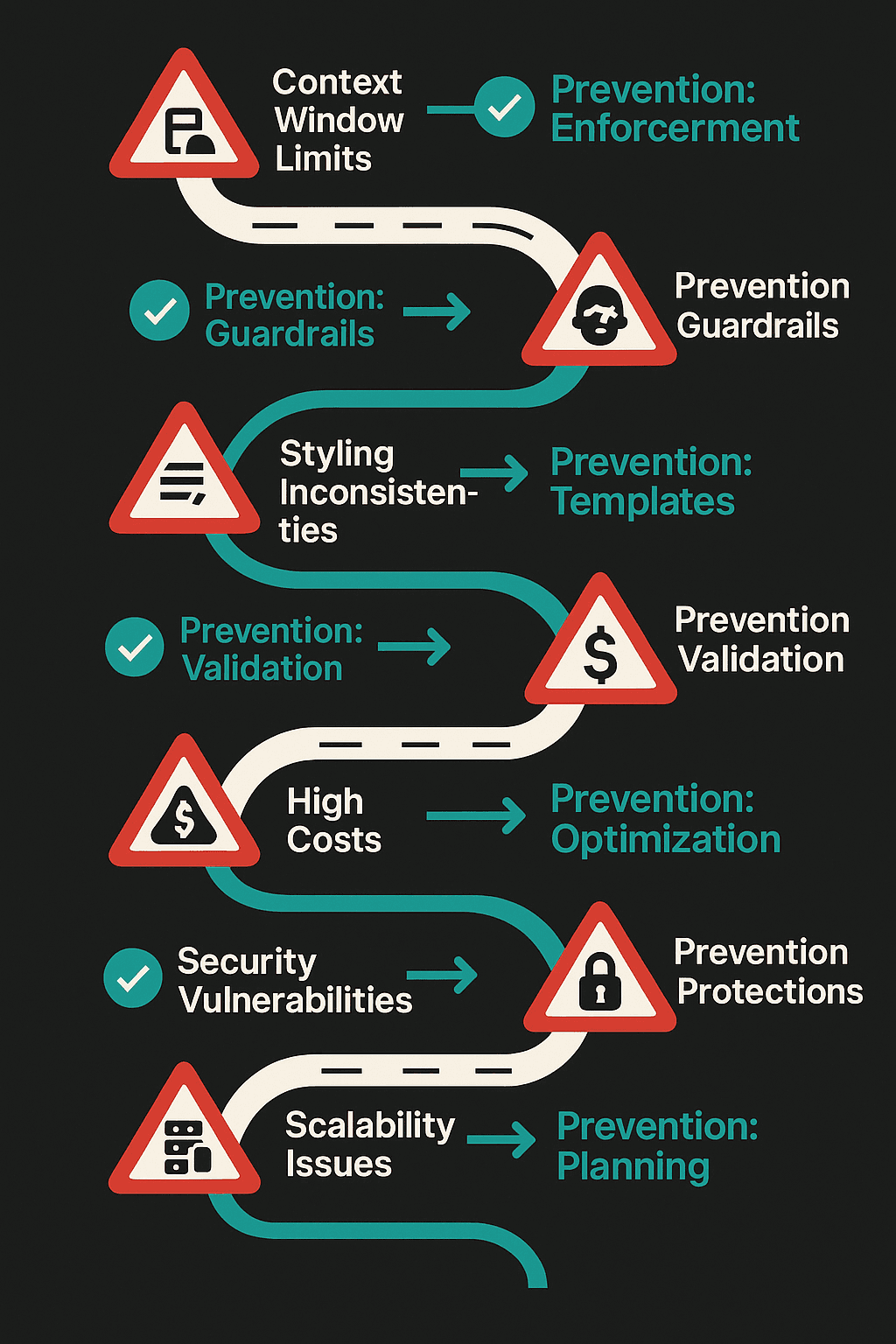

Common Pitfalls and Solutions

Over-Reliance on AI

While AI can handle large volumes of data efficiently, over-reliance on it can cause nuanced cases to be overlooked. Balance AI use with human judgment to ensure fair content moderation.

Algorithmic Bias

AI systems can inherit biases present in training data. To mitigate this, diversify training datasets and implement bias detection mechanisms.
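A simple bias detection check is to compare error rates across groups of content. The sketch below assumes a hypothetical audit sample in which each record holds a group (here, a language code), the model's decision, and a human-verified ground truth. It computes the false positive rate per group and the gap between the best and worst group. The grouping and the data are invented for illustration.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Compute the false positive rate per group.

    records: iterable of (group, predicted_violation, actual_violation).
    Only items that are not actual violations count toward the rate.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items()}

def max_fpr_gap(rates):
    """Return the difference between the highest and lowest group rate."""
    return max(rates.values()) - min(rates.values()) if rates else 0.0

# Hypothetical audit sample: (language, model flagged?, truly violating?)
audit = [
    ("en", True, False), ("en", False, False),
    ("en", False, False), ("en", False, False),
    ("es", True, False), ("es", True, False),
    ("es", False, False), ("es", False, False),
    ("en", True, True),
]
rates = false_positive_rates(audit)
print(rates, max_fpr_gap(rates))
```

A large gap means benign content from one group is wrongly flagged more often than from another, which is a signal to revisit how well that group is represented in the training data.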

Adversarial Tactics

Malicious actors may exploit AI weaknesses. Employ robust security measures and continuously update AI to counter evolving threats.
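One familiar evasion tactic is to obfuscate text with character substitutions, accents, or inserted punctuation so that it no longer matches known patterns. A minimal sketch of a normalization step that runs before classification is shown below. The substitution table is a small illustrative assumption, and mapping digits to letters will also alter legitimate numbers, so a real pipeline would likely keep both the raw and the normalized text.

```python
import unicodedata

# Illustrative substitution table for common look-alike characters.
LEET = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

def normalize(text):
    """Reduce obfuscated text to a plain lowercase form for matching."""
    # NFKD splits accented letters into base letter plus combining mark
    # and maps compatibility forms (such as fullwidth letters) to plain ones.
    decomposed = unicodedata.normalize("NFKD", text)
    # Drop the combining marks left over from decomposition.
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    lowered = stripped.lower().translate(LEET)
    # Keep letters and whitespace only, which removes punctuation
    # inserted between letters.
    kept = "".join(ch for ch in lowered if ch.isalpha() or ch.isspace())
    # Collapse runs of whitespace.
    return " ".join(kept.split())

print(normalize("Fr3e M0n3y!!!"))
```

Because attackers adapt, a table like this needs the same continuous updating as the models themselves.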

Future Trends in AI Content Enforcement

Increased Automation

As AI technologies improve, we can expect a higher degree of automation in content enforcement. AI systems will likely become more autonomous and need less human intervention for routine cases.

Cross-Platform Integration

Future AI systems will likely integrate across multiple platforms, allowing for seamless content moderation across Meta's suite of apps. This will enhance consistency and efficiency in enforcement practices.

Ethical AI Development

The focus will shift towards developing ethical AI systems that prioritize user privacy and transparency. This includes clear communication of AI decisions and ensuring data protection.

Conclusion

Meta's rollout of new AI content enforcement systems marks a significant step forward in digital content moderation. By reducing reliance on third-party vendors and enhancing AI capabilities, Meta aims to create safer online environments. As AI technologies advance, the potential for industry-wide transformation in content enforcement grows, promising more efficient and ethical digital landscapes.

FAQ

What is AI content enforcement?

AI content enforcement involves using artificial intelligence to monitor, detect, and manage harmful content on digital platforms, ensuring compliance with community standards.

How do Meta's AI systems improve content moderation?

Meta's AI systems enhance moderation by automating the detection of violations, reducing response times, and adapting to evolving tactics used by malicious actors.

What are the benefits of AI in content enforcement?

Benefits include increased efficiency, accuracy in identifying harmful content, reduced emotional toll on human moderators, and faster response to violations.

How does AI handle biases in content moderation?

AI systems are trained on diverse datasets and regularly audited for bias to support fair moderation. Developers also implement bias detection mechanisms to limit the effect of any bias that remains.

What role do human moderators play alongside AI?

Human moderators handle complex cases that AI might misinterpret, provide oversight, and ensure ethical considerations in content enforcement.

Are Meta's AI systems used across all its platforms?

Meta plans to deploy AI content enforcement systems across its suite of apps, including Facebook, Instagram, and WhatsApp, to keep moderation practices consistent.

How does AI content enforcement impact user privacy?

AI systems are designed with privacy in mind, ensuring data protection and transparency in moderation decisions. Ethical AI development prioritizes user privacy.

What is the future of AI content enforcement?

The future includes increased automation, cross-platform integration, and ethical AI development, leading to more efficient and transparent content moderation.

Key Takeaways

- Meta's AI systems improve content enforcement efficiency.

- Reduced third-party reliance enhances internal capabilities.

- AI systems adapt to evolving malicious tactics.

- Increased automation will shape future content moderation.

- Ethical AI development prioritizes user privacy and transparency.
