Micron's 256GB LPDDR5x Module: Revolutionizing AI Servers with 2TB Capacity [2025]

In AI and high-performance computing, memory capacity has always been a critical determinant of what a system can do. Micron's 256GB LPDDR5x memory module pushes that limit further for AI servers: it raises the capacity available per module while retaining the power efficiency and bandwidth of LPDDR5x, with direct consequences for data centers and AI workloads.

TL;DR

- 256GB LPDDR5x Modules: Micron's innovation in memory technology leads to massive capacity gains.

- Hyperscale Potential: Install eight modules in one server to reach 2TB of memory.

- AI Workloads: Ideal for large language models and inference pipelines.

- Efficiency and Performance: Combines high capacity with low power consumption.

- Future Trends: Sets a new standard for memory design in AI infrastructures.

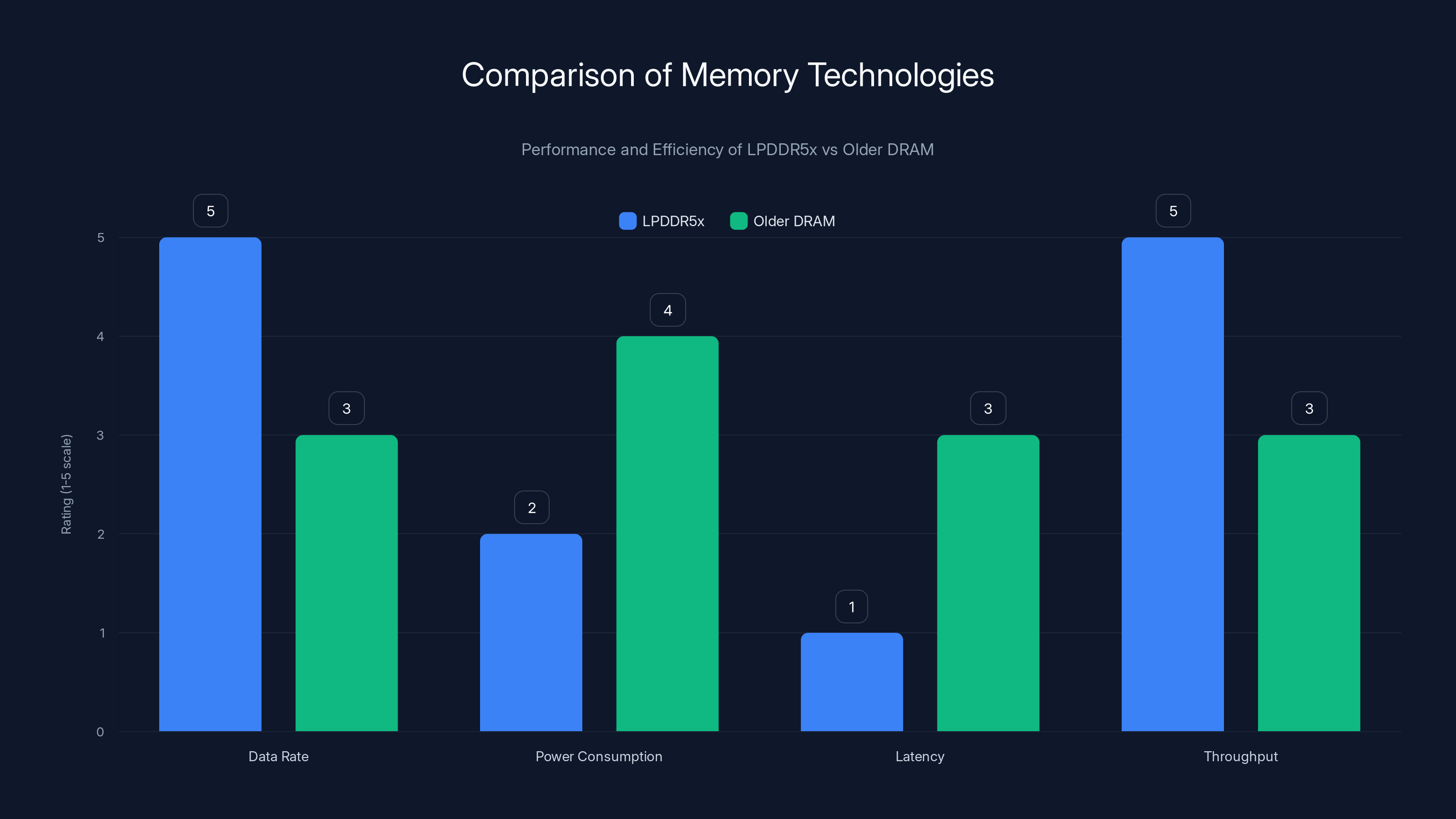

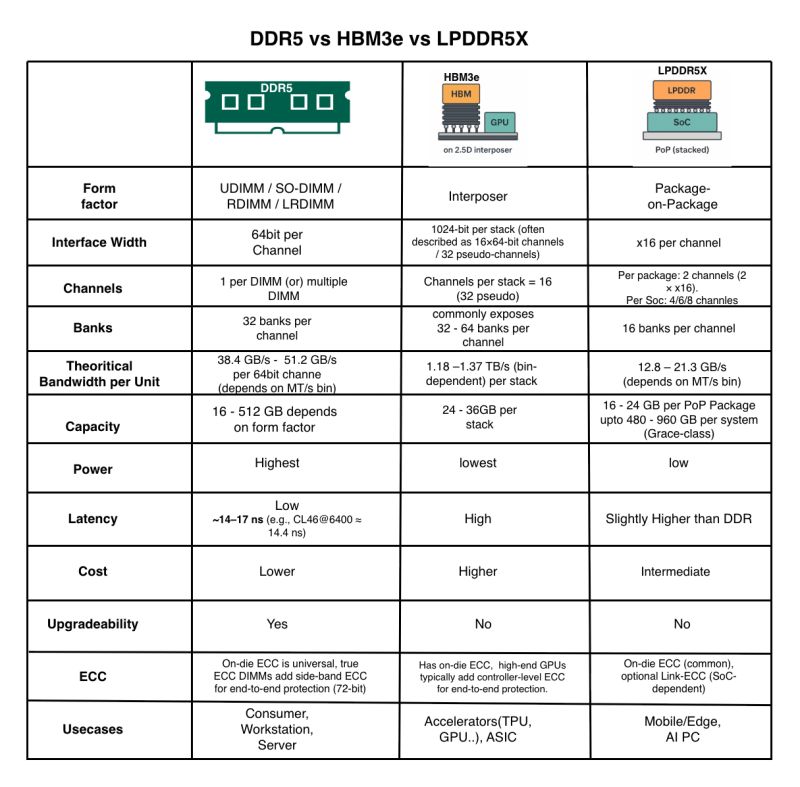

LPDDR5x offers higher data rates and throughput than older DRAM technologies while consuming less power; the chart also compares latency. Estimated data.

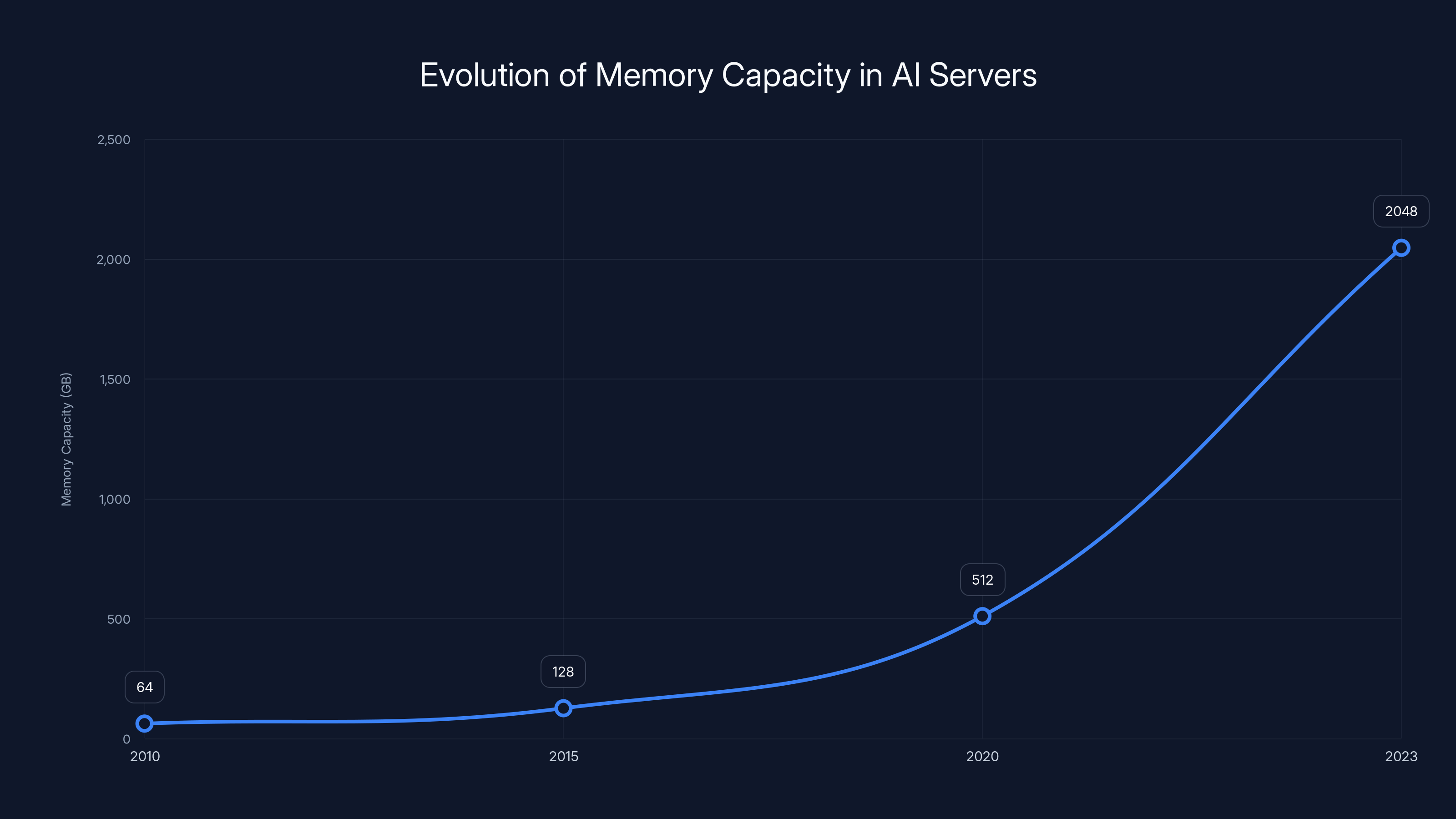

The Evolution of Memory in AI Servers

Memory technology has evolved alongside computational demands. Historically, servers relied on DRAM modules that prioritized capacity over speed. However, the rise of AI and machine learning has shifted this balance, demanding both high speed and substantial capacity. Enter LPDDR5x, a low-power, high-performance memory standard originally developed for smartphones and other mobile devices, which meets both needs.

What is LPDDR5x?

LPDDR5x improves on LPDDR5 by raising the maximum data rate (from 6400 MT/s to 8533 MT/s in the original JEDEC specification) while keeping power consumption low, making it well suited to AI workloads that require rapid data access and processing. This matters more as AI models grow larger and more complex.
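Data rate translates into bandwidth through the width of the memory bus: peak bandwidth equals transfers per second multiplied by bytes per transfer. The Python sketch below compares the commonly cited JEDEC top speeds of 6400 MT/s (LPDDR5) and 8533 MT/s (LPDDR5x) over a hypothetical 128-bit interface. The bus width is an illustrative assumption, not a specification of Micron's module, and real sustained bandwidth is lower than this theoretical peak.

```python
def peak_bandwidth_gbps(data_rate_mts: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal) for one memory interface.

    data_rate_mts: millions of transfers per second (MT/s)
    bus_width_bits: width of the data bus in bits
    """
    bytes_per_transfer = bus_width_bits / 8
    # MT/s * bytes per transfer = MB/s; divide by 1000 to get GB/s
    return data_rate_mts * bytes_per_transfer / 1000


# Hypothetical 128-bit interface, for illustration only (not a Micron spec)
BUS_WIDTH_BITS = 128
for name, rate in [("LPDDR5", 6400), ("LPDDR5x", 8533)]:
    print(f"{name}: {peak_bandwidth_gbps(rate, BUS_WIDTH_BITS):.1f} GB/s")
```

With these inputs the sketch reports 102.4 GB/s for LPDDR5 and about 136.5 GB/s for LPDDR5x, roughly a one-third increase from the higher data rate alone.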

Micron's Breakthrough

Micron's 256GB LPDDR5x module represents a significant leap in memory technology. By leveraging advanced packaging techniques, Micron has managed to pack more memory into a smaller footprint. This density not only increases the available memory per module but also reduces the physical space required in data centers.

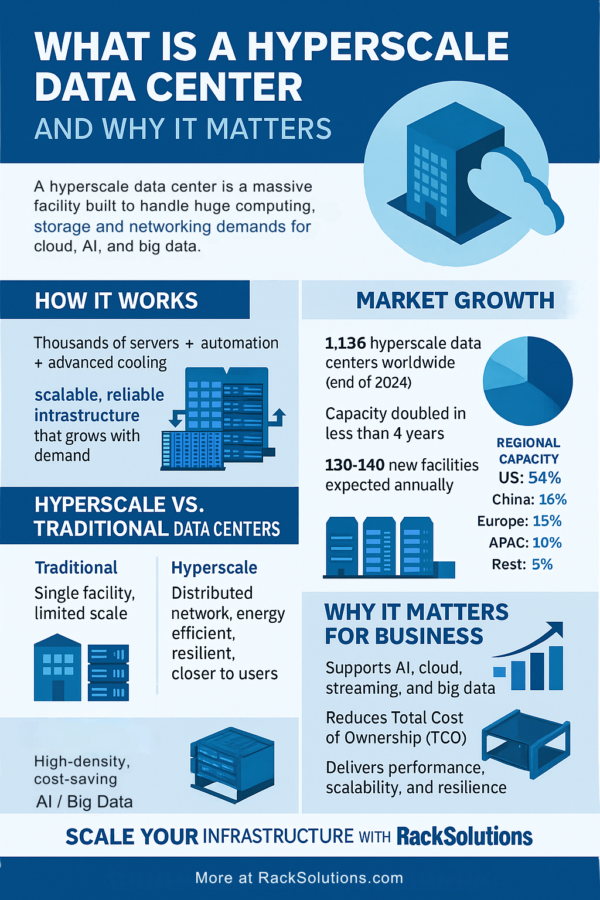

The Hyperscale Impact

Hyperscale data centers, which underpin the infrastructure of major cloud providers and AI companies, stand to benefit most from Micron's module. Installing eight 256GB modules in a single server yields 2TB (8 × 256GB = 2,048GB) of system memory per server.

Key Benefits of 2TB Memory Capacity:

- Enhanced AI Model Training: Supports larger datasets and more complex models.

- Improved Inference Performance: Reduces latency and increases throughput.

- Scalability: Facilitates the scaling of AI applications without significant hardware overhauls.
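To make the capacity arithmetic concrete, here is a minimal Python sketch that totals installed memory and checks whether a model's weights would fit. It assumes module capacities are binary (256GB treated as 256 GiB); the parameter counts, the 2 bytes per parameter, and the 80% share of memory allowed for weights (the rest left for the OS, activations, and caches) are hypothetical values chosen for illustration.

```python
GIB = 2**30  # module capacities are binary: "256GB" is treated as 256 GiB


def total_capacity_bytes(modules: int, gib_per_module: int) -> int:
    """Total installed memory in bytes."""
    return modules * gib_per_module * GIB


def weights_bytes(num_params: int, bytes_per_param: int) -> int:
    """Memory needed just to hold the model weights."""
    return num_params * bytes_per_param


def fits_in_memory(num_params: int, bytes_per_param: int,
                   capacity_bytes: int, headroom: float = 0.8) -> bool:
    """True if the weights fit in the usable fraction of memory.

    headroom is a hypothetical share of memory allowed for weights; the
    remainder is reserved for the OS, activations, and caches.
    """
    return weights_bytes(num_params, bytes_per_param) <= headroom * capacity_bytes


capacity = total_capacity_bytes(8, 256)
print(capacity / 2**40, "TiB")  # eight 256 GiB modules
# Hypothetical models stored at 2 bytes per parameter (FP16/BF16)
print(fits_in_memory(400 * 10**9, 2, capacity))   # 800 GB of weights
print(fits_in_memory(1000 * 10**9, 2, capacity))  # 2 TB of weights
```

The second check fails because a trillion parameters at 2 bytes each already exceed the usable share of 2TB, which shows how quickly even this capacity is consumed by the largest models.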

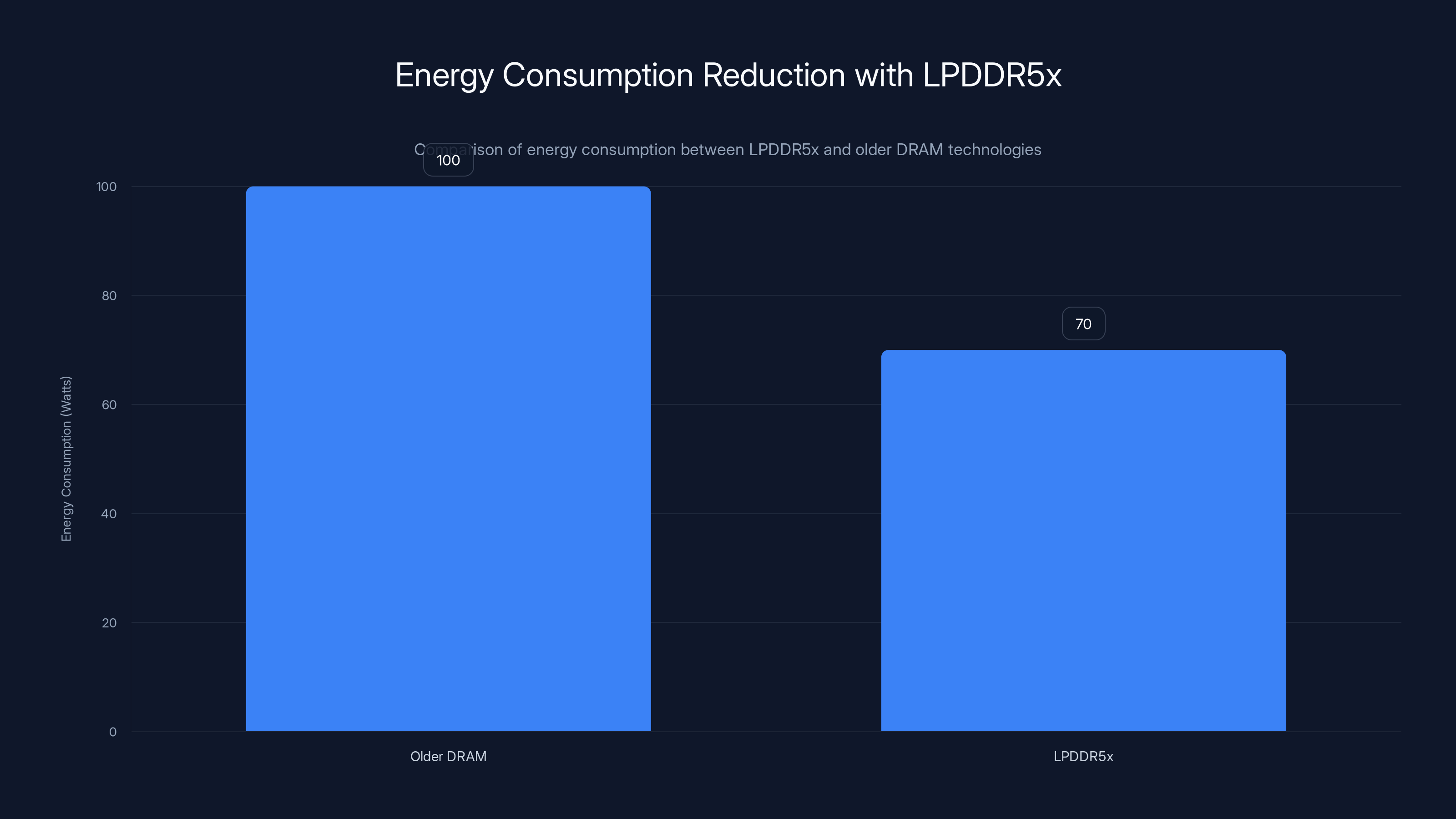

Adopting LPDDR5x in AI servers can reduce memory power consumption by up to 30% compared to older DRAM technologies, improving overall efficiency.
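A percentage reduction is easier to judge in absolute terms. The sketch below converts a reduction in memory power into annual energy saved. The 100 W memory power draw per server and the 1,000-server fleet are hypothetical inputs, not Micron figures, and 30% is the upper bound stated in the text.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_savings_kwh(memory_watts: float, reduction: float,
                       servers: int = 1) -> float:
    """Energy saved per year, in kWh, from cutting memory power by `reduction`."""
    saved_watts = memory_watts * reduction
    return saved_watts * HOURS_PER_YEAR * servers / 1000  # Wh -> kWh


# Hypothetical inputs: 100 W of memory power per server, 30% reduction
per_server = annual_savings_kwh(100, 0.30)
fleet = annual_savings_kwh(100, 0.30, servers=1000)
print(f"{per_server:.1f} kWh per server, {fleet:,.0f} kWh per fleet per year")
```

Because the savings scale linearly with server count, even a modest per-server figure becomes significant at hyperscale.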

Technical Details and Implementation

Building Blocks of the Module

Each 256GB module is built from 64 LPDDR5x dies with a density of 32Gb (gigabits, or 4GB) each: 64 × 4GB = 256GB. These dies are integrated using a System-in-Package (SiP) approach, which enhances the module's performance characteristics by minimizing latency and maximizing bandwidth.
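Because DRAM die densities are quoted in gigabits (Gb) while module capacities are quoted in gigabytes (GB), it is worth checking the unit arithmetic. This short Python sketch converts a die count and per-die density into module capacity; it shows that 64 dies of 32Gb give 256GB, whereas 64 chips of 32GB would total 2,048GB (2TB).

```python
BITS_PER_BYTE = 8


def module_capacity_gb(dies: int, die_density_gbit: int) -> float:
    """Module capacity in GB from a die count and per-die density in Gb."""
    return dies * die_density_gbit / BITS_PER_BYTE


print(module_capacity_gb(64, 32))  # 64 dies of 32Gb each, in GB
print(64 * 32)                     # 64 chips of 32GB each, in GB
```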

Power Efficiency and Cooling

A critical aspect of Micron's design is its power efficiency. LPDDR5x offers significant reductions in power consumption compared to traditional DRAM solutions. This is achieved through:

- Dynamic Voltage and Frequency Scaling (DVFS): Lowers voltage and clock speed when workload demand drops, and raises them when it increases.

- Thermal Management: Advanced heat dissipation techniques to maintain optimal temperatures.
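Voltage scaling saves power because, to a first approximation, CMOS dynamic power scales as P ≈ αCV²f: voltage enters squared, frequency linearly. The Python sketch below uses that textbook approximation (ignoring static and leakage power) together with a hypothetical table of operating points to pick the lowest-power point that still covers a given bandwidth demand. The operating points are illustrative assumptions, not LPDDR5x or Micron parameters.

```python
# Hypothetical operating points: (name, voltage scale, frequency scale),
# each relative to the full-speed baseline, sorted from slowest to fastest
OPERATING_POINTS = [
    ("low", 0.90, 0.50),
    ("mid", 0.95, 0.75),
    ("high", 1.00, 1.00),
]


def relative_dynamic_power(v_scale: float, f_scale: float) -> float:
    """Dynamic power relative to baseline, using P ~ C * V^2 * f.

    Capacitance and activity factor are assumed constant, so they cancel.
    """
    return v_scale ** 2 * f_scale


def pick_operating_point(demand: float) -> tuple[str, float]:
    """Pick the slowest point whose frequency covers the demand.

    demand is required bandwidth as a fraction of full speed, assuming
    bandwidth scales linearly with clock frequency.
    """
    for name, v, f in OPERATING_POINTS:
        if f >= demand:
            return name, relative_dynamic_power(v, f)
    # Demand exceeds the fastest point: run at full speed
    name, v, f = OPERATING_POINTS[-1]
    return name, relative_dynamic_power(v, f)


for demand in (0.3, 0.6, 1.0):
    name, power = pick_operating_point(demand)
    print(f"demand {demand:.0%}: {name} point, {power:.2f}x baseline power")
```

At 30% demand the sketch selects the low point, which draws about 0.41x baseline dynamic power: halving the clock alone would give 0.5x, and the squared voltage term accounts for the rest.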

Integration with AI Workloads

AI workloads, particularly those involving large language models (LLMs), require substantial memory resources. The increased capacity of Micron's modules allows for more data to be held in memory, reducing the need for slower disk access and thus speeding up processing times.
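One concrete reason LLM serving is memory-hungry is the key/value (KV) cache that transformer inference keeps for every token in flight. A common estimate is 2 (keys and values) × layers × KV heads × head dimension × sequence length × batch size × bytes per element. The Python sketch below applies it to a hypothetical model configuration (80 layers, 8 KV heads of dimension 128, FP16 values) that is not tied to any specific product.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_elem: int = 2) -> int:
    """Estimate KV-cache size for transformer inference.

    The leading 2 accounts for storing both keys and values.
    """
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem


# Hypothetical configuration: 32K-token contexts, 16 concurrent requests, FP16
size = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128,
                      seq_len=32_768, batch=16)
print(f"{size / 2**30:.0f} GiB of KV cache")
```

This configuration needs 160 GiB for the cache alone. Doubling the context length or batch size doubles the figure, which is why a larger memory pool lets a server hold more concurrent long-context requests without spilling data to slower storage.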

Common Use Cases:

- Natural Language Processing (NLP): Enables real-time processing of large text corpora.

- Computer Vision: Supports high-resolution image analysis and pattern recognition.

- Autonomous Systems: Facilitates complex decision-making algorithms in real-time.

Practical Implementation Guide

Step-by-Step Integration

- Assessment of Current Infrastructure: Evaluate existing server capabilities and identify compatibility with LPDDR5x modules.

- Module Procurement: Source modules from Micron or authorized distributors.

- Installation: Follow Micron's installation guidelines to ensure proper module seating and connection.

- Configuration: Update server BIOS and memory settings to optimize for LPDDR5x performance.

- Testing and Validation: Conduct performance benchmarks to validate improvements.
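For the testing step, a quick sanity check before and after an upgrade can be a copy-bandwidth measurement. The Python sketch below is a rough, single-threaded stand-in for the Copy kernel of the STREAM benchmark. It uses only the standard library, will report far less than the theoretical peak, and is an illustration under those assumptions rather than a substitute for STREAM or vendor validation tools.

```python
import time


def copy_bandwidth_gbps(size_mib: int = 256, repeats: int = 5) -> float:
    """Rough single-threaded memory-copy bandwidth in GB/s.

    Counts bytes read plus bytes written, as STREAM's Copy kernel does,
    and keeps the fastest of several runs to reduce timing noise.
    """
    n = size_mib * 2**20
    # Fill the source with nonzero data so its pages are actually allocated
    src = bytearray(b"\x01") * n
    dst = bytearray(n)
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        dst[:] = src  # copies the whole buffer in a single bulk copy
        best = min(best, time.perf_counter() - start)
    return 2 * n / best / 1e9
```

Calling `copy_bandwidth_gbps()` with a buffer well above the CPU's last-level cache size gives a number to compare across runs on the same machine; the relative change matters more than the absolute value.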

Best Practices

- Firmware Updates: Regularly update server firmware to support the latest memory optimizations.

- Load Balancing: Distribute AI workloads evenly across available resources to prevent bottlenecks.

- Monitoring: Implement comprehensive monitoring tools to track memory usage and performance.
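A minimal monitoring hook might poll memory usage and flag when a server runs close to capacity. The Python sketch below assumes a Linux host, where `/proc/meminfo` reports `MemTotal` and (on kernel 3.14 and later) `MemAvailable` in kB. The 90% alert threshold is a hypothetical default, and a production setup would feed these values into an existing monitoring system rather than printing them.

```python
from pathlib import Path


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value} (values mostly in kB)."""
    info = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        fields = rest.split()
        if fields:
            info[key.strip()] = int(fields[0])
    return info


def used_fraction(info: dict[str, int]) -> float:
    """Share of memory not available to new workloads without swapping."""
    return 1 - info["MemAvailable"] / info["MemTotal"]


def check_memory(threshold: float = 0.9, path: str = "/proc/meminfo") -> float:
    """Read current usage on Linux and warn above a hypothetical threshold."""
    used = used_fraction(parse_meminfo(Path(path).read_text()))
    if used > threshold:
        print(f"WARNING: {used:.0%} of memory in use")
    return used
```

Running `check_memory()` from a scheduled job or a monitoring agent's script hook is enough to start; because `parse_meminfo()` also accepts saved text, it is easy to test without a live host.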

The chart illustrates the growth in memory capacity in AI servers, reaching 2TB per server with advancements like Micron's 256GB LPDDR5x modules. Estimated data.

Common Pitfalls and Solutions

Pitfalls

- Compatibility Issues: Ensure that the server motherboard supports LPDDR5x modules.

- Overheating: Without adequate cooling, modules may throttle to prevent damage.

- Configuration Errors: Incorrect BIOS settings can lead to suboptimal performance.

Solutions

- Thorough Compatibility Checks: Verify specifications before purchase.

- Enhanced Cooling Solutions: Consider liquid cooling or advanced air cooling.

- BIOS Optimization: Work with IT specialists to configure settings for peak performance.

Future Trends and Recommendations

The Path Forward

As AI models continue to grow in size and complexity, the demand for high-capacity, efficient memory solutions like Micron's LPDDR5x modules will only increase. Here are some trends to watch:

- AI Model Scaling: Expect continued growth in model sizes, necessitating even larger memory capacities.

- Edge AI Applications: LPDDR5x may become a staple in edge devices, enabling powerful processing closer to data sources.

- Sustainability: Energy-efficient memory solutions will be crucial in reducing the carbon footprint of data centers.

Conclusion

Micron's 256GB LPDDR5x module is a game-changer for AI servers, offering unprecedented memory capacity and efficiency. By enabling 2TB configurations, these modules set a new standard for what is possible in AI and high-performance computing environments. As we move forward, the integration of such technologies will be essential for organizations aiming to lead in the AI space.

FAQ

What is the significance of Micron's 256GB LPDDR5x module?

Micron's module significantly enhances memory capacity, allowing AI servers to support larger and more complex workloads, thereby improving performance and efficiency.

How do these modules improve AI server performance?

By providing more memory capacity, these modules let more of a workload's data stay in memory, which reduces time spent fetching from slower storage, increases throughput, and makes large datasets easier to handle, all crucial for AI tasks.

What are some common pitfalls when integrating these modules?

Common issues include compatibility problems, overheating, and incorrect BIOS configurations, all of which can be mitigated with proper planning and resources.

How does LPDDR5x compare to previous memory technologies?

LPDDR5x offers higher data rates and lower power consumption compared to older DRAM technologies, making it ideal for modern AI workloads.

What future trends can we expect in memory technology for AI?

Expect continued growth in memory capacity, integration in edge AI applications, and a focus on sustainable, energy-efficient solutions.

Key Takeaways

- Micron's new 256GB LPDDR5x module enhances AI server capabilities significantly.

- Eight modules combine for a total of 2TB of memory per server, revolutionizing data center performance.

- Improved power efficiency and bandwidth make these modules ideal for AI workloads.

- Common pitfalls include compatibility issues and overheating, but solutions are available.

- Future trends indicate growing demand for high-capacity, efficient memory solutions.
