Introduction: When Mission Success Masks Catastrophic Risk

Imagine you're an experienced pilot strapped into a spacecraft hurtling toward the International Space Station at 17,500 miles per hour. Your thrusters are failing. One after another, they're shutting down. You've lost control in six degrees of freedom. You can't steer. You can't stabilize. You're staring at orbital mechanics calculations in your head, wondering if you can even come back home alive.

This wasn't a worst-case scenario drill. This was June 2024. And the pilot was astronaut Butch Wilmore.

Yet the world heard nothing about this danger until months later. Instead, Boeing celebrated what it called "an outstanding day." NASA appeared to back that assessment. The spacecraft docked successfully, the mission was declared a win, and the early reporting focused on achievement. But underneath that success narrative lay a chilling truth: critical systems had failed, leadership had made questionable decisions, and a culture had developed that prioritized messaging over safety.

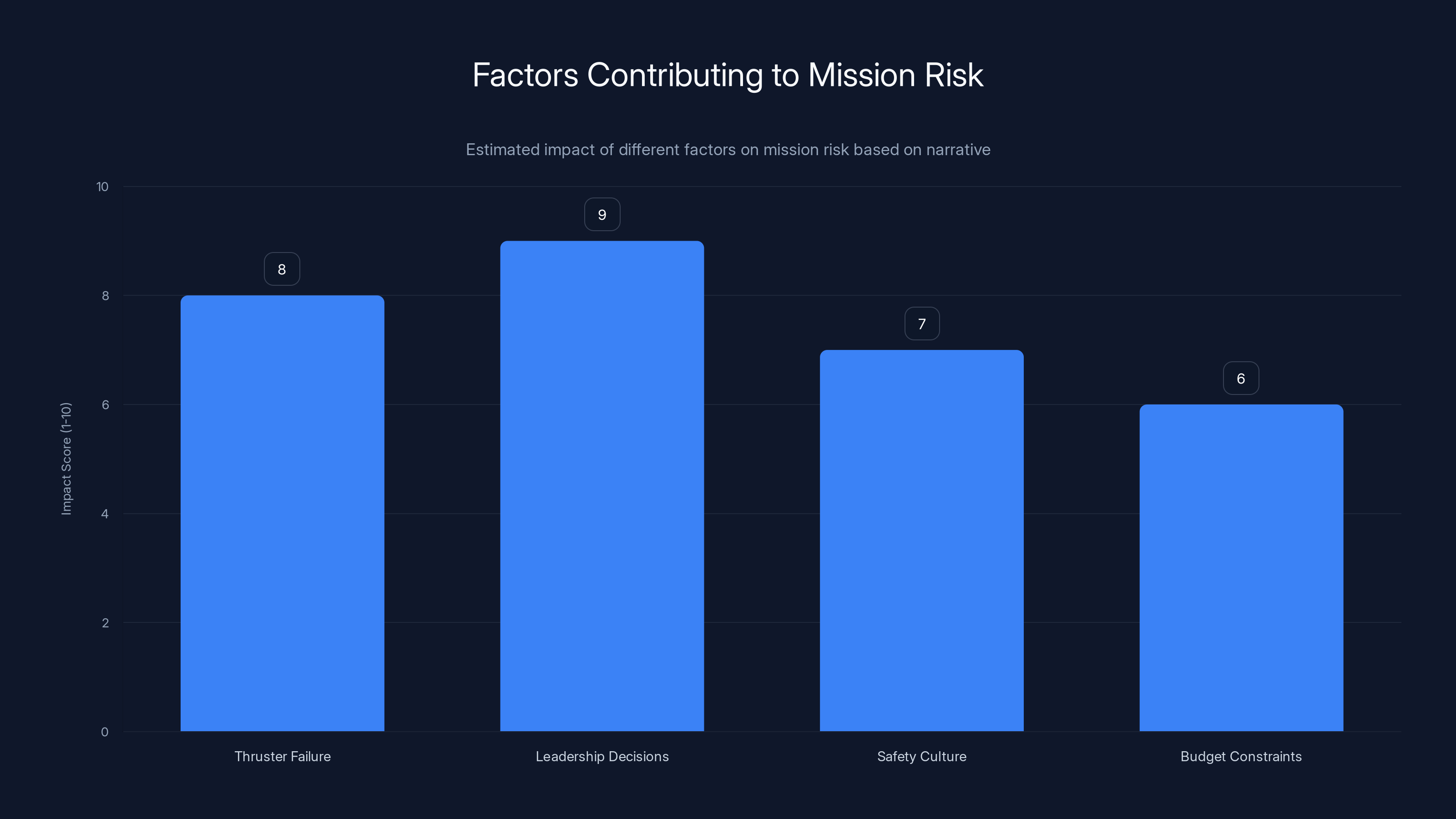

In February 2026, NASA Administrator Jared Isaacman delivered his verdict. The Starliner flight wasn't a success with minor issues. It was a "Type A" mishap, the space agency's formal classification for its most serious failures. More provocative than the classification itself was Isaacman's statement: "The most troubling failure revealed by this investigation is not hardware. It is decision-making and leadership that, if left unchecked, could create a culture incompatible with human spaceflight," as reported by CNN.

This wasn't about thrusters or helium lines anymore. It was about an institutional failure to acknowledge risk, prioritize crew safety, and maintain the uncompromising standards that human spaceflight demands.

This investigation revealed something larger than one troubled spacecraft. It exposed how even mature, experienced organizations can gradually drift toward decisions that would have been unthinkable in earlier eras. It showed how budget pressure, schedule pressure, and the desire to demonstrate commercial success can subtly shift an agency's judgment. And it raised uncomfortable questions about whether NASA had lost some of the risk aversion that once defined its approach to human spaceflight.

The Starliner investigation matters because it's about more than Boeing. It's about organizational culture, decision-making under pressure, and whether NASA can maintain the standards necessary for human spaceflight in an era of commercial partnerships and accelerating timelines.

What Happened on June 5, 2024: The Flight That Wasn't Outstanding

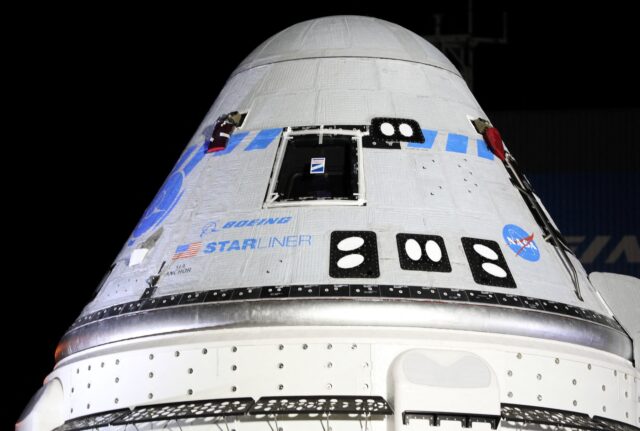

Boeing's Starliner had been in development for years as part of NASA's Commercial Crew Program, a bold initiative to let private companies handle astronaut transportation rather than relying solely on government spacecraft. The idea made economic sense. The vision was elegant. Let competition drive innovation and reduce costs while NASA focused on deeper exploration goals.

But June 5, 2024, tested that vision immediately. Starliner launched on an Atlas V rocket from Cape Canaveral, carrying astronauts Wilmore and Williams toward orbit. The launch itself succeeded. But within minutes of reaching space, mission controllers noticed something alarming: helium was leaking from the spacecraft's propulsion system as reported by WESH.

Pressurized helium is the pressurant for Starliner's reaction control thrusters. It pressurizes the propellant lines, driving propellant through the injectors. Lose helium, and you lose the ability to generate thrust. The leak was small at first. Controllers watched the pressure slowly drop. Maybe it would stabilize, they thought. Maybe it was manageable.

It wasn't. The leak persisted as Starliner climbed toward the station.

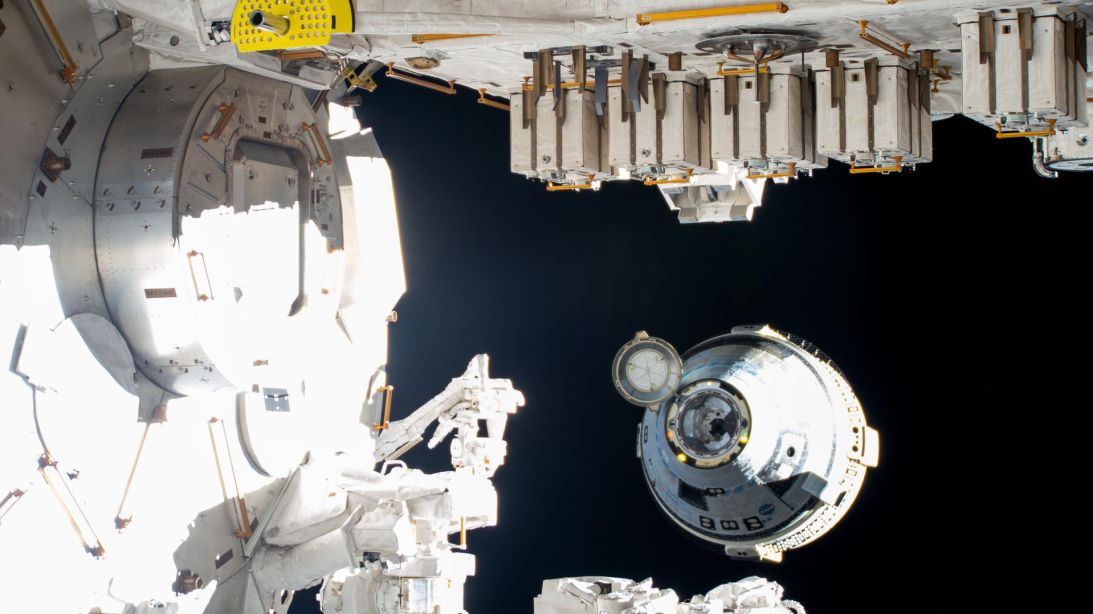

But that was just the beginning. Once Starliner reached the orbital rendezvous phase with the space station, things deteriorated further. The thrusters that should have been functioning began failing. Intermittently at first. One would shut down. Controllers would compensate. Another would fail. Compensate again. Wilmore, taking manual control, found himself managing a spacecraft that was becoming increasingly unresponsive.

The situation was genuinely precarious. An uncrewed test flight of Starliner in 2022 had encountered thruster issues, but nothing like this. Yet somehow, Starliner limped forward. The docking eventually succeeded. The spacecraft had reached the station despite carrying critical failures.

And that's when the narrative became troubling. Boeing officials, standing in front of cameras, declared victory. "We accomplished a lot, and really more than expected," said Mark Nappi, Boeing's Commercial Crew Program manager. "We just had an outstanding day" as noted by NBC News. The framing was success. The message to the public, to NASA, to the supply chain partners was: mission accomplished.

NASA mostly echoed that message in the days immediately following. The strategy, officials said, was to bring the crew home on Starliner as planned, perhaps after some additional tests. This decision puzzled outside observers. If the spacecraft had experienced thruster failures during approach, what confidence was there that the more demanding deorbit burn would succeed?

The deorbit burn is arguably the most critical phase of any spacecraft return. The spacecraft must fire its engines with precise timing and duration to slow down and drop out of orbit. Get this wrong, and the spacecraft either fails to reenter when planned (leaving the crew stranded in orbit) or reenters at the wrong angle and speed (risking the crew burning up or the spacecraft crashing). This is not a phase where you want to trust a vehicle with a history of thruster failures.
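To give a sense of scale for that burn, here is a minimal sketch using the vis-viva equation. It assumes a circular starting orbit at roughly 420 km and a hypothetical target perigee of 50 km, low enough for the atmosphere to capture the vehicle. These altitudes are illustrative assumptions, not Starliner's actual deorbit profile.

```python
import math

MU_EARTH = 3.986004418e14  # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6_371_000.0      # mean Earth radius, m


def circular_speed(radius_m):
    """Speed of a circular orbit at the given distance from Earth's center."""
    return math.sqrt(MU_EARTH / radius_m)


def deorbit_delta_v(start_alt_m, target_perigee_alt_m):
    """Retrograde delta-v (m/s) to lower perigee from a circular orbit.

    After the burn the vehicle is at the apogee of an ellipse whose
    perigee sits at target_perigee_alt_m (vis-viva equation).
    """
    r_start = R_EARTH + start_alt_m
    r_perigee = R_EARTH + target_perigee_alt_m
    semi_major = (r_start + r_perigee) / 2
    v_after_burn = math.sqrt(MU_EARTH * (2 / r_start - 1 / semi_major))
    return circular_speed(r_start) - v_after_burn


# Illustrative (assumed) altitudes, not mission data.
full_burn = deorbit_delta_v(420_000.0, 50_000.0)
short_burn = deorbit_delta_v(420_000.0, 200_000.0)
print(f"Burn to reach a 50 km perigee:  {full_burn:.0f} m/s")
print(f"Burn to reach a 200 km perigee: {short_burn:.0f} m/s")
```

Under these assumptions the required change is on the order of 100 m/s out of an orbital speed above 7,600 m/s. A burn that ends early leaves the perigee higher than planned, which is why thruster reliability matters so much in this phase.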

But the official message remained: Starliner would bring the crew home.

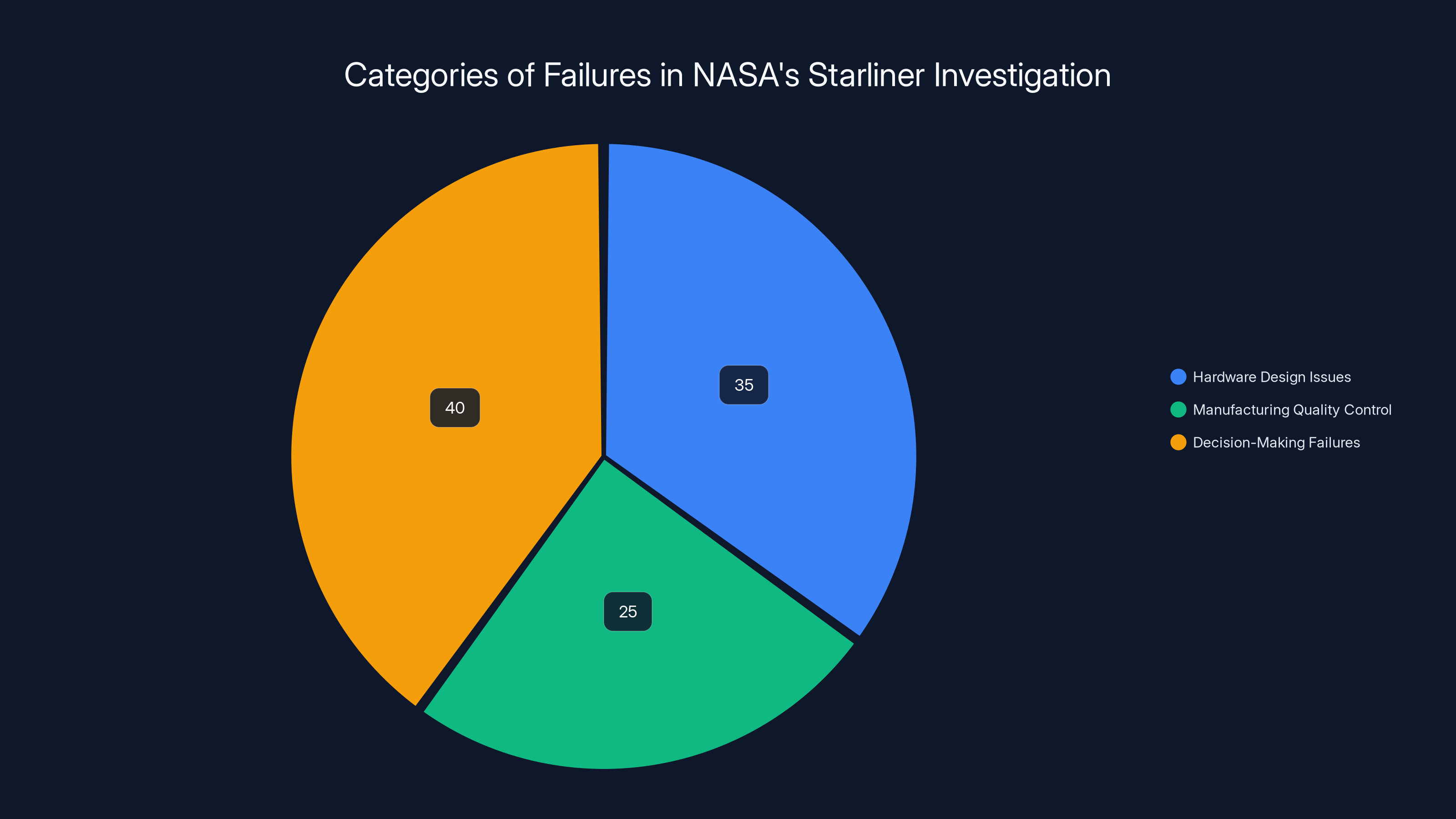

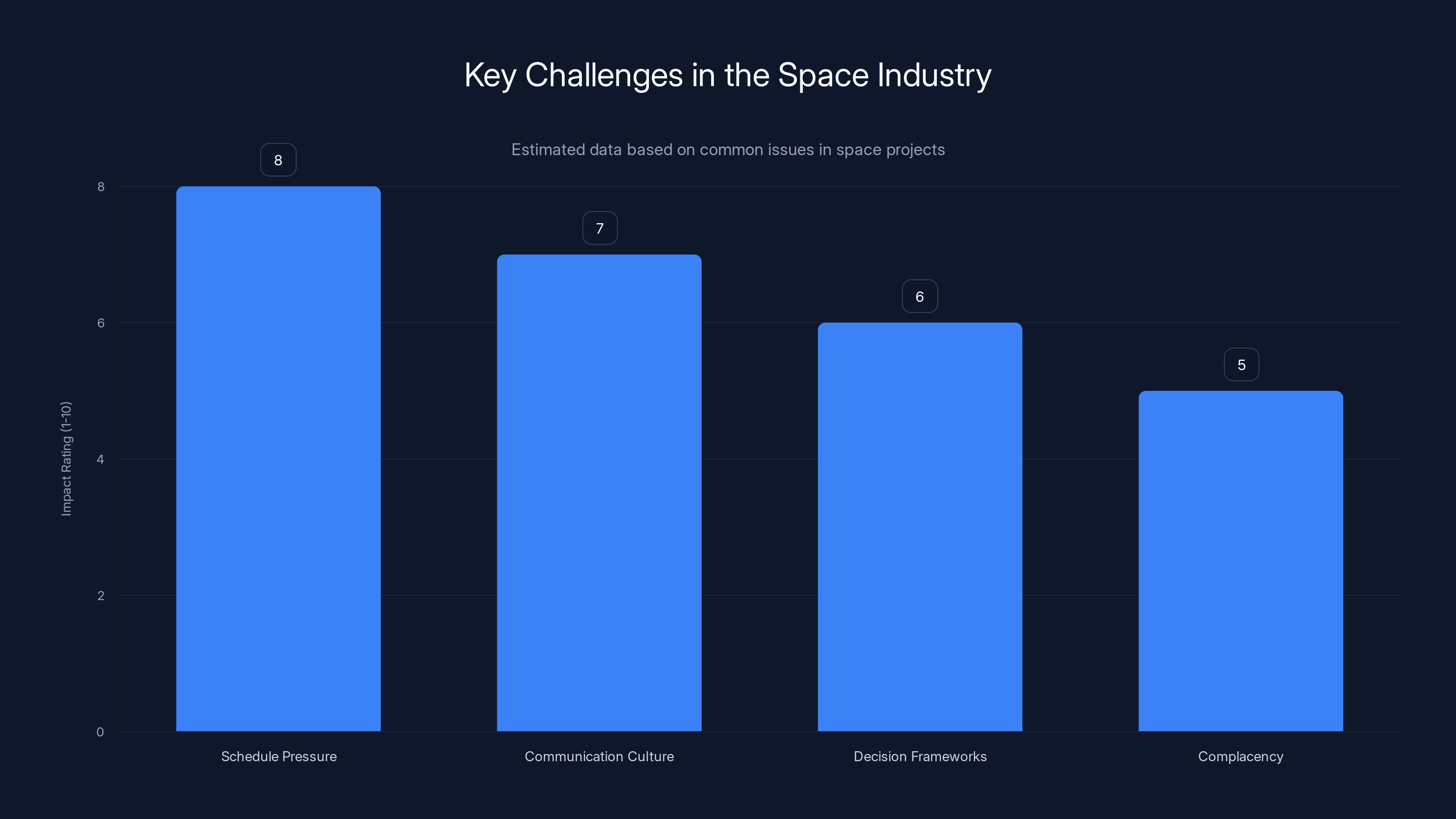

The investigation highlighted decision-making failures as the most significant issue, comprising 40% of the problems, followed by hardware design issues and manufacturing quality control.

The Private Moment: Wilmore's Realization of Real Danger

Wilmore's perspective on what happened in the capsule provides crucial context for understanding why the investigation mattered so much. In an interview months after his return, he described the moment when he realized how serious the situation had become.

The issue wasn't just that thrusters were failing. It was that losing thruster authority in a spacecraft without backup systems creates cascading hazards. Six degrees of freedom (6DOF) refers to movement in three dimensions: forward-backward, left-right, up-down, plus rotation around three axes. A spacecraft needs thrusters to control all six. Lose enough thrusters, and you lose what engineers call "authority"—the ability to maneuver.

Wilmore found himself in exactly that position. With multiple aft thrusters inoperative, he couldn't fully control Starliner's orientation or position. He was essentially flying a spacecraft with impaired responses.
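The idea of losing "authority" can be sketched in a few lines. The thruster layout below is entirely hypothetical (it is not Starliner's real geometry), and the check is deliberately simple: for each of the six axes, is there at least one working thruster that can push or twist the vehicle in each direction? Passing this check is necessary for full control but not sufficient, since real allocation also has to deal with coupling between axes.

```python
from dataclasses import dataclass

AXES = ("surge_x", "sway_y", "heave_z", "roll", "pitch", "yaw")


@dataclass(frozen=True)
class Thruster:
    name: str
    position: tuple   # (x, y, z) relative to the center of mass
    direction: tuple  # direction of the force applied to the vehicle


def cross(a, b):
    """Vector cross product a x b."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def wrench(t):
    """Force (3 values) plus torque (3 values) produced by one thruster."""
    return tuple(t.direction) + cross(t.position, t.direction)


def lost_authority(thrusters, failed=frozenset()):
    """Return the (axis, sign) pairs that no working thruster can produce."""
    covered = set()
    for t in thrusters:
        if t.name in failed:
            continue
        for axis, value in zip(AXES, wrench(t)):
            if value > 0:
                covered.add((axis, "+"))
            elif value < 0:
                covered.add((axis, "-"))
    needed = {(axis, sign) for axis in AXES for sign in "+-"}
    return sorted(needed - covered)


# Hypothetical layout: x forward, y right, z down.
LAYOUT = [
    Thruster("aft_1", (-1, 1, 0), (1, 0, 0)),
    Thruster("aft_2", (-1, -1, 0), (1, 0, 0)),
    Thruster("aft_3", (-1, 0, 1), (1, 0, 0)),
    Thruster("aft_4", (-1, 0, -1), (1, 0, 0)),
    Thruster("fwd_1", (1, 1, 0), (-1, 0, 0)),
    Thruster("fwd_2", (1, -1, 0), (-1, 0, 0)),
    Thruster("side_1", (0, -1, 1), (0, 1, 0)),
    Thruster("side_2", (0, -1, -1), (0, 1, 0)),
    Thruster("side_3", (0, 1, 0), (0, -1, 0)),
    Thruster("top", (0, 0, -1), (0, 0, 1)),
    Thruster("bottom", (0, 0, 1), (0, 0, -1)),
]

AFT = frozenset({"aft_1", "aft_2", "aft_3", "aft_4"})
print("All healthy:", lost_authority(LAYOUT))
print("Aft cluster failed:", lost_authority(LAYOUT, AFT))
```

In this toy layout, losing the aft cluster removes forward thrust and all pitch control while yaw and roll survive. The point is only that authority is lost per axis, not all at once, and that a few failures in one location can remove entire directions of control.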

He then faced a decision tree with no good options. The space station was ahead of him, but slightly offset and at a different altitude. In orbital mechanics, altitude and velocity are linked. If you're below the station, you're moving faster than the station and will pull ahead of it. If you're above, you move slower and fall behind. Starliner, with limited thruster control, was positioned awkwardly relative to the target.
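The link between altitude and speed follows from the circular-orbit relation v = sqrt(mu / r). The sketch below compares a station at an assumed 420 km with a chaser 10 km lower. Both altitudes are illustrative assumptions, and real rendezvous orbits are not perfectly circular.

```python
import math

MU_EARTH = 3.986004418e14  # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6_371_000.0      # mean Earth radius, m


def circular_speed(altitude_m):
    """Orbital speed (m/s) for a circular orbit at the given altitude."""
    return math.sqrt(MU_EARTH / (R_EARTH + altitude_m))


def orbital_period(altitude_m):
    """Time (s) to complete one circular orbit at the given altitude."""
    r = R_EARTH + altitude_m
    return 2 * math.pi * math.sqrt(r ** 3 / MU_EARTH)


STATION_ALT = 420_000.0  # assumed station altitude, m
CHASER_ALT = 410_000.0   # hypothetical chaser altitude, 10 km lower

speed_gap = circular_speed(CHASER_ALT) - circular_speed(STATION_ALT)
period_gap = orbital_period(STATION_ALT) - orbital_period(CHASER_ALT)
print(f"Chaser is faster by {speed_gap:.1f} m/s")
print(f"Chaser completes each orbit {period_gap:.1f} s sooner")
```

The lower vehicle is faster and laps the planet slightly sooner on every orbit, so a spacecraft that cannot hold its position with thrusters will steadily drift relative to its target.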

Wilmore could attempt to dock anyway, which would require precise thruster firings with a spacecraft he couldn't fully control. Or he could abort the approach and attempt deorbit to return to Earth. But deorbit is more demanding than approach. You need sustained, powerful burns to slow down enough to fall out of orbit. The thrusters that had already failed during the less demanding approach were now required for the more demanding deorbit.

He was trapped in a situation where both options—docking and deorbit—required thruster reliability he no longer had.

"I don't know that we can come back to Earth at that point," Wilmore said in his later interview. "I don't know if we can. And matter of fact, I'm thinking we probably can't." He was visualizing orbital mechanics, running through the physics in his head, contemplating what would happen if another thruster failed. What if they lost communications? What would he do then?

The fact that Starliner eventually docked successfully suggests that Wilmore's piloting and perhaps some luck allowed the spacecraft to reach the station despite the failures. But this doesn't change the fundamental truth: the system had failed. The spacecraft was operating outside safe parameters. The crew faced genuine hazard.

Yet for weeks after docking, NASA and Boeing continued publicly discussing bringing the crew home on Starliner. This disconnect between the private reality (the pilot's genuine concern about the spacecraft's reliability) and the public message (confidence in the spacecraft) became one of the central issues that the investigation later highlighted as discussed by Talk of Titusville.

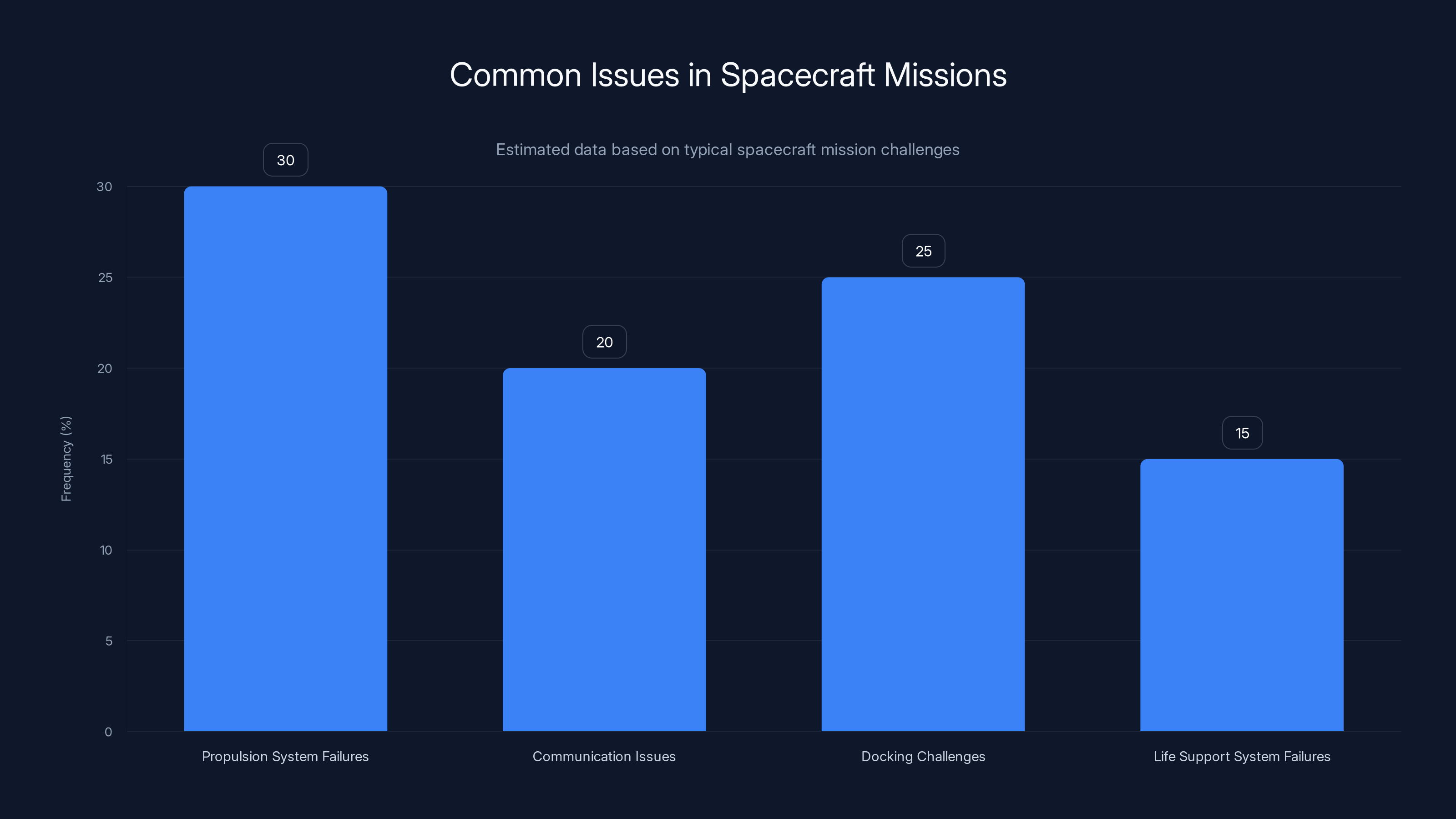

Propulsion system failures are among the most common issues in spacecraft missions, accounting for an estimated 30% of problems encountered. Estimated data.

Decision-Making Under Pressure: Why Boeing and NASA Didn't Immediately Pull the Plug

Understanding why NASA and Boeing continued the return-on-Starliner strategy despite clear evidence of problems requires understanding the pressures, both institutional and financial, that organizations face in the space industry.

Boeing had invested billions in Starliner's development. The Commercial Crew Program itself was designed partly as a vote of confidence in commercial space capabilities. Switching the crew to SpaceX's Dragon would be an admission that Starliner wasn't ready, a message that would reverberate through Boeing's stock price, its government relationships, and its broader commercial space ambitions.

NASA, meanwhile, had championed commercial crew from the beginning. The space agency had bet its approach to human spaceflight on the commercial sector's ability to deliver. Walking back that bet by refusing to use the spacecraft would suggest the program itself had fundamental flaws.

Both organizations faced schedule pressure. Keeping astronauts on the International Space Station requires continuous resupply missions and crew rotations. NASA's flight manifest didn't have unlimited flexibility. If Starliner couldn't return the crew, the crewed return slots would need to be filled by other vehicles. SpaceX's Dragon has limited cargo capacity when configured for crew, and its own schedule was already packed.

Additionally, once the crew was docked at the station, there was a mental shift in how risk was evaluated. The crew wasn't in immediate danger of dying in a launch or in-space event. They were safely at the station. The question became: what's the comparative risk of returning on Starliner versus staying longer and returning on a different vehicle? This reframing made the risk feel more manageable.

But this reasoning misses a critical insight: the deorbit and reentry phases are among the most dangerous parts of a crewed mission, and they leave little room to recover from a propulsion failure. A spacecraft with thruster reliability issues is a dangerous way to come home.

Boeing's public confidence likely reflected several things: a genuine belief that the thruster issues were isolated anomalies, pressure from leadership to project confidence to stakeholders, and perhaps a bias toward optimism that's common in engineering cultures where solving problems is the default mode.

NASA's slowness to act on the Starliner issue appears to reflect similar pressures combined with genuine organizational deference to Boeing's judgment. Boeing is the spacecraft builder. They have more detailed telemetry, more engineers analyzing the data, more institutional knowledge about Starliner. There's a natural tendency for NASA's program management to trust the contractor's assessment unless there's overwhelming evidence to the contrary.

But this deference created a problem: it delayed the public acknowledgment of risk and delayed contingency planning. If NASA had immediately declared that Starliner would not return the crew (regardless of whether the spacecraft could actually do it), the space agency would have had weeks to arrange alternative return options and manage the public message.

Instead, the process dragged out through July and early August 2024. Finally, on August 24, NASA formally decided that Wilmore and Williams would return on SpaceX's Crew Dragon instead of Starliner, as noted by Cape Cod Times.

Wilmore and Williams eventually returned to Earth in March 2025 as part of the Crew-9 mission, after spending much longer in orbit than planned. They were safe. The immediate crisis was resolved.

But the decision-making process that led to the crisis, and the leadership's reluctance to acknowledge it immediately, became the focus of the investigation that followed.

The Formal Investigation: 311 Pages of Institutional Self-Examination

When Jared Isaacman became NASA Administrator in December 2025, the Starliner investigation was already underway but not yet formally concluded. Isaacman, unlike many NASA administrators, had direct spaceflight experience as a private astronaut who had commanded two privately funded orbital missions. He also came from a background in commercial industry, bringing a perspective that combined respect for NASA's mission with an outsider's willingness to challenge institutional assumptions.

One of Isaacman's first major actions was to direct the release of the full 311-page Program Investigation Team report. This move was somewhat unusual. Agencies often keep investigation details internal or release heavily redacted versions. Isaacman's decision to publish the full report signaled that NASA wanted public accountability as documented by NASA.

The report's findings painted a picture of an organization that had gradually drifted from its core risk management principles. The specific failures fell into several categories: hardware design issues with Starliner's propulsion system, manufacturing quality control problems, and most critically, decision-making and leadership failures at multiple levels.

On the hardware side, the helium leak and thruster failures weren't random component failures. They reflected design deficiencies. Starliner's propulsion system had complexity and single-point failures that had been accepted as manageable during development but proved problematic in actual operation.

These are the kinds of issues that engineering organizations have mechanisms to catch: requirement reviews, design reviews, fault tolerance analysis, testing. The question that the investigation implicitly asked was: why didn't these mechanisms work?

Part of the answer lay in schedule and budget pressures. NASA's Commercial Crew Program was designed to rely on commercial competition and market forces rather than the traditional detailed government oversight of military or NASA-developed vehicles. The theory was sound: competition would drive innovation and efficiency. The execution, however, allowed less rigorous oversight than traditional programs.

Boeing faced schedule pressures that every contractor faces. Miss dates, and you miss milestones. Miss milestones, and you impact funding, company morale, and customer confidence. There's a powerful incentive to accept workarounds, to declare items complete when they're mostly complete, to proceed with testing despite incomplete data.

But human spaceflight is different from most engineering endeavors. You can't accept marginal in human spaceflight. A 99% reliable system is a failure waiting to happen when lives are aboard.
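The arithmetic behind that claim is short. Assuming each flight succeeds independently with the same probability (a simplification, and the 99% figure is the rhetorical one from the text, not a measured Starliner value), the chance of at least one failure grows quickly with the number of flights:

```python
def prob_at_least_one_failure(per_flight_reliability, flights):
    """Chance of one or more failures across independent flights."""
    return 1.0 - per_flight_reliability ** flights


# Illustrative flight counts; 0.99 is the figure quoted in the text.
for flights in (1, 10, 50, 100):
    risk = prob_at_least_one_failure(0.99, flights)
    print(f"{flights:>3} flights at 99% reliability: {risk:.1%} chance of a failure")
```

Under these assumptions, ten flights already carry close to a one-in-ten chance of a failure, and a hundred flights carry well over an even chance.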

The investigation found that this standard had been eroded. Small compromises accumulated. Issues that earlier generations of spacecraft designers would have refused to accept were rationalized away. The culture had shifted incrementally from "we need to be absolutely sure this is safe" to "we need to be reasonably confident this will probably work."

Isaacman's statement about leadership failure wasn't abstract criticism. It was a diagnosis of organizational decay that, if left unchecked, would inevitably lead to a catastrophe. He was essentially saying: we caught this one. We had the chance to bring the crew home safely, and they are safe. But if we don't fix the decision-making culture that led to these compromises, next time we might not be so lucky.

The report documented where specific decisions should have been made differently. It identified leaders at Boeing and at NASA who made choices that created the conditions for risk to develop. And it made clear that accountability needed to follow—though Isaacman stopped short of publicly announcing specific personnel actions as reported by SpaceNews.

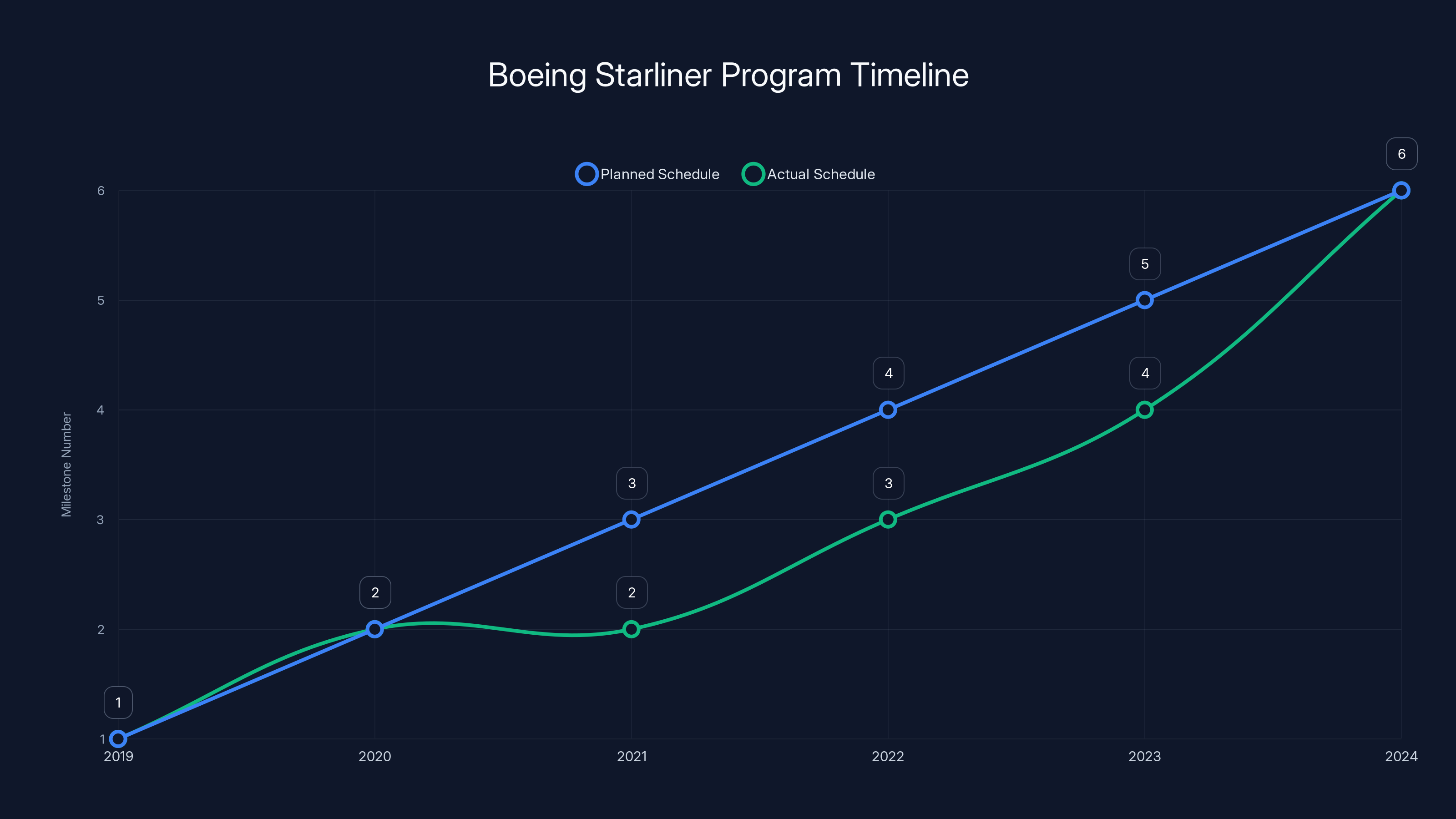

The Boeing Starliner program experienced significant delays, with key milestones slipping by several years. The crewed test flight originally planned for 2020 was pushed to 2024. Estimated data.

The Hardware Issues: Thruster Design and Helium Management

At the surface level, Starliner's problems were straightforward: helium leaked, thrusters failed. But diving deeper, these weren't random component failures. They reflected design choices that had created systematic vulnerabilities.

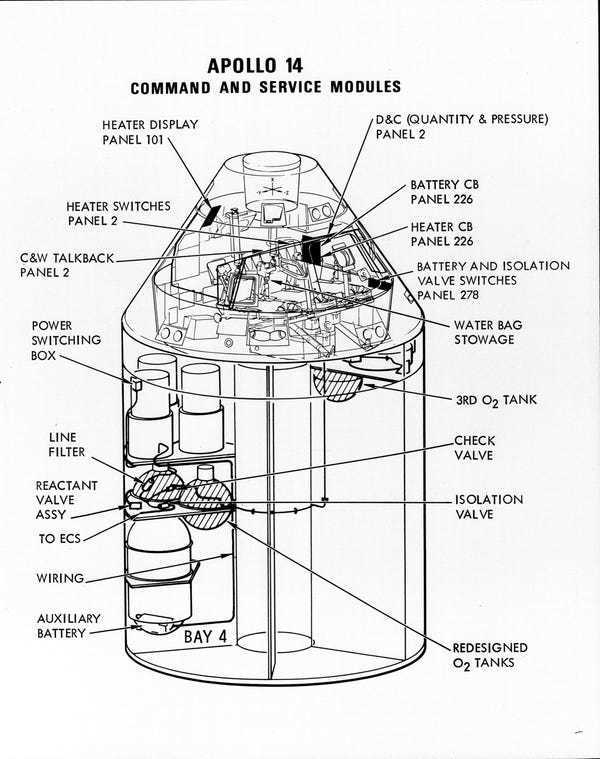

Starliner's reaction control system uses approximately two dozen small thrusters positioned around the spacecraft. These thrusters are essential for attitude control during the ascent to orbit, rendezvous with the space station, docking, undocking, and deorbit burn. They're redundant in some aspects but not uniformly so.

The helium pressurization system works by storing pressurized helium in tanks, then using that pressure to push propellant through feed lines to the thrusters. The system is a proven design used on multiple spacecraft. But implementation details matter enormously.

Starliner's helium isolation valve, the device that closes off the helium tank when not needed, reportedly had design issues that contributed to the leak. The valve had been tested extensively, but the specific failure mode that manifested during the June flight apparently wasn't adequately predicted during ground testing.

This raises a fundamental question about test adequacy. On paper, a system can be extensively tested. But all testing is finite. You can't test every possible condition, every environmental interaction, every edge case. At some point, engineering organizations make a risk judgment: we've tested enough, the design is sound, and we can proceed to flight.

The judgment call on Starliner's propulsion system appears to have been optimistic. This isn't unique to Boeing. Every spacecraft development program makes similar judgments. But the standard should be particularly rigorous for human-rated spacecraft.

The thruster failures themselves also suggest deeper issues. Starliner uses the same helium pressurization system to feed oxidizer and fuel to each thruster. When multiple thrusters share a common pressurization system, and that system is degrading, failures can cascade: one thruster fails, pressure in the system drops further, another thruster fails, pressure drops more, and so on.

A robust design would have isolated thruster pairs or clusters so that failures didn't propagate. SpaceX's Dragon uses a different approach with redundant propulsion strings, so one system failure doesn't degrade the others. Starliner's design, in contrast, created a situation where thruster reliability depends on maintaining the health of the entire shared system.
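The trade-off between a shared system and isolated strings can be illustrated with a toy probability model. Everything here is an assumption made for illustration: the 2% fault probability, the count of four strings, and the independence of faults are hypothetical and do not describe the real Starliner or Dragon hardware.

```python
def shared_system(p_fault):
    """One pressurization system feeds every thruster.

    A single fault degrades all thrusters at once.
    """
    return {"any_degraded": p_fault, "all_degraded": p_fault}


def isolated_strings(p_fault, strings):
    """Independent strings, each feeding its own group of thrusters.

    Assumes faults in different strings are statistically independent.
    """
    return {
        "any_degraded": 1.0 - (1.0 - p_fault) ** strings,
        "all_degraded": p_fault ** strings,
    }


P_FAULT = 0.02  # hypothetical per-system fault probability
STRINGS = 4     # hypothetical number of independent strings

shared = shared_system(P_FAULT)
isolated = isolated_strings(P_FAULT, STRINGS)
print(f"Shared:   any={shared['any_degraded']:.4f}  all={shared['all_degraded']:.2e}")
print(f"Isolated: any={isolated['any_degraded']:.4f}  all={isolated['all_degraded']:.2e}")
```

In this model, isolation makes some fault more likely, because there are more systems that can fail, but it makes the loss of every thruster at once far less likely. That total-loss case is the one that matters for getting a crew home.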

This is a design choice, not necessarily a wrong one, but it comes with constraints and risks. Those risks should have been explicitly managed through more rigorous testing, tighter quality control, and acceptance of a higher bar for declaring the system flight-ready.

The investigation found that this higher bar hadn't been maintained. Schedule pressure and the desire to reach a particular launch date apparently influenced the decision to declare Starliner ready despite incomplete thruster reliability data.

From a manufacturing perspective, the report also identified quality control issues in how Starliner components were produced and inspected. These weren't mentioned in detail in public summaries, but the implication was that workmanship standards had slipped from where they should have been on a human-rated vehicle.

Boeing's quality control practices are generally robust—the company builds commercial airliners and military hardware that must meet exacting standards. But spacecraft quality is a different domain with its own requirements and culture. The transition from building aircraft to building spacecraft involves learning curves on both the contractor and government sides.

Organizational Culture: How Did Boeing Get Here?

The investigation's most provocative finding was about culture and decision-making, not hardware. This requires understanding Boeing's trajectory in the commercial space sector and the institutional pressures that shape engineering culture.

Boeing entered the commercial crew market relatively late compared to SpaceX. Elon Musk's company had been building rockets and spacecraft for NASA from the beginning of the Commercial Crew Program, learning through iteration and flight. Boeing was developing Starliner in parallel, using more traditional aerospace engineering approaches: extensive ground testing, careful requirements management, and risk mitigation through design robustness.

Both approaches have merit. SpaceX's iterative development is fast and efficient. Boeing's traditional approach is methodical and thorough. But they carry different risks. SpaceX's approach treats some flight failures as acceptable learning. Boeing's approach aims for extremely high reliability but can accumulate schedule delays and cost overruns.

Starliner definitely experienced schedule pressures. The program stretched longer than originally planned. Milestones slipped. The initial uncrewed test flight happened in December 2019, but it had to be reflown in May 2022 due to issues during the first attempt. The crewed test (the June 2024 flight) had been planned for 2020 but didn't happen until four years later.

By the time the crewed test finally launched, Starliner was already years behind schedule. There was institutional pressure to finally declare the vehicle ready and move it into operational service. Missing the June 2024 launch date would have meant another delay and another round of questions from NASA, Congress, and Boeing's own leadership.

There's also a broader context: Boeing's commercial space division had not achieved financial success. The division was losing money relative to projections. Every delay, every retest, every issue that pushed back operational service meant continuing to burn through its budget with no corresponding revenue.

These aren't excuses for the decision-making failures. They're context that explains how an organization with talented engineers and a commitment to safety can still gradually drift toward accepting risks that earlier generations would have rejected.

The culture shift often happens incrementally. Early in a program, requirements are non-negotiable: you test, you verify, you don't move forward until you're certain. But as schedule pressure accumulates, a language of pragmatism develops. You hear phrases like "acceptable risk," "reasonable confidence," "statistical likelihood."

These terms aren't inherently wrong. Engineering is always about accepting some risk. But in human spaceflight, the bar should be systematically higher than in other domains. You need a culture that questions optimism, that pushes back when leadership wants to accelerate schedules, and that treats crew safety as non-negotiable.

The investigation found that Starliner's program had drifted from this standard. It wasn't that people at Boeing didn't care about safety. It was that the institutional mechanisms for preventing bad decisions had been gradually eroded by schedule pressure and optimism that the system would ultimately work.

NASA's side of the culture issue involved excessive deference to Boeing's judgment. NASA had championed commercial crew programs and had incentives to show that they worked. Walking back confidence in Starliner would have been embarrassing. It would have suggested that the commercial crew experiment had failed.

But NASA's core mission is crew safety, not commercial industry success. When contractor judgment conflicts with crew safety, NASA should always defer to the conservative path. The investigation found that this principle had been compromised as noted by the Orlando Sentinel.

Isaacman's emphasis on leadership accountability was essentially a statement: the individual engineers were trying to do the right thing, but the leadership structure, incentive systems, and institutional culture had drifted in ways that undermined good decision-making.

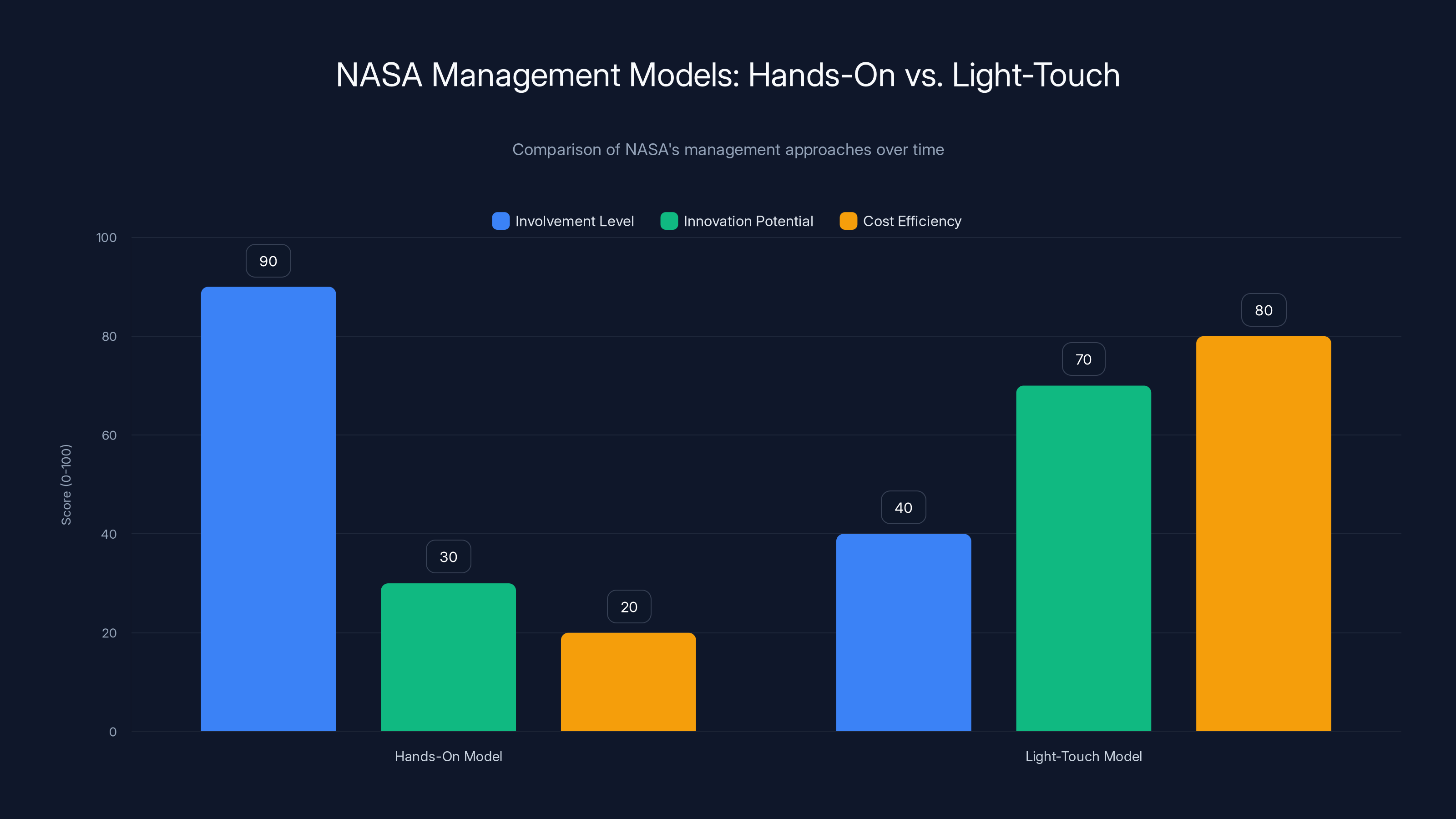

The hands-on model had high involvement but low innovation and cost efficiency, while the light-touch model increased innovation and efficiency but reduced NASA's involvement. Estimated data.

Communication Failures: Public Confidence Versus Private Reality

One of the most striking aspects of the Starliner situation was the disconnect between public messaging and private reality. For weeks, both Boeing and NASA publicly discussed bringing the crew home on Starliner while privately acknowledging serious concerns.

This isn't unique to Starliner, but it's particularly problematic in human spaceflight. The public, Congress, and international partners need to understand the actual status of crewed missions. Optimistic public messaging that masks private concerns erodes trust and enables bad decisions.

Boeing's "outstanding day" declaration immediately after docking was an example. From the company's perspective, the crew had successfully reached the station despite propulsion issues. From a communications standpoint, this was a victory to be celebrated. But it also set a narrative in the public mind that everything was fine, which made the later decision to return the crew on Dragon appear like an overcautious reversal rather than a necessary correction.

If the narrative had been different—if Boeing and NASA had immediately acknowledged that Starliner had experienced more serious failures than expected and that the team was evaluating implications—the August decision would have been better positioned as a reasonable safety response rather than a crisis.

NASA's communication was similarly problematic. The space agency, which has a culture of transparency developed through decades of managing public expectations on complex programs, initially backed Boeing's optimistic assessment. Only gradually did public messaging shift as NASA's confidence declined.

This kind of gradual public messaging shift creates the impression of an agency making decisions reactively rather than proactively. It suggests that NASA is following contractor guidance rather than exercising independent judgment.

The investigation found that these communication failures reflected deeper issues about who was actually in charge of decision-making and risk acceptance. On a well-managed program, NASA would set clear standards: we will return the crew on Starliner only if we have high confidence in the vehicle's reliability for the deorbit and reentry phases. If that confidence isn't justified by data, we will return the crew on an alternative vehicle.

Instead, decision-making appeared more iterative and reactive: let's see what Boeing says, let's monitor the data, let's see if we can build confidence over time.

This approach would be reasonable for an uncrewed vehicle development. It's not appropriate for a crewed vehicle.

One of Isaacman's implicit messages in his statement about leadership accountability was that this communication dynamic needed to change. Future crewed spacecraft programs should operate under clearer decision frameworks that don't permit the kind of gradual erosion of standards that happened with Starliner.

Comparative Analysis: Starliner Versus Dragon, Starliner Versus Soyuz

Putting Starliner's failures in context helps clarify their significance. How did Starliner compare to alternatives, and what can be learned from other crewed spacecraft programs?

SpaceX's Crew Dragon uses a fundamentally different propulsion architecture. Instead of a single shared pressurization system, Dragon has multiple independent propulsion strings. The Draco thrusters (the small control thrusters) are organized into redundant groups, so a failure in one group doesn't cascade to others.

Dragon also went through an extensive flight-testing regimen that included uncrewed cargo flights to the station years before human flights. This testing provided real operational data that informed the vehicle design and identified issues early when they could be fixed before crewed missions.

Starliner had only two uncrewed test flights before the crewed flight, and the first of them had issues (orbital attitude issues and software glitches) that required investigation and a reflight before proceeding to crewed operations. This is a much thinner testing foundation than Dragon had achieved.

Russia's Soyuz spacecraft, which has flown continuously for decades with a strong safety record, uses yet another approach. The Soyuz has proven itself in actual operation for over 50 years, and many of its failure modes have already surfaced in flight and been fixed. This is the advantage of a mature, heavily flown vehicle.

Starliner is new. It lacks the operational heritage and the accumulated fixes that come from decades of flights. This is true of all new spacecraft, but it argues for an extra-high bar on ground testing and a conservative approach to initial crewed operations.

NASA's decision to use SpaceX's Dragon as the backup for returning Starliner's crew was based on Dragon's proven track record. By June 2024, Dragon had already flown multiple crewed missions with excellent reliability. The vehicle was understood. The risks were manageable.

Starliner, by contrast, was untested in actual crewed operations beyond the single June flight. Asking the same crew to trust it for the critical deorbit and reentry phase was simply not justified by the data.

The investigation's implicit conclusion was that Starliner should have been treated more cautiously from the beginning. A longer testing period, more rigorous oversight, and a lower tolerance for schedule pressure would have likely caught the propulsion issues during ground testing or uncrewed operations, before humans were aboard.

Leadership decisions and safety culture had the highest impact on mission risk, overshadowing technical failures. Estimated data based on narrative context.

Systemic Implications: What the Starliner Investigation Reveals About NASA

The Starliner situation reveals something concerning about how NASA manages commercial contracts and how the space agency's culture has evolved since the Space Shuttle era.

During the Space Shuttle program, NASA's management culture was heavily hands-on. The agency had thousands of engineers doing detailed technical work and making engineering decisions. NASA didn't just oversee contractors; NASA was deeply involved in the design, testing, and decision-making.

This approach had downsides. It was expensive and slow. It created dependencies on government employment that made innovation harder. And it meant that contractors had less autonomy to innovate.

The Commercial Crew Program was explicitly designed as a reaction to this model. NASA would set requirements and performance standards, but contractors would have freedom to design and implement their own approaches. NASA's role would shift from hands-on technical management to oversight and acceptance testing.

In theory, this model works. Market competition drives efficiency. Contractors innovate. Costs decrease. And NASA gets transportation services without the overhead of managing detailed spacecraft development.

In practice, the Starliner case suggests the model has weaknesses when the contractor faces financial pressure, schedule pressure, or competitive disadvantage relative to other companies. In those situations, the lighter oversight can allow corners to be cut and standards to erode.

Isaacman's emphasis on leadership and decision-making culture was a signal that NASA needs to recalibrate its oversight approach. Perhaps the right model isn't as light-touch as the Commercial Crew Program initially envisioned. Perhaps NASA needs more engineers deeply engaged in the technical details of crewed spacecraft development, even if contractors are taking the lead.

Alternatively, perhaps NASA needs more rigorous gate reviews and decision frameworks that create explicit pressure points where senior leadership must actively confirm that standards have been met before proceeding.

The investigation didn't call for eliminating commercial crew. The concept remains sound. But it did suggest that the execution of the concept had drifted in ways that undermined its core principle: using market forces to drive innovation while maintaining safety standards.

This recalibration will take years to implement fully. Starliner is still part of NASA's crew transportation portfolio (though probably not in near-term operations). SpaceX's Dragon remains the proven alternative. And the broader commercial space industry is watching to see whether NASA strengthens oversight or maintains the lighter-touch approach.

The stakes are high. If NASA loses confidence in its commercial crew suppliers, the space agency would need to develop its own crewed vehicles again, a task that would cost tens of billions and take decades. The commercial space industry has invested tremendous resources in meeting NASA's standards. If NASA's standards prove inconsistently enforced, that undermines everyone's confidence.

Timeline: Key Decisions and Turning Points

Understanding the Starliner situation requires following the timeline of how decisions unfolded and when critical choice points occurred.

December 2019: Starliner's first uncrewed test flight occurs. The flight experiences issues, including a mission-timer software error that leaves the spacecraft in the wrong orbit and prevents docking with the International Space Station. Investigation follows, and a determination is made to refly the uncrewed test before attempting crewed operations.

May 2022: Starliner's second uncrewed test flight occurs successfully, and the spacecraft docks with the station.

December 2023 to June 2024: The crewed test is scheduled and begins final preparations. Schedule pressure is significant; the mission is already multiple years behind the original timeline.

June 5, 2024: Starliner launches with astronauts Wilmore and Williams. Within minutes of reaching orbit, helium leaks are observed. Thruster failures begin shortly after. The spacecraft struggles through the rendezvous and docking phases but ultimately reaches the station.

June 6 to June 28, 2024: Boeing and NASA initially maintain confidence that Starliner will return the crew. The public narrative emphasizes mission success.

Late July 2024: NASA begins privately questioning whether Starliner should return the crew. Technical reviews become more critical in tone.

August 2024: Public messaging begins to shift. NASA officials acknowledge that Dragon might be used for crew return instead of Starliner.

August 24, 2024: NASA formally announces that Wilmore and Williams will return on SpaceX's Crew Dragon instead of Starliner.

September 2024: Starliner undocks and returns uncrewed. The spacecraft deorbits and reenters successfully, suggesting the propulsion system, though unreliable during approach, had enough capability for the less-demanding deorbit burn.

November 2024: NASA releases the Program Investigation Team report (reportedly delayed from earlier in the year).

December 2024: Jared Isaacman becomes NASA Administrator.

February 2025: Isaacman formally classifies Starliner as a Type A mishap and releases the full 311-page investigation report to the public. He issues an agency-wide letter emphasizing leadership accountability.

March 2025: Astronauts Wilmore and Williams return to Earth aboard Crew Dragon as part of the Crew-9 mission.

This timeline shows that the critical decision points occurred in late July and August 2024. The delay in making the formal decision to switch return vehicles, despite private concerns developing much earlier, became one of the investigation's focal points.

[Chart: Schedule pressure is the most significant challenge in the space industry, requiring ongoing management. Estimated data.]

Accountability: What Happens Next?

Isaacman's promise of "leadership accountability" raises the question: what does this actually mean, and how will it be implemented?

NASA doesn't have the authority to fire Boeing employees. Accountability for Boeing staff would likely come through performance evaluations, reduced responsibilities, or in extreme cases, reassignment to different programs. Whether Boeing actually implements changes remains to be seen and will be influenced by whether the company's leadership feels pressure from NASA.

On NASA's side, accountability is more directly within leadership's control. Isaacman indicated that people at NASA who made decisions contributing to the Starliner situation would face consequences, but he didn't publicly announce specific actions.

Historically, government agencies are cautious about publicizing personnel actions against employees for performance issues on programs. These decisions are made quietly, documented in official files, and rarely discussed publicly. This is partly to protect employee privacy and partly because agencies want to maintain the morale of other employees who might see their colleagues punished for honest judgment calls that turned out wrong.

But the lack of public transparency around accountability creates challenges. Observers don't see consequences, and it's unclear whether the culture change that leadership proclaims actually takes hold.

A stronger form of accountability would involve systematic changes to how the program operates. This might include:

- More rigorous NASA technical oversight, with more engineers directly involved in design reviews

- Clearer decision gates that require active confirmation from senior NASA leadership before proceeding to crewed operations

- More comprehensive independent testing and verification

- Explicit standards for thruster reliability that must be met before crewed flights

- Regular external audits of program progress

These changes would be visible and measurable. They would demonstrate that Isaacman's concern about leadership and decision-making isn't just rhetoric.
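To make the idea of a decision gate concrete, here is a minimal sketch in Python. It is purely illustrative: the class names, criteria, and reviewer titles are hypothetical and do not come from the investigation report or from any actual NASA process. The point it tries to show is the difference between a criterion that is merely reported as met and one that a named senior reviewer has actively confirmed.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class GateCriterion:
    """One explicit standard that must hold before the program proceeds."""
    name: str
    met: bool
    confirmed_by: Optional[str] = None  # named reviewer who actively signed off


@dataclass
class DecisionGate:
    """A review point that blocks progress until every criterion is met AND confirmed."""
    name: str
    criteria: List[GateCriterion] = field(default_factory=list)

    def blockers(self) -> List[str]:
        """Return a human-readable reason for each criterion that blocks the gate."""
        reasons = []
        for c in self.criteria:
            if not c.met:
                reasons.append(f"{c.name}: not met")
            elif c.confirmed_by is None:
                reasons.append(f"{c.name}: met but not confirmed")
        return reasons

    def may_proceed(self) -> bool:
        """The gate opens only when nothing blocks it; silence is not approval."""
        return not self.blockers()


# Hypothetical gate, loosely modeled on the list above.
gate = DecisionGate(
    "crewed flight readiness",
    [
        GateCriterion("thruster reliability meets stated threshold",
                      met=True, confirmed_by="chief engineer"),
        GateCriterion("independent test verification complete", met=True),
        GateCriterion("external audit findings closed", met=False),
    ],
)

print(gate.may_proceed())  # False
for reason in gate.blockers():
    print(reason)
```

The design choice worth noting is that an unconfirmed criterion blocks the gate just as an unmet one does. Under this assumption, a program cannot drift past a gate simply because nobody objected.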

The danger is that without visible systemic changes, the Starliner situation becomes a cautionary tale rather than a catalyst. Future programs slip back into old patterns. Schedule pressure again erodes standards. The next crewed vehicle program faces similar pressures and similar risks.

Isaacman appears to understand this risk. His emphasis on culture and decision-making rather than just hardware issues suggests he's trying to drive systemic change, not just apply a technical fix.

But driving systemic change in a large government agency is slow work. It takes years. It requires sustained focus from leadership. And it can be undone if subsequent administrators don't maintain the same standards.

Looking Forward: How Starliner Affects NASA's Path to the Crewed Moon

The Starliner situation occurs against the backdrop of NASA's Artemis program, which aims to return humans to the Moon. The relationship between these efforts is important for understanding the long-term implications.

Artemis requires multiple spacecraft and systems: the Space Launch System (NASA's heavy-lift rocket), Orion (NASA's deep-space crew vehicle), Human Landing System vehicles developed by contractors, and continued access to low-Earth orbit for crew rotation and supply missions.

Starliner was supposed to be part of this ecosystem, providing routine crew transportation to the space station. This role would have allowed NASA to focus Artemis resources on lunar objectives rather than diverting them to Earth-orbit crew transport.

The problems revealed by the Starliner investigation complicate this vision. If Starliner cannot reliably serve as a crew transportation vehicle, NASA is dependent on Dragon, which is excellent but not unlimited in capacity. This could mean slower crew rotations or reduced operations at the station, freeing up resources for Artemis but also reducing science return from station operations.

Alternatively, Starliner might be fixed and eventually brought into reliable service, but this would require time and investment that diverts resources from lunar program objectives.

The broader question is whether the Starliner experience will make NASA more conservative in its approach to Artemis contractors. If the space agency increases oversight and rigor for lunar Human Landing Systems, that could slow development and increase costs.

But if NASA doesn't apply stronger standards to Artemis contractors, it risks repeating the Starliner experience: contractors facing schedule and financial pressure who gradually drift toward accepting higher risk.

Isaacman's challenge is maintaining the benefits of commercial space development (competition, efficiency, innovation) while restoring the safety standards that human spaceflight demands. This is difficult but not impossible. It requires explicit decision frameworks, strong NASA technical oversight, and a culture that treats crew safety as genuinely non-negotiable.

The next few years will show whether Isaacman's message about leadership and decision-making culture creates sustained change or becomes a temporary course correction that gradually erodes as new schedules and pressures accumulate.

Lessons for the Space Industry: Standards, Safety Culture, and Schedule Pressure

The Starliner investigation offers lessons that extend beyond NASA and Boeing. Any organization developing complex, safety-critical systems faces similar pressures and similar risks of culture drift.

The first lesson is that schedule pressure is a chronic problem that requires chronic management. It doesn't go away with a single decision or policy. It emerges repeatedly, especially when programs slip behind original timelines and leadership wants to "get back on track."

The solution isn't to eliminate schedule pressure (that's impossible in competitive environments) but to manage it explicitly through governance structures that prevent it from overwhelming technical judgment.

The second lesson is that communication culture matters as much as technical standards. If leadership consistently accepts optimistic messaging that masks private concerns, the organization trains itself to ignore bad news. Over time, this makes genuine safety problems harder to address because people don't trust that serious concerns will be taken seriously.

The third lesson is that contractor-customer relationships require clear decision frameworks. Ambiguity about standards, about what it takes to proceed, about how risk will be evaluated, allows both parties to drift into different assumptions about what's acceptable.

Starliner appears to have involved different assumptions between Boeing and NASA about what level of thruster reliability was acceptable for a crewed vehicle. Boeing might have thought "these failures are acceptable because we have redundancy in other areas." NASA might have thought "we'll accept this for the test flight and require reliability improvements afterward." These differing assumptions were never explicitly reconciled.

The fourth lesson is that mature, successful organizations can develop complacency that undermines their own standards. Boeing is a world-class aerospace company with incredible expertise. NASA has experience managing crewed spaceflight dating to the Mercury program. Yet both organizations drifted in ways that experienced engineers and managers should have caught.

This happens because no single person is responsible for "organizational culture." It emerges from thousands of individual decisions, each seemingly reasonable, that collectively create a pattern. Catching this pattern requires active self-examination and a willingness to question institutional assumptions.

The Starliner investigation is valuable partly because it's honest. Rather than blaming Boeing or focusing narrowly on hardware issues, NASA and Boeing acknowledged systemic failures in decision-making and culture. This honesty is what makes the investigation useful for the industry.

Conclusion: When Success Masks Failure

The Starliner investigation is ultimately a study of how success can mask failure. The spacecraft reached orbit. It docked with the space station. The crew landed safely. By the simple metrics of mission accomplishment, it looks like a win.

But underneath this surface success lay multiple failures: hardware deficiencies that should have been caught in design review, manufacturing quality issues that should have been caught in inspection, schedule pressure that should have been resisted, communication that should have been more transparent, and decision-making that should have been more rigorous.

Had Wilmore not been an exceptional pilot, had the spacing and timing been slightly different, had the remaining thrusters failed one more time, the mission could have ended tragically. The investigation makes clear that the crew was in genuine danger, and the organization's response to that danger was inadequate.

What makes Starliner significant isn't that it represents a unique failure. What makes it significant is that it represents a type of failure that the space industry had been successfully preventing for decades: a culture drift where standards gradually erode until you end up trusting humans to come home in a vehicle that you have good reasons not to trust.

Isaacman's response to the investigation—formal classification as a Type A mishap, release of the full report, and emphasis on leadership accountability—signals that NASA recognizes the seriousness. But the real test will be whether this recognition translates into sustained change.

The space industry will be watching. Contractors will be watching to see how seriously NASA enforces this reestablished standard. Employees at Boeing and NASA will be watching to see whether there are real consequences for the decisions that led to the situation. Congress and the public will be watching to see whether NASA can be trusted to manage commercial crew programs responsibly.

And most importantly, future astronauts will be depending on whether these lessons actually change how spacecraft development is managed.

The Starliner investigation started with Butch Wilmore facing a genuinely dangerous situation, making life-or-death decisions about spacecraft control, and wondering whether he could bring the spacecraft home safely. It ends with NASA's leadership saying: we need to change how we make decisions so this never happens again.

Whether that commitment holds will define the next era of American human spaceflight.

FAQ

What is a Type A mishap classification in NASA terms?

A Type A mishap is NASA's formal designation for a serious failure involving hazard exposure, loss of life, or significant damage to facilities or equipment. This classification indicates systemic problems that require investigation and corrective action, not just technical fixes. For crewed spacecraft, Type A classification is particularly significant because it means the mission involved genuine risks to human life.

What went wrong with the Starliner's propulsion system?

Starliner experienced both helium leaks from its pressurization system and intermittent thruster failures during its crewed flight. The helium system, which pressurizes propellant lines to feed fuel to the thrusters, had design deficiencies that allowed more leakage than the system could tolerate. As helium pressure degraded, multiple thrusters failed in cascade because they all draw from the same pressurization source. This created a situation where the spacecraft lost control authority and became difficult to maneuver, particularly dangerous during the critical rendezvous and docking phases.

Why didn't NASA immediately prevent the crew from returning on Starliner?

Several factors contributed to the delay in decision-making. First, the problems weren't immediately apparent as catastrophic—the spacecraft successfully docked despite the issues. Second, both Boeing and NASA's leadership had institutional incentives to present optimism. Third, NASA gave significant deference to Boeing's technical judgment as the spacecraft builder. Fourth, once the crew was safely docked at the station, the risk was reframed as being about the future return phase, which seemed manageable. Finally, clear decision criteria about what would trigger a crew-return method change apparently weren't established, allowing decision-making to drift across weeks while data accumulated.

What was the core failure revealed by the investigation?

According to NASA Administrator Isaacman, the most troubling failure wasn't hardware but decision-making and leadership. The investigation found that organizational culture had gradually drifted toward accepting higher levels of risk and ambiguity than appropriate for human spaceflight. Leadership failed to clearly establish and enforce standards, contractors and NASA staff faced pressure to proceed despite incomplete data, and communication culture masked rather than revealed concerns. The hardware issues (thruster failures, helium leaks) were symptoms of this deeper cultural problem.

How does Starliner's design compare to SpaceX's Dragon?

Starliner uses a single, shared helium pressurization system for all its thrusters, which means thruster failures cascade if system pressure degrades. Dragon uses redundant, independent propulsion strings where failures in one system don't affect others. Additionally, Dragon had multiple uncrewed cargo flights before crewed operations, providing extensive operational testing and data. Starliner had only two uncrewed test flights before the crewed flight, and the first of those failed to reach the station, giving less opportunity to identify and fix issues before humans were aboard. Dragon's design philosophy emphasizes redundancy and isolation; Starliner's design philosophy relies more on single-system reliability.
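The contrast between a shared pressurization source and isolated strings can be illustrated with a toy model. This is a sketch under stated assumptions, not the actual plumbing of either vehicle: the thruster counts, the source names, and the `usable_thrusters` helper are all hypothetical, and the model simply takes the description above at face value.

```python
def usable_thrusters(strings, failed_sources):
    """Count thrusters that still have pressurized propellant.

    strings: dict mapping a pressurization source name to the number of
             thrusters fed by that source (hypothetical numbers).
    failed_sources: set of source names that have lost pressure.
    """
    return sum(count for source, count in strings.items()
               if source not in failed_sources)


# Hypothetical layouts with the same total number of thrusters.
shared = {"manifold": 8}  # every thruster depends on one source
isolated = {"string_a": 2, "string_b": 2, "string_c": 2, "string_d": 2}

# One pressurization failure in each layout.
print(usable_thrusters(shared, {"manifold"}))    # 0
print(usable_thrusters(isolated, {"string_a"}))  # 6
```

In this simplified picture, a single loss of pressure removes every thruster in the shared layout but only a quarter of them in the isolated one, which is the cascade risk the answer above describes.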

What does leadership accountability mean in this context?

Isaacman's statement about leadership accountability indicated that people at NASA and Boeing who made decisions contributing to the problematic situation would face consequences. However, the space agency didn't publicly announce specific personnel actions. Accountability could involve performance evaluations, reduced responsibilities, or reassignment, though historically government agencies are quiet about these decisions. More importantly, leadership accountability also signals the need for systemic changes in how future programs are managed, with clearer decision gates, more rigorous oversight, and explicit standards that cannot be compromised by schedule pressure.

How will the Starliner investigation affect NASA's future commercial spacecraft programs?

The investigation suggests NASA needs to recalibrate its oversight approach for commercial contractors. The lighter-touch oversight model of the Commercial Crew Program allows contractor flexibility but apparently enabled corners to be cut when schedule pressure mounted. Future programs may require more direct NASA technical involvement, more rigorous gate reviews, and clearer standards that must be met before proceeding to crewed operations. However, NASA faces a balance: too much government control reintroduces the inefficiencies that the Commercial Crew Program was designed to avoid. The challenge is maintaining the benefits of commercial competition while restoring safety standards.

Why did Boeing declare the mission successful despite the thruster failures?

Boeing's assessment focused on the primary objective that was achieved: reaching the International Space Station and docking successfully. From a systems perspective, despite the propulsion issues, the spacecraft accomplished its immediate mission goal. However, this framing obscured a critical reality: the vehicle operated outside its safe operating envelope, the crew faced genuine hazards, and the more demanding deorbit and reentry phases hadn't yet occurred. The investigation found that this kind of selective success narrative—celebrating what went right while downplaying what went wrong—contributed to decision-making drift.

What happens to Starliner now that it's been classified as having had a Type A mishap?

Starliner is still technically part of NASA's crew transportation portfolio, though it's unlikely to transport crew on near-term missions. The spacecraft will require modifications to address the propulsion system deficiencies and other hardware issues identified in the investigation. After modifications, Starliner would need to undergo extensive testing and verification before NASA would consider it for another crewed mission. In the near term, SpaceX's Dragon will continue as NASA's primary crew transportation vehicle for rotating astronauts to and from the International Space Station.

How does this investigation affect the Artemis program's timeline?

The Starliner situation doesn't directly affect Artemis lunar missions, but it may have indirect effects. If NASA increases oversight and rigor for spacecraft and systems contractors (which seems likely based on Isaacman's statements), that could slow development of Artemis-related systems and increase costs. Alternatively, if Starliner issues force more time to be spent on crew transportation in low Earth orbit, that diverts resources and focus from lunar program objectives. The investigation may also make NASA's contractor selection process more rigorous and demanding, which is positive for safety but negative for schedule and budget.

TL;DR

- Type A Mishap Classified: NASA formally classified Starliner's crewed flight as a serious failure despite the spacecraft reaching the space station and docking successfully, signaling that risk to crew safety is taken extremely seriously

- Hardware and Culture Issues: The investigation found deficiencies in Starliner's propulsion design and manufacturing quality, but emphasized that systemic failures in leadership and decision-making were the most troubling problems

- Astronaut Faced Real Danger: Pilot Butch Wilmore lost control authority in multiple spacecraft axes with four aft thrusters inoperative, forcing him to contemplate whether it was riskier to continue toward the station or attempt deorbit with unreliable systems

- Decision-Making Failures: Both Boeing and NASA allowed schedule pressure and organizational incentives to compromise their standards, delaying the decision to return crew on SpaceX's Dragon by nearly three months despite private concerns

- Leadership Accountability Promised: NASA Administrator Isaacman emphasized that the most troubling failure was organizational culture and decision-making, not hardware, suggesting systemic changes are coming to commercial spacecraft oversight

Key Takeaways

- NASA classified Starliner's crewed flight as a Type A mishap despite mission success, indicating serious safety failures beneath the surface

- The investigation revealed that leadership and decision-making failures were more troubling than hardware deficiencies, suggesting systemic organizational problems

- Astronaut Butch Wilmore faced genuine danger with loss of spacecraft control authority, yet this risk wasn't immediately disclosed to the public

- Schedule pressure and institutional incentives allowed both Boeing and NASA to drift from strict safety standards over months of development

- The incident raises critical questions about NASA's oversight model for commercial spacecraft contractors and whether current approaches are sufficient

![NASA's Starliner Type A Mishap: Inside the Investigation [2025]](https://tryrunable.com/blog/nasa-s-starliner-type-a-mishap-inside-the-investigation-2025/image-1-1771538857934.jpg)