Navigating the Complex Path of Getting Depression-Detecting AI through the FDA [2025]

Artificial intelligence (AI) is revolutionizing healthcare by offering innovative solutions for diagnosing and managing mental health disorders like depression. However, getting AI-based solutions through regulatory bodies like the FDA is not straightforward. This article dives deep into the complexities of getting depression-detecting AI through the FDA, examining the challenges, providing technical details, and exploring future trends.

TL;DR

- Regulatory Hurdles: The FDA approval process for AI is complex and rigorous.

- Data Privacy Concerns: Ensuring patient data privacy is a significant challenge.

- Technical Challenges: AI models must be transparent and interpretable.

- Real-world Testing: Clinical trials are essential but challenging to implement.

- Future Trends: Emerging trends point towards more personalized AI solutions.

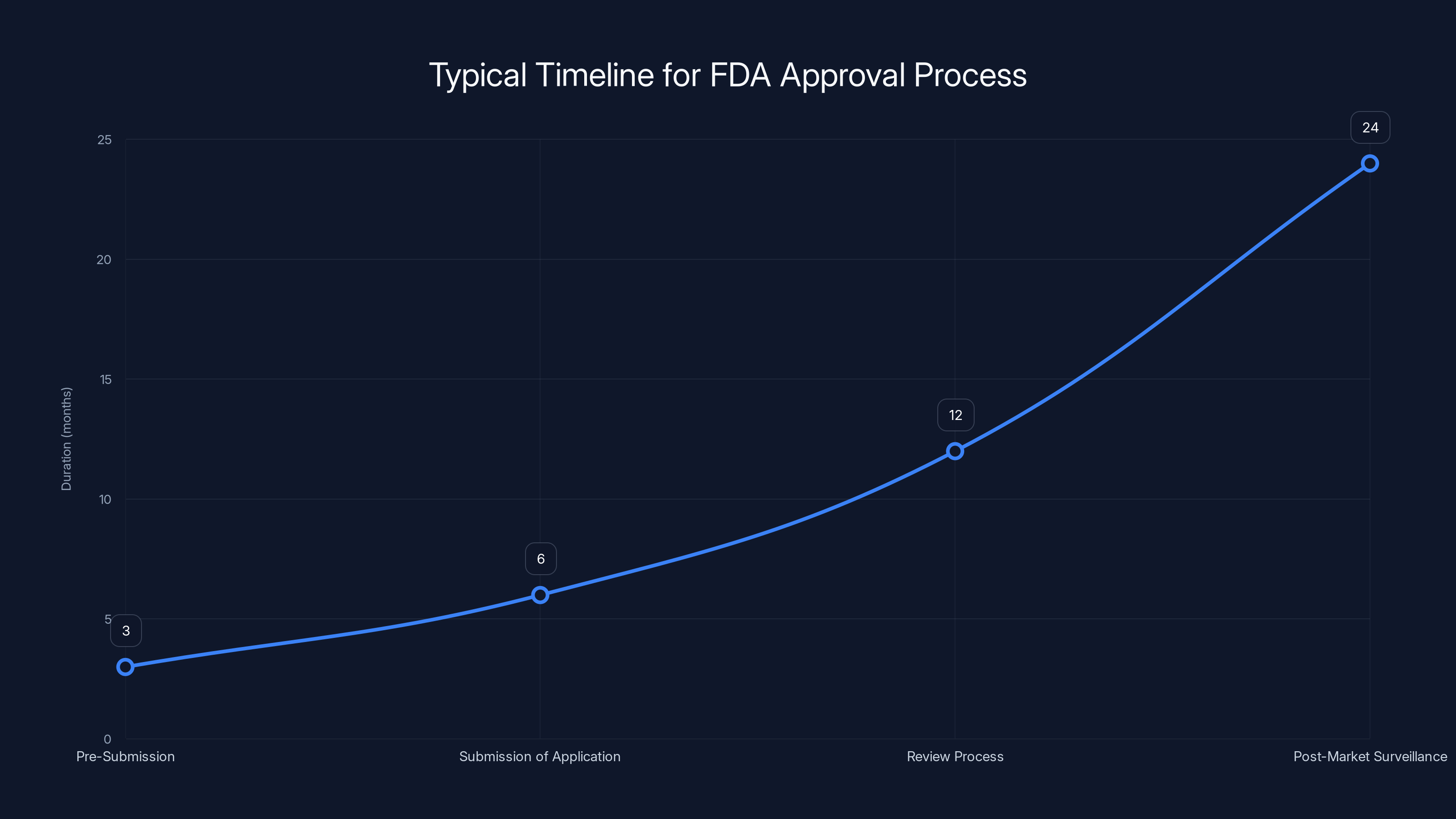

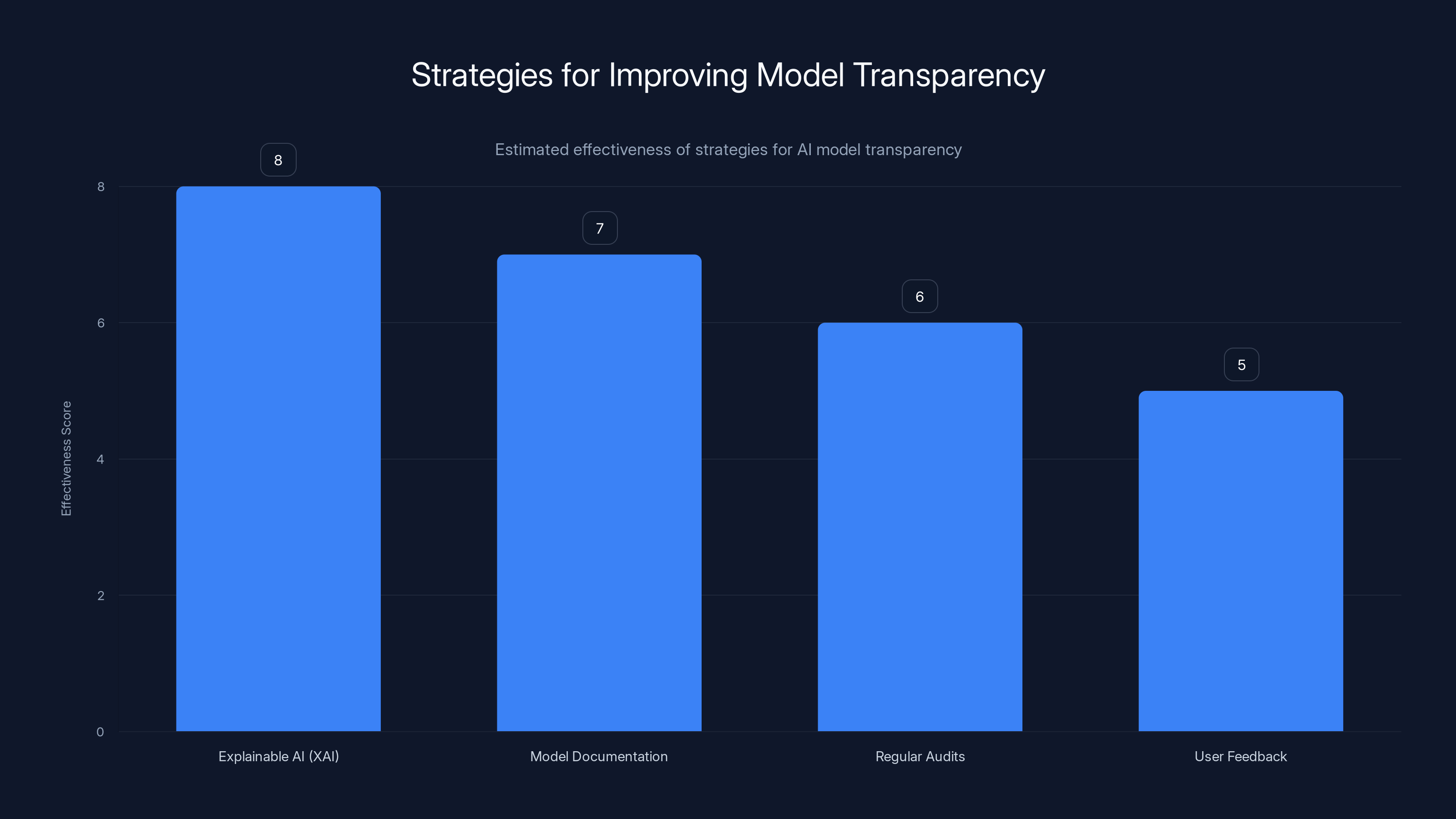

The FDA approval process for AI systems can take several years, with the review process being the most time-consuming step. Estimated data.

Understanding Depression-Detecting AI

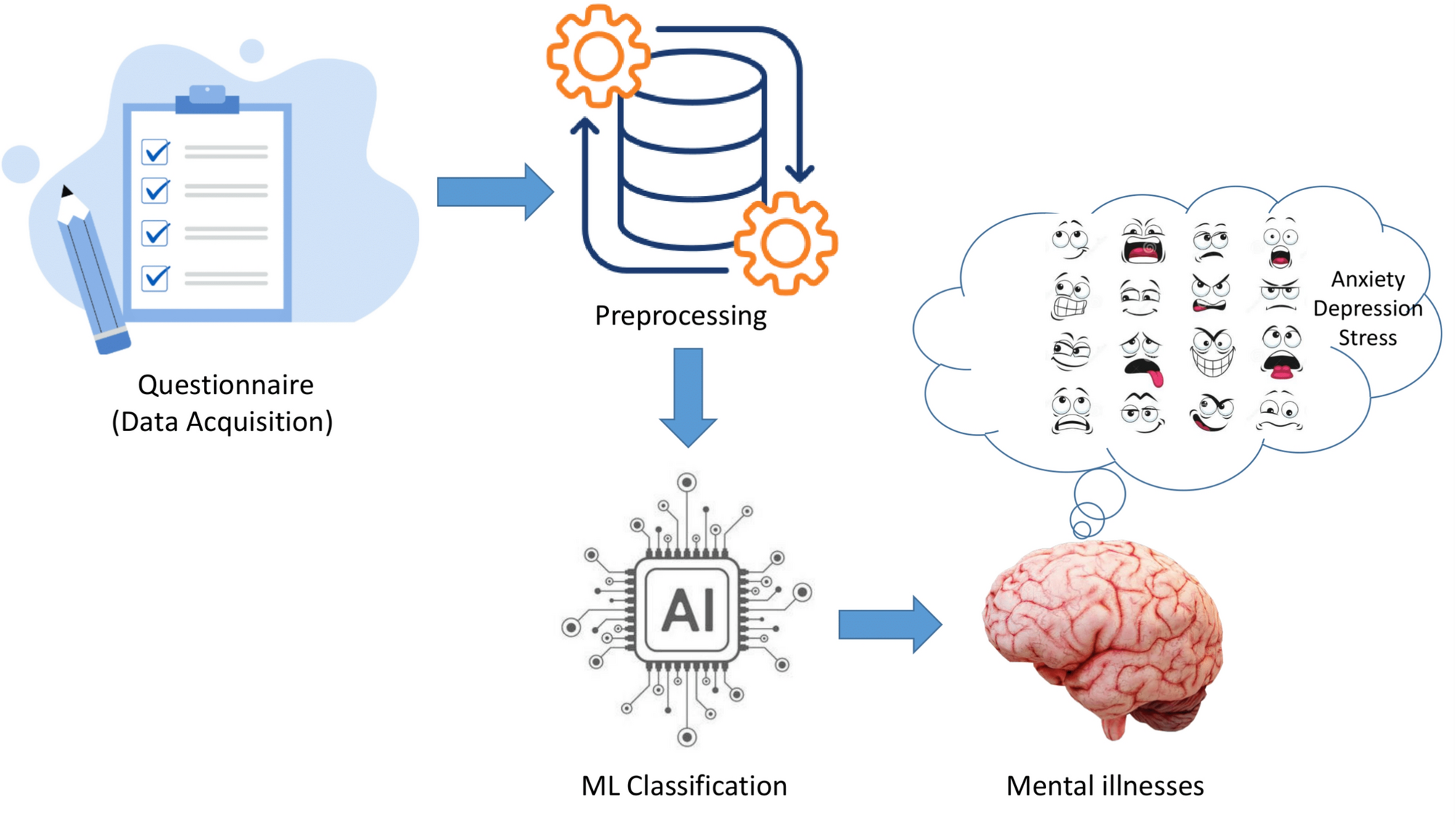

Depression-detecting AI systems leverage machine learning algorithms to identify patterns in patient data that may indicate depression. These systems process data from various sources, such as electronic health records (EHRs), patient questionnaires, and even wearable devices that track physiological responses.

How Does It Work?

The AI models analyze large datasets to identify markers of depression. For instance, a model might identify a combination of decreased physical activity, irregular sleep patterns, and changes in speech as potential indicators of depression.
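As a minimal sketch of how such markers might be combined, the snippet below feeds three features into a logistic function to produce a score between 0 and 1. The feature names, weights, and bias are hypothetical values chosen for illustration; they are not taken from any real or validated model.

```python
import math

# Hypothetical weights for illustration only; a real model would learn these
# from labeled clinical data and be validated before any clinical use.
WEIGHTS = {
    "activity_drop": 1.2,       # fractional decrease in daily steps vs. baseline
    "sleep_irregularity": 0.8,  # std. dev. of nightly sleep duration, in hours
    "speech_rate_drop": 1.5,    # fractional decrease in words per minute
}
BIAS = -2.0


def risk_score(features: dict[str, float]) -> float:
    """Return a value in (0, 1) from a logistic function over weighted features."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


patient = {"activity_drop": 0.4, "sleep_irregularity": 1.5, "speech_rate_drop": 0.2}
score = risk_score(patient)
print(f"risk score: {score:.3f}")
```

A score like this would only flag a patient for clinician follow-up; it is not a diagnosis on its own.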

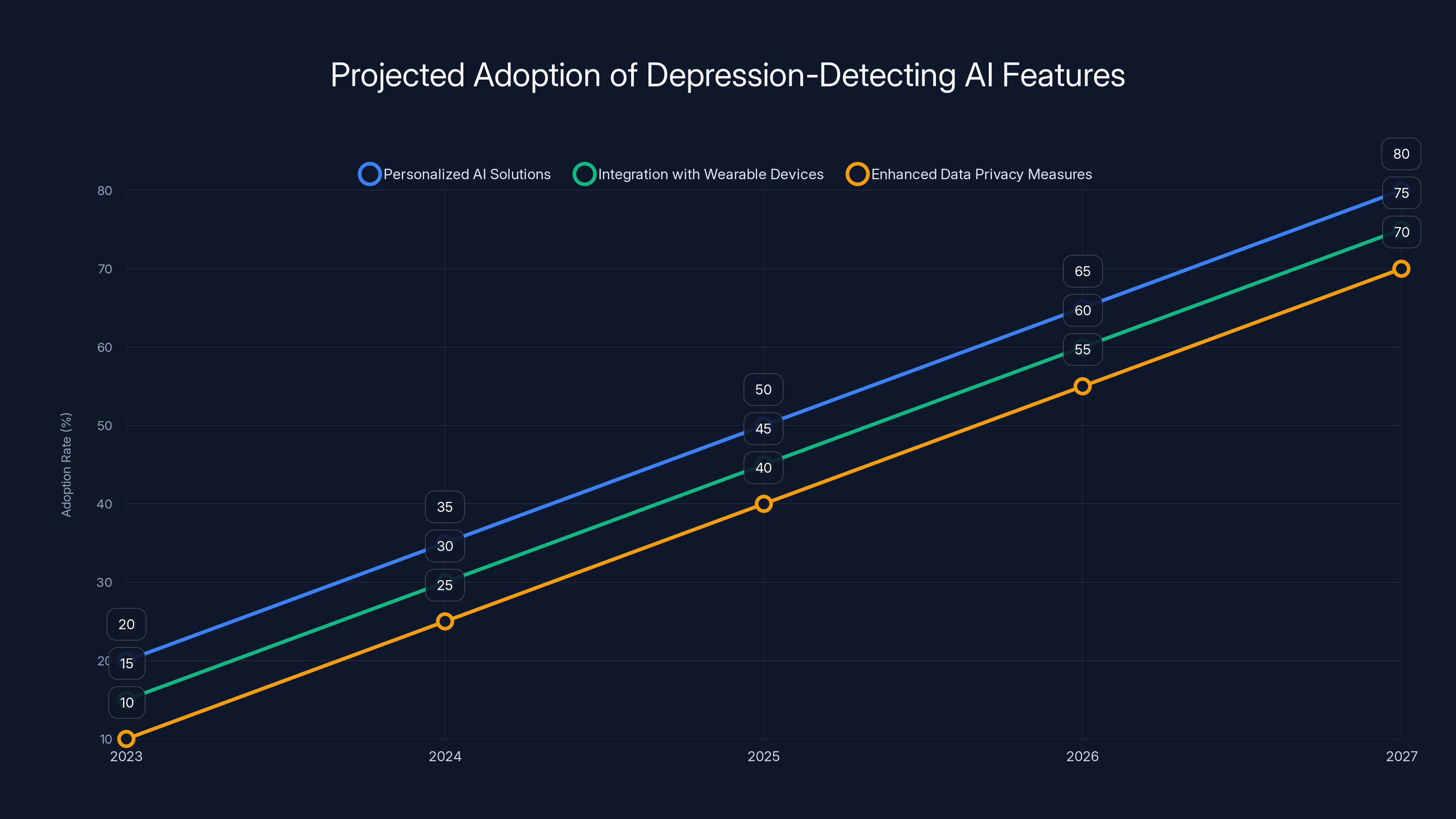

Projected trends indicate significant growth in personalized AI solutions, integration with wearables, and data privacy measures over the next five years. Estimated data.

Key Challenges in FDA Approval

1. Regulatory Hurdles

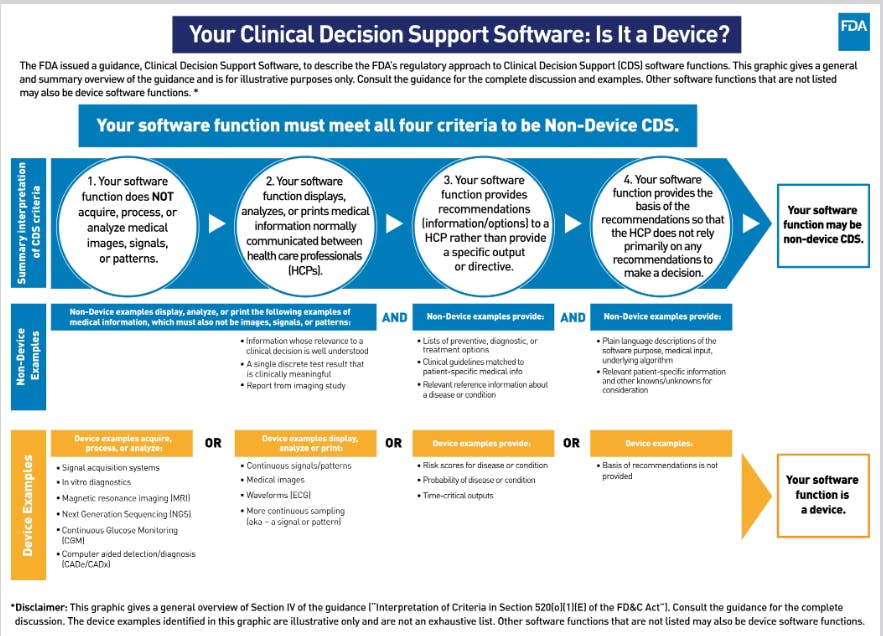

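Getting FDA approval involves navigating a complex regulatory landscape. AI-based diagnostic software is generally regulated as a medical device, and depending on its risk level and novelty it may go through 510(k) clearance, De Novo classification, or premarket approval (PMA). In each case, the FDA requires evidence that the AI system is safe, effective, and reliable for its intended use.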

Steps in the FDA Approval Process:

- Pre-Submission: Developers engage with the FDA to discuss their AI system and its intended use.

- Submission of Application: Developers submit detailed documentation, including clinical trial data.

- Review Process: The FDA evaluates the safety and efficacy of the AI system.

- Post-Market Surveillance: Continuous monitoring of the AI system's performance in real-world settings.

2. Data Privacy Concerns

AI systems require access to large datasets, raising concerns about patient privacy. Ensuring compliance with regulations like HIPAA is crucial.

Best Practices for Data Privacy:

- Anonymization: Remove personally identifiable information from datasets. HIPAA describes two de-identification methods, Safe Harbor and Expert Determination.

- Data Encryption: Use encryption to protect data both in transit and at rest.

- Access Control: Implement strict access controls to limit who can access sensitive data.
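The anonymization step above can be sketched as follows. This is a minimal example under stated assumptions: the field names (`name`, `email`, `phq9_total`, and so on) and the key are hypothetical, and replacing an ID with a keyed hash is pseudonymization, which on its own may not satisfy a formal de-identification standard.

```python
import hashlib
import hmac

# Hypothetical field names; a real pipeline would follow the de-identification
# method chosen by the organization's privacy and compliance team.
DIRECT_IDENTIFIERS = {"name", "email", "phone", "address"}


def pseudonymize_id(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient ID with a keyed hash so records stay linkable."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_identifiers(record: dict, secret_key: bytes) -> dict:
    """Drop direct identifiers and swap the patient ID for a pseudonym."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["patient_id"] = pseudonymize_id(str(record["patient_id"]), secret_key)
    return cleaned


# Placeholder key for illustration; in practice it would come from a secrets manager.
key = b"replace-with-a-key-from-a-secrets-manager"
raw = {"patient_id": "12345", "name": "Jane Doe", "email": "jane@example.com", "phq9_total": 14}
safe = strip_identifiers(raw, key)
```

Using a keyed hash (HMAC) rather than a plain hash means someone without the key cannot rebuild the mapping by hashing candidate IDs.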

Technical Challenges and Solutions

1. Model Transparency and Interpretability

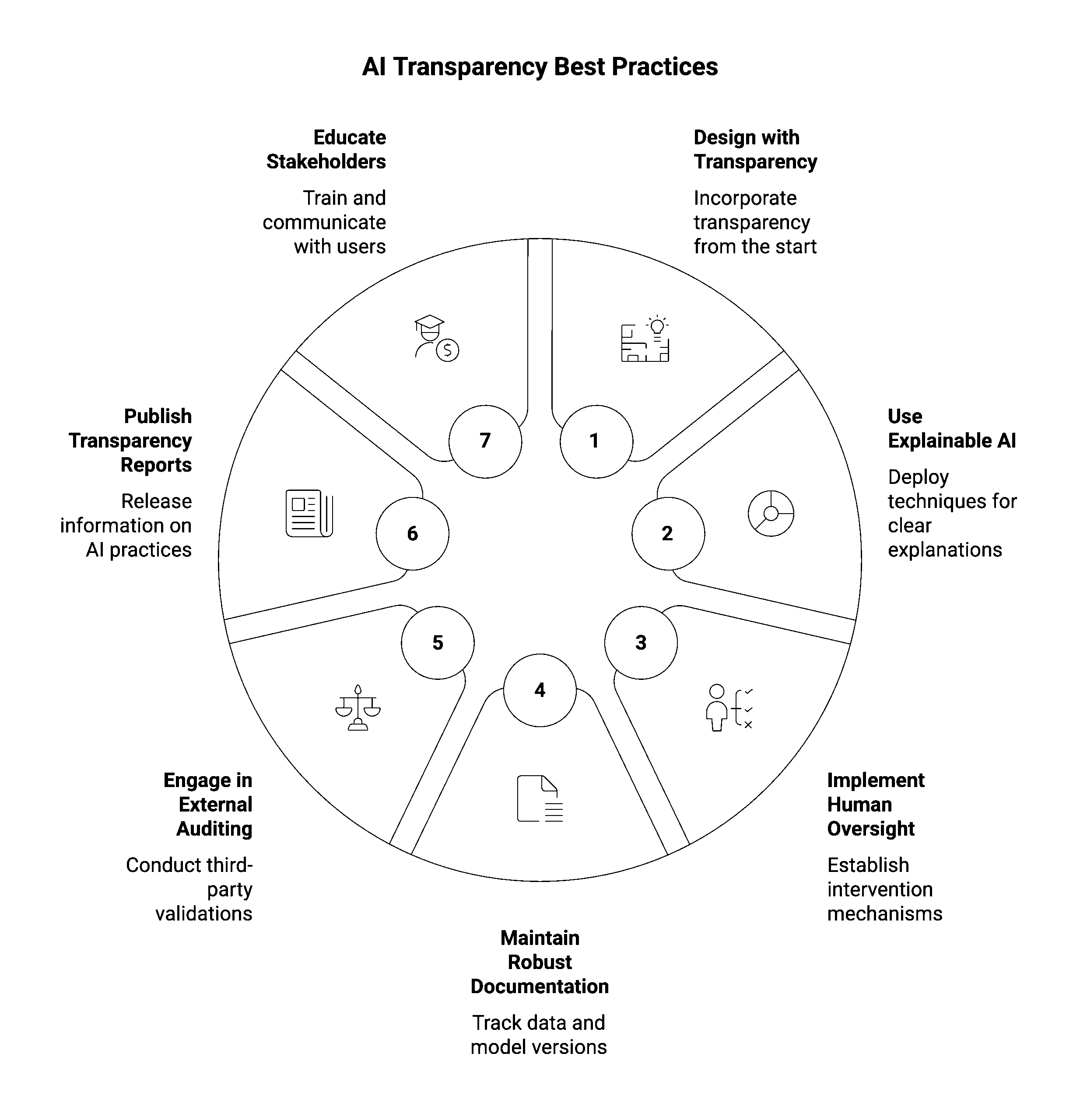

Transparency and interpretability strengthen an FDA submission. Reviewers and clinicians need to understand what data the model uses, how it arrives at its conclusions, and where it is known to perform poorly.

Strategies for Improving Model Transparency:

- Explainable AI (XAI): Use techniques that make AI decision-making processes understandable to humans.

- Model Documentation: Provide detailed documentation of how the model works and its limitations.
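One simple form of explainability, sketched below, applies to linear models: each feature's contribution is its weight times how far the patient's value sits from a baseline. The weights, baselines, and feature names here are hypothetical, and more complex models would need other attribution techniques.

```python
# Hypothetical weights and population baselines, for illustration only.
WEIGHTS = {"activity_drop": 1.2, "sleep_irregularity": 0.8, "speech_rate_drop": 1.5}
BASELINE = {"activity_drop": 0.1, "sleep_irregularity": 0.5, "speech_rate_drop": 0.05}


def explain(features):
    """Return (feature, contribution) pairs for a linear score, largest first."""
    contributions = {
        name: WEIGHTS[name] * (features[name] - BASELINE[name]) for name in WEIGHTS
    }
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


patient = {"activity_drop": 0.4, "sleep_irregularity": 1.5, "speech_rate_drop": 0.2}
for name, value in explain(patient):
    print(f"{name}: {value:+.2f}")
```

A ranked list like this gives a clinician a concrete reason to check, such as "irregular sleep drove most of this score", rather than an unexplained number.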

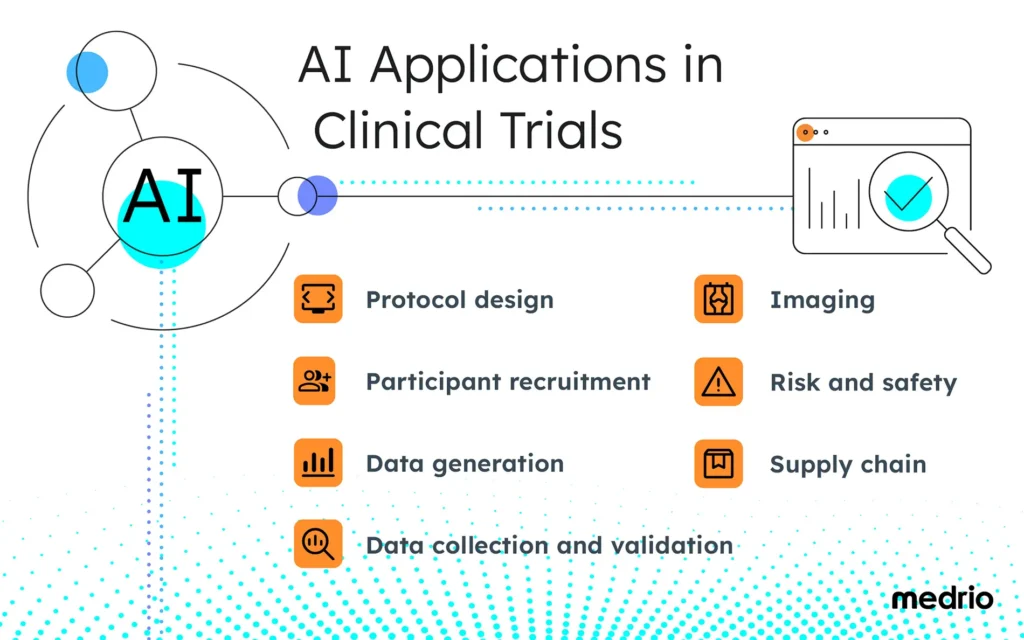

2. Clinical Trials

Conducting clinical trials for AI systems is challenging because they need large, diverse participant populations and a reliable reference diagnosis to compare the AI's output against.

Steps for Successful Clinical Trials:

- Define Clear Objectives: Determine what the trial aims to prove about the AI system.

- Recruit Diverse Participants: Ensure the trial includes a diverse population to improve the generalizability of results.

- Monitor and Adjust: Continuously monitor trial results, and adjust the trial design only under rules defined in advance so that changes do not bias the findings.
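Trial objectives for a detection system are often stated as sensitivity and specificity against a clinician's diagnosis. The sketch below computes both, with a Wilson score interval to show the uncertainty. The counts are synthetic and chosen only to illustrate the arithmetic.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


def confusion_counts(pairs: list[tuple[bool, bool]]) -> dict[str, int]:
    """pairs holds (model_flagged, clinician_diagnosed) for each participant."""
    return {
        "tp": sum(1 for pred, truth in pairs if pred and truth),
        "fn": sum(1 for pred, truth in pairs if not pred and truth),
        "tn": sum(1 for pred, truth in pairs if not pred and not truth),
        "fp": sum(1 for pred, truth in pairs if pred and not truth),
    }


# Synthetic results: 50 participants with depression, 50 without.
results = [(True, True)] * 40 + [(False, True)] * 10 + [(False, False)] * 45 + [(True, False)] * 5
c = confusion_counts(results)
sensitivity = c["tp"] / (c["tp"] + c["fn"])
specificity = c["tn"] / (c["tn"] + c["fp"])
sens_low, sens_high = wilson_interval(c["tp"], c["tp"] + c["fn"])
print(f"sensitivity {sensitivity:.2f} (95% CI {sens_low:.2f} to {sens_high:.2f})")
print(f"specificity {specificity:.2f}")
```

Running the same calculation per demographic subgroup is one way to check whether results generalize across a diverse population.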

Explainable AI (XAI) is estimated to be the most effective strategy for enhancing model transparency, followed by comprehensive model documentation. (Estimated data)

Implementation Guide

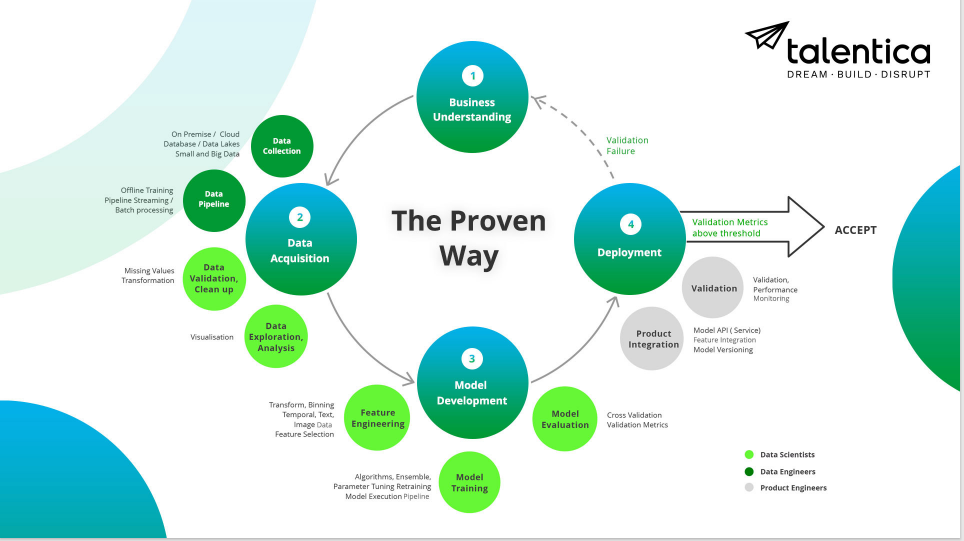

Step 1: Develop the AI Model

Start by developing a robust AI model using high-quality datasets. Train the model on diverse data so that its accuracy holds up across different patient populations.

Step 2: Conduct Preliminary Testing

Test the model in a controlled environment to identify any potential issues.

Step 3: Engage with the FDA

Initiate pre-submission discussions with the FDA to understand their requirements and expectations.

Step 4: Prepare for Clinical Trials

Design and conduct clinical trials to gather evidence of the model's effectiveness and safety.

Step 5: Submit for FDA Approval

Compile all necessary documentation and submit the application to the FDA for review.

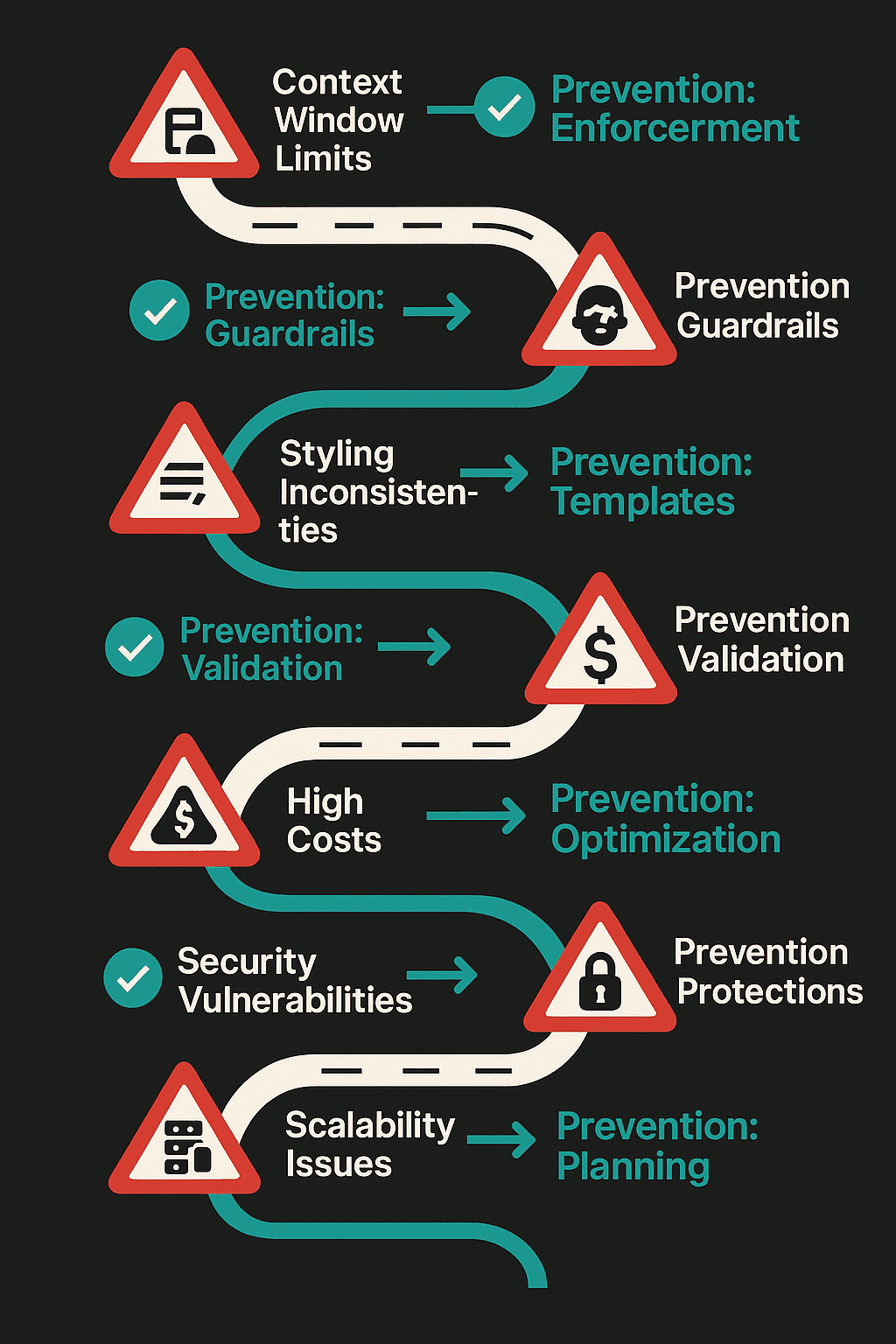

Common Pitfalls and Solutions

Pitfall 1: Inadequate Data Quality

Poor data quality can lead to inaccurate AI models.

Solution: Use rigorous data cleaning and preprocessing techniques to ensure data quality.
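A basic data quality check might look like the sketch below, which flags missing and out-of-range values before records reach training. The field names and ranges are hypothetical, apart from the PHQ-9 questionnaire total, which ranges from 0 to 27.

```python
# Hypothetical field names and plausible ranges, chosen for illustration.
VALID_RANGES = {
    "phq9_total": (0, 27),      # PHQ-9 questionnaire totals range from 0 to 27
    "sleep_hours": (0.0, 24.0),
    "daily_steps": (0, 100_000),
}


def find_problems(record: dict) -> list[str]:
    """Return human-readable data quality problems found in one record."""
    problems = []
    for field, (low, high) in VALID_RANGES.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not (low <= value <= high):
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


records = [
    {"phq9_total": 12, "sleep_hours": 6.5, "daily_steps": 4200},
    {"phq9_total": 31, "sleep_hours": None, "daily_steps": 3800},
]
clean = [r for r in records if not find_problems(r)]
for r in records:
    print(find_problems(r))
```

Logging the rejected records, rather than silently dropping them, keeps an audit trail of what was excluded and why.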

Pitfall 2: Overfitting

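AI models that are too complex may overfit the training data, performing well on it but poorly on new patients.

Solution: Use cross-validation to detect overfitting by comparing performance on training data and held-out data, and use regularization, simpler models, or more training data to reduce it.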
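Cross-validation can be sketched in a few lines. The example below splits synthetic data into five folds, fits a deliberately simple threshold model on each training split, and compares training accuracy with held-out accuracy. The data, the toy model, and the function names are all assumptions made for illustration.

```python
import random
from statistics import mean


def k_fold_indices(n: int, k: int, seed: int = 0):
    """Yield (train_idx, val_idx) pairs; every sample is held out exactly once."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, val_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx


def fit_threshold(xs, ys):
    """Toy model: a threshold halfway between the two class means."""
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (mean(pos) + mean(neg)) / 2


def accuracy(threshold, xs, ys):
    """Fraction of samples where (x > threshold) matches the label."""
    return mean(int((x > threshold) == bool(y)) for x, y in zip(xs, ys))


# Synthetic one-feature data: class 1 is shifted upward relative to class 0.
rng = random.Random(42)
xs = [rng.gauss(0.0, 1.0) for _ in range(50)] + [rng.gauss(1.5, 1.0) for _ in range(50)]
ys = [0] * 50 + [1] * 50

gaps = []
for train_idx, val_idx in k_fold_indices(len(xs), k=5):
    t = fit_threshold([xs[i] for i in train_idx], [ys[i] for i in train_idx])
    train_acc = accuracy(t, [xs[i] for i in train_idx], [ys[i] for i in train_idx])
    val_acc = accuracy(t, [xs[i] for i in val_idx], [ys[i] for i in val_idx])
    gaps.append(train_acc - val_acc)
print(f"mean train-minus-validation accuracy gap: {mean(gaps):.3f}")
```

A consistently large positive gap suggests the model is memorizing the training data rather than learning a pattern that generalizes.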

Future Trends in Depression-Detecting AI

1. Personalized AI Solutions

Future AI systems will likely provide more personalized assessments based on individual patient data.

2. Integration with Wearable Devices

AI systems will increasingly integrate with wearable devices to gather real-time data on patients' physiological states.
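As a rough sketch of what that integration could involve, the snippet below turns a week of wearable readings into two summary features. The function name, feature names, and readings are hypothetical; real devices expose data through their own vendor APIs, which are not shown here.

```python
from statistics import mean, pstdev


def weekly_features(step_counts, sleep_hours, baseline_steps):
    """Summarize a window of wearable readings into two model inputs."""
    avg_steps = mean(step_counts)
    return {
        # Fractional drop in activity relative to the person's own baseline.
        "activity_drop": max(0.0, 1 - avg_steps / baseline_steps),
        # Night-to-night variation in sleep duration, in hours.
        "sleep_irregularity": pstdev(sleep_hours),
    }


week = weekly_features(
    step_counts=[3000, 3500, 2500, 3000, 3000, 3200, 2800],
    sleep_hours=[5.0, 8.0, 6.0, 9.0, 4.5, 7.5, 6.0],
    baseline_steps=6000,
)
print(week)
```

Comparing each person against their own baseline, rather than a population average, is one way such features could support more personalized assessments.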

3. Enhanced Data Privacy Measures

As technology evolves, new methods for ensuring data privacy will emerge, making patients more comfortable sharing their data.

Recommendations for Developers

- Engage Early with Regulators: Early engagement with the FDA can help developers understand regulatory expectations and streamline the approval process.

- Invest in Explainability: Make model transparency a priority to facilitate FDA approval and improve clinician trust.

- Prioritize Data Privacy: Implement robust data privacy measures to protect patient information and comply with regulations.

Conclusion

Developing depression-detecting AI that can navigate the FDA approval process is a complex but rewarding challenge. By understanding the regulatory landscape, addressing technical challenges, and staying abreast of future trends, developers can create AI systems that improve mental health outcomes while ensuring safety and efficacy.

FAQ

What is depression-detecting AI?

Depression-detecting AI refers to systems that use machine learning algorithms to analyze data and identify potential markers of depression.

How does depression-detecting AI work?

These systems analyze data from various sources, such as electronic health records and wearable devices, to identify patterns indicative of depression.

What are the challenges in getting FDA approval for AI?

Challenges include navigating complex regulatory requirements, ensuring data privacy, and conducting clinical trials.

How can developers address data privacy concerns?

Implement anonymization, use encryption, and apply strict access controls to protect sensitive data.

What are the future trends in depression-detecting AI?

Future trends include more personalized AI solutions, integration with wearable devices, and enhanced data privacy measures.

Key Takeaways

- FDA approval for AI is complex due to stringent regulatory requirements.

- Data privacy is a significant concern with AI systems handling sensitive patient data.

- Clinical trials are essential for proving AI system efficacy but are challenging to implement.

- Future AI systems may offer more personalized assessments using real-time data.

- Developers should engage early with the FDA and prioritize model transparency.
