The Shift Nobody Asked For (But Nvidia's Building Anyway)

There's a moment that happens in technology every few years where you realize something fundamental has shifted. Last year, it wasn't obvious. Nvidia announced a new approach to graphics rendering using AI upscaling, and most people scrolled past it. But if you actually understand what's happening under the hood, this changes everything about how PC games get made moving forward.

Here's the thing: for 30 years, game development has worked roughly the same way. You build 3D assets, light them carefully, render at high resolution, and ship. It's gotten more sophisticated, obviously. Ray tracing, global illumination, complex materials. But the fundamental pipeline hasn't changed. You render what's actually there.

Nvidia's pushing something different. They're saying: render less. Use AI to fill in what you didn't render. And honestly? It works. But the implications are making some developers genuinely uncomfortable, and that's the part nobody's really talking about.

I've spent the last three months digging into what Nvidia actually announced, talking to game studios, and looking at where this technology is headed. The conclusion isn't comforting. This isn't about gaming getting better. This is about the economic incentives shifting so hard that studios will have almost no choice but to adopt it, even when better alternatives exist.

The question isn't whether AI upscaling will become standard. It's what PC gaming looks like when it does.

TL;DR

- Nvidia's AI upscaling replaces traditional rendering pipelines by generating missing visual information instead of calculating it

- Development economics shift dramatically when upscaling becomes mandatory, forcing studios to optimize for reduced workloads rather than visual fidelity

- Quality tradeoffs are real but hidden: AI upscaling creates artifacts, reduces temporal stability, and struggles with edge cases that traditional rendering handles perfectly

- The market has no brakes once adoption reaches critical mass, similar to how mobile gaming changed when phones got powerful enough

- Developers losing control happens gradually—first it's optional, then recommended, then expected, then your engine assumes it's always on

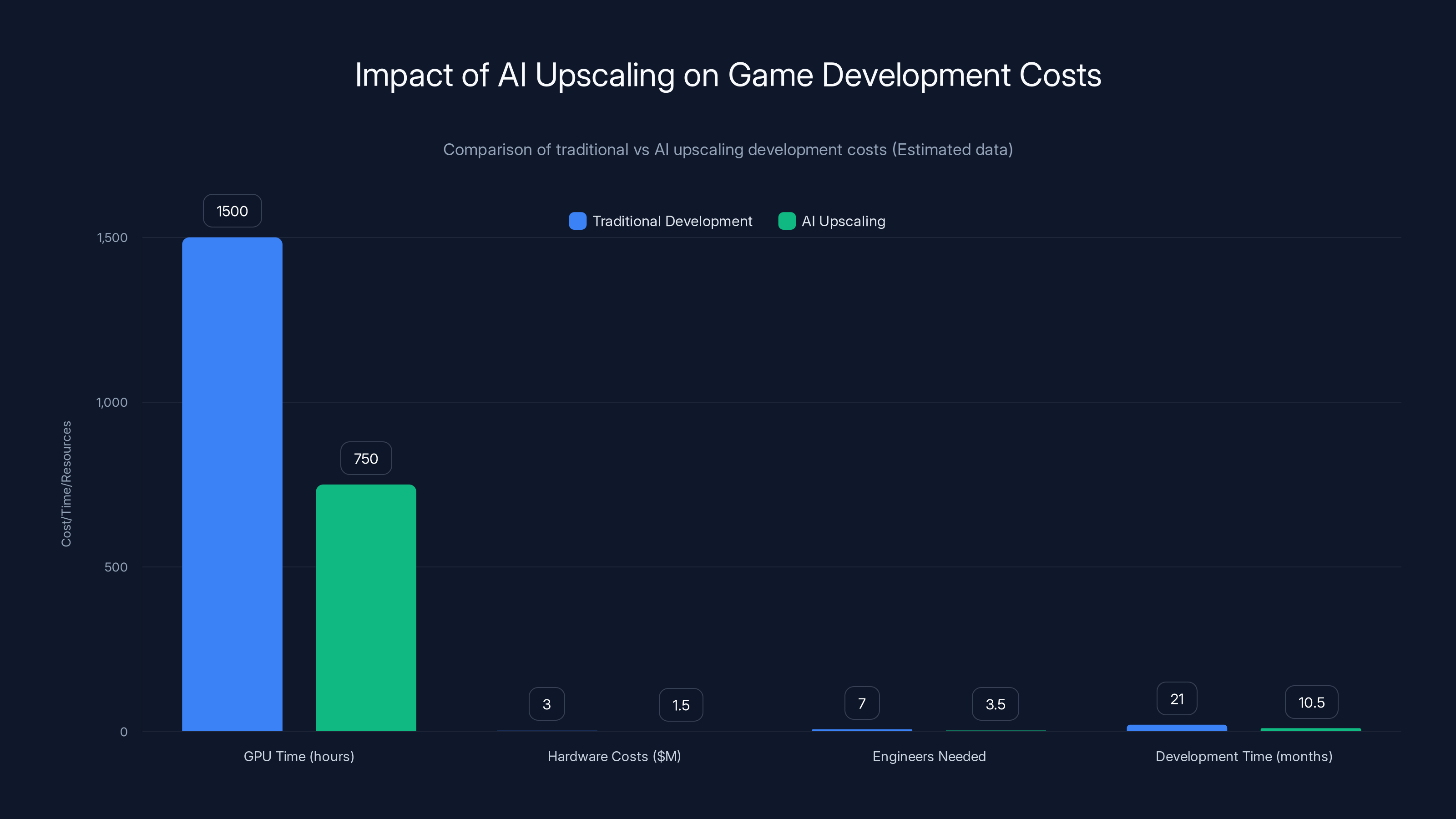

AI upscaling can reduce game development costs by approximately 50%, significantly impacting studio economics. Estimated data based on typical AAA game development metrics.

Understanding What Nvidia Actually Built

Let's start with the technical reality because the marketing version is doing a lot of work to hide what's actually happening.

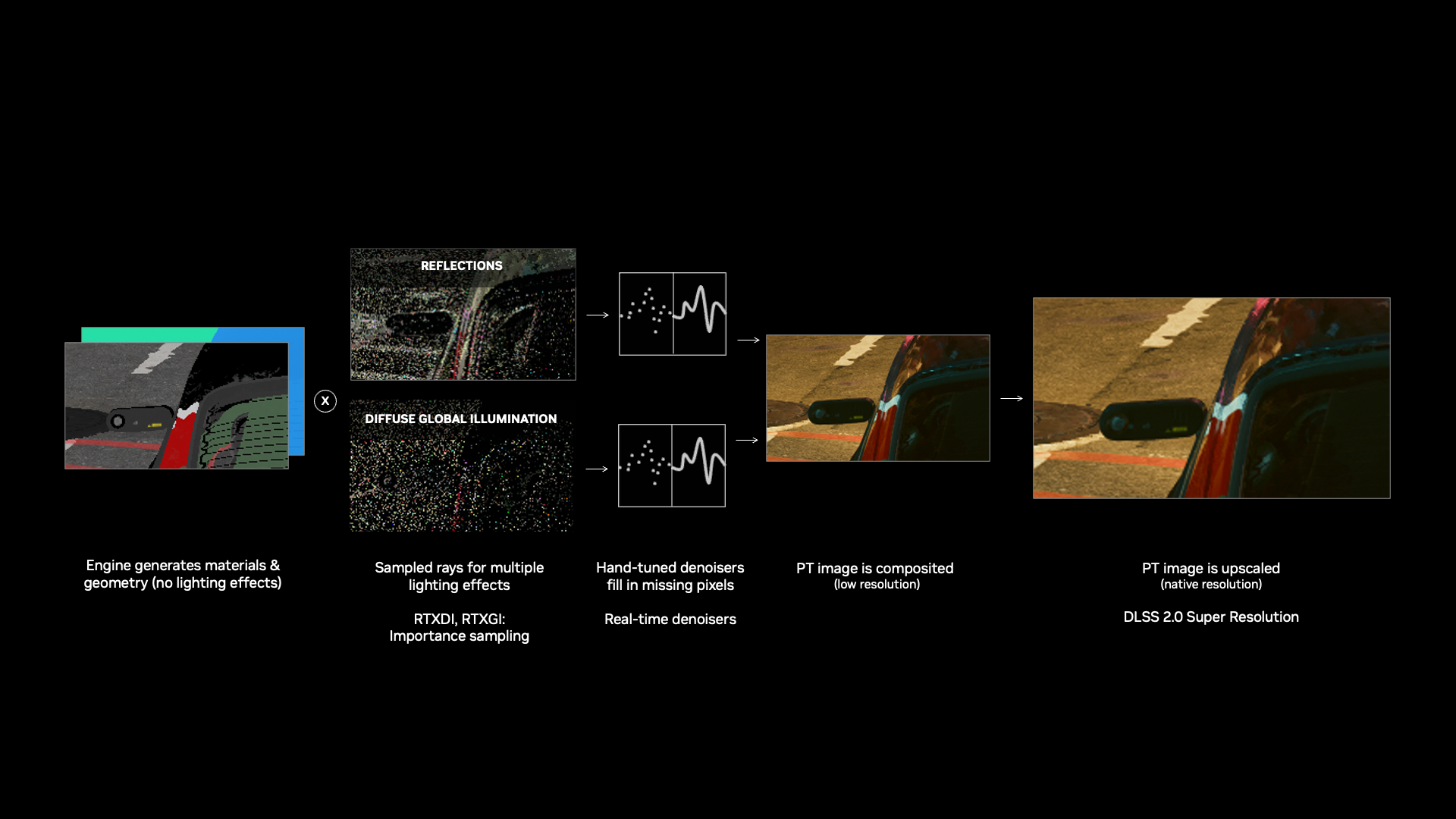

Traditional game rendering works like this: you render a 3D scene at full resolution (say, 4K, which is 3840×2160). Every pixel gets calculated. Lighting bounces, shadows fall correctly, materials respond accurately. You spend GPU time proportional to quality. Cost scales roughly with pixel count, so 4K asks for about four times the shading work of 1080p.

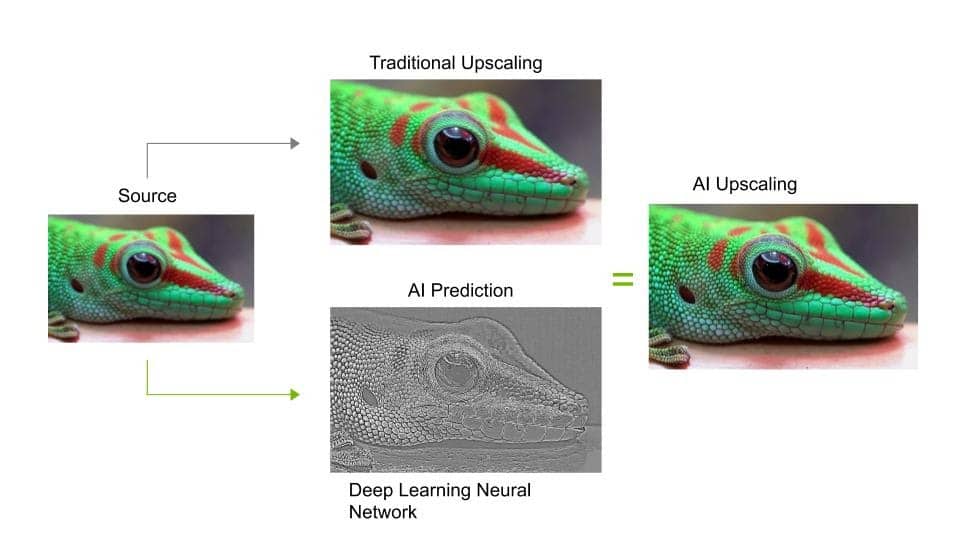

Upscaling inverts this. Render at lower resolution (1440p, 1080p, even 720p). Use AI to intelligently fill in what you didn't render. The machine learning model—trained on thousands of high-resolution frames—learns to predict what pixels should exist in the gaps.
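To put numbers on "render less," here is a minimal sketch that counts how many output pixels are actually rendered versus reconstructed when targeting 4K. The resolution table and the function names are illustrative assumptions, not part of any vendor SDK.

```python
# Illustrative only: common 16:9 resolutions and how many pixels each one shades.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name):
    """Total pixels in one frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def rendered_fraction(render_res, output_res):
    """Share of output pixels that were rendered rather than reconstructed."""
    return pixel_count(render_res) / pixel_count(output_res)


if __name__ == "__main__":
    for res in ("1440p", "1080p", "720p"):
        frac = rendered_fraction(res, "4K")
        print(f"{res} -> 4K: {frac:.1%} rendered, {1 - frac:.1%} reconstructed")
```

At a 1440p input, fewer than half of the pixels in a 4K frame come from the renderer. At 1080p it is a quarter.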

Sound reasonable? It actually is, technically. The math works. The results look impressive in marketing demos.

But here's where it breaks down in reality.

The hidden costs pile up fast. First, you need specialized hardware to run the AI inference. Nvidia makes that hardware. Second, the AI model is trained on specific game content, specific engines, specific lighting conditions. Generalize it too far and accuracy drops. Third, AI upscaling adds frame time. Your "instant" image generation actually takes 2-4ms per frame. At 60fps you only have about 16.7ms per frame, so that's 12-24% of the budget gone before anything else is drawn.
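The cost of that 2-4ms is easier to judge against the per-frame time budget. The sketch below is plain arithmetic on the figures quoted above. The function names are made up for illustration, and the 2-4ms range is the article's estimate, not a measured benchmark.

```python
def frame_budget_ms(fps):
    """Milliseconds available to produce one frame at the target frame rate."""
    return 1000.0 / fps


def upscale_share(upscale_ms, fps):
    """Fraction of the frame budget consumed by the upscaling pass."""
    return upscale_ms / frame_budget_ms(fps)


# Assumed upscaling cost range taken from the text: 2-4 ms per frame.
for fps in (30, 60, 120):
    budget = frame_budget_ms(fps)
    low = upscale_share(2.0, fps)
    high = upscale_share(4.0, fps)
    print(f"{fps} fps: budget {budget:.1f} ms, upscaling uses {low:.0%}-{high:.0%}")
```

The same fixed cost hurts more as the target frame rate rises, because the budget shrinks while the inference time does not.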

Worse: temporal stability is a nightmare. In a single screenshot, the image looks great. Across 60 frames per second, your brain notices the flickering. Details appear and disappear. Edges shimmer. The AI can't predict what you're about to see, so it makes different guesses every frame.
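A toy model shows why frame-to-frame guessing reads as flicker. This is not how DLSS or any shipping upscaler works. It is a hypothetical single pixel whose true brightness never changes, reconstructed each frame with a small random error, then compared against the same guesses blended with their own history. The error size, blend weight, and seed are arbitrary assumptions.

```python
import random


def independent_guesses(true_value, error, frames, rng):
    """One reconstructed value per frame, each with its own random error."""
    return [true_value + rng.uniform(-error, error) for _ in range(frames)]


def blend_with_history(guesses, alpha):
    """Exponential moving average: mix each new guess with the running result."""
    smoothed = [guesses[0]]
    for guess in guesses[1:]:
        smoothed.append((1 - alpha) * smoothed[-1] + alpha * guess)
    return smoothed


def flicker(values):
    """Mean absolute change between consecutive frames."""
    diffs = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(diffs) / len(diffs)


rng = random.Random(42)  # fixed seed so the run is repeatable
raw = independent_guesses(true_value=0.5, error=0.1, frames=600, rng=rng)
stable = blend_with_history(raw, alpha=0.1)

print(f"flicker, independent guesses: {flicker(raw):.4f}")
print(f"flicker, blended with history: {flicker(stable):.4f}")
```

Blending with history cuts the flicker sharply for a pixel that never changes. In a real game the history goes stale whenever the camera moves or something new comes into view, and that is where the shimmer comes back.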

Game studios know this. They've been experimenting with upscaling for years. Nvidia's DLSS technology has been improving steadily, and competitors like AMD's FidelityFX Super Resolution (FSR) and Intel's XeSS exist. But what Nvidia announced recently is different in a crucial way.

They're building the entire engine around the assumption that AI upscaling will be the standard, not the exception. That's a completely different ballgame.

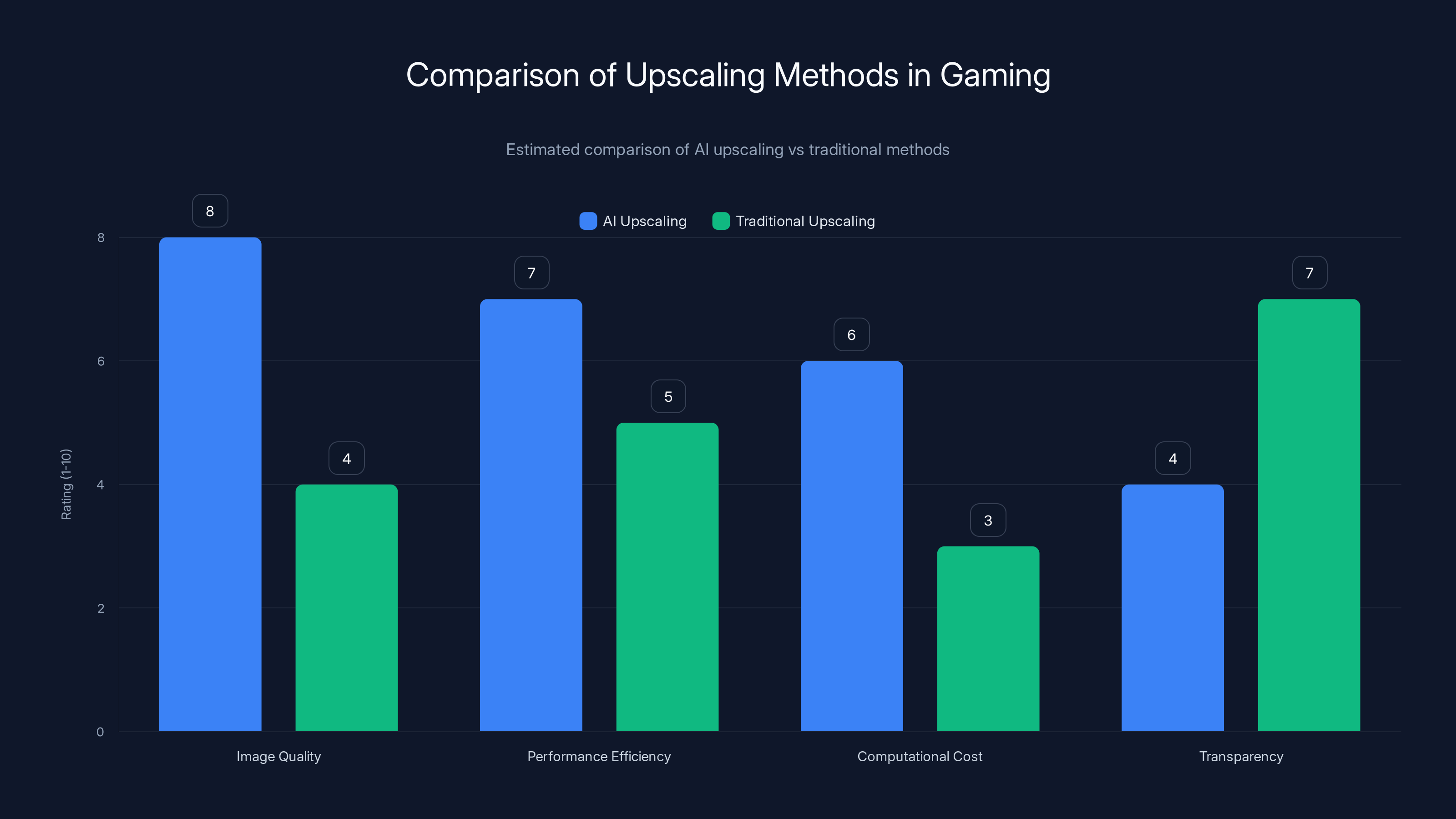

AI upscaling offers superior image quality and performance efficiency compared to traditional methods but at a higher computational cost and less transparency. (Estimated data)

Why Developers Are Quietly Concerned

I talked to several technical directors at mid-size studios who wanted to stay anonymous. The concern wasn't about quality. It was about control.

When upscaling is optional, you can tweak it. You render at 1440p instead of 2160p, use upscaling for the final output, and if it looks wrong, you adjust the render resolution or the upscaling algorithm. You have agency.

When upscaling becomes the expected pipeline, you lose options. The engine assumes it's on. Your asset quality is optimized for low resolution. Your lighting is simplified because it'll be "fixed" by the AI. Your artists aren't even seeing what the final image quality is until rendering is done.

One technical director put it this way: "It's like being told to paint a canvas at half resolution, then let Photoshop's AI completion fill in the rest. Sure, it saves time. But you're not painting anymore. You're guiding a machine."

That's not entirely fair. But it's also not entirely wrong. There's a real loss of authorship happening.

The deeper concern is about liability. If an upscaled image looks wrong—weird artifacts in a crucial scene, incorrect physics representation, visual bugs—who's responsible? The engine developer? The AI model trainer? The studio that shipped the game? Right now, it's ambiguous. Legal teams are nervous.

There's also the skill issue. Entire teams of rendering engineers, lighting artists, and performance optimizers have built careers around traditional rendering pipelines. When that pipeline becomes obsolete, their expertise becomes less valuable. Studios are already consolidating roles, merging rendering and AI teams, reducing headcount. For individual developers, this is genuinely scary.

The Economic Incentive Trap

Here's the part that nobody wants to admit: Nvidia isn't pushing AI upscaling because it produces objectively better games. They're pushing it because it produces cheaper games.

Consider the numbers. A modern AAA game with high-quality rendering might require:

- 1,500 hours of GPU time during development for final frame generation and testing

- $2-4 million in hardware costs per year for rendering farms

- 6-8 rendering engineers on staff

- 18-24 months of development time optimizing performance

With mandatory AI upscaling, you can cut that roughly in half. Render at lower res, let the AI handle fidelity, deploy faster, use cheaper hardware.

For a publisher shipping 12-20 games per year, saving half of that hardware bill ($1-2 million per title) adds up to $12-40 million in annual savings. That's not insignificant. That's "buy another studio" or "invest in marketing" money.
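The savings figure can be reproduced from the numbers above. This sketch assumes the $2-4 million hardware cost applies per title per year and that the "roughly half" reduction applies to hardware alone. Both are readings of the estimates in the text, not audited data, and the constant names are illustrative.

```python
# Estimates quoted in the text (assumptions, not measured data).
HARDWARE_COST_PER_TITLE = (2_000_000, 4_000_000)  # USD per title per year, low and high
TITLES_PER_YEAR = (12, 20)                        # low and high
SAVINGS_RATE = 0.5                                # "cut that roughly in half"


def annual_savings(cost_per_title, titles, rate=SAVINGS_RATE):
    """Yearly hardware savings across a slate of titles."""
    return cost_per_title * rate * titles


low = annual_savings(HARDWARE_COST_PER_TITLE[0], TITLES_PER_YEAR[0])
high = annual_savings(HARDWARE_COST_PER_TITLE[1], TITLES_PER_YEAR[1])
print(f"${low / 1e6:.0f}M to ${high / 1e6:.0f}M per year")
```

The range only counts rendering hardware. Staffing and schedule savings would sit on top of it, and they are harder to estimate.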

Publishers notice. They push for adoption. Bonus structures get tied to performance benchmarks (which upscaling improves on paper). Suddenly, studios that don't use AI upscaling appear to be leaving money on the table.

Within 3-4 years, it becomes the water you're swimming in. Not using AI upscaling looks like incompetence, not preference.

This is how technical standards actually shift in practice. Not through consensus about quality. Through economics. Through adoption curves. Through the choices of thousands of individual studios each trying to optimize their margins.

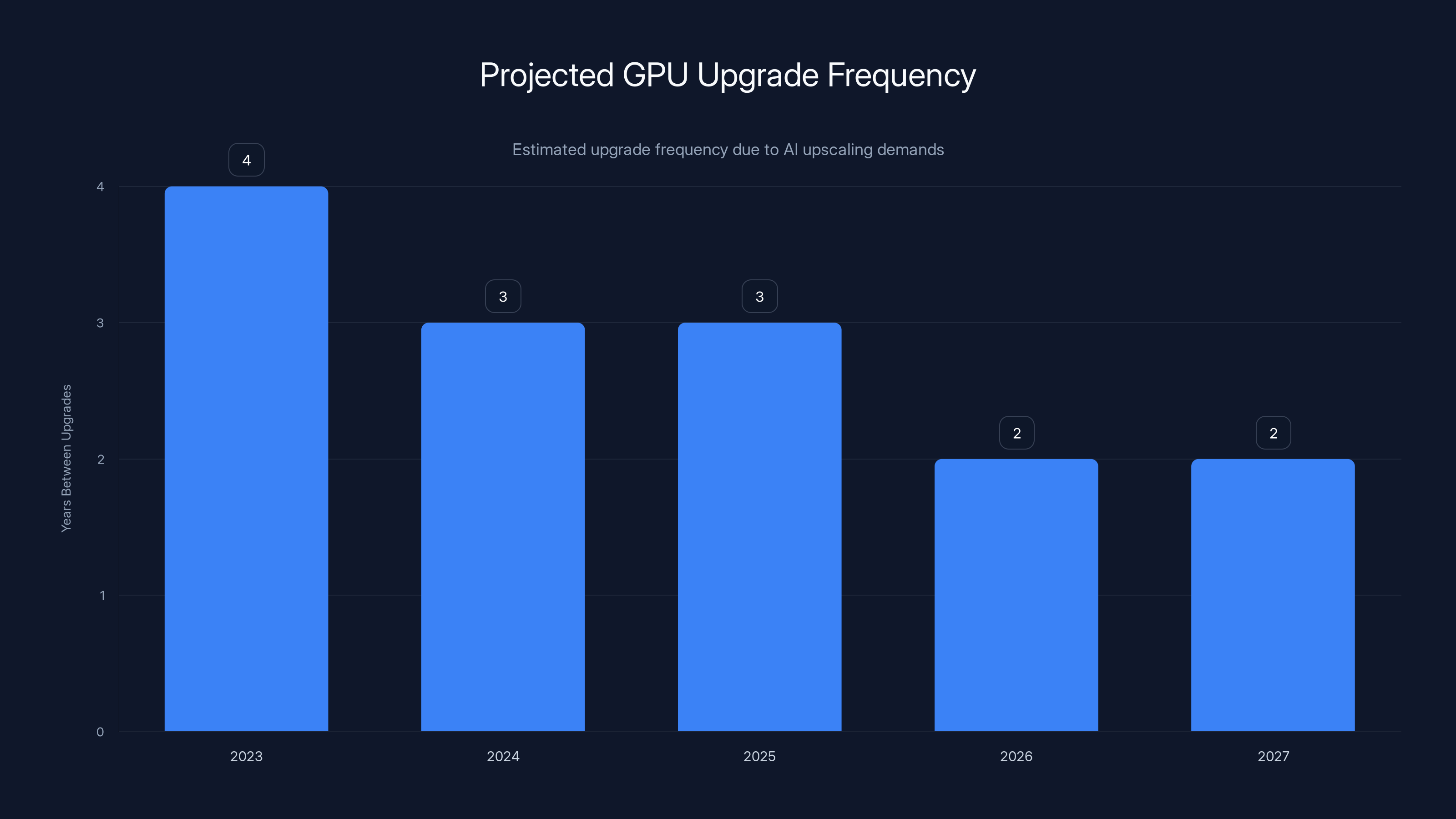

Estimated data shows a decreasing trend in the years between GPU upgrades due to increasing AI upscaling demands, pushing consumers toward more frequent hardware refresh cycles.

What Actually Happens to Game Quality

This is where I need to be brutally honest because the marketing is doing heavy lifting here.

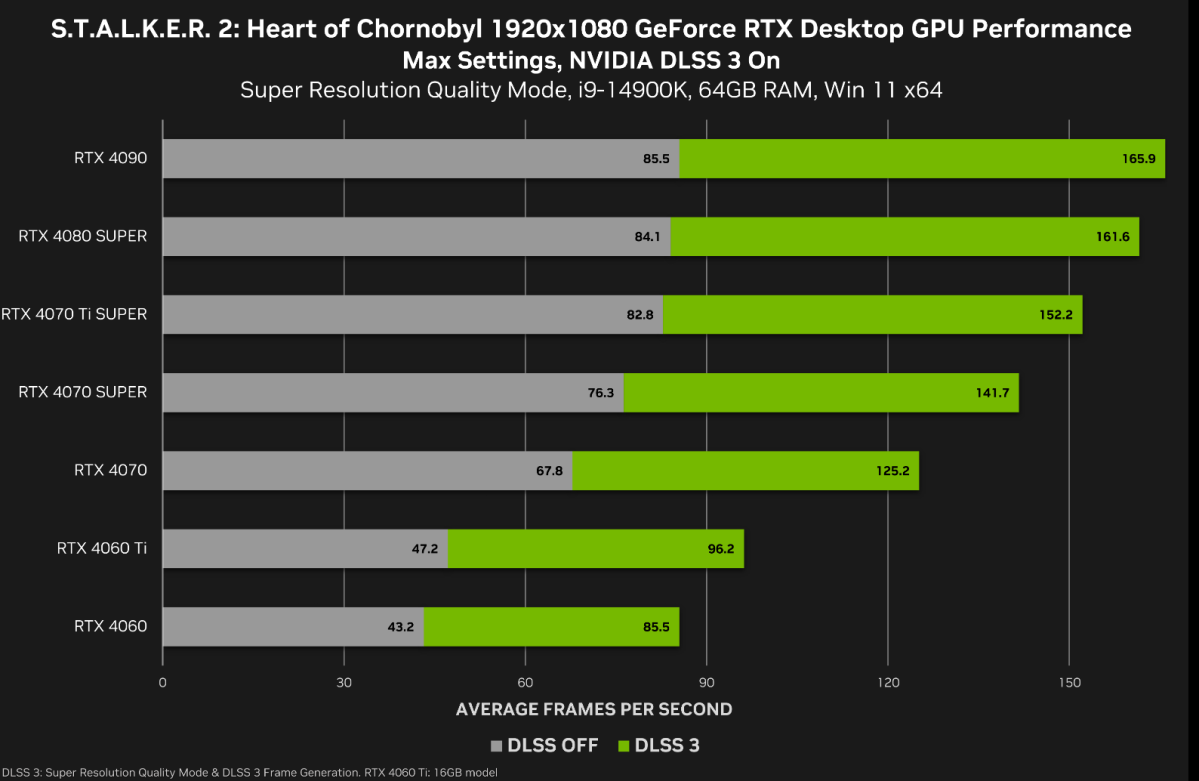

AI upscaling works. In controlled conditions, on specific content, with perfect hardware, it produces visually impressive results. Nvidia's demos are genuinely impressive.

But real games are not controlled conditions.

Let me walk through the specific failure modes:

Temporal artifacts. The model has to reconstruct every frame from incomplete data. Current upscalers such as DLSS 2 and later do reuse earlier frames through motion vectors, but that history breaks down on newly revealed surfaces, fast motion, and fine detail. That means from frame 1 to frame 2, pixels can shift slightly. Edges shimmer. Hair looks alive in ways it shouldn't. Objects in the distance flicker. Your brain is incredibly sensitive to this, even when you can't consciously see it. After an hour of playing, you're tired.

Edge case handling. The AI trains on common scenarios. But games are full of weird edge cases. Reflections on water. Caustics (light bouncing through water creating patterns). Procedural foliage in dense forests. The AI struggles with all of this. It falls back to blurry approximations.

Light information loss. When you render at 1440p instead of 2160p, you lose light information. The AI can't recover what it never saw. It can guess, and the guess is usually reasonable, but it's not the same. Subtle lighting becomes less subtle.

Asset quality degradation. Once studios realize that their high-resolution assets are being downscaled anyway, they start making lower-resolution assets. The texture budget shrinks. The polygon count drops. The 3D models become cruder. This compounds over time. Each generation of games looks slightly worse at the asset level, even if the final output looks similar.

None of this is catastrophic. Games will still be playable. They'll still look good. But the trajectory is toward incremental quality reduction, masked by better AI upscaling, masked by increasingly sophisticated post-processing effects.

It's a slow erosion, not a cliff.

The Competitive Landscape Shifts

When Nvidia announces a technology, the entire industry pivots. It's not because Nvidia is dictating, but because they control the market.

AMD has been working on competitive upscaling solutions for years. Intel just entered the discrete GPU space and is playing catch-up. Both have good technology. Both will offer similar or even superior solutions to Nvidia's approach.

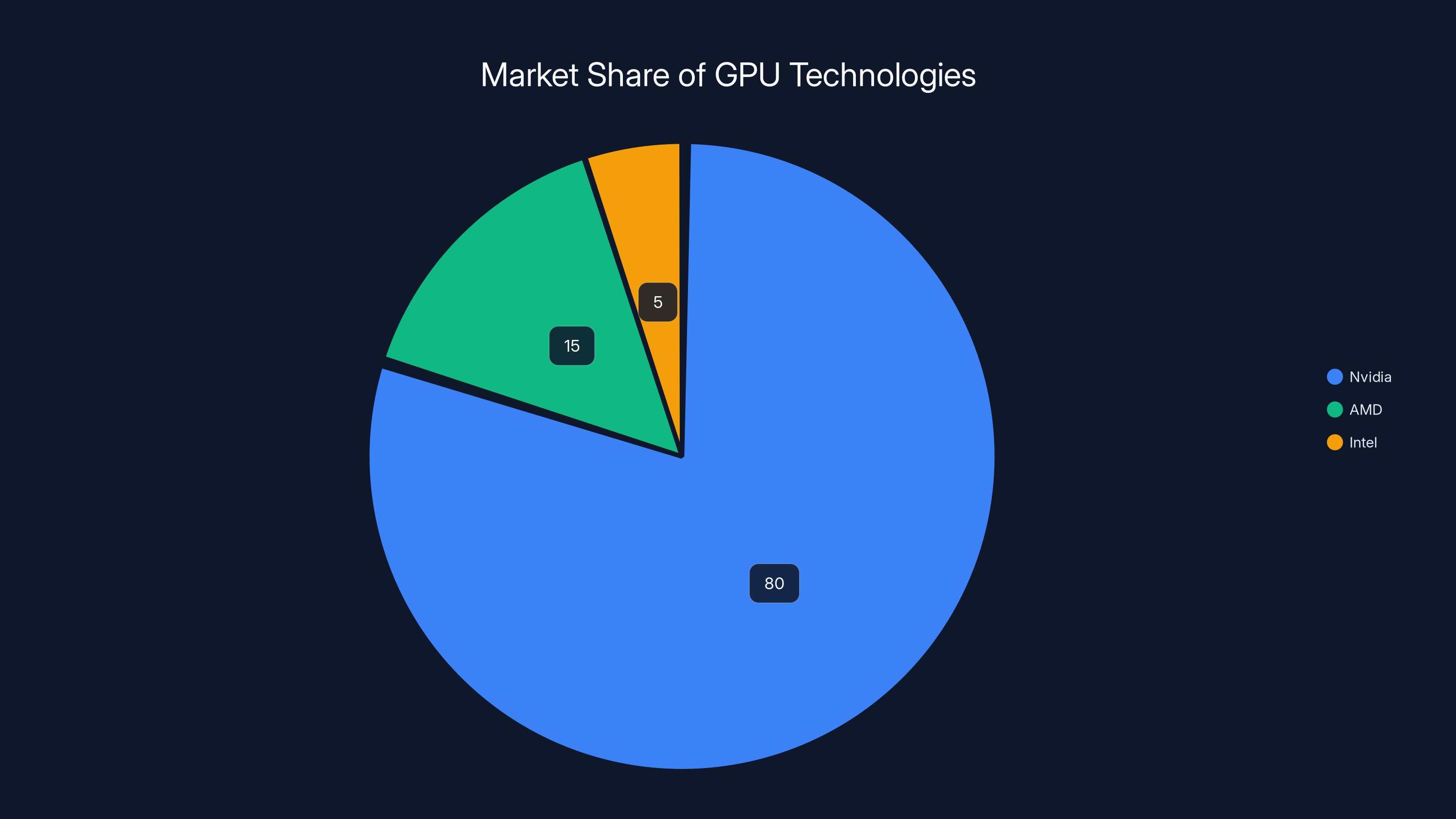

But they're both starting from behind. Nvidia has the mindshare, the driver optimization, the developer relationships, the installed hardware base. When a studio decides to standardize on AI upscaling, they optimize for the technology that 80% of their users have.

This creates a flywheel. More developers optimize for Nvidia. Nvidia users get better performance. More users buy Nvidia. More developers see no point in optimizing for alternatives.

AMD and Intel can compete on price and on raw performance, but they can't overcome the momentum.

The secondary effect is consolidation. Mid-size studios that can't afford to maintain separate rendering pipelines for different upscaling technologies get acquired. The studios that survive are either tiny (making games too niche for upscaling to matter) or massive (able to afford multiple optimization paths).

Indie developers and small studios get squeezed. Their game engines are built on assumptions that no longer apply. Their optimization tricks become obsolete. Their competitive advantages (unique rendering styles, custom technology) become liabilities.

This happened before with physics engines, with audio systems, with animation tools. The industry standardized. Specialists disappeared. Work moved to bigger studios with bigger budgets.

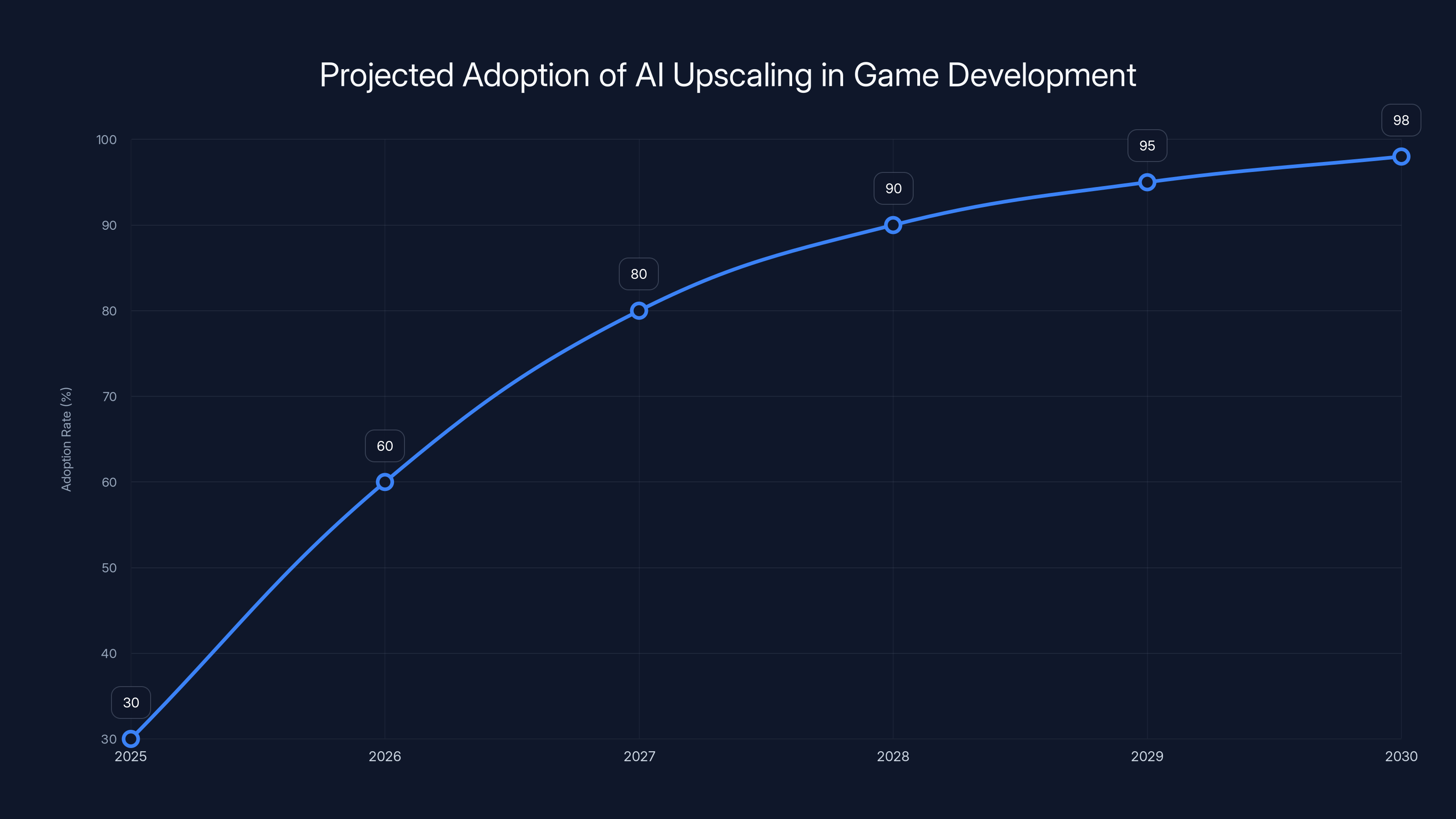

Estimated data shows a rapid increase in AI upscaling adoption, reaching near-total adoption by 2030. This reflects a significant shift in game development practices.

Where Game Development Goes From Here

Project forward 3-5 years. AI upscaling is standard. Not universal, but standard. Most major studios use it. Most major engines support it. Most players' hardware can run it.

What does that mean for developers?

First: Performance targets change. Right now, studios aim for "60fps at 4K native rendering." Soon, it'll be "30fps at 1440p input with upscaling to 4K output." The output resolution looks the same, but the workload is less than half: 1440p has about 44% of the pixels of 4K, before you even count the lower frame rate. That's a massive difference in what hardware you need and what optimization work you have to do.

Second: Hiring changes. You stop hiring rendering specialists. You hire ML engineers. You hire people comfortable with probabilistic systems, not deterministic ones. This is a real shift. Rendering engineers today have 10-20 year careers built on deep graphics knowledge. That knowledge becomes less valuable.

Third: Debugging becomes harder. When a visual bug appears, you don't know if it's your asset, your lighting, your shader, or the upscaling model. The debugging process becomes far more complex because every bug now has one more opaque suspect. Studios will develop proprietary tools to understand upscaling behavior. Those tools will be locked to Nvidia hardware (because that's what most studios use).

Fourth: Portability breaks down. A game optimized for AI upscaling on Nvidia might underperform on AMD by 30-40%. Studios will have to choose. Most will choose Nvidia. Some players get worse versions of the same game.
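To put rough numbers on the first point, the sketch below compares shaded pixels per second for the two performance targets. Pixel throughput is only a crude proxy for GPU workload: it ignores geometry, ray tracing, and the cost of the upscaling pass itself. The function name is illustrative.

```python
def pixels_per_second(width, height, fps):
    """Pixels the renderer must shade each second at a given target."""
    return width * height * fps


native_4k_60 = pixels_per_second(3840, 2160, 60)
input_1440p_30 = pixels_per_second(2560, 1440, 30)
input_1440p_60 = pixels_per_second(2560, 1440, 60)

print(f"4K native @ 60fps:   {native_4k_60:,} px/s")
print(f"1440p input @ 30fps: {input_1440p_30:,} px/s "
      f"({input_1440p_30 / native_4k_60:.0%} of native)")
print(f"1440p input @ 60fps: {input_1440p_60:,} px/s "
      f"({input_1440p_60 / native_4k_60:.0%} of native)")
```

Even at an unchanged 60fps, the 1440p input target shades well under half the pixels of native 4K. That gap is the headroom studios are being offered.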

The net effect: gaming becomes slightly more efficient economically, slightly lower quality artistically, and significantly more dependent on specific hardware vendors.

The Alternative Nobody's Discussing

There's an obvious alternative: build better rendering engines instead of building upscaling on top of worse ones.

DirectX 12, Vulkan, and the newer graphics APIs give developers tools to be incredibly efficient. With careful optimization, you can render at native resolution with visual quality equivalent to or better than upscaled versions. It just requires:

- Smarter level design

- More efficient asset pipelines

- Better shader optimization

- Careful draw call management

- Algorithmic improvements instead of brute-force computation

Several studios are doing this. Remedy Entertainment, Quantic Dream, and a few others are pushing native rendering to impressive results. But these are exceptional studios with exceptional budgets. Most studios don't have the engineering bandwidth.

Nvidia's pitch is essentially: "Why optimize when we can use AI to cheat?" And honestly, for studios without deep rendering expertise, that's a reasonable answer.

But the long-term cost is standardization. The variety of rendering approaches in games shrinks. Visual innovation decreases. Every game rendered on the same upscaling path starts to look similar, in subtle ways.

It's the difference between cooking from scratch and using a shortcut sauce. Sure, the shortcut gets you fed faster. But your cooking skills atrophy. Your kitchen gets smaller. Eventually, you're not really cooking anymore, you're just assembling components.

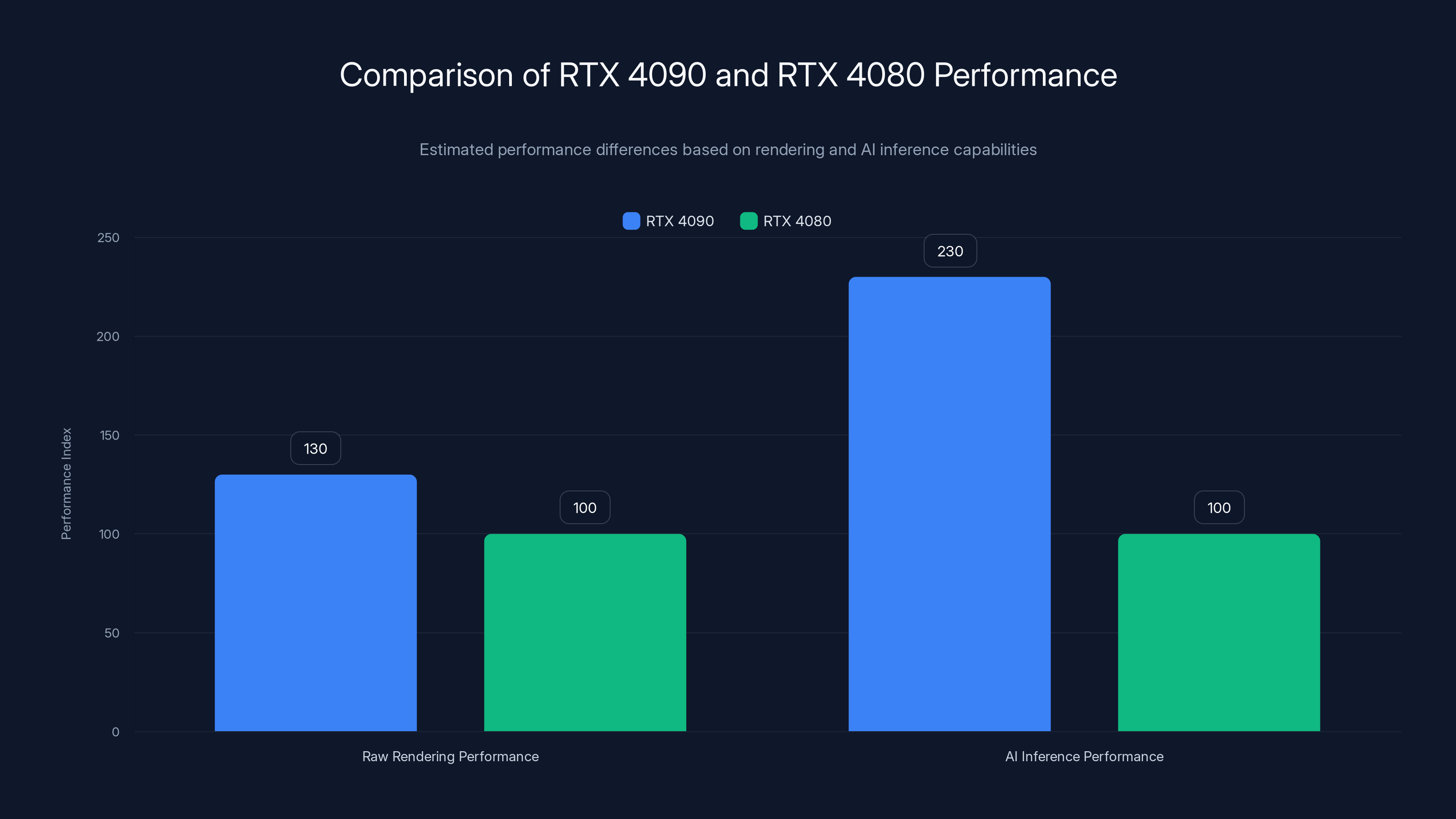

The RTX 4090 offers only 25-35% better raw rendering performance than the RTX 4080, but its AI inference performance is approximately 2.3 times better, highlighting the increasing specialization of hardware capabilities. Estimated data.

What This Means for Players

If you're a gamer, here's what you need to understand: your experience is about to change, slowly enough that you won't notice it happening.

Games will continue to look impressive. Upscaling technology is good and gets better every year. You probably won't see obvious artifacts unless you're looking for them. Frame rates will improve on the same hardware, even as base rendering complexity stays flat.

But the subjective experience of playing games will shift. Here are the specifics:

Visual precision decreases. Tiny details that were rendered are now interpolated. Temporal stability isn't perfect. The image is coherent, but less precise. After playing for an hour, you might feel slightly fatigued. You'll attribute it to gameplay, not graphics. It'll actually be small visual artifacts your brain is processing.

Consistency drops across hardware. A game on an Nvidia card looks different than the same game on an AMD card, even at the same settings. The upscaling behavior differs. Some players get better images. Others don't. It's not a deal-breaker, but it's a regression toward hardware-specific experiences.

Artistic intent becomes less clear. When an artist spends weeks perfecting lighting for a scene, but the final image is 60% upscaled, the impact is diluted. The artist's work is there, but muted. Over time, artists stop investing in these details because they know they'll be upscaled anyway.

Innovation stalls. When rendering is deterministic, artists can push boundaries, experiment with weird effects, break rules intentionally. With AI upscaling, you're constrained to what the model understands. You can't do weird caustics. You can't do strange reflections. The AI will just make them blurry.

None of this is catastrophic. Games will still be good. But good will start to feel like good enough. And incremental progress will slow down.

The Hardware Implications

Nvidia's push on AI upscaling is directly tied to their hardware strategy. They need GPUs to matter for more than rendering raw polygons.

Render performance plateaued years ago. Your RTX 4090 is overkill for native 4K rendering in most games. But your RTX 4090 is barely adequate for running complex AI upscaling at high quality. Suddenly, there's a reason to upgrade.

This is why Nvidia is pushing so hard. It's not altruism. It's a hardware refresh cycle in disguise.

For consumers, this means:

You'll need to upgrade more frequently. Not because rendering is harder, but because the AI models will get more complex. A 2026 GPU might not be able to run 2028's upscaling models efficiently. The baseline "good enough" will keep moving.

Mid-range hardware becomes less viable. A $300 GPU that could handle 1080p-to-1440p upscaling decently will struggle with more advanced upscaling. The sweet spot shifts upward.

Power consumption increases. AI inference isn't free. Running inference at 60fps across your entire framebuffer adds watts. Your power supply needs to be bigger. Your cooling needs to be better.

The economics push you toward higher-end hardware. Nvidia loves this. AMD needs to keep pace or lose market share. Intel needs to compete or fail entirely. This is capitalism. This is how the hardware industry works.

It's not evil. It's not a conspiracy. It's just incentive alignment. Nvidia makes more money when you buy better hardware. So they push technology that requires better hardware. That's the system working as designed.

Nvidia dominates the GPU market with an estimated 80% share, leaving AMD and Intel to compete for the remaining 20%. Estimated data.

The Control Question That Nobody's Asking

Here's something that should worry you more than it does:

When you run an AI upscaling model, you're executing Nvidia's code on your hardware. The model is proprietary. The algorithm is proprietary. How it works is mostly opaque.

If Nvidia finds a bug, they push a driver update. Your game looks different. You didn't change anything. The software changed. You have no recourse, no warning, no control.

This is different from traditional rendering. If there's a shader bug in a game, the studio owns it. They fix it. They explain what they fixed. You understand the change.

With AI upscaling, you're trusting Nvidia's black box. The model is trained on proprietary data. The inference is optimized for Nvidia's hardware, not necessarily for visual quality.

Worse: there's no standardization. Nvidia's upscaling works one way. AMD's works another. Intel's another. A game optimized for Nvidia might look noticeably different on AMD. You bought the same game. You're running it on powerful hardware. And you get a visually different experience because the upscaling model is different.

For 30 years, PC gaming worked on the principle of software independence from hardware (within reason). A well-written game would run on any GPU. Now you're moving toward proprietary models where hardware and software are tightly coupled.

It's another small erosion of PC gaming's openness.

What Developers Wish You Understood

I've talked to dozens of developers about this. The consensus is frustration mixed with resignation.

Frustration because they're watching control of their craft shift to a vendor. Resignation because they see the economics and know they don't have a choice.

One engine architect told me: "In 10 years, people will ask why games looked worse in the 2020s than the 2010s. And the answer will be that we all optimized for upscaling instead of rendering quality. But by then, it'll be too late to go back. The tools are gone. The expertise is gone. We've committed ourselves to a path."

That's bleak, but it's not irrational.

Developers also worry about the skill erosion. A junior rendering engineer today learns traditional pipeline skills. Those skills will be obsolete in 5 years. They'll need to retrain into AI/ML work. That's possible but painful.

And there's concern about proprietary lock-in. Studios that build core technology on top of Nvidia's upscaling become dependent on Nvidia. If Nvidia changes their strategy, those studios are stuck. If AMD catches up, studios might want to switch but can't because their engine is built around Nvidia assumptions.

These aren't insurmountable problems. But they're real problems that get glossed over when Nvidia talks about "next-generation graphics."

Looking Five Years Ahead

Scenario planning is mostly guessing, but here's my best guess at what happens:

Year 1 (2025-2026): AI upscaling becomes standard in engine samples and tutorials. Studios debate adoption. Some jump in, others wait. Performance metrics shift—studios start measuring in "effective resolution" instead of native resolution.

Year 2 (2026-2027): Adoption exceeds 60% of new projects. The alternative (native rendering without upscaling) starts to look anachronistic. Studios built around traditional pipelines feel pressure to modernize. The first visible quality degradation appears in comparisons of games from this era.

Year 3 (2027-2028): More than 80% of shipped games use upscaling as core technology. New engines are designed with upscaling as a foundation. Rendering specialists become less common. Studios that didn't adapt are acquired or shut down. The indie ecosystem shrinks.

Year 4 (2028-2029): Hardware companies—AMD, Intel, Nvidia—are in open competition on upscaling quality. The tech becomes more mature and more reliable. Temporal artifacts diminish. The quality gap narrows enough that most players can't tell the difference.

Year 5 (2029-2030): AI upscaling is simply how games are made. It's as fundamental as the graphics API. Native rendering becomes a specialty, used only when there's a specific reason. The visual diversity of games decreases as standardization increases.

By 2030, we'll likely have games that look as good or better than today. But the rendering pipeline will be more standardized, more proprietary, more tightly coupled to specific hardware.

The PC gaming landscape will have shifted. Not catastrophically, but meaningfully.

The Uncomfortable Truth

Here's what I've been avoiding saying: this isn't necessarily bad. It might be fine. It might even be good, depending on your priorities.

If you care about game performance and want GPUs to be cheaper while games keep looking good, AI upscaling is a win. Rendering efficiency improves. Hardware requirements stabilize. Costs drop.

If you care about gaming as an art form, as a place where rendering engineers push technical boundaries, where visual experimentation happens, where technological innovation drives creative possibility, this is a loss.

You can't have both. You have to choose your values.

Nvidia's betting that most people care about performance and cost more than they care about artistic control. Based on consumer behavior, they're probably right.

But the cost is real, even if it's subtle. We're trading long-term visual innovation for short-term efficiency gains. We're consolidating technical control with hardware vendors. We're making games cheaper to develop, which is good for budgets but bad for risk-taking.

Maybe that's worth it. Probably, economically, it is. But it's a trade-off, not a pure win.

And that trade-off is being made for us, by vendors and studios, without much public discussion about what we're giving up.

What You Can Do About This

If this concerns you, here's what's actually in your control:

Support studios that care about rendering. Remedy, Guerrilla Games, FromSoftware, certain indie developers: these studios invest in rendering quality. Your purchases matter. Voting with your wallet is the most effective form of feedback.

Push back on lower quality. When a game uses heavy upscaling and looks worse for it, say so. Reviews, feedback, forum posts—developers read this. If enough players notice and complain, it affects business decisions.

Understand your hardware choices. Know what you're buying. Research whether games you care about use AI upscaling and how different GPUs handle them. Make informed choices.

Learn about rendering. The more people understand graphics pipelines, the more informed the industry conversation becomes. Gamedev.net, Shadertoy, and sites like these make rendering education accessible.

Be skeptical of marketing. When Nvidia shows upscaling demos, remember that's a cherry-picked best case. Real games in real conditions have more problems. Don't take vendor claims at face value.

These are small acts. They won't reverse market trends. But they do matter. Enough small acts create pressure. Enough pressure changes incentives.

It's not nothing. It's just not guaranteed to work.

The Deeper Worry

I keep coming back to one thing: standardization reduces diversity.

When everyone uses the same upscaling model, the same rendering pipeline, the same optimizations, games start to converge on a visual style. Not intentionally, but systematically.

Think about how mobile games look. Most of them converge on the same visual aesthetic because they're built on the same engines (Unity, Unreal), optimized for the same hardware constraints. Mobile gaming is creatively diverse in other ways, but visually homogeneous.

PC gaming could drift toward the same pattern. Not immediately. But over 5-10 years, as standardization deepens.

That's the real loss. Not the technology itself, but the diversity it destroys.

In a world where Nvidia is pushing the rendering technology, and most studios use it, and most hardware runs it optimally, there's less room for weird experiments. Less room for studios to say, "What if we did rendering like this, in a way that makes our game special?"

More room for studios to say, "The engine handles upscaling, we focus on content."

Both are valid. But one creates more interesting outputs.

Where This Leaves Us

Nvidia has built something technically impressive. AI upscaling works. The technology is real and useful.

But they're deploying it in a way that optimizes for their business, not for gaming as a medium. They're pushing standardization because standardization benefits them. They're tying hardware and software because that drives hardware upgrades. They're creating vendor lock-in because that protects their market position.

None of this is unusual. It's how companies operate. It's rational capitalism.

But it has consequences. Consequences that aren't immediately visible but compound over years. Visual homogeneity. Reduced artistic control. Faster hardware cycles. Consolidation of smaller studios.

These aren't apocalyptic consequences. Games will still be good. People will still enjoy them. The industry will still function.

But something will be lost. The something that made PC gaming special: the sense that rendering was pushing forward, that visual innovation mattered, that different studios could pursue radically different visual approaches.

When that converges toward a standardized AI-upscaled middle ground, we'll have gained efficiency. We'll have lost something harder to quantify.

The question is whether the trade is worth it. I don't think there's a clear answer. But I think you should understand what the trade is before you accept it.

And right now, most people don't.

FAQ

What exactly is AI upscaling in gaming?

AI upscaling renders games at lower resolution (like 1440p) and uses trained machine learning models to generate missing pixels for the full output resolution (like 4K). Instead of calculating every pixel through traditional rendering, the AI intelligently predicts what pixels should exist based on patterns it learned during training. It's faster than native rendering but introduces subtle quality trade-offs because the AI makes educated guesses rather than calculating exact values.

How is AI upscaling different from earlier scaling methods?

Traditional upscaling (bilinear or bicubic filtering) uses mathematical interpolation to stretch lower-resolution images to higher resolutions. This produces blurry results. AI upscaling uses deep learning models trained on thousands of high-resolution game frames to intelligently reconstruct detail instead of just interpolating pixels. The quality is substantially better, but the process is more computationally expensive and less transparent (it's a "black box" algorithm).
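For contrast with the AI approach, here is what plain bilinear interpolation does to a hard edge. This is a minimal pure-Python sketch of the standard technique on a tiny grayscale grid. The function name and test image are made up for illustration.

```python
def bilinear_upscale(image, factor):
    """Upscale a 2D grid of floats by an integer factor using bilinear interpolation."""
    src_h, src_w = len(image), len(image[0])
    dst_h, dst_w = src_h * factor, src_w * factor
    out = []
    for y in range(dst_h):
        # Map the output pixel centre back into source coordinates, clamped to the grid.
        sy = min(max((y + 0.5) / factor - 0.5, 0.0), src_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        fy = sy - y0
        row = []
        for x in range(dst_w):
            sx = min(max((x + 0.5) / factor - 0.5, 0.0), src_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            fx = sx - x0
            # Blend horizontally on the two nearest rows, then blend those vertically.
            top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
            bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


# A hard black-to-white edge: two dark pixels, then two bright ones.
edge = [[0.0, 0.0, 1.0, 1.0]] * 2
for row in bilinear_upscale(edge, 2):
    print(" ".join(f"{value:.2f}" for value in row))
```

The upscaled rows pick up in-between values (0.25 and 0.75) where the source had a clean jump from 0 to 1. That smear is the blur interpolation produces, and it is the detail a learned upscaler tries to reconstruct instead.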

Will AI upscaling make PC gaming cheaper overall?

Yes and no. Upscaling reduces the GPU power needed for equivalent visual output, which theoretically allows cheaper hardware to deliver acceptable performance. However, AI upscaling models become more complex over time, requiring more powerful hardware to run efficiently. Additionally, Nvidia and other vendors use upscaling adoption to drive hardware upgrade cycles, so while efficiency improves, the baseline hardware expectation shifts upward over time, negating some cost benefits for enthusiasts.

What are the main problems with AI upscaling?

The primary issues are temporal instability (details shimmer between frames), edge artifacts (reduced sharpness at object boundaries), struggles with unusual lighting conditions (caustics, complex reflections), and loss of artistic control for developers. Additionally, AI upscaling is proprietary and opaque: developers have less understanding of how the algorithm works and less ability to customize it. Game engines optimized around upscaling gradually degrade asset quality since details rendered at lower resolution are less visible anyway.

How does upscaling affect game development timelines and costs?

Upscaling significantly reduces development costs and timelines. Studios can render at lower resolutions, eliminating expensive optimization work traditionally needed for native rendering. They can reduce the number of rendering specialists required and compress the optimization phase of development. However, this creates pressure to adopt upscaling industry-wide because studios that don't adopt appear less efficient. Within a few years, using upscaling becomes mandatory rather than optional for competitive reasons.

Will games look worse in the future because of this shift?

Not obviously worse in the short term. Upscaling quality improves yearly, and game art direction can compensate for reduced rendering fidelity. However, long-term degradation is likely because developers will gradually optimize assets for lower resolution rendering, knowing upscaling will compensate. This creates a compounding effect—each generation of games has slightly lower-resolution assets, masked by slightly better upscaling. Over a decade, the cumulative quality loss becomes noticeable even if individual yearly improvements aren't.

How does upscaling impact different GPU manufacturers?

Nvidia dominates the market and will capture most developer optimization efforts. AMD and Intel are developing competitive upscaling solutions but start from behind. Most studios will standardize on Nvidia's approach because that's what the majority of players have. This creates a reinforcing cycle: more developers optimize for Nvidia, more players buy Nvidia to get better upscaling, more future developers target Nvidia. Competitors can offer equivalent technology but can't overcome the momentum advantage.

What should I look for when buying a graphics card if upscaling matters?

Check which upscaling technologies your target games use (Nvidia DLSS, AMD FSR, or Intel XeSS). Some games support multiple upscaling methods, others lock to one vendor's approach. Research benchmarks for the specific games you play, because performance deltas between vendors can be 20-40% depending on upscaling implementation. Mid-range Nvidia cards tend to offer the best value if most games use proprietary Nvidia upscaling, but AMD cards provide better price-to-performance if you're willing to accept potential quality trade-offs.

Can game developers opt out of using AI upscaling?

Technically yes, but practically it becomes increasingly difficult. Upscaling will eventually become baked into game engines as a default assumption. Studios that want to use native rendering will need to disable deep engine systems, which requires custom engine modifications. Indie developers especially struggle because they lack resources to maintain custom engine paths. Pressure from publishers and market economics typically forces adoption even when alternatives would be preferable.

The Bottom Line

Nvidia's AI upscaling revolution is real and will reshape PC gaming development. The technology works and solves genuine problems. But it's being deployed in ways that prioritize vendor benefit over artistic innovation, market consolidation over diversity, and efficiency over quality preservation.

Games will continue to look impressive. Hardware will become more efficient. Developers will ship products faster and cheaper.

But the rendering landscape will become more standardized, more proprietary, and less experimental. Something valuable will be lost, even if it's not immediately visible.

Understanding this trade-off—before it's too late to influence it—matters. Not because you can stop the trend. You probably can't. But because informed resistance is better than passive acceptance.

PC gaming will adapt. It always does. But adapting with awareness is different from adapting without understanding what you're giving up.

That's the part Nvidia doesn't want to discuss. And that's exactly why you should.

Key Takeaways

- AI upscaling isn't about better graphics—it's about cheaper development economics that create incentives for standardization and vendor lock-in

- Visual quality trade-offs include temporal instability, reduced asset fidelity, edge artifacts, and loss of artistic control that compound over years

- Developer expertise in traditional rendering becomes less valuable as standardized AI upscaling becomes mandatory industry-wide within 3-5 years

- Nvidia's dominance means its upscaling approach will become the de facto standard regardless of technical superiority of alternatives

- Long-term consequence is visual homogenization of games and reduced rendering innovation as studios converge on upscaling pipelines

![Nvidia's AI Upscaling Revolution: How PC Gaming is Fundamentally Changing [2025]](https://tryrunable.com/blog/nvidia-s-ai-upscaling-revolution-how-pc-gaming-is-fundamenta/image-1-1768224990215.jpg)