Revolutionizing Content Moderation in the AI Era: Lessons from a Facebook Insider [2025]

Content moderation on social media platforms has always been contentious, and the explosion of digital content has only multiplied the challenges. Brett Levenson, a former Facebook insider, has described some of these complexities and the role AI could play in resolving them. This guide draws on Levenson's experiences to explore how content moderation works, where it breaks down, and what AI-driven solutions can offer.

TL;DR

- Content moderation is crucial for maintaining community standards and user safety.

- AI and machine learning are pivotal in scaling moderation efforts effectively.

- Human oversight remains essential for nuanced decision-making and policy enforcement.

- Continuous policy updates are necessary to address evolving content trends.

- Future trends include more sophisticated AI models and proactive content detection.

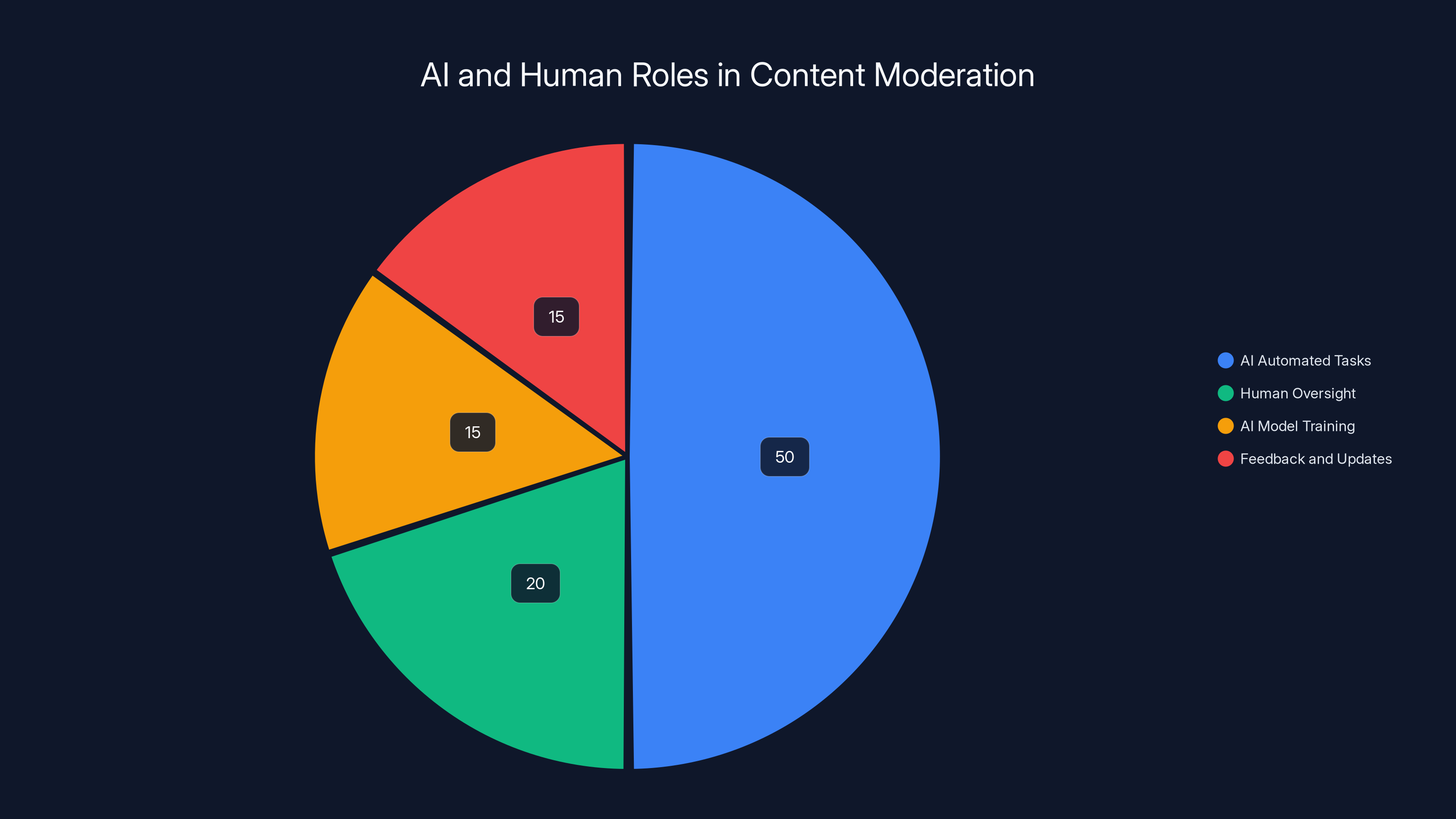

Estimated data showing AI handles 50% of tasks, with human oversight and feedback playing significant roles.

The Role of Content Moderation in Social Media

Content moderation is the process of monitoring and managing user-generated content to ensure it complies with community guidelines and legal standards. It's a balancing act between allowing free expression and protecting users from harmful material. Platforms like Facebook face immense pressure to maintain this balance, especially under the heightened public scrutiny that followed incidents like the Cambridge Analytica scandal, which centered on data privacy rather than moderation itself.

Why Content Moderation Matters

- User Safety: Protects users from harmful or triggering content.

- Legal Compliance: Ensures adherence to laws and regulations.

- Brand Reputation: Maintains the platform's integrity and user trust.

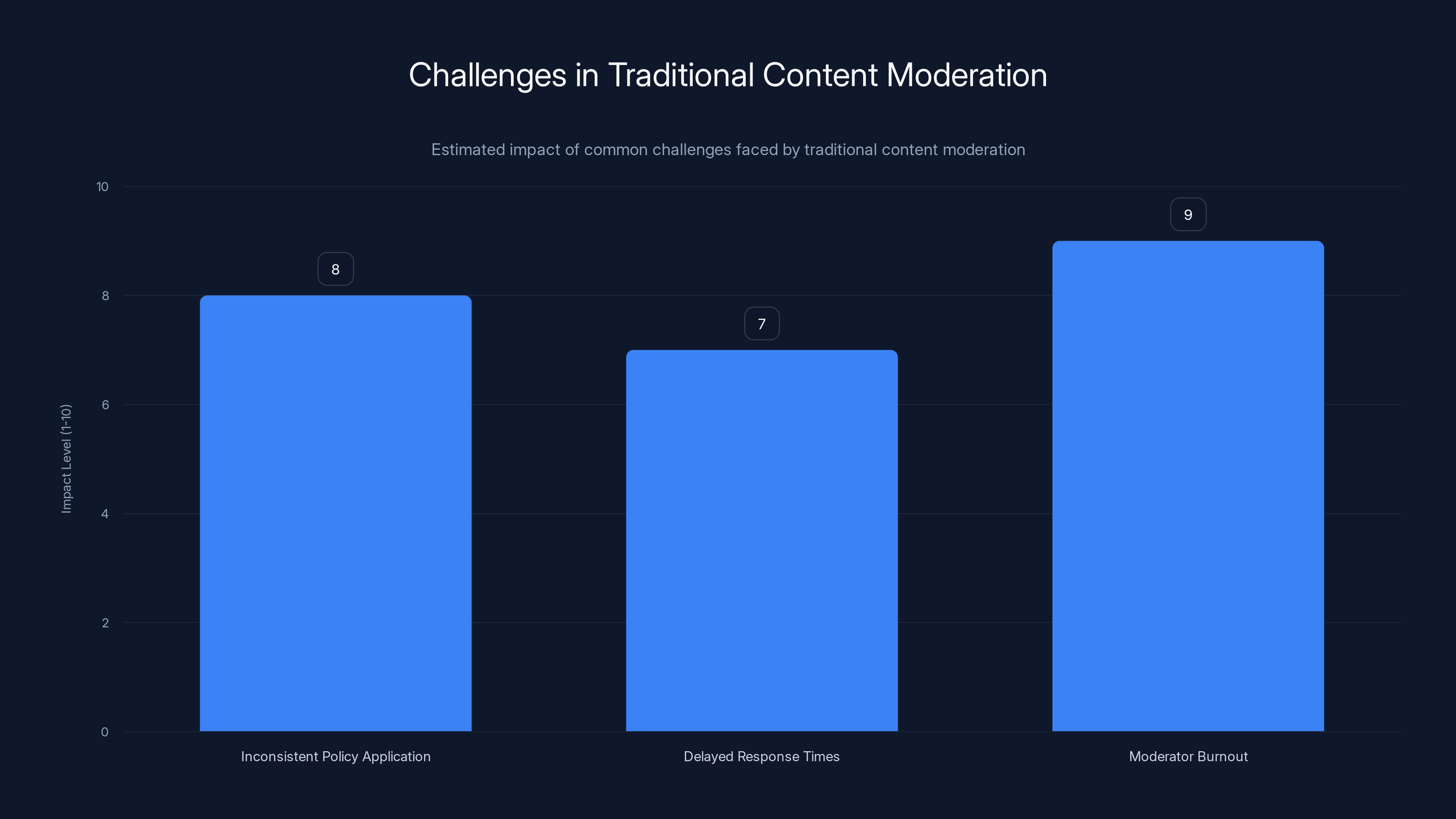

Traditional content moderation is significantly impacted by moderator burnout and inconsistent policy application, highlighting the need for improved systems. (Estimated data)

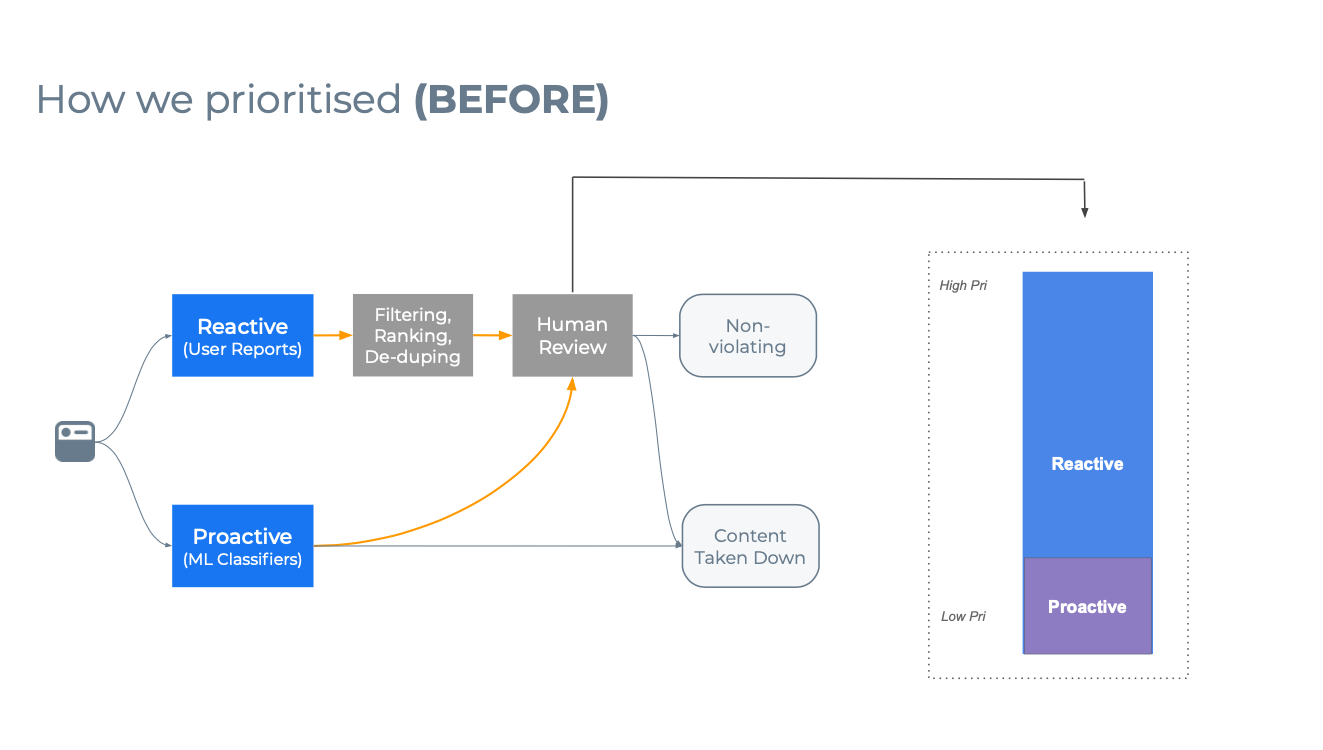

Challenges in Traditional Content Moderation

Traditional content moderation relies heavily on human reviewers. Brett Levenson highlighted a significant issue: human moderators were overwhelmed by the sheer volume of content and often lacked adequate tools. They were handed a 40-page, machine-translated policy document and expected to make rapid decisions on complex issues.

Common Pitfalls

- Inconsistent Application: Policies were applied inconsistently because of language barriers and differing interpretations.

- Delayed Response: Harmful content could remain on platforms for days before being addressed.

- Burnout and Turnover: High stress and pressure led to high turnover rates among moderators.

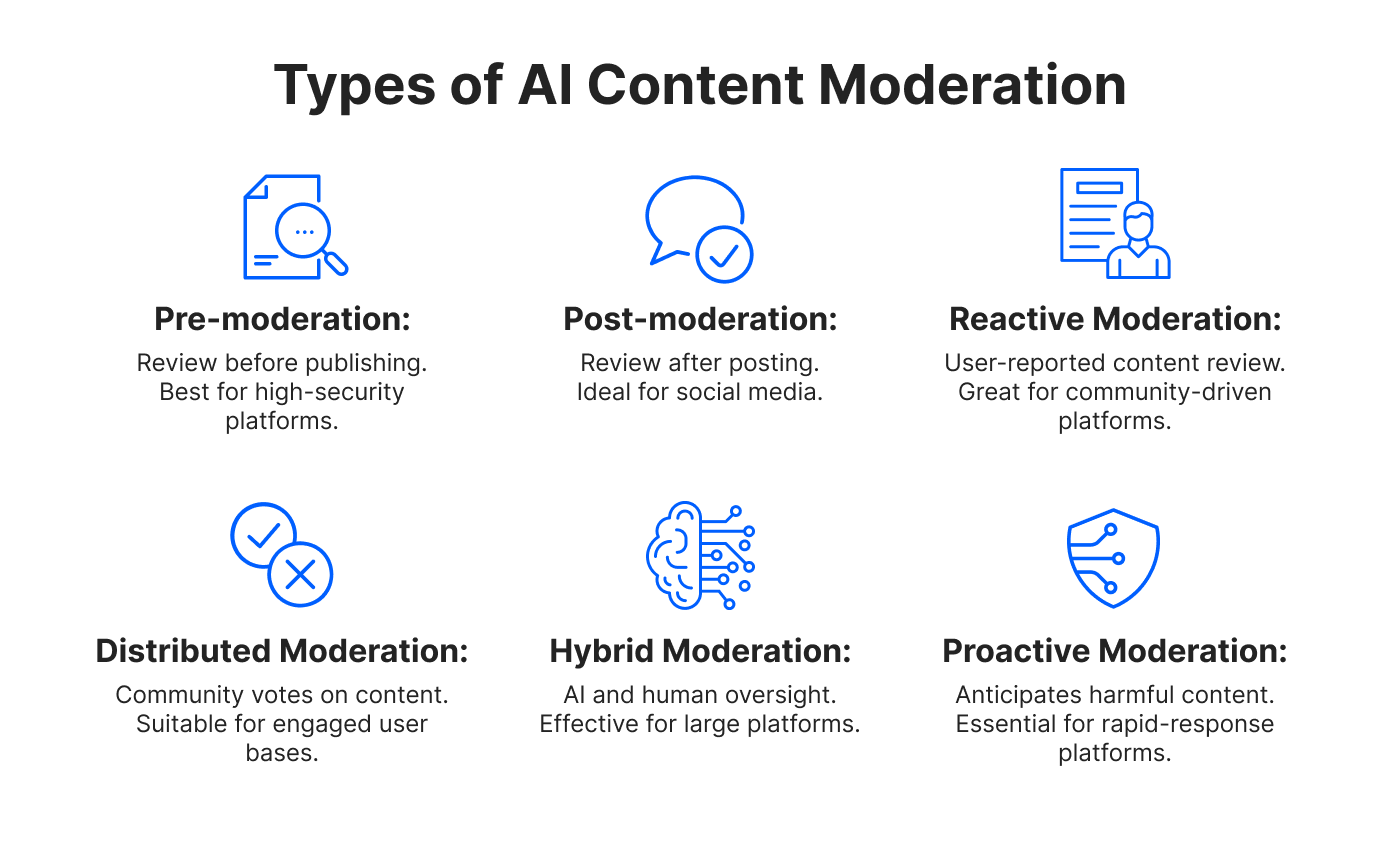

Enter AI: A New Era of Moderation

AI and machine learning have introduced new possibilities for moderating content at scale. These technologies can analyze vast amounts of data and identify potentially harmful content faster than human moderators.

How AI Enhances Moderation

- Automated Detection: AI can automatically flag content based on predefined criteria.

- Scalability: AI can handle the massive volume of content posted daily.

- Pattern Recognition: Identifies patterns of harmful behavior and emerging threats.
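
To make "flagging based on predefined criteria" concrete, here is a minimal Python sketch. It is not a description of any platform's real system: the `Rule` type, the `flag` function, and both example patterns are hypothetical, and production systems typically rely on trained models rather than a short list of regular expressions.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern


# Hypothetical criteria for illustration only; real policies are far richer.
RULES = [
    Rule("spam_link", re.compile(r"https?://\S+\.(?:xyz|top)\b", re.IGNORECASE)),
    Rule("scam_phrase", re.compile(r"\b(?:free money|guaranteed returns)\b", re.IGNORECASE)),
]


def flag(text: str) -> list[str]:
    """Return the name of every rule the text matches, in rule order."""
    return [rule.name for rule in RULES if rule.pattern.search(text)]


print(flag("Get FREE MONEY at http://example.xyz now"))  # ['spam_link', 'scam_phrase']
print(flag("Lovely weather today"))                       # []
```

Returning rule names instead of a bare yes/no keeps each flag explainable, which matters once human reviewers and user appeals enter the picture.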

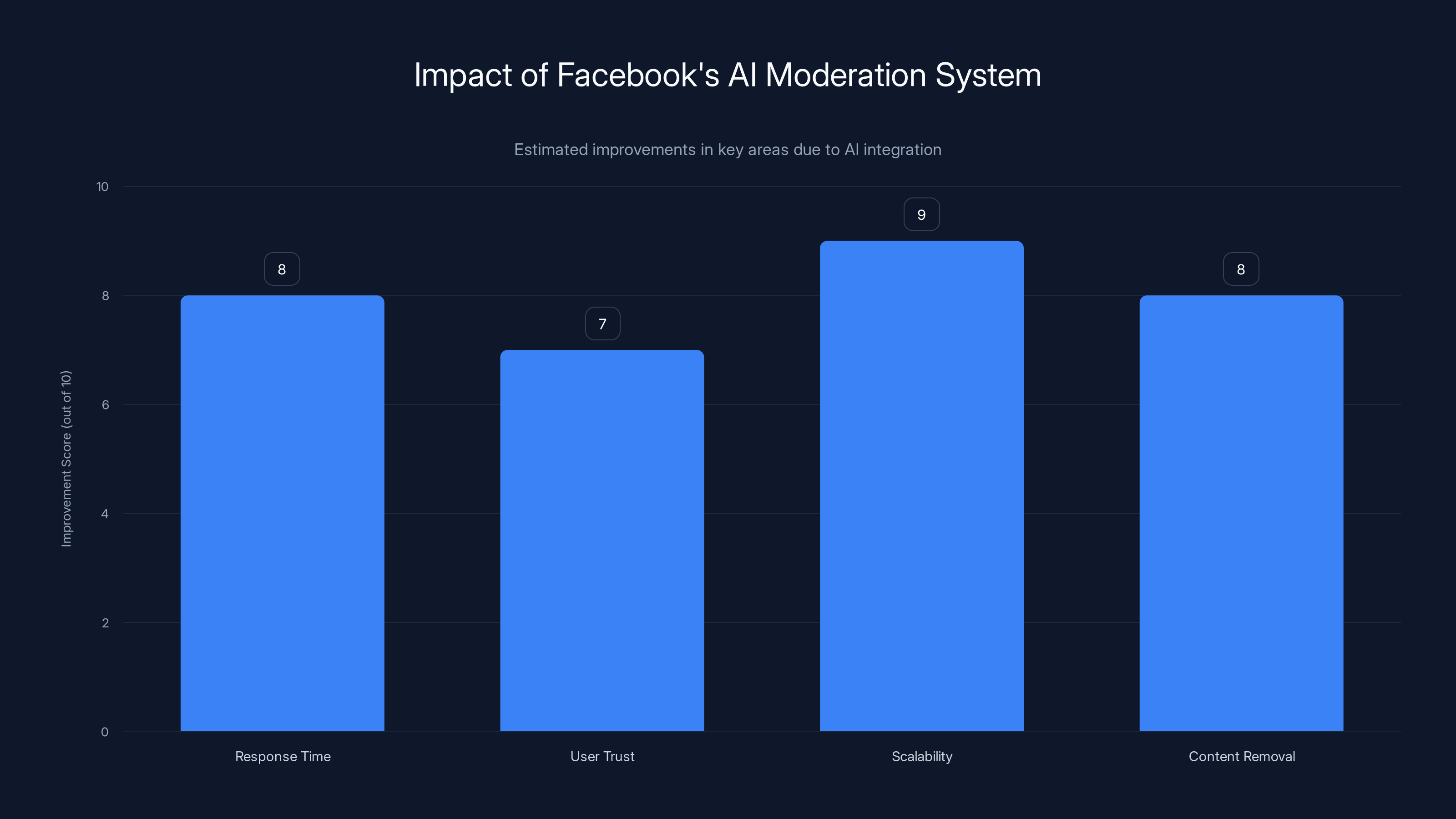

Facebook's AI moderation system has significantly improved response times, user trust, scalability, and the speed of harmful content removal. (Estimated data)

Implementing AI in Content Moderation

Integrating AI into content moderation involves a strategic approach that combines technology with human oversight. Here are the steps to effectively implement AI-driven moderation.

Step 1: Define Clear Policies

Before deploying AI, platforms must establish clear, comprehensive content policies. These should be adaptable to changing societal norms and legal requirements.

Step 2: Develop Robust AI Models

AI models need to be trained on diverse datasets to recognize different types of harmful content. This includes textual, visual, and audio content.

Step 3: Continuous Monitoring and Feedback

AI systems must be continuously monitored and updated based on feedback from human moderators. This helps them stay effective as content changes and surfaces bias before it becomes entrenched.
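
One way to turn moderator feedback into a monitoring signal is to compare the AI's flags against human verdicts and track precision and recall over time. The sketch below assumes a hypothetical review log of `(ai_flagged, human_says_violation)` pairs and an illustrative `MIN_PRECISION` alert threshold; neither comes from the source.

```python
def precision_recall(reviews: list[tuple[bool, bool]]) -> tuple[float, float]:
    """Each review is (ai_flagged, human_says_violation)."""
    tp = sum(1 for ai, human in reviews if ai and human)      # correct flags
    fp = sum(1 for ai, human in reviews if ai and not human)  # wrongly flagged
    fn = sum(1 for ai, human in reviews if not ai and human)  # missed violations
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical sample of human-reviewed decisions.
sample = [(True, True), (True, True), (True, False), (False, True), (False, False)]
MIN_PRECISION = 0.8  # assumed alert threshold, not a recommended value

precision, recall = precision_recall(sample)
print(f"precision={precision:.2f} recall={recall:.2f}")  # precision=0.67 recall=0.67
print("needs attention:", precision < MIN_PRECISION)      # needs attention: True
```

A drop in precision means more legitimate posts are being flagged, while a drop in recall means more violations are slipping through. Tracking both shows which direction the model is drifting.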

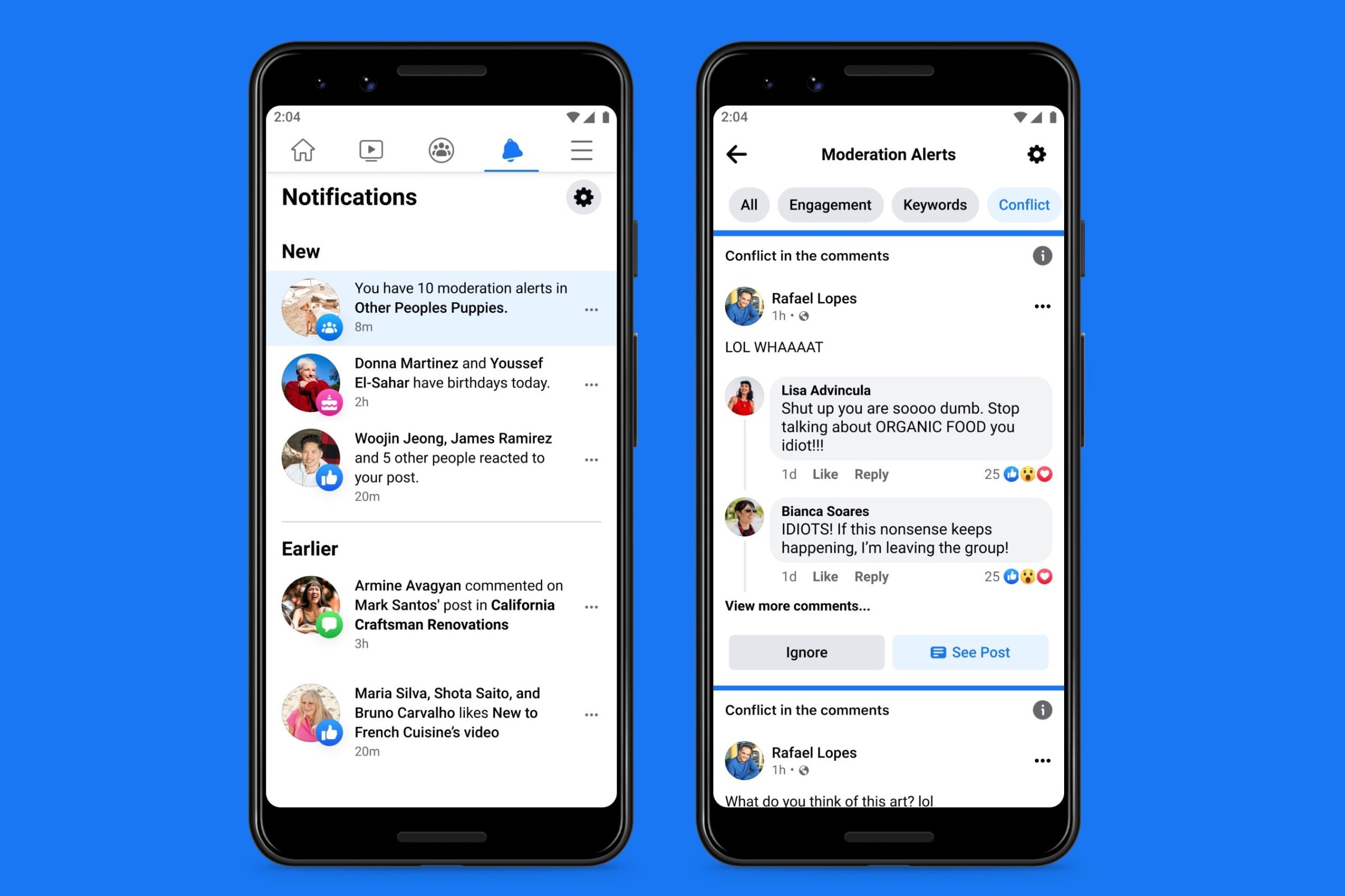

Step 4: Human-AI Collaboration

While AI can handle the bulk of content moderation, human oversight is crucial for nuanced decision-making. Human moderators should review flagged content and provide feedback to improve AI accuracy.
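
A common way to split work between AI and human reviewers is to route each item by the model's confidence score. The following is a sketch under stated assumptions: the `route` function, the `Action` names, and both thresholds are hypothetical, and real systems would tune thresholds per policy area.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


# Assumed thresholds for illustration only.
REMOVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.60


def route(score: float) -> Action:
    """Map a model's violation probability (0.0 to 1.0) to an action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score >= REMOVE_THRESHOLD:
        return Action.REMOVE        # model is highly confident
    if score >= REVIEW_THRESHOLD:
        return Action.HUMAN_REVIEW  # ambiguous: send to a person
    return Action.ALLOW


for score in (0.99, 0.70, 0.10):
    print(score, route(score).value)
```

Widening the band between the two thresholds sends more content to people, trading reviewer workload for fewer automated mistakes.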

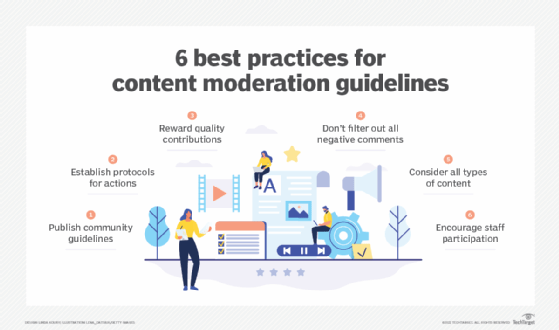

Best Practices for AI-Powered Content Moderation

- Transparency: Be open about how content is moderated and what criteria are used.

- User Appeal Processes: Allow users to appeal moderation decisions and provide clear explanations.

- Bias Mitigation: Regularly audit AI models to prevent bias and ensure fairness.
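
A bias audit can start with something as simple as comparing false positive rates across groups, for example by content language. The sketch below assumes a hypothetical audit log of `(group, ai_flagged, human_says_violation)` records. The group labels and data are invented for illustration, and a real audit would need far larger samples and statistical testing.

```python
from collections import defaultdict


def false_positive_rates(records: list[tuple[str, bool, bool]]) -> dict[str, float]:
    """Per group: share of benign items (per human review) that the AI flagged."""
    benign = defaultdict(int)
    flagged_benign = defaultdict(int)
    for group, ai_flagged, violation in records:
        if not violation:
            benign[group] += 1
            if ai_flagged:
                flagged_benign[group] += 1
    return {group: flagged_benign[group] / total for group, total in benign.items()}


# Hypothetical audit sample, grouped by content language.
records = [
    ("en", True, False), ("en", False, False), ("en", False, False), ("en", False, False),
    ("es", True, False), ("es", True, False), ("es", False, False), ("es", False, False),
    ("es", True, True),  # a true violation: excluded from false positive counts
]

rates = false_positive_rates(records)
print(rates)                                      # {'en': 0.25, 'es': 0.5}
print(max(rates.values()) - min(rates.values()))  # 0.25
```

A large gap between groups suggests the model is wrongly penalizing some communities more than others, which is the kind of finding a regular audit is meant to surface.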

Case Study: Facebook's AI Moderation System

Facebook has been at the forefront of integrating AI into content moderation. The platform uses a combination of machine learning models and human reviewers to manage content. This hybrid approach has improved response times and reduced the burden on human moderators.

Key Achievements

- Reduced Harmful Content: Faster removal of harmful content.

- Improved User Trust: Increased transparency and user feedback mechanisms.

- Scalable Solutions: Ability to handle billions of posts daily.

Future Trends in Content Moderation

The future of content moderation lies in more advanced AI models capable of understanding context and intent. Here are some trends to watch:

Proactive Content Detection

AI will shift from reactive to proactive detection, identifying harmful content before it reaches users.

Real-Time Moderation

Advances in processing power will enable real-time moderation, reducing the window of exposure to harmful content.

Enhanced User Tools

Platforms will provide users with more tools to control their content experience, such as customizable filters and advanced reporting options.
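
As a rough illustration of what a customizable filter might look like, the sketch below lets a user mute words and hide whole content categories. The `UserFilter` class, its fields, and the category labels are all hypothetical and not drawn from any platform's actual feature set.

```python
from dataclasses import dataclass, field


@dataclass
class UserFilter:
    muted_words: set[str] = field(default_factory=set)
    hidden_categories: set[str] = field(default_factory=set)

    def allows(self, text: str, categories: set[str]) -> bool:
        """Return True if the post should appear in this user's feed."""
        # Naive tokenization: split on whitespace and trim common punctuation.
        words = {w.strip(".,!?").lower() for w in text.split()}
        if words & {w.lower() for w in self.muted_words}:
            return False
        return not (categories & self.hidden_categories)


prefs = UserFilter(muted_words={"spoiler"}, hidden_categories={"graphic"})
print(prefs.allows("Big spoiler ahead!", set()))  # False
print(prefs.allows("Nice photo", {"travel"}))     # True
print(prefs.allows("News clip", {"graphic"}))     # False
```

Filters like this run per user, so they complement platform-wide moderation rather than replace it.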

Building a Sustainable Moderation Ecosystem

Creating a sustainable content moderation system requires collaboration between technology and human expertise. It's about finding the right balance between automation and human judgment.

Recommendations for Platforms

- Invest in AI Research: Continuously improve AI models to handle complex content.

- Enhance Moderator Support: Provide adequate training and mental health support for human moderators.

- Engage with Stakeholders: Work with governments, NGOs, and user communities to develop fair policies.

Conclusion

Content moderation in the AI era is a dynamic field that requires constant adaptation and innovation. By leveraging AI technology and maintaining human oversight, platforms can create safer, more inclusive online spaces. Brett Levenson's insights provide valuable lessons for navigating the complexities of content moderation.

FAQ

What is content moderation?

Content moderation involves monitoring and managing user-generated content to ensure it aligns with community guidelines and legal standards.

How does AI enhance content moderation?

AI enhances moderation by automating the detection of harmful content, increasing scalability, and recognizing patterns of harmful behavior.

What are the challenges of traditional content moderation?

Traditional moderation faces challenges like inconsistent policy application, delayed response times, and moderator burnout.

Why is human oversight important in AI moderation?

Human oversight ensures nuanced decision-making, provides feedback for AI improvement, and addresses complex content issues.

What future trends can we expect in content moderation?

Future trends include proactive content detection, real-time moderation, and enhanced user tools for content control.

How can platforms build a sustainable moderation ecosystem?

Platforms can build sustainability by investing in AI research, supporting moderators, and collaborating with stakeholders.

Key Takeaways

- Content moderation is essential for user safety and legal compliance.

- AI-driven moderation scales efforts and detects harmful content efficiently.

- Human reviewers remain crucial for nuanced decisions and policy enforcement.

- Continuous policy updates are necessary due to evolving content trends.

- Future trends include proactive content detection and real-time moderation.
