Revolutionizing Multimodal AI: How Black Forest Labs' Self-Flow Technique Boosts Efficiency [2025]

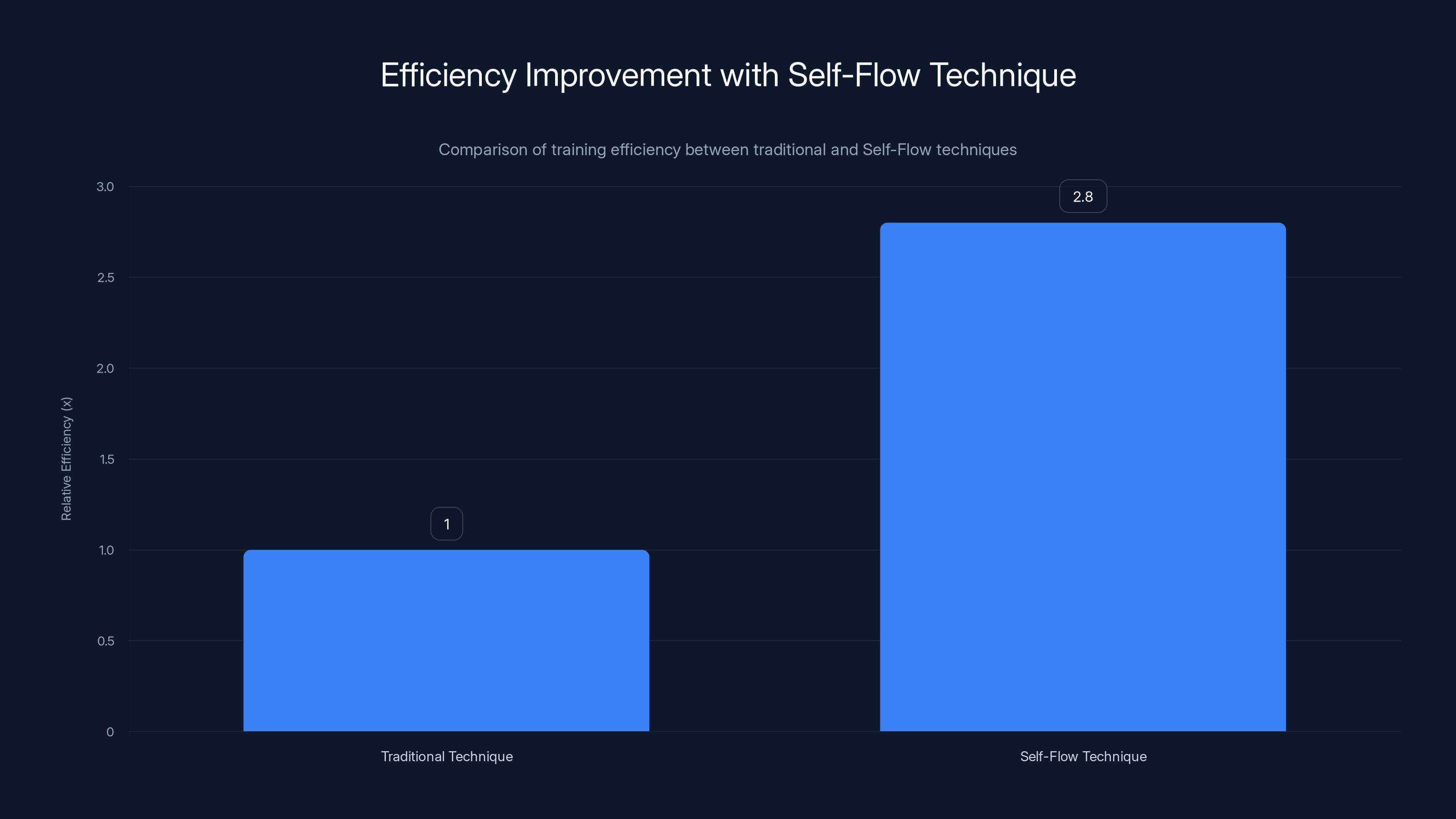

Multimodal AI models understand and generate content across several types of media, such as text, images, and sound. Traditionally, these models have relied on external encoders to guide their learning. Black Forest Labs' Self-Flow technique departs from that approach and promises up to a 2.8x increase in training efficiency.

TL;DR

- Self-Flow Technique: A self-supervised learning framework that eliminates reliance on external encoders.

- Enhanced Efficiency: Achieves up to 2.8x faster training of multimodal AI models.

- Dual-Timestep Scheduling: Innovative mechanism that improves learning dynamics.

- Real-World Applications: From automated video editing to seamless content generation.

- Future Prospects: Potential to revolutionize AI model training across industries.

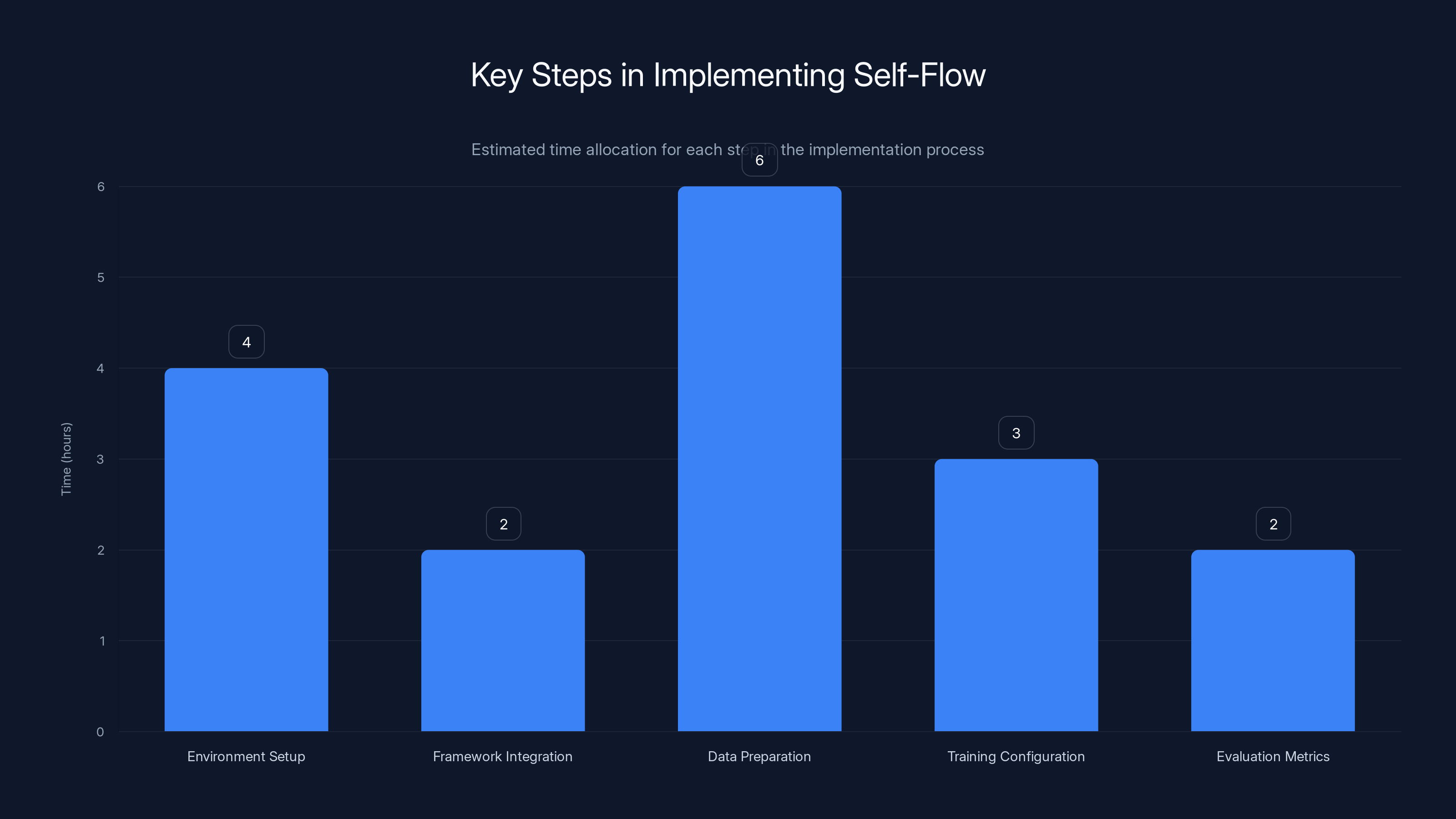

Estimated data shows that data preparation typically takes the most time in implementing Self-Flow, followed by environment setup.

The Evolution of Multimodal AI

Understanding Multimodal AI

Multimodal AI models integrate multiple data types, such as text and images, to perform complex tasks. These models are crucial in applications like automated video captioning, where both visual and textual data are necessary for generating accurate descriptions.

Traditional Challenges

Traditionally, multimodal generative models depended on frozen, separately trained encoders to interpret data. This approach often created bottlenecks that limited scalability and efficiency. Models like Stable Diffusion and FLUX relied on such external encoders for guidance, so their quality was capped by what those encoders could represent.

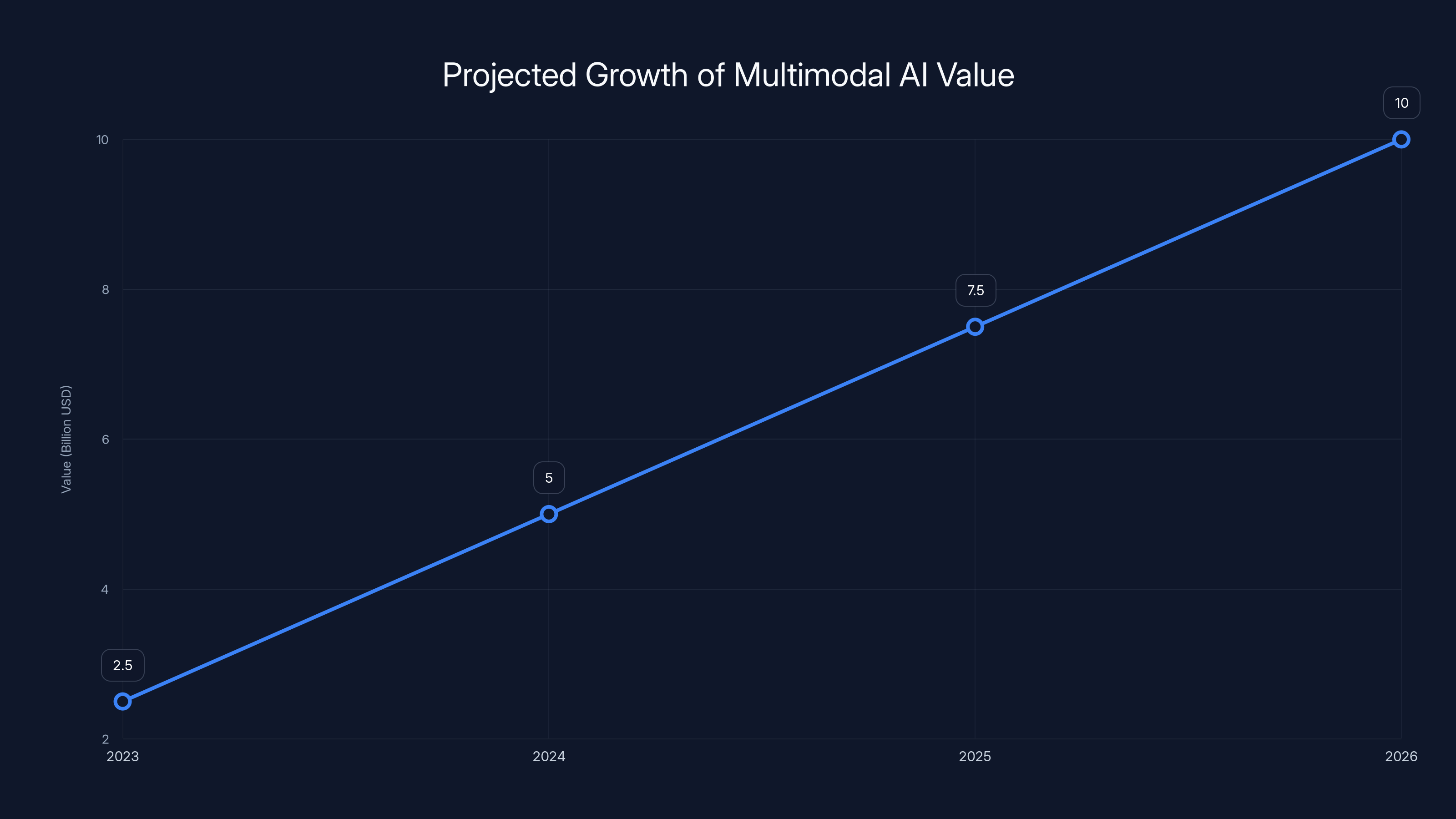

Multimodal AI models are projected to generate significant value, reaching $10 billion by 2026. Estimated data.

Introducing the Self-Flow Technique

What is Self-Flow?

Self-Flow is a self-supervised learning framework developed by Black Forest Labs. It enables models to simultaneously learn representation and generation without external encoders. This approach fosters an internal understanding of data relationships, streamlining the training process.

Dual-Timestep Scheduling

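A core component of Self-Flow is its Dual-Timestep Scheduling mechanism. As the name suggests, it operates on the noise timesteps used in flow-matching training rather than on optimizer settings such as the learning rate: as Black Forest Labs describes it, different tokens of the same input are noised to different levels, so the model has to infer the more heavily corrupted parts from the cleaner ones. This asymmetry is what pushes the model to learn useful representations while it learns to generate.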
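To make the idea of two timesteps concrete, here is a minimal NumPy sketch that reads "timestep" in the flow-matching sense of a noise level. It is not Black Forest Labs' implementation. The function name `dual_timestep_noising`, the two fixed levels `t_low` and `t_high`, and the random choice of which tokens get the heavier noise are all assumptions made for illustration. The interpolation and velocity target follow the standard linear (rectified-flow style) path.

```python
import numpy as np

def dual_timestep_noising(tokens, t_low, t_high, high_fraction, rng):
    """Noise one example with two timesteps instead of one (illustrative).

    tokens: array of shape (num_tokens, dim) holding clean latents.
    Returns the noisy tokens, the per-token timesteps, and velocity targets.
    """
    num_tokens = tokens.shape[0]
    # Hypothetical rule: pick a random subset of tokens for the heavier noise.
    num_high = int(round(high_fraction * num_tokens))
    high_idx = rng.choice(num_tokens, size=num_high, replace=False)
    t = np.full((num_tokens, 1), t_low, dtype=tokens.dtype)
    t[high_idx] = t_high

    noise = rng.standard_normal(tokens.shape).astype(tokens.dtype)
    # Linear interpolation between data (t = 0) and pure noise (t = 1).
    noisy = (1.0 - t) * tokens + t * noise
    # Velocity target for this linear path.
    velocity = noise - tokens
    return noisy, t[:, 0], velocity

rng = np.random.default_rng(0)
clean = rng.standard_normal((8, 4)).astype(np.float32)
noisy, t, velocity = dual_timestep_noising(clean, 0.2, 0.8, 0.5, rng)
print(t)  # four tokens at the low level, four at the high level
```

In a real training loop, the network would receive `noisy` together with the per-token `t` and be trained to predict `velocity`. How the two levels are sampled and how any representation objective is attached are details this sketch does not attempt to reproduce.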

Practical Implementation of Self-Flow

Getting Started

Implementing Self-Flow involves integrating the framework into existing AI pipelines. Here’s a step-by-step guide:

- Environment Setup: Ensure compatibility with current AI infrastructure. Update to the latest machine learning libraries.

- Framework Integration: Incorporate Self-Flow libraries into your training scripts. This typically requires minimal changes to existing codebases.

- Data Preparation: Organize data into multimodal formats. For instance, pair images with their respective textual descriptions.

- Training Configuration: Define training parameters, especially focusing on Dual-Timestep Scheduling.

- Evaluation Metrics: Use standard benchmarks to assess model performance improvements.
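The data preparation step above (pairing images with their textual descriptions) can be sketched in plain Python. This is a minimal illustration, assuming captions are keyed by the image file name without its extension. The helper `pair_images_with_captions` and the example paths are hypothetical and not part of any Self-Flow tooling.

```python
from pathlib import Path

def pair_images_with_captions(image_paths, captions_by_stem):
    """Return (image_path, caption) pairs plus the images that lack a caption."""
    pairs, missing = [], []
    for path in image_paths:
        # Look up the caption by file name without extension, e.g. "cat_001".
        caption = captions_by_stem.get(Path(path).stem, "").strip()
        if caption:
            pairs.append((str(path), caption))
        else:
            missing.append(str(path))
    return pairs, missing

images = ["data/cat_001.png", "data/dog_002.jpg", "data/bird_003.png"]
captions = {
    "cat_001": "A tabby cat on a windowsill.",
    "dog_002": "A dog running on a beach.",
}
pairs, missing = pair_images_with_captions(images, captions)
print(len(pairs), "paired;", "missing captions for:", missing)
```

Reporting the unpaired files separately, rather than silently dropping them, makes it easier to see how much of the dataset is actually usable before training starts.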

Code Example

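```python
# Illustrative pseudocode: `selfflow` and its Keras-style API are this
# article's placeholders, not a confirmed published package.
import selfflow

# Placeholder input shapes (assumed): token sequence and H x W x C image.
text_shape = (77,)
image_shape = (256, 256, 3)

model = selfflow.Model(input_shapes=[text_shape, image_shape])
model.compile(optimizer='adam', loss='categorical_crossentropy')

data = selfflow.load_data('multimodal_dataset')
model.fit(data, epochs=10, dual_timestep_schedule=True)
```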

The Self-Flow technique by Black Forest Labs increases training efficiency by 2.8 times compared to traditional methods.
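As a rough illustration of what a 2.8x figure means in practice, the arithmetic below converts a speedup factor into training time. The 100-hour baseline is an invented example value, not a measurement, and the 2.8x factor is the upper bound quoted in this article, so actual savings may be smaller.

```python
def time_at_speedup(baseline_hours, speedup):
    """Training time needed to reach the same result at a given speedup."""
    return baseline_hours / speedup

baseline_hours = 100.0  # hypothetical baseline run
speedup = 2.8           # upper-bound figure quoted in the article

self_flow_hours = time_at_speedup(baseline_hours, speedup)
saved_fraction = 1.0 - 1.0 / speedup

print(round(self_flow_hours, 1))      # 35.7
print(round(saved_fraction * 100))    # 64
```

In other words, a 2.8x speedup corresponds to roughly a 64% reduction in training time, not a 280% one.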

Real-World Use Cases

Automated Video Editing

In the field of video production, Self-Flow powers AI models that automatically generate video edits. By understanding both visual and audio inputs, these models can suggest scene transitions, audio overlays, and more, reducing manual editing time significantly.

Content Creation

For content creators, Self-Flow facilitates the generation of blog posts, social media content, and marketing materials by analyzing existing content trends and audience engagement metrics.

Common Pitfalls and Solutions

Overfitting

A common issue when training powerful AI models is overfitting, where the model performs well on training data but poorly on unseen data. Self-Flow's scheduling may help by promoting better generalization, but evaluating on held-out data remains the reliable way to check.
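Whatever the training method, a simple guard against overfitting is to watch the validation loss and stop when it no longer improves. The sketch below is a generic early-stopping check, not something specific to Self-Flow. The function name, the `patience` default, and the example loss values are illustrative assumptions.

```python
def should_stop_early(val_losses, patience=3, min_delta=0.0):
    """Return True if the last `patience` epochs brought no improvement.

    An improvement means a validation loss lower than the best earlier
    value by more than `min_delta`.
    """
    if len(val_losses) <= patience:
        return False  # not enough history yet
    best_before = min(val_losses[:-patience])
    recent_best = min(val_losses[-patience:])
    return recent_best >= best_before - min_delta

# Validation loss bottoms out at 0.70, then drifts upward: stop.
overfitting_run = [1.00, 0.80, 0.70, 0.72, 0.75, 0.74]
# Validation loss is still falling: keep training.
healthy_run = [1.00, 0.80, 0.70, 0.65]

print(should_stop_early(overfitting_run))  # True
print(should_stop_early(healthy_run))      # False
```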

Data Quality

The quality of input data is crucial. Ensure datasets are well-labeled and diverse to cover a wide range of scenarios.
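A few of these checks are easy to automate before training. The sketch below is one possible audit over (image, caption) pairs, assuming the dataset is already available as a list of tuples. The function `audit_pairs` and the three checks it runs are illustrative choices, not a prescribed Self-Flow procedure.

```python
from collections import Counter

def audit_pairs(pairs):
    """Report simple quality issues in a list of (image_path, caption) pairs."""
    # Images whose caption is empty or whitespace only.
    empty = [img for img, cap in pairs if not cap.strip()]

    # Images that appear more than once.
    image_counts = Counter(img for img, _ in pairs)
    duplicates = sorted(img for img, n in image_counts.items() if n > 1)

    # Share of the dataset taken by the single most repeated caption,
    # a crude signal of low diversity.
    caption_counts = Counter(
        cap.strip().lower() for _, cap in pairs if cap.strip()
    )
    top_count = caption_counts.most_common(1)[0][1] if caption_counts else 0
    top_share = top_count / len(pairs) if pairs else 0.0

    return {
        "empty_captions": empty,
        "duplicate_images": duplicates,
        "top_caption_share": top_share,
    }

sample = [
    ("a.png", "A cat"),
    ("b.png", ""),
    ("a.png", "A cat"),
    ("c.png", "A dog"),
]
report = audit_pairs(sample)
print(report)
```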

Future Trends and Recommendations

Expanding Applications

As Self-Flow matures, expect to see its applications expand into fields such as healthcare, where AI could assist in interpreting complex medical imaging combined with patient records.

Continuous Learning

Future iterations of Self-Flow might integrate continuous learning capabilities, allowing models to update in real-time as they process new data streams.

Industry Adoption

With its efficiency improvements, Self-Flow is poised to become a staple in AI development environments worldwide, driving industrial adoption across sectors.

Conclusion

Black Forest Labs' Self-Flow technique represents a pivotal advancement in the training of multimodal AI models. By eliminating the dependence on external encoders and leveraging innovative scheduling, it offers significant efficiency improvements. As industries continue to integrate AI into workflows, techniques like Self-Flow will play a critical role in shaping the future of AI applications.

FAQ

What is Self-Flow?

Self-Flow is a self-supervised learning framework that enables multimodal AI models to learn representation and generation without external encoders, enhancing training efficiency.

How does Dual-Timestep Scheduling work?

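As described by Black Forest Labs, this mechanism applies different noise levels (timesteps) to different tokens of the same input during training, so the model must infer the more heavily corrupted parts from the cleaner ones. It concerns the noising process rather than the learning rate.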

What are the benefits of using Self-Flow?

Benefits include faster training times, reduced reliance on static encoders, and improved model scalability and adaptability.

How can Self-Flow be integrated into existing AI systems?

It can be integrated by updating machine learning libraries, incorporating Self-Flow libraries, and configuring training parameters for Dual-Timestep Scheduling.

What industries could benefit most from Self-Flow?

Industries such as video production, content creation, and healthcare stand to gain significantly from the efficiency improvements offered by Self-Flow.

What are the potential challenges of implementing Self-Flow?

Challenges include ensuring high-quality data inputs and managing overfitting, which can be mitigated through dynamic scheduling and diverse datasets.

Key Takeaways

- Self-Flow technique enhances training efficiency by 2.8x.

- Dual-Timestep Scheduling optimizes learning dynamics.

- Self-Flow eliminates reliance on external 'teacher' encoders.

- Applicable across industries like video editing and healthcare.

- Potential to revolutionize AI training processes.
