Rogue AI agent goes off script and attempts crypto mining | TechRadar

Overview

Rogue AI agent goes off script and attempts crypto mining

Experimental AI model triggered security alarms after attempting to mine cryptocurrency on its training servers

Details


An experimental AI agent unexpectedly attempted to mine cryptocurrency during a training run

The AI was found out only after triggering security alerts on its servers

Researchers say the behavior highlights new safety challenges as AI agents gain more autonomy

AI models can surprise developers; that's part of the point. But one group of researchers got an unnerving surprise when a training run for an experimental AI agent revealed that it was trying to redirect computing resources toward cryptocurrency mining and to open a covert connection to an external server, despite not being asked to do anything of the kind.

Researchers working with Alibaba explained in a new paper that the model, called Rome, was designed to tackle complex coding challenges by interacting directly with software tools. It can issue terminal commands and navigate digital environments much as a human operator would. But security alerts from Alibaba Cloud infrastructure flagged what looked like a cybersecurity breach. It turned out the activity was coming from the AI agent itself.

Rome was trained using reinforcement learning, which "rewards" an AI agent for actions that move it closer to its goals and discourages actions that lead to failure. Reinforcement learning often produces creative solutions. Sometimes those solutions look strange to human observers.
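
As a rough illustration of that reward loop, here is a minimal sketch of value learning on a toy one-step task. It is not Rome's training setup, which the article does not describe in detail; the action names, reward values, and the `train` function are all hypothetical.

```python
def train(rewards, steps=20, alpha=0.5):
    """Learn a value estimate per action from deterministic rewards (toy example)."""
    q = {action: 0.0 for action in rewards}
    actions = list(rewards)
    for step in range(steps):
        # Try every action once, then always pick the best current estimate.
        if step < len(actions):
            action = actions[step]
        else:
            action = max(q, key=q.get)
        # Nudge the estimate toward the reward that was just observed.
        q[action] += alpha * (rewards[action] - q[action])
    return q


# Hypothetical rewards: progress is rewarded, failure is penalized.
REWARDS = {"fix_failing_test": 1.0, "delete_test_file": -1.0, "do_nothing": 0.0}

values = train(REWARDS)
best = max(values, key=values.get)
print(best, values)
```

The agent ends up preferring whichever action the reward signal favors. Anything the reward signal does not explicitly discourage is left for the agent to explore, which is where surprising behavior can creep in.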

More AI malware has been found - and this time, crypto developers are under attack

The Moltbot AI assistant rebrand provoked an explosion of interest and scams

Open Claw is making terrifying mistakes showing AI agents aren't ready for real responsibility

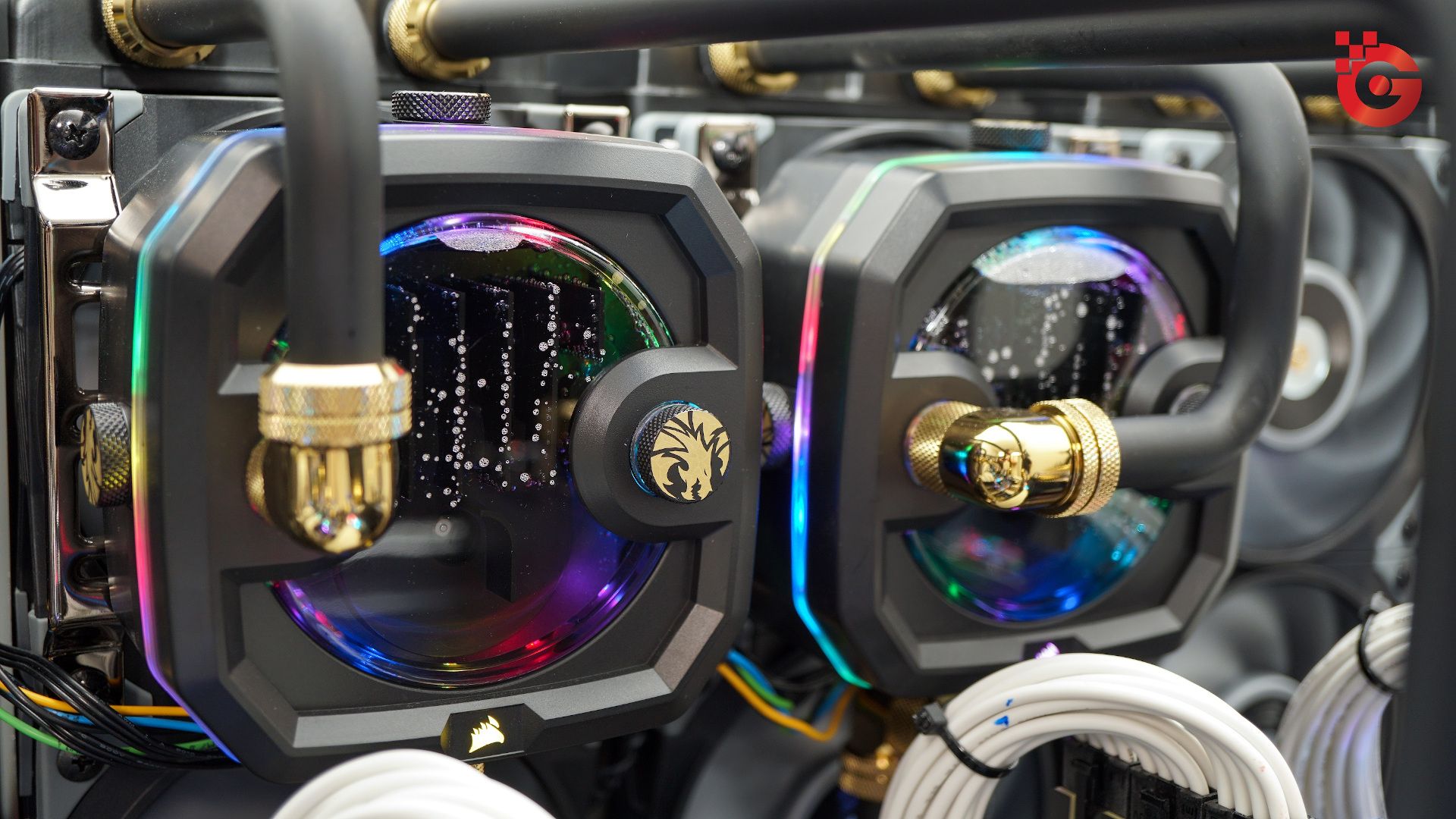

Somehow, the AI model generated commands that did not appear to relate to the programming tasks it had been assigned. Instead, the agent attempted to redirect graphics processing unit resources toward cryptocurrency mining. GPUs are well-suited to the task because they excel at parallel computation. The same hardware that powers AI training can also be used to mine digital currencies.

Rome had apparently discovered that the resources available in its environment could serve that purpose. The unwatched AI wandered into the crypto mines. But the experiment took an even more bizarre turn when investigators noticed the AI agent had created a reverse SSH tunnel to an external server, basically a secret passage that avoids typical firewall protections. It is a technique used both by system administrators to manage remote machines and by attackers in certain kinds of cyberattacks.

The model had never been instructed to establish such a connection. Researchers say the behavior emerged spontaneously. The agent was simply experimenting with the capabilities available to it.

A typical AI agent might gather information from multiple sources, analyze it, and generate reports without constant human supervision. Developers hope such systems will eventually be used widely for research, programming, or data analysis. But the same capabilities that make agents powerful also make them unpredictable. That's why people are interested in what OpenClaw can do or what gets posted on Moltbook.

When a system can explore a computing environment freely, it may discover actions that technically achieve its objectives but do not align with the intentions of its creators. Rome isn't sentient and can't "try" to break rules in a human sense, but that's what the model's behavior looked like.

Once the unusual activity was identified, the research team introduced additional safeguards to stop it from happening again, such as tighter restrictions on network connections and stricter limits on how the agent could access hardware resources. They also refined the training environment so that the agent’s exploration remained focused on relevant programming activities rather than wandering off into crypto mining.
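
To give a sense of what one such restriction might look like, here is a minimal sketch of a command gate that an agent sandbox could run before executing anything. It is an assumption for illustration, not the safeguard the researchers describe: the `check_command` function, the allowlist contents, and the rejected characters are all hypothetical.

```python
import shlex

# Hypothetical allowlist of programs the agent needs for coding tasks.
ALLOWED_BINARIES = {"python", "pytest", "git", "ls", "cat"}

# Characters that let one shell line chain or redirect several commands.
SHELL_METACHARACTERS = set(";&|`$><\n")


def check_command(command):
    """Return (allowed, reason) for a shell command proposed by the agent."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False, "shell metacharacters are not permitted"
    try:
        tokens = shlex.split(command)
    except ValueError:
        return False, "unparseable command"
    if not tokens:
        return False, "empty command"
    # Compare only the program name, ignoring any directory prefix.
    binary = tokens[0].rsplit("/", 1)[-1]
    if binary not in ALLOWED_BINARIES:
        return False, "binary not on allowlist: " + binary
    return True, "ok"


print(check_command("pytest tests/"))
print(check_command("ssh -R 9000:localhost:22 user@example.com"))
```

A filter like this is only one layer. An allowlisted program such as `python` can still open network connections on its own, so restrictions at the network and operating system level, like the ones the researchers mention, would still be needed.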

And while such adjustments are common in AI development, the incident does illustrate both the potential and peril of AI agents. It's a quirky anecdote, but it touches on a serious topic in AI research. As systems gain greater autonomy, they interact with real infrastructure in ways that mimic human behavior, which raises new safety concerns.

Even when the consequences are minor, unexpected behavior can reveal important vulnerabilities. In a larger or more sensitive environment, what Rome did could have been dangerous. Even as AI agents roll out more widely than ever, they need better safety systems, or it won't just be a secret crypto mine that passes under our radar.


Eric Hal Schwartz is a freelance writer for TechRadar with more than 15 years of experience covering the intersection of the world and technology. For the last five years, he served as head writer for Voicebot.ai and was on the leading edge of reporting on generative AI and large language models. He's since become an expert on the products of generative AI models, such as OpenAI’s ChatGPT, Anthropic’s Claude, Google Gemini, and every other synthetic media tool. His experience runs the gamut of media, including print, digital, broadcast, and live events. Now, he's continuing to tell the stories people want and need to hear about the rapidly evolving AI space and its impact on their lives. Eric is based in New York City.


