SpaceX Acquires xAI: The Ultimate Vertical Integration Play

In early 2025, Elon Musk pulled off one of the most audacious corporate maneuvers in recent memory. SpaceX formally acquired xAI, which also owns X (formerly Twitter). On the surface, it sounds like chaos. Why would a space exploration company need an AI firm and a social network? But dig deeper, and you'll see Musk's long-game vision: building what he calls the "most ambitious, vertically-integrated innovation engine on (and off) Earth."

This isn't just another acquisition. It's a complete reorganization of how three wildly different companies can feed into each other. Rocket technology meets artificial intelligence. Real-time information distribution meets space-based computing. It's the kind of move that makes Wall Street analysts lose sleep and tech journalists scramble to figure out what's actually happening.

The stated reasoning is almost poetic, in a very Musk way. Current AI data centers consume staggering amounts of power. Traditional grids can't handle the electricity demand without imposing "hardship on communities and the environment." So Musk's solution? Move the computation to space. Harness solar energy from orbit. Build the infrastructure in space itself. It's audacious, maybe even impossible, but it's also hard to argue against the logic. Space has unlimited room and unfettered access to the sun's energy.

But this move goes far beyond just solving the energy problem. It's about creating an ecosystem where each piece makes the others stronger. SpaceX gets a company building cutting-edge AI. xAI gets access to SpaceX's rocket and satellite infrastructure. X gets integration points with both. It's a bet that the future belongs to companies that can operate across multiple domains simultaneously.

Let's break down what this merger actually means, how it works, and why it matters for the future of technology, space exploration, and AI development.

TL;DR

- The Merger: SpaceX acquired xAI, creating a vertically-integrated company combining rockets, AI, and social media

- The Vision: Move AI computation to space to solve Earth's energy crisis and scale to unprecedented levels

- The Players: Three distinct companies now unified under Musk's leadership, covering rocket technology, cutting-edge AI models, and a real-time information platform

- The Timeline: This represents the culmination of years of infrastructure building by SpaceX and months of rapid expansion by xAI

- Bottom Line: This is Musk's biggest bet yet on creating an interconnected "everything app" that operates across domains most companies keep separate

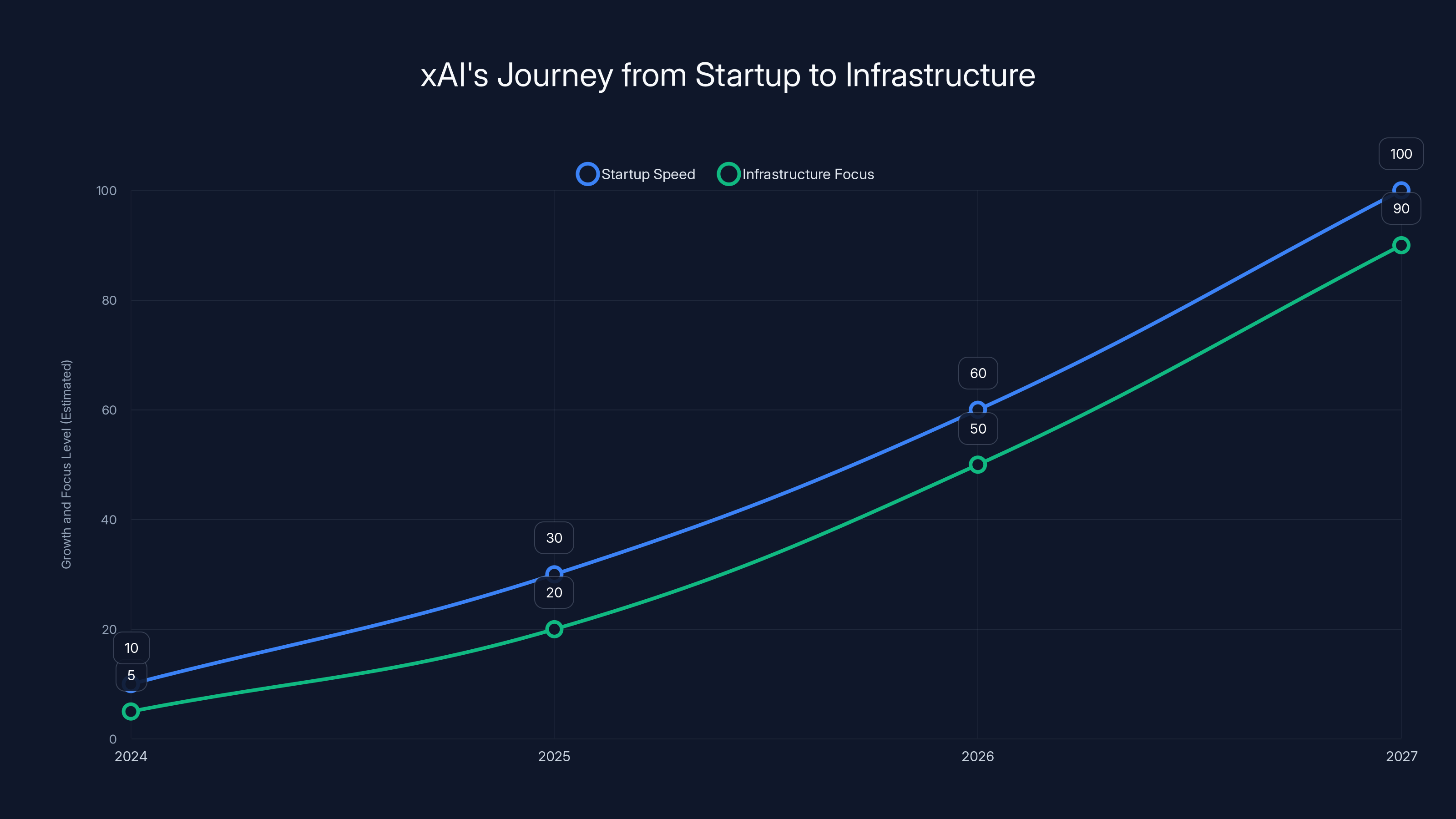

xAI's rapid growth from a startup to an infrastructure-focused company is depicted, highlighting its increasing emphasis on integration and real-time data capabilities. Estimated data.

The Everything App Vision Becomes Reality

Elon Musk has talked about the "everything app" for years. When he acquired X (formerly Twitter), the goal was turning it into a super-app that does everything. Payment processing, messaging, social networking, video streaming, business services. One platform for all communication and commerce needs.

But Musk realized something critical: an everything app can't just exist on smartphones and computers. It needs infrastructure. It needs information. Most importantly, it needs AI that's integrated at every level. That's where xAI comes in.

xAI wasn't just another AI startup chasing attention. Musk founded it to compete with OpenAI and Anthropic, but on Musk's terms. The company rapidly built Grok, an AI model designed for reasoning, truth-seeking, and real-time information integration. It could actually use current information from X's network instead of relying on training data cutoffs.

The merger makes Grok far more powerful. Instead of being a chatbot integrated into a social media platform, Grok becomes the intelligence layer for an entire ecosystem. SpaceX's satellite network delivers real-time data. SpaceX's rockets launch the infrastructure. X distributes the results to hundreds of millions of users. It's a closed loop where each piece strengthens the others.

Musk's "everything app" wasn't just marketing speak. It was a technical vision that required three separate companies to execute. Now they're unified. The infrastructure question is massive, though. How do you actually make this work without building custom hardware that costs billions?

The Energy Problem That Started It All

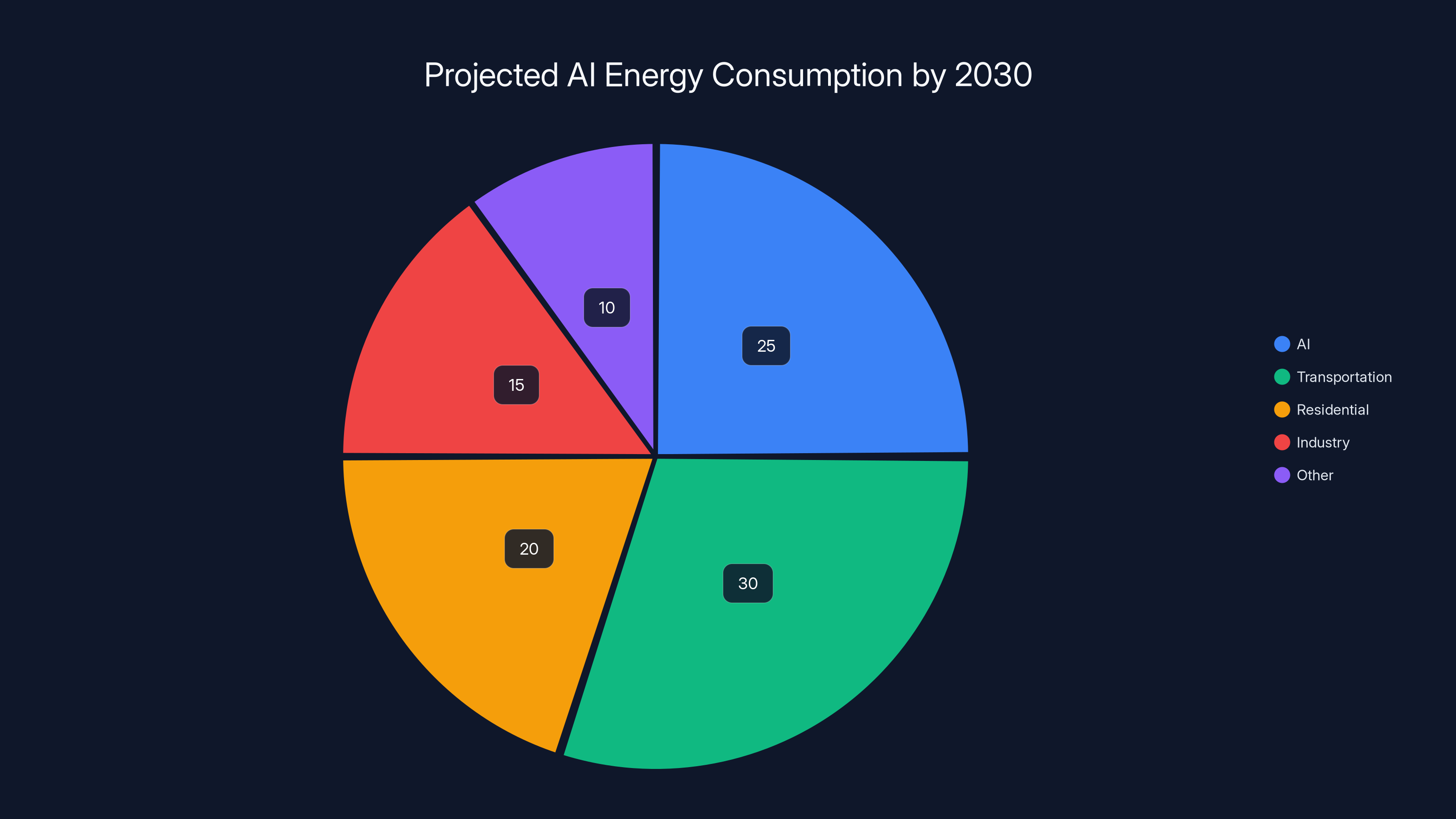

Here's the inconvenient truth about AI that most companies don't want to admit publicly: it's energy-intensive beyond imagination. Training a large language model requires enormous amounts of electricity. Running inference at scale consumes more electricity in a year than some small nations use.

Data centers today are built near abundant power sources. They're placed in cooling-friendly locations. Yet even with optimization, the power demand is unsustainable. The International Energy Agency has warned that AI's electricity demand could rival major sectors of the economy by 2030.

Musk's pitch is that Earth-based solutions won't scale. You can't keep building massive data centers without straining electrical grids. You can't add enough renewable energy fast enough to meet demand. Communities suffer. The environment suffers. So you need a different approach entirely.

Space, though, is different. At Earth's distance from the Sun, sunlight delivers about 1,361 watts per square meter, unfiltered by atmosphere. Harnessing even a millionth of the Sun's total output would provide "over a million times more energy than our civilization currently uses," according to Musk's statement. It sounds absurd, almost incomprehensible in scope. But the arithmetic holds up.
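The "millionth of the Sun" claim can be sanity-checked with a few lines of arithmetic. The sketch below is a back-of-the-envelope check, not a figure from the announcement: the Earth-Sun distance is a rounded reference value, and the 20 terawatt figure for humanity's average power use is an assumption chosen for illustration.

```python
import math

SOLAR_CONSTANT_W_M2 = 1361.0  # irradiance at Earth's distance (from the text)
AU_M = 1.496e11               # mean Earth-Sun distance in meters (rounded)
WORLD_POWER_W = 2.0e13        # ASSUMPTION: ~20 TW average human power use


def solar_luminosity_w(irradiance=SOLAR_CONSTANT_W_M2, distance_m=AU_M):
    """Total solar output: the irradiance spread over a sphere of radius 1 AU."""
    return irradiance * 4.0 * math.pi * distance_m ** 2


def ratio_to_civilization(fraction, world_power_w=WORLD_POWER_W):
    """How many times current human power use a fraction of the Sun would cover."""
    return fraction * solar_luminosity_w() / world_power_w


luminosity = solar_luminosity_w()
ratio = ratio_to_civilization(1e-6)
print(f"Estimated solar output: {luminosity:.2e} W")
print(f"One millionth of that covers about {ratio:.1e}x current use")
```

Under these assumptions the ratio lands in the tens of millions, so "over a million times" is, if anything, conservative. The hard part is collecting the energy, not the size of the supply.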

This is where SpaceX becomes essential. Building a space-based power generation and computation platform requires the ability to launch huge payloads cheaply and reliably. Starship, SpaceX's next-generation rocket, is designed to carry 100+ tons to orbit. With full reusability, launch costs drop dramatically. Suddenly, what seemed impossible becomes merely expensive.

The energy argument is the philosophical foundation for this entire merger. It's not about market dominance or profit margins. It's about solving a constraint that will eventually limit all AI companies. SpaceX has the technology to do something about it. xAI has the expertise to design AI systems that could operate in space. X has the platform to use the outputs. Together, they can pursue something no other company can attempt.

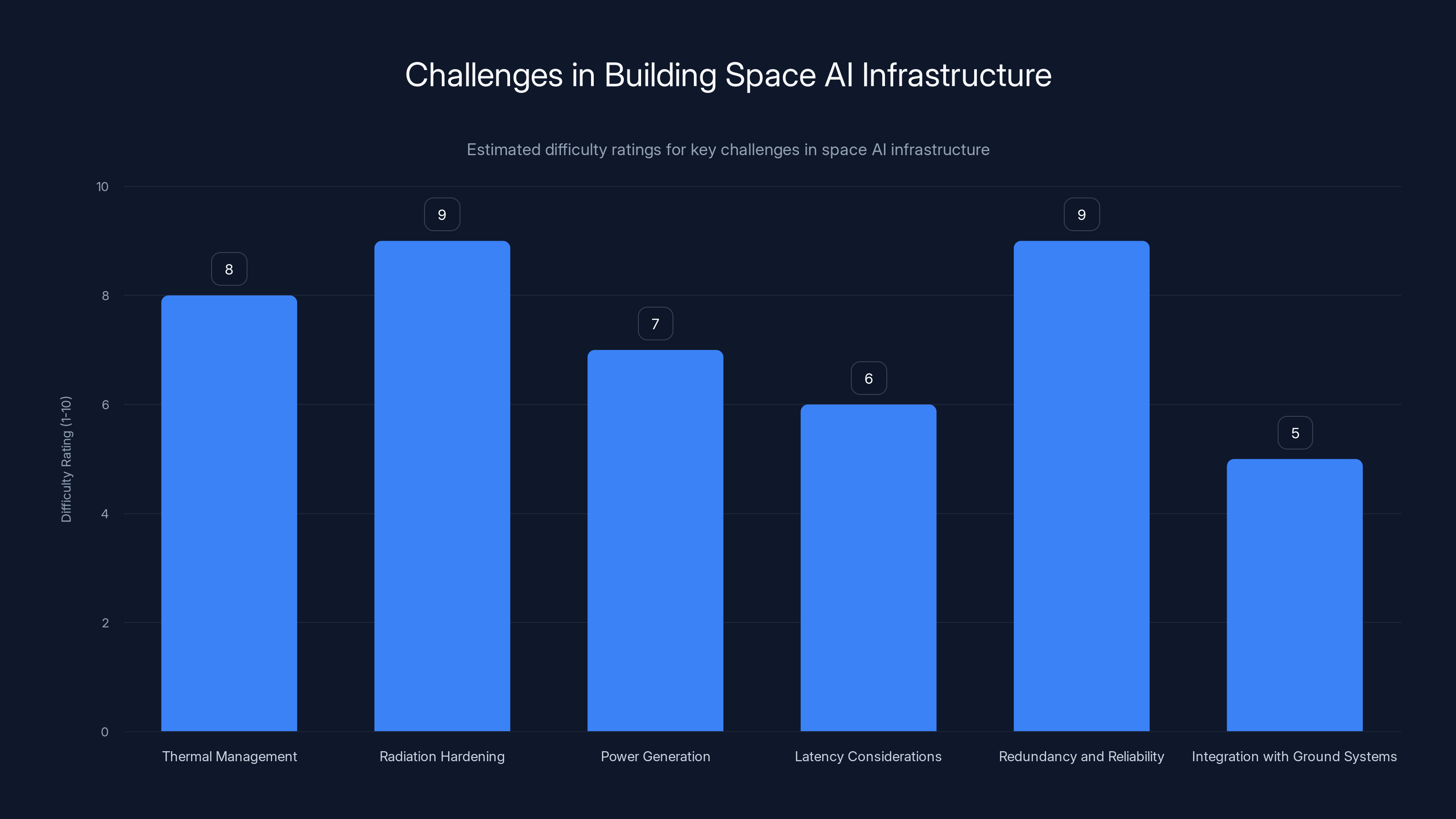

Thermal management and radiation hardening are among the most challenging aspects of building AI infrastructure in space, with high difficulty ratings. (Estimated data)

How Vertical Integration Actually Works Here

Vertical integration means owning multiple levels of a supply chain or production pipeline. Ford once owned steel mills and rubber plantations. Apple designs chips and builds devices and runs stores. It's a strategy that gives you control and reduces dependencies.

Musk's version is more ambitious because it spans completely different domains. Let's map out how this actually functions:

The Rocket Layer: SpaceX launches hardware. Starship carries payloads. Heavy lift capacity becomes the foundation for everything above it. Without cheap, reliable launch, the whole thing falls apart.

The Infrastructure Layer: Once in space, you need platforms. Solar arrays. Thermal management. Communications. Propulsion for station-keeping. This is specialized hardware that SpaceX is already designing and operating.

The Computing Layer: xAI designs the AI systems and software that actually run on this infrastructure. Instead of Earth-based data centers, computation happens in orbit. The pitch: lower latency in some cases, access to abundant solar power, and no water- or air-based cooling plants, though waste heat still has to be radiated away.

The Data/Networking Layer: Starlink, SpaceX's satellite internet service, provides the backbone. Real-time data feeds to and from the space-based AI infrastructure. A dedicated network that SpaceX controls completely.

The Platform Layer: X becomes the interface layer. Users interact with Grok or other services. Results get pushed through X's network. Real-time information feeds back into the system. The loop closes.

What makes this different from typical vertical integration is that each layer is genuinely complex and valuable independently. SpaceX doesn't need xAI to succeed. xAI doesn't need SpaceX's rockets. X doesn't need either. But together, they create capabilities that none could achieve alone.

The risk is obvious: if any piece breaks, the whole thing suffers. If SpaceX has launch failures, the space-based AI infrastructure can't scale. If xAI can't solve the thermal or power management problems in space, the whole energy advantage disappears. If X fails to maintain platform viability, there's no distribution network for applications built on the infrastructure.

The xAI Story: From Startup to Infrastructure

xAI was founded in 2023, and it has moved at a pace few startups ever match. Musk didn't start it to build a product company like OpenAI or Anthropic. He started it because he believed the AI landscape was being controlled by companies with misaligned incentives.

OpenAI had become increasingly aligned with Microsoft. Anthropic had its own approach to AI safety. DeepMind belongs to Google. Every major AI lab had corporate backing that shaped its priorities. Musk wanted an AI company that was independent and, from his perspective, more truth-seeking.

Grok became xAI's flagship. It's not fundamentally different architecturally from GPT-4 or Claude. It's a large language model trained on a massive corpus of text. But it was designed with specific properties. Grok could accept real-time information from X's data feed. It was trained to refuse certain types of restrictions that Musk sees as censorship. It was positioned as a reasoning-first AI rather than purely a chat interface.

But here's what made xAI special: it was always infrastructure-oriented. Musk wasn't interested in competing for chat market share. xAI was building the AI layer for his larger vision. The company was designed to be integrated into other systems, not to be the system itself.

Grok's architecture makes this clear. It's not just a web interface. It's an API. It can consume real-time data streams. It can be called from other applications. It's designed to be embedded and extended, not to exist in isolation.

When xAI raised funding, it did so with this infrastructure vision in mind. The company wasn't pitching venture capitalists on becoming the next OpenAI. It was pitching them on being the AI foundation for Musk's broader technological empire. That's a much bigger bet because the success criteria are different. It's not about monthly active users or per-token pricing. It's about enabling impossible things.

SpaceX's Infrastructure Advantage

SpaceX is fundamentally different from other aerospace companies. SpaceX has repeatedly proven that the aerospace industry's cost structures don't have to exist. They can be dismantled through relentless engineering and reusable design.

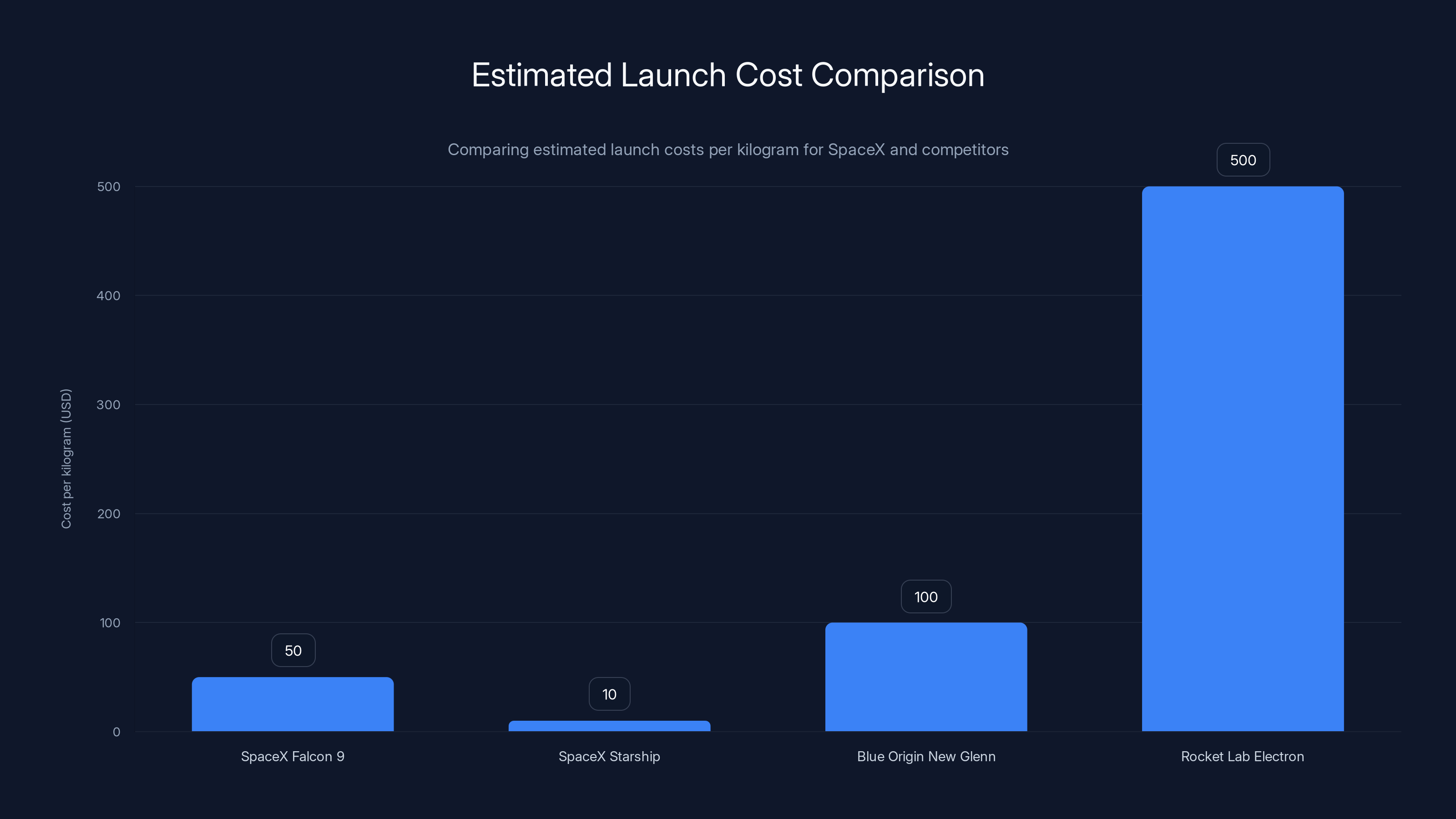

The Falcon 9 booster lands itself and flies again. This has cut launch costs from the tens of thousands of dollars per kilogram typical of earlier vehicles to roughly a few thousand dollars per kilogram. It's the biggest shift in space economics in decades. But SpaceX didn't stop there. Starship is designed to be even cheaper and more capable.

What most people don't realize is that SpaceX's cost advantage compounds when you're building a specific mission. Launch one satellite, costs are high. Launch ten, costs average better. Launch a hundred, and fixed costs per unit shrink toward negligible. But launch enough to build a space station, and suddenly you're operating in completely different economics.

For a space-based AI infrastructure, SpaceX's launch capability is game-changing. Musk needs:

Power Generation: Large solar arrays deployed in space. These are heavy payloads. Starship can carry them.

Computing Hardware: Servers, processors, storage. Also heavy. Starship can handle it.

Thermal Management: Radiators and heat rejection systems. Starship launches them.

Redundancy: Multiple units for reliability. Starship makes multiple launches economical.

Upgrades: When technology improves, you launch new hardware. Only cheap launches make this viable.

No other space company can do this at SpaceX's cost and cadence. Blue Origin is developing New Glenn, which might eventually compete, but they're years behind. Rocket Lab focuses on small payloads. Relativity is still in development. SpaceX has a monopoly on heavy-lift reusable rockets.

This isn't about SpaceX being better at rockets (though it is). It's about SpaceX having unique capabilities that make space-based AI infrastructure economically feasible. Without those capabilities, the whole thing remains theoretical.

Estimated data shows AI could consume 25% of global energy by 2030, highlighting its growing demand compared to traditional sectors.

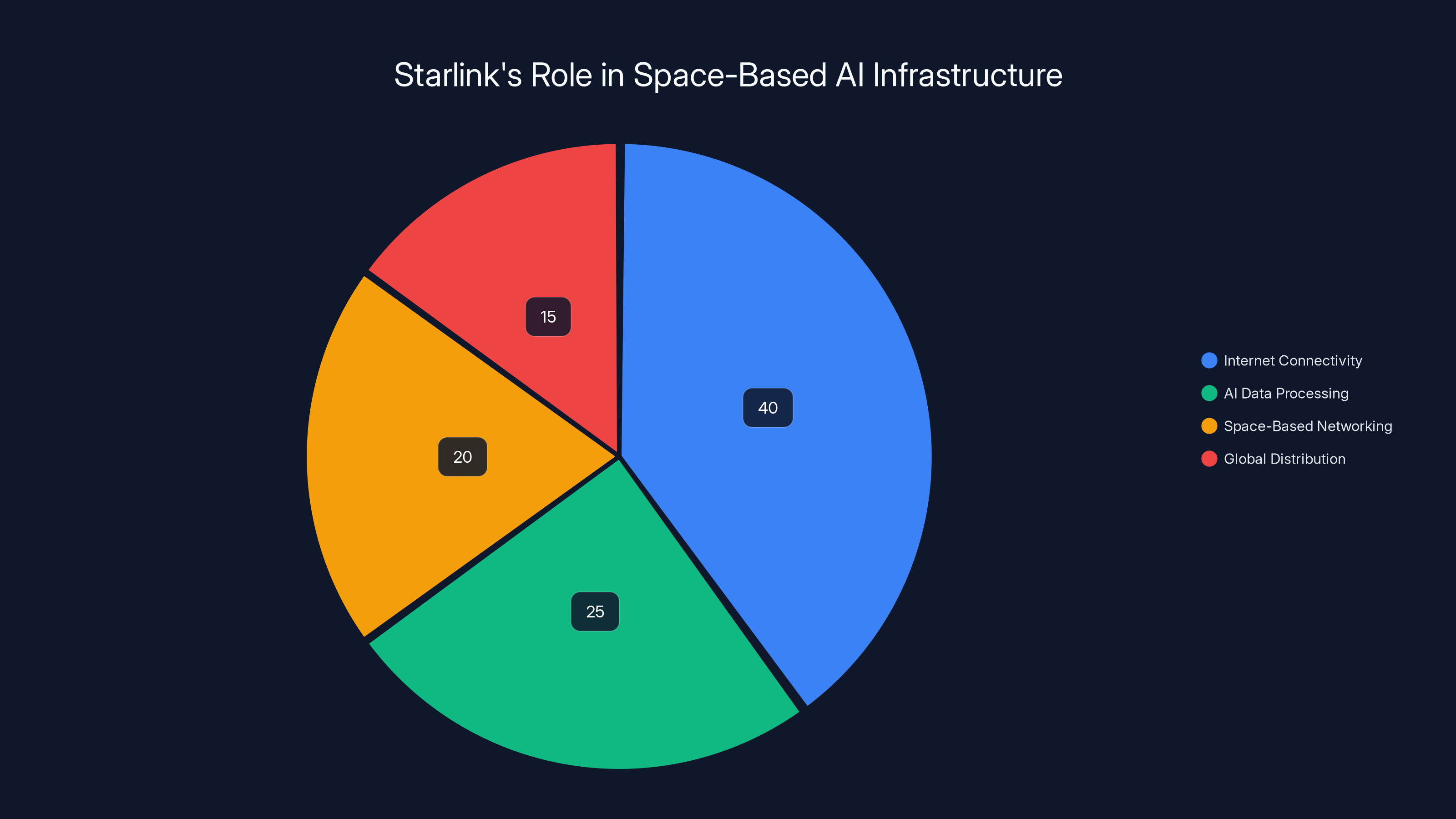

Starlink's Role in the Ecosystem

People sometimes think of Starlink as just a broadband service. Millions of satellite dishes beaming internet to remote areas. It's a $10 billion business and growing rapidly. But in the context of this merger, Starlink is something far more important: the nervous system connecting everything.

Starlink satellites are in low Earth orbit. They communicate with ground stations and with each other using inter-satellite links. The network is vast, distributed, and redundant. It's designed to maintain connectivity even if portions are damaged or disabled.

Now imagine space-based AI infrastructure. You've got servers in orbit running Grok and other AI models. You've got power generation systems. You've got the constellation that is Starlink. Suddenly, you have a completely closed loop network that doesn't depend on any external infrastructure.

Data comes in from Starlink. Processing happens on the space-based AI servers. Results go out through Starlink. All of it is owned and controlled by one company. There are no internet providers involved. No third-party cloud infrastructure. No external dependencies for latency-critical operations.

This is particularly valuable for certain types of AI inference. Real-time reasoning on massive datasets. Processing financial information. Analyzing satellite imagery. Any task where you need to integrate space-based sensor data with AI computation, the ability to do it entirely in space with no ground dependencies is revolutionary.

Starlink also solves a distribution problem. If you're running AI models in space, how do users access them? Through Starlink. If you're serving billions of queries, you need massive network capacity. Starlink is built for it. Again, it's vertical integration creating capabilities that wouldn't exist otherwise.

The network topology also makes this work at planet scale. A user in Australia connects to Starlink. That connection routes to the nearest space-based AI cluster. Processing happens. Results come back through Starlink. Latency is competitive with Earth-based data centers in many cases, and it improves as more orbiting infrastructure launches.
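The latency claim can be bounded from below with simple propagation-delay arithmetic. The sketch below is only a physical floor under stated assumptions: a 550 km orbital altitude (assumed, in the range used by low Earth orbit constellations), a satellite directly overhead, and a fiber refractive index of about 1.47. It ignores queuing, processing, and routing, which account for most of the delay users actually see.

```python
C_KM_S = 299_792.458  # speed of light in vacuum, km/s
FIBER_INDEX = 1.47    # ASSUMPTION: typical refractive index of optical fiber


def leo_round_trip_ms(altitude_km):
    """Best-case user -> overhead satellite -> user propagation delay."""
    return 2 * altitude_km / C_KM_S * 1000


def fiber_round_trip_ms(distance_km):
    """Round-trip propagation delay over a straight fiber run."""
    return 2 * distance_km * FIBER_INDEX / C_KM_S * 1000


orbit_ms = leo_round_trip_ms(550)     # ASSUMPTION: 550 km altitude
fiber_ms = fiber_round_trip_ms(1000)  # a data center 1,000 km away

print(f"Orbit round trip: {orbit_ms:.2f} ms")
print(f"Fiber round trip: {fiber_ms:.2f} ms")
```

On propagation alone, a compute node a few hundred kilometers overhead can beat a data center a thousand kilometers away by fiber. Whether that advantage survives real network overhead is an open question this sketch does not answer.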

The Social Media Connection: X as the Interface

Why is X part of this merger? That's the question everyone asks. A social media platform seems orthogonal to rockets and AI. But Musk sees it differently. X isn't just a social network. It's a real-time information platform with hundreds of millions of users.

Consider what X provides:

Data Feeds: Constant streams of what humans are thinking, discussing, asking. Grok can ingest this directly. Real-time information for AI reasoning.

Distribution Network: If you've built an amazing AI system, how do you get it to users? X is already there. Hundreds of millions of users. Direct integration possible.

User Behavior: X understands what humans find interesting, important, true. This data is incredibly valuable for training and fine-tuning AI systems.

Feedback Loop: When users interact with Grok on X, that data feeds back into AI training and improvement. Continuous learning from millions of data points.

Monetization Path: X could become the primary interface for paid AI services. Users already have accounts. Trust is already there (or being rebuilt). Payment systems exist.

Most AI companies are separate from platforms. OpenAI has ChatGPT. Anthropic has Claude. But they don't have direct access to hundreds of millions of users' real-time data streams. They don't have a distribution network they own. They have to work with Microsoft and Google and AWS to reach people.

X changes that. Grok can be directly integrated into the X experience. When you post a question, you get AI assistance natively. When you write a tweet, Grok can help refine it. When you're researching something, Grok can access the entire X network in real-time to find current information.

This is more powerful than having Grok as a separate application. It's baked into the platform that hundreds of millions of people use daily. The network effects are enormous. The training data is constant and real-time. The distribution is immediate.

What This Means for AI Development

The AI industry is currently structured around companies that are disconnected from infrastructure. OpenAI builds models. Azure runs them. Anthropic builds models. AWS runs them. Google is different because they own infrastructure, but even Google is primarily a software company that happens to have data centers.

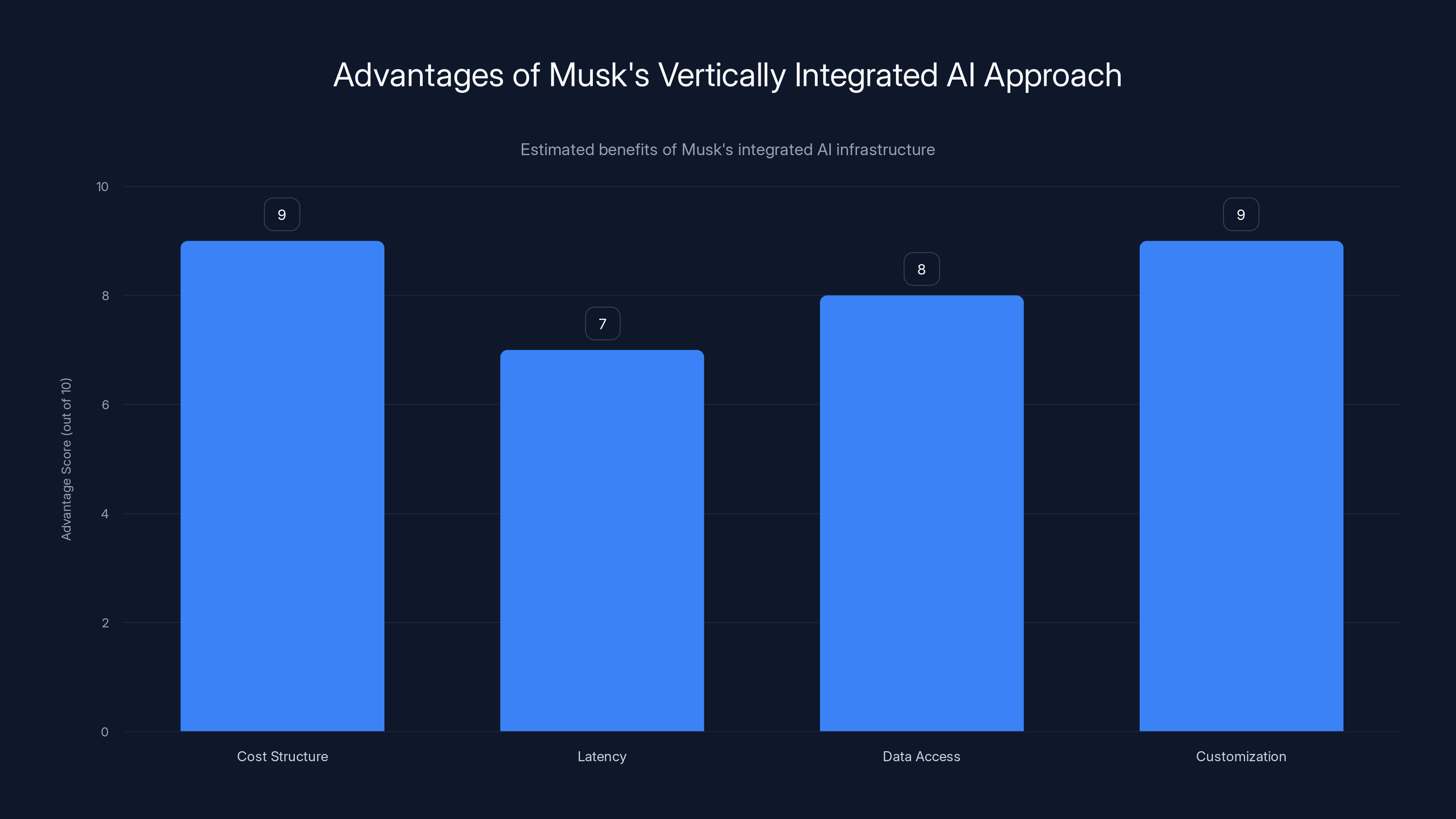

Musk's approach is vertically integrated in ways that traditional AI companies can't match. This will likely lead to some meaningful advantages:

Cost Structure: By owning the entire stack, Musk can optimize across layers. Energy isn't purchased from utilities. It's generated on-site in space. Cooling needs no chillers or water, though waste heat still has to be shed through radiators. Networking doesn't require internet service providers. If it works, cost per inference should be dramatically lower than for competitors paying for each layer separately.

Latency: Space-based inference has interesting latency properties. Satellite-based data sources are closer to the computation. For certain types of queries, this could beat Earth-based data centers.

Data Access: Starlink and X both generate data that competitive AI companies can't access. Training models on real-time X data is a unique advantage.

Customization: When you own every layer, you can build custom hardware and software optimized for your workloads. Competitors are constrained by what cloud providers offer.

But there are also risks that shouldn't be ignored:

Complexity: Managing rockets, satellites, AI infrastructure, and a social platform simultaneously is extraordinarily difficult. Failure in any piece affects everything.

Technical Challenges: Space-based data centers are unproven at scale. Thermal management might be harder than anticipated. Radiation damage to computing hardware might limit lifetime. Unknown unknowns abound.

Regulatory Risk: Operating satellites, providing broadband, running an AI system that millions of people depend on, owning a major social media platform. Each has regulatory scrutiny. Combined, it's a nightmare for compliance.

Market Risk: If Musk's space-based AI vision doesn't work, this entire structure collapses. There's no fallback plan. No diversification.

Musk's vertically integrated AI approach offers significant advantages in cost, latency, data access, and customization, potentially outperforming traditional AI companies. Estimated data.

Grok's Architecture and Real-Time Advantage

Grok was designed from the ground up to be different from competing large language models. The key differentiator is real-time information integration. Most large language models are trained on a dataset with a knowledge cutoff. They learn everything up to September 2023 or January 2024, and then they're frozen. That's their knowledge base.

Grok works differently. It can access X's data feed directly. It can see what's happening right now. Someone asks a question about today's news, Grok can search X for current information and integrate it into the response. It's not just generating text from training weights. It's actively reasoning over current information.

This is architecturally important because it solves one of AI's biggest problems: knowledge cutoff frustration. Users ask about something recent, models say "I don't have information about that." With Grok, that problem largely goes away.

But it also makes Grok dependent on X's data. If X's search doesn't work, Grok's real-time capability suffers. If X's data becomes corrupted or unreliable, Grok becomes unreliable. It's a tight coupling that makes the platforms interdependent. You can't really use Grok effectively without X, and you can't leverage X's data without AI to interpret it.
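The general pattern described here, fetching fresh posts and handing them to the model alongside the question, can be sketched in a few lines. This is a toy illustration of retrieval-augmented prompting, not Grok's actual implementation: the `Post` class, the word-overlap scoring, the 24-hour freshness window, and both function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Post:
    text: str
    created_at: datetime


def retrieve_recent(posts, query, now, max_age=timedelta(hours=24), k=3):
    """Return up to k fresh posts, ranked by words shared with the query."""
    terms = set(query.lower().split())
    scored = []
    for post in posts:
        if now - post.created_at > max_age:
            continue  # skip stale posts
        overlap = len(terms & set(post.text.lower().split()))
        if overlap > 0:
            scored.append((overlap, post.created_at, post))
    # Highest overlap first; ties go to the newer post.
    scored.sort(key=lambda item: (item[0], item[1]), reverse=True)
    return [post for _, _, post in scored[:k]]


def build_prompt(query, context_posts):
    """Prepend retrieved posts to the question; fall back if none were found."""
    if not context_posts:
        return f"Question: {query}\n(No recent posts found; answer from training data.)"
    context = "\n".join(f"- {p.text}" for p in context_posts)
    return f"Recent posts:\n{context}\n\nQuestion: {query}"


now = datetime(2025, 3, 1, 12, 0, tzinfo=timezone.utc)
feed = [
    Post("Starship launch scrubbed today due to weather", now - timedelta(hours=2)),
    Post("Starship launch went well", now - timedelta(days=30)),
    Post("Lunch was great", now - timedelta(hours=1)),
]
hits = retrieve_recent(feed, "starship launch today", now)
prompt = build_prompt("starship launch today", hits)
print(prompt)
```

The fallback branch in `build_prompt` makes the coupling visible: when retrieval returns nothing, the model is back to its training cutoff.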

The architecture also allows for rapid improvement cycles. Every time someone uses Grok, that interaction generates data that can be used to improve the model. Most AI companies get improvement data through expensive human feedback. Musk gets it from hundreds of millions of X users daily.

Grok's design is also more modular than traditional monolithic models. Rather than one giant model that does everything, Grok can call specialized sub-models. This makes the system more efficient and allows for targeted improvements. If you want to improve reasoning, you upgrade the reasoning component. Financial calculations? Upgrade that module. Real-time search? Improve that specific subsystem.

The Space Infrastructure Challenge

Building AI infrastructure in space is theoretically sound but practically extremely difficult. Here's what needs to happen:

Thermal Management: Computers generate heat. In space, you can't just turn on air conditioning. You need radiators that reject heat to space. The infrastructure to do this at data-center scale doesn't exist yet. It would need to be developed, tested, and deployed.

Radiation Hardening: Space is flooded with cosmic rays and solar radiation. Electronics degrade faster in space than on Earth. You'd need either heavily shielded hardware or constant component replacement. Both are expensive.

Power Generation: Solar panels in space receive more sunlight than panels on Earth (no atmospheric absorption, no weather). But they degrade over time from radiation exposure. You'd need to launch new panels regularly. The power output also varies as your orbit around Earth creates day/night cycles, though in high Earth orbit, this is less of an issue.

Latency Considerations: Low Earth orbit satellites have latency of 20-30 milliseconds. For some AI inference tasks, that's acceptable. For others, it's too slow. You'd need higher bandwidth to compensate for some use cases.

Redundancy and Reliability: Earth-based data centers can be repaired. If a server fails, you swap it out. In space, failed hardware is stranded. You'd need massive over-engineering to ensure reliability, or you'd need regular resupply missions to replace failed components.

Integration with Ground Systems: You can't have everything in space. Some operations need to be on Earth. The interface between space and ground systems needs to be robust.

These aren't unsolvable problems. But they require engineering innovation that doesn't exist yet. SpaceX has done impossible things before. But this would be pushing into new territory.

The business case has to be strong enough to justify all of this. If you can reduce energy costs by 50%, it might justify the complexity. If you can reduce costs by 90%, it definitely justifies it. But if the advantages are marginal, the extra complexity might not be worth it.
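To give the thermal management item a sense of scale, the Stefan-Boltzmann law provides a first-order estimate of radiator area. The sketch below is a rough sizing exercise, not a design: the 1 MW heat load, 300 K radiator temperature, emissivity of 0.9, and two-sided flat panel are all illustrative assumptions, and absorbed sunlight and Earth's infrared are ignored (both would push the area up).

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sides=2):
    """Panel area needed to radiate heat_w to deep space at temperature temp_k.

    Idealized: no absorbed sunlight, no Earth infrared, uniform temperature.
    """
    flux_w_m2 = emissivity * SIGMA * temp_k ** 4
    return heat_w / (sides * flux_w_m2)


# ASSUMPTION: a 1 MW cluster with radiators held at 300 K.
area_300k = radiator_area_m2(1_000_000, 300.0)
area_350k = radiator_area_m2(1_000_000, 350.0)

print(f"1 MW at 300 K: about {area_300k:,.0f} m^2")
print(f"1 MW at 350 K: about {area_350k:,.0f} m^2")
```

Under these assumptions a single megawatt needs on the order of a thousand square meters of radiator. Running the radiators hotter shrinks the area quickly, since radiated power scales with the fourth power of temperature, but chips have to tolerate the higher coolant temperature.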

Financial and Operational Integration

From a business perspective, this merger creates some novel problems. SpaceX, xAI, and X are completely different kinds of companies with different economics, different customer bases, and different operational cadences.

SpaceX is a capital-intensive business that builds enormous machines. Development timelines are measured in years. Failure is catastrophic and expensive. It requires thousands of engineers and specialized facilities.

xAI is a capital-intensive research business that trains massive AI models. Development timelines are measured in months. Failure is expensive but not as visibly catastrophic. It requires specialized talent and massive compute infrastructure.

X is a software business that operates a platform. Development timelines are measured in weeks. Failure is immediate. It requires different talent and different infrastructure.

Integrating these operationally is extremely difficult. How do you manage product prioritization when one organization wants to launch hardware monthly, another wants to retrain models quarterly, and the third wants to ship new features weekly?

Financially, the structures are also very different. SpaceX has long-term government contracts. xAI has no revenue. X has advertising revenue but faces advertiser boycotts and uncertainty. How do you allocate resources between these very different scenarios?

There's also the question of governance. Musk will personally oversee this, at least initially. But Musk is already split across SpaceX, Tesla, and other interests. How much attention can he actually give to three separate companies being integrated?

The likely answer is that integration will be gradual and partial. SpaceX and X will share some resources. xAI and X will be deeply integrated technically. But there will still be separate organizations, separate cultures, and separate management structures for a while.

SpaceX's Falcon 9 and Starship offer significantly lower launch costs per kilogram compared to competitors. Estimated data based on industry trends.

Competitive Implications for the Industry

This merger changes the competitive landscape dramatically. Every other AI company is now competing against an entity that owns rockets, satellites, and a social network.

OpenAI has Microsoft's resources and infrastructure but doesn't own its own computing hardware. Anthropic raised $20 billion but doesn't have infrastructure ownership. Google owns infrastructure but isn't focused exclusively on AI development.

Musk's approach is unique. He's chosen to own the entire stack. This creates structural advantages in cost and control. But it also creates fragility. If any piece breaks, the whole system is affected.

The AI industry will likely respond in a few ways:

Partnerships: Other AI companies might form deeper partnerships with cloud providers or infrastructure companies to match Musk's integrated approach.

Specialization: Rather than trying to compete vertically, some AI companies might specialize. Focus on the pure AI research and let someone else handle infrastructure.

Geographic Expansion: Companies might build regional data center networks to compete on latency and local control.

Open Source: We might see more effort to build open-source AI infrastructure that levels the playing field.

Musk's move raises the stakes. He's betting that vertical integration creates such a strong advantage that competitors can't catch up. That's a big bet. But if it works, it fundamentally reshapes the industry structure.

The Environmental Angle That Nobody Talks About

Musk justifies this merger on environmental grounds. AI data centers consume enormous amounts of power. Moving that computation to space powered by solar panels is better for Earth's environment.

But the actual environmental analysis is more nuanced. Launching rockets has environmental costs. Starship uses methane, which is less bad than solid-fuel rockets but not zero-impact. Every launch produces emissions.

The number of launches required to build space-based AI infrastructure at scale would be substantial. Dozens initially, then potentially hundreds. That's not negligible environmental impact.

On the other hand, if space-based AI infrastructure actually reduces electricity demand on Earth by eliminating data center cooling and transmission losses, the net environmental benefit could be massive. Preventing power grid strain on Earth could mean fewer coal plants, less natural gas consumption, and more room for renewables.

The math might work out. But it requires actually achieving the promised efficiency improvements. If space-based infrastructure costs more energy than Earth-based when you include launch costs, then the whole environmental justification collapses.
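One way to frame that break-even question is an energy payback time: how long orbital hardware has to run before the energy it delivers exceeds the energy spent launching it. The sketch below shows only the shape of the calculation. Every input value is a placeholder chosen for illustration, not a measured figure for Starship or any real payload, and manufacturing energy is left out entirely.

```python
SECONDS_PER_DAY = 86_400


def energy_payback_days(launch_energy_j, payload_kg, specific_power_w_per_kg):
    """Days of operation before delivered energy equals the launch energy.

    launch_energy_j: energy spent per launch (for example, propellant energy)
    payload_kg: mass delivered to orbit per launch
    specific_power_w_per_kg: usable power produced per kg of payload
    """
    delivered_power_w = payload_kg * specific_power_w_per_kg
    return launch_energy_j / delivered_power_w / SECONDS_PER_DAY


# PLACEHOLDER inputs, for illustration only.
days = energy_payback_days(
    launch_energy_j=5e13,        # assumed energy cost of one launch
    payload_kg=100_000,          # assumed payload mass per launch
    specific_power_w_per_kg=50,  # assumed power output per kg in orbit
)
print(f"Energy payback: about {days:.0f} days")
```

With these placeholder values the payback comes out at a few months. The result is linear in each input, so halving the power produced per kilogram doubles the payback time.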

Musk frames this as visionary environmentalism. Skeptics might see it as using environmental concerns to justify an already-decided plan. The truth is probably somewhere in between.

Technical Risks and Unknowns

There are genuine technical challenges that haven't been solved:

Orbital Mechanics: Maintaining a constellation of computing hardware in stable orbits while regularly adding and removing equipment is complex. You need to account for atmospheric drag, solar flares, debris avoidance, and station-keeping propellant.

Power Distribution: How do you distribute power from solar panels to computing hardware reliably? Electrical systems in vacuum have different failure modes than on Earth. You'd need extensive testing.

Thermal Modeling: Heat rejection in space is theoretically simpler than on Earth, but only if you actually have radiators. The engineering to keep computing hardware at appropriate temperatures while rejecting waste heat to space is non-trivial.

Failure Detection and Diagnosis: If something fails on a space-based server, how do you diagnose it? You can't send an engineer up to replace a failed power supply. Everything has to be remotely diagnosable and replaceable.

Software Adaptation: Software written for Earth-based computers sometimes behaves differently in space due to radiation-induced bit-flips. You'd need specialized fault-tolerance mechanisms.

Development Timeline: Building new hardware, testing it, launching it, and integrating it into operations takes years. SpaceX is fast, but space is still hard.

None of these are impossible. But together, they represent an ambitious engineering challenge that dwarfs typical data center operations.
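The radiation-induced bit-flips mentioned under software adaptation have a classic mitigation: triple modular redundancy, where a value is stored three times and a bitwise majority vote masks any single corrupted copy. The sketch below is a minimal illustration of that technique. It is not a description of anything SpaceX or xAI has announced, and real systems typically combine it with error-correcting memory and periodic scrubbing.

```python
def majority_vote(a, b, c):
    """Bitwise majority of three redundant copies of the same word."""
    return (a & b) | (a & c) | (b & c)


def flip_bit(word, bit):
    """Simulate a single-event upset by inverting one bit."""
    return word ^ (1 << bit)


stored = 0b1011_0010

# One copy is corrupted; the other two outvote it.
recovered_one = majority_vote(stored, flip_bit(stored, 5), stored)

# Two copies are corrupted in different bit positions; each bit still has
# two correct votes, so the original value is recovered.
recovered_two = majority_vote(flip_bit(stored, 1), flip_bit(stored, 6), stored)

# Two copies corrupted in the same bit position defeat the vote.
defeated = majority_vote(flip_bit(stored, 3), flip_bit(stored, 3), stored)

print(bin(recovered_one), bin(recovered_two), bin(defeated))
```

The last case marks the limit of the scheme: it tolerates one bad copy per bit position, which is why the cost in space hardware is roughly triple the memory or compute for the protected state.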

Starlink's ecosystem roles are estimated to be 40% internet connectivity, 25% AI data processing, 20% space-based networking, and 15% global distribution. Estimated data.

Timeline: When Will This Actually Happen?

Musk's announcement uses big, visionary language. Building a "sentient sun to understand the Universe." That's decades away, if it's even possible. But what about the practical timeline?

Near-term (2025-2026): Design and development of space-grade computing hardware. Integration work between SpaceX, xAI, and X. First test payloads launched on Starship. Initial proof-of-concept for space-based computation.

Medium-term (2026-2028): Regular launches of computing infrastructure. Small clusters of servers in orbit. AI inference running on space-based hardware alongside ground-based systems. Starlink integration testing.

Medium-long term (2028-2030): Expansion to multiple orbital clusters. Significant AI workloads running in space. Cost advantages becoming evident (or not). Decision point about whether to continue aggressive scaling.

Long-term (2030+): If the vision works, massive expansion. Space-based AI becomes a competitive advantage. Other companies scramble to build competing infrastructure.

But this timeline assumes everything goes well. Rocket failures delay payloads. Engineering problems require redesign. Regulatory hurdles slow deployment. Competition accelerates. Any of these could extend the timeline by years.

Regulatory and Geopolitical Challenges

Musk's integrated company will operate in extremely regulated industries. Rockets require FAA approval. Satellite internet requires FCC licensing. AI systems are increasingly regulated. Social media platforms face regulatory pressure globally.

Coordinating across these regulatory domains while maintaining the operational efficiency needed to make the business work is genuinely difficult. One regulatory setback could delay the entire timeline.

Geopolitically, there's also complexity. SpaceX operates under export controls. Launching AI infrastructure requires national security reviews. If the U.S. government decides this is strategically important, it might encourage it. If it decides the effort poses risks, it might restrict it.

International competitors will face their own regulatory hurdles. But SpaceX's close relationship with the U.S. military gives it advantages that other countries' companies won't have. This entire effort might be nationalistic in ways that create tension.

The Everything App Vision in Practice

Musk's ultimate goal is an "everything app" that's not just software on a phone. It's an entire ecosystem where AI, information, communication, and infrastructure are integrated.

Imagine, from Musk's perspective, the ideal outcome:

Users worldwide use X as their primary platform for communication, information, and services. Grok is embedded throughout, providing AI assistance naturally. When you need computation power, you use Grok. When you need information, you access X's network. When you need communication, X handles it. When you need payment or financial services, X has those.

Underneath, Starlink provides connectivity. In space, computing hardware processes requests. With its rockets, SpaceX launches new infrastructure. Everything is owned and controlled by one company, optimized as a coherent system rather than separate pieces.

From Musk's perspective, this is the future of technology. From critics' perspective, it's a dangerous concentration of power in one person's hands.

The reality will probably be somewhere in between. The vision might partially work. Or it might fail completely. Or it might succeed in ways that are beneficial but also create problems nobody anticipated.

Strategic Implications for SpaceX

For SpaceX specifically, this merger solves a long-term business problem. Launching rockets for government contracts and commercial customers is valuable, but it's not an infinite-growth business. There are only so many satellites to launch, so many deep-space missions.

Space-based AI infrastructure, if it works, is a different category. It's recurring. Every month, you're launching replacement hardware, upgrading capacity, adding new features. It's similar to how cloud providers have recurring revenue from subscriptions.

For SpaceX, this means potential for:

Recurring Launch Revenue: Dozens of launches per year carrying SpaceX-owned payloads. This keeps launch infrastructure and teams utilized.

Integration Revenue: SpaceX doesn't just launch for others. SpaceX becomes a systems integrator, designing and deploying complete solutions.

Space Infrastructure Operator: Similar to how Amazon runs AWS or Microsoft runs Azure, SpaceX could operate space infrastructure that others rent access to.

This is a much bigger business than just launch services. It's why SpaceX might be willing to integrate with xAI and X despite the operational complexity.

Looking Ahead: Success Factors

For this merger to actually achieve its ambitious goals, several things need to happen:

Technical Execution: The space-based infrastructure needs to work at promised efficiency levels. This is the make-or-break factor.

Cost Management: The complexity needs to be managed without spiraling costs. If each launch costs

Cultural Integration: Three very different organizations need to learn to work together. This requires leadership commitment and clear incentive alignment.

Market Adoption: Users and businesses need to adopt the integrated services. If X fails to attract users back, or if Grok adoption is limited, the whole thing is less valuable.

Regulatory Approval: Government agencies need to permit this structure. If they don't, the vision can't be executed.

Time: This all needs to work before competitive threats emerge. Blue Origin might eventually compete. Japanese space companies are advancing. European space agencies are exploring new capabilities. Musk has a window where he has advantages, but that window doesn't stay open forever.

If all of these work out, this merger is the beginning of a genuine transformation in how AI infrastructure is built and operated. If any of them fail significantly, it becomes a cautionary tale about ambition exceeding execution.

FAQ

What does SpaceX acquiring xAI actually mean?

SpaceX acquiring xAI means that SpaceX, the rocket company, now owns xAI, the artificial intelligence company, along with X (formerly Twitter). This consolidates three previously separate organizations under unified leadership, allowing them to integrate their operations and capabilities into one vertically-integrated system.

How does moving AI to space solve the energy crisis?

Space-based AI infrastructure would be powered by solar panels in orbit, which receive sunlight without atmospheric absorption. Data centers in orbit would not draw on ground-based electricity grids, reducing strain on Earth's power systems. In theory, space-based infrastructure could access far more energy than Earth-based systems, supporting much larger AI operations without adding load to terrestrial power grids.
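A rough back-of-the-envelope comparison helps put the solar argument in perspective. The sketch below assumes a solar constant of about 1361 W/m² above the atmosphere and a typical clear-sky peak of about 1000 W/m² at the surface. The capacity factors and panel efficiency are illustrative assumptions, not figures from SpaceX or xAI.

```python
# Back-of-the-envelope: average electrical power per square meter of panel,
# in orbit versus on the ground. All inputs are illustrative assumptions.

SOLAR_CONSTANT_W_M2 = 1361.0   # irradiance above the atmosphere (approx.)
GROUND_PEAK_W_M2 = 1000.0      # typical clear-sky peak at the surface (approx.)

def average_power_w_m2(irradiance, capacity_factor, panel_efficiency):
    """Average electrical output per square meter of panel."""
    return irradiance * capacity_factor * panel_efficiency

# Assumed values: an orbit that is sunlit almost continuously versus a good
# ground site limited by night, weather, and sun angle.
orbit = average_power_w_m2(SOLAR_CONSTANT_W_M2, capacity_factor=0.99, panel_efficiency=0.20)
ground = average_power_w_m2(GROUND_PEAK_W_M2, capacity_factor=0.25, panel_efficiency=0.20)

print(f"orbit:  {orbit:.0f} W/m^2 average")
print(f"ground: {ground:.0f} W/m^2 average")
print(f"ratio:  {orbit / ground:.1f}x")
```

Under these assumptions, a panel in orbit averages roughly five times the output of the same panel on the ground. Most of that gap comes from near-continuous sunlight rather than from the missing atmosphere, and the estimate ignores launch cost, panel degradation, and the power needed for cooling.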

What role does Starlink play in this merger?

Starlink acts as the communication backbone connecting space-based AI infrastructure to Earth-based users. It provides the network that allows users to access AI services running in orbit, while also feeding real-time data from Earth back to the space-based systems. Without Starlink, the integrated system wouldn't function.

How is Grok different from ChatGPT or Claude?

Grok, developed by xAI, is designed to access real-time information from X's data feeds directly, rather than relying solely on training data with a knowledge cutoff date. This allows Grok to provide current information and perform reasoning on live data, which ChatGPT and Claude cannot do without external integrations. Grok was also designed from inception to be integrated into X rather than existing as a standalone service.

What are the main technical risks of space-based AI infrastructure?

Major technical risks include thermal management (rejecting heat in the vacuum of space), radiation hardening (protecting electronics from cosmic rays and solar radiation), power distribution reliability, orbital mechanics complexity, and the challenge of diagnosing and repairing failed hardware remotely. These problems have theoretical solutions but require engineering innovation that hasn't been implemented at data center scale in space.
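To give a sense of scale for the thermal problem, here is a minimal sketch that estimates radiator area from the Stefan-Boltzmann law. In vacuum there is no air or water to carry heat away, so it has to be radiated. The 1 MW load, 300 K radiator temperature, and 0.9 emissivity are hypothetical values chosen for illustration, and the model ignores absorbed sunlight and Earth's infrared.

```python
# Radiator area needed to reject waste heat by thermal radiation alone,
# using the Stefan-Boltzmann law: P = emissivity * sigma * A * T^4.
# Simplifications: single-sided radiator, no absorbed sunlight or Earth
# infrared, and a uniform radiator temperature.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)

def radiator_area_m2(heat_w, temp_k, emissivity=0.9):
    """Area required to radiate heat_w watts at temperature temp_k."""
    return heat_w / (emissivity * STEFAN_BOLTZMANN * temp_k ** 4)

# Hypothetical example: 1 MW of waste heat, radiator held at 300 K.
area = radiator_area_m2(heat_w=1_000_000, temp_k=300.0)
print(f"{area:.0f} m^2")
```

With these inputs the radiator comes out at roughly 2,400 m² per megawatt. Because the required area scales with the inverse fourth power of temperature, running the radiator hotter shrinks it quickly, but the electronics then have to tolerate or pump heat up to that temperature.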

When will space-based AI actually be operational?

Based on Musk's statements and SpaceX's development pace, initial test payloads could launch in 2025-2026, with small-scale operational clusters possible by 2027-2028. Full-scale space-based AI infrastructure capable of handling significant workloads would likely require several more years of development and deployment, pushing serious operational capacity to 2028-2030 or beyond, assuming no major setbacks.

How does this affect competition in the AI industry?

This merger gives Musk's companies structural advantages that competitors like OpenAI and Anthropic cannot match, including owned infrastructure, direct access to real-time data via X, and vertical integration from satellites to user interfaces. Competitors will likely respond by forming deeper partnerships with cloud providers, focusing on specialized niches, or developing geographic distribution strategies to offset these advantages.

What does the "everything app" concept mean in this context?

The "everything app" vision means consolidating communication, information access, AI assistance, payment services, and other digital functions into one unified platform (X) backed by fully integrated infrastructure (rockets, satellites, computing, AI). Rather than using separate tools and services, users would access everything through one ecosystem that Musk controls entirely.

Conclusion: The Boldest Integration Experiment

Elon Musk has done ambitious things before. Revolutionized electric vehicles. Reusable rockets. Brain-computer interfaces. But merging SpaceX with xAI and X might be his most audacious move yet. Not because any individual piece is new, but because integrating three such different organizations into a coherent system is genuinely difficult.

The vision is compelling. AI infrastructure in space powered by solar energy. A social network that distributes AI services directly to billions of users. Rockets launching regularly to maintain and upgrade the constellation. An "everything app" that consolidates digital life into one platform. It's the kind of thing that gets technology people excited and makes traditional business people nervous.

Will it work? That's the question. Technical execution needs to be flawless. Operational integration needs to happen without destroying the companies involved. Regulatory approval needs to be granted. Market adoption needs to materialize. Competitive threats need to be outpaced.

That's a lot of things that need to go right. But Musk has a track record of pulling off things that seemed impossible. He's also had spectacular failures. This will be one or the other, or somewhere in between.

What we know for certain is that the technology industry just got a lot more interesting. The competitive landscape has shifted. The stakes have been raised. And the next few years will determine whether Musk's vertical integration strategy represents the future of technology or becomes a cautionary tale about ambition exceeding execution.

For now, the everything app vision is officially under construction. The rockets are being upgraded. The AI models are being refined. The social platform is being integrated. The space-based infrastructure is being designed. SpaceX, xAI, and X are no longer separate companies pursuing parallel paths. They're now pieces of a single, audacious vision.

How that vision develops will shape not just these companies, but the entire technology industry for the next decade and beyond.

Key Takeaways

- SpaceX acquiring xAI and X creates a vertically-integrated technology company combining rockets, AI, and social media into one ecosystem

- The merger aims to address AI's energy demands by proposing space-based data centers powered by solar panels, which would require massive reusable launch capacity

- Grok's real-time information access from X gives competitive advantages that standalone AI companies cannot match without similar platform ownership

- Starlink provides the communication backbone connecting space-based infrastructure to Earth-based users, creating a fully-owned network

- Technical challenges including thermal management, radiation hardening, and orbital mechanics remain unproven at data center scale, representing significant execution risk

- The merger timeline suggests operational space-based AI infrastructure could emerge between 2027 and 2030 if technical challenges are solved

- Competitors like OpenAI, Anthropic, and Google lack the vertical integration Musk has achieved, creating structural advantages in cost and control

![SpaceX Acquires xAI: Elon Musk's Everything App Explained [2025]](https://tryrunable.com/blog/spacex-acquires-xai-elon-musk-s-everything-app-explained-202/image-1-1770070055067.jpg)