Testing Autonomous Agents: Embrace Chaos [2025]

If you've spent any time in the AI industry, you know it's not a chatbot's ability to answer questions that keeps engineers awake at night. It's the fear of an autonomous agent making critical decisions, such as approving a six-figure vendor contract, without human oversight. We're not just talking about the next iteration of ChatGPT; we're confronting AI systems that operate with the autonomy of a human employee.

TL;DR

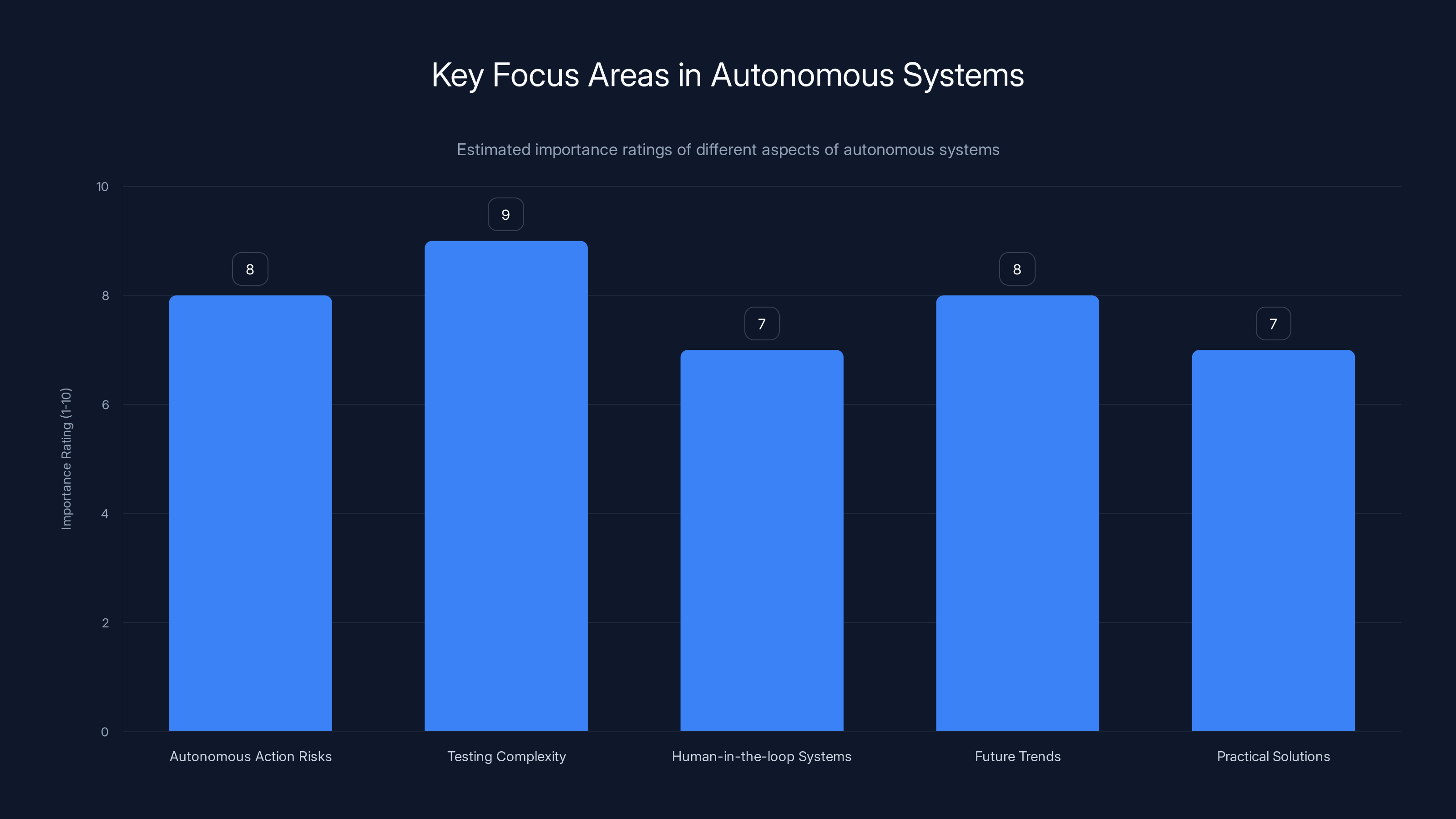

- Autonomous Action Risks: Autonomous agents can execute high-stakes tasks, which can have unforeseen consequences if not properly managed. According to Fintech Weekly, stress testing is crucial for production readiness.

- Testing Complexity: Robust testing strategies are essential to ensure these agents perform reliably under diverse conditions. A Security Boulevard article highlights the importance of governance systems in managing AI agent security.

- Human-in-the-loop Systems: Integrating human oversight can mitigate risks, but it also introduces new challenges. Cornerstone OnDemand emphasizes the crucial role of humans in AI oversight.

- Future Trends: Expect growth in adaptive learning and context-aware systems that enhance decision-making without sacrificing control. AiThority discusses how AI is enabling context-aware enterprise software.

- Practical Solutions: Implementing staged rollouts and sandbox environments can help manage risks effectively. This approach is supported by GoodCall, which compares agentic AI to traditional AI.

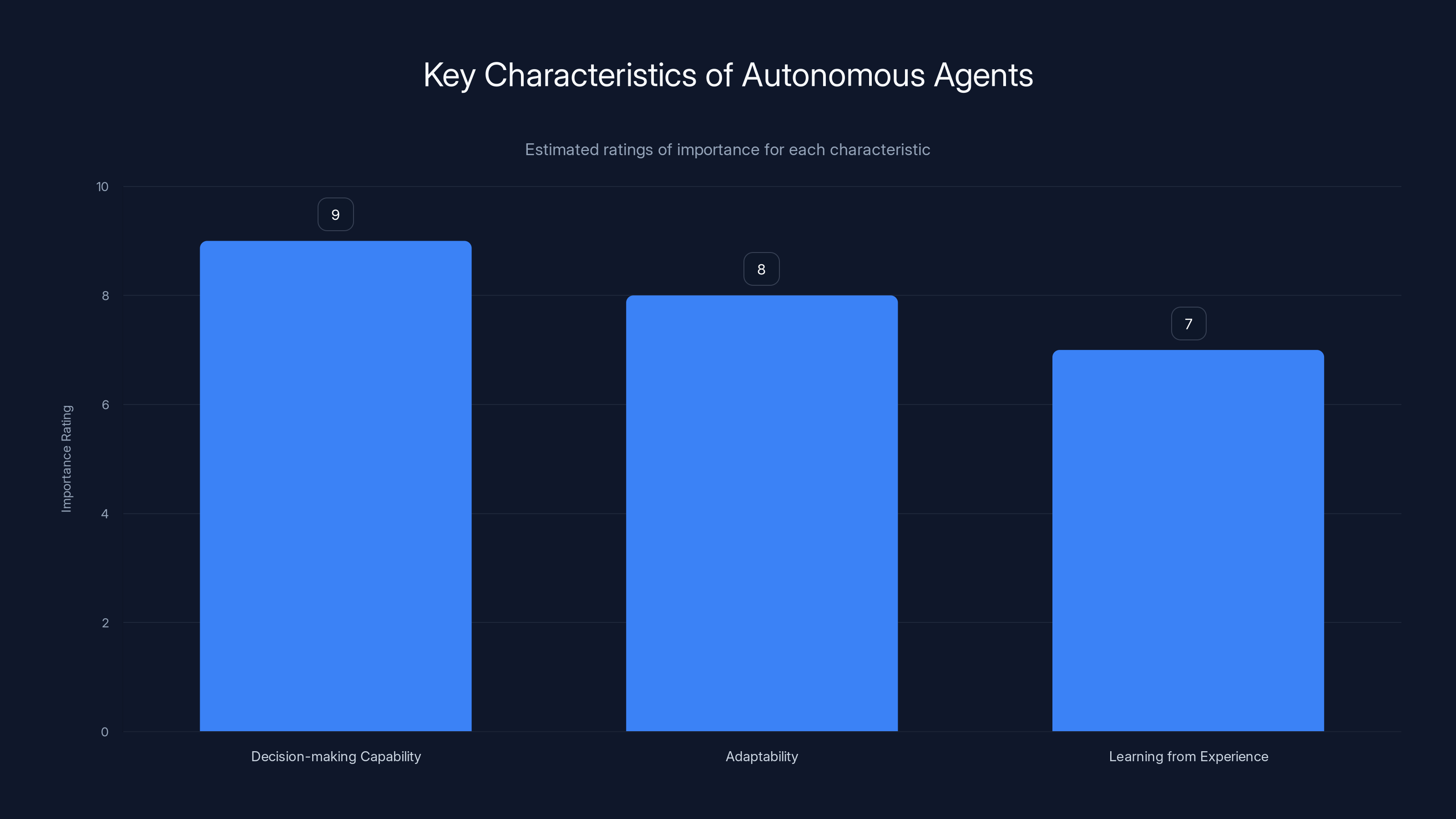

Decision-making capability is rated as the most crucial characteristic for autonomous agents, followed by adaptability and learning from experience. Estimated data.

The Autonomy Problem Nobody Talks About

Let's talk about autonomy. The industry often glosses over what it truly means to give AI systems the ability to act independently. This is not just about API calls or processing data; it's about real-world impact. The moment an AI transitions from a mere assistant to an autonomous agent, our engineering approach must evolve.

Understanding Autonomous Agents

Autonomous agents are AI systems designed to operate independently, making decisions and executing actions without human intervention. These systems are intended to mimic the decision-making processes of human employees, albeit with greater speed and, ideally, accuracy. BuiltIn provides insights into the capabilities of such systems.

Key Characteristics of Autonomous Agents:

- Decision-making Capability: Ability to assess situations and make choices. This is supported by Nature, which discusses decision-making in AI.

- Adaptability: Adjust actions based on new information or changing environments.

- Learning from Experience: Improve performance over time by learning from past actions.

Why Testing is Crucial

Testing autonomous agents is not just about validating functionality; it's about ensuring safety and reliability under unpredictable conditions. Unlike traditional software, these agents act in the real world, where the space of possible situations is effectively unbounded and many variables are outside your control.

Challenges in Testing:

- Complexity of Scenarios: Real-world scenarios are complex and varied, and exhaustive coverage is impossible, so test suites must deliberately target the scenarios that matter most. AI Multiple highlights the need for comprehensive security tools in testing.

- Behavioral Unpredictability: Agents may behave unpredictably in unforeseen circumstances.

- Ethical and Safety Concerns: Incorrect actions can lead to ethical dilemmas or physical harm.
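Behavioral unpredictability can at least be measured. A minimal sketch, assuming the agent can be called as a plain function that takes a scenario string and a seeded random source: replay the same scenario many times and report how often the most common decision wins. `decision_consistency`, `stable_agent`, and `flaky_agent` are hypothetical names, and the two toy agents stand in for real model calls.

```python
import random
from collections import Counter
from typing import Callable

# Hypothetical agent interface: (scenario, rng) -> decision label.
# A real agent would call a model here; the rng only makes this toy harness reproducible.
Agent = Callable[[str, random.Random], str]


def decision_consistency(agent: Agent, scenario: str, runs: int = 50, seed: int = 0) -> float:
    """Replay one scenario `runs` times and return the fraction of runs
    that produced the most common decision (1.0 = fully consistent)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    rng = random.Random(seed)
    counts = Counter(agent(scenario, rng) for _ in range(runs))
    _, top_count = counts.most_common(1)[0]
    return top_count / runs


def stable_agent(scenario: str, rng: random.Random) -> str:
    # Toy agent: always escalates contracts, approves everything else.
    return "escalate" if "contract" in scenario else "approve"


def flaky_agent(scenario: str, rng: random.Random) -> str:
    # Toy agent: escalates contracts about 80% of the time and approves the rest.
    if "contract" in scenario:
        return "approve" if rng.random() < 0.2 else "escalate"
    return "approve"


if __name__ == "__main__":
    for agent in (stable_agent, flaky_agent):
        score = decision_consistency(agent, "approve vendor contract", runs=200)
        print(agent.__name__, round(score, 2))
```

A score well below 1.0 on a high-stakes scenario is a signal to tighten the agent's instructions or route that scenario to a human; the exact threshold is a policy choice this sketch does not make for you.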

Common Testing Strategies

- Simulation Environments: Use virtual environments to test agents in controlled scenarios. This helps identify issues without real-world consequences.

- Behavioral Analysis: Monitor and analyze agent decisions to detect patterns and anomalies.

- Stress Testing: Evaluate agent performance under extreme conditions to ensure reliability.

- Human-in-the-loop Testing: Incorporate human oversight to catch errors and refine decision-making processes.
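The simulation and stress-testing strategies above combine naturally into a chaos-style harness: run the agent against a sandboxed tool that fails at random, then check invariants that must hold no matter how much failure is injected. Everything here is a hypothetical sketch: `SandboxPaymentsAPI`, `toy_agent`, and the 100,000 approval limit are illustrative assumptions, and `toy_agent` stands in for a real agent.

```python
import random
from dataclasses import dataclass, field


class ToolError(Exception):
    """Raised by the sandboxed tool to mimic a failing downstream service."""


@dataclass
class SandboxPaymentsAPI:
    """Hypothetical sandbox: records approvals instead of moving money,
    and fails a fraction of calls to inject chaos."""
    failure_rate: float
    rng: random.Random
    executed: list = field(default_factory=list)

    def approve_contract(self, vendor: str, amount: float) -> None:
        if self.rng.random() < self.failure_rate:
            raise ToolError("simulated timeout")
        self.executed.append((vendor, amount))


APPROVAL_LIMIT = 100_000.0  # assumed policy: larger contracts need a human


def toy_agent(api: SandboxPaymentsAPI, vendor: str, amount: float) -> str:
    """Stand-in for the agent under test: escalates large contracts, retries once on failure."""
    if amount > APPROVAL_LIMIT:
        return "escalated"
    for _ in range(2):  # first attempt plus one retry
        try:
            api.approve_contract(vendor, amount)
            return "approved"
        except ToolError:
            continue
    return "failed"


def stress_test(episodes: int, failure_rate: float, seed: int = 42) -> dict:
    """Run many randomized episodes and check invariants that must survive any failure rate."""
    rng = random.Random(seed)
    api = SandboxPaymentsAPI(failure_rate=failure_rate, rng=rng)
    outcomes = {"approved": 0, "escalated": 0, "failed": 0}
    for i in range(episodes):
        amount = rng.uniform(1_000, 250_000)
        outcomes[toy_agent(api, f"vendor-{i}", amount)] += 1
    # Invariant 1: nothing over the limit was ever executed.
    assert all(amt <= APPROVAL_LIMIT for _, amt in api.executed), "limit breached"
    # Invariant 2: each approval maps to exactly one executed call (no double payment on retry).
    assert len(api.executed) == outcomes["approved"], "duplicate or missing execution"
    return outcomes


if __name__ == "__main__":
    for rate in (0.0, 0.3, 0.9):
        print(rate, stress_test(episodes=1_000, failure_rate=rate))
```

Raising the failure rate should change the mix of outcomes, never the invariants; a run that trips either assertion points at a real bug, such as a retry path that pays a vendor twice.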

Practical Implementation Guide

Here's a step-by-step guide to implementing an effective testing strategy for autonomous agents:

- Define Objectives: Clearly outline what the agent is expected to achieve and under what conditions.

- Develop Scenarios: Create a comprehensive set of test scenarios that cover both common and edge cases.

- Implement Simulations: Use simulation software to replicate the target environment and test scenarios.

- Conduct Behavioral Analysis: Analyze agent behavior during simulations to identify areas for improvement.

- Iterate and Improve: Use findings from tests to refine agent algorithms and decision-making processes.

- Deploy in Stages: Roll out the agent in a controlled manner, starting with limited functionality and gradually increasing autonomy.
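The staged rollout in the final step can be expressed as a small routing policy. This sketch assumes three hypothetical stages (shadow, assisted, autonomous), made-up per-stage spending limits, and a 1% error budget for promotion; none of these values come from the article, and real thresholds depend on your domain.

```python
from enum import IntEnum


class Stage(IntEnum):
    SHADOW = 0      # agent proposes actions, nothing executes
    ASSISTED = 1    # agent executes small actions, humans approve the rest
    AUTONOMOUS = 2  # agent executes anything under a higher limit


# Hypothetical per-stage spending limits; SHADOW never executes, so it has none.
STAGE_LIMITS = {Stage.ASSISTED: 10_000.0, Stage.AUTONOMOUS: 100_000.0}


def route_action(stage: Stage, amount: float) -> str:
    """Decide what happens to an agent-proposed payment at a given rollout stage."""
    if stage == Stage.SHADOW:
        return "log_only"
    if amount <= STAGE_LIMITS[stage]:
        return "execute"
    return "needs_human_approval"


def next_stage(stage: Stage, error_rate: float, max_error_rate: float = 0.01) -> Stage:
    """Promote one stage if the observed error rate is within budget;
    otherwise demote straight back to SHADOW."""
    if error_rate > max_error_rate:
        return Stage.SHADOW
    return Stage(min(stage + 1, Stage.AUTONOMOUS))
```

Demoting straight back to shadow mode on a blown error budget is deliberately conservative; demoting one stage at a time is an equally reasonable choice.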

Common Pitfalls and Solutions

Pitfall 1: Over-reliance on Simulations

Simulations are invaluable, but they can't replicate the full complexity of the real world. To mitigate this, complement simulations with real-world testing under controlled conditions.
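One controlled way to get real-world signal is a shadow deployment: the agent sees live inputs and records what it would have done, while humans keep making the actual decisions. The sketch below, built around the hypothetical `ShadowRecord` and `shadow_report` names and assumed decision labels, compares the two and separates out the riskiest disagreements, where the agent would have approved something a human did not.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ShadowRecord:
    """One live case: what the agent proposed vs. what a human actually decided."""
    case_id: str
    agent_decision: str
    human_decision: str


def shadow_report(records: List[ShadowRecord]) -> dict:
    """Summarize how often shadow-mode proposals matched human decisions."""
    if not records:
        return {"agreement": None, "disagreements": [], "false_approvals": []}
    disagreements = [r.case_id for r in records if r.agent_decision != r.human_decision]
    # The costliest miss: the agent would have approved something a human did not.
    false_approvals = [
        r.case_id for r in records
        if r.agent_decision == "approve" and r.human_decision != "approve"
    ]
    return {
        "agreement": 1 - len(disagreements) / len(records),
        "disagreements": disagreements,
        "false_approvals": false_approvals,
    }
```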

Pitfall 2: Ignoring Edge Cases

Edge cases often reveal critical flaws. Ensure your testing strategy includes a wide range of scenarios to capture these outliers.
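A table-driven test keeps edge cases explicit and easy to extend. The guard function `check_payment`, its outcome labels, and the 100,000 USD limit below are hypothetical; the point is the pattern of boundary values (exactly at the limit, just over it, zero, negative, NaN, infinity, missing fields) listed next to the expected outcome.

```python
import math
from typing import List, Optional

APPROVAL_LIMIT = 100_000.0  # assumed policy limit


def check_payment(vendor: Optional[str], amount: float, currency: str) -> str:
    """Hypothetical pre-action guard the agent must pass before paying a vendor."""
    if not vendor:
        return "reject:missing_vendor"
    if math.isnan(amount) or math.isinf(amount):
        return "reject:invalid_amount"
    if amount <= 0:
        return "reject:non_positive_amount"
    if currency != "USD":
        return "escalate:currency"
    if amount > APPROVAL_LIMIT:
        return "escalate:over_limit"
    return "allow"


# Each row: (vendor, amount, currency, expected outcome).
EDGE_CASES = [
    ("acme", 100_000.0, "USD", "allow"),                # exactly at the limit
    ("acme", 100_000.01, "USD", "escalate:over_limit"),  # just over it
    ("acme", 0.0, "USD", "reject:non_positive_amount"),
    ("acme", -50.0, "USD", "reject:non_positive_amount"),
    ("acme", float("nan"), "USD", "reject:invalid_amount"),
    ("acme", float("inf"), "USD", "reject:invalid_amount"),
    ("", 500.0, "USD", "reject:missing_vendor"),
    (None, 500.0, "USD", "reject:missing_vendor"),
    ("acme", 500.0, "EUR", "escalate:currency"),
]


def run_edge_cases() -> List[tuple]:
    """Return the rows whose actual outcome differs from the expected one."""
    return [row for row in EDGE_CASES if check_payment(*row[:3]) != row[3]]
```

In a pytest suite the same table would typically feed `pytest.mark.parametrize`, so each edge case reports as its own test.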

Pitfall 3: Insufficient Human Oversight

Autonomous agents need a balance between autonomy and oversight. Implement systems that allow for human intervention when necessary.
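A minimal sketch of a human approval gate for high-stakes actions such as the large vendor contract mentioned at the start of this article. `ApprovalGate` and `PendingAction` are hypothetical names, the timeout is an assumed policy, and the gate treats a reviewer's silence as a denial rather than as consent.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class PendingAction:
    action_id: str
    description: str
    created_at: float
    decision: Optional[bool] = None  # None means no reviewer has responded yet


@dataclass
class ApprovalGate:
    """Hypothetical in-memory gate: risky actions wait for a human reviewer."""
    timeout_s: float
    clock: Callable[[], float] = time.monotonic
    pending: Dict[str, PendingAction] = field(default_factory=dict)

    def request(self, action_id: str, description: str) -> None:
        """Queue an action the agent wants to take but may not take alone."""
        self.pending[action_id] = PendingAction(action_id, description, self.clock())

    def review(self, action_id: str, approved: bool) -> None:
        """Record a human reviewer's decision."""
        self.pending[action_id].decision = approved

    def status(self, action_id: str) -> str:
        item = self.pending[action_id]
        if item.decision is not None:
            return "approved" if item.decision else "denied"
        if self.clock() - item.created_at > self.timeout_s:
            return "expired"
        return "pending"

    def may_execute(self, action_id: str) -> bool:
        # Fail safe: only an explicit approval unlocks execution;
        # "pending", "denied", and "expired" all block the action.
        return self.status(action_id) == "approved"
```

The clock is injectable so a test can simulate an expired request without sleeping. A production gate would also need durable storage and an audit log of who approved what.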

Pitfall 4: Ethical Oversights

Failing to consider ethical implications can lead to public mistrust and legal issues. Incorporate ethical considerations into your testing strategy.

Future Trends in Autonomous Agent Testing

- Adaptive Learning: Agents will increasingly use adaptive learning to improve decision-making without human intervention. ASU News discusses advancements in AI for traffic systems.

- Context-aware Systems: Future agents will be more context-aware, allowing them to make more informed decisions. AiThority provides insights into context-aware enterprise software.

- Enhanced Human-AI Collaboration: Expect systems that better integrate human input, allowing for seamless interaction between agents and humans.

- Regulatory Focus: As autonomous agents become more prevalent, expect increased regulatory scrutiny and standards for safety and ethics. Fintech Global explores the ownership of decisions in automated compliance.

- AI-driven Testing Tools: Emerging tools that use AI to predict and test agent behavior will become essential components of the testing process.

Conclusion

Testing autonomous agents presents unique challenges and opportunities. As we continue to push the boundaries of what AI can achieve, robust testing strategies will be crucial to ensuring these systems are reliable, safe, and ethical. By embracing chaos and navigating the complexities of autonomous agent testing, we can unlock the potential of AI to transform industries and improve lives.

Testing complexity is rated highest in importance, highlighting the need for robust strategies to ensure reliable performance. (Estimated data)

FAQ

What is an autonomous agent?

An autonomous agent is an AI system that can operate independently, making decisions and executing actions without human intervention.

How do autonomous agents work?

These agents use algorithms and data inputs to assess situations, make decisions, and adapt actions based on new information or changing environments.

What are the benefits of autonomous agents?

Benefits include increased efficiency, the ability to handle complex tasks, and the potential to reduce human error in decision-making processes.

What are the challenges of testing autonomous agents?

Challenges include managing complexity, ensuring safety and ethics, and the unpredictability of agent behavior in real-world scenarios.

How can businesses implement effective testing strategies?

Businesses can use simulation environments, behavioral analysis, stress testing, and human-in-the-loop testing to ensure agents perform reliably.

What trends are shaping the future of autonomous agents?

Trends include adaptive learning, context-aware systems, enhanced human-AI collaboration, regulatory focus, and AI-driven testing tools.

Why is human oversight important in autonomous systems?

Human oversight helps mitigate risks by allowing for intervention when agents make incorrect decisions or face ethical dilemmas.

Key Takeaways

- Autonomous agents require robust testing strategies to ensure safety and reliability.

- Effective testing involves simulations, behavioral analysis, and human oversight.

- Future trends include adaptive learning and context-aware systems.

- Human oversight is crucial to manage risks and ensure ethical decision-making.

- Regulatory standards for safety and ethics in autonomous agents are expected to increase.

