The Smartphone Reign Is Ending. Here's What Comes Next

Your smartphone has been winning for 18 years. It conquered the world, displaced cameras, killed GPS devices, murdered portable music players, and buried the calculator. It's been the computing center of human life.

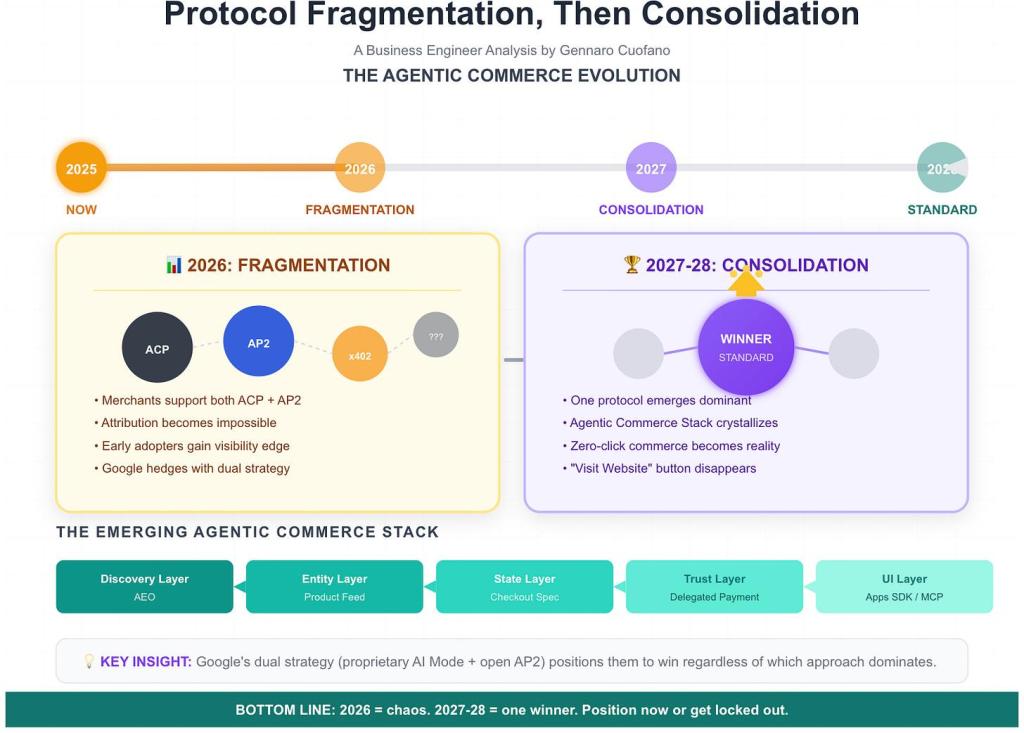

But something fundamental is shifting right now, in 2025, and it's about to accelerate dramatically. The device you're holding might be obsolete by 2026.

I know that sounds hyperbolic. But the timing is too perfect to ignore. OpenAI is working with Jony Ive, the legendary designer who led the design of the iPhone, to build something completely different. It's not a better phone. It's a wearable AI device that fundamentally reimagines how you interact with technology.

The company has been quietly building audio capabilities into ChatGPT, testing voice interactions that feel less like talking to a machine and more like having a conversation with someone who actually understands you. Real-time voice conversations. Natural interruptions. The kind of interface that makes typing or tapping a screen feel impossibly slow.

This isn't just a product roadmap. This is the end of an era.

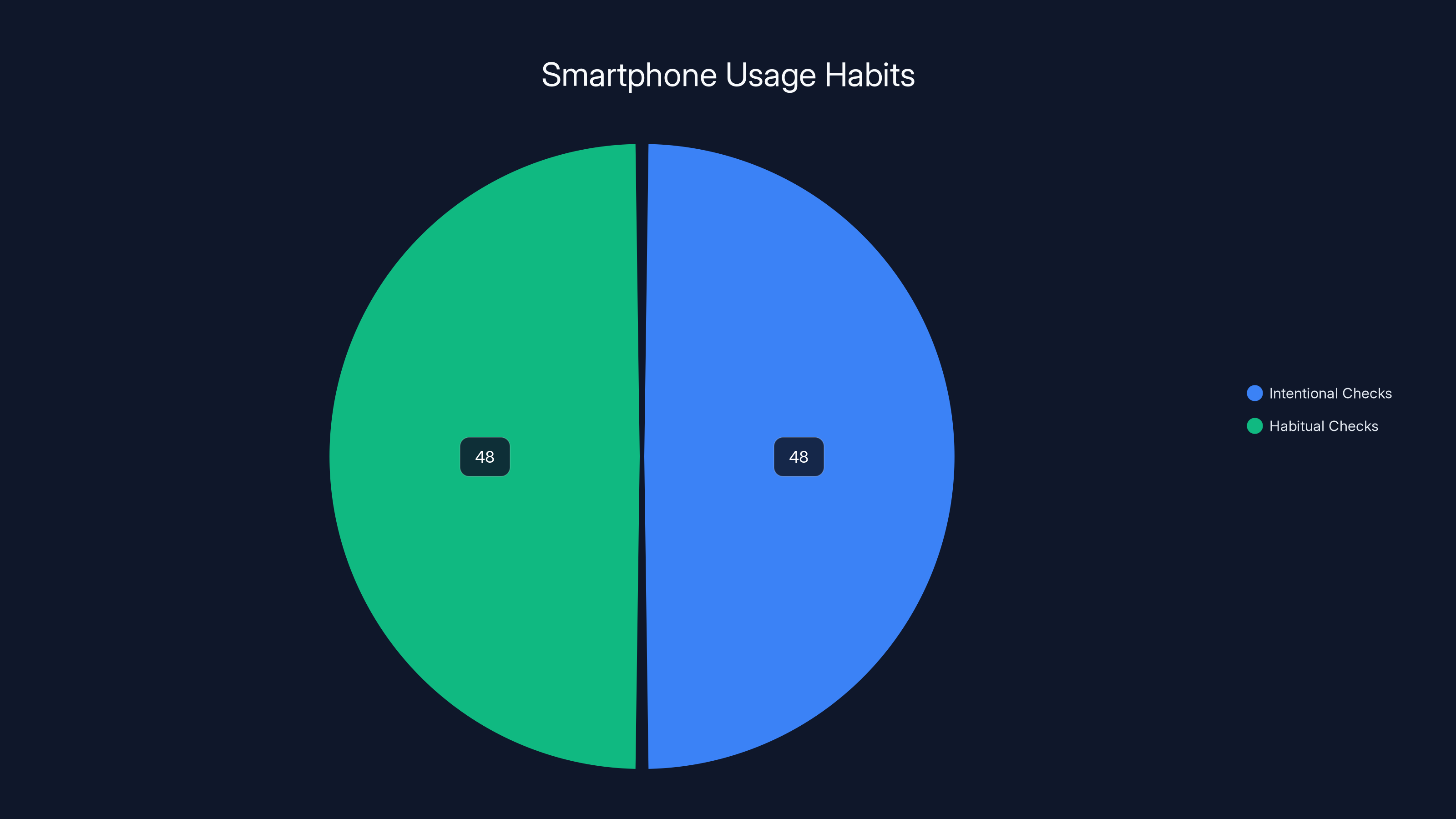

The smartphone was revolutionary because it was portable and personal. But it came with massive friction. You have to take it out. Unlock it. Find the app. Wait for it to load. Type or tap through menus. Most of us do this 150 times a day without thinking about it. We've normalized something that's actually insanely inefficient.

AI wearables solve that. Always there. Always listening. No unlock, no app, no latency. Just speak. And the AI understands context, learns your preferences, and anticipates what you need. This is the future that's actually arriving, and 2026 could be the inflection point where it stops being speculative and starts being mainstream.

Let's break down why this is happening now, what's technically required to make it work, what the OpenAI and Jony Ive collaboration means, and what your computing life looks like when the smartphone finally becomes a relic.

TL;DR

- OpenAI and Jony Ive partnership: Building a wearable AI device designed to replace smartphone interactions entirely

- Voice-first computing: Real-time audio conversations with AI eliminating the friction of screens, apps, and typing

- 2026 timeline: Industry experts predict this is when AI wearables reach critical adoption mass among early adopters

- Form factor flexibility: Devices won't look like phones; they'll be earpieces, rings, glasses, or ambient devices

- The smartphone won't disappear: It'll become a secondary device, much as the PC did after smartphones arrived

- Bottom line: We're transitioning from a screen-based computing era to an AI-ambient one, driven by natural language understanding that finally works

Estimated data suggests that a basic model priced between

Why the Smartphone's Reign Is Actually Ending

The smartphone dominated because of three things: portability, touchscreen interface, and being general-purpose. It could do anything. It fit in your pocket. And you could see what was happening.

But here's the catch that nobody talks about. The smartphone is friction-heavy. Even the best ones.

You're trying to check the weather. You take your phone out. Unlock it (face, fingerprint, code). Open the weather app or ask Siri. Wait. Look at the screen. Process the information. Put it back. Seven steps for something that should take one second.

Now imagine someone who understands context. Who knows where you are, what your plans are, what you care about, and what you might need right now. Who can deliver the answer before you even ask. That's the shift.

AI wearables eliminate that friction entirely. No screen to tap. No app to open. Just speak naturally. The AI handles context, parsing, and delivering results. This works because language models have become genuinely good at understanding what you actually mean, not just what you literally said.

Three years ago, voice assistants were still comically bad. Siri would mishear you constantly. Alexa would misinterpret simple requests. They worked for obvious commands ("set a timer", "play music") but broke immediately on anything conversational or contextual.

That's no longer true. Modern language models can handle nuance, context switching, follow-ups, and interruptions. They understand implicit requests. If you say "that's too expensive", the AI knows you're referring to something from three sentences ago, not asking for a philosophical discussion about the concept of expense.

This shift in AI capability is the prerequisite that makes wearables viable. Without it, you'd be wearing a frustrating device that constantly misunderstands you. With it, you have something legitimately better than a smartphone for most interactions.

The timeline is crucial too. We're not in 2019 anymore, where voice assistants were the punchline of every tech podcast. We're in 2025, where the technology actually works. The transition can happen quickly once the foundation is solid. It happened with smartphones (2007 iPhone to 2012 Android dominance was five years). It happened with streaming video. When the tech clicks and the UX is frictionless, adoption accelerates rapidly.

2026 could be that inflection point. Not because AI suddenly works (it already does), but because enough people experience a genuinely better way of interacting with technology and realize their phone is actually holding them back.

AI wearables focus on key functionalities like communication and real-time information, optimizing for specific use cases. Estimated data.

The OpenAI and Jony Ive Partnership: What It Actually Means

This collaboration is significant for reasons that go deeper than "two smart people are making a device."

Sam Altman doesn't need to launch hardware. OpenAI is generating billions in revenue. The company could remain purely a software and API play forever, selling its models to everyone, and be extraordinarily successful.

But Altman chose to build a device. That's a deliberate strategic bet that the form factor matters. That how people interact with AI changes what AI can do.

Jony Ive designed the devices that shaped an entire generation's relationship with technology. He understood that design isn't decoration. It's about removing everything unnecessary until the essential function becomes obvious. The iPhone didn't succeed because it was flashy. It succeeded because the interaction model was so clear that anyone could understand it immediately.

Pairing these two together signals something specific: OpenAI is thinking about this as a design problem, not just an engineering problem.

The partnership also indicates that this isn't some experimental side project. This is OpenAI's bet on what computing looks like after the smartphone era ends. They're not building a second device. They're building the device that replaces the smartphone.

What makes this credible is Ive's track record. He worked on the iPhone when nobody believed in touchscreen phones. He designed the iPad when the entire industry said tablets were just big phones. He shaped the vision for a company-wide design philosophy that influenced everything from software to retail spaces. If Ive is involved, it means someone genuinely world-class is solving the hard problems: What does this device physically look like? How do you interact with it? How do you know it's working? How do you control it when you don't want it listening?

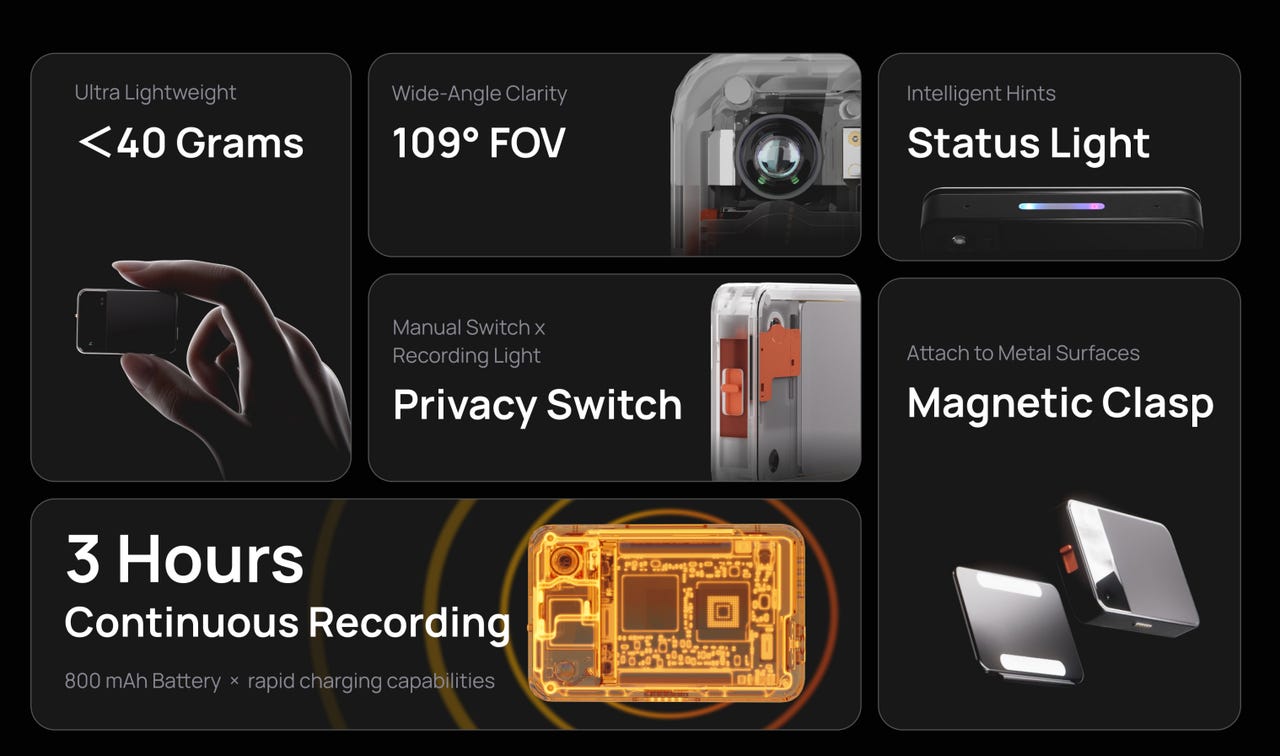

These design problems are harder than the AI problems, honestly. Getting GPT-4 to work in a device is straightforward. Making a device that people actually want to wear, that doesn't feel weird, that does what they expect, that respects their privacy, that lasts all day without charging—that requires design genius.

The timeline also suggests seriousness. Altman has mentioned that the team is working on "advanced audio capabilities," which is code for real-time, natural voice conversations. That's harder than it sounds. Streaming responses over the internet with latency that doesn't feel laggy. Audio quality that doesn't sound robotic. Interruption handling that feels natural. These are all solvable problems, but they require serious engineering.

The partnership also answers a strategic question: Will this device run OpenAI's models, or will it be open? If it's OpenAI-exclusive, it creates lock-in but risks being limited. If it's open, it could become the dominant platform for wearable AI, but OpenAI wouldn't capture the value as directly.

Either way, this signals that the next computing era isn't smartphones. It's something we're barely imagining yet.

How AI Wearables Will Actually Replace Your Phone

Let's get specific about what this replacement actually looks like, because it's not just "smaller phone."

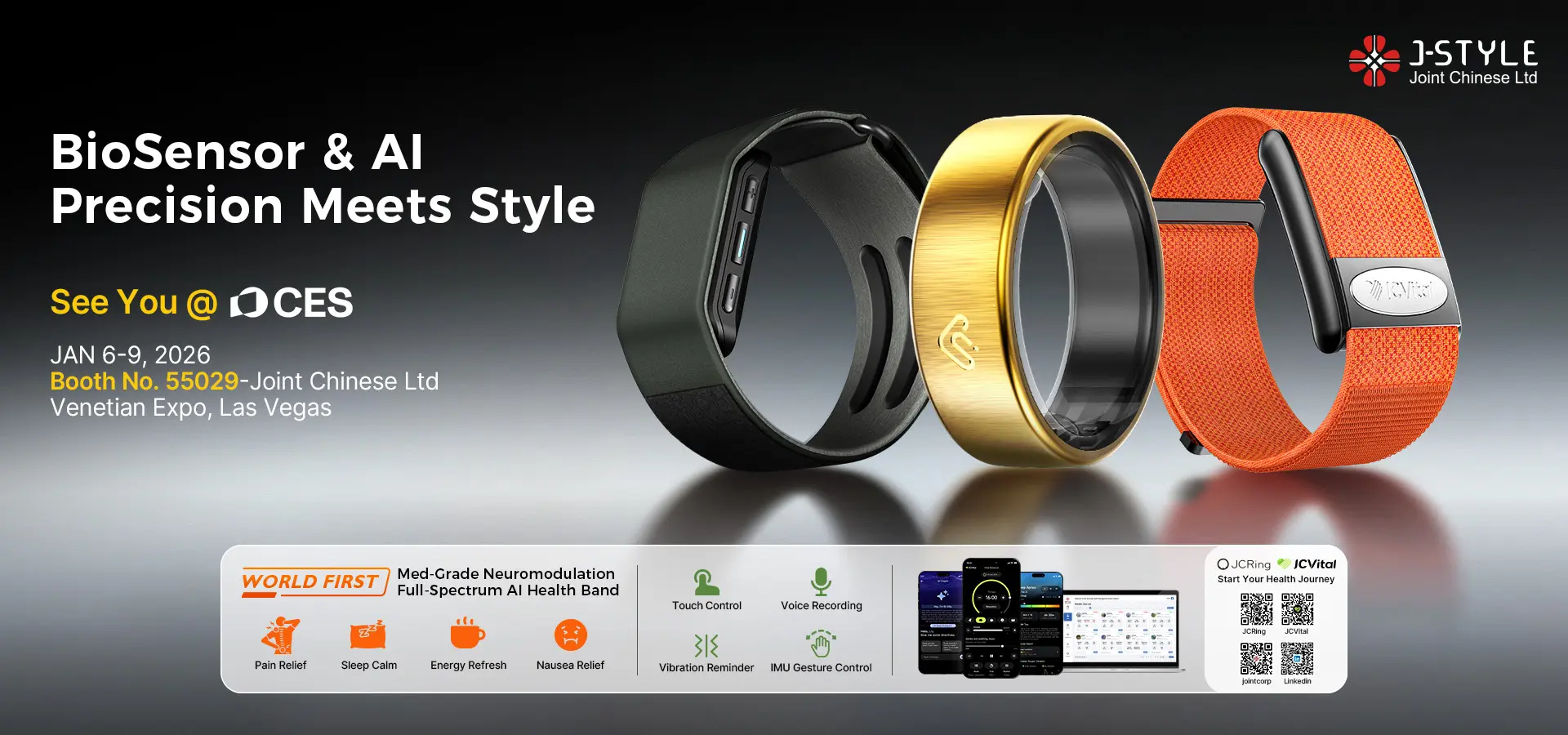

The form factor will vary. For some people, it's earbuds or an earpiece. For others, it's a ring or a bracelet. For others, it's glasses with an integrated display. The beauty of moving away from phones is that the form factor can optimize for the actual use case instead of trying to do everything.

Right now, your smartphone is doing maybe 50 different things. Calls, texts, maps, email, social media, photos, videos, payments, weather, calendar, music, podcasts, books, news, and another 40 apps you rarely open. It's doing this while trying to be pocketable and have a reasonable battery life. That's why it's so thick and heavy and costs $1,200.

An AI wearable doesn't try to do all of that. Instead, it handles:

- Voice-first communication: Calls, texts, voice notes

- Real-time information: Weather, time, directions, notifications

- Contextual assistance: Calendar reminders, task management, proactive suggestions

- Audio content: Music, podcasts, audiobooks

- Payments: Tap-based or voice-based transactions

- Personal assistant functions: Research, advice, brainstorming

Photography, complex video, heavy document editing, and detailed data entry? Those stay on secondary devices. A laptop or tablet that you use when you're actually sitting down to create something.

The smartphone becomes what the PC became after smartphones arrived. It's not gone. It's not irrelevant. It's just not the center of your computing life anymore.

Here's what a day with an AI wearable actually looks like:

You wake up. The device already knows your sleep patterns and suggests you get coffee. You ask for your calendar while brushing your teeth. It tells you you're running late for a 9 AM meeting, and there's traffic on your usual route. You ask for an alternate route while showering. The AI suggests public transit instead, and you approve it. No screen, no friction.

You're heading to the office. Your commute is 45 minutes. You listen to a podcast. The AI knows your preferences and chose the episode automatically. Halfway through, you get a message from a colleague asking about a deadline. You respond verbally. The AI transcribes and sends it.

You're in the meeting. You're taking notes by asking the AI to record key points. No typing. No distraction from a screen. After the meeting, you ask the AI to summarize the decisions and email them to the group. It does.

Lunch. You ask the AI where to eat. It remembers you have a dietary preference and suggests a restaurant that's opened recently near your office. You ask for directions and a reservation. The AI handles both.

Afternoon. You're deep in work, but a question comes up that needs research. You voice-ask the AI to find information about competitive pricing for a project. While you're focused on something else, the AI searches and prepares a summary. You ask clarifying questions. It digs deeper. No context switching. No "let me open my browser and search." The AI becomes an ambient presence that's available whenever you need it.

Later. You're commuting home. Your partner texts to ask if you want to grab dinner. You respond while walking. No phone. No distraction. The AI handles the typing and sending.

This doesn't require a screen. It doesn't require touching or swiping. It just requires natural language understanding good enough to handle context, interruptions, and follow-ups. Which, as of 2025, actually works.

Audio quality is the most critical factor when selecting an AI wearable, followed by interruption handling and privacy model. Estimated data based on user considerations.

Audio: The Breakthrough That Makes This Possible

The entire feasibility of AI wearables rests on one thing: making audio conversations with AI feel genuinely natural.

This is harder than it sounds. When you talk to Siri or Alexa, there's a latency that makes the interaction feel robotic. You speak. There's a pause. The device beeps or shows a wave. You wait for the response. More pause. It speaks back. The entire exchange feels chunky and broken compared to a conversation with a human.

OpenAI's real-time voice capability changes this. The model processes audio as it comes in, rather than waiting for you to finish speaking. It can interrupt you. You can interrupt it. The conversation flows naturally because the latency is low enough that you stop noticing it.

Think about how different this is. In a normal voice assistant conversation, you finish your complete sentence or question, then wait for a response. With real-time voice, you can say "what's the weather in San Francisco... actually, make that Seattle" and the AI understands both the context and the change. You don't have to restart.

This requires solving several hard problems:

Latency Compression: The model needs to respond so quickly that it doesn't feel like you're waiting. Sub-500ms response time is critical. Below roughly that threshold, the pause reads as a natural conversational gap. Above it, the conversation feels stilted.

Audio Quality: Voice synthesis can sound robotic if it's not done right. The latest models generate speech that sounds genuinely human, with appropriate emphasis, pacing, and emotion. This matters more than people realize. A robotic-sounding AI feels less trustworthy, even if it's saying the same thing.

Interruption Handling: In a real conversation, people interrupt each other constantly. If an AI can't handle you interrupting it mid-sentence, or if it can't handle you changing your request partway through, it feels broken. Real-time voice requires the model to gracefully handle interruptions and context shifts.

Privacy and Control: Always-listening devices create justifiable privacy concerns. The device needs to have clear, obvious on/off states. It needs to process some audio locally (not everything gets sent to the cloud). It needs to be transparent about what's being recorded and what's being stored. This is a design and engineering problem, not just an AI problem.
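To make the sub-500ms target above more concrete, here is a minimal sketch of a latency budget for one voice round trip. The stage names and millisecond values are illustrative assumptions, not measurements from OpenAI or any real device. The point is only that every stage of the pipeline spends part of the same budget.

```python
# Hypothetical latency budget for one voice round trip.
# Stage names and numbers are illustrative assumptions, not real measurements.

BUDGET_MS = 500  # the sub-500ms target discussed above


def total_latency_ms(stages: dict[str, float]) -> float:
    """Sum the per-stage latencies (in milliseconds) of one round trip."""
    return sum(stages.values())


def within_budget(stages: dict[str, float], budget_ms: float = BUDGET_MS) -> bool:
    """Return True if the whole round trip is strictly under the budget."""
    return total_latency_ms(stages) < budget_ms


def slowest_stage(stages: dict[str, float]) -> str:
    """Return the name of the stage that consumes the most time."""
    return max(stages, key=stages.get)


# Assumed example values for a cloud-backed wearable.
example_stages = {
    "audio_capture": 40,
    "network_uplink": 60,
    "model_first_token": 200,
    "speech_synthesis": 80,
    "network_downlink": 60,
}

if __name__ == "__main__":
    print("total ms:", total_latency_ms(example_stages))
    print("within budget:", within_budget(example_stages))
    print("slowest stage:", slowest_stage(example_stages))
```

With these assumed numbers the round trip fits the budget, but only just. A slower network or a slower model response would push it over, which is why latency is an end-to-end engineering problem rather than a model-only one.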

OpenAI has been testing these capabilities internally, and the improvements are dramatic. The latency has dropped. The audio quality has improved. The interruption handling works smoothly. These aren't theoretical improvements. They're already working in internal versions.

The breakthrough here is that audio becomes the primary interface, not a secondary feature. Your wearable device doesn't have a meaningful screen. It has speakers and a microphone. Everything else is software.

This shift to audio-first computing is significant because it changes what you can do while using the device. With a phone, you need to look at the screen. You can't drive, you can't walk safely, you can't be in a social situation. With audio-first computing, you can do almost anything while interacting with your AI.

This opens up entirely new use cases that phones never enabled effectively. Real-time assistance while working. Hands-free interaction during exercise. Seamless conversation during social situations. An always-available thinking partner that doesn't require dedicated attention.

What Wearable AI Devices Actually Look Like

Form factor matters, and this is where Jony Ive's expertise becomes crucial.

The device doesn't look like a phone. It doesn't have a screen (or has a very minimal one). It's optimized for being worn comfortably for hours at a time.

Possible form factors:

Earbuds or Earpiece: This is the most obvious choice. AirPods Pro-style earbuds with better audio and more powerful compute. You wear them all day, they handle calls and audio interaction, and they connect to your other devices for more complex tasks. Battery life is the main constraint here, but fast charging helps.

Smart Glasses: Google Glass tried this and failed. But the technology has improved dramatically. Modern smart glasses can have a tiny display for showing critical information without being intrusive, plus speakers and a microphone for voice interaction. The advantage is that you can see what's happening without taking anything out or looking down.

Ring or Bracelet: Smaller form factor for minimal computation, but useful for notifications, quick responses, and staying connected. Less useful for longer interactions, but perfect for checking information quickly.

Ambient Devices: A device you keep in your pocket or on your desk that's powerful enough to handle complex interactions, but minimal enough that you're not thinking about it constantly. Closer to the size of an iPod Shuffle than a phone.

Combination Approach: Most likely, people will use multiple devices. Earbuds for primary interaction. Glasses for visual information when needed. A more powerful device for anything that requires more compute or a screen. This becomes your ecosystem.

The key constraint is battery life. Phones are relatively power-hungry, but they carry large batteries and you accept charging them nightly. A wearable has far less room for a battery and ideally needs to last multiple days, perhaps a week, before needing a charge. This limits how much compute you can do on the device itself.

The solution is edge + cloud computing. The device does enough local processing to handle audio input, basic understanding, and very quick responses. For anything more complex, it sends to the cloud, gets a response, and returns the audio. This works because the latency is manageable if the network is good, and the local processing handles the cases where latency matters most (acknowledgment, quick facts, system feedback).
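The edge + cloud split described above can be sketched as a simple routing decision. This is a toy illustration under stated assumptions: the intent names, the `Request` type, and the "deferred" fallback for a missing network are all hypothetical, and a real device would use a far richer policy.

```python
from dataclasses import dataclass

# Hypothetical intents that a small on-device model could answer
# without a network round trip (acknowledgment, quick facts, system feedback).
LOCAL_INTENTS = {"acknowledge", "time", "volume", "timer"}


@dataclass
class Request:
    intent: str  # coarse label produced by on-device audio understanding
    text: str    # transcribed user utterance


def route(request: Request, network_ok: bool) -> str:
    """Decide where a request is handled: "local", "cloud", or "deferred".

    Latency-sensitive, simple intents stay on the device. Everything else
    goes to the cloud when the network is usable. "deferred" is an assumed
    fallback meaning "queue it and tell the user" when there is no network.
    """
    if request.intent in LOCAL_INTENTS:
        return "local"
    if network_ok:
        return "cloud"
    return "deferred"


if __name__ == "__main__":
    print(route(Request("time", "what time is it"), network_ok=False))
    print(route(Request("research", "compare pricing for this project"), network_ok=True))
    print(route(Request("research", "compare pricing for this project"), network_ok=False))
```

The design choice worth noticing is that the local path never depends on the network, so the device can always acknowledge you instantly even when the complex answer has to wait.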

Design also matters for making the device feel less intrusive. A smartphone is obviously a phone. You know when you're using it. A wearable device should feel more ambient. It's there when you need it, but it's not constantly demanding attention. This is a discipline that Jony Ive excels at. Removing visual noise. Making technology feel like it's serving you rather than demanding interaction.

Half of the average 96 daily smartphone checks are habitual rather than intentional, highlighting inefficiency and friction in current smartphone use.

The Business Model: How This Actually Gets Made

Building an AI wearable is expensive. Really expensive.

Custom chips. Advanced audio processing. Manufacturing at scale. Distribution. Support. This isn't a software play where marginal cost is near zero.

OpenAI and Jony Ive's team (his hardware startup, io, was acquired by OpenAI in 2025, and his design firm is LoveFrom) need to answer hard questions:

How much does it cost? If it's under

How do you make money? The hardware margin? Subscription services? API usage fees? Data? This determines whether the business is sustainable.

How do you handle privacy? You're building a device that listens. You need to be genuinely transparent about data collection and storage, and you need to make it obvious how to control what's recorded.

How do you avoid the smartphone trap? Phones are sold every two or three years. Wearables might last longer. How do you plan for hardware that's designed to last rather than be replaced constantly?

What's the ecosystem play? Does the device only work with OpenAI services, or does it integrate with other platforms? This determines how big the market can be.

The most likely scenario is a premium positioning initially (maybe

This matches the model that successful device companies use. Apple sells expensive hardware with good margins, then makes recurring revenue from services. Google subsidizes hardware to increase service usage. Meta bundles hardware with content experiences.

OpenAI's advantage is that they don't need to optimize for advertising like Google or Meta. They can focus on the product working really well and users being willing to pay for quality. This is a real advantage in a crowded wearables market.

Timeline: When This Actually Launches

OpenAI has been publicly mentioning audio improvements and wearable interest. The trajectory suggests:

2025 (Now): Continued development and internal testing. Possible announcement of a partnership or first prototype. More refinement of the real-time voice capability in ChatGPT, making it available to more users.

2026: Launch of a first device or limited release to a specific market or user group. Initial manufacturing runs to understand supply chain issues and refine the product. Early adopter feedback drives iterations.

2027+: Broader rollout, competing products from other companies arriving, market stabilization around successful form factors and business models.

The 2026 timeline isn't arbitrary. It's when the technology is mature enough, the partnerships are established, and early testing is complete. It's also when competitors will be launching products, creating a race to be first with a genuinely good implementation.

Note that "launch" doesn't mean everyone has it. Just like early iPhones were luxury items, early AI wearables will be expensive and available in limited quantities. But 2026 could be the year the market realizes this is the future, and adoption accelerates from there.

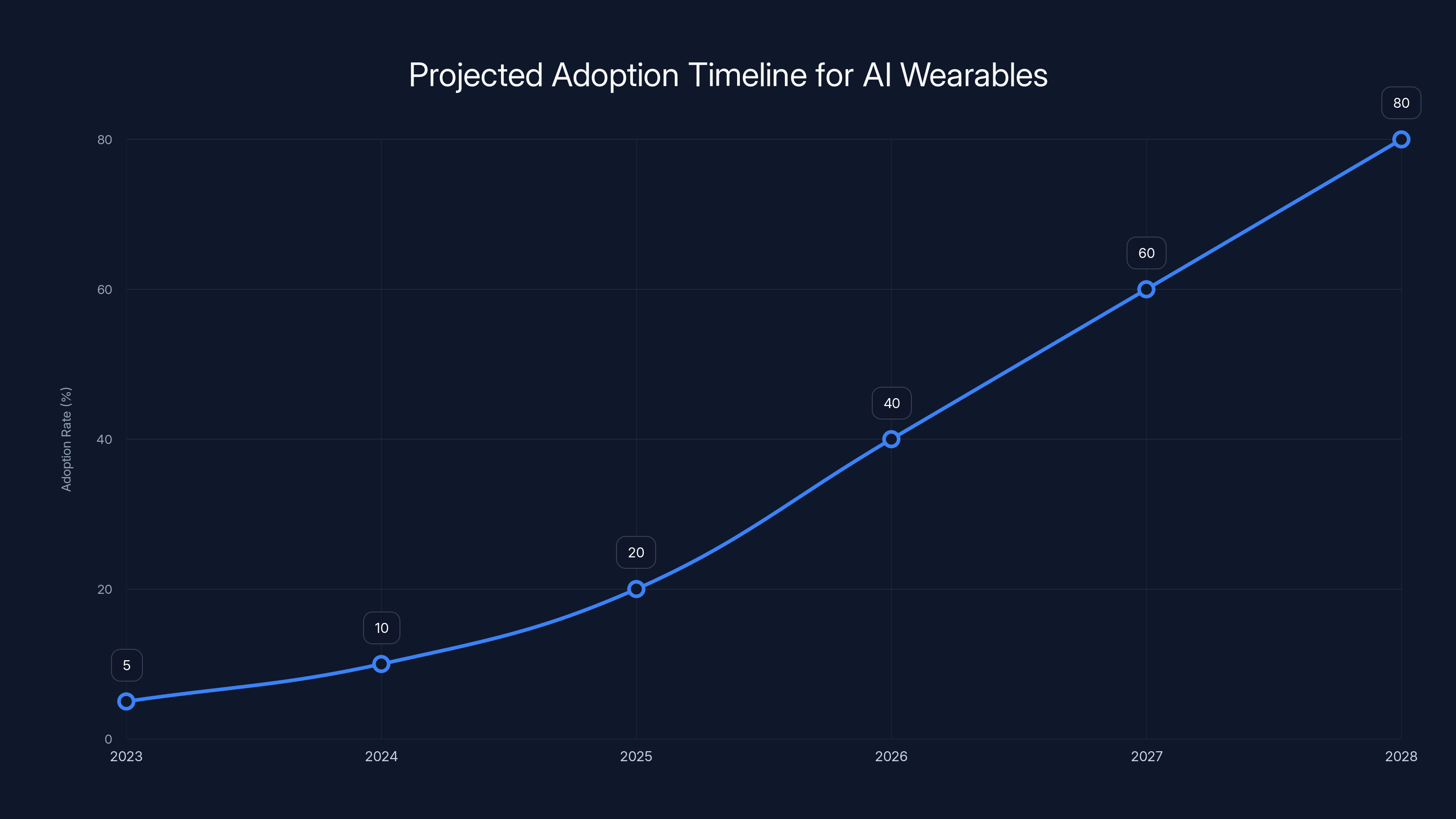

AI wearables are expected to see significant adoption growth starting in 2026, with mainstream adoption projected by 2028. Estimated data.

How AI Wearables Change What AI Can Do

This isn't just about hardware. The form factor change enables new capabilities.

Right now, people use AI in sessions. You open ChatGPT, you have a conversation, you close it. The AI has no continuity with your life. It doesn't know what you did yesterday. It can't proactively help you.

An always-on wearable changes this fundamentally. The AI becomes aware of your context continuously. It knows your location, your calendar, your recent conversations, your preferences. It can make suggestions based on actual context rather than generic recommendations.

This enables:

Proactive Assistance: The AI suggests things before you ask. You're walking toward a meeting and running late. The AI warns you automatically. You're about to order lunch at a place that usually overcharges. The AI mentions it.

Continuous Learning: The AI learns your preferences, communication style, and values over time. It gets better at helping you specifically, not just generally.

Natural Collaboration: Instead of "I'm using an AI tool," it becomes "I have an AI assistant." The relationship feels more collaborative and less transactional.

Integration with Daily Life: Rather than being something you consciously access, the AI becomes ambient. It's always available for the brief moments of need, without requiring focused attention.

This changes the model from "tools you use" to "services that work for you." It's a meaningful shift in how AI integrates into human life.

What This Means for Your Smartphone

The smartphone isn't going away tomorrow. That's not how technology transitions work.

What's happening is the same thing that happened with PCs when smartphones arrived. PCs got better. They're still used for serious work. But they're no longer the center of your digital life. You don't carry one everywhere. You don't check it 150 times a day. It's a secondary device for dedicated tasks.

Smartphones will transition to being secondary devices. You'll still have one, probably. You'll use it for photography, video, detailed work, email, and situations where a bigger screen actually helps. But you won't carry it everywhere. You won't use it as your default for communication and information.

This has cascading effects:

- Messaging apps matter less. If you're talking to your AI instead of typing messages, apps like WhatsApp and Telegram become less central.

- Mobile apps matter less. If the AI handles most use cases, the entire app economy shrinks in importance (though it doesn't disappear).

- Screen time expectations change. If your primary interface isn't visual, you're not staring at a screen for hours. This might be good for mental health and attention spans.

- Battery life becomes less critical. If you're not checking your phone constantly, it doesn't need to last all day. You might charge it twice a week.

- Screen size becomes less critical. A smartphone optimized for reading, video, and detailed work doesn't need to be pocketable anymore. It can be larger and heavier.

The smartphone evolves into something like what tablets became. Still useful. Still produced. Still improved. But no longer the center of computing.

The transition is interesting to watch from a user behavior perspective. Early adopters will embrace wearables immediately. Late adopters will cling to smartphones longer. Somewhere in the middle, your parents will finally stop calling you because they didn't realize their wearable was on. The transition will be messy and gradual.

But the arrow is pointing in one direction. More interaction via voice. Less via screen. More proactive assistance. Less reactive searching. More ambient technology. Less focused tools.

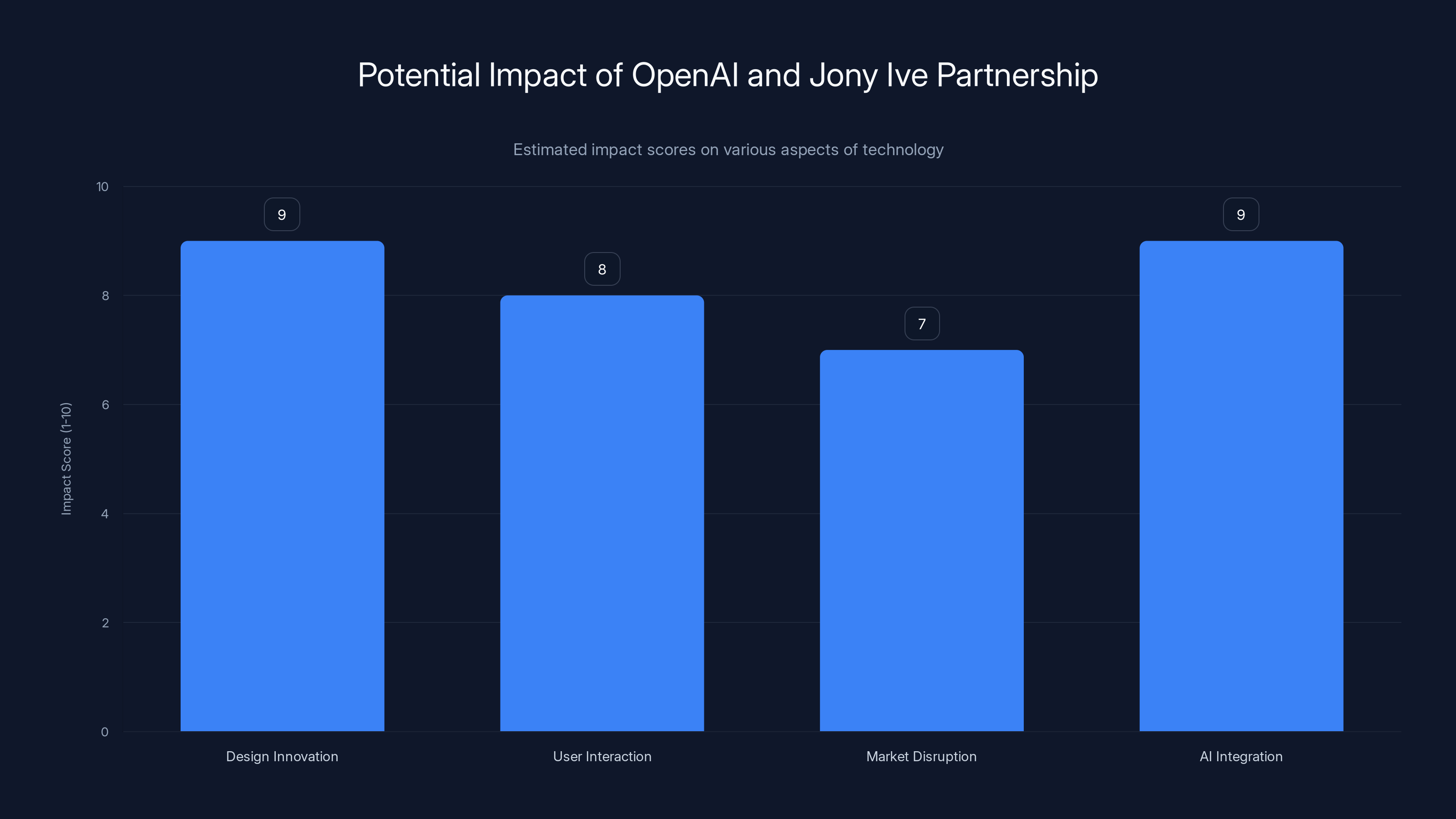

Estimated data suggests that the partnership will significantly impact design innovation and AI integration, potentially transforming user interaction and disrupting the market.

The Privacy and Trust Challenge

None of this works if people don't trust it.

A wearable device that's always listening (or can listen at any moment) is inherently more intrusive than a smartphone you hold in your hand. The privacy concerns are real and justified.

OpenAI and any other company building these devices need to address:

What's recorded? Does everything get sent to the cloud, or does the device process locally? How much can stay on-device?

Where's it stored? How long is audio or conversation data kept? Who has access to it? Can you delete it?

How's it secured? If the device is hacked, what does an attacker have access to? What are the protections?

How do you control it? There needs to be an obvious, reliable way to prevent recording. Not just a setting buried in an app. Physical control. On/off button. Light indicator.

What about third parties? If the AI needs to access third-party services (calendar, email, maps, etc.), what data gets shared? What happens if a third party gets breached?

These are solvable problems. Apple has done reasonably well with privacy in the context of wearables and digital assistants. But they require genuine commitment, not just PR.

The company that gets privacy right will have a massive competitive advantage. Users need to trust that the device isn't recording everything, selling their data, or enabling surveillance. If that trust is broken, the entire category stalls.

Competitive Landscape: Who's Actually Building This

OpenAI and Jony Ive aren't the only players. This is becoming a crowded field:

Apple: Siri in AirPods is their play. They have the advantage of ecosystem integration and installed base. The disadvantage is they're more constrained by privacy and corporate caution.

Google: Pixel Buds are their answer. Google has the AI capability and the services integration. The challenge is trust—Google's entire business model is built on data collection and advertising.

Meta: Building glasses and AI assistants, trying to create a VR/AR wearable ecosystem. The barrier here is that most people don't want to wear glasses with a camera.

Microsoft: Focused on enterprise with Copilot integration. They have the AI capability and corporate relationships. But they're slower at consumer hardware.

Startups: Humane (with its AI Pin), Rabbit (with the R1), and others have built dedicated devices. Some are more about productivity, others about health or specific use cases.

The advantage OpenAI has is that they're starting fresh. No legacy business model conflicting with the new paradigm. No advertising platform to protect. They can optimize purely for the user experience. And they have Jony Ive, which signals design seriousness that most other companies don't match.

But this is still competitive. The company that gets the form factor, audio quality, and privacy model right first has the biggest opportunity. Everything else is chasing.

The Future: Beyond 2026

If AI wearables do transition to mainstream by 2026-2027, the next evolution comes quickly.

Augmented Reality Integration: Glasses that show information overlaid on reality. Not sci-fi goggles, but subtle displays that enhance what you're seeing without taking full attention.

Implicit Interaction: The AI starts learning your needs so well that you don't even need to ask. It anticipates. You approach your car. The AI has pre-cooled it because it knows it's hot outside. You're running late. The AI has already adjusted your calendar notifications.

Multi-Modal Input: Voice is the primary interface, but the device understands gestures, location, context, and other signals. It combines them into a richer understanding of what you need.

Distributed Intelligence: Instead of everything running in the cloud or on the device, intelligence is distributed across your wearable, your home, your car, your office. They share context and coordinate.

Personal Model Training: The AI isn't just trained on general data. It's trained on your specific patterns and preferences. It's uniquely yours.

These aren't speculative. The technology exists today. What's needed is integration, refinement, and appropriate use cases.

The computing model shifts from "devices you buy" to "services you subscribe to," with the hardware becoming increasingly invisible. The device itself becomes less important than the intelligence running on it.

Building Your AI Wearable Strategy

If you're thinking about this as a user, not a company, what should you pay attention to?

Watch the audio quality first. This is the make-or-break variable. If the voice sounds robotic or latency is noticeable, the device will feel broken regardless of capability.

Check interruption handling. Can you actually have a conversation, or do you have to speak in full sentences and wait for responses? Real conversation flow is non-negotiable.

Understand the privacy model. Read the actual privacy policy, not the marketing. Where does data go? How long is it kept? How much happens locally? Can you really turn it off?

Look at the ecosystem. Does the device integrate with the services you actually use? Can it access your calendar, email, location, and other contexts, or is it isolated?

Test the real-world latency. Specs are meaningless. Put on the device and use it. Walk around. Try different network conditions. Does it hold up?

Check battery life realistically. Company claims tend to be optimistic. As a rule of thumb, assume real battery life is about 30% worse. What does that mean for your use case?

Consider the price-to-value ratio. Early devices are expensive. Is the improvement over your current setup worth $200-500? Or should you wait?
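The battery check above is easy to turn into a quick calculation. The sketch below applies the roughly 30% discount mentioned earlier to a claimed battery figure. The function names and the example hours are assumptions chosen for illustration, and the 30% figure is a rule of thumb, not a measured value.

```python
def realistic_battery_hours(claimed_hours: float, derate: float = 0.30) -> float:
    """Discount a manufacturer's claimed battery life by a fraction (default 30%)."""
    if not 0 <= derate < 1:
        raise ValueError("derate must be in the range [0, 1)")
    return claimed_hours * (1 - derate)


def covers_usage(claimed_hours: float, needed_hours: float, derate: float = 0.30) -> bool:
    """Return True if the discounted battery life still covers your daily usage."""
    return realistic_battery_hours(claimed_hours, derate) >= needed_hours


if __name__ == "__main__":
    # Assumed example: the box says 24 hours and you need 16 waking hours.
    print(round(realistic_battery_hours(24), 1), "hours after the discount")
    print("covers a 16-hour day:", covers_usage(24, 16))
    # A claimed 20 hours falls short of the same 16-hour day.
    print("covers a 16-hour day:", covers_usage(20, 16))
```

Run the numbers against your own day before trusting the spec sheet: a device that claims "all day" may only cover a workday once the discount is applied.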

Most users should probably wait until 2027 before buying. The first generation will have rough edges. The second generation learns from those mistakes. But early adopters who are willing to tolerate issues will get to experience the future first.

The Smartphone Isn't Going Away (But Its Primacy Is)

The big picture: 2026 isn't when smartphones disappear. It's when the computing center of gravity shifts.

For decades, the screen was the computing interface. Desktops had screens. Laptops had screens. Tablets had screens. Phones had screens. Every computing device was screen-first.

Wearables shift this. The primary interface becomes voice and context. Screens become secondary, used when actually needed for visual tasks.

This is as big a shift as graphical and touch interfaces displacing keyboard-driven computing (which happened gradually from the 1980s to the 2010s). It's not instant. It's not universal. But it's directional and inevitable.

The companies that realize this and build for it will win. The companies that cling to screens and touch interfaces will become legacy players. The users who adapt early will experience a genuinely better way of interacting with technology.

Your smartphone was revolutionary. But its time as the center of your digital life is ending. By 2026, something better might already be here.

FAQ

What is an AI wearable device?

An AI wearable is a device you wear (like earbuds, glasses, or a ring) that provides AI assistance through voice interaction, context awareness, and seamless integration with your daily life. Unlike smartphones that require screens and active engagement, AI wearables operate primarily through natural language conversation and ambient intelligence, making them available whenever you need them without the friction of unlocking, finding apps, or typing.

When will AI wearable devices become mainstream?

2026 could mark the inflection point when AI wearables move from experimental gadgets to products that early adopters use every day, driven by improvements in real-time audio processing, natural language understanding, and partnerships like the one between OpenAI and Jony Ive. Full mainstream adoption will likely take several years, following the pattern of previous computing transitions, but the market momentum and significant venture capital investment suggest rapid scaling once the technology matures.

How will AI wearables replace smartphones?

AI wearables won't instantly replace smartphones, but will shift them to secondary status. Wearables handle primary interactions (communication, information, voice commands) while phones become specialized devices for photography, video, detailed work, and complex tasks. The smartphone will evolve similarly to how desktop PCs became secondary after mobile devices arrived—still useful and produced, but no longer the center of computing.

What's the role of Jony Ive in the OpenAI wearable project?

Jony Ive brings world-class design expertise from his work on the iPhone and iPad, focusing on solving the hard design problems that engineering can't address: form factor, user interaction paradigms, privacy controls, and making technology feel ambient rather than intrusive. His involvement signals that this is a serious design-first product, not just an engineering experiment.

How do AI wearables handle privacy and listening concerns?

Responsible AI wearables need transparent privacy models including local processing (not sending everything to the cloud), clear user controls for recording on/off, physical indicators (lights, buttons) showing when the device is listening, explicit data retention policies, and easy deletion options. Users should verify actual practices, not just marketing claims, by checking privacy policies and understanding what stays on-device versus what's transmitted to servers.

What are the different form factors for AI wearables?

Possible AI wearable form factors include earbuds or earpieces for continuous audio interaction, smart glasses that add visual information overlays, rings or bracelets for minimal interfaces, and pocket or desk devices for more powerful computation. Most users will likely adopt multiple devices—earbuds as primary, glasses for visual info when needed, and a secondary device for complex tasks—creating an interconnected ecosystem.

Will my smartphone become obsolete?

No, smartphones will become secondary devices rather than obsolete. You'll likely keep using one for photography, video, detailed work, and situations where a larger screen helps, similar to how desktop computers are still used despite being less central to computing. Battery life and screen size may evolve as smartphones become less frequently used for communication and information retrieval.

What companies are building AI wearables?

OpenAI is partnering with designer Jony Ive on a dedicated device. Apple is enhancing Siri in AirPods, Google is developing Pixel Buds with AI assistance, Meta is building glasses and AI assistants, and startups like Humane (maker of the AI Pin) and Rabbit (maker of the R1) have built dedicated devices focused on specific use cases.

What should I look for when evaluating an AI wearable?

Prioritize audio quality and latency over marketed features, since these determine whether voice interaction feels natural or robotic. Check interruption handling to ensure real conversations are possible, understand the privacy model by reading actual policies, verify ecosystem integration with services you use, test realistic battery life, and consider whether the price-to-value justifies adoption now versus waiting for second-generation products.

Why is 2026 significant for AI wearables?

2026 represents the convergence of mature technology (real-time voice AI works now), strategic partnerships (OpenAI + Jony Ive), competitive pressure (multiple companies launching), and sufficient venture funding. It's when the infrastructure and expertise align to make this not just possible, but practical and desirable for mainstream users.

How will AI wearables change what AI can do?

Always-on wearables enable continuous context awareness (location, calendar, recent activity), proactive assistance (suggesting things before you ask), personal learning (understanding your specific preferences and patterns), and natural collaboration rather than transactional tool use. AI becomes an ambient assistant integrated into your life rather than a tool you consciously access.

Key Takeaways

- The OpenAI and Jony Ive partnership signals a serious bet that AI wearables will start displacing smartphones as the primary computing device by 2026-2027

- Real-time voice AI with minimal latency and natural interruption handling now exists, making wearable interfaces finally practical

- Voice-first wearables eliminate smartphone friction: no unlocking, no app searching, no screen staring, just natural conversation

- Smartphones won't disappear—they'll become secondary devices for specialized tasks like photography and detailed work, similar to how PCs evolved

- The privacy model and audio quality are the make-or-break factors determining whether AI wearables succeed or remain niche products

- Multiple companies (Apple, Google, Meta) are racing to launch AI wearables, and the first to deliver natural audio and seamless integration is likely to lead the category
