The Hidden Costs of AI-Generated Code: Debugging in Production [2025]

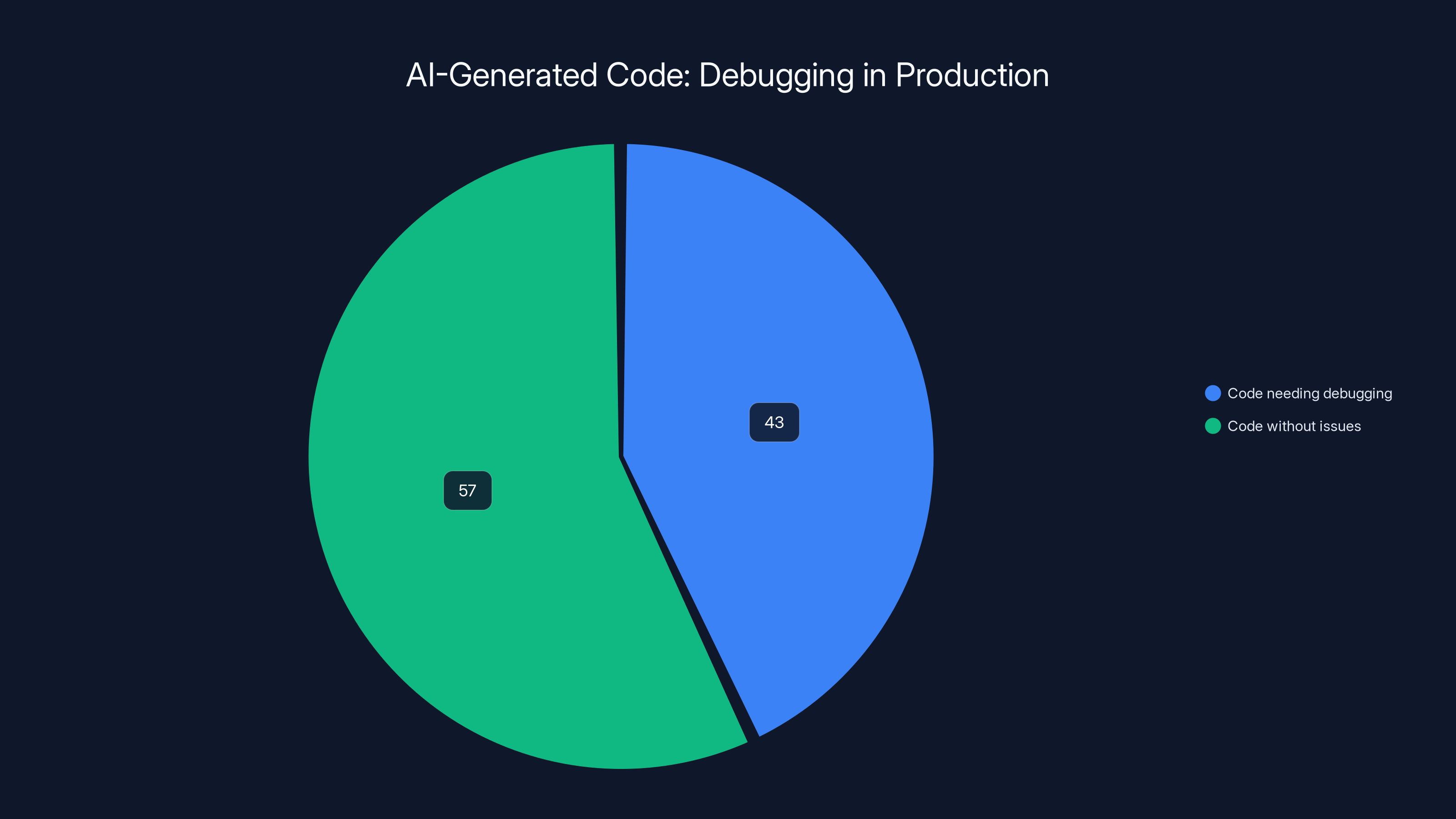

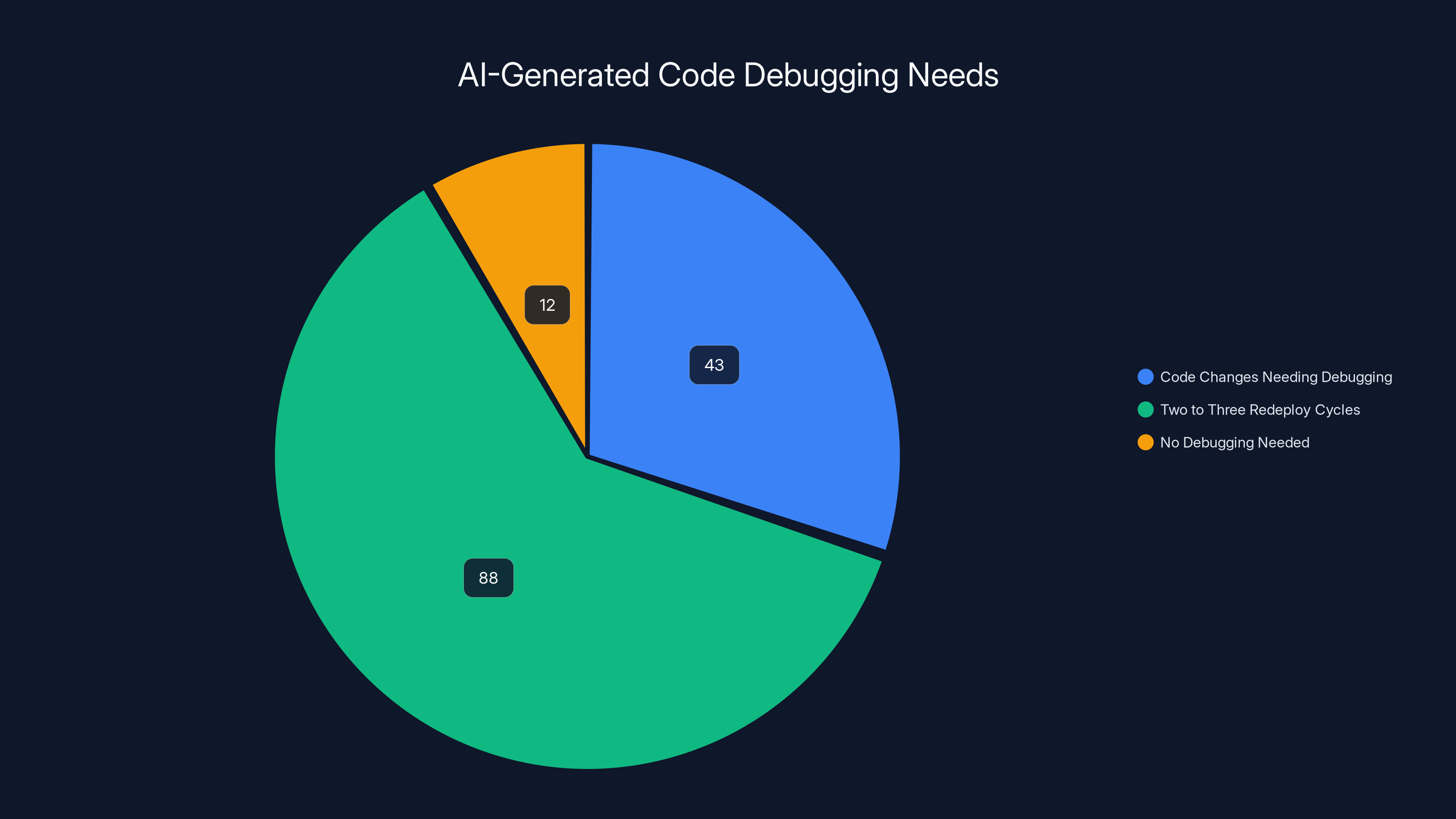

Last month, a friend's team was thrilled to integrate AI into their coding process. But the excitement quickly faded when 43% of their AI-generated code changes needed debugging in production. They're not alone. A survey of DevOps leaders reveals that AI-generated code often fails to hold up once deployed.

TL;DR

- 43% of AI-generated code changes need debugging in production.

- Multiple redeploy cycles are common, with 88% requiring two to three cycles.

- Manual debugging remains a critical skill alongside AI coding.

- Better-trained AI models may reduce the need for debugging.

- Practical tips for integrating AI into coding workflows effectively.

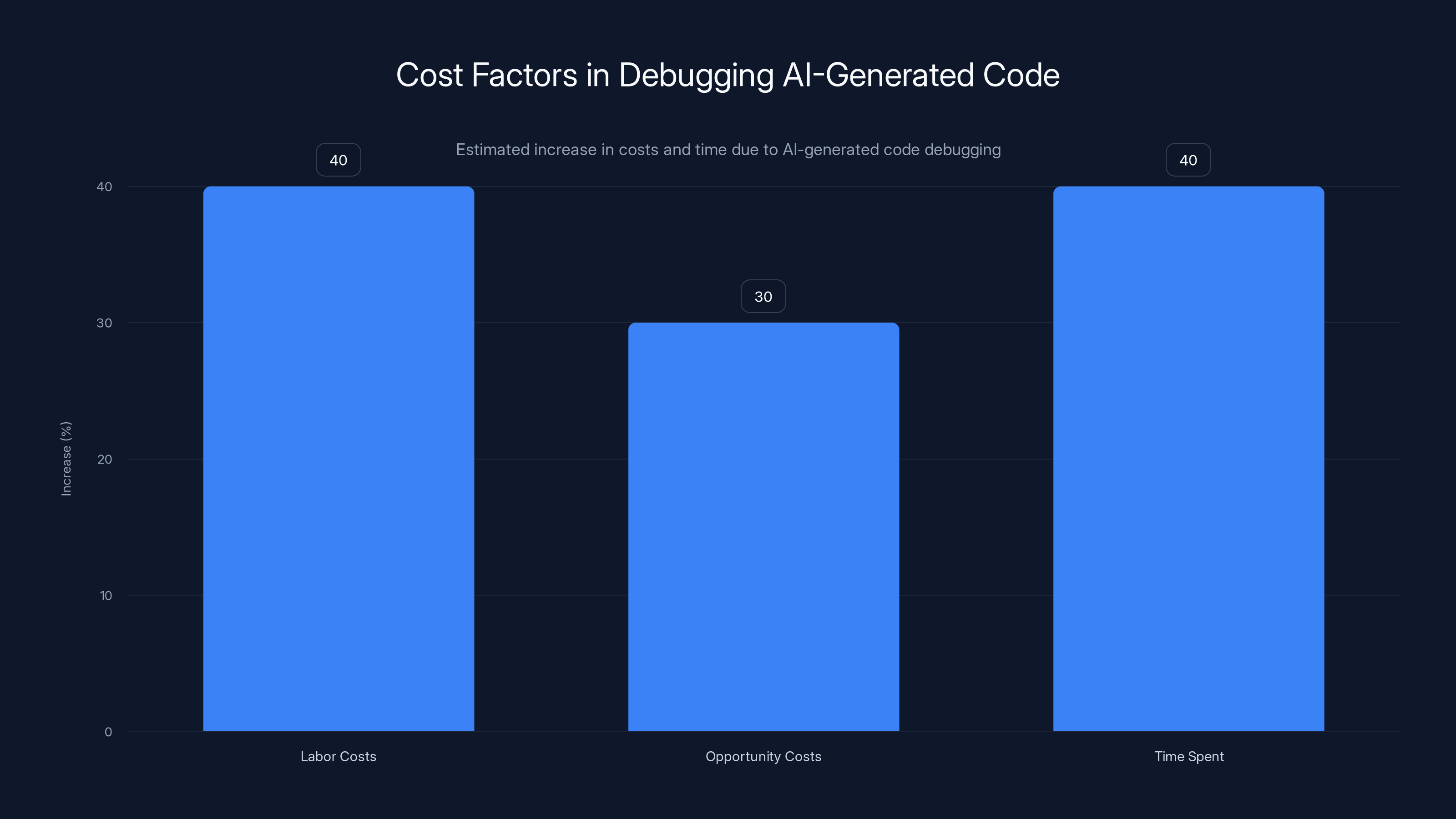

Debugging AI-generated code can increase labor and opportunity costs by 30-40%, with time spent on projects rising by 40%. Estimated data based on industry insights.

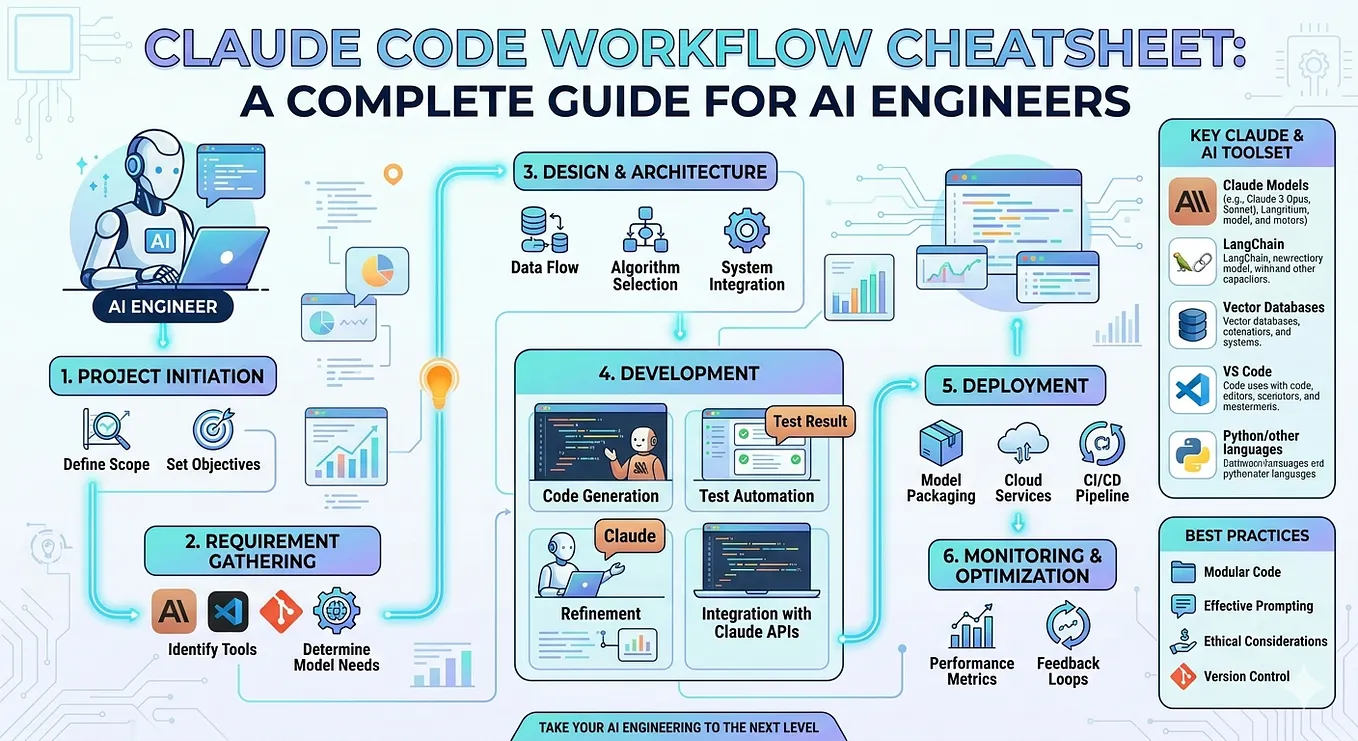

The Rise of AI in Software Development

AI's promise in software development is undeniable. From generating boilerplate code to suggesting optimizations, AI tools like GitHub Copilot and Tabnine are transforming how developers work. But there's a catch: AI-generated code isn’t foolproof.

What Developers Are Saying

Developers often find themselves in a love-hate relationship with AI-generated code. On one hand, it saves time by automating repetitive tasks. On the other, it introduces bugs that require human intervention to fix.

43% of AI-generated code changes required debugging in production, highlighting significant reliability challenges.

Why AI-Generated Code Needs Debugging

AI tools are trained on vast datasets of existing code, learning patterns and syntax. However, they can't fully understand the context or intent behind the code. This lack of understanding leads to code that compiles but doesn't perform as intended.
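Here is a minimal, hypothetical illustration of code that runs but doesn't do what was intended. Imagine a generated helper that converts a dollar amount to cents with Python's built-in `round()`. It never raises an error, but `round()` uses round-half-to-even, so a business rule that expects halves to round up is silently violated. Both function names and the half-up requirement are assumptions made for this sketch.

```python
from decimal import Decimal, ROUND_HALF_UP


def to_cents_naive(amount: float) -> int:
    # Hypothetical AI-generated helper: looks right and never raises,
    # but round() uses round-half-to-even ("banker's rounding").
    return round(amount * 100)


def to_cents_half_up(amount: str) -> int:
    # Assumed business rule: halves round away from zero.
    # Decimal parses the string exactly, avoiding binary float error.
    cents = Decimal(amount) * 100
    return int(cents.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


print(to_cents_naive(0.125))      # 12 (tie rounded to even)
print(to_cents_half_up("0.125"))  # 13 (tie rounded up)
```

Neither version crashes, which is exactly why this kind of bug tends to surface only when real transactions disagree with expectations.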

Common Scenarios

- Context Mismatch: AI suggests code that doesn't fit the specific use case.

- Data Dependency Issues: AI-generated code fails when interacting with real-world data.

- Performance Bottlenecks: AI doesn't always optimize for performance, leading to slowdowns.
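As one example of the performance scenario, consider a membership check inside a loop. The sketch below is hypothetical (the function names are illustrative) and assumes the IDs are hashable. The naive version is correct but scans a list for every lookup, while the fix builds a set once.

```python
import time


def find_known_ids_naive(ids, known_ids):
    # Hypothetical AI-generated version: `x in list` scans the list,
    # so the total work is O(len(ids) * len(known_ids)).
    return [x for x in ids if x in known_ids]


def find_known_ids_fast(ids, known_ids):
    # Same result, but set membership is O(1) on average, so the
    # total work is roughly O(len(ids) + len(known_ids)).
    known = set(known_ids)
    return [x for x in ids if x in known]


if __name__ == "__main__":
    ids = list(range(5_000))
    known_ids = list(range(0, 10_000, 2))
    for fn in (find_known_ids_naive, find_known_ids_fast):
        start = time.perf_counter()
        result = fn(ids, known_ids)
        print(fn.__name__, len(result), f"{time.perf_counter() - start:.3f}s")
```

Both functions return identical results, so this kind of slowdown passes functional tests and only shows up at production data volumes.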

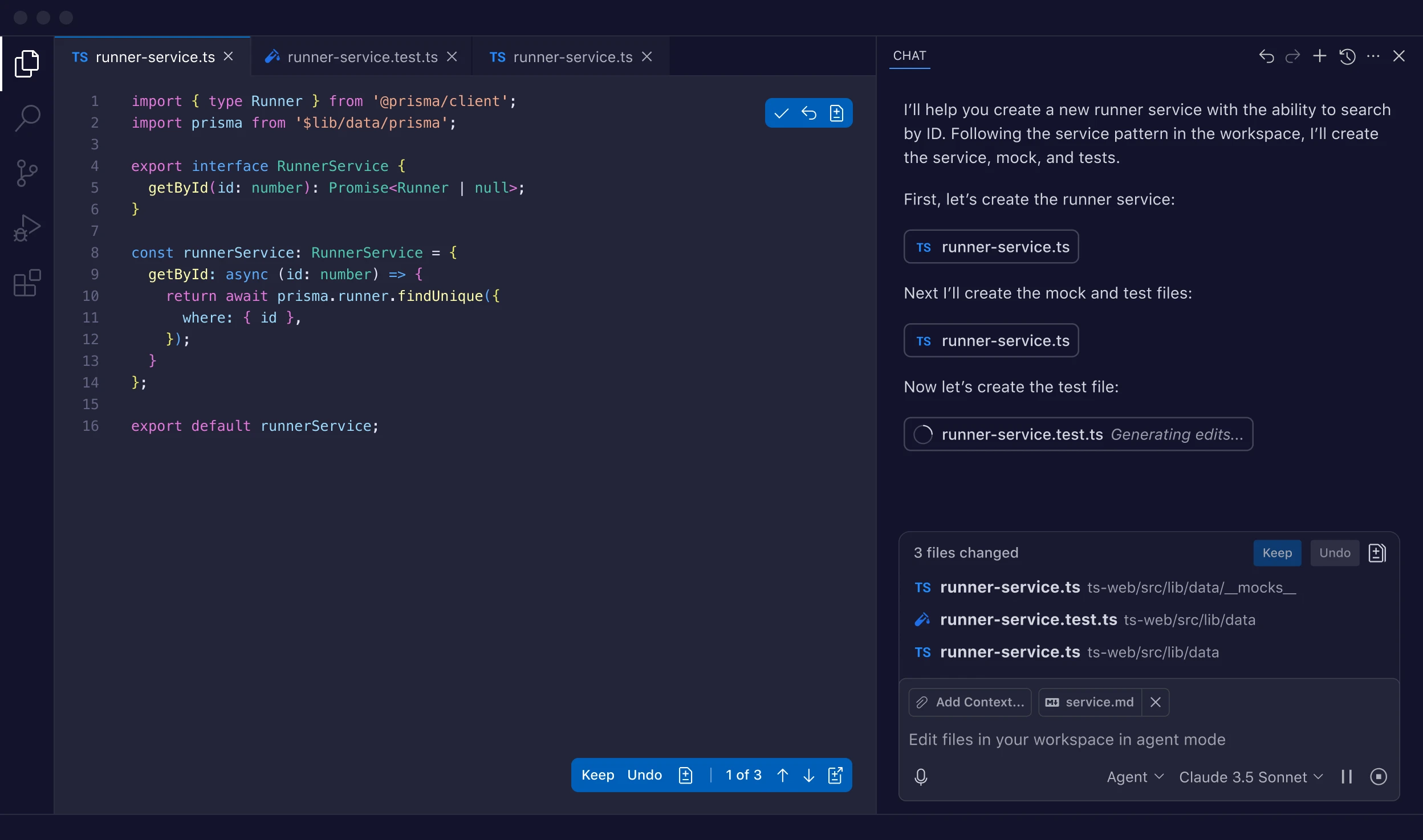

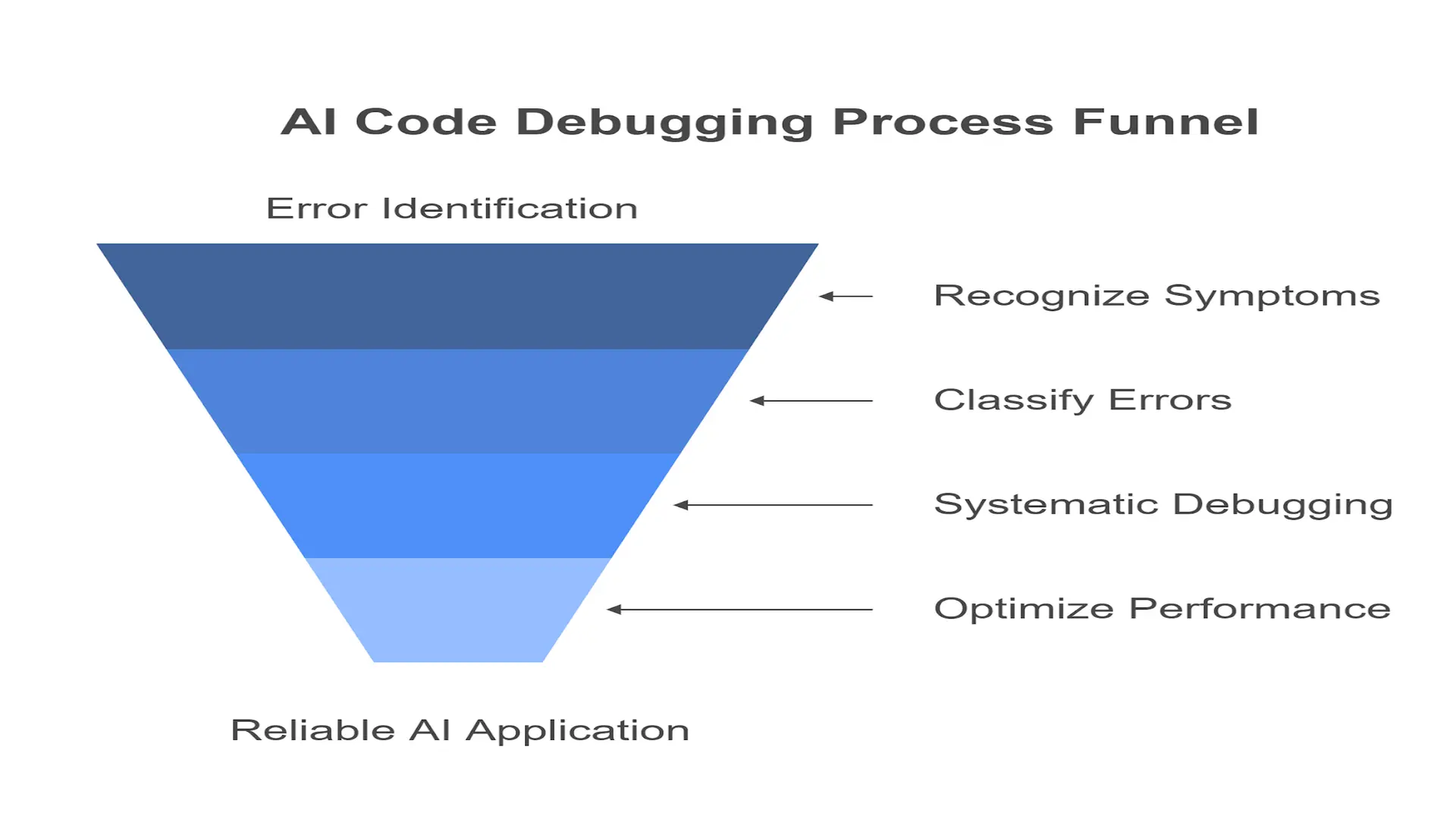

Debugging AI-Generated Code: A Practical Guide

Debugging AI-generated code requires a mix of traditional and modern techniques. Here’s a step-by-step guide:

1. Analyze the Output

Start by understanding what the AI generated and why. Look for patterns and common errors.

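```python
# Example of AI-generated code that needs debugging
import numpy as np


def calculate_mean(data):
    # AI-generated assumption: data is always a non-empty list of numbers.
    # Debugging step: validate the input before computing, not after returning.
    if not isinstance(data, list):
        raise TypeError('Data must be a list')
    # np.mean([]) returns nan with a RuntimeWarning, so reject empty input.
    if not data:
        raise ValueError('Data must not be empty')
    return np.mean(data)
```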

2. Contextualize the Code

Consider the context in which the code will run. Does it have all necessary dependencies? Are there environmental constraints?
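One way to make these questions concrete is a small pre-deployment check. This is a minimal sketch under stated assumptions: `check_environment` is a hypothetical helper, and the module and variable names in the usage example (`numpy`, `DATABASE_URL`) are placeholders for whatever your generated code actually expects.

```python
import importlib.util
import os


def check_environment(required_modules, required_env_vars):
    """Return a list of human-readable problems; an empty list means OK.

    Hypothetical helper: pass the top-level modules and environment
    variables that the generated code assumes are available.
    """
    problems = []
    for name in required_modules:
        # find_spec returns None when a top-level module cannot be found.
        if importlib.util.find_spec(name) is None:
            problems.append(f"missing module: {name}")
    for var in required_env_vars:
        if not os.environ.get(var):
            problems.append(f"missing or empty environment variable: {var}")
    return problems


if __name__ == "__main__":
    # Placeholder requirements for illustration only.
    for issue in check_environment(["numpy"], ["DATABASE_URL"]):
        print(issue)
```

Running a check like this at startup or in CI turns a vague "missing dependency" failure in production into an explicit list of problems before deployment.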

3. Test Extensively

AI might pass initial tests but fail under edge cases. Create comprehensive test suites to cover various scenarios.
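As a sketch of what edge-case coverage can look like, here are `unittest` cases for a variant of the `calculate_mean` example. To keep the block dependency-free, this version swaps `np.mean` for `statistics.fmean` (Python 3.8+); the specific cases chosen are illustrative, not exhaustive.

```python
import statistics
import unittest


def calculate_mean(data):
    # Dependency-free stand-in for the calculate_mean example,
    # using statistics.fmean instead of np.mean.
    if not isinstance(data, list):
        raise TypeError("Data must be a list")
    if not data:
        raise ValueError("Data must not be empty")
    return statistics.fmean(data)


class TestCalculateMean(unittest.TestCase):
    def test_typical_values(self):
        self.assertAlmostEqual(calculate_mean([1, 2, 3, 4]), 2.5)

    def test_single_element(self):
        self.assertEqual(calculate_mean([7]), 7.0)

    def test_negative_and_float_values(self):
        self.assertAlmostEqual(calculate_mean([-0.1, 0.1, 0.3]), 0.1)

    def test_empty_list_is_rejected(self):
        with self.assertRaises(ValueError):
            calculate_mean([])

    def test_non_list_input_is_rejected(self):
        # Tuples and other iterables are rejected by design in this sketch.
        with self.assertRaises(TypeError):
            calculate_mean((1, 2, 3))


if __name__ == "__main__":
    unittest.main()
```

The happy-path test is the kind of test generated code usually passes. The empty-input and wrong-type cases are where production failures tend to hide.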

43% of AI-generated code changes require debugging, with 88% needing two to three redeploy cycles. Estimated data for 'No Debugging Needed'.

Best Practices for Using AI in Code Development

- Limit Scope: Use AI for specific tasks, like generating boilerplate code, rather than complex algorithms.

- Enhance AI Training: Feed your AI tools with domain-specific data to improve their accuracy.

- Integrate Human Review: Always have a human review AI-generated code.
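Human review can also be enforced mechanically in CI rather than left to convention. The sketch below is hypothetical: it assumes your pipeline can tell whether a change is AI-generated (for example, from a pull-request label) and how many human approvals it has. `Change` and `can_merge` are illustrative names, not part of any real CI product.

```python
from dataclasses import dataclass


@dataclass
class Change:
    # Hypothetical metadata a CI pipeline might attach to a pull request.
    ai_generated: bool
    human_approvals: int
    tests_passed: bool


def can_merge(change: Change, required_approvals: int = 1) -> bool:
    """Block merges until tests pass; AI-generated changes also need review.

    This sketch only enforces the AI-specific rule; your normal review
    policy for other changes still applies on top of it.
    """
    if not change.tests_passed:
        return False
    if change.ai_generated:
        return change.human_approvals >= required_approvals
    return True
```

Keeping `required_approvals` as a parameter lets a team require a stricter review for sensitive parts of the codebase without changing the gate itself.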

Case Study: A Real-World Example

A fintech startup used AI to automate parts of their codebase. Initially, they faced a 50% failure rate in production. By refining their AI models and integrating a robust testing framework, they reduced this to 10%.

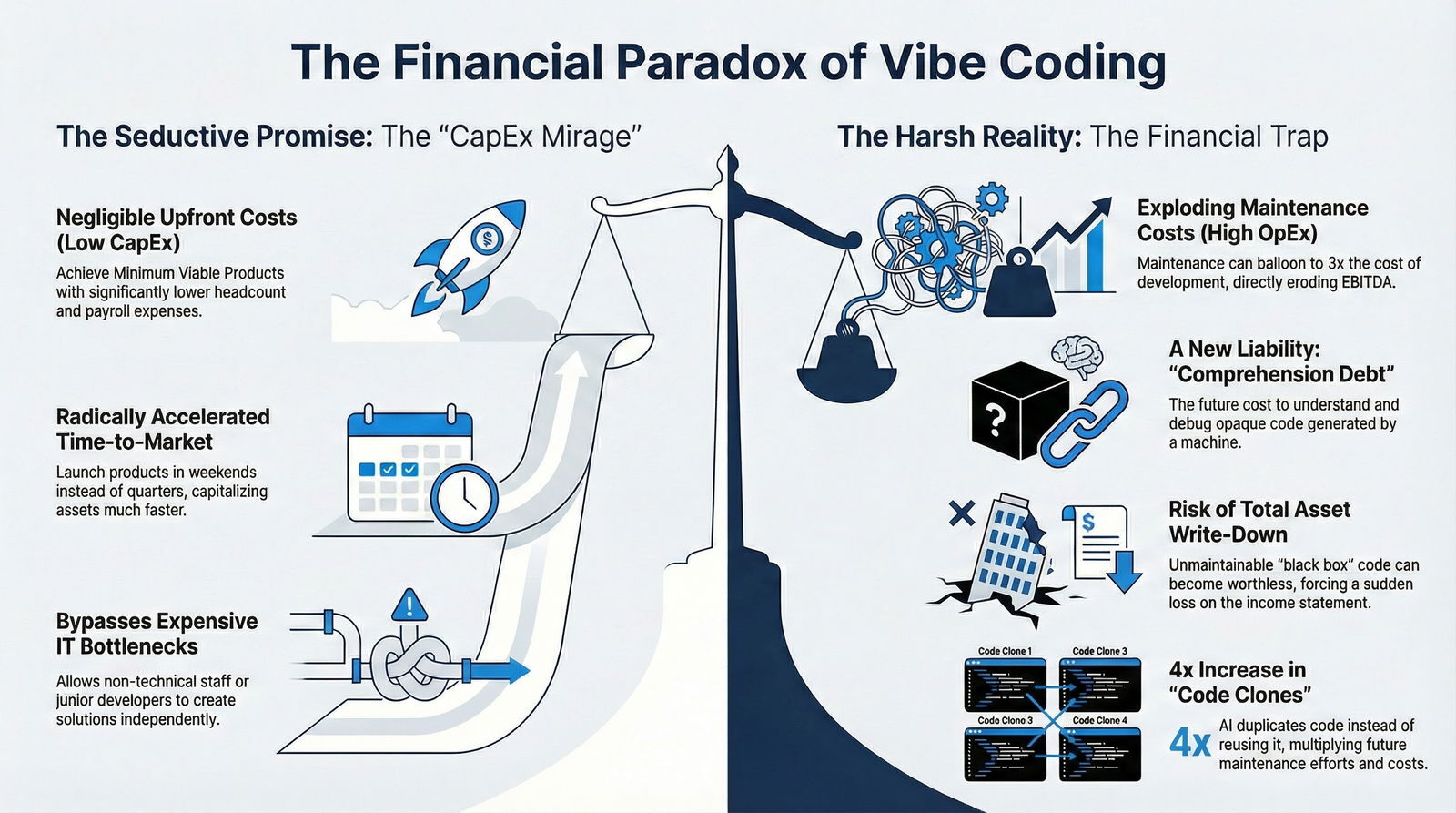

The Cost of Debugging AI-Generated Code

Debugging isn’t just about fixing code; it also consumes time and resources. Teams often face heavier workloads and delayed project timelines.

Financial Implications

- Increased Labor Costs: More man-hours spent debugging.

- Opportunity Costs: Delayed projects mean missed market opportunities.
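To put rough numbers on the labor side, a back-of-the-envelope estimate multiplies the share of changes that need fixing by the redeploy cycles and the time each cycle takes. This is a simplified sketch: the 43% rate and the two-to-three-cycle range come from the figures above, but the 100 changes and 1.5 hours per cycle are hypothetical inputs you would replace with your own measurements. It does not capture opportunity cost.

```python
def estimate_debug_hours(changes, debug_rate, cycles_per_fix, hours_per_cycle):
    """Back-of-the-envelope extra hours spent on production fixes.

    changes         -- number of AI-generated changes shipped
    debug_rate      -- fraction needing production debugging (e.g. 0.43)
    cycles_per_fix  -- redeploy cycles per fix (e.g. 2 to 3)
    hours_per_cycle -- your own measured cost of one debug/redeploy cycle
    """
    return changes * debug_rate * cycles_per_fix * hours_per_cycle


# Hypothetical inputs: 100 changes, 43% needing fixes, 2 cycles each,
# 1.5 hours per cycle.
hours = estimate_debug_hours(100, 0.43, 2, 1.5)
print(f"{hours:.0f} extra hours")  # 129 extra hours
```

Even with modest per-cycle costs, the multiplication shows how quickly redeploy cycles add up across a team's output.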

Future Trends in AI-Generated Code

Better Training Models

The future holds promise for AI models that better understand context and intent, reducing the need for debugging.

Collaborative AI

AI tools are expected to evolve into collaborative partners that work alongside developers, rather than serving only as code generators.

Recommendations for Development Teams

- Invest in Training: Ensure your team is equipped to integrate AI tools effectively.

- Focus on Data Quality: Better training data leads to better AI performance.

- Monitor and Adapt: Continuously monitor AI-generated code in production and be ready to adapt.
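One lightweight way to monitor AI-generated code in production is to tag it so that its failures can be counted and searched separately. The decorator below is a hypothetical sketch that uses only the standard library: `monitor_ai_generated`, `failure_counts`, and the example `parse_price` function are illustrative names. In a real system you would likely forward these counts to your existing metrics or logging stack.

```python
import functools
import logging
from collections import Counter

logger = logging.getLogger("ai_code_monitor")
failure_counts = Counter()


def monitor_ai_generated(func):
    """Hypothetical decorator that tags a function as AI-generated.

    Exceptions are counted per function and logged with a marker,
    then re-raised so normal error handling still applies.
    """
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        try:
            return func(*args, **kwargs)
        except Exception:
            failure_counts[func.__qualname__] += 1
            logger.exception("failure in AI-generated code: %s", func.__qualname__)
            raise
    return wrapper


@monitor_ai_generated
def parse_price(text):
    # Example function assumed to be AI-generated.
    return float(text.replace("$", ""))
```

Because the decorator re-raises, it changes nothing about how errors propagate. It only makes AI-generated code paths visible when you review production failures.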

Conclusion

AI is reshaping the software development landscape, but it's not a panacea. While it can automate repetitive tasks and offer insights, human oversight is essential. By understanding and addressing the challenges of AI-generated code, teams can harness its potential while minimizing risks.

FAQ

What is AI-generated code?

AI-generated code refers to code suggestions made by AI tools based on existing code patterns and data.

Why does AI-generated code require debugging?

AI lacks context and understanding of unique project requirements, leading to errors that require manual intervention.

How can teams integrate AI tools effectively?

By limiting AI use to specific tasks, enhancing AI training, and ensuring human review, teams can effectively integrate AI tools.

What are the financial implications of debugging AI-generated code?

Increased labor costs and opportunity costs due to delayed projects are common financial implications.

How can AI tools improve in the future?

Future AI tools will likely have better training models and work more collaboratively with developers.

What are the best practices for using AI in development?

Limit AI's scope, enhance its training with domain-specific data, and always include human review.

What are common pitfalls of AI-generated code?

Context mismatch, data dependency issues, and performance bottlenecks are common pitfalls.

How can debugging be improved for AI-generated code?

Through extensive testing, contextual analysis, and enhanced AI tools, debugging can be improved.

Key Takeaways

- 43% of AI-generated code changes need debugging in production.

- Multiple redeploy cycles are often required for AI-generated code.

- Manual debugging skills remain critical alongside AI coding.

- Better-trained AI models may reduce the need for debugging.

- Limiting AI to well-defined tasks, testing extensively, and requiring human review help teams integrate AI into coding workflows.

