Three Ways AI is Learning to Understand the Physical World [2025]

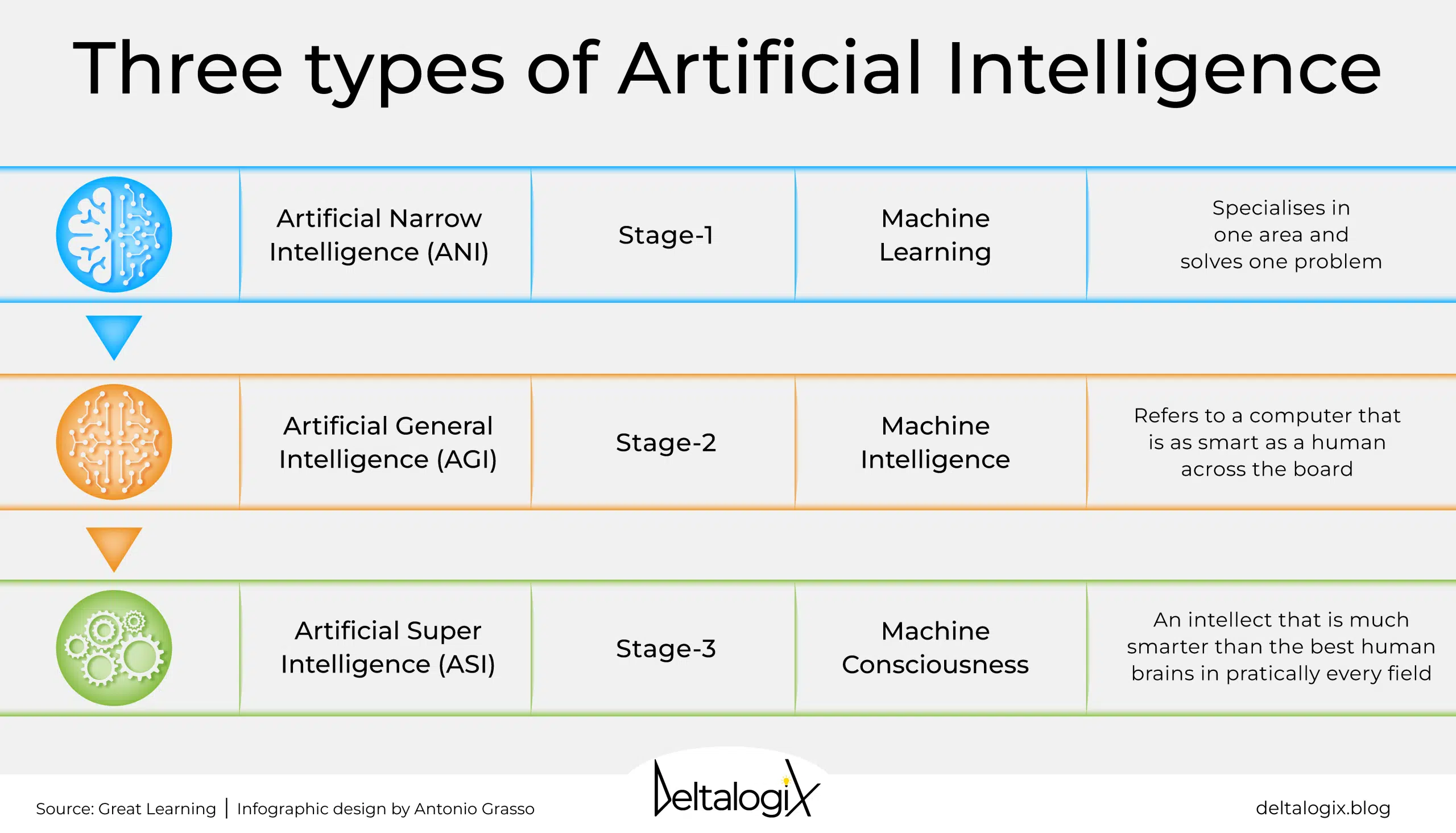

Artificial Intelligence (AI) has transformed numerous sectors by excelling at data-driven tasks. However, when it comes to understanding and interacting with the physical world, traditional AI models face significant challenges. This article explores three innovative approaches that are enabling AI to better comprehend and operate within the physical realm.

TL;DR

- Embodied AI: Integrating sensory input and physical interaction to enhance learning.

- Sim-to-Real Transfer: Utilizing simulated environments to train AI for real-world applications.

- Neuro-Symbolic AI: Combining neural networks and symbolic reasoning for improved contextual understanding.

- Bottom Line: These approaches are pivotal for AI's expansion into physical domains like robotics, autonomous vehicles, and smart manufacturing.

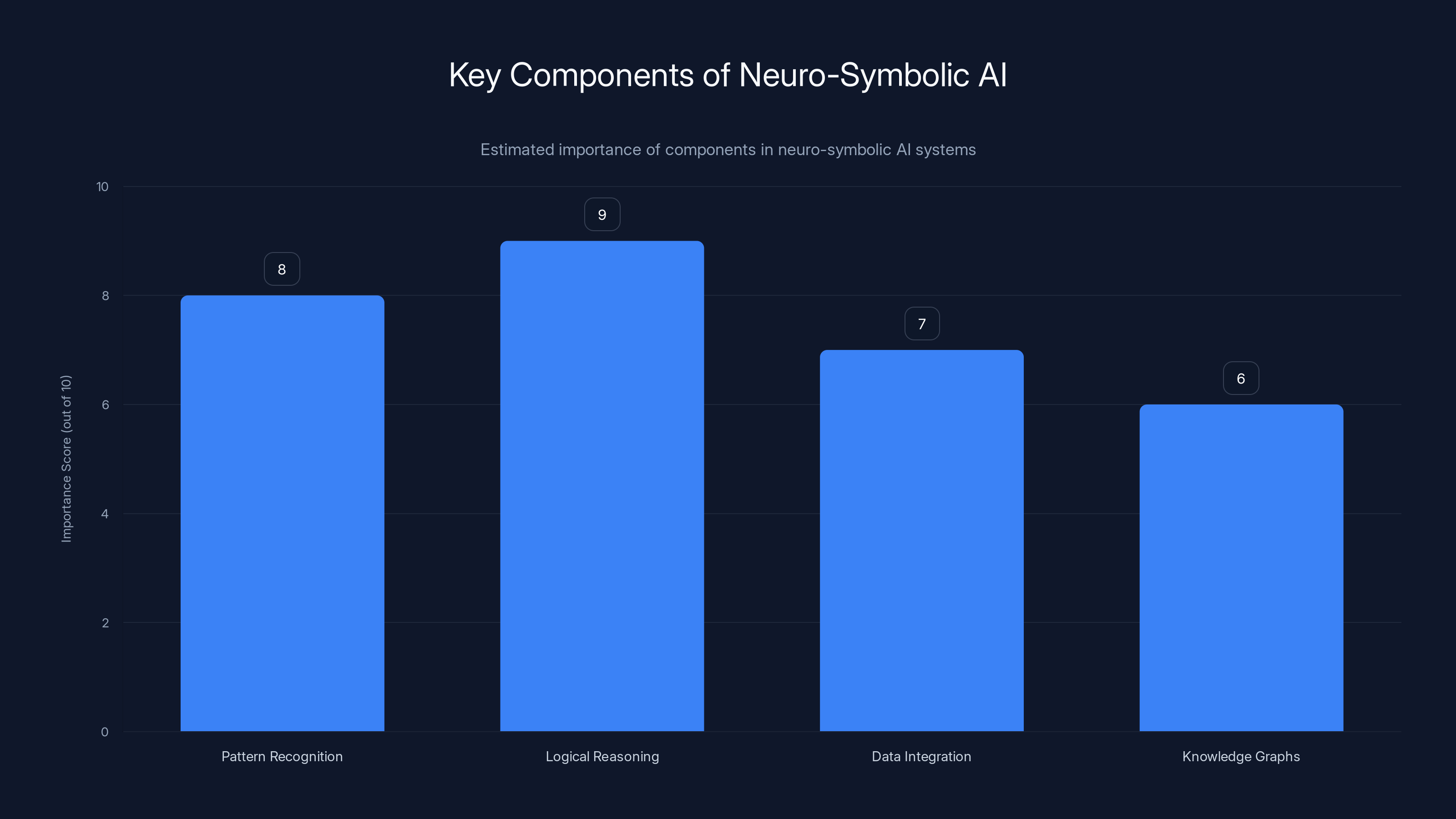

Neuro-symbolic AI systems heavily rely on logical reasoning and pattern recognition, with data integration and knowledge graphs also playing significant roles. (Estimated data)

Introduction

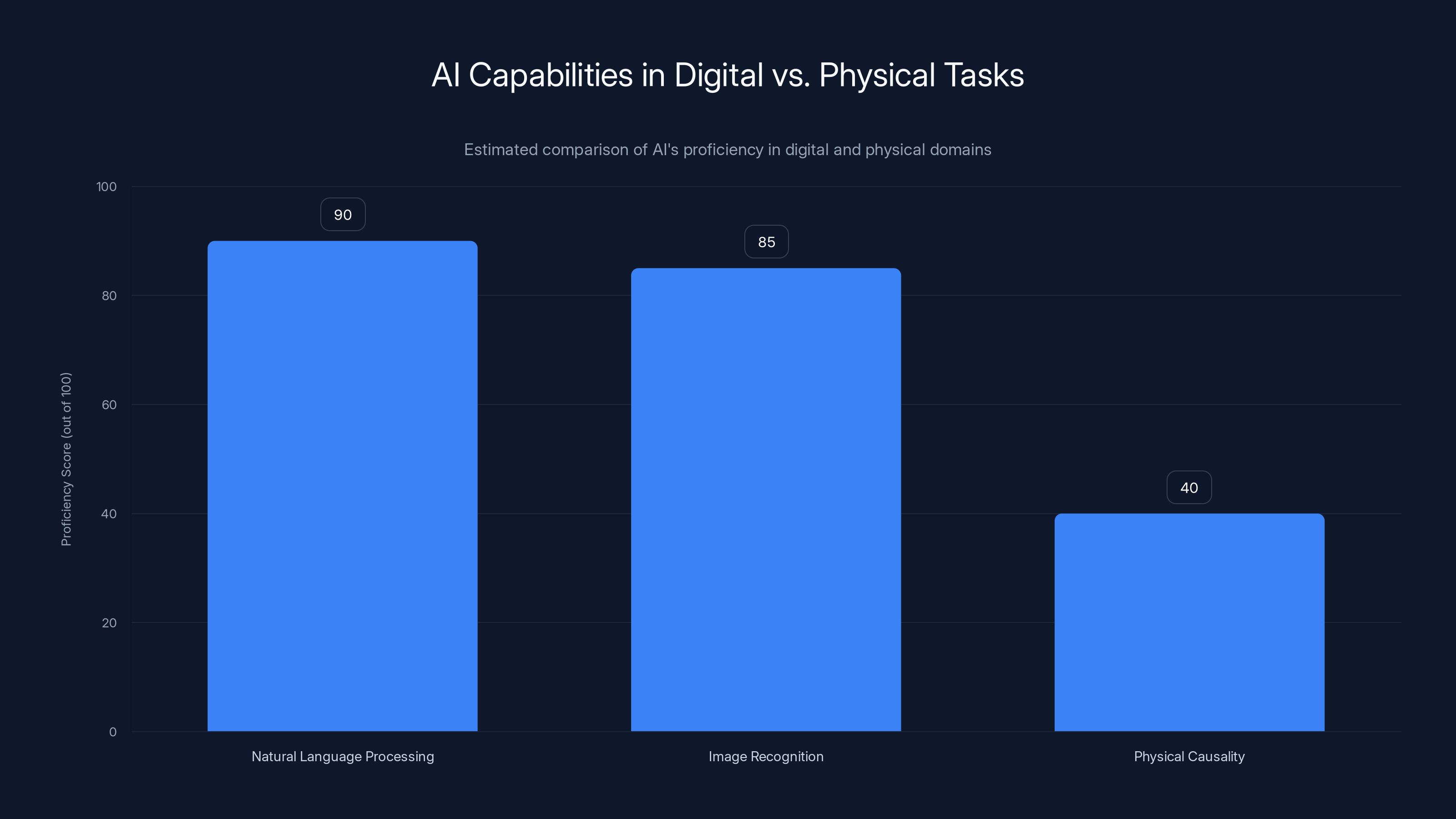

The rise of AI has been marked by impressive achievements in areas like natural language processing and image recognition. Yet the transition from digital prowess to understanding the physical world remains a significant hurdle. Large Language Models (LLMs), for instance, excel at processing abstract knowledge but often struggle to predict the consequences of physical actions.

The Challenge

Most current AI systems have only a weak grasp of physical causality, and this is a major limitation. Consider a robotic arm tasked with assembling parts. It can follow predefined instructions, but it struggles when something unexpected happens, such as a missing component or a misaligned piece.

Researchers are actively exploring ways to equip AI with the tools needed to navigate these challenges. Here, we delve into three promising strategies that are helping AI bridge the physical-digital divide.

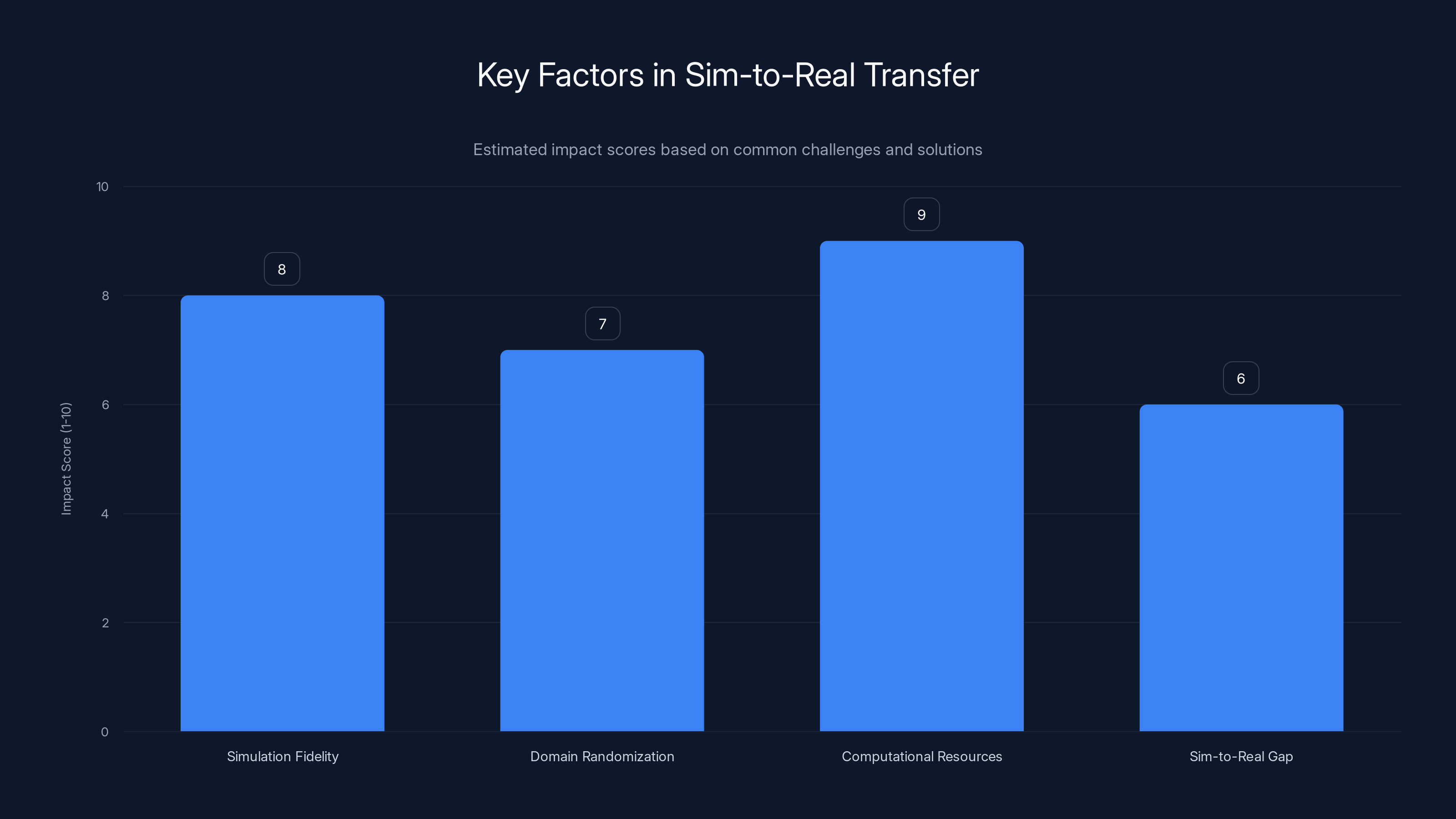

Simulation fidelity and computational resources are crucial for effective sim-to-real transfer, with high impact scores. Estimated data based on common challenges and solutions.

Embodied AI: Learning Through Interaction

Embodied AI refers to systems that learn by interacting with their environment, much like how humans and animals learn. This approach emphasizes sensory input and physical interaction as key components of the learning process.

How It Works

Embodied AI systems are equipped with sensors and actuators that allow them to perceive and act on the physical world. Many are trained with reinforcement learning, in which the system learns by trial and error: it tries an action, receives a reward signal, and gradually improves its ability to perform the task.
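To make trial-and-error learning concrete, here is a minimal sketch of tabular Q-learning, one common reinforcement learning algorithm. The five-cell corridor, the reward of 1 at the right end, and every hyperparameter are illustrative assumptions, not details of any real robot.

```python
import random


def train_corridor_agent(n_states=5, episodes=200, alpha=0.5, gamma=0.9,
                         epsilon=0.1, max_steps=10_000, seed=0):
    """Tabular Q-learning on a toy 1-D corridor (hypothetical environment).

    The agent starts in cell 0 and gets reward 1.0 on reaching the last cell.
    Action index 0 means "step left", index 1 means "step right".
    """
    rng = random.Random(seed)
    moves = (-1, +1)
    goal = n_states - 1
    q = [[0.0, 0.0] for _ in range(n_states)]

    for _episode in range(episodes):
        state = 0
        for _step in range(max_steps):
            if state == goal:
                break
            # Epsilon-greedy: explore occasionally, otherwise exploit.
            # Ties between equal Q-values are broken at random.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                best = max(q[state])
                action = rng.choice([a for a in range(2) if q[state][a] == best])
            # Walls clamp the agent inside the corridor.
            next_state = min(max(state + moves[action], 0), goal)
            reward = 1.0 if next_state == goal else 0.0
            # Move the estimate toward reward + discounted best future value.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


def greedy_policy(q):
    """Read out the preferred action for every non-terminal cell."""
    return ["right" if row[1] > row[0] else "left" for row in q[:-1]]


q_table = train_corridor_agent()
policy = greedy_policy(q_table)
print(policy)
```

A physical robot would replace the corridor with real sensor readings and motor commands, and would typically need function approximation rather than a table, but the reward-driven update is the same idea.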

Example: Boston Dynamics' Spot Robot

Boston Dynamics' Spot robot is a widely cited example of embodied AI in action. Equipped with cameras, sensors, and robotic limbs, Spot can navigate complex terrain, avoid obstacles, and even open doors. It adapts its behavior based on sensory feedback, as highlighted in a recent partnership with FieldAI.

Practical Implementation

- Multi-Modal Sensor Integration: Combine data from cameras, LIDAR, and other sensors for a comprehensive understanding of the environment.

- Reinforcement Learning Algorithms: Implement algorithms that reward desired behaviors, enabling the system to refine its actions over time.
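As a small illustration of multi-modal sensor integration, the sketch below fuses two independent distance estimates by inverse-variance weighting, a standard way to combine noisy measurements. The camera and LiDAR readings and their variances are made-up numbers, and the function name is hypothetical.

```python
def fuse_estimates(readings):
    """Fuse independent (value, variance) estimates by inverse-variance weighting.

    Less noisy sensors (smaller variance) get proportionally more weight.
    Returns the fused value and the variance of the fused estimate.
    """
    weights = [1.0 / variance for _value, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _variance) in zip(weights, readings)) / total_weight
    return fused_value, 1.0 / total_weight


# Hypothetical distance to the same obstacle, in meters.
camera = (2.10, 0.04)  # noisier depth estimate
lidar = (2.00, 0.01)   # more precise range reading

distance, variance = fuse_estimates([camera, lidar])
print(f"fused distance: {distance:.3f} m, variance: {variance:.4f}")
```

The fused estimate lands closer to the LiDAR reading because its variance is lower, and the fused variance is smaller than either sensor's on its own.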

Common Pitfalls

- Data Overload: Managing the vast amount of sensory data can be challenging. Implementing efficient data processing techniques is crucial.

- Real-Time Processing: The AI must process sensory data in real time, within the latency budget of its control loop, to interact responsively.
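One simple way to cope with both pitfalls is to keep only the most recent sensor frames and discard stale ones when the consumer falls behind. The sketch below shows that idea with a bounded buffer; the class name, the capacity, and the integer "frames" are assumptions made for illustration.

```python
from collections import deque


class LatestFrameBuffer:
    """Bounded buffer that favors fresh sensor frames over stale ones."""

    def __init__(self, capacity=3):
        self._frames = deque(maxlen=capacity)
        self.dropped = 0  # frames discarded without being processed

    def push(self, frame):
        # A full deque with maxlen silently evicts its oldest item on append.
        if len(self._frames) == self._frames.maxlen:
            self.dropped += 1
        self._frames.append(frame)

    def pop_latest(self):
        """Return the newest frame and discard anything older."""
        if not self._frames:
            return None
        frame = self._frames.pop()
        self.dropped += len(self._frames)
        self._frames.clear()
        return frame


# Simulate a sensor producing ten frames before the consumer reads once.
buffer = LatestFrameBuffer(capacity=3)
for frame_id in range(10):
    buffer.push(frame_id)

newest = buffer.pop_latest()
print(newest, buffer.dropped)
```

Dropping frames trades completeness for latency, which is usually the right trade for control loops but not for offline logging or training data collection.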

Future Trends

As embodied AI continues to evolve, we can expect more sophisticated robots capable of performing complex tasks in dynamic environments. The integration of AI with Internet of Things (IoT) devices will further enhance its capabilities, as discussed in ASUS's exploration of AI in urban mobility.

Sim-to-Real Transfer: Bridging the Gap

Sim-to-real transfer involves training AI models in simulated environments before deploying them in the real world. This approach helps overcome the limitations of real-world data collection and testing.

How It Works

AI models are trained in virtual environments that mimic real-world conditions. Once the models achieve a certain level of proficiency, they are transferred to physical systems.

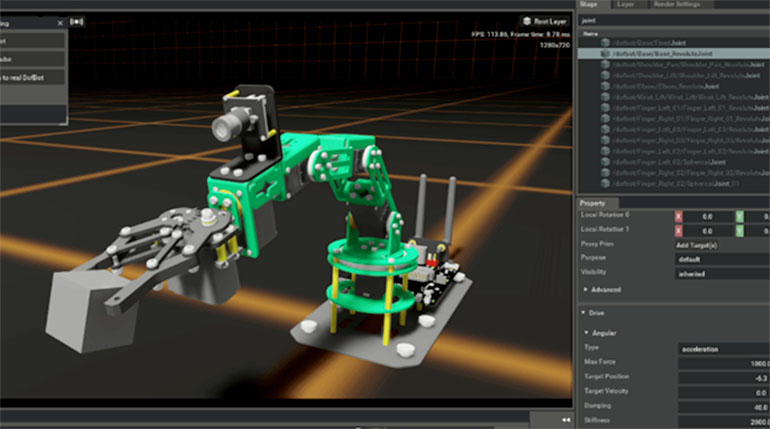

Example: NVIDIA's Isaac Sim

NVIDIA's Isaac Sim platform allows developers to create photorealistic, physics-based simulations for training and testing robots, including autonomous mobile robots. These simulations accelerate the training process while reducing the risks and costs associated with real-world testing, as detailed in research on AI's impact on weather prediction.

Practical Implementation

- Simulation Platforms: Use platforms like Unity or Unreal Engine to create detailed simulations.

- Domain Randomization: Vary simulation parameters such as lighting, textures, friction, and mass between training episodes so the model does not overfit to a single simulated world.
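Domain randomization can be sketched in a few lines: before each training episode, draw fresh physical and visual parameters from predefined ranges. The parameter names and ranges below are hypothetical, and the sketch stops at sampling; passing the values to a simulator is left out because that step depends on the platform.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """One randomized configuration of a simulated scene (illustrative fields)."""
    friction: float
    mass_kg: float
    light_intensity: float
    camera_noise_std: float


# Hypothetical ranges; real projects tune these against measurements of the target hardware.
PARAM_RANGES = {
    "friction": (0.4, 1.2),
    "mass_kg": (0.8, 1.5),
    "light_intensity": (0.3, 1.0),
    "camera_noise_std": (0.0, 0.05),
}


def sample_sim_params(rng):
    """Draw every parameter uniformly from its range."""
    return SimParams(**{name: rng.uniform(low, high)
                        for name, (low, high) in PARAM_RANGES.items()})


rng = random.Random(42)
episode_params = [sample_sim_params(rng) for _ in range(100)]
print(episode_params[0])
```

Because the policy never sees the same world twice, it is pushed to rely on features that hold across the whole range, which ideally includes the real world.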

Common Pitfalls

- Sim-to-Real Gap: Discrepancies between simulated and real-world conditions can degrade performance after deployment. Fine-tuning on real-world data is often needed.

- Computational Resources: High-quality simulations require significant computational power.

Future Trends

Advancements in graphics technology and cloud computing will enhance the fidelity of simulations, making sim-to-real transfer more effective. This approach will be pivotal in fields like autonomous driving and drone navigation, as highlighted by Fortune's coverage of AI in infrastructure.

AI excels in digital tasks like NLP and image recognition but struggles with physical causality. Estimated data reflects current trends.

Neuro-Symbolic AI: Merging Neural and Symbolic Worlds

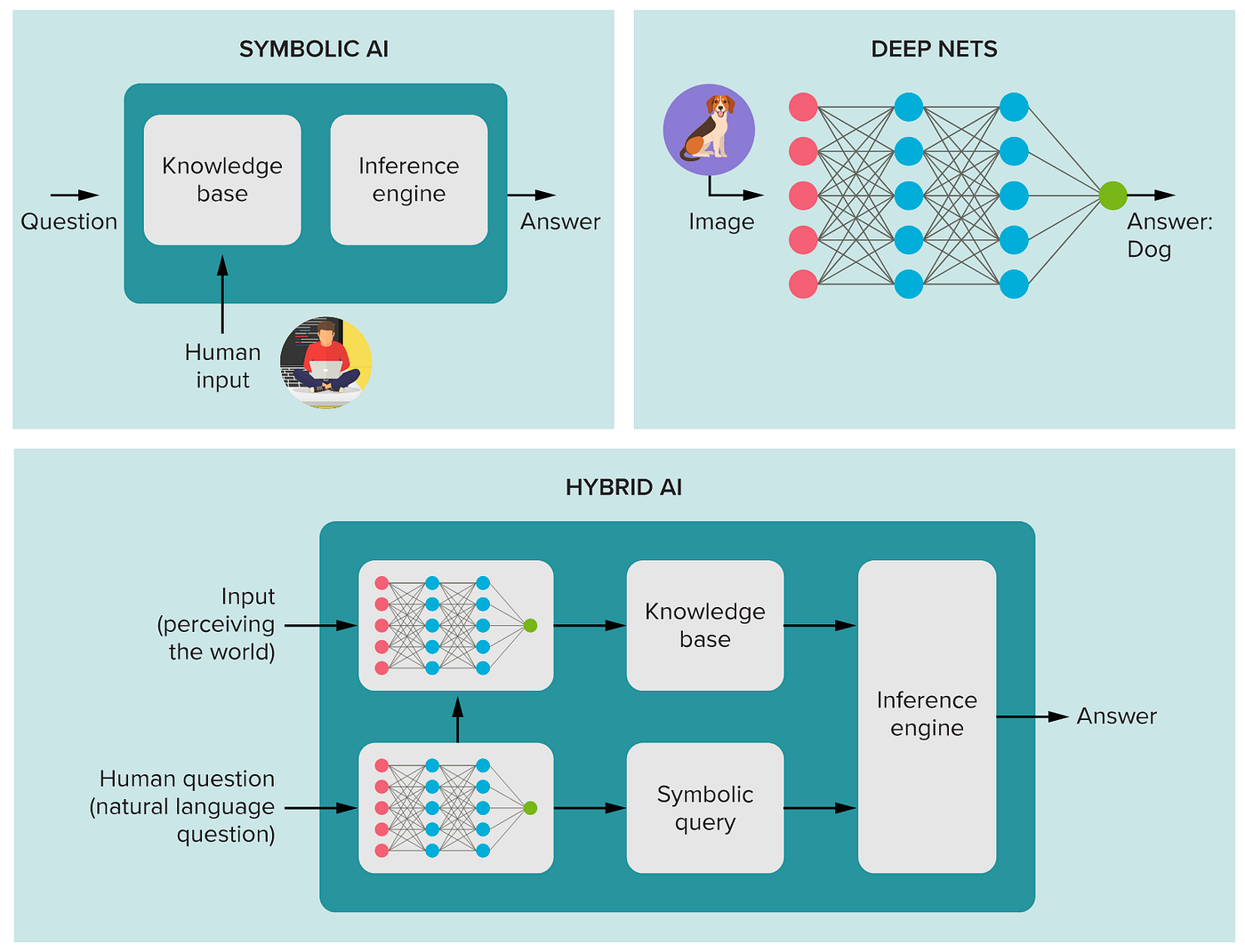

Neuro-symbolic AI combines the pattern recognition capabilities of neural networks with the logical reasoning strengths of symbolic AI. This hybrid approach enhances AI's ability to understand context and make informed decisions.

How It Works

Neuro-symbolic systems integrate deep learning models with symbolic reasoning frameworks. Neural networks process sensory data, while symbolic systems provide a structured understanding of the world.
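The division of labor can be sketched as a two-stage pipeline: a perception stage emits candidate facts with confidence scores, and a rule engine reasons over the facts that pass a threshold. In the sketch below the "neural" stage is a hard-coded stand-in rather than a real model, and the facts, rules, and threshold are invented for illustration.

```python
def perceive(frame_id):
    """Stand-in for a neural detector: returns (fact, confidence) pairs.

    A real system would run a trained model here; these outputs are hard-coded.
    """
    fake_outputs = {
        "frame_001": [
            (("cup", "on", "table"), 0.93),
            (("table", "is", "tilted"), 0.81),
            (("cup", "is", "glued"), 0.12),
        ],
    }
    return fake_outputs[frame_id]


def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Symbolic knowledge about simple physical causality (illustrative rules).
RULES = [
    (frozenset({("cup", "on", "table"), ("table", "is", "tilted")}), ("cup", "will", "slide")),
    (frozenset({("cup", "will", "slide")}), ("cup", "may", "fall")),
]

CONFIDENCE_THRESHOLD = 0.5
observed = {fact for fact, confidence in perceive("frame_001")
            if confidence >= CONFIDENCE_THRESHOLD}
derived = forward_chain(observed, RULES)
print(sorted(derived - observed))
```

The rule engine derives consequences that the perception stage never observed directly, which is the kind of causal inference pure pattern recognition tends to miss.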

Example: IBM's Project Debater

IBM's Project Debater is often described as combining learned language models with structured reasoning to engage in complex debates with humans. It processes vast amounts of textual information and assembles the results into coherent arguments, as demonstrated in Georgetown University's exploration of AI's physical capabilities.

Practical Implementation

- Knowledge Graphs: Use knowledge graphs to represent relationships between entities and concepts.

- Hybrid Architectures: Develop architectures that seamlessly integrate neural and symbolic components.
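A knowledge graph can be as simple as a list of (subject, predicate, object) triples. The sketch below stores a few hypothetical facts about assembly parts and answers two kinds of questions: pattern lookups and transitive "is_a" queries. The entities and relation names are assumptions chosen for the example.

```python
# Hypothetical triples describing parts on an assembly line.
KNOWLEDGE_GRAPH = [
    ("bolt", "is_a", "fastener"),
    ("fastener", "is_a", "component"),
    ("bolt", "fits", "nut"),
    ("nut", "is_a", "fastener"),
]


def query(triples, subject=None, predicate=None, obj=None):
    """Return all triples matching the given fields; None acts as a wildcard."""
    return [
        (s, p, o) for s, p, o in triples
        if (subject is None or s == subject)
        and (predicate is None or p == predicate)
        and (obj is None or o == obj)
    ]


def ancestors(triples, entity, relation="is_a"):
    """Follow a relation transitively, e.g. bolt -> fastener -> component."""
    found = set()
    frontier = [entity]
    while frontier:
        current = frontier.pop()
        for s, p, o in triples:
            if s == current and p == relation and o not in found:
                found.add(o)
                frontier.append(o)
    return found


fits = query(KNOWLEDGE_GRAPH, predicate="fits")
bolt_types = ancestors(KNOWLEDGE_GRAPH, "bolt")
print(fits, sorted(bolt_types))
```

In a hybrid architecture, a neural component might recognize that an object is a bolt, and the graph then supplies what that implies, such as which parts it fits.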

Common Pitfalls

- Complexity: Designing and implementing hybrid systems can be technically challenging.

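- Data Integration: Neural components output continuous scores, while symbolic components expect discrete facts. Mapping between the two, for example by thresholding confidences, needs care to avoid losing or distorting information.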

Future Trends

Neuro-symbolic AI is poised to revolutionize areas like natural language understanding and cognitive robotics. As AI systems become more adept at reasoning, they will be better equipped to tackle complex real-world problems, as discussed in Tufts University's research on AI models.

Conclusion

The journey to equip AI with an understanding of the physical world is fraught with challenges, but the potential rewards are immense. Embodied AI, sim-to-real transfer, and neuro-symbolic AI represent significant strides toward achieving this goal.

As these technologies continue to evolve, we can expect AI systems to play an increasingly integral role in fields such as robotics, autonomous driving, and smart manufacturing. By embracing these advancements, we can unlock new possibilities for innovation and efficiency in the physical world, as highlighted in the World Economic Forum's insights on AI's impact on jobs.

FAQ

What is embodied AI?

Embodied AI refers to artificial intelligence systems that learn through physical interaction with their environment, similar to how humans and animals learn, as explored in research by NTU Singapore.

How does sim-to-real transfer work?

Sim-to-real transfer involves training AI models in simulated environments before deploying them in the real world, helping to bridge the gap between virtual training and physical application, as detailed in Allen Institute's blog.

What is neuro-symbolic AI?

Neuro-symbolic AI combines the strengths of neural networks and symbolic reasoning to enhance AI's contextual understanding and decision-making capabilities, as discussed in Stony Brook University's workshop.

What are the benefits of these AI advancements?

These advancements enable AI to better comprehend and interact with the physical world, leading to improvements in robotics, autonomous vehicles, and smart manufacturing, as highlighted in Vocal Media's analysis of the robotics market.

How do these technologies impact everyday life?

By enhancing AI's understanding of the physical world, these technologies pave the way for more intelligent and adaptive systems that can improve efficiency, safety, and innovation across various industries, as noted in RSM's insights on AI in life sciences.

What challenges do researchers face in developing these AI systems?

Challenges include managing data complexity, ensuring real-time processing, bridging the sim-to-real gap, and designing hybrid architectures that integrate neural and symbolic components effectively, as discussed in VentureBeat's exploration of AI learning.

Key Takeaways

- Embodied AI integrates sensory input and physical interaction for enhanced learning.

- Sim-to-real transfer trains AI in virtual environments for real-world applications.

- Neuro-symbolic AI combines neural networks and symbolic reasoning for better context understanding.

- These approaches are critical for AI's expansion into physical domains like robotics and autonomous vehicles.

- The integration of AI with IoT devices will further enhance its capabilities.
