Trust in AI: An Evolving Landscape

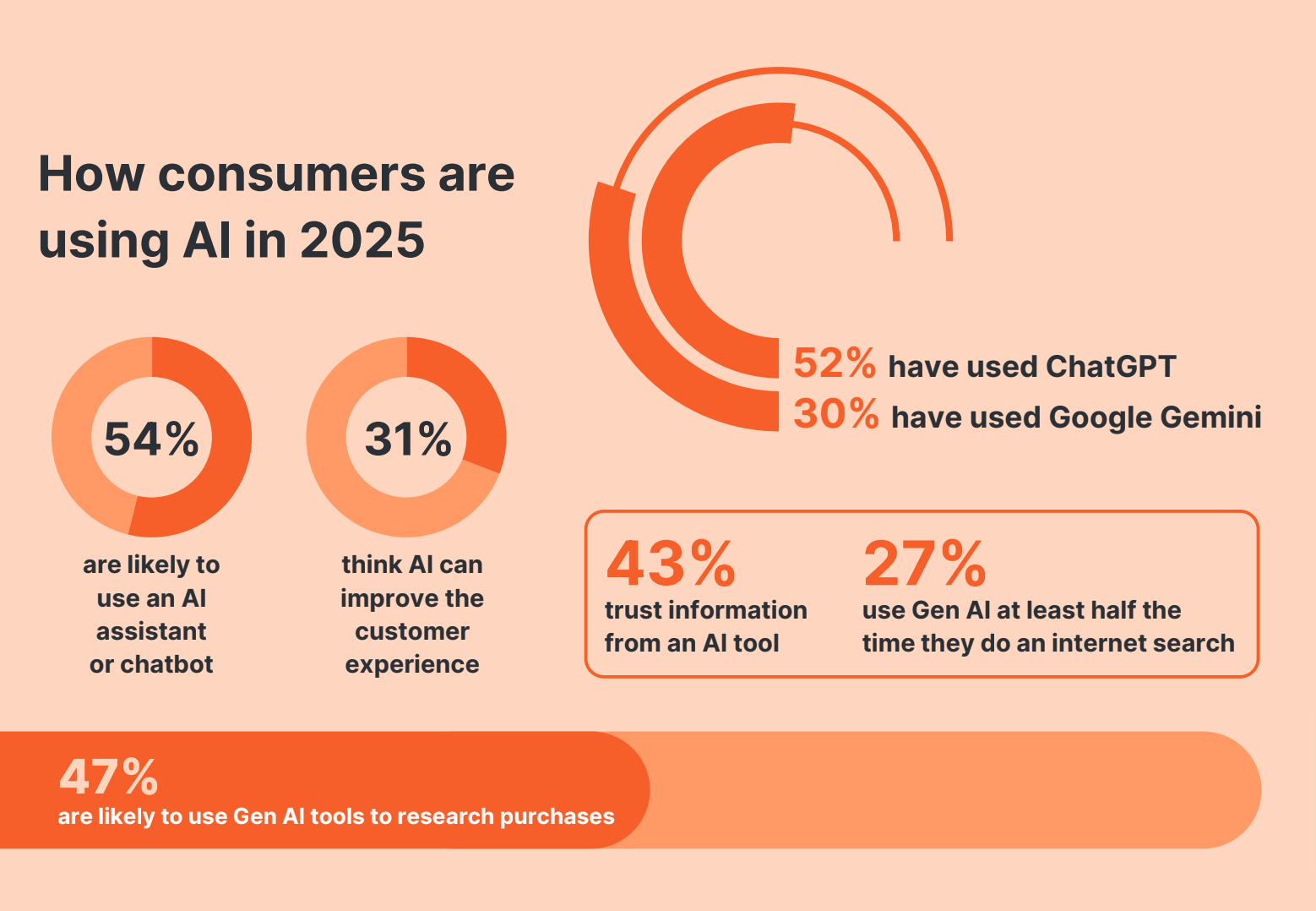

The conversation around artificial intelligence (AI) has shifted dramatically over the past decade. Once a concept confined to science fiction, AI is now part of daily life, shaping everything from how we shop online to how our cars find the best route to work. Yet despite these advances, a recent study points to a gap between what AI can do and how far the public trusts it. This article examines that trust gap and why people increasingly accept AI but remain hesitant to hand over full control.

TL;DR

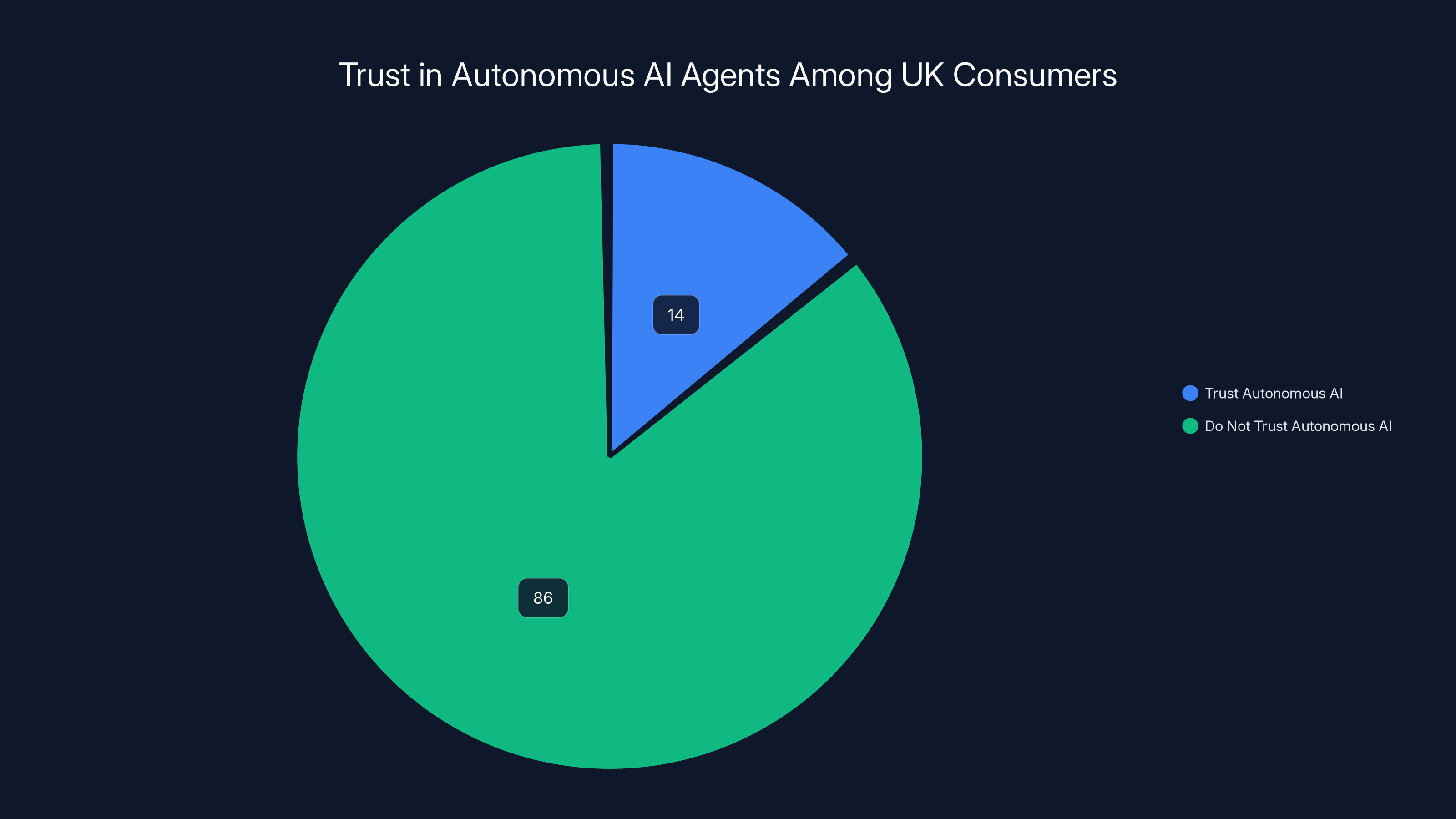

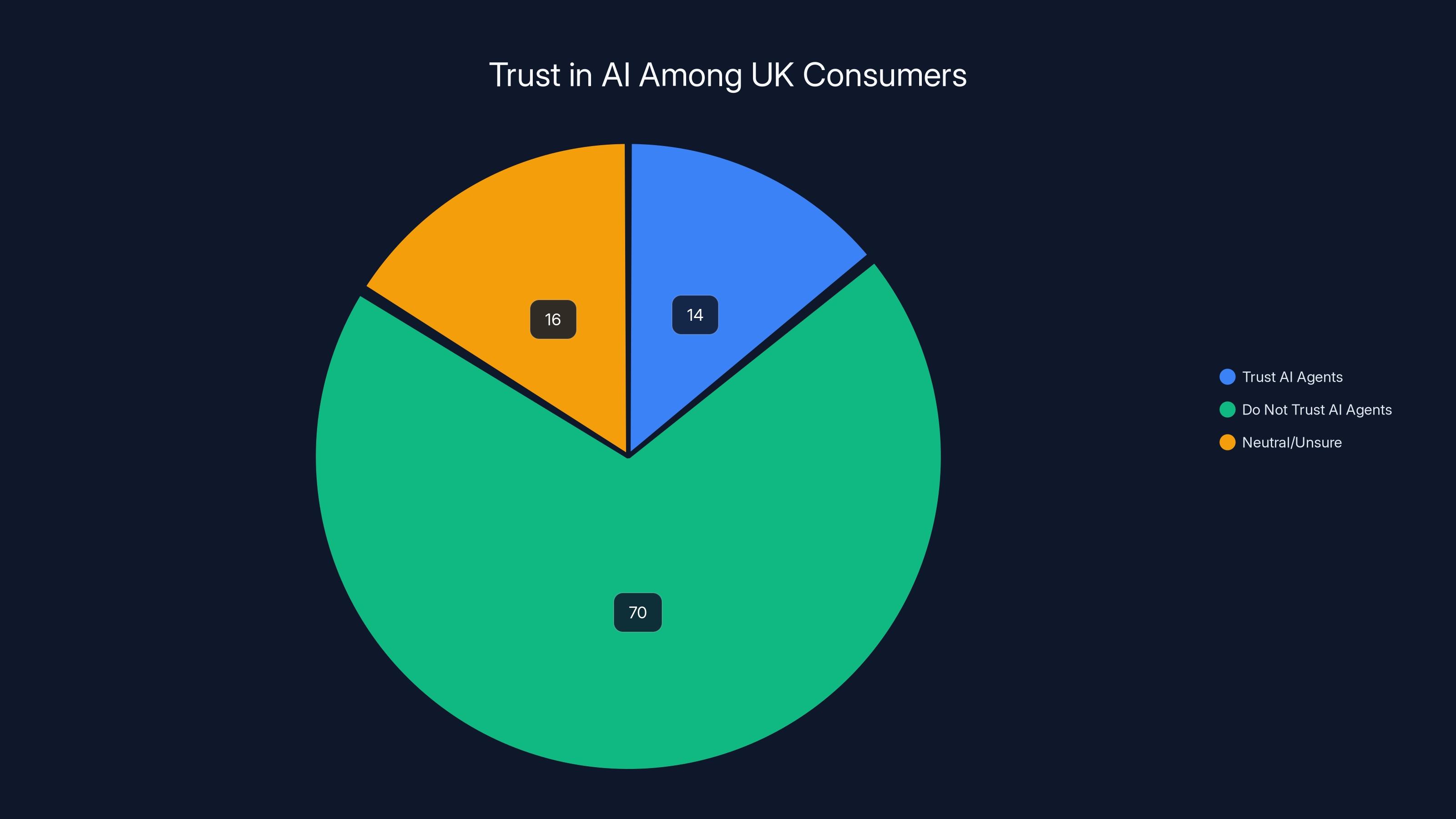

- 14% of UK consumers trust autonomous AI agents: A significant gap exists between AI capabilities and public trust.

- Control and transparency are key concerns: Users want more insight and influence over AI decisions.

- AI's role in everyday life is expanding: Adoption is rising, yet full autonomy is not widely accepted.

- Practical implementation requires balance: Integrating AI without losing human oversight is crucial.

- Future trends emphasize ethical AI: Responsible AI development will be critical moving forward.

Only 14% of UK consumers trust autonomous AI agents, highlighting a significant trust gap. Estimated data based on current trends.

The Current State of AI Acceptance

AI technologies have made considerable inroads into various sectors, increasing efficiency and enabling new capabilities. However, as AI's presence grows, so does the scrutiny of its applications. According to a recent study, only 14% of UK consumers say they would trust autonomous AI agents, indicating a significant trust gap.

Understanding the Trust Gap

This hesitancy stems from concerns over control, accountability, and transparency: users are wary of AI systems making critical decisions without human oversight. The apprehension is most evident in sensitive areas such as autonomous vehicles and healthcare, as a report on AI in surgery notes.

Only 14% of UK consumers trust autonomous AI agents, while 70% express distrust, highlighting a significant trust gap. Figures for the neutral/unsure category are estimated.

Practical AI Implementations and Their Challenges

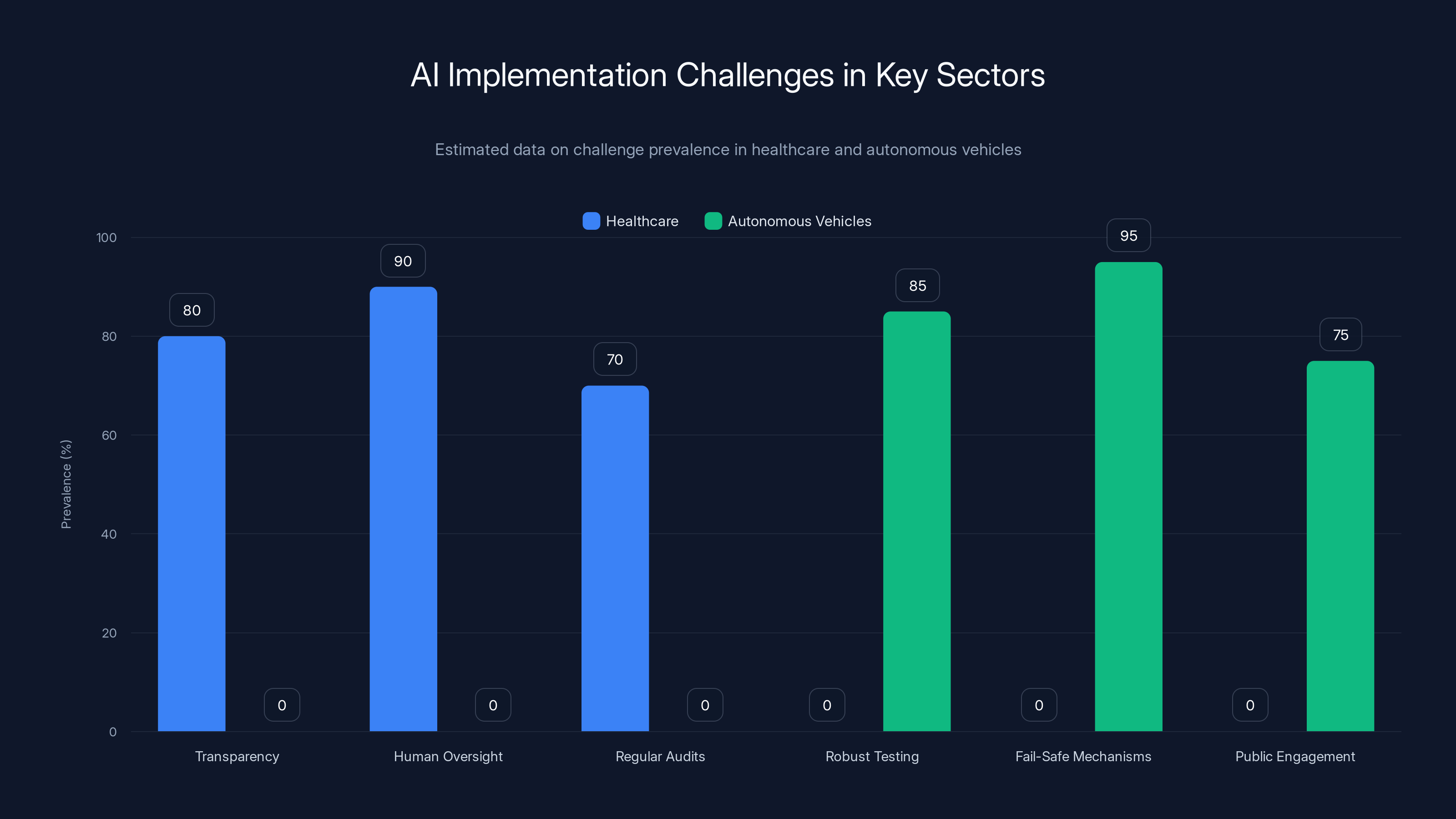

Case Study: Healthcare

In healthcare, AI is used for diagnosis, for predicting patient outcomes, and even for assisting in surgery. While these applications have shown promising results, the trust gap persists because of ethical concerns and the potential for errors, as discussed in a study on human oversight in AI.

Implementation Guide:

- Ensure Transparency: Clearly communicate how AI models make decisions.

- Maintain Human Oversight: Integrate AI as an assistive tool rather than a replacement.

- Regular Audits: Conduct thorough audits to ensure AI systems are functioning as intended.
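To make "assistive tool" and "regular audits" concrete, here is a minimal Python sketch. It assumes a hypothetical setup in which every AI suggestion is logged next to the clinician's final decision; the names `AuditLog`, `record`, and `override_rate` are illustrative and not part of any real clinical system. The AI output is only ever stored as a suggestion, and the clinician's decision is what takes effect.

```python
from dataclasses import dataclass, field


@dataclass
class AuditEntry:
    """One case: what the AI suggested and what the clinician decided."""
    case_id: str
    ai_suggestion: str
    ai_confidence: float
    clinician_decision: str


@dataclass
class AuditLog:
    """Hypothetical audit trail for an assistive (not autonomous) AI tool."""
    entries: list[AuditEntry] = field(default_factory=list)

    def record(self, case_id: str, ai_suggestion: str, ai_confidence: float,
               clinician_decision: str) -> str:
        # The clinician's decision is always the one that takes effect;
        # the AI output is kept only so it can be reviewed later.
        self.entries.append(AuditEntry(case_id, ai_suggestion, ai_confidence,
                                       clinician_decision))
        return clinician_decision

    def override_rate(self) -> float:
        """Fraction of logged cases where the clinician disagreed with the AI."""
        if not self.entries:
            return 0.0
        overrides = sum(1 for e in self.entries
                        if e.ai_suggestion != e.clinician_decision)
        return overrides / len(self.entries)


# Made-up cases for illustration only.
log = AuditLog()
log.record("case-1", "benign", 0.92, "benign")
log.record("case-2", "malignant", 0.61, "benign")
log.record("case-3", "benign", 0.88, "benign")
log.record("case-4", "malignant", 0.95, "malignant")
print(f"Override rate: {log.override_rate():.0%}")
```

In a periodic audit, a rising override rate could flag that the model needs review; what counts as "rising" is a policy decision that this sketch leaves open.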

Case Study: Autonomous Vehicles

Autonomous vehicles are among the most visible applications of AI and promise to transform transportation. However, incidents involving them have highlighted the need for better safety measures and fail-safes, as leaders in Austin have emphasized.

Implementation Guide:

- Robust Testing: Conduct extensive real-world testing in varied conditions.

- Fail-Safe Mechanisms: Implement systems that ensure vehicle control can be manually overridden.

- Public Engagement: Educate the public about the benefits and limitations of autonomous vehicles.
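The fail-safe item above describes a priority order for who controls the vehicle. One way to express that order is an arbiter that always gives a human override precedence and falls back to a safe stop if the autonomy software stops reporting in. The Python sketch below is only an illustration of that priority rule: the `Command` fields, the heartbeat timeout, and the safe-stop values are hypothetical, and a production vehicle would handle this in dedicated, safety-rated control software.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Source(Enum):
    HUMAN = "human"
    AUTONOMY = "autonomy"
    SAFE_STOP = "safe_stop"


@dataclass
class Command:
    steering: float  # normalized: -1.0 (full left) to 1.0 (full right)
    throttle: float  # normalized: 0.0 to 1.0
    brake: float     # normalized: 0.0 to 1.0


# Simplified fallback; a real minimal-risk maneuver would decelerate gradually.
SAFE_STOP_COMMAND = Command(steering=0.0, throttle=0.0, brake=0.5)


def arbitrate(human_override: bool,
              human_cmd: Optional[Command],
              autonomy_cmd: Optional[Command],
              last_heartbeat_s: float,
              now_s: float,
              heartbeat_timeout_s: float = 0.2) -> tuple[Source, Command]:
    """Pick which command reaches the actuators (hypothetical priority rule).

    1. A human override always wins.
    2. Otherwise the autonomy command is used only if its heartbeat is fresh.
    3. Otherwise fall back to a safe stop.
    """
    if human_override and human_cmd is not None:
        return Source.HUMAN, human_cmd
    autonomy_healthy = (now_s - last_heartbeat_s) <= heartbeat_timeout_s
    if autonomy_cmd is not None and autonomy_healthy:
        return Source.AUTONOMY, autonomy_cmd
    return Source.SAFE_STOP, SAFE_STOP_COMMAND


cruise = Command(steering=0.0, throttle=0.3, brake=0.0)
manual = Command(steering=-0.2, throttle=0.0, brake=0.4)

source, _ = arbitrate(True, manual, cruise, last_heartbeat_s=10.0, now_s=10.05)
print(source.value)
source, _ = arbitrate(False, None, cruise, last_heartbeat_s=10.0, now_s=10.5)
print(source.value)
```

Checking the override first means a driver can take control even when the autonomy software is healthy, which is the "manually overridden" guarantee the guide calls for.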

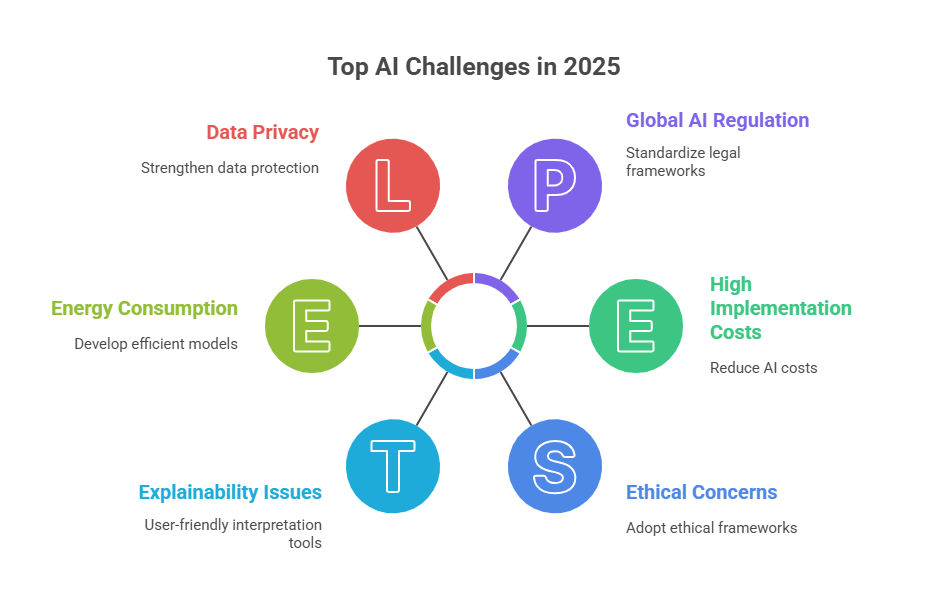

Common Pitfalls and Solutions in AI Adoption

Pitfall: Lack of Transparency

One major pitfall is the 'black box' nature of many AI systems. Users are often unaware of how decisions are made, leading to mistrust.

Solution: Develop explainable AI models that provide users with clear insights into decision-making processes, as suggested by research on AI transparency.
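One simple form of explainability is a model whose output breaks down into per-feature contributions. The Python sketch below uses a hand-weighted linear (logistic) score, chosen as an assumption because each feature's contribution is just its weight times its value; the loan-approval framing, feature names, and weights are all hypothetical. More complex models usually need post-hoc tools such as SHAP or LIME to produce comparable explanations.

```python
import math

# Hypothetical weights for an illustrative loan-approval score.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "years_employed": 0.2}
BIAS = -1.5


def explain(applicant: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Return the approval probability and each feature's contribution to the logit."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Sort by absolute impact so the biggest drivers are listed first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


prob, reasons = explain({"income_k": 50.0, "debt_ratio": 0.4, "years_employed": 3.0})
print(f"Approval probability: {prob:.2f}")
for feature, contribution in reasons:
    print(f"  {feature}: {contribution:+.2f}")
```

Because the contributions plus the bias add up exactly to the model's logit, the explanation shown to the user is faithful to what the model computed rather than an approximation of it.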

Pitfall: Over-reliance on AI

Organizations may become overly reliant on AI, neglecting the need for human intervention in critical situations.

Solution: Establish protocols that keep humans in the decision-making loop, for example by requiring human sign-off on high-stakes or low-confidence decisions.
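One way to encode such a protocol is an escalation rule: the system may act on its own only when its confidence is high and the stakes are low, and everything else goes to a person. The sketch below assumes the model reports a confidence score and that each decision carries a hypothetical `high_stakes` flag set by policy; the 0.9 threshold is an illustrative value, not a recommendation.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    label: str
    confidence: float  # model's own score in [0, 1]
    high_stakes: bool  # hypothetical policy flag, e.g. medical or financial impact


def route(decision: Decision, auto_threshold: float = 0.9) -> str:
    """Return 'auto' if the system may act alone, else 'human_review'."""
    if decision.high_stakes:
        return "human_review"  # high-stakes decisions always need a person
    if decision.confidence < auto_threshold:
        return "human_review"  # low confidence: escalate rather than guess
    return "auto"


queue = [
    Decision("approve_refund", 0.97, high_stakes=False),
    Decision("approve_refund", 0.72, high_stakes=False),
    Decision("deny_claim", 0.99, high_stakes=True),
]
for d in queue:
    print(d.label, d.confidence, "->", route(d))
```

Model confidence scores are not always well calibrated, so any threshold like this would need to be checked against observed error rates before it is trusted.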

Pitfall: Ethical Concerns

AI systems can inadvertently perpetuate biases, such as those present in their training data, leading to unfair outcomes.

Solution: Adopt ethical guidelines and train AI models on diverse, representative datasets to reduce bias, as highlighted in a study on AI ethics.
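Reducing bias starts with measuring it. Below is a minimal Python sketch of one common check, the demographic parity difference: the gap in positive-outcome rates between groups. The group labels and outcomes are made-up data, and this is only one of several fairness metrics, each with its own trade-offs.

```python
from collections import defaultdict


def selection_rates(groups: list[str], outcomes: list[int]) -> dict[str, float]:
    """Share of positive outcomes (1s) per group."""
    if len(groups) != len(outcomes):
        raise ValueError("groups and outcomes must be the same length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, outcome in zip(groups, outcomes):
        totals[group] += 1
        positives[group] += outcome
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(groups: list[str], outcomes: list[int]) -> float:
    """Largest gap in selection rate between any two groups (0.0 means parity)."""
    rates = selection_rates(groups, outcomes)
    return max(rates.values()) - min(rates.values())


# Made-up example: model decisions (1 = approved) for applicants in groups A and B.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
outcomes = [1, 1, 1, 0, 1, 0, 0, 0]
print(selection_rates(groups, outcomes))
print(demographic_parity_difference(groups, outcomes))
```

A large gap does not prove unfairness on its own, but it is a signal to investigate the training data and model before deployment.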

Estimated data shows that transparency and human oversight are key challenges in healthcare AI, while robust testing and fail-safe mechanisms are critical for autonomous vehicles.

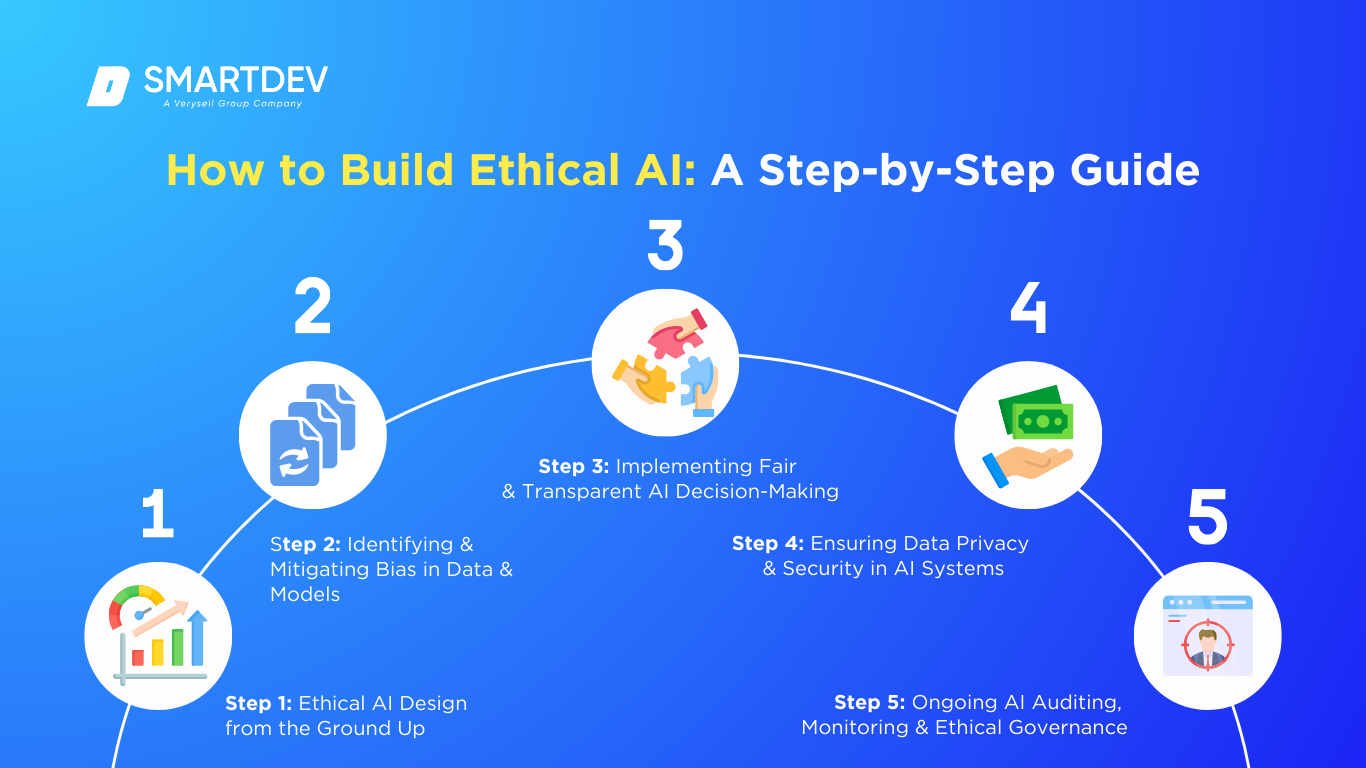

Future Trends and Recommendations

Trend: Ethical AI Development

As AI continues to evolve, ethical considerations will become increasingly important. Future advancements must prioritize ethical AI development to maintain public trust, as noted by the National Academies.

Recommendations:

- Inclusive Design: Involve diverse stakeholders in AI development to bring a broader range of perspectives into the process.

- Continuous Monitoring: Implement systems for ongoing monitoring and evaluation of AI impacts.
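Continuous monitoring often includes checking whether the data a model sees in production has drifted away from what it was trained on. The sketch below computes the Population Stability Index (PSI) over pre-binned shares, PSI = sum over bins of (actual - expected) * ln(actual / expected). The bin shares are invented, and the 0.1 and 0.25 alert bands are a widely used rule of thumb rather than a formal standard.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions (shares per bin)."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) and division by zero
        total += (a - e) * math.log(a / e)
    return total


def drift_status(score: float) -> str:
    # Rule-of-thumb bands; tune them to the use case.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate drift: investigate"
    return "significant drift: review model"


baseline = [0.25, 0.25, 0.25, 0.25]   # share of inputs per bin at training time
this_week = [0.10, 0.20, 0.30, 0.40]  # share of inputs per bin in production
score = psi(baseline, this_week)
print(f"PSI = {score:.3f} ({drift_status(score)})")
```

Run on a schedule, a check like this turns "ongoing monitoring" into a concrete alert that prompts a human to look at the model.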

Trend: Hybrid AI Systems

Hybrid systems, which combine human and AI capabilities, present a promising future direction. These systems leverage the strengths of both human intuition and AI efficiency.

Recommendations:

- Collaborative Interfaces: Design interfaces that facilitate seamless collaboration between humans and AI.

- Adaptive Learning: Develop AI systems that learn from human interactions and adapt accordingly.
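Learning from human interactions can be as simple as updating a model each time a person corrects it. The sketch below uses an online logistic-regression update (one stochastic gradient step per human-supplied label), which is one standard technique for this but not the only one. The feature vectors, the learning rate, and the idea of treating each human correction as a training label are assumptions for illustration; `OnlineModel` is a hypothetical class.

```python
import math


class OnlineModel:
    """Tiny logistic-regression model updated one human-labelled example at a time."""

    def __init__(self, n_features: int, learning_rate: float = 0.5) -> None:
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, x: list[float]) -> float:
        z = self.bias + sum(w * xi for w, xi in zip(self.weights, x))
        return 1.0 / (1.0 + math.exp(-z))

    def learn_from_feedback(self, x: list[float], human_label: int) -> None:
        """One SGD step on log-loss using the label a human supplied (0 or 1)."""
        error = self.predict_proba(x) - human_label  # d(log-loss)/dz
        self.weights = [w - self.learning_rate * error * xi
                        for w, xi in zip(self.weights, x)]
        self.bias -= self.learning_rate * error


model = OnlineModel(n_features=2)
case = [1.0, 0.5]
before = model.predict_proba(case)  # untrained model is undecided
for _ in range(5):
    model.learn_from_feedback(case, human_label=1)  # a reviewer keeps saying "yes"
after = model.predict_proba(case)
print(f"before={before:.2f}, after={after:.2f}")
```

In a deployed hybrid system, feedback would likely be reviewed or batched before it updates the model, since learning directly from every interaction can also absorb human mistakes.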

Conclusion

The trust gap between AI capabilities and public acceptance highlights the need for a balanced approach to AI integration. By focusing on transparency, accountability, and ethical development, we can foster greater trust in AI systems and harness their full potential.

Bottom Line: As AI technologies become more embedded in our lives, building trust through transparency and ethical practices will be essential.

FAQ

What is AI?

AI, or artificial intelligence, refers to systems or machines that perform tasks normally associated with human intelligence and, in many cases, can improve over time based on the data they collect.

How does AI work?

AI uses algorithms and data to perform tasks that typically require human judgment. Many modern systems rely on machine learning, including deep learning, which learns patterns from data and uses them to make predictions.

What are the benefits of AI?

AI offers numerous benefits, including increased efficiency, cost reduction, better decision-making, and the ability to handle complex data sets, as supported by McKinsey.

Why is transparency important in AI?

Transparency ensures users understand how AI systems make decisions, which is crucial for building trust and ensuring accountability.

How can organizations improve AI trust?

Organizations can improve trust by implementing explainable AI models, maintaining human oversight, and adhering to ethical guidelines in AI development.

What does the future hold for AI?

The future of AI is likely to involve more ethical and transparent systems, hybrid human-AI collaboration, and deeper integration across industries.

How can AI bias be reduced?

AI bias can be reduced by using diverse datasets, involving diverse teams in the development process, and implementing ethical guidelines for AI training.

Are autonomous vehicles safe?

While autonomous vehicles have the potential to reduce accidents, they require robust testing and fail-safe mechanisms to ensure safety and reliability, as highlighted in a report on the autonomous vehicle market.

Key Takeaways

- Only 14% of UK consumers trust autonomous AI agents.

- Transparency and accountability are crucial for building AI trust.

- AI's role is expanding, but full autonomy is not yet widely accepted.

- Practical implementation requires a balance between AI and human oversight.

- Future trends emphasize ethical and transparent AI development.


!['Trust and AI: Technological Capability Outpaces Acceptance [2025]'](https://tryrunable.com/blog/trust-and-ai-technological-capability-outpaces-acceptance-20/image-1-1778067233466.jpg)