Trust and Judgment: Navigating the AI-Driven SOC Landscape [2025]

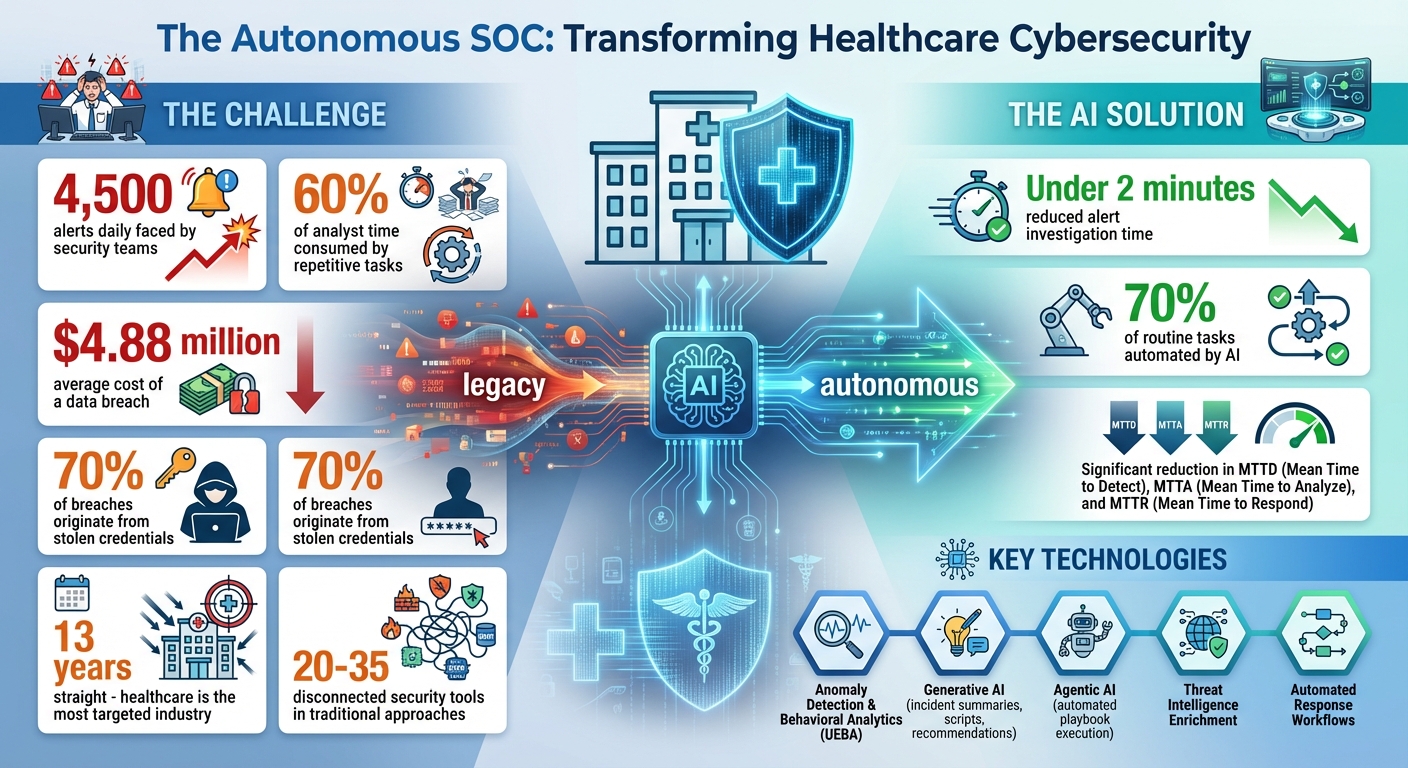

The integration of AI-driven solutions in Security Operations Centers (SOCs) is transforming how cybersecurity threats are identified and managed. The promise of these innovations lies in their ability to handle vast amounts of data, automate routine tasks, and provide predictive insights that can preempt threats before they materialize. However, the effectiveness of AI in SOCs hinges heavily on trust and judgment.

TL;DR

- Trust in AI: Building trust requires explainable AI and transparency in decision-making processes.

- Human Oversight: Essential for validating AI decisions and managing false positives.

- Future Trends: AI will evolve to handle more complex threats autonomously.

- Common Pitfalls: Over-reliance on AI can lead to complacency in threat management.

- Implementation Guide: Start with small-scale AI integration and gradually increase scope.

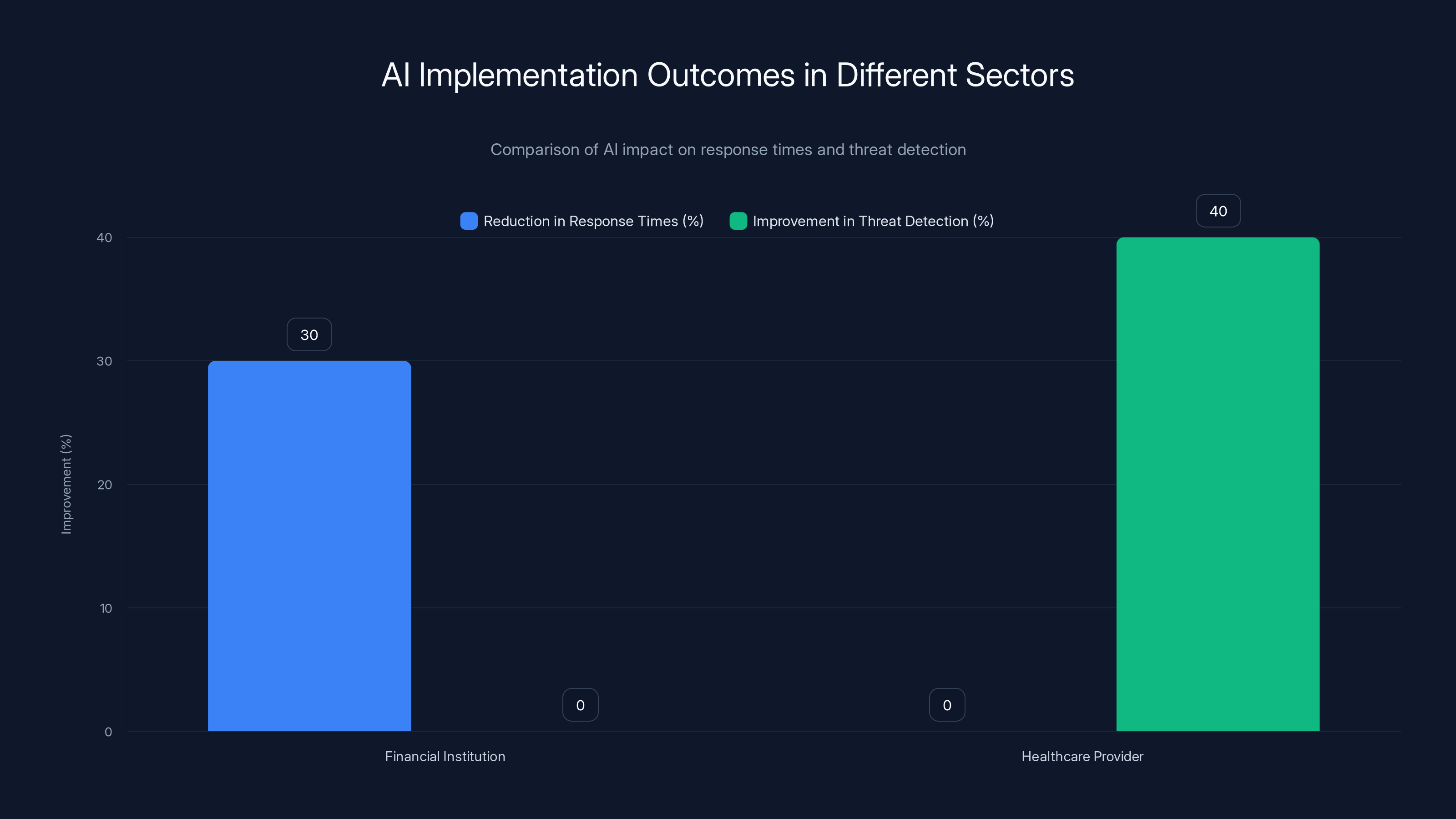

The financial institution saw a 30% reduction in response times, while the healthcare provider achieved a 40% improvement in threat detection (estimated data, shown for comparison).

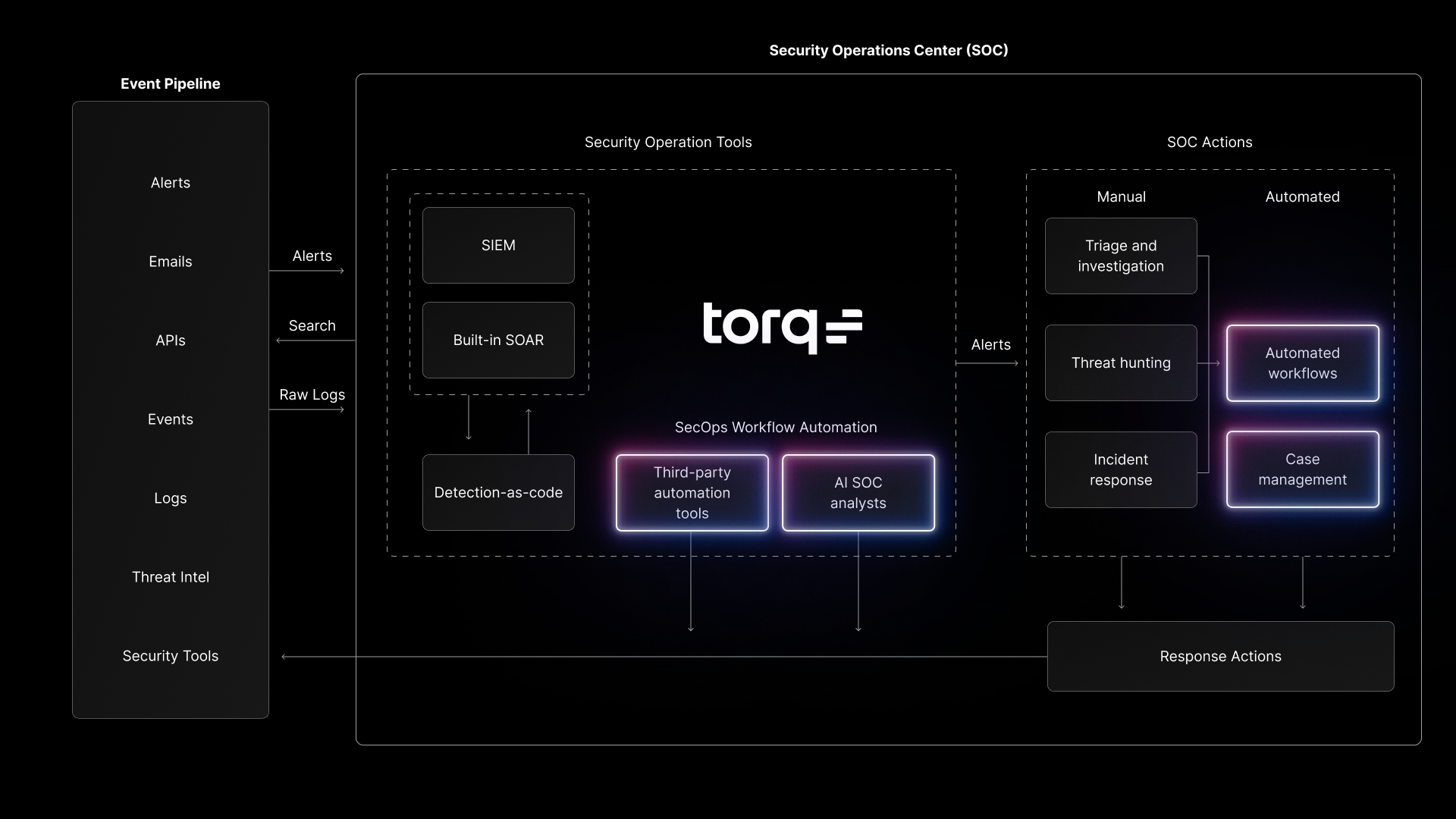

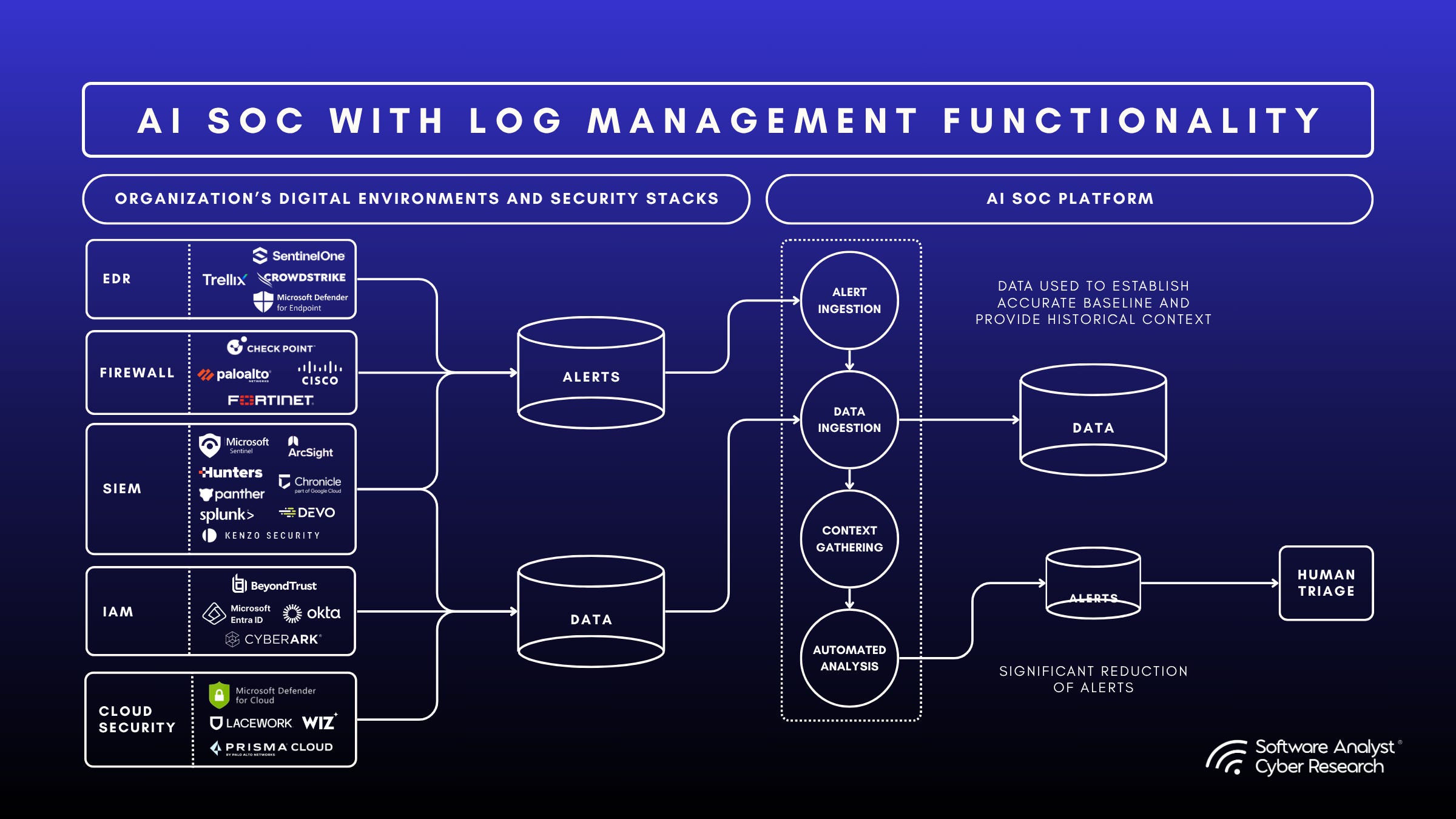

The Role of AI in SOCs

AI technologies are reshaping SOCs by enabling faster threat detection and response. These systems can analyze data from various sources, identify patterns, and suggest actions based on historical data and learned behaviors. This capability is crucial in managing the growing volume of cybersecurity threats efficiently.

Key Functions of AI in SOCs

- Data Analysis: AI processes massive datasets to identify anomalies.

- Threat Prediction: Predictive models assess potential risks based on past incidents.

- Automated Response: AI can automatically mitigate low-level threats.
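
As a rough illustration of the data analysis function, the sketch below flags outliers in a series of event counts using a simple z-score. It is a minimal example under stated assumptions, not how any particular SOC product works: the function name `find_anomalies`, the 2.5 threshold, and the sample counts are all hypothetical.

```python
from statistics import mean, stdev

def find_anomalies(counts, threshold=2.5):
    """Return the indices of values whose z-score exceeds `threshold`.

    The threshold is an assumed starting point; a large spike also inflates
    the standard deviation, so short series need a lower cut-off.
    """
    if len(counts) < 2:
        return []
    mu = mean(counts)
    sigma = stdev(counts)
    if sigma == 0:
        return []  # every value is identical, so nothing stands out
    return [i for i, c in enumerate(counts) if abs(c - mu) / sigma > threshold]

# Hypothetical hourly failed-login counts; the spike at index 7 is invented.
hourly_failed_logins = [12, 9, 11, 10, 13, 8, 11, 95, 10, 12]
print(find_anomalies(hourly_failed_logins))
```

Production systems typically use more robust baselines (for example, per-user or per-host history), but the idea is the same: define "normal" from past data and surface what deviates from it.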

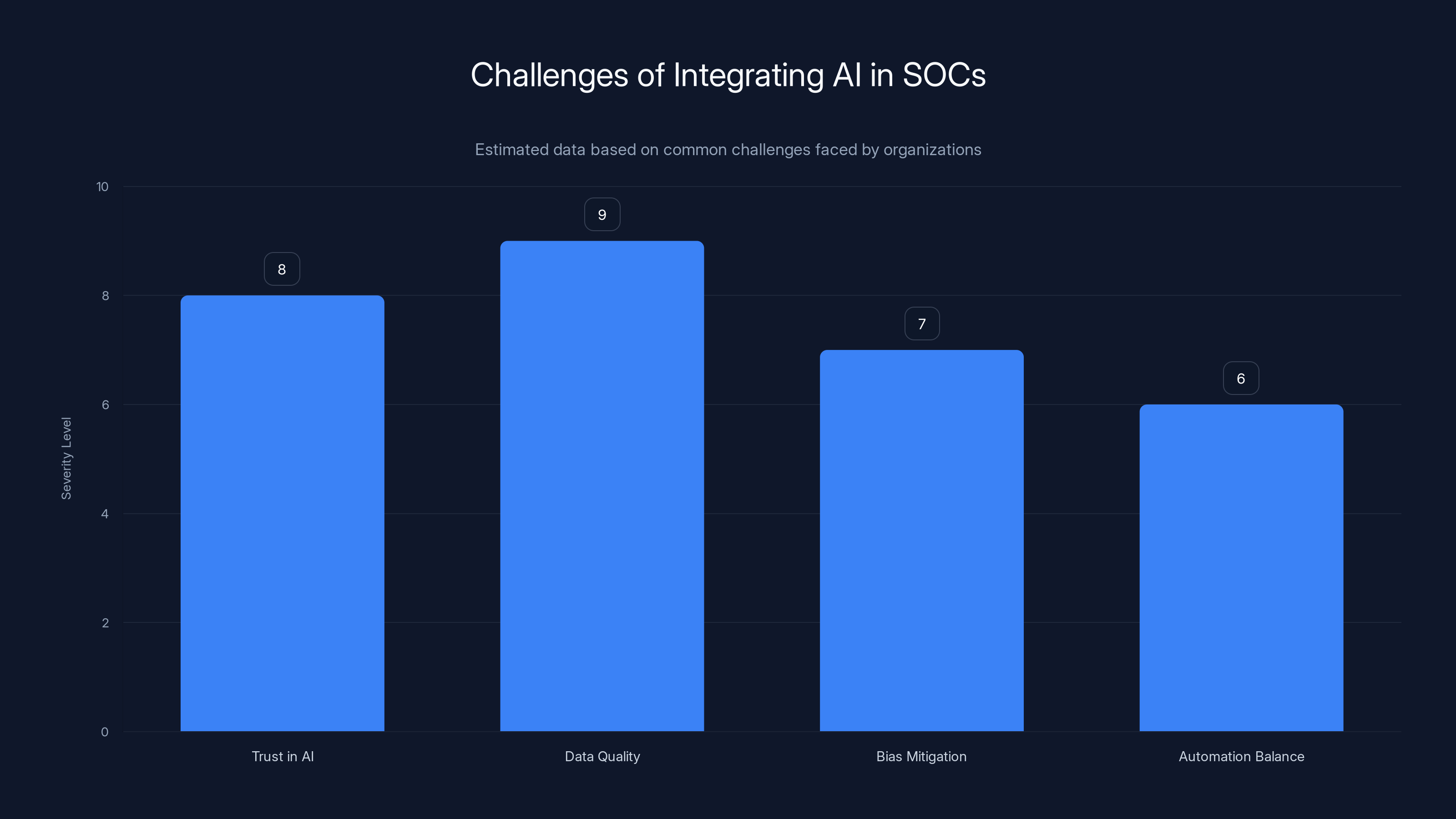

Data quality and trust in AI systems are the most severe challenges when integrating AI into SOCs (estimated data).

Building Trust in AI

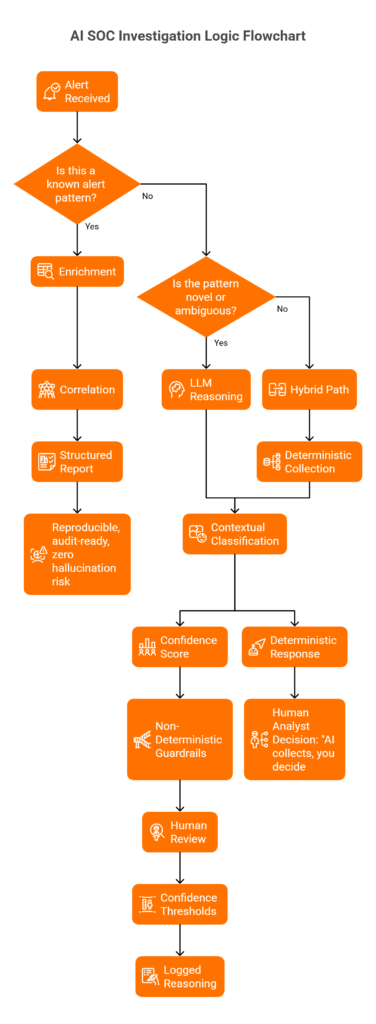

For AI to be effective in SOCs, security teams must trust its outputs. Trust is built through explainable AI, which provides transparency into how decisions are made. SOC teams need to understand AI logic to validate and trust its conclusions.

Explainable AI

Explainable AI involves models that offer insights into their decision-making processes. This transparency helps operators understand the rationale behind alerts and actions, thereby increasing confidence in AI systems.

- Model Transparency: Ensures that AI decisions can be traced and verified.

- User Interface: Intuitive interfaces help operators interact effectively with AI systems.
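
One simple way to make a decision traceable is to use an additive score and report how much each input contributed. The sketch below assumes a hand-written linear model; the feature names, weights, and helper functions are hypothetical and chosen only to show the idea.

```python
from dataclasses import dataclass

# Hypothetical feature weights; a real model would learn or tune these.
WEIGHTS = {
    "failed_logins": 0.5,
    "new_device": 2.0,
    "off_hours": 1.0,
}

@dataclass
class Explanation:
    score: float
    contributions: dict  # feature name -> contribution to the score

def score_alert(features):
    """Score an alert and keep the per-feature contributions for review."""
    contributions = {
        name: weight * features.get(name, 0) for name, weight in WEIGHTS.items()
    }
    return Explanation(score=sum(contributions.values()), contributions=contributions)

def top_reasons(explanation, n=2):
    """Return the names of the features that contributed most to the score."""
    ranked = sorted(
        explanation.contributions.items(), key=lambda item: item[1], reverse=True
    )
    return [name for name, value in ranked[:n] if value > 0]

result = score_alert({"failed_logins": 6, "new_device": 1, "off_hours": 0})
print(result.score, top_reasons(result))
```

Because the score is a plain sum, an operator can verify any alert by hand. More complex models need dedicated attribution techniques, but the goal of showing the reasons alongside the verdict stays the same.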

Human Oversight and Judgment

While AI can automate many tasks, human oversight remains crucial. Cybersecurity professionals provide context and judgment that AI lacks, particularly in ambiguous situations where human intuition can be invaluable.

Role of Human Oversight

- Decision Validation: Humans confirm AI actions and refine algorithms.

- Contextual Awareness: Humans provide context that AI might overlook.

- False Positive Management: Humans triage and dismiss unnecessary alerts that would otherwise overwhelm teams.
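
The division of labor described above can be sketched as a routing rule: only low-severity alerts that the model is very confident about are handled automatically, and everything else goes to an analyst. The `route_alert` function, the severity labels, and the 0.95 cut-off are assumptions made for illustration.

```python
AUTO_THRESHOLD = 0.95  # hypothetical cut-off; tune it against pilot data

def route_alert(confidence, severity):
    """Decide whether an alert is mitigated automatically or sent to a human.

    confidence: the model's confidence (0 to 1) that the alert is malicious.
    severity: "low", "medium", or "high" (assumed labels).
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if severity == "low" and confidence >= AUTO_THRESHOLD:
        return "auto_mitigate"
    # Anything ambiguous or high-impact stays with an analyst.
    return "human_review"

for confidence, severity in [(0.99, "low"), (0.99, "high"), (0.60, "low")]:
    print(severity, confidence, "->", route_alert(confidence, severity))
```

Defaulting to human review keeps the automated path narrow, so a miscalibrated model produces extra analyst work rather than an unreviewed action.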

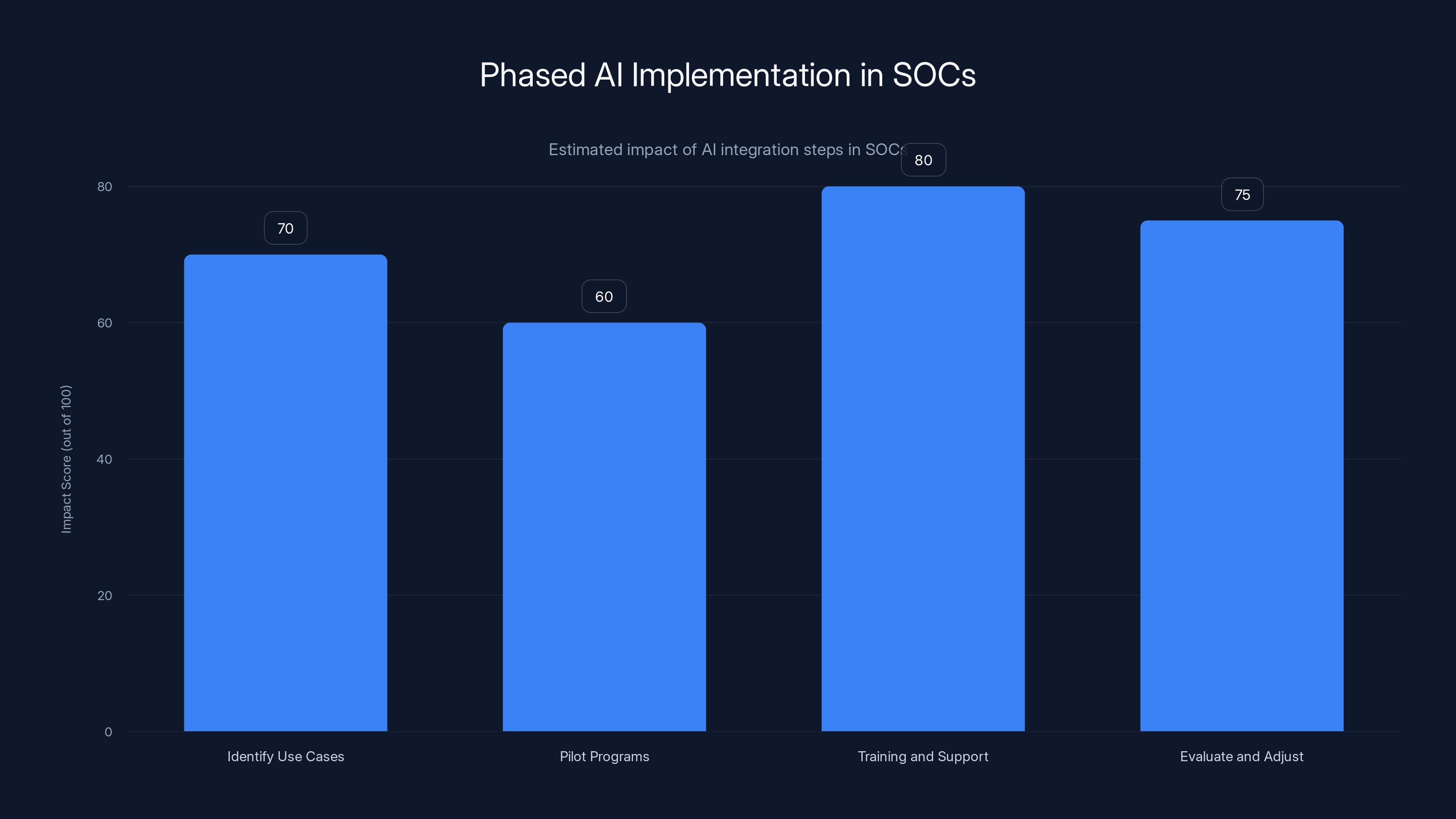

Estimated data showing the impact of each step in AI implementation within SOCs. Training and support are crucial for maximizing AI effectiveness.

Challenges with AI-Driven SOCs

Over-reliance on AI

A common pitfall is becoming too dependent on AI, leading to complacency. It’s vital to maintain a balanced approach where AI supports human decision-making rather than replacing it.

Data Quality and Bias

AI systems are only as good as the data they are trained on. Poor data quality or biased datasets can lead to inaccurate predictions and decisions.

- Data Validation: Regular audits of input data to ensure quality.

- Bias Mitigation: Implementing strategies to reduce biases in AI algorithms.
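
A basic input audit might look like the sketch below, which checks log records for missing fields and malformed timestamps, then reports how labels are distributed so that a heavily skewed training set is visible early. The field names, the `label` key, and the sample records are hypothetical.

```python
from collections import Counter
from datetime import datetime

REQUIRED_FIELDS = ("timestamp", "source_ip", "event_type")  # assumed schema

def audit_records(records):
    """Return (index, problem) pairs for records that fail basic checks."""
    issues = []
    for index, record in enumerate(records):
        missing = [field for field in REQUIRED_FIELDS if not record.get(field)]
        if missing:
            issues.append((index, "missing " + ", ".join(missing)))
            continue
        try:
            datetime.fromisoformat(record["timestamp"])
        except (ValueError, TypeError):
            issues.append((index, "bad timestamp"))
    return issues

def label_share(records):
    """Return each label's share of the dataset, to expose class imbalance."""
    counts = Counter(record.get("label", "unlabeled") for record in records)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()} if total else {}

# Invented sample records for illustration only.
sample = [
    {"timestamp": "2025-01-01T10:00:00", "source_ip": "10.0.0.1", "event_type": "login", "label": "benign"},
    {"timestamp": "2025-01-01T10:05:00", "source_ip": "", "event_type": "login", "label": "benign"},
    {"timestamp": "yesterday", "source_ip": "10.0.0.3", "event_type": "login", "label": "malicious"},
    {"timestamp": "2025-01-01T10:10:00", "source_ip": "10.0.0.4", "event_type": "file_read", "label": "benign"},
]
print(audit_records(sample))
print(label_share(sample))
```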

Practical Implementation Guide

For organizations looking to integrate AI into their SOCs, a phased approach is recommended. Start small, assess outcomes, and gradually expand AI capabilities.

Step-by-Step Implementation

- Identify Use Cases: Focus on specific areas where AI can have the most impact, such as threat identification or incident response.

- Pilot Programs: Begin with small-scale pilot projects to test AI capabilities and gather feedback.

- Training and Support: Ensure that SOC teams are adequately trained to use AI tools effectively.

- Evaluate and Adjust: Continuously assess AI performance and make necessary adjustments.
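
For the evaluation step, one common approach is to compare what the AI flagged against what analysts later confirmed and then track precision and recall over time. The sketch below assumes each pilot outcome is recorded as a pair of booleans; the function name and the sample outcomes are hypothetical.

```python
def precision_recall(outcomes):
    """Compute precision and recall from (ai_flagged, analyst_confirmed) pairs."""
    outcomes = list(outcomes)
    true_pos = sum(1 for flagged, confirmed in outcomes if flagged and confirmed)
    false_pos = sum(1 for flagged, confirmed in outcomes if flagged and not confirmed)
    false_neg = sum(1 for flagged, confirmed in outcomes if not flagged and confirmed)
    flagged_total = true_pos + false_pos
    actual_total = true_pos + false_neg
    precision = true_pos / flagged_total if flagged_total else 0.0
    recall = true_pos / actual_total if actual_total else 0.0
    return precision, recall

# Invented pilot results: 3 correct alerts, 1 false alarm, 1 miss, 5 correct passes.
pilot_outcomes = (
    [(True, True)] * 3 + [(True, False)] + [(False, True)] + [(False, False)] * 5
)
precision, recall = precision_recall(pilot_outcomes)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Low precision means analysts are spending time on false alarms, while low recall means real threats are slipping through. Which one to prioritize when adjusting thresholds depends on the use case chosen in the first step.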

Case Studies

Case Study 1: Implementing AI in a Financial Institution

A leading bank integrated AI into its SOC to manage the rising number of cybersecurity threats. By starting with a focused pilot program, the bank was able to refine its AI systems and eventually expand its scope to include predictive threat analysis.

- Outcome: 30% reduction in response times.

- Challenges: Initial data integration issues.

Case Study 2: AI in Healthcare Security

A healthcare provider used AI to enhance its SOC capabilities, focusing on protecting patient data. The implementation involved setting up AI models that could detect unusual access patterns to sensitive information.

- Outcome: 40% improvement in threat detection.

- Challenges: Balancing automation with human oversight.

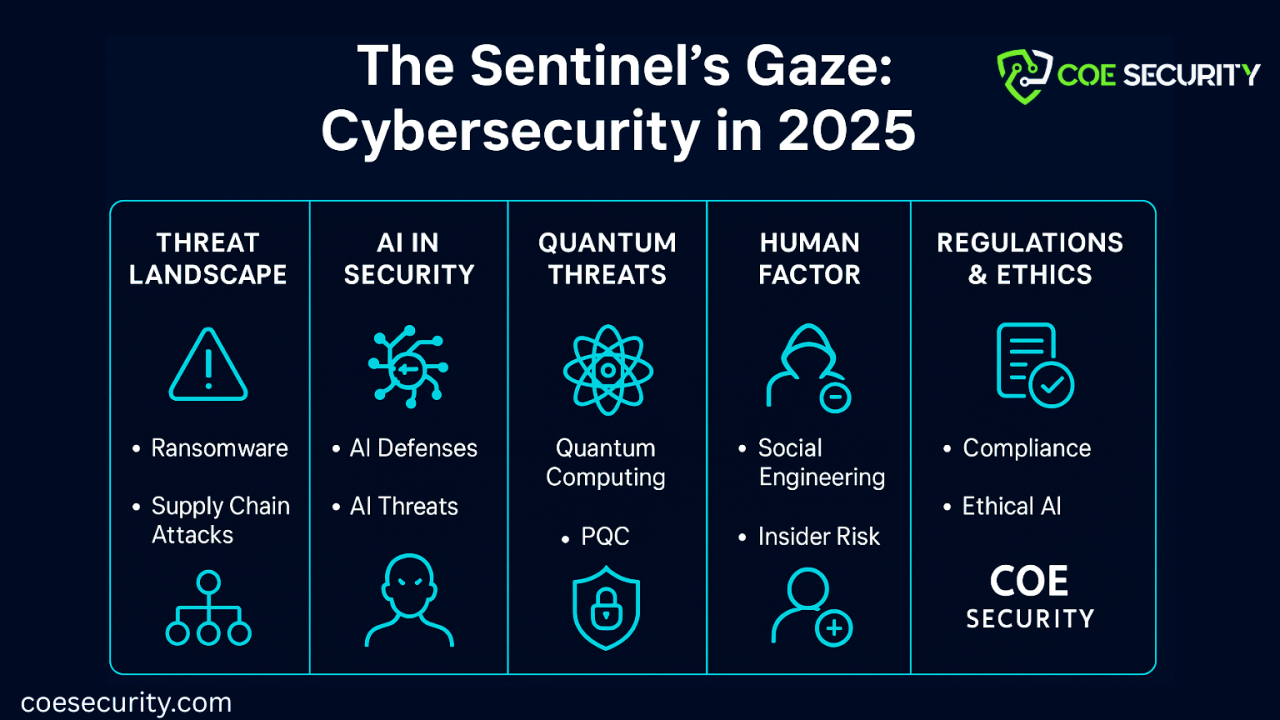

Future Trends in AI-Driven SOCs

The future of AI in SOCs is promising, with advancements in machine learning and AI technologies enabling more sophisticated threat management.

Emerging Trends

- Advanced Analytics: AI will leverage advanced analytics to provide deeper insights into threat landscapes.

- Autonomous Systems: Development of AI systems that can operate with minimal human intervention.

- Improved Collaboration: AI tools will facilitate better collaboration between SOC teams and other departments.

Recommendations for SOC Leaders

- Invest in Training: Continuous training for SOC teams on AI tools and best practices.

- Focus on Explainability: Prioritize AI systems that offer transparency in their decision-making processes.

- Regular Audits: Conduct regular audits of AI systems to ensure data quality and performance.

Conclusion

Integrating AI into SOCs is not without its challenges, but with careful planning and execution, it can significantly enhance threat detection and response capabilities. By building trust through explainable AI, maintaining human oversight, and preparing for future advancements, SOC leaders can navigate the complexities of AI-driven cybersecurity effectively.

FAQ

What is an AI-driven SOC?

An AI-driven SOC uses artificial intelligence to enhance its threat detection and response capabilities, allowing for faster and more accurate cybersecurity management.

How does AI improve SOC efficiency?

AI improves SOC efficiency by automating routine tasks, analyzing vast datasets for threat patterns, and providing predictive insights that help preempt potential threats.

What are the challenges of integrating AI in SOCs?

Challenges include building trust in AI systems, managing data quality, mitigating biases, and maintaining a balance between automation and human oversight.

How can SOCs build trust in AI systems?

Trust can be built through explainable AI that provides transparency in decision-making, ensuring that SOC teams understand and can verify AI outputs.

What future trends are expected in AI-driven SOCs?

Future trends include the development of more autonomous AI systems, advanced analytics for deeper insights, and improved collaboration tools within SOC teams.

Why is human oversight important in AI-driven SOCs?

Human oversight is crucial for validating AI decisions, providing contextual understanding, and managing false positives, ensuring that AI supports rather than replaces human judgment.

How should organizations start integrating AI into their SOCs?

Organizations should start by identifying specific use cases for AI, launching small-scale pilot programs, providing training for SOC teams, and continuously evaluating AI performance.

What role does data quality play in AI-driven SOCs?

Data quality is critical as AI systems rely on accurate and unbiased data for effective threat detection and decision-making. Regular audits and validation processes are essential.

How can organizations ensure AI systems are unbiased?

Organizations can mitigate AI bias by using diverse datasets, implementing bias detection tools, and continuously monitoring AI outputs for fairness and accuracy.

What are the benefits of explainable AI in SOCs?

Explainable AI enhances trust, provides transparency, and allows SOC teams to understand and verify the rationale behind AI decisions, leading to more informed and confident threat management.

Key Takeaways

- Trust in AI is crucial for effective SOC integration.

- Explainable AI enhances transparency and trust.

- Human oversight is essential for AI decision validation.

- Data quality and bias are critical challenges in AI systems.

- Future trends include autonomous AI systems and advanced analytics.
