Understanding Reddit's Human Verification for Fishy Accounts [2025]

Last month, Reddit announced a significant shift in how it handles suspicious accounts: they must now verify that a human runs them. With bots and AI-generated content proliferating, the move aims to preserve authenticity and trust within the Reddit community. Let's dive into what this means for users, moderators, and the platform itself.

TL;DR

- Human verification: Reddit requires suspicious accounts to prove human operation to combat bots, as detailed in TechCrunch's report.

- AI's growing influence: This move is a response to the increasing AI presence online, highlighted by TechBuzz.

- Privacy concerns: Verification uses third-party tools and is designed not to expose user identity, as noted by Benzinga.

- Community impact: Most users won't be affected; only accounts showing fishy behavior are asked to verify, according to Yahoo Tech.

- Future implications: Sets a precedent for other platforms in managing AI and user authenticity, as discussed in FindArticles.

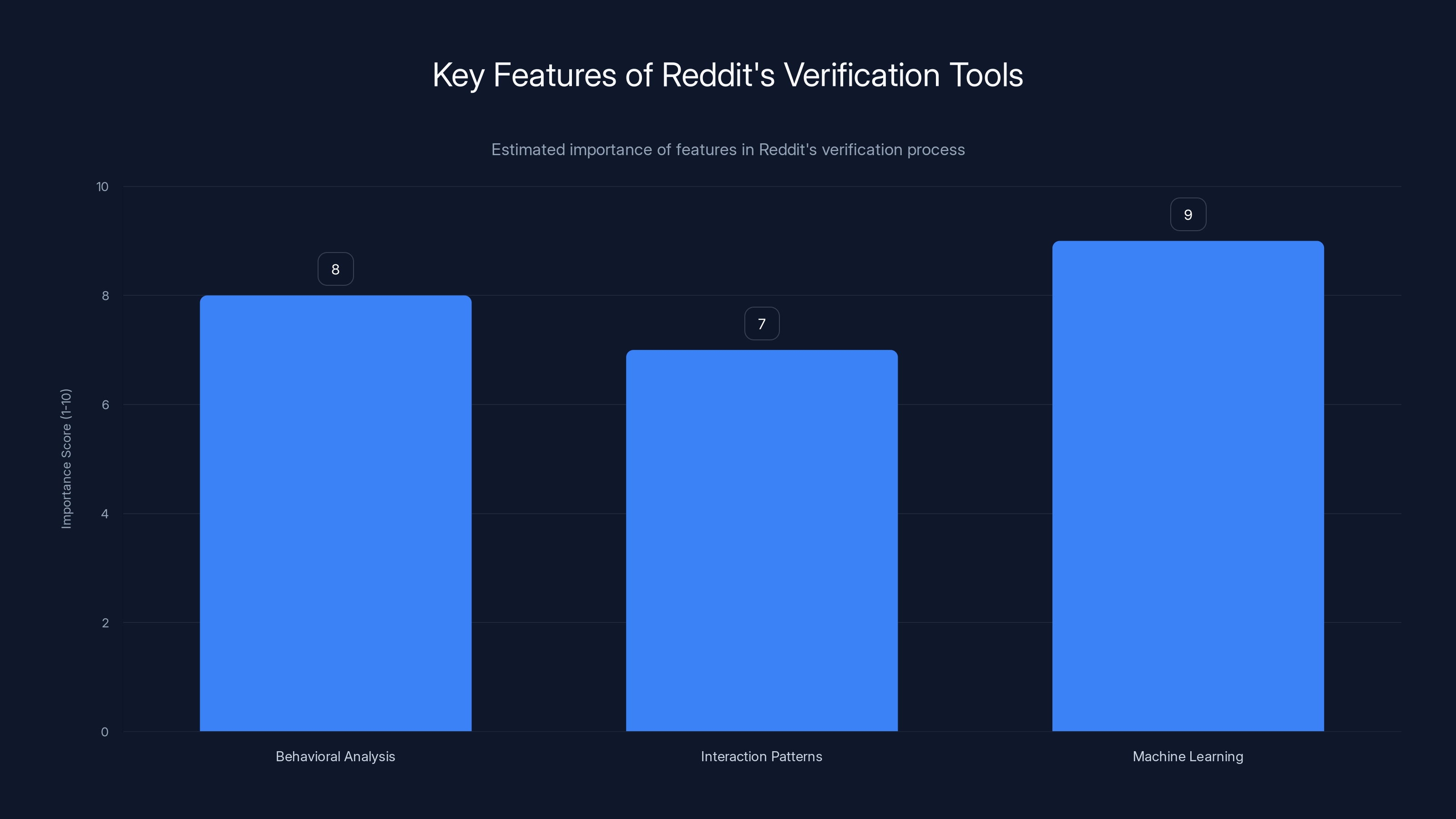

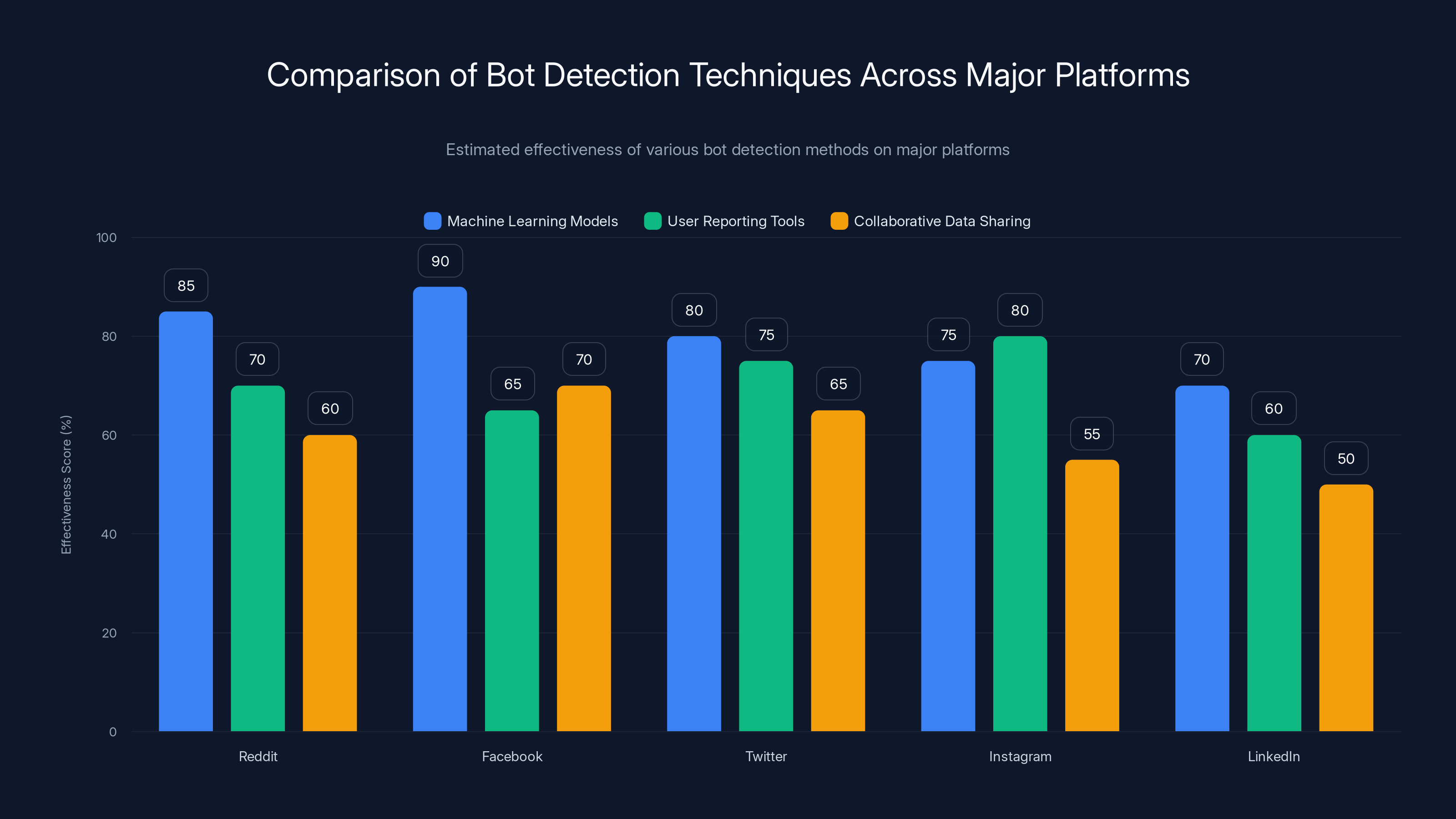

Machine learning is the most crucial feature in Reddit's verification process, scoring highest in importance. Estimated data.

The Rise of AI and Bots on Social Platforms

Before delving into Reddit's specific approach, it's essential to understand the broader context of AI and bots in social media. Over the past decade, automated accounts, or bots, have multiplied across platforms. They now handle everything from news dissemination and customer service to malicious activities like spreading misinformation, which makes them a double-edged sword.

Key Issues with Bots:

- Misinformation: Bots can amplify false narratives, affecting public opinion, as noted by GovTech.

- Spam: Automated accounts often flood platforms with irrelevant content, a concern highlighted in WHIO News.

- Security Risks: Bots can exploit vulnerabilities, leading to data breaches, as discussed in Global Security Review.

While AI has its benefits, the challenges it poses necessitate new measures to ensure platforms remain safe and trustworthy.

Real Talk: AI's Dual Role

AI isn't inherently good or bad. It's a tool, much like a hammer, that can build or destroy based on how it's wielded. Platforms like Reddit are recognizing the need to address both sides of the AI coin.

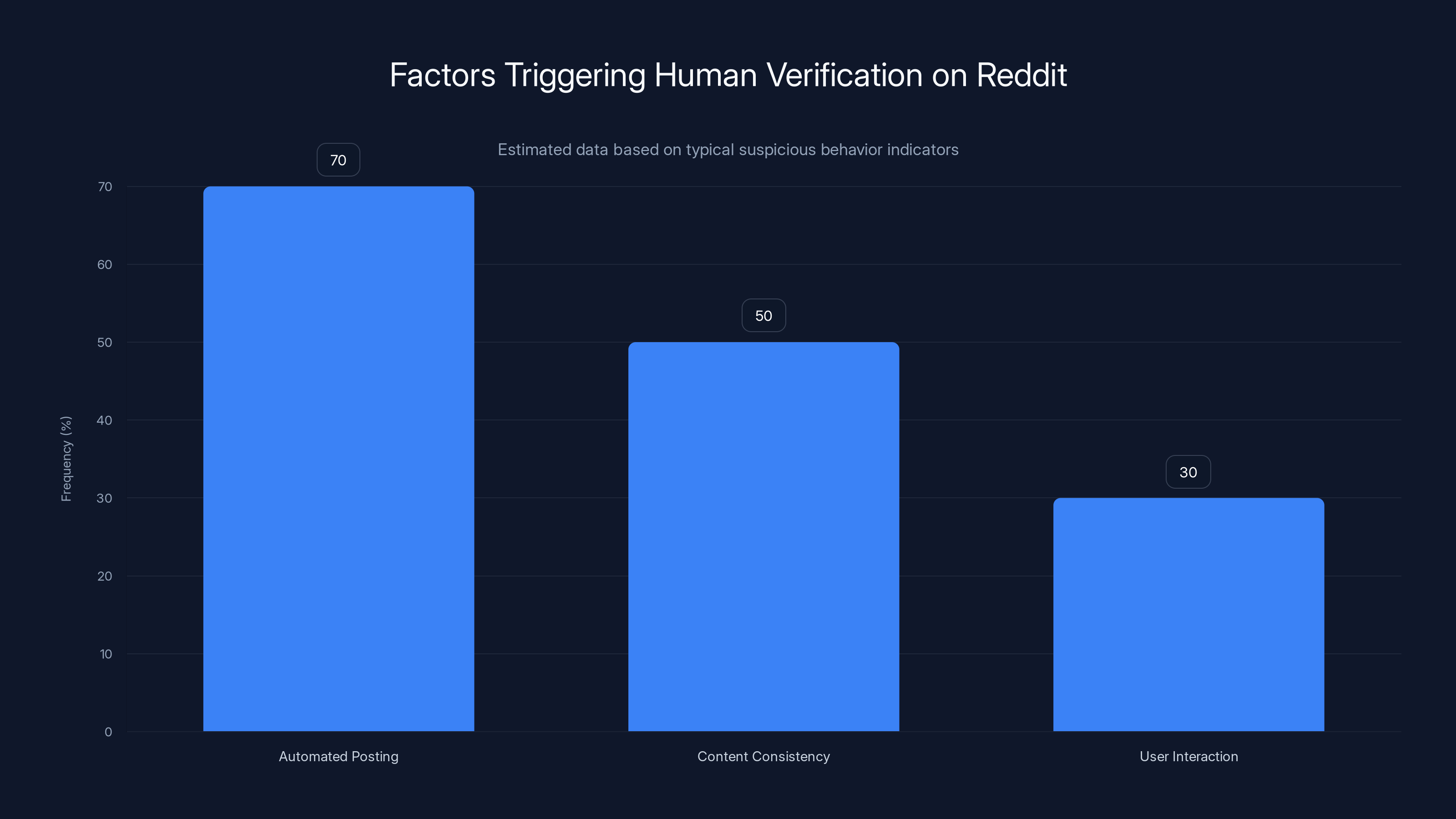

Automated posting patterns are the most common trigger for human verification on Reddit, followed by content consistency and limited user interaction. Estimated data.

Reddit's Approach to Human Verification

Reddit's decision to implement human verification for suspicious accounts stems from a need to balance user privacy with platform integrity. But how does this work in practice?

What Triggers Verification?

Suspicious Behavior Indicators:

- Automated Posting Patterns: Unnaturally high frequency of posts or comments, as reported by TechBuzz.

- Content Consistency: Repeatedly posting similar content across different subreddits.

- Limited User Interaction: Little human-like engagement with other users.

When such behaviors are detected, Reddit flags the account for verification. This process is not meant for the average user but targets those exhibiting clear signs of automation.
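To make the three indicators above concrete, here is a minimal sketch of how they might be combined into a flagging rule. Reddit has not published its detection logic, so everything here is an assumption for illustration: the `Post` fields, the function names, the thresholds, and the "two of three signals" rule are all hypothetical.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from itertools import combinations


@dataclass
class Post:
    author: str
    subreddit: str
    text: str
    timestamp: float       # seconds since epoch
    replies_made: int = 0  # hypothetical field: replies the author wrote in the thread


def posts_per_hour(posts):
    """Average posting rate over the observed window."""
    if len(posts) < 2:
        return 0.0
    times = sorted(p.timestamp for p in posts)
    hours = (times[-1] - times[0]) / 3600
    return len(posts) / hours if hours > 0 else float("inf")


def mean_similarity(posts):
    """Mean pairwise text similarity in [0, 1]; 1.0 means identical posts."""
    pairs = list(combinations((p.text for p in posts), 2))
    if not pairs:
        return 0.0
    return sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs) / len(pairs)


def interaction_ratio(posts):
    """Share of posts where the author replied to someone at least once."""
    if not posts:
        return 0.0
    return sum(1 for p in posts if p.replies_made > 0) / len(posts)


def should_flag(posts, max_rate=20.0, max_similarity=0.8, min_interaction=0.1):
    """Flag when at least two of the three indicators trip (illustrative thresholds)."""
    signals = [
        posts_per_hour(posts) > max_rate,
        mean_similarity(posts) > max_similarity,
        interaction_ratio(posts) < min_interaction,
    ]
    return sum(signals) >= 2
```

Requiring two signals rather than one is a deliberate choice in this sketch: a single unusual trait, such as a burst of posts during a live event, would not be enough on its own to flag an account.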

How Verification Works

Reddit uses third-party tools to verify human activity. These tools are designed to respect user privacy, ensuring that no personal data, Reddit username, or activity logs are exposed, as explained by Benzinga.

Verification Process:

- Initial Flagging: Account behavior is analyzed for anomalies.

- Tool Engagement: Third-party services assess the likelihood of human operation.

- Outcome: If proven human, the account remains unaffected. Otherwise, it may face restrictions.
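The three steps above can be read as a small state machine. The sketch below is one hypothetical way to model it; the state names and the `human_check` callable (a stand-in for the third-party service) are assumptions, not Reddit's actual implementation.

```python
from enum import Enum


class AccountState(Enum):
    NORMAL = "normal"
    FLAGGED = "flagged"
    VERIFIED = "verified"
    RESTRICTED = "restricted"


def run_verification(is_anomalous, human_check):
    """Walk one account through flagging, the external check, and the outcome.

    is_anomalous: bool result of the platform's own behavior analysis.
    human_check:  callable standing in for the third-party service; returns
                  True when it judges the operator to be human.
    """
    history = [AccountState.NORMAL]
    if not is_anomalous:
        return history  # most accounts never enter the flow
    history.append(AccountState.FLAGGED)
    passed = human_check()
    history.append(AccountState.VERIFIED if passed else AccountState.RESTRICTED)
    return history
```

For example, `run_verification(True, lambda: False)` ends in `AccountState.RESTRICTED`, while an account that is never flagged stays in `AccountState.NORMAL` and the external check is never called.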

Implications for Reddit Users

While this change aims to improve user experience by reducing bot interference, it also raises questions about privacy and user freedom.

Privacy Concerns

The biggest concern among users is privacy. However, Reddit's use of third-party tools prioritizes anonymity. User data is never directly shared, and the verification process is designed to be minimally invasive, as detailed in Yahoo Tech.

Privacy Safeguards:

- Anonymity: No personal data or Reddit activity is exposed.

- Data Protection: Third-party tools operate under strict data protection protocols.
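One common way to keep identity out of a third-party check is to hand the verifier an opaque, single-use token instead of a username. The sketch below illustrates that general pattern only; it is not a description of how Reddit or its vendors work, and `VerificationBroker` and its methods are hypothetical names.

```python
import secrets


class VerificationBroker:
    """Platform-side mapping between opaque tokens and accounts (illustrative)."""

    def __init__(self):
        self._pending = {}  # token -> account id; never leaves the platform

    def start(self, account_id):
        """Create a random single-use token for one verification attempt."""
        token = secrets.token_urlsafe(16)
        self._pending[token] = account_id
        return token

    def payload_for_verifier(self, token):
        """Everything the external service receives: the token, nothing else."""
        return {"token": token}

    def complete(self, token, is_human):
        """Match the verifier's pass/fail answer back to the account."""
        account_id = self._pending.pop(token, None)
        if account_id is None:
            raise KeyError("unknown or already used token")
        return account_id, is_human
```

In this design the verifier only ever sees a random string and returns a yes or no answer, so it has nothing that links the check to a username or posting history.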

Community Trust

For Reddit's community-driven ecosystem, trust is crucial. By ensuring that interactions are genuine, Reddit hopes to foster a more authentic environment, as noted by FindArticles.

Community Benefits:

- Reduced Misinformation: Less bot activity means lower chances of misinformation spread.

- Authentic Interactions: User discussions and debates remain genuine and human-centered.

This chart estimates the effectiveness of different bot detection techniques across major platforms. Machine learning models generally show higher effectiveness, while collaborative data sharing is less utilized. Estimated data.

Technical Details of Verification

Understanding the technical side of Reddit's verification process can help demystify how it works and what it entails.

Third-Party Tools

The third-party tools Reddit relies on use algorithms and machine learning models to assess account authenticity. They analyze patterns that are challenging for bots to mimic consistently, as explained by TechBuzz.

Tool Features:

- Behavioral Analysis: Tracks posting frequency and diversity.

- Interaction Patterns: Evaluates the nature of user interactions.

- Machine Learning: Continuously updates models to adapt to new bot strategies.
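As a rough illustration of what "posting frequency and diversity" could mean as numeric features, the sketch below computes two standard statistics: the coefficient of variation of the gaps between posts, and the Shannon entropy of the subreddits posted in. Which features the real tools use is not public; the function names and the choice of statistics are assumptions.

```python
import math
from collections import Counter
from statistics import mean, pstdev


def interval_cv(timestamps):
    """Coefficient of variation of the gaps between posts.

    Values near 0 mean metronome-like posting; the assumption in this
    sketch is that human activity tends to be burstier.
    """
    times = sorted(timestamps)
    gaps = [b - a for a, b in zip(times, times[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return 0.0
    return pstdev(gaps) / mean(gaps)


def subreddit_entropy(subreddits):
    """Shannon entropy in bits of where an account posts; 0 means one subreddit."""
    counts = Counter(subreddits)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log2(c / total) for c in counts.values())
```

Features like these would typically feed a trained model rather than be used as hard rules, which is where the continuous updating mentioned above would come in.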

Implementation Best Practices

For users worried about being mistakenly flagged, here are some best practices to ensure your account remains in good standing.

- Diversify Activity: Engage in a variety of subreddits and discussions.

- Avoid Automation: Refrain from using third-party tools that automate posting.

- Regular Interactions: Maintain consistent, human-like interaction patterns.

Common Pitfalls and Solutions

As with any system, there are potential pitfalls in Reddit's verification process. Here are common issues and how to address them.

False Positives

Some users may be flagged despite being genuine. This could occur if a user engages heavily in one subreddit or uses automated tools for convenience.

Solution:

- Appeal Process: Reddit provides an appeal process for users to contest false flags.

- Manual Review: Accounts can be reviewed manually to ensure genuine users aren't penalized.

Privacy Skepticism

Even with Reddit's assurances, some users remain skeptical about privacy.

Solution:

- Transparency Reports: Reddit can enhance trust by publishing regular transparency reports detailing verification cases and outcomes.

- User Education: Providing clear information on how data is handled can alleviate concerns.

Future Trends and Recommendations

As technology evolves, so too must our approaches to managing AI and bots. Reddit's current strategy is a step in the right direction, but what does the future hold?

Evolving AI Detection

AI capabilities are rapidly advancing, requiring platforms to stay ahead in detection methodologies.

Future Recommendations:

- Continuous Learning: Utilize machine learning models that evolve with emerging bot tactics.

- Collaboration: Work with other platforms to share data and strategies for combating bots.

User Empowerment

Empowering users to recognize and report suspicious activities can significantly enhance platform integrity.

Empowerment Strategies:

- User Tools: Provide tools for users to easily report potential bots.

- Educational Campaigns: Raise awareness about how to spot and handle bot interactions.

Best Practices for Other Platforms

Reddit's approach can serve as a model for other platforms grappling with similar challenges. Here are some best practices that can be universally applied.

- User-Centric Verification: Ensure that verification processes prioritize user privacy and convenience.

- Adaptive Systems: Regularly update detection algorithms to counter evolving bot strategies.

- Community Engagement: Involve the user community in developing and refining verification methods.

Case Study: Implementing Human Verification on Reddit

Let's explore a hypothetical case study of how Reddit's verification process might unfold for a suspicious account.

Scenario

An account, "Tech Guru 123," consistently posts similar tech-related content across multiple subreddits. The frequency and lack of engagement raise flags.

Verification Process

- Initial Flagging: Reddit's system detects unusual patterns in the activity of "Tech Guru 123."

- Third-Party Analysis: The account undergoes analysis by third-party tools assessing human vs. bot likelihood.

- Outcome: If "Tech Guru 123" is verified as human, no further action is taken. Otherwise, the account faces restrictions.

Lessons Learned

This case highlights the importance of diverse and authentic engagement on platforms like Reddit to avoid unnecessary scrutiny.

Conclusion

Reddit's decision to require human verification for suspicious accounts is a proactive measure to enhance platform integrity in an era of AI and bot proliferation. By balancing user privacy with community trust, Reddit sets a precedent for other platforms in managing AI's growing influence. As technology continues to evolve, ongoing adaptation and user empowerment will be crucial in maintaining safe and authentic online environments.

FAQ

What is Reddit's human verification process?

Reddit's human verification process involves using third-party tools to analyze suspicious account behavior, ensuring accounts are operated by humans rather than bots, as explained in TechCrunch.

How does Reddit determine which accounts to verify?

Accounts exhibiting automated patterns, such as high-frequency posting or repetitive content, are flagged for verification, according to TechBuzz.

What privacy measures does Reddit implement during verification?

Reddit uses third-party tools that do not expose personal data, usernames, or activity logs, prioritizing user privacy, as highlighted by Benzinga.

What happens if an account fails verification?

Accounts that cannot verify human operation may face restrictions or limitations on their activities, as noted in Yahoo Tech.

How can users avoid being mistakenly flagged?

Users should engage in diverse subreddit activities and avoid automated posting to minimize the risk of false flags, as advised by FindArticles.

What impact does this process have on community trust?

By reducing bot interference, Reddit aims to foster more authentic interactions and enhance community trust, as discussed in TechBuzz.

Are there future plans to evolve the verification process?

Reddit intends to continuously update its verification methods to adapt to evolving AI and bot strategies, as reported by TechCrunch.

Key Takeaways

- Reddit's human verification targets suspicious accounts to counteract AI and bot influence.

- The verification process uses third-party tools to ensure privacy and anonymity.

- Most Reddit users will not be affected by this change; it targets accounts with unusual activity.

- Continuous adaptation is necessary to keep up with evolving AI and bot strategies.

- Community trust is enhanced by reducing bot interference and ensuring genuine interactions.

- Other platforms can adopt similar user-centric and privacy-focused verification processes.
