Unpacking the Sudden Closure of Digg's Open Beta: The AI Bot Spam Dilemma [2025]

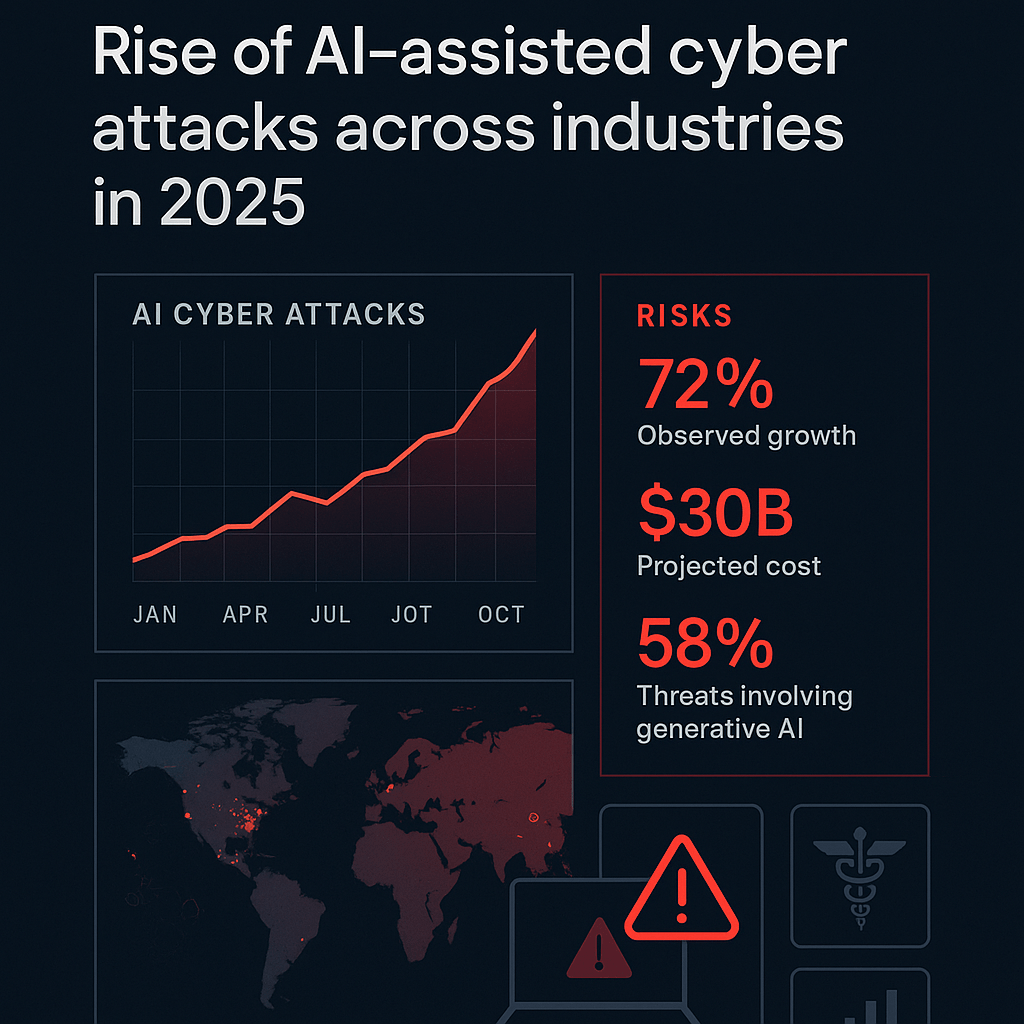

In a surprising move last month, Digg announced the shutdown of its open beta, blaming a surge of AI bot spam for the decision. This abrupt closure after only two months reveals significant challenges that modern platforms face in dealing with AI-driven threats. But what exactly happened, and how can similar platforms safeguard themselves in the future?

TL;DR

- AI Bot Surge: Digg's open beta faced overwhelming spam from AI bots, leading to its shutdown.

- Security Weaknesses: This incident highlights critical security gaps in handling AI-driven threats.

- Technical Solutions: Enhanced AI detection, user verification, and community moderation are key.

- Future Trends: Expect more sophisticated AI threats requiring advanced countermeasures.

- Platform Recommendations: Implement robust security frameworks and continuous threat monitoring.

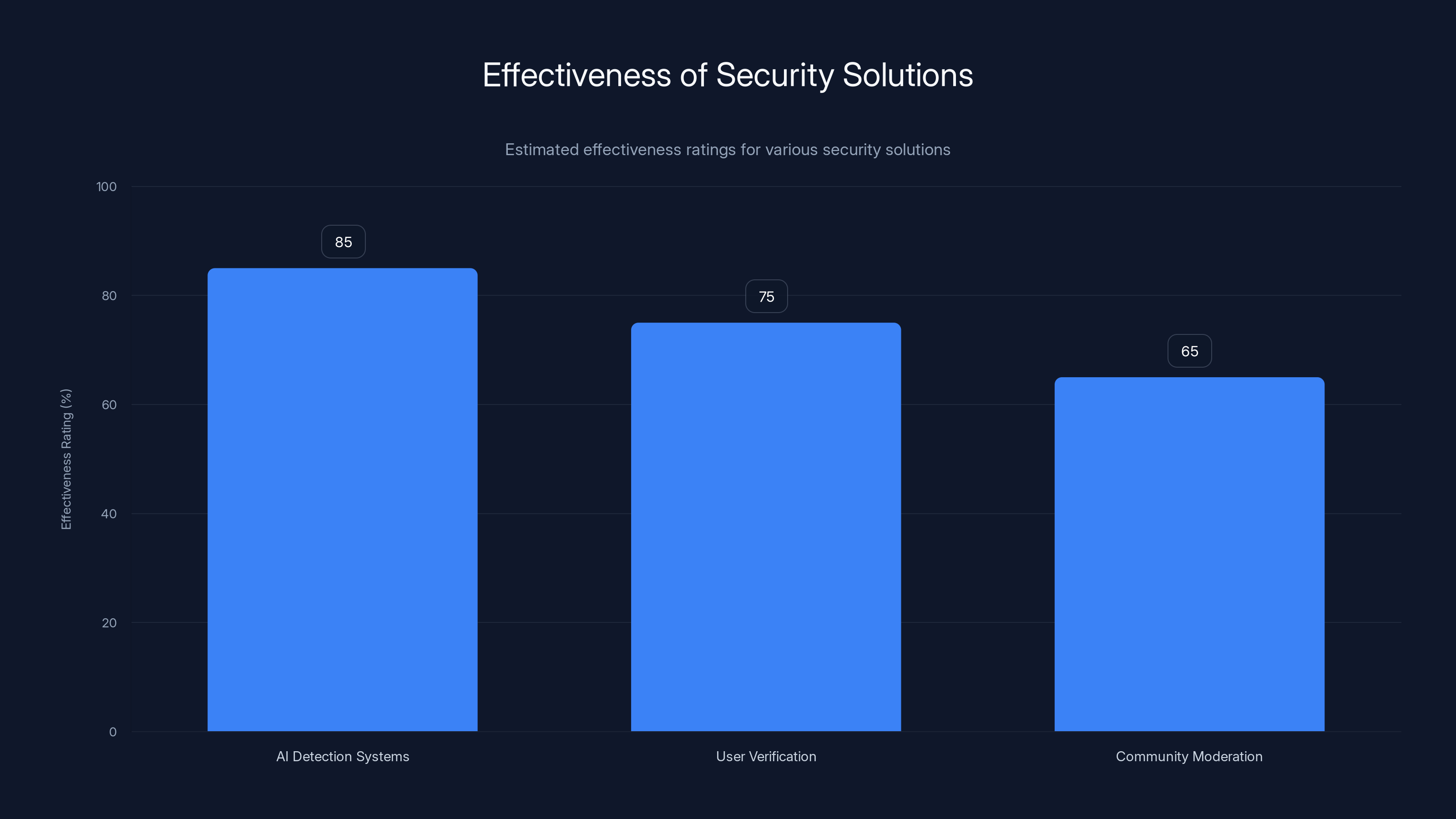

AI Detection Systems are estimated to be the most effective security solution with an 85% effectiveness rating, followed by User Verification at 75% and Community Moderation at 65%. Estimated data.

The Rise and Fall of Digg's Open Beta

When Digg launched its open beta, the goal was to rejuvenate the platform with user-driven content and community engagement. However, the initial excitement quickly turned to frustration as AI bots flooded the platform with spam, rendering it unusable.

What Went Wrong?

The core issue lay in Digg's inability to effectively distinguish between genuine user activity and malicious bot actions. This failure was largely due to outdated security measures that couldn't keep up with modern AI capabilities.

Key Factors:

- Inadequate Bot Detection: Traditional CAPTCHA systems and basic filters were no match for advanced AI bots capable of mimicking human behavior, as noted in recent studies on CAPTCHA limitations.

- Lack of User Verification: Insufficient verification processes allowed bots to create multiple fake accounts easily.

- Overwhelmed Moderation: The scale of spam overwhelmed human moderators, leading to delayed response times and a poor user experience.

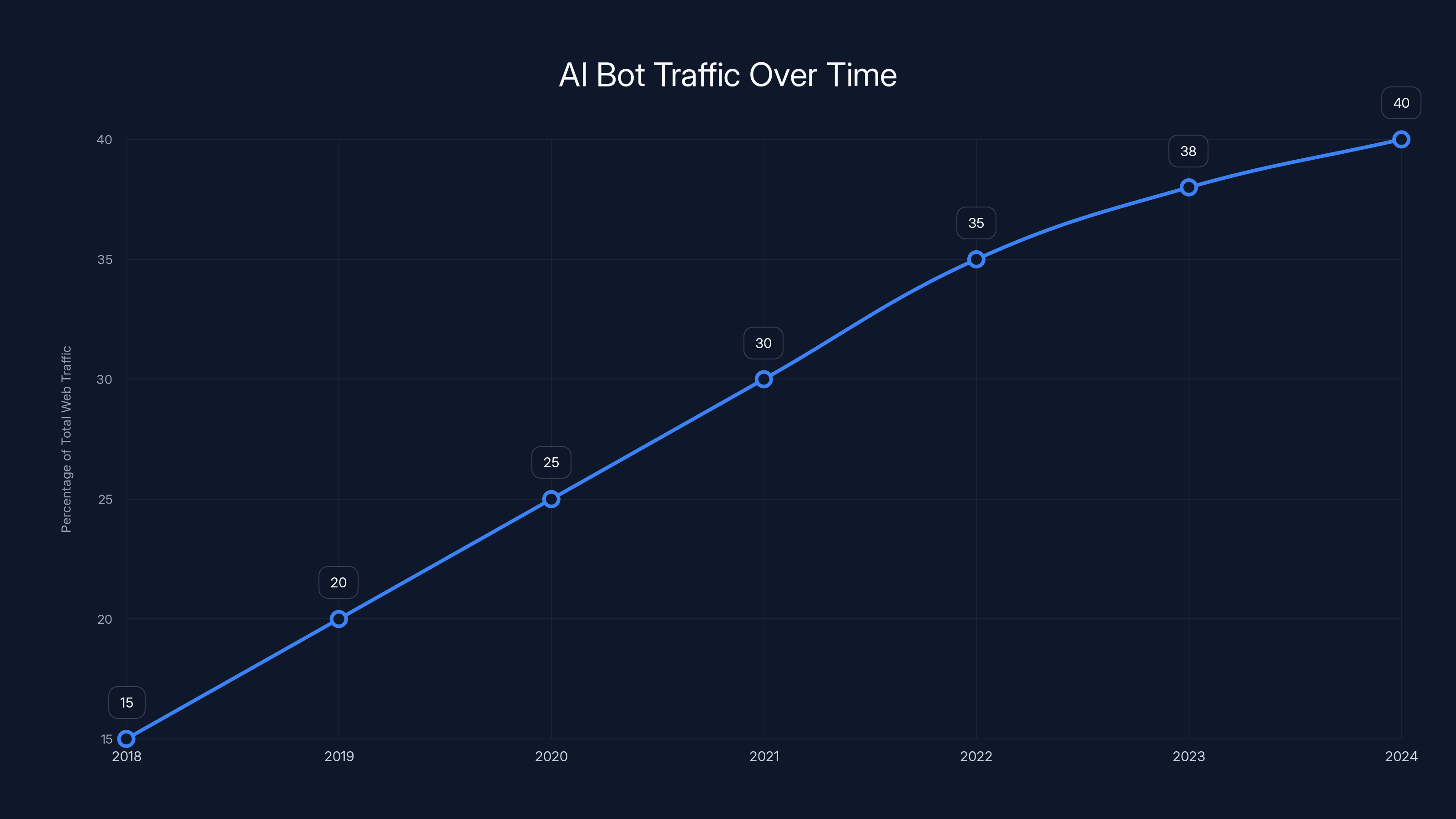

AI bots have increasingly dominated web traffic, rising from 15% in 2018 to an estimated 40% in 2024, highlighting the growing challenge for platforms like Digg. Estimated data.

Analyzing the AI Bot Spam Threat

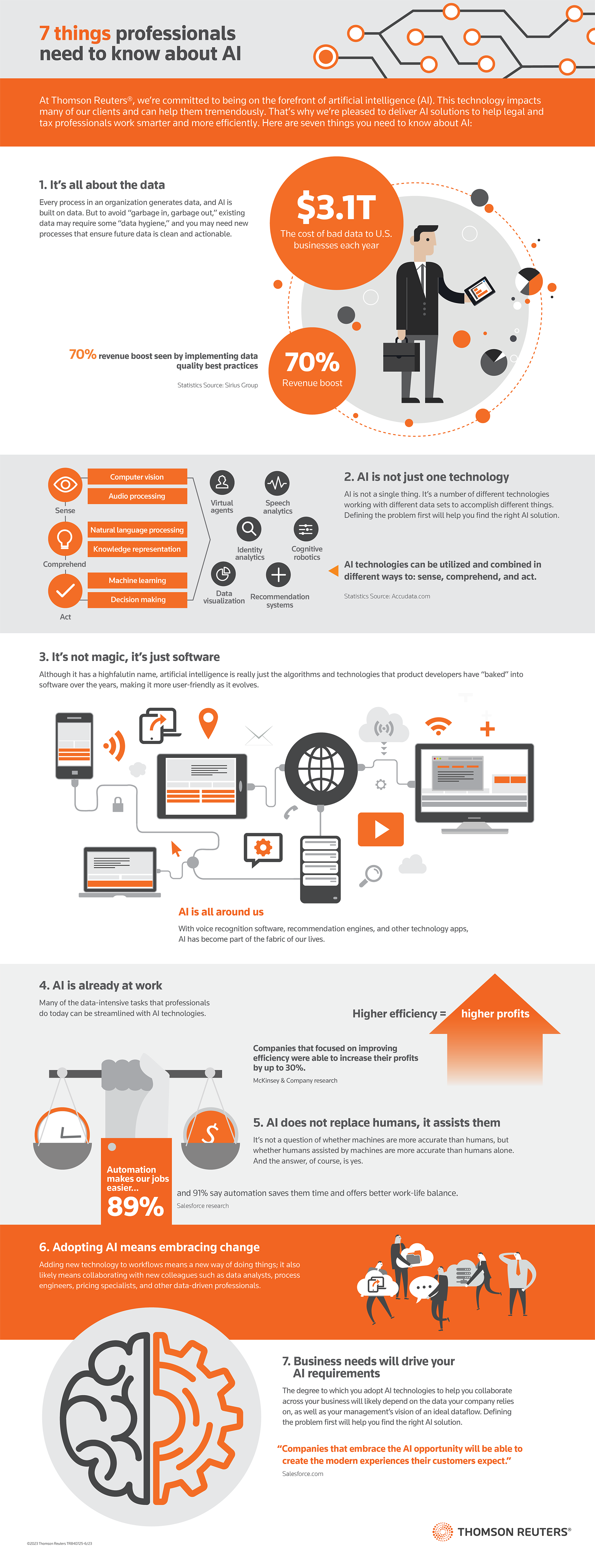

AI bots have evolved from simple scripts to sophisticated programs capable of generating human-like interactions. This evolution poses a new level of threat to digital platforms, necessitating advanced countermeasures.

How AI Bots Operate

AI bots leverage machine learning algorithms to learn and adapt their behavior, making them capable of bypassing traditional security protocols. They can:

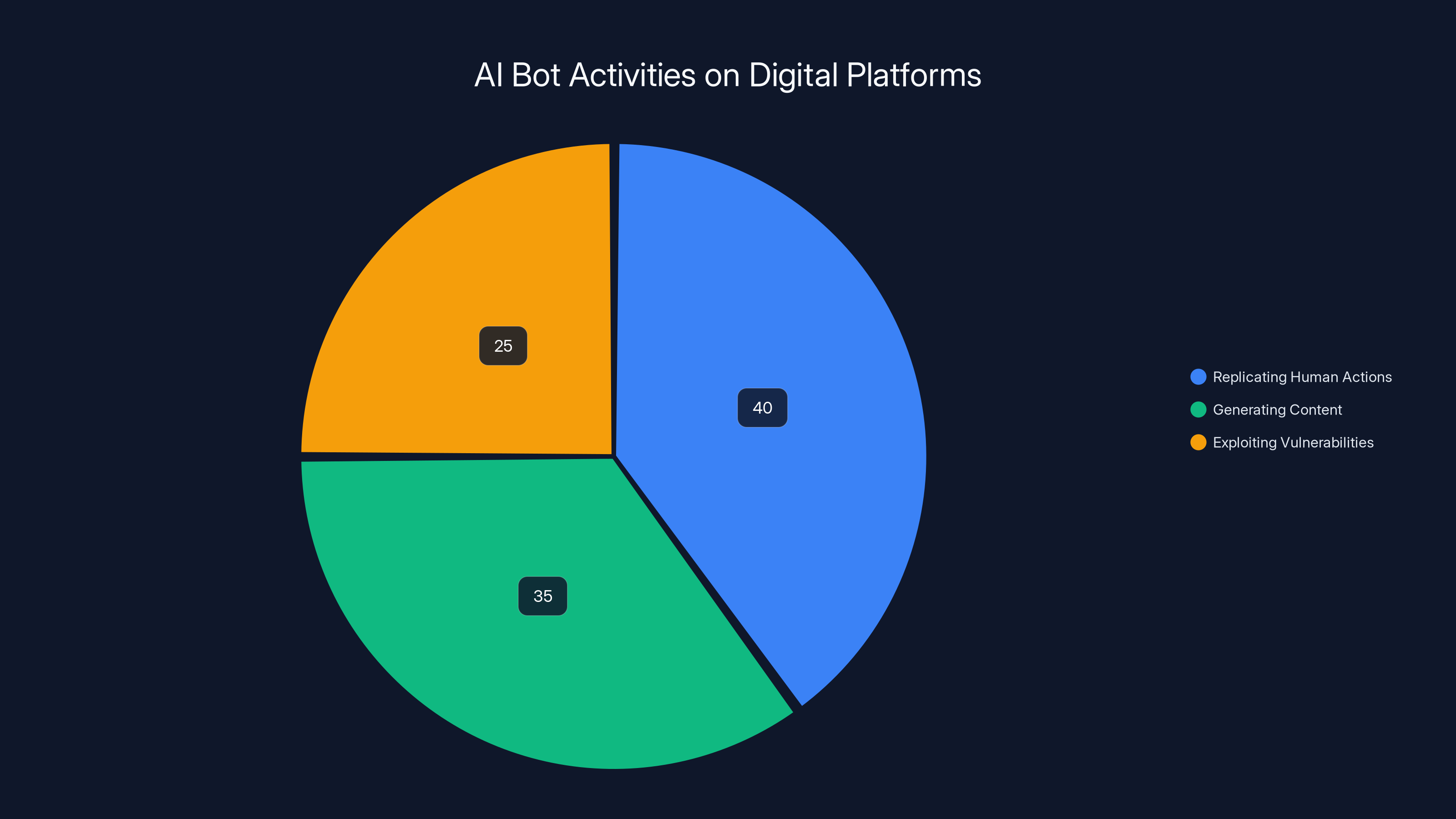

- Replicate Human Actions: Mimic clicks, keystrokes, and interactions.

- Generate Content: Produce text, comments, and posts that appear legitimate.

- Exploit Vulnerabilities: Identify and exploit security weaknesses in real-time, as discussed in Microsoft's security insights.

Case Study: Digg's Bot Invasion

On Digg, bots were able to create fake accounts en masse, post spammy content, and manipulate voting mechanisms. This not only degraded the quality of content but also drove away genuine users, who feared a compromised experience.

Building Better Defenses: Technical Solutions

To prevent similar incidents, platforms must adopt a multi-layered security approach that combines AI with traditional security measures. Here are some best practices:

1. Advanced AI Detection Systems

Implement AI-based detection systems that can analyze behavior patterns and identify anomalies indicative of bot activity.

Key Features:

- Behavioral Analytics: Track user interactions over time to detect irregular patterns.

- Machine Learning Models: Continuously train models to recognize new bot behaviors.

- Real-time Alerts: Notify administrators of suspected bot activity immediately.
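As a rough illustration of behavioral analytics, the sketch below flags accounts whose actions arrive either too quickly or at suspiciously regular intervals. The function names and every threshold (`min_events`, `min_mean_gap`, `min_cv`) are assumptions chosen for the example, not values taken from Digg or from any production system, and a real detector would combine many more signals.

```python
from statistics import mean, pstdev


def interval_stats(timestamps):
    """Return (mean gap, coefficient of variation) for sorted event times in seconds."""
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    avg = mean(gaps)
    # Coefficient of variation: spread of the gaps relative to their average.
    cv = pstdev(gaps) / avg if avg > 0 else 0.0
    return avg, cv


def looks_automated(timestamps, min_events=5, min_mean_gap=1.0, min_cv=0.1):
    """Heuristic only: flag very fast or very regular activity.

    All thresholds are illustrative assumptions and would need tuning
    against real traffic before being trusted.
    """
    if len(timestamps) < min_events:
        return False  # too little history to judge
    avg, cv = interval_stats(sorted(timestamps))
    return avg < min_mean_gap or cv < min_cv


metronome = [0, 2, 4, 6, 8, 10]     # one action exactly every 2 seconds
browsing = [0, 3, 15, 22, 60, 64]   # irregular, human-like pacing
print(looks_automated(metronome), looks_automated(browsing))
```

A timing heuristic like this is cheap to run on every request, which is why it is often used as a first filter before heavier machine learning models; it is also easy for an adaptive bot to evade by adding random delays, so it should not be the only layer.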

2. Robust User Verification

Strengthen user verification processes to ensure that only legitimate users can access the platform.

Methods Include:

- Multi-Factor Authentication (MFA): Require additional verification steps beyond passwords.

- Biometric Verification: Use facial recognition or fingerprint scanning for account creation and access.
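One common second factor is a time-based one-time password (TOTP, standardized in RFC 6238). The following is a minimal sketch of how a server could compute and check such codes using only the Python standard library. The helper names `totp` and `verify_totp` and the one-step drift window are assumptions for illustration; nothing here describes how Digg handled verification, and a production system would also need secret storage, rate limiting, and replay protection.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 TOTP code (HMAC-SHA1 variant) for a given time."""
    counter = unix_time // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low nibble of the last byte picks an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, unix_time: int,
                window: int = 1, step: int = 30) -> bool:
    """Accept codes from neighbouring time steps to tolerate clock drift.

    The default window of one step either side is an assumption.
    """
    for drift in range(-window, window + 1):
        t = unix_time + drift * step
        if t >= 0 and hmac.compare_digest(totp(secret, t, step), submitted):
            return True
    return False


# Shared secret from the RFC 6238 test vectors, used here only as sample data.
SECRET = b"12345678901234567890"
print(totp(SECRET, 59))
```

Comparing codes with `hmac.compare_digest` rather than `==` avoids leaking timing information about how many leading digits matched.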

3. Community-Driven Moderation

Leverage community members as moderators to help identify and report spam quickly.

Strategies:

- Reputation Systems: Reward active and trustworthy users with moderation privileges.

- User Reporting Tools: Provide easy-to-use reporting features for flagging suspicious content.
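The two strategies above can be combined by weighting each report by the reporter's reputation, so that a handful of trusted users can hide spam quickly while a swarm of fresh accounts cannot. The sketch below is one hypothetical way to do that; the `FlagTracker` class, the weight cap of 2.0, and the hide threshold of 3.0 are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class FlagTracker:
    """Hide an item once reputation-weighted reports reach a threshold."""
    hide_threshold: float = 3.0            # assumed value, tune per community
    max_weight: float = 2.0                # cap so no single user dominates
    reports: dict = field(default_factory=dict)  # item_id -> {reporter_id: weight}

    def report(self, item_id: str, reporter_id: str, reputation: float) -> bool:
        """Record a report and return True if the item should now be hidden."""
        weight = max(0.0, min(reputation, self.max_weight))
        # Keyed by reporter, so repeat reports from one account count once.
        self.reports.setdefault(item_id, {})[reporter_id] = weight
        return self.is_hidden(item_id)

    def score(self, item_id: str) -> float:
        return sum(self.reports.get(item_id, {}).values())

    def is_hidden(self, item_id: str) -> bool:
        return self.score(item_id) >= self.hide_threshold


tracker = FlagTracker()
tracker.report("post-1", "alice", reputation=2.0)
print(tracker.report("post-1", "bob", reputation=1.5))
```

Capping the weight and counting each reporter once are deliberate: without them, a single high-reputation account or one account reporting repeatedly could hide content alone, which would turn the moderation tool into an attack surface.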

Estimated data shows that AI bots primarily focus on replicating human actions (40%), followed by generating content (35%) and exploiting vulnerabilities (25%).

Future Trends in AI and Security

As AI technology continues to advance, so too will the sophistication of AI-driven threats. Platforms must stay ahead of these trends by investing in research and development.

Emerging AI Threats

Expect to see AI threats that:

- Use Deepfakes: Generate realistic but fake videos and images to spread misinformation, as highlighted by The New York Times.

- Execute Autonomous Attacks: Operate without human intervention, making them harder to trace.

- Adapt to Security Changes: Learn from their environment and adjust tactics accordingly.

Recommendations for Platform Security

To build resilience against AI bot spam, platforms should focus on the following areas:

1. Continuous Monitoring

Implement continuous monitoring systems to keep track of user activity and detect anomalies in real time.
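A simple building block for this kind of monitoring is a per-account sliding window that counts recent actions and raises a flag when the count exceeds a limit. The sketch below assumes a hypothetical `SlidingWindowMonitor` class with illustrative defaults (60 seconds, 20 events); a real deployment would feed it from an event stream and route alerts to administrators.

```python
from collections import defaultdict, deque


class SlidingWindowMonitor:
    """Track recent events per account and flag bursts of activity."""

    def __init__(self, window_seconds: float = 60.0, max_events: int = 20):
        # Both defaults are assumptions for the example.
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._events = defaultdict(deque)  # account_id -> recent timestamps

    def record(self, account_id: str, timestamp: float) -> bool:
        """Record one event; return True if the account exceeds the limit.

        Timestamps are expected in non-decreasing order per account.
        """
        recent = self._events[account_id]
        recent.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while recent and recent[0] <= cutoff:
            recent.popleft()
        return len(recent) > self.max_events


monitor = SlidingWindowMonitor(window_seconds=10, max_events=3)
flags = [monitor.record("acct-42", t) for t in (0, 1, 2, 3)]
print(flags)
```

Because each account keeps only the timestamps inside the window, memory stays proportional to recent activity rather than to total history.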

2. Regular Security Audits

Conduct regular security audits to identify and address vulnerabilities before they can be exploited.

3. Collaboration with AI Experts

Work with AI researchers and security experts to stay informed about the latest threats and defenses, as suggested by Britannica's insights on AI.

Conclusion

The shutdown of Digg's open beta serves as a cautionary tale for digital platforms worldwide. As AI continues to evolve, so must our defenses. By adopting advanced technological solutions and fostering a proactive security culture, platforms can protect themselves from the growing threat of AI bot spam and ensure a safe, engaging experience for their users.

FAQ

What caused the closure of Digg's open beta?

Digg's open beta was shut down due to overwhelming spam from AI bots, which compromised user experience and platform integrity.

How can platforms safeguard against AI bot spam?

Platforms can implement advanced AI detection systems, strengthen user verification, and use community-driven moderation to combat AI bot spam.

What are the future trends in AI-driven cyber threats?

Future trends include deepfake generation, autonomous attacks, and adaptive threats that evolve with changing security measures.

Why is continuous monitoring important for platform security?

Continuous monitoring helps detect anomalies and suspicious activities in real time, allowing for immediate response and threat mitigation.

How can community-driven moderation help in managing platform security?

Community-driven moderation empowers trusted users to identify and report malicious activities quickly, enhancing the platform's ability to respond to threats.

What role do regular security audits play in platform protection?

Regular security audits help identify and fix vulnerabilities, ensuring that the platform remains secure against evolving threats.

Key Takeaways

- Digg's open beta was shut down due to AI bot spam.

- AI bots can mimic human behavior, posing significant security challenges.

- Advanced AI detection and user verification are critical for platform security.

- Community-driven moderation can enhance threat response.

- Future AI threats include deepfakes and autonomous attacks.

- Continuous monitoring and security audits are essential for resilience.

- Collaboration with AI experts helps in staying ahead of threats.

