YouTube's AI Deepfake Detection: A Comprehensive Guide [2025]

Deepfake technology is one of the most transformative and controversial developments in online media. As the line between real and AI-generated footage blurs, platforms like YouTube are stepping up to address the potential harms. This article examines YouTube's recent expansion of its AI deepfake detection tool to all adult users, covering how it works, the challenges it faces, and what it may mean going forward.

TL;DR

- YouTube expands AI deepfake detection to all adult users, enhancing content security, as reported by The Hollywood Reporter.

- AI technology identifies digital manipulations with improved accuracy.

- User guidelines and best practices help creators navigate the new tool.

- Challenges include potential false positives and ethical dilemmas.

- Future trends predict wider adoption and continuous technology refinement.

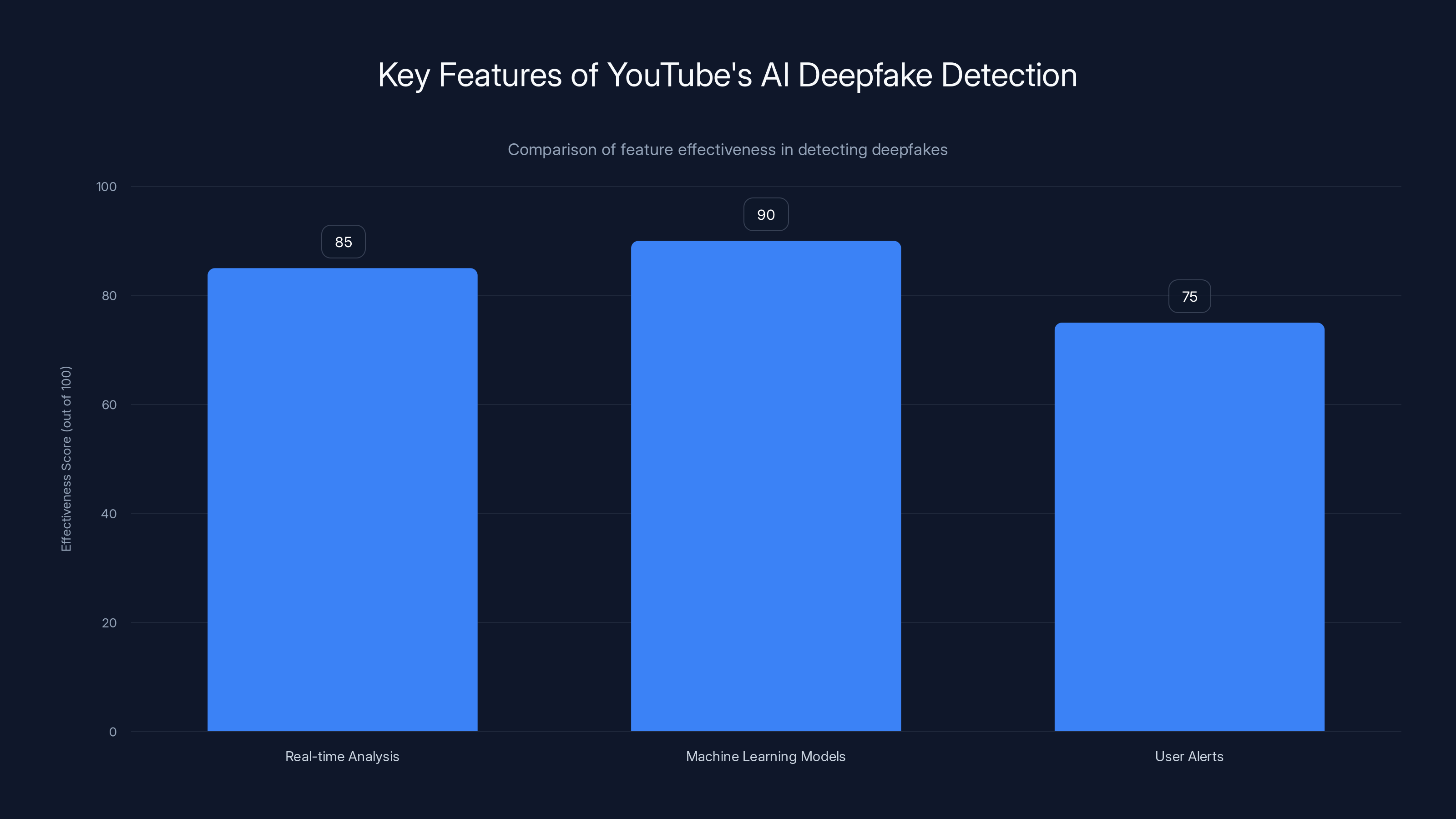

Machine learning models are rated the most effective feature in detecting deepfakes, followed by real-time analysis and user alerts. (Estimated data)

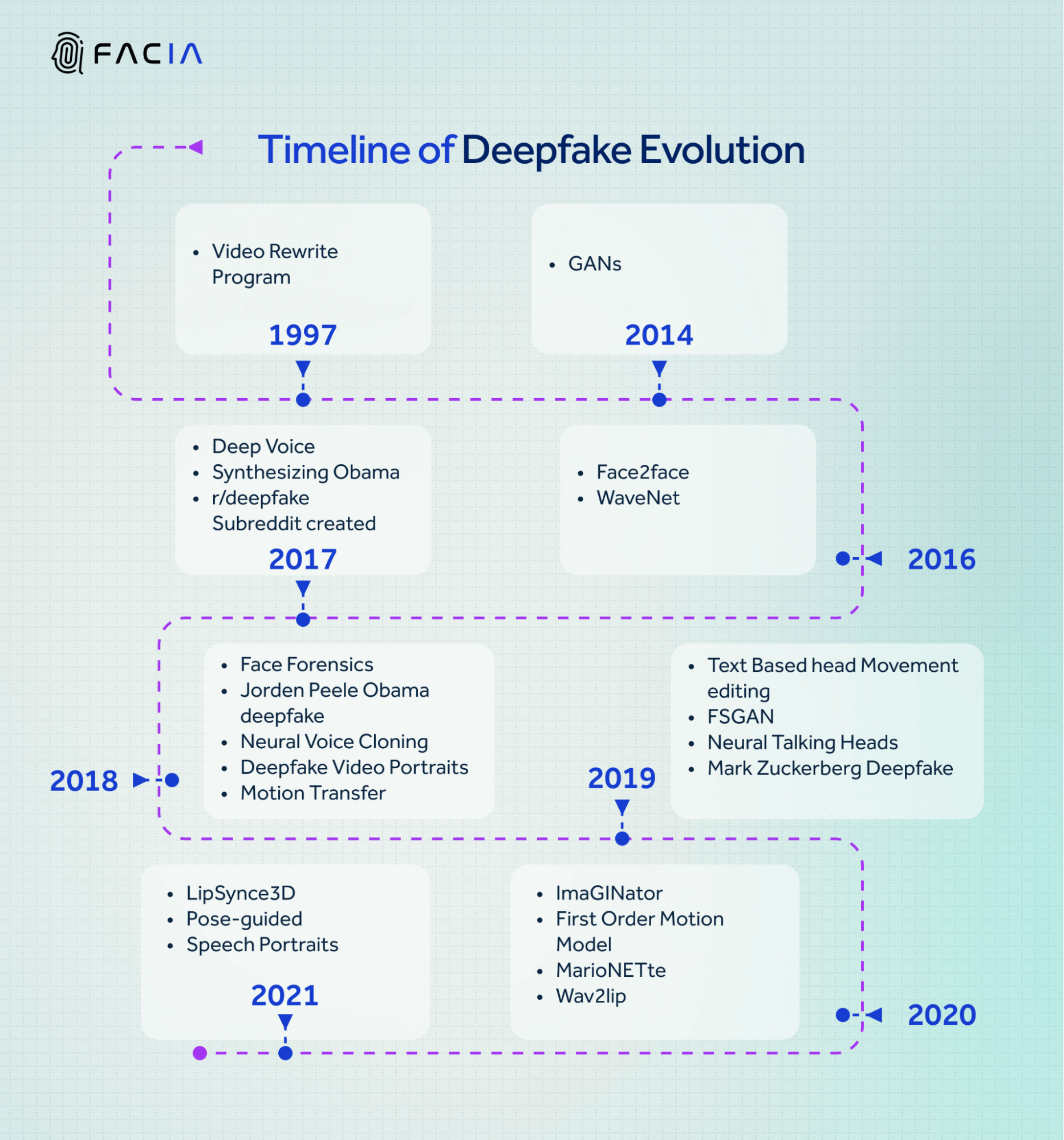

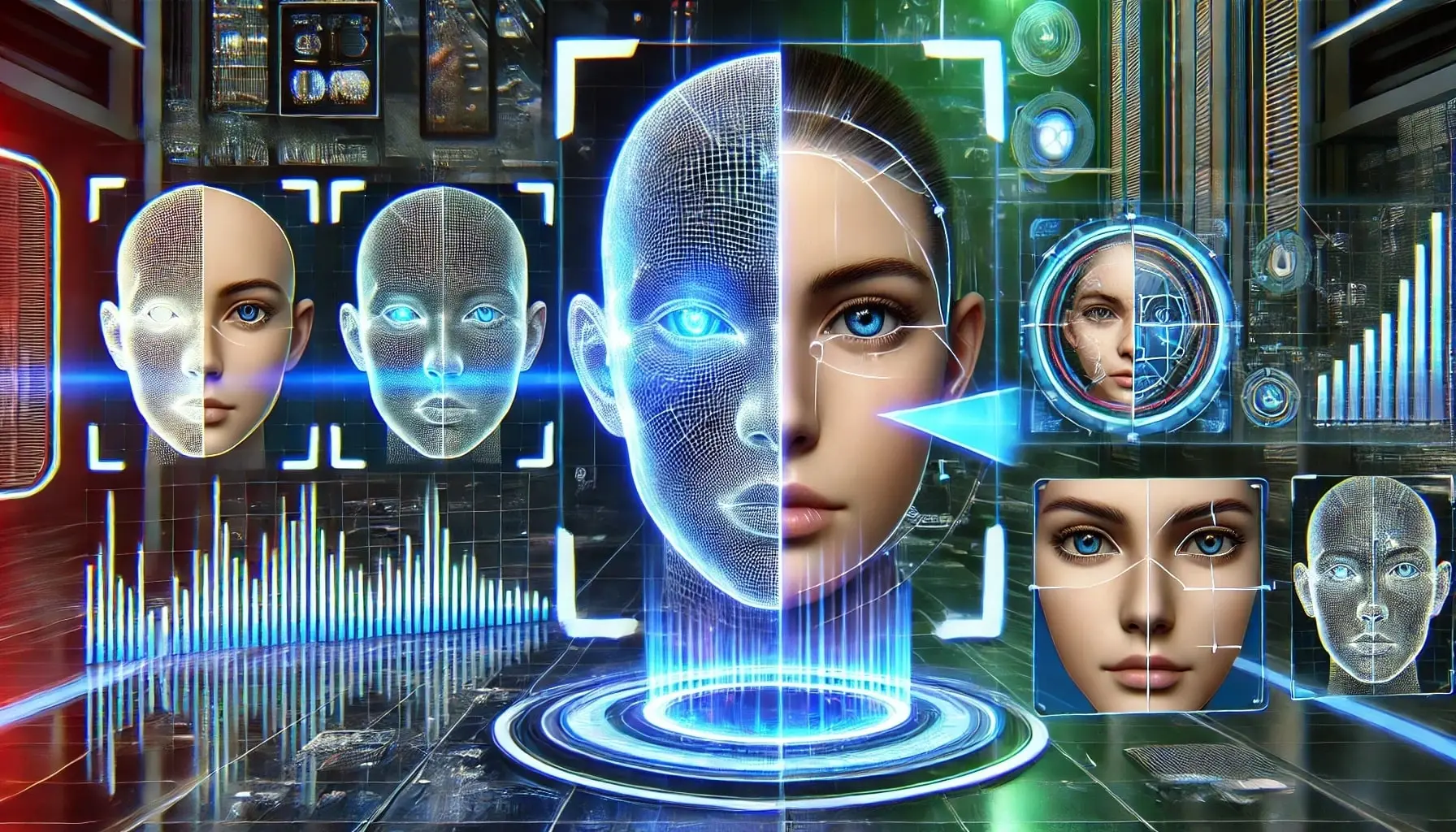

Understanding Deepfake Technology

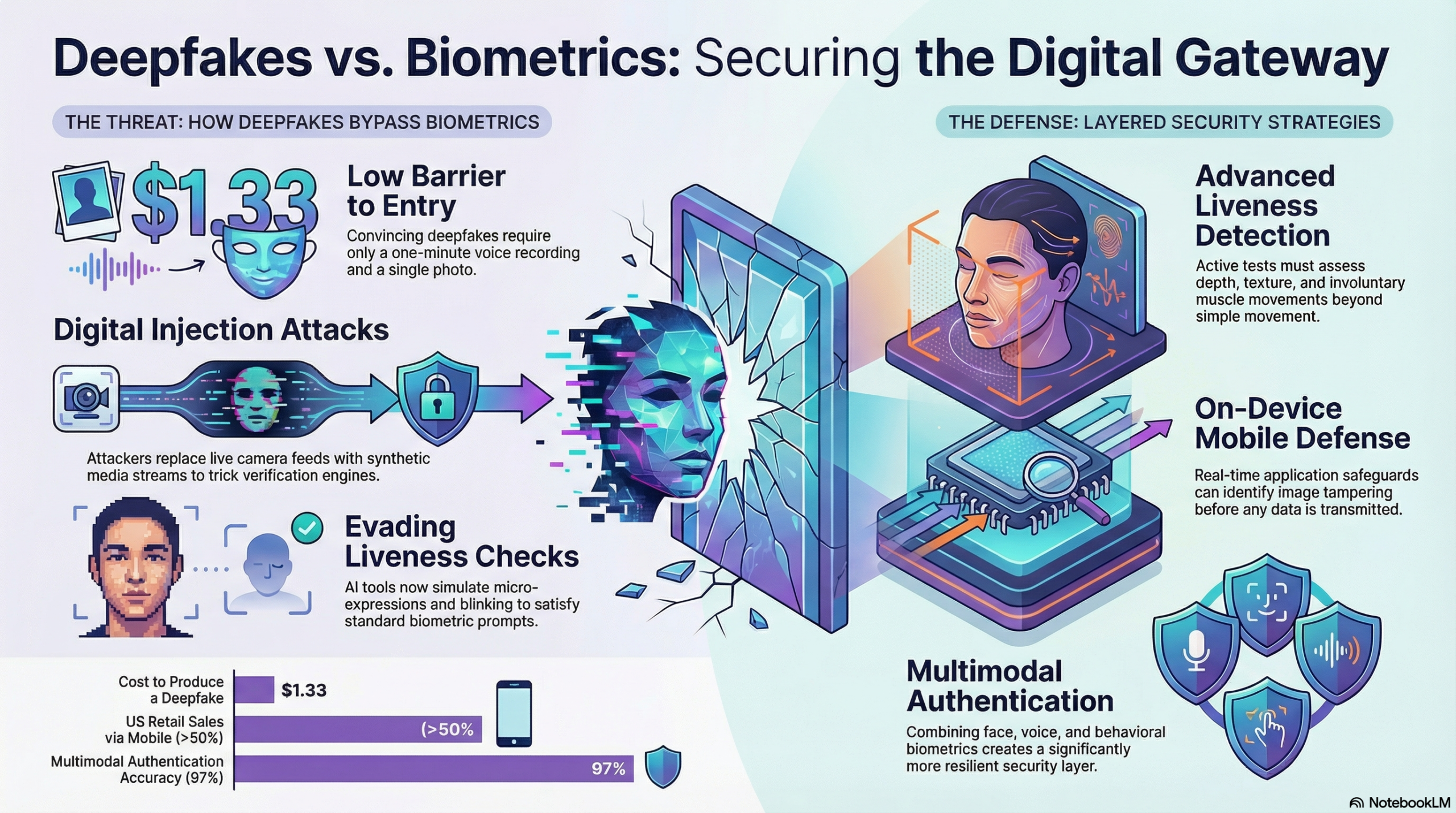

Deepfakes use artificial intelligence to create hyper-realistic videos in which individuals appear to say or do things they never actually said or did. The technology often relies on a type of neural network architecture called a generative adversarial network (GAN). A GAN consists of two parts: a generator that creates fake content and a discriminator that attempts to tell the fakes from real samples. Because the two are trained against each other, the generator's output becomes increasingly convincing over time.
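The generator-versus-discriminator contest described above is usually written as a minimax objective. The form below is the standard one from the original GAN formulation; the article does not say which GAN variant any particular deepfake tool uses, so treat it as a general illustration rather than a description of a specific system. Here $G$ is the generator, $D$ is the discriminator, $x$ is a real sample, and $z$ is random noise fed to the generator.

```latex
\min_{G} \max_{D} \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator tries to maximize $V$ by scoring real samples near 1 and generated samples near 0, while the generator tries to minimize it by producing samples the discriminator scores as real.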

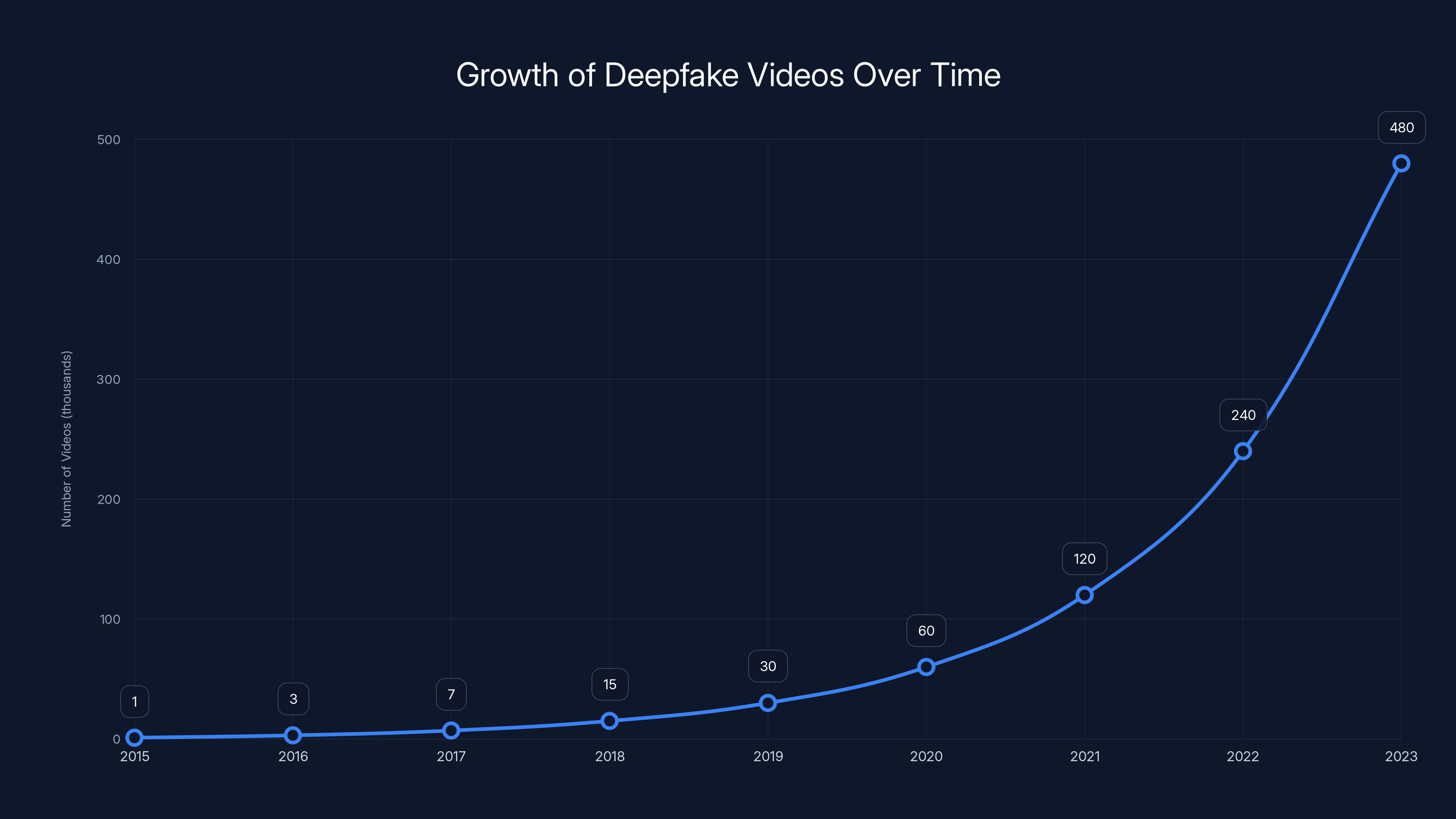

The Rise of Deepfakes

Initially a novelty, deepfakes have proliferated thanks to advances in AI and increased computing power. While they offer creative possibilities, their misuse poses significant threats, from misinformation and identity theft to reputational damage, as highlighted by Prospect.

YouTube's Response

YouTube recognizes the double-edged nature of deepfake technology and has taken steps to curb its potential misuse. By expanding its AI deepfake detection tool to all adult users, YouTube aims to maintain trust and safety on its platform, as detailed by Music Business Worldwide.

The number of deepfake videos has grown exponentially from 2015 to 2023, driven by advances in AI and computing power. (Estimated data)

How YouTube's AI Deepfake Detection Works

YouTube's detection tool uses algorithms designed to analyze video content for signs of manipulation. It looks for visual artifacts, mismatches between audio and video, and unnatural movements that often characterize deepfakes, as explained in The Hollywood Reporter.

Key Features

- Real-time Analysis: Scans videos as they are uploaded, providing immediate feedback.

- Machine Learning Models: Trained on vast datasets to identify subtle manipulations.

- User Alerts: Notifies users of potential deepfakes, allowing them to make informed decisions about content.
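To make the idea of combining several manipulation signals concrete, here is a minimal Python sketch. It is not YouTube's implementation, which has not been published in this detail. The names `SignalScores`, `WEIGHTS`, and `FLAG_THRESHOLD`, along with the weights and the threshold value, are all hypothetical and chosen only for illustration. The sketch assumes upstream models have already produced a score between 0 and 1 for each signal.

```python
from dataclasses import dataclass

# Hypothetical weights and threshold, chosen only for illustration.
WEIGHTS = {
    "visual_artifacts": 0.5,
    "audio_mismatch": 0.3,
    "unnatural_motion": 0.2,
}
FLAG_THRESHOLD = 0.6


@dataclass
class SignalScores:
    """Per-signal manipulation scores in [0, 1] from assumed upstream models."""
    visual_artifacts: float
    audio_mismatch: float
    unnatural_motion: float


def combined_score(scores: SignalScores) -> float:
    """Weighted sum of the three signal scores (weights sum to 1)."""
    return sum(weight * getattr(scores, name) for name, weight in WEIGHTS.items())


def should_flag(scores: SignalScores, threshold: float = FLAG_THRESHOLD) -> bool:
    """Flag the video for review when the combined score reaches the threshold."""
    return combined_score(scores) >= threshold


if __name__ == "__main__":
    suspicious = SignalScores(visual_artifacts=0.9, audio_mismatch=0.7, unnatural_motion=0.4)
    ordinary = SignalScores(visual_artifacts=0.1, audio_mismatch=0.2, unnatural_motion=0.1)
    print(should_flag(suspicious), should_flag(ordinary))
```

A weighted sum is only one simple way to fuse signals. A production system would more likely learn the combination from labeled data, and the choice of threshold directly trades missed deepfakes against false positives.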

Practical Implementation: Best Practices

To get the most out of YouTube's AI tool, follow these best practices:

- Regular Updates: Keep abreast of updates and advancements in AI detection capabilities.

- User Education: Educate your audience on how to spot deepfakes and report suspicious content.

- Policy Compliance: Ensure all content complies with YouTube's community guidelines to avoid penalties.

Common Pitfalls

While AI tools are powerful, they are not infallible. Users may encounter false positives, where legitimate content is wrongly flagged. To address them:

- Verify Content Manually: Double-check flagged videos to confirm results.

- Use the Appeal Process: Use YouTube's appeal mechanisms if you believe content has been incorrectly flagged.
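A short calculation shows why false positives matter even for an accurate detector. The Python sketch below uses made-up numbers (upload volume, share of deepfakes, detection rate, false positive rate) that do not come from YouTube or any cited source. The function name `flag_breakdown` is hypothetical.

```python
def flag_breakdown(total_videos, deepfake_rate, detection_rate, false_positive_rate):
    """Return (true positives, false positives, precision) for a detector.

    All inputs are illustrative assumptions, not measured values.
    """
    deepfakes = total_videos * deepfake_rate
    genuine = total_videos - deepfakes
    true_positives = deepfakes * detection_rate
    false_positives = genuine * false_positive_rate
    precision = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, precision


if __name__ == "__main__":
    # Assumed scenario: 1,000,000 uploads, 0.1% are deepfakes,
    # the detector catches 95% of them and wrongly flags 1% of genuine videos.
    tp, fp, precision = flag_breakdown(1_000_000, 0.001, 0.95, 0.01)
    print(f"correct flags: {tp:.0f}, wrong flags: {fp:.0f}, precision: {precision:.1%}")
```

Under these assumed numbers, wrongly flagged genuine videos outnumber correctly flagged deepfakes by roughly ten to one, because genuine uploads vastly outnumber deepfakes. That is why manual verification and an appeal process remain important.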

The sophistication of deepfake technology poses the greatest challenge for AI detection tools, followed by false positives and privacy concerns. (Estimated data)

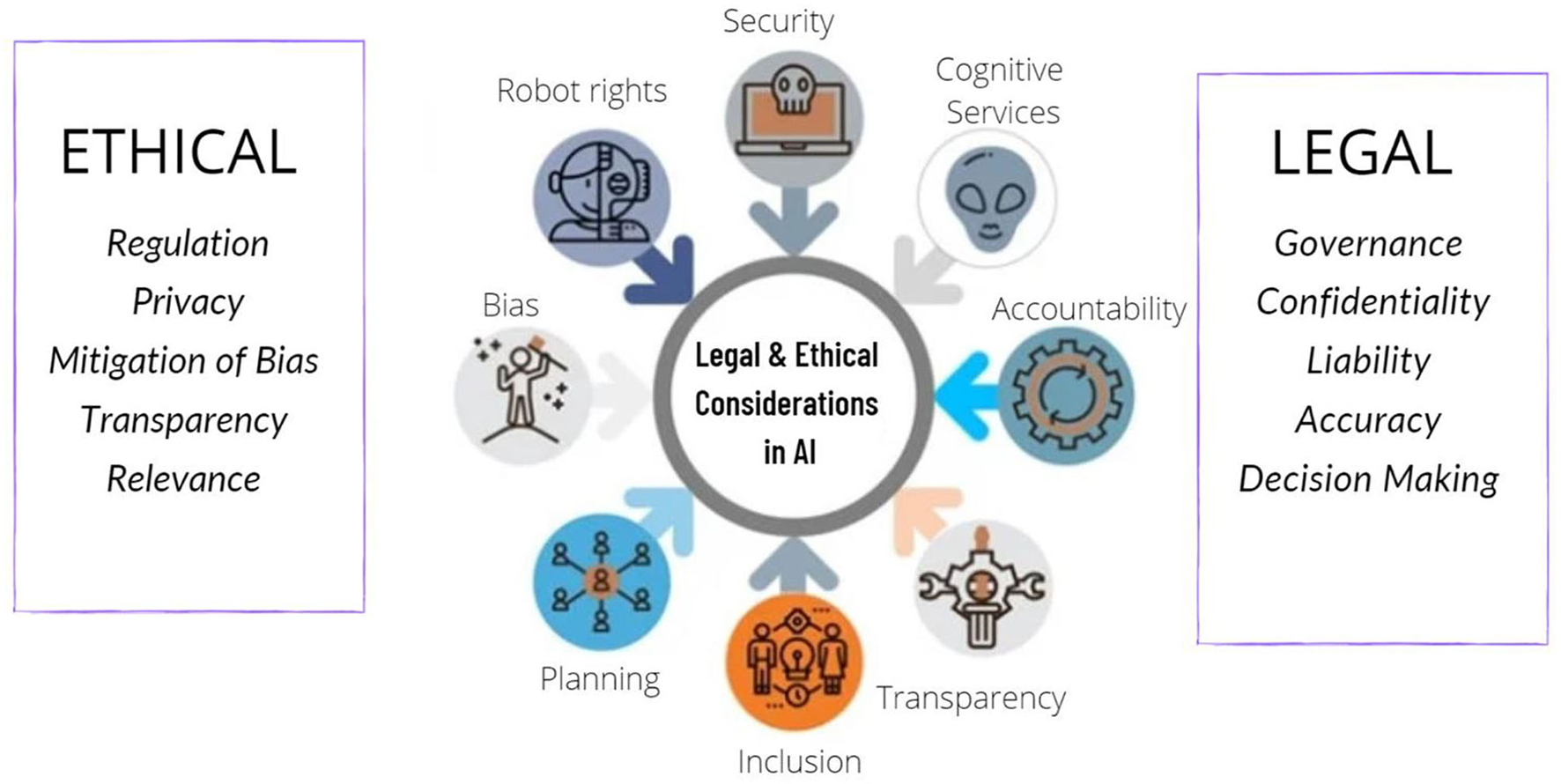

Ethical Considerations

The expansion of AI detection tools raises ethical questions about privacy, censorship, and the potential for overreach. Balancing these concerns with the need for safety and accuracy is critical, as discussed by CISO Series.

User Privacy

- Data Handling: Ensure transparency in how user data is used and protected.

- Informed Consent: Notify users about detection processes and obtain consent where necessary.

Future Trends and Recommendations

Looking ahead, AI deepfake detection will likely see broader adoption across digital platforms. Here are some predicted trends:

- Increased Accuracy: Ongoing advancements in AI will improve detection accuracy and reduce false positives, as noted by eMarketer.

- Cross-Platform Integration: Collaboration between platforms could lead to shared databases and resources for identifying deepfakes.

- User Empowerment: New tools could help users create more secure content environments.

Recommendations for Content Creators

- Stay Informed: Keep up with the latest developments in AI technology and platform policies.

- Engage with Communities: Participate in discussions around ethical AI use and help shape best practices.

Conclusion

YouTube's expansion of its AI deepfake detection tool marks a significant step in the ongoing battle against misinformation and digital manipulation. By understanding the technology, adhering to best practices, and considering ethical implications, users can navigate this complex landscape with confidence.

FAQ

What is a deepfake?

A deepfake is a piece of synthetic media in which a person in an existing image or video is replaced with someone else's likeness using artificial intelligence.

How does YouTube's AI detection tool work?

The tool analyzes videos for inconsistencies in visual and audio cues, using machine learning models trained on extensive datasets.

What are the benefits of using AI detection tools?

Benefits include enhanced content security, reduced spread of misinformation, and increased trust in online platforms.

What challenges does AI deepfake detection face?

Challenges include false positives, ethical concerns over privacy, and the evolving sophistication of deepfake technology.

How can users protect themselves from deepfakes?

Users can protect themselves by staying informed about AI developments, using detection tools, and critically evaluating content.

What is the future of AI deepfake detection?

The future includes improved detection accuracy, cross-platform collaboration, and increased user empowerment in content security.

Key Takeaways

- YouTube's AI detection tool helps combat misinformation by identifying deepfakes.

- Users must stay informed about updates and use best practices to maximize effectiveness.

- Ethical concerns about privacy and censorship need careful consideration.

- Future trends suggest improved accuracy and cross-platform integration.

- Content creators should engage with evolving AI technologies and best practices.


