A Roadmap for Responsible AI Development [2025]

Artificial Intelligence (AI) has rapidly evolved from a niche interest to a cornerstone of modern technology, influencing everything from healthcare to transportation. However, this rapid pace has outstripped the development of comprehensive rules and ethical guidelines. With AI's potential to transform society comes a significant responsibility to ensure that its development and deployment are both safe and beneficial. This article outlines a roadmap for responsible AI development, offering practical steps, common pitfalls, and future trends.

TL;DR

- Ethical Guidelines: Establish clear ethical standards to guide AI development and deployment.

- Technical Standards: Implement robust technical frameworks to ensure AI reliability and safety.

- Collaborative Governance: Foster global cooperation to create inclusive AI policies.

- Transparency and Accountability: Ensure AI systems are transparent and developers are accountable.

- Continuous Monitoring: Regularly evaluate AI systems to address emerging challenges.

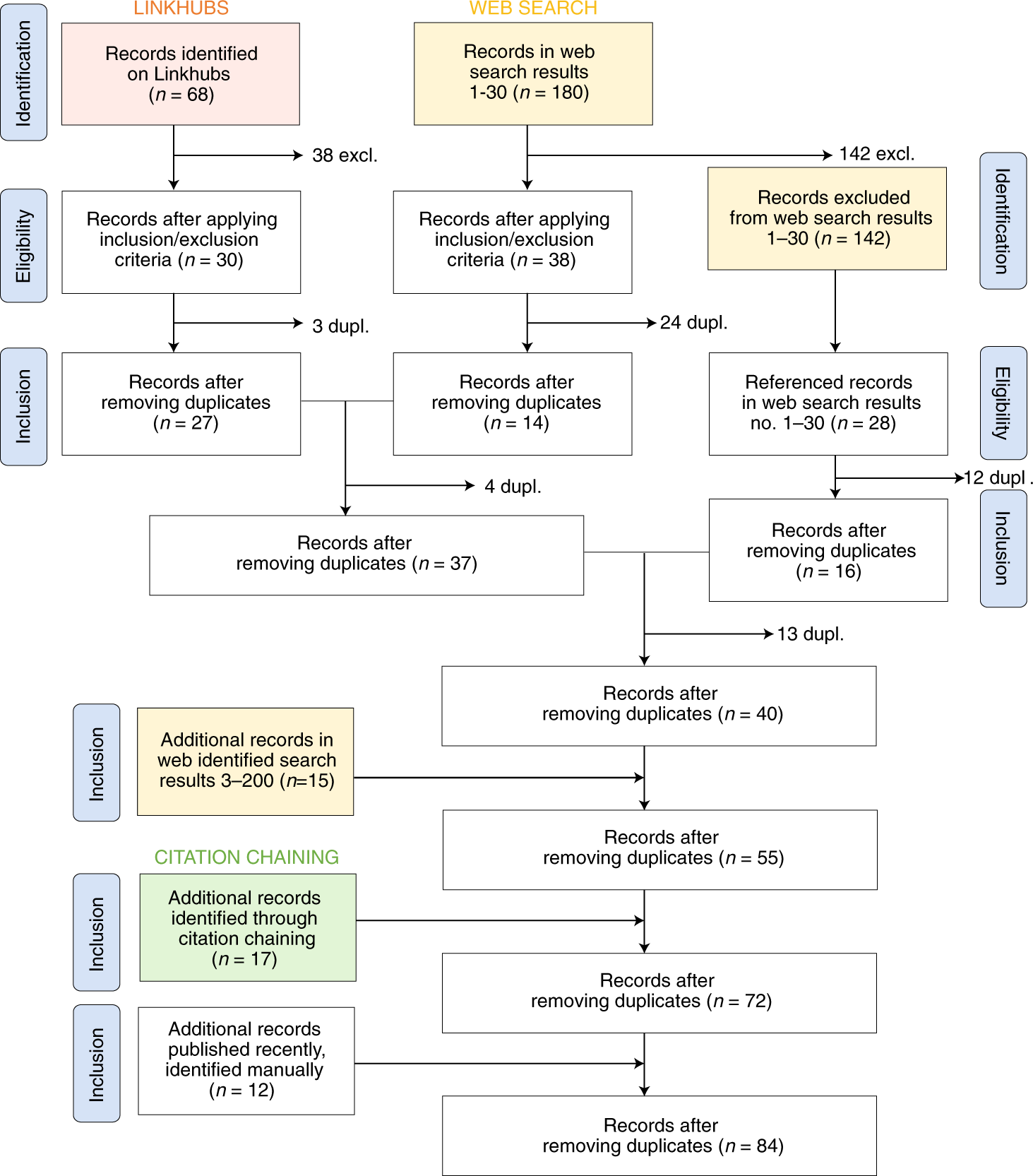

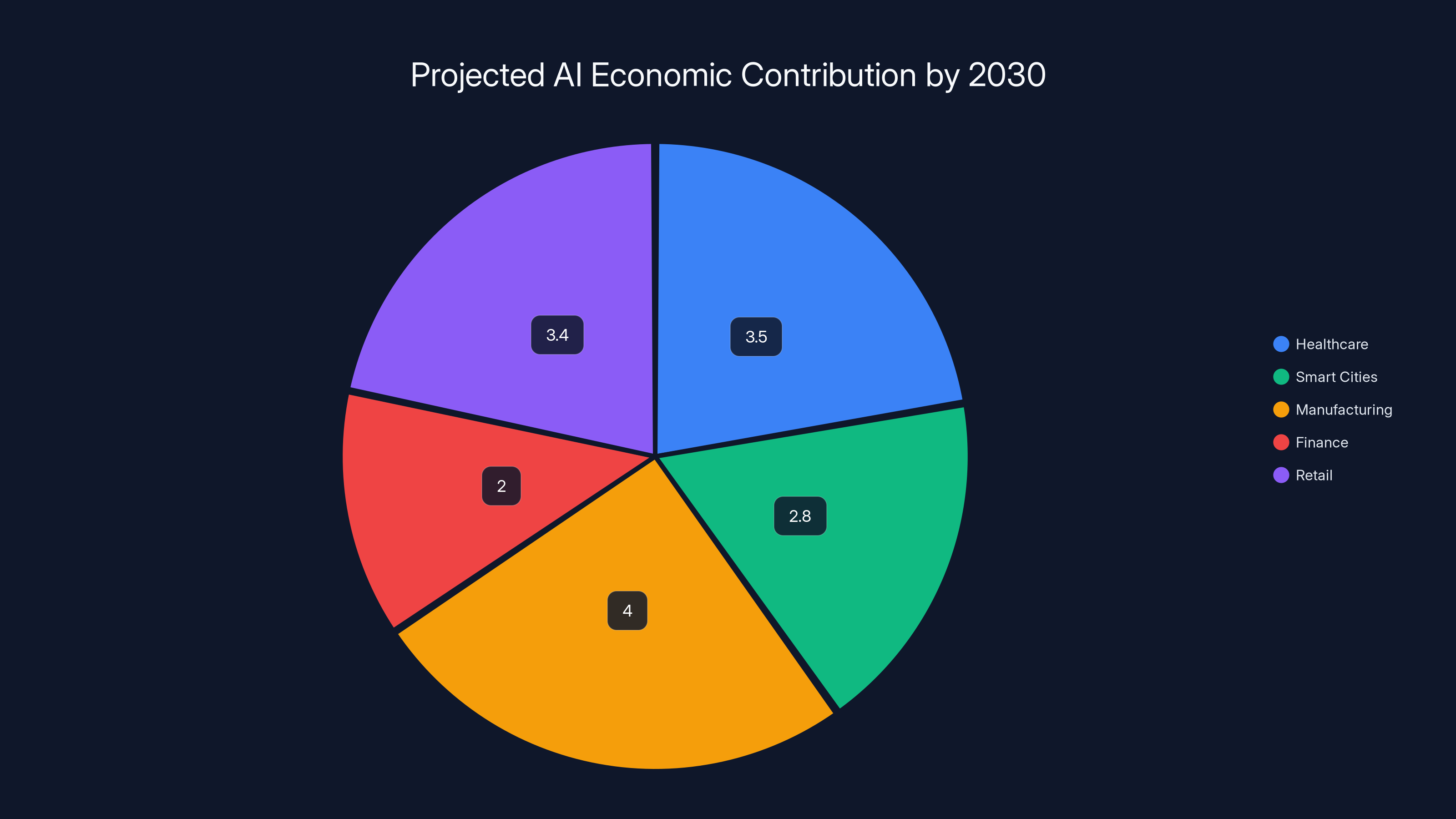

AI is projected to significantly boost the global economy by 2030, with major contributions across various sectors. Estimated data based on AI trends.

The Necessity for a Roadmap

AI's rapid integration into various sectors presents both opportunities and risks. The absence of a coherent framework raises concerns about ethical dilemmas, safety, and societal impact. The Pro-Human Declaration, a collective effort by experts and public figures, highlights the urgent need for structured guidelines. This roadmap addresses these concerns by outlining the essential components for responsible AI development.

Ethical Guidelines

Ethics must be at the forefront of AI development. Establishing a universal set of ethical guidelines ensures that AI systems are aligned with human values and rights. These guidelines should include:

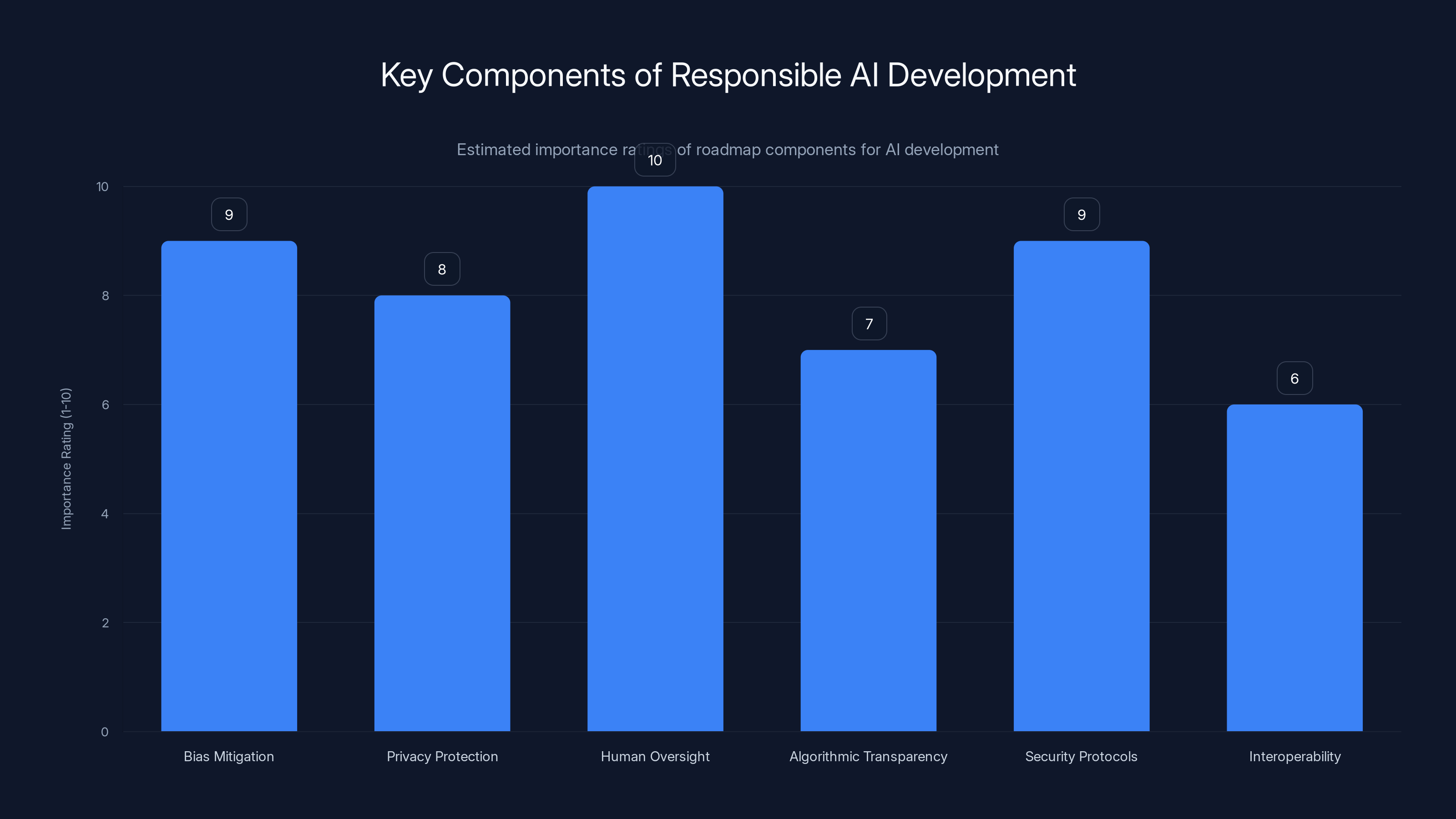

- Bias Mitigation: Implement strategies to identify and reduce biases in AI algorithms, as outlined in The AI Bias Playbook.

- Privacy Protection: Ensure AI systems respect user privacy and data rights.

- Human Oversight: Maintain human control over critical decisions made by AI.
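One way to make the human-oversight guideline concrete is a routing rule that sends critical or low-confidence outputs to a person before anything is acted on. The following is a minimal sketch under stated assumptions: the `route_decision` function, the `Decision` type, and the 0.9 threshold are hypothetical names and values chosen for illustration, not part of any standard or library.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    label: str
    confidence: float
    needs_human_review: bool


def route_decision(label: str, confidence: float,
                   threshold: float = 0.9, critical: bool = False) -> Decision:
    """Flag a model output for human review.

    Assumption: the model reports a confidence in [0, 1]. Outputs marked
    critical, or with confidence below the threshold, are never auto-applied.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    needs_review = critical or confidence < threshold
    return Decision(label, confidence, needs_review)


if __name__ == "__main__":
    print(route_decision("approve", 0.95))
    print(route_decision("approve", 0.62))
    print(route_decision("approve", 0.99, critical=True))
```

The threshold is a policy choice rather than a technical constant; in practice it would be set and revisited by whoever is accountable for the decision.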

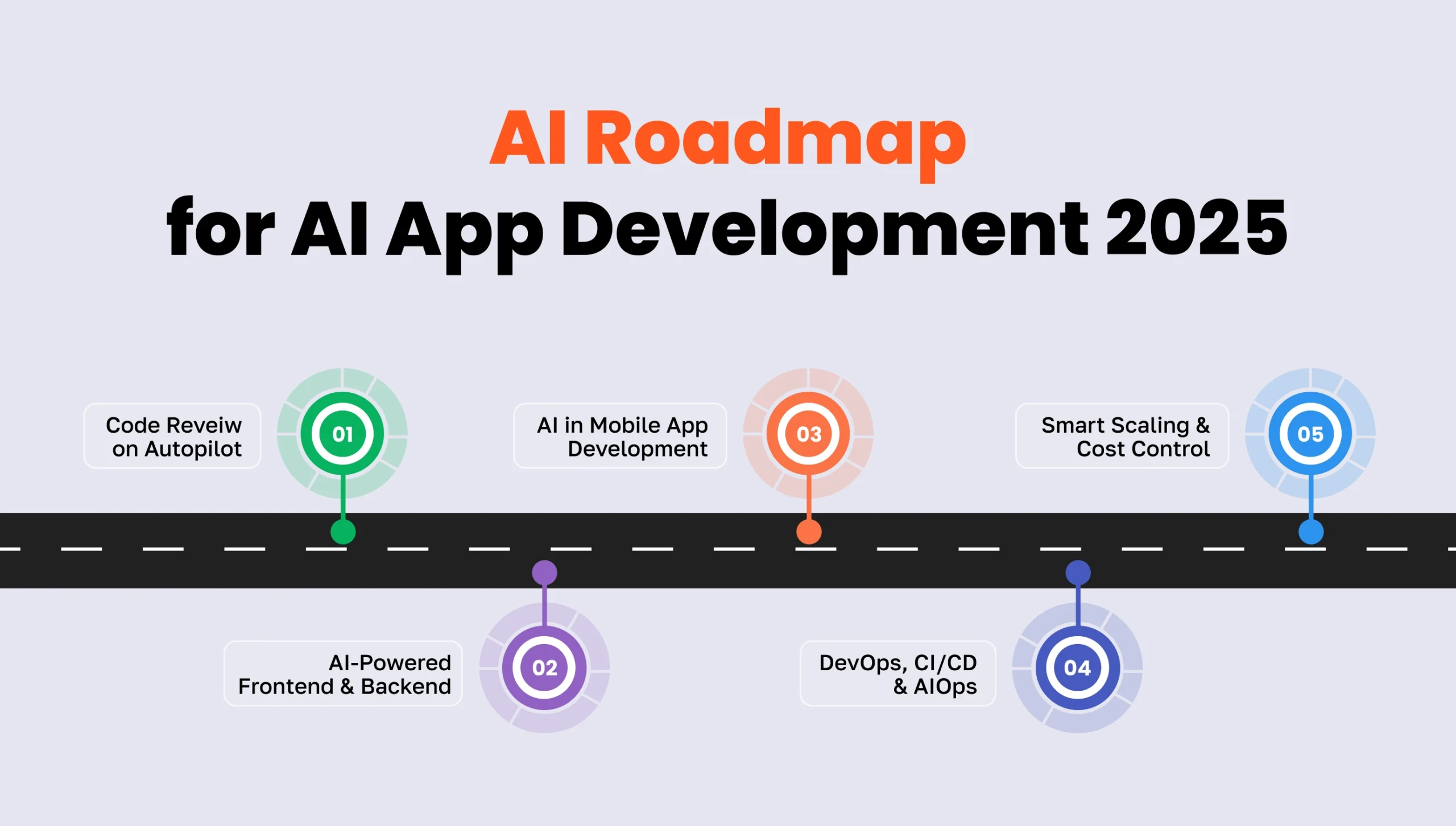

Technical Standards

Robust technical standards are vital to create reliable and safe AI systems. Developers should focus on:

- Algorithmic Transparency: Design algorithms that are understandable and explainable, as emphasized by the NIST AI Agent Standards Initiative.

- Security Protocols: Implement strong security measures to prevent misuse and breaches.

- Interoperability: Ensure AI systems can work seamlessly with existing technologies.
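As a rough illustration of algorithmic transparency, a simple linear scoring model can report how much each input contributed to its result. The sketch below is only an example under assumptions: the function name `explain_linear_score` and the feature names are hypothetical, and more complex models generally need dedicated explanation techniques.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Return a linear score and its per-feature contributions.

    Each contribution is weight * feature value, so the score can be
    traced back to its inputs. Features missing from the input count as 0.
    """
    contributions = {
        name: weights[name] * features.get(name, 0.0) for name in weights
    }
    score = bias + sum(contributions.values())
    # Largest absolute contribution first, so the main drivers are visible.
    ranked = sorted(contributions.items(),
                    key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


if __name__ == "__main__":
    # Hypothetical weights and inputs, for illustration only.
    weights = {"income": 0.5, "debt": -1.0}
    features = {"income": 4.0, "debt": 1.5}
    score, ranked = explain_linear_score(weights, features, bias=0.25)
    print("score:", score)
    for name, value in ranked:
        print(f"{name}: {value:+.2f}")
```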

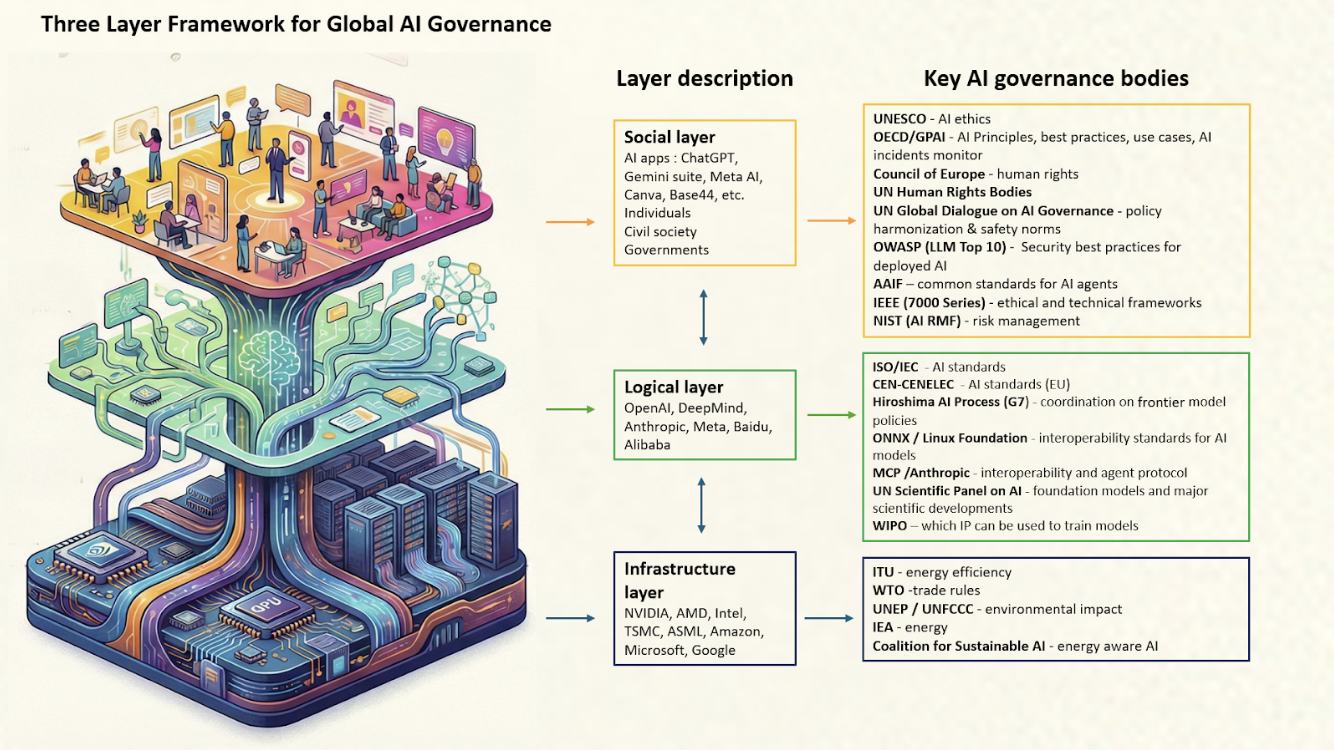

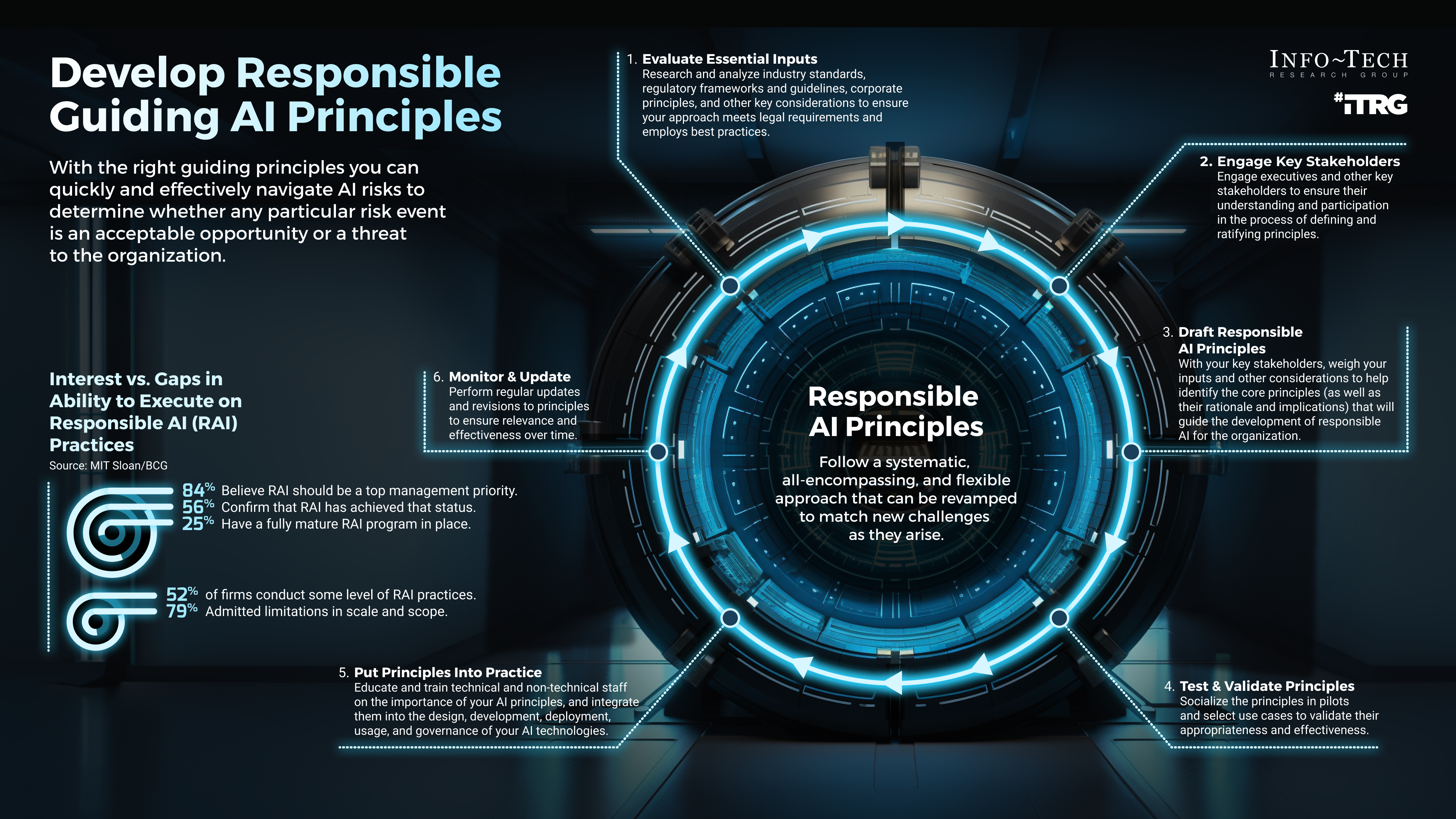

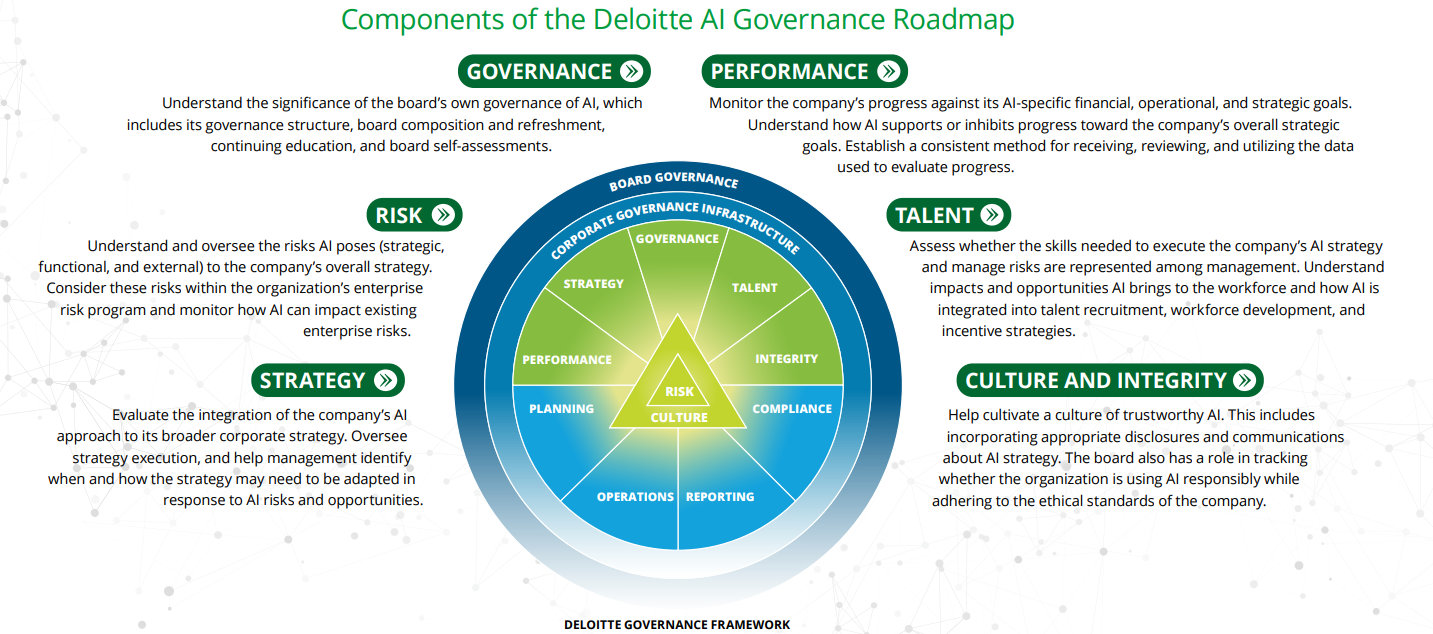

Collaborative Governance

AI governance requires a collaborative approach involving governments, industry leaders, and academia. This includes:

- Global Cooperation: Develop international agreements to standardize AI policies, as discussed in the UNDP blog on responsible AI governance.

- Public Engagement: Involve diverse stakeholders in policy-making processes.

- Regulatory Frameworks: Create adaptive regulations that evolve with technological advancements.

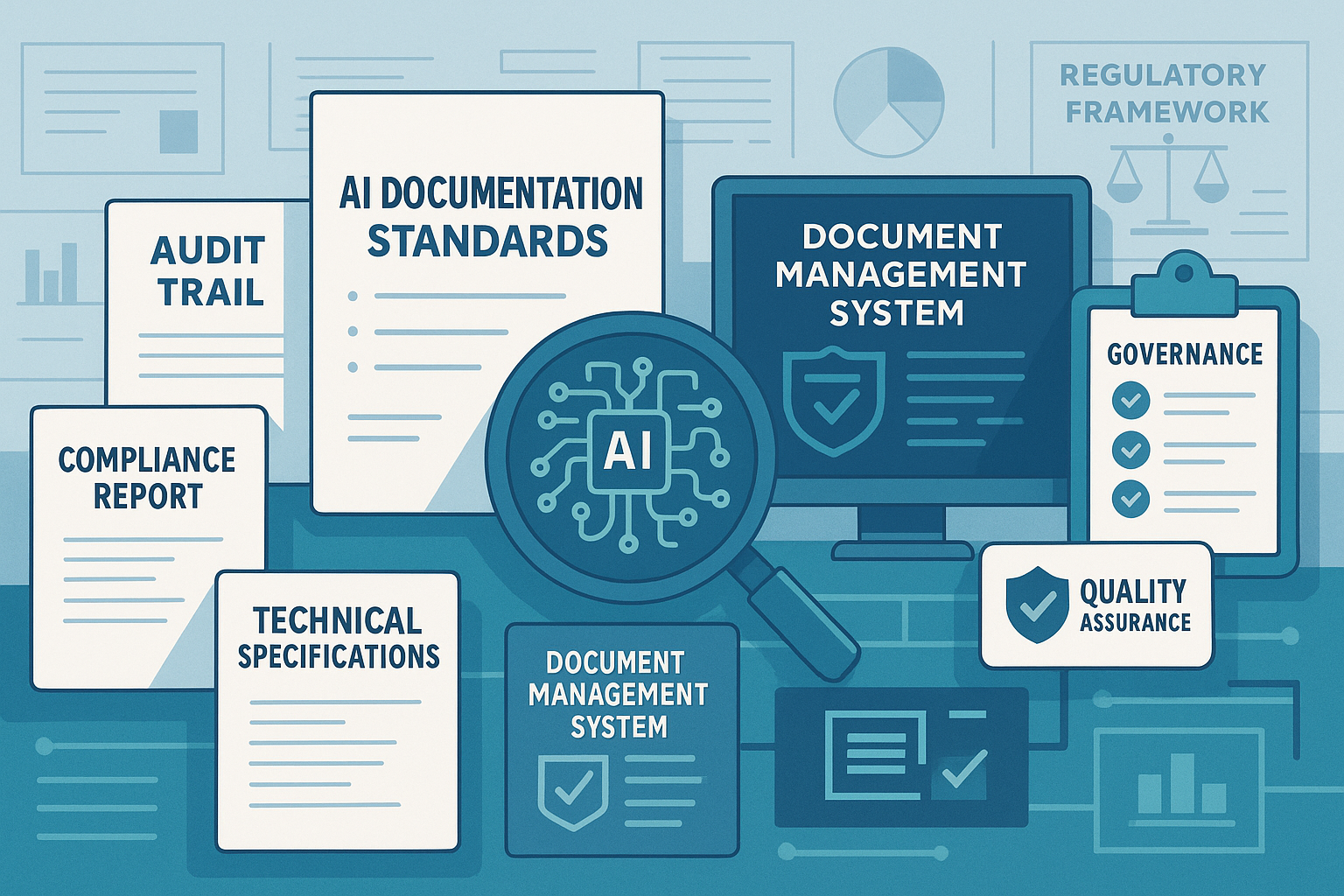

Transparency and Accountability

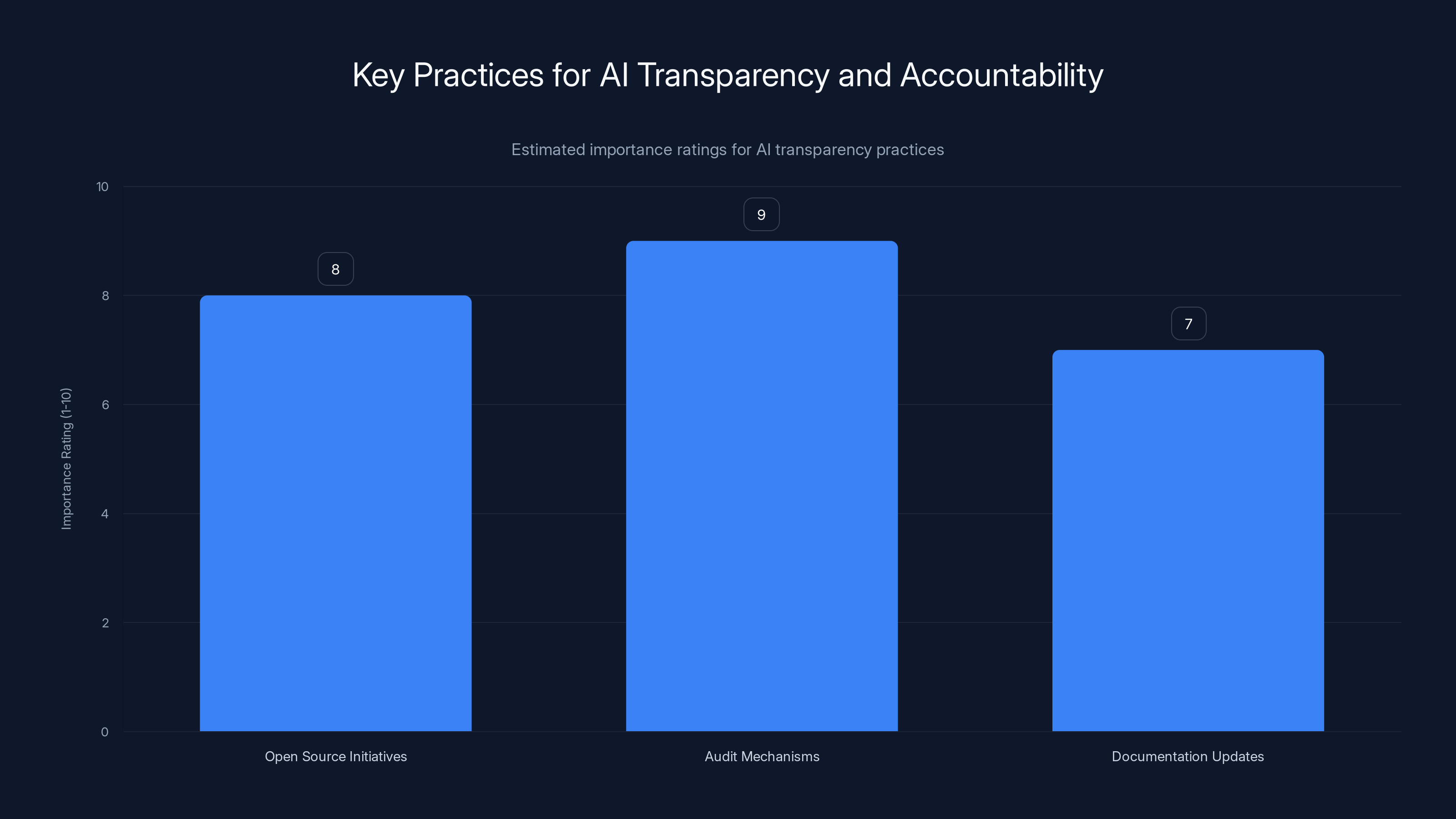

Ensuring transparency and accountability in AI systems builds trust and promotes ethical use. Key practices include:

- Open Source Initiatives: Encourage open-source development to foster innovation and transparency, as highlighted by the Tony Blair Institute for Global Change.

- Audit Mechanisms: Implement regular audits to evaluate AI system performance and compliance.
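Audits are easier when the record of AI decisions cannot be quietly altered. One possible approach, sketched here as an assumption rather than a prescribed method, is a hash-chained log in which each entry stores the hash of the previous one. The helper names `append_entry` and `verify_log` and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _entry_hash(prev_hash, event):
    # Serialize with sorted keys so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev_hash": prev_hash,
                "hash": _entry_hash(prev_hash, event)})
    return log


def verify_log(log):
    """Return True only if no entry was edited, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, {"model": "m1", "decision": "approve"})
    append_entry(log, {"model": "m1", "decision": "deny"})
    print(verify_log(log))
    log[0]["event"]["decision"] = "deny"  # simulate tampering
    print(verify_log(log))
```

A chain like this only detects changes to earlier entries. It does not stop someone from rewriting the whole log, so an auditor would still need an independently stored copy of the latest hash.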

Human oversight and bias mitigation are rated as the most critical components in the roadmap for responsible AI development. Estimated data.

Continuous Monitoring

AI systems must be continuously monitored to address emerging challenges and adapt to new developments. This involves:

- Performance Evaluation: Regularly assess AI systems for accuracy and bias.

- Feedback Loops: Create mechanisms for user feedback to improve AI functionality.

- Adaptability: Design AI systems that can evolve with changing requirements.
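The performance-evaluation step can be approximated with a rolling check over recent predictions. This is a minimal sketch, assuming ground-truth labels eventually become available for comparison; the class name `RollingAccuracyMonitor`, the window size, and the accuracy floor are illustrative choices, not recommended values.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent predictions."""

    def __init__(self, window_size=100, min_accuracy=0.9):
        if window_size <= 0:
            raise ValueError("window_size must be positive")
        # deque with maxlen drops the oldest outcome automatically.
        self.outcomes = deque(maxlen=window_size)
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        if not self.outcomes:
            return None  # nothing recorded yet
        return sum(self.outcomes) / len(self.outcomes)

    def needs_attention(self):
        acc = self.accuracy()
        return acc is not None and acc < self.min_accuracy


if __name__ == "__main__":
    monitor = RollingAccuracyMonitor(window_size=4, min_accuracy=0.75)
    for prediction, actual in [(1, 1), (1, 1), (0, 1), (0, 1)]:
        monitor.record(prediction, actual)
    print(monitor.accuracy(), monitor.needs_attention())
```

The same pattern could track a bias measure instead of accuracy, and the alert could feed the user feedback loop described above.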

Common Pitfalls and Solutions

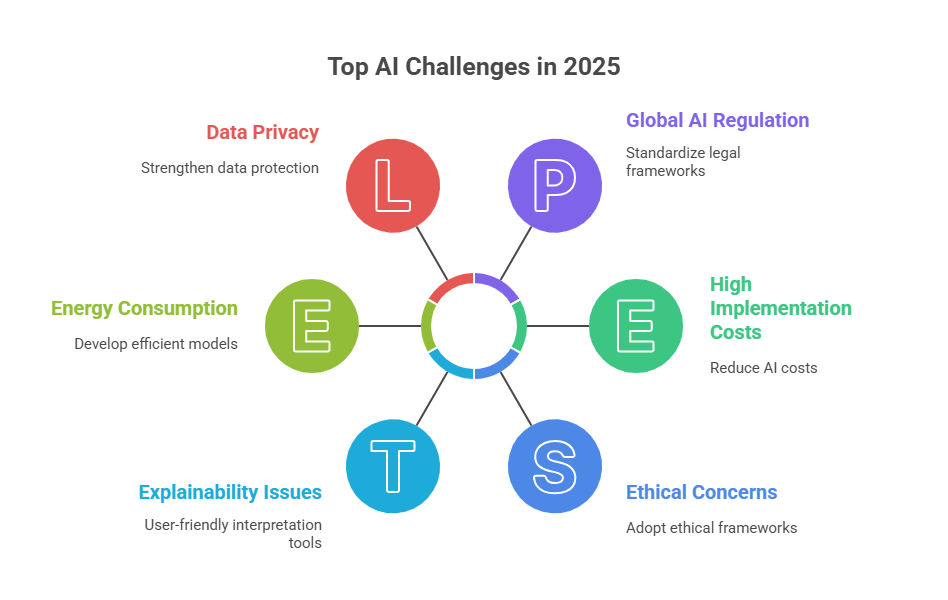

Ethical Dilemmas

AI developers often face ethical dilemmas, such as balancing innovation with user rights. To address these:

- Ethical Training: Provide training for developers on ethical AI practices.

- Ethics Review Boards: Establish boards to oversee AI projects and ensure ethical compliance.

Bias and Fairness

Bias in AI systems can lead to unfair outcomes. Solutions include:

- Diverse Data Sets: Use diverse data sets to train AI models, reducing bias.

- Fairness Metrics: Develop metrics to assess and improve fairness in AI systems.
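As one example of a fairness metric, demographic parity difference compares the rate of positive predictions across groups; a value near zero means the groups are selected at similar rates. The sketch below assumes binary predictions (1 for a positive outcome) and a single group attribute. The function names are illustrative, and this is only one of several fairness definitions, which can conflict with each other.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive (== 1) predictions within each group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    totals = defaultdict(int)
    positives = defaultdict(int)
    for prediction, group in zip(predictions, groups):
        totals[group] += 1
        if prediction == 1:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates."""
    rates = selection_rates(predictions, groups)
    if not rates:
        raise ValueError("at least one prediction is required")
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Made-up data for illustration only.
    predictions = [1, 1, 0, 1, 0, 0, 0, 1]
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(selection_rates(predictions, groups))
    print(demographic_parity_difference(predictions, groups))
```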

Security Vulnerabilities

AI systems are vulnerable to attacks, which can compromise data integrity and user safety. To mitigate this:

- Regular Security Audits: Conduct security audits to identify and address vulnerabilities, as noted in the National CIO Review.

- Encryption: Use encryption to protect data and ensure secure communication.

Future Trends and Recommendations

AI and Society

AI's role in society will continue to grow, influencing various domains. Key trends include:

- AI in Healthcare: AI will enhance diagnostic accuracy and personalize treatments.

- Smart Cities: AI will optimize urban services, improving quality of life.

Regulatory Developments

As AI technology advances, regulatory frameworks will need to adapt. Future recommendations include:

- Adaptive Regulations: Create regulations that can quickly adapt to new AI technologies, as suggested by White House initiatives.

- Ethical AI Certifications: Develop certifications to ensure AI systems meet ethical standards.

Conclusion

The roadmap for responsible AI development is crucial for maximizing AI's benefits while minimizing its risks. By establishing ethical guidelines, technical standards, and collaborative governance, we can pave the way for a future where AI contributes positively to society.

Audit mechanisms are rated as the most important practice for ensuring AI transparency and accountability, followed by open-source initiatives. (Estimated data)

FAQ

What is responsible AI development?

Responsible AI development involves creating AI systems that are ethical, safe, and beneficial to society. It includes establishing guidelines and standards to ensure AI aligns with human values.

How can we ensure AI systems are fair?

Ensuring fairness in AI systems involves using diverse data sets, implementing fairness metrics, and conducting regular bias assessments to reduce unfair outcomes.

What role does transparency play in AI development?

Transparency in AI development builds trust and accountability. It involves making AI algorithms understandable, supporting open-source initiatives, and conducting regular audits to evaluate system performance.

Why is global cooperation important in AI governance?

Global cooperation is important to establish standardized AI policies that ensure ethical and safe AI deployment worldwide. It involves collaboration among governments, industries, and academia.

How can AI systems be continuously monitored?

Continuous monitoring involves regular performance evaluations, feedback loops, and adaptability to address emerging challenges and improve AI functionality.

Key Takeaways

- Establish clear ethical guidelines for AI development to ensure alignment with human values.

- Implement robust technical standards to enhance AI reliability and security.

- Foster global cooperation for inclusive AI governance and policy-making.

- Ensure transparency and accountability to build trust in AI systems.

- Continuously monitor AI systems to address emerging challenges and adapt to new developments.

- Address common pitfalls such as ethical dilemmas, bias, and security vulnerabilities with practical solutions.

- Stay informed about future trends and regulatory developments to ensure responsible AI progress.

![A Roadmap for Responsible AI Development [2025]](https://tryrunable.com/blog/a-roadmap-for-responsible-ai-development-2025/image-1-1772951650890.jpg)