Why AI Hallucinations Are Actually Useful [2025]

AI hallucinations have a bad reputation. Many see them as errors or failures of AI systems, particularly large language models (LLMs). But what if they're more than just mistakes? Let's dive into why AI hallucinations are not only inevitable but also potentially beneficial.

TL;DR

- AI Hallucinations Are Natural: They're a byproduct of probabilistic models.

- Creative Potential: Hallucinations can inspire new ideas and approaches.

- Diagnostic Tool: They reveal model limitations and help in debugging.

- Enhancing Human-AI Collaboration: Hallucinations can prompt critical thinking.

- Future of AI: Embracing hallucinations can lead to robust AI systems.

Understanding AI Hallucinations

AI hallucinations occur when a model generates information that appears plausible but is factually incorrect. These happen because AI models, especially LLMs, predict the next word or phrase based on probability rather than fact-checking against a database. According to Britannica, LLMs are designed to generate text based on learned patterns from vast datasets.

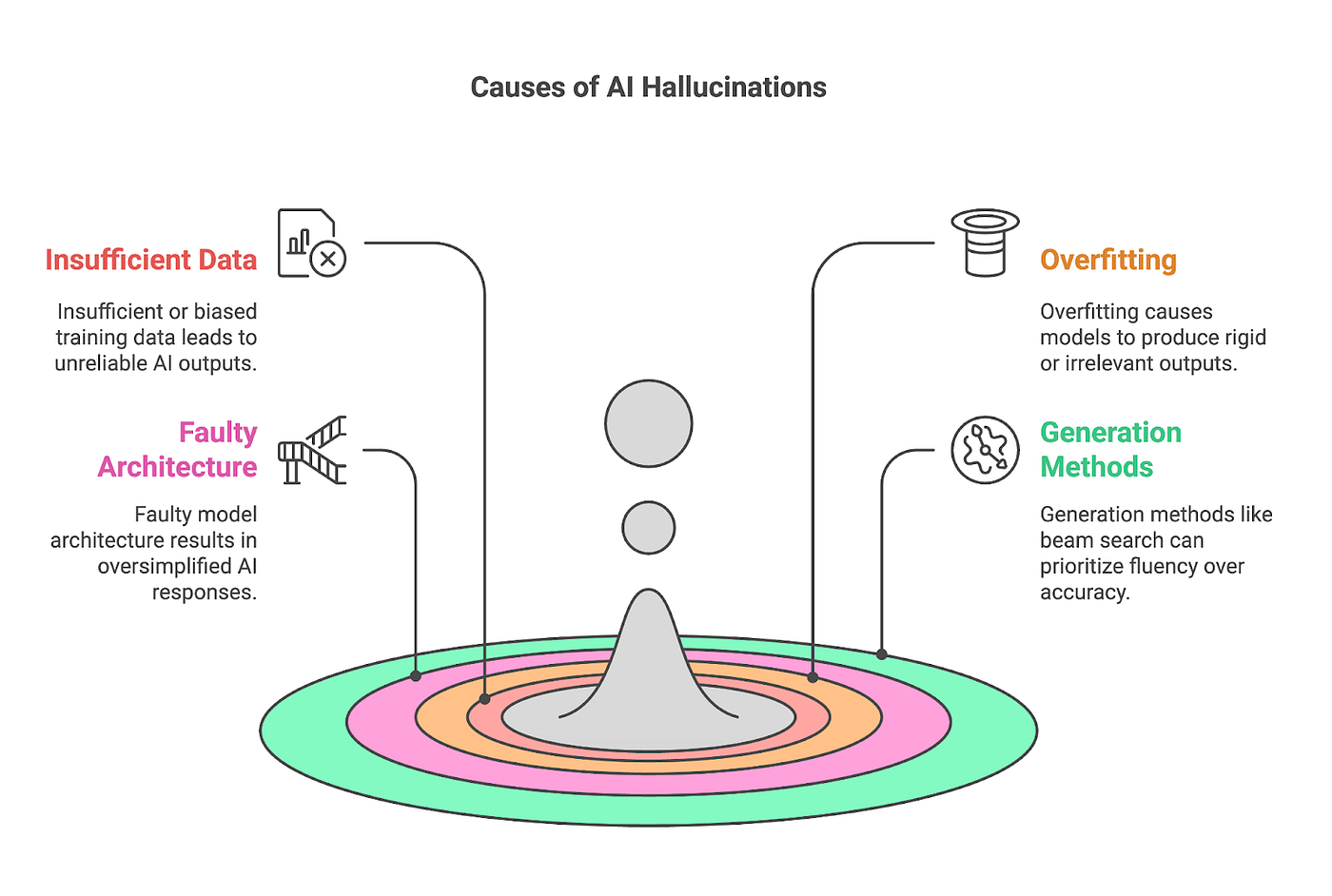

How They Happen

LLMs like GPT-3 and GPT-4 are trained on vast amounts of text data. They learn patterns, word associations, and context through this data. However, they do not understand the world as humans do. Instead, they operate on the principle of statistical likelihood. OpenAI's documentation explains that these models generate responses based on the most likely sequence of words.

Because the output is chosen for likelihood rather than truth, gaps in the training data show up as confident guesses. If the model hasn't encountered enough reliable data on a topic, or if the data is ambiguous, it may produce a plausible-sounding but incorrect response. NVIDIA's CEO Jensen Huang has discussed the challenges of AI hallucinations in current models.

Example: Imagine asking an AI about a rare historical event. If the event is not well-documented in the training data, the AI might fill gaps with 'hallucinated' details.
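To make the "statistical likelihood" idea concrete, here is a minimal sketch of sampling a next token from a probability distribution. It is a toy illustration, not how any particular model is implemented: the candidate tokens, their scores, and the function names `softmax` and `sample_next_token` are all assumptions made up for this example.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(candidates, logits, temperature=1.0, rng=None):
    """Pick one candidate at random, weighted by its probability."""
    rng = rng or random.Random()
    probs = softmax(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical candidates for the next token in an answer about a
# poorly documented event. Only the first one is factually right.
candidates = ["correct_year", "wrong_year_a", "wrong_year_b"]
logits = [2.0, 1.2, 0.8]  # made-up scores, not from a real model

probs = softmax(logits)
rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_next_token(candidates, logits, rng=rng) for _ in range(1000)]
wrong_share = sum(s != "correct_year" for s in samples) / len(samples)

print(f"P(correct) = {probs[0]:.2f}, sampled wrong share = {wrong_share:.2f}")
```

The correct token is the single most likely choice here, yet a sizeable share of samples still land on a wrong one. Nothing in the sampling step checks facts, which is the mechanism the section describes.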

The Creative Potential of Hallucinations

While hallucinations are often seen as errors, they can also be the seeds of innovation. Here's why:

Sparking Creativity

AI hallucinations can inspire creativity by offering unexpected ideas that humans might not consider. For example, in creative writing, an AI might introduce an unusual plot twist that a human writer could develop further. Simplilearn highlights how AI is increasingly being used in creative fields to generate novel content.

Encouraging Exploration

When a model hallucinates, it encourages users to explore alternative scenarios. This can be beneficial in fields like scientific research, where considering multiple hypotheses is crucial. A recent study in Nature suggests that AI-generated hypotheses can lead to new scientific discoveries.

Promoting Diverse Thinking

Hallucinations can encourage diverse thinking by presenting unconventional viewpoints. This diversity can lead to breakthrough ideas in problem-solving and innovation. Time Magazine discusses how AI is used in healthcare to provide diverse diagnostic perspectives.

Diagnostic Tool for AI Systems

Hallucinations can serve as a powerful diagnostic tool for understanding AI limitations.

Identifying Weaknesses

By analyzing hallucinations, developers can identify where a model lacks information or understanding. This insight can guide future training to improve model accuracy. AI Multiple highlights the importance of identifying data gaps in AI systems.

Practical Implementation:

- Log hallucinations during model deployment.

- Analyze patterns to identify data gaps.

- Retrain the model with additional data to address these gaps.
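The first two steps above can be sketched with nothing more than a JSON Lines file and a counter. This is one possible shape under stated assumptions: the `HallucinationRecord` fields, the `topic` label (assumed to come from a human reviewer or a separate classifier), and the file name are all hypothetical.

```python
import json
import os
import tempfile
from collections import Counter
from dataclasses import asdict, dataclass


@dataclass
class HallucinationRecord:
    prompt: str
    output: str
    topic: str  # hypothetical label from a reviewer or classifier
    note: str = ""


def log_record(record, path):
    """Append one flagged output to a JSON Lines log."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def load_records(path):
    """Read every logged record back from the file."""
    with open(path, encoding="utf-8") as f:
        return [HallucinationRecord(**json.loads(line)) for line in f if line.strip()]


def topics_by_frequency(records):
    """Rank topics by how often flagged outputs fall under them."""
    return Counter(r.topic for r in records).most_common()


# Demo with made-up records written to a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "hallucinations.jsonl")
    log_record(HallucinationRecord("Q1", "A1", "medieval history"), log_path)
    log_record(HallucinationRecord("Q2", "A2", "tax law"), log_path)
    log_record(HallucinationRecord("Q3", "A3", "medieval history"), log_path)
    ranked = topics_by_frequency(load_records(log_path))

print(ranked)
```

Topics that rise to the top of the ranking are candidates for the third step: collecting more or better training data in those areas.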

Debugging and Improvement

Hallucinations can also pinpoint errors in model logic or data preprocessing. By studying these errors, developers can refine their models to prevent similar issues in the future. Nature's research emphasizes the role of hallucinations in improving AI systems.

Enhancing Human-AI Collaboration

AI hallucinations can enhance human-AI collaboration by prompting critical thinking and cross-verification.

Encouraging Verification

When AI generates unexpected results, it prompts users to verify information, leading to more robust decision-making processes. Automotive News discusses how AI in finance encourages verification and deeper analysis.

Scenario: A financial analyst uses AI to generate predictions. An unexpected result prompts further investigation, leading to a more nuanced market analysis.

Building Trust Through Transparency

By acknowledging hallucinations, AI developers can build trust with users. Transparency about model limitations encourages users to engage critically with AI-generated content. Mediabistro highlights the importance of transparency in AI applications.

The Future of AI: Embracing Hallucinations

As AI continues to evolve, embracing hallucinations could lead to more robust and adaptable systems.

Training with Hallucinations

Incorporating hallucinations into training processes can help models learn to self-correct over time. This approach could lead to the development of AI systems that are both creative and reliable. Nature suggests that training with hallucinations can enhance AI capabilities.

Developing Hybrid Systems

Combining AI with human oversight can mitigate the risks of hallucinations while harnessing their creative potential. Hybrid systems can leverage the strengths of both AI and human intuition. Time Magazine discusses the benefits of AI-human hybrid systems in healthcare.

Future Trend: Expect to see more tools that integrate AI-generated ideas with human critical thinking in industries like design, marketing, and research.
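One simple way to picture a hybrid system is a routing rule that sends low-confidence drafts to a person. The sketch below assumes a `confidence` score between 0 and 1 is available from somewhere (a verifier model, a heuristic, or user ratings); that score, the `Draft` type, and the 0.8 threshold are illustrative assumptions, not a recommended setting.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    confidence: float  # hypothetical score in [0, 1]; its source is assumed


def route(draft, threshold=0.8):
    """Return 'auto' for confident drafts and 'human_review' for the rest."""
    if not 0.0 <= draft.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "auto" if draft.confidence >= threshold else "human_review"


# Made-up drafts: one routine answer, one speculative idea.
drafts = [
    Draft("Routine summary of a known report.", 0.93),
    Draft("Unusual hypothesis worth a second look.", 0.41),
]
decisions = [route(d) for d in drafts]
print(decisions)
```

Sending the speculative draft to a reviewer rather than discarding it is the design choice that keeps the creative upside: a person decides whether the unexpected idea is an error or a lead.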

Common Pitfalls and Solutions

While AI hallucinations offer potential benefits, they also come with challenges.

Pitfall: Over-Reliance on AI

Relying too heavily on AI outputs can spread misinformation when hallucinated details go unchecked.

Solution: Encourage users to verify AI-generated information and provide tools for easy cross-referencing.

Pitfall: Misinterpretation of Data

Users may mistake hallucinated information for fact.

Solution: Implement clear labeling of AI-generated content and provide disclaimers where necessary.
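Labeling can be as simple as attaching a source tag and a disclaimer before text is shown. The sketch below is an assumption about how that might look; `LabeledContent`, `label_ai_output`, `render`, the model name, and the disclaimer wording are all invented for illustration.

```python
from dataclasses import dataclass

DISCLAIMER = "AI-generated; may contain errors. Verify before relying on it."


@dataclass(frozen=True)
class LabeledContent:
    text: str
    source: str
    disclaimer: str


def label_ai_output(text, model_name, verified=False):
    """Attach a source tag, plus a disclaimer unless the text was verified."""
    return LabeledContent(
        text=text,
        source=f"ai:{model_name}",
        disclaimer="" if verified else DISCLAIMER,
    )


def render(content):
    """Show the disclaimer, if any, on its own line above the text."""
    if content.disclaimer:
        return f"[{content.disclaimer}]\n{content.text}"
    return content.text


# "example-model" is a placeholder name, not a real product.
unverified = label_ai_output("Draft answer.", "example-model")
checked = label_ai_output("Reviewed answer.", "example-model", verified=True)
print(render(unverified))
print(render(checked))
```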

Pitfall: Erosion of Trust

Frequent hallucinations can erode user trust in AI systems.

Solution: Maintain transparency about the nature of AI-generated content and actively involve users in model improvement processes.

Practical Implementation Guides

To effectively harness AI hallucinations, consider these practical steps:

- Data Logging: Continuously log AI outputs to identify patterns in hallucinations.

- User Feedback: Implement systems for users to report and correct hallucinations.

- Iterative Training: Regularly update AI models with new data and feedback.

- Cross-Verification Tools: Develop tools that allow users to easily verify AI-generated information.
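The user feedback and cross-verification steps can be sketched as a lookup against a trusted reference that user corrections gradually extend. Everything here is hypothetical: the key-value shape of claims, the `FeedbackReport` type, and the assumption that corrections have already been reviewed before they are merged.

```python
from dataclasses import dataclass


@dataclass
class FeedbackReport:
    output_id: str
    claim_key: str
    correction: str  # assumed to be reviewed before it is applied


def cross_check(claims, reference):
    """Mark each claim as confirmed, contradicted, or unverified."""
    results = {}
    for key, value in claims.items():
        if key not in reference:
            results[key] = "unverified"
        elif reference[key] == value:
            results[key] = "confirmed"
        else:
            results[key] = "contradicted"
    return results


def apply_feedback(reference, reports):
    """Return a copy of the reference updated with user corrections."""
    updated = dict(reference)
    for report in reports:
        updated[report.claim_key] = report.correction
    return updated


# Made-up reference data and model claims.
reference = {"capital_of_x": "Alpha"}
claims = {"capital_of_x": "Beta", "founder_of_y": "Gamma"}

before = cross_check(claims, reference)
reports = [FeedbackReport("out-1", "founder_of_y", "Gamma")]
after = cross_check(claims, apply_feedback(reference, reports))
print(before)
print(after)
```

A claim marked "unverified" is not necessarily wrong; it only means the reference has nothing to say about it, which is itself useful information for a reader deciding what to double-check.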

Future Trends and Recommendations

The future of AI will likely see a shift towards embracing the creative potential of hallucinations.

Trend: AI as a Creative Partner

Expect AI to become a more integral part of creative processes, offering novel ideas and perspectives. Simplilearn discusses the growing role of AI in creative industries.

Recommendation: Balance AI Creativity with Human Judgment

While AI can generate innovative ideas, human judgment remains crucial in evaluating and refining these ideas. Collaborative systems that leverage the strengths of both AI and human intuition will likely dominate.

Trend: Transparency and Trust

As AI becomes more integrated into daily life, transparency about hallucinations and AI limitations will be key to maintaining user trust. Mediabistro emphasizes the importance of transparency in AI applications.

Conclusion

AI hallucinations are not merely errors to be corrected but opportunities to be embraced. By recognizing their potential, we can create AI systems that are not only more reliable but also more innovative.

Bottom Line: Embracing AI hallucinations can lead to more creative and robust AI systems that enhance human ingenuity.

FAQ

What are AI hallucinations?

AI hallucinations occur when a model generates information that is factually incorrect but appears plausible. They are common in probabilistic models like LLMs. Britannica provides an overview of how LLMs function.

How do AI hallucinations happen?

They happen when models predict responses based on statistical likelihood rather than factual accuracy, often due to limited or ambiguous data. OpenAI explains the mechanics behind these predictions.

Can AI hallucinations be beneficial?

Yes, they can inspire creativity, highlight model weaknesses, and enhance human-AI collaboration by prompting critical thinking. Nature's research supports the creative potential of AI hallucinations.

How can we manage AI hallucinations?

By logging outputs, encouraging user feedback, and regularly updating models with new data, we can manage hallucinations effectively. AI Multiple discusses strategies for managing AI outputs.

What is the future of AI hallucinations?

The future will likely see AI used as a creative partner, with a focus on transparency and trust to ensure effective human-AI collaboration. Simplilearn highlights future trends in AI development.

Key Takeaways

- AI hallucinations are a natural byproduct of probabilistic models and can be creatively beneficial.

- They encourage diverse thinking and inspire new approaches in various fields.

- Hallucinations serve as diagnostic tools, revealing model limitations and informing training improvements.

- Human-AI collaboration can be enhanced by using hallucinations to prompt critical thinking.

- Embracing hallucinations can lead to more robust and adaptable AI systems in the future.
