AI Agents: The Hidden Gatekeepers Giving Hackers Full System Access [2025]

AI agents are changing how we interact with technology by automating tasks and streamlining operations. They are also quietly becoming a weak point in cybersecurity: a poorly secured agent can give attackers full system access. This article examines how AI agents are exploited, why excessive permissions are dangerous, and which practices help protect your systems.

TL;DR

- Misconfigured AI agents are a security risk because they can give attackers unauthorized access. According to a recent report, the industry is aware of these vulnerabilities.

- Excessive permissions are often the root cause of these vulnerabilities, as highlighted in the GitGuardian blog.

- Regular audits and least privilege principles are essential to safeguard systems, as noted by Reuters.

- AI agent security requires ongoing monitoring and updates, emphasized by ESET's insights.

- User awareness is crucial in preventing unauthorized access, as discussed in a report by Industrial Cyber.

- The future of AI security will involve more sophisticated threat detection, according to Nature.

The Rise of AI Agents

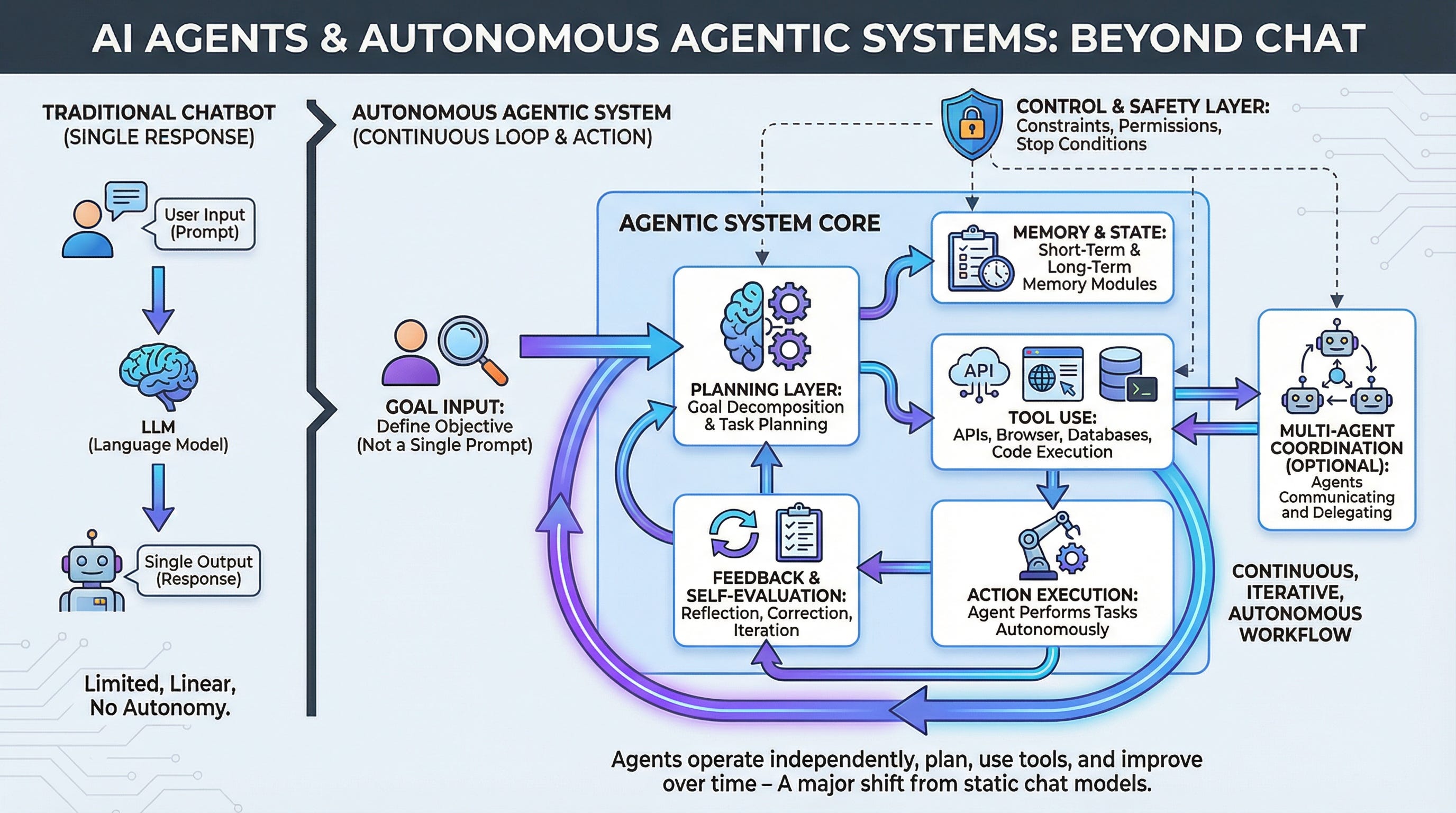

AI agents are designed to automate tasks, manage workflows, and interact with users in natural language. They are increasingly integrated into various systems, from customer support to complex data processing. Companies rely on these agents to improve efficiency and reduce costs.

What Are AI Agents?

AI agents are software programs that use artificial intelligence to perform tasks autonomously. They can analyze data, learn from interactions, and make decisions based on programmed logic or machine learning models.

Key Features:

- Automation: Performing repetitive tasks without human intervention.

- Learning: Adapting to new information and user behaviors.

- Interactivity: Communicating with users in natural language.

- Decision-Making: Analyzing data to make informed decisions.

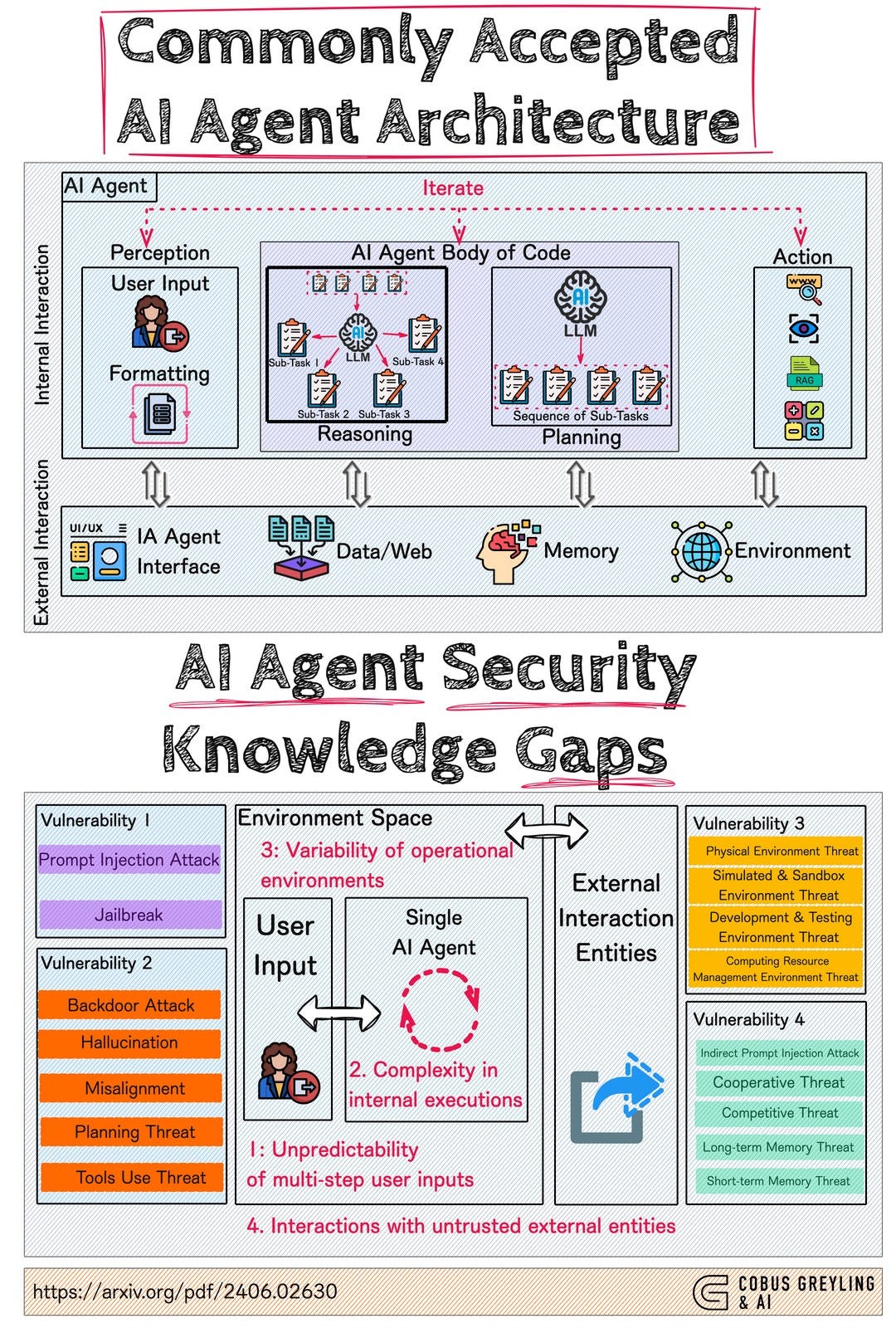

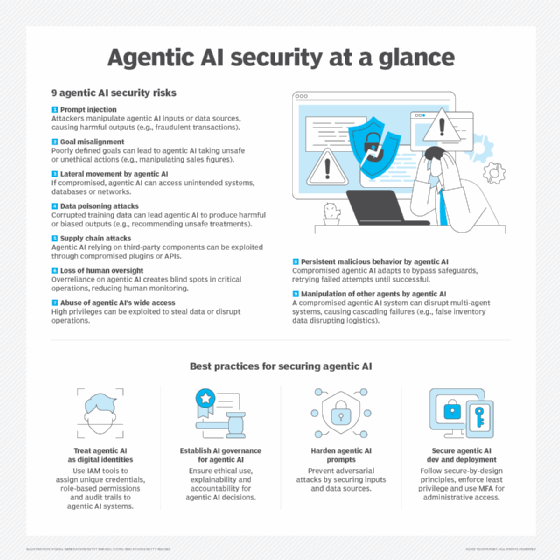

The Security Risks of AI Agents

While AI agents offer numerous benefits, they also pose significant security risks. Hackers can exploit these agents to gain unauthorized access to systems, often without the knowledge of the users or administrators.

Common Vulnerabilities

- Excessive Permissions: AI agents are often given more access than necessary, allowing hackers to exploit these permissions. This issue is highlighted in the Vertex AI vulnerability report.

- Weak Authentication: Poorly implemented authentication mechanisms make it easy for attackers to assume control, as discussed in the Vercel breach report.

- Lack of Monitoring: Without proper monitoring, suspicious activities go unnoticed, a point emphasized by Industrial Cyber.

- Outdated Software: Unpatched vulnerabilities in AI software can be exploited by hackers, as noted in TechCrunch.
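
To make the weak-authentication item concrete, here is a minimal sketch of one common hardening step: requiring every request to the agent to carry an HMAC signature and verifying it in constant time. The key, the request body, and the function names `sign_request` and `is_authentic` are hypothetical and only illustrate the idea; this is not taken from any of the reports cited above.

```python
import hashlib
import hmac

# Hypothetical shared secret for illustration only; a real deployment
# would load this from a secrets manager, never from source code.
SECRET_KEY = b"example-secret-do-not-use"


def sign_request(body: bytes, key: bytes = SECRET_KEY) -> str:
    """Return a hex-encoded HMAC-SHA256 signature for the request body."""
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def is_authentic(body: bytes, signature: str, key: bytes = SECRET_KEY) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_request(body, key)
    return hmac.compare_digest(expected, signature)


request = b'{"action": "read_ticket", "id": 42}'
signature = sign_request(request)

print(is_authentic(request, signature))                      # original body
print(is_authentic(b'{"action": "delete_all"}', signature))  # tampered body
```

The constant-time comparison matters because a naive `==` check can leak, through timing, how much of a forged signature was correct.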

How Hackers Exploit AI Agents

Hackers use a variety of techniques to exploit AI agents, often leveraging their access to sensitive data and critical system functions.

Techniques Used by Hackers

- Phishing Attacks: Tricking users into revealing credentials that give the attacker access to the AI agent, as detailed in The Hacker News.

- Social Engineering: Manipulating individuals to bypass security controls, a common tactic discussed in Vocal Media.

- Exploiting Software Bugs: Taking advantage of unpatched vulnerabilities in AI software, as seen in reports on Microsoft Copilot Studio.

Example: A hacker sends a phishing email to an employee with a link to a fake login page. The employee enters their credentials, which the hacker then uses to access the AI agent and escalate privileges within the system.

Best Practices for Securing AI Agents

Ensuring the security of AI agents requires a multi-faceted approach, focusing on both technical and human factors.

Implementing the Principle of Least Privilege

The principle of least privilege involves granting AI agents only the access necessary to perform their tasks.

Steps to Implement:

- Identify Requirements: Determine the minimum permissions needed.

- Configure Permissions: Set access controls based on identified requirements.

- Regularly Review: Audit permissions regularly to ensure compliance.
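
The three steps above can be sketched as a deny-by-default allowlist that also records which permissions the agent actually uses, so a later review can spot grants that are never exercised. The class `AgentPolicy`, the agent name, and the permission strings are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Deny-by-default permission policy for a single agent (illustrative)."""

    name: str
    allowed: frozenset[str]                       # step 1 and 2: the minimal grant set
    used: set[str] = field(default_factory=set)   # permissions exercised so far

    def authorize(self, permission: str) -> bool:
        """Allow only explicitly granted permissions and record their use."""
        if permission in self.allowed:
            self.used.add(permission)
            return True
        return False

    def unused_grants(self) -> set[str]:
        """Step 3: grants never exercised, which are candidates for removal."""
        return set(self.allowed) - self.used


policy = AgentPolicy(
    name="support-bot",
    allowed=frozenset({"tickets:read", "tickets:comment", "users:read"}),
)

print(policy.authorize("tickets:read"))   # granted
print(policy.authorize("users:delete"))   # never granted, so denied
print(sorted(policy.unused_grants()))     # review candidates
```

Tracking usage alongside the allowlist is a design choice: it turns the periodic review into a comparison of two sets rather than a manual guess about what the agent still needs.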

Regular Security Audits

Conducting regular security audits helps identify and mitigate potential vulnerabilities.

Audit Checklist:

- Review access logs for unusual activity.

- Test systems for known vulnerabilities.

- Verify compliance with security policies.
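
As a rough illustration of the first checklist item, the sketch below scans access-log records for two simple signals: accounts with repeated denied requests and successful access outside working hours. The log format, the account names, and the thresholds are assumptions made for this example; real logs will need their own parsing and tuning.

```python
from collections import Counter
from datetime import datetime

# Hypothetical log records: (ISO timestamp, account, outcome).
LOG = [
    ("2025-01-10T09:15:00", "support-bot", "ok"),
    ("2025-01-10T02:03:00", "support-bot", "ok"),
    ("2025-01-10T02:04:00", "intruder", "denied"),
    ("2025-01-10T02:04:05", "intruder", "denied"),
    ("2025-01-10T02:04:09", "intruder", "denied"),
]


def flag_unusual(log, max_denied=2, work_hours=range(8, 19)):
    """Return accounts with many denials or with successful off-hours access."""
    denied = Counter(account for _, account, outcome in log if outcome == "denied")
    repeated = {account for account, count in denied.items() if count > max_denied}
    off_hours = {
        account
        for timestamp, account, outcome in log
        if outcome == "ok"
        and datetime.fromisoformat(timestamp).hour not in work_hours
    }
    return {"repeated_denials": repeated, "off_hours_access": off_hours}


print(flag_unusual(LOG))
```

Neither signal proves compromise on its own; the point is to narrow a large log down to a short list a person can review.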

User Education and Awareness

Educating users about the potential risks and best practices for interacting with AI agents is crucial.

Key Focus Areas for Training

- Recognizing Phishing Attempts: Teach users how to identify and report phishing emails.

- Password Management: Encourage strong, unique passwords and the use of password managers.

- Reporting Suspicious Activity: Create clear channels for reporting potential security incidents.

The Role of AI in Enhancing Security

AI technology itself can be used to enhance security measures, providing advanced threat detection and response capabilities.

AI-Driven Security Solutions

- Behavioral Analysis: AI can learn normal user behavior and detect anomalies, as shown in research published on ResearchGate.

- Automated Threat Response: AI agents can automatically respond to detected threats, minimizing damage.

- Predictive Analytics: AI can anticipate potential threats based on historical data and trends.
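
Behavioral analysis can be far more elaborate than this, but a minimal sketch of the underlying idea is a z-score check: learn a baseline from past activity and flag values that fall far outside it. The metric (API calls per hour), the sample numbers, and the threshold of 3 standard deviations are assumptions for illustration, and a simple statistical test stands in here for a trained model.

```python
from statistics import mean, stdev


def is_anomalous(history, value, threshold=3.0):
    """Flag `value` if it lies more than `threshold` standard deviations
    from the mean of `history`. Too little history means no verdict."""
    if len(history) < 2:
        return False
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical baseline: API calls per hour made by one agent.
baseline = [12, 15, 11, 14, 13, 12, 16]

print(is_anomalous(baseline, 14))   # within the normal range
print(is_anomalous(baseline, 90))   # far outside the baseline
```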

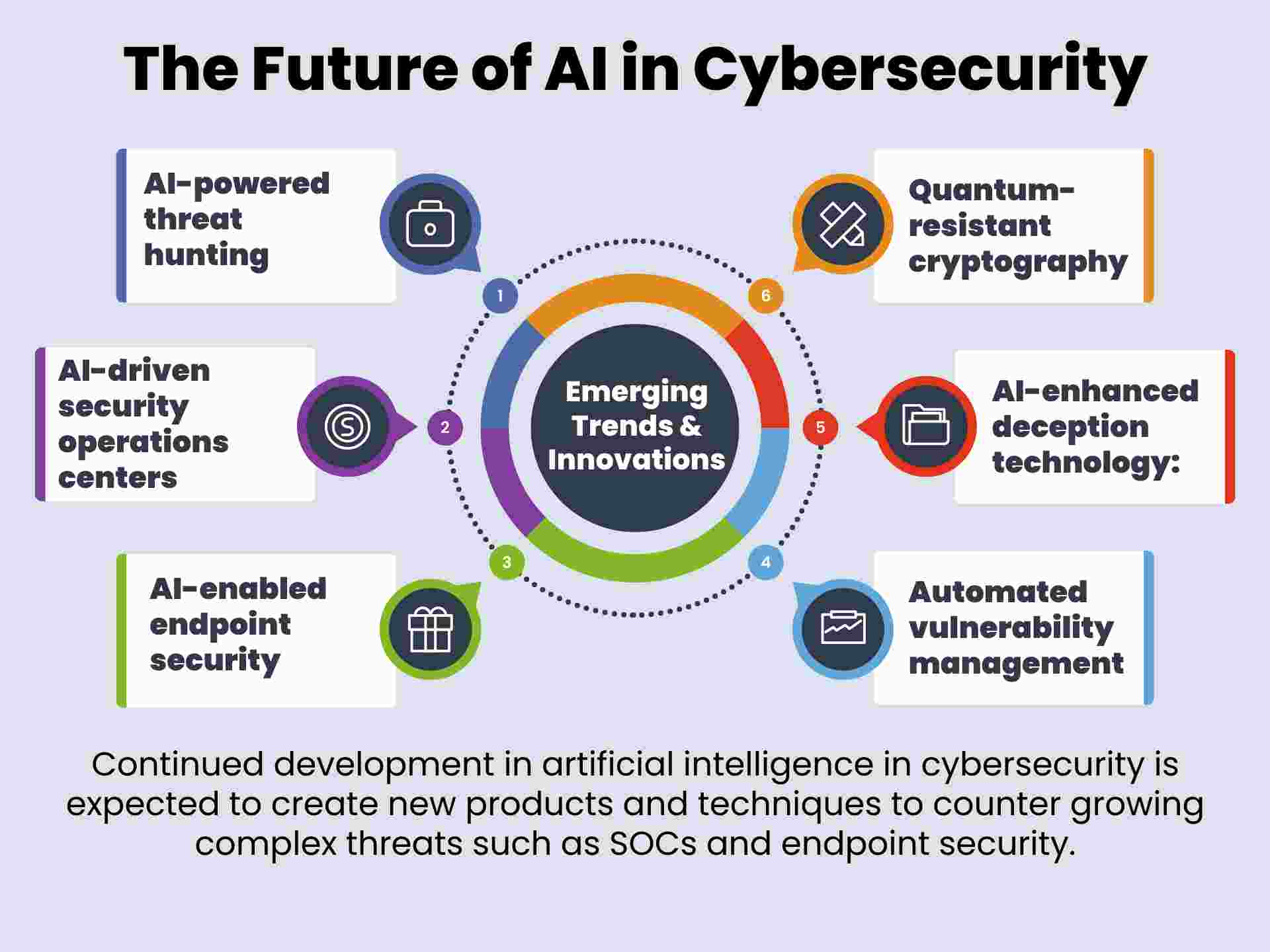

Future Trends in AI Security

As AI continues to evolve, so too will the strategies for securing AI agents against cyber threats.

Emerging Trends

- Enhanced Machine Learning Models: More sophisticated models will improve threat detection accuracy.

- Integration with Blockchain: Using blockchain for secure data transactions and identity verification.

- AI-Driven Forensics: Automating the forensic analysis of security incidents.

Implementing AI Agent Security in Your Organization

To effectively implement AI agent security, organizations must take a proactive approach, integrating security into every stage of AI deployment.

Steps to Take

- Conduct Risk Assessments: Understand the specific security risks associated with your AI agents.

- Develop Security Policies: Create comprehensive policies that govern AI agent use and security.

- Invest in Security Tools: Utilize advanced security tools to protect AI systems.

- Train Employees Regularly: Keep security training up to date with the latest threats and best practices.

Common Pitfalls and Solutions

Despite best efforts, organizations often encounter challenges when securing AI agents. Understanding these pitfalls can help in developing more effective security strategies.

Pitfalls to Avoid

- Overconfidence in AI Security: Assuming AI systems are inherently secure without proper validation.

- Ignoring User Feedback: Overlooking user reports of suspicious activity.

- Delayed Patch Management: Failing to promptly apply security patches.

Solutions:

- Continuously test and validate AI security measures.

- Encourage a culture of open communication regarding security concerns.

- Implement automated patch management systems.
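
The core check inside an automated patch workflow can be sketched as a comparison between installed versions and the minimum versions known to contain a fix. The component names and version numbers below are hypothetical, and the parser assumes plain dotted numeric versions such as `1.4.2`; real systems usually rely on a package manager or vulnerability feed for this data.

```python
def parse_version(text):
    """Turn '1.4.10' into (1, 4, 10) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def needs_patch(installed, minimum_safe):
    """Return the components whose installed version is below the safe minimum."""
    return {
        name
        for name, version in installed.items()
        if name in minimum_safe
        and parse_version(version) < parse_version(minimum_safe[name])
    }


# Hypothetical inventory and advisory data.
installed = {"agent-runtime": "1.4.2", "vector-store": "2.10.0"}
minimum_safe = {"agent-runtime": "1.4.10", "vector-store": "2.9.3"}

print(needs_patch(installed, minimum_safe))
```

Comparing parsed tuples rather than raw strings avoids a common mistake: as text, "2.10.0" sorts before "2.9.3", which would wrongly flag an up-to-date component.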

Conclusion

AI agents are a powerful tool for enhancing productivity and efficiency, but they also pose significant security risks if not properly managed. By understanding these risks and implementing best practices, organizations can protect their systems from exploitation while reaping the benefits of AI technology.

FAQ

What are AI agents?

AI agents are software programs that use artificial intelligence to perform tasks autonomously, often interacting with users and systems to automate processes.

How do hackers exploit AI agents?

Hackers exploit AI agents by taking advantage of excessive permissions, weak authentication, and unpatched vulnerabilities to gain unauthorized access and control.

What are the best practices for securing AI agents?

Best practices include implementing the principle of least privilege, conducting regular security audits, educating users, and using AI-driven security solutions.

Why is user education important for AI security?

Educating users helps them recognize phishing attempts, manage passwords effectively, and report suspicious activities, all of which are crucial in preventing unauthorized access.

What future trends can we expect in AI security?

Future trends include more sophisticated machine learning models, integration with blockchain, and AI-driven forensic analysis to enhance security measures.

How can organizations implement AI agent security?

Organizations can implement AI agent security by conducting risk assessments, developing comprehensive security policies, investing in security tools, and providing regular employee training.

Key Takeaways

- AI agents offer significant productivity benefits but pose security risks if misconfigured.

- Excessive permissions are a common vulnerability that hackers exploit.

- Regular audits and the principle of least privilege are essential for securing AI agents.

- User education and awareness are crucial in preventing unauthorized access.

- AI technology itself can enhance security through advanced threat detection.
