AI Deepfakes: Navigating the Chaos and Samsung's Surprising Role [2025]

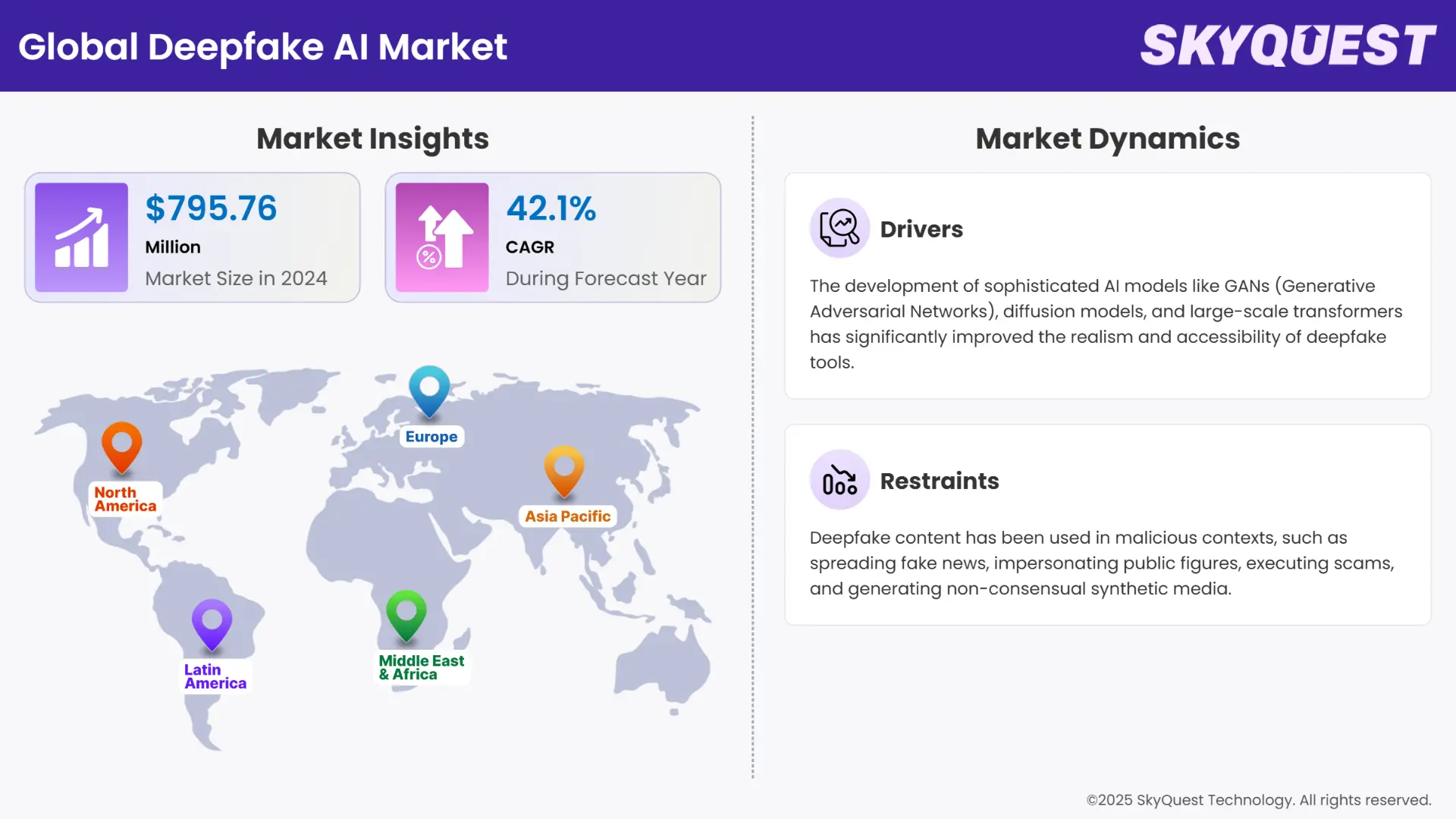

AI deepfakes have become a powerful yet controversial technology, blurring the lines between reality and fiction. In this article, we'll dive into the world of AI deepfakes, their implications, and why companies like Samsung are getting involved.

TL;DR

- AI deepfakes can convincingly mimic real people, posing significant ethical and security risks. According to a Forbes article, these risks include misinformation and identity theft.

- Samsung is exploring deepfake tech for legitimate applications, sparking debate. As reported by The Verge, Samsung's involvement in deepfake technology aims to enhance digital storytelling.

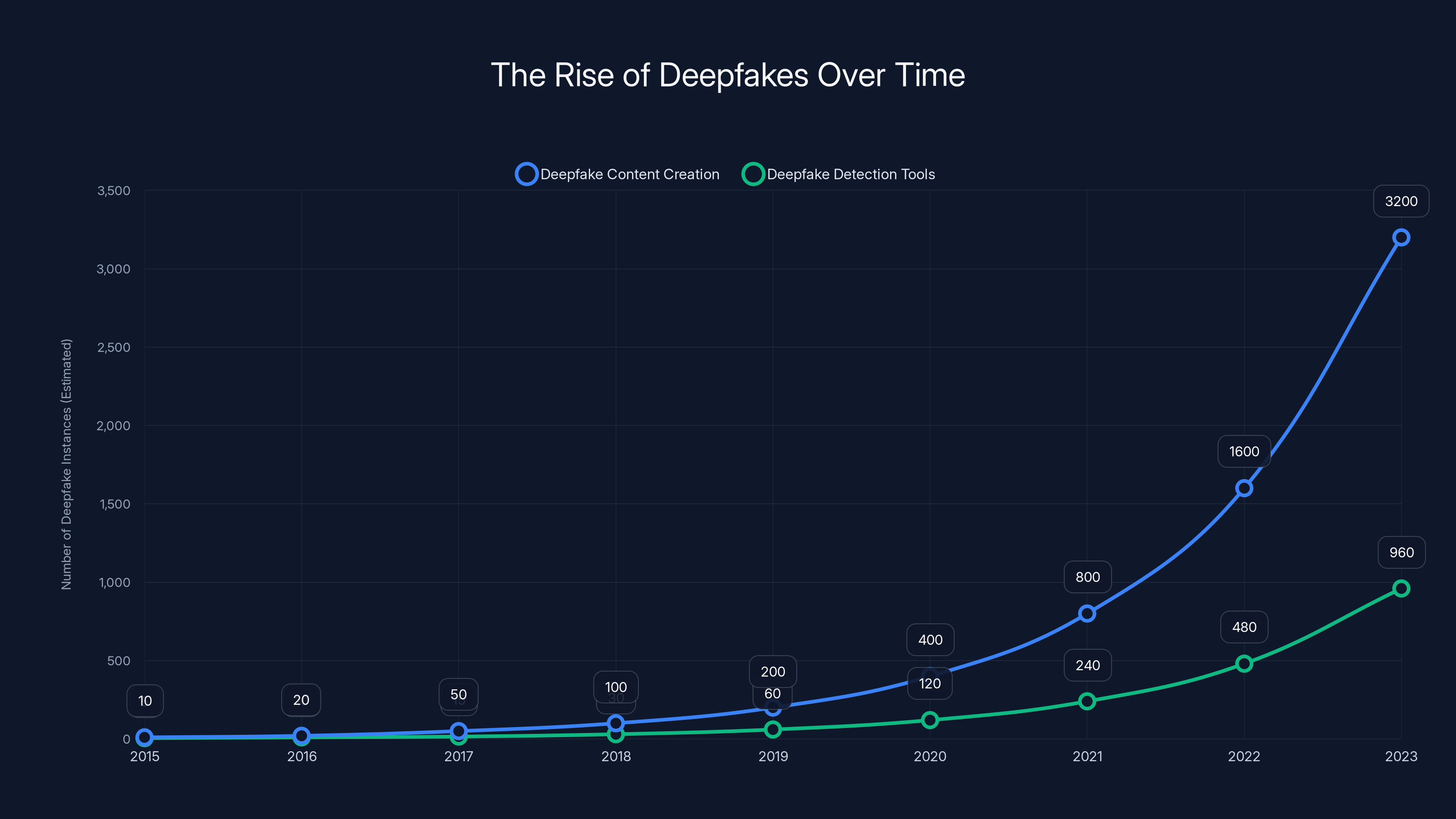

- Deepfake detection tools struggle to keep up with evolving AI capabilities. A Wired article highlights the ongoing challenges in developing effective detection methods.

- Regulations are lagging behind, leaving gaps in legal frameworks. The EU AI Act is one of the few legislative efforts addressing AI technologies, including deepfakes.

- Future trends suggest both increased sophistication and potential for misuse. A CNBC report predicts that deepfakes will become more sophisticated and harder to detect.

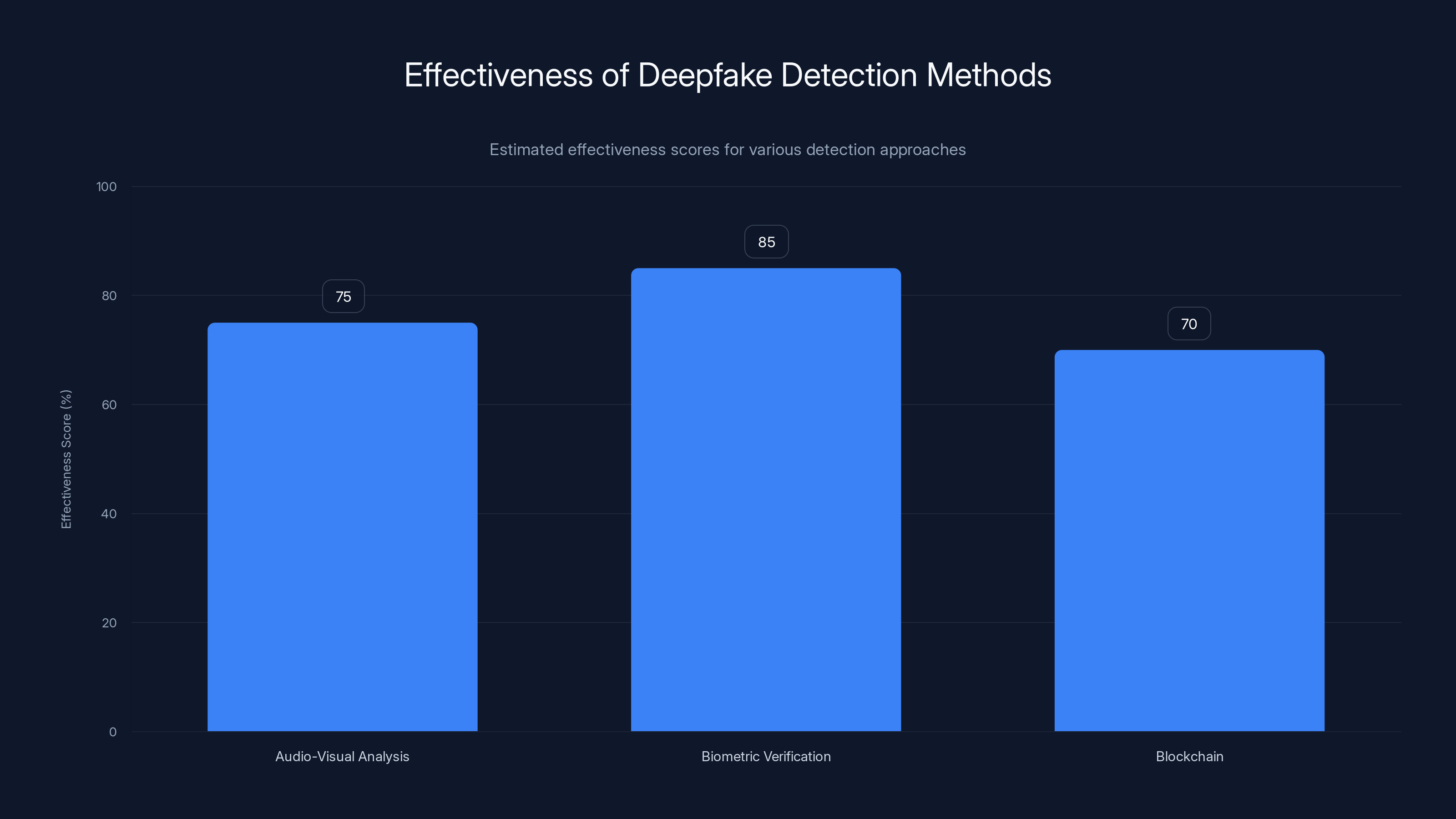

Biometric Verification is estimated to be the most effective method for detecting deepfakes, with a score of 85%. Estimated data.

A Brief Introduction to Deepfakes

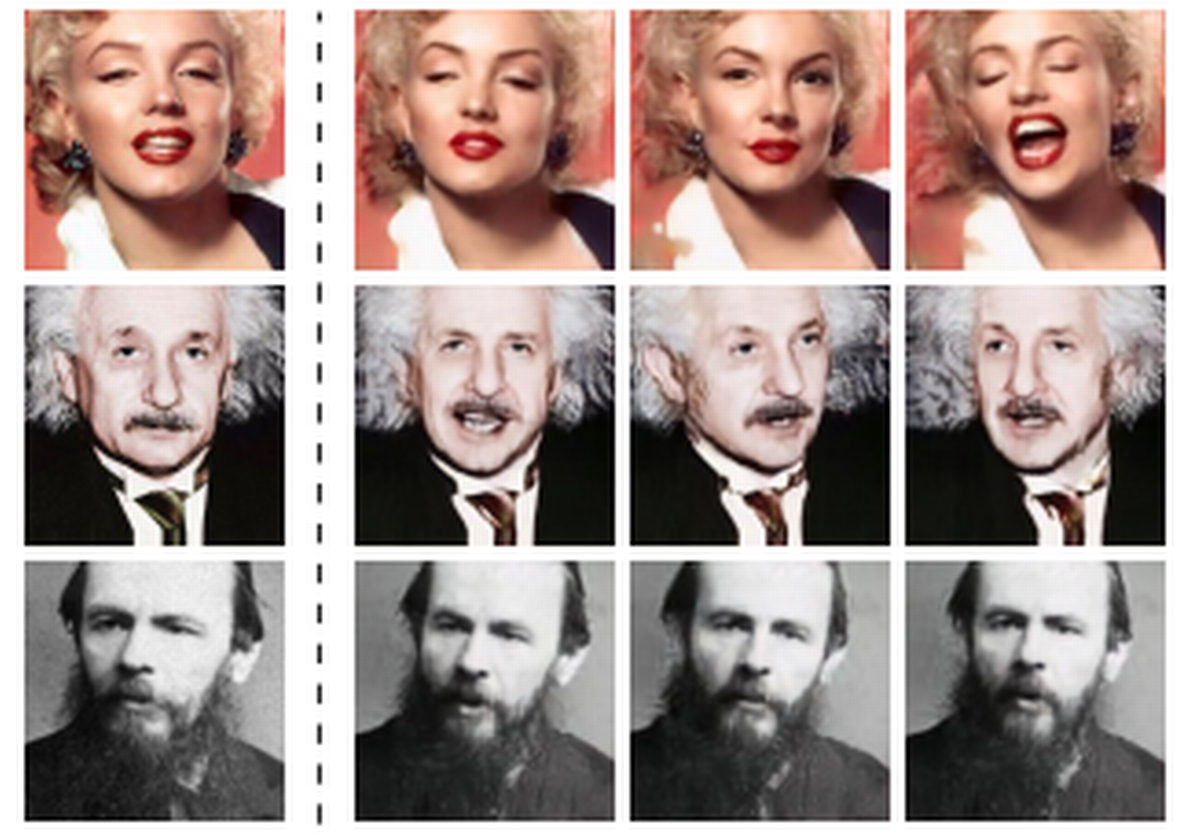

Deepfakes utilize artificial intelligence to create hyper-realistic fake videos or audio recordings. By analyzing vast amounts of data, these algorithms can replicate voices and faces with uncanny accuracy, making it challenging to distinguish between real and fake content.

How Deepfakes Work

Many deepfakes rely on a type of AI called a Generative Adversarial Network (GAN). A GAN consists of two neural networks: the generator and the discriminator. The generator creates fake data, while the discriminator tries to tell the fake data apart from real data. Each network improves in response to the other, so the generator's output becomes increasingly realistic. For more on GANs, refer to the IBM Cloud documentation.
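
To make the generator-versus-discriminator loop concrete, here is a minimal sketch in plain Python. It is a toy, not how production deepfake models are built: the "data" is a single number drawn from an assumed normal distribution, the generator is a hypothetical linear function `G(z) = a * z + b`, and the discriminator is a one-input logistic classifier. All names, the learning rate, and the target distribution are illustrative assumptions.

```python
import math
import random


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0) -> dict:
    """Train a 1-D GAN by hand. Assumed setup: real data ~ N(4, 1), noise z ~ N(0, 1)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters: G(z) = a * z + b
    w, c = 0.1, 0.0  # discriminator parameters: D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        x_real = rng.gauss(4.0, 1.0)
        z = rng.gauss(0.0, 1.0)
        x_fake = a * z + b

        # Discriminator step: gradient ascent on log D(x_real) + log(1 - D(x_fake)).
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        w += lr * ((1.0 - d_real) * x_real - d_fake * x_fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: gradient ascent on log D(G(z)), the non-saturating GAN loss.
        d_fake = sigmoid(w * x_fake + c)
        grad_x = (1.0 - d_fake) * w  # derivative of log D(x) with respect to x at x_fake
        a += lr * grad_x * z
        b += lr * grad_x

    return {"a": a, "b": b, "w": w, "c": c}


if __name__ == "__main__":
    print(train_toy_gan())
```

The two update blocks are the adversarial process in miniature: the discriminator is nudged to score real samples higher than fakes, then the generator is nudged to produce samples the updated discriminator scores as real. Real systems replace the linear functions with deep networks operating on images or audio, and training is far less stable than this toy suggests.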

The Rise of Deepfakes

Initially a novelty, deepfakes gained notoriety through the manipulation of celebrity content. Their potential for harm became apparent as they began to be used for political misinformation and identity theft. A BBC report discusses the impact of deepfakes on political stability.

Samsung's Role in the Deepfake Arena

Samsung has ventured into deepfake technology, not for malicious purposes but to explore applications in media and entertainment. The move has sparked a mix of curiosity and concern. As reported by The Verge, Samsung's deepfake initiatives are focused on enhancing digital storytelling.

Samsung's Deepfake Applications

Samsung is reportedly developing tools to enhance digital storytelling, enabling more immersive and interactive media experiences. This can involve creating digital avatars or enhancing virtual meetings with lifelike interactions. A Samsung Newsroom article provides insights into their latest technological advancements.

Investing in detection software is estimated to be the most effective strategy for mitigating deepfake risks, followed by policy development and employee education. Estimated data.

The Ethical Quandary

Deepfakes present numerous ethical challenges. They can be weaponized for misinformation, harassment, or unauthorized use of personal data. According to a Nature article, these ethical dilemmas require urgent attention from policymakers.

Potential Risks and Misuse

- Political Manipulation: Fake videos can disrupt elections and political stability. The New York Times highlights the threat of deepfakes in political campaigns.

- Identity Theft: Deepfakes can impersonate individuals for fraudulent activities. An FTC blog post discusses the risks of identity theft associated with deepfakes.

- Reputation Damage: Public figures can be targeted with false content. As reported by BBC, deepfakes have been used to damage reputations.

Detecting Deepfakes: An Ongoing Battle

As deepfakes become more sophisticated, detection methods must evolve. Current tools rely on analyzing inconsistencies in video frames, audio glitches, and biological signals like blinking patterns. MIT researchers are developing new methods to improve detection accuracy.
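
As an illustration of the blinking-pattern signal mentioned above, the sketch below counts blinks in a per-frame "eye openness" series and flags clips whose blink rate looks implausible. It assumes some upstream face-tracking step has already produced the openness values; that step, the function names, and every threshold here are hypothetical choices for illustration, not values taken from any particular detection tool.

```python
from typing import List


def count_blinks(eye_openness: List[float], closed_below: float = 0.2) -> int:
    """Count closed-eye episodes in a per-frame eye-openness series."""
    blinks = 0
    eyes_closed = False
    for value in eye_openness:
        if value < closed_below and not eyes_closed:
            blinks += 1  # an open-to-closed transition starts a new blink
            eyes_closed = True
        elif value >= closed_below:
            eyes_closed = False
    return blinks


def blinks_per_minute(eye_openness: List[float], fps: float,
                      closed_below: float = 0.2) -> float:
    """Convert the blink count into a rate using the clip's frame rate."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    duration_minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness, closed_below) / duration_minutes


def looks_suspicious(rate: float, min_rate: float = 5.0,
                     max_rate: float = 40.0) -> bool:
    """Flag blink rates outside an assumed plausible range (thresholds are illustrative)."""
    return rate < min_rate or rate > max_rate
```

A heuristic like this is a weak signal on its own: short clips give noisy rates, and generators can learn to blink naturally, which is one reason detection tools combine several cues.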

Technical Approaches to Detect Deepfakes

- Audio-Visual Analysis: Detects mismatched audio and visual cues. A recent study in Expert Systems with Applications explores these techniques.

- Biometric Verification: Uses biometric data to verify authenticity. NIST is actively researching biometric verification methods.

- Blockchain: Ensures content integrity through decentralized verification. A Forbes article discusses the potential of blockchain in deepfake detection.
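
The content-integrity idea behind the blockchain item above can be sketched with a simple hash chain. This is a single-machine simplification, not a decentralized ledger: it assumes a publisher registers the SHA-256 hash of each original file, and each record also commits to the previous record so later tampering is detectable. The record fields and function names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _hash_body(body: Dict[str, str]) -> str:
    # Serialize with sorted keys so the same body always hashes identically.
    return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))


def append_record(ledger: List[Dict[str, str]], media: bytes, label: str) -> Dict[str, str]:
    """Register a media file by hash, chained to the previous record."""
    prev_hash = ledger[-1]["record_hash"] if ledger else GENESIS_HASH
    body = {"label": label, "media_hash": sha256_hex(media), "prev_hash": prev_hash}
    record = dict(body, record_hash=_hash_body(body))
    ledger.append(record)
    return record


def ledger_is_intact(ledger: List[Dict[str, str]]) -> bool:
    """Recompute every hash and check that each record points at its predecessor."""
    prev_hash = GENESIS_HASH
    for record in ledger:
        body = {key: record[key] for key in ("label", "media_hash", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["record_hash"] != _hash_body(body):
            return False
        prev_hash = record["record_hash"]
    return True


def media_is_registered(ledger: List[Dict[str, str]], media: bytes) -> bool:
    """True if these exact bytes were registered; any edit changes the hash."""
    digest = sha256_hex(media)
    return any(record["media_hash"] == digest for record in ledger)
```

Note what this does and does not show: a matching hash indicates a file is byte-for-byte identical to what was registered, but it says nothing about whether the registered file was authentic in the first place, and a re-encoded copy of a genuine video will not match.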

Legal and Regulatory Challenges

The legal landscape struggles to keep pace with deepfake technology. While some jurisdictions have introduced laws, they often lack the clarity needed for effective enforcement. The Brookings Institution provides an overview of the current legal challenges.

Notable Regulations

- California's Anti-Deepfake Law: Prohibits malicious use in politics and pornography. Details can be found in the California Governor's Office press release.

- EU's AI Act: Adopted in 2024, it sets rules for AI technologies, including transparency obligations for deepfakes. The AI Journal discusses the implications of the EU AI Act.

Deepfake content creation has grown exponentially since 2015, with detection tools also increasing but at a slower pace. Estimated data.

Future Trends in Deepfake Technology

The future of deepfakes could see even more realistic outputs, but also better detection and regulation. A CNBC analysis highlights the potential for both innovation and misuse.

Predictions and Recommendations

- Increased Sophistication: Deepfakes will become harder to detect as AI models improve. An MIT Technology Review article discusses the advancements in AI models.

- Enhanced Detection Tools: New methods will leverage AI to stay ahead of malicious use. NIST is at the forefront of developing these tools.

- Stricter Regulations: Governments will need to create comprehensive laws to cover all aspects of deepfake creation and use. The Brookings Institution emphasizes the need for updated legal frameworks.

Practical Guidance for Organizations

Organizations can mitigate deepfake risks through a combination of technology, policy, and public awareness. A McKinsey report outlines strategies for businesses to protect themselves.

Best Practices

- Invest in Detection Software: Stay updated with the latest detection tools. Gartner identifies leading detection software.

- Educate Employees: Conduct workshops on identifying deepfakes. A Forbes article emphasizes the importance of employee training.

- Develop Clear Policies: Create guidelines for handling and reporting suspected deepfakes. NIST provides guidance on policy development.

Common Pitfalls and Solutions

Pitfalls

- Overreliance on Technology: Assuming technology alone can solve deepfake issues. A McKinsey report warns against this common mistake.

- Neglecting Human Training: Failing to educate employees on detection techniques. The Forbes article stresses the need for comprehensive training.

Solutions

- Holistic Approach: Combine technology with human vigilance and policy. The McKinsey report suggests a balanced approach.

- Regular Updates: Continuously update detection tools and training materials. Gartner emphasizes the importance of staying current with technology.
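
One way to picture the holistic approach above is a triage rule that combines a detector's score with human reports and a policy check, rather than trusting the score alone. The sketch below is purely illustrative: the `Report` fields, the action names, and the thresholds are assumptions, and any real policy would need to be set by the organization itself.

```python
from dataclasses import dataclass


@dataclass
class Report:
    detector_score: float  # assumed scale: 0.0 (likely real) to 1.0 (likely fake)
    reported_by_employee: bool
    requests_money_or_credentials: bool


def triage(report: Report, block_at: float = 0.9, review_at: float = 0.5) -> str:
    """Route suspected deepfake content using technology, people, and policy together."""
    if not 0.0 <= report.detector_score <= 1.0:
        raise ValueError("detector_score must be between 0.0 and 1.0")
    if report.detector_score >= block_at:
        return "block_and_escalate"
    # Policy: high-stakes requests are verified through a second channel,
    # even when the detector sees nothing wrong.
    if report.requests_money_or_credentials:
        return "verify_out_of_band"
    # Human vigilance: an employee's suspicion is enough to trigger review.
    if report.reported_by_employee or report.detector_score >= review_at:
        return "human_review"
    return "allow"
```

The ordering is the design choice worth noting: a low detector score never overrides the out-of-band check for a payment or credential request, which guards against overreliance on the detection tool.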

Conclusion

AI deepfakes are a double-edged sword, with the potential for both innovation and harm. As companies like Samsung explore their capabilities, the industry must remain vigilant in addressing the ethical, legal, and technical challenges they present. For more insights on managing AI technologies, platforms like Runable offer solutions to optimize AI workflows.

FAQ

What are AI deepfakes?

AI deepfakes are synthetic media in which AI generates realistic images, videos, or audio recordings of real people. The BBC provides an overview of how deepfakes work.

How do AI deepfakes pose risks?

They can be used for misinformation, identity theft, and damaging reputations by creating convincing fake media. The Nature article discusses these risks in detail.

What is Samsung's involvement with deepfakes?

Samsung is exploring deepfake technology to enhance media and entertainment experiences with digital avatars and interactive content. As reported by The Verge, their initiatives focus on digital storytelling.

How can deepfakes be detected?

Detection methods include audio-visual analysis, biometric verification, and blockchain for content integrity. NIST provides insights into these techniques.

What are the legal challenges surrounding deepfakes?

Current laws often lack clarity and struggle to keep pace with the rapid development of deepfake technology. The Brookings Institution outlines the legal challenges.

What are future trends in deepfake technology?

Expect increased sophistication in deepfakes, improved detection tools, and stricter regulations to mitigate risks. The CNBC analysis highlights these trends.

What can organizations do to mitigate deepfake risks?

They should invest in detection software, educate employees, and develop clear policies for handling deepfakes. The McKinsey report provides practical guidance.

What are common pitfalls when dealing with deepfakes?

Overreliance on technology and neglecting human training are common pitfalls that can be addressed with a holistic approach. The Forbes article discusses these issues.

Key Takeaways

- AI deepfakes present significant ethical and security risks. The Nature article discusses these risks in detail.

- Companies like Samsung are exploring deepfakes for legitimate applications. As reported by The Verge, their initiatives focus on digital storytelling.

- Detection tools need to evolve to keep up with AI advancements. MIT researchers are developing new methods to improve detection accuracy.

- Legal frameworks are currently inadequate to manage deepfake issues. The Brookings Institution outlines the legal challenges.

- Future trends suggest both increased sophistication and potential for misuse. The CNBC analysis highlights these trends.
