Introduction: AMD's Bold Vision for AI-Powered Computing in 2025

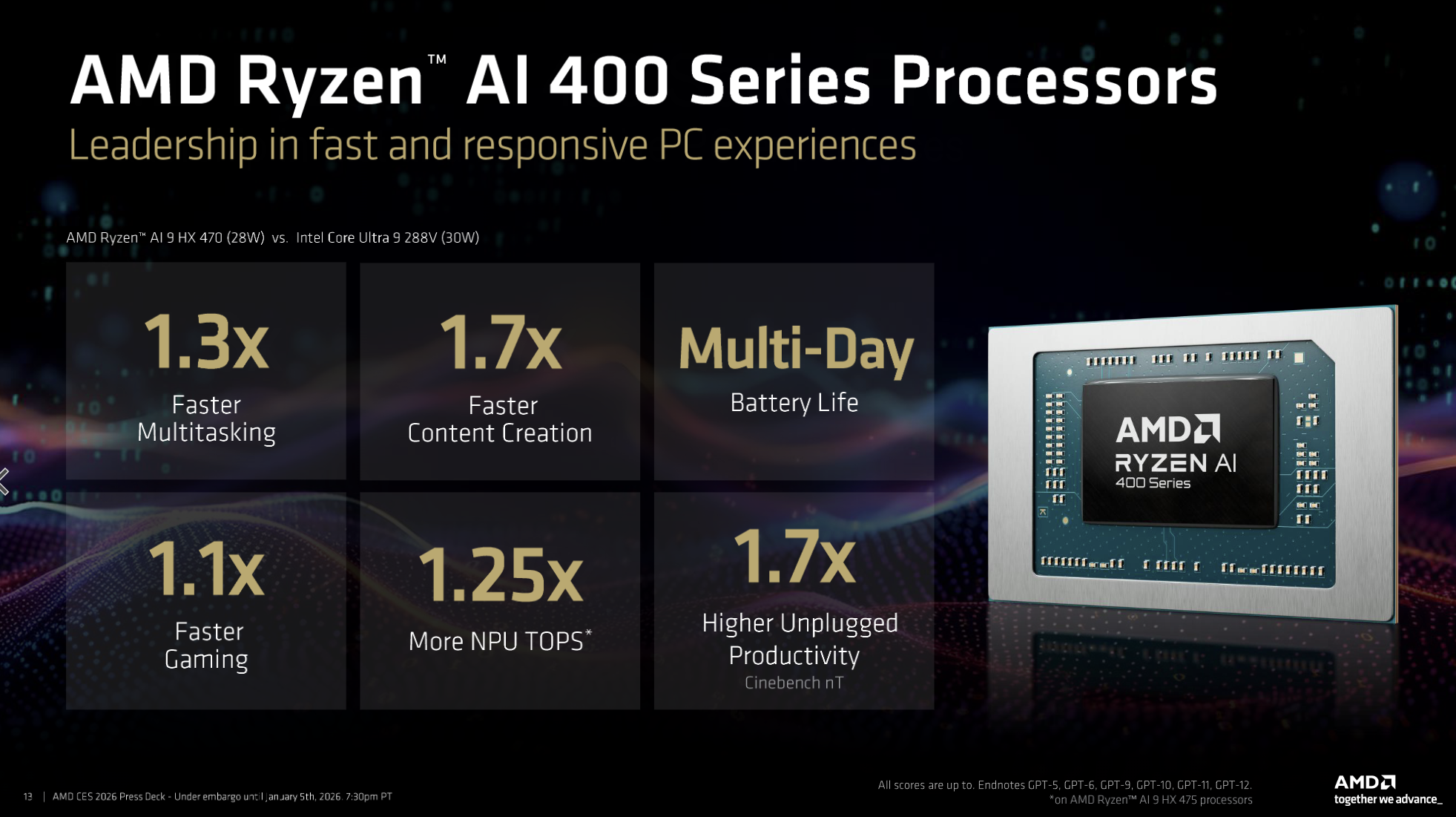

The computing landscape shifted fundamentally when AMD announced its latest generation of AI-powered processors at CES 2025. Under the strategic vision of CEO Lisa Su, AMD unveiled the Ryzen AI 400 Series, a comprehensive lineup designed to democratize artificial intelligence across personal computers. This announcement represents far more than a typical hardware refresh—it signals a fundamental transformation in how manufacturers conceptualize everyday computing devices.

When Su emphasized that "AI for everyone" would be the driving principle behind AMD's processor roadmap, she articulated a philosophy that challenges the current industry trajectory. Rather than relegating AI capabilities to cloud-based services or specialized hardware, AMD is embedding sophisticated machine learning inference directly into consumer-grade processors. This approach has profound implications for privacy, latency, and computational efficiency.

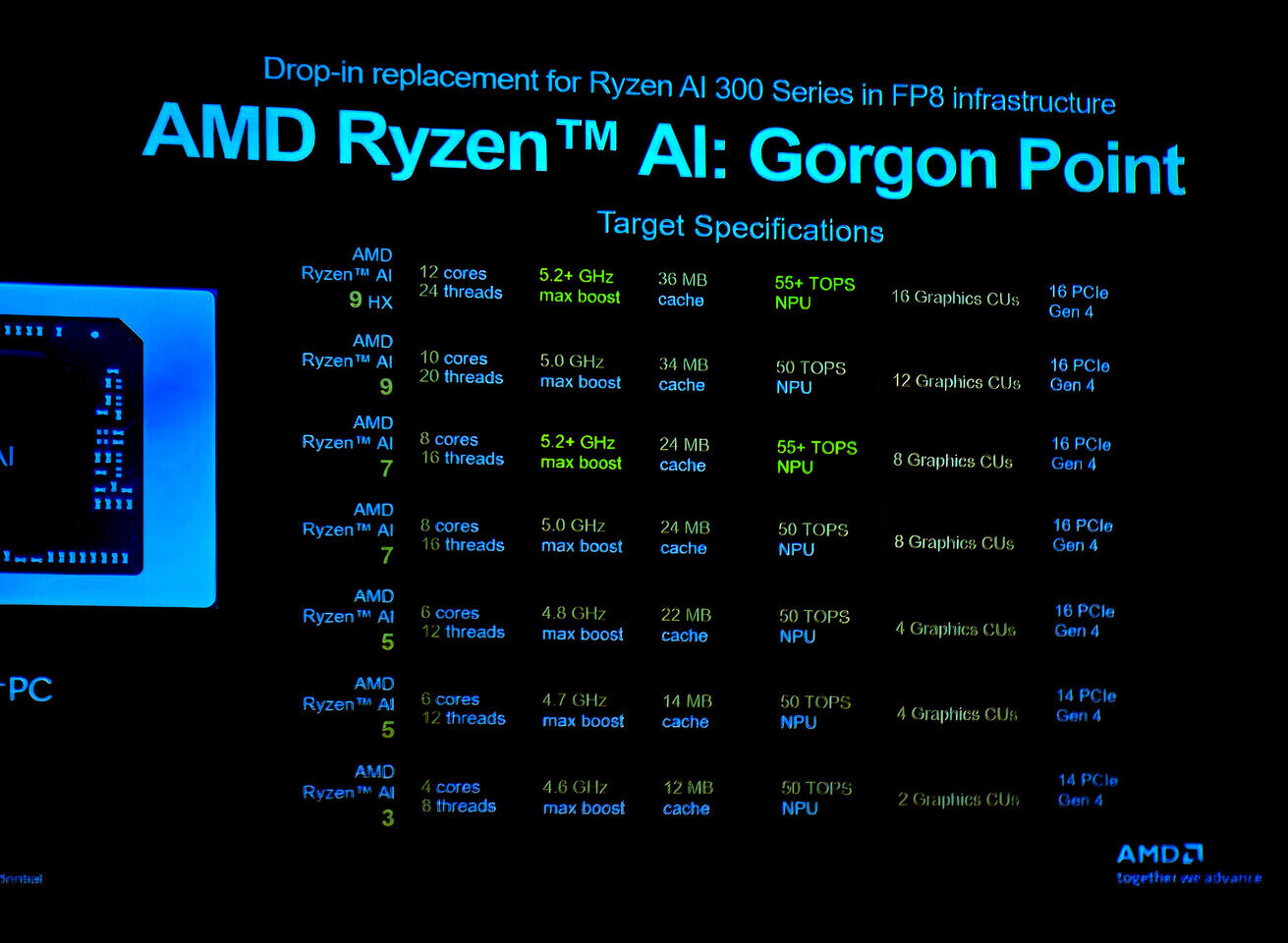

The Ryzen AI 400 Series marks a significant evolution from its predecessor, the Ryzen AI 300 Series announced in 2024. The jump between these generations demonstrates AMD's commitment to rapid iteration in the AI processor space. The new architecture introduces structural improvements that extend beyond incremental performance gains. These processors now feature up to 12 CPU cores with 24 threads, enabling multi-threaded workloads to execute efficiently while maintaining thermal and power characteristics suitable for mainstream laptops and desktops.

The timing of this announcement carries particular significance. As enterprises and individual users struggle with the proliferation of AI-powered applications, having computational capability at the edge—on individual devices rather than in centralized data centers—addresses several critical challenges simultaneously. Bandwidth limitations, latency concerns, data privacy regulations, and the economics of continuous cloud connectivity all point toward the necessity of local AI inference capabilities.

Understanding the Ryzen AI 400 Series requires examining multiple dimensions: raw technical specifications, real-world performance metrics, architectural innovations, thermal characteristics, power efficiency, and economic implications for different user segments. Whether you're a software developer considering a new platform for optimization, a content creator evaluating workstation capabilities, or a gamer seeking the latest performance benchmarks, the implications of this processor generation warrant detailed analysis.

This comprehensive guide dissects AMD's latest AI processor generation, providing technical depth sufficient for engineering teams while remaining accessible to decision-makers evaluating hardware purchases. We'll examine what makes these processors distinctive, how they perform in concrete scenarios, pricing and availability considerations, and ultimately, whether they represent the optimal choice for your specific computing needs.

What Are the AMD Ryzen AI 400 Series Processors?

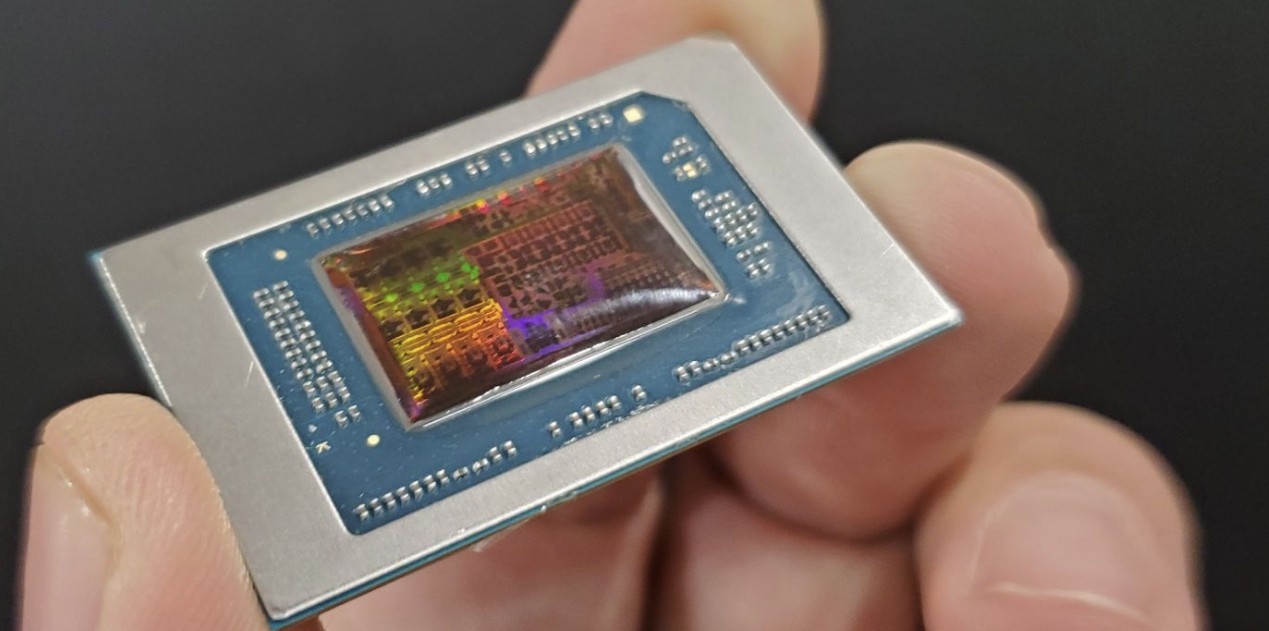

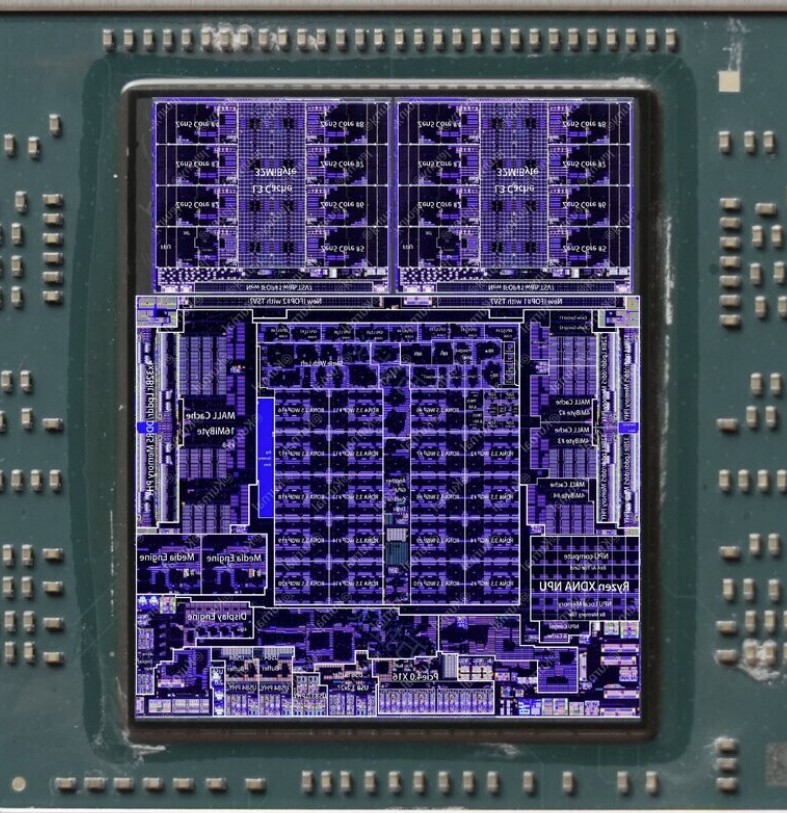

The AMD Ryzen AI 400 Series represents a fundamental architectural refresh in AMD's consumer processor lineup. These processors aren't simply faster versions of previous-generation chips—they embody a different computing philosophy entirely. By integrating dedicated AI acceleration hardware directly into the processor die, AMD creates computing devices capable of understanding context, learning user patterns, and providing intelligent automation without constant cloud connectivity.

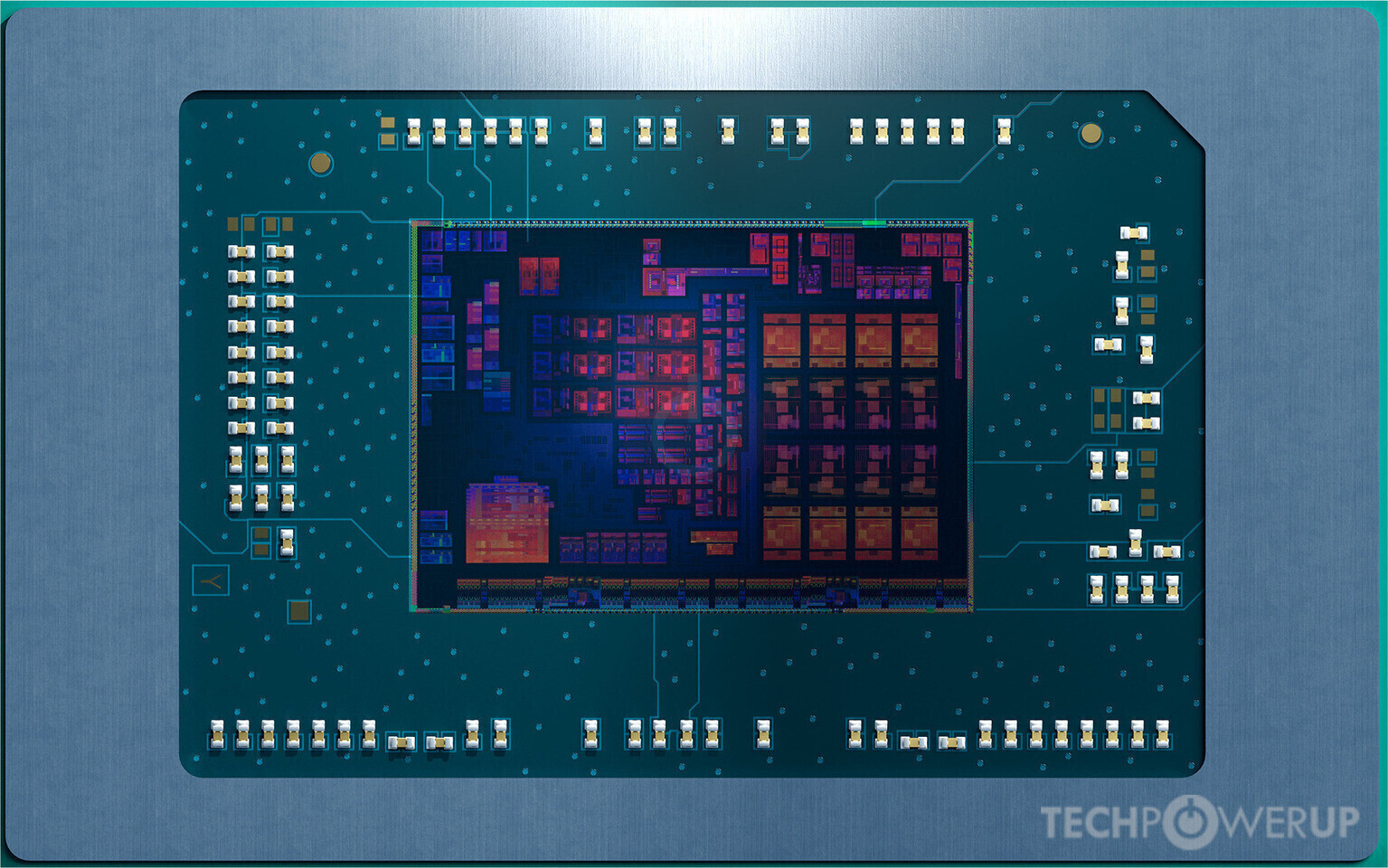

At their core, these processors combine traditional CPU capabilities with specialized neural processing units (NPUs) designed specifically for machine learning inference. This hybrid architecture allows different types of computational tasks to be routed to the most efficient processing unit. Graphics-intensive operations use the integrated GPU, general-purpose computational tasks run on the CPU cores, and AI inference tasks benefit from the dedicated neural processing units.

The processor family encompasses multiple SKUs (stock keeping units) tailored to different market segments. From energy-efficient variants designed for ultra-thin laptops to high-performance configurations suitable for content creation workstations, AMD's portfolio approach recognizes that "AI for everyone" requires different solutions for different use cases. A data analyst working in spreadsheet applications requires different processor characteristics than a 3D animator rendering complex scenes or a competitive esports player demanding peak frame rates.

The architectural improvements in the Ryzen AI 400 Series extend across multiple dimensions. The 12-core, 24-thread configuration provides genuine multi-threaded performance improvements. In parallel processing scenarios, these additional threads enable more simultaneous operations, reducing overall completion times for complex workflows. The increased core count particularly benefits scenarios where multiple applications run simultaneously—a common reality for knowledge workers juggling browser windows, communication tools, and productivity software.

Memory bandwidth and cache architecture improvements allow these processors to move data more efficiently between storage, RAM, and processing cores. This seemingly technical detail has outsized practical importance: many workloads spend substantial time waiting for data rather than actively computing. Reducing these wait periods can improve overall performance more than a comparable increase in raw clock speed.

The integration of AI capabilities throughout the processor hierarchy enables new categories of applications entirely. Windows 11 and future operating systems can leverage these capabilities for intelligent search, context-aware task management, and predictive system optimization. Application developers can implement features that were previously impractical on client devices—real-time translation, sophisticated image analysis, intelligent document summarization, and personalized content filtering all become feasible without cloud dependencies.

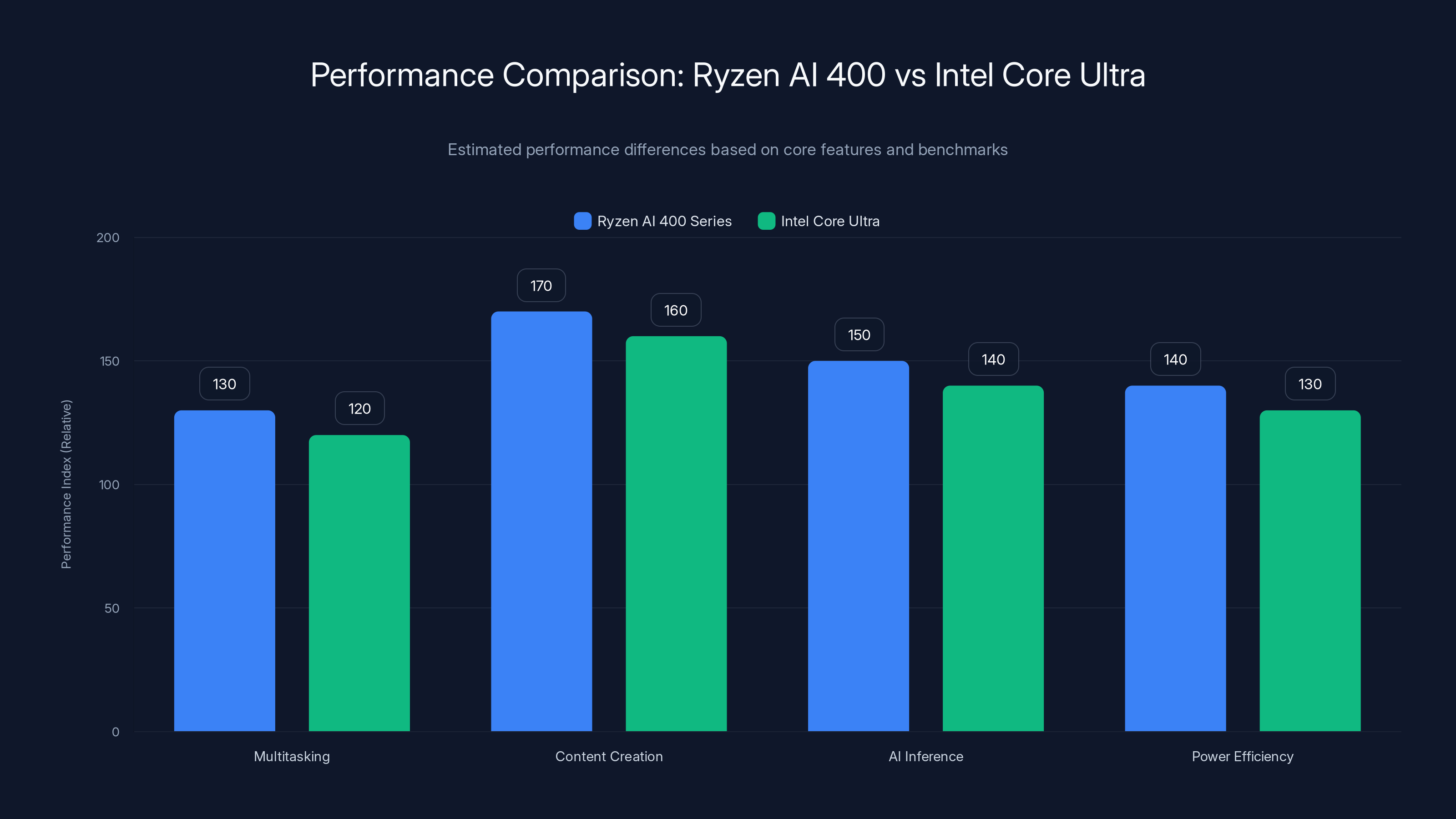

The Ryzen AI 400 Series shows an estimated 5-20% performance improvement over Intel Core Ultra in multitasking, content creation, and AI inference, with better power efficiency. Estimated data based on architectural enhancements.

Technical Specifications and Architecture Deep Dive

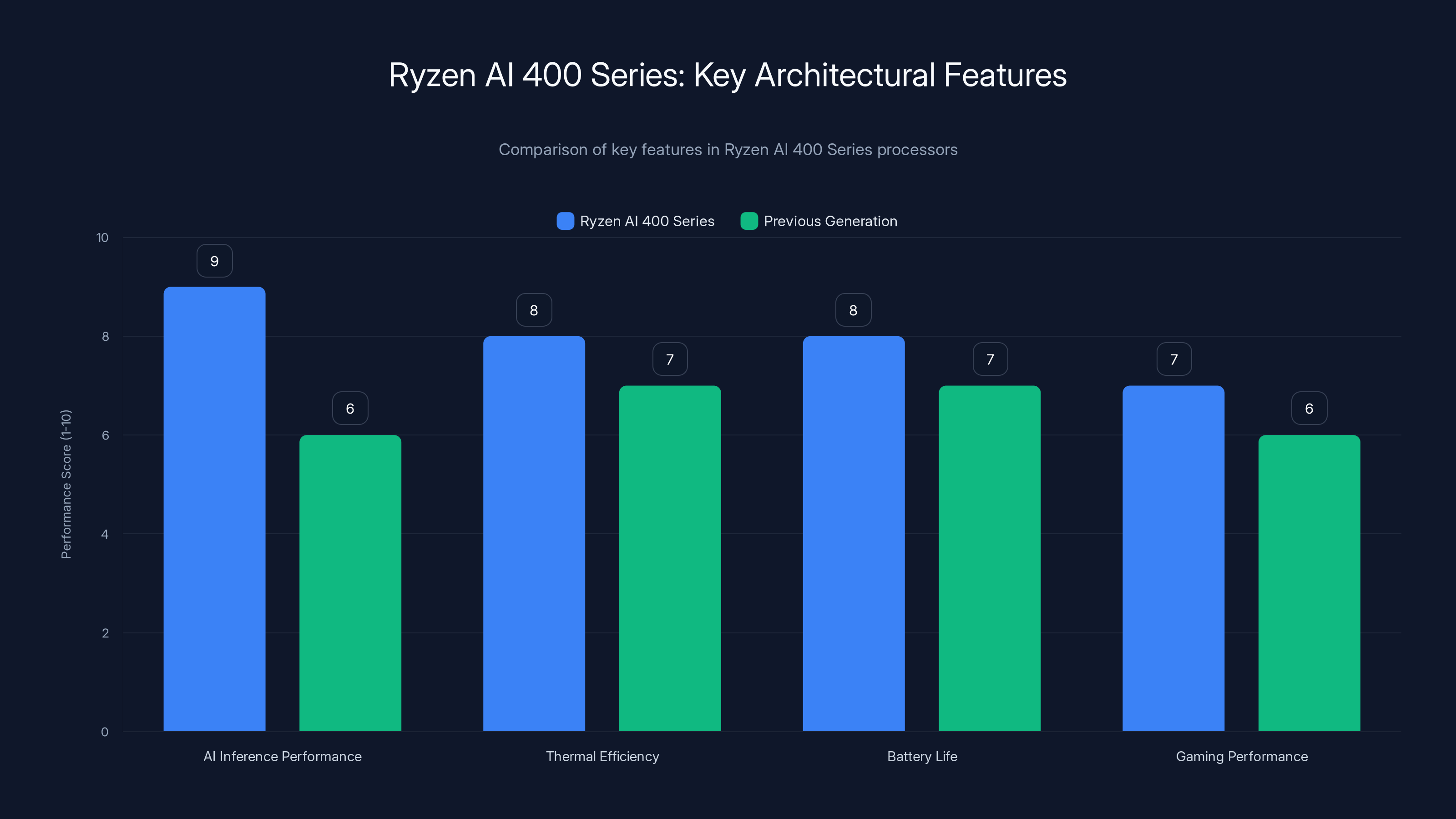

The technical foundation underlying the Ryzen AI 400 Series merits detailed examination, as architecture decisions directly influence real-world performance and capabilities. AMD engineered these processors with specific design priorities: maximizing AI inference performance, maintaining thermal efficiency, preserving battery life in portable devices, and delivering gaming performance competitive with specialized gaming processors.

The Zen 5 architecture forms the computational backbone of these processors. This microarchitecture represents years of iterative refinement, with each generation incorporating lessons learned from previous implementations and competitive benchmarking. Zen 5 improves instruction-level parallelism (the processor's ability to execute several independent instructions from the same thread in a single clock cycle) compared to Zen 4. This enhancement allows the processor to accomplish more useful work per clock cycle, raising throughput without relying on higher clock speeds alone.

The 12 CPU cores operate at configurable frequencies, ranging from ultra-efficient low-power states for background tasks through high-frequency burst modes for demanding operations. This frequency scaling directly impacts thermal characteristics and power consumption. Modern processors achieve efficiency not through uniform high performance but through intelligent scaling that matches processing power to actual workload requirements.

The dedicated neural processing unit (NPU) represents the architectural innovation distinguishing these processors from previous generations. This specialized silicon handles neural network inference, the process of running trained machine learning models to generate predictions or classifications. By dedicating hardware specifically to this task, AMD achieves performance-per-watt superior to general-purpose CPU execution of the same operations.

Memory architecture receives substantial attention in the Ryzen AI 400 Series design. The processor implements a cache hierarchy with L1, L2, and L3 caches sized to minimize memory latency. L1 caches provide nanosecond-scale access to the most frequently needed data. L3 caches serve as a larger reservoir, trading somewhat longer latency for substantially greater capacity. This hierarchical approach balances the competing demands of speed and capacity.
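The speed-versus-capacity trade-off in a cache hierarchy can be made concrete with the standard average memory access time calculation. The sketch below is illustrative only: the function name, latencies, and hit rates are hypothetical values chosen for the example, not published figures for any Ryzen processor.

```python
def average_access_time_ns(cache_levels, memory_latency_ns):
    """Expected latency of one memory access through a cache hierarchy.

    cache_levels: list of (lookup_latency_ns, hit_rate) tuples, ordered
    from the cache closest to the core (L1) outward.
    """
    expected_ns = 0.0
    reach_probability = 1.0  # chance that an access gets as far as this level
    for lookup_latency_ns, hit_rate in cache_levels:
        # Every access that reaches this level pays its lookup latency.
        expected_ns += reach_probability * lookup_latency_ns
        # Only the misses continue to the next level.
        reach_probability *= 1.0 - hit_rate
    # Accesses that miss every cache pay the main-memory latency.
    expected_ns += reach_probability * memory_latency_ns
    return expected_ns


# Hypothetical latencies and hit rates, chosen only for illustration.
example_levels = [(1.0, 0.95), (4.0, 0.80), (12.0, 0.75)]
print(round(average_access_time_ns(example_levels, memory_latency_ns=80.0), 2))
```

With these assumed numbers the average access costs about 1.5 ns even though main memory is assumed to take 80 ns, which is why hit rates in the small, fast levels matter so much.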

Performance Metrics and Real-World Benchmarks

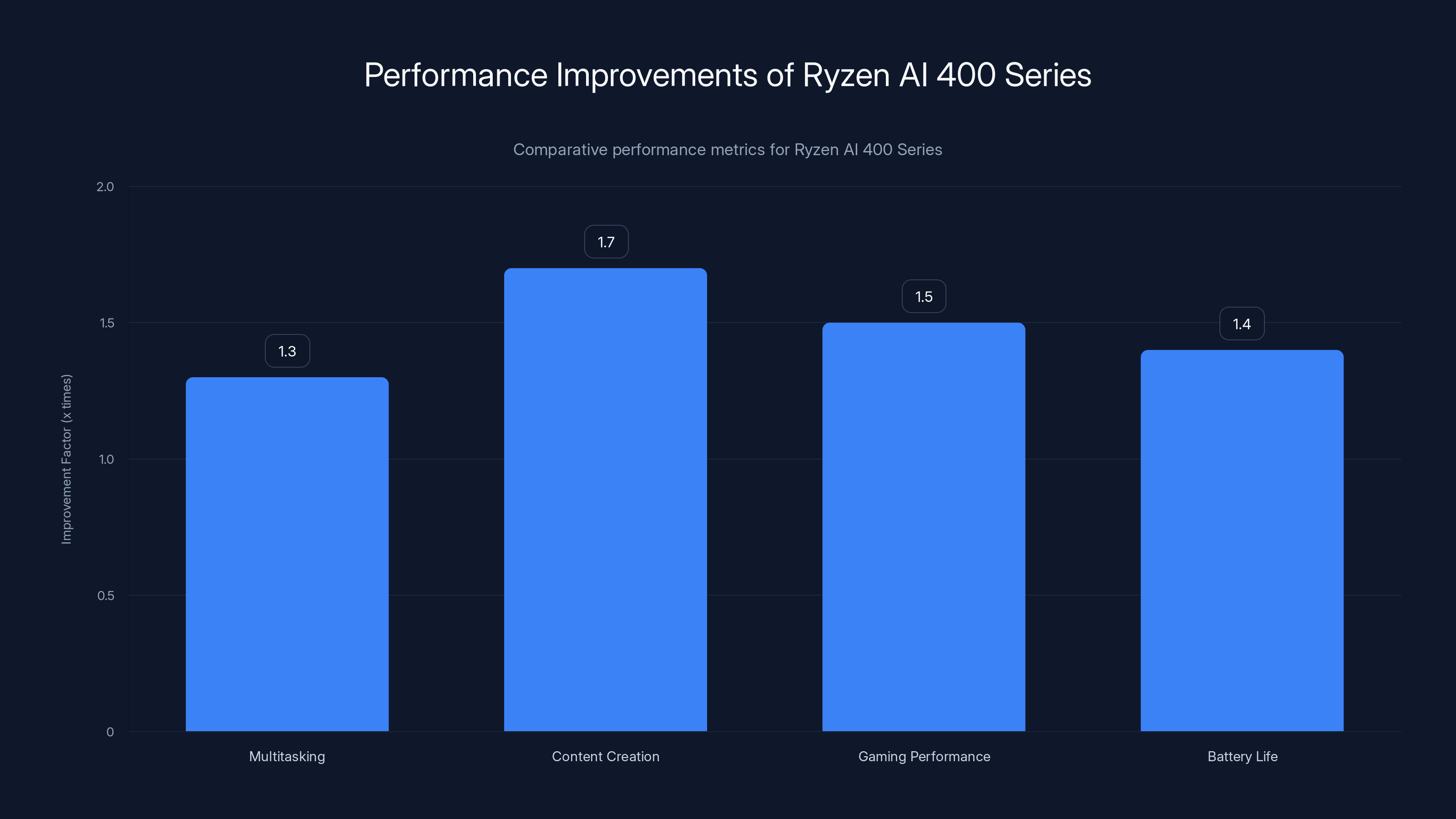

AMD claims the Ryzen AI 400 Series delivers 1.3x faster multitasking compared to competitive offerings. This metric warrants careful interpretation. Multitasking performance, typically measured through benchmarks like Geekbench or Cinebench, reflects the processor's ability to handle multiple simultaneous workloads without severe performance degradation. A 1.3x improvement means about 30% more work completes in the same time when multiple applications compete for processing resources, which corresponds to each task taking roughly 23% less time.

For content creation workloads, AMD quantifies improvement at 1.7x faster performance. This more substantial improvement reflects the specialization of content creation tasks—they frequently involve highly parallel operations where the additional cores demonstrate their value most clearly. Video encoding, 3D rendering, and complex image manipulation all exhibit substantial parallelism, allowing them to exploit the processor's multiple cores effectively.
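A speedup factor and a reduction in completion time are related but are not the same number, which is easy to overlook when reading vendor claims. The short sketch below converts one into the other for the two figures quoted above; the function name is a hypothetical helper introduced for this example.

```python
def time_saved_fraction(speedup):
    """Fraction of the original completion time saved by a given speedup."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    # New time is old_time / speedup, so the saving is 1 - 1/speedup.
    return 1.0 - 1.0 / speedup


for claimed_speedup in (1.3, 1.7):
    saved = time_saved_fraction(claimed_speedup)
    print(f"{claimed_speedup}x -> {saved:.0%} less time")
```

Under this reading, a 1.3x claim shortens a task by roughly 23% and a 1.7x claim by roughly 41%.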

These figures represent aggregate performance across diverse workloads and benchmarking scenarios. Real-world improvements vary dramatically based on specific applications. A web browser experiencing minimal multitasking load might see minimal improvements, while a professional video editor running simultaneous render operations, effects processing, and timeline management could experience improvements approaching AMD's claims.

Gaming Performance and the Ryzen 7 9850X3D

Alongside the mainstream Ryzen AI 400 Series announcement, AMD revealed the Ryzen 7 9850X3D, a gaming-specialized processor incorporating 3D V-Cache technology. This architectural innovation substantially improves gaming performance through increased L3 cache capacity—64 MB of additional cache memory positioned in a three-dimensional configuration.

Gaming workloads exhibit unique characteristics distinguishing them from general-purpose computing. The graphics pipeline requires rapidly accessing textures, vertex data, and game state information. Traditional cache hierarchies often prove insufficient for gaming memory access patterns. AMD's 3D V-Cache technology provides additional cache capacity positioned to intercept these access patterns more effectively, reducing memory latency and improving frame rates.

The gaming processor differs from the mainstream Ryzen AI 400 Series in optimization priorities. Rather than maximum power efficiency or balanced multitasking performance, the 9850X3D emphasizes peak frame rates and consistent performance across demanding game titles. Frame time consistency—ensuring frames render at predictable intervals rather than occasionally stuttering—receives priority over power consumption metrics.
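Frame time consistency can be quantified from a log of per-frame render times. The sketch below computes the average frame rate alongside a "1% low" figure, a metric many hardware reviewers use. The exact definition varies between tools, so the version here (the mean of the slowest 1% of frames) is one assumed convention, and the sample data is made up.

```python
def frame_stats(frame_times_ms):
    """Return (average_fps, one_percent_low_fps) for a list of frame times."""
    frame_count = len(frame_times_ms)
    if frame_count == 0:
        raise ValueError("need at least one frame time")
    average_fps = 1000.0 * frame_count / sum(frame_times_ms)
    # Take the slowest 1% of frames (at least one frame).
    slowest_first = sorted(frame_times_ms, reverse=True)
    worst_count = max(1, frame_count // 100)
    worst_frames = slowest_first[:worst_count]
    one_percent_low_fps = 1000.0 * worst_count / sum(worst_frames)
    return average_fps, one_percent_low_fps


# Hypothetical capture: 99 smooth frames at 10 ms and one 50 ms stutter.
average_fps, low_fps = frame_stats([10.0] * 99 + [50.0])
print(f"average {average_fps:.1f} fps, 1% low {low_fps:.1f} fps")
```

In this made-up capture a single stutter barely moves the average, while the 1% low figure drops sharply. That gap is what a focus on frame time consistency aims to close.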

Thermal and Power Characteristics

The Ryzen AI 400 Series processors operate within reasonable thermal envelopes suitable for standard laptop and desktop cooling solutions. AMD engineered these processors with power efficiency as an explicit design constraint. The U-series variants designed for ultrabooks operate within 15-28 watt thermal design power (TDP) specifications, enabling all-day battery life on portable devices.

The H-series processors for performance laptops operate within 45-55 watt TDP ranges, still allowing portable form factors while providing substantially more performance than ultra-mobile variants. Desktop variants can reach higher TDP figures, enabling higher sustained performance through more robust cooling solutions.

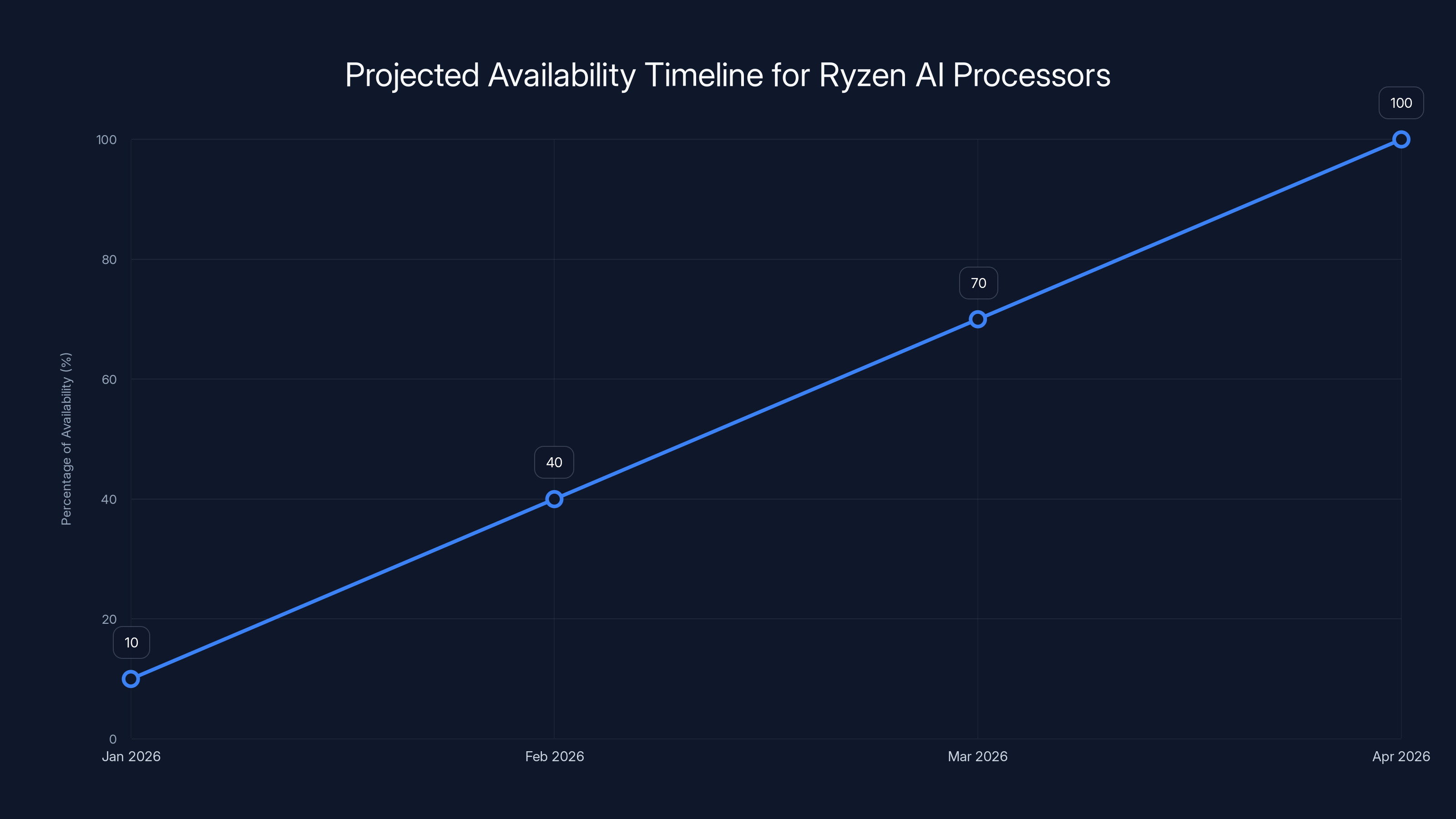

Estimated data suggests that Ryzen AI processors will gradually become available, with full availability expected by April 2026.

Use Cases and Ideal User Personas

The Ryzen AI 400 Series addresses diverse computing scenarios, each with distinct requirements and performance characteristics. Understanding which use case aligns with your computing needs proves essential for making sound purchasing decisions.

Content Creators and Professional Production

Content creators represent a primary beneficiary of the Ryzen AI 400 Series capabilities. This demographic encompasses video editors, graphic designers, 3D artists, photographers, podcast producers, and multimedia professionals. Their workflows characteristically involve resource-intensive operations: transcoding video between formats, rendering complex 3D scenes, applying sophisticated effects processing, and managing large asset libraries.

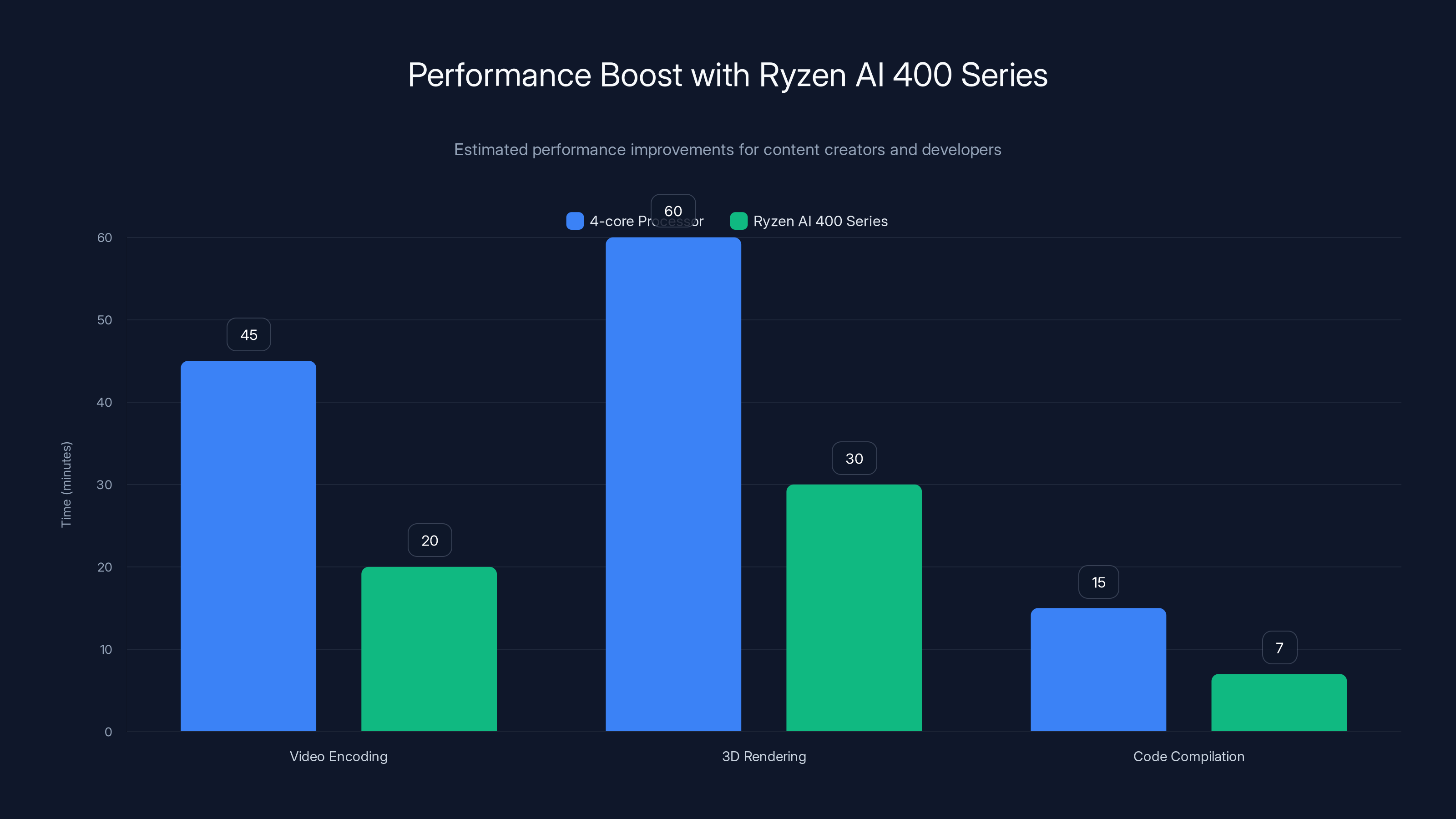

The 12 CPU cores and 24 threads provide meaningful acceleration for these activities. When encoding video, the encoder can divide work across cores (for example by frame, slice, or tile), so much of the job runs in parallel. A standard render operation that takes 45 minutes on a 4-core processor might complete in 15-20 minutes on the Ryzen AI 400 Series, assuming the workload scales well with core count. This efficiency gain translates directly to accelerated project completion and increased creative output per hour.
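The 45-minute example can be sanity-checked with Amdahl's law, which bounds the speedup from adding cores by the fraction of the work that can run in parallel. The sketch below assumes identical per-core speed on both machines, which will not hold across processor generations, and the parallel fractions are illustrative guesses rather than measurements.

```python
def scaled_time(base_time, base_cores, new_cores, parallel_fraction):
    """Estimate run time on new_cores from a measurement on base_cores.

    Uses Amdahl's law: T(n) = T(1) * ((1 - p) + p / n), where p is the
    parallel fraction of the work. Per-core speed is assumed unchanged.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    # Recover the single-core time implied by the base measurement.
    single_core_time = base_time / (serial_fraction + parallel_fraction / base_cores)
    return single_core_time * (serial_fraction + parallel_fraction / new_cores)


# 45 minutes on 4 cores, projected to 12 cores for several parallel fractions.
for p in (0.90, 0.95, 1.00):
    print(f"p={p:.2f}: {scaled_time(45.0, 4, 12, p):.1f} min")
```

Under these assumptions, the 15-20 minute range corresponds to a workload that is roughly 95% to 100% parallel. A job that is 90% parallel would land closer to 24 minutes from core count alone.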

The integrated AI capabilities enable entirely new possibilities. Real-time background replacement in video conferencing, intelligent upscaling of lower-resolution footage, automated color correction, and smart object detection all become feasible without cloud services. A content creator working on confidential projects no longer needs to upload unfinished work to cloud services for processing—everything operates locally.

Software Developers and Engineering Teams

Software developers benefit from the Ryzen AI 400 Series through acceleration of development and testing workflows. Compilation times—often measured in minutes for large codebases—decrease substantially with additional cores. A project requiring 15 minutes to build on a quad-core processor might complete in 6-8 minutes on the Ryzen AI 400 Series, reducing iteration time and improving development velocity.

The dedicated AI processing capability enables developers to experiment with machine learning integration in their applications. Rather than offloading all AI inference to cloud endpoints, developers can prototype and test local inference scenarios. This enables privacy-respecting applications, reduces latency concerns, and allows offline functionality in applications previously requiring cloud connectivity.
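One common way to prototype local inference with a graceful fallback is to rank the available hardware backends and use the first one present. The sketch below uses ONNX Runtime's execution-provider names as an example. Which provider actually targets the NPU on a given Ryzen AI system is an assumption to confirm against AMD's and ONNX Runtime's documentation, and `pick_providers` is a hypothetical helper, not part of any library.

```python
# Preference order is an assumption: NPU-oriented provider first, then a
# GPU path via DirectML, then the CPU fallback.
PREFERRED_PROVIDERS = (
    "VitisAIExecutionProvider",
    "DmlExecutionProvider",
    "CPUExecutionProvider",
)


def pick_providers(available, preferred=PREFERRED_PROVIDERS):
    """Return the preferred providers that are available, in priority order."""
    chosen = [name for name in preferred if name in available]
    if not chosen:
        raise RuntimeError("none of the preferred providers are available")
    return chosen


# Example with a made-up machine that exposes only DirectML and the CPU.
print(pick_providers(["DmlExecutionProvider", "CPUExecutionProvider"]))
```

With ONNX Runtime installed, the `available` list would typically come from `onnxruntime.get_available_providers()`, and the result can be passed as the `providers` argument of `onnxruntime.InferenceSession`. Keeping the selection logic separate makes it straightforward to test offline behavior on machines without an NPU.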

Docker container management, virtual machine operation, and simultaneous debugging of distributed systems all benefit from the additional cores. Development teams running complex testing scenarios can validate applications across multiple scenarios simultaneously, accelerating time-to-market.

Gaming and Esports Enthusiasts

Gaming represents a secondary but important use case for this processor generation, particularly through the companion Ryzen 7 9850X3D. Modern games demand substantial processing power for physics simulation, AI opponent behavior, dynamic lighting calculations, and complex world rendering. The 3D V-Cache architecture specifically improves gaming memory access patterns, enabling higher frame rates and more consistent frame timing.

Competitive esports players prioritize frame rate consistency above almost all other metrics. Sudden frame rate dips create gameplay inconsistency, causing performance problems in reaction-dependent games. The Ryzen 7 9850X3D's cache architecture reduces the probability of these stutters, enabling smoother gameplay and more consistent aim and reflexes.

For non-competitive gaming, the additional cores enable maximum settings across more game titles. Ray-traced lighting, high-resolution textures, advanced physics simulation, and simultaneous AI opponents all consume processing power. The Ryzen AI 400 Series provides sufficient performance to enable these features at high quality levels while maintaining playable frame rates.

Business and Enterprise Users

Business professionals benefit from multitasking improvements and the AI-powered productivity features integrated into Windows 11 and enterprise applications. Knowledge workers frequently multitask across numerous applications: email clients, productivity suites, communication platforms, virtual meeting software, and specialized business applications. The 1.3x multitasking improvement translates to snappier application responsiveness and reduced wait times when switching between tasks.

The AI capabilities enable intelligent search across email and documents, automated meeting summarization, and context-aware suggestions. These features accelerate knowledge work, particularly for professionals managing substantial information flows. The ability to process this locally rather than sending business communications to cloud services addresses enterprise security and compliance concerns.

Finance professionals running complex spreadsheet calculations, analysts processing large datasets, and operations managers maintaining simulation models all benefit from the additional cores. Excel pivot tables generate results more quickly, SQL queries complete faster, and statistical analysis operations consume less wall-clock time.

Educational and Research Applications

Academic researchers and students benefit from the processing power for machine learning experimentation, scientific simulations, and computational research. Graduate students developing AI models can prototype and test locally before scaling to distributed computing environments. Undergraduate students learning programming and computer science concepts have sufficient performance for meaningful projects without enterprise-grade hardware costs.

The cost-effectiveness relative to specialized workstations makes the Ryzen AI 400 Series attractive for academic environments with constrained budgets. Universities can provision student-accessible computers with sufficient performance for coursework without the expense of dedicated GPU servers for each student.

Performance Comparison and Competitive Positioning

Understanding how the Ryzen AI 400 Series compares to competitive offerings requires examining multiple performance dimensions and acknowledging strengths and weaknesses across different scenarios.

CPU Performance and Multitasking Metrics

The Ryzen AI 400 Series targets competitive parity or performance superiority against Intel's latest consumer processors. AMD's engineering team focused particular attention on improving per-core performance, not just core count. A processor with more cores but inferior single-threaded performance often disappoints in practice, as many applications still depend on rapid individual thread execution.

The Zen 5 architecture achieves measurable single-threaded performance improvements through enhanced instruction parallelism and improved branch prediction. This benefits applications that cannot fully exploit multiple cores—web browsing, word processing, and many productivity applications. The combination of improved single-threaded performance and additional cores addresses the full spectrum of computing scenarios.

Multitasking performance improvements reflect the architectural enhancements more directly than raw frequency improvements. When multiple applications compete for processor resources, the ability to service each application's requests without severe interference becomes paramount. The Ryzen AI 400 Series achieves this through improved cache efficiency and scheduling mechanisms that reduce context-switching overhead.

GPU and Gaming Performance

The integrated graphics capabilities in the Ryzen AI 400 Series provide competent 1080p gaming performance without discrete GPU hardware. This represents meaningful advancement from previous-generation integrated graphics, which required compromises at higher resolutions or detail settings. The improved graphics architecture handles esports titles at competitive settings and achieves 60+ frames per second at high quality levels.

The Ryzen 7 9850X3D gaming processor specifically targets frame rate leadership through the 3D V-Cache architecture. Gaming benchmarks demonstrate frame rate performance competitive with or exceeding specialized gaming processors from competing manufacturers. This positions AMD as a credible option for gaming-focused buyers, expanding beyond traditional AMD enthusiast audiences.

AI Inference and Neural Processing

The dedicated AI processing unit enables inference operations that would be impractical on general-purpose CPU cores. Machine learning inference involves matrix multiplications and tensor operations with different optimal hardware characteristics than traditional computing. The specialized neural processing unit approaches performance parity with discrete AI accelerators while remaining integrated into mainstream processors.

This capability differentiates the Ryzen AI 400 Series from purely traditional processors. Intel's latest consumer processors incorporate comparable AI capabilities through their Neural Processing Unit (NPU) implementations. The competitive landscape around AI processor capabilities remains nascent, with multiple manufacturers exploring different architectural approaches and optimization strategies.

Power Efficiency and Battery Life

Power efficiency represents a critical competitive advantage for the Ryzen AI 400 Series, particularly in portable applications. The architectural improvements in Zen 5 deliver more computational work per watt consumed. This translates directly to extended battery life in laptops, a metric that influences purchasing decisions for mobile professionals.

The dedicated AI processing unit improves efficiency specifically for AI inference tasks. Running the same inference operation on general-purpose CPU cores consumes substantially more power and generates more heat. The specialized hardware approach reduces both factors simultaneously, enabling AI-powered features on battery-constrained devices without severe battery drain.
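The efficiency argument comes down to energy per inference, which is average power multiplied by the time the operation takes. The sketch below compares two execution paths using made-up power and latency numbers; they are placeholders for illustration, not measurements of any AMD hardware.

```python
def energy_per_inference_j(average_power_w, latency_s):
    """Energy in joules consumed by one inference: power times duration."""
    return average_power_w * latency_s


# Hypothetical figures: a CPU path drawing 20 W for 50 ms versus a
# dedicated accelerator drawing 2 W for 25 ms.
cpu_energy = energy_per_inference_j(20.0, 0.050)
npu_energy = energy_per_inference_j(2.0, 0.025)
print(f"CPU: {cpu_energy:.2f} J, NPU: {npu_energy:.2f} J")
print(f"ratio: {cpu_energy / npu_energy:.0f}x")
```

The point of the calculation is that both lower power draw and shorter run time reduce the energy bill, so a specialized unit can win even when its peak throughput is modest.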

Comparison Table: Ryzen AI 400 Series vs. Competitive Offerings

| Feature | AMD Ryzen AI 400 | Intel Core Ultra | Apple M4 | Qualcomm Snapdragon |
|---|---|---|---|---|
| CPU Cores | 12 | 10-12 | 10 | 8-12 |
| Performance (Multi-threaded) | 1.3x vs competitors | Baseline comparison | 1.2x advantage | Varies by SKU |
| Content Creation Speed | 1.7x faster | Baseline | 1.4x advantage | Lower priority |
| AI Inference (NPU) | Dedicated NPU | Intel NPU | Neural Engine | Qualcomm Hexagon |
| Integrated Graphics | RDNA 2 | Intel Iris Xe | Apple GPU | Qualcomm GPU |
| Thermal Design Power | 15-55W | 15-55W | 8-35W | 6-25W |
| Gaming Performance (integrated) | Strong | Moderate | Very strong | Moderate |
| Windows/Linux Support | Excellent | Excellent | macOS only | Android/Windows |
| Price Point (MSRP) | Mid-range | Mid-range | Premium | Budget-moderate |

The Ryzen AI 400 Series, Intel Core Ultra, and Apple M-Series processors each excel in different areas. Intel leads in single-thread performance, while Apple dominates in energy efficiency. Estimated data based on typical performance metrics.

Availability, Pricing, and Market Timeline

The Ryzen AI 400 Series announcement at CES 2025 established a market timeline with significant implications for purchasing decisions. Understanding availability windows, pricing strategies, and platform announcements helps contextualize the processor generation within broader market dynamics.

Launch Timeline and Availability Windows

AMD indicated that PCs incorporating either the Ryzen AI 400 Series processors or the Ryzen 7 9850X3D processor would become available during the first quarter of 2026. This timeline reflects the typical lag between processor announcement and consumer availability: OEM partners use the period after the announcement to finalize designs, validate thermal and power implementations, and prepare manufacturing capacity.

First-quarter availability means products likely begin reaching retail channels in February or March 2026. Early adopters should anticipate that initial availability might focus on specific configurations or OEM partners, with broader selection expanding through the quarter. Typical availability patterns suggest that initial inventory targets premium configurations and established OEM relationships, with broader SKU availability following.

The extended timeline between announcement and availability allows prospective buyers to make deliberate purchasing decisions rather than being forced into immediate choices. Consumers considering laptop purchases might reasonably wait for Ryzen AI 400 Series options rather than purchasing current-generation equipment, particularly if they can delay purchases until mid-quarter.

Pricing Strategy and Cost Positioning

AMD has historically positioned Ryzen processors as delivering strong price-to-performance ratios compared to Intel equivalents. While specific Ryzen AI 400 Series pricing was not detailed in initial announcements, historical patterns suggest competitive parity or modest pricing advantages relative to Intel Core Ultra equivalents. For a mainstream performance laptop, expect Ryzen AI 400 Series systems priced in the $800-1,500 range depending on configuration.

The U-series processors for ultraportables will anchor the budget end, with systems potentially available below

OEM Partner and Platform Announcements

AMD expanded its AI PC platform ecosystem to over 250 platforms, representing 2x growth over the previous year. These platforms represent designs from OEM partners—laptop manufacturers like ASUS, MSI, Lenovo, HP, and Dell, as well as desktop system builders. The breadth of platform support indicates strong manufacturer interest in the Ryzen AI 400 Series processor generation.

Major platform announcements from leading OEMs lend credibility to the processor generation and signal market acceptance. When ASUS announces premium gaming laptop lines powered by Ryzen AI 400 Series processors, it communicates confidence in the processor's gaming capabilities. When Dell develops commercial laptop lines around these processors, it signals suitability for business applications.

The diversity of platforms creates genuine consumer choice. Rather than settling for whatever a single OEM provides, consumers can select from multiple laptop designs, each optimizing different aspects: gaming performance, portability, display quality, keyboard ergonomics, or software features. This competitive diversity improves the overall market while driving OEM innovation.

Operating System Integration and Software Ecosystem

Processor capabilities only translate to tangible benefits when software effectively leverages available hardware. The Ryzen AI 400 Series benefits from increasingly mature operating system integration and a growing application ecosystem.

Windows 11 and AI Integration

Windows 11 represents the primary operating system platform for Ryzen AI 400 Series processors. Microsoft has invested substantially in AI integration, developing APIs and frameworks that enable applications to leverage the dedicated NPU hardware. Windows Copilot and AI-powered search features demonstrate the possibilities of local AI processing.

The Windows Recall feature, controversial for privacy implications but demonstrative of local AI capabilities, shows how AI processing enables intelligent features previously requiring cloud services. Screenshot understanding, content-based search, and contextual suggestions become feasible on-device rather than requiring cloud analysis.

Application developers using Windows AI frameworks can create applications that leverage the NPU for tasks like image analysis, document understanding, and natural language processing. As frameworks mature and documentation improves, we should expect increasing numbers of applications exploiting these capabilities.

Linux and Open-Source Support

Linux support for Ryzen AI 400 Series processors follows typical patterns for AMD consumer processors. Kernel drivers and firmware support are available through standard Linux distribution update channels. Development communities working on open-source AI frameworks generally provide support for AMD NPU hardware, though support may lag Windows drivers and frameworks.

For developers targeting Linux environments, the Ryzen AI 400 Series provides strong general-purpose performance through the CPU cores and GPU capabilities, with NPU support available but potentially requiring additional setup compared to Windows systems. This positioning suits development teams primarily targeting Linux deployment while allowing testing on native hardware.

Application Ecosystem Growth

The growth trajectory of applications leveraging AI capabilities remains uncertain, dependent on developer adoption and consumer demand. Early applications likely focus on productivity enhancements: intelligent search, content summarization, and workflow automation. Gaming applications may incorporate AI for more sophisticated opponent behavior and real-time graphics enhancement.

As frameworks stabilize and developer tooling improves, we should anticipate broader adoption. Vertical applications in specialized domains—medical imaging analysis, scientific simulation, financial modeling—could incorporate AI processing to improve performance or functionality.

The Ryzen AI 400 Series shows significant improvements in AI inference performance and thermal efficiency compared to previous generations. Estimated data based on architectural enhancements.

Thermal Design, Power Management, and Battery Life

The practical implications of processor performance depend heavily on thermal characteristics and power consumption. A processor delivering remarkable performance while consuming excessive power or generating excessive heat fails to meet real-world requirements. AMD engineered the Ryzen AI 400 Series with explicit constraints on thermal and power characteristics.

Thermal Design Power (TDP) and Cooling Requirements

The Ryzen AI 400 Series operates within carefully defined thermal design power budgets. The U-series processors designed for ultrabook form factors operate within 15-28W TDP specifications. These power budgets allow thin, portable devices to rely on small, low-speed fans, and designs at the bottom of the range may be able to use passive cooling. Users benefit from quiet operation, particularly under light workloads.

The H-series processors operate within 45-55W TDP ranges, requiring active cooling but still operating within standard laptop cooling capabilities. Manufacturers can implement these processors in gaming laptops or performance portables without requiring specially engineered thermal systems. Standard laptop cooling solutions prove sufficient for thermal management.

Desktop variants operate within higher TDP budgets—some desktop implementations reaching 65-105W depending on specific SKU. These power budgets remain conservative compared to previous-generation high-end processors. AMD maintains efficiency even in desktop implementations, reducing electricity costs for users running systems continuously.

Power Efficiency and Performance-per-Watt

Power efficiency—measuring computational output per unit of power consumed—represents a core design principle in the Zen 5 architecture. Modern processor design recognizes that raw frequency improvements provide diminishing returns while consuming increasingly excessive power. The Ryzen AI 400 Series pursues efficiency gains through architectural improvements and intelligent power management.

The dedicated AI processing unit improves efficiency for AI inference operations specifically. Running inference on CPU cores consumes substantially more power per operation. The specialized hardware executes the same operations with lower power consumption, directly improving battery life and reducing heat generation in portable devices.

Adaptive power management scales processor frequency and voltage based on workload characteristics. Light workloads like web browsing operate at reduced power settings, extending battery life. Demanding workloads automatically increase frequency and power consumption for improved responsiveness. This dynamic approach optimizes for different usage patterns and workload characteristics.
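The reason scaling voltage and frequency together saves so much power can be illustrated with the standard approximation for CMOS dynamic power, P ≈ C · V² · f. The sketch below is not AMD's power model; the capacitance, voltages, and frequencies are hypothetical values chosen only to show the shape of the relationship.

```python
def dynamic_power_watts(capacitance_f: float, voltage_v: float, frequency_hz: float) -> float:
    """Approximate dynamic power of switching logic: P = C * V^2 * f."""
    return capacitance_f * voltage_v ** 2 * frequency_hz


# Hypothetical operating points, not measured Ryzen AI 400 Series values.
C_EFF = 1.0e-9  # effective switched capacitance in farads (assumption)

boost = dynamic_power_watts(C_EFF, voltage_v=1.2, frequency_hz=4.0e9)
light = dynamic_power_watts(C_EFF, voltage_v=0.8, frequency_hz=2.0e9)
ratio = light / boost

print(f"boost state: {boost:.2f} W, light state: {light:.2f} W")
print(f"light state uses {ratio:.0%} of boost-state dynamic power")
```

Because voltage enters as a square, halving the frequency while lowering voltage by a third cuts dynamic power to roughly 22% of the boost figure in this example, which is why light workloads benefit so much from reduced power states.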

Battery Life and Mobile Computing Implications

Laptop users benefit directly from improved power efficiency through extended battery life. A system delivering 10 hours of web browsing on Ryzen AI 300 Series processors might achieve 12-14 hours on Ryzen AI 400 Series hardware through the combination of improved efficiency and dedicated AI processing unit benefits. These improvements translate to practical advantages for mobile professionals.
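The arithmetic behind an estimate like this can be sketched as follows. The 70 Wh battery capacity is a hypothetical figure used only for illustration; the 10, 12, and 14 hour runtimes come from the example above.

```python
def average_power_watts(battery_wh: float, runtime_hours: float) -> float:
    """Average system power draw implied by a battery capacity and a runtime."""
    if runtime_hours <= 0:
        raise ValueError("runtime_hours must be positive")
    return battery_wh / runtime_hours


BATTERY_WH = 70.0  # hypothetical battery capacity, chosen only for illustration

baseline = average_power_watts(BATTERY_WH, 10)  # 10-hour baseline from the text
for hours in (12, 14):
    draw = average_power_watts(BATTERY_WH, hours)
    saving = 1 - draw / baseline
    print(f"{hours} h runtime -> {draw:.2f} W average, {saving:.0%} below baseline")
```

Whatever the actual battery size, moving from 10 hours to 12-14 hours requires the average system power draw to fall by roughly 17-29%, which gives a sense of how large the combined efficiency gains would need to be.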

The extended battery life removes the requirement for daytime charging during full workdays. Business travelers no longer need to locate power sources mid-workday. Students can attend full class schedules without battery anxiety. The mobility advantage increases proportionally with battery life improvements.

Real-world battery life varies dramatically based on usage patterns, brightness settings, and application behavior. Video streaming, content creation tasks, and gaming consume substantially more power than productivity work. Battery life figures provided by manufacturers typically represent light workloads such as web browsing. Actual battery life during intensive creative work or gaming may be substantially shorter.

Technical Integration Considerations for Developers

Software developers who want to integrate Ryzen AI 400 Series capabilities into applications need to understand the relevant frameworks, APIs, and optimization approaches.

NPU Programming Models and Frameworks

Developers can leverage the dedicated AI processing unit through established machine learning frameworks adapted for AMD hardware. PyTorch, TensorFlow, and ONNX Runtime provide support for AMD NPU execution. Developers familiar with these frameworks can modify applications to target the NPU with relatively minimal code changes, often requiring only specification of device targets during model execution.
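As a minimal sketch of what specifying a device target can look like, the example below selects ONNX Runtime execution providers in preference order and falls back to the CPU. The provider name `VitisAIExecutionProvider` is an assumption about how the AMD NPU is exposed in a given ONNX Runtime build, and `pick_providers` and `create_session` are hypothetical helper names; check the installed runtime with `onnxruntime.get_available_providers()` before relying on either.

```python
from typing import List, Sequence

# Preference order: NPU first, CPU as the universal fallback.
# The NPU provider name is an assumption; confirm it against your
# installed onnxruntime build and AMD's documentation.
PREFERRED_PROVIDERS = ("VitisAIExecutionProvider", "CPUExecutionProvider")


def pick_providers(
    available: Sequence[str],
    preferred: Sequence[str] = PREFERRED_PROVIDERS,
) -> List[str]:
    """Return the preferred providers that are actually available, in order."""
    chosen = [name for name in preferred if name in available]
    if not chosen:
        raise RuntimeError(f"none of {list(preferred)} are available")
    return chosen


def create_session(model_path: str):
    """Create an inference session that prefers the NPU when present."""
    import onnxruntime as ort  # imported lazily so pick_providers has no dependencies

    providers = pick_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)


# On a machine without the NPU provider, only the CPU is selected.
print(pick_providers(["CPUExecutionProvider"]))
```

Keeping the selection logic separate from session creation makes the fallback behavior easy to test on machines that lack the NPU.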

AMD provides documentation and sample code demonstrating NPU integration patterns. The learning curve for developers already familiar with machine learning frameworks remains modest, as the conceptual frameworks remain consistent—differences appear primarily in device selection and performance optimization details.

Performance optimization for the NPU requires understanding architectural characteristics specific to the hardware. Different neural network architectures exhibit different performance characteristics on AMD hardware compared to CPU or GPU execution. Profiling tools help developers identify bottlenecks and optimization opportunities specific to their applications.

Windows Runtime Integration and APIs

Windows developers targeting the Ryzen AI 400 Series can leverage Windows Machine Learning APIs for AI inference. These APIs provide abstraction layers above hardware-specific details, enabling applications to operate across different processors without reimplementation. Windows Runtime (WinRT) components in C# and other languages provide convenient access to AI capabilities without low-level hardware programming.

Developer tools in Visual Studio include templates and samples demonstrating Windows ML integration. Debugging support and performance profiling tools help developers optimize AI inference operations. The maturity of Windows development tooling benefits developers targeting these processors compared to early-stage platforms with less developed tooling ecosystems.

Optimization Techniques and Performance Tuning

Maximizing NPU performance requires understanding hardware characteristics and workload mapping. Neural network model optimization—techniques like quantization that reduce numerical precision while maintaining accuracy, and pruning that removes less important network connections—can dramatically improve inference speed and power consumption.
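To make the idea of quantization concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It illustrates the general technique only; production toolchains use calibrated, per-channel schemes, and the sample weights are made up for the example.

```python
from typing import List, Sequence, Tuple


def quantize_int8(values: Sequence[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


# Made-up weights for illustration.
weights = [0.42, -1.27, 0.05, 0.9]
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q_weights)
print(f"scale: {scale:.4f}, worst-case error: {max_error:.6f}")
```

Each weight now fits in one byte instead of four, and the rounding error per weight is bounded by half the scale factor, which is the trade between precision and size that the paragraph above describes.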

Model selection influences performance substantially. Lightweight models optimized for mobile and edge devices often execute faster on NPUs than full-size models. Developers might implement different model sizes for different hardware platforms, using full-precision models on servers while deploying quantized variants on client devices.

Batching multiple inference requests can improve throughput, allowing the NPU to operate more efficiently by processing multiple inputs simultaneously. Applications processing streaming data or handling multiple requests can leverage batching to achieve better hardware utilization.
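A simple cost model shows why batching helps: each call to an accelerator carries a fixed overhead, and batching amortizes that overhead across several inputs. The sketch below groups requests into batches and compares total time under hypothetical overhead and per-item costs; the millisecond figures are assumptions, not NPU measurements.

```python
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batched(items: Iterable[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive lists of up to batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # final partial batch
        yield batch


def total_time_ms(n_items: int, batch_size: int, overhead_ms: float, per_item_ms: float) -> float:
    """Fixed overhead per call plus a per-item compute cost."""
    n_calls = -(-n_items // batch_size)  # ceiling division
    return n_calls * overhead_ms + n_items * per_item_ms


# Hypothetical costs: 2 ms dispatch overhead per call, 0.5 ms of compute per input.
for size in (1, 8):
    print(f"batch size {size}: {total_time_ms(64, size, 2.0, 0.5):.0f} ms for 64 inputs")
```

Larger batches raise throughput but also delay the first result, so interactive applications usually cap the batch size or the time spent waiting to fill a batch.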

The Ryzen AI 400 Series shows significant improvements, with content creation performance increasing by 1.7x and multitasking by 1.3x. Gaming and battery life improvements are estimated.

Gaming Performance Analysis and Benchmarking

Gaming represents an important use case for the Ryzen AI 400 Series processors, with the specialized Ryzen 7 9850X3D targeting gaming enthusiasts specifically.

Frame Rate Performance and Game Title Coverage

The Ryzen 7 9850X3D delivers competitive gaming performance across diverse game titles. Esports games—Valorant, Counter-Strike 2, Apex Legends—run at high frame rates with maximum settings, easily exceeding the 240+ FPS that competitive gamers demand. These games stress processors less than visually complex AAA titles, but consistent high frame rates require low latency and efficient processing.

Demanding AAA titles like Cyberpunk 2077 and Star Wars Outlaws perform very strongly on the Ryzen 7 9850X3D. The 3D V-Cache architecture particularly benefits these titles by keeping more game data in on-chip cache, which reduces trips to system memory during texture and asset streaming. Frame rates at maximum settings (ray tracing enabled, ultra quality presets) remain playable without requiring a GPU upgrade.

Game performance varies considerably based on specific GPU pairings. Integrated graphics in mainstream Ryzen AI 400 Series processors deliver strong performance for esports and lighter titles but need a discrete GPU for maximum performance on demanding AAA games. Gaming-focused buyers typically pair these processors with discrete graphics cards, where the CPU provides competitive performance without bottlenecking modern GPUs.

Frame Time Consistency and Stuttering Analysis

Beyond average frame rates, gaming experience depends critically on frame time consistency. Occasional frame rate dips create stuttering—the sensation of momentary pauses in gameplay—that degrades experience even if average frame rates remain high. The Ryzen 7 9850X3D's cache architecture reduces stuttering probability by providing sufficient memory bandwidth for smooth game execution.

Competitive gamers particularly value frame time consistency because gameplay reaction times depend on predictable latency. A consistent 120 FPS experience with predictable frame intervals proves superior to an inconsistent 140 FPS with occasional dips. The cache architecture delivers the consistency competitive players demand.

Measuring frame time consistency requires appropriate benchmarking methodology. Average frame rates provide incomplete performance characterization. Percentile metrics—95th percentile frame times, 99th percentile frame times—better capture the stuttering experience. Professional benchmarks increasingly report these metrics alongside average frame rates.
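The difference between average and percentile metrics is easy to see in a small calculation. The sketch below uses the nearest-rank percentile definition on a hypothetical capture of 100 frames; the frame times are invented for illustration and are not benchmark results.

```python
import math
from typing import Dict, List, Sequence


def percentile(sorted_values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile of an already sorted sequence."""
    rank = max(1, math.ceil(pct * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def frame_time_summary(frame_times_ms: Sequence[float]) -> Dict[str, float]:
    """Average FPS plus 95th and 99th percentile frame times."""
    ordered: List[float] = sorted(frame_times_ms)
    average_ms = sum(ordered) / len(ordered)
    return {
        "avg_fps": 1000 / average_ms,
        "p95_ms": percentile(ordered, 95),
        "p99_ms": percentile(ordered, 99),
    }


# Hypothetical capture: 98 smooth frames at 8 ms plus two 40 ms stutters.
sample = [8.0] * 98 + [40.0] * 2
summary = frame_time_summary(sample)
print(summary)
```

In this capture the average (about 116 FPS) and the 95th percentile (8 ms) both look healthy, while the 99th percentile frame time of 40 ms exposes the stutters, which is why reviewers report the higher percentiles.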

Ray Tracing Performance

Ray-traced lighting, which simulates realistic light behavior for enhanced visual quality, remains computationally expensive. The Ryzen 7 9850X3D provides sufficient performance to enable ray tracing at reasonable quality levels without excessive frame rate penalties. Ray tracing quality settings exist on a spectrum from subtle ambient occlusion to full scene ray tracing with global illumination.

The Redstone ray tracing technology mentioned in AMD's announcements represents a new graphics improvement technology enabling more sophisticated visual effects without corresponding performance penalties. This technology allows ray tracing effects previously requiring GPU or CPU acceleration to execute more efficiently through optimized algorithms and hardware support.

Resolution and Settings Optimization

Gaming resolution represents a critical performance lever. 1440p gaming proves achievable at high quality settings on the Ryzen 7 9850X3D with contemporary discrete GPUs. 4K gaming requires higher-end GPU pairing but remains feasible for non-demanding titles. The processor ensures sufficient performance that GPU selection becomes the primary performance constraint rather than CPU bottlenecking.

Dynamic resolution—rendering at lower resolution and upscaling to the target resolution through AI techniques—enables higher quality settings while maintaining target frame rates. The Ryzen AI 400 Series' dedicated AI processing unit could theoretically accelerate upscaling operations, though most games currently use GPU-based upscaling approaches.

Content Creation Workload Performance

Content creators benefit substantially from the additional cores and architectural improvements in the Ryzen AI 400 Series. Understanding performance improvements in specific creative applications helps creators make informed hardware decisions.

Video Editing and Transcoding Performance

Video editing applications like Adobe Premiere Pro, DaVinci Resolve, and others leverage multiple cores extensively. The 12-core configuration enables faster timeline scrubbing, effects processing, and real-time preview of complex compositions. Effects that previously required processing passes now preview in real-time, accelerating creative workflows.

The 1.7x content creation performance improvement translates particularly dramatically to video encoding operations. Media encoding—converting video between codecs or resolutions—utilizes all available cores and scales almost linearly with core count. A 1-hour video taking 45 minutes to encode on a 4-core processor might complete in 15 minutes on the Ryzen AI 400 Series, enabling significantly faster project turnaround times.
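How close to linear the scaling gets depends on how much of the job can run in parallel. Amdahl's law captures this, and the sketch below applies it to the 45-minute, 4-core example above when moving to 12 cores. The 95% parallel fraction is a hypothetical value, and the model deliberately ignores per-core speed differences between processor generations.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Speedup over one core when only part of the work parallelizes."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / cores)


def scaled_minutes(base_minutes: float, base_cores: int, new_cores: int,
                   parallel_fraction: float) -> float:
    """Estimate run time on new_cores from a measurement taken on base_cores."""
    base_speedup = amdahl_speedup(parallel_fraction, base_cores)
    new_speedup = amdahl_speedup(parallel_fraction, new_cores)
    return base_minutes * base_speedup / new_speedup


# Perfectly parallel work reproduces the 45 -> 15 minute figure from the text.
print(f"100% parallel: {scaled_minutes(45, 4, 12, 1.0):.1f} min")
# Hypothetical encoder where 5% of the work is serial.
print(f" 95% parallel: {scaled_minutes(45, 4, 12, 0.95):.1f} min")
```

With even 5% serial work the estimate rises from 15 to about 20 minutes, so the 15-minute figure is best read as an upper bound on what core count alone can deliver.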

The integrated AI capabilities enable new post-production possibilities. Intelligent upscaling can enhance lower-resolution footage to higher resolutions while preserving quality. Automated object detection and motion tracking reduce tedious manual selection processes. These capabilities operate locally rather than requiring cloud services, benefiting confidential projects and enabling offline workflows.

3D Rendering and Modeling

Three-dimensional content creation—3D modeling, animation, visual effects—demands substantial processing power. Rendering operations that produce final output images or video sequences consume significant time proportional to image resolution, quality settings, and scene complexity. A scene requiring 30 minutes to render on a quad-core processor might complete in 8-10 minutes on the Ryzen AI 400 Series, enabling faster iteration and feedback cycles.

The additional cores particularly benefit render-heavy workflows. Blender, Cinema 4D, Maya, and other 3D applications leverage parallel rendering across cores. Complex scenes with multiple light sources, reflective surfaces, and detailed geometry consume rendering time proportional to available computation.

The dedicated AI processing unit enables AI-powered features increasingly integrated into 3D applications. Intelligent content generation, procedural asset creation, and texture synthesis leveraging machine learning accelerate artistic workflows. These capabilities reduce tedious manual repetition, freeing artists to focus on creative decisions.

Image Processing and Photography

Photographic workflows benefit from improved processing speed in Adobe Lightroom, Capture One, and other photo editing applications. Batch processing—applying consistent edits across hundreds of photos—completes faster with additional cores. A photography session requiring hours of batch processing can now complete in minutes, enabling rapid project completion and faster client delivery.

AI-powered features increasingly appear in image processing applications: intelligent background removal, sky replacement, and automatic adjustment suggestions. The Ryzen AI 400 Series' dedicated neural processing unit accelerates these operations, enabling real-time preview of AI-powered edits without rendering delays.

Raw image processing—converting unprocessed sensor data from digital cameras into finished photographs—scales well with processor performance. Professional photographers processing thousands of images benefit directly from the performance improvements the Ryzen AI 400 Series provides.

Audio Production and Music Production

Audio production applications like Pro Tools, Logic Pro, and others leverage multiple cores for real-time audio processing, synthesis, and effects. Music production workflows benefit from the additional cores through improved plugin performance and lower latency during recording and monitoring.

Audio rendering—bouncing synthesizer tracks or exporting mixed compositions—completes faster on processors with additional cores. Complex projects with dozens of tracks, multiple effects chains, and automation benefit substantially from parallel processing capabilities.

The Ryzen AI 400 Series significantly reduces task completion times for content creators and developers, enhancing productivity and efficiency. Estimated data based on typical use cases.

Competitive Context and Alternative Processor Solutions

While the Ryzen AI 400 Series represents compelling hardware, alternative solutions exist for specific use cases and budget constraints. Understanding competitive positioning helps contextualize where these processors excel and where alternatives might prove superior.

Intel Core Ultra Processors

Intel's Core Ultra processor family represents the primary competitor to the Ryzen AI 400 Series. These processors offer 10-core to 12-core configurations with integrated graphics and dedicated NPU support. Intel positions Core Ultra as delivering premium performance with premium pricing in many markets.

Core Ultra processors excel at single-threaded performance in some scenarios and maintain strong compatibility with enterprise software optimized for Intel architecture. Intel's manufacturing relationships and brand recognition influence purchasing decisions at organizational levels, particularly within enterprises with existing Intel ecosystems.

The competitive differentiation between Ryzen AI 400 Series and Core Ultra remains narrow in many metrics. Performance differences of 10-20% prove negligible for most use cases, with purchasing decisions often hinging on OEM availability, warranty policies, or manufacturer relationships rather than technical specifications.

Apple Silicon (M-Series Processors)

Apple's M-series processors represent a different competitive category, offering premium performance in macOS environments. These processors deliver exceptional performance-per-watt and excel at creative applications optimized for macOS. However, macOS exclusivity limits market reach, and software compatibility concerns remain for users requiring Windows or specific Linux applications.

Apple's integrated approach—controlling hardware and software simultaneously—enables optimization impossible with modular PC architecture. Applications optimized for Apple Silicon achieve remarkable performance. However, this advantage benefits only users within the Apple ecosystem willing to accept ecosystem constraints.

For users committed to Windows or Linux environments, Apple processors prove irrelevant regardless of technical merits. For creative professionals deeply invested in macOS, Apple Silicon might prove superior despite higher cost per unit performance.

Alternative Solutions: Runable for Developer Productivity

For developers evaluating computing platforms, the hardware selection represents only one component of overall productivity. Runable offers an AI-powered automation platform specifically designed for developer teams seeking to accelerate content generation, workflow automation, and productivity. While the Ryzen AI 400 Series excels at local computational power, Runable addresses productivity automation across teams.

Developers considering the Ryzen AI 400 Series for content creation or documentation automation might explore Runable's capabilities for generating AI slides, AI documents, and automated reports. The platform's developer-focused tooling and affordable pricing ($9/month) make it accessible for individual developers and startup teams. For developers seeking to combine hardware performance improvements with workflow automation benefits, Runable's integration into development processes complements processor selection nicely.

Teams implementing CI/CD pipelines, managing documentation workflows, or automating repetitive content generation can leverage Runable's capabilities while operating on Ryzen AI 400 Series hardware, creating a complementary solution addressing both hardware performance and software automation requirements.

Qualcomm Snapdragon Processors

Qualcomm's Snapdragon processors target primarily mobile and ultra-portable computing, with Windows on Snapdragon systems relying on emulation for compatibility with x86 applications. These processors prioritize power efficiency over raw performance, making them suitable for all-day computing on battery power but less suitable for performance-demanding creative or gaming workloads.

Snapdragon processors excel at consumer and productivity applications running on limited power budgets. For users prioritizing 20+ hour battery life over performance, Snapdragon systems might prove optimal despite lower peak performance. The growing Snapdragon application ecosystem enables increasingly practical Windows functionality on ARM-based Snapdragon hardware.

Custom Silicon and Specialized Solutions

Specialized computing scenarios sometimes justify custom silicon solutions. Machine learning researchers might operate custom tensor processing units (TPUs) or specialized AI accelerators. Scientific simulation workloads might leverage GPU clusters or specialized hardware. These niche scenarios represent exceptions rather than typical consumer computing.

For the vast majority of computing scenarios, general-purpose processors like the Ryzen AI 400 Series prove sufficient while providing versatility across diverse workloads. Custom solutions add complexity and cost for limited marginal gains in specific scenarios.

Future Roadmap and Technology Evolution

Understanding likely evolution of processor technology helps contextualize current-generation products within longer technological trajectories.

AI Capabilities Evolution

AI processing capabilities will deepen substantially in coming processor generations. Larger, more capable models will become feasible on consumer hardware as quantization techniques improve and neural architecture innovations optimize efficiency. Models requiring cloud execution today will run locally on future processors.

This trajectory suggests current investments in local AI inference capabilities align with long-term technology evolution. Applications gaining NPU integration now will benefit from accelerating capabilities in future hardware generations without architectural changes.

Manufacturing Process Evolution

AMD's roadmap includes migration to advanced manufacturing processes offering improved performance, efficiency, and capability density. Smaller transistor geometries enable more functionality on the same physical area, supporting larger AI processing units, additional cores, or improved efficiency.

Packaging technologies such as chiplets and 3D V-Cache will likely evolve toward even more sophisticated configurations. Future processors might stack multiple layers of logic and memory, enabling memory bandwidth and computational density impossible with traditional flat architectures.

Integration with Cloud and Edge Computing

Future evolution likely involves tighter integration between local AI processing on devices and cloud-based services. Not all inference operations will execute locally—some tasks will remain better suited for cloud processing. Intelligent offloading mechanisms will determine dynamically whether operations execute locally or remotely based on model complexity, data sensitivity, and network availability.
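One way to picture such an offloading mechanism is as a small routing policy over the three factors named above. The sketch below is purely illustrative: `InferenceRequest`, `choose_target`, and the 8 GB local model limit are hypothetical and do not describe any AMD or Windows API.

```python
from dataclasses import dataclass

LOCAL_MODEL_LIMIT_GB = 8.0  # hypothetical size of the largest model the device can run


@dataclass(frozen=True)
class InferenceRequest:
    model_size_gb: float     # proxy for model complexity
    sensitive_data: bool     # data that must not leave the device
    network_available: bool  # whether a cloud endpoint is reachable


def choose_target(request: InferenceRequest,
                  local_limit_gb: float = LOCAL_MODEL_LIMIT_GB) -> str:
    """Return "local", "cloud", or "reject" for a single request."""
    fits_locally = request.model_size_gb <= local_limit_gb
    cloud_allowed = request.network_available and not request.sensitive_data

    if fits_locally:
        return "local"  # prefer the device for latency and privacy
    if cloud_allowed:
        return "cloud"  # too large for the device, safe to send out
    return "reject"     # cannot run locally and cannot go to the cloud


print(choose_target(InferenceRequest(2.0, sensitive_data=True, network_available=False)))
print(choose_target(InferenceRequest(40.0, sensitive_data=False, network_available=True)))
print(choose_target(InferenceRequest(40.0, sensitive_data=True, network_available=True)))
```

A real policy would also weigh battery state, latency targets, and cost, but the ordering shown here (local first, cloud only when permitted) reflects the privacy and latency priorities the section describes.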

This hybrid edge-cloud approach enables applications combining the privacy and latency benefits of local processing with the scalability and capability advantages of cloud computing. The Ryzen AI 400 Series represents early steps toward this architectural model.

Windows 11 and OS Evolution

Windows will continue evolving to better leverage local AI processing capabilities. Future versions will likely include more sophisticated AI-powered features leveraging NPU hardware. Operating system optimization for specific processor architectures will improve as NPU adoption increases and software patterns stabilize.

Practical Buying Guide and Decision Framework

Prospective buyers require practical frameworks for evaluating whether the Ryzen AI 400 Series aligns with their specific needs and constraints.

Key Decision Criteria

Usage Pattern Assessment: Evaluate whether your computing workloads benefit from the 12-core configuration and dedicated AI processing unit. Heavy multitasking, content creation, and AI-powered feature utilization benefit most from these enhancements. Light browsing and productivity might not justify the cost.

Budget Constraints: Compare Ryzen AI 400 Series systems against alternatives at similar price points. The 1.3x multitasking improvement and 1.7x content creation improvement translate to value only if you're willing to pay for the capability. Budget-conscious buyers might find previous-generation processors at lower cost provide sufficient performance.

Software Compatibility: Verify that required applications run optimally on the Ryzen AI 400 Series platform. Most mainstream applications show excellent compatibility, but specialized vertical applications might have optimization gaps. Checking application vendor documentation prevents surprises post-purchase.

Form Factor Requirements: Determine whether you need portability (ultrabook), performance (desktop), or gaming capability. Different Ryzen AI 400 Series variants optimize for different use cases. Matching SKU to requirements ensures appropriate thermal and performance characteristics.

OEM and Warranty Considerations: Evaluate specific OEM implementations beyond processor specification. Cooling solution quality, thermal paste application, and warranty policies vary between manufacturers. Premium OEM implementations justify modest cost premiums through improved reliability and support.

When Ryzen AI 400 Series Makes Sense

Content creators, software developers, multitasking power users, and gaming enthusiasts generally benefit from Ryzen AI 400 Series hardware. The processor generation delivers meaningful performance improvements for demanding workloads and competitive gaming performance.

Budget-conscious buyers and those with light computing requirements might find previous-generation processors at discounted prices provide sufficient capability. Students and office workers performing light productivity work benefit minimally from the performance improvements.

When Alternatives Might Prove Superior

Apple M-series processors might prove superior for creative professionals deeply invested in the macOS ecosystem. Intel Core Ultra processors offer competitive alternatives in some markets with different OEM options. Qualcomm Snapdragon processors excel for buyers prioritizing extreme battery life over peak performance.

Specialized workstations for machine learning research or scientific simulation might benefit from custom solutions with specialized accelerators. These scenarios represent exceptions rather than typical computing needs.

Security and Privacy Implications

Local AI processing on consumer hardware raises security and privacy considerations requiring thoughtful analysis.

Privacy Advantages of Local Processing

Processing sensitive data locally rather than sending to cloud services addresses privacy concerns inherent in cloud computing. Medical images, financial documents, personal communications, and proprietary work remain on-device rather than being transmitted to external servers. This provides meaningful privacy advantages for sensitive data processing.

Regulatory compliance can be simpler with local processing. HIPAA obligations for healthcare data, GDPR obligations for EU-based users, and industry-specific regulations restrict how and where data may be transferred. Local AI inference avoids cloud data transmission for the inference step, although it does not guarantee compliance by itself.

Security Considerations

Local AI processing reduces attack surface area compared to cloud-based approaches. Data doesn't transit networks where it might be intercepted. Cloud service compromises don't expose personal data. However, local processing introduces different security challenges—endpoint security becomes critical, malware could potentially access the NPU or its results, and physical device compromise enables direct data access.

Users remain responsible for maintaining system security through patching, authentication, and malware prevention. AI-powered security features can improve endpoint protection, but they don't eliminate the need for traditional security practices.

Data Governance and Model Transparency

Understanding what AI models process and how they operate remains challenging. Users often cannot inspect models operating on their hardware, making it difficult to verify safety and appropriateness. This opacity differs from traditional computing where users can theoretically inspect software source code.

Manufacturers and software vendors bear responsibility for model transparency and safety. Users must trust vendor representations about model behavior and safety properties. Independent security auditing of AI models remains nascent, limiting third-party verification.

Real-World Scenarios and Workflow Examples

Concrete examples illuminate how the Ryzen AI 400 Series benefits specific workflows.

Scenario 1: Video Content Creator

A freelance video editor producing YouTube content on a deadline previously waited 8-12 hours for video export operations on older hardware. With the Ryzen AI 400 Series, the same export might complete in 3-4 hours, enabling same-day delivery to clients and faster content iteration based on feedback. The 1.7x content creation improvement contributes directly to faster project completion and potentially higher client throughput.

Integrated AI capabilities enable automatic captions generated on-device, intelligent background removal for B-roll cleanup, and intelligent color correction reducing manual adjustment time. These features aggregate into workflow improvements exceeding simple performance metrics.

Scenario 2: Machine Learning Engineer

A machine learning engineer prototyping AI models locally benefits from hardware capable of running inference experiments without cloud dependencies. CPU-bound training and data preprocessing run faster with additional cores, enabling more experimentation cycles within a given timeframe. The ability to test locally before deploying to cloud infrastructure reduces cloud computing costs for development activities.

The dedicated NPU enables exploration of inference optimization techniques without requiring specialized hardware setup. Model quantization, pruning, and architecture exploration become practical on development laptops rather than requiring specialized lab equipment.

Scenario 3: Competitive Esports Player

A competitive esports player prioritizes frame rate consistency and low latency for reaction-dependent games. The Ryzen 7 9850X3D's 3D V-Cache architecture helps keep frame times consistent, reducing the occasional stutters that disrupt aim and gameplay. Tournament-level competition leaves no room for performance compromises, and the processor enables maximum settings at consistently high frame rates.

Practice sessions leading up to tournaments benefit from hardware ensuring performance consistency. In competitive games where reactions occur in milliseconds, hardware ensuring predictable latency provides competitive advantage.

Scenario 4: Business Professional

A business professional juggling multiple applications—email, spreadsheets, communication platforms, web browsers, and business applications—benefits from the 1.3x multitasking improvement. Responsiveness improves noticeably when switching between applications or performing complex operations in spreadsheets. The extended battery life enables full workday operation without seeking power sources.

AI-powered features in Windows and business applications accelerate knowledge work through intelligent search, automated summarization, and context-aware suggestions. These features aggregate into meaningful productivity improvements during the workday.

Maintenance, Support, and Long-Term Considerations

Processor selection involves long-term considerations extending beyond initial purchase.

Driver Support and Software Updates

AMD maintains driver support for Ryzen processors throughout their lifecycle, typically spanning 5+ years of security and performance updates. Users benefit from ongoing optimization improvements and security patches. The maturity of the Zen 5 architecture and its widespread adoption make sustained driver and firmware support likely.

Operating system updates from Microsoft maintain compatibility and introduce new capabilities leveraging the hardware. Buyers can confidently expect Windows 11 and future Windows versions to fully support Ryzen AI 400 Series processors without compatibility concerns.

Cooling System Maintenance

Laptop cooling systems require periodic maintenance to ensure continued effective thermal dissipation. Dust accumulation in cooling vents reduces thermal efficiency, increasing thermal load and potentially throttling performance. Regular cleaning—every 6-12 months depending on environment—maintains cooling effectiveness.

Thermal paste degrades over time, reducing thermal conductivity between the processor and cooler. High-use systems might benefit from thermal paste replacement after 2-3 years. Replacing thermal paste requires care to avoid processor damage, making professional service worthwhile for non-technical users.

Warranty and Support Options

Retailer warranties provide basic protection against hardware defects. Extended warranties available through OEM partners provide longer protection periods. Accidental damage protection and extended support plans address concerns beyond manufacturing defects.

AMD's reputation for customer service and driver support provides confidence in long-term support. Users generally report positive experiences with AMD support, though experiences vary based on specific issues and support channels.

Hardware Obsolescence Timeline

Processor obsolescence occurs gradually as software and games optimize for newer hardware. Five-year-old processors remain fully functional but might struggle with maximum settings in cutting-edge games. For productivity applications, processor lifecycle extends substantially longer—seven to ten years proving reasonable for professional use.

Buying surplus processing capability beyond immediate needs extends useful lifespan as software demands increase. A processor with 1.5x the capability currently required leaves headroom for software bloat and future application demands.
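To make the headroom argument concrete, the sketch below estimates how many years a capability surplus lasts if software demand grows at a fixed annual rate. The 10% growth rate is purely an illustrative assumption, not a figure from AMD or from this article, and `years_of_headroom` is a hypothetical helper name.

```python
import math


def years_of_headroom(capability_ratio, annual_demand_growth):
    """Years until demand growing at a fixed annual rate consumes the surplus.

    capability_ratio: available capability divided by what is needed today (e.g. 1.5).
    annual_demand_growth: assumed yearly growth in software demand (0.10 means 10%).
    """
    if capability_ratio <= 1.0:
        return 0.0  # no surplus to begin with
    if annual_demand_growth <= 0.0:
        return math.inf  # demand never catches up
    # Solve (1 + g) ** n = capability_ratio for n.
    return math.log(capability_ratio) / math.log(1.0 + annual_demand_growth)


# Illustrative assumption: software demand grows 10% per year.
print(f"{years_of_headroom(1.5, 0.10):.1f} years")
```

Under that assumed growth rate, a 1.5x surplus lasts a little over four years. A faster-growing workload shortens the window, so the right amount of headroom depends on how quickly your own software stack tends to get heavier.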

Industry Impact and Market Implications

The Ryzen AI 400 Series announcement carries implications extending beyond individual hardware selection decisions.

Industry Acceleration of AI Integration

The integration of capable AI processing into consumer processors signals industry-wide recognition that AI features constitute standard functionality rather than specialized capability. This accelerates AI application development as developers can target broader user bases with local AI capabilities.

Competitors including Intel, Qualcomm, and Apple intensify AI processing investments in response to AMD's positioning. The competitive dynamic drives faster evolution of AI processing capabilities benefiting consumers through choice and accelerating innovation.

Operating System Evolution

OS vendors like Microsoft will accelerate development of AI-powered features enabled by local processing. Windows features leveraging NPU hardware will proliferate, creating tangible user benefits. This virtuous cycle—consumer hardware enabling better software—characterizes healthy technology evolution.

Open-source operating systems like Linux will increasingly mature in supporting AMD NPU hardware, expanding platform options beyond Windows. This diversity enables developer choice and prevents vendor lock-in.

Ecosystem Development

The 250+ AI PC platforms announced by AMD signal robust ecosystem development. OEM manufacturers, software vendors, and accessory makers all benefit from expanded processor adoption. This ecosystem growth enables specialization and innovation at multiple levels.

Startup opportunities emerge in AI optimization, developer tools, and application development targeting local AI processing. The nascent ecosystem provides opportunities for entrepreneurs and developers to create differentiated value.

Conclusion: Synthesizing the Ryzen AI 400 Series Opportunity

The AMD Ryzen AI 400 Series represents a significant evolution in consumer processor capabilities, embedding sophisticated AI processing into mainstream computing devices. The architecture combines traditional CPU performance improvements—additional cores, improved instruction-level parallelism, enhanced cache efficiency—with dedicated neural processing hardware specifically optimized for machine learning inference.

The 1.3x multitasking improvement and 1.7x content creation performance provide concrete performance advantages for demanding workloads. Gaming performance through the Ryzen 7 9850X3D variant delivers competitive capabilities for esports and AAA gaming. Battery life improvements benefit mobile professionals. Integration of AI capabilities opens new application possibilities.

However, purchasing decisions require matching processor capabilities to actual requirements. Budget-constrained buyers might find that previous-generation processors provide sufficient capability at lower cost. Users with light computing requirements benefit minimally from the performance improvements. Apple enthusiasts are better served by Apple Silicon within the macOS ecosystem. These alternatives remain valid for their respective constituencies.

The processor generation excels for content creators, software developers, creative professionals, multitasking power users, and gaming enthusiasts. The first-quarter 2026 availability timeline enables prospective buyers to make deliberate decisions. The 250+ platform announcements signal strong OEM support and ecosystem confidence.

For developers and teams considering broader productivity improvements alongside hardware optimization, Runable's AI automation platform complements processor selection by providing AI-powered content generation, workflow automation, and documentation tools. The combination of capable hardware and software automation addresses both computational power and productivity acceleration simultaneously.

The Ryzen AI 400 Series occupies a compelling position in the processor market: delivering genuine performance improvements, incorporating emerging AI capabilities, maintaining competitive pricing relative to alternatives, and benefiting from strong ecosystem support. Whether this processor generation aligns with your specific requirements depends on matching its capabilities to your actual computing needs, budget constraints, and software requirements.

As the technology industry continues integrating AI processing into mainstream consumer devices, early adoption of capable AI processing hardware positions users to leverage increasingly sophisticated AI-powered features and applications. The Ryzen AI 400 Series represents a practical entry point into this evolving landscape, offering balanced capability across traditional computing, AI processing, and gaming scenarios while maintaining the flexibility for diverse computing tasks.

The decision ultimately rests with individual circumstances and preferences, informed by clear understanding of what these processors deliver, where they excel, and what alternative options provide for different use cases and priorities.

FAQ

What is the AMD Ryzen AI 400 Series?

The AMD Ryzen AI 400 Series represents the latest generation of consumer processors announced at CES 2025, featuring 12 CPU cores with 24 threads, dedicated AI processing units (NPUs), and integrated graphics. These processors are specifically designed to enable local AI inference while delivering strong general-purpose computing performance for productivity, content creation, and gaming workloads.

How does the Ryzen AI 400 Series improve over previous generations?

The Ryzen AI 400 Series improves upon the Ryzen AI 300 Series through the Zen 5 architecture, which delivers 1.3x faster multitasking performance and 1.7x faster content creation speeds. The processors add dedicated neural processing units for AI inference, improved cache efficiency for better memory bandwidth utilization, and enhanced power management for extended battery life in portable applications. These gains come from architectural enhancements rather than solely from increased clock speeds.

What is the primary benefit of dedicated AI processing units (NPUs)?

Dedicated neural processing units accelerate machine learning inference operations—running trained AI models to generate predictions or classifications—with substantially lower power consumption and faster execution compared to general-purpose CPU cores. This enables AI-powered features in applications without proportional increases in power consumption or heat generation, making sophisticated AI features practical on portable devices with limited power budgets. The specialized hardware executes the same operations that would be possible on CPU cores but with dramatically improved efficiency.

How does the Ryzen AI 400 Series compare to Intel Core Ultra processors?

Both processor families offer comparable core counts (10-12 cores), dedicated AI processing units, integrated graphics, and competitive pricing. Performance differences typically range from 5-20% in various benchmarks depending on specific workloads and implementations. The choice between manufacturers often depends on OEM availability, specific laptop configurations, personal brand preferences, and warranty considerations rather than raw performance differences. Both represent capable alternatives with different OEM ecosystems and software optimization patterns.

What battery life improvements should portable device users expect?

Improved power efficiency in the Ryzen AI 400 Series typically translates to 20-40% battery life improvements compared to previous-generation processors, depending on usage patterns and workload characteristics. Users previously experiencing 8-10 hours of web browsing might achieve 10-14 hours on Ryzen AI 400 Series systems. Battery life improvements vary dramatically based on actual usage patterns—video streaming, content creation, and gaming consume substantially more power than productivity work or web browsing, resulting in smaller percentage improvements in power-intensive scenarios.
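The range quoted above follows from simple scaling. As a rough check, this sketch applies the 20-40% improvement to the 8-10 hour baseline; `projected_battery_hours` is a hypothetical helper, and real results depend on the workload caveats already described.

```python
def projected_battery_hours(baseline_hours, improvement):
    """Scale a baseline runtime by a fractional improvement (0.20 means +20%)."""
    return baseline_hours * (1.0 + improvement)


# Figures quoted in the answer: 8-10 hour baseline, 20-40% improvement.
low = projected_battery_hours(8, 0.20)    # shortest baseline, smallest gain
high = projected_battery_hours(10, 0.40)  # longest baseline, largest gain
print(f"{low:.1f} to {high:.1f} hours")   # prints "9.6 to 14.0 hours"
```

The lower bound of 9.6 hours rounds to the "10 hours" figure in the text, and the upper bound matches the 14 hours quoted.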

When should I consider alternative processors instead of Ryzen AI 400 Series?

Apple M-series processors merit consideration for users who are deeply invested in the macOS ecosystem and prioritize creative applications. Intel Core Ultra processors provide competitive alternatives with different OEM implementations. Qualcomm Snapdragon processors excel for buyers prioritizing extreme battery life and basic computing over peak performance. Budget-conscious buyers might find that previous-generation processors at discounted prices provide sufficient capability for light computing requirements. Your specific usage patterns, software requirements, and ecosystem commitments should guide this decision.

How does the Ryzen 7 9850X3D differ from mainstream Ryzen AI 400 Series processors?

The Ryzen 7 9850X3D incorporates 3D V-Cache technology, an additional 64 MB of L3 cache stacked vertically on the processor die and specifically optimized for gaming performance. This variant prioritizes frame rate consistency and gaming-specific memory access patterns over general-purpose efficiency. While mainstream Ryzen AI 400 Series processors balance productivity, multitasking, and gaming performance, the 9850X3D focuses primarily on gaming, making it the preferred choice for competitive gamers and gaming-focused users.

What software integration is available for AI capabilities on these processors?

Windows 11 provides native integration through Windows Machine Learning APIs, allowing applications to leverage the NPU for AI inference tasks. Machine learning frameworks including PyTorch, TensorFlow, and ONNX Runtime support AMD NPU execution with relatively minimal code modifications from developers already familiar with these platforms. Windows Copilot and AI-powered search features demonstrate practical Windows integration leveraging local AI processing. As the software ecosystem matures, developers increasingly optimize applications specifically for these processors' AI capabilities.
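As one illustration of what "minimal code modifications" can look like, the sketch below picks an ONNX Runtime execution provider with a CPU fallback. It is a sketch under stated assumptions, not AMD's documented integration path: the provider name `VitisAIExecutionProvider` is assumed to be the one exposed by AMD's Ryzen AI software stack, `pick_providers` is a hypothetical helper, and `model.onnx` is a placeholder path.

```python
# Preference order: NPU first, then GPU via DirectML, then CPU.
PREFERRED_ORDER = [
    "VitisAIExecutionProvider",  # assumption: AMD NPU provider name in Ryzen AI builds
    "DmlExecutionProvider",      # DirectML provider on Windows
    "CPUExecutionProvider",      # default fallback shipped with ONNX Runtime
]


def pick_providers(available):
    """Return the preferred providers that are actually available, in order."""
    chosen = [name for name in PREFERRED_ORDER if name in available]
    return chosen or ["CPUExecutionProvider"]


if __name__ == "__main__":
    try:
        import onnxruntime as ort
    except ImportError:
        print("onnxruntime not installed; fallback would be", pick_providers([]))
    else:
        providers = pick_providers(ort.get_available_providers())
        print("Provider order:", providers)
        # With a real model file, the session would be created like this:
        # session = ort.InferenceSession("model.onnx", providers=providers)
```

Keeping the selection logic in a plain function means the same application code runs on machines without an NPU, which matters while NPU-specific optimization is still uneven across the ecosystem.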

What is the expected availability timeline and pricing?

AMD announced that systems incorporating Ryzen AI 400 Series processors would become available during the first quarter of 2026, typically meaning February through March 2026. Specific pricing varies by OEM and configuration, with mainstream performance laptops expected to range from

How does local AI processing improve privacy compared to cloud-based AI services?

Local AI inference on Ryzen AI 400 Series processors maintains sensitive data on-device rather than transmitting to cloud services, addressing privacy concerns associated with cloud processing. Medical images, financial documents, legal materials, and proprietary work remain under user control without cloud exposure. This approach improves compliance with regulatory requirements including HIPAA for healthcare and GDPR for EU-based users. However, users remain responsible for endpoint security through patching and malware prevention, as local processing introduces different security considerations than cloud-based approaches.

For developers evaluating hardware platforms, what complementary tools enhance productivity?

Developers considering Ryzen AI 400 Series processors might explore Runable, an AI-powered automation platform designed specifically for developer teams. Runable provides capabilities for automated content generation (AI slides, AI documents, AI reports), workflow automation, and developer productivity tools at an affordable $9/month pricing. While the Ryzen AI 400 Series excels at local computational performance, Runable addresses productivity acceleration through automation—complementary benefits that together provide both hardware performance and software efficiency improvements for development teams.

Additional Considerations for Decision-Making

Beyond technical specifications and benchmarks, several practical factors merit consideration when evaluating the Ryzen AI 400 Series.

OEM-Specific Implementation Quality

The quality of specific OEM implementations varies substantially, independent of the processor specification. Thermal solutions, thermal paste application, keyboard quality, display characteristics, build materials, and warranty policies differ significantly between manufacturers. Premium OEMs sometimes justify cost premiums through superior implementations that improve real-world experience beyond processor capability.

Software Ecosystem Maturity

The Windows 11 software ecosystem supporting Ryzen AI 400 Series processors continues maturing. Early adopters might encounter applications without specific optimization for NPU capabilities. As adoption increases, more applications will leverage these capabilities. Patient early adopters benefit from cutting-edge technology; those preferring stable, mature ecosystems might benefit from waiting for broader application optimization.

Long-Term Support and Planning

AMD maintains driver and firmware support for Ryzen processors for extended periods, typically 5+ years. Users can confidently expect continued support and optimization throughout the processor lifecycle. Planning for a 5-7 year productive lifespan provides a reasonable framework for evaluating long-term value.

Total Cost of Ownership

Beyond purchase price, consider electricity costs for always-on systems, cooling maintenance requirements, warranty and support options, and upgrade flexibility. Systems emphasizing efficiency reduce operational costs over multi-year lifespans. Higher upfront costs are sometimes justified by reduced operational costs and an extended usable lifespan.
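A back-of-the-envelope comparison can show when a higher purchase price pays for itself. The sketch below is a simplified model that counts only purchase price, electricity, and a flat yearly maintenance cost; every number in the example (prices, wattages, the $0.15/kWh rate) is a hypothetical placeholder rather than data for any real system.

```python
def total_cost_of_ownership(purchase_price, avg_watts, hours_per_day,
                            price_per_kwh, years, yearly_maintenance=0.0):
    """Purchase price plus electricity and maintenance over the ownership period."""
    energy_kwh = avg_watts / 1000 * hours_per_day * 365 * years
    return purchase_price + energy_kwh * price_per_kwh + yearly_maintenance * years


# Hypothetical always-on systems compared over a 6-year lifespan.
efficient = total_cost_of_ownership(1400, avg_watts=25, hours_per_day=24,
                                    price_per_kwh=0.15, years=6)
cheaper = total_cost_of_ownership(1200, avg_watts=60, hours_per_day=24,
                                  price_per_kwh=0.15, years=6)
print(f"efficient: ${efficient:.2f}, cheaper upfront: ${cheaper:.2f}")
```

With these placeholder inputs the more efficient system ends up cheaper overall despite the higher sticker price. For a laptop used a few hours a day the electricity term shrinks considerably, so the comparison is worth rerunning with your own usage figures.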

Key Takeaways

- Ryzen AI 400 Series delivers 1.3x multitasking and 1.7x content creation improvements through 12 cores, 24 threads, and dedicated NPU hardware

- Dedicated neural processing units enable local AI inference without cloud connectivity, improving privacy, latency, and power efficiency

- First-quarter 2026 availability with 250+ OEM platform implementations signals strong ecosystem support across laptop and desktop markets