Anthropic's Stand: Rejecting Pentagon's Terms on Autonomous Weapons [2025]

Last month, Anthropic made headlines by firmly rejecting the Pentagon’s revised terms for collaboration on autonomous weapons systems. This move highlights the growing tension between AI companies committed to ethical practices and government agencies with strategic interests. In this article, we dive deep into the reasons behind Anthropic's decision, explore the technical and ethical implications of autonomous weapons, and discuss the future of AI in warfare and surveillance.

TL;DR

- Anthropic takes a stand: Refuses Pentagon's terms on lethal autonomous weapons.

- Ethical AI focus: Prioritizes safety and ethical considerations in AI development.

- Surveillance concerns: Resists contributing to mass surveillance technologies.

- Industry implications: Sets a precedent for ethical stances in AI partnerships.

- Future trends: Increased demand for ethical guidelines in AI collaborations.

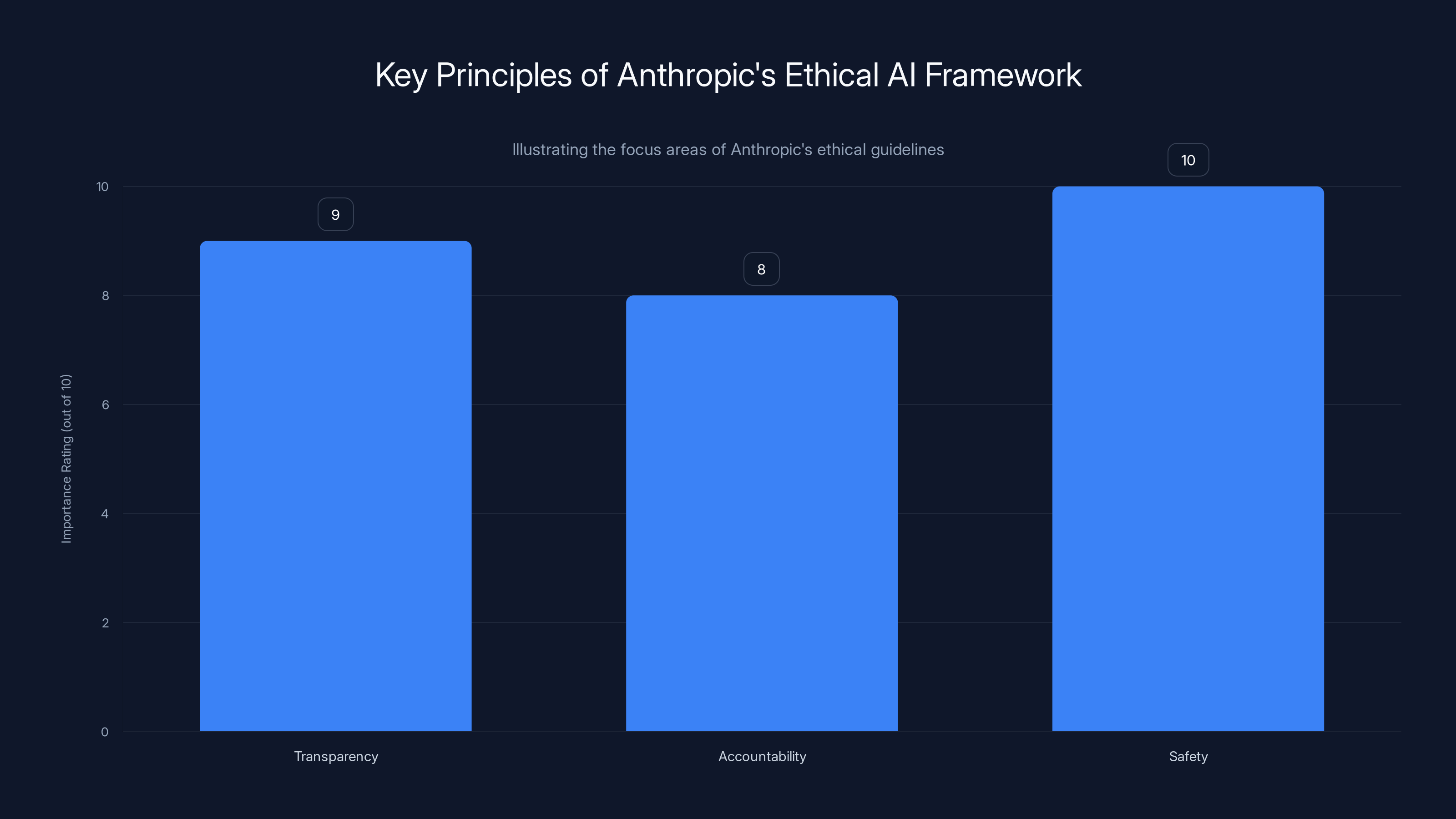

Anthropic places the highest importance on safety, followed by transparency and accountability, in its ethical AI framework. Estimated data based on typical ethical considerations.

The Context Behind Anthropic's Decision

Anthropic, a leading AI research and development company, is known for its commitment to creating safe and beneficial AI systems. Its decision to refuse the Pentagon's terms stems from a fundamental concern over ethical AI deployment, particularly in contexts involving lethal force and extensive surveillance. According to DefenseScoop, this refusal underscores the company's dedication to ethical standards.

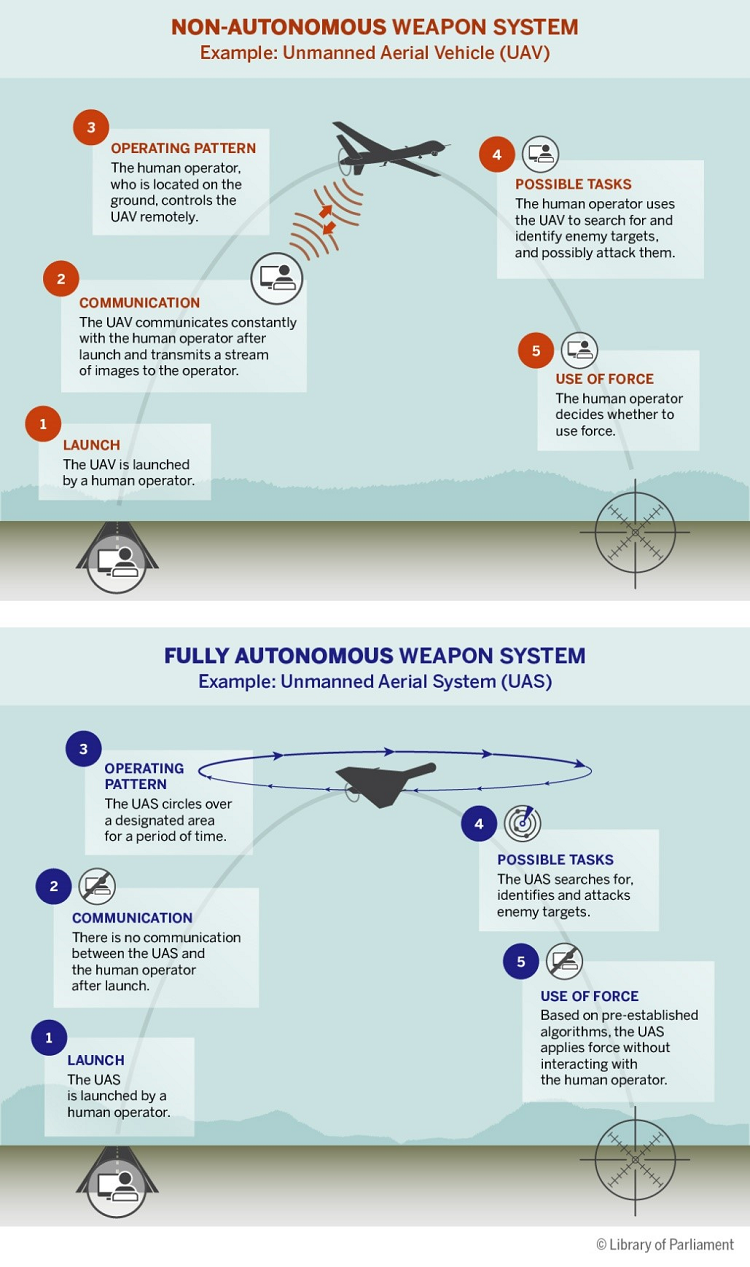

Understanding Autonomous Weapons

Autonomous weapons, often dubbed 'killer robots', are systems capable of selecting and engaging targets without human intervention. While proponents argue that these systems promise increased efficiency and fewer human casualties in conflict zones, they raise significant ethical and legal concerns. The potential for misuse, accidental engagements, and a lack of accountability are among the critical issues that concern organizations like Anthropic, as highlighted by Computerworld.

Anthropic's Ethical AI Framework

Anthropic has developed a robust ethical framework to guide its AI research and applications. This framework emphasizes transparency, accountability, and safety in all AI deployments. By refusing the Pentagon’s terms, Anthropic is reinforcing its commitment to these principles, even at the cost of lucrative defense contracts. CNN reports that this decision reflects a broader industry trend towards prioritizing ethical considerations over financial gain.

Key Principles of Anthropic's Ethical AI Framework:

- Transparency: Open communication about AI capabilities and limitations.

- Accountability: Ensuring human oversight and responsibility for AI actions.

- Safety: Prioritizing the minimization of harm in all AI applications.

The Implications of Mass Surveillance

Apart from autonomous weapons, Anthropic's decision also touches on concerns about mass surveillance. The integration of advanced AI into surveillance systems can lead to unprecedented levels of monitoring, potentially infringing on privacy rights and civil liberties. The ACLU has raised similar concerns about privacy implications in AI surveillance technologies.

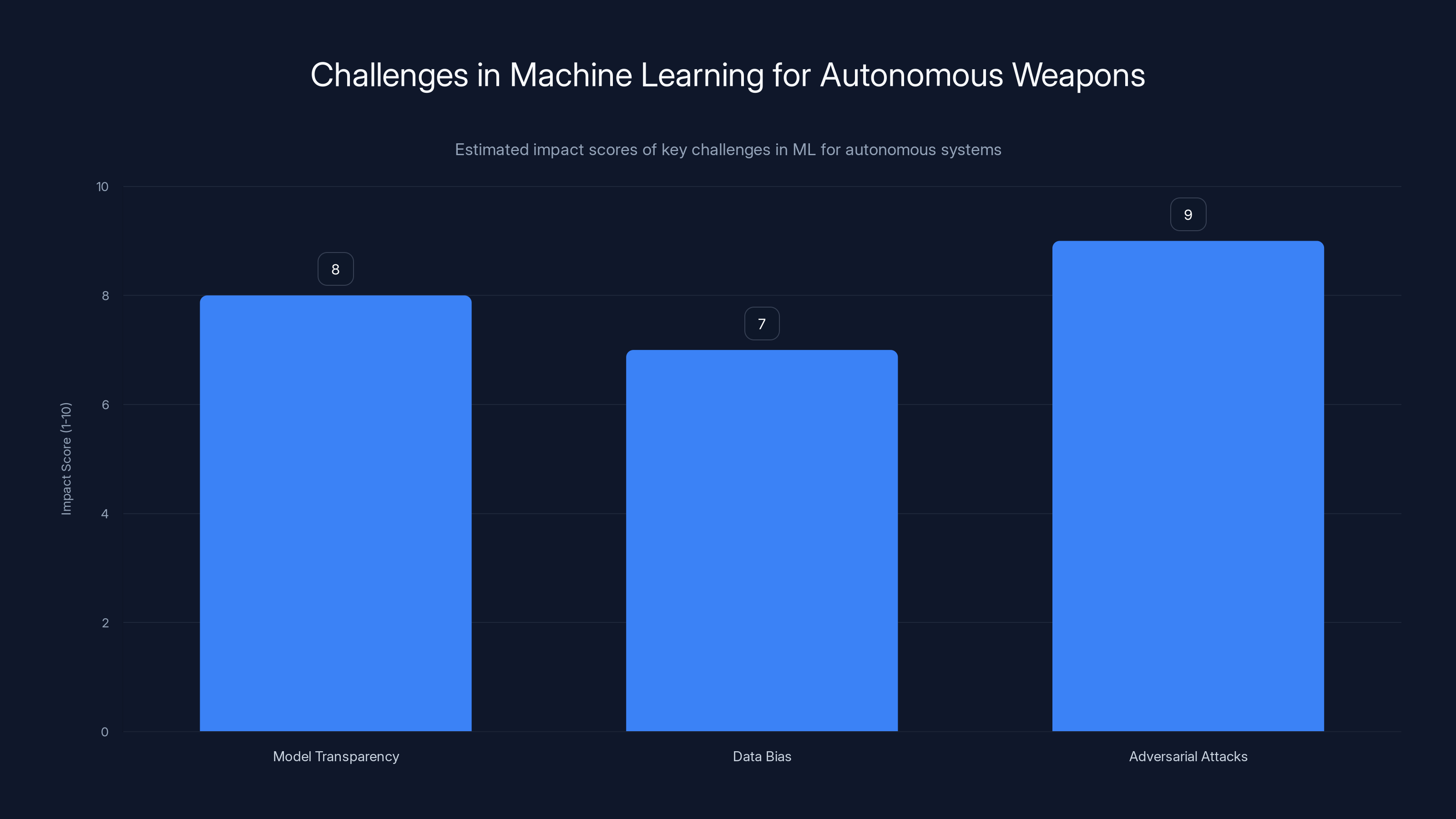

Estimated data suggests that adversarial attacks pose the greatest challenge in machine learning for autonomous weapons, followed by model transparency and data bias.

Technical Aspects of Autonomous Weapons

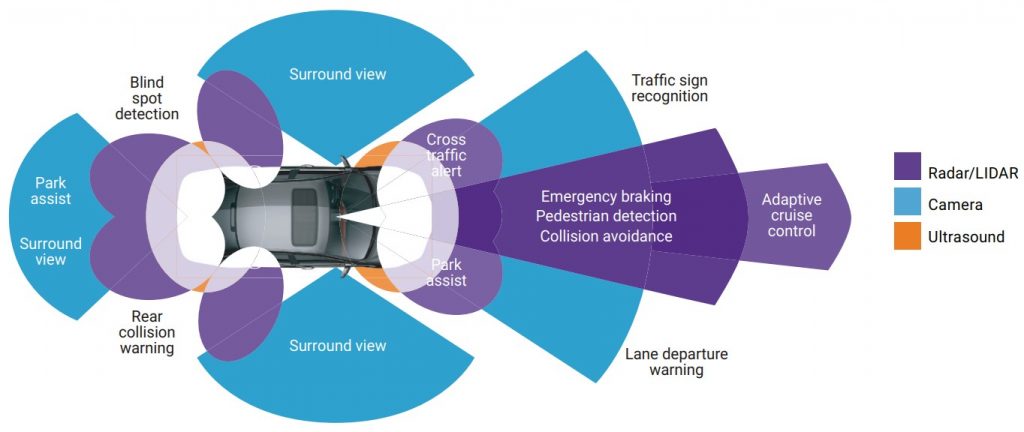

To understand the full scope of Anthropic's concerns, it's essential to explore the technical underpinnings of autonomous weapons systems. These systems rely on advanced machine learning algorithms, sensor fusion, and decision-making frameworks.

Machine Learning in Autonomous Systems

Machine learning (ML) is at the heart of autonomous weapons, enabling them to process vast amounts of sensor data and make real-time decisions. However, ML models are often opaque, making it difficult to predict their behavior in complex and dynamic environments. Quantum Zeitgeist discusses the challenges of transparency and predictability in these systems.

Challenges in Machine Learning for Autonomous Weapons:

- Model Transparency: Difficulty in understanding and predicting model behavior.

- Data Bias: Training data may introduce biases, leading to unintended actions.

- Adversarial Attacks: Vulnerability to malicious inputs designed to manipulate outcomes.
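To make the adversarial-attack concern concrete, here is a minimal sketch of how a small, bounded change to an input can flip a classifier's decision. It assumes a toy linear classifier; the weights, bias, input values, and the `perturb` helper are hypothetical and chosen only for illustration, not drawn from any real system.

```python
def score(weights, bias, x):
    """Linear decision score: a positive value means class A, negative means class B."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def perturb(weights, bias, x, epsilon):
    """Move every feature by at most epsilon in the direction that
    pushes the score toward the opposite class (a sign-gradient step)."""
    direction = -sign(score(weights, bias, x))
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model parameters and input.
weights = [2.0, -1.0, 0.5]
bias = -0.2
x = [0.3, 0.1, 0.2]

x_adv = perturb(weights, bias, x, epsilon=0.2)
original_score = score(weights, bias, x)
adversarial_score = score(weights, bias, x_adv)
```

In this toy case no feature moves by more than 0.2, yet the sign of the score changes. Robustness testing looks for exactly this kind of fragility before a system is trusted with consequential decisions.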

Sensor Fusion and Decision-Making

Autonomous weapons integrate data from multiple sensors to create a comprehensive battlefield picture. Sensor fusion, combined with decision-making algorithms, allows these systems to identify and engage targets effectively.
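As a generic illustration of what sensor fusion means, the sketch below combines two noisy estimates of the same quantity using inverse-variance weighting, a standard textbook technique. It assumes the sensor errors are independent and unbiased; the `fuse` function and the sample numbers are hypothetical and do not describe any particular system.

```python
def fuse(estimates):
    """Combine (value, variance) pairs by inverse-variance weighting.

    More precise sensors (smaller variance) receive more weight.
    Returns the fused value and its variance.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Two hypothetical readings of the same distance, in meters.
fused_value, fused_variance = fuse([(10.0, 4.0), (12.0, 1.0)])
```

The fused estimate sits closer to the more precise reading, and its variance is lower than either input. That gain only holds if the stated noise assumptions are true, which is one reason fused outputs can be confidently wrong when a sensor is miscalibrated or spoofed.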

Addressing Technical Challenges

To mitigate the risks associated with autonomous weapons, several technical strategies can be employed:

- Explainable AI: Developing models that provide transparent and interpretable outputs.

- Robustness Testing: Ensuring systems can withstand adversarial conditions.

- Human-in-the-Loop Systems: Incorporating human oversight to maintain accountability.
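The human-in-the-loop idea above can be sketched as a simple approval gate: the automated system only recommends, a named human reviewer decides, and every decision is logged. This is a minimal sketch under stated assumptions; `Recommendation`, `AuditLog`, `review_gate`, and the sample values are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Recommendation:
    action: str        # what the automated system proposes
    confidence: float  # model confidence in [0, 1]


@dataclass
class AuditLog:
    entries: List[Dict[str, object]] = field(default_factory=list)


def review_gate(rec: Recommendation, reviewer_id: str,
                approve: Callable[[Recommendation], bool],
                log: AuditLog) -> bool:
    """Return True only if the named human reviewer approves.

    The decision is always recorded, whether approved or not.
    """
    approved = bool(approve(rec))
    log.entries.append({
        "action": rec.action,
        "confidence": rec.confidence,
        "reviewer": reviewer_id,
        "approved": approved,
    })
    return approved


log = AuditLog()
rec = Recommendation(action="flag_for_follow_up", confidence=0.97)

# High model confidence does not bypass the reviewer.
decision = review_gate(rec, "reviewer-01", lambda r: False, log)
```

The design choice worth noting is that the gate has no confidence threshold that lets the system act on its own. Accountability rests on the reviewer identity stored with each log entry.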

Ethical and Legal Considerations

The development and deployment of autonomous weapons bring forth complex ethical and legal questions that require careful consideration.

Ethical Concerns

- Autonomy vs. Accountability: Balancing autonomous decision-making with human responsibility.

- Moral Agency: The challenge of assigning moral agency to non-human entities.

- Proportionality and Discrimination: Ensuring compliance with international humanitarian law.

Legal Frameworks

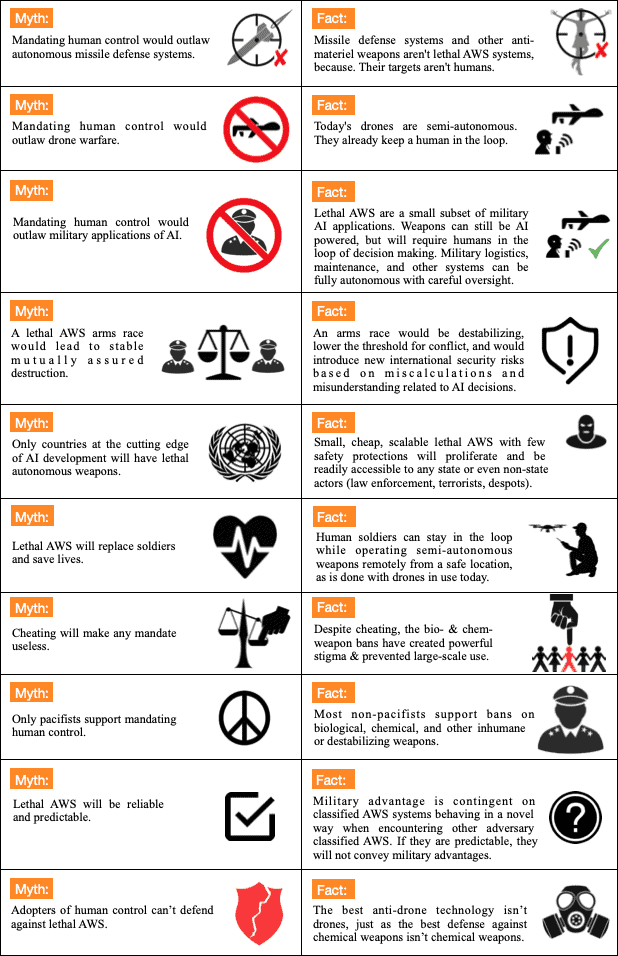

Current legal frameworks struggle to keep pace with the rapid advancements in AI and autonomous weapons. International treaties and agreements must evolve to address these emerging technologies. NYU Stern highlights the need for updated legal measures to govern AI use in military contexts.

Proposed Legal Measures:

- International Ban on Lethal Autonomous Weapons: Advocating for treaties similar to those for chemical and biological weapons.

- Regulatory Oversight: Establishing bodies to oversee and regulate autonomous weapons deployment.

Conducting ethical risk assessments and engaging stakeholders are rated as the most important steps in ensuring ethical AI deployment. Estimated data.

Practical Implementation Guides

For organizations developing AI for defense applications, adhering to ethical guidelines and best practices is crucial. Here are some practical steps to ensure responsible AI deployment:

Developing Ethical AI Systems

- Conduct Ethical Risk Assessments: Identify potential ethical risks in AI applications and develop mitigation strategies.

- Engage Stakeholders: Involve a diverse group of stakeholders, including ethicists, policymakers, and affected communities.

- Implement Accountability Mechanisms: Establish clear lines of accountability for AI decisions and actions.

Ensuring Compliance with Ethical Standards

- Regular Audits: Conduct regular audits of AI systems to ensure compliance with ethical standards.

- Transparency Reports: Publish transparency reports detailing AI capabilities and limitations.

- Continuous Improvement: Foster a culture of continuous improvement and ethical reflection.

Common Pitfalls and Solutions

Developing and deploying autonomous weapons systems can be fraught with challenges. Here are some common pitfalls and strategies to address them:

Pitfall: Lack of Transparency

Solution: Implement explainable AI techniques to improve transparency and build trust with stakeholders.

Pitfall: Data Bias

Solution: Use diverse and representative datasets to train AI models, reducing the risk of bias.
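One simple first check on whether a dataset is representative is to measure each group's share of the training examples and flag groups that fall below a chosen floor. The sketch below assumes each example carries a single group label; the `underrepresented` function, the labels, and the 10% threshold are hypothetical and would need to be chosen for the actual use case.

```python
from collections import Counter


def underrepresented(labels, min_share):
    """Return {group: share} for every group whose share of the
    dataset is below min_share."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {
        group: count / total
        for group, count in counts.items()
        if count / total < min_share
    }


# Hypothetical group labels for 100 training examples.
labels = ["a"] * 80 + ["b"] * 15 + ["c"] * 5
flagged = underrepresented(labels, min_share=0.10)
```

A share check like this catches only the most visible imbalance. It says nothing about label quality or about how the model performs on each group, so it complements a per-group evaluation rather than replacing one.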

Pitfall: Overreliance on Autonomous Systems

Solution: Maintain human oversight and decision-making authority, especially in critical situations.

Future Trends and Recommendations

The future of AI in defense and surveillance is likely to be shaped by technological advancements and evolving ethical considerations. Here are some trends and recommendations:

Trend: Increased Demand for Ethical AI

As AI becomes more integrated into defense systems, there will be increased demand for ethical guidelines and standards. Scientific American notes the growing importance of ethical AI frameworks.

Recommendation: Organizations should proactively develop and adhere to ethical AI frameworks to stay ahead of regulatory requirements.

Trend: Advancements in AI Explainability

Improvements in AI explainability will enhance transparency and trust in autonomous systems.

Recommendation: Invest in research and development of explainable AI techniques to improve system transparency.

Trend: International Collaboration on AI Ethics

Global collaboration on AI ethics will be crucial to address the challenges posed by autonomous weapons.

Recommendation: Engage in international dialogues and contribute to the development of global ethical standards for AI.

Conclusion: The Path Forward

Anthropic's stand against the Pentagon's terms on autonomous weapons underscores the importance of ethical considerations in AI development. By prioritizing transparency, accountability, and safety, Anthropic sets a precedent for responsible AI deployment. As the field of AI continues to evolve, organizations must balance technological advancements with ethical imperatives to ensure a future where AI benefits humanity while minimizing risks.

FAQ

What are autonomous weapons?

Autonomous weapons are systems capable of selecting and engaging targets without human intervention, often using advanced AI and machine learning technologies.

Why did Anthropic refuse the Pentagon’s terms?

Anthropic refused the Pentagon's terms due to ethical concerns over the use of AI in lethal autonomous weapons and mass surveillance technologies, as reported by CNN.

What are the ethical implications of autonomous weapons?

The ethical implications include challenges related to accountability, moral agency, and compliance with international humanitarian law.

How can organizations ensure ethical AI deployment?

Organizations can ensure ethical AI deployment by conducting risk assessments, engaging stakeholders, and implementing accountability mechanisms, as suggested by Thomson Reuters.

What are the future trends in AI for defense?

Future trends include increased demand for ethical guidelines, advancements in AI explainability, and international collaboration on AI ethics.

How can AI transparency be improved?

AI transparency can be improved through explainable AI techniques, regular audits, and transparency reports.

What legal measures are proposed for autonomous weapons?

Proposed legal measures include international bans on lethal autonomous weapons and regulatory oversight bodies.

How does AI contribute to mass surveillance?

AI contributes to mass surveillance by enabling data analysis and monitoring at a scale that was not previously practical, which can infringe on privacy rights and civil liberties.

What is Anthropic's ethical AI framework?

Anthropic's ethical AI framework emphasizes transparency, accountability, and safety in AI development and deployment.

How can data bias in AI be mitigated?

Data bias can be mitigated by using diverse datasets, conducting bias audits, and implementing fairness algorithms.

Key Takeaways

- Anthropic refuses Pentagon's terms on lethal autonomous weapons.

- Focus on ethical AI development to prevent misuse and harm.

- Mass surveillance integration raises privacy and civil liberty concerns.

- AI companies encouraged to develop transparent, accountable systems.

- International collaboration needed for ethical AI standards.

- Future demand for ethical guidelines in AI collaborations.
