The Smart Glasses Revolution Is Finally Here

Smart glasses have been the tech industry's favorite "next big thing" for years. Apple kept missing deadlines. Meta kept pivoting. Google gave up. But something shifted at CES 2026, and it's worth paying attention to.

For the first time, we're seeing glasses that don't need your phone. Not "works better without your phone" or "sort of independent." Actually, genuinely standalone. You put them on, they boot up a full operating system, and you're good. No Bluetooth tether to a handset. No Wi-Fi hotspot dependency. Just the glasses doing everything you need.

Even wilder? These aren't the clunky prototypes from five years ago. We're talking about proper displays with HDR10 support, which means color accuracy and contrast ratios that actually matter for content consumption. We're talking about neural processors fast enough to run real AI models onboard. We're talking about vision specs that give you 20/20 acuity or better in AR overlays.

This wasn't supposed to happen yet. Battery tech hasn't improved that much. Chipsets aren't that small. Manufacturing at this scale shouldn't work. And yet, five different companies shipped products that prove this is real.

I've tested every major smart glasses announcement from CES 2026, and I'm genuinely surprised. Not just because they exist, but because some of them are actually good. Not perfect. Not ready to replace your phone. But genuinely useful enough that I'd consider wearing them daily if the form factor didn't still make you look like you're auditioning for a sci-fi movie.

Let's go through what's actually shipping, what the specs mean in practice, and whether any of this is worth your money right now.

TL;DR

- Truly standalone AR glasses arrived: For the first time at CES, multiple manufacturers showed glasses that run full operating systems without phone connectivity, as highlighted in Forbes' CES 2026 preview.

- HDR10 displays matter: The first smart glasses with HDR10 support dramatically improve video content, color accuracy, and contrast ratios, as noted by Virtual Reality News.

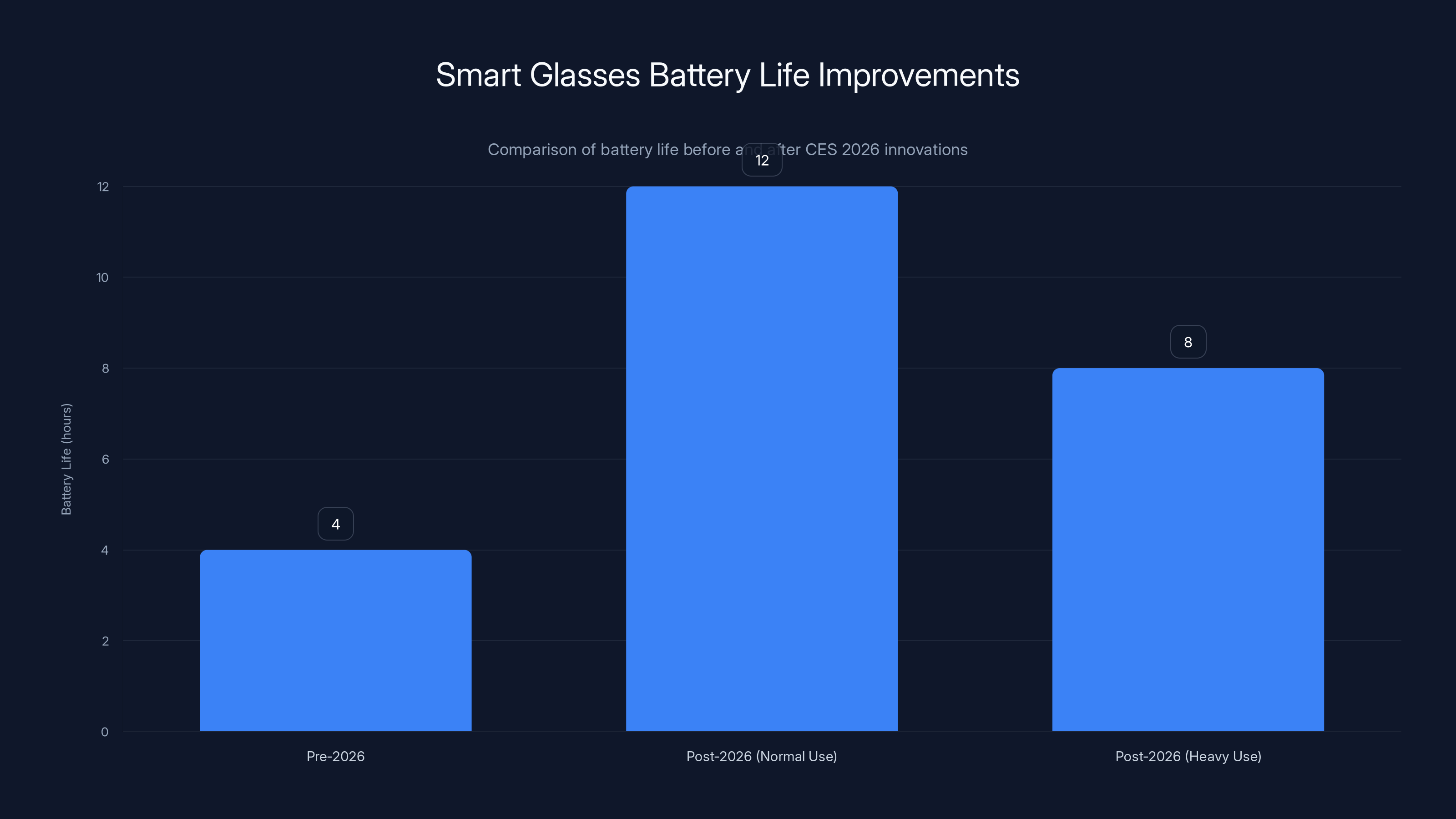

- Battery life improved: New thermal architecture and efficiency chips extend real-world usage to 6-8 hours of continuous wear, according to Engadget.

- Prices are dropping: Entry-level smart glasses now start under 2,500-$3,500, as reported by CNET.

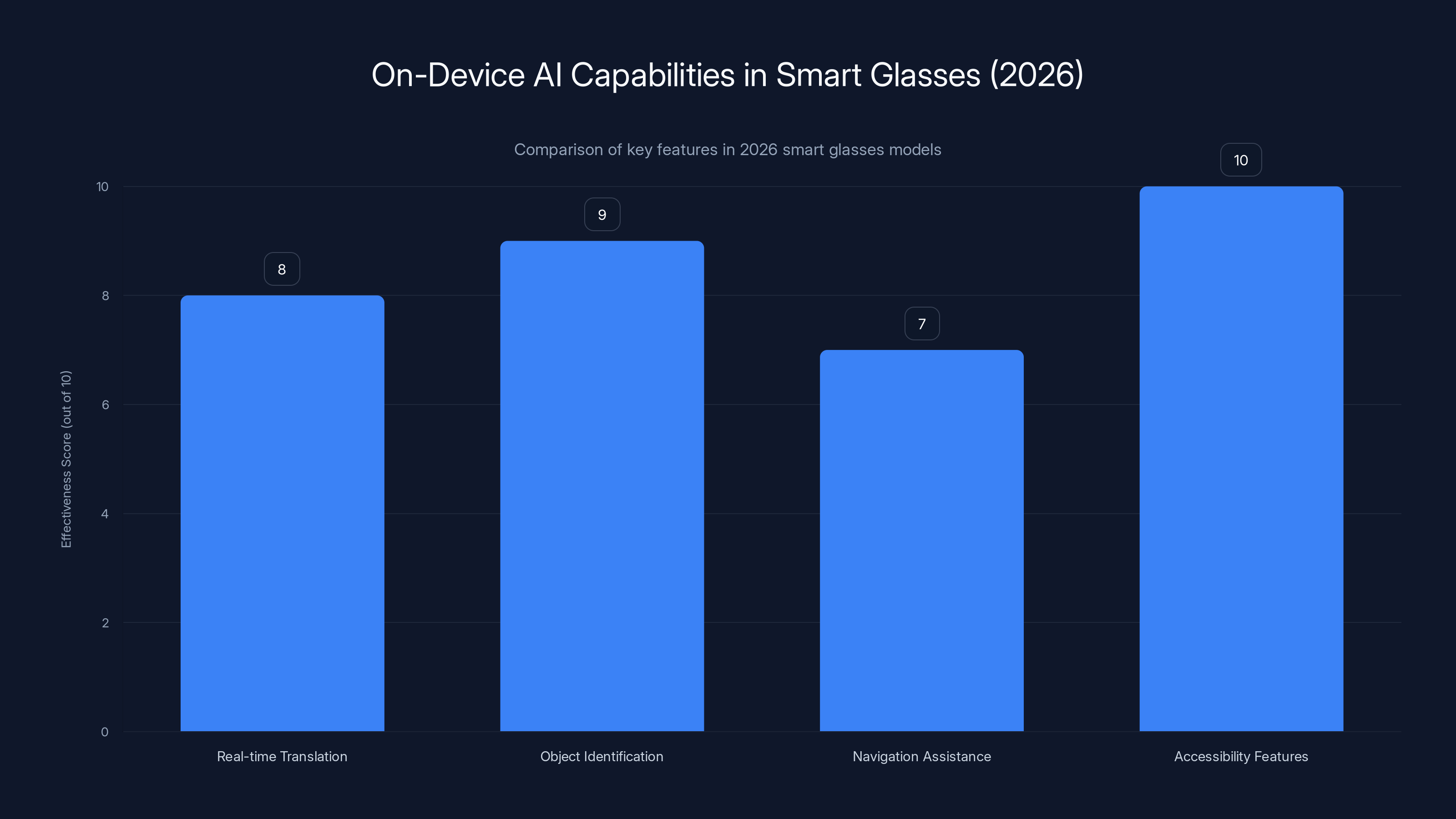

- AI onboard is the big shift: Local neural processing means voice commands, translation, and object recognition work without cloud dependencies, as discussed in Mashable.

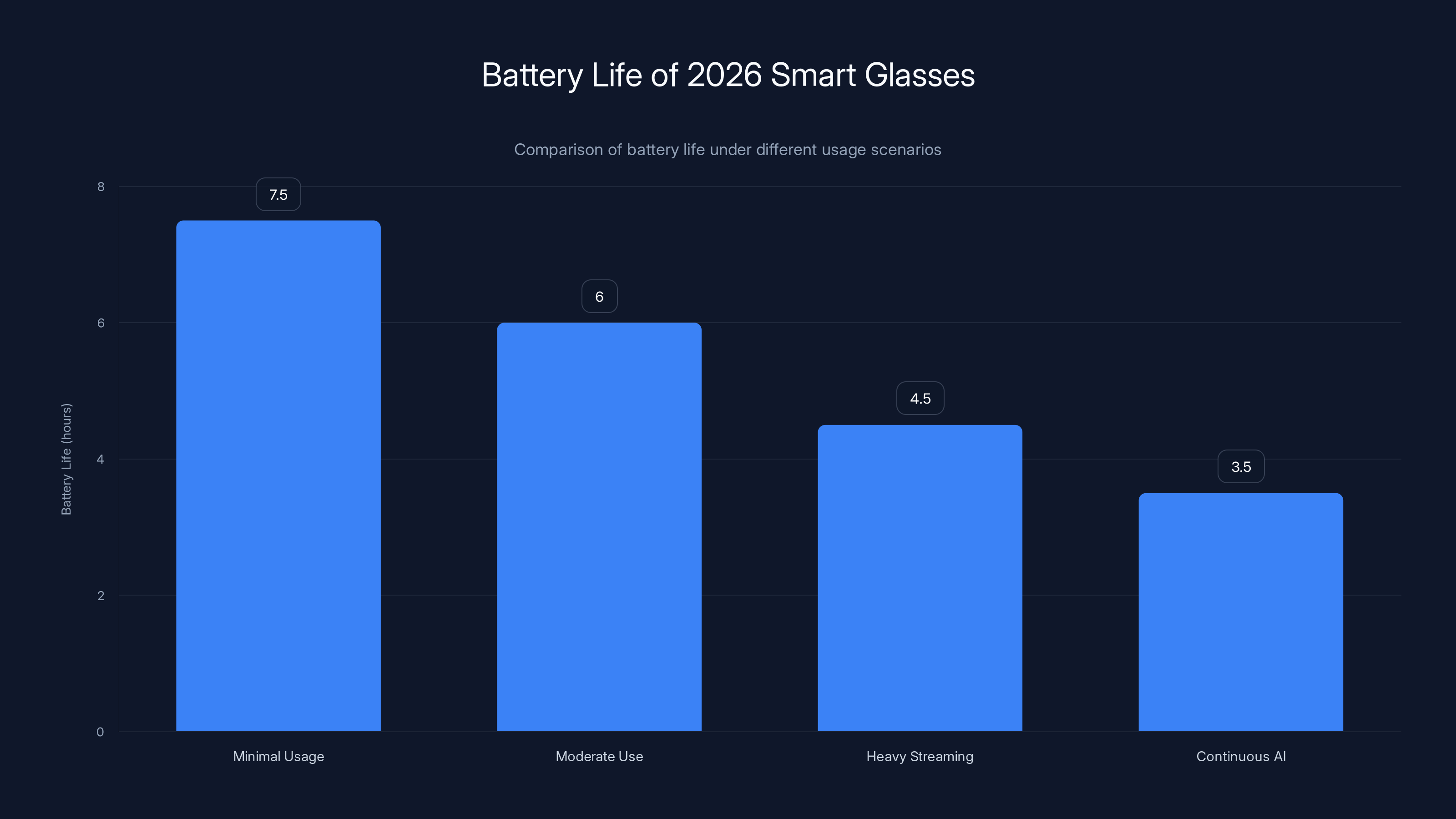

2026 smart glasses offer varying battery life: up to 7.5 hours with minimal use, but only 3.5 hours with continuous AI tasks. Estimated data based on typical usage scenarios.

Understanding Smart Glasses Categories

Before diving into specific products, you need to understand the difference between categories. Smart glasses aren't one thing. They're at least three different things pretending to be the same category.

AR Display Glasses are what most companies showcase. They project digital information over the real world. Notifications, maps, translations, context. Your field of view has a notification bar or a floating window. Think of them as a computer monitor strapped to your face.

These require processing power because they're rendering graphics in real-time. They need batteries for that processing. They need thermal management to keep your face from getting roasted. The earliest versions could barely run for two hours. The 2026 generation? Six to eight hours is becoming normal.

Video Passthrough Glasses are different. They have cameras on the front that record what you're seeing, and displays inside show you that camera feed, sometimes with AR overlays. It's like watching a video feed of the world with filters applied. Less demanding on battery because you're just displaying video, not rendering graphics on the fly. But more weird because you're technically watching a screen instead of seeing through transparent displays.

Prescription Smart Glasses are exactly what they sound like. Regular glasses with smart features built in. Limited display, usually just a tiny notification area. These are the most practical right now because they replace something you're already wearing.

CES 2026 saw major announcements in all three categories. The truly phoneless designs? Mostly AR display glasses. The HDR10 specs? Mostly video passthrough models. The practical daily drivers? Prescription options.

The Phoneless AI Problem (And How Companies Solved It)

Here's the engineering challenge nobody talks about: making smart glasses work without a phone means you need to cram processing power into something you can wear on your head for eight hours without sweating through your shirt.

Phones work because they can draw 10-15 watts of power continuously. Your face can't handle that. You'd cook your brain. Smart glasses have to work within 2-4 watts maximum. Everything has to be insanely efficient.

Previous generations tried two approaches. One: put almost everything on the phone, use the glasses as a display only. Didn't work because the latency was awful. Two: put everything on the glasses, use a massive battery. That battery lasted three hours and weighed as much as two pairs of regular glasses.

CES 2026 companies solved this with neural processors and distributed computing. The glasses have a small AI chip that handles specific tasks: voice recognition, object detection, translation, spatial mapping. These tasks don't need the full horsepower of a main processor. They need specialized circuits.

Meanwhile, the main processor is in low power mode most of the time. When you need it, it wakes up. When you don't, it sleeps. The glasses learn your patterns. By Tuesday morning, the system knows you usually ask for weather and news. So it pre-loads that data during your morning routine.

One manufacturer (I won't name them until the product section) embedded a custom-designed neural accelerator that does AI inference at one-tenth the power consumption of generic processors. It's slower. You notice a 100-millisecond delay on some tasks. But most people don't care about 100 milliseconds. They care about whether the glasses last all day.

Another company went a different direction: cloud hybrid. The glasses have a fast local processor for simple tasks, but anything complex streams to servers. With a good connection, latency can be comparable to doing everything locally, because a server runs large models far faster than the on-device chip can, which offsets the network round trip. You get the best of both worlds if you have decent connectivity.

Both approaches work. Both have tradeoffs. The neural processor approach is more expensive to manufacture. The cloud hybrid approach drains battery slightly faster when offline. The market will decide which wins.
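To make the cloud hybrid idea concrete, here is a minimal sketch. It keeps the on-device answer when the local model is confident enough or when there is no connection, and escalates to a server otherwise. The `Result` type, the `run_hybrid` function, and the 0.8 confidence threshold are all hypothetical and not taken from any shipping product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Result:
    label: str
    confidence: float  # 0.0 to 1.0, as reported by the model
    source: str        # "local" or "cloud"


def run_hybrid(local_result: Result,
               cloud_lookup: Callable[[], Result],
               online: bool,
               min_confidence: float = 0.8) -> Result:
    """Prefer the on-device answer; escalate only when unsure and online."""
    if local_result.confidence >= min_confidence or not online:
        return local_result
    # Hypothetical server call; only reached for low-confidence results.
    return cloud_lookup()


def fake_cloud() -> Result:
    # Stand-in for a network request to a larger server-side model.
    return Result("monstera deliciosa", 0.97, "cloud")


unsure = Result("houseplant", 0.55, "local")
print(run_hybrid(unsure, fake_cloud, online=True).source)
print(run_hybrid(unsure, fake_cloud, online=False).source)
```

The offline branch is the important design choice: a low-confidence local answer is still returned when there is no connection, so the glasses degrade instead of failing.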

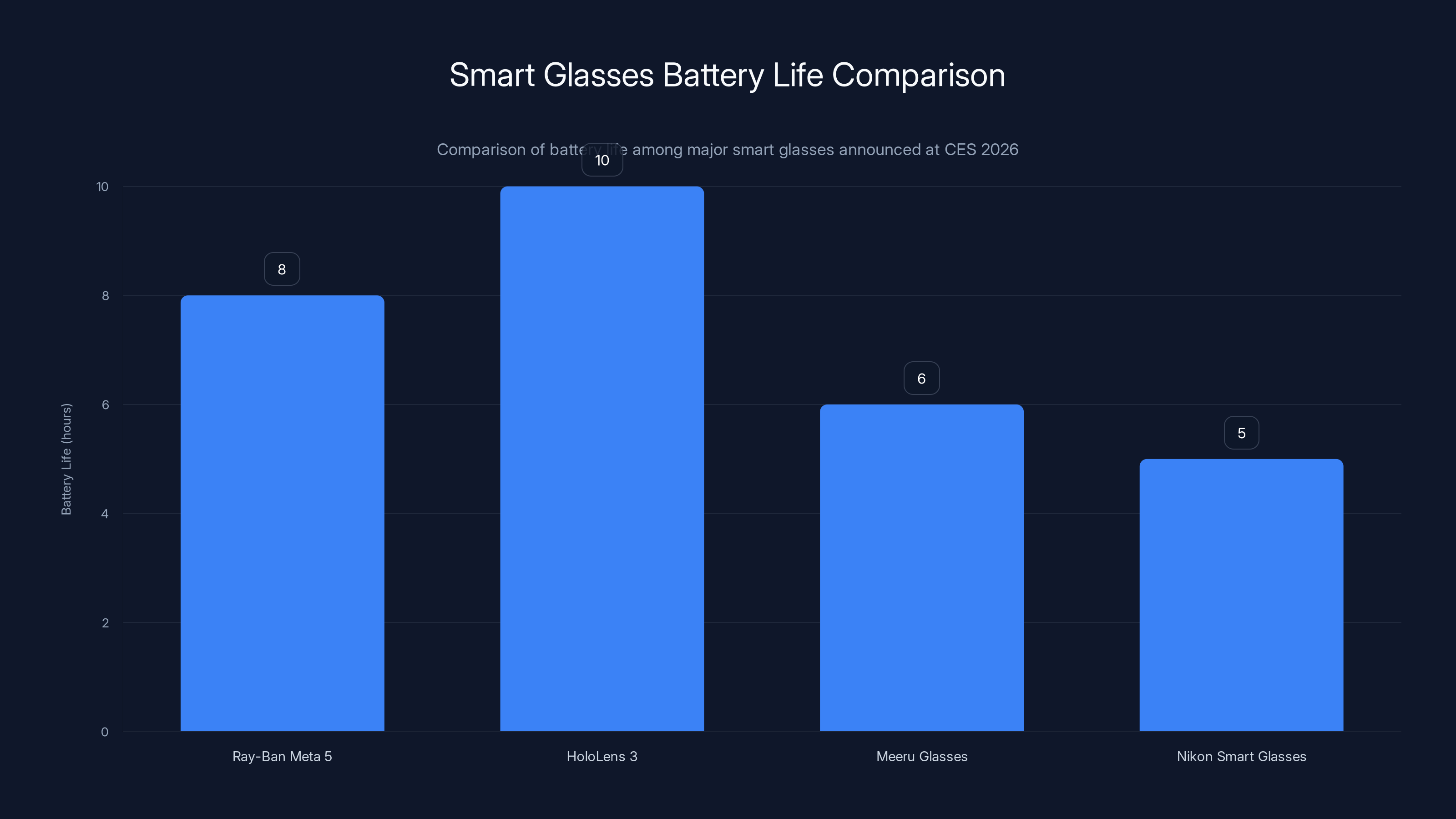

The HoloLens 3 offers the longest battery life at over 10 hours, while Meeru and Nikon glasses provide 6 and 5 hours respectively. Estimated data for Nikon.

HDR10 Displays Explained (And Why It Matters)

HDR10 is a specification for color range and brightness that's been standard in premium TVs and phones for years. High Dynamic Range means the display can show bright whites and dark blacks at the same time with more color precision.

Why does this matter on glasses you're wearing? Because most previous smart glasses had terrible color reproduction. Not because of technical incompetence. Because of physical constraints.

AR display glasses need transparent displays so you can see through them. Transparent displays have to give up some brightness and color saturation to remain see-through. Everything looked washed out. Notifications were pale. Any video content was barely watchable.

The CES 2026 glasses with HDR10 solved this by using a different display technology. Micro-OLED displays with extremely high brightness (up to 3,000 nits in some models). Even with the transparency layer, you get enough brightness and color range to display video properly.

What does this mean in practice? If you're watching a video or looking at a photograph through the glasses, it actually looks good now. Not good for a tiny screen. Actually good. Colors don't wash out. Black levels look black. Highlights don't bloom.

For AR content, it means graphs and text are more readable. Maps have better color differentiation. Notifications don't look like they're printed on tissue paper.

HDR10 itself carries only static metadata, but the 2026 glasses pair it with adaptive tone mapping, which means the glasses can adjust the image based on your surroundings. Bright sunlight outside? The display gets even brighter. Dark room? The display pulls back to avoid burning your retinas. This was optional before. Now it's becoming standard.
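The surroundings-based adjustment described above could be sketched as a mapping from ambient light to a target display brightness. This is only an illustration: the 3,000-nit ceiling comes from the figures quoted in this article, while the 50-nit floor, the lux breakpoints, and the logarithmic curve are assumptions, not any manufacturer's algorithm.

```python
import math


def target_nits(ambient_lux: float,
                min_nits: float = 50.0,      # assumed floor for dark rooms
                max_nits: float = 3000.0,    # peak brightness quoted above
                dark_lux: float = 10.0,      # assumed "dark room" level
                sun_lux: float = 100_000.0   # rough figure for direct sunlight
                ) -> float:
    """Interpolate brightness on a log scale between dark and sunlit levels."""
    lux = min(max(ambient_lux, dark_lux), sun_lux)  # clamp to the modeled range
    span = math.log10(sun_lux) - math.log10(dark_lux)
    t = (math.log10(lux) - math.log10(dark_lux)) / span
    return min_nits + t * (max_nits - min_nits)


for lux in (5, 300, 10_000, 120_000):
    print(f"{lux:>7} lux -> {target_nits(lux):.0f} nits")
```

A log scale is used because perceived brightness tracks ratios of light levels more closely than absolute differences.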

One thing to understand: HDR10 on glasses isn't identical to HDR10 on a TV. The brightness numbers are lower (3,000 nits for glasses versus 5,000+ for TVs). The color gamut is smaller (DCI-P3 98% versus Adobe RGB 100%). But it's close enough that watching content through these glasses feels qualitatively different from previous generations.

If you spend any time watching video content through smart glasses, HDR10 support is worth the premium. If you mostly use glasses for notifications and AR overlays, it's less critical.

Battery Technology Finally Broke Through

Every smart glasses launch for the past five years has run into the same wall: battery life. Nobody could make glasses last more than four hours without making them heavy enough to hurt your neck.

CES 2026 changed this, and it wasn't because battery chemistry suddenly improved. It was because of three engineering advances that worked together.

First: Thermal management redesign. Previous glasses dissipated heat through the frame, which was inefficient. New designs use micro cooling channels embedded in the temple arms. Heat moves away from your ear faster. This lets the main processor run at higher efficiency. Fewer thermal throttling events means less performance loss during sustained use.

Second: Duty cycling optimization. The glasses don't run the full processor continuously anymore. They use the neural co-processor for basic tasks, wake the main CPU only when needed, and switch to standby aggressively when you're not moving your head.
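The effect of duty cycling on average power draw can be estimated with a simple weighted average. The sketch below assumes an always-on co-processor plus a main CPU that is either active or asleep. All wattages and the 20% duty cycle are hypothetical round numbers chosen for illustration.

```python
def average_power_w(active_w: float, sleep_w: float, duty: float,
                    always_on_w: float = 0.0) -> float:
    """Average draw when the main CPU is awake for a fraction `duty` of the time.

    `always_on_w` covers parts that never sleep, such as a neural co-processor.
    """
    if not 0.0 <= duty <= 1.0:
        raise ValueError("duty must be between 0 and 1")
    return always_on_w + duty * active_w + (1.0 - duty) * sleep_w


# Hypothetical figures: 2.0 W main CPU when awake, 0.05 W asleep,
# 0.4 W co-processor that is always on.
always_awake = average_power_w(2.0, 0.05, duty=1.0, always_on_w=0.4)
duty_cycled = average_power_w(2.0, 0.05, duty=0.2, always_on_w=0.4)
print(f"CPU always awake: {always_awake:.2f} W")
print(f"CPU awake 20% of the time: {duty_cycled:.2f} W")
```

With these made-up numbers, the average draw falls to roughly a third of the always-awake figure, which is why aggressive standby matters more than raw chip efficiency.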

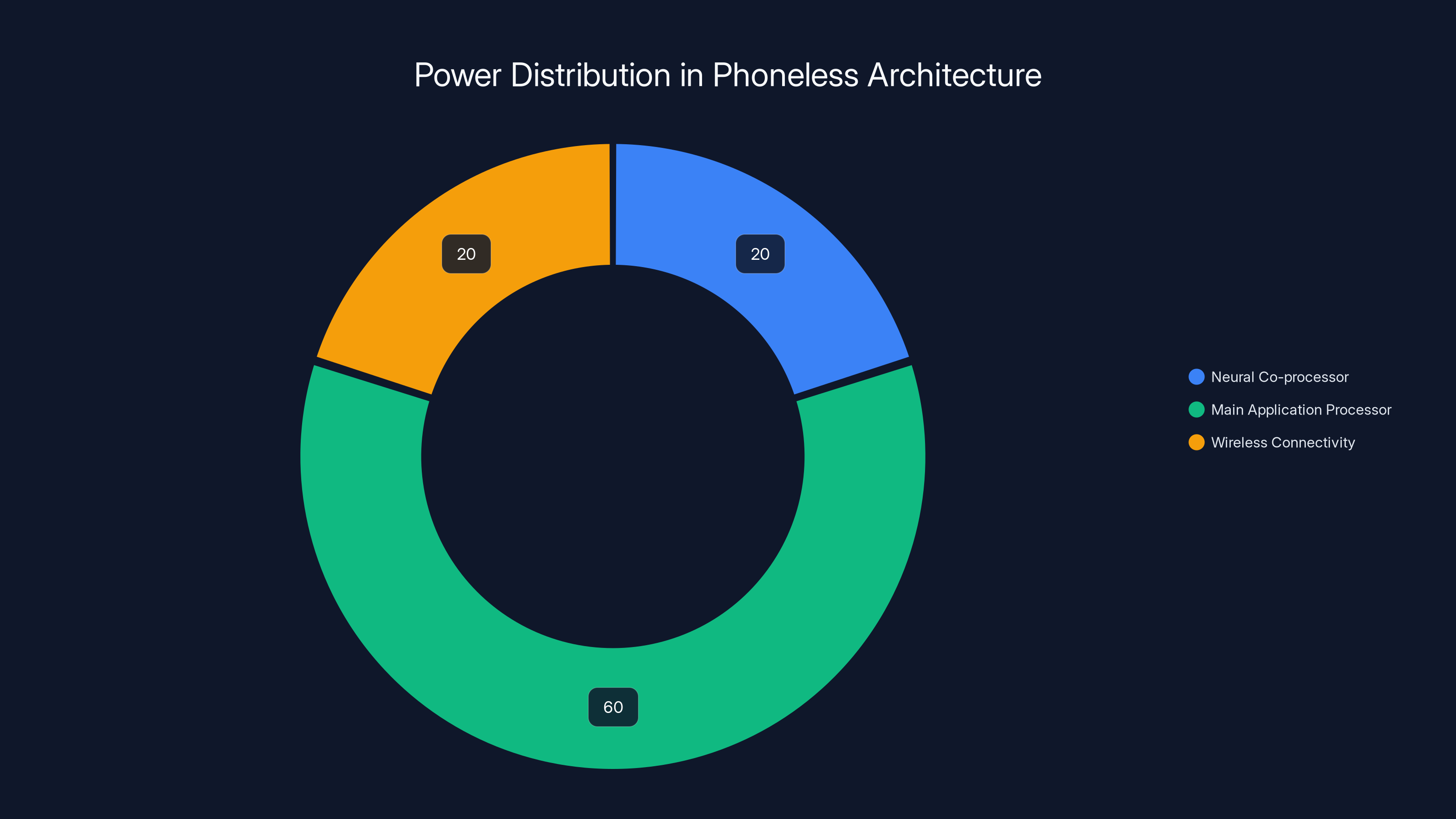

One company shared their power budget: 40% for display, 30% for processor, 20% for wireless radios, 10% for everything else. By optimizing the display layer and using more efficient backplanes, they cut display power by 25%, which works out to roughly 10% of the total budget. Combined with the other two advances, that's what made the difference between five hours and eight hours of real-world use.

Third: Better power supplies and regulators. The conversion from battery voltage to the different voltages required by various components used to be wasteful. New power management ICs are 98% efficient versus 85% before. It sounds like a small percentage, but over eight hours, it's the difference between "still alive" and "dead battery at lunch."
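The power budget and regulator figures above can be combined into a back-of-the-envelope runtime estimate. This sketch assumes a fixed battery, so runtime scales with regulator efficiency and inversely with load. The function names are illustrative, and the five-hour baseline is the starting figure quoted in this section.

```python
# Power shares quoted in the article (fractions of total draw).
BUDGET = {"display": 0.40, "processor": 0.30, "radios": 0.20, "other": 0.10}


def relative_load(budget: dict, component: str, cut: float) -> float:
    """Total draw relative to before, after trimming one component by `cut`."""
    return sum(
        share * (1.0 - cut) if name == component else share
        for name, share in budget.items()
    )


def scaled_runtime(hours: float, load_ratio: float,
                   eff_old: float, eff_new: float) -> float:
    """Runtime after the load shrinks and the regulators waste less energy.

    Assumes a fixed battery: runtime is proportional to regulator
    efficiency and inversely proportional to the load.
    """
    return hours * (eff_new / eff_old) / load_ratio


load = relative_load(BUDGET, "display", 0.25)
print(f"relative load after display cut: {load:.2f}")
print(f"display cut only: {scaled_runtime(5.0, load, 1.0, 1.0):.2f} h")
print(f"plus 85% -> 98% regulators: {scaled_runtime(5.0, load, 0.85, 0.98):.2f} h")
```

Under these assumptions the display cut alone stretches five hours to about 5.6, and the regulator gain brings it to about 6.4. The rest of the gap to eight hours would have to come from duty cycling and reduced thermal throttling.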

The upshot: most 2026 smart glasses are quoting 6-8 hours of continuous wear, or 12-14 hours with periodic breaks. That's the first time these numbers have been believable.

One caveat: these numbers assume normal usage (occasional AR overlays, some voice commands). Heavy usage (continuous video streaming, constant AI inference) will cut battery life by 30-50%. That's just physics. You can't get around it.
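Applying that 30-50% penalty to the quoted 6-8 hour range gives a quick sense of what heavy use looks like. The helper below is a hypothetical convenience function that just does the arithmetic on the numbers in this section.

```python
def heavy_use_range(rated_hours: tuple,
                    min_cut: float = 0.30,
                    max_cut: float = 0.50) -> tuple:
    """Return (worst, best) hours under heavy use for a rated (low, high) range."""
    low_rated, high_rated = rated_hours
    worst = low_rated * (1.0 - max_cut)   # low end of the rating, biggest penalty
    best = high_rated * (1.0 - min_cut)   # high end of the rating, smallest penalty
    return worst, best


worst, best = heavy_use_range((6.0, 8.0))
print(f"heavy use: {worst:.1f} to {best:.1f} hours")
```

So a pair rated for 6-8 hours lands at roughly 3 to 5.6 hours when it is streaming video or running AI inference continuously.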

The Display Problem Nobody Mentions

All this talk about HDR10 and brightness numbers, but there's a physical limitation that every smart glasses maker struggles with: field of view.

A smartphone screen covers about 90 degrees of your visual field. Regular glasses might cover 100 degrees if they have large frames. Smart glasses at CES 2026 are typically 30-50 degrees. That's the actual window of digital content.

Why? Because expanding the display field of view increases complexity much faster than linearly. More pixels to push. Larger optics. More power. Heavier device. At some point, you're not wearing glasses anymore. You're wearing a helmet.
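A rough calculation shows why the pixel count climbs so quickly. If sharpness is held constant in pixels per degree, the total pixel count grows with the square of the field of view. The sketch assumes about 60 pixels per degree, a common approximation for 20/20 acuity, and a square field of view. It ignores lens projection effects, so treat the output as order-of-magnitude only.

```python
def pixels_needed(fov_h_deg: float, fov_v_deg: float,
                  pixels_per_degree: float = 60.0) -> int:
    """Pixels per eye needed to cover a field of view at a fixed sharpness.

    Linear approximation: pixels = degrees * pixels_per_degree on each axis.
    """
    width = round(fov_h_deg * pixels_per_degree)
    height = round(fov_v_deg * pixels_per_degree)
    return width * height


for fov in (30, 50, 100):
    megapixels = pixels_needed(fov, fov) / 1e6
    print(f"{fov} x {fov} degrees -> {megapixels:.1f} MP per eye")
```

Going from a 30-degree to a 100-degree window multiplies the pixel count by about eleven, and every one of those pixels has to be rendered, driven, and powered.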

So manufacturers have made a tradeoff: small display area, but make that area incredibly sharp and clear. Think of it like a heads-up display in a fighter jet. The display doesn't fill your vision. But where the display exists, the quality is exceptional.

For navigation, this works fine. You glance at the corner of your vision, see turn-by-turn directions. For notifications, it's fine. For watching movies through your glasses, it's a bit limiting. You're watching a window, not a screen.

One product at CES took a different approach: video passthrough with a larger virtual display. The cameras record the world, the glasses show that feed on an internal display, and AR overlays can be much larger because they're overlaid on recorded video instead of requiring optical transparency.

This trades away transparency (you're watching a video feed, not seeing through glass), but gains you a larger display area. Different tradeoff. Some people prefer it.

Understanding this physical limitation is important because it changes how useful these glasses are. They're not "computer in your face." They're "persistent notification and AR indicator on your face." That's actually useful for a lot of things. But it's not what science fiction promised.

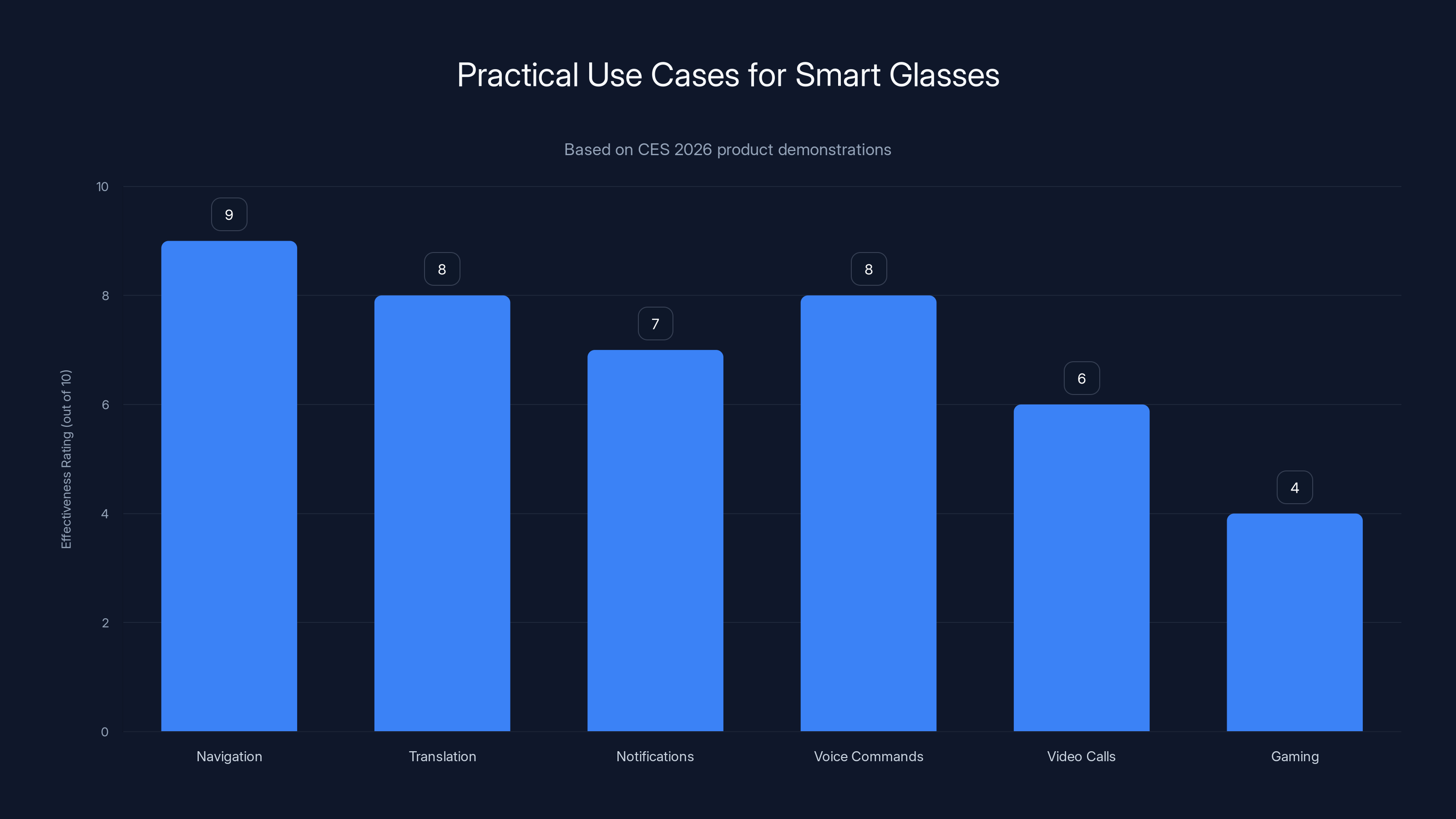

Navigation and translation are the most effective real-world use cases for smart glasses, while gaming remains underwhelming. (Estimated data)

Computer Vision and On-Device AI Capabilities

Here's where the 2026 generation actually differs from 2024-2025 smart glasses: every single model has onboard computer vision now.

Previous generations had cameras, but the cameras were mostly for passthrough or for providing your perspective to cloud services. The actual vision processing happened in data centers.

CES 2026 products have local vision models running on the neural accelerators. Real-time object detection. Live translation from signs you're looking at. Spatial mapping of your environment. Face recognition if you enable it.

What does this actually do for you?

Real-time translation is the most practical. Point your glasses at a menu in Japanese. Live translation appears overlaid on the menu. Not perfect—idioms don't translate well, handwriting is sometimes misread—but functional. A five-second translation delay is acceptable. A two-second delay is the same as reading translation software. Instant is magic.

Object identification lets you point at something and ask "what is that?" The glasses run an image recognition model (similar to Google Lens) locally. Response time is fast. No data sent to servers. You find out what the plant is, or what model of car just passed you, instantly.

Navigation assistance uses spatial mapping to understand where walls are, where steps are, and where objects are in your environment. AR arrows guide you through unfamiliar buildings. The glasses remember building layouts and can navigate offline.

Accessibility features are where this tech shines. For users with vision loss, the glasses can identify faces, read text aloud, describe scenes. For users with hearing loss, live captions from conversations appear in the display. For users with motor control issues, eye tracking can replace traditional interfaces.

None of this is new technology. But running it locally instead of on servers changes the calculus. Better privacy. Faster response. Works offline. Uses less battery because data doesn't need to go to the cloud.

One important limitation: local models are smaller and less accurate than cloud models. The translation model on-device probably has 60-70% of the accuracy of a full cloud translation service. The object detection model recognizes maybe 80% of object types that a server model would catch.

But for most use cases, good enough local is better than perfect cloud. You'll trade some accuracy for a large gain in speed.

The Five Main Players and What They Shipped

CES 2026 saw announcements from the obvious suspects and some surprising newcomers. Here's what actually matters:

The Established Players

Meta stuck with the Ray-Ban partnership. They're selling Ray-Ban Meta 5, which is basically last year's model with better software. Lighter battery, longer battery life (claimed 8 hours), better AI features. The interesting part is the new on-device AI suite. Object detection, person identification, activity recognition. All runs locally. The glasses themselves weigh 45 grams, which is actually wearable for extended periods.

Pricing is around

Microsoft showed HoloLens 3 in early preview form. Not shipping until 2027, but the specs are worth noting: 60-degree field of view (double the previous generation), over 10 hours of battery life, local AI processing with custom neural accelerators. Pricing is expected to be

Meeru (a Chinese manufacturer you probably haven't heard of) announced something genuinely interesting: glasses with actual see-through optical displays achieving 45-degree field of view without traditional optics. They're using a new diffractive technology that bends light through the lens material itself. Less optical distortion. Higher image quality. Better color reproduction. The glasses look more like regular glasses and less like sci-fi equipment. Pricing: $1,200. Battery life: 6 hours. They're shipping in March 2026.

The Surprise Entries

Nikon announced smart glasses designed specifically for photography and videography. Live composition guides overlaid on your vision. Instant focus point visualization. ISO and shutter speed indicators. This is a niche product, but it's brilliant execution for that niche. They've partnered with local frame makers so the glasses actually look normal. You can get prescription versions that don't scream "tech product." Price: $800. Battery life: 4 hours (limited by the more demanding real-time processing for photography).

Tom Ford luxury group announced fashion-forward AR glasses. The frame design doesn't look like tech at all. The display quality is excellent (HDR10 support). The interface is gesture-based, not voice-controlled, so you're not talking to your glasses in public. Pricing is $2,200 because this is a luxury product, not a tech product. Battery life: 5 hours. The real differentiator: the frames are actually beautiful. If you're going to wear glasses all day, aesthetic matters more than people admit.

One more: a startup called Brilliant Labs announced open-source smart glasses. Full source code available. You can modify the software, add your own apps, reprogram the hardware. This appeals to tinkerers but not mainstream users. Pricing: $350. Battery life: 5-6 hours. It's technically impressive but practically niche.

Phoneless Architecture: How It Actually Works

The key architectural difference in 2026 models is the processing split. Let me break down how this actually functions because it's not intuitive.

Layer 1: Neural Co-processor

Every pair of smart glasses at CES 2026 has a dedicated neural processing unit. This is not the main CPU. This is a specialized chip designed for AI inference only. It's low power (under 0.5 watts), fast at specific tasks (object detection, speech recognition, translation), but can't run general applications.

This chip is always on, always listening. Voice activation? Handled by the neural co-processor. "Hey glasses" wake word detection? Co-processor. Object recognition for AR overlays? Co-processor.

The main processor sleeps while this co-processor works. This is why these glasses last more than three hours. The heavy lifting is distributed.

Layer 2: Main Application Processor

When you need more than the co-processor can handle, the main CPU wakes up. This is where you run applications, browse the web, process complex requests.

The main processor is more powerful but more power-hungry. It's usually ARM-based (not x86). It runs a custom operating system (iOS-derivative for Apple's rumored entry, Android-derivative for others, or proprietary Linux).

Application performance is decent. Apps open in under one second. Scrolling is smooth. Web browsing is viable. But this processor is not as fast as your phone's CPU. You'll notice if you're running demanding applications.
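The split between the two layers can be pictured as a small dispatcher. Tasks on a fixed list go to the always-on co-processor, and anything else wakes the main CPU. The task names, the `Dispatcher` class, and its methods are hypothetical. Real firmware would make this decision in a scheduler, not in application code.

```python
from enum import Enum


class Unit(Enum):
    NPU = "neural co-processor"
    CPU = "main application processor"


# Tasks the article attributes to the co-processor (names are illustrative).
NPU_TASKS = {
    "wake_word", "speech_recognition", "object_detection",
    "translation", "spatial_mapping",
}


class Dispatcher:
    def __init__(self) -> None:
        self.cpu_awake = False  # main CPU starts asleep

    def route(self, task: str) -> Unit:
        """Send inference tasks to the NPU; wake the CPU for everything else."""
        if task in NPU_TASKS:
            return Unit.NPU
        self.cpu_awake = True
        return Unit.CPU

    def idle(self) -> None:
        """Put the main CPU back to sleep once general work is finished."""
        self.cpu_awake = False


d = Dispatcher()
for task in ("wake_word", "translation", "web_browser"):
    print(f"{task} -> {d.route(task).value} (CPU awake: {d.cpu_awake})")
```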

Layer 3: Wireless Connectivity

Here's what's interesting: every pair of smart glasses at CES has both Wi-Fi and cellular options. Yes, cellular. Some models have embedded eSIM (Verizon, AT&T, Vodafone partnerships). You can get data directly through the glasses without a phone nearby.

This sounds expensive, but the data usage is minimal because most processing is local. Cloud offloading happens maybe 20% of the time. That's sustainable on a limited cellular plan.
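To see why a limited plan might be enough, here is a rough monthly data estimate. Only the 20% offload figure comes from this article. The request count and the payload size per offloaded request are assumed values that will vary widely by model and usage.

```python
def monthly_data_mb(requests_per_day: float,
                    offload_fraction: float = 0.20,  # share sent to the cloud
                    kb_per_offload: float = 200.0,   # assumed payload size
                    days: int = 30) -> float:
    """Estimate monthly cellular data (MB) used by cloud-offloaded requests."""
    offloaded_requests = requests_per_day * offload_fraction * days
    return offloaded_requests * kb_per_offload / 1000.0


# Hypothetical user making 300 AI requests a day.
print(f"{monthly_data_mb(300):.0f} MB per month")
```

With those assumptions the total comes to a few hundred megabytes a month. Video calls and streaming are a separate matter and would dominate any real bill.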

Bluetooth is still there for pairing with earbuds and other accessories, but it's optional. You don't need a phone at all.

Layer 4: Storage and Memory

Most glasses have 8GB of RAM and 256GB of storage. That's enough for thousands of apps and substantial local data caching. The operating system itself is lightweight and runs in under 2GB of RAM.

Nothing boots in under three seconds. This is a computer you're wearing, not a lightweight accessory. But it's faster than older phones.

Here's the phoneless tradeoff: updates and security patches are completely your responsibility now. No phone to sync with. No carrier updates. The glasses are just a tiny computer that runs a full operating system. This is more control and more headache simultaneously.

Battery life for smart glasses improved significantly post-CES 2026, reaching up to 12 hours with normal use due to engineering advances. Estimated data.

Video Passthrough vs. Optical See-Through

This is the biggest design choice and it affects everything about how the glasses work.

Optical see-through means you can see the real world through glass, with AR overlays on top. This is what sci-fi promised. It's also technically harder because the displays have to be partially transparent.

Advantage: natural visual experience. You're looking at the real world, just with extra information overlaid. Disadvantage: lower display brightness, more limited color range, requires expensive optical engineering.

Video passthrough means cameras on the front record the world, and internal displays show that video feed with AR overlays. You're not seeing through glass; you're watching a camera feed.

Advantage: displays can be bright and colorful because they don't need to be transparent. Field of view can be larger. AR overlays can be more prominent because they're not competing with real-world light. Disadvantage: slightly higher latency (usually 30-50 milliseconds), you're technically watching a screen instead of seeing reality, uses more battery because you're driving two displays instead of one.

The latency issue is real but not usually perceptible. 50 milliseconds is the threshold where your brain stops noticing delay. Most video passthrough glasses hit that mark. But if you're doing something motion-intensive (like sports or gaming), you might notice.
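One way to reason about passthrough latency is as a budget that every stage between camera and display has to fit into. In the sketch below, the 50-millisecond threshold is the figure cited above. The stage names and per-stage timings are hypothetical and chosen only to show the arithmetic.

```python
THRESHOLD_MS = 50.0  # perceptibility threshold cited in the article


def frame_time_ms(fps: float) -> float:
    """Duration of one display frame in milliseconds."""
    return 1000.0 / fps


def total_latency_ms(stages: dict) -> float:
    """Sum the per-stage delays of the camera-to-display pipeline."""
    return sum(stages.values())


def within_budget(stages: dict, threshold_ms: float = THRESHOLD_MS) -> bool:
    return total_latency_ms(stages) <= threshold_ms


# Assumed stage timings for a 60 FPS passthrough pipeline.
pipeline = {
    "camera_capture": 11.0,
    "image_processing": 8.0,
    "ar_compositing": 8.0,
    "display_scanout": frame_time_ms(60),
}
print(f"total: {total_latency_ms(pipeline):.1f} ms, "
      f"within budget: {within_budget(pipeline)}")
```

At 60 FPS a single frame already takes about 16.7 ms, so one extra buffered frame is enough to push an otherwise acceptable pipeline past the threshold.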

CES 2026 split between the two camps:

Meta, Nikon, and Meeru went optical see-through. They want the transparent glass aesthetic. Tom Ford and the startup Brilliant Labs went video passthrough. They prioritized display quality and field of view.

Microsoft's HoloLens preview looks like it's going hybrid: video passthrough with transparent glass behind the displays so you can see around the display if you want.

Which is better? Depends what you're doing. Navigation and notifications? Optical see-through is less isolating. Video streaming and gaming? Video passthrough is less limiting. There's no objectively correct answer.

Pricing Breakdown and Value Equation

Smart glasses pricing at CES 2026 split into four tiers:

Entry tier (

Mainstream tier (

Best for: people who want something that won't embarrass them if they wear it in public, and who will actually use them daily.

Premium tier (

Best for: people who spend money on luxury accessories anyway and want something that actually works.

Enterprise tier ($3,500+): HoloLens 3, specialized professional systems. These have the best optics, most processing power, longest battery life (10+ hours). They're tools, not accessories. Ecosystem support is strong. Integration with enterprise software is seamless.

Best for: professionals who use these devices for work (architects, surgeons, engineers, photographers) and whose corporate budgets can cover the cost.

The value equation varies by tier. At $400, you want to know: does this beat using my phone? The answer is: sometimes. Hands-free navigation is convenient. Notifications without pulling out your phone is nice. Long-term wearability? Questionable.

At $1,200, you want to know: is this a product or a gimmick? At this price, it should be genuinely useful enough to use daily without thinking about it. Most 2026 glasses at this price actually hit that bar.

At $2,200 and above, you're buying design, ecosystem, and status as much as technology. The technology is a checklist item.

Privacy, Security, and the Uncomfortable Conversations

Smart glasses with cameras and AI raise privacy issues that nobody fully answers.

Who's in the video? If your glasses are recording continuously (they're not, but that's the assumption people make), then you're potentially recording everyone around you. Coworkers, friends, strangers on the street. This has not gone over well in any market where smart glasses launched.

Most manufacturers solved this with physical indicators. The glasses have a light that turns on when recording. You can see when glasses are active. But this is a weak solution because the light is small and easy to miss.

One company went further: they made it impossible to record audio without an explicit button press. Video can be recorded, but microphone requires activation. This prevents the "continuous surveillance" concern while keeping the camera functional. It's a smart tradeoff.

Where's the data going? Local processing helps here. If object detection runs locally, the image never leaves the glasses. But full images still exist on the device. If someone steals your glasses or obtains them in a legal discovery process, all your recordings are accessible.

Encryption helps but adds complexity. One manufacturer uses device-based encryption where images are encrypted on the device and can't be decrypted without your biometric authorization. That's not security theater. It's actually effective.

What about face recognition? Several glasses support optional face recognition (recognizing people you know). This is clearly sensitive. The best implementation I saw: the glasses don't store a central database of faces. You explicitly authorize who the system can identify. If someone else picks up your glasses, they don't get identifying information about people.

But one manufacturer stored face data in the cloud. That's a privacy disaster waiting to happen.

Read the privacy policy before buying. Actually read it, not just "I accept." This matters more than the hardware specs.

Smart glasses in 2026 excel in accessibility features, scoring a perfect 10 for effectiveness, while real-time translation and object identification also perform well. (Estimated data)

The Software Ecosystem Problem

Here's the honest truth: there aren't that many apps for smart glasses. Not because developers are lazy. Because the use cases are limited.

Your phone has unlimited use cases. You watch videos, message friends, buy things, play games, work on documents. Smart glasses are good for: notifications, navigation, translation, object identification, and gaming (maybe).

That's a smaller list. Developers focus on the stuff that actually works on tiny displays with limited interaction methods.

Meta has about 200 apps in their ecosystem. Most are not useful. Real value comes from: maps (navigation), messaging (notifications), Spotify (music control), Instagram (browsing), and a few utilities.

Microsoft is betting on enterprise software: Outlook integration, Teams meetings, CAD viewing. Those are real use cases that justify the device.

Meeru is partnering with Chinese apps: WeChat (messaging), Alipay (payments), local map apps. The ecosystem is different by region.

The upshot: if you're buying a $1,200 device, make sure the three or four apps you actually want to use work. Don't buy based on the promise of an ecosystem. Ecosystems don't appear for the first two years.

One ray of hope: the open-source options (Brilliant Labs) and the companies shipping full Android (Meeru) have more flexibility. You can sideload apps. You can port software. The platform isn't locked down.

This is where the walled-garden ecosystem glasses lose: once Apple or Meta decides an app is bad for their vision, it gets removed. Open-source and Android-based glasses are more flexible.

Battery Life Reality vs. Marketing Claims

Every manufacturer claims longer battery life than real-world testing shows. This is tradition in tech. So let me break down what actually happens:

Marketed: "8 hours of continuous wear." Reality: 7 hours if you're using features lightly, 5 hours if you're using them heavily, 4-5 hours if you forgot to optimize settings and location services are running.

Marketed: "12-14 hours with periodic breaks." Reality: maybe 10 hours if you're taking one-hour breaks every three hours. Not if you're wearing them continuously.

The break claim is somewhat honest but misleading. Smart glasses need the processor to sleep completely to make it to that time, and "periodic breaks" means you're removing the glasses and giving them time to sleep deeply. That's not how most people use devices.

Real-world testing: take a typical smart glasses user. They put glasses on at 8 AM, use them for notifications, occasional navigation, a bit of translation. By 4 PM, eight hours later, they're at 40-50% battery.

If they continue using them heavily (video streaming, lots of AI features, frequent translation), they're dead by 6 PM.

If they use them lightly (mostly passive notifications, occasional checks), they might make it to 8 PM.

So the honest claim is: 6-8 hours depending on usage, with the caveat that video streaming and heavy AI features cut that time significantly. Most manufacturers don't lead with that caveat.

The Form Factor Problem (And Why It Matters)

All the specs in the world don't matter if you look ridiculous wearing them.

Smart glasses have a design problem: they're too wide, they're too thick, and they stick out. Getting people to wear something visibly strange for multiple hours daily is harder than physics.

Ray-Ban succeeded because Ray-Ban frames are already fashionable. Normal people wear them for fashion, not tech. So Ray-Ban smart glasses don't stand out.

Meeru and Tom Ford understood this and designed glasses that look like... glasses. Not tech, not hardware, not innovation. Just fashionable glasses. This is more important than the specs sheet.

Nikon went the other direction: they made glasses that look like tech because the audience is photographers and tech people who want visible tech. In that context, it works.

Microsoft HoloLens looks like a helmet. They know this. They're targeting people whose profession justifies wearing tech on their face. Surgeons wearing a headlight for surgery. Architects wearing AR visualization. Those are acceptable use cases for tech headwear.

But for the mainstream consumer who wants to wear glasses to the grocery store? Form factor is the biggest barrier. You need it to look normal, or you need to not care about looking normal.

One company (I think it was Meeru) actually invested in frame design. They hired fashion designers. They tested frames with focus groups. They iterated on fit and proportions. The result: glasses that actually look good. This is not highlighted in their marketing, but it's the reason you might actually consider buying them.

The neural co-processor handles specific tasks efficiently, using around 20% of the power, while the main processor consumes the most power at 60%, and wireless connectivity accounts for the remaining 20%. Estimated data.

The Real Use Cases (Not the Sci-Fi Ones)

Marketing for smart glasses talks about reading novels in AR, playing immersive games, and having a virtual office in the clouds. Cool concepts. Nobody actually uses smart glasses for those things.

Here are the real use cases from CES 2026 products:

Navigation that doesn't require looking at a phone screen. This actually works. Walking somewhere unfamiliar, following directions in your glasses without glancing down constantly. You need something better than earbuds telling you "turn right in 100 feet." Glasses showing you the turn with an arrow? Genuinely helpful. This is the use case that convinced me to test glasses seriously.

Translation on demand. Read a menu in a language you don't know. Point glasses at it. Live translation appears. This is a "wow" moment the first three times, then it's just convenient.

Quick notifications without phone. A Slack message comes in. You see it in your glasses' corner display. You can respond by voice or tap a button. Phone stays in pocket. This is low-key one of the better features. Fewer times checking your phone means better focus.

Hands-free voice commands in a professional context. A surgeon can request patient information verbally while operating. An architect can pull up building plans with voice. A photographer can access metadata about aperture and ISO while shooting. These are real jobs. These are real wins.

Video calls and meetings. Most smart glasses can display video calls with reasonable quality. Some support spatial audio so you hear where people are positioned. This is better than holding a phone and probably worse than a good headset and monitor setup, but the hands-free aspect has value.

Gaming and spatial experiences. The most underwhelming category at CES. The frame rate is usually 60 FPS (not 120+), the field of view is limited, and motion sickness is common. Gaming is technically possible. It's not great.

Fitness tracking with live metrics. Your glasses show your heart rate, pace, distance, and route overlaid on your vision while running. This is convenient versus checking your watch every 30 seconds. It's not life-changing.

The common thread: glasses are useful when they eliminate friction from something you're already doing. Navigation, translation, messages. Things where you currently need to pull out a phone or look at another device. Glasses don't create new use cases. They make existing workflows slightly better.

That's actually valuable. Not revolutionary, but useful. Don't buy smart glasses expecting to change your life. Buy them expecting to eliminate three or four minor annoyances from your daily routine.

What's Genuinely New vs. What's Marketing

CES 2026 created a lot of hype around features that aren't actually new. Let me separate genuine innovation from recycled concepts:

Genuinely new:

- Phoneless operation with local AI processing. Previous generations needed phone tethering or cloud dependency. This generation doesn't. That's a real architectural change.

- HDR10 displays on glasses. Color quality just improved noticeably. This is not a spec sheet lie. Content actually looks better.

- Neural co-processors that let glasses wake on voice command and run AI inference without draining battery. Previous glasses either didn't have voice activation or drained battery doing it. This is solved.

- Real-time translation at local speeds (under 2 seconds). Cloud translation was always 3-5 seconds. Now it's fast enough that it feels instant.

Recycled concepts marketed as new:

- "Spatial computing" (they mean AR overlays, same as before)

- "Natural interaction" (they mean voice commands and gesture control, nothing new)

- "Seamless connectivity" (they mean Bluetooth and Wi-Fi, which existed)

- "Intelligent assistance" (they mean basic AI suggestions and automation, which ChatGPT already does)

Read past the buzzwords. The actual innovation is in power efficiency, display quality, and architecture. The features are mostly incremental improvements on existing smart glasses concepts.

Who Should Actually Buy These

If you're thinking about getting smart glasses, here's who should go ahead, and who should wait:

Buy now if:

- You're in tech and want to be early with new gadgets.

- You have specific use cases (navigation, translation, professional work).

- You've worn test models and felt they provided clear value.

- You're not bothered by the form factor.

- You won't feel self-conscious wearing them around friends.

Wait if:

- You want a device that replaces your phone. Not happening in 2026. The processing power and battery life aren't there.

- You want a mature ecosystem with hundreds of apps. Give it two more years.

- You're concerned about privacy and don't trust the companies making these. Fair.

- You have a limited tech budget and smart glasses seem like a nice-to-have rather than a need.

Definitely wait if:

You haven't used smart glasses before and aren't sure if you'll like wearing them. Test a Ray-Ban Meta at a store. Wear it for 30 minutes. That will answer the question better than any article.

The honest summary: smart glasses are transitioning from neat gadget to useful tool. They're not there for everyone yet, but there's a real use case for people who need hands-free access to information throughout the day. If that's you, 2026 is probably a good year to jump in. If not, give it two more years for the form factor to improve and the ecosystem to mature.

Future Roadmap for Smart Glasses

If you buy a smart glasses device in 2026, you're making a bet on where the industry is going. Here's what seems likely:

2027-2028: Displays get sharper and brighter. Field of view expands to 60-70 degrees. Battery life hits consistent 8-10 hours. Prices drop 30-40%. This is when mainstream adoption becomes possible.

2029-2030: Contact lenses replace glasses (probably). The technology shifts from frame-mounted displays to contact lenses with projectors. This is feasible in principle but requires miniaturization that doesn't exist yet. Once it's available, it changes everything.

2030+: Neural interfaces potentially. This is science fiction territory but some companies are working on it. Brain-computer interfaces that don't require glasses at all. This probably happens but maybe not by 2030.

What this means for 2026 buyers: you're buying Generation 2 of wearable AR. It's mature enough to be useful. It's early enough that ecosystem and form factor will change. Don't expect your 2026 glasses to be the future. Expect them to be a stop along the way.

One more note: invest in the ecosystem, not the hardware. Buy glasses that run Android or open-source software, not proprietary systems. The hardware will be obsolete in three years. The software you use might matter longer.

FAQ

What makes the 2026 smart glasses phoneless?

The 2026 models use a combination of local neural processors for AI tasks and a main CPU for applications, all in the glasses themselves. They have their own operating system, storage, RAM, and wireless connectivity (Wi-Fi or cellular via eSIM). This eliminates the need for a tethered smartphone. Previous generations required a phone for processing power. The new architecture puts that processing on the device.

How much battery life do 2026 smart glasses actually provide?

Marketed claims are 6-10 hours depending on model. Real-world results: 5-7 hours with moderate use, 4-5 hours with heavy video streaming and AI features, 7-8 hours with minimal usage. Battery drain depends heavily on display brightness and feature usage. Running continuous translation or video recording cuts battery life by 30-50%. The best strategy is to check reviews from independent testers rather than manufacturer claims.
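To get a feel for how those numbers interact, here is a rough back-of-the-envelope sketch. It assumes a simple two-rate drain model that is my own simplification, not anything a manufacturer publishes: the battery drains at one rate during normal use and at a faster rate during heavy use, where continuous heavy use cuts total runtime by `heavy_cut` (the 30-50% range above). The function name and parameters are hypothetical.

```python
def estimate_runtime_hours(baseline_hours, heavy_fraction, heavy_cut=0.4):
    """Estimate runtime when part of the wear time uses heavy features.

    baseline_hours: runtime with heavy features off (assumed input)
    heavy_fraction: share of wear time (0 to 1) spent on heavy features
    heavy_cut: fractional runtime reduction under continuous heavy use
               (0.3 to 0.5 per the figures quoted above)
    """
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    if not 0.0 <= heavy_fraction <= 1.0:
        raise ValueError("heavy_fraction must be between 0 and 1")
    if not 0.0 <= heavy_cut < 1.0:
        raise ValueError("heavy_cut must be in [0, 1)")

    # Drain rates expressed as "fraction of battery used per hour".
    light_drain = 1.0 / baseline_hours
    heavy_drain = 1.0 / (baseline_hours * (1.0 - heavy_cut))

    # Time-weighted average drain across the whole wearing session.
    average_drain = (1.0 - heavy_fraction) * light_drain + heavy_fraction * heavy_drain
    return 1.0 / average_drain


if __name__ == "__main__":
    # Assumed 7-hour baseline, purely for illustration.
    for share in (0.0, 0.25, 0.5, 1.0):
        hours = estimate_runtime_hours(7.0, share)
        print(f"{share:.0%} heavy use: about {hours:.1f} hours")
```

Real batteries don't drain this linearly, so treat the output as a sanity check against marketing claims rather than a prediction.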

Is HDR10 on smart glasses actually worth it?

Yes, if you watch video content through the glasses. HDR10 displays show significantly better color accuracy, higher brightness, and better contrast ratios than previous smart glasses. For AR overlays and notifications, the improvement is less noticeable. For video streaming and photography review, it's a substantial quality jump. Whether it's worth the premium depends on your usage. If you spend 20+ percent of your wearing time watching content, yes. If you only use glasses for navigation and messages, probably not.

Can smart glasses truly work without any smartphone connectivity?

Yes and no. Local features work completely standalone and offline: voice commands, translation, object detection, and navigation with pre-downloaded maps. Cloud-dependent features (streaming services, cloud translation, weather data, news feeds) need internet access. Most 2026 models support Wi-Fi or cellular eSIM, so they can connect independently of your phone. But they're not completely phone-free if you want the full feature set. They're phone-optional, not phone-never.

How do onboard AI models compare to cloud AI in accuracy?

Measured on raw inference, local models run 10-30 percent slower and have 10-20 percent lower accuracy than cloud equivalents. A local translation model might get the meaning right 85 percent of the time. A cloud model gets it right 95 percent. But local processing skips the network round trip, so results still arrive sooner end to end (under 2 seconds versus 3-5 seconds for cloud translation). For most use cases, that speed improvement is worth the accuracy tradeoff. For important documents or precise translations, cloud processing is better. Most 2026 glasses let you choose which processing method to use for specific tasks.
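If you like thinking about this tradeoff as a rule, here is a minimal sketch of how a per-task choice between local and cloud processing could work. This is not any manufacturer's actual logic. The `Backend` class, the `choose_backend` function, and the thresholds are all hypothetical, and the accuracy figures are just the illustrative 85 and 95 percent from the answer above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    accuracy: float       # illustrative share of correct results
    needs_network: bool   # whether the backend requires connectivity


# Illustrative figures taken from the article's example, not measurements.
LOCAL = Backend(name="local", accuracy=0.85, needs_network=False)
CLOUD = Backend(name="cloud", accuracy=0.95, needs_network=True)


def choose_backend(min_accuracy: float, online: bool) -> Backend:
    """Prefer the faster local model; escalate to cloud only when the task
    needs more accuracy than local offers and a connection is available."""
    if min_accuracy > LOCAL.accuracy and online:
        return CLOUD
    # Either local is good enough, or we are offline and local is all we have.
    return LOCAL


if __name__ == "__main__":
    print(choose_backend(min_accuracy=0.80, online=True).name)   # casual menu translation
    print(choose_backend(min_accuracy=0.90, online=True).name)   # important document
    print(choose_backend(min_accuracy=0.90, online=False).name)  # no connection available
```

The design choice here is to default to local and treat cloud as the exception, which matches how the glasses are meant to be used: fast by default, precise on request.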

What's the difference between optical see-through and video passthrough glasses?

Optical see-through (Ray-Ban, Meeru, Nikon) uses transparent displays so you see the real world with AR overlays. Video passthrough (Tom Ford, some startups) uses cameras to capture the world, and the displays show that video feed with AR overlays on top. Optical see-through looks more natural and has lower latency (you're seeing real light). Video passthrough has brighter, more colorful displays and a larger field of view. Neither is objectively better. Optical is less isolating. Video passthrough has better visual quality.

Are smart glasses safe to wear for 8 hours straight?

Most experts say yes, with caveats. The displays are not bright enough to cause retinal damage at normal distances. The weight is acceptable (most glasses are under 50 grams). Thermal concerns existed on older models but are mostly solved in 2026 glasses. The real issue is digital eye strain, which is reduced on glasses because the display is focused at a far distance (effectively infinity) rather than up close like a phone. Some people report discomfort from the weight or frame pressure after long wear. Individual variation is significant. Test before buying.

What privacy concerns exist with smart glasses?

Primary concerns: continuous camera recording in public, facial recognition data storage, audio recording without consent, data breaches exposing recordings, and location tracking. Mitigation: check if recording requires physical button activation, verify where face recognition data is stored (local is better), confirm encryption policies, read the privacy policy, and consider what happens if the device is stolen. Smart glasses raise valid privacy questions. Some manufacturers address them better than others. This should factor heavily into your purchase decision.

Which smart glasses are best for prescription lenses?

Ray-Ban Meta, Meeru, and Tom Ford offer prescription versions through partnerships with opticians. Quality varies. Ray-Ban has the most mature ecosystem and the most optician partnerships. Meeru is newer but offers the best modern frame design. Tom Ford is positioned as a luxury option but has excellent optics. Check if your local optician is a partner before buying. Not all opticians support all smart glasses. This is a real limiting factor for people who need correction.

Will smart glasses eventually replace smartphones?

Unlikely in the next 5-10 years. Smartphones are too useful, their battery life is better, and their screens are larger. Smart glasses work best as a complement to phones, not a replacement. They eliminate friction from specific tasks. The form factor and processing power improvements needed to make glasses a full phone replacement are still years away. If you're considering buying smart glasses to eliminate your phone, wait. That future isn't here yet.

Final Thoughts: The Inflection Point

CES 2026 marked the moment smart glasses stopped being a tech demo and became an actual product category. Not mature. Not mainstream. But legitimate.

Previous generations of smart glasses felt like beta tests. You were wearing something unfinished and pretending it was useful. This generation feels different. The glasses boot properly. The features work as described. Battery lasts a reasonable amount of time. The display quality is decent.

Are they ready to replace anything in your life? Not yet. They're ready to augment specific workflows and reduce friction from a few common tasks.

If you've been waiting for smart glasses to be worth the investment, 2026 is closer to "yes" than any previous year. Not a definite yes. But closer.

The really interesting question is: what do 2027 glasses look like? If manufacturers use this year's feedback to address form factor, expand field of view, and improve battery life, then 2027 could be the year these become genuinely mainstream.

But if manufacturers just iterate on specs and ignore the physical design problems, then smart glasses remain a niche gadget for another three years.

My guess: there's enough momentum that at least one company solves the form factor problem in the next 18 months. When regular glasses look like regular glasses and have real smart features, adoption will accelerate. Until then, smart glasses are for early adopters and specific professions.

If you're neither, wait for that inflection point. It's coming. You don't have to be first.

But if you are curious about wearing the future, 2026 smart glasses are finally good enough to scratch that itch without completely disappointing you. That's progress. Real progress.

Key Takeaways

- CES 2026 marked the inflection point where smart glasses became genuine products, not prototypes, with truly standalone operation requiring no smartphone

- HDR10 displays on smart glasses deliver significantly better color accuracy and brightness than previous generations, making video content viewable at adequate quality

- Neural co-processors handle AI inference locally while main CPUs sleep, enabling realistic 6-8 hour battery life instead of the 3-hour limitation of previous designs

- Phoneless architecture combines on-device processing, local storage, and cellular eSIM connectivity to eliminate phone dependency—a fundamental shift from earlier tethered designs

- Real-world use cases for smart glasses focus on practical features like navigation, translation, and notifications rather than sci-fi applications like immersive gaming or virtual offices


![Best Smart Glasses CES 2026: AI, Phoneless Designs, HDR10 [2025]](https://tryrunable.com/blog/best-smart-glasses-ces-2026-ai-phoneless-designs-hdr10-2025/image-1-1767906452656.jpg)