Introduction: The Future of Nonverbal Communication Through Haptics

Imagine having a conversation with someone where you can't see their facial expression. You're engaging, sharing stories, maybe making a joke. But without that visual feedback, you miss something crucial: whether they're actually enjoying the moment. For people who are blind, have low vision, or are neurodivergent, this scenario isn't hypothetical. It's daily reality.

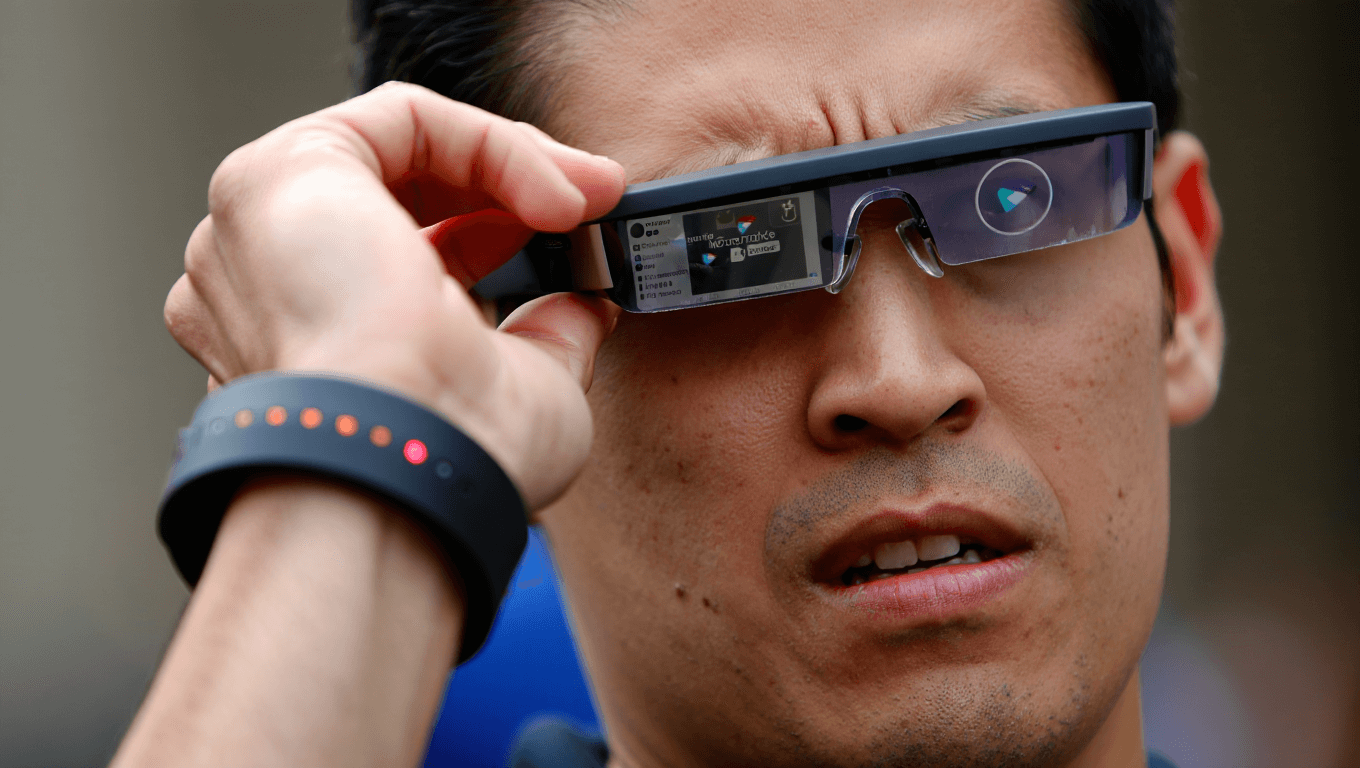

That's where Hapware's Alleye comes in. This isn't another tech gadget slapped onto smart glasses for the sake of innovation. It's a purposeful solution built specifically to address a genuine accessibility gap. By combining Ray-Ban Meta smart glasses with a vibrating wristband, Alleye translates facial expressions and nonverbal cues into haptic feedback that users can feel in real time.

The concept sounds futuristic, but the execution is grounded in practical accessibility design. Instead of overwhelming users with audio descriptions or text-based alerts, Alleye delivers information through vibration patterns on the wrist. A smile might feel different from a frown. A wave feels distinct from a confused expression. After just a few minutes of learning, users can tell multiple patterns apart.

What makes this innovation particularly significant is its timing. Meta opened its smart glasses platform to third-party developers just months before Hapware debuted Alleye at CES 2026. This represents a larger shift in how tech companies are approaching accessibility. Instead of building features in isolation, they're creating open ecosystems where specialized developers can innovate rapidly.

Beyond the technology itself, Alleye represents something deeper: recognition that accessibility isn't a niche feature. It's a fundamental human need. When people who are blind can participate in conversations with full awareness of emotional context, they're not just gaining information. They're gaining equity. They're accessing the same social cues everyone else takes for granted.

This article dives deep into how Alleye works, why haptic feedback is uniquely suited for this application, what this means for the broader accessibility landscape, and what challenges still remain. We'll also explore the competitive landscape, pricing implications, and what comes next in wearable accessibility technology.

TL;DR

- Alleye is a haptic wristband that works with Ray-Ban Meta smart glasses to decode facial expressions and convert them into vibration patterns

- Pricing starts at $637 for the wristband plus one year of app subscription (then $29/month)

- Target users include blind, low vision, and neurodivergent individuals seeking real-time nonverbal communication cues

- Haptic patterns are intuitive by design, with most users learning multiple patterns within minutes

- Ray-Ban Meta glasses are separate purchases, but Meta's open platform enabled third-party developers like Hapware to innovate


Understanding Alleye: What It Is and How It Works

Alleye isn't a single product. It's a system combining three components: Ray-Ban Meta smart glasses, the Alleye haptic wristband, and an accompanying mobile app. Each piece serves a specific function in translating visual information into tactile feedback.

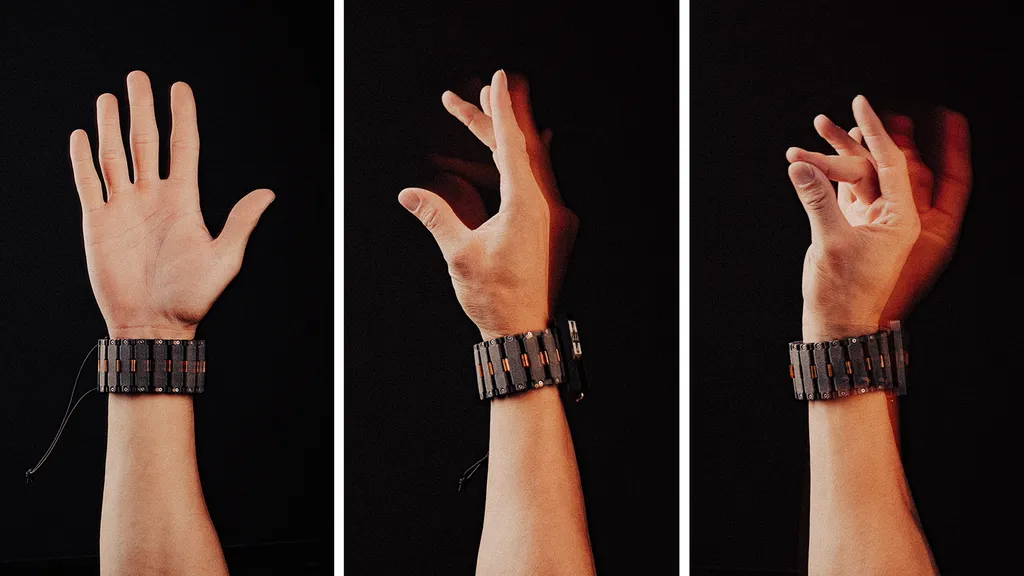

The wristband itself resembles a slightly chunky smartwatch. It's not winning any design awards for thinness, but that's intentional. The extra bulk houses an array of haptic motors positioned along the underside. These motors create specific vibration patterns that correspond to different facial expressions and gestures. The wristband doesn't feel distracting once you're wearing it. It's comfortable enough for extended wear, similar to a regular sports watch.

Here's the technical flow: Ray-Ban Meta glasses capture video of the person you're talking to. This footage streams to your phone, where the Alleye app processes the video using computer vision algorithms trained on facial expression recognition. The app detects specific expressions you've configured, then sends corresponding vibration commands back to your wristband. The entire process happens in near real-time, with minimal latency.
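The flow described above can be sketched as a small loop. This is only an illustration under stated assumptions, not Hapware's code: `VibrationCommand`, `run_pipeline`, the expression labels, and the pattern names are all hypothetical, and the `detect` callable stands in for whatever vision model the app actually runs on the phone.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Optional


@dataclass(frozen=True)
class VibrationCommand:
    """Hypothetical message the phone would send to the wristband."""
    pattern: str


def run_pipeline(
    frames: Iterable[object],
    detect: Callable[[object], Optional[str]],
    monitored: dict[str, str],
) -> Iterator[VibrationCommand]:
    """Yield a command each time the detected expression changes to a monitored one."""
    last = None
    for frame in frames:
        expression = detect(frame)  # stand-in for the on-phone vision model
        if expression != last and expression in monitored:
            yield VibrationCommand(monitored[expression])
        last = expression


# Toy run: each "frame" is already a label, so detection is the identity function.
frames = ["neutral", "smile", "smile", "jaw_drop", "frown"]
monitored = {"smile": "upward_lift", "jaw_drop": "downward_sweep"}
commands = list(run_pipeline(frames, lambda frame: frame, monitored))
```

In this toy run only the first smile frame and the jaw drop produce commands. The repeated smile and the unmonitored frown are skipped, which is one plausible way to keep the wrist from buzzing on every frame.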

What surprised me during testing scenarios is how intuitive the vibration patterns feel. Jack Walters, Hapware's CEO, explained that they spent considerable time designing patterns that feel metaphorically connected to their meanings. A jaw drop expression actually feels like a downward motion on your wrist. A smile might feel like an upward lift. A wave feels like lateral movement. This intuitive mapping means users don't need to memorize arbitrary patterns. The feedback almost teaches itself.

Personalization is built into the system. Through the app, users choose which expressions they want to monitor. You might only care about smiles and confused looks. Someone else might want to detect every possible micro-expression. The app also lets you adjust vibration intensity. If you find certain patterns distracting, you can tone them down. If you want more pronounced feedback, you can amplify it.
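As a rough sketch of what such settings might look like in code, the example below models a set of monitored expressions and a per-expression intensity. The class name, field names, default values, and the 0.0 to 1.0 intensity scale are assumptions made for illustration; the actual app's settings format is not public.

```python
from dataclasses import dataclass, field


@dataclass
class HapticSettings:
    """Hypothetical per-user settings: which expressions to monitor and how strongly."""
    enabled: set[str] = field(default_factory=lambda: {"smile", "confused"})
    intensity: dict[str, float] = field(default_factory=dict)  # per expression, 0.0 to 1.0
    default_intensity: float = 0.6

    def strength_for(self, expression: str) -> float:
        """Return 0.0 for unmonitored expressions, otherwise the clamped intensity."""
        if expression not in self.enabled:
            return 0.0
        level = self.intensity.get(expression, self.default_intensity)
        return max(0.0, min(1.0, level))


settings = HapticSettings()
settings.intensity["smile"] = 0.3     # tone down a pattern that feels distracting
settings.intensity["confused"] = 1.4  # out-of-range values are clamped to 1.0
```

Clamping keeps a mistyped value from driving the motors harder than the scale allows, and returning 0.0 for anything not enabled means unmonitored expressions never reach the wrist.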

The app includes a learning mode that helps users distinguish between different patterns. Instead of experiencing these vibrations in real conversations right away, you can practice in a safe environment first. The app shows you a face making a specific expression, then delivers the corresponding vibration. You learn the pattern, repeat it several times, and build muscle memory. Most users master multiple patterns within a few minutes.

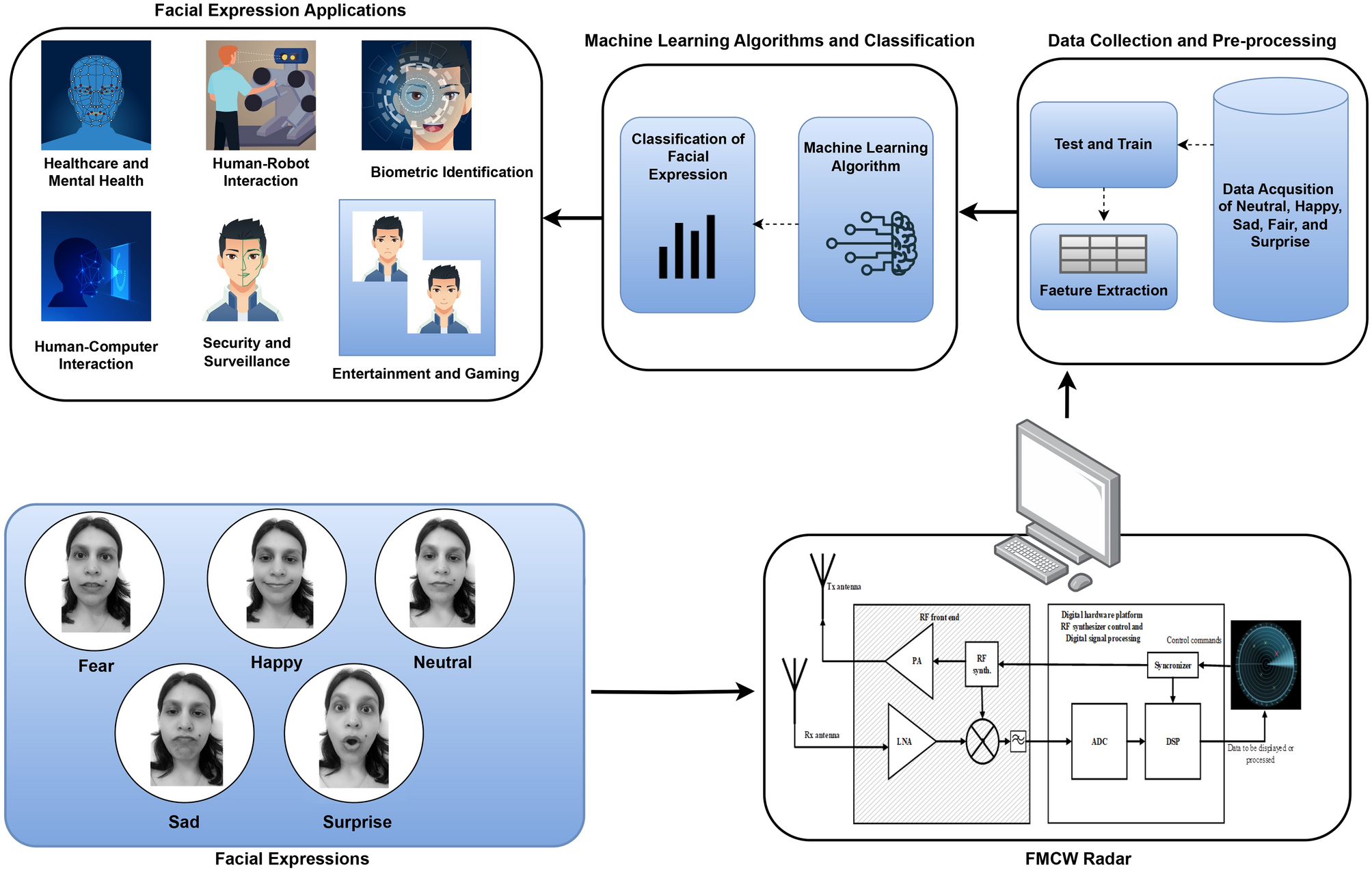

The Technology Behind Facial Expression Detection

The core innovation here isn't the vibration hardware. It's the computer vision algorithm running on your smartphone. This is where things get genuinely sophisticated.

Facial expression recognition algorithms analyze dozens of facial landmarks. The software tracks the position and movement of your eyes, eyebrows, mouth corners, cheeks, and jaw. When these landmarks move in specific combinations, the algorithm identifies expressions. A smile isn't just "mouth corners up." It's a complex pattern involving eye crinkles, cheek elevation, mouth opening, and subtle jaw movement. The algorithm recognizes all these signals simultaneously.
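To make the landmark idea concrete, here is a deliberately tiny rule-based sketch that uses only four mouth landmarks. Production systems use learned models over many more points. The landmark names, the thresholds, and the `classify` function are all invented for illustration and do not describe Hapware's algorithm.

```python
from typing import Mapping, Tuple

Point = Tuple[float, float]  # (x, y) in image coordinates, where y grows downward


def classify(landmarks: Mapping[str, Point]) -> str:
    """Toy classifier based on mouth geometry alone."""
    left, right = landmarks["mouth_left"], landmarks["mouth_right"]
    top, bottom = landmarks["lip_top"], landmarks["lip_bottom"]
    mouth_width = right[0] - left[0]
    # Normalize by mouth width so the rules do not depend on face size or distance.
    opening = (bottom[1] - top[1]) / mouth_width
    center_y = (top[1] + bottom[1]) / 2
    corner_y = (left[1] + right[1]) / 2
    corner_lift = (center_y - corner_y) / mouth_width  # positive when corners sit higher
    if opening > 0.6:
        return "jaw_drop"
    if corner_lift > 0.1:
        return "smile"
    if corner_lift < -0.1:
        return "frown"
    return "neutral"


smile = {"mouth_left": (40, 58), "mouth_right": (60, 58),
         "lip_top": (50, 60), "lip_bottom": (50, 64)}
label = classify(smile)
```

Even this toy version shows why single rules fall short: a wide-open laughing mouth would be labeled a jaw drop here, which is exactly the kind of ambiguity that combining many signals is meant to resolve.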

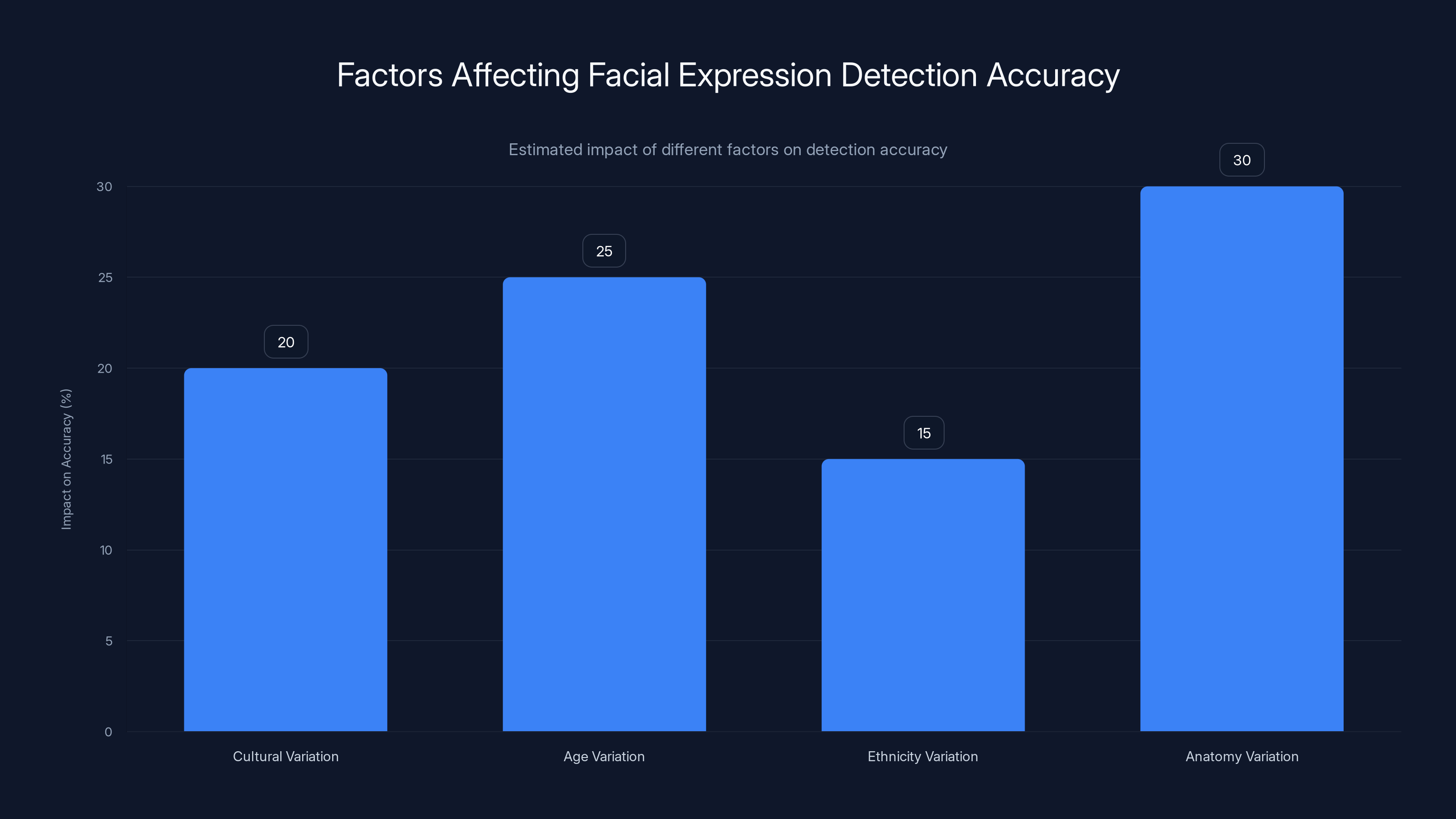

What complicates this is variation. A genuine smile looks different from a forced smile. Facial expressions vary across cultures. Age, ethnicity, and individual anatomy all affect how expressions manifest. A robust expression recognition system accounts for this variation. It doesn't just match templates. It models the underlying patterns that indicate genuine emotions.

Hapware uses Meta's computer vision capabilities as the foundation. Ray-Ban Meta glasses already have cameras and processing power built in. Instead of building recognition from scratch, Hapware leveraged this infrastructure. They trained their algorithms on datasets of facial expressions, then optimized them for real-time performance. Running recognition on a smartphone CPU rather than dedicated servers keeps latency low and data private.

Privacy is actually a significant advantage here. Your video footage never leaves your phone. The app runs facial detection locally. The wristband communicates only with your phone via Bluetooth. No cloud servers storing your conversations. No third-party platforms analyzing your interactions. For users concerned about privacy, this is a major benefit compared to cloud-dependent solutions.

Accuracy is the other consideration. How often does the algorithm misidentify expressions? In early testing, Hapware reported high accuracy rates for common expressions. Happiness, sadness, surprise, fear, anger, and neutral expressions were reliably detected. More nuanced emotions like contempt or confusion were slightly less accurate. For most use cases, this level of accuracy is perfectly acceptable. The person you're talking to usually makes their emotions fairly obvious. Detecting the broad emotional category matters more than catching subtle micro-expressions.

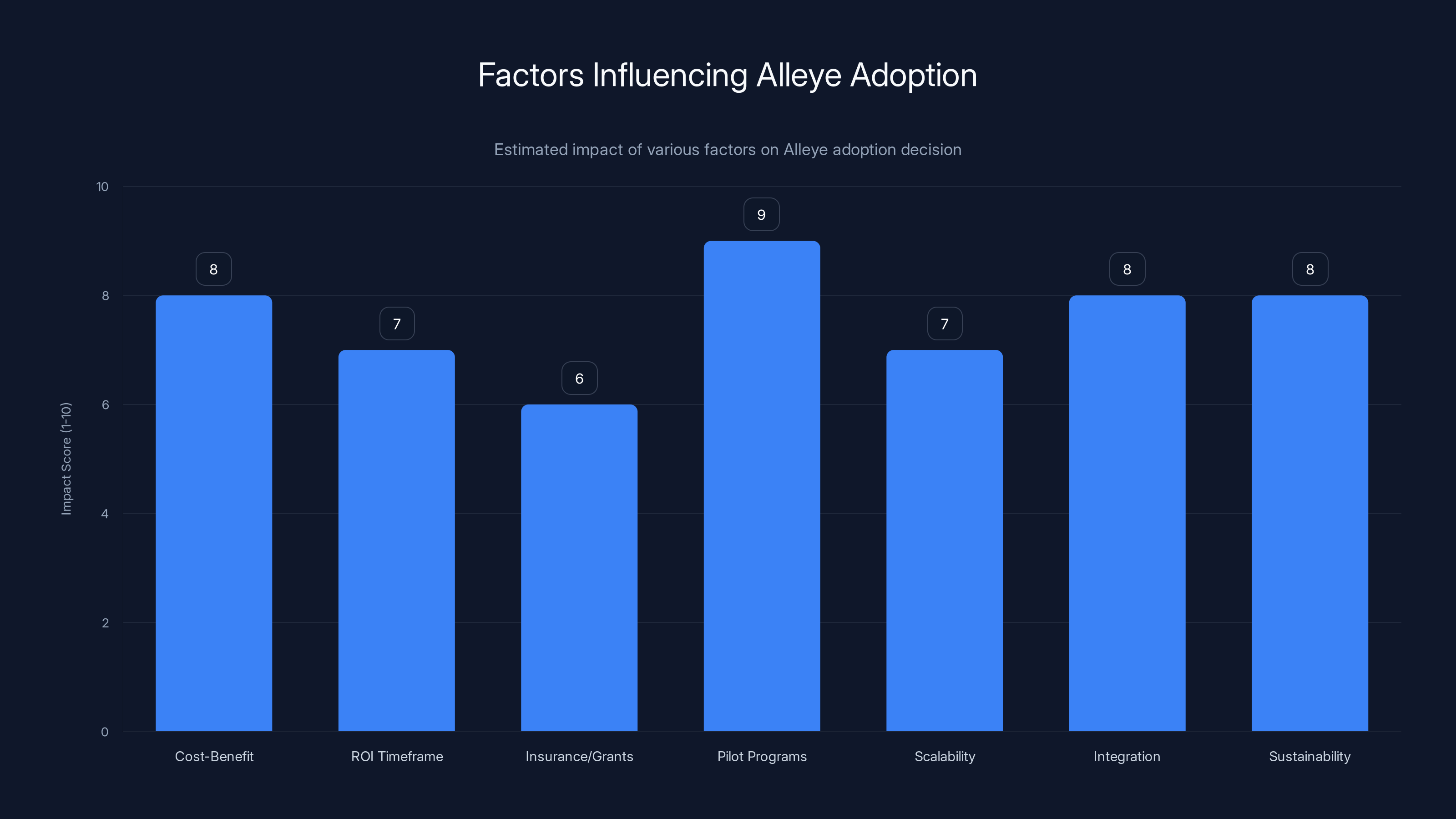

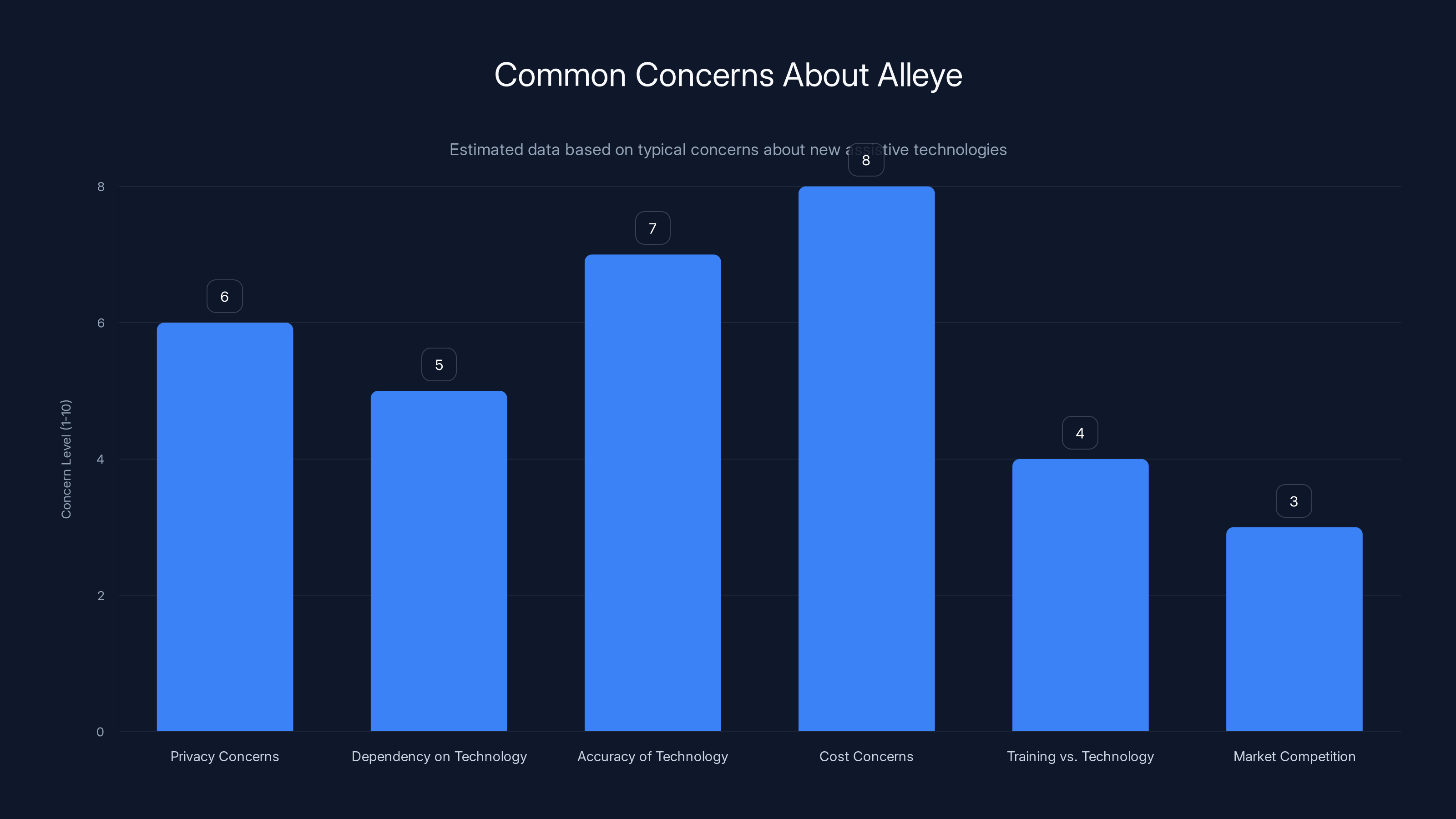

Pilot programs and cost-benefit analysis are key factors in Alleye adoption, with pilot programs scoring highest in impact. Estimated data.

Target Users: Who Benefits Most From Alleye

Hapware explicitly designed Alleye for three user groups: blind individuals, people with low vision, and neurodivergent users. Each group faces distinct challenges that Alleye addresses differently.

For people who are completely blind, traditional smart glasses accessibility features provide limited value. If you can't see, camera feeds don't help much. But haptic feedback? That's immediately useful. Vibrations on your wrist transmit information directly to your tactile senses. Alleye gives blind users real-time information about the emotional state of people they're interacting with. This is genuinely revolutionary for social communication.

People with low vision represent a different use case. They can see, but visual detail is limited. Faces might be blurry at distance, or they can't discern subtle facial movements. For them, Alleye provides a clarity layer. Instead of straining to interpret expressions, they feel them. This reduces cognitive load and increases confidence in social situations.

Neurodivergent users, particularly those on the autism spectrum, often struggle with facial expression interpretation. Some autistic individuals find eye contact challenging or overwhelming. Others process facial expressions differently than neurotypical people. For them, Alleye provides an alternative communication channel. Instead of relying on visual interpretation, they get explicit haptic information. The wristband essentially translates emotional context into a format their brain more naturally processes.

Dr. Bryan Duarte, Hapware's CTO who has been blind since a motorcycle accident at age 18, provides compelling testimony about the difference Alleye makes. He compared it to Meta's existing Live AI accessibility feature. Live AI can describe what's in a video, but it's generic. "It will only tell me there's a person in front of me," Duarte explained. "It won't tell me if you're smiling. You have to prompt it every time." With Alleye, that information comes automatically. The emotional context is immediately available.

This distinction matters profoundly. Accessibility features that require constant prompting add friction to conversations. They break natural flow. Alleye aims to be ambient. It provides information continuously without requiring active requests. For natural social interaction, this is the crucial difference between a tool that works and a tool that just exists.

Haptic Technology: Why Vibrations Beat Audio for This Application

Alleye could theoretically deliver the same information through audio. An earpiece could speak emotional descriptions: "She's smiling," "He looks confused," "They appear angry." But Hapware chose haptics instead. There are good reasons for this choice.

First, consider the cognitive load of constant audio narration. Imagine someone talking in your ear throughout every conversation, describing facial expressions as they happen. That's intrusive. It disrupts the natural back-and-forth of dialogue. You'd miss what the other person is saying while your assistant is babbling about their expression. Hapware's CTO mentioned exactly this problem. Audio narration gets distracting when you're trying to maintain a genuine conversation.

Second, haptics are ambient. A subtle vibration on your wrist doesn't demand your full attention. It's information you can absorb peripherally while focusing on what someone is saying. Audio requires active listening. Your brain must process the narration. Haptics integrate more smoothly with ongoing conversation.

Third, haptics feel natural for emotional information. Touch is inherently intimate. We touch people to express emotion. We feel butterflies when nervous, feel our heart race when excited. Converting facial expressions to tactile sensations leverages your brain's existing emotional processing. Your nervous system already understands touch as communication. This makes haptic feedback intuitive in ways that audio descriptions never achieve.

There's also a social benefit. People wearing earpieces often appear unavailable or distracted. Haptic feedback is invisible. Others don't know you're receiving information through your wristband. This keeps you fully present in conversations without external indicators that you're using an assistive device.

From a technical perspective, haptic displays have improved dramatically. Modern haptic motors can create surprisingly complex vibration patterns. They're fast enough to respond in real-time. They're precise enough to distinguish between multiple distinct patterns. The technology is mature enough for consumer applications.

The Meta Ray-Ban Smart Glasses: Why This Platform Matters

Alleye wouldn't exist without Meta's decision to open the Ray-Ban Meta glasses platform to third-party developers. This architectural choice represents a philosophical shift in how tech platforms evolve.

For years, accessibility features were developed internally by device manufacturers. Apple created accessibility features for iPhones. Microsoft built them into Windows. Google developed them for Android. This approach had limitations. Large tech companies can't anticipate every use case. They can't optimize for niche communities. Accessibility innovation happened slowly because it wasn't the primary focus of billion-dollar companies.

Meta's platform approach changes this. By allowing developers like Hapware to build on Ray-Ban Meta glasses, Meta created an ecosystem where specialized developers can innovate rapidly. Hapware exists precisely because they could access camera feeds and processing power without building glasses from scratch.

Ray-Ban Meta glasses already had the fundamental hardware: cameras, processors, and connectivity. Adding Alleye to the ecosystem required no hardware modifications to the glasses themselves. This is incredibly important because it means accessibility innovations don't require expensive new devices. They work with existing hardware.

The glasses themselves offer solid specifications. Dual cameras capture your field of view. The processing power handles real-time video analysis. Battery life reaches hours of continuous use. For someone who is blind, the glasses aren't useful by themselves. But paired with Alleye, they become a gateway to social information.

Meta also built its own accessibility features for Ray-Ban Meta glasses. These include traditional options like Live Translate for video calls and caption generation. But the company recognized limitations in what it could build alone. Opening the platform to developers like Hapware expands possibilities exponentially.

This also creates a market incentive. Hapware can build a sustainable business around accessibility innovation. They're not relying on charitable work or corporate social responsibility budgets. They're selling products to customers who genuinely need them. This makes the accessibility ecosystem more sustainable long-term.


How Hapware Created Intuitive Vibration Patterns

The most impressive aspect of Alleye is how well the vibration patterns work. Most people learn them in minutes. But this didn't happen by accident. Hapware spent significant time designing these patterns deliberately.

The goal was metaphorical mapping. Instead of arbitrary vibrations that require memorization, patterns should feel connected to their meanings. A jaw drop should literally feel like something dropping downward. A smile should feel upward or positive. A wave should feel lateral.

This required experimentation. Hapware likely tested dozens of pattern variations. They likely asked users what patterns felt intuitive for specific expressions. Iterative testing revealed which patterns communicated most effectively. The result is a system where your body intuitively understands the feedback without conscious translation.

The versatility of modern haptic motors makes this possible. By varying vibration intensity, frequency, and duration, motors can create surprisingly complex sensations. A gentle pulsing feels different from rapid hammering. A single sharp tap differs from sustained pressure. By combining these dimensions, Hapware created a palette of distinct patterns.

The wristband itself has multiple haptic motors positioned strategically. Instead of a single motor creating all vibrations, multiple motors work in concert. This allows for more nuanced feedback. A pattern might start on one side of your wrist and sweep to the other. Another pattern might be concentrated in the center. This spatial variation helps distinguish patterns further.
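A sweep like the one described can be sketched as a sequence of per-motor intensity frames. The motor count, the linear falloff, and the `sweep` function are assumptions made for illustration. The source does not say how many motors the wristband has or how its patterns are encoded.

```python
def sweep(num_motors: int, steps: int, peak: float = 1.0) -> list[list[float]]:
    """Return one intensity list per time step, moving a peak from motor 0 to the last motor."""
    if num_motors < 1 or steps < 2:
        raise ValueError("need at least one motor and two steps")
    frames = []
    for step in range(steps):
        # Position of the peak along the motor row, from 0 to num_motors - 1.
        position = step * (num_motors - 1) / (steps - 1)
        frame = [
            # Intensity falls off linearly with distance from the peak.
            round(peak * max(0.0, 1.0 - abs(motor - position)), 2)
            for motor in range(num_motors)
        ]
        frames.append(frame)
    return frames


lateral = sweep(num_motors=4, steps=4)                      # one side of the wrist to the other
reverse = [list(reversed(frame)) for frame in lateral]      # same sweep, opposite direction
```

With four motors and four steps, each frame lights exactly one motor in turn. Using more steps than motors blends neighboring motors, which is one way a sweep could be made to feel continuous rather than stepped.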

Testing with actual users was crucial. Hapware's CTO, Bryan Duarte, brought personal experience from being blind since age 18. This meant the team could test patterns with someone who genuinely depends on haptic feedback. Rather than designers guessing what works, they had direct input from the target user population.

The learning curve is steep at first, then plateaus quickly. Initial confusion is normal. Which pattern means smile? Which means confusion? But repetition creates muscle memory. Your wrist becomes trained to recognize patterns the way your ears recognize different languages. After practice, you stop consciously analyzing. You just know.

Comparison With Alternative Accessibility Solutions

Alleye isn't the only way to address the facial expression recognition problem. Understanding alternatives helps illustrate why Alleye represents a significant step forward.

Meta's Live AI feature is built into Ray-Ban Meta glasses already. It uses AI to describe what cameras see in real time. For facial expressions, it could theoretically describe what it observes. But as Dr. Duarte noted, this requires active prompting. You must ask the system to describe faces. It doesn't volunteer information continuously. This makes it useful for occasional queries but impractical for natural conversation.

Audio narration systems could theoretically work. An assistant could describe facial expressions audibly. But as discussed, this intrudes on conversations and demands cognitive resources. Most users find constant audio narration exhausting in social contexts.

Text-based descriptions sent to a phone would work technically. But reading text requires looking at your phone, breaking eye contact and focus with the person you're talking to. This feels rude and defeats the purpose of understanding facial expressions during live conversation.

Wearable cameras with cloud processing exist in various forms. But they introduce privacy concerns. Your video footage goes to remote servers. Third parties could access data. For sensitive conversations, this is problematic.

Specialized training programs teach people how to interpret facial expressions through other cues. But this requires years of practice and never achieves the immediacy of real-time feedback. Some facial expressions are genuinely difficult to distinguish without practice or assistance.

Compared to these alternatives, Alleye offers several advantages:

- Ambient and continuous - Information flows automatically without prompting

- Private - No cloud processing, no data sharing

- Intuitive - Patterns feel metaphorically connected to meanings

- Quick to learn - Users master patterns in minutes, not weeks

- Non-intrusive - Doesn't break conversation flow

- Social - Invisible to others, no stigma from visible devices

Each alternative has contexts where it works better. But for the specific goal of understanding facial expressions during live conversation, Alleye represents a genuine advancement.

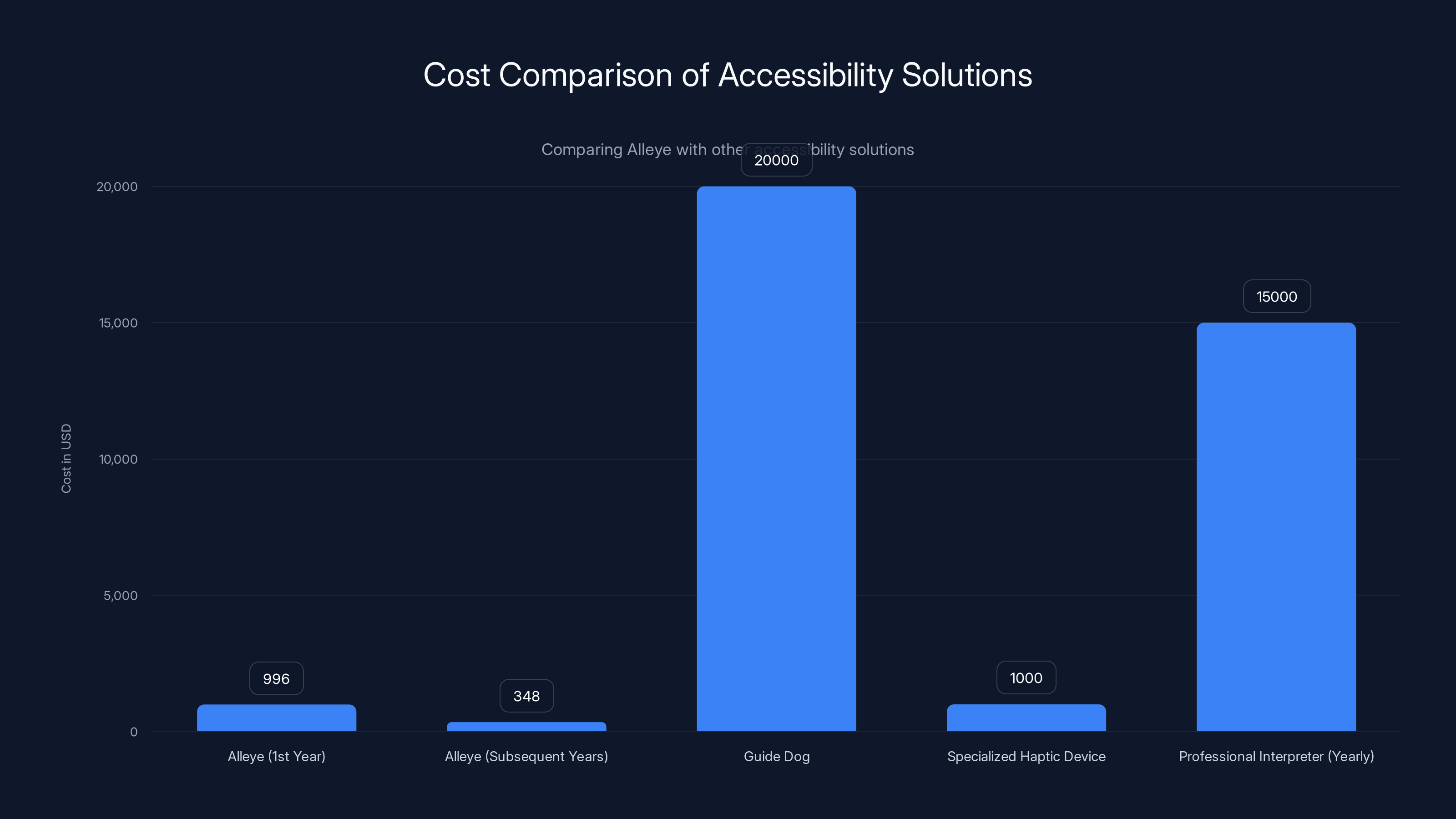

Pricing and Accessibility: The Cost Question

Alleye starts at

For context, high-end smartwatches cost

Let's do the math. A blind person buying Alleye pays

This creates a genuine accessibility paradox. The technology is designed to improve accessibility, but its cost makes it inaccessible to some who need it most. Hapware hasn't announced subsidies or reduced pricing for low-income users. They also haven't mentioned whether insurance might cover the device under assistive technology provisions.

However, the pricing isn't unreasonable for what you're getting. Computer vision algorithms running locally require processing power. Haptic motor technology is expensive at scale. The app requires ongoing development and maintenance. These costs justify the price point.

The subscription model does feel slightly aggressive. Many users would prefer a one-time purchase. But subscription revenue provides ongoing funding for app updates and improvement. It incentivizes Hapware to keep the product current rather than letting it stagnate.
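To see how the subscription adds up over time, here is a small worked calculation using only the two figures given in the TL;DR: 637 for the wristband plus the first year of the app, then $29 per month. It assumes both amounts are in US dollars and that prices stay flat. It also leaves out the Ray-Ban Meta glasses, which are a separate purchase.

```python
FIRST_YEAR_BUNDLE = 637  # wristband plus one year of app subscription (assumed USD)
MONTHLY_AFTER = 29       # subscription price after the first year (USD)


def total_cost(years: int) -> int:
    """Cumulative cost after a whole number of years, glasses excluded."""
    if years < 1:
        raise ValueError("years must be at least 1")
    return FIRST_YEAR_BUNDLE + MONTHLY_AFTER * 12 * (years - 1)


for years in (1, 2, 3):
    print(years, total_cost(years))  # prints 637, 985, and 1333 for years 1, 2, and 3
```

Under these assumptions each additional year adds $348, so by the end of year three the subscription has cost more than the initial bundle.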

Comparison to alternatives is useful. Guide dogs for blind individuals cost

The real question is whether insurance or government assistance programs will cover it. If Alleye qualifies as an assistive technology device, insurance might reimburse. If disability programs cover it, cost becomes less prohibitive. Hapware's success partly depends on navigating these financial pathways.

Estimated data showing how different factors like cultural, age, ethnicity, and anatomical variations can impact the accuracy of facial expression detection algorithms.

Privacy and Data Security Implications

Alleye's privacy model is refreshingly straightforward: your data stays on your phone. No cloud servers. No data collection. No third-party sharing. This matters more than many people realize.

Many AI-powered accessibility features operate differently. They send your video to cloud servers where powerful computers process it. This provides better accuracy and unlimited processing power. But it means your private conversations are recorded and analyzed somewhere. Even if a company promises not to store data, sending it through the internet introduces risk.

Alleye avoids this entirely. The Ray-Ban Meta glasses send video to your phone. Your phone's processor analyzes it locally. The wristband receives commands from your phone. Nothing leaves your device. Your conversations remain private.

This architecture has important implications for trust. People often hesitate to use assistive technologies because of privacy concerns. If you're blind and using an app that describes faces, you probably don't want video of conversations recorded on company servers. Alleye's local processing model eliminates this worry entirely.

The tradeoff is processing power. Your phone's CPU handles all the computation. This means accuracy depends on your phone's capabilities. Older phones might struggle. But modern smartphones have sufficient processing power for real-time facial recognition. This is becoming less of a limitation as hardware improves.

Data security is equally straightforward. If your phone is secure, your Alleye data is secure. No new attack surface introduced. No company databases to hack. Your facial expression data never leaves your control. This is a model more accessibility services should adopt.

One edge case: the app development process. Hapware's engineers likely tested the system extensively. During development, they probably analyzed lots of face images. But this shouldn't continue post-launch. Once the algorithm is trained, developers shouldn't need access to user data.

Long-term, this privacy-first approach could become a selling point. As people grow increasingly concerned about data tracking, technologies that keep data local become more valuable. Alleye could position itself as the privacy-respecting accessibility option compared to cloud-dependent alternatives.

The Role of Neurodivergence in Design

Alleye wasn't designed just for blind users. Hapware explicitly targeted neurodivergent users, particularly autistic individuals who struggle with facial expression interpretation.

This represents an important shift in accessibility design. Traditional accessibility focuses on disabilities: blindness, deafness, mobility challenges. These are conditions affecting a smaller percentage of the population. Neurodivergence is more common and affects communication across broader groups.

Many autistic individuals find facial expressions confusing. Some struggle with eye contact. Others process facial information differently than neurotypical people. Alleye provides an alternative channel for accessing this social information. Instead of trying harder to interpret expressions, users get explicit feedback through haptics.

The benefit is huge. Social interactions become less cognitively demanding. You're not constantly trying to decode expressions while also managing conversation. The haptic feedback does some of the interpretation work for you. This reduces anxiety and increases confidence in social situations.

For neurodivergent users, this is genuinely life-changing. Many avoid social situations specifically because facial expression interpretation feels overwhelming. Alleye could help them participate more fully in social life.

Beyond the immediate users, designing for neurodivergence improves products for everyone. Accessibility features benefit broader populations. Captions help not just deaf people but anyone in noisy environments. Voice control helps not just people with mobility challenges but also hands-free users. Haptic feedback helps neurodivergent people but also benefits blind users and anyone wanting information without audio distraction.

Hapware's approach of explicitly including neurodivergent perspectives in design is forward-thinking. Too many accessibility products focus narrowly on one condition. Alleye shows how considering multiple user types creates more versatile solutions.

Real-World Testing and Early User Feedback

Hapware shared some testing results demonstrating how quickly users master the system. Most people learn multiple haptic patterns within minutes of practice. This rapid learning curve is impressive and suggests the intuitive design approach worked.

What we don't know is longer-term experience. How do patterns feel after weeks of regular use? Do they stay intuitive or does novelty wear off? Do users find them distracting in various contexts? Would someone want to use this system for hours daily?

These questions require extended real-world testing with diverse users. A few minutes of testing at a conference shows promise. But genuine validation requires longer-term user studies in various contexts.

Dr. Bryan Duarte's enthusiasm is particularly valuable. As someone who's been blind for decades, he has profound experience with accessibility technology. His comparison to Live AI is telling: existing solutions either require active prompting or provide generic descriptions. Alleye offers something different: immediate, specific, ambient information.

But we need more voices. What about people with progressive vision loss who've maintained some sight? How does Alleye integrate with their existing visual processing? What about autistic individuals who've never been able to interpret expressions? Does Alleye help them develop some intuition over time, or does it simply replace their own interpretative processes?

These questions aren't criticisms. They're the natural follow-up to a promising innovation. Early testing looks good, but confirming efficacy across diverse real-world contexts takes time.

Alleye's success also depends on ecosystem adoption. Will Ray-Ban Meta glasses become widespread enough that Alleye reaches critical mass? Or will it remain a niche product within a niche platform? If smart glasses never achieve mass adoption, Alleye's impact is limited regardless of how well it works.

Privacy and cost are the top concerns regarding Alleye, while market competition is less of a worry. (Estimated data)

The Broader Accessibility Innovation Ecosystem

Alleye exists within a larger context of accelerating accessibility innovation. Tech companies, startups, and nonprofit organizations are creating accessibility solutions at unprecedented rates.

Speech recognition has advanced dramatically, enabling voice control and caption generation. Computer vision is powerful enough for real-time object detection and scene understanding. Haptic technology has improved in precision and versatility. Machine learning makes these technologies work better with less data. Each component advancement enables new products like Alleye.

What's changed is architectural openness. Five years ago, accessibility innovation mostly happened inside major tech companies. Now, platforms like Ray-Ban Meta glasses actively encourage external developers. This creates multiplier effects. Meta didn't have to develop Alleye themselves. But by opening their platform, they enabled Hapware to build it.

The business model also matters. Early accessibility companies often operated as nonprofits or charity ventures. Now, accessibility is becoming a legitimate business opportunity. Hapware raised investment to build Alleye. They're charging market prices for their product. This isn't charity. It's a real business serving genuine customer need.

This commercialization is actually positive for accessibility. Nonprofit models are valuable but limited. Commercial incentives drive innovation faster. Competition between companies pushes products to improve. Sustainable businesses can reinvest in R&D. Alleye wouldn't exist if accessibility were only a nonprofit concern.

We're also seeing accessibility innovation extend beyond disability to broader user needs. Haptic feedback for facial expressions helps blind users. But it also helps busy professionals who want information without audio distraction. It helps neurodivergent people who struggle with expression interpretation. By solving specific accessibility challenges well, products become more universally useful.

The next frontier is integration. Currently, Alleye requires Ray-Ban Meta glasses specifically. As technology matures, similar functionality might work with any smart glasses or camera-equipped device. This would expand reach significantly.

Technical Challenges and Limitations

Despite impressive capabilities, Alleye faces real technical limitations worth acknowledging.

Lighting conditions affect camera quality. In dim lighting, facial features become harder to distinguish. In harsh sunlight, reflections on glasses might obscure faces. This means Alleye works well in normal indoor conditions but struggles in extreme lighting. Users need to understand these constraints.

Distance is another factor. Facial expressions are visible from several feet away. But nuanced details become harder to detect at distance. Someone across a room is trickier than someone face-to-face. Alleye probably optimizes for close-range conversation rather than distant interaction.

Masked faces present a challenge. If someone is wearing a mask, most facial expressions are literally hidden. Alleye can't detect what it can't see. In post-pandemic contexts, this is less common, but it remains a scenario where the technology fails.

Moving targets complicate detection. If someone's constantly turning away, looking down, or moving rapidly, tracking expressions becomes difficult. Alleye probably handles normal conversation movement fine but struggles with highly kinetic interactions.

False positives might occur. The algorithm might misidentify expressions occasionally. Is that smile genuine happiness or forced politeness? The algorithm guesses. Most of the time it guesses right, but errors happen. Users need to maintain healthy skepticism about feedback.
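One common way to reduce spurious feedback in systems like this is to require a prediction to hold for several consecutive frames above a confidence threshold before acting on it. Whether Alleye does anything of the kind is not stated in the source. The function name, threshold, and frame count below are illustrative assumptions.

```python
from typing import Iterable, Iterator, Optional, Tuple


def stable_expressions(
    predictions: Iterable[Tuple[str, float]],
    min_confidence: float = 0.8,
    min_frames: int = 3,
) -> Iterator[str]:
    """Emit a label once it has stayed above min_confidence for min_frames frames in a row."""
    candidate: Optional[str] = None
    streak = 0
    for label, confidence in predictions:
        if confidence < min_confidence:
            candidate, streak = None, 0  # a low-confidence frame breaks the streak
            continue
        if label == candidate:
            streak += 1
        else:
            candidate, streak = label, 1
        if streak == min_frames:  # fire once per run, not on every later frame
            yield label


stream = [("smile", 0.9), ("smile", 0.95), ("frown", 0.85),
          ("smile", 0.9), ("smile", 0.9), ("smile", 0.92), ("smile", 0.99),
          ("surprise", 0.5)]
fired = list(stable_expressions(stream))
```

Here the one-frame frown and the low-confidence surprise never reach the wrist, and the sustained smile fires exactly once. The cost is a few frames of added delay, which trades directly against the latency concern discussed below.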

Processing latency should be minimal in real-time conversation but isn't truly instantaneous. There's a slight delay between expression change and haptic feedback. For most contexts this is imperceptible, but it could theoretically affect very fast exchanges.

Customization complexity creates a tradeoff. The more expressions you monitor, the more patterns you must distinguish. At some point, too many patterns become confusing. Users probably need guidance on optimal configuration.

These limitations don't invalidate Alleye. They're inherent to the technology and largely manageable through education and appropriate use contexts. But they're worth understanding.

Future Potential and Technology Roadmap

Alleye as currently designed represents version 1.0 of this technology. What comes next?

Improved accuracy is obvious. As machine learning models improve, facial expression recognition will become more precise. This means better distinction between subtle expressions and fewer false positives. Algorithms trained on more diverse faces should work better across ethnicities and ages.

Expanded expression vocabulary could happen over time. Currently, Alleye detects basic expressions. Future versions might recognize micro-expressions, emotional intensity, cultural variations in expression, or even personality traits. This gets complicated quickly, but the technical path is clear.

Broader glasses compatibility is possible as smart glasses proliferate. If Alleye's algorithms work on any device with cameras and processing power, they could work with Apple Vision Pro, Snap's Spectacles, or future competitors. This would dramatically expand the addressable market.

Contextual adaptation might allow the system to adjust what it detects based on context. Different expressions matter in different scenarios. In professional settings, you might focus on confusion or disagreement. In casual social settings, you might prioritize happiness or interest. Smart systems could learn these preferences.
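As a minimal sketch of what context-based filtering could look like, the snippet below keeps a set of monitored expressions per context and only notifies for expressions in the active set. The `PROFILES` table and the `should_notify` helper are hypothetical names invented for illustration, and the expression labels simply reuse the examples above.

```python
from typing import Dict, Set

# Hypothetical context profiles: which expressions trigger feedback in each setting.
PROFILES: Dict[str, Set[str]] = {
    "professional": {"confusion", "disagreement"},
    "casual": {"happiness", "interest"},
}


def should_notify(expression: str, context: str) -> bool:
    """Return True if this expression is monitored in the given context."""
    # Unknown contexts monitor nothing rather than raising an error.
    return expression in PROFILES.get(context, set())


print(should_notify("confusion", "professional"))  # True
print(should_notify("happiness", "professional"))  # False
```

A system that learns preferences could update these sets over time based on which notifications a user keeps or disables.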

Multi-user scenarios could theoretically work. Imagine detecting expressions from multiple people simultaneously and providing distinct haptic patterns for each. This is technically challenging but not impossible. It would help in group conversations where currently Alleye likely focuses on whoever you're directly facing.

Integration with other sensors could enhance context. If the system knew whether you were speaking, what words were being said, and various other environmental data, expression interpretation could be more accurate and nuanced.

Offline capability might become important. Expression analysis is described as running locally on the phone, but setup, updates, and some features may still depend on connectivity. Future versions might work entirely offline, which matters for reliability in places with poor coverage.

Beyond hardware, the software potential is enormous. As user feedback accumulates, Hapware will learn what patterns work best, what expressions people care most about, and how to optimize the system for real-world use. The technology will mature significantly over the next few years.

Competitive Landscape and Market Position

Where does Alleye fit in the broader market of accessibility and wearable technology?

Existing haptic wearables include smartwatches with basic vibration alerts, specialized haptic vests for immersive entertainment, and research projects exploring various applications. None directly compete with Alleye because no existing product does exactly this. This gives Hapware a first-mover advantage.

Smart glasses platforms are emerging rapidly. Apple's Vision Pro, Meta's Ray-Ban glasses, Snap's Spectacles, and others are building ecosystems. Each represents a potential platform for Alleye-like applications. As more companies open developer APIs, competition will increase.

AI accessibility features from major tech companies (Meta, Apple, Google) represent potential competition. If these companies decide to build their own facial expression systems into their platforms, they could recreate Alleye's functionality. Their advantages: massive R&D budgets, existing user bases, integration advantages. Hapware's advantage: specialization and focus on genuinely solving user problems.

Alternative accessibility technologies, such as audio description services, guide dogs, and assistive technology more broadly, represent indirect competition for market attention and funding. But they serve different functions. Alleye's advantage is its real-time nature and immediacy compared to these alternatives.

Market size is meaningful but limited by platform adoption. Alleye's success is directly tied to Ray-Ban Meta glasses adoption. If smart glasses never reach mass adoption, Alleye remains niche. But even a 10-million-unit global smart glasses market represents a significant opportunity for specialized applications.

Pricing power is interesting. Alleye can charge a premium because there's no direct substitute. Until competitors launch similar products, Hapware has pricing flexibility. Eventually, as similar products emerge, price competition will likely increase. First-mover advantage in pricing can be substantial.

User Experience Design Considerations

Beyond technology, Alleye's success depends on user experience design. How intuitive is the system? How quickly can new users become proficient? How does it feel during real conversations?

The learning mode is crucial. Users don't want to jump straight into using Alleye during real interactions. They need a safe practice space. Hapware's app provides this through a controlled environment where users practice distinguishing patterns. This is good design.

Personalization options matter. Allowing users to select which expressions to monitor and customize intensity means the system adapts to individual preferences. One-size-fits-all rarely works for accessibility. Flexibility is essential.

Physical design of the wristband affects usability. It needs to be comfortable for extended wear. It shouldn't slip off during normal activity. It should tolerate water exposure, such as showering. It should be durable for daily use. The current design sounds adequate, but extended real-world use will reveal issues.

App interface design impacts daily usability. How easily can users switch between learning mode and active mode? Can they quickly adjust settings during use? Is the feedback clear? These details matter for adoption. A clunky app makes people avoid the tool even if it works technically.

Social considerations matter too. How do people react when they see someone wearing the wristband? Will there be stigma? Alleye's advantage is invisibility. No one knows you're using it unless you explain. This is a feature, not a bug. Accessibility works best when unnoticed.

Feedback from real users will reveal UX issues Hapware hasn't anticipated. Good companies iterate based on user feedback. Poor companies ignore it. Hapware's early decision to involve people who are actually blind in development is a positive sign.

Implementation Strategy for Organizations and Individuals

For individuals considering Alleye, what's the implementation path?

First, assess need. Does understanding facial expressions during conversation significantly impact your quality of life? For some people, absolutely yes. For others, current solutions work adequately. Be honest about whether Alleye fills a genuine gap.

Second, evaluate prerequisites. You need Ray-Ban Meta glasses. You need a compatible smartphone. You need a stable Bluetooth connection between the phone and the wristband, and internet access may be needed for setup, updates, or certain features, even though the core analysis is described as running locally on the phone. Not everyone has these prerequisites easily available.

Third, budget appropriately.

Fourth, start with the learning phase. Don't jump into real conversations immediately. Use the practice mode to develop muscle memory for different patterns. Expect to spend a few hours learning before real deployment. This isn't a quick process, but it's not difficult either.

Fifth, deploy incrementally. Start in low-stakes social situations. Practice conversations with friends or family members who understand you're learning a new system. Gradually expand to more challenging contexts.

Sixth, iterate and adjust. As you use the system, customize it. Which expressions matter most? How intense should vibrations be? The app lets you refine settings. Good tools are personalized tools.

For organizations supporting people who are blind or neurodivergent, similar logic applies. Pilots could start with interested individuals who are tech-comfortable. Gather feedback. Demonstrate value. Scale based on results.

Education is important too. Just having Alleye available isn't enough. Users need training on how to use it. They need support troubleshooting issues. They need a community where they can share experiences with other users. Organizations deploying Alleye should plan for these support needs.

Privacy and Ethical Considerations

Alleye's privacy model is strong, but ethical questions remain worth exploring.

Consent and disclosure: When you use Alleye, you're analyzing other people's facial expressions without their explicit consent. They don't know you're detecting their emotions. Is this ethical? In most contexts, probably. People expect others to read their expressions in conversation. Doing it with technology shouldn't be fundamentally different from doing it by eye. But it's worth thinking about.

Data asymmetry: You gain information about others' emotions. They don't know this is happening. This creates information asymmetry in conversation. Does this advantage the Alleye user unfairly? In business negotiations, potentially. In casual conversation, probably not. Context matters.

Misuse potential: Someone with Alleye could theoretically manipulate people more effectively by better reading their emotional responses. Is this a concern? Any advantage in reading emotions could be misused. But so could any skill or tool. The technology itself isn't inherently deceptive.

Accuracy and fairness: If facial expression recognition algorithms are biased against certain ethnicities or ages, does Alleye perpetuate these biases? If the system works worse for people with certain facial features, is that discriminatory? These are legitimate concerns worth monitoring.

Surveillance implications: Could Alleye be adapted into surveillance tools? Theoretically yes. Any technology can be misused. But Alleye's design prioritizes user privacy and local processing, not surveillance. The question is whether guardrails could prevent abuse.

These are philosophical questions without simple answers. But they're worth considering. Accessibility tools serve important human needs. Deploying them responsibly matters as much as technical capability.

Building the Business Case for Adoption

For organizations considering deploying Alleye, the business case involves several factors.

Cost-benefit analysis starts with understanding benefits. For blind employees, Alleye could improve workplace communication, increase confidence, and enhance performance. Quantifying these is difficult. But clear benefits exist. Compare the cost ($700 per employee) to productivity gains and employee satisfaction.
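To make the cost side of that comparison concrete, here is a small worked calculation. The helper names are hypothetical, and the inputs are placeholders loosely based on figures quoted in this article ($700 per employee and a 29/month subscription). How those figures actually combine is an assumption here, so substitute real quotes before relying on the result.

```python
def total_cost(upfront: float, monthly: float, months: int) -> float:
    """Up-front cost plus subscription fees over the given number of months."""
    return upfront + monthly * months


def pilot_cost(users: int, upfront: float, monthly: float, months: int) -> float:
    """Total cost of equipping a group of users for the same period."""
    return users * total_cost(upfront, monthly, months)


# Placeholder inputs (assumptions): 700 up front, 29 per month, 12 months.
print(total_cost(700, 29, 12))      # 1048
print(pilot_cost(10, 700, 29, 12))  # 10480
```

Grants or insurance coverage would reduce the `upfront` input, which is one reason to explore them before estimating a budget.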

Return on investment timeframe depends on the organization. For small nonprofits serving blind individuals, immediate ROI might not exist. But quality of life improvements justify adoption. For businesses, ROI depends on how much employees' communication ability impacts their role.

Insurance and grant considerations are crucial. Some insurance programs cover assistive technology. Some nonprofits offer equipment grants. These can reduce or eliminate out-of-pocket costs. Organizations should explore these options systematically.

Pilot programs make sense before large-scale deployment. Identify 5-10 interested users. Deploy Alleye. Gather feedback. Measure outcomes. Use this data to inform broader decisions. Pilots minimize risk while building organizational knowledge.

Scalability matters for larger organizations. As numbers increase, per-unit costs might decrease. Training infrastructure becomes important. Support systems need development. Organizations scaling Alleye should plan for these needs.

Integration with existing accessibility infrastructure is necessary. Alleye shouldn't replace other accessibility features. It should complement them. Ensure compatibility with other assistive technology.

Sustainability requires ongoing support. Hardware breaks. Subscriptions continue. Software updates happen. Organizations need long-term commitment to maintaining the system, not just one-time deployment.

The business case isn't primarily financial. It's about improving lives and operations. Organizations that view accessibility as a value rather than a cost find deployment easier to justify.

Addressing Common Concerns and Misconceptions

As Alleye gains attention, a few likely concerns and misconceptions deserve addressing.

"Isn't this just blind people using technology to spy on others?" No. Alleye simply allows detection of emotional expressions in conversation. Sighted people do this constantly with their eyes. Alleye doesn't record anything. It doesn't store data. It's not surveillance. It's literally replacing visual perception with haptic perception.

"Won't this make blind people dependent on technology?" Potentially, but dependency on assistive technology isn't inherently bad. Blind people depend on guide dogs, white canes, and screen readers. These tools are valuable precisely because they create capability. Alleye creates similar capability through technology.

"Is facial recognition technology accurate enough?" Modern facial recognition is quite accurate for basic emotions. It's not perfect, but it's reliable enough for most practical purposes. Occasional errors are outweighed by consistent useful information.

"Doesn't this cost too much?" Compared to other accessibility solutions, Alleye's pricing is reasonable. Is it accessible to everyone who needs it? Not yet. But price will likely decrease as production scales. Initially high prices are typical for new technology.

"Why not just train people to read expressions?" Some people can train to interpret expressions better. But some genuinely can't, regardless of training. Neurodivergent people often struggle specifically because their brains process facial information differently. Technology solves what training can't.

"Won't other companies copy this?" Probably, once Alleye proves successful. That's fine. Market competition drives improvement and lowers prices. Hapware's first-mover advantage and specialization will help them stay competitive.

The Intersection of Accessibility and Consumer Tech

Alleye represents a broader trend: accessibility features becoming mainstream consumer tech rather than niche specialized tools.

This happens through several mechanisms. First, accessibility problems often have universal solutions. Captions help deaf people but also work in noisy environments. Voice control helps people with mobility challenges but also works while driving. Facial expression detection helps neurodivergent people but also assists anyone wanting emotional context without looking at someone's face.

Second, platforms enable specialization. By opening their ecosystems, companies like Meta allow developers to build specialized solutions rather than generalizing across all users. This creates better products because specialists understand their target users deeply.

Third, market incentives align. Accessibility used to be seen as an obligation or charity. Now it's recognized as a market opportunity. Companies building accessibility tools can achieve profitability. This makes the ecosystem sustainable.

Fourth, user expectations evolve. As more people have positive experiences with assistive technology, acceptance increases. What seems niche today becomes expected in a few years. Remember when smartphone accessibility was experimental? Now it's standard.

Alleye fits this larger pattern. It's solving a genuine accessibility problem, but doing so through technology that could eventually appeal to broader audiences. As it matures and prices drop, adoption will increase. Ultimately, the best accessibility solutions are ones that serve people with disabilities while improving the experience for everyone.

FAQ

What exactly is Alleye?

Alleye is a haptic wristband that pairs with Ray-Ban Meta smart glasses to detect facial expressions and convert them into vibration patterns. Users feel these vibrations on their wrist, allowing them to understand emotional expressions and nonverbal cues in real time without visual input.

How does Alleye detect facial expressions?

Ray-Ban Meta glasses capture video of the person you're talking to. This video streams to the Alleye app on your smartphone, where computer vision algorithms analyze facial landmarks like eye position, mouth corners, and cheek movement. When these landmarks create patterns corresponding to specific expressions, the app sends vibration commands to the wristband through Bluetooth, creating distinct haptic patterns.
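As a rough illustration of the pipeline described above, the sketch below turns a few facial landmarks into an expression label and then into a vibration pattern. Everything in it is hypothetical: the landmark fields, the thresholds, the pattern encoding, and the `classify_expression` and `pattern_for` helpers are assumptions made for illustration, not Hapware's actual algorithm or API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Landmarks:
    """Hypothetical landmark heights, normalized to the face box (y grows downward)."""
    mouth_left_y: float
    mouth_right_y: float
    mouth_center_y: float
    upper_lip_y: float
    lower_lip_y: float


# Hypothetical haptic patterns: sequences of (motor_name, duration_ms) pulses.
PATTERNS: Dict[str, List[Tuple[str, int]]] = {
    "smile": [("left", 80), ("right", 80)],
    "jaw_drop": [("top", 60), ("bottom", 120)],
}


def classify_expression(lm: Landmarks) -> Optional[str]:
    """Return a coarse expression label, or None if nothing stands out."""
    mouth_open = lm.lower_lip_y - lm.upper_lip_y
    corner_avg = (lm.mouth_left_y + lm.mouth_right_y) / 2
    corner_lift = lm.mouth_center_y - corner_avg  # positive when corners sit higher
    if mouth_open > 0.15:   # assumed threshold for an open mouth
        return "jaw_drop"
    if corner_lift > 0.03:  # assumed threshold for raised mouth corners
        return "smile"
    return None


def pattern_for(label: Optional[str]) -> List[Tuple[str, int]]:
    """Look up the pulses to send to the wristband; empty list means no vibration."""
    return PATTERNS.get(label, []) if label else []


smiling = Landmarks(0.70, 0.70, 0.75, 0.74, 0.76)
print(classify_expression(smiling))               # smile
print(pattern_for(classify_expression(smiling)))  # [('left', 80), ('right', 80)]
```

In a real system the landmarks would come from a face-tracking model and the pulses would be sent over Bluetooth; both steps are left out here because their details are not described in the article.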

What are the main benefits of Alleye for blind users?

Alleye provides real-time access to emotional context during conversations without requiring active prompting or audio narration. This creates more natural social interactions. Users understand whether people are smiling, confused, frustrated, or happy. The system works continuously, providing ambient feedback rather than interrupting conversation flow. For people who've been blind their entire lives, this represents new access to social information.

How quickly can users learn to distinguish vibration patterns?

Most users master multiple patterns within minutes during the learning phase. Hapware designed patterns to be metaphorically intuitive: a downward vibration feels like a jaw drop, lateral vibration feels like a wave. This intuitive mapping means users don't memorize arbitrary patterns. Instead, their bodies naturally interpret the feedback. After brief practice, muscle memory develops and pattern recognition becomes automatic.

How much does Alleye cost?

The Alleye wristband starts at

Does Alleye require constant internet connection?

Alleye's core functionality runs locally on your smartphone. The app analyzes video on your device, not in the cloud. This means you need only local Bluetooth connectivity between your wristband and phone. Internet might be required for app updates or possibly for certain features, but conversational use doesn't depend on internet access.

How is Alleye different from Meta's existing accessibility features?

Meta's Live AI accessibility feature requires active prompting to describe what cameras see. You must ask it to identify expressions. Alleye works continuously and automatically, providing real-time feedback about expressions. Additionally, Meta's feature gives generic descriptions ("someone is present") rather than specific emotional information. Alleye focuses specifically on facial expressions and nonverbal cues.

Can Alleye work with other smart glasses besides Ray-Ban Meta?

Currently, Alleye specifically pairs with Ray-Ban Meta glasses. However, as smart glasses platforms proliferate and Meta opens its API further, Alleye could potentially support other devices in the future. Technical compatibility depends on device capabilities and software support.

Is Alleye helpful for neurodivergent users?

Yes. Many neurodivergent people, particularly those on the autism spectrum, struggle interpreting facial expressions. Alleye provides explicit emotional information rather than requiring interpretation of subtle visual cues. For users who find eye contact challenging or process faces differently, haptic feedback offers a valuable alternative channel for social information.

What are the privacy implications of using Alleye?

Alleye's privacy model is strong. Video analysis happens locally on your phone, not on cloud servers. No footage is stored or shared. Data never leaves your device. Compared to many AI-powered features requiring cloud processing, Alleye prioritizes user privacy significantly. However, you are analyzing other people's expressions without their explicit consent, which raises philosophical questions worth considering.

How accurate is Alleye's facial expression detection?

Modern facial expression recognition algorithms achieve 85-95% accuracy for basic emotions like happiness, sadness, and confusion. Accuracy varies based on lighting conditions, face angle, and other factors. Occasional errors occur, but the system is reliable enough for practical use. Users should understand that Alleye provides useful information, not perfect data.
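To put that accuracy range in practical terms, the short calculation below estimates how many misreads to expect in one conversation. It assumes, purely for illustration, that each detected cue is independently correct with the stated probability and that a conversation contains 40 cues. Neither assumption comes from Hapware, and `expected_misreads` is a hypothetical helper.

```python
def expected_misreads(cues: int, accuracy: float) -> float:
    """Expected number of wrong readings if each cue is right with probability `accuracy`."""
    return cues * (1.0 - accuracy)


# Assumed conversation length of 40 detected cues.
for accuracy in (0.85, 0.95):
    print(accuracy, round(expected_misreads(40, accuracy), 1))
# 0.85 6.0
# 0.95 2.0
```

Under these assumptions, a user would see somewhere between two and six misreads per conversation, which is why treating the feedback as a hint rather than a fact is sensible.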

Can Alleye detect micro-expressions or subtle emotions?

Currently, Alleye focuses on major facial expressions. Subtle micro-expressions that appear for fractions of a second are more difficult to detect. Distinguishing between forced smiles and genuine ones isn't yet possible. Future improvements to algorithms might enable more nuanced detection, but the current version handles primary emotional expressions well.

Conclusion: A New Era of Accessibility Innovation

Alleye represents something important beyond its immediate technical capability. It demonstrates that accessibility innovation is becoming mainstream, that platforms can enable specialized developers to solve real problems, and that assistive technology can be both effective and commercially viable.

The fact that this product exists at all stems from Meta's decision to open its smart glasses platform. This architectural choice created opportunity for Hapware to build something genuinely valuable. Without that openness, innovation moves slower. Companies innovate on their own terms, which often means neglecting niche accessibility needs.

The bigger story is about how technology can solve human problems when designed with intention. Alleye wasn't built by accident. It emerged from Hapware's recognition that blind people and neurodivergent people face a genuine gap: they can't easily access the emotional context of face-to-face conversation. The company built technology to fill that gap. Not perfectly, but meaningfully.

For individuals who are blind, low vision, or neurodivergent, Alleye offers something potentially transformative. Real-time emotional information changes how you experience social interaction. Conversations become richer. Understanding other people becomes easier. This isn't flashy innovation. It's practical technology solving real problems.

The challenges shouldn't be minimized. Cost remains prohibitive for some. Technology isn't perfect. Long-term real-world usability requires ongoing refinement. But these are problems of iteration, not fundamental failure. Alleye works. It solves a real problem. It opens new possibilities for accessibility.

Looking forward, expect similar innovations. As more companies open developer platforms, more specialized developers will build solutions addressing overlooked accessibility needs. Competition will drive improvements. Costs will decrease. Integration will deepen. The accessibility technology ecosystem is entering a rapid growth phase.

For people building accessibility solutions, Alleye demonstrates what's possible when specialization meets platform openness. Focus on solving real problems for real users. Collaborate with community members who have lived experience. Design intuitively. Make technology disappear into usefulness.

For platforms and companies, Alleye demonstrates the value of openness. Closed ecosystems innovate slower than open ones. Platform companies that enable developers unlock innovation they couldn't achieve internally. This benefits users, developers, and ultimately platforms themselves.

For the broader tech industry, Alleye reminds us that accessibility and universal design often solve problems for wider audiences than originally intended. Solutions built for specific communities eventually benefit many others. Investing in accessibility isn't about serving small populations. It's about building better technology for everyone.

Alleye is version 1.0 of facial expression haptics for accessibility. It's impressive but imperfect. It's pioneering but will be surpassed. What matters is that it exists, that it works, and that it opens new possibilities for how technology can enhance human communication. That's how real progress happens.

Key Takeaways

- Alleye's haptic wristband uses intuitive vibration patterns to translate facial expressions into physical feedback that users learn in minutes

- The system costs 29/month subscription, serving blind, low vision, and neurodivergent users seeking real-time emotional context

- Meta's open platform approach to Ray-Ban smart glasses enabled Hapware's specialized innovation, demonstrating the power of ecosystem openness for accessibility

- Computer vision algorithms achieve 85-95% accuracy detecting basic emotions locally on smartphones with no cloud processing, preserving user privacy

- Alleye represents an emerging trend where accessibility solutions built for specific communities solve universal user problems, benefiting broader audiences
