Building AI with a Physical World Understanding: Yann LeCun's Vision [2025]

Last month, when Yann LeCun announced that his startup, Advanced Machine Intelligence (AMI), had raised $1 billion, the AI community buzzed with speculation and excitement. AMI is not just another AI venture: it aims to build AI systems that understand the physical world. LeCun, a pioneer in artificial intelligence, envisions machines that reason about the world the way humans do, challenging the longstanding dominance of language models in AI. According to TechCrunch, the funding round underscores the industry's confidence in LeCun's vision.

TL;DR

- Yann LeCun's AMI raises $1 billion: The goal is to build AI that can understand and reason about the physical world.

- Beyond language models: LeCun critiques current AI's reliance on language models, advocating for models grounded in physical experience.

- New AI paradigms: Focus on developing AI that mimics human-like perception and interaction with the environment.

- Real-world applications: Potential to revolutionize industries like robotics, autonomous vehicles, and healthcare.

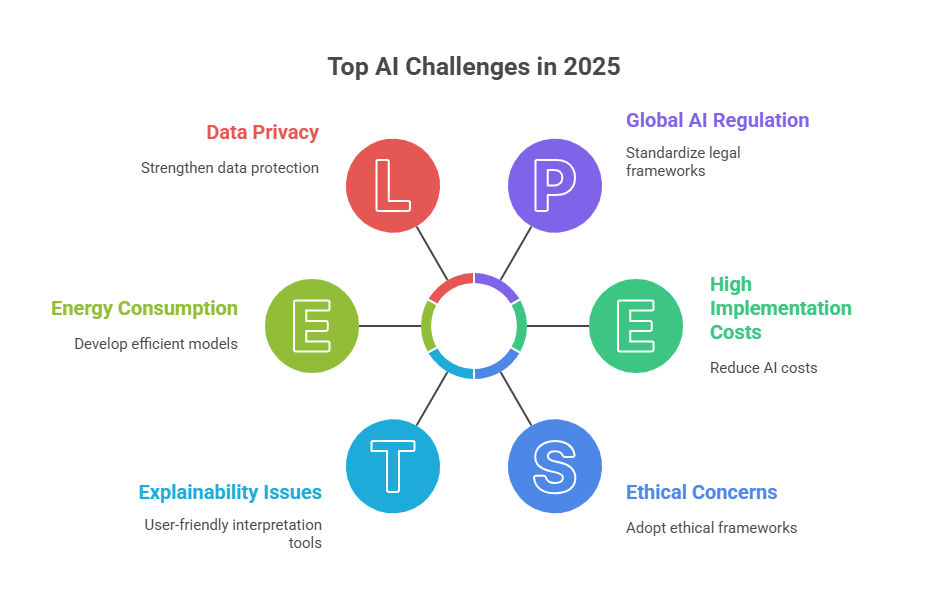

- Challenges and solutions: Addressing data complexity, sensor integration, and ethical considerations.

The Current State of AI: A Language-Centric Approach

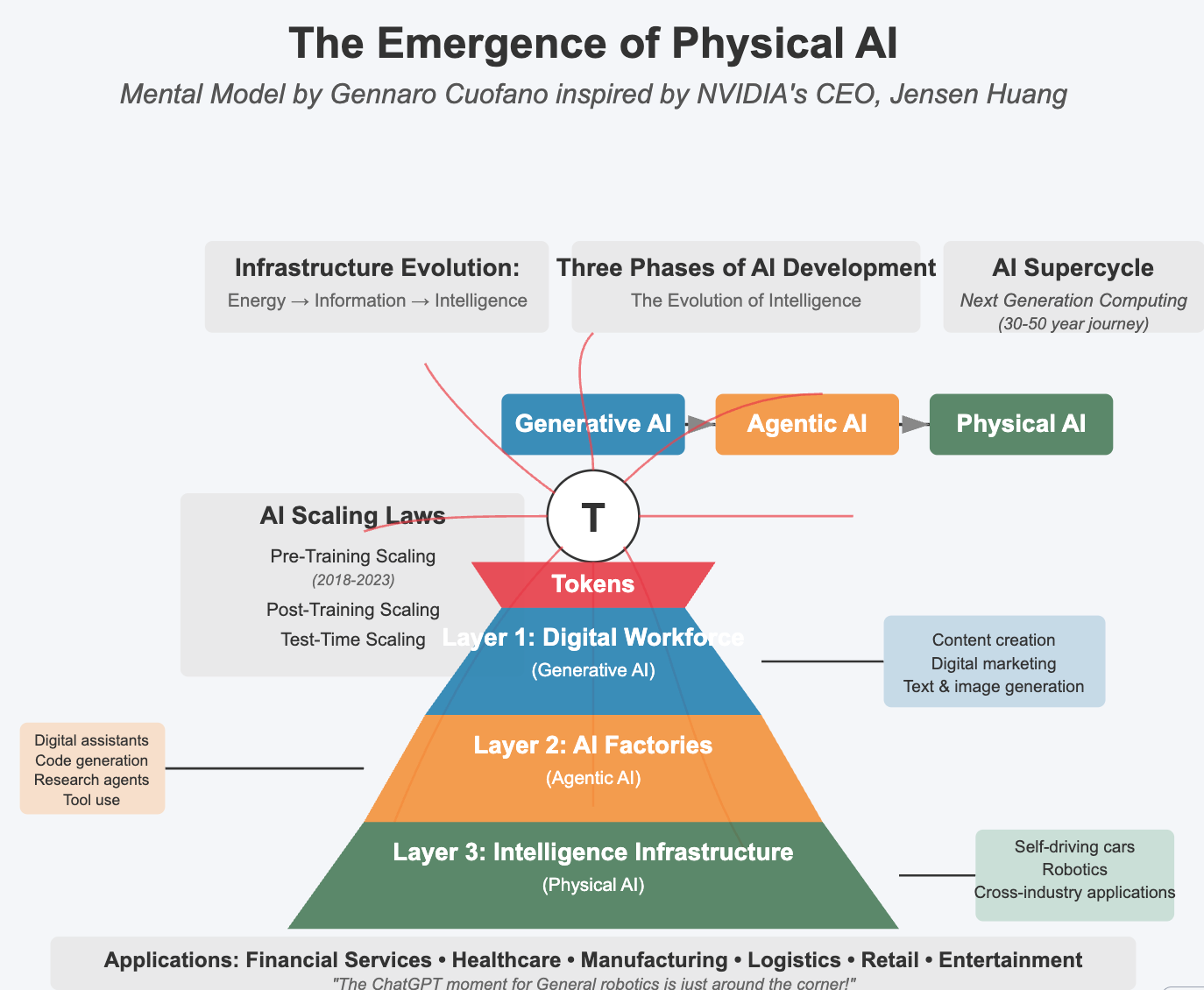

Before diving into LeCun's vision, it helps to understand the current AI landscape, which relies heavily on language models. Large Language Models (LLMs) like GPT-5.4 have dominated the field by demonstrating remarkable capabilities in understanding and generating human-like text. However, these models often lack a true understanding of the world they describe, an issue LeCun aims to address.

The Limitations of Language Models

While LLMs are impressive in their ability to process and generate language, they inherently lack a grounding in the physical world. This limitation manifests in several ways:

- Contextual Misunderstandings: LLMs can generate coherent text but often miss the nuances of physical interactions. For example, an AI might understand the word "apple" but not the tactile experience of holding one.

- Lack of Sensory Input: Text-only language models do not process sensory data like images, sounds, or tactile feedback, limiting their ability to perceive the world.

- Static Learning: These models rely heavily on pre-existing data and struggle with real-time learning from physical interactions.

The Need for Physical World Models

To create AI that truly understands its environment, it's crucial to integrate physical world models, which focus on perceiving and interacting with the environment through multiple sensory inputs. This approach could lead to AI systems that:

- Understand Causality: By interacting with the world, AI can learn cause-and-effect relationships, much like humans do from infancy.

- Adapt to New Situations: Physical world models enable real-time learning and adaptation to new, unforeseen scenarios.

- Enhance Decision-Making: With a more profound understanding of physical contexts, AI can make more informed decisions.

AMI's Vision: Bridging the Gap Between AI and the Physical World

Yann LeCun's AMI aims to develop models that blend cognitive and physical understanding. This ambitious goal requires overcoming several technical and conceptual challenges.

The Core Principles of AMI's Approach

AMI's approach focuses on developing AI systems with the following core principles:

- Multimodal Learning: Integrating data from various sources, including visual, auditory, and tactile inputs, to create a comprehensive understanding of the environment.

- Self-Supervised Learning: Allowing AI to learn from the world without explicit human guidance, similar to how children learn through exploration.

- Dynamic Interaction: Enabling AI to interact with the physical world, test hypotheses, and learn from the outcomes.

Real-World Use Cases

The potential applications of AI that understand the physical world are vast and transformative:

- Robotics: AI can enhance robotic systems, enabling them to navigate complex environments, perform delicate tasks, and collaborate with humans safely.

- Autonomous Vehicles: With a better understanding of physical and spatial contexts, AI can significantly improve the safety and efficiency of self-driving cars.

- Healthcare: AI could revolutionize medical diagnostics and treatment planning by understanding patient symptoms in the context of real-world environments.

- Manufacturing: AI can optimize processes by understanding the physical properties of materials and the dynamics of machinery.

Technical Challenges and Solutions

Building AI systems that comprehend the physical world involves several technical hurdles that require innovative solutions.

Data Complexity and Integration

Challenge: Integrating diverse data types from multiple sensors into a coherent model is complex.

Solution: Develop advanced data fusion techniques to combine visual, auditory, and tactile data seamlessly. This involves creating algorithms that can process and interpret different types of information simultaneously.
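One simple form of data fusion is to align sensor streams by timestamp and concatenate their feature vectors. The sketch below is illustrative only and is not AMI's method: the `Reading` type, the `fuse` function, and the 50 ms skew tolerance are all assumptions made for this example, and each sensor is assumed to deliver a non-empty, time-sorted list of pre-extracted features.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class Reading:
    t: float         # timestamp in seconds
    features: list   # feature vector already extracted from the raw signal


def nearest(readings, t):
    """Return the reading closest in time to t (readings must be sorted by t)."""
    times = [r.t for r in readings]
    i = bisect_left(times, t)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = readings[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda r: abs(r.t - t))


def fuse(camera, microphone, touch, max_skew=0.05):
    """For each camera frame, concatenate the nearest audio and touch features.

    Frames are skipped when either companion reading is more than
    max_skew seconds away, so stale data is never fused in.
    """
    fused = []
    for frame in camera:
        audio = nearest(microphone, frame.t)
        contact = nearest(touch, frame.t)
        if max(abs(audio.t - frame.t), abs(contact.t - frame.t)) > max_skew:
            continue
        fused.append(frame.features + audio.features + contact.features)
    return fused
```

Concatenating aligned features is only one option. A production system might instead fuse learned embeddings or weight each sensor by its reliability.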

Sensor and Hardware Limitations

Challenge: Current sensors may not provide the level of detail needed for comprehensive understanding.

Solution: Invest in developing high-resolution sensors and hardware that can capture nuanced physical interactions. Edge computing can also help by processing this data in real time, close to where it is captured.

Ethical and Safety Considerations

Challenge: Ensuring AI systems interact safely with humans and the environment.

Solution: Implement rigorous testing and safety protocols to prevent unintended consequences. Establish ethical guidelines to govern AI interactions in sensitive environments, such as healthcare and autonomous driving.
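One common pattern for safety protocols is a gate that sits between the AI's output and the actuators and enforces hard limits regardless of what the model requests. The following is a minimal sketch under assumed names and thresholds (`SafetyLimits`, `gate_command`, and the example values are hypothetical), not a certified safety mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyLimits:
    max_speed_mps: float     # absolute speed cap in metres per second
    min_clearance_m: float   # stop if any obstacle is closer than this


def gate_command(requested_speed_mps, nearest_obstacle_m, limits):
    """Return a speed that respects the limits.

    The robot stops outright when an obstacle is inside the clearance
    zone; otherwise the requested speed is clamped to the allowed range.
    """
    if nearest_obstacle_m < limits.min_clearance_m:
        return 0.0
    return max(-limits.max_speed_mps,
               min(requested_speed_mps, limits.max_speed_mps))


limits = SafetyLimits(max_speed_mps=1.5, min_clearance_m=0.5)
print(gate_command(3.0, nearest_obstacle_m=2.0, limits=limits))  # clamped to 1.5
print(gate_command(1.0, nearest_obstacle_m=0.3, limits=limits))  # 0.0, too close
```

Keeping the gate separate from the learned model means its behavior can be tested exhaustively without retraining anything.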

Practical Implementation Guide

For developers and companies looking to integrate physical world understanding into their AI systems, here is a practical roadmap:

Step 1: Define Objectives and Scope

Begin by clearly defining the objectives and scope of your AI project. Determine which physical interactions are most critical to your goals and how AI can enhance these processes.

Step 2: Select Appropriate Sensors

Choose sensors that provide the necessary data for your AI model. Consider factors such as resolution, range, and compatibility with existing systems.
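Sensor selection can be treated as filtering a candidate list against hard requirements. The sketch below assumes a hypothetical `SensorSpec` record with the three factors mentioned above (resolution, range, and interface compatibility); the field names and units are illustrative choices, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorSpec:
    name: str
    resolution_px: int      # horizontal resolution in pixels
    range_m: float          # maximum useful range in metres
    interfaces: frozenset   # supported connections, e.g. {"usb3", "ethernet"}


def shortlist(candidates, min_resolution_px, min_range_m, available_interfaces):
    """Keep sensors that meet every requirement, highest resolution first."""
    suitable = [
        s for s in candidates
        if s.resolution_px >= min_resolution_px
        and s.range_m >= min_range_m
        and s.interfaces & available_interfaces   # at least one shared interface
    ]
    return sorted(suitable, key=lambda s: s.resolution_px, reverse=True)
```

In practice, cost, power draw, and latency would likely join the list of criteria.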

Step 3: Develop Multimodal Models

Create models that can process data from multiple modalities. Use machine learning frameworks that support multimodal data processing, such as TensorFlow or PyTorch.
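A common multimodal architecture gives each modality its own encoder, concatenates the resulting embeddings, and passes them to a shared output layer (often called late fusion). The sketch below shows only the forward pass, in plain Python with random untrained weights, so it runs without dependencies. The class name, dimensions, and modality names are assumptions for illustration; a real model would be built and trained in a framework such as PyTorch or TensorFlow.

```python
import random


def linear(weights, x):
    """Dense layer without bias: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def relu(x):
    return [max(0.0, v) for v in x]


def random_matrix(rng, rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class LateFusionModel:
    """One small encoder per modality, concatenated into a shared head."""

    def __init__(self, input_dims, embed_dim=4, n_outputs=2, seed=0):
        rng = random.Random(seed)
        # input_dims maps modality name -> size of its feature vector.
        self.encoders = {
            name: random_matrix(rng, embed_dim, dim)
            for name, dim in input_dims.items()
        }
        self.head = random_matrix(rng, n_outputs, embed_dim * len(input_dims))

    def forward(self, inputs):
        fused = []
        for name in sorted(self.encoders):   # fixed order keeps the layout stable
            fused += relu(linear(self.encoders[name], inputs[name]))
        return linear(self.head, fused)


model = LateFusionModel({"vision": 8, "audio": 3, "touch": 2})
scores = model.forward({"vision": [0.1] * 8, "audio": [0.2] * 3, "touch": [1.0, 0.0]})
```

Here `scores` holds two untrained output values. The point is the data flow from separate encoders to a fused representation, not the numbers themselves.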

Step 4: Implement Self-Supervised Learning

Incorporate self-supervised learning techniques to enable AI to learn from its interactions with the environment. This approach reduces the need for labeled data and allows for continuous learning.
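The core idea of self-supervised learning is that the training target comes from the data itself. A toy illustration is next-step prediction: the model sees a sensor reading and is trained to predict the reading that follows, so no human labels are involved. The function name, the linear model, and the learning rate below are assumptions chosen for a small signal scaled to roughly [-1, 1]; this is a sketch of the principle, not of any particular AMI technique.

```python
def train_next_step_predictor(series, lr=0.5, epochs=200):
    """Fit x[t+1] ≈ a * x[t] + b by gradient descent on mean squared error.

    Each training target is simply the next value in the stream,
    so the supervision signal is generated by the data itself.
    """
    a, b = 0.0, 0.0
    pairs = list(zip(series[:-1], series[1:]))   # (current, next) samples
    n = len(pairs)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for x, target in pairs:
            error = (a * x + b) - target
            grad_a += 2 * error * x / n
            grad_b += 2 * error / n
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b


# Toy signal: a damped oscillation that flips sign and shrinks by 10% per step.
signal = [(-0.9) ** k for k in range(21)]
a, b = train_next_step_predictor(signal)
```

On this noise-free signal the learned `a` should approach -0.9 and `b` should approach 0. Larger-scale approaches apply the same principle to images or video by predicting missing or future content.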

Step 5: Test and Iterate

Conduct extensive testing in controlled environments to ensure the safety and effectiveness of your AI systems. Use the feedback to refine models and improve performance.

Future Trends and Predictions

As AI continues to evolve, the integration of physical world understanding will likely drive several key trends:

Enhanced Human-AI Collaboration

AI systems that understand the physical world will become more adept at collaborating with humans, enabling seamless interaction in various settings, from factories to homes.

Personalized AI Experiences

With a deeper understanding of physical contexts, AI can offer more personalized experiences, adapting to individual preferences and real-world situations.

Expansion into New Domains

AI's ability to understand and interact with the physical world will open up new domains for AI applications, including agriculture, construction, and environmental monitoring.

Increasing Ethical and Regulatory Focus

As AI systems become more integrated into daily life, ethical and regulatory considerations will become increasingly important. Ensuring transparency and accountability in AI decision-making will be crucial.

Common Pitfalls and How to Avoid Them

In the journey to developing AI with physical world understanding, several common pitfalls can hinder progress:

Overreliance on Simulation

Pitfall: Relying too heavily on simulated environments can lead to models that perform well in theory but fail in real-world applications.

Solution: Balance simulation with real-world testing to ensure models are robust and adaptable.
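One way to keep this balance honest is to measure the gap between simulated and real-world results on the same task. The sketch below compares success rates from two sets of trials; the function names and the 10-point threshold are assumptions for illustration and should be tuned to the application.

```python
def success_rate(outcomes):
    """Fraction of trials that succeeded (outcomes is a list of booleans)."""
    return sum(outcomes) / len(outcomes)


def sim_to_real_gap(sim_outcomes, real_outcomes, max_gap=0.10):
    """Compare success rates and flag the model when real-world
    performance trails simulation by more than max_gap."""
    sim = success_rate(sim_outcomes)
    real = success_rate(real_outcomes)
    gap = sim - real
    return {
        "sim": sim,
        "real": real,
        "gap": gap,
        "needs_real_world_work": gap > max_gap,
    }


report = sim_to_real_gap(
    sim_outcomes=[True] * 19 + [False],        # 19 of 20 succeed in simulation
    real_outcomes=[True] * 7 + [False] * 3,    # 7 of 10 succeed on hardware
)
```

In this example the flag is set, because the model succeeds far more often in simulation than on real hardware.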

Neglecting User-Centric Design

Pitfall: Focusing solely on technical capabilities without considering user experience can lead to poor adoption.

Solution: Involve end-users in the design process to ensure AI systems meet their needs and preferences.

Ignoring Security Implications

Pitfall: Overlooking security risks can lead to vulnerabilities in AI systems.

Solution: Implement robust security measures to protect data and prevent unauthorized access.

Conclusion: A New Era of AI

Yann LeCun's vision for AI that understands the physical world represents a significant shift in the development of artificial intelligence. By moving beyond language models and embracing the complexities of physical interaction, LeCun and AMI are working toward a new era of AI capabilities. The journey is fraught with challenges, but the potential rewards, in the form of better AI performance and new applications, are immense. As we stand on the brink of this new frontier, the possibilities for AI are as boundless as the physical world it seeks to understand.

FAQ

What is the significance of AI understanding the physical world?

AI that understands the physical world can interact more naturally and effectively with its environment, leading to improved performance in tasks like robotics, autonomous driving, and healthcare.

How does AI with physical world understanding differ from language models?

Unlike language models, AI with physical world understanding processes sensory data and learns from real-world interactions, enabling it to comprehend context and causality.

What are the challenges in developing AI with physical world understanding?

Key challenges include data complexity, sensor limitations, and ensuring ethical and safe interactions with humans and the environment.

How can businesses implement AI with physical world understanding?

Businesses can start by defining objectives, selecting appropriate sensors, developing multimodal models, and incorporating self-supervised learning techniques.

What industries will benefit most from AI with physical world understanding?

Industries such as robotics, autonomous vehicles, healthcare, manufacturing, and agriculture stand to benefit significantly from AI systems that understand and interact with the physical world.

What future trends are expected in AI development?

Future trends include enhanced human-AI collaboration, personalized AI experiences, expansion into new domains, and increased focus on ethical and regulatory considerations.

How can developers avoid common pitfalls in AI development?

Developers should balance simulation with real-world testing, involve users in design processes, and implement robust security measures to avoid common pitfalls.

Key Takeaways

- Yann LeCun's startup, AMI, raised $1 billion to develop AI with physical world understanding.

- AI beyond language models enhances real-world interaction and decision-making.

- Key applications include robotics, autonomous vehicles, and healthcare.

- Technical challenges include data complexity and sensor limitations.

- Future trends involve personalized AI experiences and ethical considerations.

![Building AI with a Physical World Understanding: Yann LeCun's Vision [2025]](https://tryrunable.com/blog/building-ai-with-a-physical-world-understanding-yann-lecun-s/image-1-1773120888594.jpg)