The Anthropic DOD Lawsuit: Navigating AI Ethics and National Security [2025]

In a world increasingly governed by algorithms and data, the intersection of artificial intelligence (AI) and national security raises profound questions. Anthropic, a notable AI research company, is now in a legal battle with the U.S. Department of Defense (DOD). At the heart of the lawsuit is a clash between AI ethics and national security imperatives. This article examines the case and its implications for the AI industry, ethical AI development, and the future of technology governance.

TL;DR

- Ethical Concerns: The lawsuit highlights critical ethical considerations in AI deployment, particularly regarding surveillance and autonomous weapons.

- Industry Backlash: More than 30 employees of OpenAI and Google DeepMind support Anthropic's stance against the DOD's demands.

- Legal Precedent: This case could set significant legal precedents for AI usage in national security.

- Future Implications: The outcome may influence how tech companies negotiate government contracts regarding AI.

- AI Governance: There is a pressing need for clear guidelines and frameworks for ethical AI use in sensitive applications.

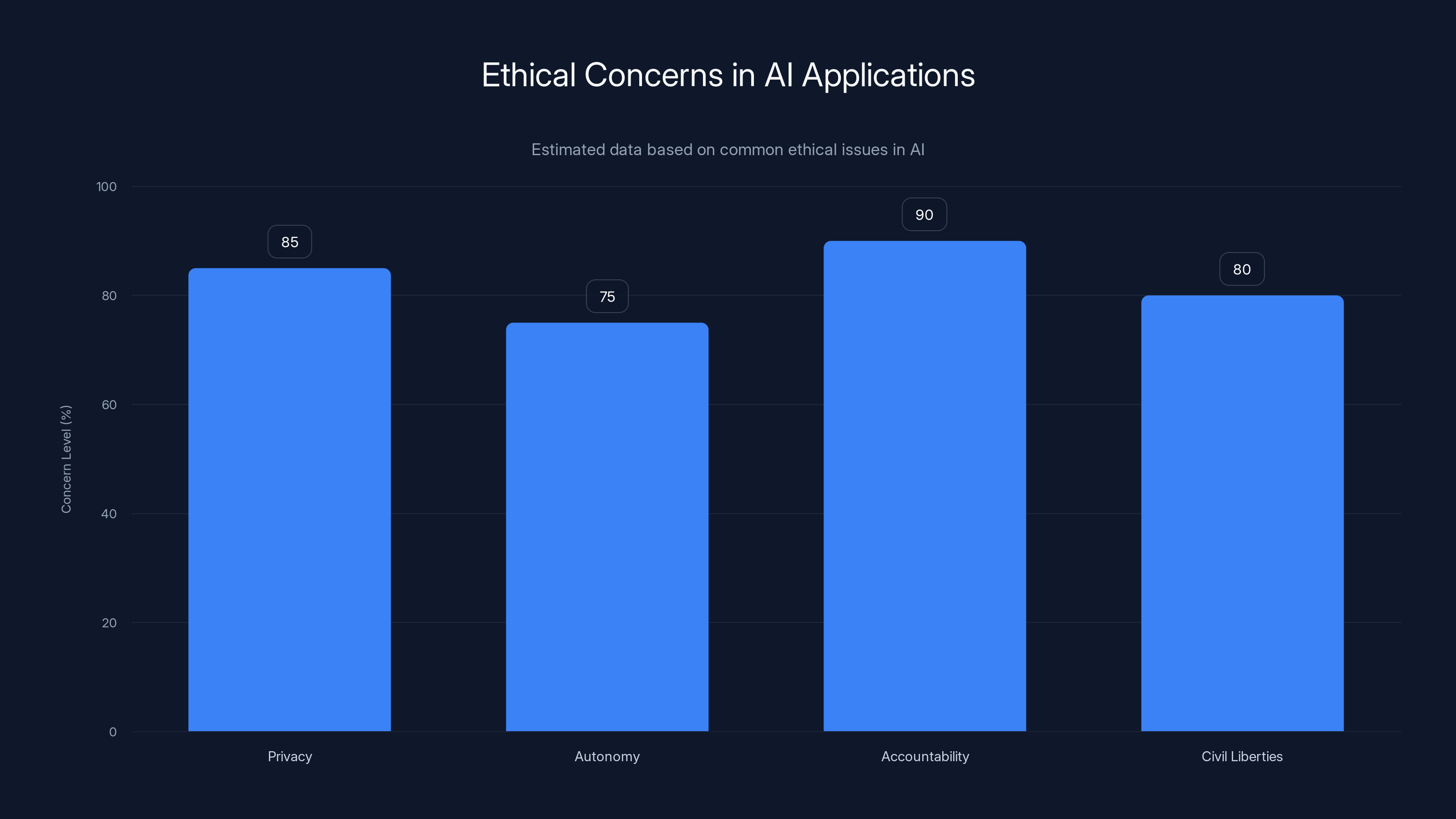

Privacy and accountability rank as the top ethical concerns in AI, with autonomy and civil liberties also drawing high levels of concern. Estimated data based on common discussions in AI ethics.

Understanding the Case

Anthropic, founded by former OpenAI researchers, is known for developing safe, human-compatible AI systems. The company's ethos revolves around ensuring that AI systems are aligned with human values and ethics. This philosophy clashed with the DOD's request to use Anthropic's technology for mass surveillance and potentially lethal autonomous weapons systems.

The DOD's designation of Anthropic as a supply chain risk, typically reserved for foreign threats, was unprecedented. This classification has significant ramifications, as it implies that Anthropic could be barred from future government contracts, a potentially crippling blow for any tech company.

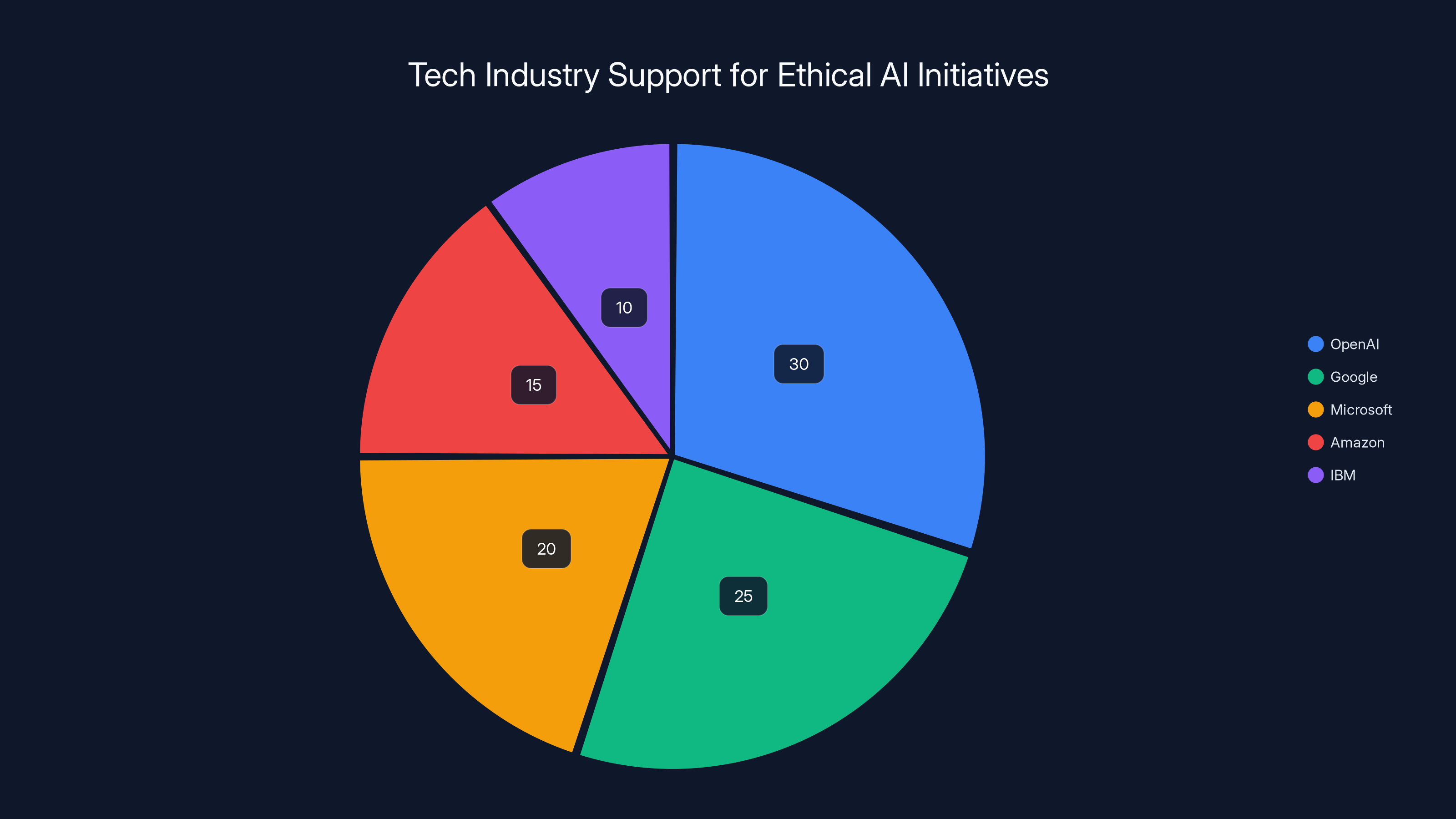

Estimated data suggests that employees at OpenAI and Google lead support for ethical AI initiatives.

The Role of Amicus Briefs

In response, Anthropic filed lawsuits challenging this designation. An amicus brief—a document filed by non-litigants with a strong interest in the subject matter—was submitted by over 30 employees from OpenAI and Google DeepMind, including Jeff Dean, a prominent AI researcher. The brief argues that the DOD's actions were arbitrary and could stifle innovation in the AI industry.

What is an Amicus Brief?

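Amicus curiae, Latin for "friend of the court," refers to a person or group that is not a party to a case but offers information or arguments the court may consider. Such briefs can be especially valuable in cases involving complex technical and ethical issues, because they bring perspectives the parties themselves may not present. Here, the brief supports Anthropic's position and emphasizes the broader industry impact of the DOD's stance.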

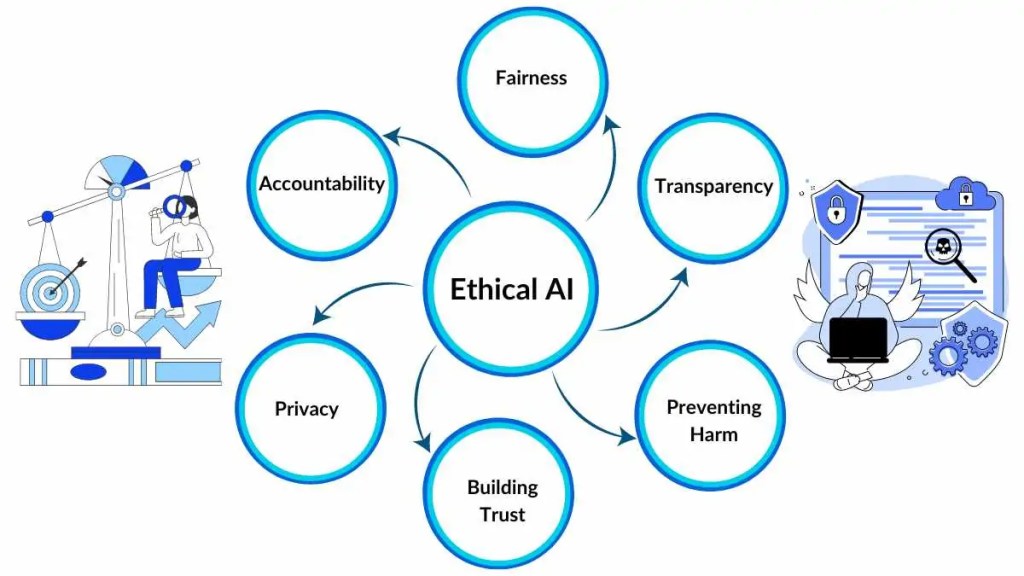

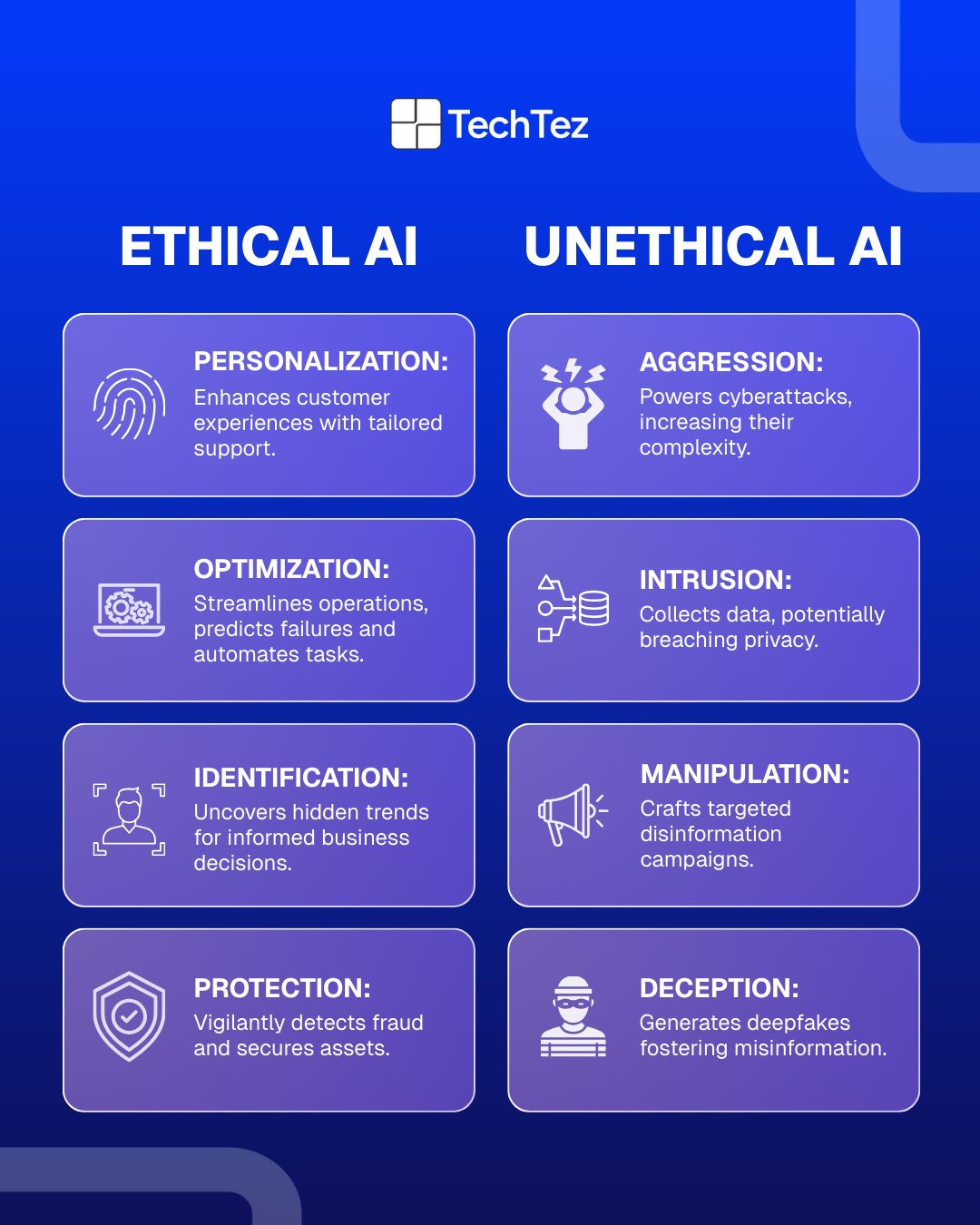

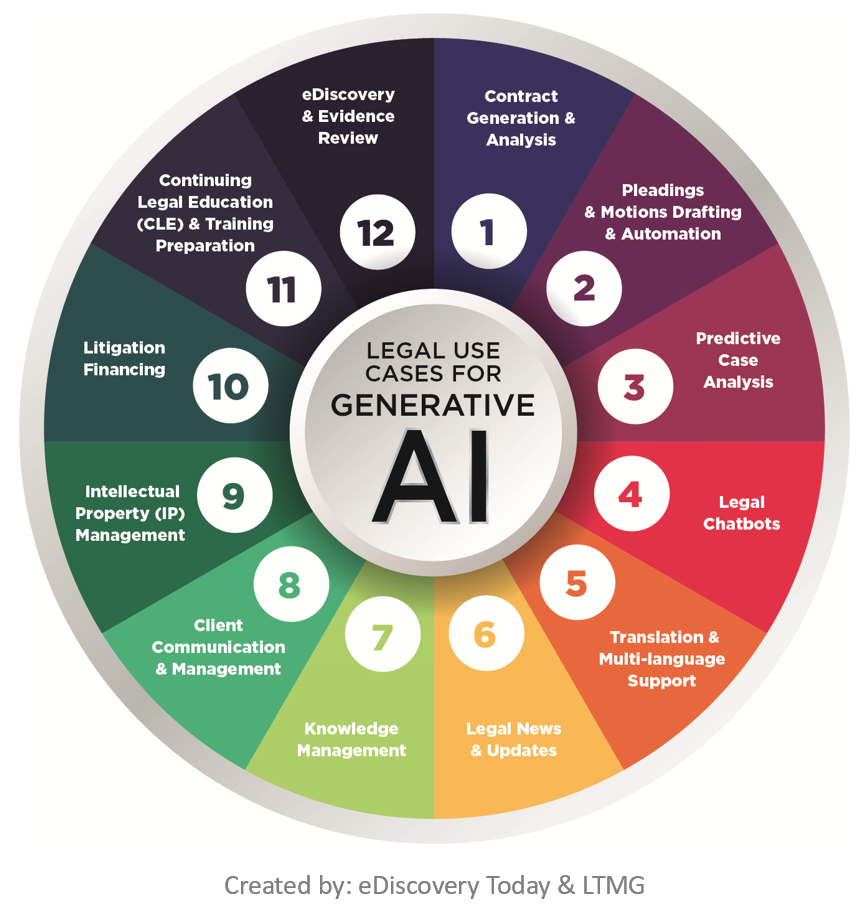

Ethical Dilemmas in AI

The Anthropic case underscores the ethical dilemmas inherent in AI development and deployment. AI's potential for surveillance and weaponization raises significant concerns about privacy, autonomy, and human rights.

AI in Surveillance

AI technologies enable unprecedented levels of surveillance, from facial recognition systems to data mining. These capabilities can enhance national security but also pose risks to individual privacy and civil liberties. The debate centers on finding a balance between security needs and ethical considerations.

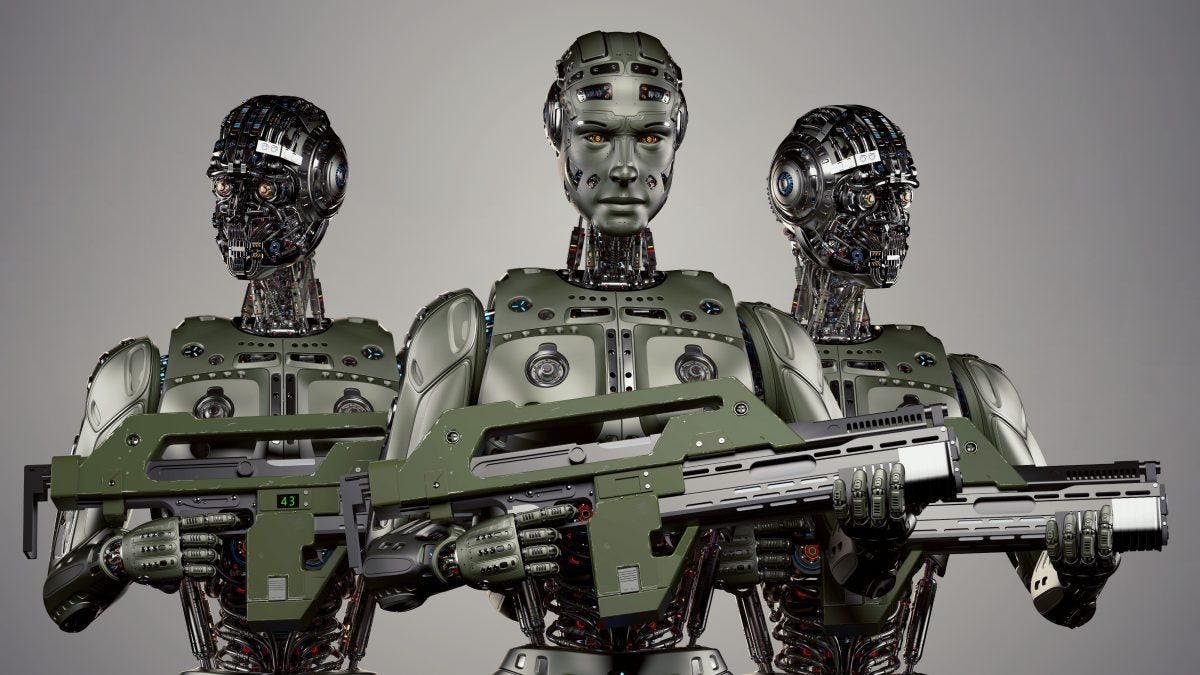

Autonomous Weapons

The prospect of AI-driven autonomous weapons introduces further ethical challenges. These systems could potentially make life-and-death decisions without human intervention, raising questions about accountability and the moral implications of delegating lethal force to machines.

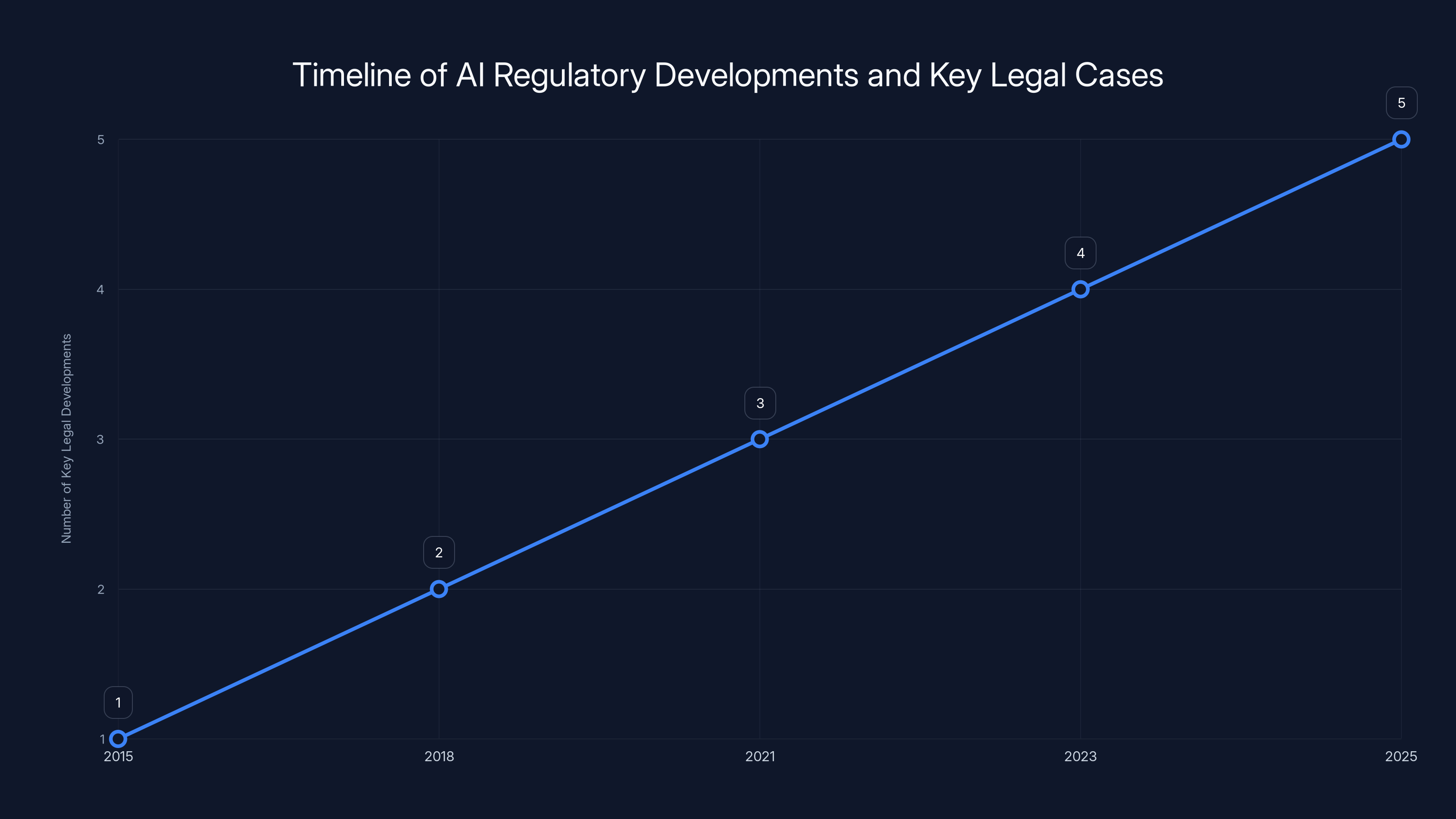

This timeline illustrates the progression of key legal cases and regulatory developments in AI governance, projecting an increase in legal activity as AI technologies advance. Estimated data.

Industry Response

The support for Anthropic from OpenAI and Google employees is indicative of a broader industry movement advocating for responsible AI development. This coalition highlights the importance of ethical considerations in AI and the need for industry-wide standards.

Why Industry Support Matters

The backing from employees at OpenAI and Google sends a strong message to policymakers about the tech community's commitment to ethical AI. It also underscores the potential consequences of government overreach in technology regulation.

Legal and Regulatory Implications

The Anthropic lawsuit could set important legal precedents for AI governance. If the court rules in favor of Anthropic, it may establish limitations on government use of AI technologies, especially in areas involving privacy and autonomy.

Future Legal Frameworks

As AI technologies evolve, so too must the legal frameworks governing their use. This case highlights the need for comprehensive regulations that address the unique challenges posed by AI, balancing innovation with ethical considerations.

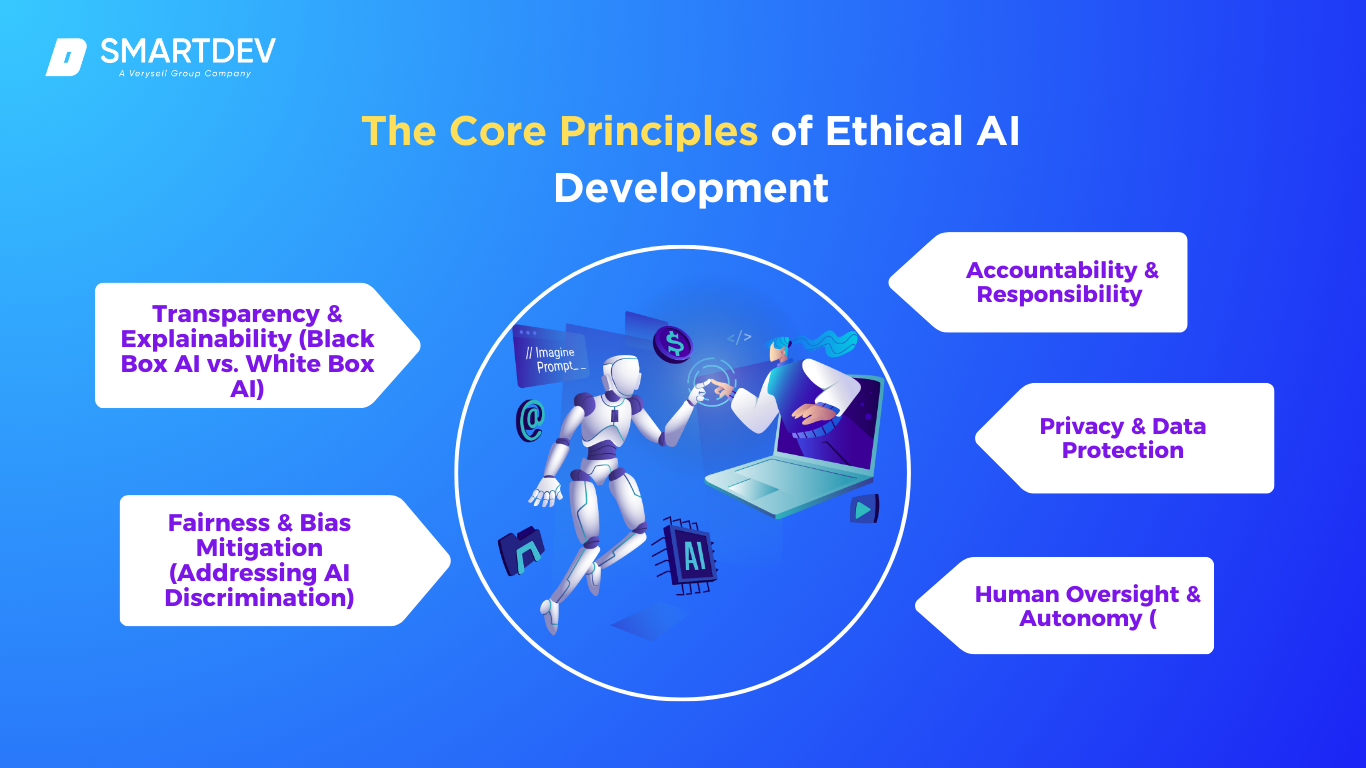

Best Practices for Ethical AI Development

For companies navigating the complex landscape of AI ethics and regulation, certain best practices can help mitigate risks and align with industry standards.

- Establish Ethical Guidelines: Develop clear guidelines for ethical AI use, focusing on transparency, accountability, and human oversight.

- Engage Stakeholders: Involve diverse stakeholders, including ethicists, policymakers, and affected communities, in AI development processes.

- Conduct Impact Assessments: Regularly assess the social and ethical impacts of AI technologies, and implement measures to address identified risks.

- Foster Industry Collaboration: Collaborate with other organizations to share insights and develop industry-wide standards for ethical AI.

Common Pitfalls and Solutions

Despite best efforts, companies may encounter challenges in implementing ethical AI practices. Here are some common pitfalls and solutions:

Pitfall: Lack of Transparency

Many AI systems operate as "black boxes," making it difficult to understand their decision-making processes.

Solution: Implement explainable AI techniques to increase transparency and build trust with users.
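As one concrete illustration of an explainable AI technique, the sketch below implements permutation feature importance, a model-agnostic method that shuffles one input feature at a time and measures how much accuracy drops. It is a minimal sketch in pure Python under stated assumptions: the `toy_model`, the `permutation_importance` helper, and the dataset are hypothetical and are not taken from any specific library or from the systems discussed in this article.

```python
import random
from typing import Callable, List, Sequence

Row = Sequence[float]
Model = Callable[[Row], int]


def accuracy(model: Model, X: Sequence[Row], y: Sequence[int]) -> float:
    """Fraction of rows where the model's prediction matches the label."""
    correct = sum(1 for row, label in zip(X, y) if model(row) == label)
    return correct / len(y)


def permutation_importance(
    model: Model,
    X: Sequence[Row],
    y: Sequence[int],
    n_repeats: int = 10,
    seed: int = 0,
) -> List[float]:
    """Average accuracy drop when each feature column is randomly shuffled.

    A larger drop suggests the model relies more heavily on that feature.
    """
    rng = random.Random(seed)  # seeded so results are reproducible
    baseline = accuracy(model, X, y)
    n_features = len(X[0])
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild each row with only feature j replaced by its shuffled value.
            X_perm = [list(row[:j]) + [value] + list(row[j + 1:])
                      for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical toy model that only looks at feature 0.
def toy_model(row: Row) -> int:
    return int(row[0] > 0.5)


# Hypothetical dataset: feature 0 alternates high/low, feature 1 is a row index.
X = [[0.8 if i % 2 == 0 else 0.2, float(i)] for i in range(20)]
y = [toy_model(row) for row in X]

print(permutation_importance(toy_model, X, y))
```

Because the toy model ignores feature 1, its importance comes out as zero, while shuffling feature 0 lowers accuracy and yields a positive score. In practice, teams would typically compute this on held-out data with an established library rather than a hand-rolled version.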

Pitfall: Bias in AI Models

AI models can inadvertently perpetuate biases present in training data, leading to unfair outcomes.

Solution: Regularly audit AI models for bias and implement strategies to mitigate it, such as diverse training datasets and bias correction algorithms.
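A bias audit can start with something as simple as comparing how often a model produces a positive outcome for each group. The sketch below computes per-group selection rates, the gap between the highest and lowest rate (the demographic parity difference), and their ratio, flagging a ratio below 0.8 in the spirit of the "four-fifths rule" from U.S. employment-selection guidance. The function names, the 0.8 default, and the sample data are illustrative assumptions, and a single metric like this is only one input to a fuller audit.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence


def selection_rates(predictions: Sequence[int],
                    groups: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Share of positive predictions (1s) within each group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    if not predictions:
        raise ValueError("cannot audit an empty set of predictions")
    totals: Dict[Hashable, int] = defaultdict(int)
    positives: Dict[Hashable, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def parity_audit(predictions: Sequence[int],
                 groups: Sequence[Hashable],
                 ratio_threshold: float = 0.8) -> Dict[str, object]:
    """Compare selection rates across groups and flag large disparities."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    lowest = min(rates.values())
    # Ratio of lowest to highest rate; treat "nobody selected" as parity.
    ratio = lowest / highest if highest > 0 else 1.0
    return {
        "rates": rates,
        "parity_gap": highest - lowest,
        "impact_ratio": ratio,
        "flagged": ratio < ratio_threshold,
    }


# Hypothetical predictions for two groups, "a" and "b".
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
report = parity_audit(preds, groups)
print(report)
```

In this sample, group "a" is selected at a rate of 0.75 and group "b" at 0.25, so the impact ratio (about 0.33) falls below 0.8 and the report is flagged. Selection-rate parity ignores whether predictions are correct, so audits commonly pair it with comparisons of error rates across groups.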

Pitfall: Inadequate Stakeholder Engagement

Failing to involve stakeholders in AI development can result in technologies that do not align with societal values.

Solution: Foster open dialogue with stakeholders and incorporate their feedback throughout the development process.

Future Trends and Recommendations

As AI continues to evolve, several trends and recommendations can guide ethical AI development and deployment:

Trend: Increased Focus on AI Governance

Governments and organizations are likely to place greater emphasis on AI governance, developing policies and frameworks to ensure ethical and responsible AI use.

Trend: Emergence of AI Ethics Certifications

AI ethics certifications are likely to emerge, giving companies a way to demonstrate their commitment to ethical AI practices.

Recommendation: Prioritize Ethical AI in Education

Educational institutions should incorporate AI ethics into curricula, preparing future developers and policymakers to navigate the complexities of AI.

Conclusion

The Anthropic DOD lawsuit is more than a legal dispute; it is a pivotal moment in the ongoing dialogue about AI ethics and national security. The outcome of this case could shape the future of AI governance, influencing how technology companies and governments collaborate on sensitive applications. As AI continues to transform our world, the need for ethical considerations and responsible development practices becomes increasingly urgent.

FAQ

What is the Anthropic DOD lawsuit about?

The lawsuit involves Anthropic challenging the U.S. Department of Defense's designation of the company as a supply chain risk, which could affect its ability to secure government contracts.

Why did the DOD label Anthropic a supply chain risk?

The DOD's classification came after Anthropic, citing ethical concerns, refused to allow its AI technology to be used for mass surveillance and autonomous weapons.

How did the tech industry respond to the lawsuit?

Over 30 employees from OpenAI and Google DeepMind supported Anthropic by filing an amicus brief, highlighting concerns about the DOD's actions and advocating for ethical AI practices.

What are the ethical concerns associated with AI in national security?

Key concerns include the potential for AI-driven surveillance to infringe on privacy rights and the ethical implications of autonomous weapons systems.

What legal precedents could the Anthropic case set?

A ruling in favor of Anthropic may establish limitations on government use of AI technologies, especially in areas involving privacy and autonomy.

How can companies ensure ethical AI development?

Companies can adopt best practices such as establishing ethical guidelines, engaging stakeholders, conducting impact assessments, and collaborating with industry peers.

What are some common pitfalls in ethical AI development?

Pitfalls include lack of transparency, bias in AI models, and inadequate stakeholder engagement, which can be addressed through explainable AI techniques, bias audits, and open dialogue.

What future trends are expected in AI ethics and governance?

Trends include increased focus on AI governance, the emergence of AI ethics certifications, and prioritization of ethical AI education in academic curricula.

Key Takeaways

- The lawsuit highlights critical ethical considerations in AI deployment, particularly regarding surveillance and autonomous weapons.

- Support from employees at OpenAI and Google underscores the importance of ethical AI development.

- The case could set significant legal precedents for AI usage in national security.

- There is a pressing need for clear guidelines and frameworks for ethical AI use in sensitive applications.

- Future trends point towards increased focus on AI governance and the emergence of AI ethics certifications.

- Educational institutions should prioritize AI ethics to prepare future developers and policymakers.

