Building Realistic Electric Transmission Grid Datasets at Scale [2025]

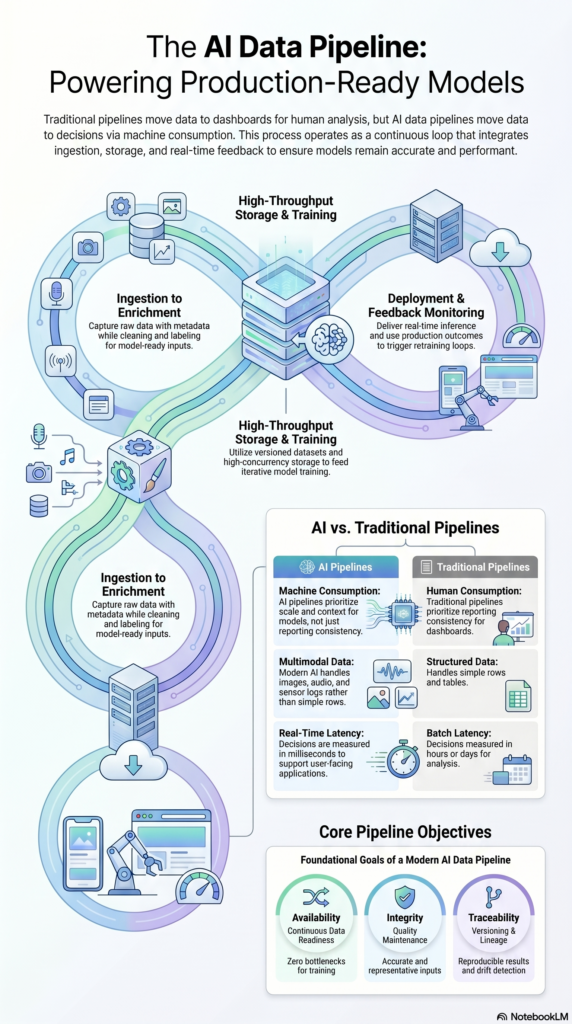

As power demands increase globally, the need for efficient and realistic electric transmission grid datasets becomes vital. These datasets help in planning, simulating, and optimizing electric grids to meet future energy needs effectively. Let's dive into how you can build a robust pipeline for creating these datasets using open data sources.

TL;DR

- Open Data Utilization: Leverage open datasets to construct scalable electric grid datasets.

- Data Integration: Combine various data sources for comprehensive modeling.

- Simulation Tools: Use advanced simulation tools for grid testing.

- Challenges & Solutions: Address common data inconsistencies and integration issues.

- Future Outlook: Embrace AI and machine learning for predictive grid analysis.

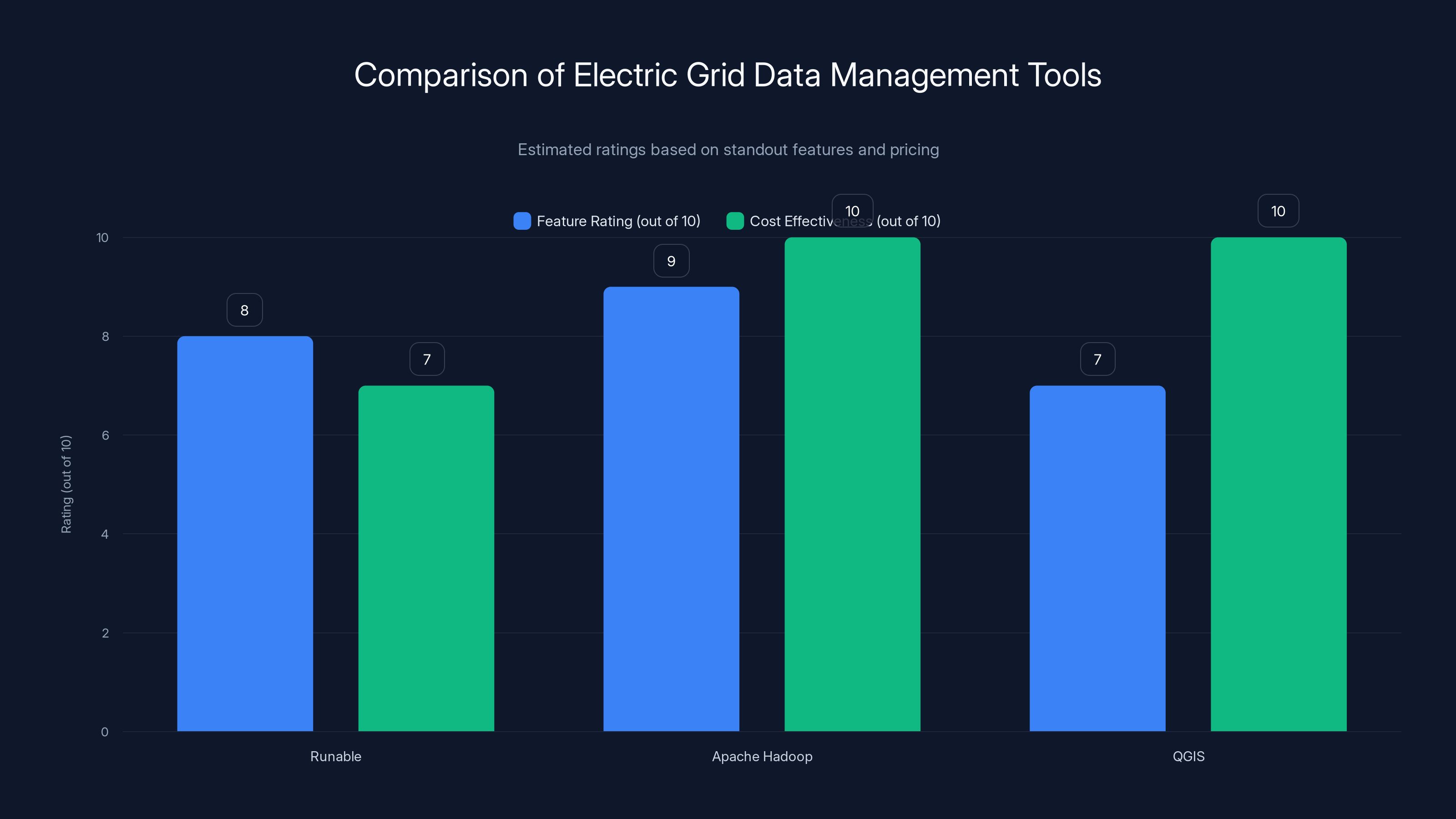

Apache Hadoop scores highest in both feature rating and cost effectiveness due to its scalable capabilities and open-source nature. Estimated data based on tool features and pricing.

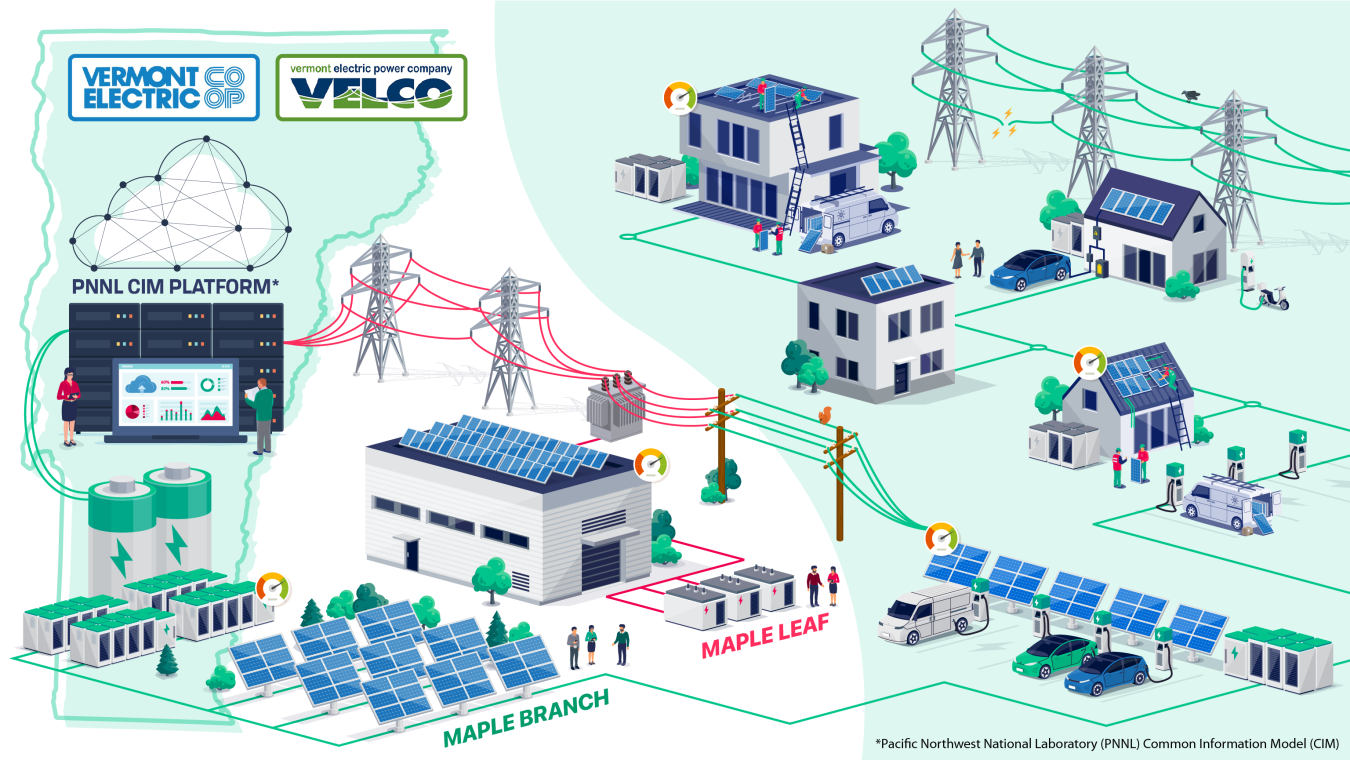

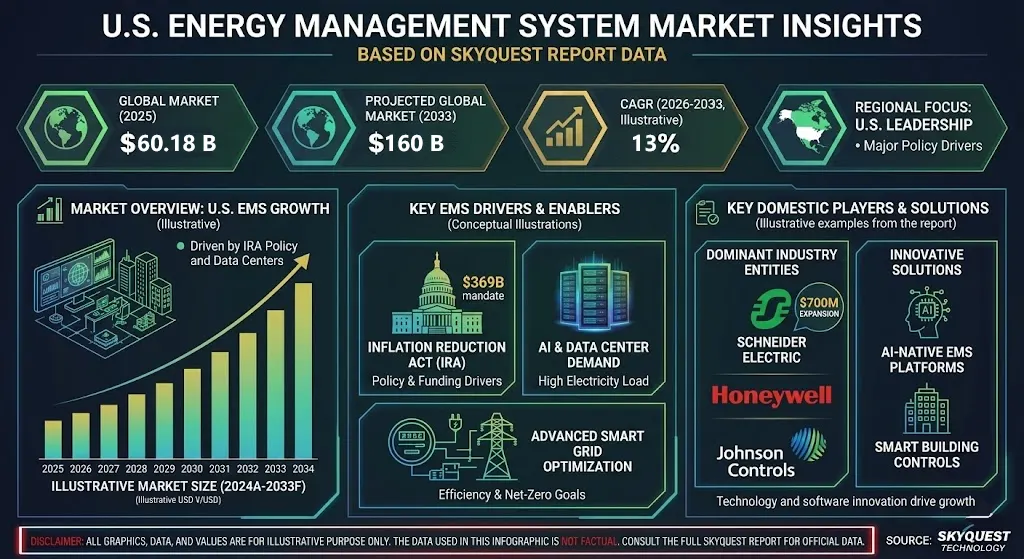

Understanding the Electric Grid Landscape

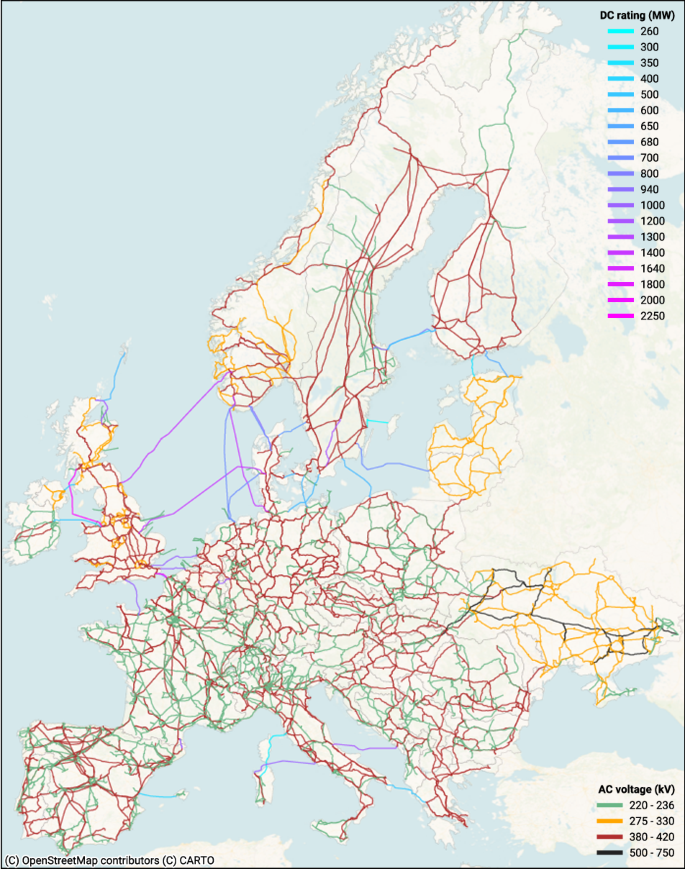

Electric grids are complex networks that deliver electricity from producers to consumers. They consist of power generation stations, transmission lines, substations, and distribution lines. To model these systems accurately, datasets need to reflect real-world conditions, including geographical, environmental, and consumption variables.
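
One way to picture such a dataset is as a graph: buses (substations or generator connection points) are nodes and transmission lines are edges. The sketch below is a minimal illustration of that idea; the class and field names (`Bus`, `Line`, `Grid`, `voltage_kv`, and so on) are assumptions made for this example, not a standard schema.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Bus:
    """A node in the grid: a substation or generator connection point."""
    bus_id: str
    lat: float
    lon: float
    voltage_kv: float


@dataclass(frozen=True)
class Line:
    """A transmission line joining two buses."""
    line_id: str
    from_bus: str
    to_bus: str
    length_km: float


@dataclass
class Grid:
    buses: dict = field(default_factory=dict)
    lines: list = field(default_factory=list)

    def add_bus(self, bus):
        self.buses[bus.bus_id] = bus

    def add_line(self, line):
        # Reject lines whose endpoints are not in the dataset yet.
        for end in (line.from_bus, line.to_bus):
            if end not in self.buses:
                raise KeyError(f"unknown bus: {end}")
        self.lines.append(line)

    def neighbors(self, bus_id):
        """Return the set of bus ids directly connected to bus_id."""
        out = set()
        for ln in self.lines:
            if ln.from_bus == bus_id:
                out.add(ln.to_bus)
            elif ln.to_bus == bus_id:
                out.add(ln.from_bus)
        return out

    def is_connected(self):
        """Breadth-first search: True if every bus is reachable from the first."""
        if not self.buses:
            return True
        start = next(iter(self.buses))
        seen = {start}
        queue = deque([start])
        while queue:
            current = queue.popleft()
            for nxt in self.neighbors(current) - seen:
                seen.add(nxt)
                queue.append(nxt)
        return len(seen) == len(self.buses)
```

A connectivity check like `is_connected()` is a cheap sanity test: a synthetic grid with isolated buses usually points to missing or mismatched line records.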

The Importance of Realistic Grids

Realistic grid datasets are crucial for:

- Planning and Development: Ensuring new grid infrastructures meet current and future demands.

- Operational Efficiency: Enhancing the reliability and efficiency of electricity delivery.

- Predictive Maintenance: Identifying potential failures before they occur.

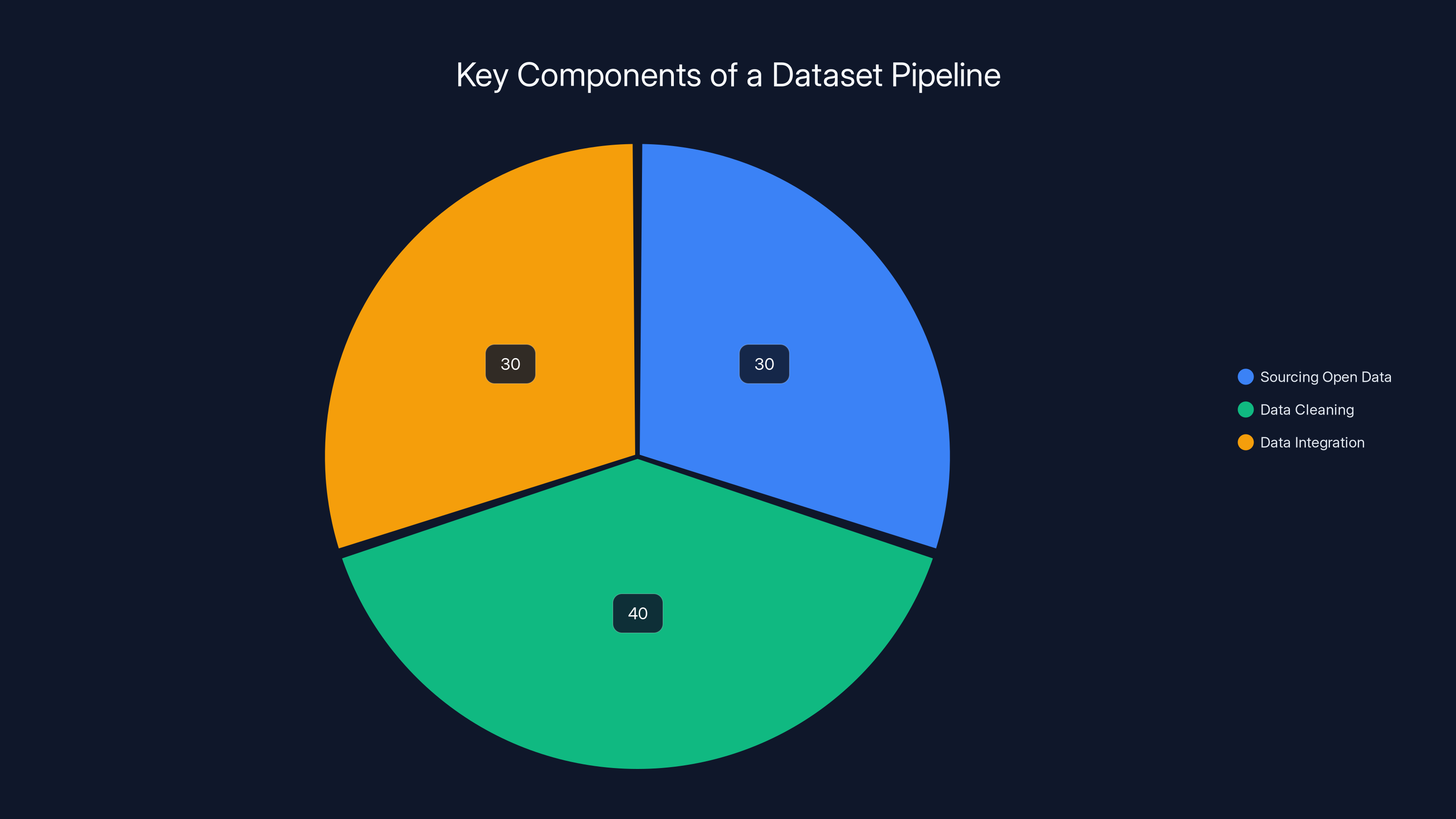

Data cleaning typically consumes the most time in a dataset pipeline, followed by sourcing and integration. Estimated data.

Building the Pipeline: Key Components

Developing a robust dataset pipeline involves several stages:

1. Sourcing Open Data

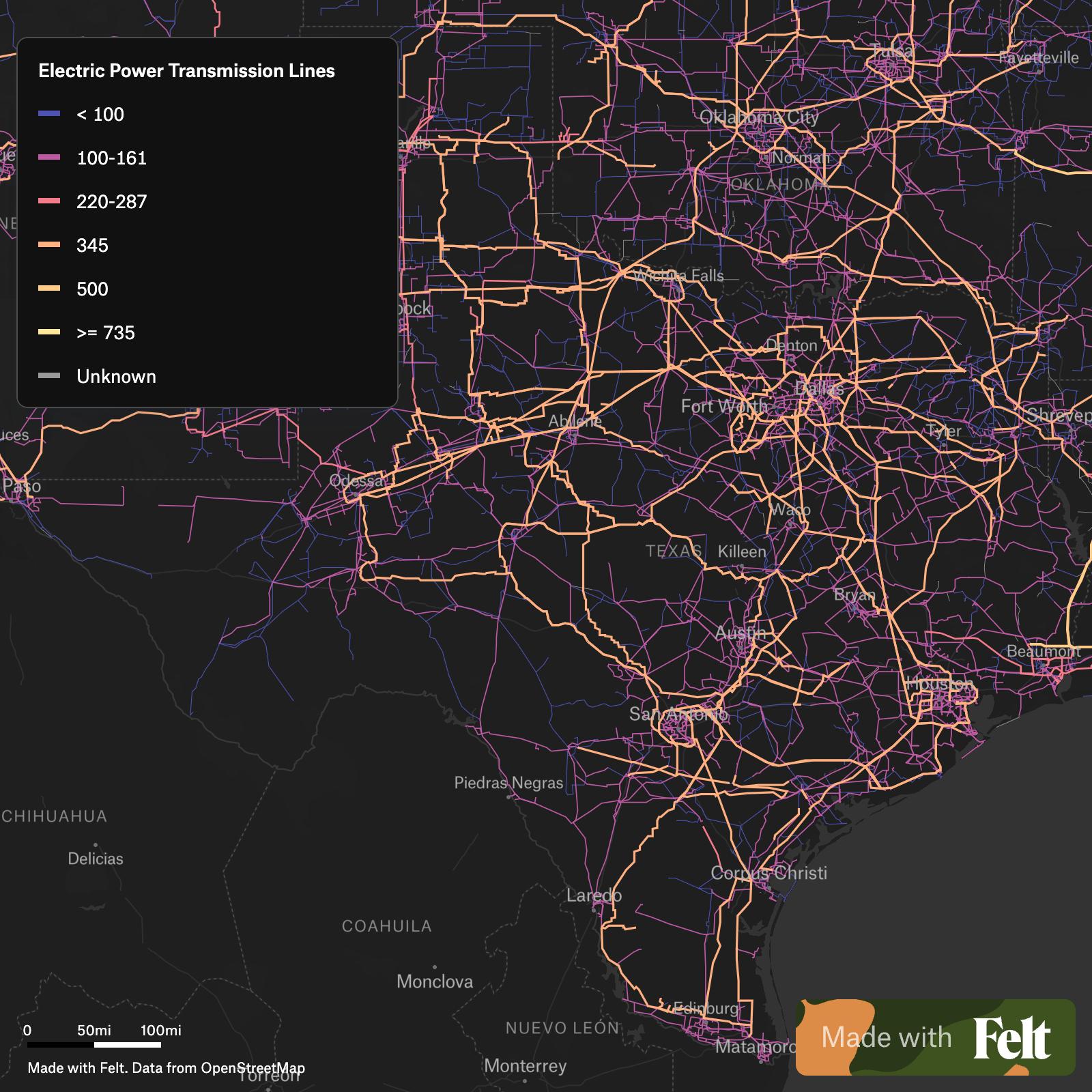

Open data is the backbone of scalable dataset creation. Begin by identifying reliable data repositories such as government databases, academic publications, and industry reports. The U.S. Energy Information Administration (EIA) offers comprehensive energy data, while OpenStreetMap provides geographical data that can map transmission lines. Additionally, the Global Energy Observatory contains details on power plants and transmission networks.
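
As a rough illustration of pulling transmission geometry from OpenStreetMap, the sketch below builds an Overpass QL query for features tagged `power=line` and `power=substation` inside a bounding box. The function name `build_overpass_query` and its parameters are made up for this example, and the code only constructs the query text; sending it to an Overpass endpoint (and respecting that service's usage policy) is left out.

```python
def build_overpass_query(south, west, north, east, timeout_s=60):
    """Build an Overpass QL query for power lines and substations.

    Overpass expects bounding boxes in (south, west, north, east) order.
    This sketch does not handle boxes that cross the antimeridian.
    """
    if not (south < north and west < east):
        raise ValueError("expected south < north and west < east")
    bbox = f"{south},{west},{north},{east}"
    return (
        f"[out:json][timeout:{timeout_s}];\n"
        "(\n"
        f'  way["power"="line"]({bbox});\n'
        f'  node["power"="substation"]({bbox});\n'
        f'  way["power"="substation"]({bbox});\n'
        ");\n"
        "out geom;"
    )


if __name__ == "__main__":
    # Hypothetical bounding box, chosen only for illustration.
    print(build_overpass_query(40.0, -75.0, 41.0, -74.0))
```

Keeping query construction separate from the network call makes the ingestion step easier to test and to rerun region by region.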

2. Data Cleaning and Preprocessing

Raw data is often messy and inconsistent. Cleaning involves:

- Removing Duplicates: Keep one record per asset so that counts and totals are not inflated.

- Standardizing Units: Convert all measurements to a common unit system (for example, kV for voltage and km for line length).

- Filling Missing Values: Use interpolation or machine learning techniques to estimate missing data points.
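
The three cleaning steps above can be sketched with the standard library alone. The record layout (`line_id`), the unit table, and the choice of linear interpolation are assumptions for illustration; a real pipeline would likely use pandas or Spark and a gap-filling method suited to the data.

```python
# Volts represented by one unit of each supported label (assumed set of units).
VOLTS_PER_UNIT = {"V": 1, "kV": 1_000, "MV": 1_000_000}


def dedupe(records, key="line_id"):
    """Keep the first record seen for each key value."""
    seen = set()
    unique = []
    for rec in records:
        if rec[key] in seen:
            continue
        seen.add(rec[key])
        unique.append(rec)
    return unique


def to_kv(value, unit):
    """Convert a voltage in the given unit to kilovolts."""
    return value * VOLTS_PER_UNIT[unit] / 1_000


def interpolate_gaps(values):
    """Fill None entries by linear interpolation between known neighbors.

    Leading and trailing gaps take the nearest known value.
    """
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        raise ValueError("no known values to interpolate from")
    out = list(values)
    for i, v in enumerate(values):
        if v is not None:
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None:
            out[i] = values[right]
        elif right is None:
            out[i] = values[left]
        else:
            frac = (i - left) / (right - left)
            out[i] = values[left] + frac * (values[right] - values[left])
    return out
```

For example, `interpolate_gaps([10.0, None, 30.0])` fills the middle entry with the midpoint of its neighbors.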

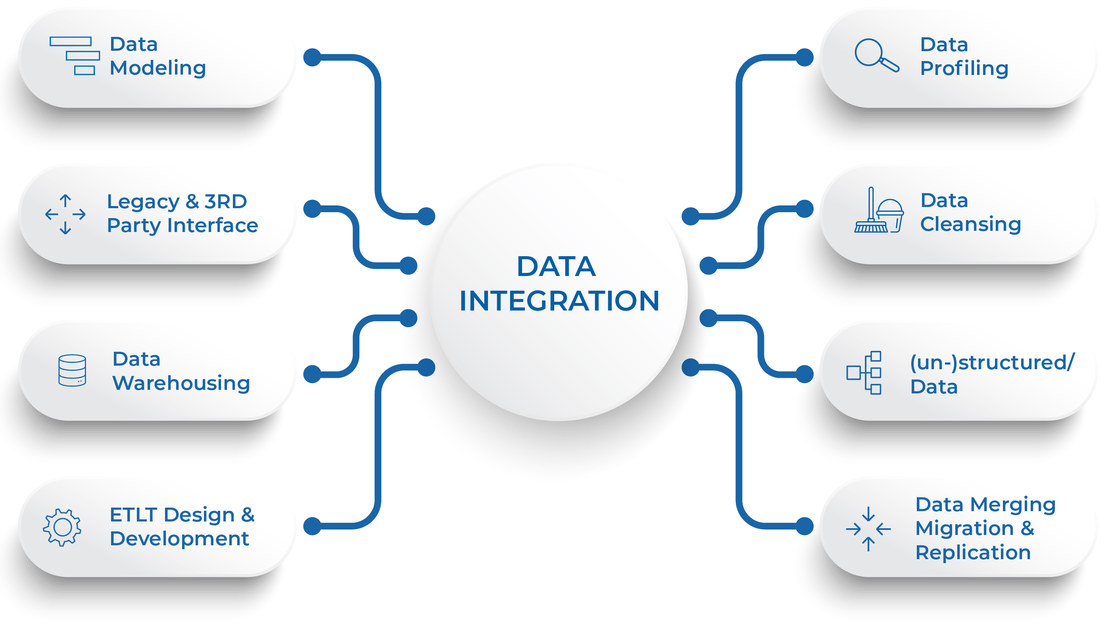

3. Data Integration

Combining data from various sources creates a holistic dataset. Use tools like Apache Spark or pandas for:

- Merging Datasets: Align data points across datasets.

- Handling Temporal Data: Synchronize datasets that have different time intervals.
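
To make the temporal alignment concrete, here is a small standard-library sketch that averages 15-minute readings into hourly values and then joins them with a second hourly series on the shared timestamps. The function names and the sample series (load and temperature) are illustrative assumptions; pandas `resample` and `merge` or Spark window functions would play the same role at scale.

```python
from collections import defaultdict
from datetime import datetime


def hourly_mean(readings):
    """Average (timestamp, value) pairs into buckets keyed by the hour start."""
    buckets = defaultdict(list)
    for ts, value in readings:
        buckets[ts.replace(minute=0, second=0, microsecond=0)].append(value)
    return {hour: sum(vals) / len(vals) for hour, vals in buckets.items()}


def inner_join(left, right):
    """Join two {timestamp: value} mappings on the timestamps they share."""
    return {k: (left[k], right[k]) for k in sorted(left.keys() & right.keys())}


if __name__ == "__main__":
    # Hypothetical sample data: 15-minute load (MW) and hourly temperature (C).
    load_15min = [
        (datetime(2025, 1, 1, 0, 0), 100.0),
        (datetime(2025, 1, 1, 0, 15), 110.0),
        (datetime(2025, 1, 1, 0, 30), 120.0),
        (datetime(2025, 1, 1, 0, 45), 130.0),
        (datetime(2025, 1, 1, 1, 0), 200.0),
    ]
    temp_hourly = {datetime(2025, 1, 1, 0): 3.5}
    print(inner_join(hourly_mean(load_15min), temp_hourly))
```

An inner join silently drops hours that appear in only one source, so it is worth logging how many rows each side loses before trusting the merged result.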

Practical Implementation Guide

Let's walk through a practical example of building a dataset pipeline:

Step 1: Define Objectives

Identify the primary goals of your dataset. Are you focusing on grid expansion? Efficiency improvements? This will guide your data sourcing and processing decisions.

Step 2: Select Tools

Choose the right tools for data collection and processing:

- Apache Hadoop: For handling large-scale data processing.

- QGIS: For geographical data visualization.

- Neo4j: For managing and querying graph databases of electric grids.

Step 3: Build the Pipeline

- Data Ingestion: Use APIs to pull data from sources.

- Data Cleaning: Apply scripts to clean and preprocess data.

- Data Storage: Store data in a scalable database like PostgreSQL.

- Data Analysis: Use Python or R for initial data analysis to ensure integrity.
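
The four stages above can be wired together in a few lines. This is a toy sketch under stated assumptions: `ingest()` returns hard-coded sample records in place of a real API call, `sqlite3` stands in for PostgreSQL so the example runs without a server, and the cleaning step simply drops incomplete rows instead of estimating them.

```python
import sqlite3


def ingest():
    """Stand-in for an API call; returns hypothetical sample records."""
    return [
        {"line_id": "L1", "voltage_kv": 345.0, "length_km": 12.4},
        {"line_id": "L1", "voltage_kv": 345.0, "length_km": 12.4},  # duplicate
        {"line_id": "L2", "voltage_kv": None, "length_km": 30.1},   # missing voltage
    ]


def clean(records):
    """Deduplicate on line_id and drop rows with a missing voltage."""
    unique = {rec["line_id"]: rec for rec in records}
    return [rec for rec in unique.values() if rec["voltage_kv"] is not None]


def store(conn, records):
    """Create the table if needed and upsert the cleaned records."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS lines ("
        "line_id TEXT PRIMARY KEY, "
        "voltage_kv REAL NOT NULL, "
        "length_km REAL NOT NULL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO lines VALUES (:line_id, :voltage_kv, :length_km)",
        records,
    )
    conn.commit()


def analyze(conn):
    """Basic integrity summary: row count and total line length."""
    count, total_km = conn.execute(
        "SELECT COUNT(*), COALESCE(SUM(length_km), 0) FROM lines"
    ).fetchone()
    return {"line_count": count, "total_km": total_km}


def run_pipeline(conn):
    store(conn, clean(ingest()))
    return analyze(conn)


if __name__ == "__main__":
    print(run_pipeline(sqlite3.connect(":memory:")))
```

Keeping each stage a plain function makes it straightforward to swap the stub for a real API client or the SQLite connection for a PostgreSQL one later.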

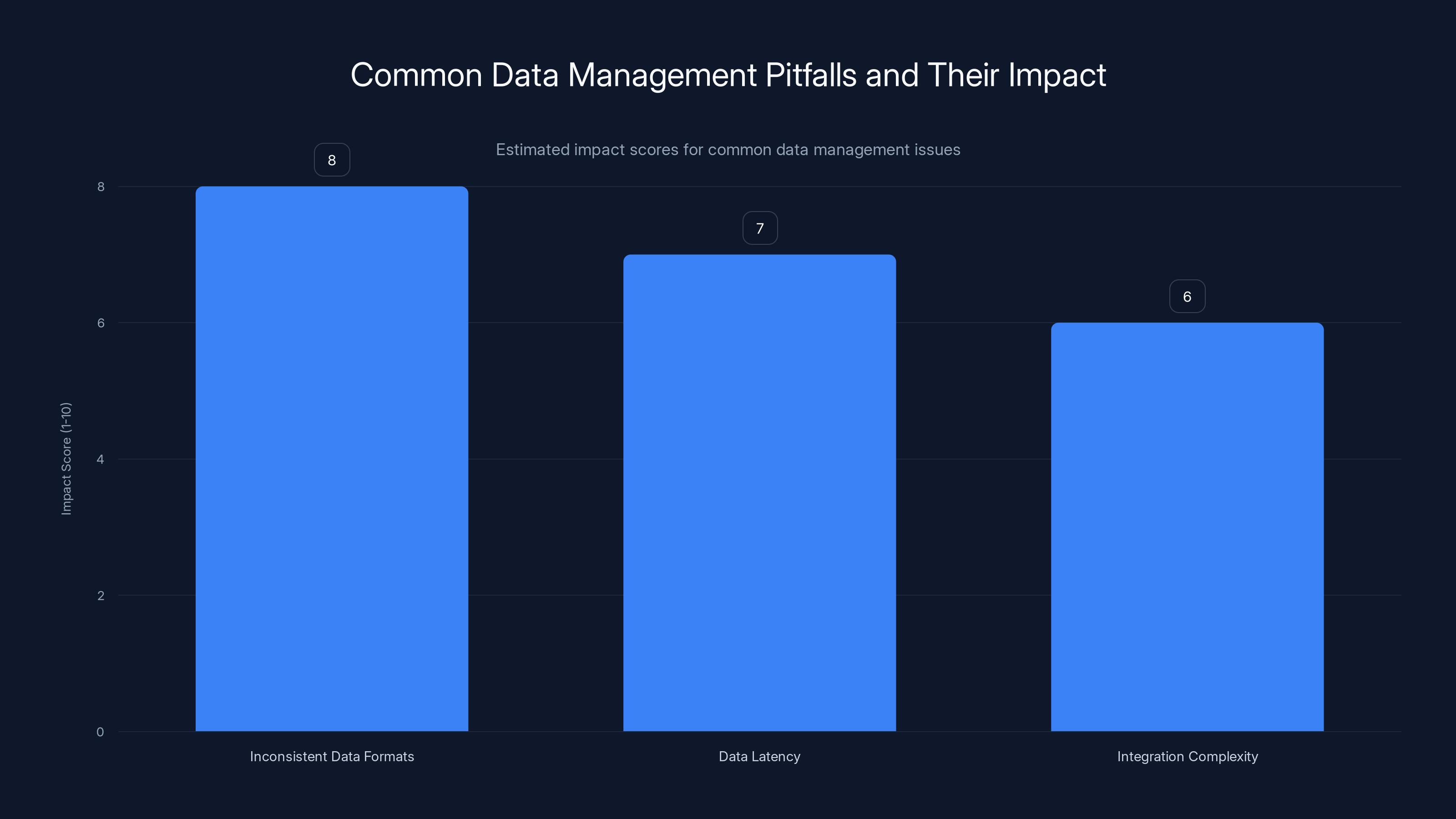

Inconsistent data formats have the highest impact on data management, followed by data latency and integration complexity. Estimated data.

Common Pitfalls and Solutions

Pitfall 1: Inconsistent Data Formats

Solution: Establish a clear data dictionary and enforce schema consistency across datasets.
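
A data dictionary can be as simple as a mapping from field name to expected type, checked before records enter the pipeline. The field names and the `validate` helper below are assumptions for illustration; dedicated schema libraries offer richer checks such as value ranges and units.

```python
# Hypothetical data dictionary: field name -> expected Python type.
DATA_DICTIONARY = {
    "line_id": str,
    "voltage_kv": float,
    "length_km": float,
}


def validate(record, schema=DATA_DICTIONARY):
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for name, expected in schema.items():
        if name not in record:
            errors.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            actual = type(record[name]).__name__
            errors.append(f"{name}: expected {expected.__name__}, got {actual}")
    # Report fields the dictionary does not know about, in a stable order.
    for name in sorted(record.keys() - schema.keys()):
        errors.append(f"unexpected field: {name}")
    return errors
```

Returning all problems at once, instead of failing on the first, makes it easier to see whether a source has one stray record or a systematic format mismatch.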

Pitfall 2: Data Latency

Solution: Process data as it arrives using an event-streaming platform such as Apache Kafka, paired with a stream processor (for example, Kafka Streams), instead of waiting for periodic batch loads.

Pitfall 3: Integration Complexity

Solution: Use data integration platforms like Talend to simplify the merging of disparate datasets.

Future Trends in Electric Grid Modeling

AI and Machine Learning Integration

AI technologies can enhance grid modeling by predicting demand and optimizing resource distribution. Machine learning algorithms can identify patterns and anomalies that might not be apparent in traditional analysis, as discussed in a recent study.
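
Before reaching for a trained model, a statistical baseline is a useful reference point for anomaly detection. The sketch below is not machine learning: it flags outliers with a modified z-score based on the median and the median absolute deviation (MAD), using the commonly cited 0.6745 scaling constant and 3.5 cutoff. The function name and sample readings are assumptions for illustration.

```python
from statistics import median


def mad_anomalies(values, threshold=3.5):
    """Return indices whose modified z-score exceeds the threshold.

    The median and MAD are used instead of the mean and standard
    deviation so that a single large spike does not mask itself.
    """
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # No spread to measure against; this sketch flags nothing.
        return []
    return [
        i
        for i, v in enumerate(values)
        if 0.6745 * abs(v - center) / mad > threshold
    ]


if __name__ == "__main__":
    # Hypothetical line-loading readings (MW) with one obvious spike.
    readings = [100.0, 101.0, 99.0, 100.0, 102.0, 98.0, 100.0, 101.0, 99.0, 500.0]
    print(mad_anomalies(readings))
```

A learned model earns its place only if it catches failures that a baseline like this misses, so keeping the baseline around gives a simple point of comparison.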

Increased Use of IoT Devices

The integration of IoT devices in electric grids will provide real-time data insights, leading to more responsive and adaptive grid management systems.

Blockchain for Grid Security

Blockchain technology offers a secure way to manage grid transactions and data, reducing the risk of cyber threats, as highlighted in recent research.

Conclusion

Building realistic electric transmission grid datasets is a complex but rewarding endeavor. By leveraging open data, utilizing advanced tools, and staying ahead of technological trends, you can develop datasets that significantly improve grid management and efficiency.


FAQ

What is an electric transmission grid dataset?

An electric transmission grid dataset includes data about the infrastructure and operational parameters of power grids, essential for planning and optimizing electricity delivery.

How do I source open data for grid datasets?

Utilize public databases like the U.S. Energy Information Administration, OpenStreetMap, and Global Energy Observatory for reliable data.

What tools are best for data integration?

Tools like Apache Spark and Talend are effective for integrating and processing large datasets from multiple sources.

How can AI improve grid modeling?

AI can predict electricity demand, optimize resource allocation, and identify potential failures before they occur, enhancing grid reliability.

What are the benefits of using blockchain in grid management?

Blockchain provides a secure method for managing transactions and data, reducing cyber threats and ensuring data integrity.

The Best Tools for Electric Grid Data Management

| Tool | Best For | Standout Feature | Pricing |
|---|---|---|---|
| Runable | AI automation | AI agents for presentations, docs, reports, images, videos | $9/month |
| Apache Hadoop | Big data processing | Scalable data storage and processing | Free (open source) |
| QGIS | Geographical data | Advanced mapping capabilities | Free (open source) |


Key Takeaways

- Open datasets are crucial for building scalable electric grid datasets.

- Data integration is key to comprehensive grid modeling.

- AI and machine learning enhance predictive grid analysis.

- Real-time data processing mitigates data latency issues.

- Blockchain secures grid transactions and data.


![Building Realistic Electric Transmission Grid Datasets at Scale: A Comprehensive Guide [2025]](https://tryrunable.com/blog/building-realistic-electric-transmission-grid-datasets-at-sc/image-1-1778270636291.jpg)