Introduction

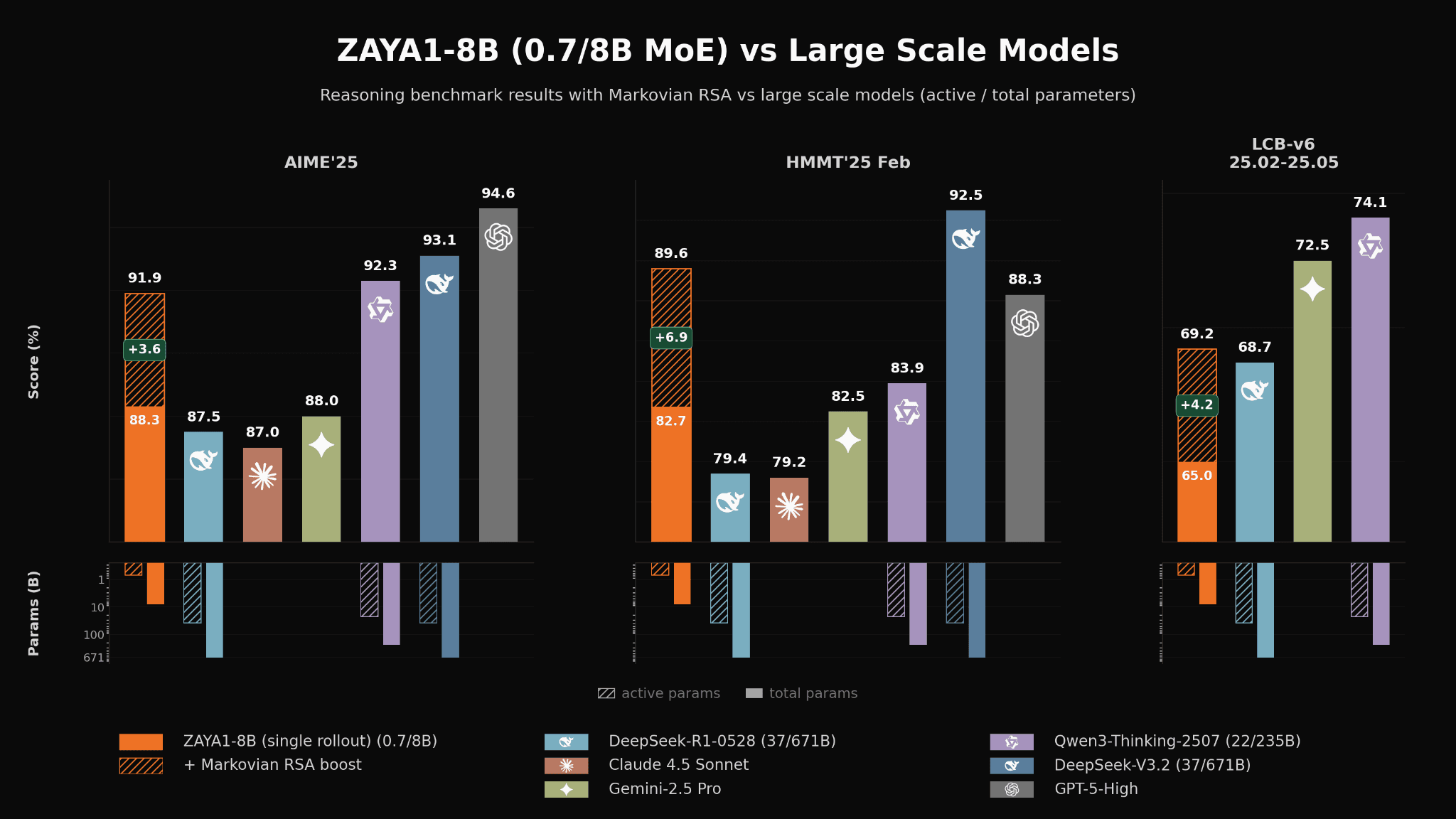

The AI landscape is often dominated by headlines about ever-larger models, yet there is a compelling argument for efficiency over sheer size. ZAYA1-8B exemplifies this ethos, aiming to deliver strong reasoning capabilities with only 8 billion parameters. Developed by Zyphra, a Palo Alto-based startup, ZAYA1-8B was trained on AMD Instinct MI300 GPUs, making it a notable example of an efficient model built on AMD hardware.

TL;DR

- ZAYA1-8B is an efficiency-focused reasoning model with 8 billion parameters.

- Trained using AMD Instinct MI300 GPUs, it offers competitive performance against larger models.

- Designed as an open-source model, it's accessible on Hugging Face.

- Ideal for applications requiring efficient computation without sacrificing accuracy.

- Represents a shift towards smaller, more efficient AI models.

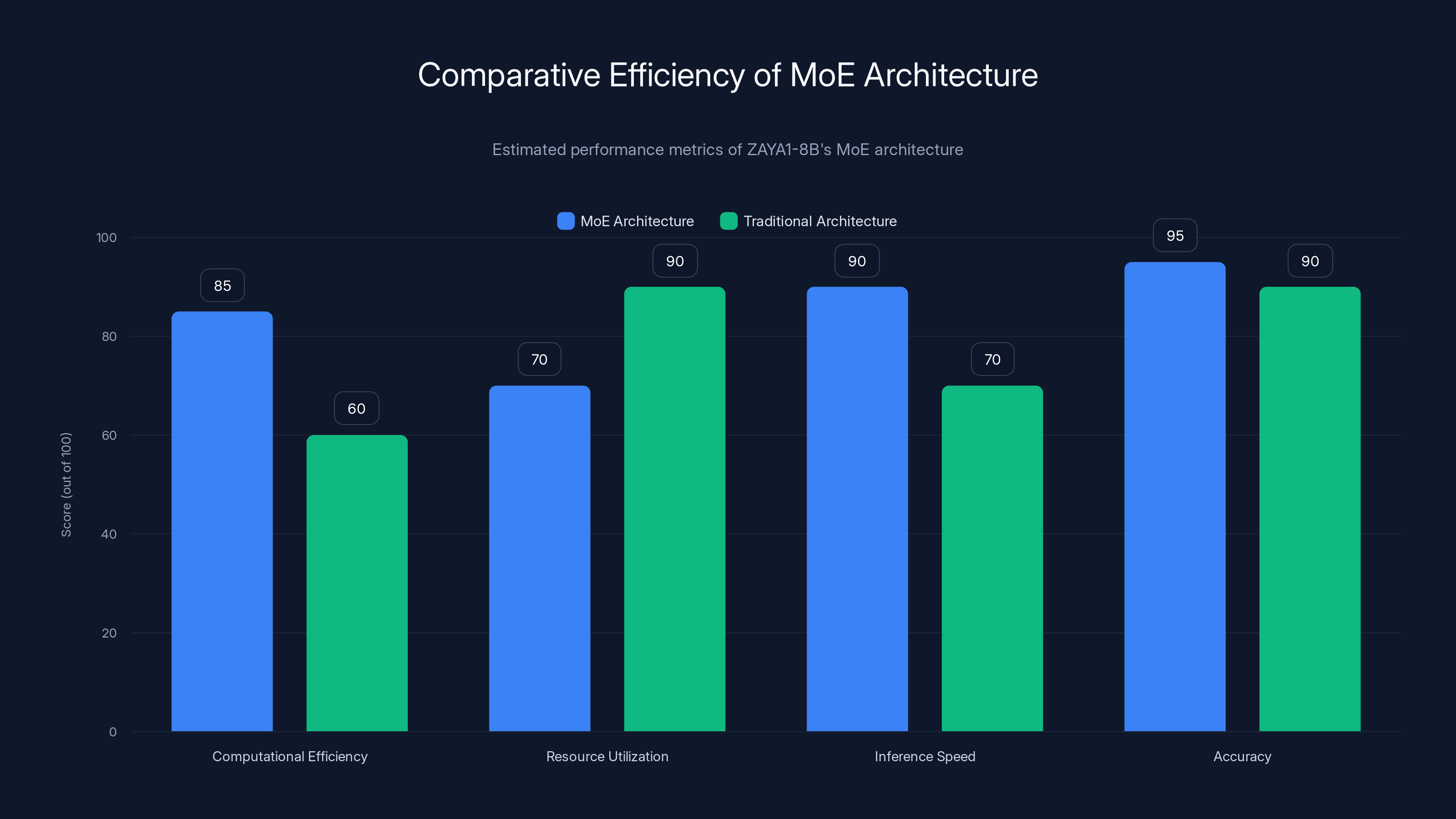

The MoE architecture of ZAYA1-8B shows superior computational efficiency and inference speed compared to traditional architectures, while maintaining high accuracy. (Estimated data)

The Rise of Efficient AI Models

In a world where AI models are rapidly expanding in size, ZAYA1-8B offers a refreshing alternative. The trend towards smaller, more efficient models is driven by several factors:

- Cost efficiency: Larger models require more resources, leading to higher training and operating costs.

- Accessibility: Smaller models lower the barrier to entry, making advanced AI capabilities available to a broader audience.

- Environmental impact: Reducing the computational load decreases energy consumption, aligning with sustainable practices.

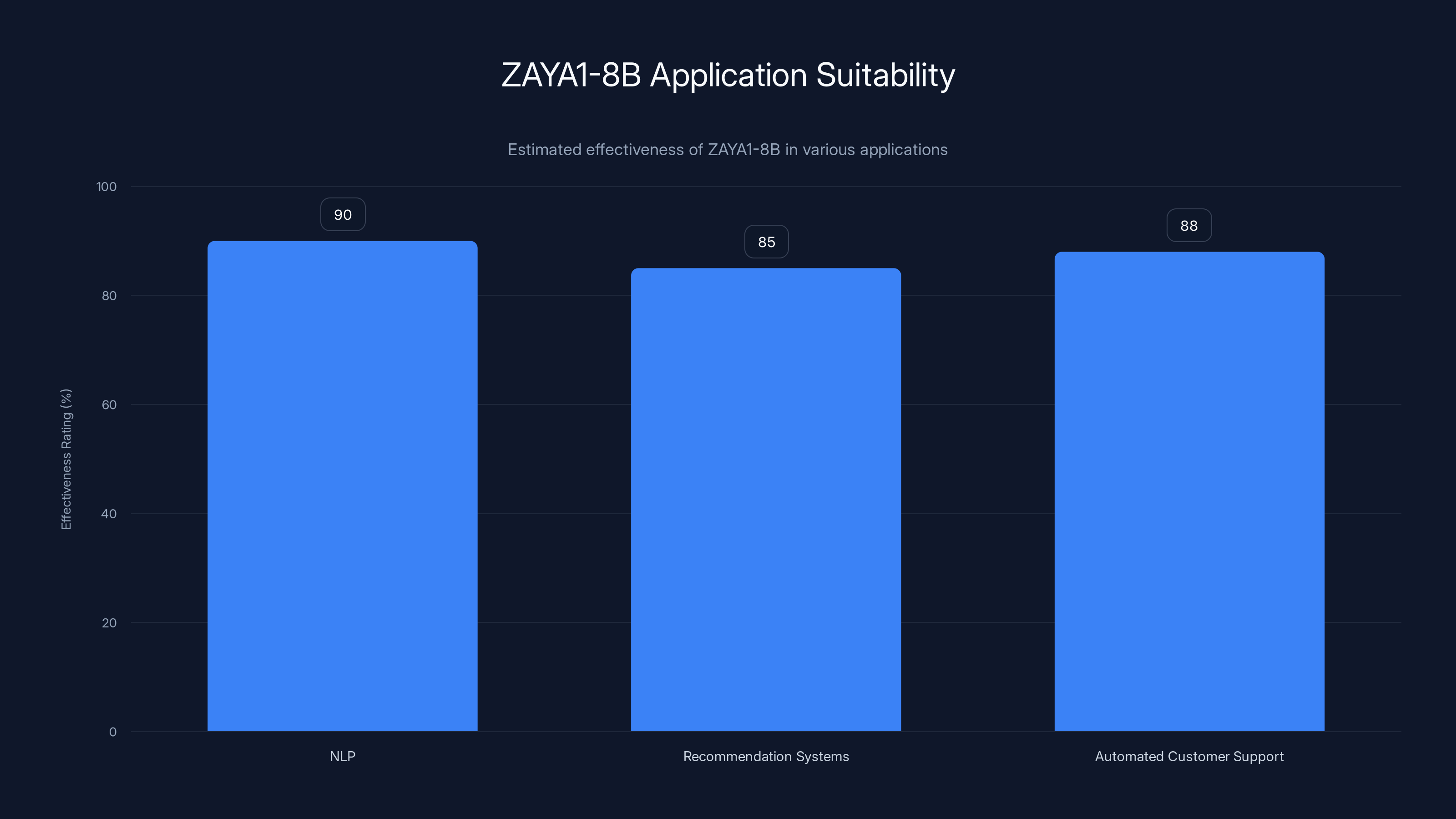

ZAYA1-8B shows high effectiveness in NLP, recommendation systems, and automated customer support applications. Estimated data based on typical use cases.

Technical Foundations of ZAYA1-8B

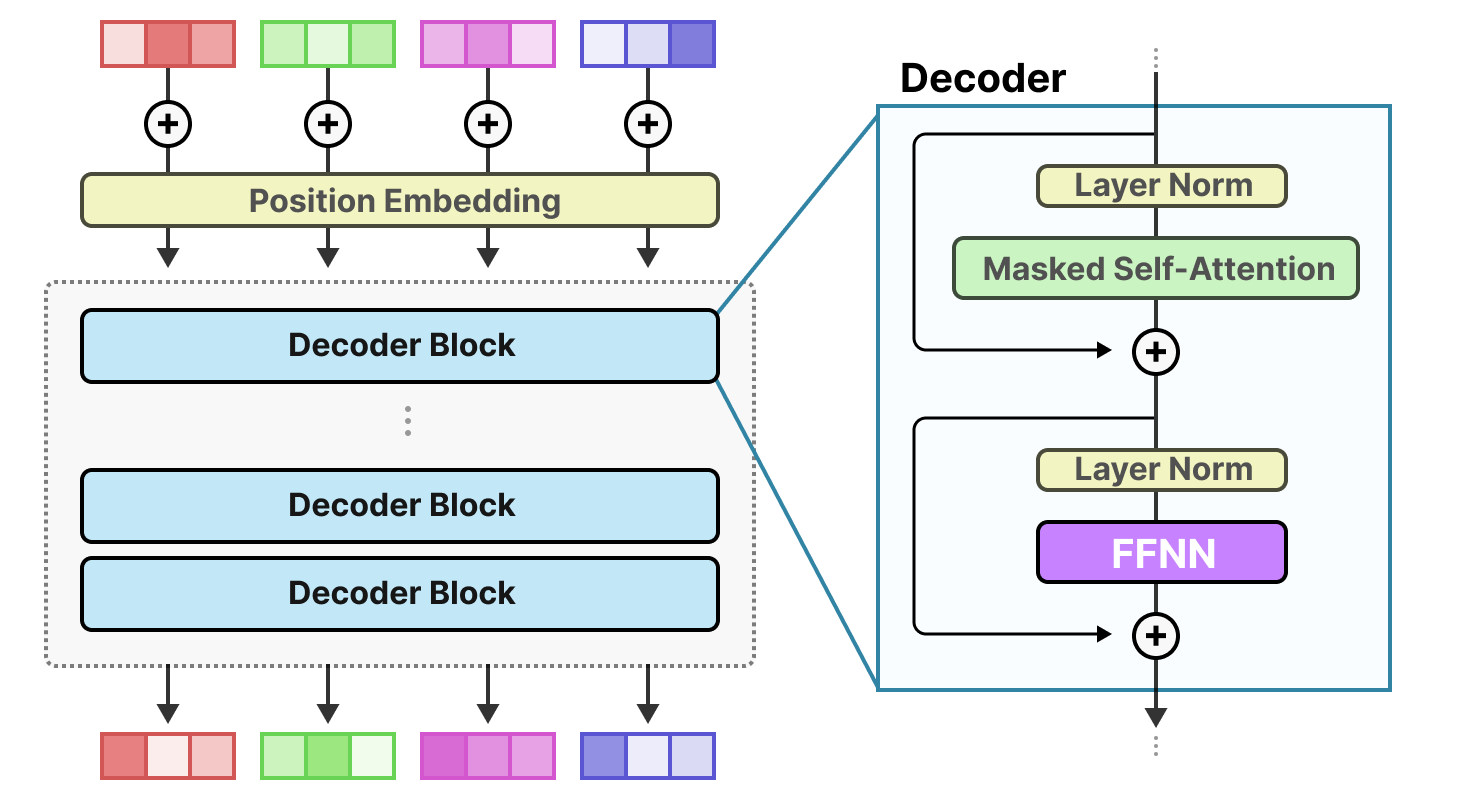

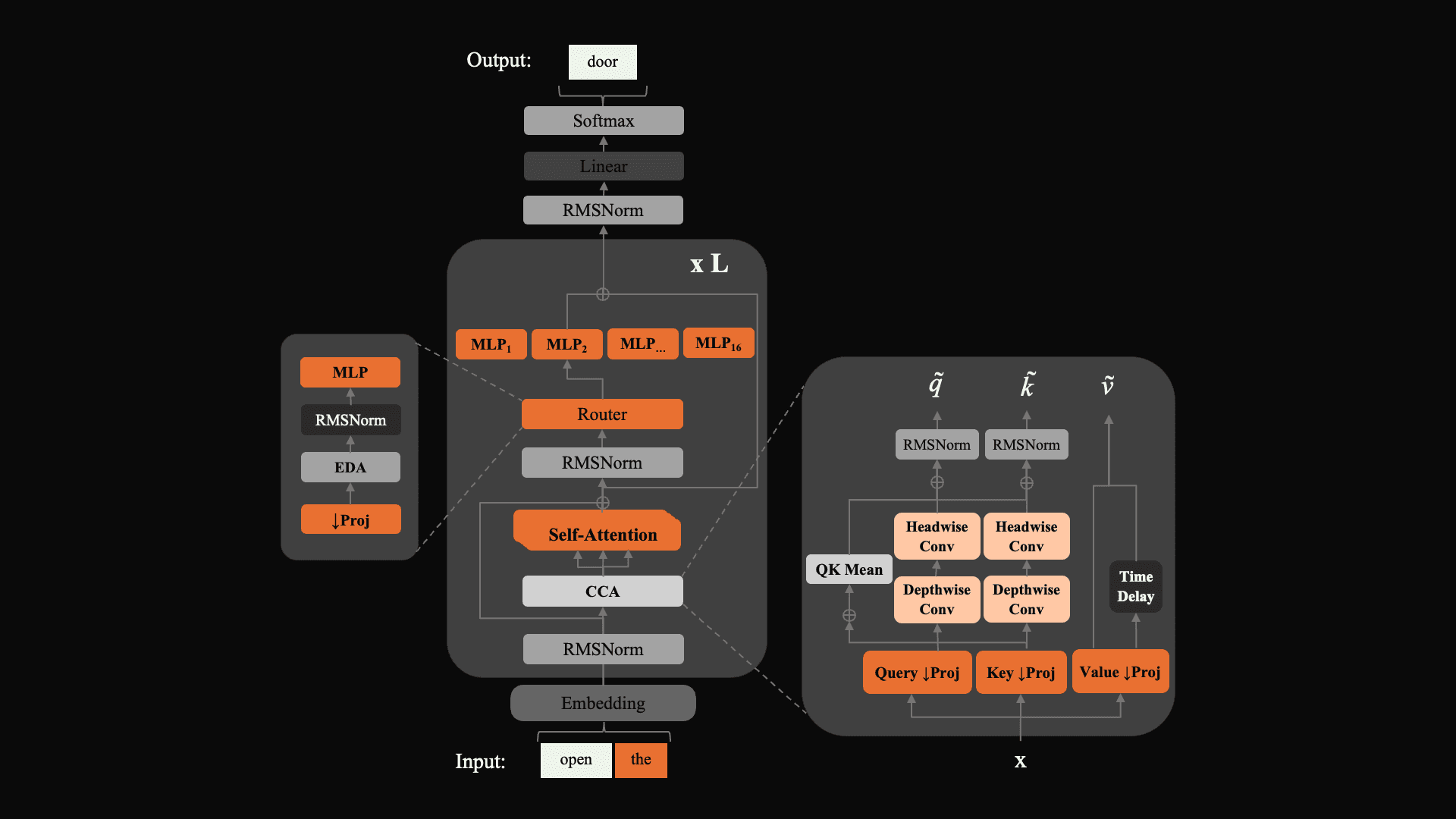

Architecture and Design

ZAYA1-8B is built on a mixture-of-experts (MoE) architecture. Instead of running every parameter for every token, a learned router sends each token to a small subset of specialized sub-networks ("experts"). Because only a fraction of the model's parameters is active for any given token, inference needs less computation than a dense model with the same total parameter count.
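To make the efficiency argument concrete, the sketch below uses the common rule of thumb that a forward pass costs roughly 2 FLOPs (one multiply, one add) per parameter used per token. The 10% active fraction is a hypothetical value chosen for illustration, not ZAYA1-8B's published figure, and attention over the context window is ignored.

```python
# Rough forward-pass compute per token, using the common approximation
# FLOPs ~= 2 x (parameters used for that token): one multiply and one add each.
# Attention over the context window is ignored.

def forward_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs needed to process one token."""
    return 2.0 * active_params


TOTAL_PARAMS = 8e9        # total parameter count stated for ZAYA1-8B
ACTIVE_FRACTION = 0.10    # HYPOTHETICAL share of parameters a token activates

dense_flops = forward_flops_per_token(TOTAL_PARAMS)                  # every parameter used
moe_flops = forward_flops_per_token(TOTAL_PARAMS * ACTIVE_FRACTION)  # routed experts only

print(f"dense, 8B params:      {dense_flops:.1e} FLOPs/token")
print(f"MoE, {ACTIVE_FRACTION:.0%} active:         {moe_flops:.1e} FLOPs/token")
print(f"compute saving factor: {dense_flops / moe_flops:.0f}x")
```

Compute falls in proportion to the active fraction, but memory does not: all experts still have to be stored, so the weights take up as much space as a dense 8B model.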

Here's a simplified breakdown of the MoE architecture process:

- Input Handling: For each token, a small router (gating) network scores every expert in the layer.

- Expert Selection: Only the highest-scoring experts, typically a small fixed number per token, are activated.

- Inference Execution: Only the selected experts' parameters are used, so the compute per token is a fraction of what a dense model of the same total size would need.

- Output Generation: The selected experts' outputs are combined, weighted by the router's scores, and passed on to the next layer.
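The four steps above can be sketched as a generic top-k MoE layer for a single token. This is a textbook-style illustration in plain Python, not Zyphra's actual router; the expert functions, router weights, and `top_k=2` are hypothetical values chosen so the routing is easy to follow.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: Vector,
    router_weights: Sequence[Vector],
    experts: Sequence[Callable[[Vector], Vector]],
    top_k: int = 2,
) -> Vector:
    # 1. Input handling: the router gives each expert a score (dot product)
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # 2. Expert selection: keep only the top_k highest-scoring experts
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])  # weights over chosen experts only
    # 3. Inference execution: run only the chosen experts; the rest are skipped
    output = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        expert_out = experts[i](token)
        # 4. Output generation: gate-weighted sum of the chosen experts' outputs
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output


# Usage: four toy experts that scale their input; record which ones actually run
calls: List[int] = []


def make_expert(idx: int, scale: float) -> Callable[[Vector], Vector]:
    def expert(v: Vector) -> Vector:
        calls.append(idx)
        return [scale * x for x in v]
    return expert


experts = [make_expert(i, s) for i, s in enumerate([1.0, 2.0, 3.0, 4.0])]
router = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
out = moe_forward([1.0, 2.0], router, experts, top_k=2)
print(out)            # roughly [1.73, 3.46]
print(sorted(calls))  # [0, 1]: only two of the four experts ran
```

Real MoE layers apply this routing to batches of tokens in parallel and usually add a load-balancing mechanism so that tokens are spread across experts, but the select-then-combine pattern is the same.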

Training on AMD Instinct MI300 GPUs

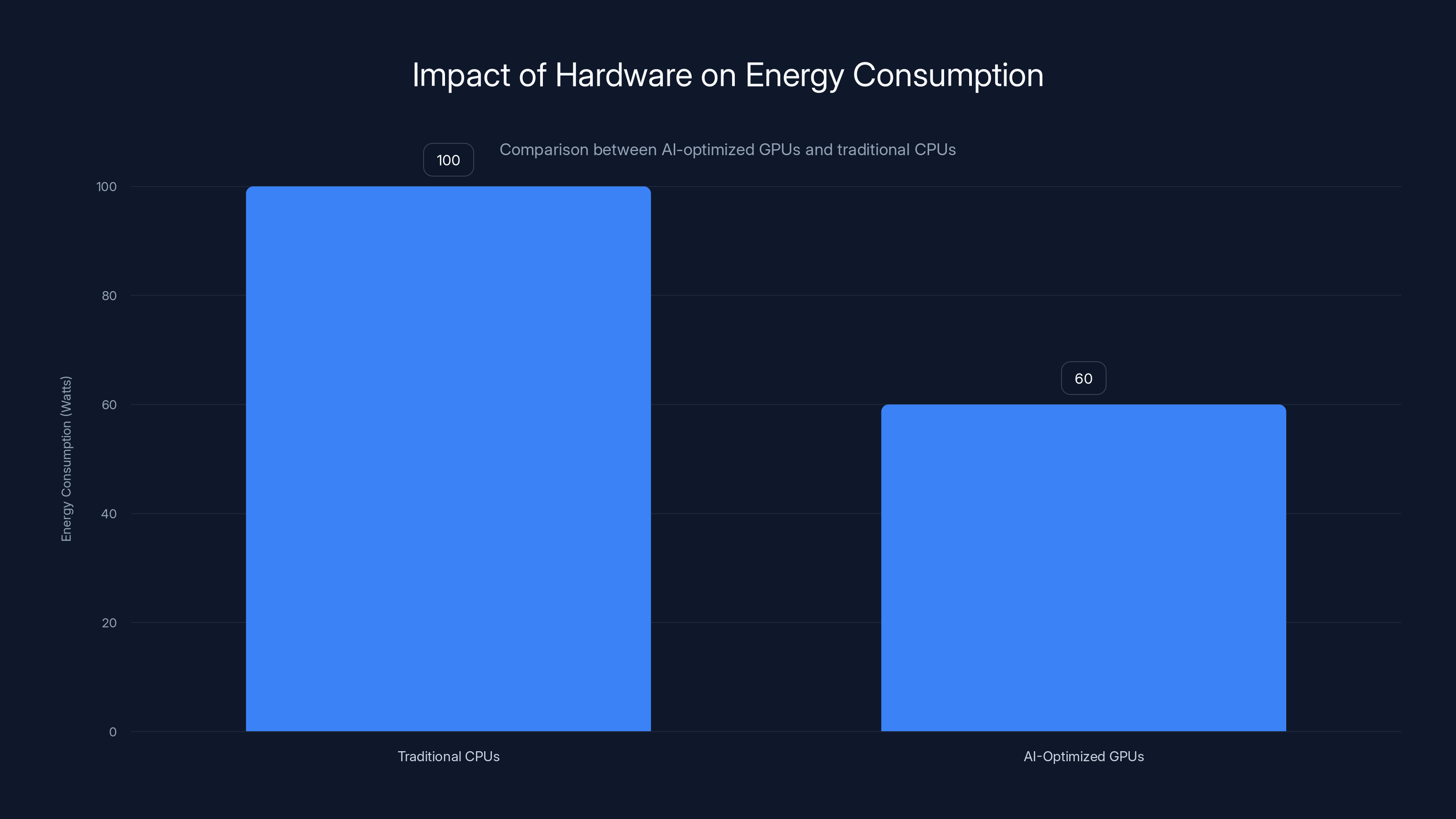

Training ZAYA1-8B on AMD Instinct MI300 GPUs shows that these accelerators can handle a full production-scale model training run. They are designed for demanding AI workloads and provide:

- High throughput: built to process large-scale training workloads quickly.

- Energy efficiency: optimized to deliver high performance per watt.

- Advanced software support: AMD's ROCm software stack supports leading AI frameworks such as PyTorch, which eases integration.
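Memory capacity is one reason accelerator choice matters for training. The back-of-the-envelope estimate below compares the memory needed to hold 8 billion parameters for inference with the model state needed for mixed-precision training with the Adam optimizer. The bytes-per-parameter figures are common rules of thumb (assumptions, not Zyphra's reported setup), and activations, KV cache, and framework overhead are left out.

```python
# Back-of-the-envelope GPU memory needed just to hold model state.
# Bytes-per-parameter values are common rules of thumb (ASSUMPTIONS), and
# activations, KV cache, and framework overhead are ignored.
GIB = 1024 ** 3


def memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory in GiB for `num_params` values of `bytes_per_param` bytes each."""
    return num_params * bytes_per_param / GIB


PARAMS = 8e9  # parameter count stated for ZAYA1-8B

# Inference: bf16 weights only, 2 bytes per parameter
inference = memory_gib(PARAMS, 2)

# Mixed-precision training with Adam, ~16 bytes per parameter:
# bf16 weights (2) + bf16 gradients (2) + fp32 master weights (4)
# + fp32 Adam first and second moments (4 + 4)
training = memory_gib(PARAMS, 2 + 2 + 4 + 4 + 4)

print(f"inference weights:    {inference:.1f} GiB")  # 14.9 GiB
print(f"training model state: {training:.1f} GiB")   # 119.2 GiB
```

Training state is roughly eight times larger than the inference weights under these assumptions, which is why large-memory accelerators, or sharding the state across several GPUs, matter during training.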

Implementing ZAYA1-8B in Real-World Applications

Use Cases

ZAYA1-8B is particularly suited for applications where efficiency and reasoning are paramount. Here are a few scenarios where it excels:

- Natural Language Processing (NLP): Tasks such as sentiment analysis and language translation benefit from the model's reasoning capabilities.

- Recommendation Systems: Efficiently processes user data to deliver personalized content suggestions.

- Automated Customer Support: Provides accurate and swift responses to customer queries, improving service quality.
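As an illustration of the NLP use case, the sketch below frames sentiment analysis as a prompt-and-parse task for a generative model. The `generate` callable is a hypothetical stand-in for however you serve ZAYA1-8B; no specific model ID or serving API is assumed, and a stub replaces the real model so the example runs offline.

```python
from typing import Callable

LABELS = ("positive", "negative", "neutral")


def build_sentiment_prompt(text: str) -> str:
    """Zero-shot instruction prompt asking for a one-word label."""
    return (
        "Classify the sentiment of the following review as "
        "positive, negative, or neutral. Answer with one word.\n\n"
        f"Review: {text}\nSentiment:"
    )


def parse_label(completion: str) -> str:
    """Map the model's first word onto a known label, else 'unknown'."""
    words = completion.strip().lower().split()
    first = words[0].strip(".,!:;") if words else ""
    return first if first in LABELS else "unknown"


def classify(text: str, generate: Callable[[str], str]) -> str:
    # `generate` is any prompt -> completion function, e.g. a wrapper around
    # a locally served ZAYA1-8B (HYPOTHETICAL; not shown here)
    return parse_label(generate(build_sentiment_prompt(text)))


# Stub standing in for the real model so the example runs without a GPU
def fake_generate(prompt: str) -> str:
    return " Positive." if "love" in prompt else " negative"


print(classify("I love this product", fake_generate))  # positive
print(classify("Broke after a day", fake_generate))    # negative
```

Validating the model's reply against a fixed label set keeps downstream code robust when the model adds punctuation or drifts off format.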

Best Practices for Deployment

To maximize the benefits of ZAYA1-8B, consider the following best practices:

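- Hardware Compatibility: Training on MI300 GPUs does not by itself tie inference to AMD hardware. Confirm that your serving stack (framework version and GPU backend) supports the model's architecture before deploying.

- Resource Management: Provision GPU memory for the full parameter count, since an MoE model activates only some experts per token but typically keeps all of them in memory. Batch requests where latency budgets allow, so the GPU stays busy.

- Continuous Monitoring: Track latency, throughput, and error rates in production, and adjust batch sizes or hardware allocation when they drift.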
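For the monitoring practice, a minimal sketch is a rolling window of request latencies with a 95th-percentile check. The class name, window size, and latency budget are hypothetical choices; in production you would typically export these numbers to an existing metrics system rather than keep them in process.

```python
import time
from collections import deque
from statistics import quantiles
from typing import Any, Callable


class LatencyMonitor:
    """Keeps the most recent request latencies and reports their p95."""

    def __init__(self, window: int = 1000, p95_budget_s: float = 1.0):
        self.samples: deque = deque(maxlen=window)  # oldest samples drop off
        self.p95_budget_s = p95_budget_s

    def record(self, seconds: float) -> None:
        self.samples.append(seconds)

    def p95(self) -> float:
        if len(self.samples) < 2:  # quantiles() needs at least two points
            return self.samples[0] if self.samples else 0.0
        # quantiles(n=20) returns 19 cut points; index 18 is the 95th percentile
        return quantiles(self.samples, n=20)[18]

    def over_budget(self) -> bool:
        return self.p95() > self.p95_budget_s

    def timed(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Call fn, record how long it took (even if it raises), return its result."""
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        finally:
            self.record(time.perf_counter() - start)


# Usage: wrap each model call (here a cheap stand-in) and check the budget
monitor = LatencyMonitor(window=500, p95_budget_s=0.5)
result = monitor.timed(sum, range(100_000))
print(result, f"p95={monitor.p95():.6f}s", monitor.over_budget())
```

Tracking a tail percentile rather than the mean surfaces the slow requests that users actually notice, such as long prompts or uneven batching.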

AI-optimized GPUs can reduce energy consumption by up to 40% compared to traditional CPUs, enhancing efficiency and sustainability. Estimated data.

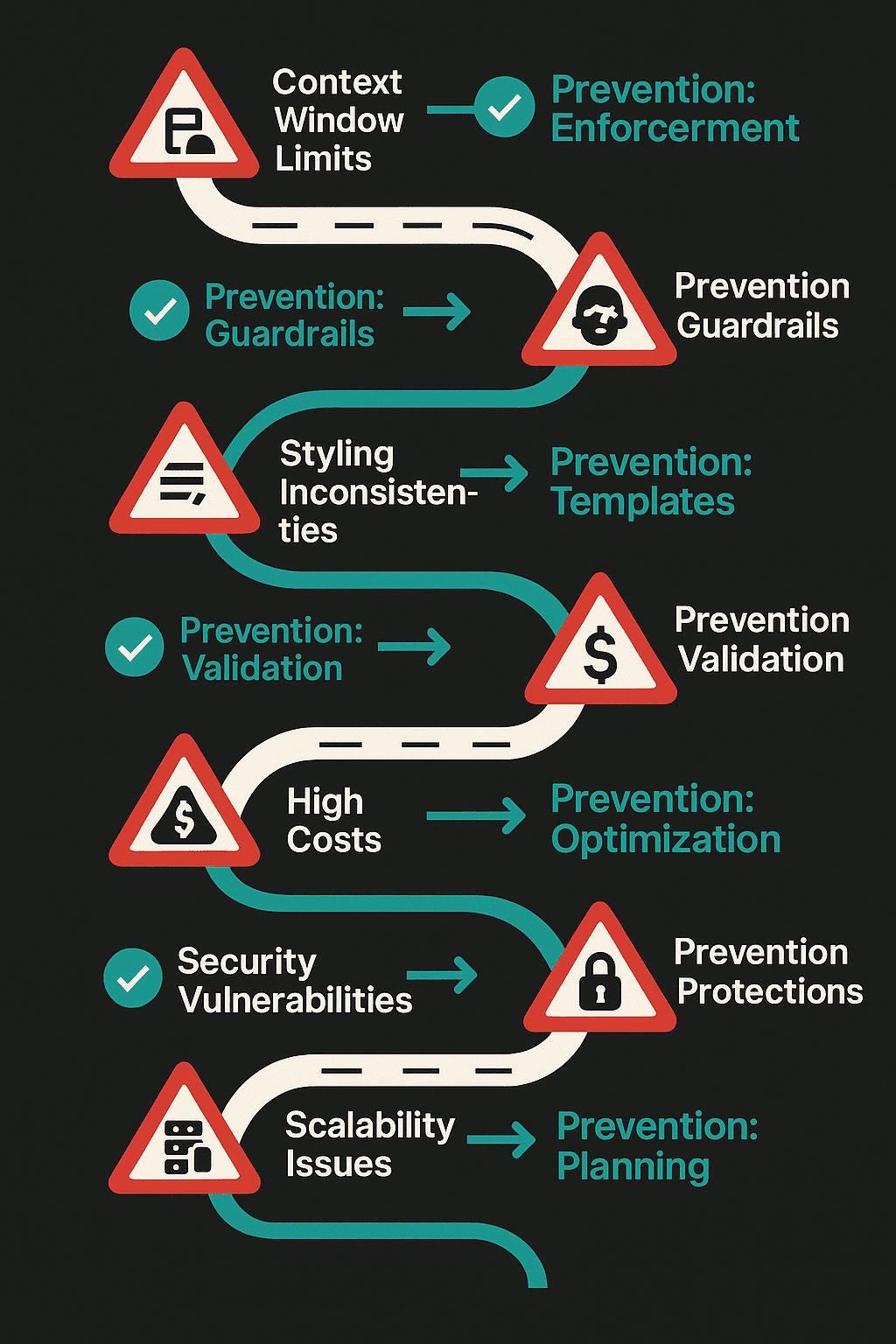

Common Pitfalls and Solutions

Pitfall: Suboptimal Hardware Utilization

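Solution: Profile GPU utilization and memory usage under realistic load, and confirm that your hardware, drivers, and framework versions meet the model's requirements. If the GPUs are consistently saturated or the model does not fit in memory, consider upgrading to more capable compatible GPUs.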

Pitfall: Overlooking Model Fine-Tuning

Solution: Fine-tune ZAYA1-8B on data from your application domain to improve accuracy. Because transfer learning starts from the pretrained weights, it needs far less data and compute than training from scratch.
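One common way to make transfer learning cheap is LoRA (low-rank adaptation): the pretrained weight matrix stays frozen and only a small low-rank update is trained. The article does not say which fine-tuning method to use, so treat this as one option; the sketch below shows a single LoRA-wrapped linear layer in plain Python, with hypothetical dimensions, rank, and scaling.

```python
import random
from typing import List

Matrix = List[List[float]]


def matvec(m: Matrix, v: List[float]) -> List[float]:
    """Matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus a trainable low-rank update B @ A."""

    def __init__(self, weight: Matrix, rank: int = 2, alpha: float = 4.0, seed: int = 0):
        d_out, d_in = len(weight), len(weight[0])
        rng = random.Random(seed)
        self.weight = weight  # pretrained weights: frozen, never updated
        # A: rank x d_in, small random init; B: d_out x rank, zero init,
        # so the wrapped layer initially behaves exactly like the original
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x: List[float]) -> List[float]:
        # h = W x + (alpha / r) * B (A x)
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self) -> int:
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in layer."""
    return rank * (d_in + d_out)


# A hypothetical 4096 x 4096 layer: full fine-tuning vs a rank-8 adapter
print(4096 * 4096, "full")                 # 16777216 full
print(lora_param_count(4096, 4096, 8), "LoRA")  # 65536 LoRA
```

Only `A` and `B` receive gradient updates during fine-tuning, which shrinks optimizer state and lets several task-specific adapters share one copy of the base model.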

The Future of AI with Models Like ZAYA1-8B

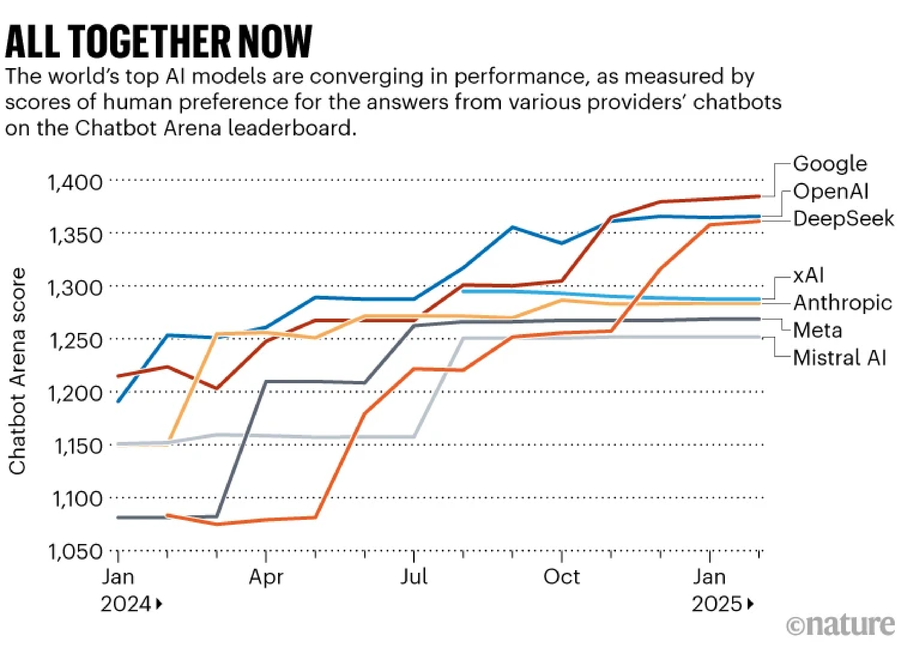

The development of ZAYA1-8B signals a broader shift in the AI industry towards models that prioritize efficiency without compromising performance. As we look to the future, several trends are likely to shape the trajectory of AI model development:

- Hybrid Models: Combining different architectural approaches to balance efficiency and power.

- Sustainability Focus: Increasing emphasis on reducing the carbon footprint of AI operations.

- Wider Accessibility: Open-source models like ZAYA1-8B democratize AI, enabling more organizations to leverage advanced technologies.

Recommendations for Practitioners

- Stay Informed: Keep abreast of the latest developments in AI hardware and software to capitalize on new opportunities.

- Embrace Open Source: Engage with the open-source community to share insights and collaborate on improving model performance.

- Prioritize Scalability: Design systems that can scale efficiently as demand for AI capabilities grows.

Conclusion

ZAYA1-8B represents a significant advancement in the field of AI, offering a pathway to more efficient and accessible machine learning models. By pairing competitive reasoning performance with a modest parameter budget and an open release, it shows how much capability a small model can deliver. As the industry continues to evolve, models like ZAYA1-8B are likely to play a growing role in shaping the future of technology.

FAQ

What is ZAYA1-8B?

ZAYA1-8B is an efficient AI reasoning model with 8 billion parameters, designed to deliver high performance with reduced computational resources.

How is ZAYA1-8B trained?

It's trained on AMD Instinct MI300 GPUs, which provide high throughput and energy efficiency crucial for handling large AI workloads.

What are the advantages of using ZAYA1-8B?

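Its MoE design activates only part of the model for each token, so it needs less computation per token than a dense model of the same total size. That makes it well suited to NLP, recommendation systems, and customer support applications.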

How can ZAYA1-8B be integrated into existing systems?

Confirm that your serving stack supports the model's architecture, provision enough GPU memory for all of its parameters, and use batching and dynamic resource allocation to integrate it efficiently.

What future trends will influence AI models like ZAYA1-8B?

Expect a rise in hybrid models, increased focus on sustainability, and greater accessibility through open-source platforms.

Can ZAYA1-8B be customized for specific tasks?

Yes, it can be fine-tuned using transfer learning techniques to optimize performance for specific applications.

What are the best practices for deploying ZAYA1-8B?

Monitor system performance continuously, optimize resource management, and ensure hardware compatibility for best results.

Key Takeaways

- ZAYA1-8B offers efficient performance with only 8 billion parameters.

- The model is trained using AMD Instinct MI300 GPUs for high throughput.

- Open-source nature allows for customization and widespread accessibility.

- Ideal for applications like NLP, recommendation systems, and customer support.

- Future trends include hybrid models and a focus on sustainability.
