Chrome's 4GB AI Download Controversy: Unpacking User Consent and Privacy Implications [2025]

Google Chrome is at the center of a privacy storm, with allegations that it downloads a hefty 4GB AI file without user consent. This incident raises significant questions about user privacy, consent, and the ethical deployment of AI technologies. In this comprehensive article, we'll explore the technical intricacies of this case, the implications for user privacy, and best practices for developers and companies deploying AI models.

TL;DR

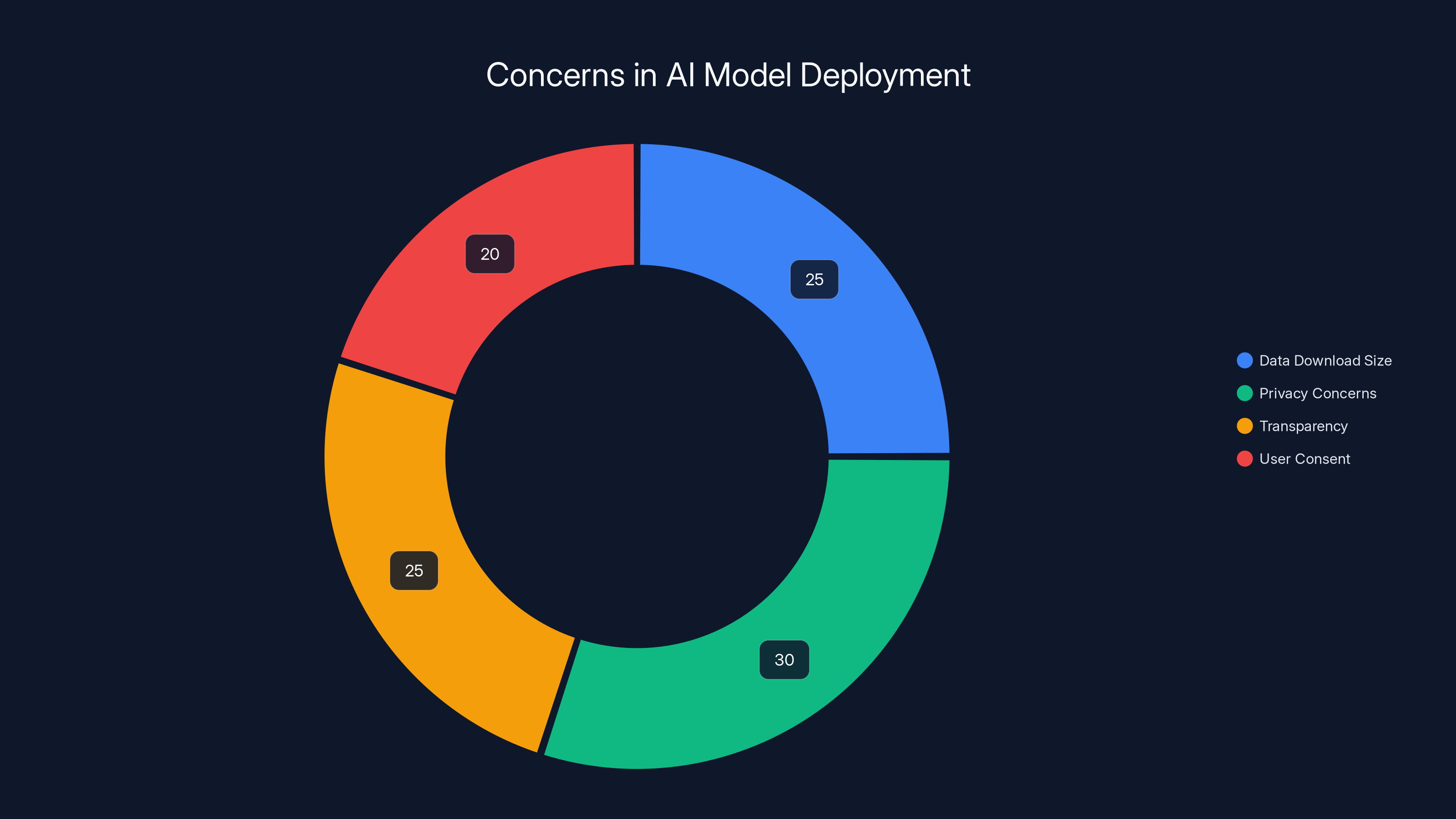

- Key Point 1: Chrome reportedly downloads a 4GB AI file without explicit user consent, as detailed in The Verge's report.

- Key Point 2: The file, named "weights.bin," is linked to Google's Gemini Nano AI model, which is designed to enhance features like predictive text and scam detection, according to FoneArena.

- Key Point 3: Privacy concerns arise over undisclosed data usage, as highlighted in Google's blog.

- Key Point 4: Ethical AI deployment requires transparency and user consent, a sentiment echoed in KPMG's AI insights.

- Bottom Line: This case highlights the need for clear privacy standards in AI integration, as discussed in KPMG's AI assurance report.

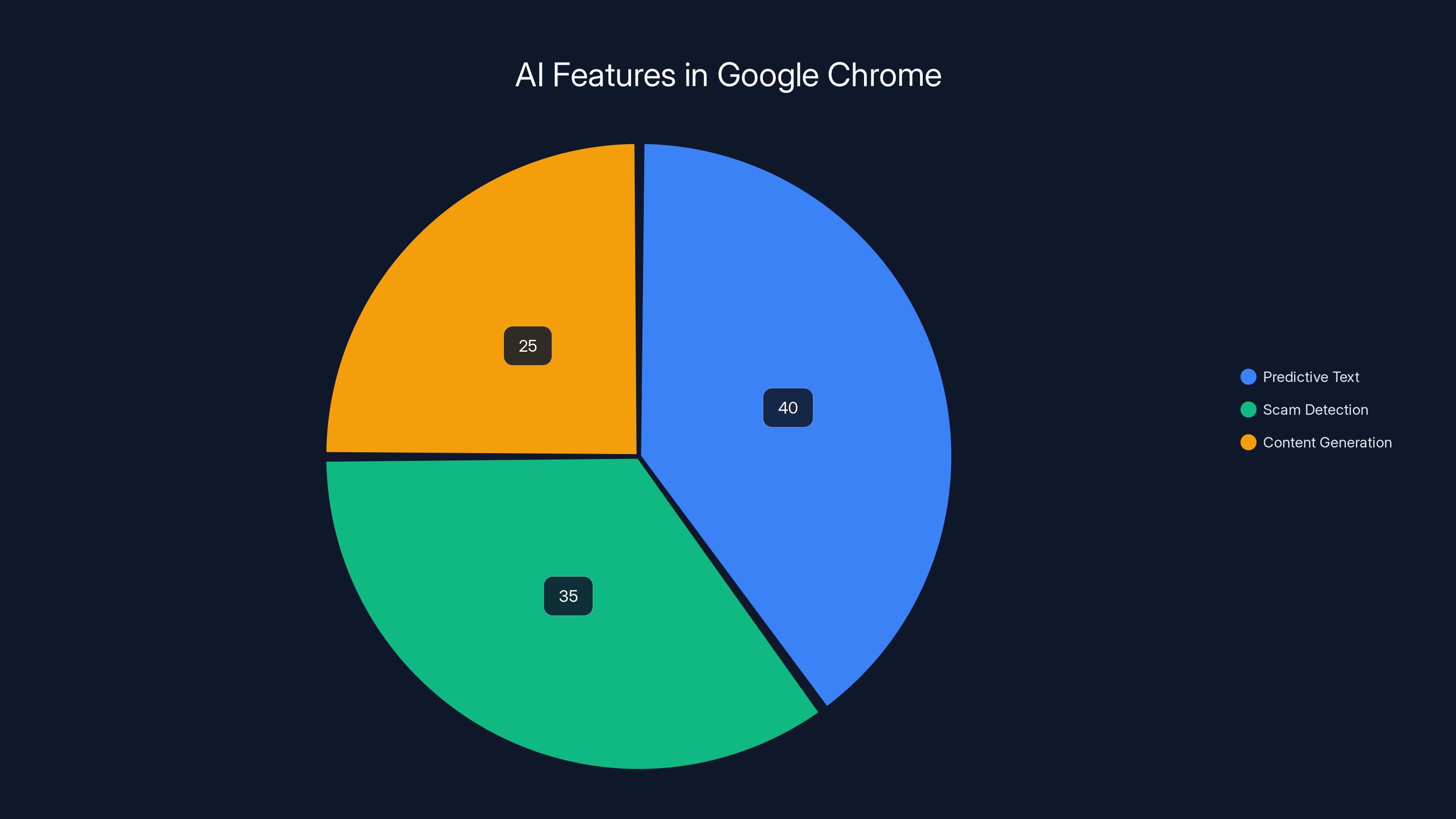

Predictive text is the most used AI feature in Chrome, followed by scam detection and content generation. Estimated data.

Introduction

In a world increasingly reliant on artificial intelligence, privacy and user consent have become paramount. Recently, a computer scientist, Alexander Hanff, brought to light an unsettling discovery: Google Chrome allegedly downloads a 4GB AI file without notifying users. Dubbed "weights.bin," this file is part of Google's Gemini Nano, an on-device language model aimed at enhancing features like "help me write" and scam detection, as reported by Engadget.

The Allegations

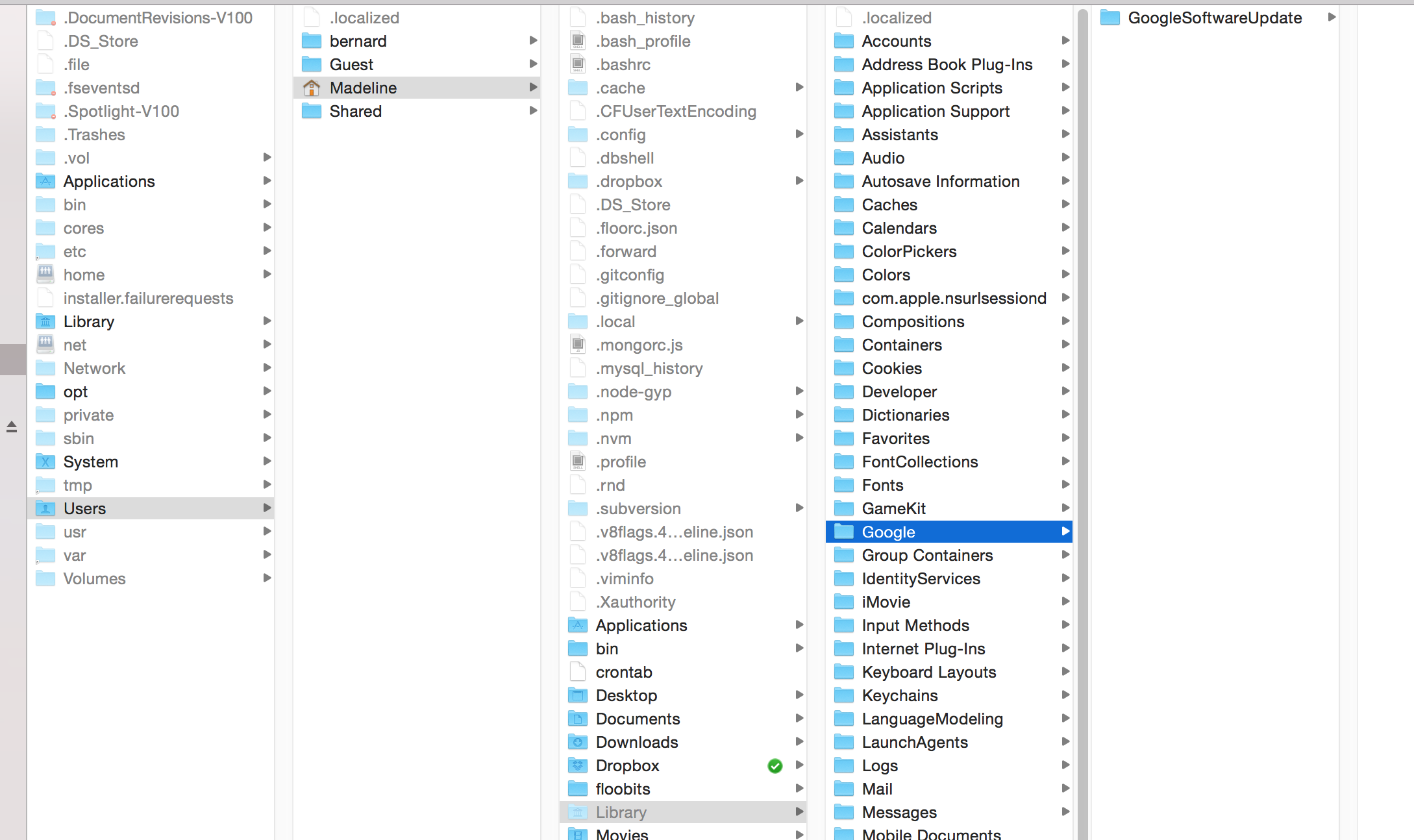

According to Hanff, the file is installed in a hidden directory within the macOS Library, a location that casual users rarely open. The lack of user prompts during this installation process has sparked debate over Google's transparency and respect for user privacy, as noted by Tom's Hardware.

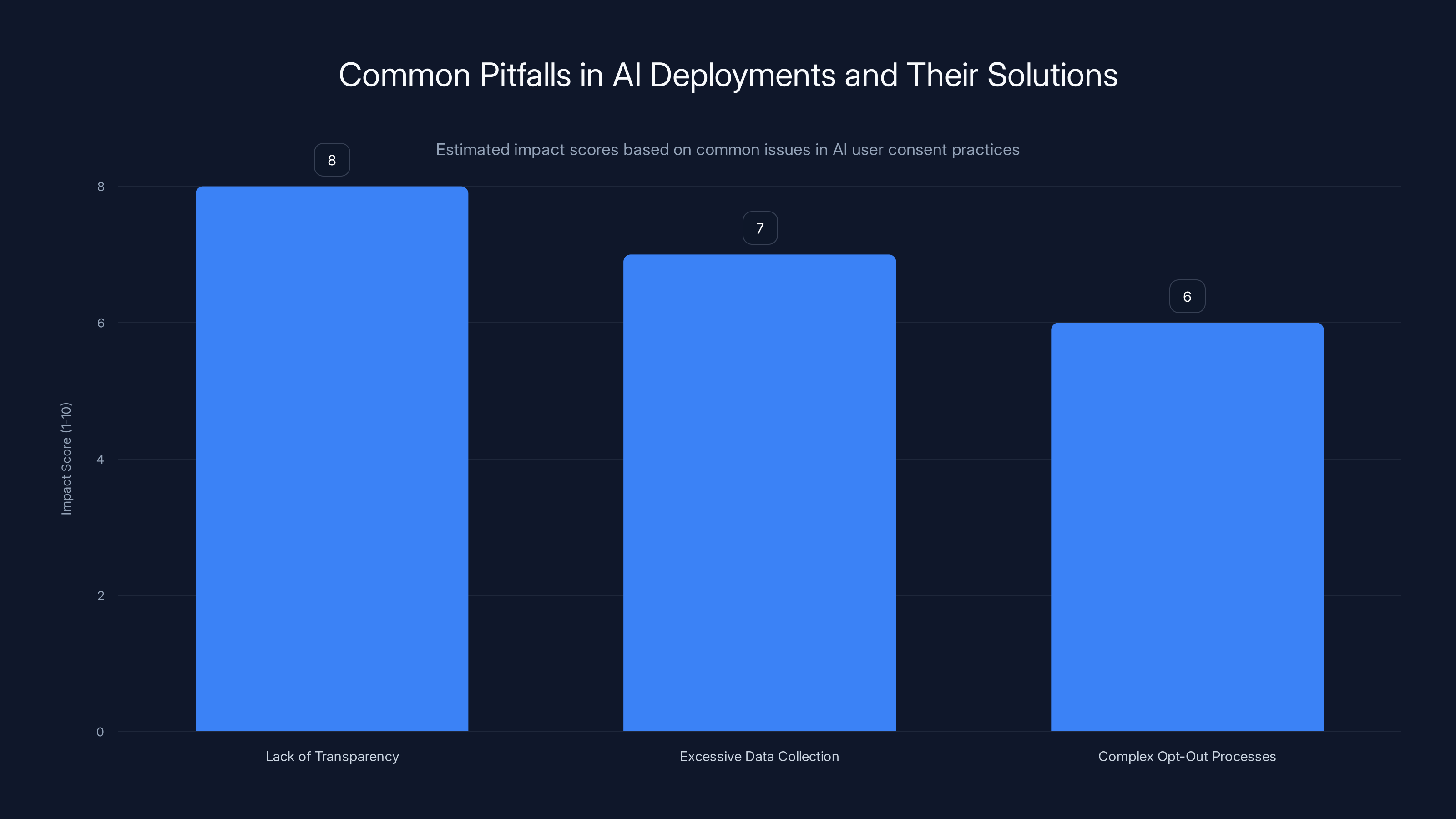

Estimated data shows 'Lack of Transparency' has the highest impact score, indicating it is the most critical issue to address in AI deployments.

Understanding AI Models and Data Files

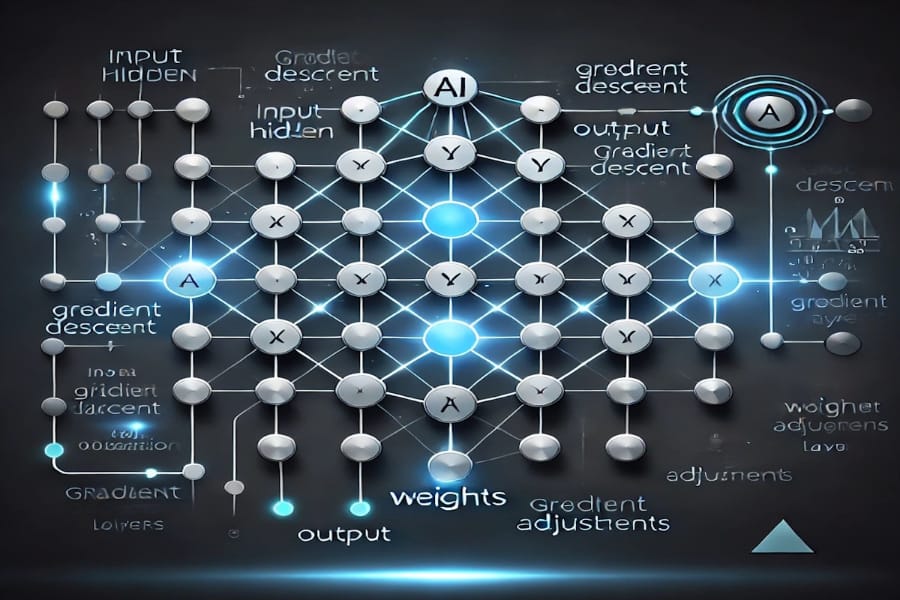

Before diving deeper into the controversy, it's essential to understand the role of AI models and data files like "weights.bin." AI models require data files containing pre-trained weights to function. These weights are essentially the learned parameters that allow the model to make predictions and perform tasks.

What are AI Weights?

AI weights are numerical values that adjust how a model interprets data inputs. They are crucial for the model's performance and are typically large, often ranging from hundreds of megabytes to several gigabytes. Gemini Nano's reported 4GB file falls at the upper end of that range, as explained by The Decoder.
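To make the size range concrete, here is a rough sketch of the arithmetic: file size is approximately the number of parameters multiplied by the storage width of each parameter. The parameter counts and bit widths below are hypothetical values chosen only to illustrate the calculation; they are not published figures for Gemini Nano.

```python
def estimate_weights_size_bytes(num_parameters: int, bits_per_parameter: int) -> int:
    """Return the approximate size of a weights file in bytes.

    Ignores file-format overhead such as headers or metadata.
    """
    if num_parameters < 0 or bits_per_parameter <= 0:
        raise ValueError("parameter count must be >= 0 and bit width must be > 0")
    total_bits = num_parameters * bits_per_parameter
    return (total_bits + 7) // 8  # round up to whole bytes


def to_gigabytes(size_bytes: int) -> float:
    """Convert bytes to decimal gigabytes (1 GB = 10**9 bytes)."""
    return size_bytes / 10**9


# Hypothetical configurations, chosen only to show the arithmetic.
for params, bits in [(1_000_000_000, 32), (2_000_000_000, 16), (8_000_000_000, 4)]:
    size_gb = to_gigabytes(estimate_weights_size_bytes(params, bits))
    print(f"{params:,} parameters at {bits}-bit: about {size_gb:.1f} GB")
```

All three illustrative configurations come out at about 4 GB, which shows why a file size alone does not reveal how many parameters a model has: the storage precision matters just as much.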

The Technical Breakdown: Chrome's AI Download

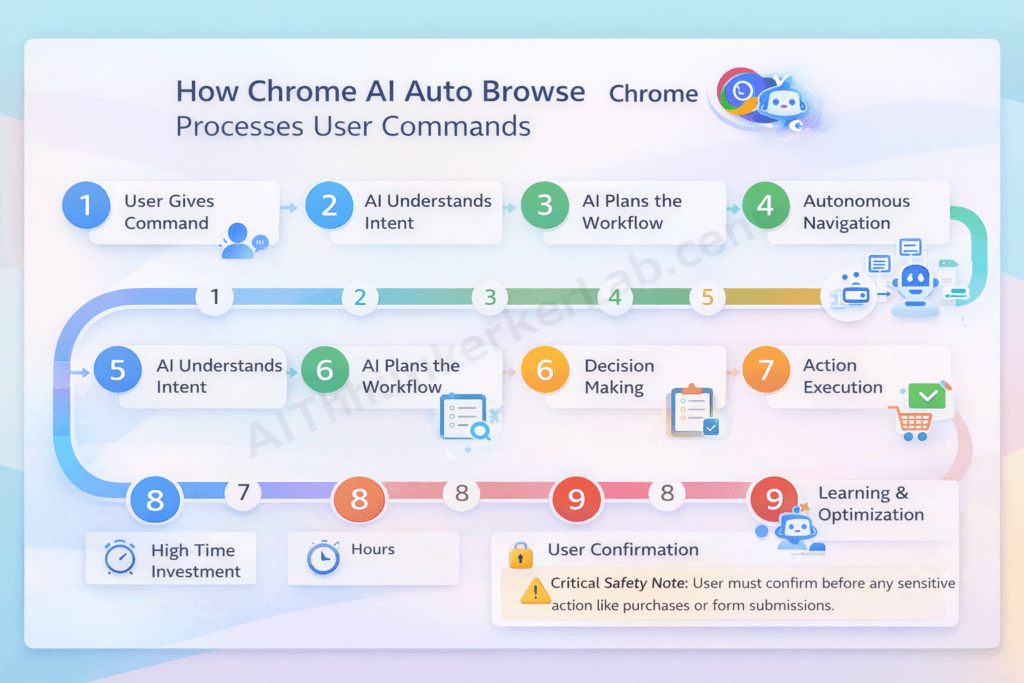

How Does AI Integration Work in Chrome?

Google Chrome has integrated AI features designed to improve user experience. These include predictive text, scam detection, and content generation. To enable these features, Chrome must access AI models stored locally on the device. This is where the 4GB "weights.bin" file comes into play, as detailed in Cisco's blog.

Implementation Details

- File Location: The file is reportedly stored in a hidden directory within the macOS Library.

- Purpose: Provides necessary data for Gemini Nano's functionality.

- Automatic Download: Allegedly downloaded without user consent, as reported by The Verge.
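Readers who want to check their own machine could use something like the sketch below, which walks a directory tree and reports any file with a given name along with its size. The exact location of the file is not given in the reports cited here, so the search root is a parameter supplied by the caller; the function name `find_files_named` is made up for this example.

```python
import os
from pathlib import Path


def find_files_named(root: Path, filename: str = "weights.bin") -> list[tuple[Path, int]]:
    """Return (path, size_in_bytes) for every file called `filename` under `root`.

    Directories that cannot be read (for example, due to permissions) are skipped.
    """
    matches = []
    # onerror swallows directory-listing errors so the walk keeps going.
    for dirpath, _dirnames, filenames in os.walk(root, onerror=lambda err: None):
        if filename in filenames:
            candidate = Path(dirpath) / filename
            try:
                matches.append((candidate, candidate.stat().st_size))
            except OSError:
                continue  # file vanished or is unreadable; skip it
    return matches


# Example usage (assumption: searching the current user's Library folder on macOS):
#   for path, size in find_files_named(Path.home() / "Library"):
#       print(f"{path}  {size / 10**9:.2f} GB")
```

Scanning a large folder can take a while, and a match only shows that a file with that name exists; it does not by itself confirm what the file contains or which application created it.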

Privacy Concerns and User Consent

The core issue here is consent, or the lack of it. Users were reportedly not informed about the download or given a choice to opt in. This lack of transparency is concerning, especially given the size of the file and its potential implications for device storage, performance, and data privacy, as highlighted by CHCF.

Ethical Implications

- User Consent: Essential for any software installation, especially one involving large data files.

- Transparency: Users should be informed about what is being installed and why.

- Privacy Risks: Undisclosed installations can lead to distrust and potential misuse of personal data, as discussed in Nature.

Privacy concerns and transparency are major issues in AI deployment, with significant attention on undisclosed data usage and user consent. (Estimated data)

Practical Implementation Guides for Developers

Ensuring User Consent in AI Deployments

For developers and companies, ensuring user consent is crucial when integrating AI models into applications. Here are some best practices:

- Clear Notifications: Inform users about what will be downloaded and why.

- Opt-In Mechanisms: Allow users to choose whether they want to download the AI file.

- Detailed Privacy Policies: Clearly outline how data will be used and stored, as recommended by KPMG.
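The first two practices can be combined into a single gate in front of the download. The following is a minimal sketch, not a description of how Chrome or any real product works: `DownloadRequest`, `describe`, and `download_with_consent` are hypothetical names, and the prompt and the download itself are passed in as callables so the application can supply its own UI and network code.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DownloadRequest:
    """What the user is being asked to approve (hypothetical structure)."""
    file_name: str
    size_bytes: int
    purpose: str


def describe(request: DownloadRequest) -> str:
    """Build the notification text: what will be downloaded, how big, and why."""
    size_gb = request.size_bytes / 10**9
    return (
        f"Download {request.file_name} (about {size_gb:.1f} GB)? "
        f"Purpose: {request.purpose}"
    )


def download_with_consent(
    request: DownloadRequest,
    ask_user: Callable[[str], bool],
    start_download: Callable[[DownloadRequest], None],
) -> bool:
    """Start the download only if the user explicitly agrees.

    Returns True if the download was started, False if the user declined.
    """
    if not ask_user(describe(request)):
        return False  # opt-in: no answer or "no" means nothing is fetched
    start_download(request)
    return True
```

The design choice worth noting is that the default path does nothing: the download function is unreachable unless `ask_user` returns True, which is what distinguishes opt-in from opt-out.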

Common Pitfalls and Solutions

- Lack of Transparency: Implement clear communication strategies and user education.

- Excessive Data Collection: Collect only necessary data and provide users with control over their data.

- Complex Opt-Out Processes: Simplify opt-out mechanisms to enhance user control.
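As one illustration of a simple opt-out, the sketch below turns the feature off and removes the downloaded model in a single call. It assumes a hypothetical application that keeps its preferences in a JSON file; the setting name `on_device_ai_enabled` and the function `opt_out` are invented for this example and do not correspond to any real Chrome setting.

```python
import json
from pathlib import Path


def opt_out(settings_path: Path, model_path: Path) -> dict:
    """Disable the AI feature and delete the local model file in one step.

    Returns the updated settings. Safe to call more than once.
    """
    settings = {}
    if settings_path.exists():
        settings = json.loads(settings_path.read_text(encoding="utf-8"))

    # Record the user's choice so the file is not fetched again later.
    settings["on_device_ai_enabled"] = False
    settings_path.write_text(json.dumps(settings, indent=2), encoding="utf-8")

    # Reclaim the disk space; missing_ok avoids an error if it is already gone.
    model_path.unlink(missing_ok=True)
    return settings
```

Persisting the choice before deleting the file matters here: if only the file were removed, an application that downloads automatically could simply fetch it again.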

Future Trends and Recommendations

The Evolution of AI and Privacy

As AI continues to evolve, so too must our approach to privacy and consent. Here are some trends and recommendations for the future:

- Decentralized AI Models: By running models locally, companies can reduce data transmission risks.

- Enhanced User Control: Users should have the ability to manage AI features and their associated data easily.

- Regulatory Compliance: Adhering to regulations like GDPR ensures user rights are protected, as emphasized by KPMG's insights.

Industry Best Practices

- Regular Audits: Conduct audits to ensure compliance with privacy standards.

- User Education: Educate users on AI functionalities and privacy implications.

- Collaborative Development: Work with privacy experts to develop ethical AI solutions.

Conclusion

The controversy surrounding Chrome's 4GB AI file download underscores the importance of transparency, user consent, and ethical AI deployment. As AI becomes more integrated into our daily lives, companies must prioritize user privacy and trust. By adopting best practices and staying ahead of privacy trends, developers can create AI solutions that respect user rights and foster trust.

FAQ

What is the "weights.bin" file?

The "weights.bin" file is a 4GB file associated with Google's Gemini Nano AI model, designed to enhance features like text prediction and scam detection in Chrome, as noted by FoneArena.

How does Gemini Nano work?

Gemini Nano is an on-device language model that processes data locally to provide AI-powered features without the need for constant internet connectivity, as explained by The Verge.

What are the concerns around Chrome's AI download?

The primary concerns are the lack of user consent and transparency regarding the download and installation of the "weights.bin" file, as reported by Engadget.

How can developers ensure user privacy?

Developers can ensure privacy by implementing clear notifications, opt-in mechanisms, and detailed privacy policies outlining data usage, as recommended by KPMG.

What are the future trends in AI privacy?

Future trends include decentralized AI models, enhanced user control, and adherence to regulatory standards to protect user privacy, as discussed in KPMG's AI insights.

How can users protect their privacy?

Users can protect their privacy by staying informed about software updates, reviewing privacy policies, and adjusting settings to control data usage, as advised by Cisco.

What role do regulations play in AI deployment?

Regulations like GDPR play a crucial role in protecting user rights by setting standards for data usage, consent, and transparency, as emphasized by KPMG.

How can companies build trust with users?

Companies can build trust by prioritizing transparency, user consent, and ethical AI deployment practices, as recommended by KPMG.

Key Takeaways

- Chrome's 4GB AI file download raises significant privacy concerns, as noted in Engadget's report.

- User consent is crucial for ethical AI deployment, as emphasized by KPMG.

- Transparency and clear communication are essential in AI integrations, as discussed in KPMG's AI assurance report.

- Future AI trends emphasize user control and decentralized models, as highlighted by KPMG.

- Regulatory compliance ensures protection of user rights and data privacy, as noted by KPMG.
