DeepSeek V4: The AI Model Revolutionizing Large Language Models [2025]

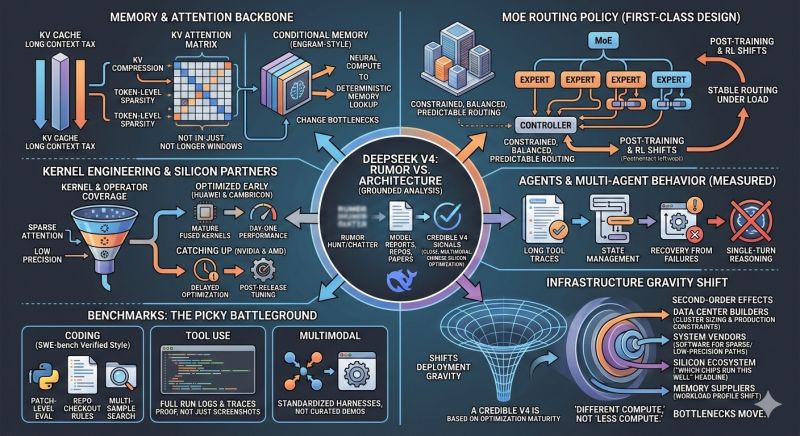

In the fast-evolving landscape of artificial intelligence, DeepSeek's latest release, the V4 model, is making waves. It pairs a mixture-of-experts architecture with a very large total parameter count while activating only a small share of those parameters at a time. Let's look at how DeepSeek V4 is closing the gap with frontier models and what this means for the future of AI.

TL;DR

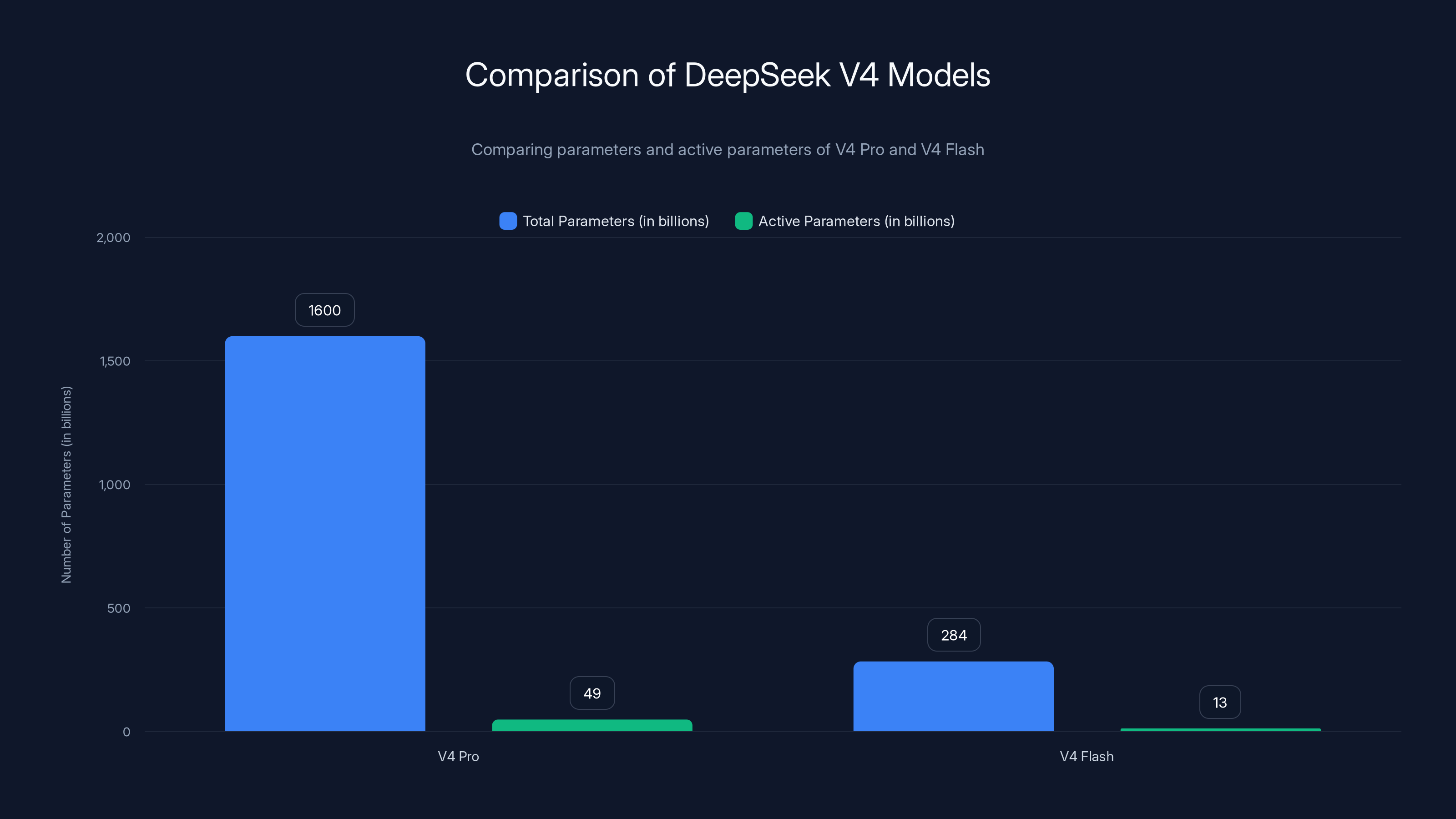

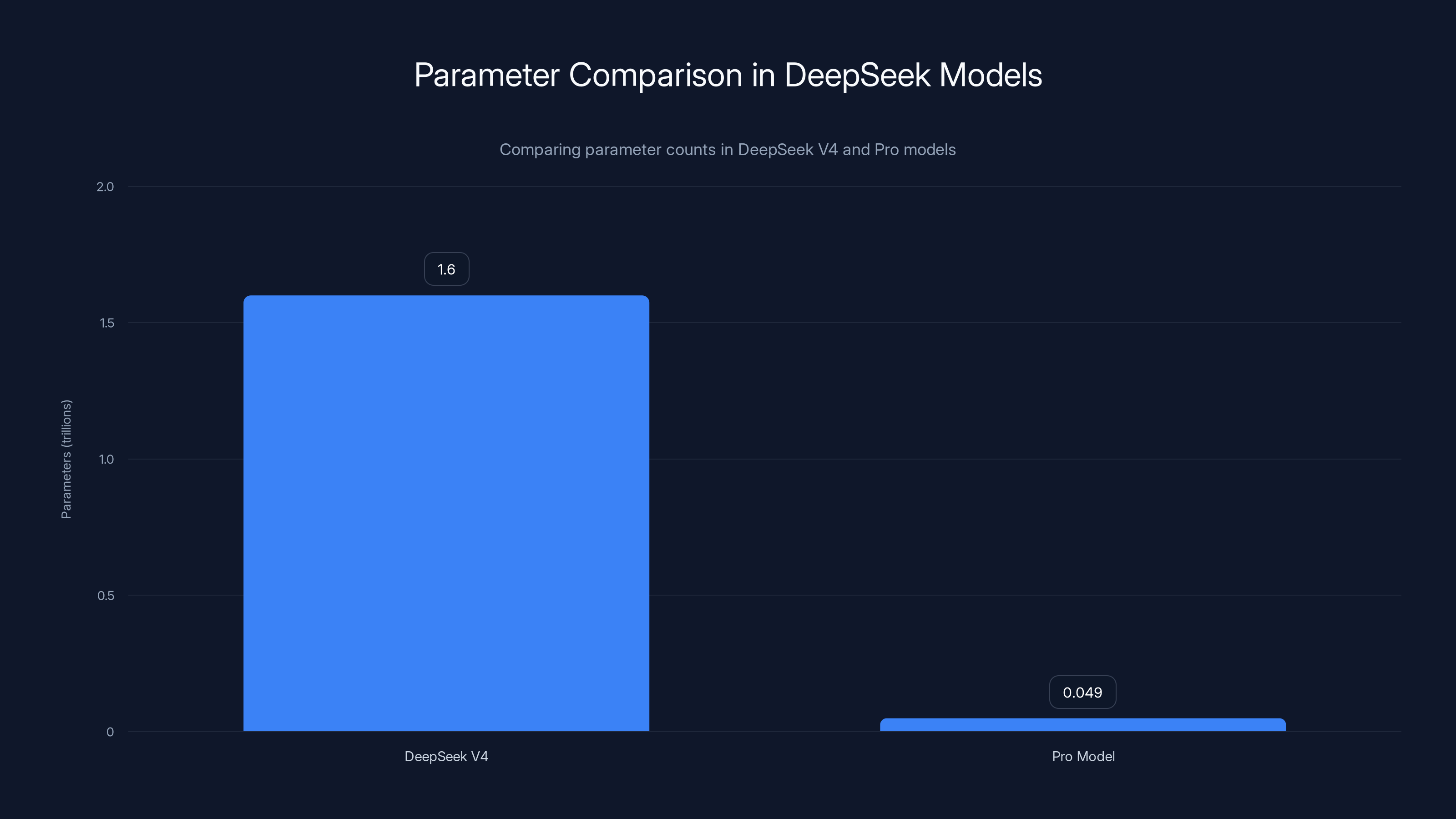

- DeepSeek V4 is a mixture-of-experts model family whose Pro variant has 1.6 trillion total parameters.

- V4 Pro activates only 49 billion of those parameters at a time, keeping compute cost well below what its total size suggests.

- Both V4 Flash and Pro offer 1 million-token context windows, ideal for large datasets.

- DeepSeek's advancements make AI more accessible and cost-effective for diverse industries.

- The future of AI lies in scalable, efficient models that can handle complex tasks with ease.

DeepSeek V4 Pro has significantly more total parameters than V4 Flash, but both models use a fraction of their parameters actively to optimize resource efficiency.

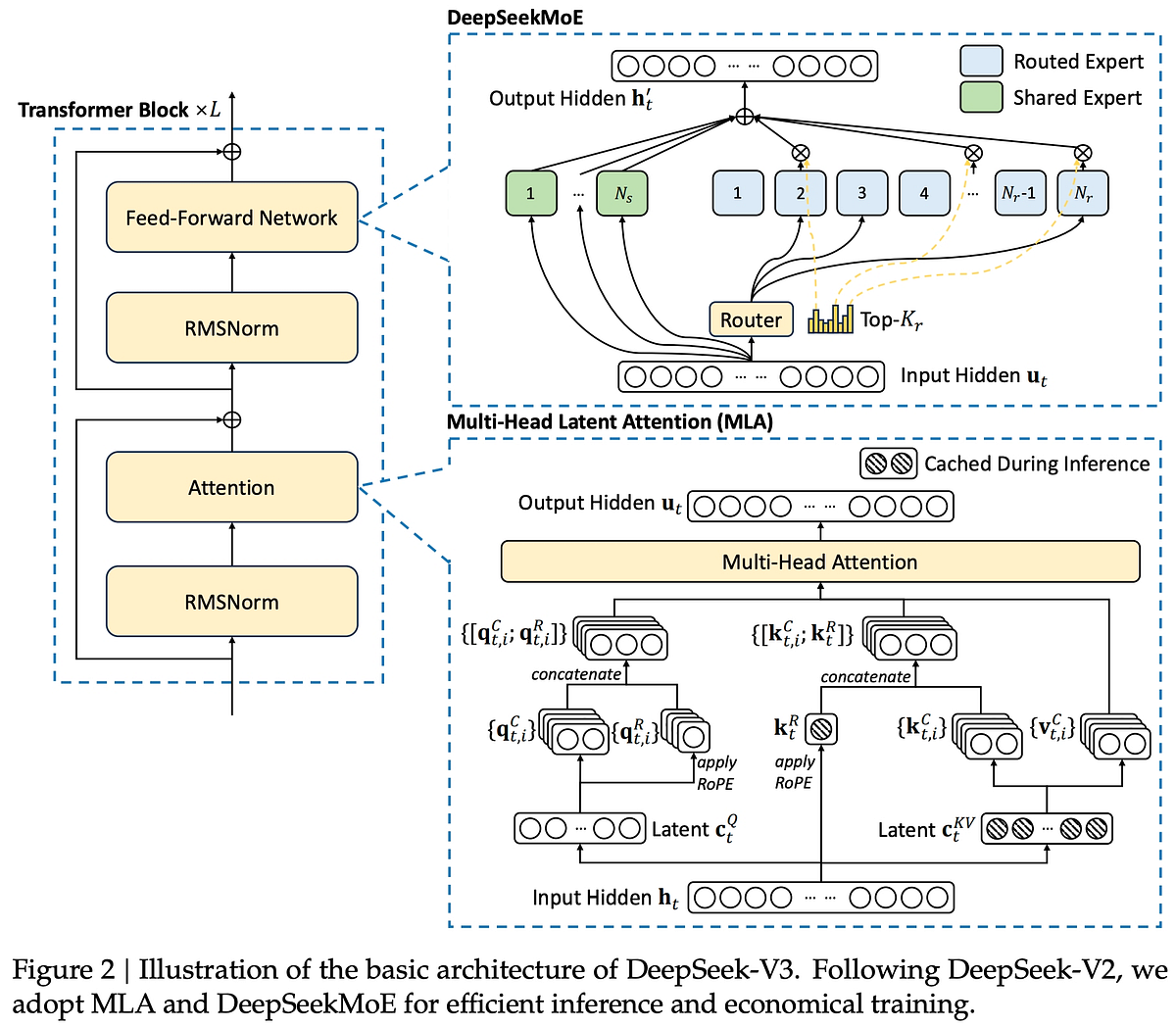

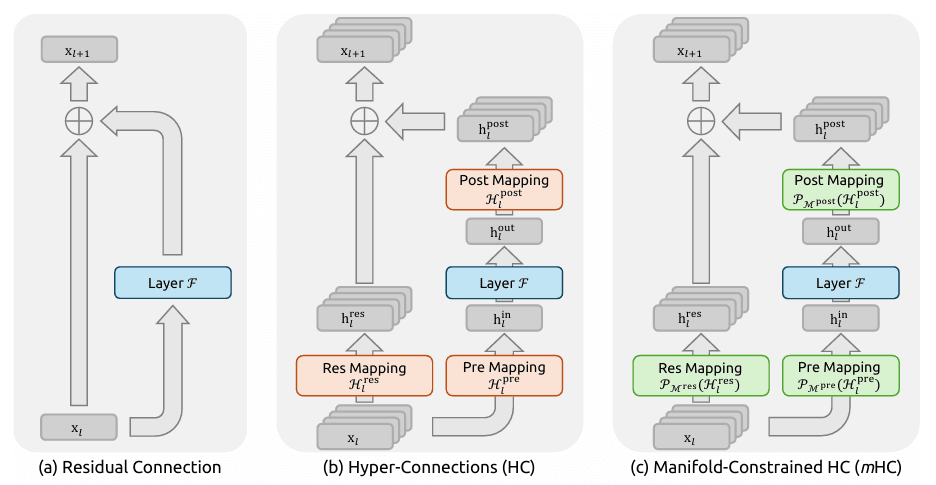

The Rise of Mixture-of-Experts Models

DeepSeek's new V4 model uses a mixture-of-experts framework, which activates only a subset of its parameters for any given input. This reduces the computational cost of each request while leaving a large pool of capacity available for diverse and complex tasks.

What is a Mixture-of-Experts Model?

A mixture-of-experts (MoE) model splits much of its capacity into separate sub-networks called experts, and a routing component selects only a few of them for each input (in most MoE designs, for each token). The model can therefore hold a massive total number of parameters while only the selected portion does any work on a given input.
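To make the routing idea concrete, here is a minimal sketch of top-k gating in plain Python. It is a toy illustration, not DeepSeek's actual router: the expert functions, the number of experts, and the choice of `k=2` are all assumptions made for the example.

```python
import math
import random

def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    weight_sum = sum(probs[i] for i in top)
    return {i: probs[i] / weight_sum for i in top}

def moe_layer(x, experts, router_scores, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = route_top_k(router_scores, k)
    return sum(weight * experts[i](x) for i, weight in gates.items())

# Hypothetical toy experts: each one just scales its input.
NUM_EXPERTS = 8
experts = [lambda x, scale=s: scale * x for s in range(1, NUM_EXPERTS + 1)]

# Random scores stand in for what a learned router would produce.
random.seed(0)
scores = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
gates = route_top_k(scores, k=2)
output = moe_layer(3.0, experts, scores, k=2)
print(f"experts used: {sorted(gates)} of {NUM_EXPERTS}")
print(f"output: {output:.3f}")
```

Only two of the eight experts run for this input; the other six are never called, which is where the compute saving comes from.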

Benefits of MoE Models:

- Efficiency: Reduces computational overhead by utilizing only necessary parameters.

- Scalability: Supports larger models without a proportional increase in resource demands.

- Task Specialization: Allows for more specialized responses by leveraging different parameter subsets.

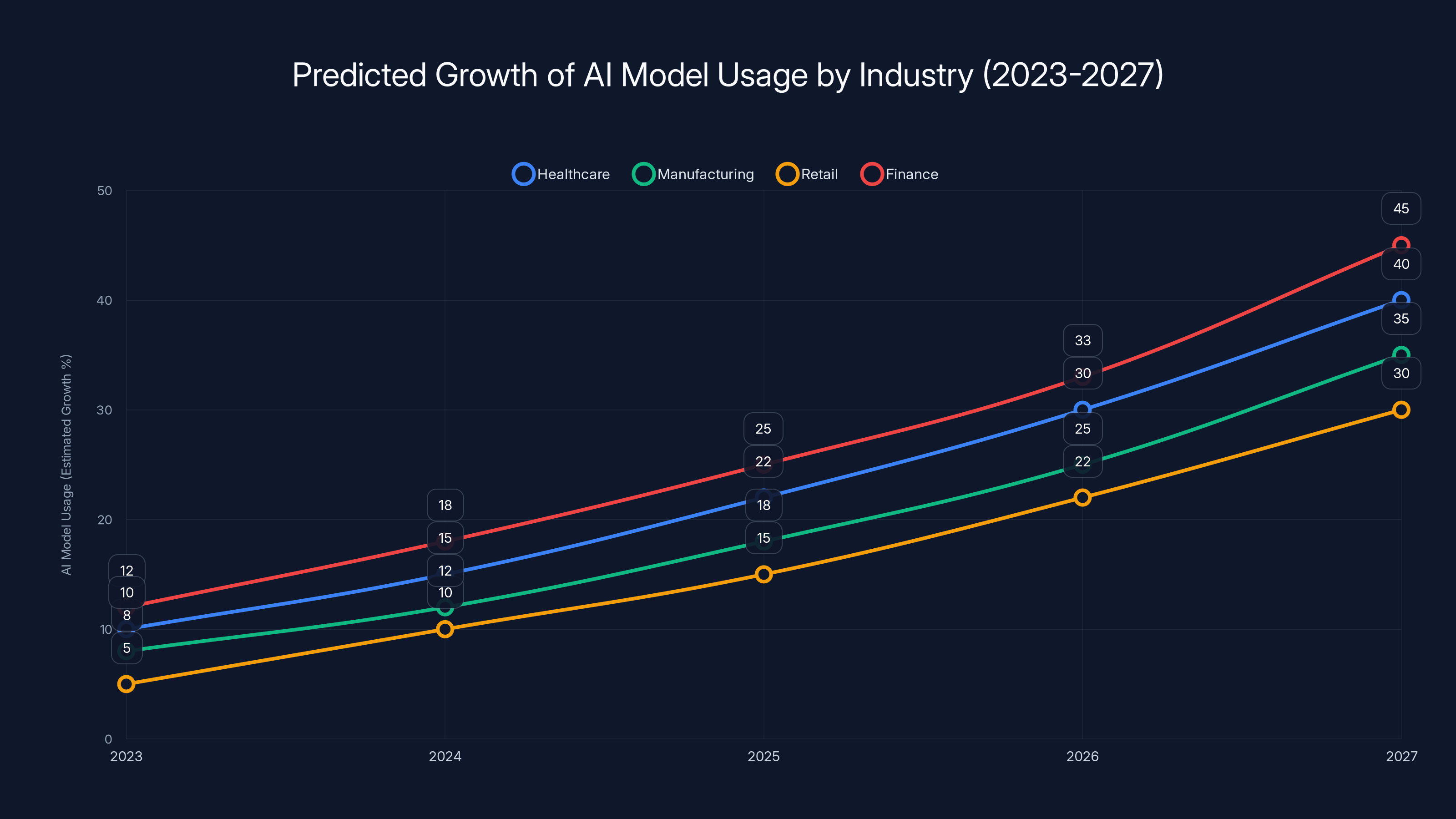

Projected growth in AI model usage across industries shows significant increases, particularly in finance and healthcare, by 2027. Estimated data based on current trends.

Inside Deep Seek V4: A Technical Dive

DeepSeek V4 comes in two versions: V4 Flash and V4 Pro. Both are designed to push the boundaries of what's achievable with AI models today.

DeepSeek V4 Pro

With 1.6 trillion total parameters, DeepSeek V4 Pro is the largest open-weight model to date. Despite its size, it uses only 49 billion active parameters at a time, which keeps its compute requirements far below those of a dense model of the same size.
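One practical consequence is that memory and compute scale with different numbers: all 1.6 trillion parameters have to be stored, even though only 49 billion are used at a time. The sketch below estimates weight storage under a few assumed precisions. The byte widths are assumptions, since the article does not state how the weights are stored, and the figures ignore activations and the attention cache.

```python
TOTAL_PARAMS = 1.6e12   # V4 Pro total parameters, from the article
ACTIVE_PARAMS = 49e9    # V4 Pro active parameters, from the article

# Assumed storage precisions; the article does not say which one is used.
BYTES_PER_PARAM = {"fp16/bf16": 2, "fp8/int8": 1, "4-bit": 0.5}

def weight_memory_gb(num_params, bytes_per_param):
    """Memory for weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9

for name, width in BYTES_PER_PARAM.items():
    stored = weight_memory_gb(TOTAL_PARAMS, width)
    touched = weight_memory_gb(ACTIVE_PARAMS, width)
    print(f"{name:>9}: store {stored:,.0f} GB, read {touched:,.1f} GB of active weights")
```

Even at an assumed one byte per parameter, the full model needs on the order of 1,600 GB for weights alone, so the saving from sparse activation shows up in compute rather than in storage.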

Key Features:

- 1 Million Tokens Context Window: Ideal for handling large datasets such as codebases or extensive documents.

- Architectural Innovations: Improved efficiency and performance over previous models.

DeepSeek V4 Flash

The V4 Flash variant, with 284 billion total parameters of which 13 billion are active, offers a more compact alternative for those needing robust AI capabilities without the full scale of V4 Pro.
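The parameter counts quoted for the two variants can be turned into a quick comparison. This short calculation uses only the figures given in the article; the dictionary layout and function name are illustrative.

```python
# Parameter counts quoted in the article.
MODELS = {
    "V4 Pro": {"total": 1.6e12, "active": 49e9},
    "V4 Flash": {"total": 284e9, "active": 13e9},
}

def active_fraction(total, active):
    """Share of all parameters that participate at one time."""
    return active / total

for name, p in MODELS.items():
    share = active_fraction(p["total"], p["active"])
    print(f"{name}: {share:.1%} of parameters active")

ratio = MODELS["V4 Pro"]["active"] / MODELS["V4 Flash"]["active"]
print(f"Pro activates about {ratio:.1f}x as many parameters as Flash")
```

Both variants activate only a few percent of their weights at a time (roughly 3% for Pro and 4.6% for Flash), and Pro does a little under four times as much work per step as Flash.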

Use Cases:

- Suitable for applications that need efficient inference more than the full capacity of V4 Pro.

- Ideal for industries with computational resource constraints.

Real-World Applications of DeepSeek V4

DeepSeek V4's capabilities expand the possibilities for AI across various sectors. Here are a few scenarios where V4 can make a significant impact:

1. Healthcare and Diagnostics

With its extensive context window, DeepSeek V4 could analyze large volumes of medical data in a single pass to help identify patterns and predict patient outcomes. According to Manatt Health, AI's role in healthcare is rapidly expanding, and long-context models like V4 fit that trend.

2. Financial Services

In finance, where quick decision-making based on large datasets is crucial, V4 can streamline processes and enhance predictive analytics. The financial sector is increasingly adopting AI for its efficiency and accuracy.

3. Autonomous Systems

For autonomous vehicles and robotics, V4's ability to process and react to real-time data inputs makes it an invaluable tool.

DeepSeek V4 Pro's mixture-of-experts design holds 1.6 trillion total parameters but activates only 49 billion of them at a time.

Technical Implementation and Best Practices

Deploying DeepSeek V4 efficiently starts with understanding its architecture and choosing the variant that matches your workload.

Key Steps for Implementation

- Understand Your Needs: Assess if your application requires the full scale of V4 Pro or if V4 Flash suffices.

- Optimize Resource Allocation: Plan compute around the active parameter count and memory around the total parameter count, since every expert must be available even though only some run for a given input.

- Leverage Context Windows: Utilize the extensive token windows for applications involving large datasets.
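For the context-window step, a rough pre-flight check can tell you whether a batch of documents is likely to fit before you send it. The sketch below assumes about four characters per token, which is only a rule of thumb and varies by tokenizer and language, and it reserves a hypothetical 8,000 tokens for the model's reply.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # context size quoted in the article
CHARS_PER_TOKEN = 4                # rough assumption; real tokenizers vary

def estimate_tokens(text):
    """Crude token estimate from character count."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division

def fits_in_context(documents, reserved_for_output=8_000):
    """Return (fits, estimated_tokens) for a batch of documents."""
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_output
    used = sum(estimate_tokens(doc) for doc in documents)
    return used <= budget, used

# Hypothetical input: three source files of about 200k characters each.
docs = ["x" * 200_000 for _ in range(3)]
ok, used = fits_in_context(docs)
print(f"estimated tokens: {used:,}; fits: {ok}")
```

For anything close to the limit, count tokens with the model's real tokenizer instead of relying on this estimate.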

Common Pitfalls and Solutions

Pitfall 1: Over-reliance on Active Parameters

- Solution: Regularly evaluate the model across your different tasks rather than assuming the active parameter count predicts quality on all of them.
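A per-task evaluation loop does not need to be elaborate. The sketch below scores any callable that maps a prompt to an answer and flags tasks that fall under a threshold. The `fake_model` function, the example prompts, and the 0.8 threshold are all hypothetical stand-ins; swap in your own model client and test set.

```python
from collections import defaultdict

def evaluate_by_task(model, examples):
    """Return accuracy per task for a callable model(prompt) -> answer."""
    correct = defaultdict(int)
    seen = defaultdict(int)
    for task, prompt, expected in examples:
        seen[task] += 1
        if model(prompt).strip() == expected:
            correct[task] += 1
    return {task: correct[task] / seen[task] for task in seen}

def weak_tasks(scores, threshold=0.8):
    """List tasks whose accuracy falls below the threshold."""
    return sorted(task for task, acc in scores.items() if acc < threshold)

# Hypothetical stand-in for a real model call; swap in your own client.
def fake_model(prompt):
    canned = {"2+2": "4", "capital of France": "Paris", "3*3": "6"}
    return canned.get(prompt, "")

examples = [
    ("math", "2+2", "4"),
    ("math", "3*3", "9"),
    ("geography", "capital of France", "Paris"),
]
scores = evaluate_by_task(fake_model, examples)
print(scores)
print("needs attention:", weak_tasks(scores))
```

Running the same examples against both V4 Flash and V4 Pro would show whether the smaller variant is good enough for each task.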

Pitfall 2: Underutilized Context Windows

- Solution: Where a task spans many files or long documents, pass the related material together within the 1 million-token window instead of splitting it into isolated chunks.

Future Trends and Recommendations

The advancements in models like DeepSeek V4 indicate a shift towards more efficient and scalable AI systems. Here are some trends to watch:

- Increased Accessibility: As models become more efficient, AI will become accessible to a broader range of industries.

- Focus on Specificity: Future models will likely incorporate even more specialized parameter activations for niche applications.

- Blending AI with IoT: The integration of AI models with IoT devices will enhance real-time data processing capabilities.

Conclusion

DeepSeek V4 is not just another AI model; it's a significant leap forward in how AI can be implemented and utilized across sectors. By closing the gap with frontier models through its mixture-of-experts approach, DeepSeek is setting a new standard for efficiency and performance. As these technologies continue to evolve, we can expect even greater integration and innovation in the AI landscape.


FAQ

What is DeepSeek V4?

DeepSeek V4 is a large language model built on a mixture-of-experts approach, designed to handle complex tasks efficiently by activating only a subset of its parameters at a time.

How does the mixture-of-experts model work?

A mixture-of-experts model engages only a subset of its total parameters for each input, which reduces compute per request and lets different parts of the model specialize.

What are the benefits of using DeepSeek V4?

Benefits include improved efficiency, scalability to handle large datasets, and specialized task handling due to its mixture-of-experts architecture.

Who can benefit from DeepSeek V4?

Industries such as healthcare, finance, and autonomous systems can benefit from DeepSeek V4's advanced data processing and predictive capabilities.

How does DeepSeek V4 differ from previous models?

V4 introduces architectural improvements with a larger parameter set and enhanced efficiency through its mixture-of-experts framework.

What are the potential challenges with DeepSeek V4?

Challenges include ensuring optimal parameter activation and fully utilizing its extensive context windows for maximum data analysis.

Key Takeaways

- DeepSeek V4 models utilize a mixture-of-experts approach, activating only necessary parameters for each task.

- The V4 Pro model boasts 1.6 trillion parameters, optimizing efficiency with only 49 billion active at any time.

- Both V4 Flash and Pro have 1 million-token context windows, ideal for large datasets.

- DeepSeek V4 advancements enable more accessible and cost-effective AI applications across industries.

- Future AI models will focus on increased accessibility and specificity, blending with IoT for enhanced real-time data processing.
