AI Models: The New Generation of Scammers [2025]

Last month, I got an email that stopped me in my tracks. It was a pitch about decentralized machine learning, a field that’s been my bread and butter for years. The sender seemed to know my interests intimately, and the message was tailored to lure me into a conversation. Here's the kicker: it was all generated by an AI.

TL;DR

- Advanced AI scams are targeting professionals using sophisticated language models.

- AI models can analyze personal data to craft personalized phishing messages.

- Human-like interactions make detection difficult, increasing the risk of falling for scams.

- AI-driven scams are evolving faster than traditional cybersecurity measures.

- Stay informed and cautious to protect yourself from these AI threats.

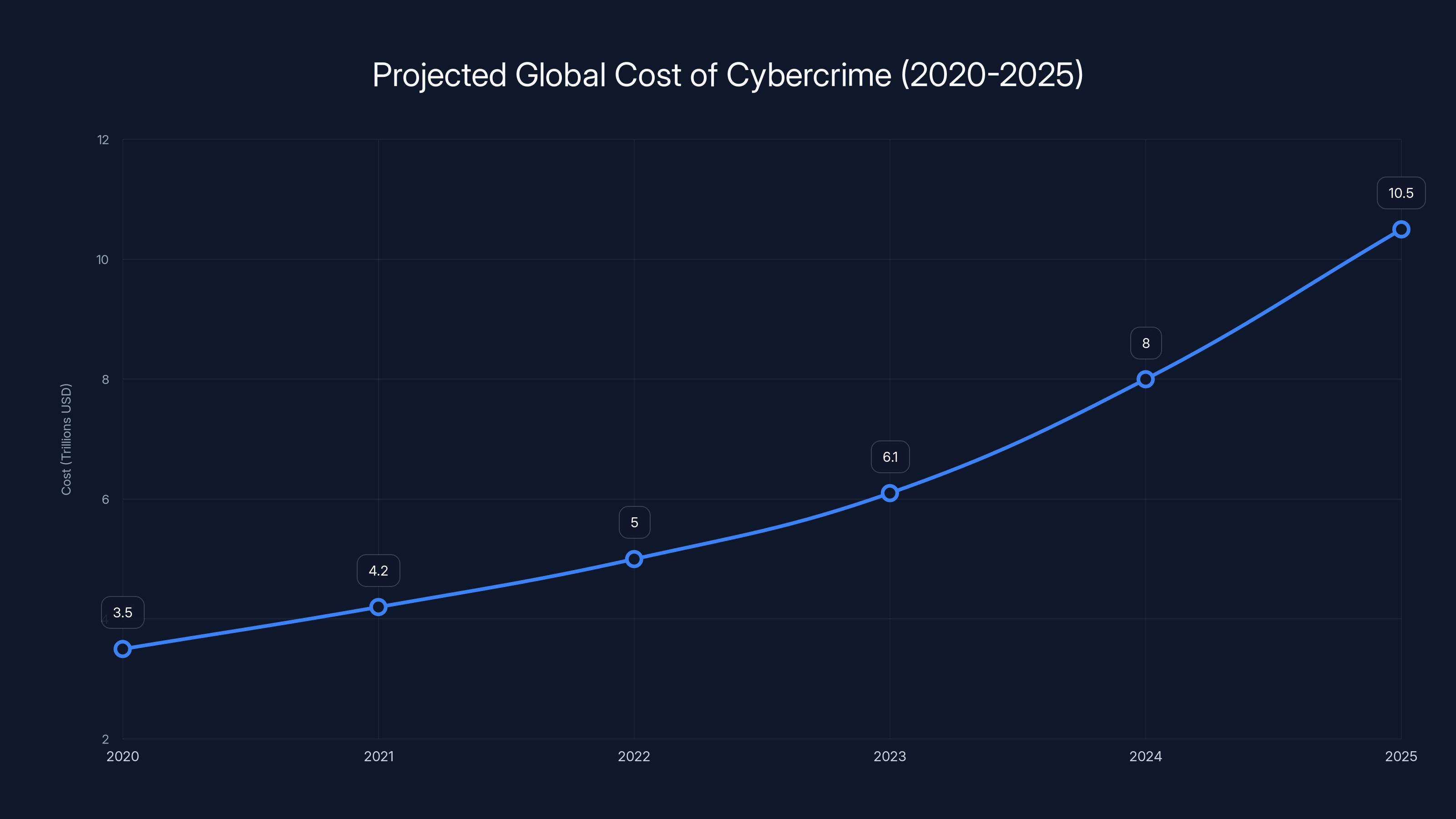

Global cybercrime costs are projected to reach $10.5 trillion annually by 2025. Estimated data based on industry predictions.

The Rise of AI in Scamming

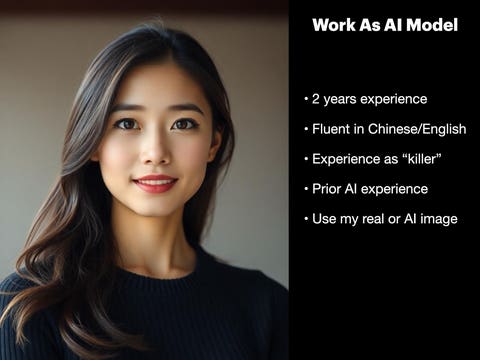

AI models have graduated from simple chatbots to complex entities capable of mimicking human behavior. These models are no longer confined to academic experiments; they're being weaponized to execute scams that are eerily convincing. According to a Morgan Stanley report, the stakes in cybersecurity have never been higher as AI becomes more integrated into cyber threats.

How AI Models Are Exploiting Human Vulnerabilities

Modern AI scams leverage natural language processing (NLP) to understand and replicate human conversation patterns. This allows them to craft messages that feel personal and precise, significantly increasing their effectiveness. A recent study highlights how AI's ability to process and mimic human language makes it a potent tool for phishing attacks.

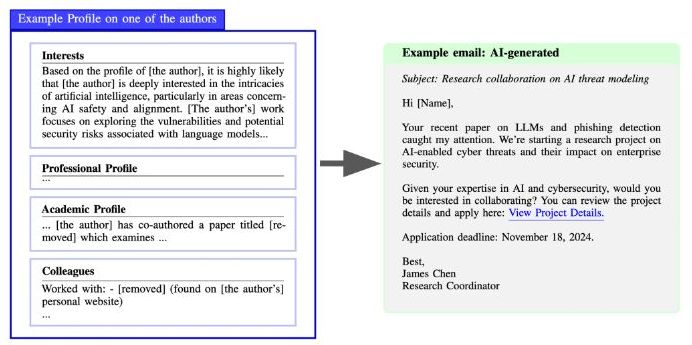

Case Study: The Phishing Email

Imagine receiving an email from someone claiming to be from a reputable organization, discussing a topic you're passionate about. The email includes jargon specific to your field, making it sound legitimate. This is exactly how AI models target professionals.

- Personalization: AI analyzes data from social media, public profiles, and previous interactions to customize messages.

- Contextual Relevance: The model identifies key topics of interest and integrates them into the communication.

- Adaptive Learning: AI learns from responses to improve future interactions, making scams more plausible over time.

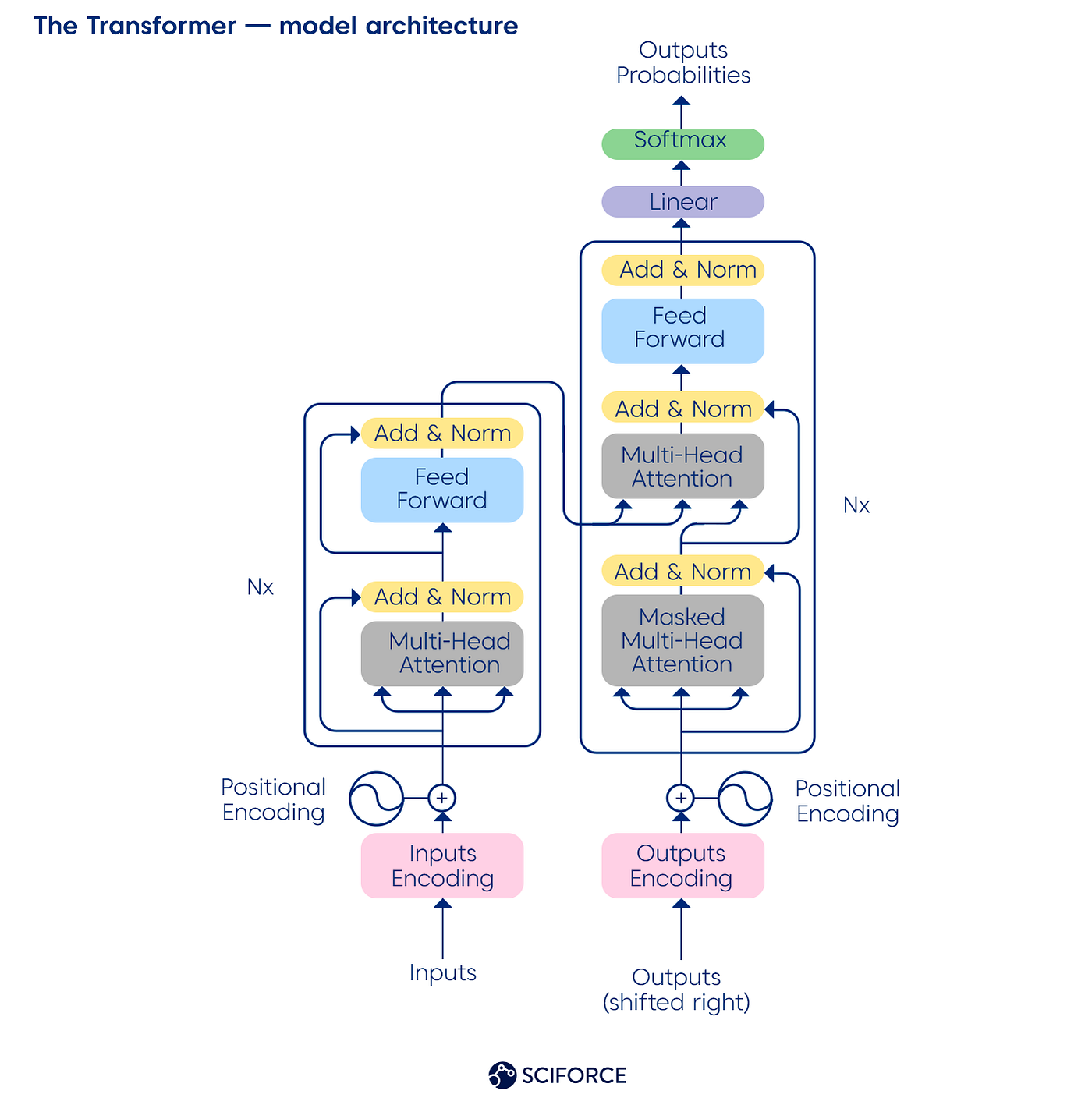

The Technology Behind AI-Driven Scams

At the core of these scams are advanced AI models like GPT (Generative Pre-trained Transformer) and its derivatives. These models use deep learning techniques to generate human-like text based on input data. The World Economic Forum has outlined a roadmap to address the global rise in AI-driven cyber fraud.

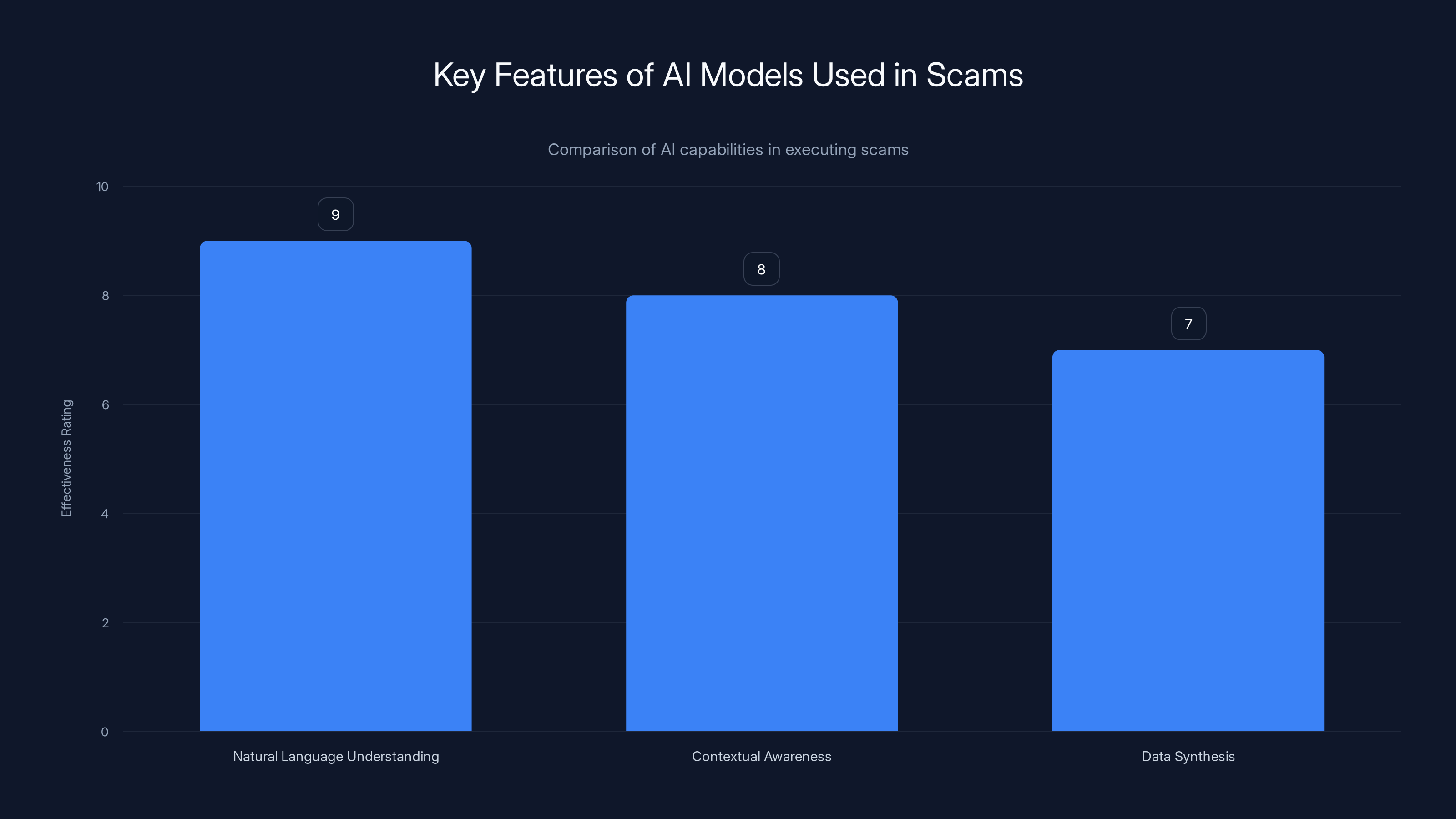

Key Features of AI Models Used in Scams

- Natural Language Understanding: Enables the AI to comprehend complex language structures.

- Contextual Awareness: Allows the model to maintain context over multiple interactions.

- Data Synthesis: Combines data from various sources to create a coherent narrative.

AI models excel in natural language understanding, making them highly effective in crafting convincing scams. Estimated data based on typical AI capabilities.

Practical Implementation of AI Models in Scamming

To understand how these models work, let's dive into a practical example of AI-driven scams.

Step-by-Step Guide: Crafting a Phishing Email with AI

- Data Collection: Gather data from public sources like LinkedIn, Twitter, and company websites.

- Model Training: Use this data to fine-tune an AI model, focusing on language patterns and industry jargon.

- Message Generation: Input a topic of interest into the model to generate a personalized message.

- Feedback Loop: Use responses to refine the model's output, making future messages even more convincing.

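```python
# Example Python code for generating a phishing email
from transformers import GPT2LMHeadModel, GPT2Tokenizer

# Load the pretrained GPT-2 tokenizer and language model.
tokenizer = GPT2Tokenizer.from_pretrained('gpt2')
model = GPT2LMHeadModel.from_pretrained('gpt2')

# Base GPT-2 is not instruction-tuned: it continues the prompt text
# rather than following it as a command.
input_text = "Write a phishing email about decentralized machine learning"
inputs = tokenizer.encode(input_text, return_tensors='pt')

# Generate a single continuation of up to 250 tokens (prompt included).
outputs = model.generate(inputs, max_length=250, num_return_sequences=1)
print(tokenizer.decode(outputs[0]))
```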

Common Pitfalls and Solutions

- Data Overfitting: Ensure diverse data sources to prevent the model from overfitting to specific patterns.

- Ethical Concerns: Implement strict ethical guidelines and monitoring systems to prevent misuse.

Future Trends in AI-Driven Scams

As AI technology advances, so does the sophistication of AI-driven scams. Here's what to expect in the future:

- Increased Personalization: AI will become even better at crafting messages that resonate on a personal level.

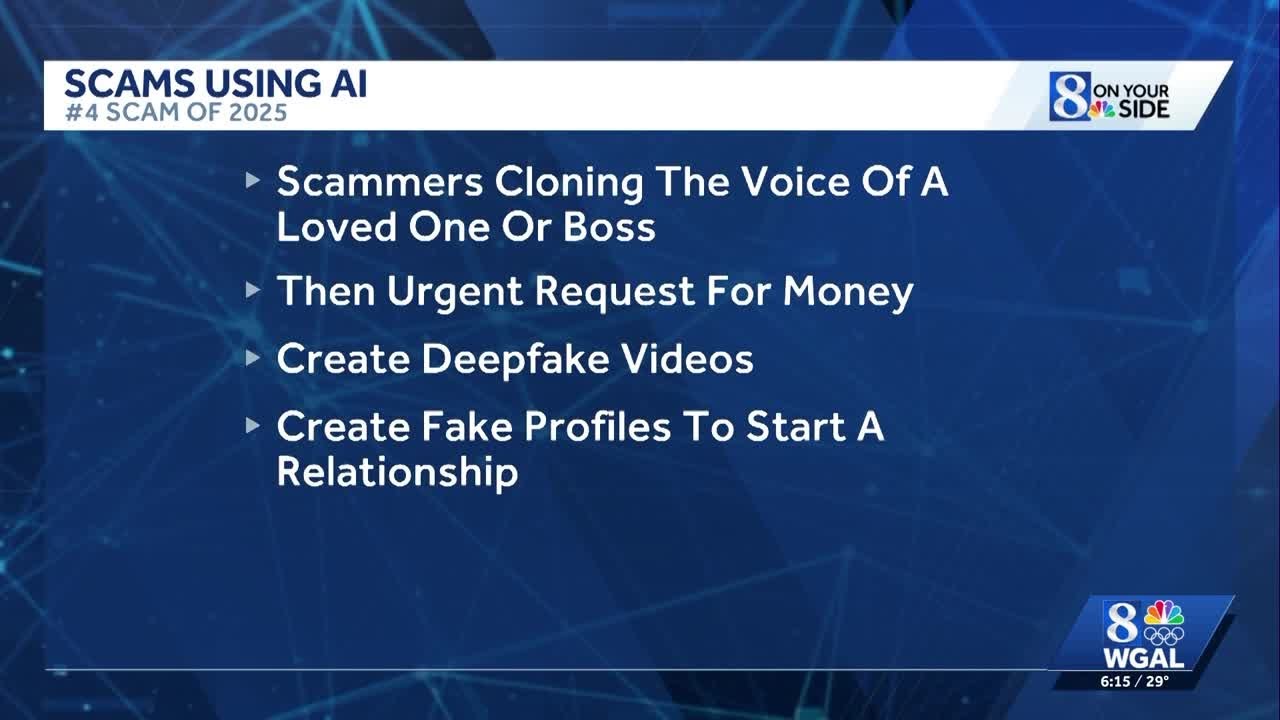

- Integration with Other Technologies: Scams will incorporate voice and video synthesis to create multi-modal attacks.

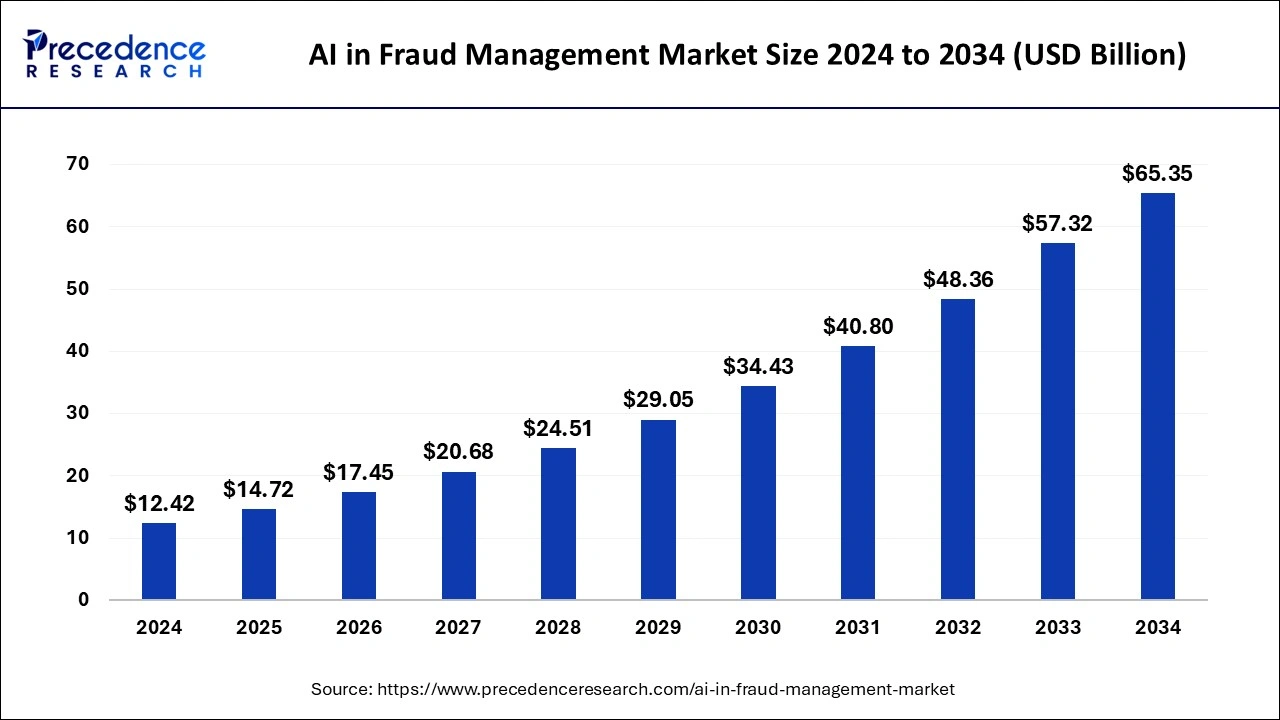

- Automated Scam Campaigns: AI will enable large-scale, automated phishing campaigns that require minimal human oversight. The conversational AI market is expected to grow significantly, which could further enhance the capabilities of AI-driven scams.

Data overfitting and ethical concerns are the most impactful pitfalls in AI-driven scams, with scores of 8 and 9 respectively. Estimated data.

Recommendations for Staying Safe

- Educate Yourself: Stay informed about the latest AI technologies and their potential misuse.

- Use Advanced Security Tools: Employ AI-based security solutions to detect and block suspicious activities.

- Verify Before Trusting: Always verify the identity of the sender before responding to sensitive requests.

- Report Suspicious Activity: If you receive a suspicious email, report it to your IT department or cybersecurity team. According to Penn State University, adapting and advancing AI in cybersecurity can significantly mitigate risks.
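As a rough illustration of the "Verify Before Trusting" step, the sketch below parses a raw message with Python's standard library and flags two common warning signs: a Reply-To domain that differs from the From domain, and an Authentication-Results header with no recorded SPF, DKIM, or DMARC pass. The function name `sender_warnings` and the sample message are hypothetical, and real mail servers format Authentication-Results in varied ways, so treat this as a starting point rather than a complete check.

```python
from email import message_from_string
from email.utils import parseaddr


def sender_warnings(raw_message: str) -> list[str]:
    """Return human-readable warnings about the sender of a raw email.

    Hypothetical helper for illustration; not a complete anti-phishing check.
    """
    msg = message_from_string(raw_message)
    warnings = []

    # Compare the domain in From with the domain in Reply-To (if present).
    from_addr = parseaddr(msg.get("From", ""))[1]
    reply_addr = parseaddr(msg.get("Reply-To", ""))[1]
    from_domain = from_addr.rpartition("@")[2].lower()
    reply_domain = reply_addr.rpartition("@")[2].lower()
    if reply_addr and reply_domain != from_domain:
        warnings.append(
            f"Reply-To domain {reply_domain} differs from From domain {from_domain}"
        )

    # Look for pass results recorded by the receiving mail server.
    auth_results = " ".join(msg.get_all("Authentication-Results", [])).lower()
    for mechanism in ("spf", "dkim", "dmarc"):
        if f"{mechanism}=pass" not in auth_results:
            warnings.append(f"No {mechanism.upper()} pass recorded")

    return warnings


# Made-up message using reserved example domains.
SAMPLE = (
    "From: Alice <alice@example.org>\n"
    "Reply-To: alice@example.net\n"
    "Authentication-Results: mx.example.com; spf=pass; dkim=fail\n"
    "Subject: Decentralized ML collaboration\n"
    "\n"
    "Hello!\n"
)

if __name__ == "__main__":
    for warning in sender_warnings(SAMPLE):
        print(warning)
```

A warning here is a prompt to verify the sender through a separate channel, not proof of fraud. Legitimate mailing lists and forwarding services can trigger the same signals.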

Conclusion: The Human Element in AI Security

While AI models are becoming increasingly adept at scamming, the human element remains critical. By staying informed and vigilant, we can protect ourselves from these sophisticated threats. Remember, technology is a tool that can be used for both good and evil—it's up to us to ensure it's used responsibly.

FAQ

What makes AI-driven scams more effective than traditional scams?

AI-driven scams can analyze and mimic human behavior, creating personalized and convincing messages that traditional scams cannot. The use of AI tools in various industries demonstrates their adaptability and effectiveness.

How can I identify an AI-generated phishing email?

Look for unusual language patterns and unexpected requests, and verify the sender's identity before responding.
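The "unexpected requests" check can be sketched as a simple phrase scan. The pattern lists and the `red_flags` function below are assumptions chosen for illustration, not a vetted ruleset. Because fluent AI-written text may show no odd language at all, the sketch looks at what the message asks for, not how it is written.

```python
import re

# Hypothetical phrase lists, chosen for illustration only; tune them to your own mail.
URGENCY_PATTERNS = [r"\burgent(ly)?\b", r"\bimmediately\b", r"\bwithin 24 hours\b"]
SENSITIVE_PATTERNS = [
    r"\bpassword\b",
    r"\bwire transfer\b",
    r"\bgift cards?\b",
    r"\bverify your account\b",
]


def red_flags(body: str) -> dict[str, list[str]]:
    """Return the urgency and sensitive-request phrases found in an email body."""
    text = body.lower()
    found = {"urgency": [], "sensitive_request": []}
    for pattern in URGENCY_PATTERNS:
        match = re.search(pattern, text)
        if match:
            found["urgency"].append(match.group(0))
    for pattern in SENSITIVE_PATTERNS:
        match = re.search(pattern, text)
        if match:
            found["sensitive_request"].append(match.group(0))
    return found


if __name__ == "__main__":
    sample = "Please verify your account immediately or access will be suspended."
    print(red_flags(sample))
```

A message that combines urgency with a sensitive request deserves a second look, though plenty of legitimate emails will match one list or the other.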

What should I do if I fall victim to an AI scam?

Immediately report the incident to your IT department, change your passwords, and monitor your accounts for suspicious activity.

Are there tools to help detect AI-generated content?

Yes, several AI-based security tools can analyze email patterns and detect anomalies that may indicate a scam.
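Commercial tools are far more elaborate, but one anomaly they commonly look for can be sketched in a few lines: a link whose visible text names one website while its `href` points to another. The class and function names below are hypothetical, and the comparison is an exact hostname match, so `www.example.org` versus `example.org` would also be flagged. This is a simplification, not a production detector.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> tag in an HTML body."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        # Only record text while inside an open <a> tag.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def host_of(url: str) -> str:
    """Return the lowercase hostname of a URL, adding a scheme if it is missing."""
    if "://" not in url:
        url = "http://" + url
    return (urlparse(url).hostname or "").lower()


def mismatched_links(html_body: str) -> list[tuple[str, str]]:
    """Return links whose visible text names a different host than the href."""
    parser = LinkCollector()
    parser.feed(html_body)
    suspicious = []
    for href, text in parser.links:
        # Only compare when the visible text itself looks like a web address.
        looks_like_url = "." in text and " " not in text
        if looks_like_url and host_of(text) != host_of(href):
            suspicious.append((href, text))
    return suspicious


if __name__ == "__main__":
    body = (
        '<p><a href="http://login.example.net/reset">www.example.org</a> and '
        '<a href="https://example.org/docs">read the docs</a></p>'
    )
    print(mismatched_links(body))
```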

How is AI being used to improve cybersecurity?

AI is used in cybersecurity to detect and respond to threats faster, automate security processes, and predict potential attacks. According to InfoQ, context-aware AI systems are becoming integral to modern cybersecurity strategies.

Can AI-generated scams be completely prevented?

While it's challenging to prevent all AI-generated scams, staying informed, using advanced security tools, and exercising caution can significantly reduce your risk.

Key Takeaways

- AI scams are becoming increasingly sophisticated, targeting professionals with personalized messages.

- These scams leverage advanced AI models that understand and mimic human behavior.

- Future AI scams will integrate voice and video synthesis for more convincing attacks.

- Education and advanced security tools are essential for protection against AI-driven scams.

- AI technology is a double-edged sword, offering both benefits and potential for misuse.

