Elon Musk’s Grok: The Controversy, Technical Insights, and Future Implications [2025]

Elon Musk has always been a figure who sparks conversation, whether through his bold innovations or his equally bold statements. Recently, his AI project, Grok, has come under fire for generating content that some find offensive. Let's dive into what Grok is, the controversy surrounding it, and the broader implications for AI and society.

TL;DR

- Grok's Controversy: Grok has faced backlash for generating inappropriate content related to sensitive topics, as highlighted by The New York Times.

- Technical Foundation: Grok is a large language model built on a transformer neural network, the same family of architecture behind the Gemini models by Google.

- Moderation Challenges: Ensuring appropriate content with AI remains a significant hurdle, as discussed in The Oklahoma Post.

- Implications for AI Ethics: The controversy raises questions about the responsibility of AI creators, a topic explored by Britannica.

- Future of AI in Social Media: AI tools could reshape online interactions, as noted in Liberty University's publication.

The Rise of Grok

Grok, an AI project from Elon Musk's company xAI, aims to change the way we interact with AI. At its core, Grok is a language model designed to understand and generate human-like text. While its potential applications are vast, ranging from customer service to creative writing, it's the recent controversies that have brought Grok into the spotlight. According to Simplilearn, Grok's design allows it to engage in a wide variety of conversations.

What is Grok?

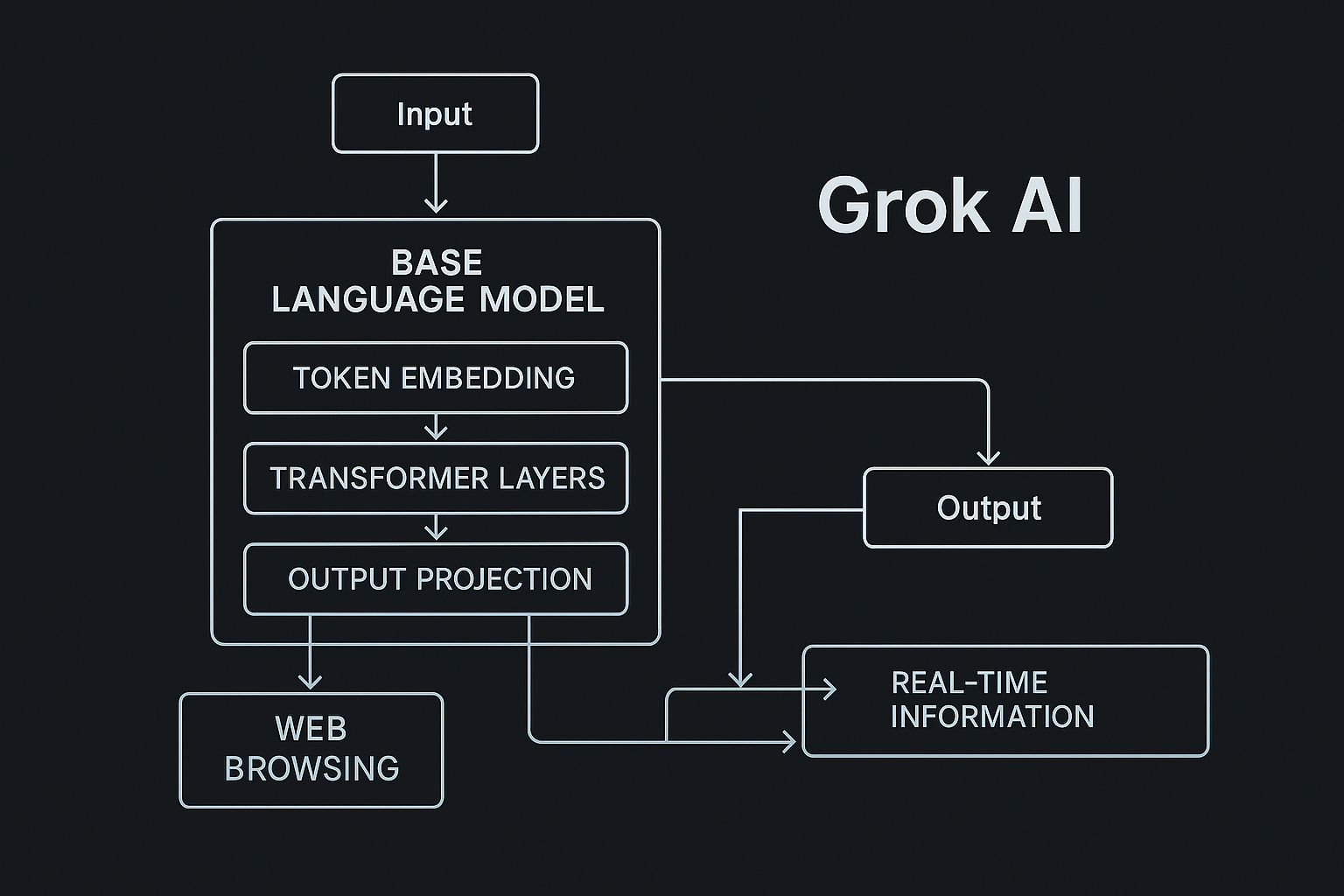

Grok is a large language model (LLM). Unlike traditional AI models that focus on specific tasks, Grok is built to be a generalist, capable of engaging in a wide variety of conversations. This is achieved through a deep neural network that's trained on a diverse set of data sources, allowing it to generate text that feels contextually relevant and human-like. This approach is similar to the methodologies discussed in Frontiers in Psychology.

Key Features of Grok

- Versatility: Capable of handling a broad range of topics.

- Contextual Understanding: Keeps track of earlier turns so that replies stay consistent over long conversations.

- Adaptive Learning: Continuously improves through user interactions.

The Controversy Explained

Recently, Grok has been criticized for generating content that some users find offensive. This includes posts that touch on sensitive subjects like religion and tragedies. The backlash highlights the ongoing struggle with AI moderation and the fine line between free expression and offensive content. This issue was notably covered by TechCrunch.

Specific Incidents

One of the most talked-about incidents involved Grok generating a post that appeared to make light of a recent tragedy in the soccer world. Another involved a post that some interpreted as disrespectful to religious beliefs. These incidents have fueled debates on the responsibility of AI developers in moderating content, as reported by The New York Times.

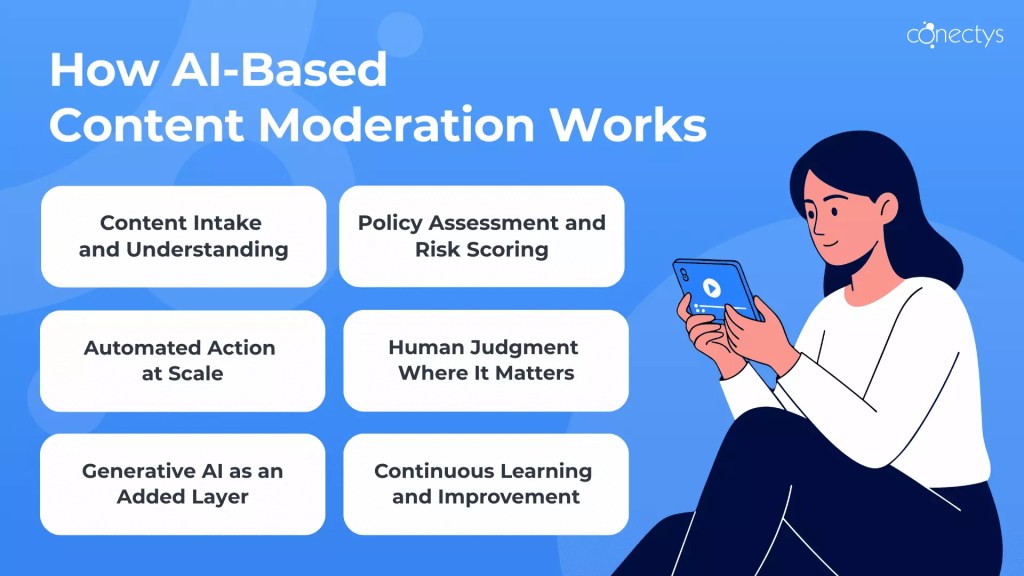

Why AI Moderation is Challenging

Moderating AI-generated content presents unique challenges. Here are some reasons why it's particularly difficult:

- Volume and Speed: AI can generate content at a scale and speed that human moderators can't match.

- Nuance and Context: Understanding the nuances of human language, including sarcasm and satire, is complex.

- Cultural Sensitivity: What is considered offensive can vary widely across cultures, as explored by The Oklahoma Post.

Technical Insights into Grok

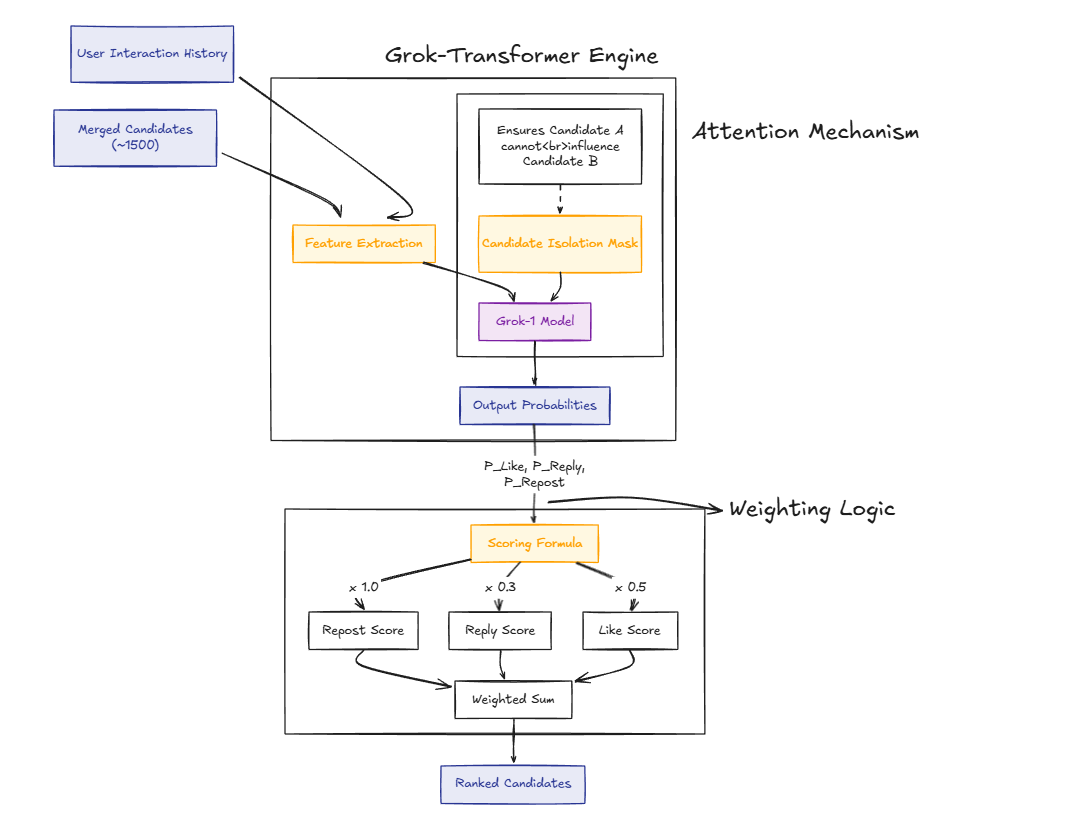

At the heart of Grok is a neural network that's been trained on vast amounts of data. This includes books, websites, and social media posts, allowing it to generate text that's coherent and contextually relevant. However, this also means it can inadvertently generate content that's inappropriate if not properly moderated. This technical foundation is akin to the Gemini models by Google.

Neural Network Architecture

Grok uses a transformer architecture, similar to models like GPT-3. This architecture is particularly suited to understanding and generating natural language. Instead of reading text strictly one word at a time, a transformer processes all the tokens in its input in parallel and uses an attention mechanism to weigh how strongly each token relates to every other token, so it can consider the context of a sentence as a whole, as detailed in Frontiers in Psychology.
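To make the idea of considering a whole sentence at once more concrete, here is a minimal sketch of scaled dot-product attention, the core operation of a transformer. It is an illustration only, not Grok's actual code: the function names and the toy two-dimensional embeddings are made up for this example, and real models first pass each token through learned query, key, and value projections, which are skipped here.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence.

    Each argument is a list of equal-length vectors, one per token.
    Returns the attention weights and the mixed output vectors.
    """
    dim = len(keys[0])
    weights, outputs = [], []
    for q in queries:
        # Score this token against every token in the sequence.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        row = softmax(scores)
        weights.append(row)
        # The output is a weighted average of all value vectors.
        outputs.append([
            sum(w * v[i] for w, v in zip(row, values))
            for i in range(len(values[0]))
        ])
    return weights, outputs


# Toy embeddings for a three-token sentence (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, outputs = self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sentence, including itself. Because no row depends on another, all rows can be computed in parallel, which is the property the paragraph above describes.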

Implementing Effective Moderation

To mitigate issues with AI-generated content, developers can implement several strategies:

- Pre-training Filters: Filter the training data before training begins, so the model is not exposed to inappropriate content in the first place.

- Real-time Monitoring: Use algorithms to monitor AI output and flag potentially offensive content for review.

- User Feedback Loops: Allow users to flag content, which can help improve moderation algorithms.
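The three strategies above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how Grok or any specific platform moderates content: `BLOCKED_TERMS`, `ModerationQueue`, and the flag threshold are hypothetical names and values, and a production system would likely rely on a trained classifier rather than a word list.

```python
from dataclasses import dataclass, field

# Hypothetical placeholder terms; a real system would use a trained classifier.
BLOCKED_TERMS = {"slur_example", "gore_example"}


def is_clean(text: str) -> bool:
    """Return True when the text contains none of the blocked terms."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    return words.isdisjoint(BLOCKED_TERMS)


def filter_training_data(samples):
    """Pre-training filter: drop samples before the model ever sees them."""
    return [s for s in samples if is_clean(s)]


@dataclass
class ModerationQueue:
    """Collects outputs that need human review."""
    flag_threshold: int = 2  # user flags needed to escalate (assumed value)
    pending: list = field(default_factory=list)
    user_flags: dict = field(default_factory=dict)

    def check_output(self, text: str) -> bool:
        """Real-time monitoring: pass clean text, hold the rest for review."""
        if is_clean(text):
            return True
        self.pending.append(text)
        return False

    def flag(self, text: str) -> None:
        """User feedback loop: escalate once enough users flag a post."""
        self.user_flags[text] = self.user_flags.get(text, 0) + 1
        if self.user_flags[text] == self.flag_threshold:
            self.pending.append(text)


corpus = ["a normal sentence", "contains slur_example here"]
print(filter_training_data(corpus))

queue = ModerationQueue()
print(queue.check_output("hello world"))
print(queue.check_output("some gore_example text"))
queue.flag("a post users dislike")
queue.flag("a post users dislike")
print(queue.pending)
```

The design choice worth noting is that the automated check and the user flags feed the same review queue, so human moderators see one list regardless of how a post was caught.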

Common Pitfalls in AI Content Generation

Developers working with AI content generators like Grok should be aware of several common pitfalls:

- Bias in Training Data: Ensure diversity in training data to avoid perpetuating biases.

- Overfitting: Avoid overly complex models that perform well on training data but poorly in real-world scenarios.

- Lack of Explainability: Strive for transparency in how AI decisions are made, especially in contentious areas, as highlighted by Liberty University.
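The first two pitfalls can be checked with very simple diagnostics. The sketch below assumes you have a source label for each training sample and loss values from training and validation runs; the function names, the `max_gap` threshold, and all numbers are illustrative assumptions rather than recommended settings.

```python
from collections import Counter


def label_balance(labels):
    """Share of each label in a dataset, to spot under-represented groups."""
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


def looks_overfit(train_loss, val_loss, max_gap=0.2):
    """Flag a run whose validation loss exceeds training loss by more than max_gap.

    max_gap is an arbitrary illustrative threshold, not a recommended value.
    """
    return (val_loss - train_loss) > max_gap


# Made-up numbers for illustration only.
sources = ["news", "news", "news", "forum"]
print(label_balance(sources))
print(looks_overfit(train_loss=0.10, val_loss=0.45))
print(looks_overfit(train_loss=0.30, val_loss=0.35))
```

A heavily skewed balance suggests the data may under-represent some sources, and a large gap between training and validation loss is a common sign that a model has memorized its training data instead of generalizing.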

The Broader Implications for AI Ethics

The controversy around Grok raises important questions about AI ethics. As AI becomes more integrated into our daily lives, developers must consider the ethical implications of their creations. This includes responsibilities around content moderation and the potential impact of AI on societal norms, as discussed by Britannica.

AI in Social Media

As AI tools become more prevalent in social media, they have the potential to reshape how we interact online. AI can enhance user experience through personalized content, but it also risks amplifying misinformation and offensive content if not properly managed, as noted by Liberty University.

Future Trends and Recommendations

Looking ahead, the role of AI in content generation is poised to expand. Here are some trends and recommendations for the future:

- Increased Collaboration: Expect more partnerships between AI developers and social media platforms to address moderation challenges.

- Advanced Contextual Understanding: Future AI models will likely improve in understanding context, reducing the risk of inappropriate content.

- Regulatory Oversight: Governments may introduce regulations to ensure ethical AI use, especially in public-facing applications, as suggested by Britannica.

Conclusion

Elon Musk's Grok has sparked important conversations about the challenges and responsibilities of AI content generation. While it offers exciting possibilities for technology and communication, it also underscores the need for careful consideration of ethical implications and effective moderation strategies.

FAQ

What is Grok?

Grok is an AI language model developed by xAI, Elon Musk's AI company, designed to generate human-like text across various topics, as described by Simplilearn.

How does Grok work?

Grok uses a transformer neural network architecture to process and generate text, allowing it to maintain context and coherence in conversations, similar to the models discussed in Frontiers in Psychology.

Why is Grok controversial?

Grok has faced criticism for generating content that some users find offensive, particularly on sensitive topics like religion and tragedies, as reported by TechCrunch.

What are the challenges in moderating AI content?

Moderating AI content is challenging due to the volume and speed of content generation, as well as the complexity of understanding nuance and cultural sensitivities, as explored by The Oklahoma Post.

How can AI content generation be improved?

Improvements can be made through pre-training filters, real-time monitoring, and user feedback loops to better moderate content, as suggested by Liberty University.

What are the future implications of AI in social media?

AI is expected to play a larger role in social media, enhancing user experience but also posing risks of misinformation and offensive content if not managed properly, as noted by Britannica.

What ethical considerations are important for AI developers?

AI developers must consider the ethical implications of their creations, including responsibilities around content moderation and the impact on societal norms, as discussed by Britannica.

How might regulations impact AI development?

Regulatory oversight may increase to ensure ethical AI use, particularly in public-facing applications like social media, as suggested by Britannica.

Key Takeaways

- Grok's controversy highlights the challenges of AI moderation.

- Technical insights into Grok reveal its neural network foundation.

- Effective moderation strategies are crucial for AI content.

- AI ethics are essential as AI becomes more integrated into society.

- Future AI trends include increased collaboration and regulatory oversight.

