Meta's Deepfake Moderation: Challenges and Future Directions [2025]

Introduction

In recent years, the rise of deepfakes, hyper-realistic synthetic media created using artificial intelligence, has posed significant challenges to digital platforms. Meta, the parent company of Facebook and Instagram, has been at the forefront of moderating such content. However, the company's Oversight Board recently criticized its moderation strategies as inadequate for dealing with deepfakes effectively.

This article explores the complexities of deepfake technology, the challenges Meta faces in moderating such content, and potential solutions and best practices for the future. It covers technical details, practical implementation guides, and future trends in AI content moderation.

TL;DR

- Deepfake Technology: Deepfakes are AI-generated media that mimic real people, threatening privacy and fueling misinformation.

- Moderation Challenges: Meta struggles with identifying and moderating deepfakes due to their sophistication and volume.

- Technical Solutions: AI and machine learning offer potential solutions but require constant updates and ethical considerations.

- Future Trends: Expect detection algorithms to advance and regulatory frameworks to evolve.

- Best Practices: Combining AI tools with human oversight can enhance moderation effectiveness.

Understanding Deepfake Technology

Deepfakes are digital forgeries created using deep learning and neural networks. By analyzing and mimicking the voice, appearance, and mannerisms of a person, these algorithms can create highly realistic videos and audio clips. The implications are vast, ranging from benign uses in entertainment to malicious applications such as political misinformation and identity theft. According to Copyleaks, deepfakes have become increasingly sophisticated, making detection more challenging.

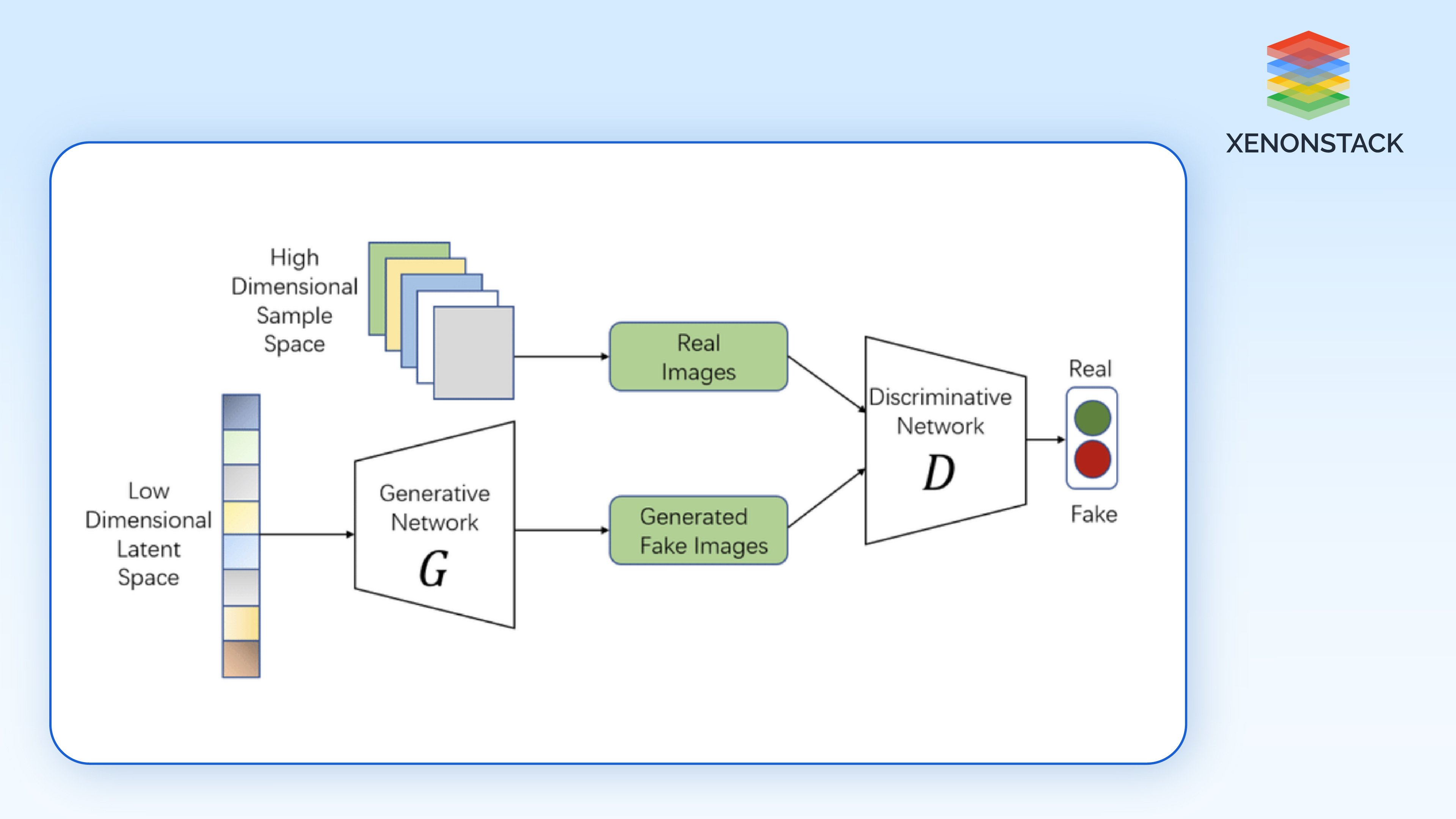

How Deepfakes Work

Many deepfakes rely on Generative Adversarial Networks (GANs), although autoencoders and diffusion models are also used. A GAN consists of two parts: a generator and a discriminator. The generator creates fake data, while the discriminator evaluates its authenticity. Through iterative training, the generator improves its ability to produce convincing forgeries. ExpressVPN provides a detailed explanation of how GANs function in creating deepfakes.
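To make the generator and discriminator roles concrete, here is a minimal sketch of the two loss values they pull in opposite directions. It assumes the discriminator outputs a probability that a sample is real and uses the common non-saturating generator loss. The function names are illustrative, and this is not a description of any particular deepfake tool.

```python
import math
from statistics import fmean


def discriminator_loss(d_real, d_fake):
    """Cross-entropy loss the discriminator tries to minimize.

    d_real: discriminator outputs (probability "real") on real samples.
    d_fake: discriminator outputs on generator-produced samples.
    """
    real_term = fmean([math.log(p) for p in d_real])
    fake_term = fmean([math.log(1.0 - p) for p in d_fake])
    return -(real_term + fake_term)


def generator_loss(d_fake):
    """Non-saturating generator loss: small when fakes are scored as real."""
    return -fmean([math.log(p) for p in d_fake])


# Early in training the discriminator easily spots fakes (low scores on them),
# so the generator's loss is high; as forgeries improve, that loss falls.
early = generator_loss([0.1, 0.2])
late = generator_loss([0.8, 0.9])
print(f"generator loss early={early:.2f}, late={late:.2f}")
```

In a real training loop, each network's parameters would be updated by gradient descent on its own loss, alternating between the two.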

Technical Challenges in Moderating Deepfakes

Scale and Sophistication

One of the primary challenges Meta faces is the sheer scale of content on its platforms. With billions of users, the volume of data generated daily is staggering. Deepfakes add another layer of complexity due to their sophistication.

Common Pitfalls:

- High False-Positive Rates: Automated systems may incorrectly flag legitimate content as deepfakes, leading to user dissatisfaction.

- Evasion Tactics: Malicious actors continuously refine their techniques to bypass detection algorithms.
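The false-positive pitfall is easier to see with numbers. The sketch below applies Bayes' rule to estimate what share of flagged items are actually deepfakes. The detection rate, false-positive rate, and prevalence are hypothetical figures chosen for illustration, not measurements from Meta or any other platform.

```python
def flag_precision(tpr, fpr, prevalence):
    """Fraction of flagged items that are truly deepfakes (Bayes' rule).

    tpr: share of real deepfakes the detector flags.
    fpr: share of authentic items the detector wrongly flags.
    prevalence: share of all uploads that are deepfakes.
    """
    true_flags = tpr * prevalence
    false_flags = fpr * (1.0 - prevalence)
    return true_flags / (true_flags + false_flags)


# Hypothetical numbers: 95% detection rate, 1% false-positive rate,
# and 1 in 1,000 uploads being a deepfake.
precision = flag_precision(tpr=0.95, fpr=0.01, prevalence=0.001)
print(f"{precision:.1%} of flagged items are actual deepfakes")
```

Under these assumed figures, fewer than one in ten flags would point at a real deepfake, which is why even a seemingly accurate detector can frustrate users at platform scale.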

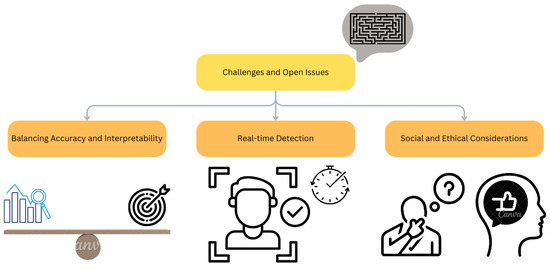

Ethical Considerations

Moderating deepfakes involves ethical dilemmas, such as balancing free speech with the need to prevent harm. Overzealous moderation can stifle legitimate expression, while under-moderation may allow harmful content to proliferate. The Gartner Security & Risk Management Summit highlighted these ethical challenges in its recent discussions.

Practical Implementation Guides

Building Effective Moderation Frameworks

To effectively moderate deepfakes, platforms like Meta need to implement robust frameworks that combine technological and human elements.

Step-by-Step Guide:

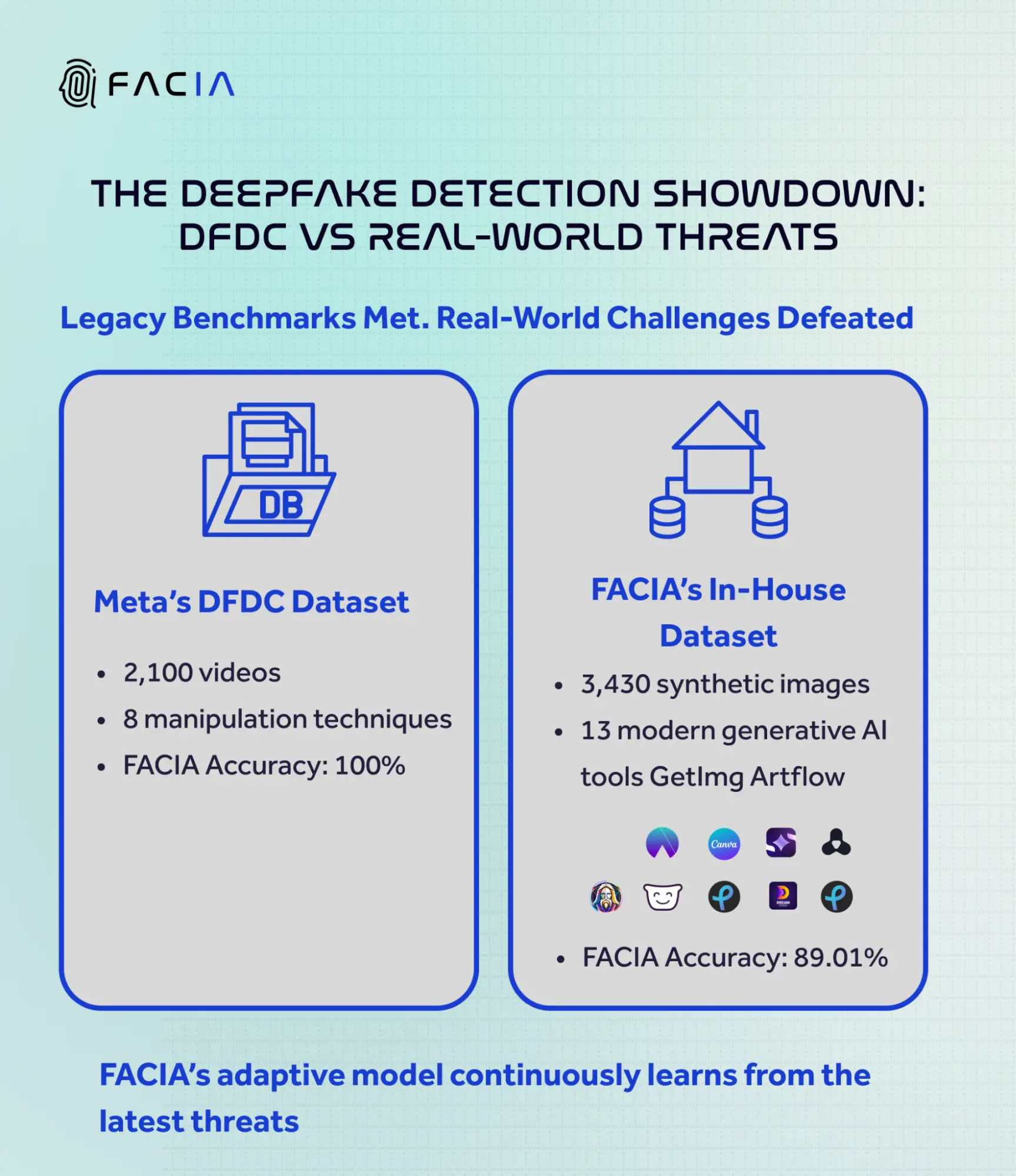

- AI-Powered Detection: Deploy AI models trained on large datasets to identify potential deepfakes.

- Human Review: Employ human moderators to verify AI decisions, especially in ambiguous cases.

- User Reporting: Encourage users to report suspicious content, providing additional data for AI systems.

- Transparency and Feedback: Maintain transparency about moderation practices and allow users to appeal decisions.
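The steps above can be sketched as a simple routing function that combines a detector score with user reports. The thresholds, field names, and action labels are assumptions made for illustration, and this is not a description of Meta's actual pipeline.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned on labeled data.
REVIEW_THRESHOLD = 0.5
AUTO_ACTION_THRESHOLD = 0.95
REPORTS_FOR_REVIEW = 3


@dataclass
class Upload:
    content_id: str
    detector_score: float  # 0.0 (likely authentic) to 1.0 (likely deepfake)
    user_reports: int = 0


def route(upload: Upload) -> str:
    """Return 'label', 'human_review', or 'allow' for one upload."""
    if upload.detector_score >= AUTO_ACTION_THRESHOLD:
        # High-confidence detection: label automatically, subject to appeal.
        return "label"
    if (upload.detector_score >= REVIEW_THRESHOLD
            or upload.user_reports >= REPORTS_FOR_REVIEW):
        # Ambiguous score or repeated user reports: send to a human moderator.
        return "human_review"
    return "allow"


print(route(Upload("vid-1", detector_score=0.97)))
print(route(Upload("vid-2", detector_score=0.10, user_reports=5)))
```

Keeping the thresholds as named constants makes it straightforward to publish them or adjust them after appeals reveal systematic errors.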

Leveraging AI and Machine Learning

AI and machine learning offer promising tools for deepfake detection, but they require constant updates to remain effective against evolving threats. Microsoft's security blog discusses how AI can be operationalized to enhance detection capabilities.

Best Practices:

- Regularly update AI models with new data to improve accuracy.

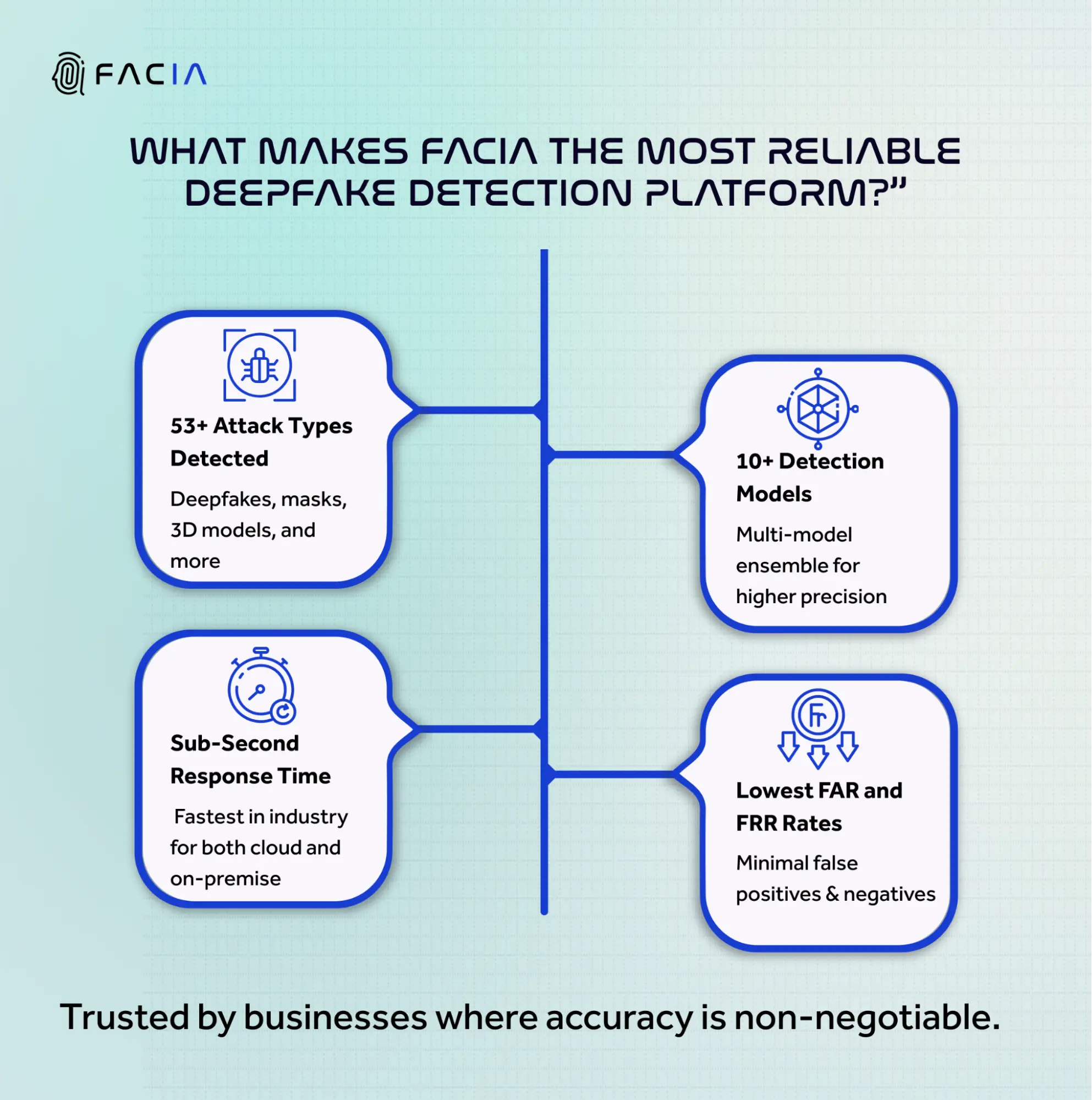

- Implement ensemble models that combine multiple algorithms for better detection rates.

- Use watermarking techniques to distinguish original content from manipulated media.
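As one way to picture the ensemble practice, the sketch below combines scores from several detectors into a single weighted average. The detector names and scores are hypothetical, and weighted averaging is only one of several possible combination rules.

```python
def ensemble_score(scores, weights=None):
    """Weighted average of per-detector deepfake probabilities.

    scores: mapping of detector name to a probability in [0, 1].
    weights: optional mapping of detector name to a positive weight;
             defaults to equal weighting.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    if weights is None:
        weights = {name: 1.0 for name in scores}
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Hypothetical detectors looking at different signals in the same video.
scores = {"face_artifacts": 0.9, "audio_sync": 0.6, "frequency": 0.3}
combined = ensemble_score(scores)
print(f"combined score: {combined:.2f}")
```

Weights could be raised for detectors that perform better on recent validation data, which ties the ensemble to the regular model updates recommended above.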

Future Trends in Deepfake Moderation

Advancements in Detection Technology

As deepfakes become more sophisticated, detection algorithms must evolve. Future advancements may include:

- Enhanced GAN Models: Utilizing more complex GANs for both creation and detection of deepfakes.

- Real-Time Analysis: Developing algorithms capable of analyzing video content in real-time.
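Real-time analysis generally means scoring frames as they arrive rather than after upload. The sketch below smooths per-frame detector scores with an exponential moving average so that a single noisy frame does not trigger an alert. The smoothing factor, threshold, and per-frame scores are assumptions for illustration.

```python
def stream_alerts(frame_scores, alpha=0.3, threshold=0.7):
    """Yield (frame_index, smoothed_score) for each frame whose
    exponentially smoothed score is at or above the threshold."""
    smoothed = 0.0  # start from "no evidence" so one frame cannot alert alone
    for index, score in enumerate(frame_scores):
        smoothed = alpha * score + (1 - alpha) * smoothed
        if smoothed >= threshold:
            yield index, smoothed


# A single suspicious frame is ignored; a sustained run raises an alert.
print(list(stream_alerts([0.1, 0.9, 0.1, 0.1])))
print([index for index, _ in stream_alerts([0.9] * 5)])
```

Because the function is a generator, it can consume an unbounded stream of frame scores with constant memory.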

Regulatory Developments

Governments worldwide are beginning to recognize the threat posed by deepfakes. Expect increased regulation and collaboration between tech companies and policymakers to establish standards for content moderation. The Boston Consulting Group has explored how generative AI could reshape cybersecurity and online safety, emphasizing the need for regulatory frameworks.

Common Pitfalls and Solutions

Over-Reliance on Automation

While AI is powerful, relying solely on automated systems can lead to oversights.

Solutions:

- Balance AI with human oversight to ensure nuanced decision-making.

- Continuously test and refine AI models to reduce false positives and negatives.
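Testing and refining a model often starts with checking how its errors shift as the decision threshold moves. The sketch below counts false positives and false negatives at a few thresholds on a small validation set. The scores and labels are made up for illustration.

```python
def error_counts(scores, labels, threshold):
    """Count false positives and false negatives at one threshold.

    scores: detector outputs in [0, 1].
    labels: True for a known deepfake, False for authentic content.
    """
    false_pos = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
    false_neg = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
    return false_pos, false_neg


# Hypothetical validation set of detector scores and ground-truth labels.
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

for threshold in (0.3, 0.5, 0.7):
    fp, fn = error_counts(scores, labels, threshold)
    print(f"threshold={threshold}: false positives={fp}, false negatives={fn}")
```

Raising the threshold trades false positives for false negatives, so the right setting depends on which error is more costly for a given type of content.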

Lack of Transparency

Users often distrust moderation processes due to a lack of transparency.

Solutions:

- Provide clear communication about how deepfake detection works.

- Allow users to understand why content was flagged and offer recourse for false positives.

Conclusion

Deepfake technology presents both fascinating opportunities and significant challenges. As Meta navigates these complexities, it must innovate and adapt its moderation strategies continually. By leveraging AI advancements, embracing ethical frameworks, and collaborating with regulators, Meta can better manage the risks associated with deepfakes. The Digiday report on Meta's LLM strategy highlights the importance of integrating AI into moderation practices.

FAQ

What are deepfakes?

Deepfakes are realistic synthetic media generated using AI algorithms, capable of mimicking real individuals' voices and appearances.

How does Meta currently moderate deepfakes?

Meta employs AI models to detect deepfakes, supplemented by human review and user reporting systems.

Why is deepfake moderation challenging?

The challenges include the rapid evolution of deepfake technology, the high volume of content, and the need to balance harm prevention against free expression.

What role does AI play in moderating deepfakes?

AI is crucial for detecting deepfakes due to its ability to analyze large datasets and identify subtle manipulations in media.

What are the future trends in deepfake moderation?

Expect advancements in detection algorithms, regulatory frameworks, and collaboration between tech companies and governments.

How can platforms improve their deepfake moderation?

Platforms can enhance moderation by combining AI with human oversight, maintaining transparency, and continuously updating detection models.

Key Takeaways

- Deepfakes are AI-generated media that pose privacy and misinformation risks.

- Meta struggles with effective moderation at scale.

- AI and human oversight are crucial for accurate detection.

- Expect regulatory changes and technological advancements.

- Balancing free speech with moderation is an ethical challenge.

![Meta's Deepfake Moderation: Challenges and Future Directions [2025]](https://tryrunable.com/blog/meta-s-deepfake-moderation-challenges-and-future-directions-/image-1-1773138966573.jpg)